A Comprehensive Guide to Shotgun Metagenomics Bioinformatics Pipelines: From Foundational Concepts to Clinical Validation

Abstract

This article provides a comprehensive guide to shotgun metagenomics bioinformatics pipelines, tailored for researchers, scientists, and drug development professionals. It covers the foundational principles of metagenomic analysis, contrasting key methodological approaches such as read-based, assembly-based, and detection-based workflows. The guide details best practices for sample preparation, data processing, and analysis, while addressing common challenges like host DNA contamination and computational demands. Furthermore, it explores rigorous pipeline validation strategies using mock communities and performance metrics, synthesizing recent benchmarking studies to aid in the selection and implementation of robust pipelines for biomedical and clinical research applications.

Understanding Shotgun Metagenomics: Core Concepts and Analytical Approaches

Defining Shotgun Metagenomics and Its Advantages Over Amplicon Sequencing

Shotgun metagenomics and amplicon sequencing represent two foundational approaches for characterizing microbial communities. While amplicon sequencing targets specific phylogenetic markers such as the 16S rRNA gene for bacteria, shotgun metagenomics employs an untargeted strategy to sequence all DNA fragments within a sample [1] [2]. This application note delineates the technical principles, advantages, and limitations of each method. We provide a detailed protocol for a standardized shotgun metagenomics workflow, contextualized within a bioinformatics pipeline for drug development and clinical research. The note further presents a comparative analysis, demonstrating that shotgun metagenomics enables superior taxonomic resolution to the species and strain level, facilitates functional gene annotation, and provides a more accurate correlation with microbial biomass, thereby offering a comprehensive toolkit for researchers and scientists in the field [3] [4].

The study of microbial communities through genomic technologies has revolutionized fields from human health to environmental science. Two primary sequencing methodologies have emerged: amplicon sequencing and shotgun metagenomics. Amplicon sequencing, often referred to as metataxonomics, is a highly targeted approach that relies on the polymerase chain reaction (PCR) to amplify specific, conserved genomic regions, such as 16S ribosomal RNA (rRNA) for bacteria and archaea, 18S rRNA for microbial eukaryotes, or the Internal Transcribed Spacer (ITS) for fungi [1] [5]. These regions contain variable sequences that allow for taxonomic discrimination. In contrast, shotgun metagenomics is a comprehensive approach that involves randomly shearing all DNA in a sample into small fragments and sequencing them without prior amplification of specific targets [1]. This strategy provides a relatively unbiased view of the entire genetic material within a sample, enabling simultaneous assessment of taxonomic composition and functional potential [4].

The choice between these methods is critical and hinges on the research objectives, available resources, and the specific biological questions being asked. This document provides a detailed comparison and a standardized protocol to guide researchers in applying shotgun metagenomics effectively within a bioinformatics pipeline.

Comparative Analysis: Shotgun Metagenomics vs. Amplicon Sequencing

Fundamental Principles and Workflows

The workflows for amplicon and shotgun sequencing are fundamentally distinct, from initial library preparation through final data analysis. The schematic below illustrates the key steps and differences in the two approaches.

Quantitative and Qualitative Comparison

A direct comparison of the technical and practical aspects of each method reveals a trade-off between depth of information and resource requirements. The table below summarizes the core differences.

Table 1: Comparative overview of amplicon sequencing and shotgun metagenomics

| Feature | Amplicon Sequencing | Shotgun Metagenomics |
|---|---|---|
| Principle | Targeted PCR amplification of specific marker genes (e.g., 16S, 18S, ITS) [1] | Random sequencing of all DNA fragments in a sample [1] |
| Primary Research Objective | Phylogenetic relationship, species composition, and biodiversity [1] | Taxonomic composition, functional potential, and genome reconstruction [1] [4] |
| Typical Taxonomic Resolution | Genus-level; some species-level [1] | Species-level and strain-level; enables discrimination of subspecies and strains [1] [4] |
| Functional Profiling | Not available | Yes, enables pathway analysis (e.g., KEGG, GO) [1] |
| Correlation with Biomass | Weaker correlation, biased by primer mismatches and PCR amplification [3] | Stronger correlation with biomass, though influenced by factors like GC-content [3] |
| Relative Cost | Cost-efficient [1] [5] | Higher sequencing and computational costs [1] |
| Sensitivity to Host DNA | Applicable to samples with high host DNA contamination [1] | Requires host DNA removal to avoid unnecessary sequencing costs [1] |
| Risk of False Positives | Lower risk [1] | Higher risk, requires careful filtering (e.g., thresholds at 0.2% of total read count) [3] [1] |
| Recommended Applications | Evaluating differences in a large number of microbiota samples across different environments [1] | Deeply investigating a smaller number of samples for comprehensive taxonomic and functional insights [1] |
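The read-count threshold cited in Table 1 for limiting false positives can be illustrated with a short sketch. It assumes a hypothetical dictionary of per-taxon read counts; the function name and example counts are illustrative and do not come from any of the cited pipelines.

```python
def filter_low_abundance(read_counts, min_fraction=0.002):
    """Keep taxa whose read count is at least `min_fraction` of all reads.

    read_counts: dict mapping taxon name -> number of assigned reads
                 (hypothetical input; real profilers emit richer tables).
    min_fraction: 0.002 corresponds to the 0.2% threshold cited in Table 1.
    """
    total = sum(read_counts.values())
    if total == 0:
        return {}
    cutoff = total * min_fraction
    return {taxon: n for taxon, n in read_counts.items() if n >= cutoff}


# Illustrative counts only (not real data).
counts = {"Taxon A": 90_000, "Taxon B": 9_850, "Taxon C": 150}
kept = filter_low_abundance(counts)
print(sorted(kept))
```

With these made-up counts the cutoff is 200 reads, so only the two more abundant taxa are retained. A suitable threshold in practice depends on sequencing depth and on the negative controls available.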

A key empirical finding is that while shotgun metagenomics provides a more comprehensive view, the data it generates can be harmonized with amplicon sequencing data at the genus level. This allows for the pooling of datasets for large-scale meta-analyses, leveraging the vast repository of existing amplicon data [6].

A Standardized Shotgun Metagenomics Wet-Lab and Bioinformatics Protocol

The following section outlines a detailed, end-to-end protocol for shotgun metagenomic analysis, from sample preparation to biological interpretation. This protocol is designed to be modular, allowing researchers to select components based on their specific project goals.

Wet-Lab Procedure: Library Preparation and Sequencing

- DNA Extraction: Use a kit designed for microbial lysis and DNA recovery to ensure representative extraction from all cell types in the community. Quantify DNA using fluorometric methods (e.g., Qubit).

- Library Preparation: This step does not involve targeted PCR.

  - Fragmentation: Mechanically or enzymatically shear the purified DNA into fragments of a defined size (e.g., 200-500 bp).

  - Adapter Ligation: Ligate platform-specific sequencing adapters to the ends of the fragmented DNA. Optional: Include index (barcode) sequences to allow for multiplexing of multiple samples in a single sequencing run.

- Sequencing: Load the prepared library onto a next-generation sequencing platform (e.g., Illumina NovaSeq) for paired-end sequencing. The required sequencing depth is highly variable; for complex environmental samples, a higher depth (e.g., 10-20 million reads per sample) is recommended to capture low-abundance members [3].
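To make the link between sequencing depth and low-abundance members concrete, the sketch below estimates the expected coverage of a single genome. The read length (150 bp), genome size (5 Mb), and relative abundance (0.1% of reads) are hypothetical values chosen for illustration, and the calculation assumes reads are sampled in proportion to each organism's share of the DNA pool.

```python
def expected_coverage(total_reads, read_length_bp, relative_abundance, genome_size_bp):
    """Approximate mean coverage of one genome in a metagenome.

    Assumes reads are sampled in proportion to each organism's share of the
    DNA pool (relative_abundance, as a fraction of reads), which ignores
    extraction, GC-content, and host-DNA biases.
    """
    bases_from_taxon = total_reads * read_length_bp * relative_abundance
    return bases_from_taxon / genome_size_bp


# Hypothetical example: 20 million 150 bp reads, a 5 Mb genome at 0.1% of reads.
cov = expected_coverage(20_000_000, 150, 0.001, 5_000_000)
print(f"Expected coverage: {cov:.2f}x")
```

Under these assumptions the genome receives well under 1x coverage even at 20 million reads, which is one way to see why rare community members drive depth requirements.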

Bioinformatics Analysis Pipeline

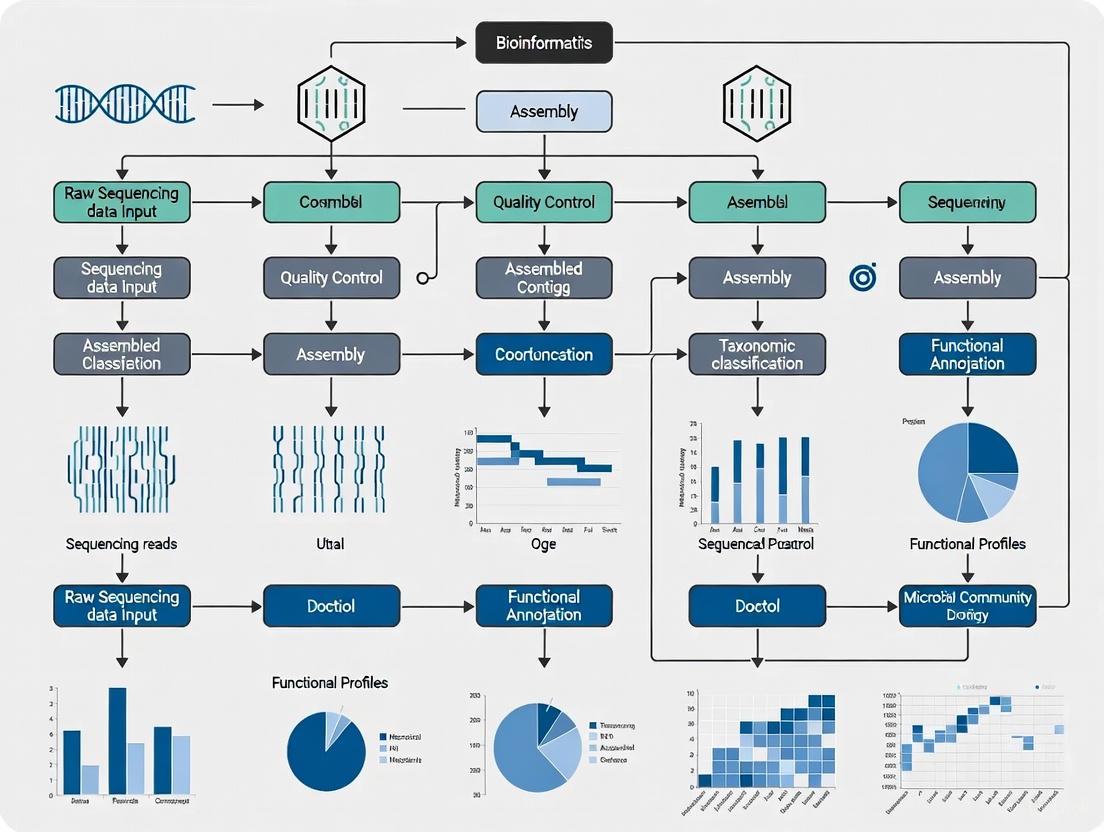

The computational workflow for shotgun metagenomics is complex and can be divided into several core modules. The graph below maps the logical flow and key decision points in a comprehensive bioinformatics pipeline.

Protocol Steps:

Quality Control and Preprocessing:

Host DNA Decontamination:

- Software: KneadData, Bowtie2 [4].

- Action: Align reads to the host reference genome (e.g., human, mouse) using Bowtie2. Discard all reads that align to the host to reduce contamination and focus computational resources on non-host sequences.
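As a rough sketch of the host-removal step, the helper below assembles a Bowtie2 command that aligns read pairs to a host index and keeps the pairs that do not align concordantly. The index prefix, file names, and output naming are hypothetical, and the helper only builds the argument list; running it requires Bowtie2 and a prebuilt host index. Stricter filtering (for example, discarding a pair if either mate aligns) would need an extra step not shown here.

```python
import shlex


def build_host_removal_command(index_prefix, reads_1, reads_2, out_prefix, threads=4):
    """Return a Bowtie2 argument list that aligns read pairs to a host index.

    --un-conc-gz writes pairs that did NOT align concordantly to the host,
    which are kept here as the putative non-host reads ("%" in the path is
    replaced by 1 or 2 for the two mates). The SAM output of host
    alignments is discarded via /dev/null.
    """
    return [
        "bowtie2",
        "-p", str(threads),
        "-x", index_prefix,   # prefix of a prebuilt Bowtie2 host index
        "-1", reads_1,
        "-2", reads_2,
        "--un-conc-gz", f"{out_prefix}_nonhost_R%.fastq.gz",
        "-S", "/dev/null",
    ]


# Hypothetical paths, for illustration only.
cmd = build_host_removal_command(
    "host_index/GRCh38", "sample_R1.fastq.gz", "sample_R2.fastq.gz", "sample"
)
print(shlex.join(cmd))
# To execute: subprocess.run(cmd, check=True)
```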

Taxonomic Profiling (Read-Based):

Functional Profiling (Read-Based):

Metagenome Assembly (Assembly-Based):

Binning and Metagenome-Assembled Genomes (MAGs):

- Software: MetaWRAP [4].

- Action: Group assembled contigs that likely originate from the same organism based on sequence composition (k-mers) and abundance across samples. This process, called binning, allows for the recovery of draft genomes from uncultured organisms.
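The two signals used for binning, sequence composition and abundance, can be sketched as a per-contig feature vector. This is a simplified illustration rather than the method of any specific binner: it counts tetranucleotides without merging reverse complements, and the contig sequence and coverage value are made up.

```python
from collections import Counter
from itertools import product


def kmer_frequencies(sequence, k=4):
    """Return normalized k-mer frequencies for one contig as a dict.

    Only k-mers made of A, C, G, T are counted; windows containing other
    characters (e.g., N) are skipped. Reverse complements are not merged
    here, which real binners typically do.
    """
    sequence = sequence.upper()
    all_kmers = ["".join(p) for p in product("ACGT", repeat=k)]
    counts = Counter(sequence[i:i + k] for i in range(len(sequence) - k + 1))
    valid_total = sum(counts[kmer] for kmer in all_kmers)
    if valid_total == 0:
        return {kmer: 0.0 for kmer in all_kmers}
    return {kmer: counts[kmer] / valid_total for kmer in all_kmers}


def feature_vector(sequence, mean_coverage, k=4):
    """Combine composition (k-mer frequencies) with abundance (coverage)."""
    freqs = kmer_frequencies(sequence, k)
    return [freqs[kmer] for kmer in sorted(freqs)] + [mean_coverage]


# Toy contig and a made-up coverage value, for illustration only.
vec = feature_vector("ACGTACGTACGTTTGA", mean_coverage=12.5)
print(len(vec))
```

Contigs whose vectors lie close together (similar composition and similar coverage across samples) are candidates for the same bin; the clustering step itself is left out of this sketch.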

The Scientist's Toolkit: Essential Research Reagents and Software

A successful shotgun metagenomics study relies on a suite of bioinformatics tools and reference databases. The following table catalogs key resources.

Table 2: Essential tools and databases for a shotgun metagenomics pipeline

| Category | Tool/Resource | Primary Function | Key Reference/Resource |
|---|---|---|---|
| Quality Control | FastQC | Quality assessment of raw sequencing reads | [7] [4] |
| Quality Control | fastp | Fast, all-in-one preprocessor for quality control and adapter trimming | [4] |
| Host Removal | KneadData | Pipeline for removing host-associated sequences | [4] |
| Host Removal | Bowtie2 | Ultrafast, memory-efficient short read aligner for host read alignment | [4] |
| Taxonomic Profiling | Kraken2 | Taxonomic classification of reads using k-mer matches | [4] [8] |
| Taxonomic Profiling | Bracken | Bayesian estimation of species abundance from Kraken2 output | [4] |
| Taxonomic Profiling | MetaPhlAn4 | Profiling microbial composition using unique clade-specific markers | [4] |
| Functional Profiling | HUMAnN3 | Profiling the abundance of microbial metabolic pathways | [4] [8] |
| Assembly & Binning | MEGAHIT | Metagenome assembler for large and complex datasets | [4] |
| Assembly & Binning | MetaWRAP | A flexible pipeline for metagenome binning and refinement | [4] |
| Gene Annotation | eggNOG-mapper | Functional annotation of genes using orthology | [4] [8] |
| Reference Databases | Greengenes2, SILVA | Curated databases of ribosomal RNA genes | [6] |
| Reference Databases | RefSeq/GTDB | Comprehensive genome databases for taxonomic classification | |
| Reference Databases | UniRef90, MetaCyc | Protein family and metabolic pathway databases | |

Integrated pipelines like EasyMetagenome [4] and the Sydney Informatics Hub workflow [7] bundle many of these tools into cohesive, scalable workflows, significantly reducing the burden of software deployment and ensuring reproducibility.

Shotgun metagenomics and amplicon sequencing are complementary yet distinct tools for microbial community analysis. Amplicon sequencing remains a powerful, cost-effective method for large-scale taxonomic surveys, particularly when focusing on well-characterized phylogenetic markers. However, shotgun metagenomics offers a transformative advantage by providing a comprehensive view of the microbiome, enabling high-resolution taxonomic assignment, functional potential analysis, and the reconstruction of metagenome-assembled genomes without prior cultivation [3] [4].

The choice of method should be guided by the research question. For projects requiring deep functional insights, strain-level discrimination, or the discovery of novel genes and pathways, shotgun metagenomics is the unequivocal choice. As sequencing costs continue to decline and bioinformatics pipelines become more standardized and accessible, shotgun metagenomics is poised to become the gold standard for in-depth microbiome investigation in drug development, clinical diagnostics, and beyond.

Shotgun metagenomics has revolutionized the study of microbial communities, enabling researchers to investigate microorganisms directly from their natural environments without the need for cultivation [9]. The analysis of these complex datasets relies on core computational approaches, each with distinct strengths and applications. This application note provides a detailed comparative analysis of the three principal analytical frameworks: read-based, assembly-based, and detection-based approaches. We frame this comparison within the context of developing robust bioinformatics pipelines for metagenomic research, offering structured experimental protocols, performance metrics, and implementation guidelines tailored for researchers, scientists, and drug development professionals. The choice of analytical strategy significantly impacts downstream interpretations, making selection criteria a critical consideration for study design [10].

Comparative Analysis of Approaches

Conceptual Foundations and Applications

Read-based approaches analyze unassembled sequencing reads, comparing them directly against reference databases for taxonomic classification and functional profiling [10]. This method is particularly valuable for quantitative community profiling when relevant references are available [9]. Tools such as Kraken2, Centrifuge, and MetaPhlAn2 operate within this paradigm, identifying organisms through alignment to clade-specific marker genes or k-mer matches [9] [11].
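The k-mer matching idea behind read-based classification can be shown with a toy example. The sketch below is a deliberately simplified stand-in, not the algorithm of Kraken2 or any other named tool: it votes using only k-mers found in exactly one reference, and the reference sequences are made up.

```python
from collections import Counter


def build_kmer_index(references, k=5):
    """Map each k-mer to the set of reference labels that contain it."""
    index = {}
    for label, seq in references.items():
        for i in range(len(seq) - k + 1):
            index.setdefault(seq[i:i + k], set()).add(label)
    return index


def classify_read(read, index, k=5):
    """Assign a read to the label with the most uniquely matching k-mers.

    k-mers shared by several references are ignored, loosely mimicking the
    idea of clade-specific signal. Returns None if nothing matches uniquely
    or if the top two labels tie.
    """
    votes = Counter()
    for i in range(len(read) - k + 1):
        labels = index.get(read[i:i + k], set())
        if len(labels) == 1:
            votes[next(iter(labels))] += 1
    if not votes:
        return None
    ranked = votes.most_common(2)
    if len(ranked) == 2 and ranked[0][1] == ranked[1][1]:
        return None
    return ranked[0][0]


# Toy references (made-up sequences, not real genomes).
refs = {
    "organism_A": "ATGGCGTACGTTAGCCTAGGATCC",
    "organism_B": "TTGACCGGTATCGATTACGCAAGT",
}
idx = build_kmer_index(refs)
print(classify_read("GCGTACGTTAGCC", idx))  # read drawn from organism_A
```

Reads with no matching k-mers come back unclassified, which mirrors the reference-database dependence of this family of methods.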

Assembly-based approaches attempt to reconstruct longer genomic segments (contigs) from short reads before analysis [10]. This workflow typically involves quality control, co-assembly of multiple samples, binning contigs into genomes, and subsequent gene annotation [12]. Popular assemblers include MEGAHIT, MetaSPAdes, and IDBA-UD, which are specifically designed for metagenomic data [9] [13]. This approach enables researchers to recover novel genomes and study genetic elements in their genomic context.

Detection-based approaches prioritize high-precision identification of specific organisms, often pathogens, with lower sensitivity compared to other methods [10]. These workflows typically employ alignment or k-mer based matching against curated datasets followed by heuristic classification methods [10]. This approach is particularly valuable in clinical diagnostics where confirming the presence of specific pathogens is critical.

Table 1: Core Characteristics of Metagenomic Analytical Approaches

| Feature | Read-based | Assembly-based | Detection-based |
|---|---|---|---|
| Primary Application | Bulk taxonomic/functional composition | Genomic context, novel genome recovery | High-confidence pathogen detection |
| Typical Questions | How do communities differ between sites/treatments? | What are metabolic capabilities of specific microbes? | Are known pathogens present in the sample? |
| Key Advantages | Fast, memory-efficient, quantitative | Recovers novel sequences, enables genomic analysis | High specificity, low false positive rate |
| Limitations | Limited by reference databases | Computationally intensive, challenging for complex communities | Limited to known targets, lower sensitivity |
| Representative Tools | Kraken2, Centrifuge, MetaPhlAn2 | MEGAHIT, MetaSPAdes, MaxBin | Taxonomer, Surpi, One Codex |

Performance Metrics and Benchmarking

Comparative studies demonstrate that the performance of these approaches varies significantly depending on dataset characteristics and analytical goals. In a comprehensive benchmark of classification tools for long-read datasets, general-purpose mappers such as Minimap2 matched or exceeded the accuracy of the best-performing specialized classification tools, though they were significantly slower than k-mer-based methods [11]. Downstream of classification, random forest models built on read-based taxonomic profiles have shown promising results, achieving 91% accuracy (95% confidence interval: 80% to 93%) [9].

Assembly-based approaches face unique challenges in metagenomic contexts compared to single-genome assembly. The unknown abundance and diversity in samples complicate graph simplification, as low-coverage nodes may originate from genuine low-abundance genomes rather than sequencing errors [13]. Metagenomic abundance often follows a power law distribution, meaning many species occur with similarly low abundances, making distinguishing them problematic [13].

Detection-based approaches, particularly when combined with enrichment techniques, can significantly improve sensitivity. Capture panels can increase sensitivity by 10- to 100-fold over untargeted sequencing, making them suitable for detecting low viral loads (60 genome copies per mL) [14]. However, this enhanced sensitivity for targeted organisms comes at the cost of missing novel or unexpected pathogens not included in the panel.

Table 2: Performance Comparison Across Metagenomic Approaches

| Metric | Read-based | Assembly-based | Detection-based |
|---|---|---|---|
| Sensitivity | Limited for novel organisms | High for abundant community members | Excellent for targeted organisms |
| Specificity | Database-dependent | High with quality binning | Very high |
| Computational Demand | Low to moderate | Very high | Low to moderate |
| Reference Dependency | High | Low | Very high |
| Novel Discovery Potential | Limited | High | Very limited |

Detailed Experimental Protocols

Read-based Analysis Protocol

Sample Preparation and Sequencing

- Extract DNA/RNA using kits that preserve integrity of microbial nucleic acids

- Perform library preparation with unique dual indices to enable sample multiplexing

- Sequence using Illumina short-read or Nanopore long-read platforms

- Include appropriate controls (negative, positive, internal standards)

Quality Control and Preprocessing

- Demultiplex samples using barcode information (e.g., iu-demultiplex with a barcode file) [12]

- Perform quality filtering with tools like iu-filter-quality-minoche for large-insert libraries [12]

- Remove adapter sequences and trim low-quality bases

- For host-associated samples, consider host DNA depletion methods

Taxonomic Profiling

- Select appropriate reference database (RefSeq, GTDB, custom)

- Run taxonomic classifier (Kraken2, Centrifuge, or MetaPhlAn2)

- For k-mer based tools, use Bracken for abundance estimation [11]

- For long reads, consider Minimap2 or Ram for alignment-based classification [11]
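Once a classifier has run, its report usually needs to be reduced to a per-species table. The sketch below parses a Kraken2-style report, assuming the standard six tab-separated columns (percentage, clade reads, directly assigned reads, rank code, NCBI taxid, indented name). The report excerpt is made up for illustration, and other report variants would need different column handling.

```python
def parse_kraken_report(lines, rank_code="S"):
    """Extract (name, clade_reads, percent) for one rank from a Kraken2-style report.

    Assumes the standard six tab-separated columns: percent of reads in the
    clade, reads in the clade, reads assigned directly, rank code, NCBI
    taxid, and an indented scientific name.
    """
    rows = []
    for line in lines:
        fields = line.rstrip("\n").split("\t")
        if len(fields) < 6:
            continue  # skip blank or malformed lines
        percent, clade_reads, _direct, rank, _taxid, name = fields[:6]
        if rank == rank_code:
            rows.append((name.strip(), int(clade_reads), float(percent)))
    # Most abundant taxa first.
    return sorted(rows, key=lambda r: r[1], reverse=True)


# Made-up report excerpt for illustration (counts are not real data).
example = [
    " 10.00\t1000\t1000\tU\t0\tunclassified",
    " 90.00\t9000\t50\tR\t1\troot",
    " 60.00\t6000\t20\tG\t561\t      Escherichia",
    " 58.00\t5800\t5800\tS\t562\t        Escherichia coli",
    " 30.00\t3000\t3000\tS\t1280\t        Staphylococcus aureus",
]
species = parse_kraken_report(example)
for name, reads, pct in species:
    print(name, reads, pct)
```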

Downstream Analysis

- Import abundance tables into R/Python for statistical analysis

- Perform differential abundance testing between sample groups

- Conduct multivariate analysis (PCoA, PERMANOVA) for community comparisons

- Visualize results using heatmaps, bar plots, and ordination diagrams
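Ordination and PERMANOVA both start from a pairwise dissimilarity matrix. As a minimal sketch, the code below computes Bray-Curtis dissimilarity in plain Python; the sample names and counts are hypothetical, and a real analysis would more likely use an established statistics package.

```python
def bray_curtis(sample_a, sample_b):
    """Bray-Curtis dissimilarity between two abundance dicts (taxon -> count)."""
    taxa = set(sample_a) | set(sample_b)
    numerator = sum(abs(sample_a.get(t, 0) - sample_b.get(t, 0)) for t in taxa)
    denominator = sum(sample_a.get(t, 0) + sample_b.get(t, 0) for t in taxa)
    return numerator / denominator if denominator else 0.0


def distance_matrix(samples):
    """Pairwise Bray-Curtis matrix as a nested dict keyed by sample name."""
    names = sorted(samples)
    return {a: {b: bray_curtis(samples[a], samples[b]) for b in names} for a in names}


# Hypothetical abundance table (illustrative counts only).
samples = {
    "gut_1": {"Bacteroides": 60, "Faecalibacterium": 40},
    "gut_2": {"Bacteroides": 50, "Faecalibacterium": 50},
    "soil_1": {"Streptomyces": 100},
}
dm = distance_matrix(samples)
print(dm["gut_1"]["gut_2"], dm["gut_1"]["soil_1"])
```

In this toy table the two gut samples are close (0.1) while the soil sample shares no taxa with them (1.0), which is the kind of structure a PCoA plot would display.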

Assembly-based Analysis Protocol

Data Preparation

- Perform quality control as in the read-based protocol

- For multiple samples, consider co-assembly to maximize recovery

- Normalize read coverage to reduce computational complexity

Metagenomic Assembly

- Select assembler based on data type and resources:

- Execute assembly with optimized parameters:

- Assess assembly quality with N50, contig counts, and completeness metrics
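Of the assembly metrics listed, N50 is the simplest to compute by hand. The sketch below shows one common definition: the contig length at which the cumulative sum of lengths, sorted from longest to shortest, first reaches half of the total assembly size. The contig lengths in the example are made up.

```python
def n50(contig_lengths):
    """Return the N50: the length L such that contigs of length >= L
    together cover at least half of the total assembly size."""
    if not contig_lengths:
        return 0
    half_total = sum(contig_lengths) / 2
    running = 0
    for length in sorted(contig_lengths, reverse=True):
        running += length
        if running >= half_total:
            return length
    return 0


# Made-up contig lengths (bp): total = 300, so half = 150.
lengths = [100, 80, 50, 30, 20, 20]
print(n50(lengths))  # 100 + 80 = 180 >= 150, so N50 is 80
```

N50 rewards contiguity only; it says nothing about misassemblies, which is why completeness and contamination checks are listed alongside it.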

Binning and Genome Resolution

- Map reads back to contigs for abundance estimation (Bowtie2, BBmap) [10]

- Perform metagenomic binning using multiple algorithms:

- MetaBAT2 for abundance-aware binning

- MaxBin2 for universal single-copy gene-based binning

- CONCOCT for sequence composition and coverage integration

- Consolidate bins using DAS Tool to generate non-redundant set

- Assess bin quality with CheckM for completeness and contamination
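Completeness and contamination estimates are typically turned into quality tiers before bins are carried forward. The sketch below uses widely cited MIMAG-style cutoffs as an assumption (the text above does not specify thresholds) and ignores the rRNA and tRNA criteria that the full standard attaches to high-quality drafts. Bin names and values are hypothetical.

```python
def classify_bin(completeness, contamination):
    """Tier a genome bin from CheckM-style completeness/contamination percentages.

    Thresholds follow commonly used MIMAG-style cutoffs and are an
    assumption here; the rRNA/tRNA criteria for high-quality drafts are
    not checked.
    """
    if completeness > 90 and contamination < 5:
        return "high"
    if completeness >= 50 and contamination < 10:
        return "medium"
    return "low"


# Hypothetical bins: (completeness %, contamination %).
bins = {"bin.1": (96.2, 1.3), "bin.2": (71.0, 6.5), "bin.3": (42.8, 2.0)}
tiers = {name: classify_bin(*stats) for name, stats in bins.items()}
print(tiers)
```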

Gene Prediction and Annotation

- Identify open reading frames with Prodigal or MetaGeneMark [10]

- Annotate against functional databases (KEGG, COG, Pfam)

- Conduct pathway analysis and metabolic reconstruction

- Compare genomes across samples using average nucleotide identity

Reference-Guided Assembly Protocol

Reference Selection

- Identify relevant references from NCBI, GTDB, or specialty collections

- Use marker genes (e.g., single-copy core genes) to identify candidate genomes [15]

- Filter references based on sample-specific relevance

- Cluster references to reduce redundancy (e.g., with MinHash) [15]
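The MinHash-based redundancy reduction mentioned in the last step can be sketched with a bottom-k sketch and the corresponding Jaccard estimate. This is a toy illustration of the general technique, not the implementation used in the cited work: the hash function (truncated SHA-1), the k-mer size, and the sketch size are all arbitrary choices, and the sequences are made up.

```python
import hashlib


def minhash_sketch(sequence, k=8, sketch_size=64):
    """Bottom-k MinHash sketch: the `sketch_size` smallest distinct k-mer hashes."""
    hashes = {
        int.from_bytes(hashlib.sha1(sequence[i:i + k].encode()).digest()[:8], "big")
        for i in range(len(sequence) - k + 1)
    }
    return sorted(hashes)[:sketch_size]


def estimate_jaccard(sketch_a, sketch_b, sketch_size=64):
    """Estimate Jaccard similarity of the underlying k-mer sets.

    Takes the `sketch_size` smallest hashes of the merged sketches and
    counts the fraction present in both.
    """
    merged = sorted(set(sketch_a) | set(sketch_b))[:sketch_size]
    if not merged:
        return 0.0
    shared = set(sketch_a) & set(sketch_b)
    return sum(1 for h in merged if h in shared) / len(merged)


# Made-up sequences for illustration.
ref_a = "ACGTTGCAAGGCTTAACCGGTTAGCATCGA"
ref_b = "AAAAAAAAAAAA"
ref_c = "CCCCCCCCCCCC"
print(estimate_jaccard(minhash_sketch(ref_a), minhash_sketch(ref_a)))
print(estimate_jaccard(minhash_sketch(ref_b), minhash_sketch(ref_c)))
```

References whose estimated similarity exceeds a chosen threshold could then be grouped, keeping one representative per group; the threshold itself would have to be tuned to the collection.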

Read Mapping and Assembly

- Align reads to reference genomes using BWA-MEM or Minimap2

- Identify coverage breaks and structural variations

- Generate sample-specific contigs using polishing algorithms

- "Mix and match" segments from multiple references for pangenome representation [15]

Validation and Quality Assessment

- Compare with de novo assemblies for completeness

- Validate with orthogonal methods (qPCR, culture)

- Assess contiguity metrics (NG50, NGA50) [15]

Workflow Visualization

Figure 1: Comparative Workflows for Metagenomic Analysis Approaches. Each approach begins with raw sequencing reads but follows distinct analytical pathways with different tool requirements and output types.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Category | Item | Specification/Version | Application Notes |
|---|---|---|---|
| Wet Lab Reagents | NEBNext Microbiome DNA Enrichment Kit | E2612L | Depletes methylated host DNA, improves microbial detection [14] |
| Wet Lab Reagents | KAPA RNA HyperPrep with RiboErase | KK8561 | rRNA depletion for RNA metagenomics, preserves host transcriptome [14] |
| Wet Lab Reagents | Twist Comprehensive Viral Research Panel | N/A | Targets 3153 viruses, increases sensitivity 10-100x [14] |
| Wet Lab Reagents | xGen UDI-UMI Adapters | 10005903 | Unique dual indices for sample multiplexing, reduces index hopping [14] |
| Computational Tools | MEGAHIT | v1.0.6+ | Efficient metagenomic assembler, suitable for large datasets [12] |
| Computational Tools | Kraken2/Bracken | v2.0+ | Fast k-mer-based classification with abundance estimation [11] |
| Computational Tools | Minimap2 | v2.0+ | Versatile aligner for long reads, effective for metagenomics [11] |
| Computational Tools | MetaBAT2 | v2.0+ | Metagenomic binning tool using abundance and composition [10] |
| Computational Tools | CheckM | v1.0+ | Assesses completeness/contamination of genome bins [10] |
| Reference Databases | GTDB | Release 200+ | Genome Taxonomy Database, standardized bacterial/archaeal taxonomy |
| Reference Databases | RefSeq | Updated regularly | NCBI Reference Sequence Database, comprehensive genome collection |
| Reference Databases | UniProt | Updated regularly | Protein sequence and functional information [10] |
| Quality Control | FastQC | v0.11+ | Quality control visualization for sequencing data |
| Quality Control | MultiQC | v1.0+ | Aggregates results from multiple tools into single report |

Implementation Considerations

Computational Resource Requirements

The computational demands of these approaches vary significantly. K-mer-based tools generally offer the fastest processing times with moderate memory requirements, while general-purpose mappers like Minimap2 provide similar or slightly better accuracy at significantly slower speeds [11]. Assembly-based approaches are the most computationally intensive, with memory requirements often scaling with dataset size and complexity [13]. For large-scale projects, assembly may require high-memory nodes (≥512 GB RAM) and days of processing time, whereas read-based classification can often be completed in hours on standard servers.

Selection Guidelines

The choice of analytical approach should be guided by research questions and sample characteristics:

- Clinical diagnostics with known pathogen suspects: Detection-based approaches offer the highest specificity and rapid turnaround [10] [14]

- Community ecology studies: Read-based approaches efficiently characterize taxonomic and functional differences between sample groups [9]

- Novel genome discovery: Assembly-based approaches enable recovery of previously uncharacterized microorganisms [13] [15]

- Low biomass samples: Targeted enrichment combined with detection-based methods provides necessary sensitivity [14]

- Mixed communities with related strains: Reference-guided assembly approaches can leverage existing genomes to improve reconstructions [15]

For comprehensive studies, hybrid approaches often yield the best results, using multiple methods to compensate for individual limitations. A common strategy employs read-based analysis for initial community assessment followed by assembly-based methods for in-depth characterization of key community members.

The three core analytical approaches for metagenomics—read-based, assembly-based, and detection-based—each offer distinct advantages for different research scenarios. Read-based methods provide efficient community profiling, assembly-based approaches enable novel genome discovery, and detection-based methods deliver high-specificity pathogen identification. The optimal choice depends on research objectives, sample characteristics, and computational resources. As metagenomic applications expand in research and clinical settings, understanding these fundamental approaches and their appropriate implementation becomes increasingly critical for generating robust, reproducible microbiological insights. Future methodology developments will likely focus on hybrid approaches that combine the strengths of each method while addressing challenges of scalability, accuracy, and interpretation.

Typical Workflows and Research Questions Addressed by Each Method

Metagenomics, a term first coined by Handelsman in 1998, refers to "the genomes of the total microbiota found in nature" and involves obtaining sequence data directly from environmental samples [16]. This culture-independent approach has become a cornerstone of modern microbiology, enabling researchers to explore microbial communities in diverse habitats, from the human gut to soil and aquatic environments [17]. The field primarily utilizes two fundamental sequencing strategies: targeted (amplicon) sequencing and shotgun metagenomic sequencing. Each method offers distinct advantages and addresses specific research questions, with the choice between them depending on factors such as research objectives, resolution requirements, and budgetary constraints [18].

Targeted metagenomics, often called metagenetics, focuses on sequencing taxonomically informative genetic markers, typically the 16S rRNA gene for prokaryotes or the ITS region for fungi [19]. This approach provides a cost-effective means for characterizing microbial community composition and diversity. In contrast, shotgun metagenomics involves randomly sequencing all DNA fragments from a sample, enabling comprehensive analysis of both taxonomic content and functional potential [18]. The following sections provide a detailed examination of these methodologies, their workflows, applications, and the bioinformatics pipelines required to interpret the resulting data.

Targeted (Amplicon) Metagenomics

Research Questions and Applications

Targeted metagenomics, predominantly using 16S rRNA gene sequencing, is the preferred method for studies focusing primarily on microbial community composition and diversity. The 16S rRNA gene contains conserved regions that facilitate primer binding and hypervariable regions that provide taxonomic discrimination, making it an ideal biomarker for prokaryotic identification [17]. This approach addresses specific research questions including:

Microbial Community Profiling: Determining the taxonomic composition and relative abundance of microorganisms in a given environment. For example, studies have successfully used 16S sequencing to characterize rhizosphere microbial communities of crops like rice, wheat, and legumes [17], as well as to identify bacterial wilt disease pathogens in plants [17].

Comparative Diversity Analysis: Investigating how microbial communities differ across various conditions, time points, or habitats. This includes studies examining the effects of dietary interventions on gut microbiota or environmental perturbations on soil microbiomes.

Pathogen Identification and Diagnostics: Detecting and identifying pathogenic organisms in clinical, agricultural, or environmental samples. The high sensitivity of targeted sequencing makes it valuable for outbreak tracing and disease diagnostics [17].

The principal advantage of targeted metagenomics lies in its cost-effectiveness and lower sequencing depth requirements, enabling higher sample throughput for diversity studies. However, its limitations include primer bias affecting amplification efficiency and restricted taxonomic resolution, which often fails to reliably distinguish beyond the genus level for many taxa [20].

Experimental Protocol

The experimental workflow for targeted metagenomics follows a structured pathway from sample collection to sequencing:

- Sample Collection: The process begins with careful selection and collection of the target sample (e.g., soil, water, clinical specimens). Sample integrity is maintained through immediate processing or proper preservation to prevent microbial community shifts [17].

- DNA Isolation: Community DNA is extracted using methods appropriate for the sample type. This critical step often incorporates enzymatic (e.g., lysozyme, lysostaphin, mutanolysin) and mechanical lysis to address the diverse cell wall structures present in mixed microbial communities [17].

- PCR Amplification: Using consensus primers targeting conserved regions of the 16S rRNA gene (e.g., V3-V4 or V4-V5 hypervariable regions), the taxonomic marker is amplified. Appropriate controls are essential to detect potential contamination [17].

- Library Preparation and Sequencing: Amplified products are prepared for sequencing by adding platform-specific adapters. The library is quantified using methods such as qPCR or Bioanalyzer systems before undergoing high-throughput sequencing on platforms such as Illumina MiSeq or Ion Torrent [17].

Bioinformatics Pipelines and Analysis

The analysis of targeted metagenomics data involves multiple processing steps, which can be broadly categorized into "clustering-first" and "assignment-first" approaches [19]. The following workflow diagram illustrates the key stages and tools involved in this process:

Figure 1: Bioinformatics Workflow for Targeted Metagenomics

As illustrated in Figure 1, the analytical process begins with quality control and preprocessing of raw sequencing reads to remove artifacts and errors. The subsequent analysis branches into two methodological approaches:

Clustering-First Approaches: Tools such as DADA2, QIIME 2, and Mothur employ an initial step where sequences are clustered into Operational Taxonomic Units (OTUs) or denoised into Amplicon Sequence Variants (ASVs) based on sequence similarity. Representative sequences from each cluster are then taxonomically classified by comparison against reference databases [20] [19].

Assignment-First Approaches: Emerging tools like Kraken 2 and PathoScope 2 use an alternative method where reads are first classified against a reference database using k-mer matching or read mapping, before being grouped into taxonomic units [20] [19].

Recent benchmarking studies using mock microbial communities have demonstrated that assignment-first tools like Kraken 2 and PathoScope 2 can outperform traditional clustering-first approaches in species-level taxonomic assignments, especially when paired with comprehensive reference databases such as SILVA or RefSeq [20].
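Mock-community benchmarks of this kind usually come down to comparing the taxa a pipeline reports against the known composition. The sketch below computes presence/absence precision, recall, and F1; the species labels are placeholders and the metric choice is an assumption, since the cited benchmark's exact scoring is not described here.

```python
def detection_metrics(expected_taxa, observed_taxa):
    """Presence/absence precision, recall, and F1 against a mock community."""
    expected, observed = set(expected_taxa), set(observed_taxa)
    true_pos = len(expected & observed)
    false_pos = len(observed - expected)
    false_neg = len(expected - observed)
    precision = true_pos / (true_pos + false_pos) if (true_pos + false_pos) else 0.0
    recall = true_pos / (true_pos + false_neg) if (true_pos + false_neg) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {"precision": precision, "recall": recall, "f1": f1}


# Placeholder labels: the mock community contains species_1..4; the pipeline
# reports three of them plus one false positive.
mock = ["species_1", "species_2", "species_3", "species_4"]
reported = ["species_1", "species_2", "species_3", "species_9"]
metrics = detection_metrics(mock, reported)
print(metrics)
```

Presence/absence scoring ignores abundance accuracy, so it is best read alongside a comparison of expected and observed relative abundances.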

Table 1: Comparison of Bioinformatics Pipelines for Targeted Metagenomics

| Pipeline | Approach | Reference Databases | Strengths | Species-Level Accuracy |
|---|---|---|---|---|
| QIIME 2 | Clustering-first | Greengenes, SILVA, RDP | User-friendly interface, extensive plugins | Moderate [20] |
| DADA2 | Clustering-first | SILVA, RDP, Greengenes | High-resolution ASVs, precise error correction | Moderate [20] |
| Mothur | Clustering-first | SILVA, RDP, Greengenes | Comprehensive workflow, SOP guidance | Moderate [20] |
| Kraken 2 | Assignment-first | Kraken 2 Standard, SILVA | Fast k-mer based classification, sensitive | High [20] |
| PathoScope 2 | Assignment-first | RefSeq | Bayesian read reassignment, handles ambiguities | High [20] |

Shotgun Metagenomics

Research Questions and Applications

Shotgun metagenomic sequencing provides a comprehensive view of all genes and organisms in a complex sample, enabling researchers to address broader research questions that extend beyond taxonomic classification to functional potential [18]. This approach is particularly valuable for:

Functional Gene Annotation: Identifying and characterizing metabolic pathways, virulence factors, antimicrobial resistance genes, and other functional elements within microbial communities. For example, shotgun sequencing has been applied to surveil biological impurities and antimicrobial resistance genes in vitamin-containing food products [21].

Unculturable Microorganism Discovery: Studying microorganisms that cannot be cultivated in laboratory settings, potentially revealing novel taxa and genes. This has led to the discovery of novel antimicrobials such as turbomycin A and B, and bacterial enzymes such as NHLase [17].

Metagenome-Assembled Genomes (MAGs): Reconstructing genomes from complex microbial communities without the need for isolation and cultivation. Recent advances in long-read sequencing and bioinformatics have enabled recovery of more high-quality, single-contig MAGs [22].

Strain-Level Differentiation: Discriminating between closely related microbial strains, which is crucial for outbreak investigations and understanding microbe-disease relationships.

The key advantage of shotgun metagenomics is its ability to simultaneously assess both taxonomic composition and functional capabilities of microbial communities. However, this comprehensive approach requires deeper sequencing, resulting in higher costs and more complex computational requirements compared to targeted methods [18].

Experimental Protocol

The shotgun metagenomics workflow involves the following key experimental steps:

Sample Collection and DNA Extraction: Similar to targeted approaches, samples are collected with consideration for temporal and geographical factors. DNA extraction must be comprehensive to capture genetic material from diverse microorganisms, often requiring rigorous lysis protocols [17].

Library Preparation without Target Enrichment: Unlike targeted metagenomics, shotgun sequencing does not involve PCR amplification of specific markers. Instead, total DNA is fragmented physically or enzymatically, and sequencing adapters are ligated to the fragments. Protocols vary by platform, such as the NEBNext Ultra II DNA library prep kit for Illumina [23] or the Ligation Sequencing Kit for Oxford Nanopore platforms [24].

High-Throughput Sequencing: Libraries are sequenced using platforms such as Illumina NovaSeq, PacBio Sequel II, or Oxford Nanopore GridION/MinION. Sequencing depth is critical, with recommendations ranging from millions to billions of reads depending on complexity and objectives [23] [18].

Specialized Protocols: Advanced applications may require specialized approaches. For example, the FDA protocol for bacterial enrichments using Oxford Nanopore R10 flow cells enables multiplexing of up to 16 samples per flow cell [24]. HiFi shotgun metagenomics with PacBio systems provides long-read data that improves genome completeness and enables recovery of more species and MAGs [22].

Bioinformatics Pipelines and Analysis

The analysis of shotgun metagenomic data involves a more complex workflow than targeted approaches, with multiple specialized steps as illustrated below:

Figure 2: Bioinformatics Workflow for Shotgun Metagenomics

As shown in Figure 2, shotgun metagenomics analysis involves several interconnected pathways:

Read Preprocessing and Host Removal: FastQC reports read quality, and trimming tools such as fastp remove adapters and low-quality reads. Host DNA contamination is removed using tools like Kraken 2 with custom host databases or minimap2 [23] [25].

Taxonomic Profiling: Processed reads are directly classified using tools such as the DRAGEN Metagenomics Pipeline or Kraken 2, which perform taxonomic classification and provide abundance estimates [18] [25].

Assembly and Binning: For functional analysis, quality-filtered reads are assembled into contigs using tools like MEGAHIT or metaSPAdes. Contigs are then binned into metagenome-assembled genomes (MAGs) using tools such as MaxBin [25].

Gene Prediction and Functional Annotation: Open reading frames are predicted from assembled contigs using tools like Prodigal or MetaGeneMark. Predicted genes are functionally annotated by comparison against databases including KEGG, eggNOG, and CAZy using alignment tools like DIAMOND or BLAST+ [25].

Recent advances in shotgun metagenomics analysis have demonstrated significant improvements in outcomes. Updated bioinformatics pipelines for HiFi shotgun metagenomics data have shown 162-808% increases in species detection and 18-48% improvements in high-quality MAG recovery compared to previous methods [22].

Table 2: Comparison of Bioinformatics Pipelines for Shotgun Metagenomics

| Pipeline/Tool | Application | Key Features | Reference Databases | Performance |

|---|---|---|---|---|

| DRAGEN Metagenomics | Taxonomic profiling | Optimized for Illumina data, efficient processing | Custom curated databases | High accuracy for species identification [18] |

| Kraken 2 | Taxonomic profiling | Ultra-fast k-mer classification, sensitive | Kraken 2 Standard, customizable | High species-level accuracy [20] |

| PathoScope 2 | Taxonomic profiling | Bayesian reassignment of ambiguous reads | RefSeq | Accurate strain-level identification [20] |

| MGS-Fast | Functional annotation | Rapid alignment to microbial gene catalogs | Custom gene catalogs | Identifies differential functional genes [25] |

| Prodigal | Gene prediction | Prokaryotic gene prediction, precise start/stop codon identification | None (ab initio predictor) | Accurate ORF detection [25] |

Research Reagent Solutions

The following table outlines essential reagents and materials used in shotgun metagenomics library preparation and sequencing, based on the Oxford Nanopore Platform protocol [24]:

Table 3: Essential Research Reagents for Shotgun Metagenomics

| Component | Function | Example Product |

|---|---|---|

| Native Barcode | Sample multiplexing and identification | Native Barcode Plate (NB01-96) |

| DNA Control Sample | Sequencing process control | DNA Control Sample (DCS) |

| Native Adapter | Library attachment to sequencing matrix | Native Adapter (NA) |

| Sequencing Buffer | Provides optimal chemical environment | Sequencing Buffer (SB) |

| Library Beads | Purification and size selection of DNA fragments | AMPure XP Beads |

| Elution Buffer | Final resuspension of purified library | Elution Buffer (EB) |

| End Repair Mix | Prepares DNA fragments for adapter ligation | NEBNext Ultra II End Repair/dA-Tailing Module |

| Ligation Master Mix | Catalyzes adapter ligation to DNA fragments | NEB Blunt/TA Ligase Master Mix |

| Flow Cell | Platform-specific sequencing matrix | Oxford Nanopore R10 Flow Cell |

Targeted and shotgun metagenomics represent complementary approaches with distinct strengths and applications in microbial community analysis. Targeted metagenomics, primarily using 16S rRNA gene sequencing, provides a cost-effective method for comprehensive taxonomic profiling and diversity analysis across large sample sets. In contrast, shotgun metagenomics offers unparalleled insights into both taxonomic composition and functional potential, enabling discovery of novel genes, pathways, and metagenome-assembled genomes.

The choice between these methods should be guided by specific research questions, resources, and analytical requirements. Targeted approaches remain ideal for studies focused primarily on community composition and dynamics, while shotgun methods are essential for investigating functional capabilities and genetic potential. As sequencing technologies continue to advance and bioinformatics pipelines become more sophisticated, both methods will continue to evolve, providing increasingly powerful tools for exploring the microbial world across diverse research contexts from human health to environmental monitoring.

Shotgun metagenomic sequencing represents a powerful, culture-independent method for analyzing the totality of genomic material within a microbial sample, enabling comprehensive insights into both taxonomic composition and functional potential [26]. Unlike targeted 16S rRNA gene sequencing, which focuses on specific hypervariable regions, shotgun sequencing randomly fragments all DNA, providing sequences that can be assembled into contigs and potentially complete genomes, while also allowing for superior species-level resolution [27]. The primary analytical outputs of this approach are taxonomic profiles, which detail the identity and relative abundance of microorganisms present, and Metagenome-Assembled Genomes (MAGs), which are reconstructed genomes of individual microbial population members derived from the assembly of sequencing reads [26]. These outputs are foundational for exploring the structure and function of microbial communities in diverse environments, from the human gut to complex ecosystems. The reliability of these outputs, however, is intrinsically linked to the bioinformatics pipelines and computational tools used for processing, each employing distinct methodologies—such as k-mer-based classification, marker gene analysis, and assembly-based approaches—that can significantly impact the final results [27] [28]. This document outlines the key outputs, benchmarks performance across available tools, and provides detailed protocols for generating robust taxonomic profiles and MAGs.

Benchmarking Pipelines and Performance Metrics

Choosing an appropriate bioinformatics pipeline is critical, as the performance of taxonomic classifiers and profilers varies significantly in terms of sensitivity, precision, and accuracy of abundance estimation. Benchmarking studies using mock microbial communities with known compositions provide essential objective assessments of these tools.
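As a minimal sketch of how such a benchmark can be scored, the Python snippet below compares the taxa reported by a classifier with the known composition of a mock community. The function names, species labels, and abundance values are hypothetical placeholders. Published benchmarks may use additional or differently defined metrics.

```python
def detection_metrics(expected, detected):
    """Score presence/absence calls against a known mock community."""
    expected, detected = set(expected), set(detected)
    tp = len(expected & detected)   # species correctly detected
    fp = len(detected - expected)   # species reported but not in the mock
    fn = len(expected - detected)   # species in the mock but missed
    sensitivity = tp / (tp + fn) if tp + fn else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    denom = precision + sensitivity
    f1 = 2 * precision * sensitivity / denom if denom else 0.0
    return {"sensitivity": sensitivity, "precision": precision, "f1": f1}


def l1_abundance_error(expected_abund, observed_abund):
    """Sum of absolute differences between expected and observed relative abundances."""
    taxa = set(expected_abund) | set(observed_abund)
    return sum(
        abs(expected_abund.get(t, 0.0) - observed_abund.get(t, 0.0))
        for t in taxa
    )


# Hypothetical mock community with four equally abundant species.
expected = {"species_A": 0.25, "species_B": 0.25,
            "species_C": 0.25, "species_D": 0.25}
# Hypothetical classifier output: misses species_D, reports spurious species_E.
observed = {"species_A": 0.5, "species_B": 0.25,
            "species_C": 0.125, "species_E": 0.125}

metrics = detection_metrics(expected, observed)
abundance_error = l1_abundance_error(expected, observed)
```

Here sensitivity reflects missed community members, precision reflects false positives, and the L1 error summarizes how far the abundance estimates deviate from the known composition.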

Table 1: Performance of Shotgun Metagenomics Taxonomic Classification Pipelines

| Pipeline Name | Classification Approach | Key Features | Reported Performance Highlights |

|---|---|---|---|

| bioBakery (MetaPhlAn4) | Marker gene & MAG-based [27] | Utilizes clade-specific marker genes and species-level genome bins (SGBs) [27]. Integrated within a comprehensive suite of tools [28]. | Ranked best overall in a recent assessment using multiple mock communities, demonstrating high accuracy across most metrics [27]. |

| JAMS | Assembly-assisted, k-mer-based (Kraken2) [27] | Performs whole-genome assembly and uses Kraken2 for classification. Provides detailed genomic analysis [27]. | Achieved among the highest sensitivity for detecting species, though may require validation against false positives [27]. |

| WGSA2 | k-mer-based (Kraken2) [27] | Offers optional genome assembly. Focuses on taxonomic profiling from reads [27]. | Showed high sensitivity in benchmarking studies, comparable to JAMS [27]. |

| Woltka | Operational Genomic Unit (OGU) [27] | Classifies based on phylogeny and evolutionary lineage of reference genomes. Does not perform assembly [27]. | A newer classifier that offers a phylogenetically-aware alternative to k-mer and marker-based methods [27]. |

| BugSeq | Long-read optimized [29] | Designed specifically for long-read (PacBio HiFi, ONT) data. | Demonstrated high precision and recall with PacBio HiFi data, detecting all species down to 0.1% abundance without filtering [29]. |

| MEGAN-LR & DIAMOND | Long-read optimized [29] | Uses alignment-based classification for long-read datasets. | Along with BugSeq and sourmash, displayed high precision and recall on long-read datasets without requiring heavy filtering [29]. |

Table 2: Comparative Analysis of Classification Methodologies

| Methodology | Representative Tools | Advantages | Disadvantages |

|---|---|---|---|

| Marker Gene-Based | MetaPhlAn4 [27] [28] | Computationally efficient, low false positive rate, provides direct relative abundance estimates [27]. | Limited to organisms with known marker genes; may miss novel taxa [27]. |

| k-mer-Based | Kraken2, WGSA2, JAMS [27] [28] | High sensitivity, uses comprehensive reference databases, can classify a broad range of reads [27]. | Can produce false positives; often requires filtering; computationally intensive for large databases [29]. |

| Alignment-Based (for Long Reads) | MEGAN-LR, MetaMaps [29] | Leverages long-range information in reads (multiple genes), high accuracy for high-quality long reads [29]. | Performance can be affected by read quality and length; computationally demanding [29]. |

| Assembly-Based | MEGAHIT, metaSPAdes | Enables reconstruction of genomes (MAGs) and discovery of novel genes [26]. | Computationally very intensive; assembly of complex communities can be fragmented and challenging [26]. |

Workflow Diagram: From Raw Data to Key Outputs

The following diagram illustrates the standard bioinformatics workflow for processing shotgun metagenomics data, from raw sequencing reads to the key outputs of taxonomic profiles and MAGs, integrating the tools and pipelines discussed.

Diagram Title: Shotgun Metagenomics Analysis Workflow

Detailed Experimental Protocols

Protocol 1: Generating a Taxonomic Profile with bioBakery

The bioBakery suite, specifically the MetaPhlAn4 tool, is a widely used and well-performing pipeline for taxonomic profiling from shotgun metagenomic reads [27] [28]. This protocol is adapted from established workflows and benchmarking studies.

Principle: MetaPhlAn4 uses a database of clade-specific marker genes to taxonomically assign sequencing reads, providing species-level resolution and relative abundance estimates. It incorporates both known and unknown species-level genome bins (SGBs) for improved coverage of microbial diversity [27].

Materials:

- Computing Environment: A computer with a command-line interface (Linux or macOS) or access to a high-performance computing (HPC) cluster. Basic command-line knowledge is required [30].

- Container Software: Docker or Singularity installed to ensure reproducibility [28].

- Input Data: Quality-controlled and host-depleted paired-end or single-end sequencing reads in FASTQ format.

- Database: The MetaPhlAn4 database, which can be downloaded automatically on first run or manually.

Procedure:

- Quality Control and Host Depletion: Begin with raw FASTQ files. Use Trimmomatic to remove adapter sequences and low-quality bases. If the sample is host-derived (e.g., from a human biopsy), use a tool like HISAT2 to align reads against the host genome (e.g., human GRCh38) and retain only the unmapped reads for downstream analysis [28].

- Run MetaPhlAn4: Run the `metaphlan` command on the preprocessed reads, replacing the placeholders with your file paths.

- For paired-end reads: provide both read files as input.

- Use `--nproc` to specify the number of parallel processing threads for faster execution.

- The `--bowtie2out` flag saves the intermediate Bowtie2 alignment file for potential re-use.

- Interpret Output: The primary output `taxonomic_profile.txt` is a tab-separated file listing detected taxa from kingdom to species level, their unique taxonomic IDs, and their relative abundance in the sample.
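The profiling step above can be sketched as a small Python helper that assembles the MetaPhlAn command line. This is an illustrative sketch, not the original protocol's command: the input file names and the helper function are hypothetical, and flag names can differ between MetaPhlAn releases, so confirm them with `metaphlan --help` for your installed version.

```python
import shlex


def metaphlan_command(reads, output_profile, bowtie2_out, threads=4):
    """Build a MetaPhlAn argument list for one sample.

    `reads` holds one (single-end) or two (paired-end) FASTQ paths;
    multiple input files are passed as a single comma-separated string.
    """
    return [
        "metaphlan",
        ",".join(reads),
        "--input_type", "fastq",
        "--nproc", str(threads),        # parallel threads
        "--bowtie2out", bowtie2_out,    # keep the intermediate alignments
        "-o", output_profile,           # taxonomic profile (tab-separated)
    ]


cmd = metaphlan_command(
    reads=["sample_R1.clean.fastq", "sample_R2.clean.fastq"],  # hypothetical paths
    output_profile="taxonomic_profile.txt",
    bowtie2_out="sample.bowtie2.bz2",
    threads=8,
)
command_line = shlex.join(cmd)  # printable form for logging or a shell script
# To run it for real: subprocess.run(cmd, check=True)
```

Building the command as a list rather than a single string avoids shell-quoting problems when file paths contain spaces.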

Troubleshooting and Optimization:

- If many reads remain unclassified, consider that your sample may contain microbial lineages not well-represented in the standard MetaPhlAn4 database.

- For large-scale studies, consider using integrated pipelines like MeTAline, which wraps MetaPhlAn4 (and other tools like Kraken2) within a Snakemake workflow, automating the steps from quality control to profiling and ensuring reproducibility [28].

Protocol 2: Reconstructing Metagenome-Assembled Genomes (MAGs)

This protocol outlines the assembly-based pathway for reconstructing near-complete genomes from complex metagenomic samples, which allows for in-depth functional analysis and discovery of novel microorganisms [26].

Principle: Short sequencing reads are assembled into longer contiguous sequences (contigs). These contigs are then grouped ("binned") based on sequence composition (e.g., k-mer frequency, GC content) and abundance patterns across multiple samples, ultimately resulting in draft genomes for individual populations.

Materials:

- Input Data: High-quality, pre-processed sequencing reads (as from Protocol 1, Step 1). Deeper sequencing coverage is generally required for successful MAG recovery than for taxonomic profiling.

- Software:

- Assembler: MEGAHIT or metaSPAdes.

- Binner: MetaBAT2, MaxBin2, or CONCOCT.

- Quality assessment: CheckM or a similar tool for estimating MAG completeness and contamination.

Procedure:

- De Novo Assembly: Assemble the quality-controlled reads into contigs, for example with MEGAHIT. The final contigs are typically found in `assembly_output/final.contigs.fa`.

- Read Mapping: Map the original quality-controlled reads back to the assembled contigs to generate abundance information for each contig. This is typically done using Bowtie2 to create a BAM file, which is then sorted and indexed.

- Binning: Execute one or more binning tools on the contigs and the sorted BAM file to group contigs into putative genomes.

- Quality Assessment and Refinement: Evaluate the quality of the reconstructed MAGs using CheckM. CheckM will report estimates of completeness and contamination. High-quality MAGs are typically defined as those with >90% completeness and <5% contamination. Use these metrics to select the best-quality MAGs for downstream analysis.
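The four steps above can be sketched as an ordered list of command lines. This Python snippet is one possible arrangement under stated assumptions: it uses MEGAHIT, Bowtie2, samtools, MetaBAT2, and CheckM, and all file names, the index name, and the helper function are hypothetical. The commands are only constructed here, not executed, and the options should be checked against the versions installed on your system.

```python
def mag_workflow_commands(r1, r2, outdir="assembly_output", threads=8):
    """Return the assembly, mapping, binning, and QC commands in order."""
    contigs = f"{outdir}/final.contigs.fa"
    t = str(threads)
    return [
        # 1. De novo assembly of paired-end reads
        ["megahit", "-1", r1, "-2", r2, "-o", outdir, "-t", t],
        # 2. Map reads back to the contigs, then sort and index the alignments
        ["bowtie2-build", contigs, "contigs_index"],
        ["bowtie2", "-x", "contigs_index", "-1", r1, "-2", r2,
         "-p", t, "-S", "mapped.sam"],
        ["samtools", "sort", "-@", t, "-o", "mapped.sorted.bam", "mapped.sam"],
        ["samtools", "index", "mapped.sorted.bam"],
        # 3. Per-contig depth table, then binning with MetaBAT2
        ["jgi_summarize_bam_contig_depths", "--outputDepth", "depth.txt",
         "mapped.sorted.bam"],
        ["metabat2", "-i", contigs, "-a", "depth.txt", "-o", "bins/bin"],
        # 4. Completeness and contamination estimates for each bin
        ["checkm", "lineage_wf", "-x", "fa", "bins", "checkm_output"],
    ]


commands = mag_workflow_commands("sample_R1.clean.fastq", "sample_R2.clean.fastq")
# To run them in sequence:
#   import subprocess
#   for cmd in commands:
#       subprocess.run(cmd, check=True)
```

In practice a workflow manager such as Snakemake is often preferable to a plain loop, because it tracks intermediate files and can resume after a failed step.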

Troubleshooting and Optimization:

- A high rate of fragmented assemblies can result from low sequencing depth or highly complex communities. Consider increasing sequencing depth or using a combination of assembly algorithms.

- Different binners perform best on different datasets; using a consensus approach (dereplicating results from multiple binners) can yield a more complete and higher-quality set of MAGs.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents, Databases, and Software for Shotgun Metagenomics

| Item Name | Type | Function and Application |

|---|---|---|

| Trimmomatic | Software Tool | Removes adapter sequences and low-quality bases from raw sequencing reads during the essential quality control step [28]. |

| Kraken2 Database | Reference Database | A comprehensive k-mer database used by classifiers like Kraken2, JAMS, and WGSA2 to assign taxonomy to reads or contigs [27] [28]. Can be customized to include specific genomes. |

| MetaPhlAn4 Database | Reference Database | A curated collection of clade-specific marker genes used by MetaPhlAn4 for highly efficient and specific taxonomic profiling and relative abundance estimation [27] [28]. |

| MetaBAT2 | Software Tool | A widely used tool for binning assembled contigs into Metagenome-Assembled Genomes (MAGs) based on sequence composition and abundance across samples [26]. |

| CheckM | Software Tool | Assesses the quality of reconstructed MAGs by estimating completeness and contamination using a set of conserved, single-copy marker genes, which is critical for downstream analysis [26]. |

| MeTAline Pipeline | Integrated Workflow | A containerized Snakemake pipeline that integrates multiple tools (e.g., Trimmomatic, Kraken2, MetaPhlAn4, HUMAnN) into a single, reproducible workflow from reads to taxonomy and function [28]. |

| HUMAnN3 | Software Tool | Performs functional profiling of microbial communities by determining the abundance of microbial pathways from metagenomic data, often stratifying results by contributing species [28]. |

Building Your Pipeline: A Step-by-Step Workflow from Sample to Insight

The reliability of any shotgun metagenomics study is fundamentally contingent on the quality and precision of its initial, wet-lab phase. The pre-analytical steps—encompassing sample collection, nucleic acid extraction, and library preparation—form the foundational pillar upon which all subsequent bioinformatics analysis is built [31]. Errors or inconsistencies introduced at these stages can propagate through the entire workflow, leading to biased taxonomic profiles, compromised functional annotations, and ultimately, misleading biological conclusions [32] [33]. This application note provides a detailed protocol for these critical pre-analytical procedures, framed within the context of a comprehensive bioinformatics pipeline for shotgun metagenomics research. It is designed to equip researchers and drug development professionals with the methodologies to ensure the generation of high-quality, reproducible sequencing data.

Sample Collection and Preservation

The goal of sample collection is to obtain a representative microbial biomass while minimizing the introduction of contaminants and preserving the integrity of the nucleic acids.

Key Considerations

- Sample Type: The strategy must be tailored to the sample matrix (e.g., whole blood, plasma, tissue, environmental swabs) [32] [31]. For instance, blood stream infection diagnostics must contend with high levels of human background DNA, which can drastically reduce the sequencing depth of microbial pathogens [32].

- Biomass and Volume: Sufficient microbial biomass is critical. For low-biomass samples, the use of ultraclean reagents and "blank" sequencing controls is essential to distinguish true microbial signals from contamination [33]. The recommended volume for blood culture diagnostics is 40–60 mL, though molecular tests often use only 1–10 mL, which can impact detection sensitivity [32].

- Preservation and Storage: Immediate freezing at -80°C or use of appropriate stabilization buffers is recommended to prevent microbial growth or degradation post-collection.

This protocol is adapted from a study developing a shotgun metagenomics protocol for blood stream infections.

Materials:

- Fresh whole blood (WB) from healthy volunteers, collected in EDTA tubes.

- Bacterial strains (e.g., Staphylococcus aureus, Escherichia coli, Streptococcus pneumoniae)

- 0.85% NaCl solution

- Blood agar plates

Method:

- Culture and Standardize Inoculum:

- Culture bacterial strains overnight on blood agar plates at 36.5°C.

- Suspend bacterial colonies in 0.85% NaCl to a turbidity of 0.5 McFarland (approximately 10^8 CFU/mL).

- Perform serial 10-fold dilutions in 0.85% NaCl. Plate 100 µL of each dilution in triplicate to confirm the CFU/mL.

- Spiking into Whole Blood:

- Combine EDTA-blood from volunteers in a falcon tube.

- Spike the WB with the prepared bacterial suspensions within the same hour of the blood draw to achieve final concentrations typically between 10^3 to 10^5 CFU/mL.

- Preparation of Plasma Samples (Optional):

- To obtain plasma, centrifuge 5 mL of spiked WB at 180 × g or 100 × g for 10 minutes at room temperature.

- Carefully collect 1 mL of the supernatant (plasma) for downstream DNA extraction.
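The plate-count confirmation and spiking steps above involve two simple calculations, sketched below in Python. The function names and the example numbers (colony counts, dilution, and volumes) are hypothetical and chosen only to illustrate the arithmetic. The spike-volume formula assumes the stated final volume already includes the added suspension.

```python
def cfu_per_ml(colony_counts, dilution_fold, plated_volume_ul):
    """Estimate CFU/mL of the undiluted suspension from replicate plate counts.

    dilution_fold: how many times the plated sample was diluted (e.g. 100000
    for a 10^-5 dilution); plated_volume_ul: volume spread per plate in µL.
    """
    mean_colonies = sum(colony_counts) / len(colony_counts)
    return mean_colonies * dilution_fold * 1000 / plated_volume_ul


def spike_volume_ml(stock_cfu_per_ml, target_cfu_per_ml, final_volume_ml):
    """Volume of bacterial suspension needed to reach the target concentration
    (C1 * V1 = C2 * V2, with V2 being the final spiked volume)."""
    return target_cfu_per_ml * final_volume_ml / stock_cfu_per_ml


# Hypothetical triplicate counts from 100 µL of a 10^-5 dilution.
stock = cfu_per_ml([98, 102, 100], dilution_fold=100_000, plated_volume_ul=100)

# Hypothetical spike: working dilution at 10^6 CFU/mL, target 10^4 CFU/mL in 10 mL.
volume = spike_volume_ml(stock_cfu_per_ml=1e6, target_cfu_per_ml=1e4,
                         final_volume_ml=10)
```

With these example numbers the stock works out to about 10^8 CFU/mL, consistent with a 0.5 McFarland suspension, and the spike volume is 0.1 mL.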

DNA Extraction and Human DNA Depletion

Efficient extraction of microbial DNA and concomitant depletion of host DNA is arguably the most critical step for sensitivity, particularly in clinical samples where host DNA can constitute over 75% of the total sequenced reads [31].

Experimental Comparison of Extraction Efficiency

A study evaluating DNA extraction for shotgun metagenomics from blood reported significant differences in performance based on sample matrix and bacterial species [32]. The key quantitative findings are summarized in the table below.

Table 1: Comparison of DNA Extraction Efficiency from Whole Blood vs. Plasma [32]

| Sample Matrix | Bacterial Read Yield | Method Reproducibility | Performance by Gram Stain | Human DNA Depletion (ddPCR for RPP30 gene) |

|---|---|---|---|---|

| Whole Blood (WB) | Higher | Less consistent | More efficient for Gram-positive bacteria (S. aureus, S. pneumoniae) | Variable efficiency |

| Plasma | Lower | More consistent, better reproducibility | Negative effect on Gram-negative bacteria (E. coli) | More consistent and efficient |

Materials:

- Molzym Blood Pathogen Kit

- Automatic extraction system (e.g., Arrow, Diasorin)

- Qubit dsDNA HS Assay Kit

- Nanodrop spectrophotometer

- Agilent TapeStation with gDNA ScreenTape assay

Method:

- Extract DNA from Whole Blood:

- Use the Blood Pathogen Kit combined with the add-on 10 complement to extract DNA from 10 mL of spiked WB. This kit includes a step for selective lysis of human cells and degradation of human DNA.

- Extract DNA from Plasma:

- For the 1 mL of plasma obtained in the plasma preparation step above, use the Blood Pathogen Kit without the add-on 10 complement, following the manufacturer's instructions for automatic extraction.

- DNA Elution and Storage:

- Elute the extracted DNA in 100 µL of the kit's elution buffer.

- Store the DNA at -80°C until library preparation.

- DNA Quality and Quantity Assessment:

- Quantify DNA using the Qubit dsDNA HS assay.

- Assess Purity using a Nanodrop spectrophotometer (A260/A280 and A260/A230 ratios).

- Evaluate Fragment Size using the gDNA ScreenTape assay on an Agilent TapeStation.

Library Preparation for Nanopore Sequencing

Library preparation converts the extracted DNA into a format compatible with the sequencing platform. The choice of technology impacts turnaround time and application suitability.

This protocol enables same-day diagnostics, offering a short turnaround time meaningful in a clinical context.

Materials:

- Oxford Nanopore Rapid PCR Barcoding Kit (SQK-RPB004)

- AMPure XP beads

- MinION device with FLO-MIN106 R9.4 flowcell

Method:

- Library Input: Use 1–5 ng of extracted DNA as input, depending on the yield from the extraction step.

- PCR Amplification and Barcoding:

- Perform the library preparation according to the manufacturer's instructions for the Rapid PCR Barcoding Kit.

- Modification: Increase the number of PCR cycles from the standard 14 to 24 cycles to enhance yield from low-biomass samples.

- Clean-up:

- Incubate the library with AMPure XP beads and Tris-HCl buffer for 10 and 5 minutes, respectively (increased from the standard protocol to improve recovery).

- Sequencing:

- Load the DNA library onto a FLO-MIN106 R9.4 flowcell.

- Sequence on a MinION device for 24 hours.

The Researcher's Toolkit: Essential Materials

Table 2: Key Research Reagent Solutions for Pre-analytical Workflow

| Item | Function | Example Product/Catalog Number |

|---|---|---|

| Blood Pathogen Kit | Integrated DNA extraction and human DNA depletion from whole blood and plasma. | Molzym Blood Pathogen Kit |

| Rapid PCR Barcoding Kit | Fast preparation of sequencing libraries for Oxford Nanopore platforms, enabling same-day turnaround. | Oxford Nanopore SQK-RPB004 |

| AMPure XP Beads | Solid-phase reversible immobilization (SPRI) beads for post-reaction clean-up and size selection. | Beckman Coulter AMPure XP |

| Qubit dsDNA HS Assay | Highly sensitive, specific fluorescent quantification of double-stranded DNA, crucial for low-concentration samples. | Thermo Fisher Scientific Qubit dsDNA HS Assay |

| gDNA ScreenTape Assay | Automated electrophoretic analysis of genomic DNA size distribution and integrity. | Agilent Technologies gDNA ScreenTape |

Workflow Visualization

The following diagram illustrates the complete pre-analytical workflow, from sample collection to a sequence-ready library, integrating the protocols described in this document.

In shotgun metagenomics, quality control (QC) and trimming form the critical foundation upon which all subsequent analysis relies. Raw sequencing data invariably contains artifacts (low-quality bases, adapter sequences, and contaminating DNA) that can significantly compromise downstream results, including assembly, binning, and taxonomic profiling [34]. Effective QC procedures identify and remove these artifacts, preventing erroneous conclusions and ensuring the accuracy of microbial community analysis [34]. This protocol outlines comprehensive QC strategies, tools, and metrics essential for robust metagenomic research, forming an integral component of standardized bioinformatics pipelines for microbiome investigation.

Key Quality Metrics and Their Interpretation

Understanding and monitoring key quality metrics is fundamental for evaluating sequencing data. The table below summarizes the core metrics used in metagenomic QC.

Table 1: Key Quality Control Metrics for Shotgun Metagenomics

| Metric | Description | Interpretation | Common Thresholds |

|---|---|---|---|

| Quality Score (Q Score) | Logarithmic measure of base-calling accuracy [35] | Q20 = 99% accuracy (1% error); Q30 = 99.9% accuracy (0.1% error) [35] | Minimum Q20 for reliable analysis [36] |

| Contiguity | Measure of how continuous an assembly is | N50: length of the shortest contig among the longest contigs that together cover 50% of the total assembly length | Higher values indicate better assembly [37] |

| Completeness | Percentage of single-copy marker genes found in a Metagenome-Assembled Genome (MAG) [37] | Indicates how much of a genome has been recovered | ≥90% for high-quality MAGs [37] |

| Contamination | Percentage of marker genes duplicated in a MAG, suggesting multiple genomes binned together [37] | Lower values indicate purer genome bins | <5% for high-quality MAGs [37] |

| Chimerism | Detection of sequences originating from different genomic backgrounds [37] | Suggests incorrectly joined sequences or bins | Lower values preferred; specific thresholds vary by tool |
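Three of the metrics in the table can be computed directly, as the following Python sketch shows: the error probability implied by a Phred quality score, the N50 of a set of contigs, and a check against the high-quality MAG thresholds listed above. The function names and example contig lengths are illustrative assumptions. Completeness and contamination values would normally come from a tool such as CheckM2.

```python
def phred_to_error_probability(q):
    """Convert a Phred quality score to a base-calling error probability.

    Uses the standard relation Q = -10 * log10(P), i.e. P = 10 ** (-Q / 10).
    """
    return 10 ** (-q / 10)


def n50(contig_lengths):
    """Length of the shortest contig among the longest contigs that
    together cover at least half of the total assembly length."""
    total = sum(contig_lengths)
    running = 0
    for length in sorted(contig_lengths, reverse=True):
        running += length
        if running * 2 >= total:
            return length
    return 0  # empty assembly


def is_high_quality_mag(completeness_pct, contamination_pct):
    """Apply the thresholds from the table: >=90% complete, <5% contaminated."""
    return completeness_pct >= 90.0 and contamination_pct < 5.0


# Hypothetical contig lengths in base pairs (total = 300).
example_n50 = n50([100, 80, 60, 40, 20])
```

For the example contigs, the two longest (100 and 80) already cover more than half of the 300 bp total, so the N50 is 80.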

Essential Tools for Quality Control and Trimming

A robust QC pipeline utilizes specialized tools at different processing stages. The selection of tools depends on the sequencing technology and specific analysis goals.

Table 2: Essential Tools for Metagenomic Quality Control and Trimming

| Tool | Primary Function | Key Features | Application Context |

|---|---|---|---|

| FastQC | Quality assessment of raw sequencing data [4] [34] | Provides visual reports on per-base quality, GC content, adapter contamination [34] | Initial quality check; pre- and post-trimming [4] |

| fastp | Quality control, filtering, and adapter removal [4] | Performs integrated adapter trimming, quality filtering, and correction [4] | Rapid preprocessing of short-read data [4] |

| KneadData | Removal of host contamination [4] | Identifies and removes reads mapping to host reference genomes [4] | Essential for host-associated microbiome studies (e.g., human gut) |

| Trimmomatic | Read trimming and adapter removal [38] | Uses sliding window approach for quality-based trimming [38] | Reliable preprocessing within larger workflows [38] |

| QUAST | Assembly quality assessment [37] [4] | Evaluates contiguity statistics and assembly completeness [37] | Post-assembly evaluation of contigs and MAGs [37] |

| CheckM2 | MAG quality assessment [37] | Estimates completeness and contamination using machine learning [37] | Bin evaluation and refinement [37] |

| BUSCO | MAG quality assessment [37] | Assesses completeness and duplication based on universal single-copy orthologs [37] | Bin evaluation and comparison [37] |

| QC-Chain | Holistic QC with contamination screening [34] | Provides de novo contamination identification and fast processing [34] | Comprehensive QC for complex metagenomic datasets [34] |

Standardized Workflow for Quality Control and Trimming

The following workflow diagram illustrates the sequential stages of a comprehensive QC process for shotgun metagenomics, integrating the tools and metrics previously described.

Workflow Title: Comprehensive QC and Trimming Pipeline for Shotgun Metagenomics

Initial Quality Assessment

Objective: Evaluate the raw sequencing data quality before any processing.

- Procedure:

- Run FastQC on raw sequencing files (FASTQ format).

- Examine the HTML report for key metrics:

- Per base sequence quality: Identify positions with poor quality scores.

- Per sequence quality scores: Assess overall read quality.

- Sequence length distribution: Confirm expected read lengths.

- Overrepresented sequences: Detect adapter contamination or other contaminants.

- K-mer content: Identify possible sequencing biases.

- Interpretation: This initial assessment determines the specific trimming and filtering parameters needed. Poor quality at read ends typically requires more aggressive trimming, while adapter contamination necessitates adapter removal.

Adapter Trimming and Quality Filtering

Objective: Remove adapter sequences, low-quality bases, and discard poor-quality reads.

- Procedure using fastp:

- Execute fastp with recommended parameters:

- --cut_front --cut_tail --cut_window_size 4 --cut_mean_quality 20

- --length_required 50 to discard very short fragments

- Specify adapter sequences with --adapter_fasta if known

- For paired-end data, include --detect_adapter_for_pe to automatically identify common adapters

- Enable correction for paired-end data with --correction for overlapping reads

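- Quality Thresholds: With the parameters above, bases are trimmed from read ends where the mean Phred quality within a 4-base window falls below 20, and reads shorter than 50 bp after trimming are discarded.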
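The fastp options listed above can be assembled into a single paired-end command. The sketch below only builds the argument list; the file names and the report names (fastp.json, fastp.html) are hypothetical placeholders, and the default values simply mirror the parameters given in this section.

```python
import shlex


def build_fastp_command(r1, r2, out1, out2, adapter_fasta=None,
                        window=4, mean_quality=20, min_length=50):
    """Build a paired-end fastp command using the parameters from this section."""
    cmd = [
        "fastp",
        "-i", r1, "-I", r2,              # paired-end inputs
        "-o", out1, "-O", out2,          # trimmed outputs
        "--cut_front", "--cut_tail",     # sliding-window trimming at both ends
        "--cut_window_size", str(window),
        "--cut_mean_quality", str(mean_quality),
        "--length_required", str(min_length),
        "--detect_adapter_for_pe",       # automatic adapter detection for PE data
        "--correction",                  # base correction in overlapping regions
        "--json", "fastp.json",          # hypothetical report file names
        "--html", "fastp.html",
    ]
    if adapter_fasta:
        # Only supply adapters explicitly when their sequences are known.
        cmd += ["--adapter_fasta", adapter_fasta]
    return cmd


fastp_cmd = build_fastp_command(
    "sample_R1.fastq.gz", "sample_R2.fastq.gz",
    "trimmed_R1.fastq.gz", "trimmed_R2.fastq.gz",
)
print(shlex.join(fastp_cmd))
```

The resulting list can be passed to `subprocess.run(fastp_cmd, check=True)` on a system where fastp is installed; keeping the command as a list avoids shell-quoting problems with unusual file names.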

Host DNA Decontamination

Objective: Identify and remove reads originating from host DNA, which is crucial for host-associated microbiome studies.

- Procedure using KneadData:

- Prepare a reference database of the host genome (e.g., human GRCh38).

- Align reads to the host reference using Bowtie2 with sensitive parameters.

- Extract unmapped reads, which represent the microbial fraction.

- For samples with high host contamination (>90%), consider additional probabilistic filtering with tools like BMTagger [38].

- Validation:

- Monitor the percentage of reads remaining after host removal.

- Expected retention rates vary by sample type: typically 60-90% for stool samples, but may be as low as 10-30% for tissue biopsies.
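As an illustration of the alignment and validation steps above, the sketch below builds a Bowtie2 command that writes read pairs failing to align concordantly to the host reference, then summarizes read retention. It calls Bowtie2 directly rather than through KneadData; the choice of the --very-sensitive preset, the index prefix, and all file names are assumptions. The 90% cutoff mirrors the high-contamination threshold mentioned above.

```python
def build_bowtie2_host_filter(index_prefix, r1, r2, unmapped_pattern, threads=4):
    """Build a Bowtie2 command that keeps pairs not aligning concordantly to the host.

    In unmapped_pattern, Bowtie2 replaces '%' with 1 or 2 for the two mates.
    """
    return [
        "bowtie2", "--very-sensitive",
        "-p", str(threads),
        "-x", index_prefix,
        "-1", r1, "-2", r2,
        "--un-conc-gz", unmapped_pattern,  # putative microbial fraction
        "-S", "/dev/null",                 # discard host alignments
    ]


def retention_summary(reads_before, reads_after, high_host_pct=90.0):
    """Percentage of reads retained after host removal, plus a high-host flag."""
    if reads_before <= 0:
        raise ValueError("reads_before must be positive")
    if not 0 <= reads_after <= reads_before:
        raise ValueError("reads_after must be between 0 and reads_before")
    retained = 100.0 * reads_after / reads_before
    host = 100.0 - retained
    return {
        "retained_pct": retained,
        "host_pct": host,
        "high_host": host > high_host_pct,
    }


host_cmd = build_bowtie2_host_filter(
    "GRCh38_index", "trimmed_R1.fastq.gz", "trimmed_R2.fastq.gz",
    "clean_R%.fastq.gz",
)
summary = retention_summary(reads_before=1_000_000, reads_after=50_000)
print(summary)
```

A sample flagged with `high_host` would be a candidate for the additional filtering described above.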

Post-Cleaning Quality Assessment

Objective: Verify the effectiveness of QC procedures and ensure data quality before downstream analysis.

- Procedure:

- Run FastQC on the cleaned sequencing files.

- Compare reports before and after processing to confirm:

- Improved per-base quality scores

- Elimination of adapter sequences

- Appropriate sequence length distribution after trimming

- Generate quality metrics for the final cleaned dataset:

- Total number of reads and total bases

- Average read length and N50

- Overall GC content distribution
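The summary metrics listed above can be computed directly from the cleaned reads. The following is a minimal sketch operating on an in-memory list of sequences; the function name is hypothetical, and it reports a single overall GC percentage rather than a full per-read GC distribution.

```python
def read_set_metrics(sequences):
    """Total reads, total bases, mean length, N50 and overall GC% for a list of reads."""
    lengths = sorted((len(s) for s in sequences), reverse=True)
    total_bases = sum(lengths)
    if total_bases == 0:
        raise ValueError("no bases in input")

    # N50: length of the read at which the cumulative sum of lengths,
    # taken from longest to shortest, first reaches half of all bases.
    running = 0
    n50 = 0
    for length in lengths:
        running += length
        if running * 2 >= total_bases:
            n50 = length
            break

    gc_bases = sum(s.upper().count("G") + s.upper().count("C") for s in sequences)
    return {
        "total_reads": len(lengths),
        "total_bases": total_bases,
        "mean_length": total_bases / len(lengths),
        "n50": n50,
        "gc_pct": 100.0 * gc_bases / total_bases,
    }


metrics = read_set_metrics(["ACGTACGTAC", "GGCC", "ATATAT"])
print(metrics)
```

For real datasets the sequences would be streamed from the cleaned FASTQ files rather than held in a list.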

Assembly and MAG Quality Assessment

Objective: Evaluate the quality of assembled contigs and Metagenome-Assembled Genomes (MAGs).

- Procedure using MAGFlow framework:

- Run QUAST to evaluate assembly contiguity metrics (N50, total length, number of contigs) [37].

- Execute CheckM2 to estimate completeness and contamination of MAGs [37].

- Perform BUSCO analysis to assess gene space completeness based on universal single-copy orthologs [37].

- Run GUNC to detect chimerism in genome bins [37].

- Quality Standards for MAGs:

- High-quality MAGs: ≥90% completeness, <5% contamination [37].

- Medium-quality MAGs: ≥50% completeness, <10% contamination.
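The two quality tiers above translate into a simple classification rule. The sketch below applies only the completeness and contamination thresholds stated in this section; the function name and the "low" label for bins meeting neither tier are assumptions, and the inputs are percentages such as those estimated by CheckM2.

```python
def classify_mag(completeness, contamination):
    """Assign a quality tier from completeness and contamination (both in percent)."""
    if completeness >= 90 and contamination < 5:
        return "high"
    if completeness >= 50 and contamination < 10:
        return "medium"
    return "low"  # label assumed here for bins that meet neither tier


# Hypothetical bins with (completeness, contamination) estimates.
bins = {
    "bin_001": (96.2, 1.3),
    "bin_002": (72.5, 6.8),
    "bin_003": (91.0, 7.5),
    "bin_004": (35.0, 2.0),
}
tiers = {name: classify_mag(*values) for name, values in bins.items()}
print(tiers)
```

Note that bin_003 is highly complete but drops to the medium tier because its contamination exceeds 5%.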

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Kits for Metagenomic Sequencing

| Reagent/Kit | Function | Application Notes |

|---|---|---|

| ZymoBIOMICS DNA Kit | DNA extraction from complex samples | Preserves a representative community structure; suitable for difficult-to-lyse microbes |

| Nextflex Rapid XP DNA Seq Kit | Library preparation for Illumina platforms | Incorporates unique dual indexes to enable sample multiplexing and reduce index hopping [36] |

| ZR Bashing Bead Lysis Tubes | Mechanical disruption of microbial cells | Essential for breaking tough cell walls of Gram-positive bacteria and fungi [36] |

| Qubit HS DNA Kit | Accurate quantification of DNA concentration | Fluorometric method superior to spectrophotometry for quantifying low-concentration metagenomic DNA [36] |

| LabChip GX Touch Nucleic Acid Analyzer | Fragment size distribution analysis | Quality control check after library preparation to verify insert size and absence of adapter dimers [36] |

Troubleshooting and Optimization Guidelines

Addressing Common QC Challenges

Low Read Quality:

- If quality drops persist at read ends, increase trimming stringency or truncate reads to a fixed length.

- For overall poor quality, consider requesting resequencing if the percentage of bases above Q20 falls below 70%.
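The 70% criterion above can be checked with a short calculation over the quality strings. This is a minimal sketch that assumes Phred+33 encoding and interprets "above Q20" as a score of 20 or higher; both the interpretation and the function names are assumptions.

```python
def fraction_at_or_above(quality_strings, threshold=20, offset=33):
    """Fraction of bases whose Phred score is at or above the threshold."""
    total = 0
    passing = 0
    for qual in quality_strings:
        for ch in qual:
            total += 1
            if ord(ch) - offset >= threshold:
                passing += 1
    if total == 0:
        raise ValueError("no quality values supplied")
    return passing / total


def needs_resequencing(quality_strings, min_fraction=0.70):
    """True when the Q20 fraction falls below the minimum from this section."""
    return fraction_at_or_above(quality_strings) < min_fraction


# 'I' encodes Q40, '5' encodes Q20, '#' encodes Q2.
good_run = ["IIII", "II5#"]
poor_run = ["####", "II##"]
print(fraction_at_or_above(good_run), needs_resequencing(good_run))
print(fraction_at_or_above(poor_run), needs_resequencing(poor_run))
```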

High Host Contamination:

- For samples with >90% host DNA, use probabilistic filtering tools like BMTagger in addition to standard alignment-based approaches [38].

- Optimize wet-lab protocols to enrich for microbial biomass through differential centrifugation or filtration.

Insufficient Sequencing Depth:

- For complex environmental samples, ensure adequate sequencing depth (typically 5-10 Gb per soil sample, 1-5 Gb per gut sample).

- Use rarefaction analysis to determine if diversity has been adequately captured.
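Rarefaction can be sketched as repeated subsampling of reads at increasing depths while counting the taxa observed. The example below is a minimal illustration using a single random draw per depth and hypothetical taxon counts; in practice the richness at each depth is usually averaged over many draws.

```python
import random


def rarefaction_curve(taxon_counts, depths, seed=0):
    """Observed richness after subsampling reads without replacement at each depth."""
    rng = random.Random(seed)
    # Expand the counts into one label per read.
    pool = [taxon for taxon, n in taxon_counts.items() for _ in range(n)]
    curve = []
    for depth in depths:
        if depth > len(pool):
            raise ValueError("depth exceeds the number of available reads")
        subsample = rng.sample(pool, depth)
        curve.append((depth, len(set(subsample))))
    return curve


# Hypothetical read counts per taxon for one sample.
counts = {"taxon_A": 50, "taxon_B": 30, "taxon_C": 15, "taxon_D": 5}
curve = rarefaction_curve(counts, depths=[10, 50, 100])
for depth, richness in curve:
    print(depth, richness)
```

A curve that has flattened before the full depth suggests that additional sequencing would recover few new taxa, whereas a curve still rising at the maximum depth indicates undersampling.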

Pipeline Integration and Best Practices

Modern metagenomic analysis increasingly utilizes integrated pipelines that incorporate QC steps:

- EasyMetagenome: Provides a comprehensive workflow including quality control, host removal, and multiple analysis paths [4].

- MAGFlow: Specifically designed for quality assessment of MAGs through multiple validation tools [37].

- Reproducibility: Always document QC parameters and software versions. Use containerization (Docker/Singularity) and workflow managers (Nextflow/Snakemake) to ensure reproducible results [37].

Rigorous quality control and trimming are not merely preliminary steps but fundamental components that determine the success of any shotgun metagenomics study. By implementing the protocols outlined in this document, from initial quality assessment through host decontamination to final assembly validation, researchers can improve the reliability of their taxonomic and functional analyses. Integrating these QC processes into standardized, reproducible bioinformatics pipelines supports robust microbiome research across diverse fields, from clinical diagnostics to environmental monitoring.

Host DNA Removal and Contaminant Filtration Strategies

In shotgun metagenomics, the detection and accurate characterization of microbial communities are often confounded by host DNA and other contaminants. This challenge is particularly acute in low-biomass samples, such as those from the respiratory tract, where host DNA can constitute over 99.9% of sequenced material, obscuring microbial signals and compromising analytical sensitivity [39]. The development of robust strategies for host depletion and contamination control is therefore paramount for advancing research in microbial ecology, infectious disease diagnostics, and drug development.

This Application Note details integrated wet-lab and computational strategies for host DNA removal and contaminant filtration, contextualized within a bioinformatics pipeline for shotgun metagenomics. We provide a systematic evaluation of current methodologies, detailed protocols for key experimental procedures, and a comparative analysis of computational tools, supported by quantitative data and workflow visualizations to guide researchers in selecting and implementing optimal strategies for their specific applications.

Experimental Host DNA Depletion Methods

Experimental host DNA depletion methods, applied prior to sequencing, are crucial for enriching microbial content and improving sequencing efficiency. These methods primarily operate on the principle of selectively removing host cells or DNA while preserving the integrity of microbial communities.

Performance Comparison of Depletion Methods

A recent comprehensive benchmarking study evaluated seven pre-extraction host DNA depletion methods using bronchoalveolar lavage fluid (BALF) and oropharyngeal swab (OP) samples. The methods' performance was assessed based on host DNA removal efficiency, microbial DNA retention, and fold-increase in microbial reads [39].

Table 1: Performance of Host DNA Depletion Methods in Respiratory Samples

| Method | Host DNA Removal Efficiency (BALF) | Bacterial DNA Retention Rate (BALF) | Fold-Increase in Microbial Reads (BALF) | Key Characteristics |

|---|---|---|---|---|

| K_zym (HostZERO) | 99.99% (0.9‱ of original) | Low | 100.3x | Highest microbial read increase; high host removal |

| S_ase (Saponin + Nuclease) | 99.99% (1.1‱ of original) | Low | 55.8x | Very high host removal efficiency |

| F_ase (Filter + Nuclease)* | High | Moderate | 65.6x | Balanced performance; new method |

| K_qia (QIAamp Microbiome) | High | High (OP: 21%) | 55.3x | Good bacterial retention |

| O_ase (Osmotic Lysis + Nuclease) | Moderate | Moderate | 25.4x | Intermediate performance |

| R_ase (Nuclease Digestion) | Moderate | High (BALF: 31%; OP: 20%) | 16.2x | Best bacterial DNA retention |

| O_pma (Osmotic Lysis + PMA) | Low | Low | 2.5x | Least effective |

*F_ase is a new method developed in the benchmarking study [39].

Detailed Protocol: Saponin Lysis with Nuclease Digestion (S_ase)

The S_ase method, which demonstrated exceptionally high host DNA removal efficiency, is optimized for processing respiratory samples like BALF and oropharyngeal swabs [39].

Reagents and Equipment:

- Saponin stock solution (0.5% w/v in sterile PBS)

- DNase I (e.g., Baseline Zero DNase, 100 U/µL)

- DNase I reaction buffer (10X)

- EDTA solution (0.5 M, pH 8.0)

- PBS (phosphate-buffered saline, sterile)

- Microcentrifuge tubes (DNase-free)

- Thermo-mixer or water bath

- Centrifuge

Procedure:

- Sample Preparation: Thaw frozen samples on ice. For BALF, centrifuge at 500 × g for 10 minutes at 4°C to pellet host cells. Carefully transfer the supernatant to a new tube.

- Saponin Treatment:

- Add saponin to the sample supernatant at a final concentration of 0.025% (w/v).

- Mix thoroughly by vortexing and incubate for 15 minutes at room temperature.

- Critical Step: The saponin concentration is critical. Higher concentrations (>0.5%) may lyse microbial cells, leading to DNA loss.

- Nuclease Digestion:

- Add 10X DNase I reaction buffer to a 1X final concentration.

- Add DNase I to a final concentration of 5 U/µL.

- Mix gently and incubate for 45 minutes at 37°C with occasional mixing.

- Reaction Termination:

- Add EDTA to a final concentration of 10 mM to chelate Mg²⁺ and inactivate DNase I.

- Incubate at 75°C for 10 minutes to ensure complete enzyme inactivation.

- Microbial DNA Extraction:

- Proceed with standard microbial DNA extraction using a kit such as the QIAamp DNA Microbiome Kit or PowerSoil Pro Kit, following the manufacturer's instructions.

- The extracted DNA is now ready for library preparation and sequencing.
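Several steps in the procedure above specify a final concentration reached by adding a concentrated stock. The volume to add follows from the mass balance C_stock × V_add = C_final × (V_sample + V_add), which gives V_add = C_final × V_sample / (C_stock − C_final). The sketch below applies this to the stock and final concentrations listed in the protocol; the sample volumes are hypothetical, and each addition is treated independently for simplicity.

```python
def stock_volume_to_add(stock_conc, final_conc, sample_volume):
    """Volume of stock to add so the mixture reaches final_conc.

    Concentrations must share one unit and volumes another; the result
    accounts for the volume increase caused by the addition itself.
    """
    if not 0 < final_conc < stock_conc:
        raise ValueError("final_conc must be positive and below stock_conc")
    return final_conc * sample_volume / (stock_conc - final_conc)


# Saponin: 0.5% stock to 0.025% final in a hypothetical 1000 µL sample.
saponin_ul = stock_volume_to_add(0.5, 0.025, 1000)

# Reaction buffer: 10X stock to 1X final in a hypothetical 900 µL mixture.
buffer_ul = stock_volume_to_add(10, 1, 900)

# EDTA: 0.5 M (500 mM) stock to 10 mM final in a hypothetical 1000 µL mixture.
edta_ul = stock_volume_to_add(500, 10, 1000)

print(round(saponin_ul, 1), round(buffer_ul, 1), round(edta_ul, 1))
```

In a real run the volume passed to each call would be the running total after the preceding additions.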

Troubleshooting Notes:

- Low Microbial DNA Yield: Verify saponin concentration; avoid excessive vortexing after saponin treatment.

- Incomplete Host DNA Removal: Ensure fresh DNase I is used; check incubation temperature and duration.

Contamination Prevention Guidelines for Low-Biomass Samples

Effective contamination control begins at sample collection. The following guidelines are essential for reliable metagenomic analysis of low-biomass samples [40]:

Sample Collection:

- Use single-use, DNA-free collection vessels and swabs.

- Decontaminate reusable equipment with 80% ethanol followed by a nucleic acid degrading solution (e.g., 5% sodium hypochlorite) to remove residual DNA.

- Personnel should wear appropriate personal protective equipment (PPE) including gloves, masks, and clean lab coats to minimize human-derived contamination.

Negative and Sampling Controls:

- Include multiple negative controls such as empty collection vessels, swabs exposed to the sampling environment air, and aliquots of preservation solutions.

- Process these controls in parallel with samples through all stages (DNA extraction, library preparation, sequencing) to identify contaminating sources.
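Once negative controls have been sequenced alongside the samples, their taxonomic profiles can be compared to flag likely contaminants. The following is a deliberately simple heuristic for illustration only, not a validated statistical method: it flags taxa whose relative abundance in the pooled controls is at least as high as in the pooled samples. The function name, the ratio parameter, and the taxon labels are all hypothetical.

```python
def flag_probable_contaminants(sample_counts, control_counts, ratio=1.0):
    """Flag taxa that are relatively as abundant in controls as in samples.

    Both arguments map taxon name to read count, pooled across samples
    or across negative controls respectively.
    """
    def relative(counts):
        total = sum(counts.values())
        return {t: c / total for t, c in counts.items()} if total else {}

    sample_rel = relative(sample_counts)
    control_rel = relative(control_counts)
    return sorted(
        taxon
        for taxon, abundance in control_rel.items()
        if abundance > 0 and abundance >= ratio * sample_rel.get(taxon, 0.0)
    )


# Hypothetical pooled read counts.
samples = {"taxon_A": 900, "taxon_B": 100}
controls = {"taxon_A": 20, "taxon_B": 80, "taxon_C": 5}
flagged = flag_probable_contaminants(samples, controls)
print(flagged)
```

Here taxon_B dominates the controls and taxon_C appears only in the controls, so both are flagged, while taxon_A is retained because it is far more abundant in the samples.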

Laboratory Processing:

- Use dedicated pre- and post-PCR workstations.

- Employ UV-irradiated biosafety cabinets for sample handling.

- Use filter tips to prevent aerosol cross-contamination.

Diagram 1: Integrated workflow for host DNA removal and contamination control, spanning wet-lab and computational steps.

Computational Host DNA Decontamination

Computational methods provide a complementary approach to wet-lab depletion, removing host-derived reads from sequencing data post-hoc. These tools are essential when experimental depletion is incomplete or impractical.

Tool Performance and Selection Guide

A 2025 benchmarking study evaluated six computational host DNA removal tools using simulated metagenomic datasets with varying levels (90%, 50%, 10%) of host contamination [41].

Table 2: Performance Comparison of Computational Host DNA Removal Tools

| Tool | Strategy | Best Use Case | Key Findings | Resource Usage |

|---|---|---|---|---|

| Kraken2 | k-mer | Rapid screening; large datasets | Fastest tool; low resource consumption | Very low |

| KneadData | Alignment | Standardized processing | Integrated pipeline (Bowtie2 + QC); widely used | Medium |

| Bowtie2 | Alignment | Maximum accuracy | High precision; flexible parameter tuning | High (time) |

| BWA | Alignment | Alternative aligner | Established algorithm | Medium |

| KrakenUniq | k-mer | Unique k-mer counting | Good for strain-level analysis | Low |