Absolute Quantification in Low-Biomass Microbiome Studies: Overcoming Bias to Unlock Clinical Insights

Abstract

This article addresses the critical need for absolute quantification in low-biomass microbiome research, a field plagued by significant technical challenges and potential for data misinterpretation. Aimed at researchers, scientists, and drug development professionals, it explores the fundamental limitations of relative abundance data, which can obscure true biological signals in samples from environments like skin, tumors, blood, and the respiratory tract. We provide a comprehensive overview of current and emerging methodologies—from flow cytometry and spike-in standards to novel computational approaches—for achieving absolute microbial counts. The content further details rigorous troubleshooting and optimization strategies to mitigate contamination and bias, reinforced by validation studies that demonstrate how absolute quantification transforms the interpretation of therapeutic interventions and disease mechanisms, ultimately paving the way for more reliable diagnostics and therapies.

Why Relative Abundance Fails in Low-Biomass Environments: The Foundational Pitfalls

The exploration of low-biomass environments represents a formidable frontier in microbiome research. These habitats, characterized by exceedingly low levels of microbial life, pose unique methodological challenges that distinguish them from their high-biomass counterparts. Low-biomass environments span a remarkable diversity, including specific human tissues, the atmosphere, plant seeds, treated drinking water, hyper-arid soils, and the deep subsurface [1]. The defining feature of these environments is that microbial biomass approaches the limits of detection of standard DNA-based sequencing approaches, so contamination from external sources is both unavoidable and a critical concern [1] [2].

The significance of studying these environments extends far beyond academic curiosity. In human health, purported microbiomes of tissues such as the placenta, brain, and blood have been the subject of intense debate and controversy, with subsequent rigorous studies often revealing that initial findings were driven by contamination [3] [4]. In environmental science, accurately characterizing microbial communities in extreme habitats informs our understanding of life's boundaries and has implications for astrobiology, bioremediation, and ecosystem monitoring [1] [5]. This technical guide frames the exploration of these challenging environments within the broader thesis that absolute quantification is paramount for generating biologically meaningful and reproducible results, moving beyond relative abundance measurements that can yield misleading conclusions [6] [5].

Defining the Low-Biomass Niche: A Spectrum of Challenging Environments

Conceptually, low-biomass environments exist on a continuum rather than representing a binary category. While some have proposed quantitative thresholds (e.g., <10,000 microbial cells/mL), it is more informative to consider biomass as a gradient where technical challenges become progressively more severe as microbial abundance decreases [3]. The fundamental challenge in these environments is that the target DNA "signal" can be dwarfed by the contaminant "noise" introduced during sampling or laboratory processing [1]. This problem is exacerbated by the proportional nature of sequence-based datasets, meaning even minute amounts of contaminating DNA can drastically influence the interpretation of a sample's microbial composition [1].

The table below categorizes and exemplifies the diverse range of low-biomass environments currently under investigation.

Table 1: Categories and Examples of Low-Biomass Environments

| Category | Specific Examples | Key Characteristics |
|---|---|---|
| Human Tissues | Placenta [1] [3], Fetal tissues [1], Brain [4], Blood [1] [3] [4], Lower Respiratory Tract [3], Breastmilk [1] | Very low microbial load relative to host DNA; high susceptibility to contamination during collection; often lack resident microbes altogether [1] [3]. |
| Built Environments | Cleanrooms [7], Hospital Operating Rooms [7], Spacecraft Assembly Facilities [7], Metal Surfaces [1] | Ultra-low biomass due to stringent cleaning; critical for planetary protection and human health [7]. |
| Natural & Extreme Environments | Atmosphere [1], Hyper-arid soils [1], Deep subsurface [1] [3], Ice cores [1], Hypersaline brines [1], Treated Drinking Water [1] | Approach limits of microbial life; subject to polyextreme conditions (e.g., temperature, pH, salinity, nutrient availability) [1]. |
| Other Biological Hosts | Plant Seeds [1], Certain Animal Guts (e.g., caterpillars) [1], Salmonid Blood and Brain [4] | Highlight that "sterile" compartments may not be universal across species; salmonids, for instance, have a more permeable blood-brain barrier [4]. |

Technical Hurdles and Methodological Pitfalls

Research in low-biomass environments is fraught with analytical challenges that can compromise biological conclusions if not properly addressed.

Contamination: The Primary Adversary

Contamination, defined as the introduction of external DNA, is the most significant hurdle. It can originate from multiple sources, including human operators, sampling equipment, laboratory reagents, and kits [1] [3]. The "kitome"—the microbial contamination associated with DNA extraction and library preparation kits—is a particularly pernicious source [7]. In high-biomass samples like human stool, contaminants represent a minor component of the total DNA. In low-biomass samples, however, these contaminants can constitute the majority, or even the entirety, of the observed microbial signal [1] [3].
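To make this proportional effect concrete, the following minimal sketch computes the share of template DNA that comes from contamination when the contaminant input is held constant. The function name and all copy numbers are illustrative assumptions, not values from the cited studies.

```python
def contaminant_fraction(sample_copies: float, contaminant_copies: float) -> float:
    """Fraction of total template DNA that originates from contamination."""
    total = sample_copies + contaminant_copies
    if total <= 0:
        raise ValueError("total template must be positive")
    return contaminant_copies / total


# Hypothetical scenario: the same 1,000 contaminant gene copies enter every reaction.
CONTAMINANT_COPIES = 1_000
for label, sample_copies in [
    ("stool-like", 1e9),
    ("tissue-like", 1e3),
    ("near-sterile", 1e1),
]:
    frac = contaminant_fraction(sample_copies, CONTAMINANT_COPIES)
    print(f"{label:>12}: {frac:.4%} of template is contaminant")
```

Under these assumed inputs, the identical contaminant load is negligible in the high-biomass case and dominant in the near-sterile case, which is the pattern the paragraph above describes.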

Cross-Contamination and Host DNA Misclassification

Well-to-well leakage, or the "splashome," occurs when DNA from one sample contaminates adjacent samples during plate-based processing [3]. This cross-contamination can violate the core assumptions of computational decontamination tools [3]. Furthermore, in host-associated low-biomass samples, the vast majority of sequenced DNA is from the host. This host DNA can be misclassified as microbial during bioinformatic analysis if not properly accounted for, generating noise or even artifactual signals if confounded with an experimental phenotype [3].

Batch Effects and the Imperative of Careful Design

Technical variability between processing batches (batch effects) can easily overwhelm subtle biological signals [3]. These effects stem from differences in reagents, personnel, protocols, and equipment. A critical principle in study design is to avoid batch confounding, ensuring that the biological groups of interest (e.g., case vs. control) are distributed across all processing batches [3]. Failure to do so can make technical artifacts indistinguishable from true biological phenomena.

Foundational Principles for Rigorous Study Design

Overcoming the challenges of low-biomass research requires meticulous planning from sample collection to data analysis. The following workflow outlines a robust, contamination-aware approach.

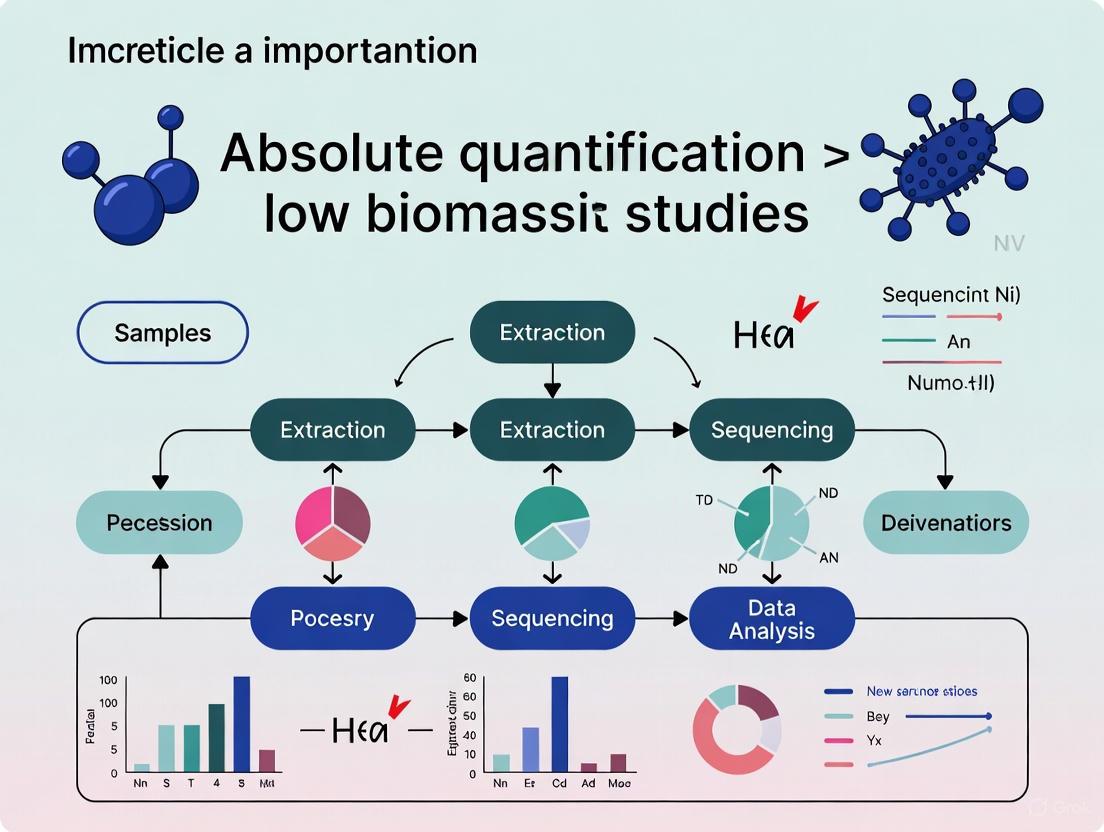

Figure 1: An integrated workflow for low-biomass microbiome studies, highlighting critical steps from planning to reporting.

A Multi-Layered Control Strategy

The incorporation of comprehensive controls is non-negotiable. Two complementary approaches are recommended:

- Process Controls: These are blank samples that pass through the entire experimental workflow alongside real samples, representing the aggregate contamination from all stages [3]. Examples include empty collection tubes, swabs exposed to sampling air, and blank extractions with no sample input [1] [3].

- Source-Specific Controls: To better identify the origin of contaminants, specific controls should be implemented, such as swabs of PPE, sampling equipment, or aliquots of preservation solutions [1] [3]. The collection of multiple control replicates is essential for robust statistical identification of contaminants [3].
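As a rough illustration of why multiple control replicates matter, the sketch below flags taxa that appear at least as often in negative controls as in biological samples. This is a deliberately simplified prevalence heuristic written for this guide, not the algorithm of any published decontamination tool; the function names and the example read counts are hypothetical.

```python
from typing import Dict, List, Set


def prevalence(libraries: List[Dict[str, int]], taxon: str) -> float:
    """Share of libraries in which the taxon has at least one read."""
    if not libraries:
        raise ValueError("need at least one library")
    return sum(1 for lib in libraries if lib.get(taxon, 0) > 0) / len(libraries)


def flag_contaminants(
    samples: List[Dict[str, int]], controls: List[Dict[str, int]]
) -> Set[str]:
    """Flag taxa seen in controls at least as often as in samples."""
    taxa = set().union(*samples, *controls)
    flagged = set()
    for taxon in taxa:
        in_controls = prevalence(controls, taxon)
        in_samples = prevalence(samples, taxon)
        if in_controls > 0 and in_controls >= in_samples:
            flagged.add(taxon)
    return flagged


# Hypothetical read counts per library (taxon -> reads).
samples = [
    {"Lactobacillus": 120, "Ralstonia": 5},
    {"Lactobacillus": 80},
    {"Lactobacillus": 95, "Ralstonia": 3},
]
controls = [
    {"Ralstonia": 40},
    {"Ralstonia": 22},
    {"Ralstonia": 31, "Lactobacillus": 1},
]
print(flag_contaminants(samples, controls))
```

With only one control replicate, every prevalence estimate would be either 0 or 1, so a single stray read could flag or clear a taxon; several replicates make the comparison less fragile.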

Decontamination and Barrier Protection

All equipment and surfaces that contact samples must be decontaminated. A two-step process is effective: first, 80% ethanol to kill contaminating organisms, then a nucleic acid-degrading treatment (e.g., sodium hypochlorite (bleach) or UV-C light) to remove residual DNA [1]. Personal protective equipment (PPE), including gloves, masks, and cleanroom suits, acts as a critical barrier to limit contamination from human operators, protecting samples from aerosolized droplets and skin cells [1].

The Scientist's Toolkit: Essential Reagents and Materials

Success in low-biomass research hinges on the use of specialized reagents and materials designed to minimize and monitor contamination.

Table 2: Key Research Reagent Solutions for Low-Biomass Studies

| Item | Function & Importance | Specific Examples & Considerations |
|---|---|---|
| DNA-Decontaminated Reagents | To prevent introduction of microbial DNA from the reagents themselves. Standard molecular biology reagents can contain trace DNA. | Use reagents certified DNA-free. Decontaminate solutions with UV irradiation or sodium hypochlorite where applicable [1]. |
| DNA-Free Sampling Kits | To collect samples without adding contaminating signal. | Use single-use, pre-sterilized swabs and collection vessels [1]. Consider innovative devices like the SALSA sampler for surfaces [7]. |
| Internal Standards (IS) | For absolute quantification. Added in known quantities to correct for technical variation and convert relative to absolute abundance. | Can be cellular standards or synthetic DNA spikes. Allows estimation of microbial load and gene copies per sample unit [5]. |
| Mock Communities | Positive controls with known composition. Used to assess accuracy and bias of the entire workflow. | Comprise defined mixes of microbial strains [8]. Essential for validating bioinformatic pipelines and identifying taxon-specific biases [8]. |
| Nucleic Acid Removal Solutions | To destroy contaminating DNA on surfaces and equipment. Sterilization (e.g., autoclaving) kills cells but may not remove DNA. | Use sodium hypochlorite (bleach), hydrogen peroxide, or commercial DNA removal solutions [1]. |

The Critical Role of Absolute Quantification

Moving from relative to absolute quantification is a paradigm shift essential for accurate interpretation of low-biomass studies. Relative abundance data, which sums to 100%, is compositional. An increase in the relative abundance of one taxon necessitates an apparent decrease in others, which can produce spurious correlations and mask true biological effects [5]. Absolute quantification contextualizes the microbial signal, distinguishing between a substantial population of resident microbes and trace-level contamination.

Methods for Absolute Quantification

Several methods can bridge the gap from relative to absolute abundance:

- Bacterial-to-Host (B:H) DNA Ratio: A recently developed method estimates bacterial biomass in host-associated samples using the ratio of bacterial to host DNA reads in metagenomic data. This uses the host DNA as an inherent internal standard, requiring no additional experiments [6].

- Cellular Internal Standards: Known quantities of non-native cells (e.g., from an unrelated environment) or synthetic DNA sequences are added to the sample prior to DNA extraction. By tracking the recovery of these spikes, researchers can compute the absolute abundance of native taxa in the sample [5].

- Total Microbial Load Measurement: Flow cytometry (FCM), which counts cells, or quantitative PCR (qPCR), which quantifies marker-gene copies, can provide total microbial load, which can then be multiplied by relative abundances from sequencing to obtain absolute abundances [5].

Defining and accurately characterizing low-biomass environments—from human tissues to extreme habitats—remains one of the most technically demanding pursuits in microbiology. The history of controversies in this field underscores the necessity of rigorous, contamination-aware methodologies. As outlined in this guide, success depends on a multi-faceted strategy: comprehensive control schemes, stringent decontamination protocols, unconfounded study designs, and a commitment to moving beyond relative abundance data through absolute quantification. By adopting these practices, researchers can ensure that future discoveries in these elusive frontiers are robust, reproducible, and biologically meaningful, fully realizing the potential of low-biomass microbiome research to advance human health and environmental science.

High-throughput sequencing has revolutionized microbiome research, yet the standard analytical paradigm relies on relative abundance data that inherently distorts ecological reality. This compositional data, constrained to a constant sum, introduces severe analytical pathologies including spurious correlations, false positives in differential abundance testing, and an inability to discern true population dynamics. The problem becomes particularly acute in low-biomass environments where contaminant DNA disproportionately influences results. This technical review examines the mathematical foundations of the compositional data problem, demonstrates how relative abundance metrics can produce misleading biological conclusions, and presents rigorous experimental and computational solutions centered on absolute quantification. By integrating compositional data analysis (CoDA) principles with emerging absolute quantification techniques, researchers can overcome these limitations and achieve more accurate ecological interpretations of microbiome data.

Microbiome datasets generated by high-throughput sequencing (HTS) are fundamentally compositional because sequencing instruments deliver reads only up to their fixed capacity, imposing an arbitrary total on the data [9]. This means that HTS output contains information about the relationships between microbial taxa rather than their absolute abundances in the original environment [9]. The constant-sum constraint transforms the data into a closed array where individual components cannot vary independently—an increase in one taxon's relative abundance necessarily produces decreases in others, regardless of their actual absolute abundances [9] [10].
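A small worked example may help illustrate the closure effect. In the sketch below, the taxon names and counts are hypothetical; only one taxon changes in absolute terms, yet the relative abundance of the other taxon shifts as well.

```python
def close(counts: dict) -> dict:
    """Convert absolute counts to relative abundances that sum to 1."""
    total = sum(counts.values())
    if total <= 0:
        raise ValueError("total count must be positive")
    return {taxon: n / total for taxon, n in counts.items()}


# Hypothetical absolute counts: taxon_A is unchanged, taxon_B triples.
before = {"taxon_A": 100, "taxon_B": 100}
after = {"taxon_A": 100, "taxon_B": 300}

rel_before = close(before)
rel_after = close(after)

# taxon_A falls from 50% to 25% of the community although its
# absolute abundance did not change at all.
print(rel_before["taxon_A"], rel_after["taxon_A"])
```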

The distinction between absolute and relative abundance represents a critical conceptual divide in microbiome analysis. Absolute abundance refers to the actual number of a specific microorganism present in a sample, typically quantified as "number of microbial cells per gram/milliliter of sample" [11]. In contrast, relative abundance describes the proportion of a specific microorganism within the entire microbial community, where the sum of all relative abundances typically equals 100% [11]. This distinction becomes biologically significant when considering that two subjects may harbor the same relative abundance of a pathogen (e.g., 20%), but if one has double the total microbial load, they consequently harbor twice the absolute quantity of that pathogen [12].

Table 1: Fundamental Differences Between Absolute and Relative Abundance

| Characteristic | Absolute Abundance | Relative Abundance |
|---|---|---|
| Definition | Actual number of microorganisms in a sample | Proportion of a microorganism within the community |
| Measurement Unit | Cells per gram/milliliter | Percentage or proportion (0-100%) |
| Sum Constraint | No constant sum | Constant sum (typically 100%) |
| Dependence on Other Taxa | Independent | Dependent on abundances of all other taxa |
| Information Content | True quantitative abundance | Proportional relationships |
| Impact of Total Load Changes | Directly reflects changes | Can mask true changes |

In low-biomass environments—including certain human tissues (tumors, lungs, placenta, blood), the atmosphere, plant seeds, and treated drinking water—the compositional problem becomes particularly severe [3] [1]. With minimal starting microbial DNA, even small amounts of contaminant DNA can disproportionately influence results, potentially leading to spurious conclusions about microbial presence and community structure [3] [1].

The Mathematical Pathology of Compositional Data

The Spurious Correlation Problem

Compositional data exhibit a negative correlation bias and fundamentally different correlation structure than underlying count data [9]. This pathology arises because the data reside on a simplex space—a geometric representation where the whole is the sum of its parts—rather than in real Euclidean space [10]. The consequences are profound: correlation coefficients calculated from raw relative abundances are inherently misleading and cannot reliably indicate underlying biological relationships.

The mathematical basis for this distortion was first recognized by Pearson in 1897 and has been rediscovered repeatedly in various fields, including microbiome research [9]. The core issue stems from the closure property of compositional data, where the measurement of any single component depends on all other components in the system. This dependency creates a situation where apparent "increases" in one taxon may actually reflect decreases in others, completely reversing biological interpretation [10].

The severity of false-positive rates in differential abundance testing is particularly alarming. Studies have demonstrated that traditional analyses of relative abundance data can produce false-positive rates exceeding 30%, even with modest sample sizes [10]. This high error rate stems from the inherent interdependency of relative values, where an increase in one taxon's relative abundance mathematically necessitates decreases in others, creating the illusion of differential abundance where none exists in absolute terms [10].
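The negative correlation bias can be reproduced with a short simulation. The sketch below draws statistically independent absolute abundances for three taxa and then closes each sample to proportions; the distribution parameters, sample size, and seed are arbitrary assumptions chosen for illustration. For exchangeable components constrained to sum to one, the expected pairwise correlation is -1/(D-1), which is -0.5 for D = 3, even though the underlying absolute abundances are uncorrelated.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def simulate(n_samples=2000, n_taxa=3, seed=0):
    """Return (correlation of absolute counts, correlation after closure)."""
    rng = random.Random(seed)
    # Independent log-normal absolute abundances (assumed parameters).
    absolute = [
        [rng.lognormvariate(10.0, 0.5) for _ in range(n_taxa)]
        for _ in range(n_samples)
    ]
    # Closure: divide each sample by its own total.
    relative = [[v / sum(row) for v in row] for row in absolute]
    abs_r = pearson([r[0] for r in absolute], [r[1] for r in absolute])
    rel_r = pearson([r[0] for r in relative], [r[1] for r in relative])
    return abs_r, rel_r


abs_r, rel_r = simulate()
print(f"absolute counts: r = {abs_r:+.3f}")
print(f"relative abundances: r = {rel_r:+.3f}")
```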

Directional Misinterpretation in Differential Abundance

The inability to determine directionality of change represents one of the most clinically problematic aspects of compositional data analysis. Consider a community with only two taxa: an increase in the ratio between Taxon A and Taxon B could indicate (i) Taxon A increased, (ii) Taxon B decreased, (iii) a combination of both effects, (iv) both increased but Taxon A increased more, or (v) both decreased but Taxon B decreased more [13]. Knowing which scenario occurs is crucial for biological interpretation but cannot be determined from relative abundance data alone [13].
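The five scenarios can be made concrete with hypothetical numbers. In the sketch below, every "after" state is constructed so that the A:B ratio doubles from 1.0 to 2.0, which means the relative abundance data are identical across scenarios while the absolute changes differ in direction and size. All counts are invented for illustration.

```python
# Hypothetical absolute counts (taxon A, taxon B) before treatment.
BEFORE = (100, 100)

# One "after" state per scenario listed in the text.
SCENARIOS = {
    "(i) A increased": (200, 100),
    "(ii) B decreased": (100, 50),
    "(iii) A increased and B decreased": (140, 70),
    "(iv) both increased, A more": (400, 200),
    "(v) both decreased, B more": (50, 25),
}


def ratio(counts):
    """Ratio of taxon A to taxon B."""
    a, b = counts
    return a / b


def relative_a(counts):
    """Relative abundance of taxon A in a two-taxon community."""
    a, b = counts
    return a / (a + b)


for name, after in SCENARIOS.items():
    print(
        f"{name:<36} A:B {ratio(BEFORE):.1f} -> {ratio(after):.1f} | "
        f"A share {relative_a(BEFORE):.2f} -> {relative_a(after):.2f} | "
        f"absolute A {BEFORE[0]} -> {after[0]}, B {BEFORE[1]} -> {after[1]}"
    )
```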

Real-world examples demonstrate how profoundly relative abundance can distort biological reality. In soil microbiome research, Yang et al. (2018) found that 33.87% of bacterial genera showed opposite change directions—described as decreased relative abundance but increased absolute abundance—when analyzed using absolute quantification methods [14]. Similarly, in sodium azide-treated soil, 40.58% of total genera exhibited an upregulation trend using relative quantification but downregulation via absolute quantification [14]. These discrepancies arise from failure to account for changes in total bacterial count, leading to false-positive results and incorrect biological interpretations [14].

Table 2: Common Analytical Pitfalls in Relative Abundance Analysis

| Pitfall | Mathematical Cause | Biological Consequence |
|---|---|---|
| Spurious Correlation | Negative bias due to sum constraint | False associations between taxa |
| Directional Ambiguity | Inability to distinguish increases from decreases | Misinterpretation of treatment effects |
| False Positives in Differential Abundance | Interdependency of relative values | Incorrect identification of biomarker taxa |
| Compositional Bias in Diversity Metrics | Uneven sampling depth and sensitivity to dominant taxa | Distorted alpha and beta diversity estimates |
| Subsetting/Aggregation Artifacts | Change in reference frame when selecting taxa | Inconsistent results at different taxonomic levels |

Experimental Solutions: Absolute Quantification Frameworks

Digital PCR Anchoring

A rigorous absolute quantification framework based on digital PCR (dPCR) anchoring combines the precision of dPCR with the high-throughput nature of 16S rRNA gene amplicon sequencing [13]. This method involves using dPCR to obtain an absolute count of 16S rRNA gene copies in a sample, then using this value to convert relative abundances from sequencing to absolute quantities [13].

The experimental workflow begins with efficient DNA extraction across diverse sample types. Validation studies spiking a defined 8-member microbial community into gastrointestinal samples from germ-free mice demonstrated near-equal and complete recovery of microbial DNA over five orders of magnitude [13]. The lower limit of quantification (LLOQ) was established at 4.2 × 10⁵ 16S rRNA gene copies per gram for stool/cecum contents and 1 × 10⁷ copies per gram for mucosal samples [13]. The critical innovation lies in using dPCR to precisely quantify total 16S rRNA gene copies, then applying the formula: Absolute Abundance of Taxon A = (Relative Abundance of Taxon A) × (Total 16S rRNA Gene Copies) [13].
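The anchoring formula can be sketched in a few lines. The example below is an illustrative assumption of how one might apply it, not the cited authors' code: the function name, the dictionary keys, and the example numbers are hypothetical, while the two LLOQ values are the ones quoted above. Note that the output is in 16S rRNA gene copies per gram rather than cells, because the anchor is a gene-copy count.

```python
import math

# LLOQ values quoted in the text (16S rRNA gene copies per gram).
LLOQ_COPIES_PER_GRAM = {"stool_or_cecum": 4.2e5, "mucosa": 1e7}


def anchor_to_total_copies(relative_abundance, total_copies_per_gram, sample_type):
    """Scale relative abundances by a dPCR-measured total 16S copy number."""
    lloq = LLOQ_COPIES_PER_GRAM[sample_type]
    if total_copies_per_gram < lloq:
        raise ValueError(
            f"total load {total_copies_per_gram:.3g} is below the LLOQ {lloq:.3g}"
        )
    if not math.isclose(sum(relative_abundance.values()), 1.0, abs_tol=1e-6):
        raise ValueError("relative abundances must sum to 1")
    return {
        taxon: fraction * total_copies_per_gram
        for taxon, fraction in relative_abundance.items()
    }


# Hypothetical sample: two taxa and an assumed dPCR total of 1e9 copies/g.
absolute = anchor_to_total_copies(
    {"taxon_A": 0.2, "taxon_B": 0.8}, 1e9, "stool_or_cecum"
)
print(absolute)
```

Rejecting samples below the LLOQ, rather than scaling them anyway, is a design choice made here for illustration; a real pipeline might instead report such samples as below quantification.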

This approach was validated in a murine ketogenic-diet study comparing microbial loads in lumenal and mucosal samples along the GI tract. Quantitative measurements of absolute abundances revealed decreases in total microbial loads on the ketogenic diet that were undetectable using relative abundance analysis, enabling researchers to determine differential effects of diet on each taxon with unprecedented accuracy [13].

Internal Standard-Based Quantification

Internal standard (IS)-based absolute quantification involves adding known quantities of exogenous cells or DNA to samples prior to DNA extraction [5]. Also known as "spike-in" methods, these approaches use the recovery rate of the internal standard to calibrate the entire measurement process, accounting for variations in DNA extraction efficiency, PCR amplification bias, and other technical variables [5].

The optimal internal standard should be absent from native samples yet resemble the target microorganisms in cell structure and DNA extraction characteristics. Common choices include synthetic communities, purified DNA from non-native species, or genetically modified cells [5]. The absolute abundance of native taxa is calculated using the formula: Absolute Abundance = (Relative Abundance of Native Taxon) × (Amount of Spiked IS / Relative Abundance of IS) [5].
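The spike-in formula above can be sketched as follows. This is a minimal illustration that assumes perfect proportionality between reads and template and ignores differences in 16S copy number or lysis efficiency; the function name, taxon labels, read counts, and spike amount are all hypothetical.

```python
def spike_in_absolute(read_counts: dict, spike_taxon: str, spike_amount: float) -> dict:
    """Absolute abundance = relative abundance x (spiked amount / relative abundance of IS)."""
    spike_reads = read_counts.get(spike_taxon, 0)
    if spike_reads <= 0:
        raise ValueError("internal standard was not recovered in the sequencing data")
    total_reads = sum(read_counts.values())
    relative = {taxon: n / total_reads for taxon, n in read_counts.items()}
    # Units of the spiked amount (cells or copies) carry over to the result.
    scale = spike_amount / relative[spike_taxon]
    return {
        taxon: rel * scale
        for taxon, rel in relative.items()
        if taxon != spike_taxon
    }


# Hypothetical library: 1e6 spike-in cells added before extraction.
reads = {"spike_in": 1_000, "taxon_A": 3_000, "taxon_B": 6_000}
print(spike_in_absolute(reads, "spike_in", 1e6))
```

Because the relative abundances of the taxon and the internal standard share the same denominator, the calculation reduces to the read ratio multiplied by the spiked amount, so the estimate does not depend on sequencing depth.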

This method was applied to analyze microbial population dynamics in horizontal surface layer soil and parent material soil. The absolute quantification revealed that the total bacteria count in the developed surface layer soil was 4.78 times less than the parent material soil (3.55 × 10⁸ vs. 1.7 × 10⁹ cells/g) [14]. Crucially, absolute quantification detected significant changes in 20 out of 25 total phyla, while relative quantification detected only 12 phyla, demonstrating the enhanced sensitivity of absolute methods [14].

Flow Cytometry with Sequencing Integration

Flow cytometry provides a robust method for quantifying total microbial load by counting individual cells in a sample [12]. When combined with sequencing, flow cytometry enables conversion of relative abundances to absolute quantities without the need for standard curves [12]. The procedure involves analyzing sample aliquots using flow cytometry to obtain total cell counts, then applying the formula: Absolute Abundance = Relative Abundance × Total Cell Count [12].

This approach is particularly valuable for detecting clinically relevant changes in total microbial load. For example, healthy adult human fecal samples show up to tenfold variation (10¹⁰ to 10¹¹ cells/g) with daily fluctuations of 3.8 × 10¹⁰ cells/g [14]. Similarly, mucosal bacterial loads in patients with Crohn's disease and other inflammatory bowel diseases are higher than in healthy controls, a difference that would be obscured in relative abundance analyses [14]. Flow cytometry counting is most suitable for environmental samples with low biomass and well-dispersed cells, such as drinking water, cooling water samples, and river samples [5].

Diagram 1: Absolute Quantification Experimental Workflow. This integrated approach combines internal standards, digital PCR, and high-throughput sequencing to derive absolute abundances.

Computational Solutions: Compositional Data Analysis (CoDA)

Log-Ratio Transformations

Compositional data analysis (CoDA) provides a mathematical framework that respects the relative nature of microbiome data while avoiding spurious conclusions [9] [10]. The core innovation involves transforming data from the simplex to real Euclidean space using log-ratio transformations, which effectively eliminates the sum constraint [10].

The centered log-ratio (CLR) transformation normalizes abundances to the geometric mean of a sample. For a composition with D components, the CLR transformation is defined as:

CLR(x) = [ln(x₁/g(x)), ln(x₂/g(x)), ..., ln(x_D/g(x))]

where g(x) is the geometric mean of all components [10]. This transformation symmetrizes the data and enables application of standard statistical methods that assume Euclidean geometry [10].
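A minimal sketch of the CLR transformation follows. The optional pseudocount for handling zeros is an assumption added here for practicality (the logarithm is undefined at zero); the source does not prescribe a zero-handling strategy, and the function name is hypothetical.

```python
import math


def clr(composition, pseudocount=0.0):
    """Centered log-ratio: log of each part divided by the geometric mean."""
    values = [v + pseudocount for v in composition]
    if any(v <= 0 for v in values):
        raise ValueError("all parts must be positive (consider a pseudocount)")
    logs = [math.log(v) for v in values]
    # The mean of the logs equals the log of the geometric mean g(x).
    mean_log = sum(logs) / len(logs)
    return [log_v - mean_log for log_v in logs]


# The result is scale-invariant: counts and proportions give the same CLR values.
print(clr([1, 2, 4]))
print(clr([10, 20, 40]))
```

CLR values always sum to zero within a sample, so downstream methods that require a full-rank covariance matrix may need additional care.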

The additive log-ratio (ALR) transformation normalizes abundances to a carefully chosen reference component. The transformation is defined as:

ALR(x) = [ln(x₁/x_D), ln(x₂/x_D), ..., ln(x_{D-1}/x_D)]

where x_D is the reference component [10]. The choice of reference is critical and should ideally be a taxon that is abundant, prevalent, and biologically stable across samples [10].
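The ALR transformation can be sketched in the same style. The function name and the default of using the last component as reference are assumptions for illustration; in practice the reference should be chosen according to the criteria described above.

```python
import math


def alr(composition, ref_index=-1):
    """Additive log-ratio: log of each part divided by a reference part."""
    if any(v <= 0 for v in composition):
        raise ValueError("all parts must be positive")
    ref_position = ref_index % len(composition)  # supports negative indices
    reference = composition[ref_position]
    # The reference itself is dropped, leaving D-1 coordinates.
    return [
        math.log(v / reference)
        for i, v in enumerate(composition)
        if i != ref_position
    ]


# Hypothetical three-part composition with the last part as reference.
print(alr([1, 2, 4]))
```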

When applied to glycomics data (which share the compositional nature of microbiome data), CLR transformation resulted in dramatically improved clustering compared to raw relative abundances (Dunn index 0.828 vs. 8.647) [10]. Similarly, in a bacteremia N-glycomics dataset, Aitchison distance (conventionally defined as the Euclidean distance between CLR-transformed compositions) better separated patient and donor classes than clustering on log-transformed abundances (adjusted Rand index: 0.79 vs. 0.74) [10].

Experimental Design for Low-Biomass Studies

Low-biomass microbiome research requires specialized experimental designs to address contamination challenges [3] [1]. Process controls that represent contamination sources are essential, including blank extraction controls, no-template controls, and empty collection kit controls [3]. These controls should be included in every processing batch to account for batch-specific contamination [3].

Avoiding batch confounding is particularly critical. Experimental designs must ensure that phenotypes and covariates of interest are not confounded with batch structure at any experimental stage [3]. This requires active de-confounding through balanced sample allocation across batches rather than reliance on randomization alone [3].
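One way to allocate samples so that groups are balanced across batches is stratified round-robin assignment, sketched below. This is an illustrative assumption rather than a procedure taken from the cited work; the function name, sample identifiers, and batch count are hypothetical.

```python
import random
from collections import defaultdict


def allocate_balanced(sample_groups: dict, n_batches: int, seed: int = 0) -> dict:
    """Deal each group's samples across batches so every batch sees every group."""
    if n_batches < 1:
        raise ValueError("need at least one batch")
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample_id, group in sample_groups.items():
        by_group[group].append(sample_id)

    batches = {b: [] for b in range(n_batches)}
    offset = 0  # keeps overall batch sizes even across groups
    for group in sorted(by_group):
        ids = sorted(by_group[group])
        rng.shuffle(ids)  # randomize order within the group
        for i, sample_id in enumerate(ids):
            batches[(i + offset) % n_batches].append(sample_id)
        offset += len(ids)
    return batches


# Hypothetical study: 8 cases and 8 controls processed in 4 extraction batches.
sample_groups = {f"case_{i}": "case" for i in range(8)}
sample_groups.update({f"control_{i}": "control" for i in range(8)})
for batch, members in allocate_balanced(sample_groups, n_batches=4).items():
    print(batch, members)
```

Shuffling within each group retains randomization, while the round-robin dealing guarantees that per-batch group counts differ by at most one. The same idea would need to be repeated at each experimental stage (extraction, amplification, sequencing run) to avoid confounding at any of them.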

Minimizing well-to-well leakage ("cross-contamination" or "splashome") requires physical separation of samples during processing and inclusion of negative controls interspersed with samples [3] [1]. Recent research demonstrates that well-to-well leakage into contamination controls violates the assumptions of most computational decontamination methods, highlighting the need for physical prevention rather than computational correction [3].

Table 3: Research Reagent Solutions for Absolute Quantification

| Reagent/Method | Function | Key Considerations |
|---|---|---|
| Digital PCR (dPCR) | Absolute quantification of 16S rRNA gene copies | Microfluidic format reduces host DNA amplification bias; no standard curve needed |
| Flow Cytometry | Total microbial cell counting | Distinguishes live/dead cells with appropriate dyes; requires single-cell suspensions |
| Internal Standards (Spike-ins) | Calibration of extraction and amplification efficiency | Should mimic native cells in lysis characteristics; must be absent from native samples |
| CARD-FISH Probes | Specific taxon quantification via fluorescence | Signal amplification enables low-abundance taxon detection; requires specialized expertise |
| DNA Decontamination Solutions | Remove contaminating DNA from reagents and surfaces | Sodium hypochlorite, UV-C exposure, or commercial DNA removal solutions |

Case Study: Absolute Quantification Reveals Drug Effects Obscured by Relative Abundance

A compelling demonstration of absolute quantification's power comes from a 2025 study comparing berberine (BBR) and sodium butyrate (SB) effects on gut microbiota in DSS-induced colitis mice [15]. Using both relative and absolute quantitative sequencing, researchers found that relative abundance measurements failed to accurately reflect the true microbial changes induced by these compounds [15].

Notably, absolute quantitative sequencing provided results more consistent with the actual microbial community and revealed drug effects that were obscured or misrepresented by relative abundance analysis [15]. Specifically, the regulatory effects of BBR on gut microbiota were more accurately captured using absolute quantification, demonstrating that relative quantitative sequencing analyses are prone to misinterpretation and incorrect correlation of results [15].

This study underscores how absolute quantitative analysis better represents true microbial counts when evaluating drug modulatory effects on the microbiome [15]. The findings have vital implications for pharmaceutical development targeting the microbiome, as relative abundance measurements might lead to erroneous conclusions about drug mechanisms or loss of key bacterial genera involved in therapeutic effects [15].

The compositional nature of relative abundance data represents a fundamental challenge in microbiome research that transcends analytical approaches. While compositional data analysis methods provide mathematical rigor for working within the relative abundance framework, absolute quantification approaches offer the most direct path to ecological truth by measuring actual cellular abundances in biological samples.

The future of rigorous microbiome science lies in integrating absolute quantification into standard practice, particularly for low-biomass environments where compositional effects are most pronounced. Methods such as dPCR anchoring, internal standard calibration, and flow cytometry integration now provide feasible pathways to absolute quantification without prohibitive cost or technical burden. By adopting these approaches and following rigorous experimental designs that minimize contamination, microbiome researchers can overcome the distortions of compositional data and build a more accurate understanding of microbial ecology in health and disease.

The Pervasive Challenge of Contamination and Environmental DNA

In the study of low-biomass environments—such as human tissues, treated drinking water, and hyper-arid soils—the inevitability of contamination presents a fundamental challenge that can compromise scientific validity. These environments harbor minimal microbial biomass, approaching the limits of detection for standard DNA-based sequencing approaches [1]. The proportional impact of contaminating DNA is dramatically amplified in these systems, where the target DNA 'signal' can be easily overwhelmed by contaminant 'noise' [1]. This challenge extends beyond mere technical nuisance; it has fueled major scientific controversies, including debates surrounding the existence of a placental microbiome and the accurate characterization of tumor microbiomes, where initial findings were later attributed to contamination [3]. Consequently, rigorous contamination control is not simply a best practice but a foundational requirement for generating reliable data, particularly for absolute quantification where accurate measurement of DNA copy numbers is paramount.

Contaminating DNA can infiltrate an experiment at virtually any stage, from sample collection to data analysis. Recognizing these sources is the first step in developing effective mitigation strategies.

The major vectors for introducing contamination include:

- Human Operators: Microbial cells and DNA can be shed from skin, hair, or clothing, or introduced via aerosols generated from breathing or talking [1].

- Sampling Equipment: Collection tools, vessels, and surfaces can carry microbial DNA from previous uses or the environment [1].

- Laboratory Reagents and Kits: Commercial kits for DNA extraction, amplification, and library preparation often contain trace amounts of microbial DNA that become detectable in low-biomass contexts [1] [3].

- Cross-Contamination (Well-to-Well Leakage): DNA can transfer between samples processed concurrently, for example, in adjacent wells of a 96-well plate, a phenomenon also termed the "splashome" [1] [3].

Table 1: Summary of Major Contamination Sources and Their Vectors

| Source | Vectors | Typical Impact |
|---|---|---|
| Human Operator | Skin cells, aerosols, improper personal protective equipment (PPE) | Introduction of human-associated microbes (e.g., Propionibacterium, Staphylococcus) |
| Laboratory Reagents | Extraction kits, polymerase enzymes, water | Low-diversity profiles dominated by taxa associated with ultrapure water and reagents (e.g., Caulobacter, Burkholderia) |
| Sampling Equipment | Non-sterile swabs, collection tubes, fluids | Environmental species (e.g., from soil or water) distorting in-situ signals |
| Cross-Contamination | Aerosols during pipetting, poorly sealed plates | False positives, blending of community signatures between samples |

The Analytical Challenge: Confounding and Signal Distortion

The presence of contamination is problematic enough, but its impact is magnified when confounded with the experimental variables of interest. If samples from different experimental groups (e.g., case vs. control) are processed in separate batches using different reagent lots or by different personnel, the differential contamination profiles can create artifactual signals that are misinterpreted as biological reality [3]. In such scenarios, what appears to be a statistically significant biomarker could merely reflect batch-specific contamination.

A Framework for Contamination Mitigation: From Collection to Analysis

A multi-layered defense strategy is essential to minimize, identify, and account for contamination throughout the research workflow.

Pre-Sampling and Collection Protocols

During the initial stages of research, proactive measures are critical:

- Decontamination of Equipment: Use single-use, DNA-free consumables where possible. Reusable equipment should be decontaminated with 80% ethanol to kill organisms, followed by a nucleic acid degrading treatment (e.g., a bleach solution or UV-C irradiation) to remove residual DNA [1].

- Use of Personal Protective Equipment (PPE): Operators should wear gloves, masks, clean suits, and shoe covers as appropriate to create a barrier between the sample and potential human contaminants [1].

- Environmental Controls: Sample collection should minimize exposure to ambient air and surfaces. In some cases, cleanroom-level protocols may be necessary [1].

Essential Experimental Controls

The use of comprehensive process controls is non-negotiable for identifying the contaminant profile of a workflow [3]. These controls should be processed alongside actual samples through all stages.

Table 2: Critical Process Controls for Low-Biomass Studies

| Control Type | Description | Function |
|---|---|---|
| Field Blank | An empty, sterile collection vessel taken into the field. | Identifies contamination from collection vessels and the field environment. |
| Extraction Blank | Reagents without a sample carried through the DNA extraction process. | Reveals contamination inherent to extraction kits and reagents. |
| PCR Blank | Molecular grade water used as a template in amplification. | Detects contamination in PCR/master mix reagents and the laboratory environment. |
| Internal Standards | Known quantities of synthetic or foreign DNA spikes. | Monitors PCR inhibition, quantifies efficiency, and enables absolute quantification [16]. |

Strategic Study Design

Proper experimental design is the most powerful tool for neutralizing the effects of unavoidable contamination.

- Avoid Batch Confounding: The highest priority is to ensure that biological groups of interest are distributed evenly across all processing batches (e.g., DNA extraction plates, sequencing runs). This prevents contamination and batch effects from being misinterpreted as biological signals [3].

- Randomization and Replication: Sample processing order should be randomized with respect to experimental groups. Including technical replicates helps assess variability and the impact of stochastic contamination.
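The two design rules above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed procedure: the function names `assign_batches` and `processing_order`, the round-robin dealing scheme, and the fixed seeds are all assumptions made for the example.

```python
import random
from collections import defaultdict


def assign_batches(sample_groups, n_batches, seed=0):
    """Spread each biological group evenly across processing batches.

    sample_groups: dict mapping sample ID -> group label (e.g. "case").
    Returns a dict mapping sample ID -> 0-based batch index.
    """
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample_id, group in sample_groups.items():
        by_group[group].append(sample_id)

    assignment = {}
    offset = 0
    for group in sorted(by_group):
        members = by_group[group]
        rng.shuffle(members)  # random order within the group
        for i, sample_id in enumerate(members):
            # Deal samples round-robin so every batch gets a share of the group.
            assignment[sample_id] = (i + offset) % n_batches
        offset += len(members)  # next group continues where this one stopped
    return assignment


def processing_order(assignment, seed=0):
    """Return a randomized processing order for the samples in each batch."""
    rng = random.Random(seed)
    batches = defaultdict(list)
    for sample_id, batch in assignment.items():
        batches[batch].append(sample_id)
    for members in batches.values():
        members.sort()        # make the result independent of dict ordering
        rng.shuffle(members)  # then randomize with respect to group
    return dict(batches)
```

Recording the seed alongside the plate map keeps the layout reproducible, which helps when a batch effect has to be traced back later.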

Advanced Methodologies for Absolute Quantification

Moving from relative to absolute quantification is a crucial frontier in low-biomass research, as it allows researchers to distinguish genuine, abundant signals from low-level contamination.

The qMiSeq Approach

The quantitative MiSeq (qMiSeq) approach is a metabarcoding method that converts sequence read counts into absolute DNA copy numbers. This is achieved by spiking each sample with known quantities of synthetic internal standard DNA sequences (which are distinguishable from natural sequences) prior to library preparation [16]. A sample-specific linear regression is then created between the known standard copy numbers and their observed read counts. This regression model is used to convert the read counts of all other taxa in that sample into estimated DNA copies, thereby correcting for sample-specific PCR bias and inhibition [16]. This method has shown significant positive correlations with both the abundance and biomass of fish communities in environmental studies, validating its utility for quantitative assessment [16].
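The conversion step described above can be sketched as follows. The sketch assumes the sample-specific regression is forced through the origin (zero copies give zero reads); the function names and the example numbers are hypothetical and are not taken from [16].

```python
def fit_standard_slope(standard_copies, standard_reads):
    """Least-squares slope (reads per DNA copy) for a line through the origin."""
    numerator = sum(c * r for c, r in zip(standard_copies, standard_reads))
    denominator = sum(c * c for c in standard_copies)
    if denominator == 0:
        raise ValueError("standards must include non-zero copy numbers")
    return numerator / denominator


def reads_to_copies(taxon_reads, slope):
    """Convert each taxon's read count in one sample to estimated DNA copies."""
    if slope <= 0:
        raise ValueError("slope must be positive; check the standards")
    return {taxon: reads / slope for taxon, reads in taxon_reads.items()}


# Hypothetical sample: four internal standards and two taxa.
slope = fit_standard_slope([5, 25, 50, 100], [50, 250, 500, 1000])
estimated_copies = reads_to_copies({"taxon_a": 300, "taxon_b": 40}, slope)
```

Because the slope is fitted per sample, a sample with strong PCR inhibition yields fewer reads per standard copy and its taxa are scaled up accordingly.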

Digital PCR (dPCR) and Quantitative PCR (qPCR)

For targeted, species-specific detection, these methods provide high-sensitivity quantification:

- qPCR relies on comparing the amplification cycle threshold (Ct) of a sample to a standard curve of known DNA concentrations to estimate copy number. The development of species-specific assays, including careful design and validation of primers and probes, is critical for accuracy [17].

- dPCR partitions a sample into thousands of individual reactions, providing absolute quantification without the need for a standard curve. It often offers superior sensitivity and resistance to PCR inhibitors, making it well-suited for low-biomass targets.
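As a rough illustration of why dPCR needs no standard curve, the concentration can be estimated from the fraction of positive partitions with a Poisson correction. The function name and the requirement to pass the partition volume explicitly are assumptions of this sketch; the partition volume is instrument-specific.

```python
import math


def dpcr_copies_per_ul(positive_partitions, total_partitions, partition_volume_nl):
    """Estimate target copies per microliter of reaction from dPCR counts.

    A partition is positive if it holds one or more copies, so the mean
    number of copies per partition is -ln(1 - fraction_positive).
    """
    if not 0 <= positive_partitions < total_partitions:
        raise ValueError("need 0 <= positives < total (a saturated run is unusable)")
    fraction_positive = positive_partitions / total_partitions
    copies_per_partition = -math.log(1.0 - fraction_positive)
    partition_volume_ul = partition_volume_nl * 1e-3  # nL -> uL
    return copies_per_partition / partition_volume_ul
```

The correction matters most at high occupancy; when only a few partitions are positive, the estimate is close to the simple ratio of positives to total volume.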

The Scientist's Toolkit: Essential Reagent Solutions

Successful low-biomass research relies on a suite of specialized reagents and materials, each chosen to minimize interference and maximize fidelity.

Table 3: Key Research Reagent Solutions and Their Functions

| Reagent/Material | Function | Technical Considerations |
|---|---|---|
| DNA-Decontaminated Reagents | Molecular grade water, enzymes, and buffers treated to remove microbial DNA. | Critical for all molecular steps. Verify via rigorous negative controls. |
| Ultra-Clean Collection Swabs & Tubes | Pre-sterilized, DNA-free consumables for sample acquisition and storage. | Prefer plasticware treated by autoclaving and UV irradiation. |
| Internal Standard DNA | Synthetic, non-natural DNA sequences (e.g., gBlocks, Spike-ins). | Added to samples pre-extraction (for process control) or pre-amplification (for qMiSeq) [16]. |
| High-Fidelity DNA Polymerase | Enzyme for PCR with high processivity and low error rate. | Reduces amplification artifacts and chimeras in final sequences. |
| Barcoded Adapters & Index Primers | Oligonucleotides for labeling and preparing sequencing libraries. | Enable multiplexing of samples; unique dual indexing is essential to identify cross-talk [3]. |
| DNA Removal Solutions | Chemical agents such as sodium hypochlorite (bleach). | Used for decontaminating work surfaces and non-disposable equipment [1]. |

Visualizing the Experimental Workflow for Quantitative Low-Biomass Analysis

The following diagram outlines a robust, contamination-aware workflow integrating the principles and methods discussed above.

The pervasive challenge of contamination in low-biomass and eDNA research demands a paradigm shift from simple detection to rigorous, quantification-focused science. By integrating meticulous experimental design, comprehensive controls, and advanced quantitative methods like qMiSeq, researchers can transcend mere contamination awareness and achieve true quantitative accuracy. This disciplined approach is the foundation upon which reliable, reproducible, and biologically meaningful conclusions are built, ultimately advancing our understanding of the hidden microbial worlds in low-biomass environments.

This case study examines the transformative role of relic-DNA depletion in skin microbiome research, a critical advancement for achieving absolute quantification in low-biomass environments. Traditional sequencing methods conflate DNA from live bacterial cells with extracellular DNA and genetic material from dead cells, significantly skewing microbial community profiles. By implementing innovative methodologies that discriminate between intact and relic DNA, researchers can overcome fundamental biases that have historically obstructed accurate characterization of the living skin microbiome. This technical analysis details experimental protocols, quantitative findings, and methodological frameworks that demonstrate how relic-DNA depletion reveals authentic microbial patterns, providing a refined baseline for mechanistic studies of skin health, disease progression, and therapeutic development.

The skin microbiome presents unique investigational challenges due to its inherently low microbial biomass, where standard sequencing approaches struggle to distinguish true biological signals from technical artifacts [18]. In these environments, relic DNA—extracellular DNA and genetic material from non-viable cells—can comprise a substantial portion of sequenced material, dramatically distorting community profiles [19] [20]. This relic DNA acts as a "genetic fossil record" of past microbial inhabitants rather than representing the currently living community, complicating efforts to establish causal relationships between microbiome composition and skin health or disease states.

The imperative for absolute quantification stems from the limitations of relative abundance data, which can produce misleading interpretations in dynamic microbial systems [14]. When data are expressed only as relative proportions, an apparent increase in one taxon's abundance may result from the actual decline of other community members rather than its true proliferation. This compositional nature of standard sequencing data obscures true population dynamics and interspecies interactions, necessitating methods that provide cell-count resolution for accurate ecological inference [14] [21].

The Problem: Relic DNA Obscures the Living Skin Microbiome

Quantifying the Relic DNA Burden in Skin Samples

Recent investigations have revealed the astonishing prevalence of relic DNA in skin microbiome samples. One landmark study demonstrated that up to 90% of microbial DNA obtained from standard skin swabs originates from non-viable sources rather than living bacterial communities [19]. This overwhelming proportion of relic material means that conventional sequencing approaches primarily capture a historical archive of microbial presence rather than the physiologically active community relevant to skin health and function.

The impact of this relic DNA burden is particularly pronounced in skin environments due to their low bacterial density compared to other body sites. With an estimated 10^4 to 10^6 bacteria inhabiting each square centimeter of skin, even minimal relic DNA contamination can disproportionately influence community profiles [18]. This effect varies across different skin sites, with dry regions typically exhibiting lower biomass and consequently greater susceptibility to relic DNA bias [18].

Consequences for Microbiome Data Interpretation

The presence of substantial relic DNA creates multiple interpretive challenges for skin microbiome researchers:

- Inflated Diversity Estimates: Relic DNA preserves genetic signatures of transient or deceased microorganisms, creating the illusion of greater microbial diversity than actually exists in the living community [20].

- Obscured Ecological Patterns: Spatiotemporal dynamics of viable microbial populations are masked by the stable background of relic DNA, blurring distinctions between body sites and individuals [19].

- Misattributed Biological Effects: Interventions that affect microbial viability without immediately removing DNA may produce misleading community profiles that fail to reflect actual therapeutic outcomes.

Methodological Framework: Approaches for Relic-DNA Depletion and Absolute Quantification

Integrated Workflow for Live Microbiome Characterization

The following workflow diagram illustrates the comprehensive integration of relic-DNA depletion with absolute quantification for authentic skin microbiome profiling:

Relic-DNA Depletion Techniques

Benzonase Endonuclease Treatment

Benzonase endonuclease has emerged as a highly effective method for relic-DNA removal in soil and skin microbiomes [20]. This enzyme digests all forms of DNA and RNA without cell membrane penetration, selectively eliminating extracellular nucleic acids while preserving genetic material within intact cells.

Optimized Protocol:

- Sample Preparation: Resuspend skin swab samples in 500μL phosphate-buffered saline (PBS)

- Enzyme Application: Add 2μL Benzonase endonuclease (≥250 units) and incubate at 37°C for 45 minutes

- Reaction Termination: Heat inactivate at 70°C for 10 minutes

- Efficiency Validation: Assess DNA reduction via fluorometric quantification

Comparative studies demonstrate that Benzonase removes relic DNA with 40-60% efficiency in skin samples, approximately double the performance of propidium monoazide (PMA) treatments (0-30% efficiency) [20]. Unlike light-dependent PMA methods, Benzonase functions effectively in opaque media like skin homogenates without requiring photoactivation.

Propidium Monoazide (PMA) Treatment

As an alternative approach, PMA selectively penetrates membrane-compromised cells, intercalates into their DNA, and upon photoactivation binds it covalently, rendering that DNA unavailable for amplification [19]. While less efficient than Benzonase for skin applications, PMA remains valuable for specific experimental contexts requiring viability PCR.

Absolute Quantification Methods

Flow Cytometry for Total Bacterial Load

Flow cytometry provides rapid, single-cell enumeration of bacterial abundance in skin samples, establishing essential baseline data for converting relative sequencing abundances to absolute cell counts [14].

Implementation Protocol:

- Staining: Apply nucleic acid stains (e.g., SYBR Green I) to distinguish microbial cells from background particles

- Calibration: Use fluorescent microspheres of known concentration for instrument calibration

- Analysis: Process samples at a low flow rate, keeping the event rate at or below 100 events/sec, to ensure accurate counting of low-biomass samples

- Gating Strategy: Establish conservative gates based on positive control samples (e.g., pure bacterial cultures)
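Once a total cell count is available, converting relative sequencing abundances to absolute counts is a single scaling step. The sketch below assumes the relative abundances and the flow cytometry count refer to the same sample and the same unit (for example, cells per swab); the function name is hypothetical.

```python
def to_absolute_counts(relative_abundance, total_cells):
    """Scale per-taxon relative abundances by the measured total cell count.

    relative_abundance: dict of taxon -> proportion (any positive scale;
    values are renormalized so they sum to 1 before scaling).
    total_cells: total bacterial count for the same sample.
    """
    total = sum(relative_abundance.values())
    if total <= 0:
        raise ValueError("relative abundances must sum to a positive value")
    return {
        taxon: (proportion / total) * total_cells
        for taxon, proportion in relative_abundance.items()
    }
```

Two samples with identical proportions but a tenfold difference in total load will then differ tenfold for every taxon, which is exactly the information relative data discard.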

Quantitative PCR (qPCR) for Specific Taxa

qPCR enables sensitive, taxon-specific quantification, with reported detection limits as low as 10^3 cells/gram in fecal samples, which suggests it is also suitable for low-biomass skin applications [21].

Strain-Specific qPCR Design Workflow:

- Marker Identification: Identify strain-specific genomic regions through comparative genomics

- Primer Design: Develop primers with 18-25 bp length, 40-60% GC content, and melting temperature of 58-62°C

- Specificity Validation: BLAST analysis against non-target genomes; empirical testing against related strains

- Standard Curve Generation: Use gBlock gene fragments or genomic DNA from pure cultures

- Efficiency Optimization: Adjust annealing temperatures to achieve 90-110% amplification efficiency
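The standard curve and efficiency steps can be sketched as below. Efficiency is derived from the slope of Ct against log10(copies) using the common relation E = 10^(-1/slope) - 1, so a slope near -3.32 corresponds to roughly 100%. The function names are hypothetical and the fit is a plain least-squares line without replicate handling.

```python
import math


def fit_standard_curve(copies, ct_values):
    """Least-squares fit of Ct = slope * log10(copies) + intercept."""
    xs = [math.log10(c) for c in copies]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ct_values) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ct_values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def amplification_efficiency(slope):
    """Amplification efficiency as a fraction (1.0 means 100%)."""
    return 10 ** (-1.0 / slope) - 1.0


def copies_from_ct(ct, slope, intercept):
    """Interpolate the copy number of an unknown sample from its Ct."""
    return 10 ** ((ct - intercept) / slope)
```

An assay would pass the 90-110% criterion when `0.9 <= amplification_efficiency(slope) <= 1.1`; unknowns should only be interpolated within the range spanned by the standards.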

Recent systematic comparisons indicate that qPCR provides superior dynamic range and cost-effectiveness compared to droplet digital PCR (ddPCR) for strain-specific quantification in complex samples, though ddPCR offers advantages for absolute quantification without standard curves [21].

Quantitative Findings: Comparative Impact of Relic-DNA Depletion

Methodological Performance Metrics

Table 1: Performance Comparison of Relic-DNA Depletion Methods

| Method | Removal Efficiency | Key Advantages | Limitations | Compatibility with Skin Samples |
|---|---|---|---|---|
| Benzonase | 40-60% [20] | No light activation required; broad substrate range | Potential impact on Gram-positive bacteria if lysis occurs | High - effective in opaque samples |
| PMA | 0-30% [20] | Selective for membrane-compromised cells | Requires transparent samples for photoactivation | Moderate - limited by skin sample opacity |
| DNase I | 20-40% [20] | Specific for DNA without RNase activity | Narrow optimal activity conditions | Moderate - sensitive to sample inhibitors |

Taxonomic Shifts Following Relic-DNA Depletion

Table 2: Taxonomic Abundance Changes After Relic-DNA Removal in Skin Microbiome

| Taxon | Change in Relative Abundance | Interpretation | Statistical Significance |
|---|---|---|---|
| Bacillus | Significant decrease [20] | High relic-DNA contributor in skin environments | p < 0.01 |
| Sphingomonas | Significant decrease [20] | Common environmental contaminant with persistent DNA | p < 0.05 |
| Cutibacterium | Variable response across skin sites [19] | Site-specific viability patterns revealed | p < 0.05 between sites |
| Staphylococcus | Increased relative abundance [19] | Underestimated in total DNA due to high relic from other taxa | p < 0.05 |

Implementation of relic-DNA depletion produces consistent methodological improvements across multiple parameters. Studies report approximately 10% reduction in microbial diversity and richness on average after removing relic DNA, reflecting the elimination of non-viable community members from diversity calculations [20]. Perhaps more importantly, relic-DNA depletion reduces intraindividual similarity between samples from different body sites, strengthening the resolution of true spatial patterning across skin microenvironments [19].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents for Relic-DNA Depletion and Absolute Quantification

| Reagent/Material | Function | Application Notes | Representative Product Examples |
|---|---|---|---|
| Flocked nylon swabs (eSwabs) | Sample collection | Superior biomass recovery compared to cotton swabs [22] [23] | Copan eSwab, Puritan HydraFlock |
| Benzonase endonuclease | Relic-DNA degradation | Digests all forms of DNA/RNA without cell penetration [20] | Millipore Sigma Benzonase, Novagen Benzonase |
| Propidium monoazide (PMA) | DNA intercalation in dead cells | Selective inhibition of relic-DNA amplification [19] | Biotium PMA, GenIUL PMA Dye |
| SYBR Green I | Nucleic acid staining | Flow cytometric bacterial enumeration [14] | Thermo Fisher SYBR Green I, Lonza SYBR Green |
| Kit-based DNA extraction | Nucleic acid purification | Higher yield and reproducibility for skin samples [21] [23] | QIAamp Fast DNA Stool Mini Kit, DNeasy PowerSoil Kit |
| Strain-specific primers | Targeted quantification | qPCR-based absolute abundance of specific taxa [21] | Custom-designed oligonucleotides |

Implications for Research and Therapeutic Development

The integration of relic-DNA depletion with absolute quantification methodologies addresses fundamental limitations in skin microbiome research, enabling more accurate associations between microbial community states and dermatological conditions. This technical advancement carries significant implications for multiple research domains:

Disease Mechanism Elucidation

By distinguishing the living microbial community from historical DNA signatures, researchers can establish more reliable correlations between specific viable taxa and skin disorders. The revealed differential abundance of live bacteria across skin regions provides important hypotheses for why certain sites demonstrate heightened susceptibility to pathogenic invasion or inflammatory conditions [19].

Therapeutic Development and Assessment

The accurate quantification of viable microbial populations enables precise monitoring of interventional outcomes, whether evaluating probiotic applications, antibiotic treatments, or microbiome-transplant therapies. Strain-specific qPCR assays permit sensitive tracking of therapeutic strains at levels below conventional sequencing detection limits [21].

Standardization Across Studies

The implementation of relic-DNA depletion creates opportunities for improved cross-study comparisons by eliminating technical variation introduced by differential relic-DNA preservation across sampling strategies and processing methods [22] [23]. This methodological harmonization is particularly valuable for multi-center clinical trials and longitudinal cohort studies.

Relic-DNA depletion represents a methodological paradigm shift in skin microbiome research, overcoming a fundamental bias that has obscured understanding of the living microbial community. The integration of enzymatic relic-DNA removal with absolute quantification techniques provides a powerful framework for generating biologically meaningful data from low-biomass skin samples, transforming our capacity to link microbial ecology with skin health and disease.

Future methodological developments will likely focus on single-cell viability assessments, integration with metatranscriptomic approaches to profile metabolically active communities, and streamlined workflows that combine relic-DNA removal with automated sample processing. As these refined methodologies become standardized, they will accelerate the translation of skin microbiome research into clinically actionable insights and targeted therapeutic interventions.

The Critical Consequences for Disease Association and Drug Mechanism Studies

The advent of high-throughput sequencing has revolutionized microbiome research, enabling large-scale profiling of microbial communities. However, standard microbiome analysis predominantly relies on relative abundance data, which ignores total bacterial load and presents significant interpretation challenges. This whitepaper examines the critical consequences of relying solely on relative abundance in disease association and drug mechanism studies, particularly in the context of low biomass samples. We detail how absolute quantification methods provide more accurate biological insights, prevent misleading conclusions in clinical studies, and enhance drug development research. Methodological guidance, technical protocols, and analytical frameworks are presented to assist researchers in implementing absolute quantification approaches.

The Fundamental Problem with Relative Abundance Data

Microbiome sequencing data are inherently compositional, meaning that all microbial abundances are expressed as proportions that sum to 100% [14] [12]. This fundamental characteristic leads to several critical limitations:

- The Constant Sum Constraint: In compositional data, an increase in one taxon's abundance inevitably forces a decrease in the relative abundance of other taxa, creating spurious negative correlations that may not reflect biological reality [5].

- Masking of True Biological Changes: Changes in absolute abundance of one taxon can artificially alter the relative proportions of all other taxa, even when their absolute counts remain unchanged [14].

- False Positive and False Negative Results: Relative abundance analysis frequently identifies differential abundance that disappears when absolute counts are considered, while simultaneously missing true biological changes [14] [24].

The following example illustrates how relative abundance data can be misleading: When two types of bacteria start with the same initial cell number, a treatment that doubles the cell number of bacteria A (while bacteria B remains unaffected) results in the same relative abundance between bacteria A and B (67% and 33%) as a treatment that halves bacteria B (while bacteria A remains unaffected). However, these two treatment effects are biologically completely different [14].
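The same example can be checked numerically. The starting count of 100 cells per taxon is an arbitrary assumption; only the ratios matter.

```python
def relative_abundance(counts):
    """Convert absolute counts per taxon into proportions that sum to 1."""
    total = sum(counts.values())
    return {taxon: count / total for taxon, count in counts.items()}


baseline = {"A": 100, "B": 100}
doubled_a = {"A": 200, "B": 100}  # treatment 1: A doubles, B unchanged
halved_b = {"A": 100, "B": 50}    # treatment 2: B halves, A unchanged

profile_1 = relative_abundance(doubled_a)
profile_2 = relative_abundance(halved_b)

# Both treatments give A about 0.67 and B about 0.33 in relative terms,
# although total load rose to 300 cells in one case and fell to 150 in the other.
total_1 = sum(doubled_a.values())
total_2 = sum(halved_b.values())
```

Only the totals, which relative data do not retain, separate growth of A from loss of B.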

Critical Consequences for Disease Association Studies

Misinterpretation in Gut Microbiome Research

In gastrointestinal research, reliance on relative abundance has led to contradictory findings and obscured true disease mechanisms:

- Inflammatory Bowel Disease (IBD): Studies have revealed that overall mucosal bacterial loads in patients with Crohn's disease and other forms of inflammatory bowel disease are significantly higher than in healthy controls, a finding that would be masked in relative abundance analyses [14].

- Microbial Load Variations: Healthy adult human fecal samples show substantial variation (10¹⁰–10¹¹ cells/g) with daily fluctuations up to 3.8 × 10¹⁰ cells/g, meaning that relative abundance changes may simply reflect these total load variations rather than specific taxonomic shifts [14].

- Bacteroides-Enterotype Association: One study combining sequencing with flow cytometry found that microbial load in people with a Bacteroides-enterotype microbiome was associated with Crohn's disease, a relationship that could not be detected through relative abundance analysis alone [12].

Challenges in Low Biomass Microbiome Studies

Low biomass samples (skin, respiratory tract, air samples) present particular challenges where absolute quantification becomes essential:

- Quality Control Imperative: For low biomass samples, quantifying total microbial load via qPCR is essential to confirm whether bacterial load is sufficient for meaningful sequencing results, preventing false conclusions from amplification artifacts or contamination [12].

- Detection Sensitivity Issues: In low biomass environments, the presence of many rare taxa often results from sequencing artifacts rather than biological reality, requiring careful filtering and quantification approaches to distinguish true signals from noise [24].

- Antibiotic Intervention Studies: Antibiotics significantly reduce microbial load in addition to changing composition. Without absolute quantification, the true extent of microbial depletion and subsequent recovery patterns cannot be accurately assessed [12].
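One way to operationalize the quality-control point above is to compare each sample's qPCR-derived load with the loads measured in negative controls. The cutoff used here (mean of the blanks plus three standard deviations) is an assumed rule of thumb for illustration, not a threshold from the cited sources, and the function names are hypothetical.

```python
import statistics


def load_threshold(blank_copies, n_sd=3.0):
    """Cutoff above which a sample's load is treated as exceeding background."""
    mean = statistics.mean(blank_copies)
    # With a single blank there is no spread to estimate; fall back to the mean.
    sd = statistics.stdev(blank_copies) if len(blank_copies) > 1 else 0.0
    return mean + n_sd * sd


def flag_sufficient_load(sample_copies, blank_copies, n_sd=3.0):
    """Return sample ID -> True if its 16S copy number exceeds the cutoff."""
    threshold = load_threshold(blank_copies, n_sd)
    return {
        sample_id: copies > threshold
        for sample_id, copies in sample_copies.items()
    }
```

Samples that fail the check are candidates for exclusion or for cautious interpretation, since their sequence profiles may be dominated by reagent contaminants.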

Longitudinal Study Complications

Longitudinal microbiome research suffers particularly from relative abundance limitations:

- Masked Microbial Blooms: A longitudinal study of preterm infants found that relative abundance analysis masked blooms in Klebsiella and Escherichia that occurred over time. Absolute quantification revealed these critical pathological changes that were invisible in relative data [12].

- Dynamic Load Changes: Microbial load and absolute quantities of specific microbes can change over time due to disease, medications, or diet, but these dynamics are frequently obscured by relative abundance normalization [12].

Consequences for Drug Mechanism Studies

Drug-Microbiome Interactions

Understanding how pharmaceuticals interact with the microbiome requires absolute quantification to differentiate true effects from compositional artifacts:

- Antibiotic Efficacy Assessment: Evaluating antibiotic effects requires measuring both total bacterial reduction and specific taxonomic changes, as relative abundance alone cannot distinguish actual pathogen reduction from proportional shifts caused by the elimination of commensals [12].

- Drug Metabolism Studies: The absolute abundance of microbial communities responsible for drug metabolism (e.g., microbial enzymes that activate or inactivate pharmaceuticals) must be quantified to understand interindividual variation in drug response [25].

Therapeutic Development Implications

Drug development pipelines incorporating microbiome analysis face significant challenges without absolute quantification:

- False Biomarker Identification: Relative abundance data can identify spurious microbial biomarkers that disappear when absolute counts are considered, leading to failed clinical validation [14] [5].

- Dose-Response Relationships: Establishing proper dose-response relationships for microbiome-modulating therapeutics requires absolute quantification of target organisms, as relative proportions cannot distinguish actual growth inhibition from proportional shifts [14].

- Clinical Trial Stratification: Patient stratification based on microbiome signatures requires absolute abundance data to ensure that classifications reflect true biological differences rather than compositional artifacts [14].

Absolute Quantification Methods: Technical Approaches

Multiple absolute quantification approaches are available, each with distinct advantages and limitations:

Table 1: Comparison of Absolute Quantification Methods in Microbiome Research

| Quantification Method | Major Applications | Key Advantages | Key Limitations |
|---|---|---|---|
| Flow Cytometry (FCM) | Feces, aquatic, and soil samples | Rapid; single cell enumeration; distinguishes live/dead cells; high accuracy and reproducibility | Requires well-dispersed cells; interference from debris and aggregates; specialized equipment needed [14] [5] |
| 16S qPCR | Feces, clinical samples, soil, plant, air | Directly quantifies specific taxa; cost-effective; high sensitivity; compatible with low biomass | 16S rRNA copy number variation requires calibration; PCR amplification biases [14] [12] |
| 16S qRT-PCR | Clinical infections, food safety, feces | High resolution; detects metabolically active cells; compatible with low biomass | Unstable RNA requiring careful handling; approximates protein synthesis rather than direct cell count [14] |
| Digital PCR (dPCR, including ddPCR) | Clinical infections, air, feces, soil | No standard curve needed; high precision at low concentrations; resistant to PCR inhibitors | Requires dilution for high-concentration templates; may need numerous replicates [14] |
| Spike-in Internal Standards | Soil, sludge, feces | Easy incorporation into sequencing workflows; high sensitivity; no specialized equipment | Internal standard selection critically affects accuracy; 16S rRNA copy number calibration may be needed [14] [5] |
| Fluorescence Spectroscopy | Aquatic, soil, food, air | Multiple dye options to distinguish live/dead cells; high affinity | Fails to stain dead cells with complete DNA degradation; some dyes bind both DNA and RNA [14] |
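The 16S rRNA copy number calibration mentioned for qPCR and spike-in methods amounts to dividing measured gene copies by the number of 16S copies each genome carries. The sketch below assumes per-taxon copy numbers are supplied by the user (for example, from a curated database); the taxon names and values are placeholders.

```python
def gene_copies_to_cells(gene_copies, copies_per_genome):
    """Convert per-taxon 16S gene copies into estimated cell counts.

    gene_copies: dict of taxon -> measured 16S rRNA gene copies.
    copies_per_genome: dict of taxon -> 16S copies per genome (user supplied).
    """
    cells = {}
    for taxon, copies in gene_copies.items():
        per_genome = copies_per_genome[taxon]
        if per_genome <= 0:
            raise ValueError(f"invalid 16S copy number for {taxon}")
        cells[taxon] = copies / per_genome
    return cells


# Placeholder values: two taxa with very different gene copies per genome.
estimated_cells = gene_copies_to_cells(
    {"taxon_a": 7000, "taxon_b": 2000},
    {"taxon_a": 7, "taxon_b": 2},
)
```

In this placeholder case both taxa resolve to the same cell count even though their gene copies differ more than threefold, which is the bias the calibration is meant to remove.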

Decision Framework for Method Selection

Choosing the appropriate quantification method depends on specific research questions and sample characteristics:

- For distinguishing live vs. dead cells: Flow cytometry or fluorescence spectroscopy with viability dyes provides the most reliable results [14].

- For low biomass samples: 16S qPCR, 16S qRT-PCR, or ddPCR offer the required sensitivity, with spike-in standards providing integration with sequencing workflows [14] [12].

- For large-scale studies: Spike-in standards or flow cytometry provide the necessary throughput, though cost considerations may favor spike-in approaches for very large sample sizes [14] [5].

- For specific taxa quantification: 16S qPCR, ddPCR, or CARD-FISH with flow cytometry enable targeted enumeration with high specificity [14].

Experimental Protocols for Absolute Quantification

Spike-in Internal Standard Protocol

The spike-in method incorporates known quantities of foreign cells or DNA into samples to convert relative sequencing data to absolute counts:

- Internal Standard Selection: Choose genetically distinct, non-competing organisms or synthetic DNA sequences that won't cross-react with sample DNA. Common choices include Pseudomonas fluorescens for soil samples or alien synthetic DNA sequences for human microbiome studies [5].

- Standard Quantification: Precisely quantify the internal standard using flow cytometry or quantitative PCR to establish exact cell counts or DNA copies [5].

- Sample Spiking: Add a known amount of internal standard to the sample either prior to or during DNA extraction, noting that pre-extraction spiking accounts for DNA extraction efficiency variations [14] [5].

- DNA Extraction and Sequencing: Process samples following standard protocols, ensuring consistent handling of both sample and standard DNA [5].

- Computational Conversion: Calculate absolute abundance using the formula: Absolute Abundance = (Sample Read Count / Spike-in Read Count) × Known Spike-in Amount [5].
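The conversion in the final step can be sketched in a few lines of Python. This is a minimal illustration of the formula above, not a published pipeline: the function name `absolute_abundance`, the taxon names, and all read counts are hypothetical, and the sketch assumes the spike-in reads have already been separated from the sample reads.

```python
def absolute_abundance(taxon_reads, spike_reads, spike_amount):
    """Convert per-taxon read counts to absolute units via a spike-in.

    taxon_reads  -- dict mapping taxon name to its read count in one sample
    spike_reads  -- reads assigned to the spike-in standard in that sample
    spike_amount -- known quantity of spike-in added (cells or gene copies)
    """
    if spike_reads <= 0:
        raise ValueError("spike-in was not recovered; cannot scale this sample")
    scale = spike_amount / spike_reads  # spike-in units represented by one read
    return {taxon: reads * scale for taxon, reads in taxon_reads.items()}


# Hypothetical sample: 1e4 spike-in cells added, 500 spike-in reads recovered.
sample = {"Staphylococcus": 1500, "Cutibacterium": 250}
result = absolute_abundance(sample, spike_reads=500, spike_amount=1e4)
print(result)
```

The result is expressed in the same units as the spike-in (cells or gene copies), so 16S rRNA copy number differences between taxa and the standard still need separate correction.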

Flow Cytometry Protocol for Microbial Load Quantification

Flow cytometry provides rapid, accurate total bacterial counts:

- Sample Preparation: For fecal samples, homogenize and filter through 40 μm filters to remove large particles. For water samples, concentrate if necessary. For soil samples, separate cells from particles through density gradient centrifugation [5].

- Staining: Stain with a DNA-binding fluorescent dye such as SYBR Green I at 1× concentration, incubating in the dark for 15 minutes. For viability assessment, combine with propidium iodide to distinguish live from dead cells [14] [5].

- Instrument Calibration: Calibrate using fluorescent beads of known concentration. Set appropriate thresholding to exclude background noise and debris [5].

- Acquisition and Analysis: Acquire a minimum of 10,000 events per sample. Gate populations based on forward/side scatter and fluorescence to distinguish bacterial cells from debris [5].

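- Quantification Calculation: Use the formula: Cells/mL = (Event Count in Bacterial Gate / Bead Event Count) × (Number of Beads Added / Sample Volume) × Dilution Factor. To report cells/g, divide by the mass of original sample represented per mL of suspension [5].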
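As a rough sketch of the quantification step, the bead-ratio calculation can be written as below. It assumes the bead term is expressed as the number of beads added to a known volume of diluted sample, and that the dilution factor and the mass of material per mL of suspension are tracked separately; the function name, parameter names, and all numbers are hypothetical.

```python
def cells_per_gram(cell_events, bead_events, beads_added,
                   sample_volume_ml, dilution_factor=1.0, grams_per_ml=1.0):
    """Bead-ratio estimate of cell density per gram of original material.

    cell_events      -- events inside the bacterial gate
    bead_events      -- events inside the counting-bead gate
    beads_added      -- number of beads added to the stained aliquot
    sample_volume_ml -- volume of diluted sample in that aliquot (mL)
    dilution_factor  -- fold dilution applied before staining
    grams_per_ml     -- grams of original material per mL of undiluted suspension
    """
    if bead_events <= 0:
        raise ValueError("no bead events recorded; cannot compute a ratio")
    # Cells per mL of the diluted suspension that was actually measured.
    cells_per_ml = (cell_events / bead_events) * (beads_added / sample_volume_ml)
    # Undo the dilution, then normalize to the mass of starting material.
    return cells_per_ml * dilution_factor / grams_per_ml


# Hypothetical fecal sample: 0.25 g/mL suspension, diluted 1000-fold.
count = cells_per_gram(cell_events=20000, bead_events=5000, beads_added=50000,
                       sample_volume_ml=0.5, dilution_factor=1000,
                       grams_per_ml=0.25)
print(f"{count:.2e} cells/g")
```

Keeping the dilution and mass terms explicit makes the units easy to audit: the ratio of events is dimensionless, and everything else carries the volume and mass.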

Integrated Absolute Quantification Workflow

The following workflow diagram illustrates a comprehensive approach to absolute quantification in microbiome studies:

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Research Reagents for Absolute Quantification Studies

| Reagent/Material | Function | Application Notes |
|---|---|---|
| SYBR Green I DNA Stain | Fluorescent nucleic acid binding for cell counting | Distinguishes DNA from background; use at 1× concentration; light sensitive [5] |
| Propidium Iodide | Membrane-impermeant dye for dead cell discrimination | Combines with SYBR Green for viability assessment; excludes dead cells from counts [14] |
| Pseudomonas fluorescens | Non-pathogenic spike-in internal standard | Genetically distinct from mammalian microbiomes; quantifiable by specific primers [5] |
| Synthetic Alien DNA | Artificial spike-in standard for human microbiome studies | Contains unique sequences absent in nature; eliminates cross-reactivity concerns [5] |
| Fluorescent Beads | Flow cytometry calibration and quantification | Enables absolute cell counting; use size-matched beads for bacterial applications [5] |
| DNA Extraction Kits with Bead Beating | Comprehensive cell lysis for diverse taxa | Essential for tough-to-lyse organisms; standardized protocols improve reproducibility [24] |
| 16S rRNA Gene Primers | Taxonomic quantification via qPCR | Target conserved regions; requires copy number correction for absolute quantification [14] |
| Viability Dyes | Metabolic activity assessment in flow cytometry | Distinguishes live cells based on enzymatic activity; complementary to DNA stains [14] |

Data Analysis and Statistical Considerations

Handling Compositional Data Challenges

The compositional nature of microbiome data requires specialized analytical approaches:

- Addressing Zero-Inflation: Microbiome data typically contains 80-95% zeros, requiring zero-inflated models like DESeq2-ZINBWaVE or proper filtering strategies to avoid false discoveries [24].

- Managing Group-wise Structured Zeros: When taxa are completely absent in one group but present in another (structural zeros), specialized approaches like penalized likelihood methods in DESeq2 are required for proper statistical inference [24].

- Normalization Strategies: Methods like trimmed mean of M-values (TMM) or median-of-ratios normalization help mitigate compositionality effects, but require careful handling of zeros through pseudo-counts or specialized algorithms [24].
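To make the zero-handling problem concrete, the sketch below computes the fraction of zeros in a small count table and derives median-of-ratios size factors from taxa observed in every sample. It mirrors the idea behind median-of-ratios normalization but is not the DESeq2 implementation; the function names and the toy table are hypothetical.

```python
import math
from statistics import median


def zero_fraction(counts):
    """Fraction of zero entries in a taxa x samples count table."""
    cells = [c for row in counts for c in row]
    return sum(1 for c in cells if c == 0) / len(cells)


def median_of_ratios_size_factors(counts):
    """Per-sample size factors from taxa that are non-zero in every sample.

    counts is a list of rows: one row per taxon, one column per sample.
    """
    n_samples = len(counts[0])
    log_ratios = [[] for _ in range(n_samples)]
    for row in counts:
        if any(c == 0 for c in row):
            continue  # geometric mean is undefined with zeros; skip this taxon
        log_geo_mean = sum(math.log(c) for c in row) / n_samples
        for j, c in enumerate(row):
            log_ratios[j].append(math.log(c) - log_geo_mean)
    if not log_ratios[0]:
        raise ValueError("no taxon is non-zero in every sample")
    return [math.exp(median(r)) for r in log_ratios]


# Hypothetical table: 4 taxa (rows) x 3 samples (columns).
table = [
    [10, 20, 40],
    [100, 200, 400],
    [0, 5, 0],
    [7, 14, 28],
]
print(zero_fraction(table))
factors = median_of_ratios_size_factors(table)
print(factors)
```

In a sparse table, very few taxa survive the all-non-zero filter, which is why the text recommends pseudo-counts or zero-aware algorithms when this style of normalization is applied to microbiome data.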

Integrated Analysis Pipeline

A robust analytical framework for absolute quantification data incorporates multiple approaches:

- Differential Abundance Testing: Combine DESeq2-ZINBWaVE for zero-inflated data with standard DESeq2 for taxa with group-wise structured zeros to address both analytical challenges [24].

- Cross-Study Comparisons: Convert all data to absolute abundances using spike-in standards or total load measurements to enable valid meta-analyses across different studies [26] [5].

- Correlation Network Analysis: Use absolute abundances rather than relative proportions to construct microbial interaction networks, avoiding spurious correlations inherent to compositional data [14].
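A toy calculation illustrates why relative proportions can mislead correlation and comparison analyses. In the hypothetical two-taxon community below, taxon A keeps the same absolute load while taxon B triples; all names and numbers are invented for illustration.

```python
def relative(abundances):
    """Convert absolute abundances to proportions that sum to 1."""
    total = sum(abundances.values())
    return {taxon: value / total for taxon, value in abundances.items()}


# Hypothetical absolute loads (cells per sample) at two time points.
before = {"taxon_A": 1000.0, "taxon_B": 1000.0}
after = {"taxon_A": 1000.0, "taxon_B": 3000.0}

rel_before = relative(before)
rel_after = relative(after)

# Taxon A is unchanged in absolute terms but appears to halve in relative terms.
print(before["taxon_A"], after["taxon_A"])
print(rel_before["taxon_A"], rel_after["taxon_A"])
```

Because proportions must sum to one, the growth of taxon B forces an apparent decline in taxon A, which would register as a negative association in a network built from relative data.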

The implementation of absolute quantification in microbiome research represents a methodological imperative for robust disease association studies and accurate drug mechanism elucidation. The consequences of relying solely on relative abundance data extend beyond academic concerns to tangible impacts on drug development success and clinical translation.

Future methodological developments should focus on:

- Standardized reference materials for cross-laboratory reproducibility

- Integrated workflows combining multiple quantification approaches

- Computational tools specifically designed for absolute abundance data

- Expanded applications in therapeutic monitoring and personalized medicine

By adopting absolute quantification approaches, researchers can overcome the fundamental limitations of compositional data, leading to more reproducible findings, valid biological interpretations, and successful translation of microbiome science into clinical applications.

A Methodological Toolkit: From Cellular Counts to Spike-In Standards for Absolute Quantification

Flow cytometry has established itself as an indispensable tool in modern biological research, providing unparalleled capacity for multiparameter analysis at the single-cell level. This technical guide examines the foundational role of flow cytometry in precise cell enumeration and viability assessment, with particular emphasis on its growing importance in challenging fields such as low-biomass microbiome studies. The ability to obtain absolute quantitative data rather than relative measurements represents a critical advancement for applications requiring precise cellular quantification, including drug development, clinical diagnostics, and microbial ecology [27].

Traditional methods like colony-forming unit (CFU) counting have long been the gold standard for microbiological quantification but suffer from significant limitations, including extended time-to-results (often weeks for slow-growing organisms) and an inherent inability to detect non-culturable subpopulations or cellular aggregates [28]. In contrast, flow cytometry provides real-time quantification with single-cell resolution, enabling researchers to detect and characterize heterogeneous subpopulations that would otherwise remain hidden. This capability is particularly valuable when studying complex microbial communities or assessing physiological responses to therapeutic interventions [28] [27].

The integration of flow cytometry with advanced fluorescent probes and calibration standards has transformed it from a qualitative tool to a precise quantitative platform. Through the implementation of quantitative flow cytometry (QFCM) methodologies, researchers can now determine not just cellular identities but absolute molecule counts per cell, bringing unprecedented rigor to biomarker studies and functional assays [27] [29]. This level of quantification is revolutionizing our approach to low-biomass research, where accurate measurement near detection limits is paramount.

Technical Foundations of Quantitative Flow Cytometry

Core Principles and Instrumentation

Quantitative flow cytometry (QFCM) represents a specialized implementation of flow cytometry that enables precise measurement of the absolute number of specific molecules on individual cells or particles. While conventional flow cytometry provides relative fluorescence intensity to distinguish positive from negative staining, QFCM utilizes fluorescence calibration standards to convert fluorescence intensity into absolute counts, typically expressed as molecules per cell [27]. This quantitative approach requires stringent standardization but enables direct comparison across experiments, instruments, and laboratories—a critical capability for multicenter studies and longitudinal research [27].

The instrumental foundation of QFCM relies on several key components: a fluidics system for hydrodynamic focusing of cells into a single-file stream, an optics system with lasers for excitation and photomultiplier tubes for detection, and an electronics system for signal processing. For absolute counting, instruments like the BD Accuri C6 can record the volume of sample processed without counting beads, simplifying enumeration protocols [28]. Advanced implementations, including imaging flow cytometry, combine the high-throughput capabilities of conventional systems with spatial information from acquired cell images, though traditionally at lower throughput (approximately 100-10,000 events per second) [30]. Recent breakthroughs in optofluidic time-stretch (OTS) imaging flow cytometry have dramatically increased throughput to over 1,000,000 events per second while maintaining sub-micron resolution, opening new possibilities for rare cell detection and large-scale studies [31].

A critical advancement in QFCM has been the development of standardized units for reporting fluorescence quantification. The two most common units are MESF (Molecules of Equivalent Soluble Fluorochrome) and ABC (Antigen Binding Capacity). MESF, formally adopted by the National Institute of Standards and Technology (NIST) and National Committee for Clinical Laboratory Standards (NCCLS), represents the number of soluble fluorochrome molecules required to generate a fluorescence signal equivalent to that from the stained cell or particle [27]. This standardization enables cross-platform comparisons and is essential for clinical applications where precise biomarker quantification directly impacts diagnostic and therapeutic decisions.
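One common way to apply MESF calibration beads, assumed here rather than taken from the cited sources, is to fit a straight line through the bead populations on log-log axes and use it to convert a sample's fluorescence intensity into MESF. The bead intensities, assigned MESF values, and function names below are hypothetical.

```python
import math


def fit_mesf_calibration(bead_mfi, bead_mesf):
    """Least-squares line through (log10 MFI, log10 MESF) bead points."""
    xs = [math.log10(v) for v in bead_mfi]
    ys = [math.log10(v) for v in bead_mesf]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def mfi_to_mesf(mfi, slope, intercept):
    """Convert a measured fluorescence intensity to MESF using the fitted line."""
    return 10 ** (slope * math.log10(mfi) + intercept)


# Hypothetical bead set: measured intensity and manufacturer-assigned MESF.
bead_mfi = [100.0, 1000.0, 10000.0, 100000.0]
bead_mesf = [2000.0, 20000.0, 200000.0, 2000000.0]

slope, intercept = fit_mesf_calibration(bead_mfi, bead_mesf)
sample_mesf = mfi_to_mesf(5000.0, slope, intercept)
print(slope, intercept, sample_mesf)
```

The fit must be repeated whenever instrument settings change, since the relationship between intensity and MESF is specific to a detector voltage and optical configuration.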

Essential Research Reagents and Solutions

Successful implementation of quantitative flow cytometry requires careful selection and optimization of reagents. The table below outlines key research reagent solutions and their specific functions in QFCM workflows.

Table 1: Essential Research Reagents for Quantitative Flow Cytometry

| Reagent Category | Specific Examples | Function & Application |
|---|---|---|
| Viability Dyes | Propidium Iodide (PI), 7-AAD, Calcein AM, Fixable Viability Dyes (eFluor series) | Discrimination of live/dead cells; PI and 7-AAD are excluded from live cells with intact membranes; Calcein AM is retained in live cells; fixable dyes are compatible with intracellular staining [32]. |
| Absolute Counting Standards | Fluorescent calibration beads (Quantibrite, Quantum Simply Cellular, Quantum MESF beads) | Instrument calibration and conversion of fluorescence intensity to absolute molecule counts; enable quantitative comparisons across experiments [27]. |
| Metabolic Probes | Calcein-AM, SYBR-Gold | Assessment of cellular function; Calcein-AM detects esterase activity as marker of metabolic activity; SYBR-Gold probes membrane integrity and nucleic acid content [28]. |
| Staining Buffers | Flow Cytometry Staining Buffer, PBS with azide | Maintain cell viability and prevent non-specific antibody binding during staining procedures; azide- and protein-free PBS required for optimal Fixable Viability Dye staining [32]. |
| Reference Controls | CD4+ cell counting standards, extracellular vesicle standards | Validation of assay performance; WHO international standards for CD4+ counting in HIV/AIDS monitoring; NIST standards for extracellular vesicle quantification [29]. |

Fluorescence Threshold Optimization for Microbial Detection

A critical technical consideration in microbial flow cytometry is the optimization of fluorescence thresholds to distinguish true cellular events from background noise and debris. Research demonstrates that threshold strategies based solely on light scatter (forward and side scatter) produce unacceptably high false discovery rates (>10%) and inconsistent results across replicates [28]. In contrast, implementing a dual threshold approach combining side scatter (SSC) and fluorescence (FL1) channels consistently reduces false discovery rates to below 0.5% while increasing absolute cell counts by more than one log10 unit (over tenfold) compared to light scatter thresholding alone [28].

This optimized threshold strategy significantly improves measurement precision, reducing the coefficient of variation between technical replicates to <5% and providing near-perfect linearity (R² > 0.99) across serial dilutions [28]. For mycobacterial studies, staining with SYBR-Gold after heat killing establishes a robust total intact cell count denominator, while SYBR-Gold without heat killing probes membrane integrity, and Calcein-AM staining without heat killing assesses metabolic activity as a marker of cellular vitality [28]. This multiparametric approach enables researchers to distinguish between different physiological states within microbial populations, providing insights beyond mere enumeration.
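The dual-threshold idea and the replicate precision check can be sketched as follows. This is an illustrative simplification, assuming each event carries a side-scatter value and an FL1 fluorescence value; the threshold values, event data, and replicate counts are hypothetical and would need to be set empirically on a real instrument.

```python
from statistics import mean, stdev


def dual_threshold(events, ssc_min, fl1_min):
    """Keep events that exceed both the side-scatter and FL1 thresholds."""
    return [e for e in events if e["ssc"] >= ssc_min and e["fl1"] >= fl1_min]


def coefficient_of_variation(values):
    """Percent CV (sample standard deviation / mean) across replicates."""
    return 100.0 * stdev(values) / mean(values)


# Hypothetical events with side scatter (ssc) and green fluorescence (fl1).
events = [
    {"ssc": 1200, "fl1": 5000},  # stained cell
    {"ssc": 1500, "fl1": 40},    # unstained debris with high scatter
    {"ssc": 30, "fl1": 6000},    # fluorescent noise with low scatter
    {"ssc": 900, "fl1": 3500},   # stained cell
]
kept = dual_threshold(events, ssc_min=200, fl1_min=1000)
print(len(kept))

# Hypothetical cell counts from three technical replicates.
replicate_counts = [980, 1000, 1020]
cv = coefficient_of_variation(replicate_counts)
print(cv)
```

Requiring both signals rejects debris that scatters light without staining as well as fluorescent noise without a particle-like scatter profile, which a scatter-only threshold cannot separate.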