Balancing Sensitivity and Specificity: Innovations and Applications in Novel Pathogen Detection

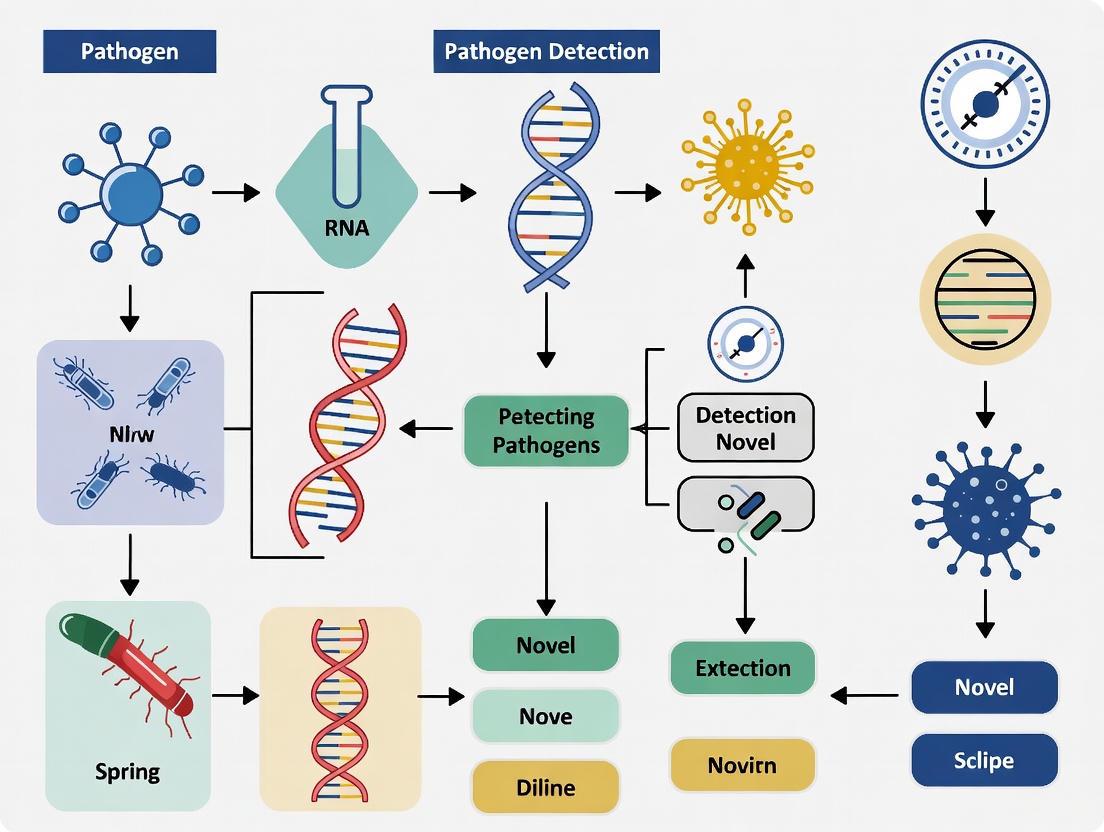


Abstract

This article provides a comprehensive analysis of sensitivity and specificity in emerging pathogen detection technologies, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of diagnostic accuracy, examines cutting-edge methodological advances in biosensors and molecular techniques, addresses key optimization challenges, and establishes frameworks for rigorous validation. By synthesizing recent innovations, this review serves as a critical resource for developing, optimizing, and implementing next-generation diagnostic tools to enhance public health response and drug discovery pipelines.

The Critical Balance: Foundational Principles of Diagnostic Accuracy in Pathogen Detection

In the critical fields of medical diagnostics, pathogen detection, and biomedical research, sensitivity and specificity are foundational metrics that quantify the inherent accuracy of a test or classification system. These statistical measures provide a standardized framework for evaluating how well a test discriminates between two conditions, such as the presence or absence of a disease or pathogen. For researchers, scientists, and drug development professionals, a rigorous understanding of these metrics is indispensable for developing novel detection methods, validating diagnostic assays, and interpreting experimental results with precision [1] [2].

Sensitivity and specificity are particularly crucial in the context of novel pathogen detection, where the timely and accurate identification of infectious agents directly impacts public health responses and therapeutic development. These prevalence-independent metrics offer intrinsic assessments of a test's performance, allowing for direct comparisons between different diagnostic platforms regardless of the population in which they are used [3] [2]. This article provides a comprehensive comparison of these core metrics, detailing their definitions, calculations, interpretive frameworks, and applications in modern research and development.

Core Concepts and Definitions

Sensitivity: The Ability to Detect True Positives

Sensitivity, also termed the true positive rate, measures a test's ability to correctly identify individuals who have the target condition [1] [4]. It answers the critical question: "Of all individuals who truly have the condition, what proportion does the test correctly identify as positive?"

The formula for calculating sensitivity is:

Sensitivity = True Positives (TP) / [True Positives (TP) + False Negatives (FN)] [3] [4] [2]

A test with high sensitivity (typically >90%) is excellent at detecting the condition when it is present and consequently has a low rate of false negatives [4]. This characteristic is paramount when failing to identify a condition carries serious consequences, such as with contagious pathogens where missed cases could lead to widespread transmission, or with serious diseases where early treatment is vital [1].

Specificity: The Ability to Identify True Negatives

Specificity, or the true negative rate, measures a test's ability to correctly identify individuals who do not have the target condition [1] [4]. It addresses the question: "Of all individuals who truly do not have the condition, what proportion does the test correctly identify as negative?"

The formula for calculating specificity is:

Specificity = True Negatives (TN) / [True Negatives (TN) + False Positives (FP)] [3] [4] [2]

A test with high specificity effectively rules out the condition in healthy individuals and minimizes false positive results [4]. This is especially important when a positive test result leads to invasive follow-up procedures, costly treatments, unnecessary anxiety, or social stigma [1].

Quantitative Analysis and Interpretation

Practical Calculation Example

Consider a study evaluating a new diagnostic test for a novel pathogen on a cohort of 1000 individuals, with 384 subsequently confirmed (via gold standard testing) to be infected. The results are distributed as follows:

| Test Result | Infected (Gold Standard) | Not Infected (Gold Standard) | Totals |
|---|---|---|---|
| Positive | 369 (True Positives) | 58 (False Positives) | 427 |
| Negative | 15 (False Negatives) | 558 (True Negatives) | 573 |
| Totals | 384 | 616 | 1000 |

Using this 2x2 contingency table, the performance metrics are calculated as:

- Sensitivity = 369 / (369 + 15) = 369 / 384 = 96.1%

- Specificity = 558 / (558 + 58) = 558 / 616 = 90.6% [3]

This demonstrates that the test is highly sensitive, effectively detecting most true infections, while also being quite specific, correctly ruling out infection in the majority of healthy individuals.
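As a quick check of the arithmetic above, the following minimal Python sketch recomputes both metrics from the four cells of the table. The class and method names are illustrative choices made here, not part of any particular library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Cells of a 2x2 table: index test result vs. gold standard."""
    tp: int  # test positive, truly infected
    fp: int  # test positive, not infected
    fn: int  # test negative, truly infected
    tn: int  # test negative, not infected

    def sensitivity(self) -> float:
        # Share of truly infected individuals that the test flags as positive.
        return self.tp / (self.tp + self.fn)

    def specificity(self) -> float:
        # Share of truly uninfected individuals that the test reports as negative.
        return self.tn / (self.tn + self.fp)


# Counts taken from the worked example in the table above.
counts = ConfusionCounts(tp=369, fp=58, fn=15, tn=558)
print(f"Sensitivity: {counts.sensitivity():.1%}")  # 96.1%
print(f"Specificity: {counts.specificity():.1%}")  # 90.6%
```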

Benchmark Values and Interpretation

The following table provides general guidelines for interpreting sensitivity and specificity values in diagnostic and research contexts:

| Value Range | Interpretation |
|---|---|
| 90–100% | Excellent |
| 80–89% | Good |
| 70–79% | Fair |
| 60–69% | Poor |
| Below 60% | Very Poor [4] |

These benchmarks must be applied within context. A test with "fair" sensitivity might be acceptable for initial screening if followed by a highly specific confirmatory test. Conversely, even a "good" specificity might be insufficient for mass screening of a low-prevalence condition, as it could still generate numerous false positives [4].

The Sensitivity-Specificity Trade-Off and Diagnostic Thresholds

The Inherent Relationship

Sensitivity and specificity typically exhibit an inverse relationship; as one increases, the other tends to decrease [3] [5]. This trade-off is governed by the test cutoff value—the threshold above which a result is considered positive and below which it is considered negative [1].

Adjusting this cutoff directly changes the test's error profile. For an assay in which higher readings indicate the condition:

- Lowering the cutoff makes the test more sensitive (fewer false negatives) but less specific (more false positives).

- Raising the cutoff makes the test more specific (fewer false positives) but less sensitive (more false negatives) [1].

The optimal balance depends on the clinical or research context. For novel pathogen detection, a high-sensitivity test is prioritized during outbreak containment to capture as many potential cases as possible, while a high-specificity test might be preferred for confirming infection before initiating treatment with significant side effects.
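The effect of moving the cutoff can be sketched with a few lines of Python. The signal values below are invented purely for illustration, and the sketch assumes that a reading at or above the cutoff is called positive; an assay in which lower readings indicate infection would reverse the direction.

```python
from typing import Sequence, Tuple


def sens_spec_at_cutoff(
    infected: Sequence[float],
    uninfected: Sequence[float],
    cutoff: float,
) -> Tuple[float, float]:
    """Return (sensitivity, specificity) when signal >= cutoff is called positive."""
    true_positives = sum(1 for signal in infected if signal >= cutoff)
    true_negatives = sum(1 for signal in uninfected if signal < cutoff)
    return true_positives / len(infected), true_negatives / len(uninfected)


# Hypothetical assay signals (arbitrary units), invented for illustration only.
infected_signals = [2.1, 3.4, 3.9, 4.2, 4.8, 5.5, 6.0, 6.3, 7.1, 8.0]
uninfected_signals = [0.5, 0.9, 1.2, 1.8, 2.0, 2.6, 3.0, 3.5, 4.0, 4.5]

# Sweep the cutoff upward: sensitivity falls while specificity rises.
for cutoff in (2.0, 3.0, 4.0, 5.0):
    sens, spec = sens_spec_at_cutoff(infected_signals, uninfected_signals, cutoff)
    print(f"cutoff={cutoff:.1f}  sensitivity={sens:.0%}  specificity={spec:.0%}")
```

With these made-up values, no single cutoff separates the two groups perfectly, which is the situation in which the choice of threshold becomes a judgment about which error is more costly.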

Visualizing the Trade-Off

[Figure not reproduced: the relationship between sensitivity and specificity, and how shifting the test cutoff changes their values.]

Comparative Performance in Recent Research

The table below summarizes the sensitivity and specificity of various diagnostic tools and assessments as reported in recent meta-analyses and validation studies, illustrating their application across different medical fields.

| Test/Assessment Tool | Target Condition / Context | Sensitivity | Specificity | Reference / Year |
|---|---|---|---|---|
| Global Respiratory Severity Scale (GRSS) | Bronchiolitis severity in infants | 87% (95% CI: 80%-92%) | 92% (95% CI: 88%-95%) | Respir Med. 2025 [6] |
| Zarit Burden Interview-7 (ZBI-7) | Caregiver burden systematic review | 98.6% | 87.4% | J Affect Disord. 2025 [7] |
| High-Sensitivity PubMed Filter | Retrieving any review article | 98.0% (95% CI: 94.3%-99.6%) | 88.9% (95% CI: 87.5%-90.2%) | BMC Med Res Methodol. 2025 [8] |
| High-Specificity PubMed Filter | Retrieving systematic reviews | 96.7% (95% CI: 92.4%-98.9%) | 99.1% (95% CI: 98.6%-99.5%) | BMC Med Res Methodol. 2025 [8] |
| Enhanced Computed Tomography | Colorectal tumors | 76% (95% CI: 70%-79%) | 87% (95% CI: 84%-89%) | Diagn Interv Radiol. 2025 [9] |

Advanced Metrics and Research Considerations

Predictive Values and Likelihood Ratios

While sensitivity and specificity describe the test's intrinsic performance, their clinical utility is often realized through derivative metrics:

- Positive Predictive Value (PPV): The probability that a subject with a positive test truly has the condition. PPV = TP / (TP + FP) [3] [2].

- Negative Predictive Value (NPV): The probability that a subject with a negative test truly does not have the condition. NPV = TN / (TN + FN) [3] [2].

Unlike sensitivity and specificity, PPV and NPV are highly dependent on disease prevalence in the population being tested [3] [2] [5]. The same test will have a higher PPV and a lower NPV in a high-prevalence setting than in a low-prevalence one.

- Likelihood Ratios (LRs): Combine sensitivity and specificity to quantify how much a test result will shift the probability of disease.

  - Positive LR (LR+): How much more likely a positive test is in a diseased vs. non-diseased person. LR+ = Sensitivity / (1 - Specificity) [3] [2]. Good tests typically have an LR+ > 10.

  - Negative LR (LR-): The probability of a negative test in a diseased person relative to a non-diseased person. LR- = (1 - Sensitivity) / Specificity [3] [2]. Good tests typically have an LR- < 0.1 [2].
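To make the likelihood-ratio formulas concrete, the sketch below applies them to the sensitivity and specificity from the worked example earlier in the article, then converts a pre-test probability into a post-test probability through odds. The 10% pre-test probability is a hypothetical value chosen only for illustration, and the function names are our own.

```python
from typing import Tuple


def likelihood_ratios(sensitivity: float, specificity: float) -> Tuple[float, float]:
    """Return (LR+, LR-) from sensitivity and specificity given as proportions."""
    lr_pos = sensitivity / (1.0 - specificity)
    lr_neg = (1.0 - sensitivity) / specificity
    return lr_pos, lr_neg


def post_test_probability(pre_test_probability: float, likelihood_ratio: float) -> float:
    """Convert a pre-test probability to a post-test probability via odds."""
    pre_odds = pre_test_probability / (1.0 - pre_test_probability)
    post_odds = pre_odds * likelihood_ratio
    return post_odds / (1.0 + post_odds)


# Sensitivity 369/384 and specificity 558/616 come from the worked example.
lr_pos, lr_neg = likelihood_ratios(369 / 384, 558 / 616)
print(f"LR+ = {lr_pos:.1f}, LR- = {lr_neg:.3f}")

# Hypothetical 10% pre-test probability, chosen only for illustration.
print(f"After a positive result: {post_test_probability(0.10, lr_pos):.1%}")
print(f"After a negative result: {post_test_probability(0.10, lr_neg):.1%}")
```

By the rule of thumb quoted above, the example test sits just past the LR+ > 10 mark and comfortably below LR- < 0.1.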

Critical Considerations for Research and Development

- Spectrum Effect: Test performance can vary depending on the clinical spectrum (e.g., severity, stage) of the disease in the study population [2] [10]. A test validated only in severely ill patients may not perform as well in a community screening setting with milder cases.

- Reference Standard: The accuracy of sensitivity and specificity estimates is contingent upon the quality of the gold standard test used for comparison [1] [2]. An imperfect reference standard will lead to biased estimates.

- Setting-Dependent Variation: A 2025 meta-epidemiological study confirmed that sensitivity and specificity can vary in both direction and magnitude between primary care and specialist referral settings, emphasizing the need for context-specific validation [10].

Experimental Protocols for Assay Validation

For researchers developing novel pathogen detection methods, the following protocol provides a framework for rigorously establishing sensitivity and specificity.

Protocol: Diagnostic Accuracy Study for a Novel Pathogen Assay

1. Objective: To determine the diagnostic sensitivity and specificity of a new molecular assay for detecting a novel pathogen against a validated gold standard reference method.

2. Materials and Reagents:

- Index Test: The novel detection assay under evaluation (e.g., PCR reagents, primers/probes, buffer solutions).

- Reference Standard: The accepted best method for pathogen confirmation (e.g., viral culture, CDC-approved RT-PCR assay, clinical expert panel diagnosis).

- Sample Collection Kits: Sterile swabs, viral transport media, and appropriate storage containers.

- Laboratory Equipment: Thermocyclers, plate readers, biosafety cabinets, and micropipettes.

3. Sample Size and Population:

- Recruit a prospectively enrolled, consecutive cohort of participants representing the full spectrum of the target condition (from asymptomatic to severely ill).

- Include a control group of participants known to be free of the pathogen but potentially harboring cross-reactive organisms.

- A power calculation should be performed a priori to ensure a precise estimate of accuracy.

4. Blinded Testing Procedure:

- Each participant sample is tested using both the index test and the reference standard.

- Personnel performing the index test must be blinded to the results of the reference standard, and vice versa, to prevent interpretation bias.

5. Data Analysis:

- Construct a 2x2 contingency table comparing the index test results against the reference standard.

- Calculate sensitivity, specificity, PPV, NPV, and likelihood ratios with corresponding 95% confidence intervals.

- Perform a Receiver Operating Characteristic (ROC) analysis if the test yields a continuous output to evaluate different cutoff values [2].
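For the confidence intervals called for in the data analysis step, one common choice is the Wilson score interval for a binomial proportion. The protocol above does not prescribe a particular interval method, so the sketch below is one reasonable option under that assumption, written with only the standard library. The counts are reused from the worked example earlier in the article.

```python
import math
from typing import Tuple


def wilson_interval(successes: int, total: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives roughly 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p_hat = successes / total
    denom = 1.0 + z**2 / total
    centre = (p_hat + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / total + z**2 / (4 * total**2)) / denom
    # Clamp to [0, 1] to guard against floating-point overshoot at the extremes.
    return max(0.0, centre - half_width), min(1.0, centre + half_width)


# Cell counts of the 2x2 table (values reused from the worked example).
tp, fn, tn, fp = 369, 15, 558, 58
sens_low, sens_high = wilson_interval(tp, tp + fn)
spec_low, spec_high = wilson_interval(tn, tn + fp)
print(f"Sensitivity {tp / (tp + fn):.1%} (95% CI {sens_low:.1%} to {sens_high:.1%})")
print(f"Specificity {tn / (tn + fp):.1%} (95% CI {spec_low:.1%} to {spec_high:.1%})")
```

The Wilson interval tends to behave better than the simple normal approximation when a proportion is close to 0 or 1, which is often the case for high-performing assays.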

Essential Research Reagent Solutions

The table below details key reagents and their critical functions in developing and validating diagnostic assays for novel pathogens.

| Research Reagent / Material | Primary Function in Diagnostic Assay Validation |
|---|---|
| Reference Standard Material | Provides the definitive result for comparison; essential for establishing the "truth" to calculate true positives/negatives (e.g., CDC assay, clinical culture). |
| Clinical Specimen Panels | Well-characterized samples used to challenge the assay; should include positive samples across different disease stages and negative samples with potential cross-reactants. |
| Primers & Probes | Key components of molecular assays (e.g., PCR) that bind to unique pathogen sequences; their design dictates the fundamental specificity of the test. |
| Antibodies (for immunoassays) | Bind to target antigens; the affinity and specificity of capture/detection antibodies are major determinants of both sensitivity and specificity. |
| Enzymes (e.g., Reverse Transcriptase, Polymerase) | Catalyze key reactions in amplification-based tests; their fidelity and efficiency directly impact the detection limit (sensitivity). |
| Control Templates (Positive & Negative) | Used in each test run to monitor for procedural failures, contamination, and to ensure reagent integrity, safeguarding against false results. |

Sensitivity and specificity remain the cornerstone metrics for the objective evaluation of diagnostic tests, from established clinical tools to novel pathogen detection methods. Their interdependent relationship requires researchers and developers to make strategic decisions about test thresholds based on the intended application—prioritizing sensitivity when the cost of missing a case is high, and specificity when false positives pose a greater risk. A comprehensive validation framework, incorporating derivative metrics like predictive values and likelihood ratios, along with a rigorous blinded comparison to a robust gold standard, is essential for generating reliable performance data. As diagnostic technologies evolve, these core principles will continue to guide the development of accurate, reliable, and clinically meaningful tests that inform patient care and public health responses.

In clinical diagnostics, the accuracy of a test is paramount, as erroneous results directly impact patient safety and public health. False positives (a test incorrectly indicating the presence of a condition) and false negatives (a test failing to detect an existing condition) represent the two fundamental types of diagnostic errors [11] [1]. These errors are intrinsic to the relationship between a test's sensitivity—its ability to correctly identify those with the disease—and its specificity—its ability to correctly identify those without the disease [3] [1]. These two metrics are often inversely related, requiring a careful balance based on the clinical context [12] [13]. A test's performance cannot be fully understood without also considering positive predictive value (PPV), the proportion of true positives among all positive results, and negative predictive value (NPV), the proportion of true negatives among all negative results [3]. Crucially, unlike sensitivity and specificity, PPV and NPV are profoundly influenced by the disease prevalence in the tested population [12] [13]. Understanding and managing the trade-offs between these metrics is a core component of modern clinical practice and novel pathogen detection research, guiding the selection and development of diagnostic tools to minimize adverse patient outcomes.

The Direct Consequences of Diagnostic Errors

The Impact of False-Positive Results

When a test produces a false-positive result, the implications extend beyond a simple diagnostic error, initiating a cascade of negative consequences for both the individual and the healthcare system.

- Unnecessary Interventions and Psychological Harm: Patients may be subjected to needless, invasive procedures and treatments, which carry their own risks and side effects [11]. Concurrently, receiving an erroneous diagnosis of a severe condition can cause significant psychological distress, including anxiety, a phenomenon observed in patients receiving false-positive mammography results [11].

- Economic and System-Wide Costs: False positives generate substantial and unnecessary healthcare expenses. These costs accumulate from redundant follow-up tests, therapeutic interventions, and extended hospital stays. For instance, during COVID-19 testing, false positives led to unnecessary hospitalizations, with one analysis suggesting that improving test specificity could save up to $202 million in a single tertiary-care medical center [11].

- Resource Mismanagement and Diagnostic Delays: In laboratory and hospital settings, investigating false positives wastes valuable resources, including time, lab supplies, and hospital beds [11]. This inefficient allocation can delay critical care for other patients with genuine medical needs. Furthermore, a false-positive diagnosis can divert attention from the patient's actual underlying condition, leading to a dangerous delay in the correct diagnosis and appropriate treatment [11].

The Impact of False-Negative Results

False-negative results are equally dangerous, creating a different set of risks that often center on the failure to provide necessary care.

- Delayed or Withheld Treatment: The most immediate consequence of a false negative is that a patient who actually has the disease does not receive the required treatment in a timely manner [12]. In infectious diseases, this can lead to unchecked progression of the infection, severe complications, and increased mortality [14]. In the context of sepsis, for example, a delay in initiating appropriate antibiotic therapy is associated with a significantly higher mortality rate [14].

- Increased Transmission of Infectious Diseases: For contagious diseases, false negatives pose a significant public health threat. A patient who is incorrectly told they do not have an infection will not isolate, potentially leading to the infection of others. This was a critical consideration in COVID-19 testing strategies, where a high false-negative rate at the peak of an outbreak could have accelerated community transmission [12].

- Undermined Confidence in Health Systems: Frequent diagnostic errors, including false negatives, can erode trust in healthcare providers, laboratories, and public health initiatives [11]. Patients may become hesitant to seek testing or follow medical advice, further compounding public health challenges.

Table 1: Comparative Consequences of Diagnostic Errors

| Consequence Category | False Positive | False Negative |
|---|---|---|
| Patient Clinical Impact | Unnecessary treatments and procedures; medication side effects [11] | Disease progression; severe complications; increased mortality [12] [14] |
| Patient Psychological Impact | Anxiety, stress, and stigma from erroneous diagnosis [11] | False reassurance, leading to delayed care-seeking [12] |
| Public Health Impact | Unnecessary quarantines; misuse of public health resources [11] | Increased community transmission of infectious diseases [12] |
| Economic & Resource Impact | Cost of follow-up tests and unneeded treatments; wasted resources [11] | Cost of managing advanced disease and complications; outbreak containment [12] |

Foundational Concepts: Test Performance and "Fitness Brackets"

The performance of a diagnostic test is quantitatively described by its sensitivity, specificity, and predictive values. The formulas for these key metrics are summarized in the table below [3] [1] [13].

Table 2: Key Metrics for Diagnostic Test Performance

| Metric | Formula | Interpretation |
|---|---|---|
| Sensitivity | True Positives / (True Positives + False Negatives) [3] | Probability that the test is positive when the disease is present [1] |
| Specificity | True Negatives / (True Negatives + False Positives) [3] | Probability that the test is negative when the disease is absent [1] |
| Positive Predictive Value (PPV) | True Positives / (True Positives + False Positives) [3] | Probability that the disease is present when the test is positive [13] |
| Negative Predictive Value (NPV) | True Negatives / (True Negatives + False Negatives) [3] | Probability that the disease is absent when the test is negative [13] |

A critical concept for test selection is the fitness bracket, which defines the range of disease prevalence within which a test is fit-for-purpose, based on acceptable rates of false positives and false negatives [12]. For example, a test with 90% sensitivity and 95% specificity, with a risk tolerance of 10% for both false positives and false negatives, is only fit-for-purpose when disease prevalence is between 33% and 50% [12]. Outside this bracket, diagnostic confidence plummets. Below this prevalence range, the proportion of false positives among all positive results increases dramatically. For instance, at a low prevalence of 5%, over half (51%) of all positive results could be false positives, making the test unreliable for screening in a general population [12].

The clinical context dictates where the balance should be struck. In a dengue pre-vaccination screening program, the consequence of a false positive (vaccinating a dengue-naïve individual, which could lead to severe disease) is considered so grave that the test must prioritize specificity, sometimes accepting a very high false-negative rate [12]. Conversely, during an active COVID-19 outbreak, the priority may shift to identifying as many cases as possible to prevent transmission, necessitating a test with high sensitivity, even if it means a higher rate of false positives [12].
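The fitness-bracket figures quoted above can be reproduced with a short calculation. The sketch below assumes that the "risk tolerance" refers to the share of false positives among all positive results (1 - PPV) and the share of false negatives among all negative results (1 - NPV); that reading is our interpretation, not a definition taken from [12]. Under it, the lower bound comes out at about 33% and the upper bound at about 51%, close to the 50% quoted, so rounding or a slightly different definition in the source may account for the gap.

```python
from typing import Tuple


def ppv(sens: float, spec: float, prevalence: float) -> float:
    """Positive predictive value from sensitivity, specificity and prevalence."""
    true_pos = sens * prevalence
    false_pos = (1.0 - spec) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


def npv(sens: float, spec: float, prevalence: float) -> float:
    """Negative predictive value from sensitivity, specificity and prevalence."""
    true_neg = spec * (1.0 - prevalence)
    false_neg = (1.0 - sens) * prevalence
    return true_neg / (true_neg + false_neg)


def fitness_bracket(
    sens: float, spec: float, max_fp_share: float, max_fn_share: float
) -> Tuple[float, float]:
    """Prevalence range where 1 - PPV <= max_fp_share and 1 - NPV <= max_fn_share.

    Obtained by solving the PPV and NPV formulas for prevalence. If the
    returned lower bound exceeds the upper bound, no prevalence qualifies.
    """
    lower = (1 - max_fp_share) * (1 - spec) / (
        sens * max_fp_share + (1 - max_fp_share) * (1 - spec)
    )
    upper = spec * max_fn_share / (
        spec * max_fn_share + (1 - max_fn_share) * (1 - sens)
    )
    return lower, upper


# Example from the text: 90% sensitivity, 95% specificity, 10% tolerances.
low, high = fitness_bracket(0.90, 0.95, 0.10, 0.10)
print(f"Fit-for-purpose prevalence range: {low:.0%} to {high:.0%}")
print(f"False positives among positives at 5% prevalence: {1 - ppv(0.90, 0.95, 0.05):.0%}")
```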

Experimental Approaches in Novel Pathogen Detection

The limitations of traditional culture-based methods have driven innovation in molecular diagnostics. The following experimental protocols highlight advanced approaches designed to enhance sensitivity and specificity in pathogen detection.

Protocol 1: Targeted Next-Generation Sequencing with Host Depletion

This protocol focuses on precise pathogen identification in bloodstream infections by reducing host DNA background [15].

- Workflow Overview: The experimental workflow for this tNGS approach is designed to maximize pathogen detection sensitivity by removing host DNA and enriching for microbial sequences.

- Detailed Methodology:

- Sample Pre-treatment: A novel human cell-specific filtration membrane is used to pre-treat clinical blood samples. This membrane, composed of materials like leukosorb membranes or cellulose-based substrates, is designed to capture nucleated human cells (e.g., leukocytes) while allowing microorganisms like bacteria and viruses to pass into the filtrate. This process achieves over a 98% reduction in host DNA, drastically minimizing background interference [15].

- Nucleic Acid Extraction: DNA is extracted from the filtrate, which is now enriched for microbial pathogens. The protocol emphasizes the use of small beads and Proteinase K to ensure thorough lysis of bacterial cell walls and maximize DNA yield across different pathogen types [14].

- Targeted Amplification and Sequencing: Instead of sequencing all genetic material, a multiplex targeted NGS (tNGS) panel is used. This panel is designed to amplify and capture specific genomic regions from over 330 clinically relevant pathogens. This targeted enrichment increases the sequencing depth for pathogens of interest, boosting pathogen reads by 6- to 8-fold and enabling the detection of low-abundance microbes that would be missed by metagenomic NGS (mNGS) [15].

- Bioinformatic Analysis: The generated sequencing reads are classified using taxonomic annotation software and compared against a curated database of pathogens to generate a final report [15] [16].

Protocol 2: Rapid Bacterial Identification and Quantification via Tm Mapping

This protocol describes a rapid method for identifying and quantifying unknown pathogenic bacteria directly from blood samples within four hours, using a real-time PCR-based system [14].

- Workflow Overview: The Tm mapping method integrates bacterial identification and quantification into a single, rapid workflow suitable for critical care settings.

- Detailed Methodology:

- Bacterial Isolation from Blood: A 2 mL whole blood sample is subjected to low-speed centrifugation (100×g for 5 minutes) to pellet red blood cells. The supernatant fraction containing the buffy coat and plasma, where bacteria remain, is used for DNA extraction, minimizing the loss of bacterial cells [14].

- Contamination-Free DNA Extraction and PCR: DNA is extracted using a protocol that includes small beads and Proteinase K for efficient lysis. A critical component is the use of a eukaryote-made thermostable DNA polymerase, which is free from bacterial DNA contamination. This eliminates false positives that typically arise from trace bacterial DNA in commercial polymerases, enabling highly sensitive and reliable detection [14].

- Nested PCR with Universal Primers: The extracted DNA undergoes a nested PCR using seven bacterial universal primer sets that target conserved regions of the 16S rRNA gene. The use of mixed forward primers compensates for sequence variations among bacteria, ensuring accurate quantification regardless of species. Fluorescence acquisition is set at 82°C to dissociate primer-dimer artifacts, ensuring that quantification reflects only the target amplicons [14].

- Tm Mapping for Identification: The seven PCR amplicons are analyzed to determine their melting temperatures (Tm). These Tm values are plotted in two dimensions to create a unique, species-specific "Tm mapping shape," which is compared against a database to identify the dominant bacterial pathogen in the sample [14].

- Absolute Quantification: The bacterial concentration is first measured against a standard curve of E. coli DNA with known concentrations. This value is then corrected based on the identified pathogen's 16S rRNA operon copy number, which is retrieved from a database, to yield an accurate bacterial count [14].
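The absolute quantification step can be sketched as two small calculations: reading a quantity off a standard curve, then rescaling by 16S rRNA operon copy number. The source does not give the exact formulas, so the log-linear standard curve and the ratio-based correction below are assumptions, as are all numeric values (slope, intercept, Ct, and the pathogen copy number). The E. coli value of 7 operon copies is the commonly cited figure and should be checked against the database actually used.

```python
def ecoli_equivalents_from_ct(ct: float, slope: float, intercept: float) -> float:
    """Invert an assumed standard curve of the form Ct = slope * log10(quantity) + intercept."""
    return 10 ** ((ct - intercept) / slope)


def correct_for_operon_copies(
    ecoli_equivalents: float, ecoli_copies: int, pathogen_copies: int
) -> float:
    """Rescale an E. coli-equivalent count by the ratio of 16S operon copy numbers.

    A genome with fewer 16S copies than E. coli yields less signal per cell,
    so the E. coli-equivalent value underestimates the true cell count.
    """
    return ecoli_equivalents * ecoli_copies / pathogen_copies


# Hypothetical standard-curve parameters and measurement, for illustration only.
SLOPE, INTERCEPT = -3.32, 38.0
measured_ct = 28.04

# Assumed copy numbers: 7 for E. coli; 5 is a placeholder for the identified pathogen.
ECOLI_16S_COPIES = 7
pathogen_16s_copies = 5

raw = ecoli_equivalents_from_ct(measured_ct, SLOPE, INTERCEPT)
corrected = correct_for_operon_copies(raw, ECOLI_16S_COPIES, pathogen_16s_copies)
print(f"E. coli-equivalent quantity: {raw:.0f}")
print(f"Copy-number-corrected count: {corrected:.0f}")
```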

Protocol 3: Mitigating False Positives in Metagenomic Sequencing

This bioinformatic protocol addresses the critical challenge of false-positive read classification in shotgun metagenomic sequencing, using Salmonella detection as a model [16].

- Workflow Overview: A two-step bioinformatic pipeline enhances the specificity of pathogen detection in complex metagenomic samples.

- Detailed Methodology:

- Initial Taxonomic Classification with Adjusted Confidence: Sequencing reads are first analyzed using the Kraken2 taxonomic classifier. The default confidence threshold (0) is highly sensitive but prone to false positives. Increasing the confidence threshold (e.g., to 0.25 or higher) reduces false positives but may classify some true positives at a higher taxonomic level (e.g., Enterobacteriaceae) [16].

- Confirmation with Species-Specific Regions (SSRs): To remove false positives while retaining sensitivity, all reads that Kraken2 classifies as belonging to the Salmonella genus are compared to a database of Salmonella species-specific regions (SSRs). These are 1000 bp genomic regions unique to the Salmonella pan-genome. Reads that do not align to these SSRs are discarded as false positives. This step was shown to be highly effective, completely eliminating false positives from simulated datasets at a confidence threshold of ≥0.25 [16].
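The logic of the confirmation step can be illustrated as a simple set intersection. The sketch below assumes that Kraken2 output (produced with a raised `--confidence` value) and the SSR alignment results have already been parsed into plain Python structures; the parsing, the read identifiers, and the function name are hypothetical and are not taken from the pipeline in [16].

```python
from typing import Dict, Iterable, List, Set


def confirm_genus_reads(
    kraken_calls: Dict[str, str],
    ssr_aligned_reads: Iterable[str],
    target_genus: str = "Salmonella",
) -> List[str]:
    """Keep reads called as the target genus only if they also align to an SSR."""
    ssr_hits: Set[str] = set(ssr_aligned_reads)
    return sorted(
        read_id
        for read_id, taxon in kraken_calls.items()
        if (taxon == target_genus or taxon.startswith(target_genus + " "))
        and read_id in ssr_hits
    )


# Hypothetical parsed classifier output: read ID -> assigned taxon name.
kraken_calls = {
    "read_1": "Salmonella enterica",
    "read_2": "Salmonella",            # genus-level call with no SSR support
    "read_3": "Escherichia coli",
    "read_4": "Salmonella bongori",
    "read_5": "Enterobacteriaceae",    # pushed to family level by the threshold
}

# Hypothetical IDs of reads that aligned to the SSR database.
ssr_aligned = ["read_1", "read_3", "read_4"]

confirmed = confirm_genus_reads(kraken_calls, ssr_aligned)
print(confirmed)  # ['read_1', 'read_4']
```

In this toy input, `read_2` is discarded as a likely false positive because it has no SSR support, and `read_3` is ignored because the classifier never assigned it to the target genus.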

Comparative Performance of Diagnostic Modalities

The performance of different diagnostic technologies can be objectively compared based on their key characteristics, including their strengths and limitations concerning false positives and false negatives.

Table 3: Comparison of Pathogen Detection Methods

| Methodology | Key Principle | Reported Performance & Data | Advantages | Disadvantages |
|---|---|---|---|---|
| Traditional Blood Culture [15] [14] | Growth of viable pathogens in culture media | Considered the historical gold standard but with lengthy time-to-result (several days) [15] | Provides live isolate for downstream phenotyping (e.g., antibiotic resistance) [15] | Low positive rate; long turnaround time delays critical treatment [15] [14] |
| Metagenomic NGS (mNGS) [15] [16] | Untargeted sequencing of all nucleic acids in a sample | Broad, unbiased pathogen detection, but prone to false positives without specific parameters [16] | Hypothesis-free; detects unexpected pathogens [15] | High human background DNA can obscure low-abundance pathogens; costly and complex bioinformatics [15] [16] |
| Targeted NGS (tNGS) with Filtration [15] | Host cell depletion followed by targeted amplification of pathogen genes | >98% host DNA reduction; 6- to 8-fold boost in pathogen reads [15] | High sensitivity for low-abundance pathogens; reduced background noise [15] | Panel design limits detection to pre-defined pathogens; additional step for host depletion [15] |
| RPA-CRISPR/Cas12a [17] | Isothermal amplification combined with CRISPR-based sequence recognition | High sensitivity and specificity for rapid, visual detection at point-of-care [17] | Simplicity; minimal equipment; potential for point-of-care use [17] | Typically detects a single or few pathogens per test run [17] |
| Tm Mapping & Quantification [14] | Bacterial identification via melting profiles of 16S rRNA amplicons | Identification and quantification of unknown bacteria directly from blood within 4 hours [14] | Rapid; quantitative; uses contamination-free reagents to minimize false positives [14] | Primarily for bacterial detection; requires a pre-established Tm database [14] |

The Scientist's Toolkit: Essential Reagents and Technologies

Successful implementation of advanced pathogen detection methods relies on a suite of specialized reagents and tools designed to optimize accuracy and efficiency.

Table 4: Key Research Reagent Solutions

| Reagent / Technology | Function | Role in Mitigating Diagnostic Errors |
|---|---|---|
| Eukaryote-Made DNA Polymerase [14] | A recombinant thermostable DNA polymerase produced in yeast. | Eliminates false positives caused by bacterial DNA contamination in standard polymerases, enabling highly sensitive bacterial universal PCR [14]. |
| Human Cell-Specific Filtration Membrane [15] | A substrate (e.g., leukosorb, cellulose) that captures nucleated human cells. | Selectively removes >98% of host DNA, reducing background and enhancing the signal from low-abundance pathogens to prevent false negatives [15]. |
| BioCode Barcoded Magnetic Beads [11] | Magnetic beads with unique barcodes for multiplex molecular assays. | Enables high-specificity multiplex detection (e.g., 17 GI pathogens simultaneously), reducing the risk of cross-reactivity and false positives [11]. |
| Species-Specific Regions (SSRs) [16] | Curated genomic sequences unique to a pathogen's pan-genome. | Used in bioinformatic pipelines to confirm taxonomic classifications from tools like Kraken2, effectively filtering out false positives [16]. |
| Multiplex tNGS Panels [15] | A set of probes or primers targeting over 330 clinically relevant pathogens. | Enriches for pathogen sequences prior to sequencing, increasing sensitivity and reads for targeted pathogens while controlling costs [15]. |

The impact of false-positive and false-negative results on patient outcomes underscores the non-negotiable need for accuracy in clinical diagnostics. The trade-off between sensitivity and specificity is not merely a statistical concept but a central consideration in clinical decision-making and test development [12] [13]. As demonstrated by the featured experimental protocols, the field of pathogen detection is evolving rapidly. Innovations such as host-cell filtration [15], contamination-free reagents [14], sophisticated bioinformatic pipelines [16], and CRISPR-based detection [17] are systematically addressing the challenges of diagnostic errors. The future of diagnostics lies in the intelligent application of these technologies, guided by a deep understanding of their performance characteristics and the clinical context in which they are used. By defining "fitness brackets" for tests and implementing robust methods to expand them, researchers and clinicians can better ensure that patients receive timely, accurate diagnoses, leading to improved therapeutic interventions and enhanced patient safety.

The performance of a diagnostic test is not determined solely by its inherent accuracy. Sensitivity (ability to correctly identify true positives) and specificity (ability to correctly identify true negatives) are often considered stable test attributes [13] [18]. However, the clinical usefulness of a test is ultimately judged by its Predictive Values—the probabilities that a positive or negative test result is correct. These values are profoundly influenced by the prevalence of the condition in the population being tested [19] [20] [21]. A test with fixed sensitivity and specificity will yield different predictive values when applied to a high-prevalence population (e.g., a specialized clinic) versus a low-prevalence population (e.g., general community screening) [20]. This article explores this fundamental relationship and its critical implications for evaluating novel pathogen detection methods.

Foundational Definitions and Statistical Relationships

To objectively compare diagnostic tests, a clear understanding of core performance metrics is essential. These metrics are derived from a 2x2 contingency table that cross-tabulates test results with true disease status, defined by a reference standard or "gold standard" [13] [19].

- Sensitivity (True Positive Rate): The proportion of truly diseased individuals who test positive. A high-sensitivity test is optimal for "ruling out" a disease when the result is negative, as it misses few true cases [1] [18]. Calculated as: Sensitivity = True Positives / (True Positives + False Negatives) [13] [1].

- Specificity (True Negative Rate): The proportion of truly non-diseased individuals who test negative. A high-specificity test is optimal for "ruling in" a disease when the result is positive, as it minimizes false alarms [1] [18]. Calculated as: Specificity = True Negatives / (True Negatives + False Positives) [13] [1].

- Positive Predictive Value (PPV): The probability that an individual with a positive test result truly has the disease. This is a crucial metric for clinicians acting upon a positive finding [20] [21]. Calculated as: PPV = True Positives / (True Positives + False Positives) [13] [21].

- Negative Predictive Value (NPV): The probability that an individual with a negative test result truly does not have the disease [13] [21]. Calculated as: NPV = True Negatives / (True Negatives + False Negatives) [13] [21].
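The four definitions above reduce to simple ratios over the cells of the 2x2 table. The following is a minimal sketch of that arithmetic; the class name `ConfusionCounts`, the function name `diagnostic_metrics`, and the example counts are illustrative assumptions, not taken from any cited study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Counts from a 2x2 table of test result versus reference standard."""
    tp: int  # test positive, reference positive
    fp: int  # test positive, reference negative
    fn: int  # test negative, reference positive
    tn: int  # test negative, reference negative


def diagnostic_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Return sensitivity, specificity, PPV and NPV as fractions in [0, 1]."""
    return {
        "sensitivity": c.tp / (c.tp + c.fn),
        "specificity": c.tn / (c.tn + c.fp),
        "ppv": c.tp / (c.tp + c.fp),
        "npv": c.tn / (c.tn + c.fn),
    }


# Hypothetical counts, chosen only to illustrate the arithmetic.
example = ConfusionCounts(tp=80, fp=30, fn=20, tn=870)
metrics = diagnostic_metrics(example)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

The sketch does not guard against empty rows or columns (for example, a sample set with no reference-positive specimens), which would raise a division-by-zero error and would need explicit handling in real use.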

Table 1: Diagnostic Test Performance Metrics at a Glance

| Metric | Definition | Clinical Utility Focus | Governed by Test Attributes or Prevalence? |

|---|---|---|---|

| Sensitivity | Proportion of sick who test positive | "Ruling out" disease | Test Attributes |

| Specificity | Proportion of well who test negative | "Ruling in" disease | Test Attributes |

| Positive Predictive Value (PPV) | Probability disease is present after a positive test | Confidence in a positive result | Prevalence |

| Negative Predictive Value (NPV) | Probability disease is absent after a negative test | Confidence in a negative result | Prevalence |

The relationship between these metrics is not fixed. There is typically a trade-off between sensitivity and specificity; adjusting a test's cutoff point to increase sensitivity will often decrease specificity, and vice versa [13] [1]. Most importantly, while sensitivity and specificity are considered intrinsic to the test, PPV and NPV are highly dependent on disease prevalence [13] [19] [20]. The mathematical relationship is defined as follows [21]:

PPV = (Sensitivity × Prevalence) / [ (Sensitivity × Prevalence) + (1 - Specificity) × (1 - Prevalence) ]

NPV = (Specificity × (1 - Prevalence)) / [ (Specificity × (1 - Prevalence)) + (1 - Sensitivity) × Prevalence ]

Diagram: Diagnostic testing pathway relating sensitivity, specificity, and prevalence to predictive values

The Critical Impact of Prevalence on Predictive Values

Pre-test probability, often reflected by disease prevalence in a population, is the key driver of a test's predictive value. A test with excellent sensitivity and specificity can have surprisingly low clinical utility if applied to a population where the target condition is rare [20].

Consider a screening test with 90% sensitivity and 90% specificity. Its performance varies dramatically between a high-prevalence and a low-prevalence setting, as illustrated in the table below.

Table 2: Impact of Prevalence on Predictive Values for a Test with 90% Sensitivity and 90% Specificity (for a population of 1,000 individuals)

| Parameter | High-Prevalence Setting (50%) | Low-Prevalence Setting (5%) |

|---|---|---|

| True Positives (TP) | 450 | 45 |

| False Negatives (FN) | 50 | 5 |

| True Negatives (TN) | 450 | 855 |

| False Positives (FP) | 50 | 95 |

| Positive Predictive Value (PPV) | 450 / (450 + 50) = 90% | 45 / (45 + 95) = 32.1% |

| Negative Predictive Value (NPV) | 450 / (450 + 50) = 90% | 855 / (855 + 5) = 99.4% |

In the high-prevalence setting, a positive test result is highly reliable (90% PPV). However, in the low-prevalence setting, the same test produces a positive result that is more likely to be wrong than right (PPV of only 32.1%), meaning about two-thirds of positive results are false positives [20]. This demonstrates that using a screening test with modest specificity in a low-prevalence population can lead to substantial over-investigation and unnecessary anxiety.
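The prevalence dependence shown in Table 2 can be checked directly from the PPV and NPV formulas given earlier. The sketch below is one way to do so; the function name `predictive_values` is an assumption for illustration, and the 1% prevalence row is an extra hypothetical setting that does not appear in the table.

```python
def predictive_values(sensitivity: float, specificity: float,
                      prevalence: float) -> tuple[float, float]:
    """Return (PPV, NPV) for a test applied at a given prevalence."""
    tp = sensitivity * prevalence              # true-positive fraction
    fp = (1 - specificity) * (1 - prevalence)  # false-positive fraction
    tn = specificity * (1 - prevalence)        # true-negative fraction
    fn = (1 - sensitivity) * prevalence        # false-negative fraction
    return tp / (tp + fp), tn / (tn + fn)


# 50% and 5% mirror Table 2; 1% is an additional hypothetical setting.
for prevalence in (0.50, 0.05, 0.01):
    ppv, npv = predictive_values(0.90, 0.90, prevalence)
    print(f"prevalence={prevalence:.0%}  PPV={ppv:.1%}  NPV={npv:.1%}")
```

Run as written, the 50% and 5% settings reproduce the 90%/90% and 32.1%/99.4% figures in Table 2, which confirms that the table follows from the formulas rather than from any property specific to the 1,000-person example.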

Case Studies in Novel Pathogen Detection

The principles of predictive values are acutely relevant in the development and deployment of new technologies for detecting pathogens, where minimizing false results is critical for public health and clinical decision-making.

Case Study 1: Targeted Next-Generation Sequencing for Bloodstream Infections

Experimental Protocol: A 2025 study published on frontiersin.org introduced an integrated diagnostic approach for precise pathogen identification in bloodstream infections (BSIs) [15].

- Sample Pre-treatment: Blood samples are passed through a proprietary human cell-specific filtration membrane. This membrane, with surface charge properties attractive to leukocytes, selectively captures nucleated human cells while allowing microorganisms to pass into the filtrate [15].

- Host DNA Depletion: This filtration step achieves over a 98% reduction in host DNA, drastically reducing background interference and increasing the relative abundance of pathogen-derived nucleic acids [15].

- Targeted Amplification and Sequencing: The filtrate undergoes targeted next-generation sequencing using a multiplex panel designed to amplify genomic regions of over 330 clinically relevant pathogens. This enrichment step boosts pathogen reads by 6- to 8-fold compared to metagenomic NGS, enhancing sensitivity for low-abundance pathogens [15].
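To see why removing host DNA raises the relative abundance of pathogen nucleic acids, a back-of-the-envelope calculation helps. The sketch below uses the 98% depletion figure from the protocol; the starting molecule counts are hypothetical, and it assumes that all pathogen material passes through the membrane, which the source does not state.

```python
def pathogen_fraction(host_molecules: float, pathogen_molecules: float) -> float:
    """Fraction of all nucleic acid molecules that derive from the pathogen."""
    return pathogen_molecules / (host_molecules + pathogen_molecules)


HOST_REMOVED = 0.98  # depletion reported in the protocol (>98%)

# Hypothetical starting material: host DNA vastly outnumbers pathogen DNA.
host_before = 1_000_000.0
pathogen = 100.0  # assumed fully recovered in the filtrate

host_after = host_before * (1 - HOST_REMOVED)

before = pathogen_fraction(host_before, pathogen)
after = pathogen_fraction(host_after, pathogen)
fold_enrichment = after / before

print(f"pathogen fraction before: {before:.5%}")
print(f"pathogen fraction after:  {after:.5%}")
print(f"fold enrichment:          {fold_enrichment:.1f}x")
```

The enrichment computed here is a property of the hypothetical inputs and concerns relative abundance only. It should not be confused with the 6- to 8-fold increase in pathogen reads that the study reports for targeted sequencing compared with metagenomic NGS.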

Performance Data: The synergy between host DNA depletion and targeted sequencing significantly improved the signal-to-noise ratio. This method demonstrated high consistency with blood culture results and showed a significant improvement in detection sensitivity, enabling reliable identification even in cases of low-abundance pathogens [15]. This enhanced sensitivity directly contributes to a higher Negative Predictive Value, providing greater confidence in negative results to rule out infections.

Case Study 2: Rapid Bacterial Identification and Quantification via Tm Mapping

Experimental Protocol: A 2024 study in Scientific Reports detailed a novel method for identifying and quantifying unknown pathogenic bacteria in whole blood within four hours [14].

- Sample Preparation: A 2 mL whole blood sample is subjected to low-speed centrifugation to pellet red blood cells. The supernatant fraction containing bacteria and buffy coat is retained [14].

- DNA Extraction: Bacterial DNA is extracted from the supernatant using a protocol involving Proteinase K and small beads to maximize cell wall lysis efficiency across different bacterial species [14].

- Nested Real-time PCR: Extracted DNA undergoes a nested PCR using seven bacterial universal primer sets targeting the 16S rRNA gene. A key innovation is the use of a eukaryote-made thermostable DNA polymerase, which is free from bacterial DNA contamination; this removes a major source of false positives and enables highly sensitive quantification [14].

- Identification & Quantification: The seven melting temperature (Tm) values of the amplicons are plotted to create a species-specific "Tm mapping shape" for identification. Quantification is performed using a standard curve and then corrected based on the identified bacterium's 16S rRNA operon copy number [14].
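The quantification step described above combines a standard curve with a copy-number correction. The following sketch shows the arithmetic under stated assumptions: a log-linear standard curve of the form Ct = slope × log10(copies) + intercept, with a hypothetical slope and intercept, and an operon copy number of 7 used purely as an illustrative value. None of these numbers come from the cited study.

```python
def copies_from_ct(ct: float, slope: float, intercept: float) -> float:
    """Invert a standard curve Ct = slope * log10(copies) + intercept."""
    return 10 ** ((ct - intercept) / slope)


def cells_from_16s_copies(gene_copies: float, operon_copy_number: int) -> float:
    """Convert 16S rRNA gene copies to an estimated number of bacterial cells."""
    return gene_copies / operon_copy_number


# Hypothetical standard-curve parameters and measurement.
SLOPE = -3.32      # Ct change per 10-fold change in template (assumed)
INTERCEPT = 38.0   # Ct expected for a single copy (assumed)
observed_ct = 28.04

gene_copies = copies_from_ct(observed_ct, SLOPE, INTERCEPT)

# Illustrative copy number for the species identified by Tm mapping.
estimated_cells = cells_from_16s_copies(gene_copies, operon_copy_number=7)

print(f"16S rRNA gene copies: {gene_copies:.0f}")
print(f"estimated cells:      {estimated_cells:.0f}")
```

The correction matters because the same measured gene count implies very different bacterial loads depending on the species: without it, organisms carrying many operon copies would be systematically over-counted relative to those carrying few.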

Performance Data: This method allows for the direct quantification of bacterial load, proposed as a novel biomarker for infection severity and therapeutic monitoring. The use of contamination-free reagents and a multi-parameter identification system (Tm mapping) ensures high specificity, which is critical for maintaining a high Positive Predictive Value, especially when testing for a specific pathogen in a focused clinical context [14].

Diagram: Workflow of the rapid Tm mapping identification and quantification method

The Scientist's Toolkit: Essential Reagents for Advanced Pathogen Detection

The following table details key reagents and materials used in the featured novel detection methods, highlighting their critical functions in ensuring test accuracy.

Table 3: Key Research Reagent Solutions for Novel Pathogen Detection

| Reagent / Material | Function in Experimental Protocol | Impact on Test Performance |

|---|---|---|

| Human Cell-Specific Filtration Membrane [15] | Selective capture and removal of leukocytes from blood samples based on surface charge properties. | Reduces host DNA background by >98%, dramatically improving the signal-to-noise ratio and enhancing sensitivity for low-abundance pathogens. |

| Multiplex tNGS Panel [15] | A set of probes designed to simultaneously target and enrich genetic sequences from over 330 clinically relevant pathogens. | Increases pathogen reads by 6- to 8-fold, boosting detection sensitivity and enabling highly multiplexed, specific identification. |

| Eukaryote-Made Thermostable DNA Polymerase [14] | A recombinant DNA polymerase manufactured in yeast, ensuring it is free from bacterial DNA contamination. | Eliminates a major source of false positives in universal bacterial PCR, ensuring high specificity and reliable detection of low bacterial loads. |

| Bacterial Universal Primer Sets [14] | Primers targeting conserved regions of the 16S rRNA gene, allowing for the amplification of a wide range of bacterial species. | Enables unbiased, broad-range detection and identification of unknown pathogens in a sample. |

| Magnetic Probes for Separation (implied in similar platforms) [22] | Surface-functionalized magnetic beads used to capture and isolate target pathogens or nucleic acids from complex sample matrices. | Concentrates the target and purifies it from inhibitors, improving both the sensitivity and robustness of the downstream detection assay. |

Understanding the dynamic interplay between test characteristics (sensitivity/specificity) and population prevalence is not merely an academic exercise—it is a fundamental requirement for the valid design, evaluation, and application of novel diagnostic methods. For researchers and drug developers, this means:

- Contextual Performance Validation: A test's predictive values must be evaluated within the specific epidemiological context of its intended use. A test developed for a critical care setting (high prevalence) may perform poorly in general screening (low prevalence) [20].

- Strategic Method Selection: The choice between a highly sensitive test (e.g., tNGS for ruling out infection) and a highly specific test (e.g., a confirmatory PCR) should be guided by the clinical question and the expected prevalence [18].

- Mitigating False Results: Technological advancements that suppress false-positive signals, such as contamination-free enzymes [14], are paramount for improving PPV in low-prevalence scenarios. Conversely, methods that boost sensitivity, like host DNA depletion and targeted enrichment [15], are key for achieving high NPV.

Ultimately, reporting only sensitivity and specificity is insufficient. A comprehensive evaluation of any novel pathogen detection method must include a discussion of its predictive values across a range of plausible prevalence levels to truly inform clinicians, public health experts, and drug development professionals.

In the field of diagnostic medicine, particularly for novel pathogen detection, researchers and developers face a fundamental trade-off: the inverse relationship between sensitivity and specificity. These two core metrics of test accuracy are intrinsically linked, where optimizing one typically compromises the other [3]. Highly sensitive tests excel at correctly identifying true positives, minimizing missed cases, while highly specific tests excel at correctly identifying true negatives, reducing false alarms [23] [3]. Navigating this balance is especially critical during outbreak response, where decisions about isolation protocols and resource allocation depend heavily on diagnostic test characteristics [24]. This guide explores the theoretical and practical aspects of this trade-off, compares its implications across different diagnostic approaches, and provides methodologies for optimizing test performance in real-world scenarios.

Theoretical Framework: Understanding the Trade-off

Core Definitions and Calculations

The performance of a binary classifier or diagnostic test is evaluated using a 2x2 contingency table, which cross-references the test results with the true disease status [23] [3]. From this table, key metrics are derived:

- Sensitivity (True Positive Rate): The proportion of truly diseased individuals correctly identified by the test. Calculated as: Sensitivity = True Positives / (True Positives + False Negatives) [3] [25].

- Specificity (True Negative Rate): The proportion of truly non-diseased individuals correctly identified by the test. Calculated as: Specificity = True Negatives / (True Negatives + False Positives) [3] [25].

- Inverse Relationship: Sensitivity and specificity are inversely related. As sensitivity increases, specificity generally decreases, and vice versa [3]. This relationship is governed by the selected decision threshold, which determines whether a result is classified as positive or negative.

The ROC Curve and AUC as Analytical Tools

The Receiver Operating Characteristic (ROC) curve is a fundamental tool for visualizing and analyzing the sensitivity-specificity trade-off across all possible decision thresholds [23] [26] [27].

- ROC Curve Interpretation: This plot shows the true positive rate (Sensitivity) against the false positive rate (1 - Specificity) for different cutoff points [27] [25]. A test with perfect discrimination (no overlap in disease and non-disease distributions) has a ROC curve that passes through the upper-left corner (100% sensitivity and 100% specificity) [25]. The diagonal line from the bottom-left to top-right represents a test with no discriminatory power, equivalent to random guessing [23] [27].

- Area Under the Curve (AUC): The AUC is a single metric summarizing the overall performance of a test across all thresholds [23] [27]. For a test that performs at least as well as chance, its value ranges from 0.5 (no discrimination) to 1.0 (perfect discrimination), with higher values indicating better discriminatory ability [23].
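As a concrete illustration of how an ROC curve and its AUC arise from raw test outputs, the sketch below sweeps every observed score as a candidate cutoff and integrates the resulting curve with the trapezoidal rule. The scores and labels are hypothetical, and the function names `roc_points` and `auc_trapezoid` are assumptions made for this example.

```python
def roc_points(scores: list[float], labels: list[int]) -> list[tuple[float, float]]:
    """Return (false positive rate, true positive rate) pairs, starting at (0, 0).

    A sample is called positive when its score is >= the threshold.
    """
    positives = sum(labels)
    negatives = len(labels) - positives
    points = [(0.0, 0.0)]
    for threshold in sorted(set(scores), reverse=True):
        tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
        fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
        points.append((fp / negatives, tp / positives))
    return points


def auc_trapezoid(points: list[tuple[float, float]]) -> float:
    """Area under the ROC curve by the trapezoidal rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area


# Hypothetical assay signals; label 1 = infected, 0 = not infected.
scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.2]
labels = [1, 1, 0, 1, 1, 0, 0, 0]

curve = roc_points(scores, labels)
auc = auc_trapezoid(curve)
print(f"AUC = {auc:.3f}")
```

For this toy data set the AUC is 0.875, which equals the fraction of infected/non-infected pairs in which the infected sample has the higher score (14 of 16). That pairwise reading is often the most intuitive interpretation of the metric.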

Table 1: Interpretation of AUC Values for Diagnostic Tests

| AUC Value | Interpretation |

|---|---|

| 0.9 ≤ AUC | Excellent |

| 0.8 ≤ AUC < 0.9 | Considerable |

| 0.7 ≤ AUC < 0.8 | Fair |

| 0.6 ≤ AUC < 0.7 | Poor |

| 0.5 ≤ AUC < 0.6 | Fail |

Comparative Analysis of Diagnostic Approaches

The sensitivity-specificity trade-off is managed differently across diagnostic technologies and operational contexts. The choice between high-sensitivity versus high-specificity tests depends on the clinical or public health scenario.

Case Study: Ebola Virus Detection

Research modeling the 2014-2016 Sierra Leone EBOV epidemic demonstrated that the trade-off extends beyond simple accuracy metrics to include operational factors like testing rate and time-to-isolation [24].

- Impact of Individual Parameters: A reduction in test sensitivity or specificity alone significantly increased the expected number of cases (by 11.7% to 223%). Conversely, a decrease in time-to-isolation (due to faster results) or an increase in testing rate alone decreased expected cases by 47.7–87.7% [24].

- The Net Benefit of Rapid Tests: When combining all three factors, the benefits of a Rapid Diagnostic Test (RDT)—faster turnaround and increased accessibility—outweighed the harms of its lower accuracy, resulting in a net reduction of mean cases between 71.6% and 92.3% compared to relying on PCR alone [24].

Table 2: Impact of Diagnostic Test Properties on Ebola Outbreak Size (Modeled Data)

| Parameter Variation | Effect on Expected Number of Cases |

|---|---|

| Decrease in test sensitivity or specificity alone | Increase of 11.7% to 223% |

| Decrease in time-to-isolation alone | Decrease of 47.7% to 87.7% |

| Increase in testing rate alone | Decrease of 47.7% to 87.7% |

| Combined use of RDT (faster, more accessible) | Net reduction of 71.6% to 92.3% |

Comparison of Validated Diagnostic Tests

External validation studies show how different tests achieve varying levels of sensitivity and specificity based on their design and application.

- Discrete Choice Experiments (DCEs) in Health: A meta-analysis found that DCEs, used to predict health-related behaviors, had a pooled sensitivity of 89% and a specificity of 52%, with an AUC of 0.81, indicating a clear preference for sensitivity over specificity in this context [28].

- Uromonitor Test for Bladder Cancer: This urine-based molecular test for detecting non-muscle-invasive bladder cancer recurrence demonstrated a balance of 73.5% sensitivity and 93.2% specificity, showing a design that prioritizes high specificity to minimize false positives [29].

Table 3: Performance Metrics of Various Validated Diagnostic Tools

| Diagnostic Tool / Context | Sensitivity | Specificity | AUC |

|---|---|---|---|

| Discrete Choice Experiments (Health behavior prediction) | 89% (95% CI: 77-95) | 52% (95% CI: 32-72) | 0.81 (95% CI: 0.77-0.84) [28] |

| Uromonitor Test (Bladder cancer recurrence) | 73.5% | 93.2% | Not specified [29] |

Methodologies for Optimizing the Trade-off

Advanced Statistical Methods

Novel statistical approaches are being developed to directly optimize classification rules based on predefined clinical needs.

- SMAGS Method: The "Sensitivity Maximization at a Given Specificity" (SMAGS) method is a machine learning framework that finds a linear decision rule yielding the maximum sensitivity for a given, clinically desirable specificity (or vice versa) [30]. This differs from standard logistic regression, which maximizes overall likelihood without a fixed specificity target. In one application for colorectal cancer detection, SMAGS improved sensitivity from 31% to 57% at a fixed specificity of 98.5% [30].
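The core idea of maximizing sensitivity subject to a specificity floor can be illustrated on a single continuous score. The sketch below is a deliberate simplification and is not the SMAGS algorithm itself, which fits a linear rule over multiple features [30]; the data, the function name, and the 75% specificity target are hypothetical.

```python
from typing import Optional


def max_sensitivity_at_specificity(
    scores: list[float], labels: list[int], min_specificity: float
) -> Optional[tuple[float, float, float]]:
    """Return (threshold, sensitivity, specificity) with the highest sensitivity
    among observed thresholds whose specificity meets the floor, or None.

    A sample is called positive when its score is >= the threshold.
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    best = None
    for threshold in sorted(set(scores)):
        sens = sum(s >= threshold for s in positives) / len(positives)
        spec = sum(s < threshold for s in negatives) / len(negatives)
        if spec >= min_specificity and (best is None or sens > best[1]):
            best = (threshold, sens, spec)
    return best


# Hypothetical single-marker data; label 1 = diseased, 0 = non-diseased.
scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.2]
labels = [1, 1, 0, 1, 1, 0, 0, 0]

result = max_sensitivity_at_specificity(scores, labels, min_specificity=0.75)
print(result)
```

Fixing the specificity first reflects a common clinical constraint: in screening, the tolerable false-positive rate is often set by downstream capacity, and the remaining question is how many true cases can be caught within that budget.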

Determining the Optimal Cutoff Point

For tests with continuous outputs, selecting the optimal decision threshold is crucial for balancing sensitivity and specificity.

- The Youden Index: A common method for identifying the optimal cutoff is the Youden Index (J), calculated as J = Sensitivity + Specificity - 1 [23] [25]. The threshold that maximizes this index is considered optimal as it maximizes the overall discriminatory power [23].

- Cost-Benefit Analysis: A more sophisticated approach incorporates disease prevalence and the costs of different decision outcomes. This method calculates a slope (S) based on the prevalence and the relative costs of false positives, false negatives, true positives, and true negatives. The point on the ROC curve where a line with this slope touches the curve is the optimal operating point [25].
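Both cutoff-selection strategies can be sketched in a few lines. The first function below scans observed thresholds for the maximum Youden Index. The second computes a cost-weighted slope using a commonly cited form, S = ((1 − P) / P) × ((C_FP − C_TN) / (C_FN − C_TP)); the source does not spell out its exact formula, so this form, the function names, and all numeric inputs should be read as assumptions.

```python
def youden_optimal_threshold(scores: list[float],
                             labels: list[int]) -> tuple[float, float]:
    """Return (threshold, J) maximizing J = sensitivity + specificity - 1.

    A sample is called positive when its score is >= the threshold.
    """
    positives = [s for s, y in zip(scores, labels) if y == 1]
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    best_threshold, best_j = scores[0], -1.0
    for threshold in sorted(set(scores)):
        sens = sum(s >= threshold for s in positives) / len(positives)
        spec = sum(s < threshold for s in negatives) / len(negatives)
        j = sens + spec - 1
        if j > best_j:
            best_threshold, best_j = threshold, j
    return best_threshold, best_j


def cost_slope(prevalence: float, cost_fp: float, cost_tn: float,
               cost_fn: float, cost_tp: float) -> float:
    """Slope of the iso-cost line on the ROC plot (assumed standard form)."""
    return ((1 - prevalence) / prevalence) * ((cost_fp - cost_tn) / (cost_fn - cost_tp))


# Hypothetical assay signals; label 1 = diseased, 0 = non-diseased.
scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.2]
labels = [1, 1, 0, 1, 1, 0, 0, 0]

threshold, j = youden_optimal_threshold(scores, labels)
print(f"Youden-optimal threshold: {threshold} (J = {j:.2f})")

# Hypothetical costs: a missed case is ten times as costly as a false alarm.
slope = cost_slope(prevalence=0.05, cost_fp=1.0, cost_tn=0.0,
                   cost_fn=10.0, cost_tp=0.0)
print(f"cost-weighted slope: {slope:.2f}")
```

The Youden Index implicitly weights false positives and false negatives equally and ignores prevalence. The slope-based approach makes those weights explicit, so a steep slope (rare disease, costly false positives) pushes the operating point toward higher specificity, while a shallow slope pushes it toward higher sensitivity.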

Diagram 1: Workflow for Determining Optimal Diagnostic Threshold

The Scientist's Toolkit: Research Reagent Solutions

The accurate validation of diagnostic tests requires specific reagents and controls. The following table details key materials used in developing and validating molecular diagnostic methods, as exemplified in recent research.

Table 4: Essential Research Reagents for Molecular Diagnostic Validation

| Reagent / Material | Function in Diagnostic Development & Validation |

|---|---|

| Chimeric Plasmid DNA (cpDNA) | A non-pathogenic positive control containing target pathogen genes. Allows for cost-effective, safe, and reproducible sensitivity testing without handling infectious agents [31]. |

| Competitive Allele-Specific PCR (CAST-PCR) | An ultra-sensitive molecular technique used to detect trace amounts of specific mutations (e.g., in TERT, FGFR3). Provides high specificity needed for distinguishing low-frequency variants [29]. |

| Contamination Indicator Probe | An additional probe within the cpDNA that emits a distinct fluorescent signal. Serves as an internal control to detect and prevent false positives caused by genetic contamination from control DNA in the lab [31]. |

| Droplet Digital PCR (ddPCR) | A highly precise absolute nucleic acid quantification method. Used as a gold standard to validate the sensitivity of other PCR assays by providing a direct copy number count [31]. |

| Multiple Fluorescent Dyes (e.g., FAM, HEX, TxR, Cy5) | Dyes used to label detection probes. Their robustness across different chemistries allows for multiplexing and validates that assay performance is independent of the reporter dye [31]. |

Navigating the sensitivity-specificity trade-off is a central challenge in the design and deployment of novel pathogen detection methods. There is no universal "best" balance; the optimal point depends on the specific context, including the disease's transmissibility and severity, the purpose of testing (e.g., screening vs. confirmation), and operational constraints like turnaround time and testing capacity [24] [3]. As demonstrated in outbreak scenarios, strategic trade-offs that accept slightly lower accuracy in exchange for faster, more accessible testing can lead to significantly better public health outcomes [24]. Future advancements will rely on sophisticated statistical methods like SMAGS [30] and robust validation protocols using tools like chimeric plasmid DNA [31] to create diagnostics that are not only analytically accurate but also clinically and epidemiologically impactful.

The rapid and precise identification of pathogens is a cornerstone of public health, clinical diagnostics, and drug development. The global impact of infectious diseases, exemplified by over 3.5 million deaths from COVID-19 and an estimated 600 million annual foodborne infections, underscores the non-negotiable need for accurate diagnostic tools [22]. For researchers and scientists developing novel detection methods, establishing benchmark accuracy against recognized standards is not merely a regulatory formality but a fundamental scientific requirement to ensure reliability and clinical validity. This process typically requires studies that compare results from the new candidate method with those from at least one already-approved method for the same analyte [32].

The evaluation of any diagnostic test, especially for novel pathogens, hinges on two pivotal performance metrics: sensitivity (the test's ability to correctly identify those with the disease) and specificity (the test's ability to correctly identify those without the disease) [3]. These metrics are most rigorously validated through comparison to a reference method, often termed a "gold standard." However, a significant challenge emerges as these reference tests themselves are almost never perfect, a critical consideration for professionals interpreting test performance data [33]. This guide provides a comparative analysis of reference methods and emerging technologies, complete with experimental protocols and performance data, to equip researchers working at the forefront of pathogen detection.

Foundational Concepts: Performance Metrics and the Imperfect Gold Standard

Defining Diagnostic Accuracy Metrics

The validity of a diagnostic test is primarily quantified by its sensitivity and specificity. These are foundational for understanding a test's operational characteristics [3].

- Sensitivity is the proportion of true positives correctly identified by the test. It is calculated as: Sensitivity = True Positives / (True Positives + False Negatives) [3]

- Specificity is the proportion of true negatives correctly identified by the test. It is calculated as: Specificity = True Negatives / (True Negatives + False Positives) [3]

In practice, when comparing a new candidate method to a comparative method that is not a perfect gold standard, the terms Positive Percent Agreement (PPA) and Negative Percent Agreement (NPA) are often used instead of sensitivity and specificity, respectively. The calculations are identical, but the terminology reflects the lower confidence in the comparator [32].

Two other crucial metrics, influenced by disease prevalence, are Positive Predictive Value (PPV) and Negative Predictive Value (NPV). PPV indicates the probability that a person with a positive test truly has the disease, while NPV indicates the probability that a person with a negative test truly does not have the disease [3].

The Challenge of Imperfect Reference Standards

A core complication in diagnostic test evaluation is that the reference standard used to determine the "true" health status of an individual is itself rarely infallible. Using an imperfect reference standard leads to "apparent" sensitivity and specificity, which are merely rates of agreement with the reference and can misrepresent the true performance of the index test [33]. This bias can be significant; for instance, studies of a COVID-19 rapid antigen test showed that the true false-negative rate could be 3.17 to 4.59 times higher than the "apparent" rate derived from an imperfect RT-PCR reference [33].

Statistical correction methods, such as those by Staquet et al. and Brenner, can be employed to adjust for a known imperfect reference standard, but their performance depends on factors like disease prevalence and conditional dependence between the tests [34]. Furthermore, test accuracy is not static; a 2025 meta-epidemiological study demonstrated that the sensitivity and specificity of the same diagnostic test can vary in both direction and magnitude between non-referred (e.g., primary care) and referred (e.g., specialist care) settings, emphasizing that benchmarking context matters [10].
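To make the idea of correcting for an imperfect reference concrete, the sketch below adjusts apparent accuracy using the reference standard's own known sensitivity and specificity. The algebra follows from assuming that the index and reference tests err independently given true disease status, which is the setting usually associated with the Staquet et al. correction; the exact formulas in [34] should be checked before relying on this. The counts are hypothetical, constructed from an index test with 80% sensitivity and 95% specificity.

```python
def corrected_accuracy(a: int, b: int, c: int, d: int,
                       ref_sensitivity: float,
                       ref_specificity: float) -> tuple[float, float]:
    """Return (sensitivity, specificity) of the index test, corrected for a
    reference standard of known accuracy, assuming conditional independence.

    a: index+, reference+    b: index+, reference-
    c: index-, reference+    d: index-, reference-
    """
    n = a + b + c + d
    sens = ((a + b) * ref_specificity - b) / (n * (ref_specificity - 1) + (a + c))
    spec = ((c + d) * ref_sensitivity - c) / (n * ref_sensitivity - (a + c))
    return sens, spec


# Hypothetical 2x2 counts against a reference with 90% sensitivity
# and 99% specificity.
a, b, c, d = 1444, 556, 436, 7564

apparent_sens = a / (a + c)
apparent_spec = d / (b + d)
true_sens, true_spec = corrected_accuracy(a, b, c, d,
                                          ref_sensitivity=0.90,
                                          ref_specificity=0.99)

print(f"apparent:  sensitivity={apparent_sens:.3f}  specificity={apparent_spec:.3f}")
print(f"corrected: sensitivity={true_sens:.3f}  specificity={true_spec:.3f}")
```

In this constructed example the apparent values understate the index test on both metrics, because every reference error is counted against it. The correction can return values outside the 0 to 1 range when the independence assumption fails or the reference accuracy is misspecified, which is one reason its performance depends on prevalence and conditional dependence as noted above.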

Established Reference Methods and Their Performance

Established reference methods provide the benchmark for validating new technologies. The following table summarizes the performance of several key diagnostic and screening tests as reported in large-scale studies.

Table 1: Diagnostic Accuracy of Established Screening and Diagnostic Tests for Tuberculosis from a State-Wide Survey (n=130,932) [35]

| Test or Method | Sensitivity (%) (95% CI) | Specificity (%) (95% CI) | Role/Notes |

|---|---|---|---|

| Symptom: Cough >2 weeks | 41.6 (31.6–52.1) | 72.8 (72.1–73.5) | Screening |

| Symptom: Any one symptom | 55.2 (44.7–65.3) | 50.9 (50.1–51.6) | Screening |

| Abnormal Chest X-Ray (CXR) | 86.4 (77.9–92.5) | 42.1 (41.3–42.8) | Screening |

| Smear Microscopy | 53.1 (42.6–63.3) | 99.7 (99.6–99.8) | Diagnostic |

| Xpert MTB/RIF (Mobile Van) | 71.8 (61.7–80.5) | 99.3 (99.1–99.4) | Diagnostic, molecular |

| Xpert MTB/RIF (Ref. Lab) | 96.6 (88.0–99.5) | Not Reported | Diagnostic, molecular |

Traditional Culture and Phenotypic Methods

Culture-based methods, such as using blood cultures for bloodstream infections (BSIs) or solid media for bacterial pathogens, are often considered the historical gold standard for pathogen identification [15]. These methods allow for pathogen isolation and subsequent analysis. However, they are hampered by lengthy turnaround times (2–3 days for definitive results), low positive rates in some cases, and the requirement for skilled operators [22] [15]. For novel or fastidious organisms, such as Pantoea piersonii, culture may be insufficient for definitive identification without supplementary genetic analysis [36].

Genomic Reference Methods

- Polymerase Chain Reaction (PCR) and Real-Time PCR: These methods amplify specific genetic targets and are widely used for their sensitivity and specificity. Real-time PCR assays, like one developed for Pantoea piersonii, can provide rapid, specific identification where other methods like MALDI-TOF or 16S rRNA sequencing fail [36]. A key limitation is that they typically require precise thermal cycling and can only detect pre-defined targets [22].

- Whole Genome Sequencing (WGS): WGS provides the highest resolution for pathogen identification and is used to definitively characterize novel organisms, as in the case of Pantoea piersonii isolated from the International Space Station [36]. While providing comprehensive data, it is relatively costly and complex for routine use as a reference method in all settings.

Emerging Platforms and Novel Methodologies

Novel detection methods aim to overcome the limitations of traditional techniques by offering greater speed, multiplexing capability, and ease of use.

Optical Biosensors

Optical biosensors are gaining prominence for pathogen detection due to their rapid analysis, portability, high sensitivity, and potential for multiplexing. Their working principle involves measuring changes in optical properties (e.g., absorption, fluorescence) caused by the interaction between a target pathogen and a biorecognition element [22].

Table 2: Comparison of Optical Biosensing Platforms for Multiplexed Pathogen Detection

| Biosensor Type | Principle | Example Pathogens Detected | Reported Performance / Advantage |

|---|---|---|---|

| Colorimetric | Visual color change from physical/chemical reactions [22]. | Salmonella, S. aureus, E. coli O157:H7 [22]. | Naked-eye readout; simple & cost-effective; LOD of 10 CFU/mL for S. aureus/E. coli shown in one study [22]. |

| Fluorescence-Based | Emission of light from fluorescent labels after specific stimulation [22]. | Multiple bacterial species (e.g., S. aureus, E. coli) [22]. | Rapid visualization & real-time monitoring; ratiometric probes can improve sensitivity [22]. |

| Surface-Enhanced Raman Scattering (SERS) | Enhancement of Raman signal on a nanostructured surface [22]. | Not specified in results, but applicable to various pathogens. | Provides molecular fingerprinting; high sensitivity [22]. |

| Surface Plasmon Resonance (SPR) | Detection of changes in refractive index on a sensor surface [22]. | Not specified in results, but applicable to various pathogens. | Label-free, real-time monitoring [22]. |

Advanced Sequencing and Molecular Techniques

- Targeted Next-Generation Sequencing (tNGS): This method uses multiplex PCR or probe hybridization to enrich for specific genomic regions of clinically relevant pathogens prior to sequencing. One developed panel targets over 330 pathogens, covering >95% of known infection types. When combined with a novel filtration membrane that reduces host DNA by over 98%, tNGS achieved a 6- to 8-fold increase in pathogen reads, enabling detection of low-abundance pathogens in BSIs with high sensitivity [15].

- Metagenomic NGS (mNGS): mNGS allows for unbiased, broad-range pathogen detection but is often costly and can be overwhelmed by high background host DNA, which complicates analysis and can lead to false positives. Its main advantage is the ability to detect unexpected or novel pathogens without prior target selection [15].

Experimental Protocols for Method Comparison

For a novel detection method to gain acceptance, its performance must be rigorously compared to a reference method through a structured experimental protocol.

The Method Comparison Experiment for Qualitative Tests

A widely accepted approach for comparing qualitative tests (positive/negative results) is detailed in the CLSI document EP12-A2. The fundamental steps are as follows [32]:

- Sample Set Assembly: A set of well-characterized samples, both positive and negative for the target analyte, is assembled. Confidence in the final results increases with the number of samples and with the accuracy of the comparative method.

- Testing with Candidate Method: The sample set is tested using the novel candidate method.

- Contingency Table Analysis: The results are compiled into a 2x2 contingency table comparing the candidate method against the comparative method.

Table 3: 2x2 Contingency Table for Method Comparison [32]

| | Comparative Method: Positive | Comparative Method: Negative | Total |

|---|---|---|---|

| Candidate Method: Positive | a (True Positive, TP) | b (False Positive, FP) | a + b |

| Candidate Method: Negative | c (False Negative, FN) | d (True Negative, TN) | c + d |

| Total | a + c | b + d | n (Total N) |

From this table, the positive percent agreement (PPA) and negative percent agreement (NPA) are calculated [32]:

- PPA = 100% × a / (a + c)

- NPA = 100% × d / (b + d)

If the comparative method is a true gold standard, PPA and NPA serve as estimates of sensitivity and specificity, respectively. Positive and negative predictive values can also be calculated directly from the table, but only if the prevalence in the sample set matches that of the target population [32].
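The calculation above can be sketched in a few lines of Python. This is a minimal illustration, not part of EP12-A2 itself: the function names, the choice of the Wilson score interval for the confidence bounds, and the example counts are all assumptions made for demonstration. The last function applies Bayes' rule to show how predictive values shift with an assumed prevalence.

```python
from math import sqrt


def wilson_interval(successes: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z ** 2 / total
    centre = (p + z ** 2 / (2 * total)) / denom
    half = z * sqrt(p * (1 - p) / total + z ** 2 / (4 * total ** 2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


def agreement(a: int, b: int, c: int, d: int) -> dict:
    """PPA and NPA as fractions, each with a Wilson interval.

    a, b, c, d follow the cell labels of the 2x2 table:
    a = both positive, b = candidate-only positive,
    c = candidate-only negative, d = both negative.
    """
    return {
        "PPA": (a / (a + c), wilson_interval(a, a + c)),
        "NPA": (d / (b + d), wilson_interval(d, b + d)),
    }


def predictive_values(sensitivity: float, specificity: float, prevalence: float) -> tuple[float, float]:
    """PPV and NPV at an assumed prevalence, via Bayes' rule."""
    tp = sensitivity * prevalence
    fp = (1 - specificity) * (1 - prevalence)
    tn = specificity * (1 - prevalence)
    fn = (1 - sensitivity) * prevalence
    return tp / (tp + fp), tn / (tn + fn)


# Hypothetical counts, for illustration only.
result = agreement(a=90, b=5, c=10, d=95)
ppv, npv = predictive_values(sensitivity=0.90, specificity=0.95, prevalence=0.10)
```

With these made-up counts, PPA is 0.90 with a 95% interval of roughly 0.83 to 0.94. At an assumed 10% prevalence the PPV is only about 0.67, which illustrates why predictive values should not be read off a sample set whose prevalence differs from the target population.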

Protocol for Evaluating a Novel Filtration-tNGS Workflow

A study on bloodstream infection diagnostics provides a robust protocol for evaluating a combined technological approach:

- Sample Pre-treatment with Filtration Membrane: Process clinical blood samples through a human cell-specific filtration membrane (e.g., leukosorb membrane). This step is designed to capture nucleated human cells (leukocytes) while allowing microorganisms to pass into the filtrate, reducing host DNA background by >98% [15].

- Nucleic Acid Extraction: Extract total nucleic acids from the filtrate.

- Targeted Amplification and Sequencing: Apply the extracted nucleic acids to a multiplex tNGS panel targeting specific genomic regions of over 330 clinically relevant pathogens. Sequence the prepared library on a next-generation sequencer [15].

- Bioinformatic Analysis: Map the generated sequences to a comprehensive pathogen database for identification.

- Comparison to Reference Methods: Compare the tNGS results to those obtained from standard blood cultures and/or mNGS to determine concordance, sensitivity, and specificity [15].
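For the final comparison step, concordance between two methods is often summarized with overall percent agreement and Cohen's kappa, which corrects for agreement expected by chance. The cited study is not stated to have used kappa; the sketch below is a generic illustration with hypothetical counts and function names.

```python
def overall_agreement(a: int, b: int, c: int, d: int) -> float:
    """Fraction of samples on which both methods give the same call."""
    n = a + b + c + d
    if n == 0:
        raise ValueError("contingency table is empty")
    return (a + d) / n


def cohens_kappa(a: int, b: int, c: int, d: int) -> float:
    """Cohen's kappa for a 2x2 table.

    a = both positive, b = candidate-only positive,
    c = reference-only positive, d = both negative.
    """
    n = a + b + c + d
    observed = overall_agreement(a, b, c, d)
    # Chance agreement from the row and column marginals.
    expected = ((a + b) * (a + c) + (c + d) * (b + d)) / n ** 2
    if expected == 1.0:
        return float("nan")  # kappa is undefined when all calls fall in one category
    return (observed - expected) / (1 - expected)


# Hypothetical tNGS-versus-blood-culture counts, for illustration only.
kappa = cohens_kappa(a=40, b=5, c=10, d=45)
```

Here the observed agreement is 0.85, the chance agreement is 0.50, and kappa is about 0.70. Kappa complements rather than replaces sensitivity and specificity, since it treats neither method as the reference.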

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials used in the advanced experiments cited in this guide.

Table 4: Research Reagent Solutions for Advanced Pathogen Detection

| Item | Function / Application | Example Use Case |

|---|---|---|

| Human Cell-Specific Filtration Membrane | Selectively captures nucleated human cells (e.g., leukocytes) from whole blood, drastically reducing host DNA background to enhance pathogen signal [15]. | Pre-treatment for tNGS/mNGS of bloodstream infections [15]. |

| Multiplex tNGS Panel | A set of primers/probes designed to simultaneously enrich genetic sequences from hundreds of pre-defined pathogens, reducing cost and complexity versus mNGS [15]. | Sensitive and specific detection of >330 pathogens in a single assay [15]. |

| Colorimetric Reporter Probes (e.g., TMB) | Enzyme substrates that produce a visible color change (e.g., upon oxidation) for naked-eye or spectrophotometric detection [22]. | Lateral flow assays; enzyme-linked colorimetric biosensors [22]. |

| Ratiometric Fluorescence Probes | Fluorescent dyes whose emission intensity shifts between two or more wavelengths upon target binding, providing internal calibration and reducing external interference [22]. | Differentiating bacterial species and Gram-stain characteristics via sensor arrays [22]. |

| Functionalized Nanoparticles (Au, Ag) | Metal nanoparticles used as colorimetric labels or signal amplifiers in biosensors due to their unique plasmonic properties [22]. | Multiplexed detection by generating distinct color hues for different pathogens [22]. |

Establishing benchmark accuracy through comparison with reference methods remains a central requirement for the validation and adoption of any novel pathogen detection technology. While traditional culture and molecular methods like PCR and WGS continue to serve as important benchmarks, the field is rapidly advancing with the emergence of highly multiplexed, sensitive, and rapid platforms like optical biosensors and tNGS. A critical understanding of core performance metrics (sensitivity, specificity, PPV, NPV) and the inherent challenges of imperfect reference standards is essential for researchers to design robust validation studies and accurately interpret their results. As these novel methods evolve, so too must the statistical frameworks and gold-standard databases used to evaluate them, ensuring that the diagnostic tools of tomorrow are both innovative and reliably accurate.

Next-Generation Technologies: Advanced Methodologies for Multiplex Pathogen Detection

Optical biosensors have emerged as transformative tools in diagnostic science, particularly for the sensitive and specific detection of pathogens and disease biomarkers. These devices transduce biological binding events into measurable optical signals, enabling real-time, often label-free analysis. For researchers and drug development professionals, selecting an appropriate biosensing platform is critical and hinges on a clear understanding of the trade-offs between sensitivity, specificity, cost, and operational complexity. This section provides an objective comparison of three prominent optical biosensing platforms (colorimetric, fluorescent, and surface-enhanced Raman scattering (SERS)-based biosensors) in the context of novel pathogen detection methods. By synthesizing current experimental data and detailed methodologies, it aims to inform strategic decisions in research and development.

Comparative Performance Analysis of Optical Biosensing Platforms

The table below summarizes the key performance characteristics of colorimetric, fluorescent, and SERS-based biosensors, drawing on recent experimental studies and reviews.

Table 1: Performance Comparison of Colorimetric, Fluorescent, and SERS-Based Biosensors

| Feature | Colorimetric Biosensors | Fluorescent Biosensors | SERS-Based Biosensors |

|---|---|---|---|

| Typical Limit of Detection (LOD) | µM to nM range [37] [38] | pM to fM (e.g., SIMOA, CRISPR) [38] | fM to aM (single-molecule level possible) [39] [40] |

| Specificity & Molecular Information | Good; relies on biorecognition elements (e.g., antibodies, aptamers) [38] | Excellent; high specificity from assays like ELISA and CRISPR; can be multiplexed [38] | Outstanding; provides unique molecular "fingerprint" for definitive identification [41] [40] |

| Quantitative Performance | Good; signal intensity correlates with analyte concentration [37] | Excellent; high dynamic range and precision, especially with digital assays [38] | Excellent; quantitative with advanced substrates and data analysis [41] |

| Multiplexing Capability | Low to moderate [38] | High (e.g., using different fluorophores) [38] | Very high; narrow spectral bands allow simultaneous detection of multiple analytes [39] [40] |

| Key Advantages | Simplicity, low cost, rapid result visibility, suitable for point-of-care (POC) [37] [38] | High sensitivity, well-established protocols, versatility, digital readout options [38] | Ultra-high sensitivity, fingerprinting, resistance to photobleaching, works in complex media [41] [40] |

| Major Limitations | Lower sensitivity compared to other methods, can be susceptible to sample interference [38] | Can require complex instrumentation, potential for photobleaching, may need labels [38] | Substrate reproducibility and signal uniformity can be challenging [41] |

A recent comparative study of optical sensing methods further highlights the practical performance differences, demonstrating that optimized LED-based photometry (PEDD) can surpass laboratory spectrophotometry in key metrics like dynamic range and sensitivity for colorimetric detection [37]. This underscores the importance of not only the core technique but also the chosen readout technology.

Experimental Protocols and Methodologies

Colorimetric Detection Using Gold Nanoparticle (AuNP) Aggregation

Colorimetric assays that leverage the plasmonic properties of AuNPs are popular for their visual readout and simplicity.

- Principle: Target analytes induce the aggregation of AuNPs, causing a visible color shift from red (dispersed) to blue (aggregated) [38].

- Detailed Protocol:

  - Substrate Preparation: Synthesize or procure spherical citrate-capped AuNPs (typically 10-50 nm in diameter).

  - Functionalization: Incubate the AuNPs with a biorecognition element (e.g., an antibody or a specific oligonucleotide aptamer) that binds to the target pathogen or biomarker. This is often done via passive adsorption or covalent chemistry using linkers like EDC/NHS.

  - Assay Execution: Mix the functionalized AuNPs with the sample solution. The presence of the target analyte causes cross-linking or non-cross-linking aggregation of the AuNPs.

  - Signal Detection: The color change can be observed visually for qualitative analysis. For quantitative results, the solution's absorbance spectrum is measured using a spectrophotometer, a smartphone-based colorimeter, or a low-cost Paired Emitter–Detector Diode (PEDD) system. The ratio of absorbance at specific wavelengths (e.g., A520/A650) is calculated to quantify the aggregation extent [37] [38].
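The quantitative readout described in the last step can be illustrated with a short sketch. It assumes that the A520/A650 ratio varies linearly with analyte concentration over the working range, and it estimates a limit of detection with the common 3-sigma-over-slope rule; both are assumptions for illustration, and all numbers and function names are hypothetical.

```python
from statistics import mean, stdev


def aggregation_ratio(a520: float, a650: float) -> float:
    """A520/A650: high for dispersed (red) AuNPs, lower once they aggregate (blue)."""
    if a650 <= 0:
        raise ValueError("A650 must be positive")
    return a520 / a650


def fit_line(x: list[float], y: list[float]) -> tuple[float, float]:
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x values must not all be identical")
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    return slope, my - slope * mx


def limit_of_detection(blank_ratios: list[float], slope: float) -> float:
    """3-sigma estimate: three blank standard deviations divided by |slope|."""
    return 3 * stdev(blank_ratios) / abs(slope)


# Hypothetical calibration data (arbitrary concentration units).
concentrations = [0.0, 10.0, 20.0, 30.0, 40.0]
ratios = [4.0, 3.5, 3.0, 2.5, 2.0]
slope, intercept = fit_line(concentrations, ratios)

# Replicate blank measurements, also hypothetical.
lod = limit_of_detection([4.02, 3.98, 4.00], slope)

# Invert the calibration line to estimate an unknown sample's concentration.
unknown = (aggregation_ratio(0.64, 0.20) - intercept) / slope
```

In this made-up data set the slope is -0.05 ratio units per concentration unit, the estimated LOD is about 1.2 units, and a sample with a ratio of 3.2 maps to about 16 units. Real AuNP assays are frequently nonlinear outside a narrow range, so the linear model should be checked against the calibration data before use.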

Fluorescent Detection via CRISPR-Cas Systems

CRISPR-based biosensors represent a cutting-edge fluorescent method with exceptional specificity and sensitivity.

- Principle: The trans-cleavage (collateral) activity of certain Cas enzymes (e.g., Cas12a, Cas13) is activated upon recognition of a specific target nucleic acid. This activity non-specifically cleaves fluorescently labeled reporter molecules, releasing a measurable signal [38].

- Detailed Protocol:

  - Reagent Preparation: Prepare a mixture containing the Cas protein, a guide RNA (gRNA) designed to be complementary to the target pathogen's DNA or RNA sequence, and quenched fluorescent single-stranded DNA or RNA reporters (a fluorophore and a quencher on the same strand).

  - Amplification (Optional but common): To achieve ultra-high sensitivity, the target nucleic acid from the sample is often pre-amplified using techniques like Recombinase Polymerase Amplification (RPA) or Loop-Mediated Isothermal Amplification (LAMP).

  - Detection Reaction: The amplified product (or the raw sample if sensitivity is sufficient) is introduced into the CRISPR reaction mix. If the target is present, the Cas/gRNA complex binds and is activated, cleaving the reporters, separating fluorophore from quencher, and producing a fluorescence signal.

  - Signal Readout: Fluorescence intensity is measured in real time using a plate reader or a portable fluorometer. Time-to-positivity shortens and endpoint fluorescence rises as target concentration increases, so either can serve as a semi-quantitative readout; combined with pre-amplification, detection in the attomolar range has been reported [38].
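The real-time readout lends itself to a simple time-to-positivity calculation: the time at which the fluorescence trace first crosses a chosen threshold, with higher target loads generally crossing sooner. The sketch below is illustrative only. The threshold value, the linear interpolation between readings, and the example traces are assumptions, not parameters from the cited work.

```python
from typing import Optional, Sequence


def time_to_positivity(
    times: Sequence[float],
    fluorescence: Sequence[float],
    threshold: float,
) -> Optional[float]:
    """Return the interpolated time at which the signal first reaches threshold.

    Returns None if the trace never reaches the threshold (a negative call).
    """
    if len(times) != len(fluorescence):
        raise ValueError("times and fluorescence must have the same length")
    if not times:
        return None
    if fluorescence[0] >= threshold:
        return times[0]
    for i in range(1, len(times)):
        f0, f1 = fluorescence[i - 1], fluorescence[i]
        if f0 < threshold <= f1:
            # Linear interpolation between the two bracketing readings.
            frac = (threshold - f0) / (f1 - f0)
            return times[i - 1] + frac * (times[i] - times[i - 1])
    return None


# Hypothetical traces: minutes versus arbitrary fluorescence units.
minutes = [0, 5, 10, 15, 20]
positive_trace = [100, 110, 300, 900, 1500]
negative_trace = [100, 105, 110, 108, 112]

ttp_positive = time_to_positivity(minutes, positive_trace, threshold=500)
ttp_negative = time_to_positivity(minutes, negative_trace, threshold=500)
```

For the hypothetical positive trace the threshold is crossed at about 11.7 minutes, while the negative trace returns None. In practice the threshold is usually set relative to no-template controls run on the same plate.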

SERS-Based Immunoassay for Biomarker Detection

SERS-based immunoassays combine the specificity of antibody-antigen interactions with the profound sensitivity and fingerprinting capability of SERS.

- Principle: Target analytes are captured on a nanostructured SERS substrate functionalized with antibodies. The presence of the analyte is confirmed and quantified by the characteristic Raman signal of a reporter molecule [42] [41].

- Detailed Protocol:

  - Substrate Fabrication: Prepare a SERS-active substrate, such as Au-Ag nanostars or Ag nanoparticles, known for providing high electromagnetic field enhancement due to their sharp tips and nanogaps [42] [41].

  - Functionalization: The substrate is functionalized with a capture antibody (e.g., monoclonal anti-α-fetoprotein antibodies). This often involves creating a self-assembled monolayer (e.g., using mercaptopropionic acid, MPA) followed by covalent antibody conjugation via EDC/NHS chemistry [42].

  - Assay Execution: The functionalized substrate is incubated with the sample. After washing, a secondary antibody, labeled with a Raman reporter molecule (e.g., methylene blue), is applied to form a "sandwich" complex.

  - SERS Measurement: The substrate is rinsed and analyzed using a Raman spectrometer. The intensity of the unique Raman peaks of the reporter molecule is directly correlated with the concentration of the captured analyte. Advanced platforms can perform this analysis in a liquid phase for rapid detection [42].

Figure 1: Workflow of a SERS-based immunoassay for pathogen detection, illustrating the key steps from sample introduction to signal measurement.
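The SERS measurement step reduces, in its simplest form, to extracting a baseline-corrected peak height for the reporter band. The sketch below shows one basic way to do this with a straight-line baseline drawn between two flanking regions. The window positions and the synthetic spectrum are placeholders rather than values from the cited studies, and real spectra typically need more careful preprocessing (smoothing, polynomial baselines, cosmic-ray removal).

```python
from statistics import mean
from typing import Sequence, Tuple

Window = Tuple[float, float]  # (low, high) Raman shift in cm^-1


def _window_centroid(shifts: Sequence[float], counts: Sequence[float], window: Window) -> Tuple[float, float]:
    """Mean shift and mean intensity of all points inside the window."""
    pts = [(s, c) for s, c in zip(shifts, counts) if window[0] <= s <= window[1]]
    if not pts:
        raise ValueError(f"no data points inside window {window}")
    return mean([s for s, _ in pts]), mean([c for _, c in pts])


def baseline_corrected_peak(
    shifts: Sequence[float],
    counts: Sequence[float],
    peak: Window,
    left: Window,
    right: Window,
) -> float:
    """Maximum intensity in the peak window after subtracting a linear baseline
    drawn between the centroids of the left and right flanking windows."""
    x0, y0 = _window_centroid(shifts, counts, left)
    x1, y1 = _window_centroid(shifts, counts, right)
    slope = (y1 - y0) / (x1 - x0)
    corrected = [
        c - (y0 + slope * (s - x0))
        for s, c in zip(shifts, counts)
        if peak[0] <= s <= peak[1]
    ]
    if not corrected:
        raise ValueError(f"no data points inside peak window {peak}")
    return max(corrected)


# Synthetic spectrum: sloping background plus one band (placeholder positions).
shifts = [1580, 1590, 1600, 1610, 1620, 1630, 1640, 1650, 1660]
counts = [100, 105, 110, 315, 920, 325, 130, 135, 140]

height = baseline_corrected_peak(
    shifts, counts, peak=(1605, 1635), left=(1580, 1600), right=(1640, 1660)
)
```

For this synthetic spectrum the corrected peak height is 800 counts. Peak heights obtained this way for a dilution series can then be fitted to a calibration curve to relate reporter signal to analyte concentration.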

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful development and deployment of optical biosensors require a suite of specialized materials and reagents.