Beyond Proportions: Navigating the Pitfalls of Relative Abundance Analysis in Low-Biomass Microbiome Studies

Relative abundance analysis, while standard in microbiome research, presents significant and often overlooked limitations in low-biomass environments such as human tissues, blood, and treated drinking water.


Abstract

Relative abundance analysis, while standard in microbiome research, presents significant and often overlooked limitations in low-biomass environments such as human tissues, blood, and treated drinking water. This article details how the compositional nature of sequencing data, combined with heightened susceptibility to contamination and batch effects, can distort biological conclusions and generate artifactual signals. We provide a foundational explanation of these pitfalls, explore methodological advancements including absolute quantification and contamination-aware bioinformatics, and outline robust troubleshooting and optimization strategies for experimental design and data analysis. Finally, we present a framework for validating findings through comparative analysis and spike-in controls, offering researchers and drug development professionals a comprehensive guide to producing reliable and interpretable data from challenging low-biomass samples.

The Inherent Pitfalls: Why Relative Abundance Fails in Low-Biomass Environments

Low-biomass microbial communities, characterized by exceptionally low levels of microorganisms, represent a frontier in microbiome research with significant implications for human health and environmental science. These ecosystems exist in diverse environments ranging from human tissues (tumors, blood, placenta) to extreme terrestrial habitats such as the hyper-arid soils of the Atacama Desert [1] [2]. The study of these communities pushes the limits of modern detection technologies, as the target DNA signal often approaches or falls below the level of contamination from external sources [2]. While some have attempted quantitative definitions (e.g., <10,000 microbial cells/mL), it is more practical to consider biomass as a continuum, with certain methodological challenges becoming increasingly severe as biomass decreases [1].

This technical guide frames the discussion within a critical analytical context: the inherent limitations of relative abundance analysis in low-biomass research. In standard, high-biomass samples (e.g., human gut, fertile soil), the microbial DNA signal vastly exceeds contaminant noise. In low-biomass systems, however, the proportional nature of sequence-based data means that even minute amounts of contaminating DNA can drastically skew perceived community structure, leading to false biological conclusions [1] [2]. This whitepaper explores the defining characteristics of low-biomass samples, the analytical pitfalls of their study, and the advanced experimental protocols required to derive meaningful data, with a specific focus on the challenges of relative abundance analysis.

Defining the Low-Biomass Niche

Low-biomass environments are united not by a specific microbial count but by the shared analytical challenges they present. In these systems, the usual relationship between signal (target microbial DNA) and noise (contamination) narrows toward parity and can even be inverted.

Key Characteristics and Exemplary Environments

- Near-Limit Detection: Microbial DNA yields approach the detection limits of standard sequencing and amplification protocols [2].

- High Contaminant Proportion: The quantity of contaminating DNA from reagents, kits, or the environment can be comparable to, or exceed, the authentic signal from the sample itself [1] [2].

- Diverse Habitats: This category includes human tissues like the respiratory tract, placenta, and blood [1] [2]; built environments like treated drinking water [2]; and extreme natural environments like the atmosphere, deep subsurface, and hyper-arid deserts [2].

Table 1: Exemplary Low-Biomass Environments and Their Research Challenges

| Environment | Defining Feature | Primary Research Challenge |
|---|---|---|
| Human Tumors & Blood | High proportion of host DNA relative to microbial DNA. | Host DNA misclassification; contamination during clinical collection [1]. |
| Hyper-Arid Soils | Exceptionally long periods of desiccation and low nutrient availability. | Very low in situ microbial biomass and activity; soil particulate contamination [3] [4]. |
| Placenta & Fetal Tissues | Long-standing debate over the existence of a resident microbiome. | Contamination from maternal tissue and the birth canal during delivery [1] [2]. |
| Treated Drinking Water | Deliberately controlled low microbial levels. | Cross-contamination during filtration; reagent contamination [2]. |

The Central Challenge of Relative Abundance Analysis

Relative abundance analysis, which expresses the abundance of each taxon as a percentage of the total community, is a standard metric in microbiome studies. In low-biomass research, its utility is severely compromised. When a small, roughly constant amount of contaminant DNA enters samples of differing true biomass, normalization converts it into very different relative abundances: negligible in a high-biomass sample, potentially dominant in a low-biomass one. This can:

- Create Illogical Taxa Distributions: Make contaminants appear as dominant community members [1].

- Obfuscate True Biological Signals: Mask real, but low-abundance, indigenous populations [2].

- Generate Spurious Correlations: If contamination levels are confounded with a phenotype (e.g., cases and controls processed in separate batches), it can produce false associations [1].

Therefore, data derived from relative abundance analyses of low-biomass samples must be interpreted with extreme caution and require robust, context-specific validation.
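The dilution effect described above can be checked with simple arithmetic. The sketch below assumes, hypothetically, that every reaction receives the same 1,000 copies of contaminant template and that reads are sampled in proportion to template copies (ignoring amplification bias and 16S copy-number differences); the copy numbers are illustrative, not measured values.

```python
def contaminant_fraction(true_copies: float, contaminant_copies: float) -> float:
    """Share of reads expected from contaminant DNA, assuming reads are
    proportional to input template copies (no amplification bias)."""
    return contaminant_copies / (true_copies + contaminant_copies)


# Hypothetical constant reagent contamination per reaction (illustrative only).
CONTAMINANT_COPIES = 1_000

for biomass in (1_000_000, 10_000, 1_000, 100):
    frac = contaminant_fraction(biomass, CONTAMINANT_COPIES)
    print(f"{biomass:>9,} true copies -> contaminant = {frac:6.1%} of reads")
```

Under these assumptions the same contaminant load is about 0.1% of reads in the richest sample and about 91% in the poorest, even though the contamination itself never changed.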

Methodological Pitfalls and Critical Controls

The vulnerability of low-biomass studies to contamination and bias necessitates a rigorous, defensive approach to experimental design. The most common pitfalls can be categorized as follows.

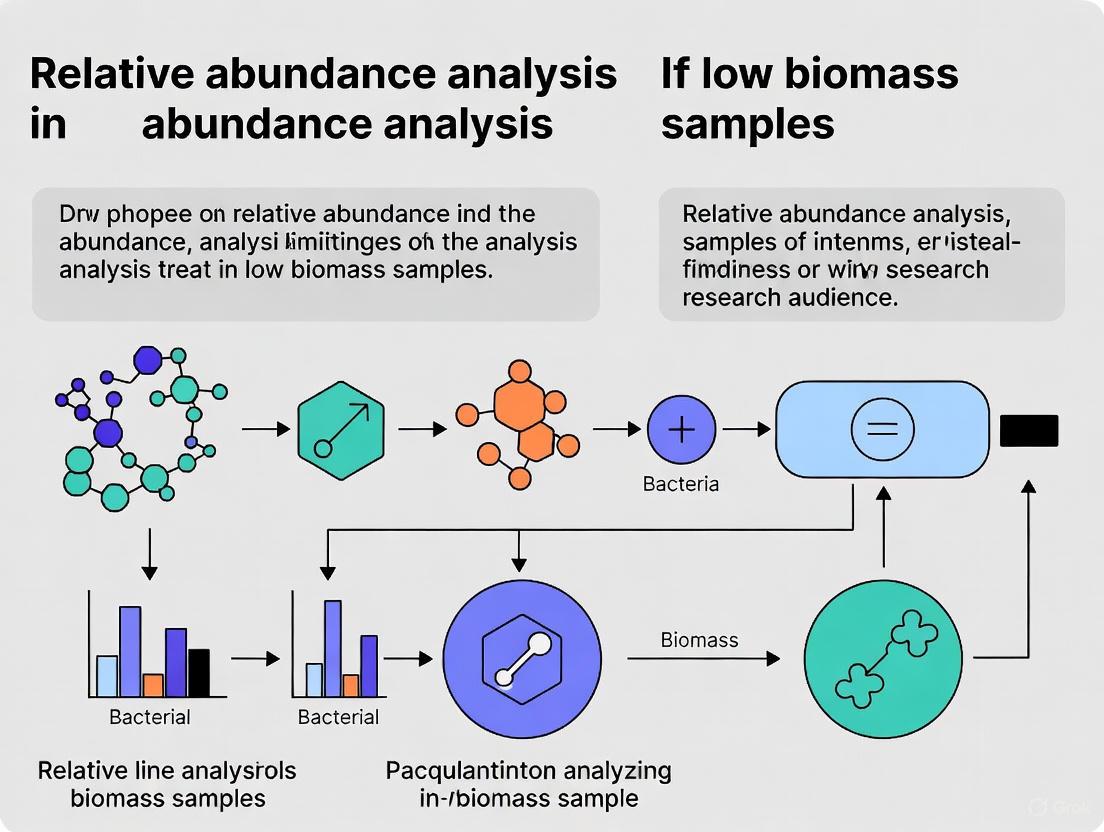

Diagram 1: Key Pitfalls and Mitigation Strategies in Low-Biomass Research

- External Contamination: Microbial DNA is ubiquitously present in molecular biology reagents, kits, and laboratory environments. In low-biomass samples, this external DNA can constitute a majority of the sequenced data, making the contaminant profile appear as the sample's microbiome [1] [2]. This includes DNA from human operators, sampling equipment, and collection vessels [2].

- Host DNA Misclassification: In host-associated samples (e.g., tumors), the vast majority of sequenced DNA is from the host. If this host DNA is not adequately identified and removed during bioinformatic processing, it can be misclassified as microbial, generating significant noise or even artifactual signals if confounded with a phenotype [1].

- Cross-Contamination (Well-to-Well Leakage): During PCR amplification or library preparation, DNA can "leak" from one sample to an adjacent well on a plate. This "splashome" effect can compromise the integrity of all samples processed concurrently and is particularly problematic because it can violate the assumptions of standard decontamination algorithms [1] [2].

- Batch Effects and Processing Bias: Technical variability introduced by different reagent lots, personnel, or laboratory conditions can create systematic differences between sample groups that are processed in separate batches. If these batches are confounded with the experimental groups (e.g., all cases processed in one batch and all controls in another), the technical artifacts can be misinterpreted as biological signals [1].

Essential Mitigation Strategies

- Comprehensive Process Controls: It is standard practice to include a variety of control samples that undergo the entire experimental workflow alongside the actual samples. These are critical for identifying the source and composition of contamination [1] [2]. Recommended controls include:

  - Blank Extraction Controls: Contain only the reagents used for DNA extraction.

  - No-Template PCR Controls: Contain molecular-grade water instead of sample DNA.

  - Sampling Controls: For example, an empty collection swab or vessel, or swabs exposed to the air in the sampling environment [2].

- Unconfounded Study Design: The single most important step in experimental planning is to ensure that the biological variable of interest (e.g., case/control status) is not confounded with batch structure. Cases and controls should be randomly distributed across all processing batches to ensure that technical biases affect all groups equally, thus producing noise rather than false signals [1].

- Rigorous Decontamination and PPE: Sampling equipment should be decontaminated with solutions like sodium hypochlorite (bleach) or UV-C light to remove viable cells and trace DNA, as sterility (e.g., autoclaving) does not equate to being DNA-free [2]. Personnel should use personal protective equipment (PPE) such as gloves, masks, and clean suits to minimize the introduction of human-associated contaminants [2].
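One way to implement an unconfounded design is stratified randomization: shuffle each biological group separately, then deal its samples across batches so that every batch receives a near-equal share of cases and controls. The function name `assign_batches`, the fixed seed and the sample IDs are illustrative assumptions; in practice the process controls listed above would also be placed in every batch.

```python
import random
from collections import Counter


def assign_batches(samples, n_batches, seed=0):
    """Stratified randomization of samples to processing batches.

    samples: list of (sample_id, group) tuples, e.g. ("S01", "case").
    Each group is shuffled separately and dealt round-robin across batches,
    so every batch receives a near-equal share of every group.
    """
    rng = random.Random(seed)
    by_group = {}
    for sample_id, group in samples:
        by_group.setdefault(group, []).append(sample_id)

    assignment = {}
    offset = 0
    for group in sorted(by_group):
        ids = list(by_group[group])
        rng.shuffle(ids)
        for k, sample_id in enumerate(ids):
            assignment[sample_id] = (offset + k) % n_batches
        # Continue dealing where the previous group stopped to keep batch sizes even.
        offset += len(ids)
    return assignment


samples = ([(f"case{i:02d}", "case") for i in range(12)]
           + [(f"ctrl{i:02d}", "control") for i in range(12)])
batches = assign_batches(samples, n_batches=3)
print(Counter((batches[s], g) for s, g in samples))
```

Shuffling within each group randomizes which sample lands in which batch, while the round-robin dealing guarantees balance; pure random assignment of all samples would be unbiased on average but could still leave one batch case-heavy by chance.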

Experimental Protocols in Hyper-Arid Soil Research

Hyper-arid soils, such as those in the Atacama Desert, serve as a model system for studying life at its limits. Research in these environments provides a template for the meticulous protocols required for low-biomass analysis.

A Case Study: Simulated Rainfall in the Atacama Desert

A 2023 study investigated how hyper-arid soil microbial communities respond to a simulated rainfall event, providing a robust example of a controlled low-biomass experiment [3].

1. Objective: To characterize the temporal response of indigenous microbial communities to rewetting without nutrient amendment, testing the hypothesis that communities from distinct hyper-arid locations would respond similarly [3].

2. Site and Soil Characterization:

- Locations: Surface soils were collected from two hyper-arid sites near Yungay, Chile (YUN1242 and YUN1609), previously classified as long-term hyper-arid based on nitrate and sulfate profiles [3].

- Soil Chemistry: Soils were alkaline (pH 8.4–8.9) with low organic carbon (0.02–0.04%) and high electrical conductivity, indicating high salinity. Higher nitrate and sulfate levels at YUN1609 suggested greater long-term aridity compared to YUN1242 [3].

3. Experimental Microcosm Setup:

- Treatment: Soils were rewetted with sterile water to 5% (g water/g dry soil) to simulate a rainfall event [3].

- Duration & Sampling: Microcosms were incubated for 30 days, with destructive sampling at multiple time points to track dynamic changes [3].

- Controls: Sterile handling and the analysis of soils collected before rewetting (Day 0) served as essential baselines.

4. Microbial Community Analysis:

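- Quantitative Abundance: Bacterial and archaeal abundance was tracked via 16S rRNA gene qPCR, which quantifies gene copy numbers [3]. Increases in copy number after wetting indicate growth, but DNA-based qPCR also detects dormant and dead cells, so it does not by itself isolate the metabolically active fraction.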

- Community Composition: 16S rRNA gene amplicon sequencing was used to profile the relative abundances of specific bacterial and archaeal taxa over time [3].

- Functional Inference: The metabolic response of the communities was inferred by analyzing the shifts in the relative abundance of taxa with known metabolic capacities (e.g., oligotrophs, mixotrophs, spore-formers) [3].
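Pairing qPCR with amplicon sequencing, as in the design above, allows relative abundances to be converted into estimated absolute abundances by scaling each taxon's fraction by the sample's total 16S copy number. The sketch below assumes this simple multiplicative conversion; the taxa, fractions and copy numbers are hypothetical and are not data from the cited study.

```python
def absolute_abundance(rel_abund, total_copies):
    """Convert relative abundances (fractions) into estimated 16S copies by
    scaling with a qPCR-derived total for the same sample."""
    return {taxon: frac * total_copies for taxon, frac in rel_abund.items()}


# Hypothetical microcosm values for illustration; not data from the cited study.
day0 = absolute_abundance({"taxon_A": 0.20, "taxon_B": 0.80}, total_copies=1e5)
day7 = absolute_abundance({"taxon_A": 0.10, "taxon_B": 0.90}, total_copies=1e6)

for taxon in day0:
    fold = day7[taxon] / day0[taxon]
    print(f"{taxon}: {day0[taxon]:,.0f} -> {day7[taxon]:,.0f} copies/g ({fold:.1f}x)")
```

In this toy example taxon_A halves in relative abundance but rises five-fold in estimated copies, a reversal that relative data alone cannot reveal. The conversion inherits qPCR's limitations: it counts gene copies rather than cells (16S copy number varies among taxa), and in low-biomass samples the total also includes contaminant DNA, so blanks should be quantified as well.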

Table 2: Key Methodological Techniques for Low-Biomass Soil Analysis

| Technique | Function | Key Insight from Atacama Studies |
|---|---|---|
| 16S rRNA qPCR | Quantifies total bacterial and archaeal gene copy numbers; measures abundance. | Initial bacterial 16S rRNA gene copies were significantly higher at YUN1242 than YUN1609; bacteria increased while archaea decreased after wetting [3]. |
| Amplicon Sequencing | Profiles the relative abundance of microbial taxa. | Revealed distinct community structures and different successional patterns after wetting between the two sites [3]. |
| PLFA Analysis | Measures phospholipid fatty acids to assess viable microbial biomass and community structure. | Shifts in PLFA composition (e.g., from saturated to unsaturated) indicate physiological adaptation or community shifts upon rewetting [4]. |
| GDGT Analysis | Targets archaeal membrane lipids to assess archaeal community and metabolism. | Provided evidence for a metabolically active archaeal community in hyper-arid soils upon rewetting [4]. |

Key Findings and Workflow

The study demonstrated that bacterial communities in these extreme soils could be reactivated by water alone, but the responses were site-specific, refuting the initial hypothesis. The YUN1242 community showed rapid changes in actinobacterial taxa, while the YUN1609 community remained stable until day 30, suggesting different historic exposures to hyperaridity shaped communities with distinct metabolic capacities [3]. A separate rewetting study further highlighted that while growth occurred, it was at rates 100–10,000-fold lower than in other soils, and that available carbon was the primary factor limiting microbial growth and biomass gains [4].

Diagram 2: Experimental Workflow for Soil Rewetting Studies

The Scientist's Toolkit: Research Reagent Solutions

Working with low-biomass samples requires specific reagents and materials designed to minimize contamination and maximize the recovery of the target signal.

Table 3: Essential Research Reagents and Materials for Low-Biomass Studies

| Item Category | Specific Examples | Function & Importance |
|---|---|---|
| Nucleic Acid Removal | DNA removal solutions (e.g., based on sodium hypochlorite); UV-C light chambers. | Degrades contaminating DNA on the surfaces of non-disposable equipment. Essential because standard autoclaving kills viable cells but does not remove persistent DNA [2]. |
| DNA-Free Reagents | Certified DNA-free water, extraction kits, and polymerase enzymes. | Minimizes the introduction of microbial DNA from the reagents themselves, a major contamination source in low-biomass workflows [2]. |
| Sample Collection | Sterile, single-use swabs; DNA-free collection tubes/vessels. | Provides a clean starting point for sample collection. Pre-sterilized plasticware (autoclaved or UV-C treated) is standard [2], although autoclaving alone does not remove DNA. |
| Personal Protective Equipment (PPE) | Gloves, masks, cleanroom suits (coveralls), shoe covers. | Creates a barrier between the sample and the human operator, reducing contamination from skin, hair, and aerosols generated by breathing [2]. |
| Process Controls | Empty collection kits; blank extraction and no-template PCR controls; sample preservation solutions. | Serve as a proxy for the contamination introduced at each step of the workflow. These are non-negotiable for identifying and computationally subtracting contaminants [1] [2]. |

The study of low-biomass samples, from human tumors to hyper-arid soils, demands a paradigm shift from standard microbiome research. The core challenge lies in the fundamental inadequacy of relative abundance analysis when the signal-to-noise ratio is critically low. Without rigorous experimental design—featuring comprehensive controls, unconfounded batch processing, and stringent decontamination protocols—the resulting data are highly susceptible to being dominated by technical artifacts rather than biology. The protocols and guidelines outlined here provide a framework for navigating these challenges. As methods continue to evolve, a commitment to these rigorous standards is essential for producing reliable, reproducible data that can advance our understanding of life at its limits, both within our bodies and in the most extreme environments on Earth.

In the analysis of complex biological systems, particularly in low-biomass microbiome research, scientists frequently encounter a subtle yet profound methodological challenge: the compositionality problem. This statistical phenomenon arises when working with data that carries only relative information, where individual measurements are constrained to a constant sum, such as proportions, percentages, or parts-per-million. In microbiome studies, this constraint manifests inherently in sequencing data, where the number of sequences obtained per sample (sequencing depth) varies, forcing researchers to normalize counts to relative abundances to enable comparison across samples. While this practice allows for practical analytical workflows, it fundamentally alters the mathematical properties of the data, creating a closed system where an increase in the relative abundance of one component necessitates a decrease in one or more other components.

The core issue with compositional data lies in its capacity to generate spurious correlations—statistical associations that emerge solely as artifacts of the data structure rather than from genuine biological relationships. These artifactual correlations present a significant threat to biological interpretation, potentially leading researchers to identify microbial associations that do not exist in reality or miss genuine biological signals obscured by the compositional nature of the data. The problem is particularly acute in low-biomass environments such as tumors, lungs, placenta, and blood, where contaminating DNA can constitute a substantial proportion of observed sequences and the true biological signal represents only a minute fraction of the total data [1]. In these challenging contexts, the combination of compositionality with external contaminants, host DNA misclassification, well-to-well leakage, and batch effects can create perfect storms of statistical artifacts that compromise biological conclusions and have fueled several high-profile controversies in the field [1].

The Statistical Foundation of Spurious Correlation

Historical Context and Mathematical Basis

The problem of spurious correlation in relative data has been recognized for over a century in statistical literature. Karl Pearson first identified and mathematically formalized the phenomenon in 1897, demonstrating how correlations between ratios can arise artificially when the variables share a common component [5]. Pearson illustrated this through a simple example: when three uncorrelated random variables (x, y, z) are used to form ratios (x/z and y/z), the resulting indices will exhibit correlation despite the complete absence of any genuine relationship between the original variables [5].

The mathematical foundation of this phenomenon stems from the constrained nature of compositional data. In a $D$-part composition $[x_1, x_2, \ldots, x_D]$ whose components are subject to a unit-sum constraint ($x_1 + x_2 + \cdots + x_D = 1$), the sample space is restricted to a simplex rather than the real Euclidean space. This constraint introduces a negative bias into the covariance structure, because an increase in one component must be compensated by decreases in others. Pearson derived an approximation for the correlation between two ratios $x_1/x_3$ and $x_2/x_4$ in terms of the coefficients of variation $v_i$ of the underlying variables and their pairwise correlations $r_{ij}$; even when the variables are mutually uncorrelated, ratios that share a divisor remain correlated [5]:

Table 1: Mathematical Framework of Spurious Correlation

| Concept | Mathematical Expression | Interpretation |
|---|---|---|
| General Case | $\rho = \frac{r_{12}v_1v_2 - r_{14}v_1v_4 - r_{23}v_2v_3 + r_{34}v_3v_4}{\sqrt{v_1^2 + v_3^2 - 2r_{13}v_1v_3}\,\sqrt{v_2^2 + v_4^2 - 2r_{24}v_2v_4}}$ | Approximate correlation between ratios $x_1/x_3$ and $x_2/x_4$; $r_{ij}$ is the correlation between $x_i$ and $x_j$, $v_i$ the coefficient of variation of $x_i$ |
| Common Divisor | $\rho_0 = \frac{v_3^2}{\sqrt{v_1^2 + v_3^2}\,\sqrt{v_2^2 + v_3^2}}$ | Case $x_3 = x_4$ (common divisor) with $x_1$, $x_2$, $x_3$ mutually uncorrelated |
| Equal Variation | $\rho_0 = 0.5$ | Common-divisor case with $v_1 = v_2 = v_3$ |

This framework shows that the magnitude of spurious correlation grows with the coefficient of variation of the common divisor relative to those of the numerators: with $v_1 = v_2 = 0.1$ and $v_3 = 0.3$, for example, $\rho_0 = 0.09/0.1 = 0.9$. For microbiome data, where every taxon's relative abundance shares the sample total as its divisor, high variability in that total (for instance, driven by a dominant taxon or a variable contaminant load) can induce artifactual correlations throughout the dataset.
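Pearson's common-divisor result can be checked numerically. The simulation below is a sketch in which the sample size, seed and normal distributions are arbitrary assumptions: it draws three independent variables with equal coefficients of variation (0.1), then compares the correlation of the raw variables with the correlation of the ratios that share `z` as divisor, alongside the approximation from the table.

```python
import math
import random


def pearson_r(a, b):
    """Pearson correlation coefficient, written out to avoid dependencies."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def common_divisor_rho(v1, v2, v3):
    """Pearson's approximation for corr(x1/x3, x2/x3) when x1, x2, x3 are
    uncorrelated; v1, v2, v3 are coefficients of variation (sd / mean)."""
    return v3 ** 2 / (math.sqrt(v1 ** 2 + v3 ** 2) * math.sqrt(v2 ** 2 + v3 ** 2))


rng = random.Random(1)
n, mean, sd = 20_000, 10.0, 1.0  # equal CVs of 0.1 for x, y and z
x = [rng.gauss(mean, sd) for _ in range(n)]
y = [rng.gauss(mean, sd) for _ in range(n)]
z = [rng.gauss(mean, sd) for _ in range(n)]

raw_r = pearson_r(x, y)
ratio_r = pearson_r([a / c for a, c in zip(x, z)], [b / c for b, c in zip(y, z)])
print(f"corr(x, y)     = {raw_r:.3f}")
print(f"corr(x/z, y/z) = {ratio_r:.3f}")
print(f"approximation  = {common_divisor_rho(0.1, 0.1, 0.1):.3f}")
```

With these settings `corr(x, y)` should sit near zero while `corr(x/z, y/z)` lands near the predicted 0.5; increasing the spread of `z` alone pushes the ratio correlation higher, as the formula predicts.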

Visualizing the Mechanism of Spurious Correlation

Pearson's classic example of ratios sharing a common divisor shows how spurious correlations emerge from the mathematical structure of relative data. Two originally uncorrelated variables (X and Y) can appear correlated once they are transformed into ratios that share a common divisor (Z). This artifact translates directly to microbiome research, where sequencing data are inherently relative: the abundance of each taxon is effectively a ratio of its count to the total sequences in the sample.

Compositionality in Low-Biomass Microbiome Research

Amplified Challenges in Low-Biomass Environments

Low-biomass microbiome research presents a perfect storm for compositional artifacts, where multiple technical challenges interact to exacerbate the compositionality problem. In environments such as tumors, lungs, placenta, and blood, microbial biomass is minimal, creating a scenario where contaminating DNA from reagents, kits, and laboratory environments can constitute the majority of observed sequences [1]. The combination of low true biological signal with high and variable contamination creates ideal conditions for spurious correlations to dominate analytical results.

Table 2: Analytical Challenges in Low-Biomass Microbiome Studies

| Challenge | Impact on Compositionality | Consequence for Interpretation |
|---|---|---|
| External Contamination | Introduces non-biological components that inflate denominators in relative abundance calculations | Genuine microbial signals become diluted; contamination-associated taxa appear correlated |
| Host DNA Misclassification | Host sequences misidentified as microbial further constrain the composition space | Artifactual associations between misclassified host sequences and true microbes |
| Well-to-Well Leakage | Creates artificial dependencies between samples processed in proximity | Spatial patterns in processing can be misinterpreted as biological associations |
| Batch Effects | Technical variation confounded with biological groups creates structured noise | Batch-associated technical artifacts generate spurious group differences |
| Sparsity | Many zero counts due to biological absence or undersampling | Inflated variances and unstable correlation estimates |

The interaction between these challenges and compositionality is particularly problematic when technical factors become confounded with biological variables of interest. For example, if case and control samples are processed in separate batches with different contamination profiles, the resulting data may show apparent microbial signatures of disease that actually reflect batch-specific contaminants rather than genuine biological differences [1]. This confounding was dramatically illustrated in the placental microbiome controversy, where initial findings of a placental microbiome were later attributed to contamination, with the contamination profile differing systematically between studies that reported positive versus negative findings [1].

Case Study: The Consequences of Unaddressed Compositionality

A hypothetical case study demonstrates how severe these artifacts can become in practice. Consider a simulated case-control dataset with 54 samples from cases and 54 from controls, where 53 samples from each group have identical distributions of two taxa, with one extra sample per group containing monocultures of a third and fourth taxon [1]. If cases and controls are processed in separate batches with distinct contamination profiles, well-to-well leakage patterns, and processing biases, analysis of the resulting data would identify six taxa apparently associated with case/control status—two from contamination, two from well-to-well leakage, and two from processing bias—despite 98% of samples being identical in their true biological composition [1].

This case study illustrates how the combination of compositionality with technical artifacts can generate completely artifactual biological conclusions. The spurious signals emerge specifically because the batch structure (case vs. control processing) is confounded with the biological variable of interest, creating the illusion of microbial associations where none exist.
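A deliberately simplified, deterministic toy (not the simulation reported in [1]) isolates one of these mechanisms: every sample has the same true community, and each processing batch adds its own hypothetical contaminant. The batch labels, read counts and contaminant names are assumptions, and leakage and processing bias are left out.

```python
# Hypothetical read counts: every sample has the same true community.
TRUE_COUNTS = {"taxon_1": 500, "taxon_2": 500}
# Hypothetical batch-specific reagent contaminants.
BATCH_CONTAMINANTS = {"A": {"contam_A": 200}, "B": {"contam_B": 200}}


def observed_profile(batch):
    """Relative abundances seen after true DNA and the batch's contaminant are pooled."""
    counts = dict(TRUE_COUNTS)
    counts.update(BATCH_CONTAMINANTS[batch])
    total = sum(counts.values())
    return {taxon: n / total for taxon, n in counts.items()}


def case_minus_control(design, taxon):
    """Difference in mean relative abundance of `taxon` (cases minus controls).
    `design` maps each group to the list of batches its samples were processed in."""
    means = {}
    for group, batches in design.items():
        values = [observed_profile(b).get(taxon, 0.0) for b in batches]
        means[group] = sum(values) / len(values)
    return means["case"] - means["control"]


confounded = {"case": ["A"] * 10, "control": ["B"] * 10}
balanced = {"case": ["A", "B"] * 5, "control": ["A", "B"] * 5}

for name, design in (("confounded", confounded), ("balanced", balanced)):
    diffs = {t: round(case_minus_control(design, t), 3)
             for t in ("taxon_1", "contam_A", "contam_B")}
    print(name, diffs)
```

Under the confounded design, contam_A and contam_B differ by about 0.17 in relative abundance between cases and controls, a "disease signature" made entirely of reagent DNA. Under the balanced design the same contaminants are still present, but they add noise rather than a group difference, and the true taxa do not differ in either design.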

Methodological Solutions and Experimental Design

Compositional Data Analysis Approaches

The field of compositional data analysis (CoDA) provides mathematically rigorous approaches to address the problem of spurious correlation. These methods are based on the principle that meaningful statistical analysis of compositional data must occur in log-ratio space, which transforms the data from the constrained simplex to unconstrained real space [5] [6] [7].

Table 3: Compositional Data Analysis Methods

| Method | Transformation | Application Context | Advantages |
|---|---|---|---|
| Centered Log-Ratio (CLR) | $\operatorname{clr}(x)_i = \ln[x_i / g(x)]$, where $g(x)$ is the geometric mean of all $D$ parts | General-purpose CoDA; PCA-like exploration | Symmetric treatment of components; Euclidean distances between CLR vectors equal Aitchison distances |
| Additive Log-Ratio (ALR) | $\operatorname{alr}(x)_i = \ln(x_i / x_D)$, where $x_D$ is a reference component | When a natural reference component exists | Simple interpretation; reduces dimension by one |
| Isometric Log-Ratio (ILR) | $\operatorname{ilr}(x)$ = coordinates with respect to an orthonormal basis of the simplex | Hypothesis testing; regression analysis | Orthogonal coordinates; appropriate for standard Euclidean methods |
| Robust Compositional Methods | Log-ratio transforms with robust estimators | Soil science; environmental data with outliers | Reduces influence of outliers on parameter estimates |

In practice, these log-ratio transformations mitigate the spurious correlation problem because they work with ratios between parts, which do not depend on the unknown total, rather than with closed proportions. They are not a complete cure: CLR values still sum to zero within each sample, so correlations between CLR-transformed taxa also need cautious interpretation. For example, in soil science research, applying CoDA methods to analyze relationships between soil organic matter content and chemical/physical properties revealed findings that contrasted with previous non-compositional approaches, including a weak positive association between calcium and organic matter content and a positive effect of phosphorus [7]. Similarly, in plant microbiome studies, compositional normalization methods such as the centered log-ratio (CLR) transformation have been used to predict potato yield from microbiome data with >80% accuracy [6].
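The property that makes log-ratios useful in contaminated samples can be seen in a toy calculation: adding reads from a contaminant changes the proportion of every true taxon but leaves the log-ratio between two true taxa unchanged. The counts below are hypothetical, and `clr` uses no pseudocount by default; zero counts would require one, a common but ad hoc choice labeled here as an assumption.

```python
import math


def closure(counts):
    """Convert counts to proportions (the 'closed' composition)."""
    total = sum(counts)
    return [c / total for c in counts]


def clr(counts, pseudocount=0.0):
    """Centered log-ratio: ln(part / geometric mean of all parts).
    A pseudocount (an assumption) is needed if any count is zero."""
    logs = [math.log(c + pseudocount) for c in counts]
    log_gmean = sum(logs) / len(logs)
    return [v - log_gmean for v in logs]


def log_ratio(counts, i, j):
    """Pairwise log-ratio between parts i and j."""
    return math.log(counts[i] / counts[j])


true_sample = [600, 200]         # hypothetical reads for taxa A and B
contaminated = [600, 200, 800]   # same sample plus 800 hypothetical contaminant reads

print(closure(true_sample)[:2], closure(contaminated)[:2])
print(log_ratio(true_sample, 0, 1), log_ratio(contaminated, 0, 1))
print(clr(contaminated))
```

The proportions of A and B halve (0.75/0.25 to 0.375/0.125) while $\ln(A/B) = \ln 3$ is unchanged. CLR values, in contrast, shift when a new part enters the composition because the geometric mean changes, and they always sum to zero within a sample.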

Experimental Design Strategies for Low-Biomass Research

Beyond analytical approaches, careful experimental design is essential for mitigating compositionality artifacts in low-biomass research. The critical elements of such a strategy include:

- Avoiding Batch Confounding: Ensuring biological groups of interest are balanced across processing batches prevents technical variation from being misinterpreted as biological signal [1].

- Comprehensive Process Controls: Collecting multiple types of control samples across all batches enables computational identification and removal of contamination. Different control types capture different contamination sources, with extraction blanks, no-template controls, and library preparation controls being particularly valuable [1].

- Minimizing Well-to-Well Leakage: Randomizing sample positions keeps leakage from aligning with biological groups, and placing blank controls between samples both separates samples physically and makes cross-contamination measurable, limiting artificial correlations between samples processed in proximity [1].

The Scientist's Toolkit: Essential Reagents and Methods

Table 4: Essential Research Reagents and Computational Tools

| Category | Specific Items | Function in Addressing Compositionality |
|---|---|---|
| Laboratory Reagents | DNA/RNA-free water, ultrapure reagents, sterile collection kits | Minimize introduction of external contamination that distorts composition |
| Process Controls | Extraction blanks, no-template controls (NTCs), mock communities | Quantify and correct for technical contamination sources |
| DNA Extraction Kits | Low-biomass optimized kits, host DNA depletion methods | Maximize microbial DNA yield while reducing host DNA misclassification |
| Computational Tools | QIIME 2, Calypso, MicrobiomeAnalyst, mothur, mixOmics | Implement compositional transformations and decontamination algorithms |
| Compositional Methods | CLR/ILR transformations, Aitchison distance, compositional regression | Proper statistical analysis of relative abundance data |
| Decontamination Algorithms | Decontam, SourceTracker, prevalence-based methods | Identify and remove contamination signals using control samples |

This toolkit provides researchers with essential resources for addressing compositionality throughout the experimental workflow, from sample collection to data analysis. Several of the computational tools listed offer compositional data analysis methods such as the CLR transformation, making them accessible to researchers who may not have specialized expertise in compositional statistics [6].
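Prevalence-based decontamination rests on a simple idea: a taxon detected more often in negative controls than in true samples is likely a contaminant. The sketch below is a simplified, self-contained illustration of that idea using a one-sided Fisher's exact test on presence/absence; it is not the decontam package's implementation or API, and the ASV names, prevalence counts and the 0.1 cut-off are assumptions for illustration.

```python
from math import comb


def prevalence_score(present_in_controls, n_controls, present_in_samples, n_samples):
    """One-sided Fisher's exact p-value for the hypothesis that a taxon is
    MORE prevalent in negative controls than in true samples (small = suspicious)."""
    total = n_controls + n_samples
    present = present_in_controls + present_in_samples
    # Hypergeometric upper tail: P(at least this many detections fall in controls).
    upper = min(present, n_controls)
    tail = sum(comb(present, x) * comb(total - present, n_controls - x)
               for x in range(present_in_controls, upper + 1))
    return tail / comb(total, n_controls)


def flag_contaminants(prevalence, n_controls, n_samples, threshold=0.1):
    """prevalence maps taxon -> (detections in controls, detections in samples)."""
    return sorted(taxon for taxon, (pc, ps) in prevalence.items()
                  if prevalence_score(pc, n_controls, ps, n_samples) < threshold)


# Hypothetical presence/absence counts across 5 negative controls and 20 samples.
table = {"ASV_1": (5, 3), "ASV_2": (0, 15), "ASV_3": (1, 12)}
print(flag_contaminants(table, n_controls=5, n_samples=20))
```

With these counts only ASV_1, detected in all five controls but in just 3 of 20 samples, falls below the cut-off (p ≈ 0.001). In low-biomass studies this logic has limits: well-to-well leakage can carry genuine sample taxa into controls, so flagged taxa merit inspection rather than automatic removal.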

The compositionality problem represents a fundamental challenge in the analysis of relative abundance data, with particularly serious implications for low-biomass microbiome research. The mathematical reality that spurious correlations inevitably arise in relative data necessitates a paradigm shift in how we collect, process, and analyze microbiome data. The solutions—both experimental and computational—require careful attention to study design, comprehensive control strategies, and proper use of compositional data analysis methods.

As Pearson, Galton, and Weldon cautioned over a century ago, without appropriate methodological care, conclusions drawn from compositional data risk reflecting statistical artifacts rather than genuine biological relationships [5]. This warning remains critically relevant today, especially as microbiome research expands into increasingly challenging low-biomass environments and employs increasingly sophisticated machine learning approaches that may be vulnerable to compositional artifacts [6]. By recognizing the inherent constraints of relative data and implementing the methodological solutions outlined here, researchers can overcome the problem of spurious correlations and build a more robust foundation for understanding microbial communities in health and disease.

In low-biomass microbiome research, the accurate interpretation of biological signals is critically threatened by two pervasive sources of noise: microbial contamination and overwhelming host DNA. These factors introduce substantial distortion in relative abundance analyses, where the proportional nature of sequencing data can magnify minor contaminants into dominant apparent signals. In environments with minimal microbial biomass—such as certain human tissues, treated drinking water, and atmospheric samples—the inevitable introduction of exogenous DNA from reagents, sampling equipment, and laboratory environments becomes disproportionately impactful relative to the authentic biological signal [2]. Similarly, in host-associated samples with high host-to-microbe ratios, the sheer volume of host genomic material can obscure the much rarer microbial sequences, effectively burying the true signal beneath overwhelming background noise [8]. This technical whitepaper examines the mechanisms through which these noise sources compromise data integrity, presents quantitative evidence of their effects, and provides detailed methodological solutions for researchers and drug development professionals working within this challenging analytical space.

The Nature and Impact of Analytical Noise

Microbial Contamination as Systematic Noise

Microbial contamination represents a form of systematic noise that introduces non-biological signals into sequencing data. This contamination originates from multiple sources throughout the experimental workflow, with DNA extraction kits, laboratory reagents, and sampling equipment being particularly significant contributors [2] [9]. The compositional nature of relative abundance data means that even minute amounts of contaminant DNA can dramatically distort community profiles when the authentic microbial signal is faint. In severe cases, contaminating sequences have been shown to comprise over 80% of the total sequences in extremely low-biomass samples, fundamentally altering perceived community structure and diversity [10].

The problem extends beyond consistent reagent contaminants to include stochastic sequencing noise—irreproducible signals that appear when DNA input falls below critical thresholds. This phenomenon creates a "noise floor" below which authentic biological signals become indistinguishable from technical artifacts [11]. Unlike consistent contamination, this stochastic noise is not reproducible between technical replicates, yet in any single replicate can generate the illusion of a distinct microbial community different from both the authentic sample and control samples [11].

Table 1: Quantitative Impact of Contamination in Low-Biomass Samples

| Sample Type | Contamination Level | Key Contaminants Identified | Impact on Diversity Metrics |
|---|---|---|---|
| Diluted Mock Community (1:100,000) | 80.1% of total sequences [10] | Kit-related bacteria | Overinflated alpha diversity, distorted community composition |
| Respiratory Samples (EBC) | Dominated by noise below 10^4 16S copies/sample [11] | Variable, non-reproducible taxa | Irreproducible community profiles between replicates |
| DNA Extraction Kit Controls | Up to 655 ASVs across 136 genera [10] | Bacteroides, Faecalibacterium, Lachnospiraceae | False positive detection of common gut taxa |

Host DNA as Biological Noise

In host-associated low-biomass environments, the microbial signal must be detected against an overwhelming background of host DNA. This host-derived material creates substantial analytical noise that reduces sequencing depth for the target microorganisms and increases required sequencing costs to achieve sufficient microbial coverage. For example, in upper respiratory tract samples, which represent ecologically distinct niches with characteristically low bacterial biomass, host DNA can constitute the vast majority of genetic material recovered [8]. The resulting reduction in microbial sequencing depth diminishes statistical power for detection and quantification, potentially obscuring biologically significant but numerically minor community members.
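The depth penalty described above is simple arithmetic, and a minimal sketch makes it concrete. It assumes host reads are identified and discarded after sequencing, so only the non-host share is usable; the 95% host fraction in the usage lines is an illustrative assumption, not a value from the cited study, and the 50,000-read target echoes the sequencing guidance later in this section.

```python
import math


def microbial_reads(total_reads: int, host_fraction: float) -> int:
    """Reads left for microbial analysis once host-derived reads are discarded."""
    if not 0.0 <= host_fraction < 1.0:
        raise ValueError("host_fraction must be in [0, 1)")
    return round(total_reads * (1.0 - host_fraction))


def total_reads_needed(target_microbial_reads: int, host_fraction: float) -> int:
    """Total sequencing depth required for the non-host share to reach the target."""
    if not 0.0 <= host_fraction < 1.0:
        raise ValueError("host_fraction must be in [0, 1)")
    return math.ceil(target_microbial_reads / (1.0 - host_fraction))


# Illustrative, assumed values: 95% of reads are host-derived.
print(microbial_reads(1_000_000, 0.95))   # microbial reads left from a 1M-read library
print(total_reads_needed(50_000, 0.95))   # depth needed to retain 50,000 microbial reads
```

The required depth scales by 1 / (1 - host fraction), so each further increase in host fraction costs disproportionately more sequencing, which is the motivation for host DNA depletion.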

Methodological Framework for Noise Reduction

Pre-analytical Contamination Control

Implementing rigorous pre-analytical controls is essential for minimizing contamination introduction during sample collection and processing. The following evidence-based practices represent the current consensus for contamination-sensitive microbiome research:

Equipment Decontamination: Treat sampling tools and collection vessels with 80% ethanol to kill contaminating organisms, followed by a nucleic-acid-degrading treatment (e.g., sodium hypochlorite, UV-C irradiation, or commercial DNA removal solutions) to eliminate residual DNA [2]. Note that sterility and DNA-free status are distinct: autoclaving alone kills cells but may not remove persistent DNA.

Personal Protective Equipment (PPE): Utilize appropriate PPE including gloves, masks, cleansuits, and shoe covers to minimize contamination from human operators. This barrier approach reduces introduction of human-associated microorganisms and environmental contaminants [2].

Single-Use DNA-Free Consumables: Whenever possible, employ single-use DNA-free collection materials (swabs, vessels) to prevent cross-contamination between samples [2].

Experimental Design with Comprehensive Controls

Incorporating appropriate controls throughout the experimental workflow enables subsequent computational correction for persistent contamination:

Negative Controls: Process blank samples (containing only preservation buffer or sterile swabs) alongside experimental samples through all stages from DNA extraction to sequencing. These identify reagent-derived contaminants [2] [9].

Positive Controls: Utilize dilution series of mock microbial communities with known composition to establish detection limits and quantify stochastic noise effects [10] [11].

Technical Replicates: Process multiple aliquots of low-biomass samples to distinguish reproducible signal from stochastic noise through consistency analysis [11].

Table 2: Essential Research Reagent Solutions for Low-Biomass Studies

| Reagent/Solution | Function | Application Notes |
|---|---|---|
| DNA-free collection swabs | Sample acquisition | Pre-treated with UV irradiation and DNA degradation solutions |
| Sodium hypochlorite solution (0.5-1%) | Surface decontamination | Effective DNA degradation; must be compatible with sample type |
| DNA degradation solutions | Equipment treatment | Commercial formulations or diluted bleach solutions |
| DNA-free preservation buffers | Sample storage | Validated for absence of microbial DNA contaminants |
| Mock microbial communities | Positive controls | Commercially available or custom-designed for specific environments |
| DNA extraction kits (low-biomass optimized) | Nucleic acid extraction | Selected for minimal reagent contamination; pre-tested with controls |

Laboratory Protocols for Low-Biomass Samples

DNA Extraction from Upper Respiratory Tract Samples

This protocol exemplifies optimized procedures for low-biomass host-associated environments [8]:

Sample Collection: Collect URT samples using DNA-free synthetic tip swabs. Immediately place swabs in DNA-free preservation buffer and store at -80°C until processing.

Cell Lysis:

- Apply mechanical lysis through bead beating (0.1 mm glass beads) for 3-5 minutes at high frequency.

- Simultaneously employ chemical lysis using lysozyme (10 mg/mL) and proteinase K (1 mg/mL) in an appropriate buffer.

- Incubate at 56°C for 30-60 minutes with agitation.

DNA Purification:

- Use silica membrane columns specifically designed for small DNA fragment recovery.

- Include carrier RNA during binding steps to enhance recovery of low-concentration DNA.

- Elute in low-EDTA TE buffer or molecular grade water (preferably pre-heated to 55°C) to maximize DNA yield.

Host DNA Depletion (Optional):

- For samples with high host contamination, consider enzymatic or probe-based host DNA depletion between the Cell Lysis and DNA Purification steps.

- Validate depletion efficiency against non-depleted controls to assess potential microbial loss.

Library Preparation and Sequencing

16S rRNA Gene Amplification:

- Target hypervariable regions (e.g., V4) with primers containing Illumina adapters.

- Use minimal PCR cycles (typically 25-30) to reduce amplification bias.

- Include negative controls in every amplification batch.

Quantification and Normalization:

- Precisely quantify amplicon yield using fluorometric methods (e.g., Qubit) rather than spectrophotometry.

- Normalize samples to equal concentration before pooling.

Sequencing Parameters:

- Utilize paired-end sequencing on Illumina MiSeq or similar platforms.

- Aim for a minimum of 50,000 reads per sample after quality control.

- Include PhiX control (10-20%) to improve base calling for low-diversity libraries.
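The normalization step in this protocol ("normalize samples to equal concentration before pooling") reduces to volume arithmetic. The sketch below assumes equal-mass pooling from fluorometric concentrations in ng/µL; the target mass, maximum volume, and sample names are hypothetical, and equimolar pooling adjusted for fragment length is a common alternative not shown here. Low-biomass libraries often cannot reach the target mass, so the sketch reports them instead of silently under-loading.

```python
def pooling_volumes(conc_ng_per_ul: dict[str, float],
                    target_ng: float,
                    max_volume_ul: float) -> dict[str, float]:
    """Volume of each library to pool so that each contributes target_ng of DNA.

    Required volumes are capped at max_volume_ul; capped samples contribute less
    than target_ng and should be reported rather than silently accepted.
    """
    volumes = {}
    for sample, conc in conc_ng_per_ul.items():
        if conc <= 0:
            raise ValueError(f"{sample}: concentration must be positive")
        volumes[sample] = min(target_ng / conc, max_volume_ul)
    return volumes


def under_target(conc_ng_per_ul: dict[str, float],
                 target_ng: float,
                 max_volume_ul: float) -> list[str]:
    """Samples that cannot reach target_ng even at the maximum volume."""
    return sorted(s for s, c in conc_ng_per_ul.items() if c * max_volume_ul < target_ng)


# Hypothetical Qubit readings (ng/uL), including a no-template control (NTC).
qubit = {"S1": 2.0, "S2": 0.5, "NTC": 0.05}
print(pooling_volumes(qubit, target_ng=20.0, max_volume_ul=20.0))
print(under_target(qubit, target_ng=20.0, max_volume_ul=20.0))
```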

Computational Approaches for Noise Identification and Removal

Decontamination Algorithms and Their Applications

Computational decontamination represents a crucial post-sequencing step for identifying and removing contaminant sequences from low-biomass datasets. Multiple algorithmic approaches have been developed, each with distinct strengths and requirements:

Decontam: This R package employs two complementary approaches: (1) a frequency method that identifies contaminants as sequences whose relative frequency is inversely correlated with sample DNA concentration, and (2) a prevalence method that identifies sequences significantly more prevalent (present in a larger fraction of samples) in negative controls than in true samples [10] [9]. The frequency method has been shown to remove 70-90% of contaminants without removing expected sequences from mock communities [10].
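The logic of the two decontam strategies can be illustrated with a minimal pure-Python sketch. It is not the decontam implementation: the frequency score below is a simplified comparison of residuals from the two log-scale models, and a one-sided Fisher's exact test stands in for the package's own prevalence statistic. Inputs are assumed to be per-sample relative frequencies of one feature, total DNA concentrations for the same samples, and presence counts in controls and samples.

```python
import math


def frequency_score(freqs, concentrations):
    """Simplified frequency check inspired by decontam's frequency method.

    Fits two models on the log scale across samples where the feature is present:
      contaminant:     log f = k - log C  (frequency inversely proportional to DNA conc.)
      non-contaminant: log f = k          (frequency independent of DNA conc.)
    Returns SS_contam / (SS_contam + SS_noncontam): values near 0 favour the
    contaminant model. This is not decontam's exact score statistic.
    """
    pairs = [(math.log(f), math.log(c))
             for f, c in zip(freqs, concentrations) if f > 0 and c > 0]
    if len(pairs) < 2:
        return float("nan")
    # Contaminant model: log f + log C should be constant across samples.
    y1 = [lf + lc for lf, lc in pairs]
    m1 = sum(y1) / len(y1)
    ss_contam = sum((v - m1) ** 2 for v in y1)
    # Non-contaminant model: log f alone should be constant.
    y0 = [lf for lf, _ in pairs]
    m0 = sum(y0) / len(y0)
    ss_noncontam = sum((v - m0) ** 2 for v in y0)
    total = ss_contam + ss_noncontam
    return 0.5 if total == 0 else ss_contam / total


def prevalence_pvalue(ctrl_present, n_ctrl, sample_present, n_sample):
    """One-sided Fisher's exact test: is the feature more prevalent in negative controls?"""
    n_total = n_ctrl + n_sample
    k_present = ctrl_present + sample_present
    upper = min(k_present, n_ctrl)
    # Hypergeometric upper tail: P(at least ctrl_present presences fall in controls).
    tail = sum(math.comb(k_present, x) * math.comb(n_total - k_present, n_ctrl - x)
               for x in range(ctrl_present, upper + 1))
    return tail / math.comb(n_total, n_ctrl)


concs = [1.0, 2.0, 4.0, 8.0]                               # assumed DNA concentrations
print(frequency_score([0.08, 0.04, 0.02, 0.01], concs))    # frequency halves as DNA doubles
print(prevalence_pvalue(5, 5, 2, 20))                      # in all 5 blanks, 2 of 20 samples
```

A feature found in all five blanks but only 2 of 20 samples yields a p-value of roughly 0.0004, marking it as a candidate contaminant; any decision threshold applied to either score is a study-specific choice.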

Squeegee: A de novo contamination detection tool that identifies potential contaminants by leveraging the principle that contaminants from common sources (e.g., DNA extraction kits) will appear across samples from distinct ecological niches [9]. Squeegee performs taxonomic classification and searches for shared organisms across multiple sample types, then applies similarity metrics and coverage analysis to filter false positives. This approach is particularly valuable when negative controls are unavailable, achieving weighted precision of 0.856 and recall of 0.958 on Human Microbiome Project data [9].
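The shared-source principle behind Squeegee can be shown with a toy helper. This is a hypothetical illustration, not Squeegee's algorithm: it only counts the distinct niches in which each taxon is detected and omits the similarity metrics and coverage analysis that Squeegee uses to filter false positives. The `min_niches` threshold, sample IDs, and taxon names are invented for the example.

```python
from collections import defaultdict


def shared_across_niches(detections, min_niches=3):
    """Taxa detected in at least `min_niches` distinct niches (hypothetical helper).

    detections maps sample ID -> (niche label, set of detected taxa). Under the
    shared-source assumption, such taxa are candidate contaminants that would
    still need the similarity and coverage checks Squeegee applies.
    """
    niches_per_taxon = defaultdict(set)
    for niche, taxa in detections.values():
        for taxon in taxa:
            niches_per_taxon[taxon].add(niche)
    return sorted(t for t, niches in niches_per_taxon.items()
                  if len(niches) >= min_niches)


# Illustrative input; taxon names and niches are invented for the example.
detections = {
    "s1": ("gut", {"Bacteroides", "TaxonX"}),
    "s2": ("skin", {"Cutibacterium", "TaxonX"}),
    "s3": ("oral", {"Streptococcus", "TaxonX"}),
    "s4": ("gut", {"Bacteroides"}),
}
print(shared_across_niches(detections))
```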

SourceTracker: This Bayesian approach predicts the proportion of sequences in experimental samples that originated from defined contaminant sources [10]. It is highly effective when contaminant sources are well characterized, removing over 98% of contaminants under controlled conditions, but its performance declines sharply when source environments are poorly defined, with fewer than 3% of contaminants removed in such scenarios [10].
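Source attribution of this kind can be sketched as estimating mixing proportions of fixed source profiles. The sketch below swaps in a plain expectation-maximization (EM) estimate in place of SourceTracker's Bayesian sampler, and it deliberately has no "unknown" source. The source profiles and counts are hypothetical.

```python
def estimate_source_proportions(sink_counts, sources, n_iter=500, tol=1e-10):
    """Maximum-likelihood mixing proportions via EM, with source profiles held fixed.

    sink_counts: read counts per taxon in the sample of interest (the "sink").
    sources: list of source profiles, each a list of relative abundances over the
             same taxa. Simplified stand-in for SourceTracker's Bayesian sampler:
             there is no "unknown" source, so reads from taxa absent from every
             profile are simply ignored.
    """
    k = len(sources)
    props = [1.0 / k] * k
    for _ in range(n_iter):
        expected = [0.0] * k
        for t, count in enumerate(sink_counts):
            weights = [props[s] * sources[s][t] for s in range(k)]
            norm = sum(weights)
            if count == 0 or norm == 0:
                continue
            # E-step: split this taxon's reads among sources by posterior weight.
            for s in range(k):
                expected[s] += count * weights[s] / norm
        assigned = sum(expected)
        if assigned == 0:
            raise ValueError("no sink reads can be attributed to any source")
        # M-step: new proportions are the share of reads assigned to each source.
        new_props = [e / assigned for e in expected]
        converged = max(abs(a - b) for a, b in zip(new_props, props)) < tol
        props = new_props
        if converged:
            break
    return props


# Two hypothetical sources with non-overlapping taxa.
reagent_blank = [0.5, 0.5, 0.0, 0.0]
tissue = [0.0, 0.0, 0.5, 0.5]
print(estimate_source_proportions([35, 35, 15, 15], [reagent_blank, tissue]))
```

Adding reads from a taxon that appears in neither profile leaves the estimate unchanged in this sketch, because those reads are never attributed to any source. That mirrors the limitation noted above: contamination from a source that was never sampled cannot be apportioned.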

Noise Filtering Approaches for Sequencing Data

The noisyR package implements a comprehensive noise filtering pipeline that assesses variation in signal distribution to achieve optimal information consistency across replicates and samples [12]. This approach:

- Quantifies noise based on correlation of expression across gene subsets or distribution of signal across transcripts

- Outputs sample-specific signal/noise thresholds and filtered expression matrices

- Improves convergence of predictions (differential expression calls, enrichment analyses, gene regulatory network inference) across different analytical approaches [12]

For environmental DNA applications, simple frequency-based filtering—removing less frequent sequences—can significantly improve signal-to-noise ratios. One study demonstrated that retaining only 10-100 of the most frequent sequences generated near-maximal signal-to-noise ratios, partitioning an additional 25% of variance from noise to explanatory factors [13].
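The frequency-based filter described above can be sketched per sample as "keep the n most abundant sequences". The cutoff `n` is a tuning parameter; the 10-100 range reported in the cited study applied to its environmental DNA data and may not transfer to other sample types. The tie-breaking rule is an arbitrary choice made only for determinism.

```python
def keep_top_n(counts, n):
    """Keep the n most frequent sequences in one sample and zero out the rest.

    counts maps sequence ID -> read count. Ties at the cutoff are broken by
    sequence ID, an arbitrary choice made only so the result is deterministic.
    """
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    keep = {seq for seq, c in ranked[:n] if c > 0}
    return {seq: (c if seq in keep else 0) for seq, c in counts.items()}


sample = {"seq_a": 50, "seq_b": 30, "seq_c": 5, "seq_d": 1}
print(keep_top_n(sample, 2))
```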

Table 3: Performance Comparison of Computational Decontamination Tools

| Tool | Methodology | Input Requirements | Performance Metrics | Limitations |
|---|---|---|---|---|
| Decontam | Prevalence and frequency-based contamination identification | Negative controls or DNA concentration data | Removes 70-90% of contaminants without removing expected sequences [10] | Requires appropriate controls for optimal performance |
| Squeegee | De novo detection via cross-sample contaminant sharing | Multiple samples from distinct niches | Weighted precision: 0.856; Recall: 0.958 [9] | May miss contaminants unique to single sample types |
| SourceTracker | Bayesian source proportion estimation | Defined contaminant source samples | Removes >98% of contaminants with well-defined sources [10] | Performance poor with undefined sources (<3% removal) [10] |
| noisyR | Correlation-based noise thresholding | Count matrices or alignment data | Improves consistency in downstream analyses [12] | May require optimization for specific data types |

Validation and Quality Assessment Framework

Establishing Biomass Thresholds for Reliable Detection

Determining the minimum bacterial biomass required for reproducible results is essential for validating findings in low-biomass studies. Experimental evidence suggests that samples containing fewer than 10^4 copies of the 16S rRNA gene per sample transition from producing reproducible microbial sequences to ones dominated by stochastic noise [11]. Researchers should:

- Quantify bacterial load using targeted qPCR or droplet digital PCR prior to sequencing

- Establish sample-specific minimum biomass thresholds based on mock community dilution series

- Cautiously interpret results from samples falling below empirically determined detection limits
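The threshold-setting steps above can be sketched as two small functions: derive a detection limit from a mock community dilution series, then flag samples whose measured bacterial load falls below it. The pass criterion (fraction of reads assigned to the expected mock taxa, with a 0.9 cutoff) is a hypothetical choice, and the dilution values are illustrative, not data from the cited study.

```python
def detection_limit(dilution_series, min_expected_fraction=0.9):
    """Lowest input (16S copies) at which a mock community is still recovered.

    dilution_series: (input_copies, fraction of reads assigned to expected mock taxa).
    Dilutions are scanned from highest to lowest input and the scan stops at the
    first failure, so the limit is the end of the contiguous passing run.
    Returns None if even the most concentrated dilution fails.
    """
    limit = None
    for copies, fraction in sorted(dilution_series, reverse=True):
        if fraction < min_expected_fraction:
            break
        limit = copies
    return limit


def flag_below_limit(sample_copies, limit):
    """IDs of samples whose measured load (qPCR/ddPCR copies) is below the limit."""
    if limit is None:
        return sorted(sample_copies)  # no validated range: every sample is suspect
    return sorted(s for s, copies in sample_copies.items() if copies < limit)


# Hypothetical dilution series and sample loads, for illustration only.
series = [(1e6, 0.99), (1e5, 0.97), (1e4, 0.92), (1e3, 0.55), (1e2, 0.20)]
limit = detection_limit(series)
print(limit, flag_below_limit({"A": 5e4, "B": 2e3, "C": 1.2e4}, limit))
```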

Technical Replication for Noise Discrimination

Incorporating technical replicates provides a powerful approach for distinguishing authentic signal from stochastic noise. The consistency between replicates serves as a reliability metric:

- Process multiple aliquots of low-biomass samples through entire workflow (extraction to sequencing)

- Calculate Bray-Curtis dissimilarity between technical replicates

- Treat inconsistently detected taxa (those appearing in only a subset of replicates) as potential noise rather than authentic signal [11]
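The replicate checks above can be sketched with a Bray-Curtis dissimilarity on raw counts and an intersection of detected taxa across replicates. The function names are illustrative. Rarefying or otherwise normalizing depth before computing Bray-Curtis is a common preprocessing choice that this sketch leaves out.

```python
def bray_curtis(x, y):
    """Bray-Curtis dissimilarity of two count vectors over the same taxa.

    0 means identical profiles; 1 means no shared taxa.
    """
    if len(x) != len(y):
        raise ValueError("vectors must cover the same taxa")
    total = sum(x) + sum(y)
    if total == 0:
        raise ValueError("both vectors are empty")
    return sum(abs(a - b) for a, b in zip(x, y)) / total


def consistent_taxa(replicates):
    """Taxa detected (count > 0) in every technical replicate of one sample.

    replicates: non-empty list of dicts mapping taxon -> count.
    """
    detected = [{t for t, c in rep.items() if c > 0} for rep in replicates]
    return sorted(set.intersection(*detected))


reps = [{"A": 10, "B": 3, "C": 0}, {"A": 8, "B": 0, "D": 2}, {"A": 12, "B": 1}]
taxa = sorted(set().union(*reps))
vectors = [[r.get(t, 0) for t in taxa] for r in reps]
print(consistent_taxa(reps), bray_curtis(vectors[0], vectors[1]))
```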

Positive Control Validation

Utilizing dilution series of mock microbial communities with known composition enables:

- Quantification of detection limits for specific experimental protocols

- Assessment of contamination levels and sources

- Evaluation of computational decontamination effectiveness

- Optimization of sequencing depth requirements [10]

The challenges posed by contamination and host DNA in low-biomass microbiome research are substantial but not insurmountable. Through implementation of rigorous contamination-aware protocols, appropriate experimental controls, and validated computational decontamination approaches, researchers can significantly enhance the reliability of their findings. The field must move beyond simple relative abundance analyses that are particularly vulnerable to distortion in low-biomass contexts and adopt the comprehensive quality assessment framework presented here. Only through such methodological rigor can we advance our understanding of authentic low-biomass microbial communities and their roles in human health, environmental processes, and therapeutic development.

The once-established dogma of sterility in certain human tissues has been fundamentally challenged by next-generation sequencing (NGS) technologies, giving rise to two of the most contentious areas in contemporary microbiome science: the placental and blood microbiomes. For more than a century, the prenatal environment was considered sterile, and blood was similarly viewed as a microbially barren environment except during pathological states like sepsis [14] [15]. However, beginning in 2014 with a landmark study claiming a "unique placental microbiome," this paradigm was directly challenged, suggesting that microbes might routinely inhabit these environments [16]. These claims have gathered substantial interest from academics, high-impact journals, and funding agencies due to their profound implications for understanding human development, immunity, and disease etiology [15] [14].

The central controversy in these fields stems from the inherent methodological challenges of studying low-biomass microbial communities, where the genetic signal from potential resident microbes is dwarfed by background noise from contamination and host DNA. This review examines the placental and blood microbiome debates as critical case studies, framing them within the broader context of analytical limitations—specifically the pitfalls of relative abundance analysis—that can lead to spurious biological conclusions. As we will demonstrate, these controversies highlight an urgent need for rigorous, standardized methodologies and a cautious interpretation of data when investigating environments with minimal microbial presence.

The Placental Microbiome: A Controversy in Prenatal Origins

The Emergence and Rejection of a Paradigm

The concept of a placental microbiome was first proposed by Aagaard et al. in 2014, who identified multiple bacterial phyla in placental tissue, including Firmicutes, Tenericutes, Proteobacteria, Bacteroidetes, and Fusobacteria, and suggested this community was unique and potentially functional [17] [16]. This study, and others that followed, utilized 16S rRNA gene sequencing and shotgun metagenomics to detect bacterial DNA in placental samples, arguing against the long-held belief in uterine sterility [18]. Proponents suggested this microbiome could originate from the maternal oral cavity or vaginal tract and translocate via the bloodstream to the placenta, potentially influencing fetal development and pregnancy outcomes [18].

However, this nascent paradigm faced immediate skepticism. Critics pointed to the existence of germ-free animal models as compelling evidence against a consistent prenatal microbiota. "The development of a germ-free line depends on the founding members being born by Cesarean-section, and continued in xenobiosis to breed. Based on all conventional ascertainment methods such animals, and the line of their progeny, are sterile," noted Martin Blaser, emphasizing that if a resident microbiota existed, it would likely propagate across generations [14]. Subsequent, more rigorously controlled studies failed to support the initial findings. A comprehensive study from the University of Cambridge analyzed over 500 placental samples and found that after implementing stringent controls, the signals of bacterial DNA were either contaminants or represented rare pathogens like Streptococcus agalactiae, a known cause of neonatal sepsis [16]. The authors concluded that "there is no functional microbiota in the placenta" [16].

The primary issue confounding placental microbiome research is contamination at multiple stages of sample processing. Low-biomass samples are exceptionally vulnerable to the "kitome"—traces of microbial DNA present in DNA extraction kits and other laboratory reagents [16]. Common environmental bacteria with no known capacity to infect human cells, such as Bradyrhizobium (a plant root symbiont), have been frequently identified in placental microbiome studies, a clear indicator of contaminating DNA [16]. As summarized by Vincent Young, "simply demonstrating that you can detect microbes... by culture-independent methods... isn't enough. You need to show that this potential community is stable over time, reproducing in situ and is metabolically active" [14].

Table 1: Summary of Key Contradictory Findings in the Placental Microbiome Debate

| Supporting a Placental Microbiome | Refuting a Placental Microbiome |
|---|---|
| Aagaard et al. (2014) identified diverse bacterial phyla in 320 placental samples [17] [16]. | De Goffau et al. (2019) found most bacterial DNA in placental samples likely originated from contaminants [17]. |
| Some studies report microbial communities differing in pregnancies complicated by preterm birth or preeclampsia [18]. | The Cambridge study (2019) of 500+ placentas found no consistent microbial community after controlling for contamination [16]. |
| Claims of bacterial visualization via fluorescent in situ hybridization (FISH) [14]. | Cultivation efforts have largely failed to grow microbes from healthy placentas, contradicting DNA-based findings [16]. |
| Hypothesized oral or vaginal origins for placental microbes [18]. | Germ-free mammals can be derived and maintained, proving sterility of the prenatal environment is possible [14]. |

The Blood Microbiome: From Core Community to Transient Translocation

Evolution of the Blood Microbiome Concept

Conventional medical science has long held that blood is sterile outside of explicit infectious states. Recent culture-independent studies initially challenged this, reporting the presence of bacterial 16S rRNA in a high percentage of healthy individuals' blood and conceptualizing a "blood microbiome" potentially vital for wellbeing [15]. Early, smaller-scale sequencing studies suggested the presence of a common set of microbes, such as Staphylococcus spp. and Cutibacterium acnes, and linked dysbiosis of this purported community to conditions like myocardial infarction, cirrhosis, and inflammatory diseases [15] [19].

This concept has been radically reshaped by larger, more methodologically rigorous studies. A landmark 2023 population study in Nature Microbiology analyzed blood sequencing data from 9,770 healthy individuals, applying stringent decontamination filters to account for batch-specific contaminants and reagent-derived DNA [20]. The findings starkly contradicted the idea of a core blood microbiome: no microbial species were detected in 84% of individuals, and the remaining 16% had a median of only one microbial species per person [20]. No species were shared by more than 5% of the cohort, and no patterns of microbial co-occurrence were observed [20].

A New Model: Sporadic Translocation

The current evidence now supports a model of transient and sporadic translocation of commensal microbes from established body sites like the gut, mouth, and urogenital tract into the bloodstream, rather than a resident microbial community [15] [20]. The 117 microbial species identified in the large-scale study were primarily human commensals, but they were so infrequent and inconsistent that they cannot be considered a core "microbiome" endogenous to blood [20]. This distinction is critical: the presence of microbial genetic material does not equate to a resident, functioning community. As David Relman emphasized in the context of the placenta, "the presence of DNA is quite distinct from 'bacterial colonization' and very different from the presence of a true 'microbiota'" [14].

Table 2: Key Analytical Challenges in Low-Biomass Microbiome Studies (e.g., Blood and Placenta)

| Challenge | Description | Impact on Data Interpretation |
|---|---|---|
| External Contamination | Introduction of microbial DNA from reagents ("kitome"), collection kits, or the laboratory environment [1] [20]. | Can be misinterpreted as authentic signal, especially when contaminant profiles are confounded with sample groups [1]. |
| Host DNA Misclassification | In metagenomic studies, host DNA can be misidentified as microbial due to limitations in reference databases [1]. | Generates noise and false positives; can create artifactual signals if confounded with a phenotype [1]. |
| Well-to-Well Leakage | Cross-contamination between samples processed concurrently on multi-well plates [1]. | Can compromise the inferred composition of every sample and violate assumptions of decontamination tools [1]. |
| Batch Effects & Processing Bias | Technical variations between different laboratories, personnel, reagent lots, or protocols [1]. | Can distort inferred microbial signals and create false associations if batches are confounded with a phenotype of interest [1]. |

The Critical Limitation of Relative Abundance in Low-Biomass Analysis

The controversies surrounding the placental and blood microbiomes are, at their core, a demonstration of the severe limitations of standard relative abundance analysis in low-biomass settings. This widely used metric expresses the abundance of each taxon as a proportion of the total community, a method that becomes highly misleading when the total microbial DNA is minimal and dominated by contaminating sequences.

In a low-biomass sample, a contaminant species introduced from a reagent kit can constitute a large relative proportion of the sequenced reads, creating the illusion of a dominant and potentially significant organism. This artifact is vividly illustrated by the detection of plant-associated bacteria like Bradyrhizobium in human placental and blood samples [16]. In a high-biomass sample like stool, such a contaminant would be a negligible fraction, but in a low-biomass sample, it can appear to be a major community member. This reliance on relative abundance, without an absolute quantification of the microbial load, can lead researchers to identify a "core microbiome" that is, in fact, a "core contaminantome."
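The arithmetic behind this illusion takes only a few lines. The sketch assumes that every extraction receives the same fixed contaminant load (1,000 copies, an illustrative value) and varies only the authentic microbial load.

```python
def contaminant_fraction(true_copies, contaminant_copies):
    """Relative abundance of a fixed contaminant input given the authentic microbial load."""
    return contaminant_copies / (true_copies + contaminant_copies)


# Assumed: the same reagent-derived contaminant load (1,000 copies) in every extraction.
for load in (10**7, 10**5, 10**3):
    print(f"{load:>10,} true copies -> contaminant at {contaminant_fraction(load, 1_000):.1%}")
```

In a stool-like sample with 10 million authentic copies, the contaminant is a trace; at 1,000 authentic copies, the same contaminant accounts for half of all reads, even though its absolute amount has not changed.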

The following diagram illustrates how this reliance on relative data, combined with contamination, leads to flawed conclusions in case-control studies where processing batches are confounded with the experimental groups.

Best Practices and Future Directions for Reliable Research

The Scientist's Toolkit: Essential Reagents and Controls

To overcome the challenges outlined, research in low-biomass environments must adopt a toolkit of rigorous experimental and analytical controls. The following table details essential components for a robust study design.

Table 3: Research Reagent Solutions and Essential Controls for Low-Biomass Microbiome Studies

| Item / Control | Function & Importance |
|---|---|
| Multiple Process Controls | Includes blank extraction controls (no sample), no-template PCR controls, and swabs from collection kits. Critical for profiling the "kitome" and other contaminant sources [1]. |
| Positive Controls with Spike-Ins | Using a known, rare microbe (e.g., Salmonella bongori) spiked into samples validates that the protocol can detect low-abundance species and allows for quantification of background noise [16]. |
| Different DNA Extraction Kits | Processing subsets of samples with different kits helps identify kit-specific contaminants, as these will vary between kits while true biological signals should persist [20] [16]. |
| Robust Bioinformatics Decontamination | Computational pipelines (e.g., Decontam) that use control data to identify and subtract contaminant sequences are essential. Must account for batch-specific contaminants [1] [20]. |
| Host DNA Depletion Kits | Chemical or enzymatic methods to reduce the overwhelming proportion of host DNA in samples, thereby increasing the relative signal of microbial reads for more reliable detection [1]. |

A Rigorous Experimental Workflow

The following diagram outlines a comprehensive workflow that integrates these controls to mitigate the risk of contamination and false discovery, moving from sample collection to validated results.

Moving Beyond Relative Abundance

Future research must transition from purely qualitative, relative descriptions to quantitative and functional assessments. This includes:

- Absolute Quantification: Employing methods like 16S rRNA gene qPCR or droplet digital PCR (ddPCR) to determine the total microbial load in a sample, providing essential context for interpreting sequencing data [20].

- Viability and Functional Assessment: Recognizing that DNA can persist from dead microbes. Techniques like RNA sequencing (to detect transcriptional activity), propidium monoazide (PMA) treatment (to exclude DNA from dead cells), and culture assays are critical to confirm the presence of living, functionally active communities [15] [14].

- Standardization and Reproducibility: The field requires community-wide adoption of standardized protocols for sample collection, processing, and analysis, along with full transparency and reporting of all controls and batch information [1].

The contentious debates surrounding the placental and blood microbiomes serve as critical cautionary tales for the entire field of microbiome science. They underscore a fundamental principle: in low-biomass environments, the signal of life must be meticulously disentangled from the noise of contamination. The over-reliance on relative abundance data from NGS, without robust controls and absolute quantification, has been a primary driver of these controversies, leading to claims of core microbiomes that later evidence attributed to sporadic translocation or outright contamination.

The lessons learned are invaluable. They compel researchers to prioritize rigorous experimental design over convenience, to embrace rather than ignore the issue of contamination, and to demand a higher standard of evidence—including viability, metabolic activity, and reproducibility—before accepting the existence of a novel microbial niche. As the field continues to explore other low-biomass environments like the brain, tumors, and lungs, the methodological framework refined through these debates will be essential for distinguishing true biological discovery from analytical artifact.

From Theory to Practice: Methodological Frameworks for Robust Low-Biomass Analysis

In microbiome and molecular biology research, data interpretation has long relied on relative abundance profiles, an approach that obscures true biological dynamics and can lead to misleading conclusions. This limitation is particularly acute in the study of low-biomass samples, where contaminating DNA can disproportionately influence results. This technical guide describes the transition to absolute quantification methods, detailing the principles, protocols, and applications of spike-in standards, quantitative PCR (qPCR), and flow cytometry. By quantifying absolute cellular or molecular counts, these methods enable more accurate cross-sample comparisons, reveal true microbial population dynamics, and enhance the rigor of biomarker validation, addressing fundamental weaknesses inherent in relative abundance analysis.

The Critical Limitations of Relative Abundance in Low-Biomass Research

Analyses based on relative abundance, which express the quantity of a target entity (e.g., a bacterial taxon) as a proportion of the total detected entities in a sample, present significant interpretive challenges. These limitations become critically pronounced in low-biomass environments where the target signal is minimal.

- Compositional Data Fallacy: Relative data are compositional: an increase in the proportion of one component necessitates a decrease in the others. This creates a "balancing act" in which the absolute abundance of a microbe remains unchanged, yet its relative abundance appears to increase simply because other microbes have decreased. Research demonstrates that this can lead to false conclusions: in soil microbiome studies, 33.87% of genera showed opposite trends (decreased relative abundance but increased absolute abundance) when analyzed using absolute methods [21].

- Masked True Biological Dynamics: Relative abundance fails to capture changes in the total microbial load. Starting from equal abundances of taxa A and B, a treatment that doubles the population of A while leaving B unchanged yields the same relative profile (67% A, 33% B) as a treatment that halves the population of B while leaving A unchanged. The biological implications of these two scenarios are fundamentally different (total load rises by 50% in the first and falls by 25% in the second), yet they are indistinguishable through relative analysis alone [21].

- Vulnerability to Contamination: In low-biomass samples (e.g., tissue, blood, urine), even minute amounts of contaminating DNA from reagents, kits, or the laboratory environment can constitute a substantial proportion of the total sequenced DNA [1] [2]. This contamination can drastically skew relative profiles, leading to the misidentification of contaminants as authentic signals [22] [23]. The risk of well-to-well leakage or "splashome" effects further compounds this problem [1] [2].
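The "masked dynamics" example above can be checked directly. The sketch assumes equal starting loads of 10^6 cells per gram for both taxa (an illustrative value) and shows that the two treatments give identical relative profiles while their total loads differ twofold.

```python
def relative(abundances):
    """Convert absolute abundances to proportions of the total."""
    total = sum(abundances.values())
    return {taxon: value / total for taxon, value in abundances.items()}


baseline = {"A": 1e6, "B": 1e6}        # assumed equal starting loads (cells/g)
treatment_1 = {"A": 2e6, "B": 1e6}     # A doubles, B unchanged
treatment_2 = {"A": 1e6, "B": 0.5e6}   # A unchanged, B halves

print(relative(treatment_1) == relative(treatment_2))       # same relative profile
print(sum(treatment_1.values()), sum(treatment_2.values()))  # different total loads
```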

Core Methodologies for Absolute Quantification

Spike-In Standards

Spike-in methodologies involve adding a known quantity of an internal reference material (non-native to the sample) prior to nucleic acid extraction. This allows for the calibration of sequencing data to determine the absolute number of cells or gene copies in the original sample.

- Experimental Protocol:

- Standard Selection: Choose an appropriate internal standard. For microbial community sequencing, this is typically a known number of cells from a genetically distinct microbe (e.g., Pseudomonas aureofaciens [24]) or synthetic DNA sequences.

- Quantification of Standard: Precisely quantify the standard suspension using a high-accuracy method like flow cytometry or a Coulter counter [25] [24].

- Sample Processing: Spike a known volume of the standard into the sample before DNA extraction. This is critical, as it controls for losses and biases throughout the entire workflow [21] [24].

- DNA Extraction and Sequencing: Proceed with standard library preparation and sequencing.

- Computational Calibration: The number of sequence reads assigned to the spike-in is used as a scaling factor. The absolute abundance of a target taxon is calculated as:

Absolute abundance = (Target Taxon Reads / Spike-in Reads) × Known Spike-in Cells Added [24].

- Key Applications: This method is particularly valuable for metagenomic and metatranscriptomic studies where PCR amplification bias is a concern, allowing for the calculation of genome copies per sample and subsequent estimation of growth and decay rates of microbial populations [24].
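The calibration formula above translates directly into code. This sketch assumes that spike-in and target cells yield reads with equal efficiency (similar extraction recovery and marker copy number), which real data may violate. The optional division by sample mass or volume is an addition for reporting per gram or per milliliter and is not part of the cited formula.

```python
def absolute_from_spike_in(target_reads, spike_in_reads, spike_in_cells_added,
                           sample_amount=None):
    """(target reads / spike-in reads) * spike-in cells added, optionally per unit sample.

    Assumes equal per-cell read yield for spike-in and target organisms.
    sample_amount (e.g. grams or mL) is an optional normalization, not part of the
    core formula.
    """
    if spike_in_reads <= 0:
        raise ValueError("spike-in not detected; absolute abundance cannot be calibrated")
    cells = target_reads / spike_in_reads * spike_in_cells_added
    return cells if sample_amount is None else cells / sample_amount


# Hypothetical run: 5,000 target reads, 1,000 spike-in reads, 1e6 spike-in cells added.
print(absolute_from_spike_in(5_000, 1_000, 1e6))
print(absolute_from_spike_in(5_000, 1_000, 1e6, sample_amount=0.25))  # per gram of a 0.25 g sample
```

Raising an error when the spike-in is absent is deliberate: without it, there is no scaling factor, and silently returning a relative value would reintroduce the problem the method is meant to solve.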

Quantitative PCR (qPCR)

qPCR estimates the absolute quantity of a target gene (e.g., the 16S rRNA gene for total bacterial load) in a sample by comparing its amplification to a standard curve of known copy numbers.

- Experimental Protocol:

- Standard Curve Preparation: Create a serial dilution of a plasmid or DNA fragment containing the target sequence, with known copy numbers [21].

- DNA Extraction: Extract DNA from all samples in a single batch, or in as few balanced batches as possible, to minimize batch effects.

- Amplification Reaction: Run the qPCR assay with primers specific to the target gene (e.g., universal 16S primers for total bacteria or taxon-specific primers) for both samples and the standard curve.

- Data Analysis: Each sample's cycle threshold (Ct) value is interpolated onto the standard curve to determine the starting quantity of the target gene. Results are typically reported as 16S rRNA gene copies per gram or milliliter of sample [21] [26].

- Considerations: A key limitation is that 16S rRNA gene copy number varies between bacterial species, which can lead to over- or underestimation of cell counts. Droplet digital PCR (ddPCR) is an alternative that does not require a standard curve and offers greater precision for low-abundance targets [21].
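The sketch below covers two calculations. The first is the standard-curve interpolation described above. The second is the Poisson correction that allows digital PCR to work without a curve. The function names and the synthetic dilution series are hypothetical. The formulas themselves are the standard ones: amplification efficiency E = 10^(−1/slope) − 1, and mean copies per partition λ = −ln(1 − p), where p is the fraction of positive partitions. Converting λ into a concentration also requires the instrument-specific partition volume, which this sketch does not model.

```python
import math
from typing import Sequence, Tuple


def fit_standard_curve(copies: Sequence[float], ct: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of Ct = slope * log10(copies) + intercept."""
    if len(copies) != len(ct) or len(copies) < 2:
        raise ValueError("need at least two paired standards")
    x = [math.log10(c) for c in copies]
    mean_x = sum(x) / len(x)
    mean_y = sum(ct) / len(ct)
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("standards must span more than one concentration")
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, ct))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def amplification_efficiency(slope: float) -> float:
    """E = 10^(-1/slope) - 1; a slope near -3.32 means roughly 100% efficiency."""
    return 10 ** (-1.0 / slope) - 1.0


def copies_from_ct(sample_ct: float, slope: float, intercept: float) -> float:
    """Interpolate a sample's starting copy number from its Ct value."""
    return 10 ** ((sample_ct - intercept) / slope)


def ddpcr_mean_copies_per_partition(positive: int, total: int) -> float:
    """Poisson-corrected mean copies per partition: lambda = -ln(1 - p).

    If every partition is positive the estimate is undefined, so the
    template must be diluted and re-run.
    """
    if total <= 0 or not 0 <= positive < total:
        raise ValueError("need total > 0 and 0 <= positive < total")
    return -math.log(1.0 - positive / total)


# Synthetic ten-fold dilution series with a slope of -3.32 and intercept of 40.
standards = [1e2, 1e3, 1e4, 1e5, 1e6]
cts = [40.0 - 3.32 * math.log10(c) for c in standards]
slope, intercept = fit_standard_curve(standards, cts)
print(round(amplification_efficiency(slope), 3), round(copies_from_ct(26.72, slope, intercept)))
```

The saturation check in the ddPCR helper mirrors the limitation noted in the comparison table. When all partitions are positive, λ cannot be estimated, and high-concentration templates must first be diluted.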

Flow Cytometry

Flow cytometry provides a direct, culture-independent method for enumerating total microbial cells in a suspension, based on their light-scattering and fluorescence properties.

- Experimental Protocol:

- Sample Homogenization: Suspend and homogenize the sample (e.g., fecal material in a suitable buffer) to create a uniform suspension [21] [24].

- Staining (Optional): Add a fluorescent dye that binds to nucleic acids (e.g., SYBR Green I) to distinguish cells from abiotic particles. Viability dyes can be used to differentiate live/dead cells [21].

- Analysis and Gating: Pass the sample through the flow cytometer. The forward and side scatter signals are used to identify particles of bacterial size. Fluorescence triggering is applied to specifically count nucleic acid-containing events. A defined gating strategy is crucial to exclude background noise and debris [21] [25].

- Quantification: The instrument provides a direct count of cells per unit volume, which can be extrapolated to the original sample mass or volume [21] [24].

- Validation: Studies have validated flow cytometry counts against other methods and found strong correlation, although absolute counts can differ from spike-in-based estimates, generally by less than one order of magnitude [24].
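The extrapolation from instrument counts to cells per gram is simple arithmetic, but it is easy to drop a dilution step. The sketch below is hypothetical, including its function and parameter names, and rests on several stated assumptions. It assumes every gated event is one cell and that the original material was suspended in a known buffer volume and then diluted further before acquisition. Its optional background subtraction assumes a buffer-only blank acquired over the same volume.

```python
def cells_per_gram(
    gated_events: int,
    acquired_volume_ul: float,
    dilution_factor: float,
    suspension_volume_ml: float,
    sample_mass_g: float,
    blank_events: int = 0,
) -> float:
    """Back-calculate cells per gram of original sample from a flow count.

    Assumes every gated event is one cell, the blank (buffer-only) control
    was acquired over the same volume, and sample_mass_g of material was
    suspended in suspension_volume_ml before a further dilution_factor-fold
    dilution for acquisition.
    """
    if acquired_volume_ul <= 0 or sample_mass_g <= 0:
        raise ValueError("acquired volume and sample mass must be positive")
    net_events = max(gated_events - blank_events, 0)  # background-subtracted
    cells_per_ul = net_events / acquired_volume_ul * dilution_factor
    cells_in_suspension = cells_per_ul * 1000.0 * suspension_volume_ml  # 1 mL = 1000 uL
    return cells_in_suspension / sample_mass_g


# Illustrative values: 20,000 gated events in 50 uL of a 100-fold dilution of
# 1 g of material suspended in 10 mL of buffer.
print(f"{cells_per_gram(20_000, 50.0, 100.0, 10.0, 1.0):.2e} cells/g")
```

In low-biomass samples the blank subtraction can matter as much as the dilution arithmetic, because background events may approach the sample count.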

Table 1: Comparison of Absolute Quantification Methods

| Method | Major Applications | Key Advantages | Key Limitations |
|---|---|---|---|
| Spike-In Standards [21] [24] | Soil, sludge, feces, metagenomics | Controls for biases in DNA extraction and sequencing; easy incorporation into HTS workflows. | Choice of internal reference and spiking amount is critical for accuracy. |
| qPCR [21] | Feces, clinical samples, soil, air | High sensitivity; cost-effective; directly quantifies specific taxa. | Requires a standard curve; PCR biases; 16S copy number variation. |
| Flow Cytometry [21] [24] | Feces, aquatic, soil | Rapid; single-cell enumeration; can differentiate live/dead cells. | Not ideal for complex, heterogeneous samples; requires a gating strategy. |
| ddPCR [21] | Clinical samples, air, feces | No standard curve needed; high precision for low-concentration targets. | Requires dilution for high-concentration templates; can be costly. |

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Reagent Solutions for Absolute Quantification

| Item | Function | Example & Notes |
|---|---|---|
| Cellular Spike-ins [24] | Provides an internal count standard for metagenomic sequencing. | Genetically distinct bacteria (e.g., P. aureofaciens); must be accurately counted beforehand, e.g., by flow cytometry. |
| Fluorescent Dyes [21] [25] | Label cells to enable detection, viability assessment, and tracking in flow cytometry. | SYBR Green I (nucleic acid stain), propidium iodide (enters membrane-compromised, i.e., dead, cells), PKH26 (membrane linker dye, for cell tracking). |
| DNA Decontamination Solutions [2] | Removes contaminating DNA from surfaces and equipment prior to sample handling. | Sodium hypochlorite (bleach), UV-C light, hydrogen peroxide, commercial DNA removal kits. |
| Process Controls [1] [2] | Identifies contamination introduced during sampling and processing. | Blank extraction controls (reagents only), no-template PCR controls, swabs of sampling environment. |
| Heavy-labeled Peptides [27] | Act as internal standards for absolute quantification of proteins by LC-MS. | AQUA peptides, IGNIS prime peptides; used with a universal calibration curve. |

Visualizing the Spike-in Workflow for Absolute Metagenomic Quantification

The following diagram illustrates the integrated workflow of using cellular spike-ins for absolute quantification in metagenomic studies, highlighting how raw sequencing data is transformed into absolute abundance data.

The shift from relative abundance to absolute quantification represents a paradigm change essential for robust scientific inference, especially in low-biomass research. Techniques like spike-in standards, qPCR, and flow cytometry move beyond proportional data to deliver concrete, quantitative measurements of cellular abundance. While each method has its specific strengths and considerations, their collective adoption addresses the core compositional fallacy of relative data, mitigates the impact of contamination, and reveals true biological dynamics that would otherwise remain hidden. As the field moves toward more complex questions regarding microbial dynamics, host-microbe interactions, and clinical biomarker validation, the integration of absolute quantification will become not just best practice, but a fundamental requirement for generating reliable and interpretable data.

The analysis of low-biomass microbial communities, derived from environments such as human tissues, cleanrooms, and ancient specimens, presents extraordinary challenges for bioinformatics research. The fundamental principle of "garbage in, garbage out" is particularly salient in this context, as the quality of analytical outcomes is inextricably linked to input data quality [28]. In low-biomass studies, where microbial signals are faint, contamination from external sources or misclassified host DNA can constitute a substantial proportion of sequenced material, potentially leading to erroneous biological conclusions [1]. Several high-profile controversies have emerged from such studies, including retracted findings regarding tumor microbiomes and previously claimed placental microbiomes that subsequent research revealed were driven largely by contamination [1].

The central challenge lies in the inherent limitations of relative abundance analysis for low-biomass samples. Because microbiome data are compositional (constrained to sum to 1), an increase in the relative abundance of one taxon necessarily lowers the combined relative abundance of all other taxa, even when their absolute abundances are unchanged [29]. This property becomes particularly problematic when contamination is present: contaminant DNA distorts all relative proportions, potentially creating artificial correlations or masking true biological signals [29]. The problem is compounded because many bioinformatics tools struggle to distinguish genuine low-abundance taxa from contaminants, especially when the contaminant organisms or their close relatives are absent from reference databases [30] [31]. This review synthesizes current methodologies, tools, and experimental frameworks designed to address these challenges, providing researchers with a comprehensive resource for implementing contamination-aware bioinformatics pipelines.
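A toy calculation shows this distortion. The taxon names and loads below are hypothetical. The genuine taxa are left untouched and a load of contaminant DNA equal to their combined total is added. Both genuine taxa then fall to half their former relative abundance, while the ratio between them does not change.

```python
from typing import Dict


def close_to_proportions(loads: Dict[str, float]) -> Dict[str, float]:
    """Rescale absolute loads so they sum to 1, as sequencing output does."""
    total = sum(loads.values())
    if total <= 0:
        raise ValueError("total load must be positive")
    return {taxon: value / total for taxon, value in loads.items()}


# Hypothetical absolute loads (arbitrary units) of two genuine taxa.
genuine = {"Taxon_A": 300.0, "Taxon_B": 100.0}
# The same sample after an equal load of contaminant DNA is introduced.
contaminated = {**genuine, "Contaminant": 400.0}

before = close_to_proportions(genuine)
after = close_to_proportions(contaminated)
for taxon in genuine:
    print(f"{taxon}: {before[taxon]:.3f} -> {after[taxon]:.3f}")
print("A/B ratio:", before["Taxon_A"] / before["Taxon_B"], after["Taxon_A"] / after["Taxon_B"])
```

The relative data alone cannot distinguish this halving from a genuine decline of both taxa. Telling the two apart requires an independent measure of absolute microbial load.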

Experimental Design: The First Line of Defense Against Contamination

Fundamental Challenges in Low-Biomass Studies

Low-biomass microbiome research must contend with multiple interconnected challenges that can compromise data integrity. External contamination represents one of the most pervasive issues, where DNA from sources other than the target environment—including reagents, sampling kits, or laboratory personnel—is introduced during sample collection or processing [1] [32]. This "kitome" contamination can dominate the signal in ultra-low biomass samples [32]. Host DNA misclassification occurs when host DNA is incorrectly identified as microbial in origin, particularly problematic in metagenomic studies of human tissues where host DNA may comprise the vast majority of sequenced material [1]. Well-to-well leakage (or "cross-contamination") describes the transfer of DNA between adjacent samples during processing, while batch effects and processing biases introduce non-biological variation that can be confounded with experimental conditions [1].

Perhaps most critically, the compositional nature of microbiome data means that measurements represent relative rather than absolute abundances [29]. In low-biomass contexts, this limitation is acute because the introduction of contaminant DNA or variation in host DNA depletion efficiency alters the compositional structure, creating spurious correlations that can be misinterpreted as biological findings [29]. Research has demonstrated that sample biomass itself represents a primary limiting factor, with bacterial densities below 10^6 cells resulting in loss of sample identity regardless of the protocol used [33].

Foundational Principles for Robust Study Design

Strategic experimental design provides the most effective protection against contamination artifacts. Avoiding batch confounding is paramount—experimental batches should contain balanced representations of all experimental conditions to ensure that technical variability is not misinterpreted as biological signal [1]. Comprehensive process controls are equally essential, including blank extraction controls, no-template PCR controls, and sampling controls that account for potential contamination at each processing stage [1] [32]. The collection of multiple control types is recommended, as different controls capture different contamination sources; for instance, empty collection kits reveal contamination introduced during sampling, while extraction blanks identify contamination from reagents [1].

Sample randomization throughout processing helps distribute technical artifacts evenly across experimental groups. For studies where complete deconfounding is impossible, analyzing batches separately and assessing result generalizability across them provides a more robust approach than combining all data [1]. Additionally, meticulous documentation of all processing steps—including reagent lots, personnel, and equipment used—creates an audit trail that facilitates identification of contamination sources when they occur [28].
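Balanced, randomized allocation can be sketched as follows. The helper, its parameters and the `BLANK_` naming scheme are hypothetical. Two extraction blanks per batch is an assumed default, not a fixed rule. The function deals each condition's samples round-robin across batches, so no batch is dominated by one condition. It then adds blanks to every batch and shuffles the processing order within each batch.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def assign_batches(
    samples: Sequence[Tuple[str, str]],
    n_batches: int,
    blanks_per_batch: int = 2,
    seed: int = 0,
) -> Dict[int, List[str]]:
    """Spread each condition evenly across batches, add extraction blanks,
    and randomize processing order within every batch.

    samples: (sample_id, condition) pairs.
    """
    if n_batches < 1:
        raise ValueError("need at least one batch")
    rng = random.Random(seed)
    by_condition: Dict[str, List[str]] = defaultdict(list)
    for sample_id, condition in samples:
        by_condition[condition].append(sample_id)

    batches: Dict[int, List[str]] = {b: [] for b in range(n_batches)}
    offset = 0
    for condition in sorted(by_condition):
        ids = by_condition[condition]
        rng.shuffle(ids)
        # Deal round-robin; carrying the offset over stops leftover samples
        # of every condition from piling into the same batch.
        for i, sample_id in enumerate(ids):
            batches[(offset + i) % n_batches].append(sample_id)
        offset = (offset + len(ids)) % n_batches

    for b, members in batches.items():
        members.extend(f"BLANK_{b}_{k}" for k in range(blanks_per_batch))
        rng.shuffle(members)  # randomize position of samples and blanks
    return batches


cohort = [(f"case{i}", "case") for i in range(6)] + [(f"ctrl{i}", "control") for i in range(6)]
for batch, members in assign_batches(cohort, n_batches=3, seed=42).items():
    print(batch, members)
```

A fixed seed makes the allocation reproducible, so it can be recorded in the processing audit trail alongside reagent lots and personnel.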

Table 1: Essential Process Controls for Low-Biomass Studies

| Control Type | Description | Purpose | Recommended Replication |
|---|---|---|---|
| Blank Extraction Control | Reagents processed without sample | Identifies contamination from DNA extraction kits | 2+ per extraction batch |
| No-Template PCR Control | PCR reaction without DNA template | Detects contamination in amplification reagents | 1-2 per PCR plate |
| Kit/Reagent Blank | Sampling reagents without contact with sample | Reveals "kitome" contamination | Varies by reagent lot |
| Negative Sampling Control | Sterile material from sampling environment | Identifies environmental contamination during sampling | 2+ per sampling session |
| Positive Control | Mock community with known composition | Assesses technical sensitivity and bias | 1-2 per sequencing run |

Computational Decontamination: Tools and Algorithms

Taxonomy of Decontamination Approaches

Computational decontamination methods can be broadly categorized by their underlying algorithms and the type of data they process. Similarity-based tools leverage sequence alignment or homology searching to classify individual sequences or contigs taxonomically, then flag those inconsistent with the expected taxonomic profile. Composition-based methods utilize genomic features such as k-mer frequencies, GC content, or codon usage to identify foreign sequences. Control-based approaches explicitly model contaminants using negative controls processed alongside experimental samples. Hybrid methods combine multiple strategies to improve classification accuracy.
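The composition-based idea can be illustrated with a deliberately crude, hypothetical sketch that uses GC content alone. It flags contigs whose GC fraction lies far from the assembly median, using a median-absolute-deviation cut-off. The function names, the cut-off of 3 and the toy contigs are assumptions. Real tools use richer features such as k-mer profiles and usually combine them with similarity searches. GC alone misses contaminants whose GC resembles the host's, and it can falsely flag genuine regions of atypical composition.

```python
import math
import statistics
from typing import Dict, List


def gc_content(seq: str) -> float:
    """GC fraction among unambiguous A/C/G/T bases (NaN if there are none)."""
    seq = seq.upper()
    acgt = sum(seq.count(base) for base in "ACGT")
    if acgt == 0:
        return math.nan
    return (seq.count("G") + seq.count("C")) / acgt


def flag_gc_outliers(contigs: Dict[str, str], cutoff: float = 3.0) -> List[str]:
    """Names of contigs whose GC lies more than `cutoff` robust z-scores
    (median / scaled MAD) from the median of all contigs."""
    gc = {name: gc_content(seq) for name, seq in contigs.items()}
    values = [v for v in gc.values() if not math.isnan(v)]
    if len(values) < 3:
        return []  # too few contigs for a meaningful baseline
    med = statistics.median(values)
    mad = statistics.median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread to scale against
    scale = 1.4826 * mad  # MAD -> standard-deviation units for normal data
    return sorted(
        name for name, v in gc.items()
        if not math.isnan(v) and abs(v - med) / scale > cutoff
    )


# Toy 100-bp contigs; contig_6 has a very different base composition.
assembly = {
    "contig_1": "GC" * 25 + "AT" * 25,
    "contig_2": "GC" * 26 + "AT" * 24,
    "contig_3": "GC" * 24 + "AT" * 26,
    "contig_4": "G" * 51 + "A" * 49,
    "contig_5": "GC" * 25 + "TA" * 25,
    "contig_6": "G" * 90 + "T" * 10,
}
print(flag_gc_outliers(assembly))
```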

Table 2: Computational Decontamination Tools and Their Applications

| Tool | Algorithm Type | Input Data | Strengths | Limitations |
|---|---|---|---|---|
| ContScout [30] | Similarity-based + gene position | Annotated genomes/proteomes | High specificity with closely related contaminants; distinguishes HGT from contamination | Limited for taxa poorly represented in databases |
| Conterminator [31] | Similarity-based | Genomic assemblies | Effective for cross-kingdom contamination; identifies mislabelled sequences | Primarily designed for assembly-level contamination |
| FCS-GX [31] | Composition-based + similarity | Raw reads/assemblies | Rapid processing; high sensitivity for diverse contaminants | Part of specialized NCBI pipeline |
| BASTA [30] | Lowest common ancestor (LCA) | Protein sequences | Flexible taxonomy assignment | Lower contamination detection rates in benchmarks |
| GUNC [30] | Phylogenetic integrity | Genomic assemblies | Detects chimeric genomes; effective for prokaryotes | Limited to prokaryotic genomes |
| Decontam [1] | Prevalence/frequency | Feature tables (e.g., OTU/ASV) | Control-based method; integrates with microbiome analysis pipelines | Requires well-designed control experiments |
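The control-based prevalence idea behind tools such as Decontam can be illustrated with the simplified sketch below. It is not Decontam's implementation or API (Decontam is an R package). The function names, the one-sided Fisher's exact test on presence/absence and the 0.1 threshold are assumptions chosen for illustration. A taxon is flagged when it appears in negative controls more often than its prevalence across all libraries would predict.

```python
from math import comb
from typing import Dict, List, Sequence


def fisher_greater(a: int, b: int, c: int, d: int) -> float:
    """One-sided Fisher's exact p-value that presence is enriched in controls.

    2x2 table: controls (a present, b absent); samples (c present, d absent).
    Sums hypergeometric probabilities of a or more controls containing the taxon.
    """
    n_controls = a + b
    n_present = a + c
    total = a + b + c + d
    tail = sum(
        comb(n_present, x) * comb(total - n_present, n_controls - x)
        for x in range(a, min(n_present, n_controls) + 1)
    )
    return tail / comb(total, n_controls)


def flag_prevalence_contaminants(
    presence: Dict[str, Sequence[bool]],
    is_control: Sequence[bool],
    alpha: float = 0.1,
) -> List[str]:
    """Flag taxa detected disproportionately often in negative controls.

    presence maps each taxon to presence/absence per library, in the same
    order as is_control.
    """
    flagged = []
    for taxon, present in presence.items():
        if len(present) != len(is_control):
            raise ValueError(f"{taxon}: length does not match is_control")
        pairs = list(zip(present, is_control))
        a = sum(1 for p, ctl in pairs if p and ctl)
        b = sum(1 for p, ctl in pairs if not p and ctl)
        c = sum(1 for p, ctl in pairs if p and not ctl)
        d = sum(1 for p, ctl in pairs if not p and not ctl)
        if fisher_greater(a, b, c, d) < alpha:
            flagged.append(taxon)
    return sorted(flagged)


# Hypothetical run: 4 negative controls followed by 8 real samples.
is_control = [True] * 4 + [False] * 8
presence = {
    "Taxon_kit": [True] * 4 + [True] * 2 + [False] * 6,
    "Taxon_host_assoc": [False] * 4 + [True] * 8,
}
print(flag_prevalence_contaminants(presence, is_control))
```

With only a handful of controls a test like this has little statistical power. That limitation is one more reason to include several negative controls per batch.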

Performance Assessment and Benchmarking