Beyond Trial and Error: Machine Learning vs Traditional DOE for Bioprocess Medium Optimization

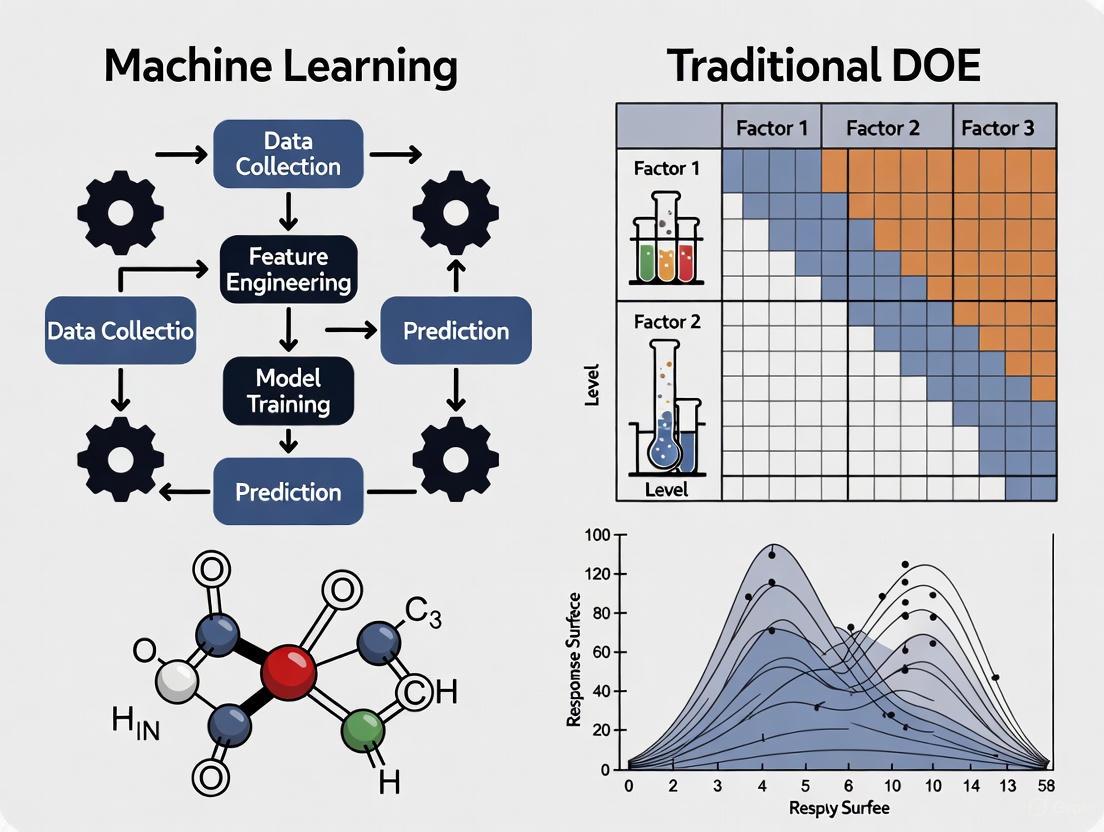

This article provides a comprehensive comparison between Traditional Design of Experiments (DOE) and Machine Learning (ML)-guided DOE for medium optimization in biomedical and clinical research.

Beyond Trial and Error: Machine Learning vs Traditional DOE for Bioprocess Medium Optimization

Abstract

This article provides a comprehensive comparison between Traditional Design of Experiments (DOE) and Machine Learning (ML)-guided DOE for medium optimization in biomedical and clinical research. Tailored for researchers, scientists, and drug development professionals, it covers the foundational principles of both approaches, delves into practical methodologies and applications, addresses common troubleshooting and optimization challenges, and offers a rigorous validation framework. The goal is to equip R&D teams with the knowledge to select the right strategy, enhance experimental efficiency, reduce costs, and accelerate the development of robust bioprocesses.

Understanding the Core Principles: From Statistical Frameworks to Adaptive Learning

What is Traditional DOE? The Backbone of Systematic Experimentation

Traditional Design of Experiments (DOE) is a branch of applied statistics that deals with the planning, conducting, analyzing, and interpreting of controlled tests to evaluate the factors that control the value of a parameter or group of parameters [1]. It represents a systematic framework for conducting scientific investigations that allows researchers to efficiently explore the relationship between multiple input factors and a desired output. As a powerful data collection and analysis tool, traditional DOE enables scientists to manipulate several input factors simultaneously, determining their individual and combined effects on a response variable [1]. This methodology stands in stark contrast to less efficient one-factor-at-a-time (OFAT) approaches, which often fail to identify critical interactions between variables that can significantly impact experimental outcomes [2].

The historical foundation of modern DOE traces back to the pioneering work of Sir Ronald Fisher in the early 20th century, who demonstrated how serious consideration of experimental design before implementation helps avoid common analytical problems [1]. Fisher's principles—including randomization, replication, and blocking—established the bedrock upon which traditional DOE was built [3]. These principles ensure that experiments produce reliable, valid, and reproducible results that can withstand scientific scrutiny. Over subsequent decades, DOE has evolved into an indispensable methodology across scientific and engineering disciplines, particularly in fields requiring rigorous process optimization and characterization [2].

In the context of modern research, particularly in bioprocess optimization and medium development, traditional DOE serves as a crucial bridge between rudimentary OFAT approaches and emerging machine learning-driven methodologies. While advanced computational approaches offer new opportunities for modeling complex systems, traditional DOE provides the structured statistical framework and foundational experimental principles necessary for generating high-quality data [4]. This article explores traditional DOE as the backbone of systematic experimentation, examining its core principles, methodological framework, and contemporary applications in research settings where medium optimization is critical.

Core Principles: The Pillars of Traditional DOE

The robustness of traditional Design of Experiments stems from several foundational principles that ensure the validity and reliability of experimental findings. These principles, established through Fisher's early work and refined over decades of application, provide the theoretical underpinnings for all designed experiments [3].

Comparison

The principle of comparison emphasizes that experimental evaluations gain scientific value when treatments are compared against appropriate baselines or controls [3]. In traditional DOE, this often involves comparing the effects of different factor levels against a standard or control treatment that serves as a reference point. This comparative framework allows researchers to distinguish actual treatment effects from random variation or external influences. In medium optimization research, for instance, new nutrient combinations might be systematically compared against established basal media formulations to determine their relative effectiveness [4].

Randomization

Randomization refers to the practice of determining the experimental run order through a random sequence, which helps eliminate the effects of unknown or uncontrolled variables [1]. By randomizing the order in which experimental trials are performed, researchers minimize the risk that systematic biases or lurking variables will confound the results. For example, in a cell culture experiment investigating multiple medium components, randomization ensures that any undetected environmental fluctuations (such as minor temperature variations in an incubator) do not systematically favor one experimental condition over another. This principle is essential for establishing cause-and-effect relationships with confidence [2].

Replication

Replication involves the repetition of complete experimental treatments, including the setup [1]. This principle serves two crucial purposes: it allows researchers to estimate the inherent variability in the experimental system, and it provides a more reliable estimate of treatment effects by averaging out random variations. The number of replications directly impacts the statistical power of an experiment—its ability to detect true effects when they exist. In traditional DOE, replication should not be confused with repeated measurements; true replication involves independently executing the entire experimental treatment multiple times [3].

Blocking

Blocking is the non-random arrangement of experimental units into groups (blocks) consisting of units that are similar to one another [3]. This principle allows researchers to account for known sources of variation that are not the primary focus of investigation but could otherwise obscure treatment effects. When randomizing a factor is impossible or too costly, blocking enables researchers to restrict randomization by carrying out all trials with one setting of the factor before switching to another setting [1]. In biological experiments, blocking might involve grouping experimental units by batch of raw materials, time of day, or operator to control for these potential sources of variation.

Traditional DOE vs. One-Factor-at-a-Time Approach

A fundamental advantage of traditional DOE over the more intuitive one-factor-at-a-time (OFAT) approach lies in its efficient and systematic investigation of factor effects and interactions. The OFAT method, while straightforward and widely taught, involves changing a single factor at a time while holding all others constant [5]. This approach suffers from critical limitations that traditional DOE effectively addresses.

Table 1: Comparison of OFAT and Traditional DOE Approaches

| Aspect | One-Factor-at-a-Time (OFAT) | Traditional DOE |

|---|---|---|

| Efficiency | Inefficient use of resources [5] | Establishes solutions with minimal resources [5] |

| Experimental Space Coverage | Limited coverage [5] | Thorough, systematic coverage [5] |

| Interaction Detection | Fails to identify interactions [5] [2] | Systematically investigates all interactions [1] |

| Optimal Solution Identification | May miss the optimal solution [5] | Comprehensive mapping to identify true optimum |

| Underlying Methodology | Straightforward, widely taught [5] | Systematic structured approach [5] |

The critical limitation of OFAT is its inability to detect interactions between factors—situations where the effect of one factor depends on the level of another factor [2]. In complex biological systems such as culture media optimization, these interactions are common and often significant. For example, the effect of a growth hormone in a culture medium might depend on the concentration of specific micronutrients—a relationship that OFAT would likely miss but that traditional DOE would systematically uncover [4]. Traditional DOE captures these interactions by deliberately varying all factors simultaneously according to a predetermined pattern, allowing researchers to build a comprehensive model of the system behavior [1].

The statistical efficiency of traditional DOE derives from its ability to extract maximum information from a limited number of experimental runs. While a OFAT approach investigating 5 factors at 3 levels each would require 3⁵ = 243 experiments, a carefully designed fractional factorial DOE might achieve similar insights with only a fraction of these runs [2]. This efficiency makes traditional DOE particularly valuable in research settings where resources, time, or materials are constrained—common challenges in medium optimization and bioprocess development [6].

The Traditional DOE Methodology: A Structured Framework

Implementing traditional DOE typically follows a structured, multi-stage process that guides researchers from initial planning through final verification. This methodological framework ensures that experiments are strategically designed to answer specific research questions while efficiently utilizing resources.

The Five Stages of Traditional DOE

Table 2: Stages of Traditional DOE Implementation

| Stage | Primary Objective | Key Activities |

|---|---|---|

| Planning | Define objectives and constraints [2] | - Establish clear experimental objectives- Identify potential factors and responses- Assess resources and constraints- Form multidisciplinary team |

| Screening | Identify vital few factors [2] | - Narrow from many potential factors to critical few- Use efficient designs (e.g., Plackett-Burman)- Focus on main effects rather than interactions |

| Optimization | Determine optimal factor settings [2] | - Characterize response surfaces- Identify factor settings that maximize, minimize, or achieve target response- Use designs like Central Composite or Box-Behnken |

| Robustness Testing | Make process insensitive to noise [2] | - Identify control factor settings that minimize variability- Make product/process robust to uncontrollable factors- Use parameter designs |

| Verification | Confirm optimal settings [2] | - Conduct follow-up confirmation runs- Validate that system performs as expected- Finalize operational specifications |

The planning phase is particularly critical, as careful planning before embarking on experimentation significantly enhances the value and efficiency of the entire process [2]. During this stage, researchers develop a comprehensive understanding of the inputs and outputs being investigated, often using process flowcharts or process maps for visualization [1]. Consultation with subject matter experts is essential to identify all potentially relevant factors and ensure that the appropriate response measures are selected. The planning phase also involves determining realistic high and low levels for each input factor that represent extreme but achievable operating conditions [1].

Screening experiments deserve special attention in medium optimization contexts, where numerous factors (pH, temperature, nutrient components, dissolved oxygen, etc.) might potentially influence critical quality attributes [6]. Traditional DOE offers specialized screening designs, such as fractional factorials or Plackett-Burman designs, that efficiently identify the "vital few" factors from the "trivial many" using a minimal number of experimental runs [2]. This approach prevents researchers from wasting resources investigating insignificant factors while ensuring that important factors aren't overlooked.

Traditional DOE Methodology: A Cyclical Process for Systematic Optimization

Factorial Designs: The Workhorse of Traditional DOE

At the heart of traditional DOE lie factorial designs, which involve varying multiple factors simultaneously according to a predetermined pattern. In these designs, multiple factors are investigated together, and the objective is to identify which factors significantly affect the response while also investigating interaction effects [2].

The most basic factorial design involves factors each at two levels (high and low), coded as +1 and -1 for mathematical convenience [1]. For example, a 2-factor experiment requires 4 experimental runs (2²), while a 3-factor experiment requires 8 runs (2³). The number of experimental runs can be calculated using the formula 2ⁿ, where n is the number of factors [1]. Full factorial designs investigate all possible combinations of factor levels, allowing researchers to estimate all main effects and interactions [2].

When the number of factors makes full factorial designs impractical, fractional factorial designs investigate only a carefully selected subset of the possible combinations [1]. These designs sacrifice the ability to estimate higher-order interactions (which are often negligible) in exchange for significantly reduced experimental effort. The strategic selection of which runs to include follows sophisticated statistical principles that preserve the ability to estimate important effects while minimizing confounding.

Application in Medium Optimization: A Detailed Protocol

Traditional DOE finds particularly valuable application in culture medium optimization, where multiple components and process parameters interact to influence critical quality attributes of biological products [6]. The following detailed protocol illustrates how traditional DOE can be implemented for optimizing a culture medium formulation.

Experimental Protocol: Traditional DOE for Medium Optimization

Objective: To optimize a culture medium formulation for enhanced monoclonal antibody production in CHO cells by investigating four critical medium components and their interactions.

Factors and Levels:

- Factor A: Glucose concentration (2g/L vs. 6g/L)

- Factor B: Glutamine concentration (2mM vs. 6mM)

- Factor C: Specific growth factor (Present vs. Absent)

- Factor D: Trace element cocktail (Standard vs. Enhanced)

Response Variables:

- Primary: Final mAb titer (mg/L)

- Secondary: Cell viability (%), Specific productivity (pg/cell/day)

Experimental Design: 2⁴ Full Factorial Design (16 experimental runs, plus 3 center point replicates for curvature detection)

Table 3: Traditional DOE Design Matrix for Medium Optimization

| Run Order | Glucose | Glutamine | Growth Factor | Trace Elements | mAb Titer (mg/L) | Cell Viability (%) | Specific Productivity (pg/cell/day) |

|---|---|---|---|---|---|---|---|

| 1 | -1 (2g/L) | -1 (2mM) | -1 (Absent) | -1 (Standard) | 245 | 78.2 | 8.4 |

| 2 | +1 (6g/L) | -1 (2mM) | -1 (Absent) | -1 (Standard) | 285 | 82.5 | 9.1 |

| 3 | -1 (2g/L) | +1 (6mM) | -1 (Absent) | -1 (Standard) | 265 | 80.1 | 8.7 |

| 4 | +1 (6g/L) | +1 (6mM) | -1 (Absent) | -1 (Standard) | 325 | 85.3 | 9.8 |

| 5 | -1 (2g/L) | -1 (2mM) | +1 (Present) | -1 (Standard) | 295 | 83.2 | 9.3 |

| 6 | +1 (6g/L) | -1 (2mM) | +1 (Present) | -1 (Standard) | 365 | 87.6 | 10.5 |

| 7 | -1 (2g/L) | +1 (6mM) | +1 (Present) | -1 (Standard) | 335 | 85.1 | 10.1 |

| 8 | +1 (6g/L) | +1 (6mM) | +1 (Present) | -1 (Standard) | 425 | 89.8 | 11.4 |

| 9 | -1 (2g/L) | -1 (2mM) | -1 (Absent) | +1 (Enhanced) | 275 | 81.3 | 8.9 |

| 10 | +1 (6g/L) | -1 (2mM) | -1 (Absent) | +1 (Enhanced) | 315 | 84.7 | 9.6 |

| 11 | -1 (2g/L) | +1 (6mM) | -1 (Absent) | +1 (Enhanced) | 305 | 83.5 | 9.4 |

| 12 | +1 (6g/L) | +1 (6mM) | -1 (Absent) | +1 (Enhanced) | 385 | 88.1 | 10.8 |

| 13 | -1 (2g/L) | -1 (2mM) | +1 (Present) | +1 (Enhanced) | 345 | 86.2 | 10.3 |

| 14 | +1 (6g/L) | -1 (2mM) | +1 (Present) | +1 (Enhanced) | 445 | 90.5 | 11.9 |

| 15 | -1 (2g/L) | +1 (6mM) | +1 (Present) | +1 (Enhanced) | 405 | 88.9 | 11.2 |

| 16 | +1 (6g/L) | +1 (6mM) | +1 (Present) | +1 (Enhanced) | 525 | 93.7 | 12.8 |

| 17-19 | 0 (4g/L) | 0 (4mM) | 0 (Half) | 0 (Intermediate) | 395-405 | 87.1-88.2 | 10.5-10.7 |

Statistical Analysis Approach:

- Calculate main effects for each factor

- Estimate two-factor interaction effects

- Perform Analysis of Variance (ANOVA) to identify statistically significant effects (p < 0.05)

- Develop regression model to predict responses

- Identify optimal factor level combinations

Effect Calculation Example: The main effect of Glucose on mAb titer would be calculated as the average of all runs at high glucose level minus the average of all runs at low glucose level [1]. For the above data, this would be approximately 105 mg/L, indicating a strong positive effect of increasing glucose concentration.

Research Reagent Solutions for Medium Optimization

Table 4: Essential Research Reagents for Culture Medium Optimization

| Reagent/Category | Function in Medium Optimization | Typical Concentration Ranges |

|---|---|---|

| Basal Medium | Provides essential nutrients, vitamins, and salts for cell growth | NA (formulation basis) |

| Glucose | Primary carbon and energy source for cellular metabolism | 1-6 g/L [6] |

| Glutamine | Essential amino acid providing nitrogen and carbon skeletons | 2-8 mM [6] |

| Growth Factors | Signaling molecules that regulate cell proliferation and productivity | Species-specific |

| Trace Elements | Metal ions (Cu, Zn, Fe, etc.) serving as enzyme cofactors | Various cocktails |

| pH Buffers | Maintain optimal pH for cellular processes and product stability | Physiological range (7.0-7.4) |

| Amino Acids | Building blocks for protein synthesis, including mAbs | Various compositions |

| Hormones/Steroids | Regulate metabolic pathways and cellular differentiation | Species-specific |

Traditional DOE in the Machine Learning Era: A Complementary Foundation

The emergence of machine learning (ML) approaches in bioprocess optimization has created a new context for traditional DOE, with these methodologies serving complementary rather than competing roles. While ML offers powerful capabilities for modeling complex, nonlinear relationships, traditional DOE provides the structured approach to experimental design that generates the high-quality data necessary for effective ML model training [4].

Traditional DOE fails to capture the complex, nonlinear interactions between culture parameters in systems where multiple factors interact in higher-order ways [6]. This limitation becomes particularly evident in biological systems characterized by extensive nonlinearity and complex interactions, where response surface methodology and other advanced DOE variants still struggle to fully characterize the system behavior [7]. ML approaches can model these complex relationships without requiring predetermined model forms, making them particularly valuable for optimizing complex biological systems [6].

However, the effectiveness of ML is heavily dependent on the quality and structure of the training data. Traditional DOE provides an ideal framework for generating this data through its systematic variation of input factors and efficient coverage of the experimental space [4]. The structured approach of traditional DOE ensures that the resulting data set has appropriate statistical properties (orthogonality, balance, etc.) that enable ML algorithms to accurately discern patterns and relationships. In this sense, traditional DOE serves as the foundational data generation engine that powers effective ML applications in medium optimization [6].

Traditional DOE and Machine Learning: A Synergistic Relationship for Process Optimization

The integration of traditional DOE and ML follows a logical progression where initial screening and characterization experiments using traditional DOE provide the foundational understanding of the system, which then informs more focused data generation for ML model development [6]. This hybrid approach leverages the strengths of both methodologies: the structured efficiency of traditional DOE for initial exploration and the adaptive, nonlinear modeling capabilities of ML for refined optimization. As noted in recent research, "Machine learning accelerates and improves tissue culture media optimization" precisely because it can build upon the structured knowledge generated through traditional experimental approaches [4].

Traditional Design of Experiments remains an indispensable methodology in scientific research, particularly in complex optimization challenges such as culture medium development. Its systematic approach to experimental planning, execution, and analysis provides a rigorous framework for efficiently extracting maximum information from limited resources. The core principles of comparison, randomization, replication, and blocking ensure the validity and reliability of experimental findings, while factorial and fractional factorial designs enable comprehensive investigation of factor effects and interactions.

In contemporary research contexts, traditional DOE serves as the crucial foundation upon which more advanced optimization approaches, including machine learning, are built. While ML offers exciting capabilities for modeling complex biological systems, its effectiveness depends heavily on the quality of training data—data that traditional DOE is uniquely positioned to generate through its structured approach. The integration of these methodologies represents the future of efficient process optimization in biotechnology and pharmaceutical development.

For researchers, scientists, and drug development professionals, mastery of traditional DOE principles and methodologies remains essential for designing informative experiments, avoiding common pitfalls of one-factor-at-a-time approaches, and building the foundational knowledge necessary for implementing more advanced optimization strategies. As the backbone of systematic experimentation, traditional DOE continues to provide the statistical rigor and methodological discipline required for advancing scientific understanding and technological innovation in medium optimization and beyond.

In the competitive fields of drug development and materials science, optimizing complex processes like medium composition is a fundamental but resource-intensive challenge. For decades, Design of Experiments (DOE) has been the cornerstone statistical approach for systematically exploring how different variables influence a desired outcome. Traditional DOE is excellent for local optimization using linear models within a limited design space [8]. However, the exponential growth in experimental complexity and the high-dimensional nature of modern research problems have exposed its limitations. Enter Machine Learning (ML)-guided DOE—a paradigm that merges adaptive AI modeling with iterative experimentation to accelerate discovery.

This new approach uses machine learning algorithms to create predictive models from existing data. Crucially, these models not only predict outcomes but also quantify their own uncertainty [8]. This allows researchers to strategically select each subsequent experiment, either to exploit promising leads with high predicted performance or to explore regions of the design space where the model lacks information. This iterative, AI-guided methodology, often called Sequential Learning, is revolutionizing research and development by making every experiment count [8].

Head-to-Head Comparison: Traditional DOE vs. ML-Guided DOE

The fundamental differences between traditional and ML-guided DOE impact everything from experimental efficiency to the types of problems that can be tackled. The table below summarizes these core distinctions.

Table 1: Fundamental Differences Between Traditional DOE and ML-Guided DOE

| Feature | Traditional DOE | ML-Guided DOE (Sequential Learning) |

|---|---|---|

| Primary Goal | Statistical inference of treatment effects; local optimization [9] [8] | Accurate prediction and global optimization over complex spaces [9] [8] |

| Experimental Strategy | One-off, fixed grid of experiments designed upfront [8] | Iterative, closed-loop; each experiment is informed by previous results [8] [10] |

| Handling of High Dimensions | Number of experiments grows exponentially with variables [8] | Number of experiments scales linearly with the number of dimensions [8] |

| Data Utilization | Purely statistical; does not use domain knowledge or past project data [8] | Leverages existing data and incorporates domain knowledge to improve models [8] |

| Data Type Compatibility | Best with simple, structured, tabular data [8] | Can handle varied, complex, and unstructured data (e.g., micrographs) [8] |

| Uncertainty Quantification | Not a core feature | Core feature; guides strategic choice between exploration and exploitation [8] |

Quantitative Performance Analysis

The theoretical advantages of ML-guided DOE are compelling, but the most convincing evidence comes from documented performance in real-world R&D settings. The following table compiles key metrics from published studies and industry implementations.

Table 2: Documented Performance Metrics of ML-Guided DOE vs. Traditional Methods

| Metric | Traditional DOE | ML-Guided DOE | Context/Source |

|---|---|---|---|

| Reduction in Experiments | Baseline | 50% - 90% [8] | Materials & chemicals R&D to reach target performance [8] |

| Acceleration of Timelines | Baseline | ~20x faster (6 months vs. 10 years) [10] | Synthetic biology for new commercially viable molecules [10] |

| Throughput of Automated Labs | Human researcher baseline | 50x - 100x more samples per day [10] | A-Lab at Berkeley Lab for materials synthesis [10] |

| Candidate Screening Speed | Trial-and-error synthesis of a few candidates | Screening of ~50,000 structures to identify top candidates [10] | Discovery of a record-breaking capacitor material [10] |

| Data Analysis Speed | Years | Minutes [10] | OmniFold tool for particle collider data analysis [10] |

Experimental Protocols and Workflows

Understanding the practical implementation of these methodologies is crucial for researchers. Below are the generalized protocols for both traditional and ML-guided approaches.

Traditional DOE Workflow

Traditional DOE is a linear, upfront process focused on modeling and inference.

Diagram 1: Traditional DOE Linear Workflow

Step-by-Step Protocol:

- Define Objective and Variables: Clearly state the optimization goal (e.g., maximize cell growth). Identify all independent variables (factors) and their ranges.

- Design Fixed Experiment Grid: Select a DOE design (e.g., full factorial, fractional factorial, Response Surface Methodology - RSM) to create a predefined set of experimental runs. This design aims to maximize information while controlling for the number of experiments.

- Execute All Experiments: Conduct the entire set of experiments from the design matrix. The order is typically randomized to avoid bias.

- Statistical Analysis: Analyze the collected response data using statistical methods like Analysis of Variance (ANOVA) to identify which factors have significant effects.

- Build Model: Fit a linear or polynomial model (e.g., a quadratic model in RSM) that describes the relationship between the variables and the response.

- Validate Model: Perform confirmation experiments to test the model's predictive accuracy within the studied experimental region.

ML-Guided DOE (Sequential Learning) Workflow

This is an iterative, closed-loop process that emphasizes continuous learning and prediction.

Diagram 2: ML-Guided DOE Iterative Workflow

Step-by-Step Protocol:

- Acquire Initial Training Dataset: Compile data from prior experiments, literature, or initial screening studies. This dataset forms the foundation for the first model.

- Train ML Model: Use an ML algorithm (e.g., Random Forest, Gaussian Process, Neural Networks) to train a model that maps input variables to the output response(s).

- Predict Outcomes & Uncertainty: The trained model is used to predict the performance of untested variable combinations across the entire design space. Crucially, the model also estimates the uncertainty for each prediction.

- AI Suggests Next Experiments: An acquisition function uses the predictions and uncertainty to propose the most informative next experiments. This balances exploration (testing high-uncertainty areas) and exploitation (testing high-predicted-performance areas).

- Execute Selected Experiments: A human researcher or an automated robotic system (like Berkeley Lab's A-Lab [10]) performs the shortlisted experiments.

- Update Dataset: The new experimental results are added to the training dataset.

- Iterate or Conclude: The model is retrained with the enriched dataset. The loop continues until a performance target is met or the budget is exhausted.

The Scientist's Toolkit: Essential Research Reagents and Materials

Implementing these methodologies, especially in biological contexts, requires a suite of key reagents and tools. The following table details essential items for a medium optimization study in drug development.

Table 3: Key Research Reagent Solutions for Medium Optimization

| Reagent/Material | Function in Experiment |

|---|---|

| Chemically Defined Media Components | Provides a base with known concentrations of nutrients (e.g., amino acids, vitamins, salts). Essential for precisely controlling independent variables in a DOE or ML-DOE study. |

| Growth Factors & Cytokines | Signaling molecules that can be varied as factors to optimize cell growth, viability, and productivity in biopharmaceutical production. |

| Metabolomics Assay Kits | Used to measure metabolite consumption/production (e.g., glucose, lactate) as critical response variables to understand cell metabolism and medium efficiency. |

| Cell Viability & Apoptosis Assays | Measures key performance indicators (KPIs) like viability and apoptosis rates, which are common optimization targets in bioprocessing. |

| High-Throughput Screening Plates | (e.g., 96-well, 384-well) Enable the parallel execution of dozens to hundreds of medium condition tests, which is fundamental for both DOE and ML-DOE. |

| Process Analytical Technology (PAT) | Sensors for online monitoring of parameters like pH, dissolved oxygen, and biomass. Provide rich, high-frequency data to train more accurate ML models. |

The evidence clearly demonstrates that ML-guided DOE represents a significant leap forward in experimental science. While traditional DOE remains a powerful tool for well-defined, low-dimensional problems, its rigid structure is ill-suited for the complex, high-dimensional optimization challenges that define modern R&D. ML-guided DOE, with its ability to learn from data, quantify uncertainty, and strategically guide an iterative experimental process, offers unparalleled efficiency, speed, and scope [8] [10].

The documented outcomes—reductions in experiments by 50-90%, the acceleration of development timelines from a decade to mere months, and the successful navigation of vast design spaces—make a compelling case for its adoption [8] [10]. For researchers, scientists, and drug development professionals focused on medium optimization and similar complex tasks, integrating ML-guided DOE is no longer a futuristic concept but a practical strategy to drive innovation and maintain a competitive edge.

In the field of medium optimization for bioprocesses, such as drug development, researchers are often faced with a fundamental choice between two distinct analytical philosophies: the traditional, knowledge-driven rule-based approach and the modern, pattern-based data-driven logic. The rule-based approach, often implemented through traditional Design of Experiments (DOE), relies on predefined human expertise and structured experimental designs to systematically explore variable space [11]. In contrast, the data-driven approach, frequently employing machine learning (ML), learns relationships directly from historical and experimental data to model and predict optimal conditions [12] [13]. This guide objectively compares these methodologies within the context of medium optimization research, providing experimental data, detailed protocols, and resource information to inform researchers and scientists in the pharmaceutical and biotech industries.

Core Philosophical Differences

The divergence between rule-based and data-driven logic stems from their foundational principles, which dictate their application, strengths, and limitations.

Rule-Based Logic (Traditional DOE): This philosophy is rooted in causation and control. It depends on explicit domain knowledge encoded into a system—for example, in the form of "if-then" rules or a predefined experimental matrix [12] [14]. The experimenter controls the input variables based on a specific design (e.g., factorial or response surface methodology) to establish clear, causal links between inputs and outputs [11]. The primary goal is to understand the "why" behind a process, ensuring that the system's behavior is transparent, deterministic, and valid within expected conditions [12].

Data-Driven Logic (Machine Learning): This philosophy is centered on correlation and learning. Instead of being explicitly programmed with rules, ML algorithms identify complex, non-linear patterns and relationships from data [12] [15]. The system's logic is embedded in the algorithm's model, which is derived from the data itself [14]. The focus shifts from pre-defined understanding to discovering hidden insights, enabling the model to adapt and improve as more data becomes available [15]. The goal is often high-fidelity prediction, even if the underlying causal mechanisms remain partially opaque—a characteristic often described as the "black box" problem [11].

Table 1: A Comparison of Foundational Philosophies

| Aspect | Rule-Based / Traditional DOE | Data-Driven / Machine Learning |

|---|---|---|

| Primary Foundation | Pre-existing expert knowledge, physical laws [12] | Historical and experimental data patterns [12] [15] |

| Core Logic | Deterministic, "if-then" rules [14] | Probabilistic, statistical models [15] |

| Goal of Analysis | Causal inference, understanding "why" [11] | Predictive accuracy, forecasting outcomes [11] |

| Adaptability | Static; requires manual updates by experts [12] [15] | Dynamic; autonomously adapts to new data [15] [14] |

| Transparency | High; decisions are easily interpretable [12] [14] | Lower; can be a "black box" requiring XAI techniques [13] [11] |

Experimental Performance and Quantitative Data

Recent studies in synthetic biology and biomanufacturing provide concrete data on the performance of these approaches for medium optimization.

Case Study: Flaviolin Production inPseudomonas putida

A seminal 2025 study directly compared a traditional one-factor-at-a-time (OFAT) method, a rule-based Response Surface Methodology (RSM), and a data-driven ML active learning process for optimizing the production of flaviolin, a valuable chemical precursor [13].

Key Findings:

- The ML active learning process achieved the highest improvements, increasing flaviolin titer by 60% and 70% in two different campaigns, and process yield by 350% in a third campaign [13].

- The ML model, through explainable AI (XAI) techniques, identified that sodium chloride (NaCl) was the most important component influencing production—a non-intuitive relationship that was not pre-defined in any rule set [13].

- The optimal salt concentration was found to be very high, comparable to seawater, demonstrating ML's ability to discover novel and high-performing operating regimes beyond typical human expertise [13].

Table 2: Performance Comparison in Flaviolin Medium Optimization

| Optimization Method | Key Outcome | Experimental Effort | Key Insight Revealed |

|---|---|---|---|

| Traditional OFAT | Baseline performance | Cumbersome, inefficient for multi-variable systems [13] | Limited to one variable at a time |

| Rule-Based RSM | Moderate improvement | Structured but can be suboptimal for complex spaces [13] | Models based on 2nd-degree polynomials |

| Data-Driven ML | +60% to +70% titer; +350% yield [13] | Highly efficient via active learning [13] | Identified NaCl as critical factor [13] |

General Performance Trends

A broader analysis of the two approaches reveals consistent trade-offs:

- Rule-based/DOE systems excel in environments where failures are rare and data is scarce, as they do not require large historical datasets [12]. They provide high reliability and accuracy within their predefined, stable domains [12] [14].

- Data-driven/ML systems significantly outperform in complex, non-linear scenarios with many interacting variables [12] [13]. They are, however, highly dependent on the quality and quantity of the data used for training, and their development is often more complex and resource-intensive [14].

Detailed Experimental Protocols

Protocol for Rule-Based Medium Optimization using DOE

This protocol outlines a standard RSM approach for optimizing a medium with multiple components [13] [11].

- Problem Formulation: Define the response variable (e.g., product titer, growth rate) and select the critical medium components (e.g., carbon source, nitrogen source, salts, inducters) to be investigated as independent variables.

- Experimental Design: Create a design matrix (e.g., Central Composite Design) that specifies the exact combinations and concentrations of each variable to be tested. This design efficiently covers the experimental space while minimizing the number of required runs.

- Execution & Data Collection:

- Prepare media according to the design matrix.

- Inoculate with the production host (e.g., P. putida, E. coli).

- Cultivate under controlled conditions (temperature, pH, O₂).

- Harvest samples and quantify the response (e.g., via HPLC, absorbance assays).

- Model Fitting & Analysis: Fit a second-degree polynomial model to the collected data. Use analysis of variance (ANOVA) to identify which factors and interactions are statistically significant.

- Optimization & Validation: Use the fitted model to predict the combination of factor levels that will maximize the response. Perform confirmatory experiments at the predicted optimum to validate the model's accuracy.

Protocol for Data-Driven Medium Optimization using Active Learning

This protocol describes an ML-driven active learning cycle, as implemented in the flaviolin case study [13].

- Initial Data Collection: Compile any existing historical data on the host and product. If no data exists, start with a small, space-filling set of experiments (e.g., a sparse DOE or random sampling).

- Model Training: Train a machine learning model (e.g., Random Forest, Gaussian Process, or a custom tool like the Automated Recommendation Tool - ART [13]) on the available data. The model learns to map media compositions to the output performance metric.

- Recommendation (Active Learning): The trained ML algorithm evaluates millions of potential media compositions in silico and recommends a shortlist (e.g., 10-15) of the most promising candidates to test next. These are typically points predicted to yield high performance or those where the model is most uncertain, balancing exploration and exploitation.

- Automated Testing:

- An automated liquid handler prepares the recommended media designs in multi-well plates.

- The plates are inoculated and cultivated in an automated bioreactor system (e.g., a BioLector) for highly repeatable, small-scale cultivation.

- Product formation is measured using a high-throughput assay (e.g., microplate reader absorbance).

- Iteration (DBTL Cycle): The new experimental results are added to the dataset. The cycle repeats from Step 2, creating a closed Design-Build-Test-Learn (DBTL) loop. With each iteration, the model becomes more accurate and hones in on the global optimum more efficiently than one-shot DOE [13].

Workflow and Relationship Visualization

The following diagrams illustrate the logical workflows of both the rule-based and data-driven approaches, culminating in a hybrid model.

Rule-Based DOE Workflow

Data-Driven Active Learning Workflow

Hybrid DOE-ML Optimization Workflow

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successfully implementing these optimization strategies requires a combination of biological, computational, and automation resources.

Table 3: Essential Reagents and Platforms for Medium Optimization Research

| Item | Function / Description | Relevance to Approach |

|---|---|---|

| Automated Liquid Handler | Precisely dispenses liquid to prepare complex media formulations according to a digital design file. | Critical for ML active learning to rapidly prepare many different media designs [13]. |

| Micro-scale Bioreactors (e.g., BioLector) | Provides high-throughput, reproducible cultivation with tight control over conditions (O₂, pH) in 48- or 96-well plates. | Essential for both, but enables the rapid DBTL cycles in ML workflows [13]. |

| High-Throughput Assay | A fast, reliable method to quantify the output (e.g., product titer, cell density). Absorbance assays are common. | Vital for ML to quickly phenotype many samples; used in both for data collection [13]. |

| DOE Software (e.g., JMP, MODDE) | Software used to create optimal experimental designs (e.g., RSM) and analyze the resulting data. | Core for rule-based DOE strategy [16]. |

| Machine Learning Platform / Code | Software or custom code (e.g., in Python/R) for building ML models. Active learning tools (e.g., ART) are key. | Core for data-driven ML strategy [13]. |

| Data Management System (e.g., EDD) | A database (e.g., Experiment Data Depot) to store and manage experimental designs and results. | Important for both, but critical for ML to maintain a clean, accessible dataset for iterative learning [13]. |

The comparison reveals that the choice between rule-based and data-driven logic is not necessarily a binary one. Rule-based DOE offers interpretability, reliability, and structured causal inference, making it ideal for well-understood systems or when data is limited [12] [11]. Data-driven ML provides superior adaptability, efficiency in complex spaces, and the ability to uncover novel, non-intuitive relationships, as demonstrated by the 70% titer increase and the discovery of salt's critical role in flaviolin production [13].

The most powerful emerging trend is the hybridization of these philosophies [16] [11]. In this synergistic model, an initial structured DOE can be used to generate a high-quality foundational dataset, which is then used to train an ML model. The ML model then guides subsequent, more efficient active learning cycles to refine and optimize the process further [16]. This combined approach leverages the structured, causal grounding of DOE with the adaptive, predictive power of ML, creating a robust framework for accelerating medium optimization and bioprocess development in drug discovery and beyond.

Core Components of an ML-DOE Framework for Bioprocesses

The optimization of bioprocesses, particularly culture media, is fundamental to achieving the titers, rates, and yields (TRY) required for commercially viable biomanufacturing. For decades, the field has been dominated by traditional Design of Experiments (DoE) approaches, which use structured experimental campaigns to fit statistical models, typically linear or second-order polynomials, to process data [13]. While these methods brought rigor, they often struggle with the complex, non-linear nature of biological systems and can require an impractical number of experiments when many factors are involved [17]. The emergence of Machine Learning (ML) presents a paradigm shift. ML algorithms can learn complex, non-linear relationships directly from data, offering the potential to uncover deeper insights and identify optimal conditions with greater efficiency [18] [6]. This guide provides a comparative analysis of ML-based and traditional DoE frameworks, detailing their core components through objective data and experimental protocols.

Core Components of an ML-DOE Framework

An effective ML-DOE framework integrates the strategic design of experiments with advanced data modeling. Its core components form an iterative cycle for accelerated process understanding and optimization.

Experimental Design: This component involves selecting which experiments to run. Traditional DoE often uses factorial or response surface methodology (RSM) designs that perturb factors around "corner points." In contrast, ML-beneficial designs, such as space-filling designs (e.g., Latin Hypercube Sampling), distribute experiments uniformly across the entire design space to generate richer data for training non-linear models [17]. Definitive Screening Designs (DSDs) are a hybrid, enabling efficient evaluation of main effects and quadratic relationships with minimal experimental runs [19].

Data Generation and Management: This involves executing experiments and curating the resulting data. A key advancement is the development of semi-automated pipelines that use liquid handlers and automated bioreactors to rapidly and reproducibly test ML-suggested media conditions, drastically reducing hands-on time and variability [13]. The data generated—including process parameters, metabolite concentrations, and product quality attributes—must be stored in structured databases.

Modeling Engine: This is the analytical core of the framework. While traditional DoE relies on linear regression, ML employs a suite of algorithms. Artificial Neural Networks (ANNs) are frequently used for their high predictive accuracy with complex data [20] [21]. Hybrid models combine a mechanistic backbone (representing known scientific principles) with a machine learning layer to capture unknown non-linear behaviors, offering high accuracy and data efficiency [17]. For improved interpretability, Symbolic Regression can find simple, human-readable equations that rival the performance of "black-box" models like ANNs [20].

Insight and Recommendation: The trained models are used to generate predictions and insights. Explainable AI (XAI) techniques, such as SHapley Additive exPlanations (SHAP), quantify the importance of each input parameter (e.g., pH, salt concentration) on the output, moving beyond correlation to actionable causality [20] [13]. Based on these insights, the model recommends a new set of promising conditions to test, thus closing the loop.

The following diagram illustrates the workflow of an ML-DOE framework, highlighting its iterative "Design-Build-Test-Learn" (DBTL) nature.

Comparative Analysis: ML vs. Traditional DoE

The choice between ML and traditional DoE has significant implications for experimental burden, model complexity, and interpretability. The table below summarizes their key differences.

Table 1: A comparative overview of Machine Learning and Traditional DoE frameworks for bioprocess optimization.

| Feature | Machine Learning (ML) Framework | Traditional DoE Framework |

|---|---|---|

| Underlying Model | Non-linear models (e.g., ANN, Random Forest), Hybrid models [17] [20] | Linear regression, Response Surface Methodology (RSM) [13] |

| Handling of Complexity | Excels at capturing complex, non-linear interactions and combinatorial effects [17] | Struggles with high non-linearity; often oversimplifies complex systems [17] |

| Data Efficiency | Hybrid models are highly data-efficient; pure ML can be data-hungry [17] | Relatively data-efficient for linear effects, but limited in scope [17] |

| Experimental Design | Space-filling designs, active learning cycles [17] [13] | Factorial designs, central composite designs, Definitive Screening Designs (DSDs) [19] |

| Interpretability | Often "black-box"; requires XAI (e.g., SHAP) for interpretation [20] | Highly interpretable; main and interaction effects are directly quantified [13] |

| Integration of Prior Knowledge | Enabled via transfer learning and hybrid modeling [17] | Challenging; each DoE is typically treated as a de novo project [17] |

Performance and Experimental Data

Direct comparisons and real-world case studies demonstrate the tangible impact of adopting an ML-DOE framework.

Quantitative Performance Comparison

ML approaches have demonstrated superior performance in several key metrics, including a significant reduction in the number of experiments required and improved predictive accuracy.

Table 2: Summary of quantitative performance data from comparative studies and case studies.

| Application / Case Study | Optimization Method | Key Outcome | Experimental Load |

|---|---|---|---|

| General Bioprocess Characterization [19] | Definitive Screening Design (DSD) | Identified Critical Process Parameters (CPPs) and optimal ranges for pDNA production | >50% reduction vs. traditional DoE |

| CHO Cell mAb Production [21] | Artificial Neural Network (ANN) | Increased final mAb titer by up to 48% | Trained on historical data + new experiments |

| Flaviolin Production in P. putida [13] | Active Learning (Automated Recommendation Tool) | Increased titer by 60-70%; increased process yield by 350% | 15 media designs tested per DBTL cycle |

Detailed Experimental Protocols

To ensure reproducibility, below are the detailed methodologies from two key studies cited in the performance table.

CHO Cell mAb Production Optimization using ANN [21]

- Cell Line and Culture: A CHO DG44 cell line producing an IgG1 mAb was used. Cultivations were performed in a small-scale modular bioreactor system (ambr15) with a working volume of 10–15 mL.

- Data Collection: Online data (pH, dissolved oxygen) and daily offline samples were collected. Offline analysis included viable cell density (VCD), viability, and concentrations of metabolites (glucose, lactate) and mAb (IgG1).

- Data Preprocessing: A dataset of 735 data points was curated. Data cleaning removed 19 points due to contamination or equipment issues. Feature engineering was performed to identify the most important process parameters.

- Model Training and Validation: A Multilayer Perceptron (MLP) was selected after comparing classical methods (Linear Regression, PLS, Random Forest). The ANN was trained on the historical and newly generated data to predict mAb titer based on process parameters.

- Optimization and Validation: The trained model suggested new cultivation settings. These were tested in validation experiments, which confirmed the significant increase in final mAb titer.

Flaviolin Production Optimization via Active Learning [13]

- Semi-Automated Pipeline Setup: A system was created integrating an automated liquid handler, a microbioreactor platform (BioLector), and a microplate reader.

- Active Learning Cycle (DBTL):

- Design: The ML algorithm (Automated Recommendation Tool, ART) suggested 15 media designs by varying the concentrations of 12-13 components.

- Build: The liquid handler automatically prepared the media designs according to ART's instructions.

- Test: The media were dispensed into a 48-well plate, inoculated with engineered P. putida, and cultivated for 48 hours. Flaviolin production was measured via absorbance at 340 nm.

- Learn: Production data and media compositions were stored. ART used these to update its model and recommend a new set of 15 media designs for the next cycle.

- Analysis: After multiple DBTL cycles, Explainable AI techniques were applied to the model to identify the most influential media components, revealing NaCl as a critical factor.

The Scientist's Toolkit: Key Reagents and Materials

The successful implementation of an ML-DOE framework relies on a suite of laboratory technologies and reagents.

Table 3: Essential research reagents and solutions for ML-DOE driven bioprocess optimization.

| Item | Function in the Framework | Example from Research |

|---|---|---|

| Automated Bioreactor Systems | Enables high-throughput, reproducible generation of cultivation data under controlled conditions. | ambr15 system [21]; BioLector [13] |

| Automated Liquid Handlers | Precisely and rapidly prepares complex media designs as specified by the ML algorithm, ensuring consistency. | Central to semi-automated pipelines [13] |

| Chemically Defined Media & Feed | Serves as the base for optimization; a defined composition is essential for modeling input-output relationships. | Sartorius Stedim Cellca platform media [21] |

| High-Throughput Assays | Rapidly quantifies key outputs (e.g., product titer, metabolite concentration) to feed data back to the model. | Cedex Bio Analyzer [21]; Absorbance microplate reader [13] |

| Critical Process Parameter (CPP) Stocks | The variables being optimized (e.g., salts, vitamins, pH, temperature). Their systematic variation drives learning. | NaCl was identified as a key CPP for flaviolin production [13] |

The integration of Machine Learning with Design of Experiments represents a significant leap forward in bioprocess optimization. While traditional DoE remains a valuable tool for simpler, well-understood systems, the ML-DOE framework excels in navigating the complexity and non-linearity inherent in biology. As evidenced by the experimental data, its ability to unlock substantial performance gains—such as 48% higher mAb titers and 350% improved process yields—while simultaneously reducing experimental burden, makes it an indispensable approach for modern researchers and drug development professionals. The future of bioprocess development lies in the continued adoption and refinement of these intelligent, data-driven frameworks.

Identifying the Right Problem Type for Each Approach

In the field of bioprocess development, optimizing culture medium is a critical and costly endeavor. Researchers traditionally relied on Traditional Design of Experiments (DOE), but Machine Learning (ML)-guided approaches are now emerging as a powerful alternative. This guide provides an objective comparison of these methodologies, helping scientists select the right tool based on their project's specific constraints and goals.

At a Glance: DOE vs. Machine Learning

The table below summarizes the core characteristics of each approach to help you make an initial assessment.

| Feature | Traditional Design of Experiments (DOE) | Machine Learning (ML)-Guided Optimization |

|---|---|---|

| Core Philosophy | Statistically driven pre-planned experimental matrices [22] [23] | Iterative, data-driven learning loop; an AI-guided R&D methodology [13] [8] |

| Typical Workflow | Single-phase experiment execution followed by model building and analysis [23] | Cyclic "Design-Build-Test-Learn" (DBTL) process [13] |

| Experimental Efficiency | Number of experiments grows exponentially with variables; efficient for local optimization [8] | 50-90% fewer experiments reported; linear growth with variables; suited for global optimization [13] [8] |

| Data Handling | Best with simple, structured, tabular data [8] | Can handle varied, complex, and unstructured data (e.g., micrographs) [8] |

| Model Output | Polynomial regression models (e.g., linear, quadratic) [22] [23] | Flexible models (e.g., tree-based, neural networks); can provide uncertainty estimates [24] [13] [8] |

| Ideal Problem Scope | Problems with a limited number of variables (factors); local optimization [22] [8] | Multi-dimensional, complex problems with large search spaces; leveraging existing data [13] [8] |

| Key Advantage | Well-established, does not require pre-existing data [8] | High data efficiency and ability to find non-obvious, high-performing conditions [13] [8] |

Quantitative Performance Comparison

Independent studies across various domains consistently show that ML methods can match or surpass the predictive accuracy of models derived from Traditional DOE, while often achieving this with greater experimental efficiency.

The table below presents a comparison of model performance from various optimization studies.

| Study / Application | Methodologies Compared | Key Performance Outcomes |

|---|---|---|

| Flaviolin Production in P. putida [13] | ML-led Active Learning vs. Traditional Baseline | ML led to 60% and 70% increases in titer and a 350% increase in process yield over three optimization campaigns. |

| Diclofenac Potassium Removal [25] | RSM vs. Artificial Neural Network (ANN) | Both models were effective, but the ANN model demonstrated superior predictive accuracy compared to the RSM model. |

| Dyeing Process Optimization [22] | Taguchi vs. Box-Behnken (BBD) vs. Central Composite (CCD) | Taguchi achieved 92% accuracy (most efficient), BBD 96%, and CCD 98% accuracy (most accurate). |

| UBC of Shallow Foundations [24] | Six ML Algorithms (e.g., AdaBoost, kNN, RF) | AdaBoost performed best (Training R²: 0.939, Testing R²: 0.881), demonstrating ML's high predictive capability for complex systems. |

Experimental Protocols in Practice

Understanding the detailed workflows of each approach is crucial for selecting and implementing the right methodology.

Detailed Protocol: ML-Guided Medium Optimization

The following protocol is adapted from a study that optimized flaviolin production in Pseudomonas putida using a active learning pipeline [13]. This molecule- and host-agnostic process can be applied to recombinant protein production.

1. Planning and Initial Data Collection:

- Define the Design Space: Select 12-13 variable medium components (e.g., carbon sources, nitrogen sources, salts, trace metals) and their concentration ranges [13].

- Establish a Baseline: Run initial experiments (e.g., using a standard medium) to establish a baseline production titer or yield [13].

- Build an Initial Dataset: Perform a small, space-filling set of experiments (e.g., 15-20 conditions) to generate the initial data required to train the first ML model [8].

2. ML Model Training and Recommendation:

- Train the Model: Use a machine learning algorithm (e.g., the Automated Recommendation Tool - ART, Random Forest, or Bayesian Optimization) to train a model on the collected dataset. The model learns the complex relationships between medium composition and the output (e.g., protein titer) [13].

- Recommend New Experiments: The ML algorithm selects the next set of promising medium compositions to test. It often uses an "exploration vs. exploitation" strategy, balancing testing of high-performing predictions with testing in uncertain regions of the design space [13] [8].

3. Automated Experimental Execution ("Semi-Automated Pipeline"):

- Media Preparation: Use an automated liquid handler to prepare the recommended medium designs in triplicate or quadruplicate in a 48-well plate [13].

- Cultivation: Inoculate the media and cultivate them in a controlled, automated bioreactor platform (e.g., a BioLector) for a defined period (e.g., 48 hours). This ensures high reproducibility [13].

- Product Quantification: Measure the product (e.g., via absorbance for colored compounds, or HPLC for recombinant proteins) using a microplate reader or other high-throughput systems [13].

4. Analysis and Iteration:

- The new production data is fed back into the database (e.g., Experiment Data Depot - EDD) [13].

- The ML model is retrained with the expanded dataset, and the DBTL cycle repeats until a performance target is met or the experimental budget is exhausted [13].

Detailed Protocol: Traditional DOE for Medium Optimization

This protocol outlines the key stages of a "smart" medium optimization process using Traditional DOE, as applied in recombinant protein production [26] [27].

1. Planning:

- Define Objectives: Identify the key response variables (e.g., protein yield, quality, cell growth) [26].

- Select Factors and Levels: Choose which medium components (factors) to investigate and the concentration ranges (levels) to test. The complexity depends on the number of factors and levels [26].

2. Screening:

- Execute Screening Design: Use a screening design (e.g., a fractional factorial or Plackett-Burman design) to identify which of the many potential medium components have a statistically significant impact on the response variables. This reduces the number of factors for subsequent, more detailed optimization steps [26].

3. Modeling and Optimization:

- Execute Detailed DOE: For the significant factors (typically 3-5), run a more detailed experimental design like a Central Composite Design (CCD) or Box-Behnken Design (BBD) [26] [22] [23].

- Build a Response Model: Use regression analysis to fit a quadratic polynomial model (e.g., using Response Surface Methodology - RSM) to the experimental data. The model describes how the factors influence the response [22] [23].

- Find the Optimum: Use the fitted model to identify the factor levels that theoretically produce the maximum or minimum response (e.g., highest protein yield). This is often visualized with contour plots [23].

4. Validation:

- The optimal conditions predicted by the model are experimentally validated in a follow-up experiment to confirm performance [26].

Decision Framework: Choosing Your Approach

The choice between DOE and ML is not about which is universally better, but which is more suitable for your specific context. The diagram below outlines a decision pathway to guide researchers.

Pathway to Selection:

- Choose Machine Learning when facing high-dimensional problems, seeking a global optimum, or when valuable historical data exists. ML is transformational for exploring vast, complex design spaces efficiently [26] [8].

- Choose Traditional DOE when starting from scratch without pre-existing data, when the system is well-understood with few critical factors, or when project goals are focused on local optimization of a known region [8].

- Consider a Hybrid Strategy for a balanced approach. Use Traditional DOE (e.g., a screening design) to identify the most important factors from a large set, then apply ML for in-depth optimization of those key factors [26].

The Scientist's Toolkit: Key Reagents & Materials

The table below lists essential materials and resources used in the featured experiments, particularly in ML-guided bioprocess optimization.

| Item | Function in the Experiment |

|---|---|

| Automated Liquid Handler | Precisely combines multiple stock solutions to create numerous, distinct medium formulations with minimal human error, enabling high-throughput [13]. |

| Microtiter Plates (e.g., 48-well) | Serve as mini-bioreactors for cultivating dozens of different medium conditions in parallel under controlled conditions [13]. |

| Automated Cultivation Platform (e.g., BioLector) | Provides tight control and real-time monitoring of culture conditions (e.g., O₂, pH, shake speed), ensuring highly reproducible growth data across many samples [13]. |

| Microplate Reader | Enables rapid, high-throughput quantification of the product, such as by measuring absorbance for pigments like flaviolin [13]. |

| Machine Learning Platform | Software (e.g., Citrine, custom scripts with ART) that trains models on experimental data and recommends the next best experiments to run, driving the active learning cycle [13] [8]. |

| Stock Solutions | Concentrated solutions of carbon sources, nitrogen sources, salts, trace metals, and buffers used as building blocks for creating defined medium compositions [13]. |

Key Takeaways for Researchers

- For unprecedented processes with little existing data, Traditional DOE provides a robust, reliable starting point.

- For optimizing complex processes with many interacting variables, ML-driven approaches offer significant efficiency gains and a higher probability of discovering high-performing conditions.

- The future is hybrid: Combining the initial screening power of DOE with the deep optimization capabilities of ML presents a powerful strategy for accelerating bioprocess development.

The decision between Traditional DOE and Machine Learning is strategic. By aligning the problem type with the strengths of each approach, researchers and drug development professionals can dramatically increase their R&D efficiency and achieve superior outcomes.

A Practical Guide to Implementing DOE and ML in Your Lab

In the context of medium formulation and optimization research, a fundamental choice often presents itself: using a traditional Design of Experiments (DoE) approach or employing a Machine Learning (ML)-driven method. While ML optimization offers the power to capture complex, non-linear interactions and can enable rapid project turnaround, its success is almost entirely dependent on the quality and quantity of the data used for its training [28].

Traditional DoE provides a structured, statistical framework for acquiring this essential process knowledge efficiently. It is a systematic approach to planning, conducting, and analyzing controlled tests to determine the relationship between multiple input factors (e.g., component concentrations) and output responses (e.g., cell growth, yield) [29] [30]. This guide will provide a detailed, step-by-step protocol for executing a traditional DoE, serving as a critical reference for comparing its performance and data generation capabilities against ML-based techniques.

Core Concepts: Why Move Beyond One-Factor-at-a-Time?

The "one-factor-at-a-time" (OFAT) approach, where a single variable is changed while all others are held constant, is an intuitive but flawed method for complex systems like medium formulation. Its primary weakness is the inability to detect interactions between factors [29] [31]. For example, the optimal level of a growth factor might depend on the concentration of a specific salt. OFAT experiments would miss this interaction, potentially leading to a suboptimal formulation and an incomplete understanding of the system [31].

Traditional DoE overcomes this by varying all relevant factors simultaneously across a predefined experimental space. This allows researchers to not only measure the individual (main) effect of each factor but also to quantify the interaction effects between them, leading to a more robust and predictive model [29].

Key Terminology of DoE

- Factors: The independent input variables that can be controlled and manipulated (e.g., temperature, pH, concentration of components A, B, C) [29] [32].

- Levels: The specific settings or values chosen for each factor (e.g., for Concentration A: 0.1 g/L (low) and 0.5 g/L (high)) [29].

- Responses: The dependent output variables or measured results (e.g., final titer, cell density, product purity) [29] [32].

- Interactions: Occur when the effect of one factor on the response depends on the level of another factor. Uncovering these is a key advantage of DoE [29] [31].

Experimental Protocol: A Step-by-Step Guide

Executing a traditional DoE for medium formulation is a disciplined process. The following workflow outlines the critical stages, from planning to validation.

Diagram Title: Traditional DoE Workflow

Step 1: Define the Problem and Goals

Clearly state the objective of the experiment. For a medium formulation study, this could be "to maximize final product titer while minimizing impurity levels." Identify the key performance indicators (responses) you need to measure [29].

Step 2: Select Factors and Levels

Identify all potential variables (factors) that could influence the responses. These can be quantitative (e.g., concentration of glucose, glutamine) or qualitative (e.g., supplier of a raw material) [32]. Based on prior knowledge or preliminary experiments, select a realistic and sufficiently wide range for each factor by defining its high and low levels [29] [30]. For a screening design, 2-3 factors each at two levels is a common starting point.

Table: Example Factors and Levels for a Medium Formulation Screening DoE

| Factor Name | Factor Type | Low Level (-1) | High Level (+1) |

|---|---|---|---|

| Glucose Concentration | Quantitative | 2.0 g/L | 6.0 g/L |

| Glutamine Concentration | Quantitative | 2.0 mM | 6.0 mM |

| Serum Percentage | Quantitative | 2% | 5% |

Step 3: Choose an Experimental Design

The choice of design depends on the number of factors and the goal of the study.

- Full Factorial Design: The gold standard for investigating a small number of factors (typically ≤ 4). It tests every possible combination of factor levels. For k factors each at 2 levels, it requires 2k runs. This design can estimate all main effects and all interactions [29] [30].

- Fractional Factorial Design: An efficient alternative for screening a larger number of factors (e.g., 5-10). It tests only a carefully selected fraction of the full factorial combinations, sacrificing the ability to measure higher-order interactions in exchange for a significantly reduced number of experiments [29].

For the 3-factor example in the table above, a full factorial design would require 2³ = 8 unique experimental runs.

Table: Full Factorial Design Matrix for 3 Factors

| Run Order | Glucose | Glutamine | Serum | Response: Titer |

|---|---|---|---|---|

| 1 | -1 (2.0 g/L) | -1 (2.0 mM) | -1 (2%) | To be measured |

| 2 | +1 (6.0 g/L) | -1 (2.0 mM) | -1 (2%) | ... |

| 3 | -1 (2.0 g/L) | +1 (6.0 mM) | -1 (2%) | ... |

| 4 | +1 (6.0 g/L) | +1 (6.0 mM) | -1 (2%) | ... |

| 5 | -1 (2.0 g/L) | -1 (2.0 mM) | +1 (5%) | ... |

| 6 | +1 (6.0 g/L) | -1 (2.0 mM) | +1 (5%) | ... |

| 7 | -1 (2.0 g/L) | +1 (6.0 mM) | +1 (5%) | ... |

| 8 | +1 (6.0 g/L) | +1 (6.0 mM) | +1 (5%) | ... |

Step 4: Conduct the Experiments

The experiments must be executed according to a randomized run order generated by statistical software. Randomization is critical to minimize the effects of uncontrolled, "lurking" variables (e.g., minor day-to-day equipment calibration drift) that could bias the results [29] [30].

Step 5: Analyze the Data and Build a Model

Input the experimental results into a statistical software package. The analysis will typically involve:

- Analysis of Variance (ANOVA) to determine which factors and interactions have a statistically significant effect on the responses.

- Generating coefficients for a mathematical model (e.g., a linear or quadratic equation) that describes the relationship between the factors and the response [31].

- Creating visualizations like Pareto charts (to see the relative importance of effects) and interaction plots (to understand how factors influence each other) [30].

Step 6: Validate the Model and Confirm Optimal Settings

Run a small number of confirmation experiments at the factor settings predicted by the model to be optimal. The agreement between the predicted and actual measured values validates the model and confirms the robustness of the optimized medium formulation [29].

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and reagents commonly used in bioprocess development and medium optimization studies.

Table: Essential Reagents for Cell Culture Medium Optimization

| Research Reagent | Function in Medium Formulation |

|---|---|

| Basal Medium | Provides the essential foundation of inorganic salts, amino acids, vitamins, and carbohydrates to support basic cellular metabolism and growth. |

| Growth Factors | Proteins (e.g., IGF, FGF) that signal cells to proliferate and survive, directly impacting final cell density and productivity. |

| Serum | A complex, undefined mixture (e.g., Fetal Bovine Serum) that provides a wide range of growth factors, hormones, and attachment factors. |

| Chemically Defined Supplements | Used to replace serum, these precise formulations (e.g., lipids, trace elements) reduce variability and enhance process control. |

| pH Indicator | A dye (e.g., phenol red) that provides a visual assessment of medium pH, serving as an initial check for metabolic activity and culture health. |

Performance Comparison: Traditional DoE vs. Machine Learning

The following table summarizes a objective comparison between Traditional DoE and ML-based optimization based on available literature and practical considerations.

Table: Performance Comparison of Traditional DoE vs. Machine Learning

| Characteristic | Traditional DoE | Machine Learning |

|---|---|---|

| Underlying Approach | Structured, statistical framework based on predefined matrices and linear regression/RSM [29] [31]. | Ensemble of algorithms (e.g., neural networks, random forest) trained on data to capture complex patterns [28]. |

| Data Generation | Active and Direct: Designs and executes specific experiments to efficiently fill the design space [29]. | Passive and Dependent: Requires a pre-existing dataset (often initially generated by DoE) for training [28]. |

| Handling of Interactions | Excellent at detecting and quantifying two-factor interactions; can model higher-order interactions with more complex designs [29]. | Superior at modeling complex, non-linear, and higher-order interactions if the training data is sufficient [28]. |

| Number of Experiments | Highly efficient and predetermined; provides maximum information from a minimum number of runs (e.g., 8 runs for 3 factors) [29] [31]. | Can be high; requires a substantial dataset for accurate training, though it can predict optima without running every possible combination [28]. |

| Interpretability | High: Produces a transparent model (e.g., an equation) where the effect of each factor is clear and quantifiable [30]. | Low (Black Box): The model's predictions can be difficult to interpret and trace back to specific factor contributions. |

| Best Application | Building foundational process understanding, screening critical factors, and initial optimization under a Quality by Design (QbD) framework [29]. | Fine-tuning and optimization within a well-understood design space, especially for systems with known high non-linearity [28]. |

| Computational Cost | Low to moderate. | High, due to the training of complex models. |

| Regulatory Compliance | Well-established and accepted by regulatory bodies like the FDA as part of a QbD paradigm [29]. | Emerging; requires rigorous validation to demonstrate model predictability and reliability. |

Traditional Design of Experiments remains an indispensable tool in the researcher's arsenal, particularly for the initial stages of medium formulation. Its power lies in its systematic, efficient, and transparent approach to building fundamental process understanding. By revealing critical main effects and interactions through a minimal number of experiments, it creates a robust and defensible knowledge base.

In the broader thesis of ML versus traditional DoE, they are not purely adversarial but often complementary. A traditional DoE is frequently the most scientifically sound and resource-efficient method to generate the high-quality initial dataset required to train a powerful ML model. This synergy allows researchers to first establish causal relationships and a stable operating space with DoE, and then leverage ML to navigate that space and uncover deeper, non-linear patterns for ultimate optimization.

The optimization of culture media is a critical, yet notoriously challenging, step in biopharmaceutical development and regenerative medicine. Both media and biological systems are highly complex, with numerous components interacting in non-linear ways that are difficult to predict. For decades, researchers have relied on traditional One-Factor-at-a-Time (OFAT) approaches and statistical Design of Experiments (DOE) methods to navigate this complexity, despite their recognized limitations. The emergence of machine learning (ML) presents a transformative opportunity to enhance these traditional methodologies, particularly when integrated within a structured data foundation.

This guide objectively compares the performance of traditional DOE, ML, and hybrid ML-DOE approaches for medium optimization, drawing on recent experimental studies and providing the methodological details researchers need to implement these strategies effectively.

Traditional vs. Modern Optimization Approaches

Limitations of Traditional Methods

Traditional optimization methods have been widely used but possess significant drawbacks for complex biological systems:

One-Factor-at-a-Time (OFAT): This approach varies a single factor while holding all others constant. While straightforward and widely taught, OFAT provides limited coverage of the experimental space, fails to identify interactions between factors, and is an inefficient use of resources, often missing the optimal solution [5].

Design of Experiments (DOE): DOE employs a structured set of tests to systematically explore the experimental "space." It is more efficient than OFAT, able to establish solutions with minimal resources. However, it typically requires a minimum entry of approximately 10 experiments and may still require running experiments that researchers anticipate will "fail" [5]. Furthermore, DOE methods like Response Surface Methodology (RSM) often rely on quadratic polynomial approximations, which may be too simple to represent the comprehensive interactions in a highly complex medium [33].

The Rise of Machine Learning and Active Learning

Machine learning, particularly active learning, has emerged as a powerful alternative. Unlike traditional DOE, active learning uses ML algorithms to select which experiments to perform next in an iterative loop, dramatically increasing data efficiency [33] [13].