Breaking the Biomass Barrier: A Guide to Accurate Sequencing with Minimal Input

Abstract

Accurately profiling microbial communities in low-biomass environments (such as human tissues, cleanrooms, and air) is a formidable challenge in microbiome research. Contamination, host DNA, and technical biases can easily obscure true biological signals when DNA input is minimal. This article synthesizes the latest methodological advances, from optimized sampling and specialized library prep to sophisticated bioinformatic decontamination. We provide an actionable framework for researchers and drug development professionals to determine the minimum input requirements for robust 16S rRNA, metagenomic, and long-read sequencing, enabling reliable species-resolution insights from picogram quantities of DNA and paving the way for discoveries in clinical diagnostics and biomedical science.

The Low-Biomass Landscape: Defining Challenges and Critical Thresholds

What Constitutes a Low-Biomass Sample? Key Environments from Clinical to Industrial

Definition and Key Environments of Low-Biomass Samples

In microbiome research, a low-biomass sample is characterized by a low absolute amount of microbial DNA, which approaches the limits of detection of standard DNA-based sequencing methods [1]. In these samples, the target DNA 'signal' can be very close to the contaminant 'noise', making them disproportionately vulnerable to contamination and cross-contamination [1] [2]. Biomass exists on a continuum, and the associated challenges become more pronounced the fewer microbes are present in the sample [2].

The table below summarizes the key environments where low-biomass samples are commonly encountered, spanning clinical, industrial, and natural settings.

Table 1: Key Low-Biomass Environments and Their Associated Challenges

| Environment Category | Specific Examples | Key Characteristics & Challenges |

|---|---|---|

| Clinical & Host-Associated | Human blood [1] [2], respiratory tract [1] [3] [4], placenta [1] [2], fetal tissues [1], breast milk [1], brain [1], tumors [2] | High host-to-microbial DNA ratio; often involves sterile sites where contamination can lead to false positives [2] [5]. |

| Industrial & Built Environments | Dairy/food processing facilities [6], cleanrooms (e.g., for spacecraft assembly) [7], hospital operating rooms [7], treated drinking water [1] | Surfaces are designed to be clean; microbial load is intentionally minimized, making contamination a major concern [7] [6]. |

| Natural Environments | Hyper-arid soils [1], deep subsurface [1] [2], atmosphere/air [1], ice cores [1], glaciers [2], hypersaline brines [1] | Extreme conditions limit microbial life; sample collection is often complex, increasing contamination risk [1]. |

Core Analytical Challenges and Troubleshooting

Working with low-biomass samples presents a unique set of methodological challenges that can compromise biological conclusions if not properly addressed.

The main technical pitfalls in low-biomass research stem from the introduction of non-native DNA and analytical errors.

- External Contamination: This is the unwanted introduction of DNA from sources other than the sample itself. Contaminants can be introduced from laboratory reagents and kits ("kitome") [7] [8], sampling equipment, and personnel during sample collection or processing [1] [2]. In low-biomass samples, these contaminants can constitute a large proportion, or even the majority, of the sequenced DNA [2].

- Cross-Contamination (Well-to-Well Leakage): Also known as the "splashome," this occurs when DNA from one sample leaks into adjacent samples during processing, for example, on a 96-well plate [1] [2]. This can violate the assumptions of many computational decontamination tools [2].

- Host DNA Misclassification: In metagenomic studies of host-associated samples (e.g., tissues, blood), the vast majority of sequenced DNA is from the host. If not properly accounted for, this host DNA can be misclassified as microbial by analysis software, generating noise or even artifactual signals [2].

- Batch Effects and Processing Bias: Differences in protocols, reagent batches, personnel, or laboratory conditions can introduce technical variations that are confounded with the biological groups of interest, leading to false conclusions [2].

Experimental Design and Workflow Troubleshooting

A contamination-aware experimental design is the most critical step in ensuring the validity of a low-biomass study. The following workflow diagram outlines key considerations at each stage.

Essential Research Reagent Solutions

Using the right reagents and tools is fundamental to success. The table below lists key materials for low-biomass research.

Table 2: Essential Research Reagent Solutions for Low-Biomass Studies

| Item | Function | Key Considerations |

|---|---|---|

| DNA-Free Nucleic Acid Extraction Kits | Isolate microbial DNA from samples while minimizing co-extraction of contaminants. | Opt for "ultra-clean" kits designed for low biomass (e.g., for serum/plasma) [8]. Be aware of the kit-specific "kitome" [7]. |

| Personal Protective Equipment (PPE) | Creates a barrier between the operator and the sample to reduce human-derived contamination. | Should include gloves, masks, cleansuits, and shoe covers as appropriate. Critical during sampling and lab processing [1]. |

| DNA Decontamination Solutions | Removes contaminating DNA from surfaces and equipment. | Sodium hypochlorite (bleach) is effective for degrading DNA on surfaces and can even be used to pre-treat silica columns [1] [8]. |

| Negative Controls | Characterize the background contaminant DNA present in reagents and the workflow. | Should include blank extraction controls, no-template PCR controls, and collection kit controls [2]. Multiple controls are essential [1]. |

| Magnetic Bead-Based Purification Systems | High-efficiency recovery of trace amounts of DNA during cleanup steps. | More efficient for low-input samples than traditional spin columns. Can be used with carrier RNA to improve recovery [9]. |

| High-Sensitivity DNA Quantification Kits | Accurately measure the very low concentrations of DNA obtained. | Fluorometric methods (e.g., Qubit) are required over UV spectrophotometry (NanoDrop), which is inaccurate at low concentrations [9]. |

Frequently Asked Questions (FAQs)

What is the single most important step in a low-biomass microbiome study? The most critical step is a rigorous experimental design that includes a comprehensive set of negative controls collected and processed alongside your true samples. These controls are non-negotiable for identifying contamination sources and validating your findings [1] [2] [7].

My DNA concentration is too low for sequencing. What can I do? You have several options:

- Concentrate your eluate: Use a speed vacuum or magnetic beads to reduce the elution volume and increase concentration [7] [9].

- Use a high-sensitivity library prep kit: Some kits are specifically designed for low DNA inputs.

- Employ whole-genome amplification (WGA): Methods like Multiple Displacement Amplification (MDA) can generate sufficient DNA, but may introduce bias [6]. The choice depends on your downstream analysis goals.

How can I tell if my results are valid or just contamination? Compare your experimental samples to your negative controls.

- Taxonomic Profile: Microbes that are dominant in your controls are likely contaminants.

- Abundance: Taxa present in your samples at levels only marginally higher than in your controls should be interpreted with extreme caution.

- Biomass Quantification: Using qPCR to quantify the 16S rRNA gene load in both samples and controls can provide evidence of a true signal; a significantly higher load in samples adds confidence [5].
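The comparison against negative controls described above can be sketched in a few lines of Python. This is a minimal illustration, not a validated decontamination method: the function name `classify_taxa`, the taxon names, the abundance values, and the `min_fold` threshold are all hypothetical and would need to be chosen for your own study.

```python
from statistics import mean


def classify_taxa(sample_abund, control_abund, min_fold=10.0):
    """Label each taxon by comparing mean relative abundance in samples vs. controls.

    sample_abund / control_abund: dict mapping taxon -> list of relative abundances.
    min_fold is an illustrative threshold (an assumption), not a published cutoff.
    """
    labels = {}
    for taxon, sample_values in sample_abund.items():
        sample_mean = mean(sample_values)
        control_values = control_abund.get(taxon, [])
        control_mean = mean(control_values) if control_values else 0.0
        if control_mean == 0.0:
            labels[taxon] = "not seen in controls"
        elif control_mean >= sample_mean:
            # Dominant in controls: most likely a contaminant.
            labels[taxon] = "likely contaminant"
        elif sample_mean < min_fold * control_mean:
            # Only marginally higher than in controls.
            labels[taxon] = "interpret with caution"
        else:
            labels[taxon] = "well above controls"
    return labels


# Made-up relative abundances for three placeholder taxa.
samples = {"taxon_A": [0.30, 0.25], "taxon_B": [0.40, 0.50], "taxon_C": [0.20, 0.15]}
controls = {"taxon_A": [0.60, 0.55], "taxon_B": [0.01, 0.02], "taxon_C": [0.08, 0.10]}
labels = classify_taxa(samples, controls)
```

A label of "well above controls" is supporting evidence only; it does not rule out well-to-well leakage or batch effects.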

Are there specific computational tools to decontaminate my data?

Yes, several R packages exist. The decontam package uses prevalence or frequency to identify contaminants [10]. SCRuB and the newer micRoclean package can account for well-to-well leakage and decontaminate multiple batches, providing a filtering loss statistic to avoid over-filtering [10]. The choice of tool should align with your research goal (e.g., estimating original composition vs. strict biomarker identification) [10].
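To make the "frequency" idea more concrete, here is a simplified Python sketch. It assumes the commonly described rationale that a contaminant's relative abundance falls as total sample DNA concentration rises, whereas a genuine taxon's relative abundance does not depend on concentration. This is not the decontam implementation; the function name `frequency_score` and the example numbers are hypothetical.

```python
import math


def frequency_score(frequencies, dna_concentrations):
    """Ratio of residual sums of squares: contaminant model / non-contaminant model.

    Both inputs must contain strictly positive values (logs are taken).
    Scores well below 1 mean the inverse-concentration (contaminant) model fits better.
    """
    log_f = [math.log(f) for f in frequencies]
    log_c = [math.log(c) for c in dna_concentrations]
    n = len(log_f)

    # Non-contaminant model: log(frequency) is constant across samples.
    mean_f = sum(log_f) / n
    ss_non = sum((y - mean_f) ** 2 for y in log_f)

    # Contaminant model: log(frequency) = intercept - log(concentration), slope fixed at -1.
    shifted = [y + x for y, x in zip(log_f, log_c)]
    intercept = sum(shifted) / n
    ss_con = sum((s - intercept) ** 2 for s in shifted)

    return ss_con / ss_non if ss_non > 0 else float("inf")


# Made-up example: total DNA concentration per sample (arbitrary units).
conc = [0.1, 1.0, 10.0, 100.0]
contaminant_like = frequency_score([0.5, 0.05, 0.005, 0.0005], conc)
genuine_like = frequency_score([0.20, 0.22, 0.18, 0.20], conc)
```

In practice a dedicated, tested package should be preferred; the sketch only shows why DNA quantification data are needed for frequency-based filtering.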

Is 16S rRNA gene sequencing or shotgun metagenomics better for low-biomass samples? For very low-biomass samples, 16S rRNA gene sequencing is currently the more reliable approach. It involves targeted amplification of a single gene, making it more sensitive. Shotgun metagenomics, which sequences all DNA, often yields mostly host DNA in host-associated samples, making it difficult to obtain sufficient microbial sequences for robust analysis [5]. However, protocols for shotgun metagenomics of low-biomass samples are improving [7] [6].

In low-biomass sequencing research, where the target microbial DNA signal is minimal, contamination is not a minor inconvenience—it is a fundamental crisis. When studying samples with low microbial biomass, such as certain human tissues, atmospheric particles, or deep subsurface environments, the DNA from external sources and even the reagents themselves can drastically skew results, leading to false conclusions and irreproducible science. This guide provides actionable troubleshooting and FAQs to help you secure the integrity of your low-input sequencing research.

Troubleshooting Guide: FAQs on Contamination

Contamination can be introduced at virtually every stage of your workflow. The table below summarizes the primary sources and their origins [1].

| Source Category | Specific Examples | Typical Origin Point |

|---|---|---|

| Human Operator | Skin cells, hair, aerosol droplets from breathing | Sample collection, handling in lab |

| Sampling Equipment | Non-sterile swabs, collection vessels, tools | Sample collection, storage |

| Laboratory Reagents & Kits | Enzymes, buffers, purification kits | DNA/RNA extraction, library preparation |

| Laboratory Environment | Airborne particles, bench surfaces, equipment | Sample processing, library preparation |

| Cross-Contamination | Well-to-well leakage during PCR, sample mixing | Library amplification, multiplexing |

How can I prevent contamination during sample collection?

Prevention is the most effective strategy. Key methods include [1]:

- Decontaminate Equipment: Use single-use, DNA-free consumables whenever possible. Reusable equipment should be decontaminated with 80% ethanol (to kill cells) followed by a nucleic acid-degrading solution like sodium hypochlorite (bleach) to remove trace DNA. Autoclaving alone does not remove persistent DNA.

- Use Personal Protective Equipment (PPE): Wear gloves, masks, lab coats, and—for extremely sensitive samples—hair nets and shoe covers. PPE acts as a physical barrier against human-derived contamination from skin, breath, and clothing.

- Collect Sampling Controls: Include controls such as an empty collection vessel, a swab of the air, or an aliquot of preservation solution. Process these controls alongside your samples to identify the profile of contaminating DNA.

My sequencing results show unexpected microbial profiles. How do I determine if it's contamination?

Follow this diagnostic workflow to systematically identify the source of contamination.

My library yield is low. Could this be related to contamination?

Yes, certain contaminants can directly cause low yield by inhibiting enzymatic reactions. The table below outlines common causes and solutions for low library yield, which can be related to contamination or other preparation errors [11].

| Root Cause | Mechanism of Failure | Corrective Action |

|---|---|---|

| Sample Contaminants | Residual phenol, EDTA, salts, or guanidine inhibit ligases and polymerases [11]. | Re-purify input sample; ensure 260/230 ratio > 1.8; use fresh wash buffers. |

| Inaccurate Quantification | UV absorbance (NanoDrop) overestimates concentration by counting non-template background [11]. | Use fluorometric quantification (e.g., Qubit) for accurate measurement of usable DNA/RNA. |

| Overly Aggressive Cleanup | Desired fragments are accidentally removed during size selection or purification [11]. | Optimize bead-to-sample ratios; avoid over-drying magnetic beads. |

| Adapter Dimer Formation | Excess adapters ligate to each other, consuming reagents and dominating the final library [11]. | Titrate adapter-to-insert molar ratio; optimize ligation conditions. |
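Titrating the adapter-to-insert molar ratio requires converting a DNA mass into moles. The sketch below shows that conversion under stated assumptions: an average mass of 650 g/mol per base pair of double-stranded DNA (some protocols use 660), and a 10:1 ratio chosen purely for illustration. The function names are hypothetical; use the ratio recommended by your library prep kit.

```python
AVG_BP_MASS = 650.0  # g/mol per base pair of dsDNA (approximation; an assumption)


def dsdna_pmol(mass_ng, length_bp):
    """Convert a mass of double-stranded DNA (ng) of a given mean length (bp) to pmol."""
    moles = mass_ng * 1e-9 / (length_bp * AVG_BP_MASS)
    return moles * 1e12


def adapter_pmol_needed(insert_ng, insert_bp, molar_ratio):
    """Adapter amount (pmol) for a chosen adapter:insert molar ratio."""
    return dsdna_pmol(insert_ng, insert_bp) * molar_ratio


# 1 ng of 400 bp fragments, with an illustrative 10:1 adapter:insert ratio.
insert_pmol = dsdna_pmol(1.0, 400)
adapter_pmol = adapter_pmol_needed(1.0, 400, molar_ratio=10)
```

At low inputs the required adapter amount becomes very small, which is one reason undiluted adapters tend to produce adapter dimers.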

What are the best practices for nucleic acid extraction from low-biomass samples?

- Optimized Lysis Protocols: For tough samples like bone, a combination of chemical (e.g., EDTA for demineralization) and mechanical homogenization (e.g., bead beating) is often necessary. However, balance is critical, as EDTA is a known PCR inhibitor [12].

- Environmental Control: Maintain precise temperature control (often 55°C–72°C) during digestion and optimize pH conditions to maximize yield while preserving DNA integrity [12].

- Extraction Controls: Always include a "blank" extraction control where nuclease-free water is put through the entire extraction process. This identifies contaminants inherent to your kits and reagents [1].

The Scientist's Toolkit: Essential Reagents & Controls

The following table details key reagents, controls, and equipment essential for conducting reliable low-biomass sequencing research [1] [12].

| Tool Category | Specific Item | Function & Importance |

|---|---|---|

| Decontamination Agents | Sodium Hypochlorite (Bleach) | Degrades nucleic acids on surfaces and equipment; crucial for removing contaminating DNA that ethanol alone cannot. |

| Decontamination Agents | 80% Ethanol | Kills microbial cells on surfaces, gloves, and equipment. Use before a DNA-degrading solution for full decontamination. |

| Essential Controls | Sampling Controls (Blanks) | Identifies contaminants introduced from the collection environment, air, or equipment. |

| Essential Controls | Extraction Blank (Water) | Pinpoints contamination originating from DNA extraction kits and reagents. |

| Essential Controls | PCR/Library Prep Blank | Detects contamination from enzymes, buffers, and tubes used during library construction. |

| Specialized Equipment | Bead Homogenizer (e.g., Bead Ruptor Elite) | Provides controlled, mechanical lysis for tough samples while minimizing DNA shearing through optimized speed and temperature settings [12]. |

| Specialized Equipment | UV-C Crosslinker | Sterilizes plasticware and surfaces by degrading nucleic acids, helping to ensure an RNA/DNA-free work area. |

| Validated Kits | SMARTer Universal Low Input RNA Kit | Utilizes SMART and random priming technology for sensitive cDNA synthesis from low amounts of degraded or poly(A)-lacking RNA (e.g., from FFPE samples) [13]. |

Frequently Asked Questions (FAQs)

Q1: What is well-to-well contamination, and why is it a critical concern in low-biomass research? Well-to-well contamination, or well-to-well leakage, is a previously undocumented form of cross-contamination where genetic material from one sample migrates to neighboring wells in a plate during laboratory processing [14]. This is particularly critical for low-biomass sequencing research because the contaminant DNA can make up a large proportion of the total genetic material in samples with very few microbial cells, severely distorting results and leading to false conclusions about the sample's true composition [14] [1].

Q2: During which steps of the experimental workflow does well-to-well leakage occur? Research has quantified that this contamination occurs primarily during DNA extraction and, to a lesser extent, during library preparation. The contribution of barcode leakage (index hopping) is negligible when using error-correcting barcodes [14] [15].

Q3: Which laboratory methods are more susceptible to this problem? Plate-based DNA extraction methods demonstrate significantly higher levels of well-to-well contamination compared to manual single-tube extraction methods. However, single-tube methods may have higher levels of background contaminants from reagents [14].

Q4: How far can contamination travel across a plate? Contamination events are most frequent in immediately adjacent wells, with a strong distance-decay effect. However, rare transfer events can occur up to 10 wells apart [14].

Q5: How does sample biomass influence the risk? The effect of well-to-well contamination is greatest in samples with lower biomass. In high-biomass samples, the signal from the true sample is strong enough to dwarf the contaminant signal, but in low-biomass samples, the contaminant can dominate [14].

Troubleshooting Guide: Identifying and Mitigating Well-to-Well Leakage

Problem: Suspected Well-to-Well Contamination

You observe unexpected microbial sequences in your negative controls, or the community composition of your low-biomass samples seems to be influenced by their proximity to high-biomass samples on the processing plate.

Investigation and Diagnosis

- Review Your Plate Layout: Check if blanks or low-biomass samples are placed adjacent to high-biomass samples. A non-randomized layout is a primary risk factor [14].

- Analyze Contamination Patterns: Map the sequences from your negative controls and low-biomass samples against the plate layout. Well-to-well contamination often shows a spatial pattern, where contaminants match the sources in neighboring wells, unlike reagent contamination which is more uniform [14].

- Quantify the Impact: Assess how the suspected contamination affects your alpha and beta diversity metrics. Well-to-well leakage can negatively impact both [14].
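One way to check for the spatial pattern described above is to ask, for each blank, what fraction of its reads belong to taxa that dominate a directly adjacent well. The sketch below assumes a standard 8 x 12 plate with well names like "B2"; the function names, taxon names, and read counts are hypothetical.

```python
def neighbors(well, n_rows=8, n_cols=12):
    """Return the wells directly adjacent (including diagonals) to a well such as 'B2'."""
    row, col = ord(well[0]) - ord("A"), int(well[1:]) - 1
    adjacent = []
    for d_row in (-1, 0, 1):
        for d_col in (-1, 0, 1):
            r, c = row + d_row, col + d_col
            if (d_row, d_col) != (0, 0) and 0 <= r < n_rows and 0 <= c < n_cols:
                adjacent.append(f"{chr(ord('A') + r)}{c + 1}")
    return adjacent


def neighbor_match_fraction(blank_well, counts, dominant_taxon):
    """Fraction of a blank's reads assigned to taxa that dominate an adjacent well.

    counts: dict well -> {taxon: read count}
    dominant_taxon: dict well -> dominant taxon of the sample in that well
    """
    neighbor_taxa = {dominant_taxon[w] for w in neighbors(blank_well) if w in dominant_taxon}
    reads = counts[blank_well]
    total = sum(reads.values())
    if total == 0:
        return 0.0
    matched = sum(n for taxon, n in reads.items() if taxon in neighbor_taxa)
    return matched / total


# Made-up example: blank in B2, a high-biomass sample in B1, another far away in H12.
counts = {"B2": {"taxon_X": 80, "taxon_Y": 20}}
dominant = {"B1": "taxon_X", "H12": "taxon_Y"}
fraction = neighbor_match_fraction("B2", counts, dominant)
```

A high fraction across many blanks points toward well-to-well leakage; a contaminant profile that looks the same regardless of position points toward reagents instead.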

Solutions and Best Practices

To reduce and manage well-to-well contamination, implement the following strategies:

- Randomize Samples Across Plates: Do not group all blanks or low-biomass samples together. Randomize their placement relative to high-biomass samples to break up spatial patterns [14].

- Group Samples by Biomass: Whenever possible, process samples of similar biomass together on the same plate, so that low-biomass samples are not sitting next to high-biomass sources [14].

- Choose Extraction Methods Wisely: For critical low-biomass work, consider using manual single-tube extraction protocols or hybrid plate-based cleanups, which have been shown to reduce well-to-well transfer [14].

- Employ Rigorous Controls: Include multiple negative controls (e.g., blank extraction controls) distributed across the plate, not just in a single column. This helps map the pattern and extent of contamination [1].

- Avoid Over-Simplistic Decontamination: Do not automatically remove all taxa found in negative controls, as this can remove genuine signal. Many sequences in blanks may be microbes from other samples in your study (well-to-well contamination), not just reagent-derived contaminants [14].
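A randomized plate layout with blanks scattered across the plate can be generated reproducibly with a seeded random draw. The sketch below is one simple way to do this, assuming an 8 x 12 plate; the function name `randomized_layout` and the sample identifiers are hypothetical.

```python
import random


def randomized_layout(sample_ids, n_blanks, seed=0, n_rows=8, n_cols=12):
    """Assign samples and blanks to randomly chosen wells; returns dict well -> label."""
    wells = [f"{chr(ord('A') + r)}{c + 1}" for r in range(n_rows) for c in range(n_cols)]
    labels = list(sample_ids) + [f"blank_{i + 1}" for i in range(n_blanks)]
    if len(labels) > len(wells):
        raise ValueError("more samples and blanks than wells on the plate")
    rng = random.Random(seed)  # fixed seed so the layout can be regenerated later
    chosen = rng.sample(wells, len(labels))  # unique wells, in random order
    return dict(zip(chosen, labels))


# 80 placeholder samples plus 8 blanks on one 96-well plate.
layout = randomized_layout([f"sample_{i + 1}" for i in range(80)], n_blanks=8, seed=42)
```

A purely random draw can still place several blanks close together by chance, so it is worth inspecting the layout before use and recording the seed alongside the sample metadata.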

Quantitative Data on Well-to-Well Contamination

The following table summarizes key quantitative findings from a systematic study on well-to-well contamination [14].

Table 1: Quantified Characteristics of Well-to-Well Contamination

| Aspect | Finding | Experimental Context |

|---|---|---|

| Primary Source | DNA extraction step | 96-well plate extraction with unique source isolates |

| Effect of Extraction Method | Plate-based methods had more well-to-well contamination than single-tube methods | Comparison of automated plate-based vs. manual column cleanups |

| Typical Contamination Distance | Highest in immediately proximate wells, with a strong distance-decay relationship | Measurement of contamination frequency vs. Pythagorean well distance |

| Maximum Observed Distance | Rare events up to 10 wells apart | 96-well plate layout |

| Impact of Biomass | Greatest in samples with lower biomass | Plates contained high-biomass sources and low-biomass "sink" samples |

Experimental Protocol: Assessing Well-to-Well Contamination in Your Lab

This protocol is adapted from a published experimental design to empirically characterize well-to-well contamination [14].

Objective: To quantify the rate and extent of well-to-well contamination in your laboratory's DNA extraction and library preparation workflow.

Materials:

- Genomic DNA or cultured isolates from 16 unique bacterial species.

- Sterile water or buffer for blanks.

- Low-biomass sample material (e.g., a dilute culture of a distinct organism).

- 96-well plates for DNA extraction and PCR.

- DNA extraction kits (both plate-based and single-tube if comparing methods).

- Library preparation reagents.

Method:

- Plate Layout: Design a 96-well plate layout containing:

- 16 source wells: Each containing a high biomass (~10^8 cells/ml) of a unique bacterial isolate.

- 24 sink wells: Each containing a low biomass (~10^6 cells/ml) of a different, identifiable organism.

- 48 blank wells: Containing no-template control (sterile water). Arrange these in a checkerboard or defined pattern to track contamination sources [14].

- DNA Extraction: Perform DNA extraction on the prepared plate according to your standard plate-based protocol.

- Library Preparation and Sequencing: Proceed with library preparation and sequencing. To control for barcode leakage (index hopping), include a separate plate with replicate wells of a unique control organism processed in its own PCR.

- Bioinformatic Analysis:

- Process sequencing reads to identify operational taxonomic units (OTUs) or amplicon sequence variants (ASVs).

- For each sink well and blank well, identify sequences that map to the unique source isolates.

- Quantify the transfer frequency and read counts from source wells to other wells.

Interpretation:

- Generate a heatmap of your plate layout to visualize cross-contamination.

- Plot contamination frequency against the distance from source wells to confirm the distance-decay relationship.

- A high frequency of source sequences in neighboring sink wells confirms significant well-to-well leakage in your workflow.
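The distance-decay check can be sketched as follows: compute the straight-line distance between wells on the plate grid, then tabulate the fraction of tested wells at each (rounded) distance that contain reads from a given source. Well names such as "D6" and the example data are assumptions for illustration, as are the function names.

```python
import math
from collections import defaultdict


def well_coords(well):
    """Convert a well name such as 'D6' to zero-based (row, column) indices."""
    return ord(well[0]) - ord("A"), int(well[1:]) - 1


def well_distance(a, b):
    """Straight-line (Euclidean) distance between two wells, in units of wells."""
    (r1, c1), (r2, c2) = well_coords(a), well_coords(b)
    return math.hypot(r1 - r2, c1 - c2)


def contamination_by_distance(source_well, contaminated_wells, tested_wells):
    """Fraction of tested wells at each rounded distance that contain source reads."""
    hits, totals = defaultdict(int), defaultdict(int)
    for well in tested_wells:
        d = round(well_distance(source_well, well))
        totals[d] += 1
        if well in contaminated_wells:
            hits[d] += 1
    return {d: hits[d] / totals[d] for d in sorted(totals)}


# Made-up example: one source in D6 and six sink/blank wells that were tested.
tested = ["D5", "D7", "C6", "D8", "D10", "H12"]
contaminated = {"D5", "C6", "D8"}
decay = contamination_by_distance("D6", contaminated, tested)
```

With real data, the same per-distance fractions can be plotted for every source well to see whether contamination falls off with distance.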

Research Reagent Solutions

Table 2: Key Reagents and Materials for Managing Contamination

| Item | Function/Description | Contamination Consideration |

|---|---|---|

| Automated Liquid Handler | For reproducible liquid handling in plate-based workflows. | Reduces human error and cross-contamination; enclosed hoods create a cleaner workspace [16]. |

| HEPA-Filtered Laminar Flow Hood | Provides a sterile air environment for sample handling. | Prevents airborne contaminants from settling on samples or plates [16]. |

| "Ultra-Clean" DNA/RNA Kits | Specially manufactured extraction kits. | Designed with lower levels of inherent kit-borne contaminants, crucial for low-biomass work [8]. |

| DNA Decontamination Solutions | Solutions like sodium hypochlorite (bleach). | Used to decontaminate surfaces and equipment by degrading trace DNA [1]. |

| Aerosol-Resistant Filter Pipette Tips | For liquid handling. | Prevent aerosols and liquids from entering pipette shafts, a common vector for cross-contamination [17]. |

The diagram below outlines the critical control points for well-to-well and background contamination in a typical low-biomass sequencing workflow.

Frequently Asked Questions

What is considered "low input" for DNA sequencing? Low input refers to DNA quantities that are at or below the nanogram (ng) level, extending down to picogram (pg) and even femtogram (fg) ranges [18] [19]. At these levels, the DNA from a sample may be equivalent to that of just a few hundred to a few thousand microbial cells [20].
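To relate a DNA mass to an approximate number of cells, the mass can be converted to genome copies. The sketch below assumes an average of 650 g/mol per base pair of double-stranded DNA and a 4.6 Mbp genome (roughly the size of an E. coli genome), with one genome copy per cell; all three are simplifying assumptions, and the function name is hypothetical.

```python
AVOGADRO = 6.022e23   # molecules per mole
AVG_BP_MASS = 650.0   # g/mol per base pair of dsDNA (approximation; an assumption)


def genome_copies(mass_pg, genome_size_bp):
    """Approximate number of genome copies contained in a given DNA mass (pg)."""
    grams = mass_pg * 1e-12
    return grams * AVOGADRO / (genome_size_bp * AVG_BP_MASS)


GENOME_BP = 4.6e6  # assumed genome size, roughly E. coli-sized

copies_100fg = genome_copies(0.1, GENOME_BP)   # about 20 genome copies
copies_1pg = genome_copies(1.0, GENOME_BP)     # about 200 genome copies
copies_10pg = genome_copies(10.0, GENOME_BP)   # about 2,000 genome copies
```

Under these assumptions, inputs in the 1 to 10 pg range correspond to a few hundred to a few thousand cells, which is consistent with the figures quoted above.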

Why is low-biomass sequencing so challenging? The primary challenges include:

- Contamination: The DNA from your sample can be overwhelmed by background DNA from reagents (the "kitome"), the laboratory environment, or personnel [7] [1] [2].

- Amplification Bias: Whole Genome Amplification (WGA) methods can unevenly amplify sequences, skewing genomic representation based on factors like GC-content [20] [21].

- Technical Biases: Library preparation methods themselves can introduce shifts in GC content, fragment size distributions, and overall community composition, especially at the lowest input levels [20].

What are the most important controls to include? A rigorous experimental design for low-biomass sequencing must include multiple negative controls to identify contamination sources [1] [2]. These should encompass:

- Process Controls: Blank extractions (using water instead of sample) to profile the "kitome" [2] [22].

- Sampling Controls: For surface samples, this includes controls from the sampling equipment and air [1].

- Mock Communities: Samples with known compositions of bacteria to validate that your entire workflow is accurate and unbiased [22].

Can I sequence without amplification? Yes, it is possible but requires highly sensitive technology. One study demonstrated that the MinION nanopore sequencer could correctly identify microbes from a pure culture with input amounts as low as 2 pg of DNA, without any amplification [18]. However, for most complex, low-biomass samples, some form of amplification is currently required.

Technology Benchmarks and Protocols

The following table summarizes the performance of different technologies and kits as reported in various studies for processing low-input DNA.

| Technology / Kit | Minimum Input Demonstrated | Key Observations / Biases | Citation |

|---|---|---|---|

| Nanopore MinION (no amplification) | 2 pg | Successfully identified E. coli and S. cerevisiae; worked with a very low number of active nanopores (50). | [18] |

| Zymo Microbiomics Services (in-house method) | 100 fg | Accurate reconstruction of a mock microbial community standard with little discernible bias. | [19] |

| NuGEN Ovation RNA-Seq System (SPIA) | 500 pg total RNA | Achieved <3.5% rRNA reads; retained transcriptome fidelity for mouse tissues. Compared favorably to poly-A and rRNA depletion methods. | [23] |

| Illumina Nextera XT | 1 pg | Shift towards more GC-rich sequences at lower inputs; increased duplicate read rate. | [20] |

| MALBAC (Single-cell WGA) | 1 pg | Displayed a different GC profile compared to other methods and the unamplified control. | [20] |

| Mondrian (NuGEN Ovation) | 1 pg | GC content shifted towards richer sequences at lower input quantities. | [20] |

Troubleshooting Common Issues

Problem: High levels of human or host DNA in metagenomic data.

- Solution: This is a major challenge for host-associated low-biomass samples (e.g., tissue, blood). Use analysis tools designed to distinguish microbial reads from host reads to avoid misclassification, which can create artifactual signals [2].

Problem: Contamination from reagents or the kitome dominates the sequencing results.

- Solution: This is one of the most critical issues. Incorporate multiple negative controls (blank extractions, etc.) from the start of your experiment. Use computational decontamination tools (e.g., SourceTracker, decontam) that leverage these controls to identify and remove contaminant sequences from your data [7] [1] [2].

Problem: Low library yield or amplification bias.

- Solution:

- Re-purify Input: Ensure your starting DNA is free of contaminants like salts or phenol that inhibit enzymes [11].

- Quantify Accurately: Use fluorometric methods (Qubit) over UV absorbance (NanoDrop) for more accurate DNA quantification [11].

- Optimize Amplification: If using WGA, test different methods and minimize cycles to reduce bias. For PCR-based library prep, avoid overcycling [11] [21].

- Titrate Adapters: Use the optimal adapter-to-insert molar ratio to maximize ligation efficiency and minimize adapter-dimer formation [11].

Experimental Workflow: From Sample to Sequence

The diagram below outlines a generalized workflow for a low-input DNA sequencing experiment, highlighting critical control points.

The Scientist's Toolkit: Essential Research Reagents and Materials

| Item | Function | Example Use Case |

|---|---|---|

| DNeasy PowerLyzer Powersoil Kit | DNA extraction from tough-to-lyse samples, including soil and microbial cultures. | Used to extract DNA from E. coli and S. cerevisiae for ultra-low input sensitivity testing [18]. |

| Maxwell RSC Instrument | Automated nucleic acid extraction system, enabling standardized processing. | Used for extracting DNA from ultra-low biomass surface samples collected with the SALSA device [7]. |

| InnovaPrep CP Concentrator | Concentrates dilute liquid samples using hollow fiber filtration. | Used to concentrate samples from large surface areas into a smaller volume suitable for DNA extraction [7]. |

| Agencourt RNAClean XP Beads | SPRI (Solid Phase Reversible Immobilization) beads for DNA cleanup and size selection. | Used in the Ovation RNA-Seq protocol to purify double-stranded DNA before amplification [23]. |

| ZymoBIOMICS Microbial Community DNA Standard | A defined mock community of known microbial composition. | Serves as a positive control to validate the accuracy and bias of the entire sequencing workflow [18] [22]. |

| SALSA Sampling Device | A handheld device that uses a squeegee and aspiration to sample large surface areas efficiently. | Designed for collecting microbiome samples from ultra-low biomass surfaces like cleanrooms [7]. |

Cutting-Edge Protocols for Ultra-Low Input Sequencing Success

Frequently Asked Questions (FAQs)

1. What is the single most critical factor for ensuring accurate low-biomass sequencing results? The most critical factor is the rigorous use of multiple negative controls throughout the entire process. In low-biomass studies, the signal from contaminating DNA present in laboratory reagents and environments (the "kitome") can easily overwhelm the true environmental signal. Sequencing these controls alongside your true samples is non-negotiable for distinguishing contamination from genuine findings [7] [8].

2. How can I improve sampling efficiency from surfaces? Traditional swabs have low recovery efficiency (~10%). For larger surface areas, specialized devices like the Squeegee-Aspirator for Large Sampling Area (SALSA) can increase recovery to 60% or higher by transferring sampling solution directly into a collection tube, bypassing the inefficient elution step from swab fibers [7].

3. Our RNA sequencing of low-biomass plasma samples shows exogenous sequences. Are these real? Not necessarily. Contaminating RNA molecules have been identified in the silica-based columns of widely used microRNA extraction kits. These artefactual sequences can dominate sequencing libraries. It is essential to perform "mock extractions" using only water to identify these kit-derived contaminants [8].

4. What are the best practices for storing purified RNA? To preserve RNA integrity, divide purified RNA into small aliquots to avoid repeated freeze-thaw cycles. Store aliquots in RNase-free water or TE buffer at –20°C for short-term needs (a few weeks) or at –70°C for long-term storage. Always use tightly sealed, RNase-free containers [24].

5. How do sample preparation practices differ for inorganic trace element analysis? For trace metals analysis, you must avoid glassware, as metals can leach from glass into acidic solvents. Instead, use high-purity polymer materials like polypropylene or fluoropolymer pipette tips and containers. Always wear powder-free nitrile gloves to prevent contamination from powders or skin [25].

Troubleshooting Common Low-Biomass Workflow Failures

Problem: High Levels of Contaminant Sequences in Sequencing Data

- Symptoms: Sequencing results are dominated by non-target species (e.g., Cutibacterium acnes, Paracoccus) that are also present in your negative control samples [7] [8].

- Root Causes:

- Solutions:

- Employ Multiple Controls: Include negative controls at every stage: sample collection (e.g., spraying and aspirating sterile water in the field), DNA extraction (reagent blanks), and library preparation [7].

- Use DNA/RNA-Free Reagents: Source certified DNA-free or "ultra-clean" kits, especially for RNA work where column contamination is known [8] [24].

- Decontaminate Surfaces: Clean work surfaces and tools with reagents like 70% ethanol, 10% bleach, or specific commercial products (e.g., DNA Away) to create a DNA-free environment [26].

- Increase Starting Material: If possible, sample a larger surface area or volume to increase the target analyte concentration above the contamination threshold [8].
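
The control-based check described above can be sketched in Python. This is a minimal illustration under stated assumptions, not a substitute for dedicated decontamination tools: the function name `flag_shared_taxa`, the read-count dictionaries, and the `min_control_reads` cutoff are hypothetical and introduced here only for illustration.

```python
def flag_shared_taxa(sample_counts, control_counts_list, min_control_reads=5):
    """Return taxa found in the sample that also appear in any negative control.

    sample_counts: dict mapping taxon name -> read count in the real sample.
    control_counts_list: list of such dicts, one per negative control
        (e.g., field blank, extraction blank, library-prep blank).
    min_control_reads: hypothetical cutoff so that one or two stray reads
        in a control do not flag a taxon; tune it to your own data.
    """
    flagged = set()
    for control_counts in control_counts_list:
        for taxon, reads in control_counts.items():
            if reads >= min_control_reads and sample_counts.get(taxon, 0) > 0:
                flagged.add(taxon)
    return sorted(flagged)


# Made-up read counts, for illustration only
sample = {"Cutibacterium acnes": 400, "Paracoccus": 100, "Bacillus subtilis": 500}
field_blank = {"Cutibacterium acnes": 80}
extraction_blank = {"Paracoccus": 20, "Ralstonia": 3}

flagged = flag_shared_taxa(sample, [field_blank, extraction_blank])
print(flagged)
```

Flagged taxa are candidates for closer inspection rather than automatic removal, since a genuine community member can also reach a control through cross-contamination.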

Problem: Low DNA Yield from Surface Samples

- Symptoms: Insufficient DNA concentration for downstream library preparation, leading to failed sequencing runs or poor-quality data [7] [11].

- Root Causes:

- Solutions:

- Optimize Collection Method: Consider more efficient samplers like the SALSA device or validate the recovery efficiency of your swabs/wipes [7].

- Concentrate Samples: Use concentration methods such as hollow fiber concentration pipette tips (e.g., InnovaPrep CP) or SpeedVac concentration after collection [7].

- Minimize Sample Transfer: Choose collection methods that require fewer processing steps. The SALSA device, for example, deposits samples directly into a tube, eliminating an elution step [7].

- Validate Each Step: Use qPCR to quantify bacterial 16S rRNA genes at different stages to identify where the greatest losses are occurring [7].
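
The step-by-step qPCR validation above amounts to comparing total 16S rRNA gene copies between consecutive stages. The sketch below assumes you have already converted each qPCR result to total copies (concentration multiplied by volume); the stage names and numbers are invented for illustration, and `stepwise_recovery` is a hypothetical helper.

```python
def stepwise_recovery(copies_by_stage):
    """Return (stage, fraction retained from the previous stage) pairs.

    copies_by_stage: ordered list of (stage_name, total_16S_copies).
    """
    results = []
    for (_, previous), (name, current) in zip(copies_by_stage, copies_by_stage[1:]):
        if previous <= 0:
            raise ValueError("copy numbers must be positive to compute recovery")
        results.append((name, current / previous))
    return results


# Made-up totals, for illustration only
stages = [("collected", 10000.0), ("concentrated", 8000.0), ("extracted", 2000.0)]
recovery = stepwise_recovery(stages)

# The stage with the lowest retained fraction is where most material is lost
worst_stage, worst_fraction = min(recovery, key=lambda item: item[1])
print(recovery, worst_stage)
```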

Problem: Degraded or Poor-Quality RNA

- Symptoms: RNA appears degraded on a bioanalyzer trace, or downstream applications like reverse transcription fail.

- Root Causes:

- Solutions:

- Create an RNase-Free Zone: Designate a workspace cleaned with RNase-deactivating reagents. Use disposable RNase-free plasticware and filter tips. Always wear gloves [24].

- Stabilize Immediately: Flash-freeze tissue samples in liquid nitrogen or use commercial stabilization reagents (e.g., RNAprotect) immediately upon collection to halt enzymatic activity [24].

- Avoid Freeze-Thaw: Aliquot RNA extracts and store them at –70°C. Thaw each aliquot only once for a single use [24].

Experimental Protocols for Low-Biomass Research

Protocol: Microbial Profiling of a Low-Biomass Surface

This protocol outlines a method for rapid on-site characterization of microbiomes from ultra-low biomass surfaces, such as cleanrooms, using nanopore sequencing [7].

Workflow Overview (diagram): Low-Biomass Surface Sampling Workflow

Detailed Steps:

- Surface Sampling:

- Spray the target surface area (~1 m²) with sterile, DNA-free PCR-grade water using a UV-treated spray bottle.

- Use the SALSA device with a sterile, disposable collection head to squeegee and aspirate the liquid into a 5-mL collection tube [7].

- Sample Concentration:

- Concentrate the collected sample immediately using a device like the InnovaPrep CP-150 with a 0.2-µm hollow fiber concentrating pipette tip.

- Elute into a final volume of 150 µL of phosphate-buffered saline (PBS) [7].

- DNA Extraction:

- Extract DNA from a 100 µL aliquot of the concentrated sample using an automated system (e.g., Promega Maxwell RSC) with a kit designed for cells.

- Elute DNA in a small volume (e.g., 50 µL) of 10-mM Tris buffer [7].

- Library Preparation and Sequencing:

- Use a modified version of a low-input nanopore sequencing kit (e.g., Oxford Nanopore's Rapid PCR Barcoding Kit).

- Modifications may include additional PCR cycles to amplify the ultra-low input DNA [7].

- Sequence on a nanopore device (e.g., MinION). The total sample-to-sequencing time can be as little as ~9 hours, providing data within ~24 hours of collection [7].

Protocol: Identification and Removal of RNA Contaminants

This protocol helps identify and mitigate the effects of RNA contamination from extraction kits in small RNA (sRNA) studies of low-biomass samples like blood plasma [8].

Workflow Overview (diagram): RNA Contaminant Identification Process

Detailed Steps:

- Identify Contaminants:

- Perform a "mock extraction" by running nucleic acid-free water through your standard RNA extraction kit (e.g., miRNeasy Serum/Plasma kit) as if it were a real sample.

- Sequence the sRNA from this mock extract. Highly abundant non-host sequences are potential kit contaminants [8].

- Validate by qPCR:

- Design qPCR assays for the highly abundant non-host sequences found in step 1.

- Confirm their presence in your mock extracts and their absence in the nuclease-free water used for the extraction [8].

- Pinpoint the Source:

- Pass nuclease-free water through an otherwise untreated spin column from the kit and collect the eluate.

- If the contaminant sequences are amplified from this column eluate, the spin column is the confirmed source [8].

- Apply Mitigation:

- Option A (Decontamination): Treat columns with an oxidant like sodium hypochlorite, followed by thorough washing with RNase-free water, to reduce contaminant RNA levels by >100-fold. Note: Always validate that this treatment does not compromise the column's performance for your sample type [8].

- Option B (Ultra-Clean Kits): Switch to commercially available "ultra-clean" kits specifically designed for low-biomass work, which show dramatically reduced contaminant levels [8].

- Option C (Minimum Input): Determine and use a minimum input volume of your starting material where the true biological signal reliably exceeds the contaminant background [8].
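
Option C can be approached as a small titration experiment: process several input volumes, count reads assigned to the biological signal and to known kit contaminants, and pick the smallest input that stays above a chosen signal-to-background ratio. The sketch below is an illustration only; the `min_signal_to_background` cutoff of 10, the function name, and the titration values are assumptions, not values from the cited study.

```python
def minimum_reliable_input(titration, min_signal_to_background=10.0):
    """Return the smallest tested input at which this input and every larger
    tested input meet the signal-to-background cutoff, or None if none do.

    titration: list of (input_volume_ul, signal_reads, contaminant_reads).
    """
    best = None
    # Walk from the largest input downwards and stop at the first failure
    for volume, signal, background in sorted(titration, reverse=True):
        ratio = signal / background if background > 0 else float("inf")
        if ratio >= min_signal_to_background:
            best = volume
        else:
            break
    return best


# Made-up titration data, for illustration only
titration = [(50, 900, 600), (100, 2500, 500), (200, 6000, 550), (400, 13000, 500)]
min_input = minimum_reliable_input(titration)
print(min_input)
```

Walking down from the largest input avoids accepting a small input that passes by chance while an intermediate input fails.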

Data Presentation: Quantitative Comparisons

Table 1: Comparison of Surface Sampling Methods for Low-Biomass Recovery

| Method | Typical Recovery Efficiency | Key Advantages | Key Limitations | Best For |

|---|---|---|---|---|

| SALSA Device [7] | ~60% or higher | High efficiency; direct collection into tube; large surface area | Requires specialized device; may be less practical for small, intricate surfaces | Large, flat surfaces in cleanrooms or operating rooms |

| Traditional Swab [7] | ~10% | Inexpensive; readily available; flexible for various surfaces | Low and variable recovery; requires elution step, which causes sample loss | Small or curved surfaces where larger devices cannot be used |

| Wipes/Tape Strips [7] | 10-50% (often lower end) | Can cover large areas | Low recovery efficiency for DNA; requires complex processing and elution | Large, flat surfaces when SALSA is not available |

Table 2: Common Sequencing Preparation Problems and Solutions in Low-Biomass Work

| Problem Category | Typical Failure Signals | Common Root Causes in Low-Biomass Context | Corrective Actions |

|---|---|---|---|

| Sample Input / Quality [11] | Low library yield; high duplicate rate; smear in electropherogram | Sample degradation; contaminants (salts, phenol) inhibiting enzymes; inaccurate quantification of very low concentrations | Re-purify samples; use fluorometric quantification (Qubit) over UV absorbance; include carrier DNA if compatible |

| Contamination [7] [8] | Dominance of non-target species (e.g., C. acnes) in data; same species in negative controls | Kit-derived DNA/RNA ("kitome"); contaminated reagents or lab surfaces | Use ultra-clean kits; employ multiple negative controls; decontaminate workspaces; use dedicated equipment |

| Amplification / PCR [11] | Over-amplification artifacts; high duplicate rate; bias | Too many PCR cycles due to very low input; polymerase inhibitors | Optimize and minimize PCR cycles; use high-fidelity polymerases; ensure complete removal of inhibitors during cleanup |

| Purification / Cleanup [11] | Incomplete removal of adapter dimers; significant sample loss | Wrong bead-to-sample ratio; over-drying beads; small sample volumes being hard to handle | Precisely follow cleanup protocols; avoid over-drying beads; use glycogen or other carriers during precipitation |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Low-Biomass Research

| Item | Function | Consideration for Low-Biomass |

|---|---|---|

| SALSA Sampler [7] | Surface sample collection | Increases recovery efficiency to >60% by avoiding swab elution losses. |

| Ultra-Clean DNA/RNA Kits [8] | Nucleic acid extraction | Specifically manufactured to have lower background contamination. |

| Hollow Fiber Concentrator [7] | Sample concentration | Enables concentration of large volume liquid samples into a small elution volume. |

| RNase/DNase Inactivation Reagents [26] [24] | Workspace decontamination | Critical for creating a DNA/RNA-free environment (e.g., DNA Away, 10% bleach). |

| Negative Control Kits | Process control | Use the same extraction kits and reagents for your negative controls as for your samples. |

| Powder-Free Nitrile Gloves [25] | Personal protective equipment | Prevents contamination from powder particles and skin cells. |

| Non-Glassware Labware [25] | Sample containers and transfers | Use polypropylene or fluoropolymer tubes and tips to avoid leaching of metals and other contaminants from glass. |

Troubleshooting Guides

Common Low-Input Library Preparation Failures and Solutions

| Problem Category | Typical Failure Signals | Common Root Causes | Corrective Actions |

|---|---|---|---|

| Sample Input & Quality | Low starting yield; smear in electropherogram; low library complexity [11] | Degraded DNA/RNA; sample contaminants (phenol, salts); inaccurate quantification [11] | Re-purify input sample; use fluorometric quantification (e.g., Qubit) instead of UV absorbance alone; ensure purity ratios (e.g., 260/280 ~1.8) [11] [27]. |

| Fragmentation & Ligation | Unexpected fragment size; inefficient ligation; adapter-dimer peaks [11] | Over- or under-shearing; improper buffer conditions; suboptimal adapter-to-insert ratio [11] | Optimize fragmentation parameters; titrate adapter:insert molar ratios; ensure fresh ligase and optimal reaction temperature (~20°C) [11] [28]. |

| Amplification & PCR | Overamplification artifacts; high duplicate rate; bias [11] | Too many PCR cycles; inefficient polymerase due to inhibitors; primer exhaustion [11] | Reduce the number of PCR cycles; use a high-fidelity polymerase; ensure primers are not degraded [11] [28]. |

| Purification & Cleanup | Incomplete removal of adapter dimers; high sample loss; carryover of salts [11] | Wrong bead-to-sample ratio; over-drying beads; inadequate washing [11] | Precisely follow bead cleanup protocols; avoid letting beads crack; remove all residual ethanol during washes [11] [28]. |

Low Library Yield: Diagnosis and Action Plan

| Cause of Low Yield | Mechanism of Yield Loss | Corrective Action |

|---|---|---|

| Poor Input Quality / Contaminants | Enzyme inhibition during end-prep, ligation, or amplification by residual salts, phenol, or EDTA [11]. | Re-purify input sample using clean columns or beads; check 260/230 and 260/280 ratios [11] [27]. |

| Inaccurate Quantification | Overestimation of usable DNA mass by NanoDrop leads to suboptimal enzyme stoichiometry [11] [27]. | Use fluorometric methods (e.g., Qubit) for template quantification; calibrate pipettes [11] [27]. |

| Adapter Ligation Issues | Poor ligase performance or incorrect molar ratios reduce adapter incorporation into fragments [11]. | Titrate adapter:insert ratio; ensure fresh ligase and buffer; maintain optimal incubation temperature [11] [28]. |

| Overly Aggressive Cleanup | Desired library fragments are accidentally excluded or lost during size selection steps [11]. | Optimize bead-to-sample ratios for size selection; avoid over-drying beads [11] [28]. |

Frequently Asked Questions (FAQs)

Protocol Selection and Adaptation

Q: What are the critical differences between standard and low-input library prep workflows, and can I use a standard protocol for low-input samples?

A: Low-input protocols are specifically optimized to maximize library yield from limited material. Key differences often include [29]:

- Master Mix Formulation: Low-input workflows may pre-incubate enzymes to enhance library quality for tiny inputs, whereas this can reduce yield for standard inputs [29].

- Size Selection: The low-input protocol's size selection step can result in slightly lower sequencing metrics (e.g., Q30 scores, insert size) [29].

- Quantification: Library yield must be assessed by qPCR before pooling for low-input preps, as yields are not normalized [29].

Using a standard workflow for low-input DNA will result in significantly lower library yields and is not recommended [29].

Q: How can I successfully sequence low-input chromatin conformation capture (3C) libraries, which are notoriously challenging?

A: Traditional multi-contact 3C methods (e.g., Pore-C) require millions of cells. The novel CiFi method overcomes this by incorporating a genome-wide amplification step after the 3C procedure. This step dramatically increases raw sequence yields and read lengths, enabling efficient PacBio HiFi sequencing from as little as ~370 ng of DNA (equivalent to ~62,000 cells) [30].
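
The cell-number equivalence quoted above follows from a simple mass conversion. The sketch below assumes roughly 6 pg of DNA per diploid human cell, a commonly used approximation that is not stated in the source; the function names are illustrative.

```python
PG_PER_DIPLOID_HUMAN_CELL = 6.0  # assumption: approximate DNA mass per diploid human cell


def cells_to_ng(n_cells, pg_per_cell=PG_PER_DIPLOID_HUMAN_CELL):
    """Approximate genomic DNA mass (ng) contained in n_cells."""
    return n_cells * pg_per_cell / 1000.0


def ng_to_cells(mass_ng, pg_per_cell=PG_PER_DIPLOID_HUMAN_CELL):
    """Approximate number of cells needed to supply mass_ng of genomic DNA."""
    return mass_ng * 1000.0 / pg_per_cell


print(cells_to_ng(62000))        # about 372 ng, close to the ~370 ng quoted above
print(round(ng_to_cells(370)))   # about 62,000 cells
```

Real yields are lower than this theoretical mass because extraction is never fully efficient, so treat the result as an upper bound.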

Input Material and Quality Control

Q: What is the minimum input requirement, and how do I quantify my sample accurately?

A: Requirements vary by platform and application.

- PacBio HiFi: Ultra-low-input (ULI) protocols have been demonstrated with inputs as low as 10 ng, with newer refinements (Ampli-Fi) requiring only 1 ng [31].

- Oxford Nanopore: A specific low-input-by-PCR protocol requires 100 ng of sheared genomic DNA [32].

For accurate quantification, do not rely on NanoDrop alone: UV absorbance also counts RNA and other non-template background, so it overestimates DNA concentration. Use a fluorometer (e.g., Qubit) for accurate DNA mass measurement [11] [27].

Q: What are the critical quality checks for my input DNA before starting a low-input protocol?

A: A comprehensive QC check is vital for success [27]:

- Purity: Use a NanoDrop to check 260/280 ratio (~1.8) and 260/230 ratio (2.0-2.2). Low ratios indicate contaminants that require additional purification [27].

- Size: Assess fragment size distribution using an Agilent Bioanalyzer, Femto Pulse system, or gel electrophoresis. Verify that the size matches the expectations of your protocol [27].

- Degradation: Look for smearing on a gel or electropherogram, which indicates degraded DNA that will result in low-complexity libraries [11] [27].
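
The purity criteria above lend themselves to a simple automated pre-flight check. The sketch below is a hypothetical helper, not part of any vendor software: the 260/230 lower bound of 2.0 comes from the range quoted above, while the 260/280 lower bound of 1.7 is an assumed tolerance around the ~1.8 target.

```python
def check_input_purity(a260_280, a260_230, min_260_280=1.7, min_260_230=2.0):
    """Return a list of warnings for absorbance ratios below the expected range.

    min_260_280 is an assumed tolerance around the ~1.8 target;
    min_260_230 is the lower end of the 2.0-2.2 range quoted in the text.
    """
    warnings = []
    if a260_280 < min_260_280:
        warnings.append(f"260/280 = {a260_280:.2f} is low; consider re-purifying the sample")
    if a260_230 < min_260_230:
        warnings.append(f"260/230 = {a260_230:.2f} is low; consider re-purifying the sample")
    return warnings


print(check_input_purity(1.82, 2.10))  # clean sample: no warnings
print(check_input_purity(1.50, 1.20))  # both ratios low: two warnings
```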

During the Experiment

Q: I see a sharp peak at ~127 bp on my Bioanalyzer. What is this and how do I fix it?

A: This is a classic sign of adapter dimer formation [11] [28]. To address this:

- Recovery: Perform another bead cleanup using a 0.9x bead ratio to preferentially remove the smaller dimer fragments [28].

- Prevention: In future preps, ensure your adapter-to-insert molar ratio is optimized. Avoid adding the adapter to the ligation master mix; instead, add the adapter to the sample first, mix, and then add the ligase master mix [28].

Q: What is the expected library recovery rate, and how much should I load onto the sequencer?

A: Recovery depends on experience and the specific kit.

- Recovery Rate: For a standard Nanopore ligation sequencing kit starting with 1 µg of DNA, new users can expect 350-500 ng of final library (35-50% recovery), while experienced users can achieve 600-800 ng (60-80% recovery) [27].

- Sequencer Loading:

Experimental Protocols for Key Low-Input Methods

CiFi: Low-Input Chromatin Conformation Capture with HiFi Sequencing

This protocol enables the analysis of 3D genome architecture from low-input samples, down to ~62,000 cells [30].

Key Steps [30]:

- Cross-linking & Digestion: Cross-link cells with formaldehyde and digest chromatin with a restriction enzyme (e.g., DpnII or HindIII).

- Proximity Ligation: Perform in situ proximity ligation to join cross-linked DNA fragments.

- De-crosslinking & Purification: Reverse cross-links and purify the DNA.

- Whole-Genome Amplification (Critical Step): Amplify the entire 3C library using a high-fidelity PCR enzyme. This step is essential for overcoming the low yields of traditional 3C preps and generating sufficient material for sequencing.

- Size Selection: Select fragments >5 kbp.

- PacBio HiFi Sequencing: Prepare the library and sequence on a system such as Revio.

The following diagram illustrates the core workflow and how the CiFi method overcomes the limitation of traditional long-read 3C sequencing.

Oxford Nanopore Ligation Sequencing for Low Input by PCR

This protocol uses PCR amplification to generate sufficient library from 100 ng of sheared genomic DNA or amplicons [32].

Key Steps [32]:

- DNA End-Prep (for gDNA) / Tailed Primers (for amplicons): For gDNA, repair ends and add dA-tails in a single step. For amplicons, perform a first-round PCR with tailed primers.

- PCR Adapter Ligation & Amplification: Ligate PCR adapters and amplify the library using LongAmp Hot Start Taq Master Mix.

- End-Prep: Repair and dA-tail the amplified DNA ends in preparation for sequencing adapter ligation.

- Adapter Ligation & Clean-up: Ligate sequencing adapters to the DNA and perform a final clean-up.

- Priming and Loading: Prime the flow cell and load the library for sequencing on a MinION or GridION device with an R10.4.1 flow cell.

The workflow is summarized in the following diagram.

The Scientist's Toolkit: Essential Research Reagent Solutions

| Item | Function | Example Use Case |

|---|---|---|

| High-Fidelity PCR Enzyme | Amplifies entire genomes or libraries from low inputs with minimal errors, crucial for WGA and post-3C amplification [30]. | Used in the CiFi protocol to amplify the 3C library after proximity ligation [30]. |

| AMPure XP Beads | Magnetic beads used for post-reaction clean-up and size selection. Critical for removing adapter dimers and selecting the desired fragment size range [32]. | Used in multiple clean-up steps in the Nanopore low-input protocol; a 0.9x ratio can remove adapter dimers [28] [32]. |

| NEBNext Ultra II End Repair/dA-tailing Module | Prepares DNA fragments for adapter ligation by creating blunt ends and adding a single 'A' base to the 3' end [32]. | A key component in the Oxford Nanopore low-input library prep workflow [32]. |

| Qubit Fluorometer & Assay Kits | Provides highly accurate, dye-based quantification of DNA concentration, superior to UV absorbance for measuring usable DNA mass in precious samples [27]. | Essential for quantifying input DNA and final library concentration before sequencing [11] [27]. |

| Agilent 2100 Bioanalyzer | Provides electrophoretic analysis of DNA fragment size distribution and library quality, identifying issues like degradation or adapter dimers [27]. | Used to assess the success of fragmentation and the final library profile before sequencing [11] [27]. |

2bRAD-M (Type IIB Restriction site-associated DNA sequencing for Microbiome) is an advanced sequencing technique designed for species-resolved microbiome profiling of the most challenging samples. This method sequences only about 1% of the metagenome yet simultaneously produces high-resolution taxonomic profiles for bacteria, archaea, and fungi, even with minute amounts of input DNA [33].

Key Technical Advantages

Table 1: Performance Comparison of Microbiome Sequencing Methods

| Technology | Taxonomic Resolution | DNA Input Requirement | Host Contamination Tolerance | Degraded DNA Analysis | Cost | Fungal Identification |

|---|---|---|---|---|---|---|

| 2bRAD-M | Species/Strain level | 1 pg total DNA [33] | High (up to 99% host DNA) [34] | Excellent (50-bp fragments) [33] | Low | Yes |

| 16S rRNA Sequencing | Genus level | Varies | Low | Limited | Low | No [34] |

| Whole Metagenomic Sequencing | Species/Strain level | ≥50 ng preferred [33] | Low | Low | High | Yes |

Table 2: 2bRAD-M Technical Specifications

| Parameter | Specification | Application Benefit |

|---|---|---|

| DNA Input Range | 1 pg to 200 ng [35] [36] | Suitable for extremely low-biomass samples |

| Target Fragment Length | 32 bp (using BcgI enzyme) [34] | Effective with severely degraded DNA |

| Organisms Detected | Bacteria, Archaea, Fungi simultaneously [33] | Comprehensive community profiling |

| Sequencing Coverage | ~1% of genome [33] | Cost-effective alternative to WMS |

| Theoretical Resolution | Species/Strain level [34] | High-precision taxonomic classification |

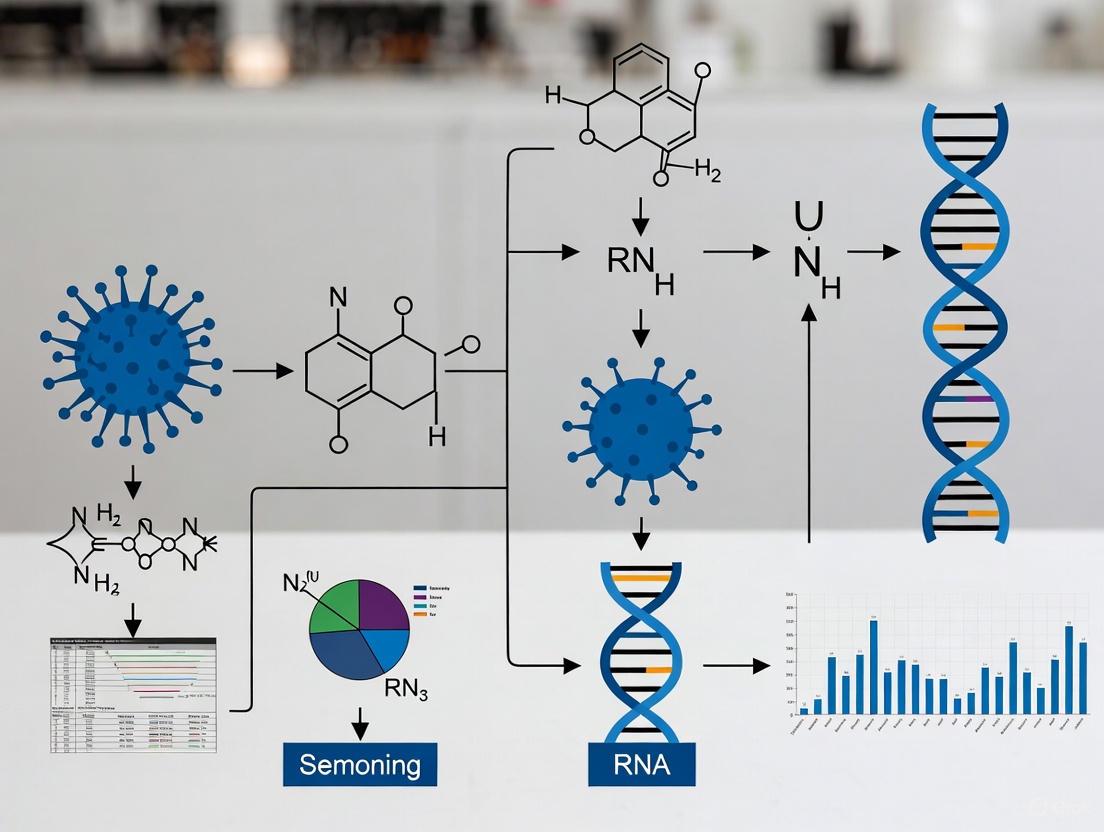

Technical Principles and Workflow

Core Methodology

2bRAD-M utilizes Type IIB restriction enzymes (such as BcgI) that cleave genomic DNA on both sides of their recognition sites, producing uniform, iso-length fragments (typically 32 bp) for sequencing [34] [33]. These taxon-specific sequence tags serve as unique molecular fingerprints, allowing precise identification and quantification of microbial species.

2bRAD-M Experimental and Computational Workflow

Research Reagent Solutions

Table 3: Essential Research Reagents for 2bRAD-M

| Reagent/Equipment | Function | Specifications |

|---|---|---|

| Type IIB Restriction Enzyme (BcgI) | Digests genomic DNA at specific sites | Recognition sequence: CGA-N6-TGC [33] |

| T4 DNA Ligase | Ligates adaptors to digested fragments | 800U reaction volume [37] |

| Phusion High-Fidelity DNA Polymerase | Amplifies ligated fragments | PCR amplification [36] |

| QIAGEN PCR Purification Kit | Purifies library products | Removes enzymes, salts [37] |

| Illumina Sequencing Platform | Sequences 2bRAD libraries | NovaSeq, HiSeq X Ten [37] [36] |

Frequently Asked Questions (FAQs)

Sample Preparation and Input

Q: What is the minimum DNA input required for 2bRAD-M? A: 2bRAD-M can effectively profile microbiomes with as little as 1 picogram (pg) of total DNA [33]. This extreme sensitivity makes it suitable for low-biomass environments like skin surfaces, intervertebral discs, and other tissue samples with minimal microbial load [35].

Q: Can 2bRAD-M handle samples with high host DNA contamination? A: Yes, 2bRAD-M can effectively process samples with up to 99% host DNA contamination [34]. The technology overcomes host contamination through three mechanisms: (1) reduced sequencing of host genome (only ~1%), (2) imbalance in restriction sites favoring microbial genomes, and (3) complete distinction between host and microbial 2bRAD signatures [34].

Q: Is 2bRAD-M suitable for degraded DNA samples? A: Yes. 2bRAD-M has demonstrated excellent performance with severely degraded DNA, including fragments as short as 50 bp and DNA from formalin-fixed paraffin-embedded (FFPE) tissues [33]. The method's reliance on short (32 bp) unique tags makes it well suited to compromised samples that challenge other sequencing approaches [38].

Technical Performance

Q: What taxonomic resolution does 2bRAD-M provide? A: 2bRAD-M delivers species-level resolution and can distinguish between closely related strains [34] [33]. Unlike 16S rRNA sequencing, which typically reaches only genus-level classification, 2bRAD-M identifies specific species, as demonstrated in studies differentiating Staphylococcus epidermidis from other Staphylococcus species [34].

Q: How does 2bRAD-M compare to metagenomic sequencing for low-biomass samples? A: While whole metagenome sequencing (WMS) requires substantial DNA input (≥50 ng preferred) and performs poorly with high host contamination, 2bRAD-M provides species-level resolution with minimal input and high contamination tolerance [33]. A comparative study on cadaver microbiomes found 2bRAD-M overcame host contamination more effectively than metagenomic sequencing [38].

Q: What microorganisms can be detected with 2bRAD-M? A: 2bRAD-M simultaneously detects and quantifies bacteria, archaea, and fungi in a single sequencing run [33] [39]. This gives a broader view of the microbial community than targeted approaches such as 16S rRNA sequencing, which does not capture fungi.

Experimental Design and Analysis

Q: What computational resources are required for 2bRAD-M analysis? A: The standard 2bRAD-M pipeline requires <30 GB of RAM and approximately 10 GB of disk space for database construction [40]. Typical analysis time is about 40 minutes for species profiling of a standard gut metagenome sample, making it compatible with desktop computing resources [40].

Q: How quantitatively accurate is 2bRAD-M? A: 2bRAD-M demonstrates high quantitative accuracy, with L2 similarity scores >0.96 compared to ground truth in validation studies [33]. Its two-step computational approach (an initial qualitative analysis followed by quantitative assessment using a sample-specific database) yields precise abundance estimates while minimizing false positives [33].

Q: Are there specific restriction enzymes recommended for different applications? A: While BcgI is commonly used, 2bRAD-M supports 16 different Type IIB restriction enzymes (AlfI, AloI, BaeI, BplI, BsaXI, etc.) [40]. Enzyme selection can be optimized based on the specific microbial communities of interest, as different enzymes generate distinct tag profiles [33].

Troubleshooting Guides

Low DNA Yield from Challenging Samples

Problem: Insufficient DNA extraction from low-biomass samples like intervertebral disc tissue [35] or urine [37].

Solutions:

- Use specialized DNA extraction kits designed for low-biomass samples (e.g., TIANamp Micro DNA Kit)

- Implement whole genome amplification prior to digestion for extremely low inputs

- Reduce purification steps to minimize sample loss

- Verify DNA quality using fluorometric methods rather than spectrophotometry

Expected Results: Successful profiling of intervertebral disc samples identified 332 microbial species and revealed differential abundance between the Modic change and herniated disc groups [35].

Host Contamination Issues

Problem: Excessive host DNA in samples such as FFPE tissues or blood-contaminated specimens.

Solutions:

- No additional host depletion required—2bRAD-M naturally favors microbial tags

- Ensure proper digestion time (3 hours at 37°C) [37]

- Verify enzyme activity with control reactions

- Optimize PCR cycle number to prevent amplification bias

Expected Results: Effective analysis of FFPE tissue samples despite high host background, enabling species-resolved classification of healthy tissue, pre-invasive, and invasive cancer with 91.1% accuracy [33].

Database and Computational Analysis

Problem: Low species identification rates or high false positives.

Solutions:

- Implement G-score filtering (threshold >5) to control false positives [36]

- Use the two-step analysis approach: initial screening followed by sample-specific database refinement [33]

- Ensure proper database construction using updated reference genomes

- Validate with mock communities when establishing the protocol

Expected Results: High precision (98.0%) and recall (98.0%) in 50-species mock communities, matching or outperforming other profiling tools such as Kraken2 and MetaPhlAn2 [33].
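
When validating against a mock community, the G-score filter and the precision and recall figures above can be checked with a few lines of Python. This sketch assumes the per-species G-scores have already been produced by the profiling pipeline and are supplied as a dictionary; the species names, scores, and function names are placeholders invented for illustration.

```python
def filter_by_g_score(g_scores, threshold=5.0):
    """Keep species whose G-score exceeds the threshold (>5 as quoted above)."""
    return {species for species, score in g_scores.items() if score > threshold}


def precision_recall(predicted, truth):
    """Precision and recall of a predicted species set against a known truth set."""
    true_positives = len(predicted & truth)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    return precision, recall


# Placeholder scores and mock-community composition, for illustration only
g_scores = {"Species A": 42.0, "Species B": 12.5, "Species C": 3.1, "Species D": 7.8}
mock_truth = {"Species A", "Species B", "Species E"}

called = filter_by_g_score(g_scores)
precision, recall = precision_recall(called, mock_truth)
print(sorted(called), round(precision, 3), round(recall, 3))
```

Repeating the calculation at several thresholds shows how strictly the filter can be set before true community members start to be lost.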

Applications in Minimum Input Material Research

2bRAD-M has enabled groundbreaking research across fields where sample material is severely limited:

Forensic Thanatomicrobiome: Characterization of postmortem microbial communities from multiple tissues, even in advanced decomposition states [38].

Cancer Microbiome: Identification of tumor-associated microbes in ovarian cancer tissues with low microbial biomass [36].

Orthopedic Microbiology: Differentiation of microbial communities between Modic changes and disc herniation in intervertebral discs [35].

Urinary Microbiome: Species-level profiling of urinary microbiota in overweight and healthy-weight patients with urinary tract stones [37].

Because it works with as little as 1 pg of DNA, 2bRAD-M is well suited to low-biomass microbiome research, allowing species-level profiling of microbial environments that were previously difficult to access.

FAQs and Troubleshooting Guides

Q1: What is the absolute minimum DNA input for a Nanopore sequencing library?

For standard ligation sequencing kits (like SQK-LSK114) without amplification, the practical lower limit is around 100 ng of High Molecular Weight (HMW) DNA. While outputs of over 50 Gb have been observed from 100 ng of HMW DNA on a PromethION flow cell, starting with only 1 ng of DNA is not feasible for standard protocols and requires a specialized, amplified approach [41].

Q2: What is the main consequence of using a DNA input below the recommended amount?

The primary consequence is significantly reduced sequencing output due to low pore occupancy. Pores will spend more time "searching" for molecules to sequence instead of sequencing continuously. This happens because an underloaded library provides too few "pore-threadable ends" to keep the nanopores occupied [41].

Q3: My DNA sample is very limited (e.g., 100 ng or less). What are my options to proceed?

You have two main strategies to boost your library yield from low inputs:

- Shearing: Shearing HMW DNA (e.g., using a Covaris g-TUBE) increases the number of molecules available for pore threading, which can increase pore occupancy and flow cell output. The trade-off is a reduction in observed read length [41].

- PCR Amplification: Using a PCR Expansion Pack (EXP-PCA001) with a ligation sequencing kit is the recommended method for low inputs. This protocol is validated for 100 ng of sheared gDNA or amplicon DNA and includes a PCR step to amplify the material before sequencing [32].

Q4: How does DNA quality affect sequencing success with low inputs?

With low inputs, sample quality is more critical than ever. Using too little DNA, or DNA of poor quality (e.g., highly fragmented or contaminated with salts, proteins, or organic solvents), can severely affect library preparation efficiency and sequencing yield [27] [32]. Rigorous quality control is non-negotiable.

Key Data and Protocols

Quantitative Data on Input vs. Output

The following tables summarize key quantitative data for planning low-input experiments.

Table 1: Recommended DNA Input Mass for Varying Fragment Sizes (for non-amplified protocols)

| Mass | Molarity if fragment size = 200 bp | Molarity if fragment size = 1 kb | Molarity if fragment size = 8 kb | Molarity if fragment size = 20 kb |

|---|---|---|---|---|

| 1000 ng | - | 200 fmol | 50 fmol | 20 fmol |

| 100 ng | 950 fmol | 100 fmol | 20 fmol | 5 fmol |

| 50 ng | 450 fmol | 50 fmol | 10 fmol | 3 fmol |

| 10 ng | 100 fmol | 10 fmol | 2 fmol | - |

| 5 ng | 50 fmol | 5 fmol | - | - |

Data adapted from Oxford Nanopore's Input DNA/RNA QC protocol [27].
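
For fragment sizes or masses not listed in the table, molarity can be estimated directly from mass and fragment length. The sketch below assumes an average mass of about 660 g/mol per base pair of double-stranded DNA, a standard approximation that is not stated in the source; the function name is illustrative. Results from this idealized calculation can differ from rounded tabulated values, so treat them as estimates.

```python
AVG_BP_MASS_G_PER_MOL = 660.0  # assumption: approximate mass of one dsDNA base pair


def dsdna_fmol(mass_ng, fragment_length_bp, bp_mass=AVG_BP_MASS_G_PER_MOL):
    """Estimate the number of dsDNA molecules (in fmol) for a given mass and length."""
    moles = (mass_ng * 1e-9) / (fragment_length_bp * bp_mass)
    return moles * 1e15


# 100 ng of 8 kb fragments: roughly 19 fmol
fmol_8kb = dsdna_fmol(100, 8000)

# The same 100 ng as 20 kb fragments gives fewer molecules, which is why
# shearing a fixed mass increases the number of ends available to the pores
fmol_20kb = dsdna_fmol(100, 20000)

print(round(fmol_8kb, 1), round(fmol_20kb, 1), round(fmol_8kb / fmol_20kb, 2))
```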

Table 2: Example Sequencing Outputs from 100 ng HMW DNA Input

| Sample Type | Treatment | Total Output (Gb) | Mean Read Length (bases) |

|---|---|---|---|

| Human gDNA | Unsheared | ~5-10 Gb* | High (>20 kb) |

| Human gDNA | Sheared | Increased output (see Fig. 3B) | Reduced (see Fig. 3A) |

| HEK293 (Sample 1) | Not specified | 8.1 Gb | 21,339 |

| Human Blood (Sample 1) | Not specified | 11.6 Gb | 21,523 |

| Mouse Kidney | Not specified | 4.4 Gb | 27,121 |

Data synthesized from Oxford Nanopore [41] and NEB [42]. *Output can vary significantly based on sample quality and flow cell type.

Detailed Protocol: Low Input by PCR

This workflow allows for sequencing with a starting input of 100 ng of DNA [32].

Key Steps and Reagents:

- DNA End-Prep (gDNA input) or Tailed Primers (amplicon input): For gDNA, the ends are repaired and dA-tailed. For amplicons, a first-round PCR with tailed primers (5' TTTCTGTTGGTGCTGATATTGC-[specific sequence] 3' and 5' ACTTGCCTGTCGCTCTATCTTC-[specific sequence] 3') is required [32].

- PCR Adapter Ligation & Amplification: PCR Adapters (PCA) are ligated to the DNA ends. The library is then amplified using PCR Primers (PRM) and a master mix like LongAmp Hot Start Taq 2X Master Mix [32].

- Standard Library Preparation: The amplified product then undergoes a standard end-repair and adapter ligation process using the Ligation Sequencing Kit (SQK-LSK114) to make it ready for the flow cell [32].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagent Solutions for Low-Input Nanopore Sequencing

| Item | Function | Example Product |

|---|---|---|

| PCR Expansion Kit | Enables amplification of limited starting material to generate sufficient DNA for library prep. | EXP-PCA001 (Oxford Nanopore) [32] |

| Fluorometric Quantifier | Accurately measures double-stranded DNA mass; critical for low-input work, where spectrophotometers (e.g., NanoDrop) often overestimate DNA concentration. | Qubit Fluorometer (Thermo Fisher) [27] [43] |

| HMW DNA Shearing Device | Shears DNA to create more molecules from a limited mass, boosting pore occupancy and yield (at the cost of read length). | Covaris g-TUBE [41] |

| SPRI Beads (Solid-Phase Reversible Immobilization) | Purify and size-select DNA fragments during library prep, removing enzymes, salts, and short fragments. | Agencourt AMPure XP Beads (Beckman Coulter) [32] |

| Library Prep Kit | The core chemistry for preparing DNA libraries for sequencing on nanopore flow cells. | Ligation Sequencing Kit V14 (SQK-LSK114) [32] |

Troubleshooting Guide: Common Experimental Issues and Solutions

| Problem | Possible Cause | Solution |

|---|---|---|

| Low DNA Yield | Ultra-low biomass sample below kit detection limits [7]. | Use an InnovaPrep CP-150 or similar concentrator; modify protocols with carrier DNA [7]. |

| High Background Contamination | Contamination from reagents ("kitome"), lab environment, or personnel [7] [1]. | Use multiple negative controls; employ DNA-free reagents and PPE [7] [1]. |

| Inconsistent/No PCR Amplification | PCR inhibitors present or DNA concentration too low [7]. | Increase PCR cycles; add a concentration step; use low-input library kits [21]. |

| Well-to-Well Leakage (Cross-Contamination) | Contamination between adjacent samples on a plate [2]. | Randomize sample placement; include inter-well controls; use physical seals [2]. |

| Poor Sequencing Classification | High proportion of "noise reads"; incomplete reference databases [7]. | Use specialized bioinformatics pipelines; apply stringent quality filters [7]. |

Frequently Asked Questions (FAQs)

Q1: What is the minimum surface area we should sample for reliable results? The featured study successfully sampled areas of approximately 1 m² using the SALSA device [7]. For swab-based methods, the NASA standard assay often uses a 10 x 10 cm (100 cm²) area [7]. The key is to sample the largest area practical for your environment to maximize biomass collection.

Q2: How many negative controls are sufficient for a reliable study? While the optimal number can vary, the consensus is that two control samples are always preferable to one [2]. For critical studies or when high contamination is expected, more replicates are recommended. You should include a variety of control types, such as:

- Empty collection kit controls

- Sterile water samples (from the same container used for sampling)

- Extraction blanks

- No-template PCR controls [2] [1]

Q3: Our negative controls show microbial reads. Is our study compromised? Not necessarily. Contaminants in controls are expected; the purpose of the controls is to characterize the "noise" so it can be distinguished from the "signal" [1]. If the biomass and microbial profile of your actual samples differ significantly from those of the controls, your results may still be valid. However, if samples and controls are indistinguishable, the data from those batches should be interpreted with extreme caution or discarded [2].
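
The sample-versus-control comparison described in Q3 can be made concrete with a very simple heuristic: flag any taxon whose mean relative abundance in the negative controls is at least as high as in the real samples. The sketch below is illustrative only. The function names, the `ratio` threshold, and the read counts are made up for the example, and this is not a substitute for a dedicated statistical decontamination method.

```python
def relative_abundance(counts: dict) -> dict:
    """Convert raw read counts per taxon into proportions of the profile total."""
    total = sum(counts.values())
    return {taxon: (n / total if total else 0.0) for taxon, n in counts.items()}


def mean_relative_abundance(profiles: list, taxon: str) -> float:
    """Mean proportion of one taxon across profiles (absent taxa count as 0)."""
    rels = [relative_abundance(p).get(taxon, 0.0) for p in profiles]
    return sum(rels) / len(rels)


def flag_likely_contaminants(samples: list, controls: list, ratio: float = 1.0) -> set:
    """Flag taxa whose mean proportion in controls is >= ratio x that in samples."""
    taxa = {taxon for profile in samples + controls for taxon in profile}
    flagged = set()
    for taxon in taxa:
        in_controls = mean_relative_abundance(controls, taxon)
        in_samples = mean_relative_abundance(samples, taxon)
        if in_controls > 0 and in_controls >= ratio * in_samples:
            flagged.add(taxon)
    return flagged


if __name__ == "__main__":
    # Made-up read counts, for illustration only.
    samples = [
        {"Staphylococcus": 900, "Ralstonia": 100},
        {"Staphylococcus": 800, "Ralstonia": 200},
    ]
    controls = [
        {"Ralstonia": 90, "Staphylococcus": 10},
        {"Ralstonia": 50},
    ]
    print(sorted(flag_likely_contaminants(samples, controls)))
```

Relative abundance alone can mislead when controls contain very few reads, so a check like this is best read alongside absolute measures of biomass.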

Q4: Can we use whole genome amplification (WGA) for these samples? WGA can be used but requires careful consideration. Isothermal methods like Multiple Displacement Amplification (MDA) are common for low-biomass samples [21]. However, WGA can introduce biases and artifacts, such as chimeric sequences, and it amplifies contaminating DNA alongside the target DNA [21]. It is often preferable to first explore low-input library preparation kits designed for inputs as low as 1-5 ng, or in some cases 10 pg [7] [21].

Experimental Protocol: Rapid Nanopore Sequencing for Ultra-Low Biomass Surfaces

This protocol is adapted from the on-site method developed for a NASA Class 100K cleanroom [7].

Phase 1: Sample Collection with the SALSA Device

- Surface Pre-wetting: Spray the target surface area (~1 m²) with 2 mL of sterile, DNA-free PCR-grade water using a UV-treated spray bottle [7].

- Aspiration: Using a new, sterile collection tip for each sample, deploy the SALSA aspirator over the entire pre-wet area. The device will collect the liquid and deposit it directly into a 5-mL collection tube [7].

- Controls: For every sampling batch, collect a process control by aspirating the sprayer water without surface contact, plus a laboratory negative control of sterile water [7].

Phase 2: Sample Concentration

- Concentrate the collected liquid sample (e.g., from 2 mL down to 150 µL) using a device like the InnovaPrep CP-150 with a 0.2-µm hollow fiber concentrating pipette tip [7].

- Transfer a 100 µL aliquot for DNA extraction.
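
The arithmetic behind the concentration step is worth making explicit. The sketch below uses the volumes quoted above and assumes ideal (100%) recovery through the concentrator, which real devices will not achieve; the function names are hypothetical.

```python
def concentration_factor(start_ul: float, final_ul: float) -> float:
    """Fold-increase in concentration when a volume is reduced (ideal recovery)."""
    if start_ul <= 0 or final_ul <= 0:
        raise ValueError("volumes must be positive")
    return start_ul / final_ul


def fraction_extracted(aliquot_ul: float, concentrate_ul: float) -> float:
    """Fraction of the concentrated sample that is carried into DNA extraction."""
    if not 0 < aliquot_ul <= concentrate_ul:
        raise ValueError("aliquot must be positive and no larger than the concentrate")
    return aliquot_ul / concentrate_ul


if __name__ == "__main__":
    factor = concentration_factor(2000, 150)  # 2 mL -> 150 µL
    carried = fraction_extracted(100, 150)    # 100 µL aliquot of 150 µL
    unconcentrated = 100 / 2000               # same aliquot without concentrating
    print(f"concentration factor: {factor:.1f}x")
    print(f"collected material entering extraction: {carried:.0%}")
    print(f"same aliquot taken without concentration: {unconcentrated:.0%}")
```

Under these assumptions, concentrating 2 mL to 150 µL is a roughly 13-fold enrichment, and a 100 µL aliquot carries about two thirds of the collected material into extraction rather than the 5% that the same aliquot of unconcentrated liquid would contain.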

Phase 3: DNA Extraction and Library Preparation

- Extract DNA using a commercial kit (e.g., Maxwell RSC) with an elution volume of 50 µL or lower to maximize DNA concentration [7].

- Use a modified version of Oxford Nanopore's Rapid PCR Barcoding Kit for library preparation. This protocol is chosen for its speed (~9 hours from sample to sequencing) and suitability for lower DNA inputs [7].

Research Reagent Solutions

| Item | Function in the Protocol |

|---|---|

| SALSA Sampling Device | A handheld, battery-operated device that uses a vacuum and squeegee to efficiently sample large surface areas (up to 1 m²) with high recovery efficiency, bypassing the need for elution from swabs [7]. |

| DNA-Free Water | Sterile, PCR-grade water used to wet surfaces and as a sampling fluid. Its DNA-free nature is critical to prevent introducing contamination during the first step of sampling [7] [1]. |

| InnovaPrep CP-150 Concentrator | A device used to concentrate large volume liquid samples into a much smaller volume (e.g., 2 mL to 150 µL), thereby increasing the concentration of any microbial cells or DNA for downstream processing [7]. |

| Oxford Nanopore Rapid PCR Barcoding Kit | A library preparation kit designed for speed and lower DNA inputs. The protocol can be modified to work with the ultra-low DNA concentrations typical of cleanroom samples [7]. |

| Hollow Fiber Concentration Tip | A disposable tip used with the concentrator that captures microbial cells and DNA on a 0.2-µm polysulfone membrane before eluting them in a small volume [7]. |

| Multiple Displacement Amplification (MDA) Kit | A form of whole genome amplification (WGA) that can be used as an alternative to generate sufficient DNA for sequencing from picogram quantities of starting material, though it may introduce bias [21]. |

From Pitfalls to Precision: A Troubleshooting Guide for Reliable Data

FAQs on Batch Effects and Controls in Low-Biomass Research

What is batch confounding and why is it particularly problematic in low-biomass studies?

Batch confounding occurs when technical differences between processing batches coincide, partly or completely, with the biological groups you are comparing. For example, if all your "control" samples are processed in one batch and all "case" samples in another, any technical differences between these batches can create false biological signals or mask real ones [44] [2].

In low-biomass research, where genuine biological signals are faint, this technical variation can overwhelmingly dominate your data. This has led to major controversies and retractions in the field, such as early claims about placental microbiomes that were later shown to be driven by contamination confounded with sample groups [2].
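
A quick tabulation of biological group against processing batch shows whether a design is confounded before any sequencing data are analyzed. The sketch below is a minimal illustration; the function names and the example labels are hypothetical.

```python
from collections import defaultdict


def batch_composition(groups: list, batches: list) -> dict:
    """Count samples of each biological group within each processing batch."""
    if len(groups) != len(batches):
        raise ValueError("groups and batches must have the same length")
    table = defaultdict(lambda: defaultdict(int))
    for group, batch in zip(groups, batches):
        table[batch][group] += 1
    return {batch: dict(counts) for batch, counts in table.items()}


def fully_confounded(groups: list, batches: list) -> bool:
    """True when every batch holds a single group, so batch determines group."""
    composition = batch_composition(groups, batches)
    more_than_one_group = len(set(groups)) > 1
    return more_than_one_group and all(len(c) == 1 for c in composition.values())


if __name__ == "__main__":
    groups = ["case"] * 3 + ["control"] * 3
    confounded = ["batch1"] * 3 + ["batch2"] * 3
    balanced = ["batch1", "batch2", "batch1", "batch2", "batch1", "batch2"]
    print(batch_composition(groups, confounded), fully_confounded(groups, confounded))
    print(batch_composition(groups, balanced), fully_confounded(groups, balanced))
```

A design that passes this check can still be partially confounded (for example, batches that are 90% cases), so the composition table itself deserves a look as well as the boolean.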

How can I identify the presence of batch effects in my dataset?

You can use several visualization and quantitative methods to detect batch effects:

- Principal Component Analysis (PCA): In PCA plots of your raw data, if samples cluster strongly by processing batch rather than biological group, it indicates a batch effect [45].

- t-SNE/UMAP Plots: Similar to PCA, these clustering visualizations may show samples grouping by batch when batch effects are present [45].

- Quantitative Metrics: Metrics such as the k-nearest neighbor batch effect test (kBET) or the Adjusted Rand Index (ARI) provide numerical scores of batch effect strength [45].

Table: Methods for Batch Effect Detection

| Method | What It Shows | Interpretation |

|---|---|---|

| PCA | Dimensional reduction showing sample grouping | Samples cluster by batch rather than biology |

| t-SNE/UMAP | Non-linear clustering of samples | Fragmented clusters aligned with batch identity |

| kBET | Statistical test for batch mixing | Lower p-values indicate significant batch effects |

| ARI | Agreement between cluster assignments and batch labels | Values near 1 indicate clusters that follow batch; values near 0 indicate well-mixed batches |
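
To show what an ARI score measures in this setting, the sketch below computes the Adjusted Rand Index between a set of cluster assignments and the batch labels using only the standard library. It is a minimal illustration with made-up labels; the function name is hypothetical, and in practice an established implementation from a statistics or machine-learning library would normally be used.

```python
from collections import Counter
from math import comb


def adjusted_rand_index(labels_a: list, labels_b: list) -> float:
    """Adjusted Rand Index between two labelings of the same samples."""
    if len(labels_a) != len(labels_b):
        raise ValueError("labelings must have the same length")
    n = len(labels_a)
    if n < 2:
        raise ValueError("need at least two samples")

    # Pairs of samples that share a label in both, in A only, and in B only.
    both = sum(comb(c, 2) for c in Counter(zip(labels_a, labels_b)).values())
    in_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    in_b = sum(comb(c, 2) for c in Counter(labels_b).values())

    expected = in_a * in_b / comb(n, 2)  # agreement expected by chance
    maximum = (in_a + in_b) / 2
    if maximum == expected:
        return 1.0  # degenerate case, e.g. both labelings are a single group
    return (both - expected) / (maximum - expected)


if __name__ == "__main__":
    batch = ["A", "A", "A", "B", "B", "B"]
    clusters_by_batch = [0, 0, 0, 1, 1, 1]  # clusters mirror batch
    clusters_mixed = [0, 1, 0, 1, 0, 1]     # clusters cut across batch
    print(adjusted_rand_index(clusters_by_batch, batch))
    print(adjusted_rand_index(clusters_mixed, batch))
```

When the score is computed against batch labels, a value close to 1 means the clustering reproduces the batches, while a value near or below 0 means batches are mixed across clusters.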

What types of controls are essential for reliable low-biomass research?

Proper controls are critical for distinguishing contamination from true signal in low-biomass studies [2]:

Negative Controls: These include:

- Empty extraction controls: Contain no sample, identifying contaminants from DNA extraction kits and reagents [2]

- No-template PCR controls: Contain water instead of sample, revealing contamination during amplification [2]

- Surface/solvent controls: Sample sterile surfaces or solvents used in collection [2]

Positive Controls: Known (mock) microbial communities or synthetic spike-ins verify that your entire workflow can detect microbes when they are present [46].

Process-Specific Controls: Since contamination can enter at multiple stages, collect controls representing each processing step and equipment type [2].

What are the most effective methods for correcting batch effects?

Multiple computational approaches can correct batch effects:

- Harmony: Uses PCA and iterative clustering to remove batch effects [45] [47]

- Seurat Integration: Employs canonical correlation analysis and mutual nearest neighbors to align datasets [45] [47]

- ComBat: Uses an empirical Bayes framework to adjust for batch effects [44] [48]

- LIGER: Applies integrative non-negative matrix factorization to identify shared and batch-specific factors [45] [47]

Table: Comparison of Batch Effect Correction Methods

| Method | Primary Approach | Best For | Considerations |

|---|---|---|---|

| Harmony | PCA + iterative clustering | Single-cell RNA-seq | Fast, preserves biological variance |

| Seurat | CCA + mutual nearest neighbors | Single-cell & spatial transcriptomics | Identifies "anchors" between datasets |

| ComBat | Empirical Bayes | Bulk RNA-seq & microarray | Can over-correct with small sample sizes |

| MNN Correct | Mutual nearest neighbors | Single-cell RNA-seq | Computationally intensive for large datasets |

How can I prevent batch confounding through experimental design?

The most effective approach is preventing batch confounding during experimental planning:

- Balance Samples Across Batches: Ensure each batch contains similar proportions of all biological groups (e.g., equal numbers of case and control samples in each processing batch) [48]

- Randomize Processing Order: Randomly assign samples to processing batches rather than grouping by experimental condition [44]

- Document All Batch Variables: Record technical factors like reagent lots, personnel, equipment, and processing dates [44] [2]

- Use Positive and Negative Controls in Every Batch: Include controls in each processing batch to monitor batch-specific contamination [2]
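
The first two points, balancing and randomizing, can be combined in a stratified random assignment: shuffle the samples within each biological group, then deal them across batches in turn. The sketch below is one possible way to do this, not a prescribed procedure; the function name, sample IDs, and seed are hypothetical.

```python
import random
from collections import defaultdict


def assign_batches(sample_groups: dict, n_batches: int, seed: int = 0) -> dict:
    """Randomly assign samples to batches while balancing groups across batches.

    sample_groups maps sample ID -> biological group. Samples are shuffled
    within each group and then dealt round-robin across the batches.
    """
    if n_batches < 1:
        raise ValueError("n_batches must be at least 1")
    rng = random.Random(seed)  # fixed seed so the plan can be reproduced

    by_group = defaultdict(list)
    for sample, group in sorted(sample_groups.items()):
        by_group[group].append(sample)

    assignment = {}
    offset = 0  # carries over so leftover samples spread across batches
    for group in sorted(by_group):
        members = by_group[group]
        rng.shuffle(members)
        for i, sample in enumerate(members):
            assignment[sample] = (i + offset) % n_batches
        offset += len(members)
    return assignment


if __name__ == "__main__":
    samples = {f"case_{i}": "case" for i in range(6)}
    samples.update({f"ctrl_{i}": "control" for i in range(6)})
    plan = assign_batches(samples, n_batches=3, seed=42)
    for batch in range(3):
        print(batch, sorted(s for s, b in plan.items() if b == batch))
```

Recording the seed alongside reagent lots and processing dates keeps the assignment itself part of the documented batch metadata.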

What are the signs of overcorrection when applying batch effect correction methods?

Overcorrection occurs when batch removal also removes genuine biological signal:

- Loss of Expected Markers: Canonical cell-type or condition-specific markers disappear from differential expression results [45]

- High Overlap in Markers: Cluster-specific markers become largely identical across different cell types or conditions [45]

- Appearance of Ubiquitous Markers: Genes with widespread high expression (e.g., ribosomal genes) become top markers [45]

- Scarce Differential Expression: Few or no significant hits in pathways where differences are biologically expected [45]

Troubleshooting Guide: Common Problems and Solutions

Problem: Suspected Batch Confounding

Symptoms:

- Strong separation of samples by processing date, reagent lot, or personnel in PCA/t-SNE plots [45]

- Statistical associations that align perfectly with batch variables rather than biological logic [44]

- Inability to replicate findings across different processing batches [2]

Solutions:

- Analyze Batches Separately: Assess whether results generalize across batches rather than pooling confounded data [2]

- Include Batch Covariates: In differential analysis, include batch as a covariate in statistical models [44]

- Apply Batch Correction: Use methods like Harmony, ComBat, or Seurat integration on appropriately designed studies [45] [47]

- Collect Additional Data: Process a subset of samples across multiple batches to disentangle batch effects from biological effects [2]