Comparative Genomic Analysis of Emerging Pathogens: From Surveillance to Drug Discovery


Abstract

This article provides a comprehensive overview of the transformative role of comparative genomic analysis in understanding and combating emerging pathogens. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles of genomic epidemiology, detailing advanced methodologies from whole-genome sequencing to AI-driven analysis. The article further addresses critical challenges in study design and optimization, presents robust frameworks for data validation and quality control, and synthesizes key takeaways to outline future directions for biomedical research and clinical application. By integrating the latest research and real-world case studies, this review serves as a strategic guide for leveraging pathogen genomics in public health and therapeutic development.

Unveiling Pathogen Emergence: Foundational Concepts and Genomic Epidemiology

Defining Emerging Infectious Diseases and the Role of Pathogen Genomics

Emerging infectious diseases (EIDs) are defined as infections that have recently appeared within a population or whose incidence or geographic range is rapidly increasing or threatens to increase in the near future [1]. This category includes previously undetected or unknown infectious agents, known agents that have spread to new geographic locations or populations, and known agents whose role in specific diseases had gone unrecognized [1]. The re-emergence of agents whose incidence had declined significantly in the past, termed re-emerging infectious diseases, is a further significant public health challenge [1]. Since the 1970s, approximately 40 infectious diseases have been discovered, including SARS, MERS, Ebola, chikungunya, avian flu, swine flu, Zika, and most recently COVID-19 [1].

The critical importance of EIDs lies in their potential to cause widespread morbidity and mortality, disrupt societies and economies, and challenge public health systems globally. The World Health Organization noted in its 2007 report that infectious diseases are emerging at an unprecedented rate [1]. Multiple factors contribute to this emergence, including population growth, migration from rural areas to cities, international air travel, poverty, wars, destructive ecological changes, and climate change [1]. Particularly concerning is that many emerging diseases arise when infectious agents in animals are passed to humans (zoonoses), as the expanding human population increasingly comes into contact with animal species that are potential hosts of infectious agents [1].

In this evolving landscape, pathogen genomics has revolutionized how we detect, monitor, and respond to EIDs. The application of next-generation sequencing (NGS) technologies has transformed public health approaches to infectious diseases, enabling earlier detection, more precise investigation of outbreaks, and better characterization of microbes [2]. Genomic surveillance provides public health agencies with powerful tools to improve their effectiveness across almost all domains of infectious disease management, from foodborne illness outbreaks to tuberculosis control and influenza surveillance [2]. This guide examines the pivotal role of comparative genomic analysis in emerging pathogen research, providing a detailed comparison of methodological approaches and their applications in modern public health practice.

Pathogen Genomics Technologies and Workflows

Fundamental Sequencing Technologies

Pathogen genomics relies on several core sequencing technologies, each with distinct advantages and applications for EID research. Next-generation sequencing (NGS), also called high-throughput sequencing, represents a fundamental advance over Sanger sequencing, which was developed in the 1970s [2]. NGS began with the commercial release of massively parallel pyrosequencing in 2005 and has since become rapidly more efficient, with sequencing costs falling by as much as 80% year over year [2].

The primary sequencing approaches used in pathogen genomics include:

- Whole-genome sequencing (WGS): Provides complete genomic information for comprehensive analysis of pathogens

- Metagenomic next-generation sequencing (mNGS): Sequences all nucleic acids in a sample without targeting, enabling broad pathogen detection

- Targeted next-generation sequencing (tNGS): Enriches specific genetic targets before sequencing, increasing sensitivity for particular pathogens

Each approach offers distinct advantages depending on the research or public health objective, and understanding their comparative performance is essential for effective study design and implementation in EID investigations.

Comparative Performance of Genomic Methodologies

Recent research has directly compared the performance characteristics of different sequencing approaches for pathogen detection. The following table summarizes key findings from a comprehensive comparative study of sequencing methods for lower respiratory tract infections:

Table 1: Performance comparison of sequencing methodologies for pathogen detection

| Parameter | Metagenomic NGS (mNGS) | Capture-based tNGS | Amplification-based tNGS |
|---|---|---|---|
| Number of species identified | 80 | 71 | 65 |
| Cost per sample | $840 | Not specified | Not specified |
| Turnaround time | 20 hours | Shorter than mNGS | Shortest among methods |
| Accuracy | Lower than tNGS | 93.17% | Lower than capture-based tNGS |
| Sensitivity | Lower than capture-based | 99.43% | Poor for gram-positive (40.23%) and gram-negative bacteria (71.74%) |
| DNA virus specificity | Not specified | Lower (74.78%) | Higher (98.25%) |
| Key advantage | Detection of rare pathogens | Optimal for routine diagnostics | Rapid results with limited resources |

These comparative data, derived from a study of 205 patients with suspected lower respiratory tract infections, show that capture-based tNGS achieved significantly higher diagnostic performance than the other two NGS methods when benchmarked against comprehensive clinical diagnosis [3]. The fundamental difference between these approaches lies in their workflows: mNGS aims to sequence as much DNA and/or RNA from a sample as possible, whereas tNGS workflows enrich specific genetic targets before sequencing [3].
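The accuracy, sensitivity, and specificity figures above are standard confusion-matrix quantities computed against a reference diagnosis. The Python sketch below spells out those definitions; the `ConfusionCounts` type and the example counts are hypothetical and are not data from [3], and whether a study tallies calls per sample or per pathogen is a design choice the formulas themselves do not settle.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Assay calls tallied against the reference (comprehensive clinical) diagnosis."""
    tp: int  # pathogen reported by the assay and confirmed by the reference
    fp: int  # pathogen reported by the assay but not confirmed
    tn: int  # nothing reported and nothing confirmed
    fn: int  # pathogen confirmed by the reference but missed by the assay


def diagnostic_metrics(c: ConfusionCounts) -> dict[str, float]:
    """Return sensitivity, specificity and accuracy as fractions in [0, 1]."""
    total = c.tp + c.fp + c.tn + c.fn
    return {
        "sensitivity": c.tp / (c.tp + c.fn),  # share of true positives detected
        "specificity": c.tn / (c.tn + c.fp),  # share of true negatives cleared
        "accuracy": (c.tp + c.tn) / total,    # share of all calls that agree
    }


# Hypothetical counts for illustration only (not data from the cited study).
metrics = diagnostic_metrics(ConfusionCounts(tp=90, fp=20, tn=80, fn=10))
print({name: round(value, 3) for name, value in metrics.items()})
```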

Experimental Workflows and Protocols

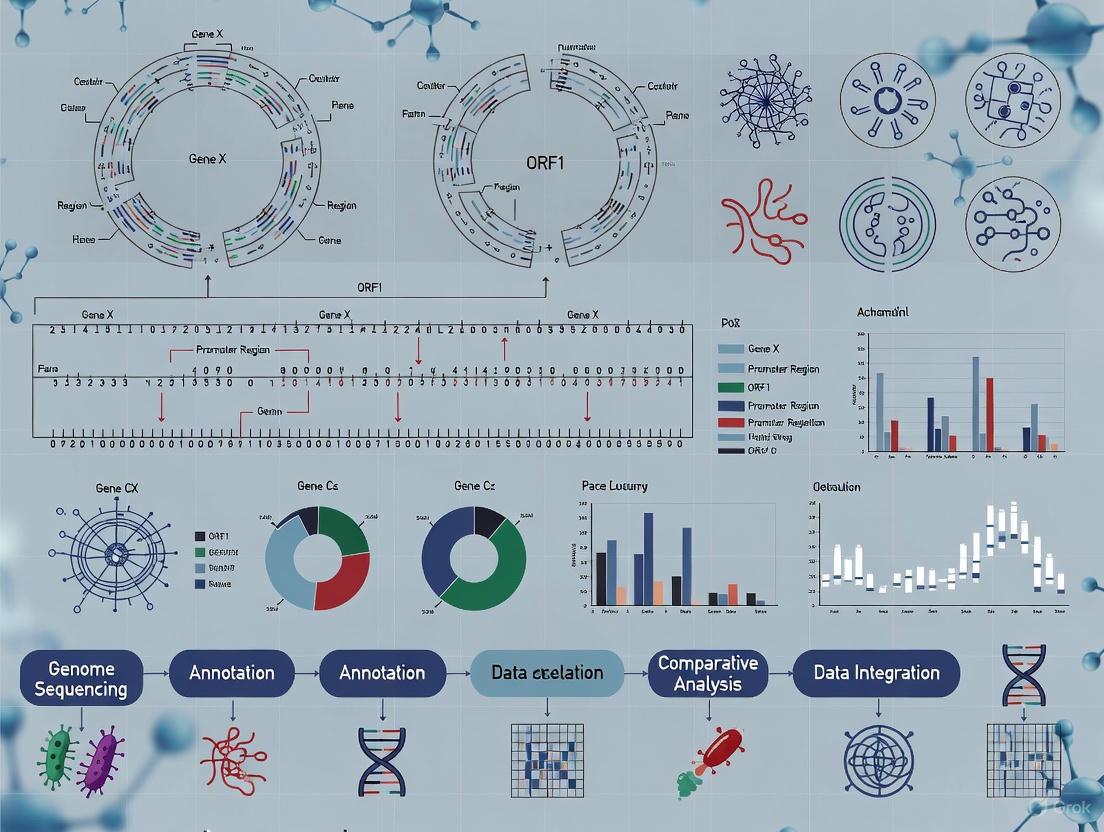

The experimental workflow for pathogen genomic analysis involves multiple critical steps, each requiring specific protocols and quality control measures. The following diagram illustrates a generalized workflow for pathogen genomic sequencing and analysis:

Diagram 1: Generalized pathogen genomics workflow

For metagenomic NGS, the detailed protocol involves several critical steps. DNA is typically extracted with specialized kits such as the QIAamp UCP Pathogen DNA Kit, followed by host DNA depletion using Benzonase and Tween 20 [3]. For RNA viruses, total RNA is extracted with kits such as the QIAamp Viral RNA Kit, and ribosomal RNA is then removed with a Ribo-Zero rRNA Removal Kit [3]. The RNA is reverse transcribed and amplified with systems such as the Ovation RNA-Seq System. After fragmentation, libraries are constructed from the combined DNA and reverse-transcribed cDNA using systems such as the Ovation Ultralow System V2, and sequencing is typically performed on platforms such as the Illumina NextSeq 550Dx with 75-bp single-end reads [3].

For targeted NGS, two primary enrichment methods exist. Amplification-based tNGS uses pathogen-specific primers in ultra-multiplex PCR to enrich target pathogen sequences. One described protocol uses a Respiratory Pathogen Detection Kit with 198 microorganism-specific primers covering bacteria, viruses, fungi, mycoplasma, and chlamydia [3]. The process comprises two rounds of PCR amplification, followed by purification and sequencing on platforms such as the Illumina MiniSeq. Capture-based tNGS instead uses probe hybridization to enrich target sequences; protocols begin with sample lysis followed by mechanical disruption by bead beating on a vortex mixer [3].

Quality control measures throughout these workflows are essential. Negative controls, such as peripheral blood mononuclear cell samples from healthy donors or sterile deionized water, should be processed in parallel with each batch to monitor for contamination [3].
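One common way to use such negative controls in metagenomic data is to normalise read counts to reads per million (RPM) and report a taxon only if it is clearly enriched relative to the batch control. The sketch below assumes a 10-fold RPM ratio and a three-read floor; both cut-offs, the function names, and the read counts are illustrative assumptions rather than the protocol used in [3].

```python
def reads_per_million(taxon_reads: int, total_reads: int) -> float:
    """Normalise a raw read count by the library's total read count."""
    return taxon_reads / total_reads * 1_000_000


def filter_against_control(
    sample: dict[str, int],
    control: dict[str, int],
    sample_total: int,
    control_total: int,
    min_ratio: float = 10.0,  # assumed cut-off: sample RPM / control RPM
    min_reads: int = 3,       # assumed floor below which nothing is reported
) -> list[str]:
    """Return taxa that stand out against the batch's negative control."""
    kept = []
    for taxon, reads in sample.items():
        if reads < min_reads:
            continue  # too few reads to report at all
        sample_rpm = reads_per_million(reads, sample_total)
        control_rpm = reads_per_million(control.get(taxon, 0), control_total)
        # Taxa absent from the control pass; others must exceed the ratio.
        if control_rpm == 0 or sample_rpm / control_rpm >= min_ratio:
            kept.append(taxon)
    return sorted(kept)


# Hypothetical read counts for one sample and its water control.
sample = {"Streptococcus pneumoniae": 5000, "Cutibacterium acnes": 40,
          "Haemophilus influenzae": 1200}
control = {"Cutibacterium acnes": 30}
print(filter_against_control(sample, control, 20_000_000, 5_000_000))
```

Here the skin commensal is dropped because its RPM in the sample is lower than in the water control, while the two respiratory pathogens, absent from the control, are kept.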

Key Research Reagents and Solutions

Successful pathogen genomics research relies on specialized reagents and tools optimized for different aspects of the workflow. The following table catalogues essential research reagent solutions for genomic analysis of emerging pathogens:

Table 2: Essential research reagents for pathogen genomic analysis

| Reagent Category | Specific Examples | Function & Application |
|---|---|---|
| Nucleic Acid Extraction Kits | QIAamp UCP Pathogen DNA Kit, QIAamp Viral RNA Kit, MagPure Pathogen DNA/RNA Kit | Extraction and purification of pathogen nucleic acids from clinical samples |
| Host Depletion Reagents | Benzonase, Tween 20 | Selective degradation of host nucleic acids to increase pathogen sequencing sensitivity |
| rRNA Removal Systems | Ribo-Zero rRNA Removal Kit | Depletion of ribosomal RNA to improve detection of non-ribosomal pathogen RNA |
| Reverse Transcription & Amplification Systems | Ovation RNA-Seq System, SuperScript IV Reverse Transcriptase | cDNA synthesis from RNA pathogens and amplification of nucleic acids |
| Library Preparation Kits | Ovation Ultralow System V2, Illumina DNA Prep Kit, Respiratory Pathogen Detection Kit | Preparation of sequencing libraries with appropriate adapters and barcodes |
| Target Enrichment Systems | Custom probe panels (e.g., Illumina Pan-CoV library panel), pathogen-specific primer sets | Selective enrichment of target pathogen sequences for increased sensitivity |
| Sequencing Platforms | Illumina NextSeq, MiniSeq, NovaSeq; Oxford Nanopore GridION/MinION | High-throughput sequencing of prepared libraries |
| Bioinformatics Tools | nf-core/viralrecon, Pangolin, Nextclade, Bowtie2, iVar | Data processing, variant calling, lineage assignment, and phylogenetic analysis |

These research reagents form the foundation of robust pathogen genomics workflows. The selection of specific reagents depends on the pathogen type, sample matrix, sequencing approach, and research objectives. For instance, the use of specialized panels like Illumina's Pan-CoV library panel has been instrumental in identifying novel coronaviruses in wildlife reservoirs, as demonstrated by the discovery of novel avian gammacoronaviruses in feral pigeons [4].

Applications in Public Health and Research

Genomic Epidemiology and Outbreak Investigation

Pathogen genomics has reshaped public health approaches to infectious disease surveillance and outbreak investigation, as several key applications show:

Foodborne Illness Surveillance: The transition from pulsed-field gel electrophoresis (PFGE) to whole-genome sequencing (WGS) in programs like PulseNet has dramatically improved outbreak detection and investigation [2]. Compared with PFGE, WGS offers vastly finer resolution: typically, a three- to six-million base-pair sequence, in contrast to a gel pattern with ten to twenty bands that reflect changes in small parts of the genome [2]. This enhanced resolution allows for more precise linking of cases and identification of transmission sources. In the first three years of WGS implementation for Listeria surveillance (September 2013 through August 2016), 18 outbreaks were solved (6 per year) with a median of just 4 cases per outbreak, compared to only 5 outbreaks total in the 20-year period before PulseNet [2].
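The finer resolution of WGS is typically turned into case linkage by computing pairwise SNP distances between isolates and grouping those that fall under a threshold. The sketch below uses single-linkage clustering on pre-aligned sequences; the 2-SNP threshold, the function names, and the toy sequences are arbitrary assumptions, since production pipelines generally work on core-genome SNP or allele (cgMLST/wgMLST) distances with pathogen-specific cut-offs.

```python
from itertools import combinations


def snp_distance(a: str, b: str) -> int:
    """Count positions where two aligned sequences carry different unambiguous
    bases; gaps and N are ignored."""
    if len(a) != len(b):
        raise ValueError("sequences must be aligned to equal length")
    bases = set("ACGT")
    return sum(1 for x, y in zip(a, b) if x in bases and y in bases and x != y)


def snp_clusters(seqs: dict[str, str], threshold: int) -> list[set[str]]:
    """Single-linkage clusters: two isolates share a cluster if a chain of
    pairs, each within `threshold` SNPs, connects them (union-find)."""
    parent = {name: name for name in seqs}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    for a, b in combinations(seqs, 2):
        if snp_distance(seqs[a], seqs[b]) <= threshold:
            parent[find(a)] = find(b)

    groups: dict[str, set[str]] = {}
    for name in seqs:
        groups.setdefault(find(name), set()).add(name)
    return sorted(groups.values(), key=sorted)


# Toy aligned sequences for hypothetical isolates.
isolates = {
    "case1": "ACGTACGTAC",
    "case2": "ACGTACGTAT",
    "case3": "ACGAACGTAT",
    "case4": "TTTTACGAAC",
}
print(snp_clusters(isolates, threshold=2))
```

Single linkage is the simplest choice and can chain distant isolates through intermediates; that is one reason investigators still review clusters against epidemiological data.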

Tuberculosis Control: WGS provides much finer resolution subtyping of Mycobacterium tuberculosis than older DNA fingerprinting technologies, allowing health department investigators to detect clusters of cases that may be linked to recent transmission with greater confidence [2]. This enables more targeted interventions to stop transmission chains.

Influenza Surveillance: The United States has implemented a "sequence first" approach to influenza virus characterization, where antigenic type and subtype can be inferred directly from sequence data [2]. This approach provides more detailed and timely information for vaccine strain selection and monitoring of antiviral resistance.

SARS-CoV-2 Surveillance: The COVID-19 pandemic demonstrated the critical importance of genomic surveillance for tracking viral evolution and informing public health responses. Large-scale phylogenetic analyses have enabled a detailed understanding of variant emergence and spread [5]. For instance, discrete phylogeographic analysis of Omicron BA.5 introductions into the United States revealed that, although the earliest introductions came from Africa (the putative origin of the variant), most came from Europe, consistent with high air-travel volumes [5].
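Discrete phylogeography treats sampling location as a trait evolving along the tree and counts inferred location changes, which is how introductions are tallied. Published analyses generally fit probabilistic discrete-trait models (for example in BEAST); the Python sketch below substitutes the simpler Fitch parsimony algorithm on a toy binary tree, with ties broken alphabetically as an arbitrary assumption, so it illustrates the idea rather than reproducing the method of [5]. All names and the tree are hypothetical.

```python
from collections import Counter
from typing import Union

# A leaf is a location label; an internal node is a (left, right) tuple.
Tree = Union[str, tuple]


def annotate(tree: Tree) -> tuple:
    """Bottom-up Fitch pass: return (candidate locations, annotated children)."""
    if isinstance(tree, str):
        return {tree}, []
    left, right = (annotate(child) for child in tree)
    # Keep shared candidates if any; otherwise a change is needed, keep the union.
    states = (left[0] & right[0]) or (left[0] | right[0])
    return states, [left, right]


def assign(node: tuple, parent_state: str, counts: Counter) -> None:
    """Top-down pass: inherit the parent's location when it is a candidate,
    otherwise take the alphabetically first candidate and record the change."""
    states, children = node
    state = parent_state if parent_state in states else min(states)
    if state != parent_state:
        counts[(parent_state, state)] += 1
    for child in children:
        assign(child, state, counts)


def location_transitions(tree: Tree) -> Counter:
    """Count inferred (from, to) location changes over the whole tree."""
    root = annotate(tree)
    root_state = min(root[0])
    counts: Counter = Counter()
    for child in root[1]:
        assign(child, root_state, counts)
    return counts


# Toy tree: an early African lineage plus two US clades nested in European diversity.
tree = ("Africa", (("Europe", ("USA", "USA")), ("Europe", ("USA", "USA"))))
print(location_transitions(tree))
```

On this toy tree the reconstruction infers two Europe-to-USA introductions and one Africa-to-Europe change; parsimony ignores branch lengths and uncertainty, which is why probabilistic models are preferred in practice.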

Understanding Transmission Dynamics and Pathogen Evolution

Genomic analysis provides powerful insights into the transmission dynamics and evolutionary pathways of emerging pathogens:

Mycoplasma pneumoniae Resurgence: Genomic epidemiological analysis of the 2023 Mycoplasma pneumoniae outbreak in Beijing revealed that the resurgence was not attributable to a novel variant but stemmed from the resurgence of pre-existing strains [6]. The study sequenced 160 M. pneumoniae genomes and identified ST3 and ST14 as the predominant sequence types; the rate of macrolide-resistance mutations remained at 100% in ST3, while in ST14 it increased rapidly [6]. This type of analysis helps explain the changing epidemiology and antimicrobial resistance patterns of respiratory pathogens.

Variant Emergence and Spread: Phylogeographic analysis of SARS-CoV-2 Omicron BA.5 emergence in the United States demonstrated extensive domestic transmission between different regions, driven by population size and cross-country transmission between key hotspots [5]. Most BA.5 virus transmission within the United States occurred between three regions in the southwestern, southeastern, and northeastern parts of the country [5]. This understanding of spatial transmission patterns informs targeted surveillance and intervention strategies.

Wildlife Reservoir Surveillance: Genomic analysis of pathogens in animal reservoirs provides early warning of potential emergence threats. For example, the discovery of novel avian gammacoronaviruses in feral pigeons using next-generation sequencing highlights the utility of these technologies in uncovering hidden viral diversity in wildlife populations [4]. This approach aligns with One Health principles that recognize the interconnectedness of human, animal, and environmental health.

The integration of pathogen genomics into public health practice has fundamentally transformed our approach to emerging infectious diseases. Comparative genomic analysis provides unprecedented resolution for detecting outbreaks, tracking transmission, understanding pathogen evolution, and guiding interventions. The methodological comparisons presented in this guide demonstrate that the choice of sequencing approach must be guided by the specific use case: broad pathogen detection (mNGS), routine diagnostic testing (capture-based tNGS), or rapid results with limited resources (amplification-based tNGS).

As sequencing technologies continue to advance and costs decline, the role of genomics in managing emerging infectious diseases will expand further. Future directions will likely include greater integration of genomic data with clinical and epidemiological information, more rapid point-of-care sequencing technologies, and enhanced global data sharing networks. The decentralized genomic surveillance circuit established in Andalusia, Spain, which sequenced over 42,500 SARS-CoV-2 genomes and tracked the transition through multiple variant waves, demonstrates the feasibility of large-scale sequencing within decentralized healthcare systems [7]. Such frameworks provide a model for future pandemic preparedness.

The ongoing challenge of emerging infectious diseases requires continued investment in genomic surveillance infrastructure, bioinformatics capabilities, and interdisciplinary collaboration across the One Health spectrum. By leveraging the powerful tools of comparative genomic analysis, researchers, public health professionals, and drug development specialists can enhance our collective ability to detect, understand, and respond to the continuous threat of emerging pathogens.

Core Principles of Genomic Epidemiology and Phylodynamics

Genomic epidemiology represents a transformative discipline that integrates pathogen genome sequencing with epidemiological data to track and understand the spread of infectious diseases. This field leverages the genomic signatures left by pathogen evolution during transmission to generate evidence about disease spread and sources [8]. Simultaneously, phylodynamics combines evolutionary biology and epidemiology to infer population-level transmission dynamics from genetic data, exploiting how pathogen genetic diversity accumulates over epidemiological timescales [8]. Together, these approaches have revolutionized outbreak investigations, enabling researchers to identify transmission clusters, uncover unsampled transmission links, and monitor the emergence of variants with concerning properties such as enhanced virulence or antimicrobial resistance [9] [10].

The foundational principle underlying these fields is measurable evolution—the phenomenon whereby pathogens accumulate genetic diversity on the same timescale as transmission occurs, making this diversity informative about transmission timing and patterns [8]. This principle has been successfully applied to diverse pathogens, from rapidly evolving viruses like SARS-CoV-2 and Ebola to bacterial pathogens including Acinetobacter baumannii and Salmonella [8] [9] [11]. The COVID-19 pandemic particularly highlighted the value of genomic surveillance, with global sequencing efforts producing millions of SARS-CoV-2 genomes that enabled real-time tracking of variants and informed public health responses [9].

Foundational Methodological Frameworks

Core Analytical Models

Phylodynamic analyses rely on mathematical models that connect epidemiological processes with observable genetic data. The two foundational tree priors used in phylodynamics are the coalescent and birth-death models, each with distinct assumptions and applications [8].

The coalescent model originated in population genetics and operates backward in time, modeling how sampled lineages merge (coalesce) into common ancestors [8] [10]. This framework is particularly useful for inferring historical population dynamics from genetic data and operates most effectively when the sample size is small relative to the total population size [10]. The coalescent rate depends on the effective population size (Nₑ(t)), which represents the size of an idealized population that would generate the observed genetic diversity [8]. In infectious disease contexts, changes in effective population size reflect fluctuations in the number of infections over time, providing insights into epidemic growth or decline.
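The link between Nₑ and the shape of the tree can be made concrete. In the standard Kingman coalescent with constant size Nₑ (haploid, time in generations), k lineages merge at rate k(k-1)/(2Nₑ), so the expected time back to the common ancestor of n samples is 2Nₑ(1 - 1/n). The sketch below, which assumes constant Nₑ, computes that expectation and checks it by simulation; for time-scaled pathogen trees the quantity actually estimated is usually the product of Nₑ and the generation time. Function names are hypothetical.

```python
import random


def expected_tmrca(n: int, ne: float) -> float:
    """Expected time to the most recent common ancestor of n sampled lineages
    under a constant-size Kingman coalescent (time in generations)."""
    # With k lineages the waiting time is exponential with rate k(k-1)/(2*Ne),
    # so its mean is 2*Ne/(k(k-1)); the sum telescopes to 2*Ne*(1 - 1/n).
    return sum(2 * ne / (k * (k - 1)) for k in range(2, n + 1))


def simulate_tmrca(n: int, ne: float, rng: random.Random) -> float:
    """Draw one TMRCA by walking backward in time from n lineages to one."""
    t = 0.0
    for k in range(n, 1, -1):
        t += rng.expovariate(k * (k - 1) / (2 * ne))
    return t


rng = random.Random(1)
draws = [simulate_tmrca(10, 100.0, rng) for _ in range(20_000)]
print(expected_tmrca(10, 100.0), sum(draws) / len(draws))
```

Because the last two lineages take on average as long to merge as all the others combined, deep coalescences carry most of the information about past population size.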

In contrast, the birth-death model operates forward in time, explicitly modeling transmission (birth), recovery (death), and sampling events [12]. This approach provides a more natural representation of epidemic processes and remains valid even when sampling is dense [8]. Birth-death models parameterize key epidemiological quantities including transmission rates, recovery rates, and sampling probabilities, enabling direct estimation of the effective reproduction number (Rₑ(t)) and prevalence of infection [12].
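Under the birth-death-sampling parameterization widely used in BEAST2 packages, a transmission rate λ, recovery rate μ, and sampling rate ψ translate directly into epidemiological summaries: hosts become uninfectious at rate δ = μ + ψ, so Rₑ = λ/δ, the sampling proportion is ψ/δ, the mean infectious period is 1/δ, and the expected number of infections grows at rate λ - δ. The sketch below assumes that sampled hosts stop transmitting (sampling with removal); the class and function names and the example rates are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BirthDeathRates:
    transmission: float  # lambda: rate at which an infected host infects others
    recovery: float      # mu: rate of becoming uninfectious without being sampled
    sampling: float      # psi: rate of being sampled (and removed)


def epi_summary(r: BirthDeathRates) -> dict[str, float]:
    """Convert per-unit-time rates into common epidemiological summaries."""
    become_uninfectious = r.recovery + r.sampling  # delta = mu + psi
    return {
        "Re": r.transmission / become_uninfectious,
        "sampling_proportion": r.sampling / become_uninfectious,
        "mean_infectious_period": 1.0 / become_uninfectious,
        "growth_rate": r.transmission - become_uninfectious,
    }


# Hypothetical daily rates: lambda = 0.3, mu = 0.09, psi = 0.01.
summary = epi_summary(BirthDeathRates(transmission=0.3, recovery=0.09, sampling=0.01))
print({k: round(v, 3) for k, v in summary.items()})
```

Because Rₑ and the sampling proportion share the denominator δ, they are often estimated jointly, and an informative prior on the infectious period helps separate them.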

Table 1: Comparison of Foundational Phylodynamic Models

| Feature | Coalescent Model | Birth-Death Model |
|---|---|---|
| Temporal direction | Backward-in-time | Forward-in-time |
| Key parameters | Effective population size (Nₑ) | Transmission, recovery, and sampling rates |
| Sampling assumption | Small sample relative to population | Valid for dense sampling |
| Primary output | Historical population size | Transmission tree, Rₑ, prevalence |
| Computational efficiency | Generally faster | More computationally intensive |
| Epidemiological interpretation | Indirect, requires conversion | Direct interpretation |

Key Epidemiological Parameters

Phylodynamic methods estimate crucial epidemiological parameters that quantify transmission dynamics and disease burden:

Basic reproduction number (R₀): The average number of secondary infections from a single infected individual in a fully susceptible population, typically inferred during the early exponential growth phase of an outbreak [8].

Effective reproduction number (Rₑ(t)): The time-varying average number of secondary infections per infectious individual, reflecting changing transmission dynamics due to interventions, immunity, or behavior [8] [12].

Serial interval: The time between symptom onset in an infector and symptom onset in the corresponding infectee, which reflects how quickly transmission chains progress [13].

Prevalence of infection: The number of infected individuals at a specific time, which can be estimated through phylodynamic methods even with incomplete case observations [12].

These parameters are estimated from time-stamped pathogen genomes, which provide information about evolutionary relationships, and epidemiological data such as case counts or symptom onset dates [12].
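For the early exponential phase in which R₀ is typically inferred, a standard case-count-based companion to phylodynamic estimates is to fit the growth rate r by log-linear regression and convert it to a reproduction number through the generation-interval distribution (the Wallinga and Lipsitch relation). The sketch below assumes a gamma-distributed generation interval, for which R = (1 + rθ)^k with shape k and scale θ; the 5-day mean, 2.5-day standard deviation, case counts, and function names are hypothetical, and substituting the serial interval for the generation interval is itself an approximation.

```python
import math


def growth_rate(daily_cases: list[float]) -> float:
    """Least-squares slope of log(cases) on day index: the exponential growth
    rate r per day. Assumes every count is positive."""
    n = len(daily_cases)
    xs = range(n)
    ys = [math.log(c) for c in daily_cases]
    x_mean, y_mean = (n - 1) / 2, sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    return sxy / sxx


def reproduction_number(r: float, gi_mean: float, gi_sd: float) -> float:
    """R = 1 / M(-r) for a gamma generation interval, i.e. (1 + r*scale)**shape."""
    shape = (gi_mean / gi_sd) ** 2
    scale = gi_sd ** 2 / gi_mean
    return (1 + r * scale) ** shape


# Hypothetical early-phase daily counts growing about 10% per day.
cases = [round(10 * math.exp(0.1 * day)) for day in range(20)]
r = growth_rate(cases)
print(round(r, 3), round(reproduction_number(r, gi_mean=5.0, gi_sd=2.5), 2))
```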

Current Applications and Experimental Approaches

Tracking Bacterial Pathogen Evolution

Genomic epidemiology has revealed crucial insights into the population dynamics of bacterial pathogens. A comprehensive study of Acinetobacter baumannii bloodstream isolates in China (2011-2021) demonstrated how genomic analysis can track the expansion of specific lineages and identify factors driving their success [14]. Researchers analyzed 1,506 non-duplicate isolates from 76 hospitals, identifying 149 sequence types (STs) and 101 K-locus types (KLs) through whole-genome sequencing [14]. The study revealed a notable shift in dominant STs within International Clone 2 between 2014 and 2021: ST195 decreased from 42.18% to 8.5% and ST191 from 18.37% to 0.9%, while ST208 increased from 12.93% to 21.19% [14]. This study exemplifies how large-scale genomic surveillance can identify successful lineages and investigate their underlying adaptive advantages.

Table 2: Bacterial Genomic Epidemiology Case Study - A. baumannii in China

| Analysis Component | Methodology | Key Finding |
|---|---|---|
| Population structure | Oxford MLST scheme, capsular typing | 149 STs and 101 KLs identified; IC2 dominant (81.74%) |
| Temporal dynamics | Comparative analysis of isolates across 11 years | Shift from ST195/ST191 to ST208/ST369/ST540 |
| Virulence assessment | Phenotypic experiments on representative strains | ST208 exhibited higher virulence, antibiotic resistance, and desiccation tolerance |
| Transmission patterns | Phylogenetic analysis | ST208 showed more complex transmission networks |
| Antimicrobial resistance | Genomic identification of resistance genes | Carbapenem-resistant A. baumannii (CRAB) rate ~70% in China |

Estimating Transmission Dynamics from Genomic Data

Pathogen genomes enable estimation of key transmission parameters even when direct contact tracing data is unavailable. A novel framework for serial interval estimation using SARS-CoV-2 sequences demonstrated this approach during the COVID-19 pandemic in Victoria, Australia [13]. The method created "transmission clouds" of plausible infector-infectee pairs based on genomic distance and symptom onset times, then applied a mixture model to account for unsampled intermediate cases [13]. Validation against simulated outbreaks showed the method could accurately estimate mean serial intervals even when only 10% of cases were sampled, though with increasing uncertainty [13]. This approach provided cluster-specific estimates revealing that serial intervals were shorter in schools and meat processing plants compared to healthcare facilities, with important implications for transmission control [13].
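The mixture-model step can be illustrated with a simplified stand-in. If a pair linked through one unsampled intermediate has an onset difference equal to the sum of two independent serial intervals, then with direct intervals distributed N(μ, σ²) the indirect ones follow N(2μ, 2σ²). The Python sketch below fits that two-component mixture by grid-search maximum likelihood on synthetic onset differences; the normal distributions, the single-intermediate cap, the grid bounds, and all names are assumptions, and it omits the genomic "transmission cloud" construction of [13].

```python
import math
import random


def normal_pdf(x: float, mean: float, sd: float) -> float:
    return math.exp(-0.5 * ((x - mean) / sd) ** 2) / (sd * math.sqrt(2 * math.pi))


def fit_si_mixture(diffs: list[float]) -> tuple[float, float, float]:
    """Grid-search MLE for onset differences modelled as direct pairs
    ~ N(mu, sd) mixed with one-intermediate pairs ~ N(2*mu, sqrt(2)*sd).
    Returns (mu, sd, weight of direct pairs)."""
    best, best_ll = (0.0, 0.0, 0.0), -math.inf
    for mu in [i * 0.25 for i in range(4, 33)]:        # 1.0 to 8.0 days
        for sd in [i * 0.25 for i in range(2, 21)]:    # 0.5 to 5.0 days
            for w in [i / 10 for i in range(1, 11)]:   # 0.1 to 1.0
                ll = 0.0
                for d in diffs:
                    p = (w * normal_pdf(d, mu, sd)
                         + (1 - w) * normal_pdf(d, 2 * mu, math.sqrt(2) * sd))
                    ll += math.log(max(p, 1e-300))  # guard against underflow
                if ll > best_ll:
                    best, best_ll = (mu, sd, w), ll
    return best


# Synthetic onset differences: 160 direct pairs, 40 pairs with one missing case.
rng = random.Random(42)
direct = [rng.gauss(5.0, 1.5) for _ in range(160)]
indirect = [rng.gauss(5.0, 1.5) + rng.gauss(5.0, 1.5) for _ in range(40)]
mu, sd, w = fit_si_mixture(direct + indirect)
print(f"mean serial interval ~ {mu:.2f} days (sd {sd:.2f}), direct weight {w:.1f}")
```

Ignoring the indirect component would bias the mean upward, which is the reason a mixture is needed when many cases go unsampled.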

Integrating Genomic and Time Series Data

Recent methodological advances enable more robust estimation of epidemic dynamics by integrating multiple data sources. The Timtam package for BEAST2 implements an approximate likelihood approach that combines time-stamped pathogen genomes with time series of case counts to estimate both effective reproduction numbers and historical prevalence [12]. This method accounts for the dependency between datasets while remaining computationally tractable for large outbreaks [12]. Application to SARS-CoV-2 data from the Diamond Princess cruise ship outbreak and poliomyelitis in Tajikistan demonstrated that this integrated approach produces estimates consistent with previous analyses while providing additional insights into infection prevalence [12].

Experimental Protocols and Workflows

Standardized Genomic Epidemiology Pipeline

The following workflow represents a generalized protocol for genomic epidemiology studies, synthesized from multiple applications across bacterial and viral pathogens [14] [13] [11]:

Genomic Epidemiology Workflow

Step 1: Sample Collection and Sequencing

- Collect pathogen samples from clinical or environmental sources with associated metadata (date, location, clinical presentation)

- Perform nucleic acid extraction and whole-genome sequencing using appropriate platforms (Illumina, Nanopore, etc.)

- In the A. baumannii study, this involved collecting 1,506 isolates from 76 hospitals over 11 years [14]

Step 2: Genomic Data Processing

- Conduct quality control of raw reads to remove contaminants and low-quality data

- Assemble genomes using appropriate assemblers (SPAdes, Velvet, etc.)

- Annotate genomes to identify genes of interest (virulence factors, resistance markers)

- The non-typhoidal Salmonella (NTS) study in Peru excluded 158 of 1,000 initially sequenced genomes because of quality concerns [11]
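Assembly quality gates of this kind usually check contig count, N50, and total length against the expected genome size. The sketch below shows one such gate; the thresholds, the `passes_qc` name, and the illustrative 4.8 Mb expected size are assumptions, since the exclusion criteria used in [11] are not given here.

```python
def n50(contig_lengths: list[int]) -> int:
    """Largest length L such that contigs of length >= L cover at least half
    of the total assembly length."""
    lengths = sorted(contig_lengths, reverse=True)
    half = sum(lengths) / 2
    running = 0
    for length in lengths:
        running += length
        if running >= half:
            return length
    return 0  # empty assembly


def passes_qc(
    contig_lengths: list[int],
    expected_size: int,
    max_contigs: int = 300,        # assumed threshold
    min_n50: int = 20_000,         # assumed threshold
    size_tolerance: float = 0.15,  # assumed +/- fraction of expected size
) -> bool:
    """Hypothetical assembly QC gate combining three common checks."""
    total = sum(contig_lengths)
    return (
        0 < len(contig_lengths) <= max_contigs
        and n50(contig_lengths) >= min_n50
        and abs(total - expected_size) <= size_tolerance * expected_size
    )


good = [2_000_000, 1_500_000, 1_000_000, 300_000]  # a few large contigs
fragmented = [5_000] * 900                           # many small contigs
print(passes_qc(good, 4_800_000), passes_qc(fragmented, 4_800_000))
```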

Step 3: Phylogenetic and Population Analysis

- Perform multi-locus sequence typing (MLST) or whole-genome MLST to classify isolates

- Construct phylogenetic trees using appropriate substitution models and clock assumptions

- Analyze population structure and identify clusters

- The NTS analysis identified 40 different STs among 1,122 genomes [11]
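Seven-locus MLST assigns a sequence type by looking up an isolate's allele profile in a curated table, and single-locus variants are often grouped with their parent ST. In the sketch below the locus names, allele numbers, and ST labels are invented placeholders; real schemes are downloaded from curated databases such as PubMLST or EnteroBase rather than typed in.

```python
from typing import Optional

# Invented placeholder scheme: real locus names, allele numbers and STs come
# from a curated MLST database.
LOCI = ("locus1", "locus2", "locus3", "locus4", "locus5", "locus6", "locus7")
PROFILES: dict[tuple[int, ...], str] = {
    (1, 1, 1, 1, 1, 1, 1): "ST-A",
    (1, 1, 2, 1, 1, 1, 1): "ST-B",
    (3, 2, 2, 4, 1, 5, 2): "ST-C",
}


def assign_st(alleles: dict[str, int]) -> Optional[str]:
    """Exact profile lookup; None if a locus is missing or the profile is new."""
    if any(locus not in alleles for locus in LOCI):
        return None
    return PROFILES.get(tuple(alleles[locus] for locus in LOCI))


def single_locus_variants(alleles: dict[str, int]) -> list[str]:
    """Known STs that differ from this profile at exactly one locus."""
    profile = tuple(alleles.get(locus, -1) for locus in LOCI)
    return sorted(
        st for known, st in PROFILES.items()
        if sum(a != b for a, b in zip(known, profile)) == 1
    )


novel = dict(zip(LOCI, (1, 1, 1, 1, 1, 1, 2)))
print(assign_st(dict(zip(LOCI, (1, 1, 2, 1, 1, 1, 1)))))  # known profile
print(assign_st(novel), single_locus_variants(novel))      # new profile near ST-A
```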

Step 4: Integration with Epidemiological Data

- Combine phylogenetic clusters with case data to infer transmission patterns

- Estimate epidemiological parameters (Rₑ, serial intervals, prevalence)

- Spatiotemporal analysis to track pathogen spread

Phylodynamic Analysis for Estimation of Transmission Parameters

Phylodynamic Analysis Pipeline

Step 1: Data Preparation

- Collect time-stamped pathogen sequences with sampling dates

- Gather epidemiological data (case counts, symptom onset dates, etc.)

- Align sequences and select an appropriate nucleotide substitution model

Step 2: Model Specification

- Select appropriate molecular clock model (strict, relaxed) based on temporal signal

- Choose tree prior (coalescent, birth-death) based on sampling density and research question

- Specify prior distributions for key parameters based on existing knowledge
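Temporal signal is often checked with a root-to-tip regression (as in TempEst): root-to-tip distances from a rooted maximum-likelihood tree are regressed on sampling dates, the slope approximates the substitution rate, and the x-intercept approximates the root date. The sketch below assumes distances and decimal-year dates are already available; the names and example values are hypothetical, and a positive slope with a good fit supports, but does not prove, clock-like evolution.

```python
import math


def root_to_tip_regression(dates: list[float], distances: list[float]) -> dict[str, float]:
    """Least-squares fit of root-to-tip distance (substitutions/site) on
    sampling date (decimal years)."""
    n = len(dates)
    mx, my = sum(dates) / n, sum(distances) / n
    sxx = sum((x - mx) ** 2 for x in dates)
    syy = sum((y - my) ** 2 for y in distances)
    sxy = sum((x - mx) * (y - my) for x, y in zip(dates, distances))
    slope = sxy / sxx  # substitutions/site/year
    intercept = my - slope * mx
    return {
        "rate": slope,
        # Date at which the fitted line reaches zero distance (root estimate);
        # undefined when there is no positive temporal signal.
        "root_date": -intercept / slope if slope > 0 else math.nan,
        "r_squared": sxy ** 2 / (sxx * syy),
    }


# Hypothetical tips sampled over 18 months.
fit = root_to_tip_regression(
    [2020.0, 2020.5, 2021.0, 2021.5], [0.0010, 0.0016, 0.0019, 0.0026]
)
print({k: round(v, 4) for k, v in fit.items()})
```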

Step 3: Parameter Estimation

- Run Bayesian phylogenetic inference using software such as BEAST2

- Assess convergence and effective sample sizes of parameters

- Validate model fit using posterior predictive simulations
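Effective sample size discounts the number of MCMC samples by their autocorrelation, ESS = N / (1 + 2 Σ ρₖ). The sketch below truncates the sum at the first non-positive autocorrelation, a simpler rule than the estimators in tools such as Tracer, and computes autocorrelations in quadratic time, which is acceptable only for short traces. The traces are synthetic; an ESS above about 200 per parameter is a common rule of thumb rather than a formal threshold.

```python
import random


def effective_sample_size(trace: list[float]) -> float:
    """ESS = N / (1 + 2 * sum of lag-k autocorrelations), summing lags until
    the first non-positive autocorrelation."""
    n = len(trace)
    mean = sum(trace) / n
    centered = [x - mean for x in trace]
    variance = sum(c * c for c in centered) / n
    if variance == 0:
        raise ValueError("constant trace: ESS is undefined")
    rho_sum = 0.0
    for lag in range(1, n):
        autocov = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / n
        rho = autocov / variance
        if rho <= 0:
            break
        rho_sum += rho
    return n / (1 + 2 * rho_sum)


rng = random.Random(7)
independent = [rng.gauss(0, 1) for _ in range(2000)]
sticky, x = [], 0.0
for _ in range(2000):
    x = 0.9 * x + rng.gauss(0, 1)  # AR(1) chain mimicking a poorly mixing MCMC
    sticky.append(x)
print(round(effective_sample_size(independent)), round(effective_sample_size(sticky)))
```

The strongly autocorrelated chain yields an ESS far below its 2,000 draws, which is the situation in which a run should be extended or its proposals retuned.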

Step 4: Interpretation and Visualization

- Extract estimates of key parameters (Rₑ, prevalence, serial intervals)

- Visualize results using skyline plots, transmission trees, or other appropriate formats

- The Timtam package enables estimation of historical prevalence in addition to reproduction numbers [12]

Essential Research Reagents and Computational Tools

Table 3: Research Reagent Solutions for Genomic Epidemiology

| Category | Specific Tools/Reagents | Function/Application |
|---|---|---|
| Sequencing Platforms | Illumina, Nanopore, PacBio | Whole-genome sequencing of pathogen isolates |
| Bioinformatics Tools | BEAST2, PhyML, RAxML | Phylogenetic inference and evolutionary analysis |
| Genomic Epidemiology Software | Timtam, EpiInf, outbreaker | Phylodynamic analysis and transmission parameter estimation |
| Quality Control Tools | FastQC, MultiQC | Assessment of sequencing read quality |
| Assembly and Annotation | SPAdes, Prokka, Roary | Genome assembly and pan-genome analysis |
| Variant Calling | GATK, SAMtools, FreeBayes | Identification of genetic variants and SNP calling |
| Visualization | Microreact, iTOL, ggplot2 | Visualization of phylogenetic trees and spatiotemporal spread |

Comparative Analysis and Future Directions

Genomic epidemiology and phylodynamics face several methodological challenges that influence their application to emerging pathogens. A key consideration is sampling bias, as uneven sampling across time or geography can distort phylodynamic inferences [9]. Additionally, the evolutionary rate of the pathogen determines the temporal resolution possible, with faster-evolving viruses generally providing more detailed insights into recent transmission events [9]. The assumptions linking transmission events to phylogenetic branching times also present challenges, as multiple transmissions from a single host or within-host evolution can complicate these relationships [8].

Future methodological developments are focusing on integrating multiple data sources more efficiently, improving computational efficiency for large datasets, and extending phylodynamic approaches to slower-evolving pathogens [9] [12]. There is also growing interest in real-time genomic epidemiology that can provide actionable insights during ongoing outbreaks, as demonstrated during the COVID-19 pandemic [9] [13]. As these methods continue to mature, they will enhance our ability to track and control diverse pathogens, from hospital-outbreak bacteria like A. baumannii to foodborne pathogens like non-typhoidal Salmonella and emerging viruses [14] [11].

The resurgence of Mycoplasma pneumoniae infections following the relaxation of COVID-19 pandemic restrictions represents a significant challenge in the field of respiratory pathogens. This case study employs comparative genomic analysis to investigate the genetic foundations of the 2023-2025 global resurgence, focusing on the balance between genomic stability and evolution that enables this pathogen to re-emerge after periods of suppression. Through the lens of genomic epidemiology, we analyze the molecular characteristics of circulating strains, their macrolide resistance profiles, and the phylogenetic relationships that distinguish geographic lineages. The insights gained from this analysis provide a framework for understanding pathogen resurgence patterns and inform public health responses to anticipated epidemic cycles.

Genomic Epidemiology of the Recent Resurgence

Post-Pandemic Resurgence Patterns

The cyclical nature of M. pneumoniae infections, typically occurring every 3-7 years, was disrupted by nonpharmaceutical interventions implemented during the COVID-19 pandemic [15] [16]. The subsequent resurgence in late 2023 represented a delayed epidemic wave, occurring approximately four years after the previous 2019 wave [15]. This pattern was observed globally, with notable outbreaks reported across Asia, Europe, and North America [16]. Genomic surveillance played a crucial role in confirming that this resurgence was driven by known respiratory pathogens rather than novel variants, providing reassurance to public health agencies, including the World Health Organization [15].

Table 1: Global Distribution of Dominant M. pneumoniae Sequence Types

| Geographic Region | Predominant Sequence Types | Study Period | Key Characteristics |
|---|---|---|---|
| Beijing, China | ST3 (58.1%), ST14 (40.6%) | 2018-2023 | ST3 maintained 100% macrolide resistance [15] |
| United Kingdom | ST3 (34.2%), ST14 (18.4%) | 2016-2024 | Emerging macrolide resistance in ST3 [16] |
| Taiwan | ST3 (60.6%), ST17 (31.3%) | 2017-2020 | Multiple 23S rRNA mutations observed [17] |
| Multiple European Countries | Diverse distribution | 2016-2024 | Lower macrolide resistance rates (<10%) [16] |

Genomic Stability of Resurgent Strains

Comparative genomic analysis revealed that the 2023 outbreak strains shared 99% to more than 99% sequence identity with the M129 reference genome, indicating that the resurgence was attributable not to novel variants but to the re-emergence of pre-existing strains [15] [18]. The primary genetic variations were concentrated in the P1 adhesin gene, which mediates host cell attachment and is a key antigenic target [15] [19]. This conservation across the core genome, combined with variation concentrated in surface proteins, illustrates the evolutionary balance that facilitates recurrent epidemics: partial immune evasion while fitness is maintained.

Comparative Genomic Analysis: Methodologies and Workflows

Sample Processing and Whole Genome Sequencing

The foundational step in genomic epidemiology involves robust sample processing and sequencing. Research groups have employed probe-capture-based enrichment to obtain high-quality M. pneumoniae genomes from clinical samples, significantly enhancing sequencing depth and coverage [15] [18]. The standard workflow begins with culture in specialized Mycoplasma broth or SP4 medium, followed by DNA extraction using commercial kits. Libraries are prepared for next-generation sequencing platforms, with an average sequencing depth of approximately 1062× ensuring comprehensive genomic coverage [15] [18].
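Mean sequencing depth follows from the standard Lander-Waterman relation C = N × L / G (number of reads × read length ÷ genome length). The sketch below applies that relation in both directions. The read counts are hypothetical, and the genome length of roughly 816 kb is an approximation of the M129 reference used only for illustration. For capture-enriched libraries only on-target reads contribute, and real coverage is never perfectly uniform.

```python
def mean_depth(n_reads: int, read_length: int, genome_length: int) -> float:
    """Expected mean depth C = N * L / G (Lander-Waterman)."""
    if genome_length <= 0:
        raise ValueError("genome_length must be positive")
    return n_reads * read_length / genome_length


def reads_for_depth(target_depth: int, read_length: int, genome_length: int) -> int:
    """Smallest read count N with N * L / G >= target depth (integer ceiling division)."""
    return -((-target_depth * genome_length) // read_length)


if __name__ == "__main__":
    genome_bp = 816_000          # approximate M. pneumoniae genome size (assumption)
    on_target_reads = 5_800_000  # hypothetical: 2.9 million 2x150 bp pairs after capture
    print(f"mean depth ~ {mean_depth(on_target_reads, 150, genome_bp):.0f}x")
    print(reads_for_depth(1000, 150, genome_bp), "reads needed for 1000x")
```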

Table 2: Key Experimental Protocols in M. pneumoniae Genomic Research

| Methodological Step | Specific Protocols | Applications in Analysis |
|---|---|---|
| Sample Collection | Throat swabs, bronchoalveolar lavage fluid, sputum | Pathogen identification and genomic characterization [16] [20] |
| Culture Methods | Mycoplasma broth (OXOID), SP4 medium, PPLO solid medium | Pathogen isolation and purification [15] [19] |
| DNA Extraction | Wizard Genomic DNA Purification Kit, QIAamp DNA Mini Kit | High-quality DNA for sequencing [15] [17] |
| Whole Genome Sequencing | Illumina NovaSeq 6000, MiSeq; Nanopore GridION X5 | Genome assembly and variant detection [15] [16] |
| Variant Calling | BWA alignment, GATK HaplotypeCaller | SNP and indel identification [15] [18] |
| Annotation and Phylogenetic Analysis | Prokka, Roary, RAxML, BEAST | Genome annotation (Prokka), pan-genome analysis (Roary), and phylogenetic inference of evolutionary relationships and population structure (RAxML, BEAST) [16] [17] |

Bioinformatic Analysis of Genomic Data

The analytical phase employs a comprehensive bioinformatic pipeline for variant identification and phylogenetic reconstruction. Quality-controlled sequencing reads are aligned to reference genomes (typically M129 for P1-type 1 or FH for P1-type 2) using the Burrows-Wheeler Aligner (BWA) [15]. Variant calling with GATK HaplotypeCaller identifies single-nucleotide polymorphisms (SNPs) and insertions/deletions (indels), with subsequent filtering to exclude repetitive regions and potential homoplasy effects [15] [18]. Phylogenetic reconstruction uses maximum likelihood methods, and temporal analysis is performed with BEAST to estimate evolutionary rates and population dynamics [17].
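Excluding variants that fall in repetitive regions amounts to masking a set of genomic intervals. The following is a minimal sketch, assuming variant positions are 0-based and the mask (for example, RepMP elements or regions flagged by a recombination-detection tool) is supplied as half-open intervals. The function names and coordinates are hypothetical and are not taken from the cited studies.

```python
from typing import Iterable, List, Tuple

Interval = Tuple[int, int]  # half-open [start, end), 0-based coordinates


def in_any_interval(pos: int, intervals: Iterable[Interval]) -> bool:
    """True if pos lies inside at least one half-open interval."""
    return any(start <= pos < end for start, end in intervals)


def mask_variants(positions: Iterable[int], masked: List[Interval]) -> List[int]:
    """Keep only variant positions outside every masked interval, in sorted order."""
    return sorted(p for p in positions if not in_any_interval(p, masked))


if __name__ == "__main__":
    repeat_regions = [(100, 300), (500, 510)]  # hypothetical repeat coordinates
    snps = [50, 100, 250, 300, 505, 600]
    print(mask_variants(snps, repeat_regions))  # positions outside the repeats
```

The linear scan is adequate for a genome of this size. For large variant sets, an interval tree or a sorted-interval binary search would be the usual choice.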

Key Genomic Findings and Regional Variations

Population Structure and Phylogenetic Insights

Global phylogenetic analysis of M. pneumoniae has revealed distinct clustering patterns, with strains generally segregating into five primary clades: T1-1 (ST1), T1-2 (mainly ST3), T1-3 (ST17), T2-1 (mainly ST2), and T2-2 (mainly ST14) [17]. These clades demonstrate strong association with P1 subtypes, with T1 clades belonging to P1-type 1 and T2 clades to P1-type 2. The phylogenetic reconstruction clearly shows that strains from Asia and other world regions cluster into distinct clades with significant evolutionary differences [15] [18], suggesting long-term geographic segregation and independent evolution.

Macrolide Resistance Mechanisms and Global Distribution

A critical finding from genomic analyses is the striking disparity in macrolide resistance rates between geographic regions. The Western Pacific region exhibits the highest global prevalence of macrolide-resistant M. pneumoniae (MRMP), with rates exceeding 90% in China and 78.5% in South Korea [15]. In contrast, European countries maintain resistance rates below 10% [16]. This resistance is primarily mediated by point mutations in domain V of the 23S rRNA gene, with A2063G being the most prevalent mutation (89.4% of resistant strains), followed by A2064G (5.3%) and A2063T (5.3%) [17].
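Because resistance maps to a handful of known positions, screening an assembled or consensus 23S rRNA sequence reduces to comparing the bases at those sites. The sketch below assumes the query has already been aligned and trimmed so that index 0 corresponds to position 1 of the 23S gene in the M. pneumoniae numbering used above. The site table covers only the positions named in this section and is illustrative, not a complete resistance catalogue.

```python
# Wild-type bases at the positions named above (A2063G, A2064G, A2063T);
# 1-based coordinates in M. pneumoniae 23S rRNA numbering. Assumption: the
# query sequence is already expressed in these coordinates.
RESISTANCE_SITES = {2063: "A", 2064: "A"}


def call_23s_mutations(seq: str, sites: dict = RESISTANCE_SITES) -> list:
    """Return labels such as 'A2063G' for non-wild-type bases at known sites."""
    calls = []
    for pos, wild_type in sorted(sites.items()):
        if pos > len(seq):
            continue  # site not covered by this sequence
        base = seq[pos - 1].upper()
        if base in "ACGT" and base != wild_type:
            calls.append(f"{wild_type}{pos}{base}")
    return calls


if __name__ == "__main__":
    placeholder = ["A"] * 2100  # placeholder sequence, not real 23S rRNA
    placeholder[2062] = "G"     # introduce A2063G (index 2062 == position 2063)
    print(call_23s_mutations("".join(placeholder)))
```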

Table 3: Macrolide Resistance Profile by Sequence Type

| Sequence Type | Resistance Prevalence | Primary Mutations | Geographic Associations |
|---|---|---|---|
| ST3 | 100% in China [15] | A2063G, A2064G | East Asia (China, Japan, Korea) [16] |
| ST14 | Rapidly increasing [15] | A2063G | Global distribution [16] |
| ST17 | 45.2% in Taiwan [17] | A2063G, A2063T | Taiwan, South Korea [17] |
| ST1 | Documented resistance [17] | A2063G | China, South Korea, Tunisia [17] |

The high prevalence of macrolide resistance in Asia cannot be attributed solely to antibiotic selective pressure, as resistance rates in China continue to increase despite implementation of stricter antibiotic regulations and National Action Plans for Curbing Bacterial Resistance [15]. Genomic analyses have identified Asia-dominant genetic variations in genes associated with genome stability, pathogenesis, and drug resistance, suggesting potential genomic factors contributing to this disparity [15] [18].

The Scientist's Toolkit: Essential Research Reagents

Table 4: Essential Research Reagents for M. pneumoniae Genomic Studies

| Reagent/Category | Specific Examples | Research Application |
|---|---|---|
| Culture Media | Mycoplasma broth (OXOID), SP4 medium, PPLO solid medium | Pathogen isolation and propagation [15] [19] |
| DNA Extraction Kits | Wizard Genomic DNA Purification Kit, QIAamp DNA Mini Kit | High-quality genomic DNA preparation [15] [17] |
| Library Preparation | Enzyme Plus Library Prep Kit, TargetSeq One Kit, NEBNext Ultra II DNA Library Prep | Sequencing library construction [15] [17] |
| Enrichment Systems | M. pneumoniae-specific hybridization capture probes | Target pathogen enrichment from clinical samples [15] |
| Sequencing Platforms | Illumina NovaSeq 6000, MiSeq; Nanopore GridION X5 | Whole genome sequencing [15] [16] |
| Bioinformatic Tools | Trimmomatic, BWA, GATK, Gubbins, RAxML, BEAST | Read trimming, alignment, variant calling, recombination detection, and phylogenetic analysis [15] [17] |

Clinical Implications and Pathogen Evolution

Association Between Genotype and Disease Severity

The integration of genomic data with clinical outcomes has revealed significant associations between specific genetic profiles and disease severity. In one study, all strains isolated from severe pneumonia cases were drug-resistant, and some strains from severe refractory pneumonia cases carried multiple copies of a gene encoding a protein that shares a conserved functional domain with the DUF31 protein family [19]. Patients infected with macrolide-resistant strains had more severe clinical presentations, including pleural effusion, and more often required glucocorticoid treatment and bronchoalveolar lavage [19].

Mixed infections further complicate the clinical picture: approximately 40.5% of hospitalized children with M. pneumoniae pneumonia had co-infections with other pathogens [20]. The most common co-infecting pathogen was rhinovirus (30.8%), followed by Streptococcus pneumoniae (27.3%) and Haemophilus influenzae (16.1%) [20]. Patients with co-infections had higher rates of macrolide resistance, more frequently required glucocorticoid therapy, and were more likely to develop severe pneumonia and bronchial mucus plugs [20].

Recombination and Evolutionary Dynamics

Homologous recombination plays a crucial role in the evolution of M. pneumoniae, with RepMP elements serving as hotspots for genetic exchange. Genomic analyses have identified 108 putative recombination blocks with an average length of 1.3 kb per event, together covering approximately 10 kb per isolate (1.3% of the genome) [17]. One recombination block containing six genes (MPN366-MPN371) has been identified as particularly important in the evolutionary dynamics of the pathogen [17].
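Per-isolate summaries like those above (mean block length and the share of the genome affected) can be derived from a list of recombination block coordinates, such as those reported by Gubbins. This is a minimal sketch, assuming half-open, 0-based coordinates. Overlapping blocks are merged before coverage is computed so that shared sequence is not double-counted. The example blocks are hypothetical.

```python
from typing import Dict, List, Tuple


def recombination_summary(blocks: List[Tuple[int, int]], genome_length: int) -> Dict[str, float]:
    """Summarize recombination blocks given as half-open, 0-based (start, end) pairs."""
    if genome_length <= 0:
        raise ValueError("genome_length must be positive")
    lengths = [end - start for start, end in blocks]
    covered = 0
    current = None  # [start, end] of the merged run currently being extended
    for start, end in sorted(blocks):
        if current is None or start > current[1]:
            if current is not None:
                covered += current[1] - current[0]  # close the previous run
            current = [start, end]
        else:
            current[1] = max(current[1], end)  # overlap: extend the run
    if current is not None:
        covered += current[1] - current[0]
    return {
        "n_blocks": len(blocks),
        "mean_block_bp": sum(lengths) / len(lengths) if lengths else 0.0,
        "covered_bp": covered,
        "covered_fraction": covered / genome_length,
    }


if __name__ == "__main__":
    hypothetical_blocks = [(10_000, 11_300), (10_800, 12_000), (400_000, 401_200)]
    print(recombination_summary(hypothetical_blocks, 816_000))
```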

The recombination rate varies substantially between clades, with clade T1-2 (predominantly ST3) showing the highest recombination rate and genome diversity [17]. This enhanced genetic flexibility may contribute to the successful expansion of this clade, particularly in regions with high antibiotic selective pressure. The functional characterization of recombined regions has begun to clarify the biological role of these recombination events in the evolution of M. pneumoniae, particularly in surface antigen variation and potential immune evasion mechanisms.

Genomic analysis has revealed that the recent global resurgence of M. pneumoniae was driven not by novel variants but by the re-emergence of pre-existing strains, particularly sequence types ST3 and ST14, following the relaxation of COVID-19 restrictions [15] [18] [16]. The high genomic stability of this pathogen, combined with variation concentrated in adhesin genes and differing macrolide resistance profiles, creates a complex epidemiological landscape. The stark geographic disparities in macrolide resistance, with rates exceeding 90% in East Asia compared with below 10% in Europe, point to multifactorial determinants beyond antibiotic selective pressure alone [15] [16].

Future research directions should include the establishment of comprehensive global genomic surveillance networks to monitor the circulation and evolution of M. pneumoniae strains, particularly focusing on the emergence and spread of macrolide resistance. Functional studies exploring the biological significance of Asia-dominant genetic variations and recombination hotspots will enhance our understanding of the genomic factors contributing to regional disparities in resistance patterns. The integration of genomic data with clinical outcomes through multidisciplinary collaborations will ultimately inform treatment guidelines and public health responses to mitigate the impact of future epidemic cycles.

Comparative genomic analysis has become an indispensable tool in the fight against antimicrobial resistance (AMR), enabling researchers to decipher the complex genetic blueprints of bacterial pathogens with unprecedented precision. By comparing entire genome sequences, scientists can now simultaneously identify virulence factors that cause disease and genetic markers conferring resistance to antibiotics [21]. This dual approach is critical for understanding the pathogenesis and persistence of emerging pathogens, from opportunistic bacteria in clinical settings to strains circulating at the animal-human interface [22] [23]. The integration of genomic data with phenotypic testing provides a powerful framework for tracking the evolution and spread of high-risk clones, informing both clinical management and public health policies aimed at curbing the silent pandemic of AMR [24] [21].

Key Methodologies in Comparative Genomic Analysis

Whole-Genome Sequencing and Assembly

The foundation of any comparative genomic study is high-quality genome sequencing and assembly. The standard workflow begins with extracting genomic DNA from bacterial isolates, followed by library preparation and sequencing on platforms such as the Illumina NovaSeq, which generates short paired-end reads (e.g., 2×150 bp or 2×250 bp) [25]. The resulting raw reads undergo quality control checks using tools like FastQC to assess sequence quality. De novo assembly of quality-filtered reads is then performed using assemblers such as SPAdes, producing contigs that are evaluated for quality and completeness with QUAST [25]. For more complex analyses, including resolving plasmid structures, long-read sequencing technologies (e.g., Oxford Nanopore) may be integrated to produce hybrid assemblies.
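QUAST summarizes assembly contiguity with statistics such as N50: the length of the shortest contig among the largest contigs that together contain at least half of the total assembly length. The sketch below computes N50, together with the related L50 (the number of contigs needed to reach that half), from a list of contig lengths. It illustrates the definition rather than QUAST's own implementation, and the lengths in the example are hypothetical.

```python
from typing import Iterable, Tuple


def n50_l50(contig_lengths: Iterable[int]) -> Tuple[int, int]:
    """Return (N50, L50) for a collection of contig lengths in bp."""
    lengths = sorted(contig_lengths, reverse=True)
    total = sum(lengths)
    running = 0
    for count, length in enumerate(lengths, start=1):
        running += length
        if 2 * running >= total:  # at least half of the assembly reached
            return length, count
    return 0, 0  # empty assembly


if __name__ == "__main__":
    print(n50_l50([100, 200, 300, 400, 500]))
```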

Bioinformatics Pipelines for Gene Detection

Specialized bioinformatics pipelines are essential for standardizing the annotation and detection of genes of interest. The in-house WGSBAC pipeline exemplifies an integrated approach that coordinates multiple analytical tools [25]. Functional annotation is typically performed with Prokka, while dedicated databases and detection tools are used for specific gene categories:

- Antimicrobial Resistance Genes: AMRFinderPlus, Abricate with ResFinder and CARD databases [25] [21]

- Virulence Factors: Abricate with the Virulence Factor Database (VFDB) and VirulenceFinder [25] [22]

- Mobile Genetic Elements: Identification of plasmids, insertion sequences, and pathogenicity islands [24] [21]
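Abricate writes a tab-separated report with one row per hit. That report includes columns such as GENE, %COVERAGE, %IDENTITY, and DATABASE. A common post-processing step keeps only hits above identity and coverage cut-offs and groups the surviving genes by database. The sketch below does this with Python's csv module. The 90% thresholds are illustrative assumptions, because studies choose their own. The example report is hypothetical and trimmed to a few columns, so check the column names against the installed Abricate version.

```python
import csv
import io
from typing import Dict, List

# Hypothetical, trimmed Abricate-style report (tab-separated).
EXAMPLE_REPORT = (
    "#FILE\tSEQUENCE\tGENE\t%COVERAGE\t%IDENTITY\tDATABASE\n"
    "isolate1.fasta\tcontig_1\tblaCTX-M-1\t100.00\t100.00\tresfinder\n"
    "isolate1.fasta\tcontig_4\ttet(A)\t100.00\t99.50\tresfinder\n"
    "isolate1.fasta\tcontig_9\tfimH\t62.10\t97.00\tvfdb\n"
)


def filter_hits(report_text: str, min_identity: float = 90.0,
                min_coverage: float = 90.0) -> Dict[str, List[str]]:
    """Return {database: sorted genes} for hits passing both thresholds."""
    reader = csv.DictReader(io.StringIO(report_text), delimiter="\t")
    passing: Dict[str, set] = {}
    for row in reader:
        if (float(row["%IDENTITY"]) >= min_identity
                and float(row["%COVERAGE"]) >= min_coverage):
            passing.setdefault(row["DATABASE"], set()).add(row["GENE"])
    return {db: sorted(genes) for db, genes in passing.items()}


if __name__ == "__main__":
    print(filter_hits(EXAMPLE_REPORT))  # the partial vfdb hit is dropped
```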

Phylogenetic and Population Analysis

Strain classification and phylogenetic relationships are determined through several typing methods. Multi-locus sequence typing (MLST) assigns sequence types based on the alleles of (typically) seven housekeeping genes, while core-genome MLST (cgMLST) provides higher resolution by comparing alleles at hundreds to thousands of core genes across the genome [25]. Phylogenetic trees are constructed using methods such as approximate maximum likelihood (e.g., FastTree), and population structure analysis often relies on clustering algorithms based on evolutionary distances [26]. These analyses help trace transmission pathways, identify outbreaks, and characterize the population dynamics of resistant clones.
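A cgMLST comparison reduces each isolate to a vector of allele numbers. The distance between two isolates is then the number of loci at which their alleles differ. The sketch below uses one common convention: loci that are missing (None) in either profile are skipped. Tools differ in how they handle missing calls, and the profiles here are hypothetical.

```python
from itertools import combinations
from typing import Dict, Optional, Sequence, Tuple

Profile = Sequence[Optional[int]]  # allele number per locus; None = missing call


def allele_distance(a: Profile, b: Profile) -> int:
    """Number of loci with different alleles, skipping loci missing in either profile."""
    if len(a) != len(b):
        raise ValueError("profiles must cover the same loci")
    return sum(1 for x, y in zip(a, b)
               if x is not None and y is not None and x != y)


def pairwise_distances(profiles: Dict[str, Profile]) -> Dict[Tuple[str, str], int]:
    """Allelic distance for every unordered pair of isolates (pair keys sorted by name)."""
    return {(p, q): allele_distance(profiles[p], profiles[q])
            for p, q in combinations(sorted(profiles), 2)}


if __name__ == "__main__":
    hypothetical = {
        "A": [1, 2, 3, 4, 5],
        "B": [1, 2, 3, 4, 6],
        "C": [1, None, 7, 4, 6],
    }
    print(pairwise_distances(hypothetical))
```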

Table 1: Key Bioinformatics Tools and Databases for Comparative Genomic Analysis

| Tool/Database | Primary Function | Application in Analysis |
|---|---|---|
| SPAdes | De novo genome assembly | Assembles short reads into contigs and scaffolds [25] |
| Prokka | Rapid genome annotation | Annotates features such as genes, rRNA, and tRNA [25] |
| AMRFinderPlus | Resistance gene identification | Detects AMR genes and mutations [25] |
| Abricate | Screening contigs against databases | Mass-screens for AMR/virulence genes [25] |
| ResFinder/CARD | AMR gene databases | Reference databases for resistance determinants [25] [21] |
| Virulence Factor Database (VFDB) | Virulence gene database | Reference database for virulence factors [25] [22] |
| SerotypeFinder | In silico serotyping | Determines O and H antigens for E. coli [25] |

Figure 1: Core bioinformatics workflow for comparative genomic analysis of virulence and antimicrobial resistance genes, illustrating the pipeline from sample collection to data integration.

Comparative Analysis of Resistance and Virulence Across Pathogens

Escherichia coli: From Livestock to Humans

Studies of E. coli across different reservoirs reveal concerning patterns of multidrug resistance. A study of E. coli from South American camelids in Germany found that over half (23/39) of cephalosporin- or fluoroquinolone-resistant isolates were genotypically classified as multidrug resistant [25]. Resistance genes for trimethoprim/sulfonamides (22/39), aminoglycosides (20/39), and tetracyclines (18/39) were frequently detected, with blaCTX-M-1 being the most common extended-spectrum β-lactamase gene (16/39) [25]. Similarly, surveillance of Chinese swine farms identified E. coli sequence types ST10 and ST641 as widespread carriers of numerous antimicrobial resistance genes, including blaNDM-1, mcr-1.1, and blaOXA-10 [27]. The co-location of multiple antimicrobial resistance genes (ARGs) on single plasmids, flanked by mobile genetic elements, facilitates their horizontal transfer and poses a significant public health risk.

Staphylococcus Species: Clinical and Zoonotic Threats

Comparative genomics of Staphylococcus aureus isolates from patients and retail meat in Saudi Arabia revealed a high prevalence of antibiotic resistance genes (tet38, blaZ, fosB) in both groups [22]. Notably, 100% of patient isolates and 43% of meat isolates were phenotypically multidrug-resistant, with all patient isolates carrying MDR genes [22]. Virulence genes (cap, hly/hla, sbi, isd) and enterotoxin genes (selX, sem, sei) were consistently present in isolates from both sources, highlighting the genetic connectivity between meat-borne and clinical S. aureus populations [22].

Meanwhile, a study on Staphylococcus epidermidis isolated from musculoskeletal infections (MSI) demonstrated that pathogenic isolates were genetically distinct from commensal strains [28]. MSI-derived isolates were significantly more likely to carry the mecA gene (conferring methicillin resistance) and the pathogenic marker IS256, with IS256-positive isolates being eight times more likely to develop persistent infections [28]. These isolates also exhibited higher rates of resistance to ciprofloxacin, gentamicin, and rifampicin, along with enhanced biofilm formation capabilities [28].

Emerging and Opportunistic Pathogens

Genomic analysis of novel Aliarcobacter faecis and Aliarcobacter lanthieri species, isolated from human and livestock feces, identified an array of virulence-related factors in both species [23]. These included flagellar genes for motility, secretion pathway genes (Tat, type II, and type III secretion systems), and invasion and immune evasion genes (ciaB, iamA, mviN) [23]. A. lanthieri tested positive for 11 virulence, antibiotic-resistance, and toxin genes, including cadF (adherence) and the cytolethal distending toxin genes (cdtA, cdtB, cdtC), highlighting the potential of these species as opportunistic pathogens [23].

Table 2: Distribution of Key Resistance and Virulence Genes Across Bacterial Species

| Pathogen | Source | Key Resistance Genes | Key Virulence Factors |
|---|---|---|---|
| Escherichia coli | South American camelids (Germany) [25] | blaCTX-M-1, tet, sul, aac | Not emphasized |
| Escherichia coli | Swine farms (China) [27] | blaNDM-1, mcr-1.1, blaOXA-10 | Not specified |
| Staphylococcus aureus | Patients & meat (Saudi Arabia) [22] | tet38, blaZ, fosB, mecA | cap, hly/hla, sbi, isd, selX, sem, sei |
| Staphylococcus epidermidis | MSI patients [28] | mecA | IS256, biofilm formation genes |
| Aliarcobacter spp. | Human/livestock feces [23] | tet(O), tet(W), gyrA mutations | cadF, ciaB, cdtABC, flagellar genes |

Experimental Protocols for Genomic Surveillance

Strain Selection and Antimicrobial Susceptibility Testing

Robust genomic surveillance begins with careful strain selection to ensure representativeness. Studies typically employ strategies that maximize diversity based on holding/farm origin, preliminary typing profiles (e.g., MLVA), and antimicrobial resistance profiles [25]. For antimicrobial susceptibility testing (AST), the BD Phoenix M50 Automated System is widely used to determine minimum inhibitory concentrations (MICs) against a panel of relevant antimicrobial agents [27]. The procedure involves preparing 0.5 McFarland bacterial suspensions, inoculating AST panels, and automated incubation/reading. Results are interpreted according to established clinical breakpoints (e.g., EUCAST or CLSI standards) to define resistant, intermediate, and susceptible categories.
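Interpreting an MIC against clinical breakpoints is a simple threshold rule. The sketch below follows the EUCAST convention: susceptible if the MIC is at or below the S breakpoint, resistant if it is above the R breakpoint, and intermediate otherwise. Current EUCAST terminology calls that middle category "susceptible, increased exposure". CLSI tables instead list the resistant breakpoint as MIC ≥ value, so the comparison operator would change for CLSI interpretation. The breakpoints in the example are hypothetical and do not correspond to any real drug.

```python
def interpret_mic(mic: float, s_breakpoint: float, r_breakpoint: float) -> str:
    """Classify an MIC (mg/L) with EUCAST-style rules.

    S: MIC <= S breakpoint; R: MIC > R breakpoint; otherwise I
    ("susceptible, increased exposure" in current EUCAST terminology).
    """
    if s_breakpoint > r_breakpoint:
        raise ValueError("S breakpoint must not exceed R breakpoint")
    if mic <= s_breakpoint:
        return "S"
    if mic > r_breakpoint:
        return "R"
    return "I"


if __name__ == "__main__":
    # Hypothetical breakpoints (S <= 1 mg/L, R > 2 mg/L), not values for any real drug.
    for mic in (0.5, 2, 4):
        print(mic, interpret_mic(mic, 1, 2))
```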

Conjugation Assays for Horizontal Transfer Evaluation

To experimentally confirm the mobility of resistance genes, conjugation transfer experiments are performed. These assays typically use a sodium azide-resistant E. coli J53 strain as the recipient [27]. Donor and recipient strains are mixed and incubated together overnight. Transconjugants (recipient cells that have acquired resistance plasmids) are then selected on agar containing both sodium azide and a selecting antibiotic (e.g., meropenem for blaNDM-carrying plasmids). Successful conjugation demonstrates the potential for horizontal spread of resistance genes in natural environments, providing crucial experimental validation to complement genomic predictions of mobility.
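Conjugation results are often reported as a transfer frequency: transconjugants divided by recipient cells, or by donor cells in some studies. Conventions differ, and the cited work may use another one. The sketch below converts hypothetical plate counts to CFU/mL and computes a frequency per recipient. It assumes transconjugants are counted on sodium azide plus antibiotic agar and recipients on sodium azide agar alone.

```python
def cfu_per_ml(colonies: int, dilution_factor: float, plated_volume_ml: float) -> float:
    """Viable count from a plate: colonies x dilution factor / volume plated (mL)."""
    if plated_volume_ml <= 0:
        raise ValueError("plated volume must be positive")
    return colonies * dilution_factor / plated_volume_ml


def transfer_frequency(transconjugants_per_ml: float, reference_per_ml: float) -> float:
    """Transconjugants per recipient (or per donor) cell, depending on the reference count."""
    if reference_per_ml <= 0:
        raise ValueError("reference count must be positive")
    return transconjugants_per_ml / reference_per_ml


if __name__ == "__main__":
    # Hypothetical counts: 42 colonies at a 10-fold dilution on azide + meropenem,
    # 85 colonies at a 10^6-fold dilution on azide alone; 0.1 mL plated each time.
    transconjugants = cfu_per_ml(42, 10, 0.1)
    recipients = cfu_per_ml(85, 1e6, 0.1)
    print(f"transfer frequency: {transfer_frequency(transconjugants, recipients):.2e}")
```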

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Reagents and Solutions for Genomic AMR Studies

| Reagent/Solution | Function in Research | Example Application |
|---|---|---|
| Luria-Bertani (LB) Broth/Agar | General bacterial growth medium | Culturing E. coli and other Gram-negative bacteria prior to DNA extraction [25] [27] |
| Selective Media (MacConkey, m-AAM) | Selective isolation of target bacteria | Primary isolation of E. coli [27] or Aliarcobacter spp. [23] from complex samples |
| DNeasy Microbial Kit | High-quality genomic DNA extraction | Purifying DNA for sequencing; minimizes inhibitors [25] |
| Illumina DNA Library Prep Kits | Preparing sequencing libraries | Fragmenting DNA and adding adapters for Illumina sequencing [25] [23] |
| BD Phoenix NMIC-413 Panels | Automated antimicrobial susceptibility testing | Phenotypic resistance profiling of Gram-negative bacteria [27] |
| Chromogenic Agar (e.g., MRSA) | Selective and differential isolation | Rapid phenotypic screening for specific resistant pathogens [22] |

Discussion and Future Directions

The expanding application of comparative genomics is transforming our understanding of AMR transmission dynamics across One Health sectors. Studies now clearly demonstrate the genetic connectivity between pathogens in livestock and human clinical settings, with identical resistance genes and mobile genetic elements shared between these reservoirs [22] [27]. This evidence underscores the necessity of integrated surveillance systems that track resistance across human, animal, and environmental compartments.

However, significant challenges remain in achieving equitable global genomic surveillance. A recent analysis revealed that 89 countries have no publicly available genomic data for key drug-resistant pathogens, while 146 countries have not contributed any such data since 2020 [29]. Nearly 90% of all usable AMR genomic data originates from high-income countries, with the USA and UK alone accounting for over 65% of sequences, creating dangerous blind spots in global health surveillance [29].

Future progress will depend on overcoming barriers to sequencing capacity in resource-limited settings, standardizing analytical pipelines, and promoting data sharing following FAIR principles (Findable, Accessible, Interoperable, and Reusable) [21]. The continued development of platforms like amr.watch, which automatically aggregates and contextualizes global genomic data, represents a crucial step toward building more equitable and effective surveillance networks [29]. As access to sequencing technologies improves, the integration of real-time genomic data into public health decision-making will be essential for designing targeted interventions to curb the spread of resistant pathogens.

Figure 2: Translational impact pathway of genomic AMR data, illustrating how genomic surveillance informs multiple sectors from clinical practice to global health security.

Phylogenetics, the study of evolutionary relationships among biological entities, has grown from a historical discipline into a powerful tool for addressing pressing public health challenges. In research on emerging pathogens, it provides the quantitative framework needed to reconstruct transmission networks, trace the origin of outbreaks, and understand the evolutionary forces shaping epidemics. This guide compares the performance of key phylogenetic methods and tools used in comparative genomic analysis, giving researchers data-driven guidance for selecting the right tools for their work.

Key Phylogenetic Metrics and Methods in Action

The performance of phylogenetic methods is best evaluated by their application to real-world public health problems. The table below summarizes findings from recent studies that used different metrics to investigate pathogen transmission.

Table 1: Comparison of Phylogenetic Metrics Applied to Pathogen Transmission Studies

| Pathogen / Context | Phylogenetic Method | Key Finding | Performance Insight |
|---|---|---|---|
| Mycobacterium tuberculosis in Brazilian prisons [30] | Genomic clustering, Time-scaled Haplotypic Density (THD), Local Branching Index (LBI), Bayesian transmission trees (BREATH) | No significant difference in transmission metrics between symptomatic and asymptomatic cases (e.g., clustering: 77% vs. 85%, p=0.816) [30] | Multiple genomic metrics provided consistent, robust evidence, underscoring the major role of asymptomatic TB. |
| HIV-1 in Nantong, China [31] | Molecular transmission network (0.5% genetic distance threshold) | 27.1% (326/1203) of sequences were incorporated into the transmission network; older age and subtype C were key risk factors for clustering [31] | Molecular networks effectively identified active transmission clusters and associated demographic risk factors. |
| SARS-CoV-2 pandemic [32] | Multi-scale phylodynamic agent-based model (PhASE TraCE) | The model replicated real-world virus evolution, linking public health interventions to the punctuated emergence of new Variants of Concern (VOCs) [32] | Integrated models can capture complex feedback loops between human behavior, interventions, and pathogen evolution. |

These studies demonstrate that no single metric is sufficient. A multi-faceted approach, using clustering, population genetic indices, and model-based inference, is often necessary to build a confident picture of transmission dynamics.

Experimental Protocols for Phylogenetic Analysis

Below are detailed methodologies for two key phylogenetic applications: building a transmission network and estimating the time-varying reproduction number (ℛt).

Protocol 1: Constructing a Molecular Transmission Network for HIV-1

This protocol is based on the study of HIV-1 in Nantong, China [31].

Sample Collection and Sequencing:

- Collect plasma or blood samples from newly diagnosed HIV-1 patients.

- Amplify and sequence the HIV-1 pol gene using reverse transcription polymerase chain reaction (RT-PCR) and Sanger sequencing.

Sequence Alignment and Quality Control:

- Align the obtained sequences with reference sequences (e.g., HXB2) using a multiple sequence alignment tool like MAFFT or MUSCLE.

- Manually inspect and edit the alignment to ensure accuracy.

Phylogenetic Tree and Genotype Analysis:

- Construct a preliminary phylogenetic tree (e.g., using Maximum Likelihood in IQ-TREE) to identify the circulating genotypes and check for any obvious contamination or outliers.

Molecular Transmission Network Construction:

- Calculate the pairwise genetic distance between all sequences using the Tamura-Nei 93 (TN93) model or a similar substitution model.

- Define a transmission cluster by setting a genetic distance threshold (e.g., 0.5% substitutions per site). A pair of sequences is linked if their distance is less than or equal to this threshold.

- Use network visualization software (e.g., Cytoscape) to represent the clusters, where nodes represent individual patients and edges represent a genetic link.
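The distance-and-threshold procedure above can be prototyped in a few lines. The sketch computes pairwise distances, links pairs at or below the threshold (0.5%, as in the protocol), and reports connected components as clusters. For brevity, it uses an uncorrected p-distance (the proportion of differing sites, ignoring gaps and Ns) in place of the TN93 model. Dedicated tools such as HIV-TRACE compute TN93 distances and are preferable for real analyses. Sequence names and data are hypothetical.

```python
from itertools import combinations
from typing import Dict, List, Tuple

SKIP = set("-N?")  # alignment characters excluded from comparison


def p_distance(a: str, b: str) -> float:
    """Proportion of differing sites among aligned positions without gaps or Ns."""
    compared = differing = 0
    for x, y in zip(a.upper(), b.upper()):
        if x in SKIP or y in SKIP:
            continue
        compared += 1
        if x != y:
            differing += 1
    return differing / compared if compared else float("nan")


def transmission_clusters(alignment: Dict[str, str], threshold: float = 0.005
                          ) -> Tuple[List[Tuple[str, str, float]], List[List[str]]]:
    """Link pairs with distance <= threshold; return (edges, clusters of size >= 2)."""
    parent = {name: name for name in alignment}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving (union-find)
            x = parent[x]
        return x

    edges = []
    for a, b in combinations(sorted(alignment), 2):
        d = p_distance(alignment[a], alignment[b])
        if d <= threshold:  # NaN (no comparable sites) never creates a link
            edges.append((a, b, d))
            parent[find(a)] = find(b)

    groups: Dict[str, List[str]] = {}
    for name in alignment:
        groups.setdefault(find(name), []).append(name)
    clusters = sorted(sorted(m) for m in groups.values() if len(m) > 1)
    return edges, clusters


if __name__ == "__main__":
    aln = {"s1": "A" * 400, "s2": "G" + "A" * 399, "s4": "C" * 400}
    print(transmission_clusters(aln))
```

The edge list can be exported as a table for network visualization software such as Cytoscape.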

Statistical Analysis of Risk Factors:

- Use logistic regression to identify factors (e.g., age, genotype, CD4+ count) associated with being part of a transmission cluster.

Protocol 2: Estimating Time-Varying Reproduction Number (ℛt) from Genomic Data

This protocol compares estimates from genomic and case-count data, as outlined by Are et al. [33].

Outbreak Simulation (for validation):

- Use a simulation platform such as the OOPidemic package in R to generate an outbreak with a known ground-truth ℛt [33].

- The simulator uses a Susceptible-Exposed-Infectious-Recovered (SEIR) framework and generates a genomic sequence for every infected individual.

Data Preparation:

- From the simulation, export two files: a linelist (CSV file with infection times) and a FASTA file of pathogen sequences.

ℛt Estimation from Case Count Data (EpiEstim):

- Process the linelist to generate a daily incidence curve and estimate the generation time distribution.

- Input the incidence data and the serial interval or generation time distribution into the EpiEstim R package to estimate ℛt.
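EpiEstim's default method (Cori et al.) models daily incidence I_t as Poisson with mean ℛt × Λt. Here Λt = Σ_{s≥1} I_{t-s} w_s is the total infectiousness implied by the discretized serial-interval distribution w. With a gamma prior (shape a, scale b), the posterior mean of ℛt over a sliding window of τ days is (a + Σ I) / (1/b + Σ Λ). The sketch below re-implements only that posterior mean for illustration, and it is not the EpiEstim API. Its defaults are assumptions to check against the package documentation: a 7-day window and a prior with mean 5 and standard deviation 5, which corresponds to shape 1 and scale 5.

```python
from typing import Dict, List, Sequence


def total_infectiousness(incidence: Sequence[float], w: Sequence[float]) -> List[float]:
    """Lambda_t = sum_{s=1..t} I_{t-s} * w_s, where w[0] holds w_1 (a one-day lag)."""
    return [sum(incidence[t - s] * w[s - 1] for s in range(1, min(t, len(w)) + 1))
            for t in range(len(incidence))]


def rt_posterior_mean(incidence: Sequence[float], w: Sequence[float], window: int = 7,
                      prior_shape: float = 1.0, prior_scale: float = 5.0) -> Dict[int, float]:
    """Posterior mean of R_t for each window ending on day t (Cori-style, gamma prior)."""
    lam = total_infectiousness(incidence, w)
    estimates = {}
    for t in range(window, len(incidence)):
        start = t - window + 1  # window spans days start..t inclusive (start >= 1)
        sum_i = sum(incidence[start:t + 1])
        sum_lam = sum(lam[start:t + 1])
        if sum_lam > 0:
            estimates[t] = (prior_shape + sum_i) / (1.0 / prior_scale + sum_lam)
    return estimates


if __name__ == "__main__":
    doubling = [10 * 2 ** t for t in range(15)]  # incidence doubling daily
    serial_interval = [1.0]                      # hypothetical: all transmission at lag 1
    print(round(rt_posterior_mean(doubling, serial_interval)[14], 3))
```

In practice w should be a discretized serial-interval distribution whose weights sum to 1.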

ℛt Estimation from Genomic Data (BDSKY):

- Use the FASTA file of sequences as input for the Birth-Death Skyline (BDSKY) model in the BEAST 2.7 software package.

- Run a Markov Chain Monte Carlo (MCMC) analysis to generate a posterior distribution of phylogenetic trees and estimate ℛt through time.

- Post-process the BEAST output using the bdskytools package in R to summarize the ℛt estimates.

Performance Comparison:

- Compare the accuracy of ℛt estimates from EpiEstim and BDSKY against the known ground truth from the simulation, particularly under different sampling scenarios (e.g., complete vs. sparse sampling).
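The comparison against the simulated ground truth can be quantified with simple summary metrics. The sketch below assumes each method's output has been reduced to a point estimate and a credible interval per day. It then computes the mean absolute error and the proportion of days whose interval contains the true ℛt. These two metrics and the data layout are assumptions and may differ from those used in [33]. Calling the function once for EpiEstim output and once for BDSKY output gives a side-by-side comparison.

```python
from typing import Dict, Tuple

Estimate = Tuple[float, float, float]  # (point estimate, interval lower, interval upper)


def compare_to_truth(estimates: Dict[int, Estimate], truth: Dict[int, float]) -> Dict[str, float]:
    """Mean absolute error and interval coverage on days present in both inputs."""
    days = sorted(set(estimates) & set(truth))
    if not days:
        raise ValueError("no overlapping days to compare")
    abs_errors = [abs(estimates[d][0] - truth[d]) for d in days]
    hits = [estimates[d][1] <= truth[d] <= estimates[d][2] for d in days]
    return {
        "n_days": len(days),
        "mae": sum(abs_errors) / len(days),
        "coverage": sum(hits) / len(days),
    }


if __name__ == "__main__":
    hypothetical_estimates = {1: (1.2, 1.0, 1.4), 2: (0.9, 0.7, 1.0), 3: (1.5, 1.1, 1.6)}
    ground_truth = {1: 1.1, 2: 1.05, 3: 1.3, 4: 2.0}
    print(compare_to_truth(hypothetical_estimates, ground_truth))
```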

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Reagents for Phylogenetic Analysis of Transmission Networks

| Item / Solution | Function / Application | Example Use |
|---|---|---|
| BEAST 2 (BDSKY Model) | Bayesian evolutionary analysis software; the Birth-Death Skyline model infers the time-varying reproduction number (ℛt) and population dynamics from genomic data. | Estimating the effective reproduction number of an emerging virus over the course of an epidemic [33]. |
| EpiEstim R Package | Estimates the time-varying reproduction number (ℛt) from case incidence data and the serial interval distribution. | Providing a comparison for phylogenetically derived ℛt estimates, or an alternative when genomic data are unavailable [33]. |
| OOPidemic R Package | An outbreak simulator that generates both epidemiological linelists and pathogen genomic sequences for a known ground truth. | Validating and comparing the performance of different phylogenetic and epidemiological inference methods [33]. |
| Molecular Transmission Network Pipeline | A custom workflow (often in R or Python) for calculating genetic distances, identifying clusters based on a threshold, and visualizing networks. | Identifying active transmission clusters and super-spreaders for public health intervention, as in HIV-1 studies [31]. |
| Genetic Distance Threshold | A predefined cut-off (e.g., 0.5% substitutions/site) used to determine whether two pathogen sequences are linked in a transmission chain. | The core parameter for defining links in a molecular transmission network; sensitivity analyses are recommended [31]. |

Visualizing Phylogenetic Workflows

The following diagrams illustrate the logical workflow for a key phylogenetic analysis and the architecture of an advanced multi-scale modeling framework.

Figure: Phylogenetic Analysis Workflow

Figure: Multi-scale Phylodynamic Modeling

Discussion and Future Directions

The comparative data and protocols presented here underscore that modern phylogenetic analysis relies on integrating multiple methods to achieve high-confidence conclusions. The choice between methods often involves a trade-off between the rich, linked transmission data provided by genomic clustering and the population-level overview of epidemic growth provided by ℛt estimates.

Future directions in the field point towards even deeper integration. Multi-scale phylodynamic models, which couple within-host pathogen evolution with between-host transmission in an agent-based framework, represent the cutting edge [32]. These models can simulate the feedback loops between public health interventions and pathogen evolution, helping to explain phenomena like the punctuated emergence of SARS-CoV-2 variants. Furthermore, the application of artificial intelligence (AI) is poised to enhance the integration of phylogenetic data with other heterogeneous data sources, such as multi-omics and clinical information, promising to unlock new levels of predictive power in infectious disease research [34] [35]. For researchers, the strategic combination of these powerful and validated phylogenetic tools is essential for illuminating the transmission networks and evolutionary history of future emerging pathogens.

Advanced Methodologies and Public Health Applications in Pathogen Genomics

The rapid and accurate identification of pathogens is a cornerstone of effective public health response, particularly for emerging infectious diseases. Next-generation sequencing (NGS) technologies have revolutionized this field by enabling comprehensive genomic analysis directly from clinical and environmental samples. Among the available platforms, Illumina and Oxford Nanopore Technologies (ONT) have emerged as dominant technologies, each with distinct strengths and limitations for pathogen surveillance [36]. Furthermore, metagenomic next-generation sequencing (mNGS) represents a powerful, culture-independent approach that can detect unexpected or novel pathogens without prior assumptions about the causative agent [3].

This guide provides an objective comparison of these technologies, focusing on their application in emerging pathogens research. We compare their performance characteristics, present experimental data from recent studies, detail standardized protocols, and visualize key workflows to inform researchers, scientists, and drug development professionals in selecting appropriate methodologies for their specific research objectives.

Technology Platform Comparison

Illumina sequencing operates on sequencing-by-synthesis principles, generating massive volumes of short reads (typically 100-300 bp) with exceptionally high per-base accuracy (exceeding Q30) [37]. This technology excels in applications requiring high base-level accuracy, such as variant calling and SNP-based phylogenetic analysis. In contrast, Oxford Nanopore sequencing generates reads by measuring changes in ionic current as DNA or RNA molecules pass through protein nanopores. These reads are significantly longer (frequently spanning tens of kilobases), enabling resolution of complex genomic regions, but they carry higher raw error rates (typically Q10-Q15) that can be mitigated through consensus sequencing [36] [37].
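Phred quality scores (Q) are a logarithmic encoding of the per-base error probability, P(error) = 10^(-Q/10), so every 10-point drop in Q means ten times more errors. The small helpers below (hypothetical names) convert between the two scales; for example, Q15 corresponds to about 96.84% accuracy and Q25 to about 99.68%, the figures reported for the C. difficile comparison in this section.

```python
import math


def phred_to_accuracy(q: float) -> float:
    """Per-base accuracy implied by a Phred score Q, where error probability = 10^(-Q/10)."""
    return 1.0 - 10 ** (-q / 10)


def accuracy_to_phred(accuracy: float) -> float:
    """Inverse mapping: Phred score implied by a per-base accuracy (must be < 1)."""
    if not 0.0 <= accuracy < 1.0:
        raise ValueError("accuracy must be in [0, 1)")
    return -10 * math.log10(1.0 - accuracy)


if __name__ == "__main__":
    for q in (10, 15, 25, 30):
        error_rate = 1.0 - phred_to_accuracy(q)
        print(f"Q{q}: accuracy {phred_to_accuracy(q):.2%}, "
              f"about 1 error per {1 / error_rate:,.0f} bases")
```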

Table 1: Fundamental Characteristics of Major Sequencing Platforms

| Feature | Illumina | Oxford Nanopore |
|---|---|---|
| Core Technology | Sequencing-by-synthesis with reversible terminators [36] | Nanopore-based electronic sensing [36] |
| Typical Read Length | Short reads (100-300 bp) [36] | Long reads (≥1,500 bp to >10 kb) [36] |
| Raw Read Accuracy | Very High (>99.9%) [37] | Moderate (96-97%) [37] |
| Primary Strengths | High throughput, low per-base cost, excellent for SNP calling [37] | Long reads for assembly, real-time analysis, portability [38] |
| Typical Applications | Whole genome sequencing, metagenomics, transcriptomics [3] | Genome assembly, structural variant detection, direct RNA sequencing [38] |

Emerging Innovations

Both platforms are undergoing rapid innovation. Illumina is developing Constellation mapped read technology, which uses the proximity of clusters on the flow cell to generate long-range information without changing the core chemistry; it is expected to improve mapping in complex genomic regions, with commercial release slated for 2026 [39] [40]. Illumina's 5-base solution for simultaneous detection of genetic and epigenetic variants is already available [40]. Oxford Nanopore is focusing on throughput and consistency, targeting a 60-70% increase in output through 2026, and is developing a voltage-controlled ASIC architecture to handle diverse analytes from DNA to proteins, reinforcing its position as a single-platform solution for multiomic data [38].

Performance Data in Pathogen Research

Recent comparative studies provide empirical data on the performance of these technologies across various applications relevant to emerging pathogen research.

16S rRNA Profiling for Respiratory Microbiomes

A 2025 study comparing Illumina NextSeq and ONT for 16S rRNA profiling of respiratory microbial communities revealed platform-specific biases. Illumina, sequencing the V3-V4 hypervariable region (~300 bp), captured greater taxonomic richness, while ONT, sequencing the full-length 16S rRNA gene (~1,500 bp), provided superior species-level resolution for dominant taxa [36]. Differential abundance analysis showed ONT overrepresented certain taxa (e.g., Enterococcus, Klebsiella) while underrepresenting others (e.g., Prevotella, Bacteroides) [36]. Beta diversity differences were more pronounced in complex porcine microbiomes than in human samples, indicating that sequencing platform effects are sample-type dependent [36].
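Beta diversity differences such as these are commonly quantified with the Bray-Curtis dissimilarity. The sketch below is an illustration under assumptions: the article does not state which beta diversity metric [36] used, the function names are hypothetical, and counts are converted to relative abundances first so that the two platforms' different sequencing depths do not dominate the result.

```python
def relative_abundance(counts: dict[str, float]) -> dict[str, float]:
    """Convert raw read counts per taxon into proportions summing to 1."""
    total = sum(counts.values())
    if total <= 0:
        raise ValueError("sample has no reads")
    return {taxon: n / total for taxon, n in counts.items()}


def bray_curtis(counts_a: dict[str, float], counts_b: dict[str, float]) -> float:
    """Bray-Curtis dissimilarity on relative abundances: 0 = identical, 1 = no shared taxa."""
    pa, pb = relative_abundance(counts_a), relative_abundance(counts_b)
    shared = sum(min(pa.get(t, 0.0), pb.get(t, 0.0)) for t in pa.keys() | pb.keys())
    # With both profiles summing to 1, BC = 1 - 2 * shared / (1 + 1) = 1 - shared
    return 1.0 - shared


# Hypothetical read counts for the same sample profiled on two platforms
illumina = {"Prevotella": 40, "Bacteroides": 30, "Klebsiella": 30}
nanopore = {"Prevotella": 10, "Bacteroides": 10, "Klebsiella": 80}
print(round(bray_curtis(illumina, nanopore), 3))  # 0.5
```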

Table 2: Performance Comparison in 16S rRNA Profiling of Respiratory Samples [36]

| Performance Metric | Illumina NextSeq | Oxford Nanopore |
|---|---|---|
| Target Region | V3-V4 hypervariable region (~300 bp) | Full-length 16S gene (~1,500 bp) |
| Species Richness | Higher | Lower |
| Species-Level Resolution | Limited | Improved |
| Community Evenness | Comparable | Comparable |
| Taxonomic Bias | Detected broader range of taxa | Overrepresented certain dominant species |
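The richness and evenness rows of Table 2 correspond to standard alpha diversity indices. A minimal sketch of three common choices (observed richness, the Shannon index with natural logarithms, and Pielou's evenness J = H / ln S) is shown below; whether [36] used exactly these indices is an assumption, and `alpha_diversity` is a hypothetical helper. Observed richness grows with sequencing depth, so samples are often rarefied to a common depth before platforms are compared.

```python
import math


def alpha_diversity(counts: dict[str, int]) -> dict[str, float]:
    """Observed richness, Shannon index (natural log) and Pielou's evenness for one sample."""
    observed = [n for n in counts.values() if n > 0]
    if not observed:
        raise ValueError("sample has no reads")
    total = sum(observed)
    richness = len(observed)
    shannon = -sum((n / total) * math.log(n / total) for n in observed)
    # Pielou's J = H / ln(S); undefined when only one taxon is observed
    evenness = shannon / math.log(richness) if richness > 1 else float("nan")
    return {"richness": richness, "shannon": shannon, "pielou_evenness": evenness}
```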

Whole Genome Sequencing for Bacterial Pathogens

A 2025 study on Clostridioides difficile highlights the trade-off between accuracy and resolution. Illumina sequencing produced reads with an average quality of 99.68% (Q25), while Nanopore sequencing produced reads with 96.84% (Q15) quality, representing a tenfold difference in error rates [37]. This resulted in approximately 640 base errors per genome in Nanopore data, which incorrectly assigned over 180 alleles in core genome MLST (cgMLST) analysis, rendering Nanopore-derived phylogenies inadequate for high-resolution outbreak investigation [37]. However, both platforms performed comparably in detecting key virulence genes (tcdA, tcdB, cdtAB) and identifying sequence types (STs) when using raw read-based tools [37].
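In cgMLST, a single base error inside a core locus is enough to change that locus's allele call, which is why residual sequencing errors inflate the distance between otherwise identical isolates. The sketch below counts allele differences between two profiles while skipping loci that could not be called in either one (pairwise exclusion of missing data); the profile representation and the name `cgmlst_distance` are hypothetical and are not taken from [37].

```python
from typing import Optional

# Locus name -> allele number, or None when the locus could not be called
AlleleProfile = dict[str, Optional[int]]


def cgmlst_distance(a: AlleleProfile, b: AlleleProfile) -> tuple[int, int]:
    """Return (allele differences, loci compared), skipping loci missing in either profile."""
    differences = compared = 0
    for locus in a.keys() & b.keys():
        if a[locus] is None or b[locus] is None:
            continue
        compared += 1
        if a[locus] != b[locus]:
            differences += 1
    return differences, compared


# Hypothetical calls for the same isolate: an error in locus_2 yields a novel allele number
illumina_call = {"locus_1": 3, "locus_2": 7, "locus_3": 12}
nanopore_call = {"locus_1": 3, "locus_2": 1041, "locus_3": None}
print(cgmlst_distance(illumina_call, nanopore_call))  # (1, 2)
```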

Metagenomic and Targeted NGS for Lower Respiratory Infections

A comprehensive 2025 comparison of the diagnostic performance of three NGS approaches for lower respiratory infections revealed distinct clinical use cases. Metagenomic NGS (mNGS) identified the most species (80) but had the highest cost ($840) and the longest turnaround time (20 hours) [3]. Using a comprehensive clinical diagnosis as the reference standard, capture-based targeted NGS (tNGS) showed the highest accuracy (93.17%) and sensitivity (99.43%), while amplification-based tNGS showed poor sensitivity for Gram-positive (40.23%) and Gram-negative bacteria (71.74%) but high specificity for DNA viruses (98.25%) [3].

Table 3: Diagnostic Performance of NGS Methods for Lower Respiratory Infections [3]

| Parameter | Metagenomic NGS (mNGS) | Capture-based tNGS | Amplification-based tNGS |
|---|---|---|---|
| Number of Species Identified | 80 | 71 | 65 |
| Cost (USD) | $840 | Not available | Not available |
| Turnaround Time | 20 hours | Not available | Not available |
| Diagnostic Accuracy | Lower | 93.17% | Lower |
| Sensitivity | Lower | 99.43% | Lower (40.23% for Gram-positive, 71.74% for Gram-negative bacteria) |
| Specificity (DNA Virus) | Lower | Lower | 98.25% |
| Best Application | Rare/novel pathogen detection | Routine diagnostic testing | Rapid results with limited resources |
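The accuracy, sensitivity, and specificity values in Table 3 all derive from a 2×2 cross-tabulation of test results against the reference diagnosis. The article does not report the underlying counts, so the numbers in the usage line below are hypothetical, as are the names `ConfusionCounts` and `diagnostic_metrics`. Pathogen-class figures (for example, Gram-positive sensitivity) would be computed the same way on the relevant subset of cases.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    """Test results cross-tabulated against the reference (e.g., composite clinical) diagnosis."""
    tp: int  # test positive, reference positive
    fp: int  # test positive, reference negative
    tn: int  # test negative, reference negative
    fn: int  # test negative, reference positive


def _ratio(numerator: int, denominator: int) -> float:
    return numerator / denominator if denominator else float("nan")


def diagnostic_metrics(c: ConfusionCounts) -> dict[str, float]:
    return {
        "sensitivity": _ratio(c.tp, c.tp + c.fn),  # share of true infections detected
        "specificity": _ratio(c.tn, c.tn + c.fp),  # share of non-infections correctly negative
        "accuracy": _ratio(c.tp + c.tn, c.tp + c.fp + c.tn + c.fn),
    }


print(diagnostic_metrics(ConfusionCounts(tp=90, fp=5, tn=95, fn=10)))
# {'sensitivity': 0.9, 'specificity': 0.95, 'accuracy': 0.925}
```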

Environmental DNA and Targeted Detection

In environmental DNA (eDNA) applications for detecting an invasive host-parasite complex, both Illumina and Nanopore showed similar detection rates for the host species (P. parva), but only when Nanopore sequencing was performed under optimal conditions [41]. Interestingly, Nanopore detected the parasite (S. destruens) in multiple sites where Illumina failed, potentially due to different bioinformatic approaches or Nanopore's higher error rate leading to misassignments [41].

Experimental Protocols for Pathogen Sequencing

Standardized protocols are essential for reproducible genomic research on emerging pathogens. Below are detailed methodologies for key applications cited in the performance comparisons.

Protocol 1: 16S rRNA Profiling for Respiratory Microbiomes

This protocol is adapted from the comparative study of respiratory microbial communities [36].

Sample Collection and DNA Extraction:

- Sample Collection: Collect respiratory samples (e.g., bronchoalveolar lavage fluid) and store immediately at -80°C.

- DNA Extraction: Extract genomic DNA using a commercial kit (e.g., Sputum DNA Isolation Kit). Use ~1 mL of sample, following the manufacturer's instructions with optional modifications to optimize yield and purity.

- Quality Control: Assess DNA concentration and quality using a fluorometer (e.g., Qubit 4) and spectrophotometer (e.g., Nanodrop 2000).

Library Preparation and Sequencing:

For Illumina Sequencing:

- Target Amplification: Prepare libraries targeting the V3-V4 hypervariable region of the 16S rRNA gene using a region-specific panel (e.g., QIAseq 16S/ITS Region Panel).

- PCR Amplification: Use the following program: denaturation at 95°C for 5 min; 20 cycles of 95°C for 30 s, 60°C for 30 s, 72°C for 30 s; final elongation at 72°C for 5 min.

- Indexing: Attach index barcodes in a second amplification step.

- Sequencing: Pool libraries and sequence on an Illumina NextSeq platform to generate 2 × 150 bp or 2 × 300 bp paired-end reads.

For Oxford Nanopore Sequencing:

- Library Preparation: Prepare sequencing libraries using the 16S Barcoding Kit (e.g., SQK-16S114.24), following the manufacturer's protocol.

- Sequencing: Pool barcoded libraries and load onto a flow cell (e.g., R10.4.1). Sequence on a MinION Mk1C device using MinKNOW software until flow cell end of life (e.g., 72 hours).

Data Analysis:

Illumina Data:

- Processing: Process data using a standardized workflow like nf-core/ampliseq.

- Quality Control: Evaluate sequence quality with FastQC and summarize with MultiQC.

- Primer Trimming: Trim primers using Cutadapt, discarding sequences without primers.

- ASV Inference: Use DADA2 for error correction, read merging, and chimera removal to generate amplicon sequence variants (ASVs).

- Taxonomic Classification: Classify ASVs against the SILVA 138.1 database.

Nanopore Data:

- Basecalling and Demultiplexing: Perform both with the Dorado basecaller integrated into MinKNOW, using the High Accuracy (HAC) model.

- Quality Control and Classification: Process reads using the EPI2ME Labs 16S Workflow, which includes filtering and taxonomic classification against the SILVA 138.1 database.
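The Illumina primer-trimming step (Cutadapt, discarding reads without primers) can be illustrated with the simplified sketch below. It is not Cutadapt: it only checks for the primer anchored at the 5' end, counts substitutions against IUPAC degenerate codes, and ignores indels, which Cutadapt does allow. The 0.1 maximum error rate mirrors Cutadapt's default `-e` value, and dropping unmatched reads mirrors its `--discard-untrimmed` option. The primer used in the test is the widely cited 341F V3-V4 forward primer, chosen only as an example; the QIAseq panel's primer sequences are not given here, and all function names are hypothetical.

```python
from typing import Optional

# IUPAC nucleotide codes -> bases each code matches
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def primer_mismatches(primer: str, read_prefix: str) -> int:
    """Count positions where the read base is not allowed by the (possibly degenerate) primer base."""
    return sum(base not in IUPAC[p] for p, base in zip(primer, read_prefix))


def trim_5prime_primer(read: str, primer: str, max_error_rate: float = 0.1) -> Optional[str]:
    """Return the read with the anchored 5' primer removed, or None if no primer is found."""
    primer, read = primer.upper(), read.upper()
    if len(read) < len(primer):
        return None
    allowed = int(max_error_rate * len(primer))
    if primer_mismatches(primer, read[:len(primer)]) <= allowed:
        return read[len(primer):]
    return None


def trim_reads(reads: list[str], primer: str, max_error_rate: float = 0.1) -> list[str]:
    """Trim primers and discard reads in which the primer was not found."""
    kept = []
    for read in reads:
        trimmed = trim_5prime_primer(read, primer, max_error_rate)
        if trimmed is not None:
            kept.append(trimmed)
    return kept
```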

Protocol 2: Metagenomic NGS for Mycobacterium tuberculosis Detection

This protocol is adapted from the large-scale clinical comparison of mNGS and RT-PCR for tuberculosis diagnosis [42].

Sample Processing and DNA Extraction:

- Sample Collection: Collect lower respiratory tract specimens (e.g., BALF, sputum) or extrapulmonary samples.

- DNA Extraction: Extract DNA using a dedicated kit (e.g., IDSeq Micro DNA Kit), following the manufacturer's instructions.

- Negative Controls: Include negative controls in each batch to monitor for contamination.

Library Preparation and Sequencing:

- Library Construction: Construct DNA libraries using the transposase method, which fragments DNA and adds adapters in a single step.

- Quality Control: Assess library quality and concentration.

- Sequencing: Perform 75 bp single-end sequencing on an Illumina NextSeq 550 platform, generating at least 10 million reads per sample with ≥ 85% of bases at Q30 or above.
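The run-level acceptance criteria (at least 10 million reads and at least 85% of bases at Q30 or above) can be checked directly from FASTQ quality strings. The sketch below assumes the standard Phred+33 encoding used by current Illumina instruments; the function names are hypothetical, and in practice these statistics are usually read from instrument or fastp reports rather than recomputed.

```python
PHRED_OFFSET = 33  # Sanger / Illumina 1.8+ FASTQ quality encoding


def fraction_q30(quality_strings: list[str], offset: int = PHRED_OFFSET) -> float:
    """Fraction of base calls with Phred quality >= 30 across FASTQ quality strings."""
    total = high = 0
    for qual in quality_strings:
        for symbol in qual:
            total += 1
            if ord(symbol) - offset >= 30:
                high += 1
    return high / total if total else 0.0


def passes_run_qc(n_reads: int, q30_fraction: float,
                  min_reads: int = 10_000_000, min_q30: float = 0.85) -> bool:
    """Apply the protocol minimums: >= 10 million reads and >= 85% of bases at Q30 or above."""
    return n_reads >= min_reads and q30_fraction >= min_q30
```

For instance, the quality characters `I`, `?`, and `5` decode to Q40, Q30, and Q20, so the strings `"IIII"` and `"55??"` together give 6 of 8 bases at Q30 or above (0.75), which would fail the 85% criterion.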

Bioinformatic Analysis:

- Quality Filtering: Use fastp software to remove low-quality sequences and short reads (< 35 bp).

- Host Depletion: Map reads to the human reference genome (GRCh38) using BWA and remove aligned reads to deplete host sequences.

- Pathogen Identification: Align remaining non-host reads to comprehensive pathogen genome databases.

- Positive Identification: For Mycobacterium tuberculosis, apply a threshold of standardized microbial read numbers (SMRNs) ≥ 1 for a positive report.

Figure 1: mNGS Workflow for Mycobacterium tuberculosis Detection. This diagram outlines the key steps in the metagenomic NGS protocol for detecting MTB from clinical samples, from nucleic acid extraction to bioinformatic analysis and reporting. SMRN: Standardized Microbial Read Numbers [42].
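The reporting logic after alignment can be sketched as follows. Several assumptions apply and are labelled in the code: host mapping (BWA against GRCh38) and alignment to pathogen databases are taken as already done, represented by the hypothetical inputs `host_read_ids` and `read_to_taxon`; and because this article does not give the SMRN formula, the normalization used here (taxon read count scaled to a fixed reference depth of 20 million reads) is an assumption rather than the definition used in [42]. Only the 35 bp length cut-off and the SMRN ≥ 1 threshold come from the protocol above.

```python
from collections import Counter
from typing import Iterable

MIN_READ_LENGTH = 35  # reads shorter than 35 bp are discarded, as in the fastp step
MTB = "Mycobacterium tuberculosis"


def standardized_read_number(taxon_reads: int, total_reads: int,
                             reference_depth: int = 20_000_000) -> float:
    """ASSUMED normalization: scale a taxon's read count to a fixed sequencing depth."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    return taxon_reads * reference_depth / total_reads


def smrn_by_taxon(reads: Iterable[tuple[str, str]], host_read_ids: set[str],
                  read_to_taxon: dict[str, str], total_reads: int) -> dict[str, float]:
    """Filter short and host reads, count reads per taxon, and normalize the counts."""
    counts: Counter[str] = Counter()
    for read_id, sequence in reads:
        if len(sequence) < MIN_READ_LENGTH:
            continue  # length filtering
        if read_id in host_read_ids:
            continue  # host depletion: read previously mapped to the human reference
        taxon = read_to_taxon.get(read_id)  # hypothetical precomputed pathogen assignment
        if taxon is not None:
            counts[taxon] += 1
    return {taxon: standardized_read_number(n, total_reads) for taxon, n in counts.items()}


def is_mtb_positive(smrn: dict[str, float], threshold: float = 1.0) -> bool:
    """Report M. tuberculosis as positive when its SMRN reaches the threshold (>= 1)."""
    return smrn.get(MTB, 0.0) >= threshold
```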

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful sequencing for pathogen research relies on a foundation of carefully selected reagents, kits, and computational tools. The following table details key solutions used in the experimental protocols cited in this guide.

Table 4: Essential Research Reagents and Kits for Pathogen Sequencing

| Item Name | Function/Application | Specific Example(s) |
|---|---|---|
| Nucleic Acid Extraction Kits | Isolation of high-quality DNA/RNA from diverse sample matrices (BALF, sputum, bacterial cultures). | Sputum DNA Isolation Kit [36], QIAamp UCP Pathogen DNA Kit [3], IDSeq Micro DNA Kit [42], DNeasy PowerSoil Pro Kit [37] |
| 16S rRNA Amplification Panels | Targeted amplification of 16S rRNA gene regions for microbiome profiling. | QIAseq 16S/ITS Region Panel (for Illumina) [36], ONT 16S Barcoding Kit SQK-16S114.24 (for Nanopore) [36] |
| Library Preparation Kits | Fragmenting DNA/RNA and attaching sequencing adapters for NGS. | Nextera XT Kit (Illumina WGS) [37], Respiratory Pathogen Detection Kit (amplification-based tNGS) [3] |
| Enzymes for Sample Prep | Digesting host nucleic acids and facilitating cell lysis to enhance pathogen detection. | Benzonase (human DNA depletion) [3], Lysozyme (bacterial cell wall lysis) [37], Proteinase K (general protein digestion) [37] |