Machine Learning for Automated AFM Biofilm Analysis: From Imaging to Clinical Prediction

Abstract

This article explores the transformative integration of Machine Learning (ML) with Atomic Force Microscopy (AFM) for automated, quantitative biofilm analysis. Aimed at researchers, scientists, and drug development professionals, it details how ML overcomes traditional AFM limitations—such as small scan areas and labor-intensive manual analysis—to enable high-throughput, large-area imaging and sophisticated classification of biofilm architecture. The content covers foundational concepts, practical methodologies for implementation, solutions for troubleshooting, and rigorous validation of ML models. By synthesizing recent advancements, this review highlights how automated AFM biofilm image analysis is paving the way for new strategies in combating biofilm-associated infections and industrial biofouling, with a forward-looking perspective on its clinical and industrial applications.

The Convergence of Machine Learning and AFM: A New Paradigm for Biofilm Research

FAQ: Why is the standard scan area of a traditional Atomic Force Microscope (AFM) a major limitation for biofilm research?

Biofilms are inherently heterogeneous communities that can span millimeter-scale areas, exhibiting significant structural and chemical variation across different regions. Traditional AFM has a restricted scan range, typically less than 100 micrometers per image, as limited by its piezoelectric actuator [1]. This creates a fundamental scale mismatch, making it impossible to capture the full, functionally relevant architecture of a biofilm and raising concerns about the representativeness of data taken from a single, small scan area [1].

FAQ: What specific artifacts can occur when using AFM on biofilm structures, and how do they arise?

Biofilms contain fine structures like flagella, pili, and extracellular polymeric substances (EPS), which are often on the same scale as or smaller than the AFM probe tip. This leads to a common artifact known as tip convolution [2]. During scanning, the finite size and shape of the tip interact physically with these nanoscale features and distort the image. The result is a significant overestimation of the width of these structures and an inability to accurately resolve their true shape [2]. For example, flagella that are only 20-50 nm high can appear much wider than they really are in a standard AFM image [1].
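The size of this broadening can be estimated with a simple geometric model. The sketch below is an illustration, not a method taken from the cited work: it assumes a spherical tip apex of radius R, a rigid cylindrical filament of radius r lying on a flat substrate, and R at least as large as r. The function name and the example radii are hypothetical.

```python
import math


def apparent_width_nm(tip_radius_nm: float, fiber_radius_nm: float) -> float:
    """Apparent full width of a cylinder imaged by a spherical tip apex.

    The tip centre (height R) touches the cylinder (centre height r) at a
    horizontal distance d with d^2 + (R - r)^2 = (R + r)^2, so
    d = 2 * sqrt(R * r) and the apparent full width is 2 * d.
    Assumes R >= r and no sample deformation.
    """
    if tip_radius_nm <= 0 or fiber_radius_nm <= 0:
        raise ValueError("radii must be positive")
    return 4.0 * math.sqrt(tip_radius_nm * fiber_radius_nm)


# Illustrative values: a 20 nm diameter filament imaged with two tip radii.
for tip_radius in (10.0, 30.0):
    width = apparent_width_nm(tip_radius, fiber_radius_nm=10.0)
    print(f"tip radius {tip_radius:.0f} nm -> apparent width {width:.1f} nm")
```

Under these assumptions the measured height stays close to the true diameter while the width grows with tip radius, which is why height is usually the more trustworthy dimension for thin filaments.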

FAQ: My biofilm samples are delicate and hydrated. How does the AFM scanning process itself potentially alter the sample?

The labor-intensive and slow nature of traditional AFM operation is a key concern for delicate biological samples. Biofilms are highly hydrated structures, and their native state is best studied in liquid. The prolonged scanning time in traditional AFM increases the risk of sample deformation, especially when operating in contact mode where the tip is in constant physical contact with the soft, vulnerable biofilm surface [1] [2]. This can compress or even tear the EPS matrix, leading to inaccurate topographical and mechanical data.

FAQ: I need to gather statistically significant data from my biofilms. Why is this challenging with conventional AFM?

The limited field of view and slow data acquisition speed of traditional AFM make comprehensive, high-throughput analysis impractical. Manually finding regions of interest and collecting a sufficient number of scans to represent the entire biofilm is extremely time-consuming [1]. Furthermore, the vast amount of high-resolution data generated from multiple scans requires manual processing, which is inefficient and can introduce operator bias, hindering the extraction of robust, quantitative parameters like cell count, confluency, and morphology across the entire community [1].

Troubleshooting Guide: Overcoming Traditional AFM Limitations

| Critical Challenge | Root Cause | Impact on Biofilm Analysis | Potential Solution Pathway |
|---|---|---|---|
| Limited Field of View [1] | Restricted scan range of piezoelectric actuators (<100 µm). | Inability to link cellular-scale features to the functional, millimeter-scale organization of the biofilm; non-representative sampling [1]. | Implement automated large-area AFM that stitches multiple high-resolution images together [1]. |
| Tip Convolution Artifacts [2] | Finite size/shape of probe tip interacting with nanoscale biofilm features (e.g., flagella, EPS). | Distorted topography; overestimation of feature widths; inaccurate structural resolution [2]. | Use sharper, high-aspect-ratio tips; apply tip deconvolution algorithms during data processing [2]. |
| Slow Throughput & Labor Intensity [1] | Manual operation for region selection, scanning, and data analysis. | Inability to capture dynamic processes or achieve statistical significance; operator-dependent results [1]. | Integrate machine learning (ML) for autonomous operation, site selection, and sparse scanning to accelerate acquisition [1]. |
| Sample Deformation [2] | Physical forces between tip and soft, hydrated biofilm matrix during prolonged contact-mode scanning. | Damage to delicate structures like flagella; inaccurate nanomechanical property measurements [2]. | Employ gentler imaging modes (e.g., tapping mode in liquid); optimize scanning parameters (setpoint, feedback gains) [2]. |
| Data Analysis Bottleneck [1] | Manual processing of high-volume, information-rich AFM image data. | Inefficient and subjective extraction of quantitative parameters (cell count, shape, orientation) [1]. | Deploy ML-based image segmentation and classification for automated, high-volume quantitative analysis [1]. |

Experimental Protocol: Automated Large-Area AFM with ML Analysis

This methodology outlines the procedure for overcoming the limitations of traditional AFM, as demonstrated in recent research on Pantoea sp. YR343 biofilms [1].

1. Sample Preparation

- Surface Treatment: Grow biofilms on PFOTS-treated glass coverslips or silicon substrates to study the effect of surface properties on bacterial adhesion [1].

- Inoculation: Inoculate a petri dish containing the prepared coverslips with the bacterial strain in a liquid growth medium.

- Incubation & Harvesting: At selected time points (e.g., 30 minutes for initial attachment, 6-8 hours for cluster formation), remove a coverslip and gently rinse it with a buffer solution to remove non-adherent planktonic cells [1].

- Drying: Air-dry the sample before AFM imaging. Note: This step may alter native hydrated structures, and imaging under liquid is preferable for physiological conditions.

2. Automated Large-Area AFM Imaging

- Hardware Setup: Use an AFM system equipped with a large-range scanner and a stage capable of precise millimeter-scale movement.

- Software Automation: Implement a control script to automatically navigate the stage and acquire a grid of multiple, contiguous high-resolution AFM images (e.g., 100 µm x 100 µm each) across the biofilm.

- Image Acquisition: Scan images with minimal overlap to maximize acquisition speed. The use of a sharp probe (nominal radius <10 nm) is recommended to minimize tip convolution artifacts [2].
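The tile grid for such an automated acquisition can be planned before any stage movement is issued. The following is a minimal sketch that only computes stage offsets; it does not talk to any AFM controller. The function name, the 10% overlap, and the serpentine visiting order are assumptions chosen for illustration and are not specified in the protocol.

```python
import math


def tile_origins(area_um, tile_um=100.0, overlap_frac=0.1):
    """Return (x, y) stage offsets in micrometres for a square serpentine grid.

    area_um      -- side length of the square region to cover
    tile_um      -- side length of one AFM image
    overlap_frac -- fraction of a tile shared with its neighbour (assumed)
    """
    if area_um <= 0 or tile_um <= 0:
        raise ValueError("area_um and tile_um must be positive")
    if not 0.0 <= overlap_frac < 1.0:
        raise ValueError("overlap_frac must be in [0, 1)")
    step = tile_um * (1.0 - overlap_frac)
    # Number of tiles per side so that the last tile reaches the far edge.
    extra = max(0.0, area_um - tile_um)
    per_side = math.ceil(extra / step - 1e-9) + 1
    origins = []
    for row in range(per_side):
        cols = list(range(per_side))
        if row % 2:  # reverse odd rows to minimise stage travel
            cols.reverse()
        for col in cols:
            origins.append((col * step, row * step))
    return origins


grid = tile_origins(area_um=1000.0)
print(len(grid), "tiles; first:", grid[0], "last:", grid[-1])
```

Lower overlap means fewer tiles and faster acquisition, but it leaves the stitching step with less shared signal to align neighbouring images.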

3. Image Stitching and Data Pre-processing

- Stitching Algorithm: Use a computational stitching algorithm that can seamlessly merge the individual AFM tiles into a single, large, high-resolution mosaic, even with limited matching features between images [1].

- Deconvolution (if needed): Apply tip deconvolution algorithms to the stitched image to correct for broadening effects and more accurately determine the true dimensions of nanofibers and other fine structures [2].
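The stitching algorithm used in [1] is not described here, so the sketch below substitutes a deliberately simple technique: an exhaustive search over candidate overlaps that minimises the mean squared height difference between two horizontally adjacent tiles. It assumes pure translation along one axis, tiles of equal height, and already levelled data; all function names are hypothetical.

```python
def mean_sq_diff(left, right, overlap):
    """Mean squared difference between the last `overlap` columns of `left`
    and the first `overlap` columns of `right`."""
    total, count = 0.0, 0
    for row_l, row_r in zip(left, right):
        for a, b in zip(row_l[-overlap:], row_r[:overlap]):
            total += (a - b) ** 2
            count += 1
    return total / count


def estimate_overlap(left, right, nominal, search=3):
    """Try overlaps within `search` pixels of the nominal value and return
    the one with the smallest mean squared difference."""
    limit = min(len(left[0]), len(right[0]))
    candidates = range(max(1, nominal - search), min(limit, nominal + search) + 1)
    return min(candidates, key=lambda ov: mean_sq_diff(left, right, ov))


def stitch_pair(left, right, overlap):
    """Join two tiles row by row, dropping the duplicated columns of `right`."""
    return [row_l + row_r[overlap:] for row_l, row_r in zip(left, right)]


# Synthetic surface split into two tiles that truly share 5 columns.
surface = [[x * x + y for x in range(30)] for y in range(8)]
left_tile = [row[:20] for row in surface]
right_tile = [row[15:] for row in surface]

found = estimate_overlap(left_tile, right_tile, nominal=4, search=3)
mosaic = stitch_pair(left_tile, right_tile, found)
print("estimated overlap:", found, "mosaic width:", len(mosaic[0]))
```

Real biofilm tiles with few matching features would need a more robust similarity measure and a two-dimensional search, but the structure (score candidate offsets, keep the best, merge) stays the same.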

4. Machine Learning-Based Analysis

- Segmentation: Train a machine learning model (e.g., a U-Net convolutional neural network) on a manually annotated dataset to accurately identify and segment individual bacterial cells from the background and the EPS matrix in the large-area AFM image.

- Classification & Quantification: Use the segmented output for automated extraction of quantitative data, including [1]:

- Cell density (count per unit area)

- Surface confluency (% coverage)

- Cellular morphology (length, width, aspect ratio)

- Spatial orientation and distribution
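Once a segmentation mask exists, the first two quantities in this list reduce to counting. The sketch below assumes the model output has already been thresholded into a binary mask (1 = cell, 0 = background) and that touching cells have been separated; it labels 4-connected regions with a breadth-first search. The function names and the pixel size are illustrative assumptions, and morphology and orientation are left out for brevity.

```python
from collections import deque


def label_cells(mask):
    """Label 4-connected foreground regions; return (labels, region_count)."""
    rows, cols = len(mask), len(mask[0])
    labels = [[0] * cols for _ in range(rows)]
    current = 0
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] and not labels[r][c]:
                current += 1
                labels[r][c] = current
                queue = deque([(r, c)])
                while queue:
                    y, x = queue.popleft()
                    for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                        inside = 0 <= ny < rows and 0 <= nx < cols
                        if inside and mask[ny][nx] and not labels[ny][nx]:
                            labels[ny][nx] = current
                            queue.append((ny, nx))
    return labels, current


def summarize(mask, pixel_um):
    """Cell count, density (cells per square micrometre) and confluency (%)."""
    _, n_cells = label_cells(mask)
    n_pixels = len(mask) * len(mask[0])
    covered = sum(1 for row in mask for value in row if value)
    area_um2 = n_pixels * pixel_um ** 2
    return {
        "cell_count": n_cells,
        "density_per_um2": n_cells / area_um2,
        "confluency_pct": 100.0 * covered / n_pixels,
    }


demo_mask = [
    [1, 1, 0, 0, 0],
    [1, 1, 0, 1, 0],
    [0, 0, 0, 1, 0],
    [0, 0, 0, 0, 0],
]
print(summarize(demo_mask, pixel_um=0.5))
```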

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |
|---|---|
| PFOTS-Treated Glass | Creates a hydrophobic surface to study the effect of surface properties on bacterial attachment and early biofilm assembly [1]. |
| Pantoea sp. YR343 | A model Gram-negative, rod-shaped bacterium with peritrichous flagella, used for studying the genetic regulation of biofilm formation [1]. |
| Flagella-Deficient Mutant | A control strain used to confirm the identity of filamentous appendages (e.g., flagella) imaged by AFM [1]. |
| Large-Range AFM Scanner | A piezoelectric scanner capable of moving the probe over millimeter-scale distances, which is essential for large-area analysis [1]. |
| Sharp AFM Probes | Probes with a high aspect ratio and a nominal tip radius of <10 nm are critical for resolving nanoscale features like flagella and minimizing image distortion [2]. |
| Image Stitching Algorithm | Software that computationally merges multiple AFM images into a single, seamless mosaic, enabling the study of large biofilm areas [1]. |
| ML Segmentation Model | A trained algorithm (e.g., U-Net) that automatically identifies and outlines individual cells in AFM images, enabling high-throughput quantification [1]. |

Frequently Asked Questions (FAQs)

Q1: What are the primary benefits of using machine learning (ML) with Atomic Force Microscopy (AFM) in biofilm research?

ML brings several key benefits to AFM-based biofilm research. It enables automated, high-throughput segmentation and classification of complex biofilm features from AFM topographical data, replacing manual analysis that is time-consuming and subject to observer bias [1] [3]. ML also helps automate the experimental process itself: it can drive image stitching for large-area AFM scans and, when integrated within agent frameworks, can autonomously orchestrate entire AFM workflows, from experimental design and instrument control to data capture and analysis [1] [4].

Q2: My Core ML model works correctly on macOS but produces incorrect results on iOS. What could be causing this?

This is a known compatibility issue, particularly with models converted from certain frameworks like YOLO. The discrepancy often arises from differences in how the neural engine on iOS devices handles certain model architectures or operators compared to macOS. A verified solution is to ensure you use a compatible conversion pipeline. For instance, when exporting YOLO models, using the format=mlmodel parameter with coremltools==6.2 has been shown to resolve these issues and produce a model that works consistently across both macOS and iOS [5].

Q3: After a macOS/iOS update, my previously functional Core ML model fails to load or produces scrambled outputs. How can I resolve this?

This indicates a potential regression in the operating system's Core ML framework. The first step is to verify if the issue is a known bug by checking the release notes for the OS update and the Apple Developer Forums [6] [7]. If it is a widespread issue, you may need to wait for a subsequent OS update that contains a fix. In the interim, you can try to re-convert your source model to Core ML format using the latest version of coremltools, as this might generate a model compatible with the updated framework.

Q4: How can I improve the prediction speed of my Core ML model in a real-time analysis app?

To optimize prediction speed, first use Xcode's Instruments tool to profile your app and identify the bottleneck [6] [7]. Ensure your model is configured to use the most appropriate compute unit (CPU, GPU, or Neural Engine) for your specific model and task; sometimes forcing CPU-only execution can be more predictable. Implement async prediction APIs to avoid blocking your app's main thread. For video processing, consider downsampling the input frames or running inference on every other frame to reduce the load, as parallel inference on multiple threads may not always yield a speedup due to internal resource contention [6] [7].

Q5: Can I use a custom Core ML model for feature detection with the Vision framework?

Yes, you can use custom Core ML models with the Vision framework via VNCoreMLRequest. However, for optimal integration, ensure your model's input and output formats are compatible. The framework can handle input image resizing and padding (e.g., converting a 1920x1080 frame to a 512x512 model input). For output, while Vision has built-in support for certain feature types like rectangles or human body points, a custom model will typically return a VNCoreMLFeatureValueObservation, requiring you to manually parse the results and map the coordinates back to the original image space, as Vision may not automatically undo the preprocessing steps [6] [7].
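The coordinate mapping is plain arithmetic and can be prototyped outside the app. The sketch below is written in Python rather than Swift and makes explicit assumptions: the frame was scaled to fit the model input with its aspect ratio preserved, the padding is centred, and coordinates are pixels with a top-left origin. Other crop or scale options need a different inverse, so treat the function names and conventions as hypothetical.

```python
def letterbox_params(src_w, src_h, dst_w, dst_h):
    """Scale factor and per-side padding for an aspect-preserving fit."""
    scale = min(dst_w / src_w, dst_h / src_h)
    pad_x = (dst_w - src_w * scale) / 2.0
    pad_y = (dst_h - src_h * scale) / 2.0
    return scale, pad_x, pad_y


def model_to_source(x, y, src_w, src_h, dst_w, dst_h):
    """Map a point from model-input pixels back to source-frame pixels."""
    scale, pad_x, pad_y = letterbox_params(src_w, src_h, dst_w, dst_h)
    return (x - pad_x) / scale, (y - pad_y) / scale


# The 1920x1080 -> 512x512 case mentioned above.
scale, pad_x, pad_y = letterbox_params(1920, 1080, 512, 512)
print("scale:", scale, "padding:", (pad_x, pad_y))
print("model centre maps to:", model_to_source(256, 256, 1920, 1080, 512, 512))
```

For this case the frame fills the full width and is padded above and below, so only the vertical coordinate needs a padding correction before rescaling.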

Troubleshooting Guides

Issue 1: Model Conversion Errors or Incompatibility

Problem: Errors occur when converting a trained model (e.g., from PyTorch, TensorFlow) to the Core ML (.mlpackage) format, or the converted model does not behave as expected.

| Step | Action | Details/Command |
|---|---|---|
| 1 | Verify coremltools Version | Use the latest stable version. For some models (e.g., YOLO), legacy versions like 6.2 are required [5]. |
| 2 | Check Operator Support | Ensure all model operators are supported by Core ML. The coremltools documentation lists supported layers. |
| 3 | Simplify the Model | For PyTorch models, try converting a traced model (torch.jit.trace) instead of a scripted one for better compatibility [7]. |
| 4 | Explore Alternative Paths | If direct conversion fails, first export to ONNX, then use a dedicated ONNX to Core ML converter. Note: Apple's official ONNX support may be limited, requiring legacy tools [7]. |

Issue 2: Incorrect Model Predictions on Specific Devices

Problem: A Core ML model produces correct results in the Xcode preview or on a Mac, but yields nonsense predictions on an iOS device or in the simulator.

| Possible Cause | Diagnosis Steps | Solution |
|---|---|---|
| Model Conversion Flaw | Check if the issue occurs on both physical iOS devices and the simulator. | Re-export the model using a verified conversion workflow. For YOLO models, use format=mlmodel [5]. |
| Compute Unit Discrepancy | In Xcode, change the model's "Compute Units" to CPU Only or CPU and GPU and test again. | The Neural Engine on some devices may have precision or operator issues. Locking the model to CPU/GPU can ensure consistency [5]. |
| Input/Output Preprocessing | Manually verify the input data normalization and output interpretation logic in your Swift code. | Ensure the preprocessing (e.g., pixel value scaling) in your app exactly matches what was done during the model's training. |

Issue 3: Poor Performance or Slow Inference Speed

Problem: Model prediction takes too long, causing lag in the application, especially when processing video streams or multiple images.

| Area to Investigate | Optimization Strategy |
|---|---|
| Model Architecture | Design or select a lighter-weight model (e.g., MobileNet for vision). Use Core ML's model compression tools to reduce size and latency. |
| Input Resolution | Reduce the input image dimensions for the model, balancing the trade-off between accuracy and speed. |
| App Integration | Use the async version of the prediction API (prediction(image: completionHandler:)) to avoid blocking the UI. For video, ensure you are not queuing multiple overlapping inference requests [6] [7]. |
| Hardware Utilization | Profile with Instruments. If GPU utilization is low (~20%), the model or task might be inherently CPU-bound, and threading may not help [6] [7]. |

Experimental Protocols & Workflows

Protocol 1: Automated Large-Area AFM with ML-Powered Stitching and Analysis

This protocol enables the creation of high-resolution, millimeter-scale maps of biofilm topography from multiple AFM scans [1].

1. Sample Preparation:

- Microorganism: Pantoea sp. YR343 (or other biofilm-forming strain) [1].

- Substrate: PFOTS-treated glass coverslips or silicon substrates to modulate bacterial adhesion [1] [8].

- Growth Conditions: Inoculate coverslips in a petri dish with liquid growth medium. Incubate for desired time (e.g., 30 min for initial attachment; 6-8 h for cluster formation) [1].

- Preparation for AFM: Gently rinse coverslips to remove unattached cells and air-dry before imaging [1].

2. Automated Large-Area AFM Scanning:

- Instrument Setup: Configure the AFM for automated stage movement and sequential imaging.

- Scan Acquisition: Program a grid of overlapping high-resolution scans (e.g., 100+ individual images) to cover the millimeter-scale area of interest [1].

3. Machine Learning-Powered Image Processing:

- Image Stitching: Use a machine learning algorithm to automatically align and stitch the individual AFM scans into a seamless large-area map, even with minimal overlapping features [1].

- Feature Segmentation & Classification: Implement a second ML model (e.g., a convolutional neural network) to analyze the stitched image. The model is trained to segment the image, detect individual cells, and classify biofilm features [1].

Protocol 2: Autonomous AFM Operation Using an LLM-Powered Agent Framework (AILA)

This protocol outlines the use of a multi-agent LLM framework for fully autonomous design and execution of AFM experiments [4].

1. Framework Setup:

- Architecture: Deploy the AILA (Artificially Intelligent Lab Assistant) framework, which uses a multi-agent system (e.g., AFM Handler Agent, Data Handler Agent) coordinated by a central planner [4].

- Tool Integration: Equip agents with tools for document retrieval (e.g., AFM manuals), code execution (Python API for AFM control), image optimization, and image analysis [4].

2. Experimental Workflow Execution:

- Task Input: Provide a natural language query to the framework (e.g., "Acquire an AFM image of HOPG and extract its friction and roughness parameters") [4].

- Autonomous Planning: The LLM planner dissects the query into sequential objectives and routes tasks to the appropriate agents [4].

- Instrument Control: The AFM Handler Agent generates and executes Python scripts to control the AFM hardware, performing tasks like cantilever selection, probe approach, and image acquisition [4].

- Data Analysis: Upon image acquisition, the Data Handler Agent takes over, directing analysis tools (e.g., Image Analyzer) to compute the requested parameters (e.g., roughness, friction) [4].

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and computational tools used in advanced, ML-enhanced AFM biofilm research.

| Item/Tool | Function in Research |
|---|---|
| PFOTS-treated Glass Coverslips | A modified surface substrate used to study and promote controlled bacterial adhesion and early biofilm assembly dynamics [1] [8]. |
| Pantoea sp. YR343 | A gram-negative, rod-shaped model bacterium with peritrichous flagella, used for studying the genetic regulation of biofilm formation and cell-surface interactions [1]. |
| coremltools | The primary Python package from Apple for converting trained models from popular frameworks (PyTorch, TensorFlow) into the Core ML (.mlpackage) format for on-device deployment [5]. |
| AILA Framework | An LLM-powered multi-agent framework (Artificially Intelligent Lab Assistant) that automates the complete scientific workflow for AFM, from experimental design to results analysis [4]. |
| Vision Framework (Apple) | An iOS/macOS framework that simplifies working with computer vision and Core ML models, handling tasks like image resizing/padding and facilitating real-time analysis on video streams [6] [7]. |
| Staphylococcal Biofilm ML Classifier | A specialized machine learning algorithm, available as an open-access tool, designed to automatically classify the maturity stage of staphylococcal biofilms from AFM images into one of six predefined classes [3]. |

Performance Benchmarks and Model Evaluation

Table: LLM Agent Performance on AFMBench Tasks (Success Rate %) [4]

| Task Category | GPT-4o | Claude-3.5-Sonnet | GPT-3.5-Turbo | Llama-3.3-70B |
|---|---|---|---|---|
| Documentation | 88.3% | 85.3% | 46.7% | 40.0% |
| Analysis | 33.3% | Information Missing | Information Missing | Information Missing |
| Calculation | 56.7% | Information Missing | Information Missing | Information Missing |
| Documentation + Analysis | 23.3% | Information Missing | Information Missing | Information Missing |

Table: Human vs. Machine Learning Performance in Biofilm Classification [3]

| Metric | Human Observers | Machine Learning Algorithm |
|---|---|---|
| Mean Accuracy | 0.77 ± 0.18 | 0.66 ± 0.06 |
| Off-by-One Accuracy | Not Reported | 0.91 ± 0.05 |
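The two metrics in this table differ only in how much error they tolerate. The sketch below assumes that the six maturity classes are ordinal and encoded as integers 0 to 5, and that "off-by-one accuracy" counts a prediction as correct when it lies within one class of the true label; that definition is an assumption here, and the labels are made up for illustration.

```python
def accuracy(y_true, y_pred, tolerance=0):
    """Fraction of predictions within `tolerance` classes of the true label.

    tolerance=0 gives ordinary accuracy; tolerance=1 gives the
    off-by-one accuracy for ordinal classes.
    """
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("label lists must be non-empty and of equal length")
    hits = sum(1 for t, p in zip(y_true, y_pred) if abs(t - p) <= tolerance)
    return hits / len(y_true)


# Made-up labels for eight images across six ordinal classes (0-5).
true_stage = [0, 1, 2, 3, 4, 5, 2, 3]
pred_stage = [0, 2, 2, 5, 4, 4, 2, 3]

print("exact accuracy:     ", accuracy(true_stage, pred_stage))
print("off-by-one accuracy:", accuracy(true_stage, pred_stage, tolerance=1))
```

A large gap between the two numbers suggests that most errors fall between neighbouring maturity stages rather than between clearly different ones.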

Frequently Asked Questions (FAQs)

Q1: My AFM images of biofilms appear blurry and lack fine detail. The automated tip approach completed, but the image seems out of focus. What could be causing this?

This is a classic symptom of "false feedback," where the AFM's tip approach is tricked into stopping before the probe interacts with the sample's hard forces. This is often caused by:

- Surface Contamination Layer: In ambient air, a layer of contamination exists on every surface. The probe can become trapped in this soft layer, preventing it from reaching the actual biofilm surface [9].

- Electrostatic Forces: Surface charge on the cantilever or sample can create electrostatic interactions that mimic hard surface contact, causing the approach to stop prematurely. This is particularly common when using soft cantilevers in non-vibrating mode [9].

Q2: I see repetitive, unexpected lines or patterns across my AFM image. What are the common sources of this noise?

Repetitive lines can stem from two primary issues:

- Electrical Noise: This often appears at a frequency of 50/60 Hz. You can identify it by comparing the noise frequency to your scan rate. If the scan rate is 1 Hz, you would see 25-30 lines in the trace direction [10].

- Laser Interference: If your sample is highly reflective, laser light can reflect off the sample surface and interfere with the light reflecting from the cantilever in the photodetector, creating noise patterns. Using a probe with a reflective coating can mitigate this [10].
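The 25-30 figure for electrical noise follows from simple arithmetic, sketched below. It assumes that the scan rate counts full lines per second (trace plus retrace) and that trace and retrace take equal time, which is the reading consistent with the numbers quoted above. The function names are illustrative.

```python
def periods_per_trace(noise_hz, line_rate_hz):
    """Number of noise periods visible along one trace.

    One full line (trace + retrace) takes 1 / line_rate_hz seconds, so the
    trace alone takes half of that.
    """
    if noise_hz <= 0 or line_rate_hz <= 0:
        raise ValueError("frequencies must be positive")
    return noise_hz / (2.0 * line_rate_hz)


def noise_frequency_hz(stripes_per_trace, line_rate_hz):
    """Inverse estimate: noise frequency from counted stripes in one trace."""
    return stripes_per_trace * 2.0 * line_rate_hz


for mains_hz in (50.0, 60.0):
    print(mains_hz, "Hz at 1 Hz scan rate ->", periods_per_trace(mains_hz, 1.0), "periods")
```

Counting the stripes in one trace and feeding the count to `noise_frequency_hz` gives a quick check on whether the pattern is mains-related.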

Q3: My biofilm structures look distorted or duplicated in the AFM image. What is the most likely culprit?

This typically indicates a tip artefact. A contaminated, worn, or broken tip will produce irregular, repeating features because the shape of the tip, rather than the sample, is being recorded. If you see structures that appear larger than expected or trenches that seem smaller, the tip may be blunt. The solution is to replace the probe with a new, sharp one [10].

- Cause: Conventional, low-aspect-ratio pyramidal tips are physically unable to reach the bottom of narrow trenches or accurately trace steep-sided features in a biofilm's EPS matrix [10].

- Solution: Switch to a High Aspect Ratio (HAR) or conical tip. These tips are taller and sharper, allowing them to access and resolve high-relief features more accurately [10].

Troubleshooting Guide: Common AFM Imaging Issues with Biofilms

Table 1: Summary of common AFM issues, their causes, and solutions for biofilm imaging.

| Problem | Primary Cause | Recommended Solution |
|---|---|---|
| Blurry, out-of-focus images | False feedback from surface contamination or electrostatic charge | Increase tip-sample interaction: Decrease setpoint (vibrating mode) or Increase setpoint (non-vibrating mode) [9] |
| Repetitive lines/patterns | Electrical noise (50/60 Hz) or laser interference from reflective samples | Identify quiet imaging periods; Use probes with reflective coatings (e.g., gold, aluminum) [10] |
| Distorted/duplicated features | Tip artefact from a blunt, broken, or contaminated tip | Replace the AFM probe with a new, sharp one [10] |
| Inaccurate trench/vertical feature resolution | Low-aspect-ratio or pyramidal tip geometry | Use High Aspect Ratio (HAR) or conical tips [10] |
| Streaks on images | Environmental vibrations or loose particles on sample surface | Use anti-vibration table; Ensure sample preparation minimizes loose material [10] |

Experimental Protocols: Characterizing Biofilm EPS Composition

For ML models to accurately interpret AFM data, correlating topographic information with chemical composition is essential. Here are key methodologies for analyzing the Extracellular Polymeric Substances (EPS) that constitute the biofilm matrix.

Protocol 1: Fourier Transform Infrared (FT-IR) Spectroscopy for EPS Chemical Analysis

FT-IR spectroscopy is a non-destructive technique that provides information about the molecular composition and functional groups present in a biofilm's EPS [11].

- Principle: Organic molecules absorb infrared light at specific wavelengths, causing chemical bonds to vibrate. The resulting absorption spectrum is a fingerprint of the sample's molecular composition [11].

- Workflow:

- Sample Preparation: Biofilms can be analyzed hydrated (for early-stage formation) or dried (for mature biofilms) on a suitable crystal (e.g., germanium) [11].

- Data Acquisition: Collect absorption spectra in the 900-3000 cm⁻¹ range. Key spectral windows correspond to major EPS components [11].

- Data Analysis: Monitor the evolution of band intensity ratios (e.g., Amide II/Polysaccharide) to track changes in biomass composition, such as preferential polysaccharide or protein production during biofilm development [11].

Table 2: Key FT-IR Spectral Signatures for Biofilm EPS Components [11].

| IR Spectral Window | Corresponding EPS Component | Main Functional Groups |
|---|---|---|
| 1500–1800 cm⁻¹ | Proteins | C=O, N-H (Amide I & II bands) |
| 900–1250 cm⁻¹ | Polysaccharides, Nucleic Acids | C-O, C-O-C, P=O |
| 2800–3000 cm⁻¹ | Lipids | CH, CH₂, CH₃ |
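A band-ratio calculation over these windows can be sketched as follows. This is an illustration under stated assumptions: the spectrum is already baseline-corrected, band intensity is taken as the trapezoidal area inside each window, and the broad 1500-1800 cm⁻¹ protein window from the table stands in for a dedicated Amide II band, whose exact limits are not given here. The function names and the synthetic spectrum are hypothetical.

```python
def band_area(wavenumbers, absorbance, low, high):
    """Trapezoidal area of the spectrum between `low` and `high` (cm^-1)."""
    points = sorted(
        (w, a) for w, a in zip(wavenumbers, absorbance) if low <= w <= high
    )
    return sum(
        (w2 - w1) * (a1 + a2) / 2.0
        for (w1, a1), (w2, a2) in zip(points, points[1:])
    )


def protein_to_polysaccharide_ratio(wavenumbers, absorbance):
    """Ratio of the protein window area to the polysaccharide window area."""
    protein = band_area(wavenumbers, absorbance, 1500, 1800)
    polysaccharide = band_area(wavenumbers, absorbance, 900, 1250)
    if polysaccharide == 0:
        raise ValueError("polysaccharide band area is zero")
    return protein / polysaccharide


# Synthetic step spectrum sampled every 50 cm^-1 (not measured data).
wn = list(range(900, 1801, 50))
ab = [1.0 if w <= 1250 else 2.0 if w >= 1500 else 0.0 for w in wn]

print("protein area:       ", band_area(wn, ab, 1500, 1800))
print("polysaccharide area:", band_area(wn, ab, 900, 1250))
print("ratio:              ", protein_to_polysaccharide_ratio(wn, ab))
```

Tracking this ratio across time points gives the kind of compositional trend described in the data-analysis step, provided every spectrum is processed with the same windows and baseline treatment.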

Protocol 2: Enzymatic EPS Disruption for Functional Insight

Using enzymes to target specific EPS components helps determine their role in biofilm integrity and can be a strategy for biofilm removal [11].

- Principle: If an enzyme causes biofilm detachment, the molecule it targets is critical for structural stability. This reveals structure-function relationships within the matrix [11].

- Workflow:

- Enzyme Selection: Choose enzymes based on the EPS components you wish to target.

- Treatment: Incubate biofilms with the selected enzyme(s) for a defined period (e.g., 24 hours) [11].

- Analysis: Quantify the reduction in sessile biomass or the detachment of cells. The efficacy is species and enzyme-specific, providing insight into the dominant EPS composition of the biofilm under study [11].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential materials and reagents for AFM-based biofilm analysis and EPS characterization.

| Item | Function/Benefit | Example Use-Case |
|---|---|---|
| High Aspect Ratio (HAR) AFM Probes | Accurately resolve high-relief features like trenches and vertical structures in biofilms [10] | Imaging the complex, heterogeneous architecture of mature biofilms [10] |
| Reflective Coated AFM Probes (Au, Al) | Reduce laser interference noise on reflective samples [10] | High-resolution imaging of biofilms formed on medical device materials |
| Cation Exchange Resin (CER) | Extracts EPS from microbial cultures with minimal cell disruption [12] | Isolating the EPS matrix from bacterial and fungal cultures for compositional analysis [12] |
| Hydrolytic Enzymes (Proteases, Amylases) | Target specific EPS components to study their functional role or disrupt biofilms [11] | Determining if proteins or polysaccharides are key to a biofilm's mechanical stability [11] |
| Fluorescent Lectins | Bind to specific glycoconjugates (sugars) in the EPS for visualization [13] | Mapping the spatial distribution of different polysaccharides within the biofilm matrix using microscopy [13] |

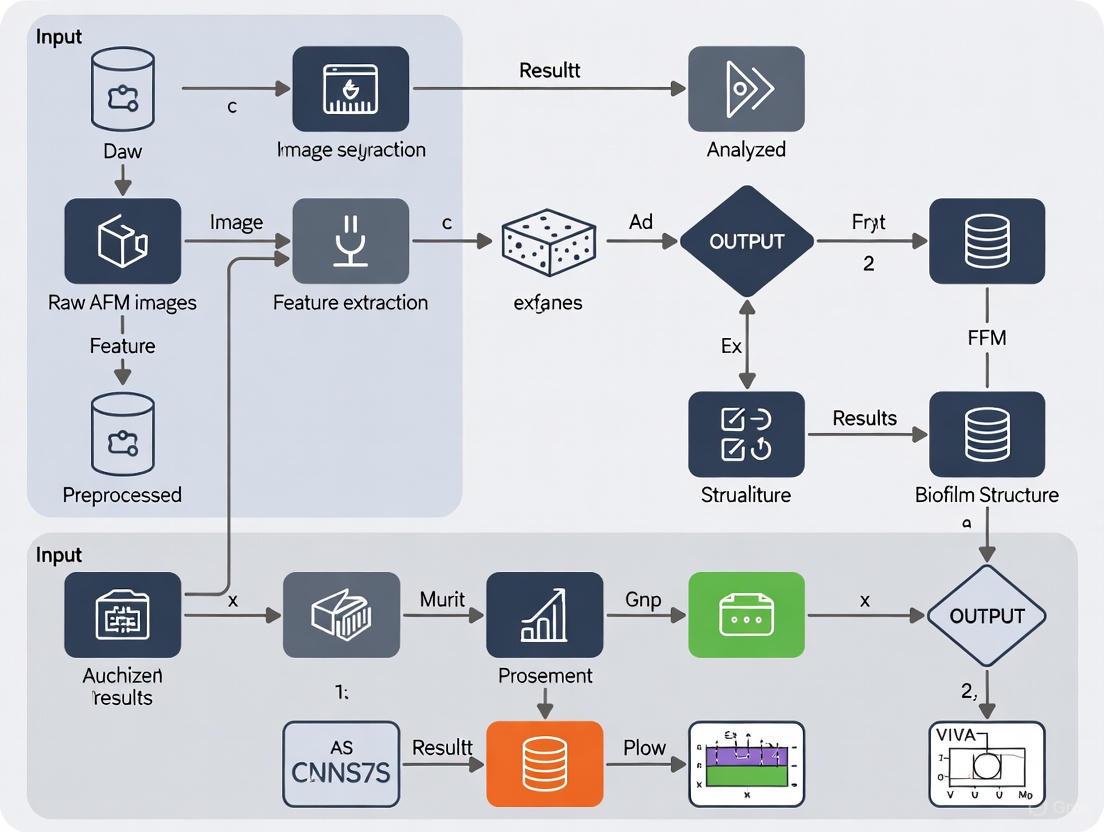

ML-Enhanced AFM Biofilm Analysis Workflow

The integration of machine learning with established experimental protocols creates a powerful pipeline for automated and insightful biofilm analysis. The diagram below illustrates this workflow from data acquisition to model-driven insight.

ML-AFM Biofilm Analysis Pipeline

This workflow highlights how ML models are trained on fused datasets. AFM provides high-resolution spatial and mechanical data, while complementary techniques like FT-IR and enzymatic assays supply chemical composition. The resulting model can then automatically quantify key characteristics like spatial heterogeneity and classify EPS components directly from AFM images.

Technical Support Center

Troubleshooting Guides

Guide 1: Addressing Common AFM Imaging Artifacts in Biofilm Samples

Problem 1: Unexpected or Repetitive Patterns in Images

- Symptoms: Structures appear duplicated; irregular shaped features repeat across the image; trenches appear smaller than expected.

- Cause: Tip artefacts, often from a broken or contaminated AFM probe [10].

- Solution: Replace the AFM probe with a new, sharp one. Ensure proper probe handling and storage to prevent contamination [10].

Problem 2: Repetitive Lines Across the Image

- Symptoms: Horizontal or vertical lines repeating at regular intervals.

- Cause A: Electrical noise, often at 50/60 Hz, from building circuits or other instrumentation [10].

- Solution: Image during quieter electrical periods (e.g., early mornings or late evenings). If possible, relocate the AFM to a location with cleaner power [10].

- Cause B: Laser interference from reflections off a highly reflective sample surface [10].

- Solution: Use an AFM probe with a reflective coating (e.g., gold or aluminum) on the cantilever to prevent spurious laser reflections [10].

Problem 3: Streaks on Images

- Symptoms: Long, directional smearing or streaks in the image.

- Cause A: Environmental noise or vibration from doors, traffic, or people moving nearby [10].

- Solution: Ensure the anti-vibration table is functional. Image during quieter times or use an acoustic enclosure. Place a "STOP AFM in progress" sign to alert others [10].

- Cause B: Loose particles or surface contamination on the sample [10].

- Solution: Optimize sample preparation protocols to minimize loosely adhered material. Ensure samples are thoroughly rinsed and dried if appropriate [10].

Guide 2: Optimizing AFM for Large-Area Biofilm Analysis

This guide is based on the methodology from the featured case study [14] [1] [15].

Problem: Difficulty Capturing Millimeter-Scale Biofilm Architecture

- Challenge: Conventional AFM has a small imaging area (<100 µm), making it impossible to link cellular-scale events to the functional macroscale organization of a biofilm [14] [1].

- Solution: Implement an automated large-area AFM approach.

- Equipment: Use a commercial AFM (e.g., DriveAFM from Nanosurf) equipped with an automated sample stage [15].

- Automation: Develop or use software for automated stage positioning and image acquisition with minimal user intervention [14] [15].

- Image Stitching: Employ algorithms that can stitch multiple high-resolution images together seamlessly, even with limited overlap to maximize speed [14] [1].

- Data Analysis: Integrate machine learning-based tools for automated image segmentation, cell detection, and classification to manage the high volume of data [14] [1].

Frequently Asked Questions (FAQs)

FAQ 1: What are the key advantages of using AFM over other microscopy techniques for biofilm research? AFM provides high-resolution topographical images and nanomechanical property maps without extensive sample preparation that can alter native structures (e.g., dehydration, metal coating) [1]. It can be operated under physiological conditions (in liquid), preserving the biofilm's native state, and allows for the visualization of fine structures like flagella and EPS matrix components [1] [15].

FAQ 2: My biofilm images are noisy. What post-processing steps can I apply? Several post-processing steps can significantly improve AFM image quality [16]:

- Leveling/Flattening: Corrects for sample tilt and unevenness caused by the scanning process.

- Noise Filtering: Apply spatial filters (e.g., low-pass filters for high-frequency noise, median filters for spike noise) or use Fourier transforms to remove unwanted noise from the image [16].
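The two steps above can be illustrated with a minimal NumPy sketch. It assumes the AFM height map is already loaded as a 2D array; the function names `plane_level` and `median_filter3` are illustrative and not taken from any AFM software package, and the synthetic surface stands in for real data.

```python
import numpy as np

def plane_level(height):
    """Subtract the least-squares plane z = a*x + b*y + c from a height map."""
    rows, cols = height.shape
    y, x = np.mgrid[0:rows, 0:cols]
    design = np.column_stack([x.ravel(), y.ravel(), np.ones(height.size)])
    coeffs, *_ = np.linalg.lstsq(design, height.ravel(), rcond=None)
    return height - (design @ coeffs).reshape(height.shape)

def median_filter3(height):
    """3x3 median filter; borders are handled by replicating edge pixels."""
    padded = np.pad(height, 1, mode="edge")
    rows, cols = height.shape
    # Stack the nine shifted views of the image and take the per-pixel median.
    windows = np.stack([padded[i:i + rows, j:j + cols]
                        for i in range(3) for j in range(3)])
    return np.median(windows, axis=0)

# Synthetic example (assumed data): a tilted flat surface with one spike.
yy, xx = np.mgrid[0:32, 0:32]
tilted = 0.5 * xx + 0.2 * yy + 3.0
raw = tilted.copy()
raw[10, 10] += 100.0                      # isolated spike noise
cleaned = plane_level(median_filter3(raw))
```

Filtering before leveling keeps the spike from biasing the plane fit; on real biofilm data, a higher-order polynomial or line-by-line flattening may be more appropriate than a single plane.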

FAQ 3: How can Machine Learning (ML) assist in analyzing large-area AFM biofilm data? ML is transformative for handling the complex, high-volume data from large-area AFM [14] [1] [3]. Key applications include:

- Image Analysis: Automating the segmentation of images, detection of individual cells, and classification of biofilm features [14] [1].

- Classification: ML algorithms can be trained to classify biofilm maturity stages based on topographic characteristics, reducing observer bias and saving time [3].

- Scanning Optimization: AI can automate routine tasks like selecting scanning regions and optimizing scanning parameters, enabling continuous, multi-day experiments [1].

FAQ 4: What are the critical sample preparation steps for imaging Pantoea sp. biofilms with AFM? As described in the case study [1] [15]:

- Surface Treatment: Use PFOTS-treated glass coverslips or structured silicon substrates as the adhesion surface.

- Inoculation: Inoculate a petri dish containing the prepared coverslips with Pantoea sp. YR343 cells in a liquid growth medium.

- Incubation: Incubate for selected time points (e.g., ~30 minutes for initial attachment studies).

- Rinsing: Gently rinse the coverslip to remove unattached (planktonic) cells.

- Drying: Dry the sample before AFM imaging (Note: drying may not be necessary if using a liquid cell for in-situ imaging).

Experimental Protocols

Protocol 1: Analyzing Early-Stage Biofilm Assembly of Pantoea sp. YR343

Objective: To characterize the initial attachment, cellular orientation, and role of flagella in biofilm formation using large-area automated AFM.

Materials:

- Pantoea sp. YR343 (gram-negative, rod-shaped, motile bacterium with peritrichous flagella) [1] [15].

- PFOTS-treated glass coverslips or gradient-structured silicon substrates [14] [15].

- Liquid growth medium.

- Automated large-area AFM system [15].

Methodology:

- Sample Preparation: Prepare surfaces and inoculate with bacteria as described in FAQ 4 [1] [15].

- AFM Imaging:

- Mount the sample on the automated AFM stage.

- Program the system to acquire multiple contiguous high-resolution images over a millimeter-scale area.

- Use a scan size for individual images that balances resolution and acquisition time (e.g., 10x10 µm to 100x100 µm).

- Data Processing:

Expected Outcomes:

- Visualization of a preferred cellular orientation forming a distinctive "honeycomb" pattern [14] [15].

- High-resolution mapping of flagella interactions between cells, suggesting flagellar coordination beyond initial attachment [14] [1].

- Quantitative data on how surface topography (e.g., pillar/ridge spacing) disrupts or influences honeycomb structure formation [15].

Protocol 2: Machine Learning-Assisted Classification of Biofilm Maturity

Objective: To automate the classification of biofilm growth stages from AFM topographical data.

Materials:

- AFM image dataset of biofilms at various incubation times.

- ML classification software (e.g., open-access tools designed for AFM biofilm image classification) [3].

Methodology:

- Training Data: Establish a ground truth by having independent researchers manually classify AFM images into pre-defined maturity classes based on topographic features (e.g., substrate coverage, cell density, EPS presence) [3].

- Algorithm Training: Train a machine learning algorithm (e.g., a convolutional neural network) on the classified dataset.

- Validation: Validate the algorithm's performance by comparing its classification accuracy, recall, and "off-by-one" accuracy against the established ground truth [3].
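The three metrics named in the validation step can be computed as in the sketch below. It assumes the maturity classes are encoded as ordered integers (0 for the earliest stage), so that "off-by-one" means the prediction lands within one class of the human label; the label lists are made-up illustration data, not results from [3].

```python
def accuracy(y_true, y_pred, tolerance=0):
    """Fraction of predictions within `tolerance` classes of the true label."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("label lists must be non-empty and equally long")
    hits = sum(abs(t - p) <= tolerance for t, p in zip(y_true, y_pred))
    return hits / len(y_true)

def recall(y_true, y_pred, positive_class):
    """Fraction of images truly in `positive_class` that the model assigned to it."""
    relevant = [p for t, p in zip(y_true, y_pred) if t == positive_class]
    if not relevant:
        return 0.0
    return sum(p == positive_class for p in relevant) / len(relevant)

# Hypothetical labels for eight images and six ordered classes (0..5).
human = [0, 1, 2, 3, 4, 5, 2, 3]
model = [0, 1, 3, 3, 5, 5, 0, 3]

exact = accuracy(human, model)                      # exact-match accuracy
off_by_one = accuracy(human, model, tolerance=1)    # allows neighbouring class
recall_class_3 = recall(human, model, positive_class=3)
```

Off-by-one accuracy is only meaningful when the classes form an ordered progression, which is the case for maturity stages.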

Expected Outcomes:

- An automated tool capable of classifying biofilm maturity stages with high accuracy, reducing manual analysis time and observer bias [3].

Table 1: Key Quantitative Findings from Pantoea sp. YR343 AFM Analysis

| Parameter | Measured Value | Experimental Context | Significance |

|---|---|---|---|

| Cell Dimensions | ~2 µm length, ~1 µm diameter [1] [15] | Single surface-attached cell after ~30 min incubation. | Provides a baseline for cellular morphology and surface area (~2 µm²) [15]. |

| Flagella Height | ~20-50 nm [1] [15] | Appendages visualized around cells during early attachment. | Confirms the ability of AFM to resolve sub-cellular structures critical for motility and attachment. |

| Biofilm Architecture | Distinctive "honeycomb" pattern [14] [15] | Cell clusters formed after 6-8 hours of propagation. | Reveals a highly organized spatial structure beyond random clustering. |

| Inhibition Efficacy | 76.99% biofilm inhibition [17] | Treatment of Pantoea agglomerans with garlic extract. | Highlights a potential natural anti-biofilm agent by targeting quorum sensing (pagI/R gene) [17]. |

Table 2: Essential Research Reagent Solutions

| Reagent / Material | Function in Experiment |

|---|---|

| Pantoea sp. YR343 | A model gram-negative, biofilm-forming bacterium used to study early assembly dynamics and pattern formation [1] [15]. |

| PFOTS-treated Glass | A hydrophobic surface treatment used to promote and study bacterial adhesion and biofilm formation [14] [15]. |

| Structured Silicon Substrates | Surfaces with engineered pillar/ridge architectures to combinatorially screen how surface topography influences bacterial attachment and biofilm structure [15]. |

| TasA Protein (B. subtilis) | An amyloid-like protein essential for the structural integrity and wrinkling of dual-species biofilms with Pantoea agglomerans [18]. |

| Garlic Extract | A natural substance shown to inhibit Pantoea agglomerans biofilm formation by interfering with quorum sensing pathways [17]. |

Workflow Visualization

Automated AFM Biofilm Analysis Workflow

Building Your Analysis Pipeline: A Step-by-Step Guide to ML-Driven AFM

Frequently Asked Questions (FAQs)

Q1: What are the main advantages of using large-area, automated AFM for biofilm research? Automated large-area AFM overcomes the key limitation of conventional AFM: its small imaging area (typically <100 µm), which is restricted by piezoelectric actuator constraints [1]. This new approach enables high-resolution imaging over millimeter-scale areas, allowing researchers to link cellular and sub-cellular scale features to the functional macroscale organization of biofilms. The integration of machine learning automates image stitching and analysis, providing a comprehensive view of spatial heterogeneity that was previously obscured [1].

Q2: My AFM images appear blurry and lack fine detail, even though the system says it is in feedback. What could be causing this? This symptom is typical of "false feedback," where the system stops the probe approach before it interacts with the hard surface forces [19]. The two most common causes are:

- Surface Contamination Layer: In ambient air, a layer of contamination on the sample can trap the probe. Solution: Increase the probe-surface interaction. In vibrating (tapping) mode, decrease the setpoint value; in non-vibrating (contact) mode, increase the setpoint value [19].

- Surface/Cantilever Charge: Electrostatic forces can bend the cantilever or affect vibration amplitude. Solution: Create a conductive path between the cantilever and sample, or use a stiffer cantilever [19].

Q3: How can machine learning assist in the analysis of AFM biofilm images? Machine learning (ML) transforms AFM data analysis by automating tasks that are time-consuming and prone to human bias [1]. In biofilm research, ML algorithms can be designed to:

- Classify Biofilm Maturity: ML can automatically classify AFM images of biofilms into different stages of maturity based on topographic characteristics, achieving accuracy comparable to human observers [3].

- Segment and Identify Features: ML aids in automating image segmentation, cell detection, and classification, enabling efficient extraction of quantitative parameters like cell count, confluency, and cell shape over large areas [1].

Q4: What are some common sources of artifacts in AFM images, and how can I minimize them? Artifacts are distortions of the true topography and can arise from several sources [20]:

- The Probe: A blunt, broken, or dirty tip is a common source. It can cause features to appear larger than they are or reduce image resolution. Regularly inspect and clean your tip.

- The Scanner: Thermal drift due to slow scanning can cause inaccurate distances between features. Ensure your operating environment is thermally stable.

- Image Processing: Over-processing images during leveling or filtering can introduce artifacts. Apply minimal, necessary processing steps [16].

Troubleshooting Guides

Problem 1: Failure to Achieve a Stable Image During Large-Area Scanning

| Symptom | Possible Cause | Solution | Principle |

|---|---|---|---|

| Blurry, out-of-focus image with loss of nanoscopic detail [19]. | False feedback from a surface contamination layer. | In Tapping Mode: Decrease the amplitude setpoint. In Contact Mode: Increase the deflection setpoint [19]. | Increases tip-sample interaction force to penetrate the soft contamination layer and interact with the hard surface. |

| Image distortion and false feedback, especially with soft cantilevers [19]. | Electrostatic forces between a charged cantilever and sample. | Create a conductive path between the cantilever holder and sample. If not possible, switch to a stiffer cantilever [19]. | Dissipates electrostatic charge, reducing attractive/repulsive forces that mimic hard surface contact. |

| Poor image resolution after nanoindentation or scanning on contaminated surfaces [21]. | Dirty or contaminated probe tip. | Perform cleaning indentations on a soft, sacrificial sample (e.g., gold film). Use a large trigger threshold (e.g., 2.0 V) for multiple indents in the same location [21]. | The high force interaction can knock debris off the tip. This should only be done on a sample specifically intended for tip cleaning. |

| Streaks or bands in the image [20]. | Particles moving on the surface due to the scanning tip. | Ensure the sample is securely fixed. For dispersed particles, verify that the sample preparation (e.g., spin-coating) is adequate [20]. | Prevents the tip from pushing loose material across the surface during the scan. |

Problem 2: Poor Image Quality and Artifacts After Stitching

| Symptom | Possible Cause | Solution | Principle |

|---|---|---|---|

| Misalignment or visible seams between stitched image tiles. | Drift or lack of sufficient overlap between individual scans. | Use a large-area AFM system with automated navigation and ensure at least ~10% overlap between tiles. Apply ML-enhanced stitching algorithms [1]. | Corrects for small positional inaccuracies and blends images using features common to adjacent tiles. |

| Uneven background or "waviness" across the stitched image. | Improper leveling of individual tiles or the final stitched image. | Apply a plane fit or polynomial leveling routine to each tile before stitching. Perform a final, gentle flattening on the complete stitched image [16]. | Removes low-frequency scanner bow and tilt, creating a flat baseline for accurate topographic measurement. |

| High-frequency noise corrupting fine detail. | Electronic or environmental noise. | Apply post-processing noise filters. A low-pass filter removes high-frequency noise. A median filter is effective at removing spike (impulse) noise without blurring edges [16]. | Attenuates signal frequencies that are higher than the resolution limit of the image. |

Problem 3: Challenges in Automated Biofilm Classification

| Symptom | Possible Cause | Solution | Principle |

|---|---|---|---|

| ML model fails to classify biofilm maturity stages accurately. | Insufficient or biased training data. | Train the model on a large and diverse dataset of AFM images that have been pre-classified by human experts into distinct topographic classes [3]. | Provides the algorithm with a robust ground truth, enabling it to learn the defining features of each class. |

| Model performance is good on training data but poor on new images. | Overfitting to the training set. | Use a simplified model architecture, increase training data, or employ data augmentation techniques. Validate the model on a completely independent test set of images [3]. | Ensures the model learns generalizable features of biofilm topography rather than memorizing the training images. |

| Inconsistent classification results between different human operators. | Subjective observer bias in defining the ground truth. | Establish a clear, written classification scheme with defined topographic characteristics for each biofilm class (e.g., based on substrate coverage, cell morphology, EPS presence) [3]. | Standardizes the classification process, improving consistency for both human observers and the ML model. |

Experimental Protocols

Protocol 1: Automated Large-Area Scanning of Bacterial Biofilms

Objective: To acquire high-resolution, stitched topographical data of a bacterial biofilm over a millimeter-scale area.

1. Sample Preparation

- Strain: Pantoea sp. YR343 (gram-negative, rod-shaped, peritrichous flagella) is used as a model biofilm-forming bacterium [1].

- Substrate: Use PFOTS-treated glass coverslips to promote adhesion [1].

- Inoculation: Place coverslips in a petri dish and inoculate with bacteria in liquid growth medium.

- Incubation: Incubate for desired time (e.g., 30 min for initial attachment; 6-8 h for cluster formation).

- Rinsing: At time points, gently rinse coverslip to remove unattached cells.

- Drying: Air-dry the sample before AFM imaging [1].

2. AFM Setup and Data Acquisition

- Instrument: A large-area AFM system with automated stage control.

- Probe Selection: A sharp silicon or silicon nitride tip is appropriate for high-resolution imaging of biological samples.

- Mapping the Area: Define a rectangular grid of tiles to cover the desired millimeter-scale area. Set a minimal overlap (~10%) between tiles [1].

- Automated Scanning: Initiate the automated sequence. The system will image each tile consecutively. Machine learning algorithms can assist in selecting scanning sites and optimizing the process [1].
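The grid definition in the mapping step can be sketched as follows. This is an illustrative calculation only: the function `tile_grid`, the 1 mm x 1 mm area, and the 100 µm tile size are assumptions for the example, and a real instrument would expose its own stage-control interface.

```python
import math

def tile_grid(area_um, tile_um, overlap=0.10):
    """Return stage offsets (x, y) in micrometers for tiles covering an area.

    `overlap` is the fraction of each tile shared with its neighbour.
    """
    width, height = area_um
    step = tile_um * (1.0 - overlap)          # distance between tile origins
    # Number of tiles needed so that the last tile reaches the far edge.
    nx = max(1, math.ceil((width - tile_um) / step) + 1)
    ny = max(1, math.ceil((height - tile_um) / step) + 1)
    return [(ix * step, iy * step) for iy in range(ny) for ix in range(nx)]

# Assumed example: 1 mm x 1 mm region, 100 µm tiles, 10% overlap.
positions = tile_grid(area_um=(1000.0, 1000.0), tile_um=100.0, overlap=0.10)
print(len(positions), "tiles; first offsets:", positions[:3])
```

Because the tile count grows with the square of the area, a lower overlap shortens the acquisition noticeably, at the cost of giving the stitching step less shared data to align on.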

3. Image Processing and Stitching

- Leveling: Apply a plane fit or polynomial flattening to each individual tile to correct for tilt and scanner bow [16].

- Stitching: Use a stitching algorithm to merge the tiles into a single, large image. ML can aid in seamless stitching even with minimal overlap [1].
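To make the alignment problem concrete, the sketch below estimates the offset between two overlapping regions with classical phase correlation. This is a stand-in technique for illustration, not the ML-based stitching described in [1]; it assumes a pure translation between equally sized arrays, and the random array is synthetic data.

```python
import numpy as np

def estimate_shift(reference, moved):
    """Estimate the (row, col) shift that maps `reference` onto `moved`.

    Uses phase correlation, which assumes a pure (circular) translation
    between two equally sized 2D arrays.
    """
    f_ref = np.fft.fft2(reference)
    f_mov = np.fft.fft2(moved)
    cross_power = np.conj(f_ref) * f_mov
    cross_power /= np.abs(cross_power) + 1e-12    # keep only the phase
    correlation = np.fft.ifft2(cross_power).real
    peak = np.unravel_index(np.argmax(correlation), correlation.shape)
    # Peaks past the midpoint correspond to negative shifts.
    return tuple(int(p) if p <= n // 2 else int(p) - n
                 for p, n in zip(peak, correlation.shape))

rng = np.random.default_rng(0)
overlap_a = rng.random((64, 64))                  # synthetic overlap region
overlap_b = np.roll(overlap_a, shift=(5, -3), axis=(0, 1))
shift = estimate_shift(overlap_a, overlap_b)      # recovers the applied shift
```

On real tiles the correlation would be computed on the overlapping strips only, and sparse or repetitive features can make the peak ambiguous, which is one motivation for the feature-learning approaches cited above.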

Protocol 2: ML-Based Classification of Biofilm Maturity from AFM Images

Objective: To train a machine learning model to automatically classify the maturity stage of a staphylococcal biofilm based on its AFM topography [3].

1. Data Preparation (Ground Truth Labeling)

- Acquire AFM Images: Collect a large set of AFM images of biofilms grown under various conditions and for different durations.

- Define Classes: Establish a classification framework. For example, define 6 distinct classes based on topographic characteristics (e.g., isolated cells, microcolonies, EPS-dominated structures) [3].

- Human Classification: Have multiple independent experts classify each image in the dataset according to the defined framework. This set of human-classified images serves as the "ground truth" [3].

2. Model Training and Validation

- Algorithm Design: Develop or select a suitable ML algorithm (e.g., a convolutional neural network) for image classification.

- Train the Model: Input the ground-truthed images to train the model to recognize the features associated with each maturity class.

- Validate Performance: Test the trained model on a separate set of images not used during training. Compare its classifications to the human-generated ground truth. A well-designed model can achieve high off-by-one accuracy (e.g., 0.91) [3].
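Holding out test images can be sketched with a simple stratified split, so that every maturity class appears in both sets. The 20% test fraction, the fixed seed, and the dataset of six classes with ten images each are assumptions for illustration.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx), sampling the test set class by class."""
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        # At least one test image per class; a class with a single image
        # therefore ends up in the test set only.
        n_test = max(1, round(len(indices) * test_fraction))
        test_idx.extend(indices[:n_test])
        train_idx.extend(indices[n_test:])
    return sorted(train_idx), sorted(test_idx)

# Hypothetical dataset: six maturity classes with ten images each.
labels = [cls for cls in range(6) for _ in range(10)]
train_idx, test_idx = stratified_split(labels)
```

If several images come from the same biofilm sample or the same stitched scan, splitting by sample rather than by image avoids leaking near-duplicates into the test set.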

The Scientist's Toolkit: Research Reagent Solutions

| Essential Material | Function in the Experiment |

|---|---|

| PFOTS-Treated Glass Coverslips | A hydrophobic surface treatment that promotes bacterial adhesion and facilitates the study of early-stage biofilm assembly and surface attachment dynamics [1]. |

| Soft Gold Film Sample | A sacrificial, standardized soft sample used specifically for cleaning contaminated AFM tips. Performing high-force indentations on it can knock debris off the tip without damaging it [21]. |

| Pantoea sp. YR343 | A gram-negative, rod-shaped model bacterium with peritrichous flagella. It is well-characterized for forming biofilms on abiotic surfaces, making it ideal for studying attachment and cluster formation [1]. |

| Stiff Cantilevers | Probes with a high spring constant. They are less sensitive to electrostatic forces and are therefore recommended for use in non-vibrating (contact) mode to avoid false feedback from surface charge [19]. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: My stitched AFM image shows visible seams and misalignments between individual tiles. What could be the cause and solution?

A: Visible seams often result from insufficient overlap between adjacent image tiles or drift during the lengthy acquisition process. The automated large-area AFM approach addresses this by using machine learning algorithms designed to perform seamless stitching even with minimal matching features between images [1]. To fix this:

- Increase Overlap: Ensure a minimum overlap (e.g., 10-15%) between tiles during the experimental design phase to provide the algorithm with enough data for accurate alignment.

- Leverage Advanced ML Stitching: Utilize ML-based stitching algorithms that are robust to limited features. These algorithms can identify and correlate subtle, non-unique features across tiles, creating a seamless composite image without manual intervention [1].

Q2: The ML model for segmenting individual bacterial cells is performing poorly, failing to distinguish cells from the substrate or from each other in dense clusters. How can I improve its accuracy?

A: Poor segmentation accuracy typically stems from a lack of model specificity or insufficient training data. The solution involves refining the model with high-quality, task-specific data.

- Re-train with Domain-Specific Data: Models like the Segment Anything Model (SAM) show impressive zero-shot capabilities but often underperform on specialized medical or biological images without fine-tuning [22]. Fine-tuning SAM on a curated dataset of your AFM biofilm images, even with a limited number of examples, significantly boosts its performance on your specific task [22].

- Utilize Generative Models for Data Augmentation: In ultra low-data regimes, frameworks like GenSeg can be employed. GenSeg uses generative AI and multi-level optimization to create high-fidelity synthetic image-mask pairs tailored to improve segmentation outcomes [23]. This can provide the diverse training data needed to improve model robustness without extensive manual labeling.

Q3: I am encountering strange, repeating patterns in my AFM images that do not correspond to the sample. What is this and how do I resolve it?

A: This is a classic sign of a contaminated or damaged AFM probe [10]. A blunt or dirty tip can produce artifacts where irregular shapes are duplicated across the image.

- Immediate Action: Replace the AFM probe with a new, clean one.

- Prevention: Always inspect probes before use and ensure proper handling and storage to avoid contamination. Using probes from reputable manufacturers that guarantee tip sharpness can minimize this issue [10].

Q4: My AFM image appears blurry and lacks nanoscale detail, even though the system says it is in feedback. What is happening?

A: This condition, known as "false feedback," occurs when the probe interacts with a surface contamination layer or electrostatic forces before reaching the actual sample surface [24].

- For Surface Contamination: Increase the tip-sample interaction force. In vibrating (tapping) mode, this is done by decreasing the setpoint value; in non-vibrating (contact) mode, it is done by increasing the setpoint value. This forces the probe through the contamination layer to interact with the hard surface forces of the sample [24].

- For Surface/Cantilever Charge: Create a conductive path between the cantilever and the sample. If that is not possible, use a stiffer cantilever to reduce the effects of electrostatic forces [24].

Key Experimental Protocols

Protocol 1: Automated Large-Area AFM Imaging and Stitching for Biofilm Analysis

This protocol is adapted from the large-area automated AFM approach used to study Pantoea sp. YR343 biofilms [1].

Sample Preparation:

- Grow your biofilm on an appropriate substrate (e.g., PFOTS-treated glass coverslips [1]).

- At the desired time point, gently rinse the substrate to remove unattached cells.

- Air-dry the sample before AFM imaging.

AFM Setup and Automated Scanning:

- Mount the sample in the AFM.

- Define a large, millimeter-scale area to be imaged. The software will automatically divide this area into a grid of individual tiles.

- Initiate the automated scanning routine. The system will sequentially image each tile with high resolution.

Machine Learning-Based Image Stitching:

- The acquired image tiles are processed by an ML stitching algorithm.

- This algorithm identifies features in each tile and computes the optimal transformation to align them into a single, continuous large-area image.

- The output is a seamless, high-resolution topographic map of the biofilm.

Protocol 2: Fine-Tuning the Segment Anything Model (SAM) for AFM Biofilm Segmentation

This protocol is based on empirical studies for optimal fine-tuning of foundation models for medical image segmentation [22].

Data Preparation:

- Curate a dataset of AFM biofilm images with corresponding manually annotated ground-truth segmentation masks.

- Split the data into training and validation sets.

Model Selection and Setup:

Fine-Tuning Strategy:

- Employ a parameter-efficient fine-tuning strategy that updates weights in both the encoder and decoder. In the comparison reported in [22], this approach outperformed the other fine-tuning strategies tested.

- Train the model using the curated AFM image-mask pairs.

Validation:

- Evaluate the fine-tuned model's performance on the validation set using metrics like Dice Similarity Coefficient (DSC) to confirm improved segmentation accuracy.
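For reference, the Dice Similarity Coefficient between a predicted mask and a ground-truth mask is twice the overlap divided by the total number of foreground pixels in both masks. A minimal sketch with made-up 4x4 masks follows; treating two empty masks as perfect agreement is a convention chosen here, not one taken from [22].

```python
import numpy as np

def dice_coefficient(pred_mask, true_mask):
    """Dice Similarity Coefficient: 2 * |A and B| / (|A| + |B|) for binary masks."""
    pred = np.asarray(pred_mask, dtype=bool)
    true = np.asarray(true_mask, dtype=bool)
    total = pred.sum() + true.sum()
    if total == 0:
        return 1.0      # both masks empty: treated here as perfect agreement
    return float(2.0 * np.logical_and(pred, true).sum() / total)

# Made-up example: the prediction covers the true cell plus two extra pixels.
true_mask = np.zeros((4, 4), dtype=bool)
true_mask[1:3, 1:3] = True          # 4 foreground pixels
pred_mask = np.zeros((4, 4), dtype=bool)
pred_mask[1:3, 1:4] = True          # 6 foreground pixels, 4 of them shared
dsc = dice_coefficient(pred_mask, true_mask)
```

Averaging the DSC over all validation images, before and after fine-tuning, gives a direct check on whether fine-tuning improved segmentation.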

Data Presentation

Table 1: Quantitative Performance of Segmentation Models in Low-Data Regimes

The following table summarizes the performance of a generative segmentation framework (GenSeg) compared to established baselines, demonstrating its utility when training data is scarce. Data is expressed as Dice Similarity Coefficient (DSC) [23].

| Segmentation Task | Imaging Modality | Baseline Model (DSC) | GenSeg-Augmented Model (DSC) | Absolute DSC Gain |

|---|---|---|---|---|

| Placental Vessels | Fetoscopic | DeepLab: ~0.31 | GenSeg-DeepLab: ~0.51 | +0.206 |

| Skin Lesions | Dermoscopy | UNet: ~0.51 | GenSeg-UNet: ~0.66 | +0.150 |

| Polyps | Colonoscopy | DeepLab: ~0.52 | GenSeg-DeepLab: ~0.63 | +0.113 |

| Breast Cancer | Ultrasound | UNet: ~0.50 | GenSeg-UNet: ~0.62 | +0.126 |

Table 2: Essential Research Reagent Solutions for Automated AFM Biofilm Analysis

This table lists key materials and computational tools used in the featured experiments and the broader field [1] [22] [23].

| Item Name | Function in the Workflow | Specific Example / Note |

|---|---|---|

| PFOTS-treated Substrate | Creates a controlled hydrophobic surface for studying bacterial adhesion and early biofilm formation patterns [1]. | Used for studying Pantoea sp. YR343 [1]. |

| Segment Anything Model (SAM) | Foundation model for image segmentation that can be fine-tuned for specific tasks like segmenting bacterial cells from AFM images [22]. | Fine-tuning with parameter-efficient methods in both encoder and decoder is recommended [22]. |

| Generative Segmentation (GenSeg) Framework | A generative AI model that creates synthetic image-mask pairs to train accurate segmentation models in ultra low-data regimes [23]. | Can improve DSC by roughly 10-20 percentage points (absolute) with only 50-100 training samples [23]. |

| nnU-Net | A self-configuring framework for deep learning-based biomedical image segmentation that automatically adapts to new datasets [25]. | Forms the backbone of specialized tools like TotalSegmentator [25]. |

Workflow Visualization

Diagram: Automated AFM Image Processing with ML

The diagram below illustrates the integrated workflow of large-area AFM imaging, ML-powered stitching, and AI-driven segmentation for biofilm analysis.

Troubleshooting Guides

Common Quantification Errors and Solutions

Table 1: Troubleshooting Automated Feature Extraction

| Problem | Possible Cause | Solution |

|---|---|---|

| Inaccurate Cell Counts | (1) Poor image segmentation due to noise or an uneven background. (2) ML model confusion between cells and debris or flagella. [26] | (1) Pre-process images with filters to reduce noise. [27] (2) Retrain the ML classification model with a more diverse dataset that includes examples of flagella and debris. [26] [28] |

| Incorrect Confluency Measurement | (1) Inconsistent thresholding for distinguishing cells from background. (2) Failure to separate individual cells within dense clusters. | (1) Use a trainable, deep-learning-based segmentation tool (e.g., SINAP in IN Carta Software) that adapts to varying image contrast. [28] (2) Validate confluency results against a small, manually annotated area. |

| Faulty Orientation Data | (1) Tip artifacts from a contaminated or broken AFM probe, distorting cell shapes. [10] (2) Electrical or vibrational noise creating repetitive patterns in the image. [10] | (1) Inspect and replace the AFM probe with a new, clean one. [10] (2) Use a probe with a reflective cantilever coating to reduce laser interference, and image at quieter times to minimize environmental noise. [10] |

Addressing "False Feedback" During AFM Imaging

Q: My AFM images appear blurry and lack nanoscopic detail, leading to failed feature extraction. What is happening?

A: This is a common issue known as "false feedback," where the AFM's automated tip approach stops before the probe interacts with the sample's hard surface forces. This can be caused by:

- Surface Contamination Layer: A layer of contamination in ambient air can trap the probe. Solution: Increase the probe-surface interaction force by decreasing the setpoint value in vibrating mode or increasing it in non-vibrating mode to force the probe through the layer. [29]

- Surface/Cantilever Charge: Electrostatic forces can bend the cantilever or affect vibration amplitude. Solution: Create a conductive path between the cantilever and sample, or use a stiffer cantilever to reduce the effect. [29]

Frequently Asked Questions (FAQs)

Q1: Why is automated analysis like machine learning crucial for quantifying AFM biofilm data?

A: Conventional analysis of individual cell dimensions is labor-intensive and becomes a major bottleneck when characterizing biofilms, which can contain immense numbers of cells. [26] [15] Automated techniques are essential for efficiently extracting parameters like cell count, confluency, shape, and orientation across large datasets, enabling statistically powerful analysis of the entire biofilm community. [26] [27]

Q2: What are the best practices for validating an ML model for cell detection and classification?

A: The development and validation of a robust ML model should follow a strict workflow:

- Training: Use a large set of accurately annotated images ("ground truth") to train the algorithm. [27] [28]

- Validation: Tune the model using a separate validation dataset to optimize its performance. [27]

- Testing: Evaluate the final model's performance on an independent test dataset. Key performance metrics include Precision (Positive Predictive Value) and Recall (Sensitivity), which help quantify false positives and false negatives, respectively. [27]
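Precision and recall follow directly from the counts of true positives, false positives, and false negatives on the test set. The sketch below uses invented counts for a cell detector; how a detection is matched to an annotated cell (for example, by an overlap threshold) is left out and would need to be defined for a real evaluation.

```python
def precision_recall(true_positives, false_positives, false_negatives):
    """Return (precision, recall) from detection counts."""
    detected = true_positives + false_positives      # everything the model flagged
    actual = true_positives + false_negatives        # everything truly present
    precision = true_positives / detected if detected else 0.0
    recall = true_positives / actual if actual else 0.0
    return precision, recall

# Invented counts: 90 cells found correctly, 10 spurious detections
# (e.g., debris), and 30 annotated cells that were missed.
precision, recall = precision_recall(90, 10, 30)
print(f"precision={precision:.2f}, recall={recall:.2f}")
```

A detector that mistakes debris or flagella for cells loses precision, while one that merges cells in dense clusters loses recall, so reporting both helps locate the failure mode.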

Q3: Our automated system struggles to segment images of 3D organoids or low-contrast samples. How can this be improved?

A: Challenges with low contrast, uneven background, and complex structures are common. A deep-learning-based segmentation tool is particularly effective here. Unlike conventional fixed-parameter methods, these tools can be trained to account for significant variability in sample appearance, ensuring accurate and reliable object detection across different experimental conditions. [28]

Experimental Protocols for Validation

Protocol: Cohesive Energy Measurement via AFM Abrasion

This method provides quantitative, nanoscale data on biofilm mechanical properties, which can be correlated with structural features. [30]

- Sample Preparation: Grow a 1-day biofilm on a suitable substrate (e.g., a membrane). Equilibrate the moist biofilm in a controlled humidity chamber (~90%) to maintain consistent water content. [30]

- Baseline Imaging: Collect a non-perturbative topographic image of a defined region (e.g., 5x5 µm) at a low applied load (~0 nN). [30]

- Abrasion Phase: Zoom into a smaller sub-region (e.g., 2.5x2.5 µm) and perform repeated raster scans at an elevated load (e.g., 40 nN) to abrade the biofilm. [30]

- Post-Abrasion Imaging: Return to a low load and capture another image of the larger region to visualize the abraded area. [30]

- Data Analysis:

- Subtract the post-abrasion image from the baseline image to determine the volume of displaced biofilm.

- Calculate the frictional energy dissipated during abrasion from the friction force data.

- The cohesive energy (nJ/µm³) is calculated as the frictional energy dissipated divided by the volume of biofilm removed. [30]
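The final calculation can be sketched as below, assuming the two height maps are aligned, given in nanometers, and sampled on square pixels of known size. The frictional energy is taken as an input here because its derivation from the friction signal depends on the instrument calibration; all numbers are invented for illustration and are not values from [30].

```python
import numpy as np

def removed_volume_um3(baseline_nm, post_nm, pixel_size_nm):
    """Net volume removed, from the height difference of two aligned images."""
    diff_nm = (np.asarray(baseline_nm, dtype=float)
               - np.asarray(post_nm, dtype=float))
    volume_nm3 = diff_nm.sum() * pixel_size_nm ** 2
    return volume_nm3 * 1e-9                 # 1 cubic micrometer = 1e9 nm^3

def cohesive_energy(friction_energy_nj, volume_um3):
    """Cohesive energy in nJ per cubic micrometer."""
    if volume_um3 <= 0:
        raise ValueError("no net material was removed")
    return friction_energy_nj / volume_um3

# Invented example: a 4x4 image with 250 nm pixels; abrasion lowers the
# central 2x2 region from 100 nm to 60 nm.
baseline = np.full((4, 4), 100.0)
post = baseline.copy()
post[1:3, 1:3] = 60.0
volume = removed_volume_um3(baseline, post, pixel_size_nm=250.0)
energy_density = cohesive_energy(friction_energy_nj=0.001, volume_um3=volume)
```

Summing the signed height difference gives the net displaced volume; material piled up at the edges of the abraded region would reduce it, so restricting the sum to the abraded sub-region may be preferable in practice.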

Workflow: Large-Area AFM for Multiscale Analysis

This workflow enables the link between cellular/sub-cellular features and the macroscopic organization of a biofilm. [26] [15]

The Scientist's Toolkit

Table 2: Essential Research Reagents and Materials

| Item | Function in Experiment |

|---|---|

| Pantoea sp. YR343 | A gram-negative, rod-shaped model bacterium with peritrichous flagella, used for studying early-stage biofilm assembly and cellular orientation on surfaces. [26] [15] |

| PFOTS-treated Glass | A silane-based treatment used to create a controlled hydrophobic surface for studying bacterial adhesion and the formation of specific patterns like honeycomb structures. [26] [15] |

| High-Aspect-Ratio (HAR) AFM Probes | Conical probes with a high height-to-width ratio are superior for accurately resolving steep-edged features and deep trenches in structured biofilms or engineered surfaces without side-wall artifacts. [10] |

| Metallically-Coated AFM Probes | Probes with a reflective coating (e.g., gold or aluminum) prevent laser interference issues, which is critical when imaging highly reflective samples to avoid streaks and noise in the data. [10] |

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common data-related issues that cause poor model performance in biofilm classification?

The most common issues stem from the data itself and include corrupt, incomplete, or insufficient data [31]. A frequent problem is class imbalance, where the dataset is skewed towards one maturity class (e.g., too many "mature" images and not enough "early-attachment" images) [32] [31]. This can cause the model to become biased and perform poorly on the under-represented classes. Other prevalent issues are overfitting, where the model memorizes the training data too closely and fails on new images, and underfitting, where the model is too simple to capture the relevant patterns [32].
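One common way to counter class imbalance without discarding data is to weight each class inversely to its frequency during training. The sketch below is illustrative only: the class names and counts are invented, and the weighting formula (the same heuristic that scikit-learn calls "balanced" class weights) is one option among several.

```python
from collections import Counter

def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}

# Hypothetical, heavily skewed set of maturity labels.
labels = ["mature"] * 80 + ["intermediate"] * 15 + ["early-attachment"] * 5
weights = inverse_frequency_weights(labels)

for cls, weight in sorted(weights.items(), key=lambda item: item[1]):
    print(f"{cls:>17}: weight = {weight:.2f}")
```

Rare classes receive larger weights, so errors on them contribute more to the training loss.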

FAQ 2: My model performs well on training data but poorly on new AFM images. What is happening?

This is a classic sign of overfitting [32]: the model has learned patterns specific to the training set that do not generalize to new data. Remedies include reducing model complexity through dimensionality reduction or feature selection (e.g., PCA, univariate selection), using cross-validation during training so that the model is always evaluated on data it was not fitted to, and using data augmentation to artificially increase the size and diversity of the training dataset [31].
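As a rough illustration of data augmentation for AFM height maps, the sketch below generates the eight flip and rotation variants of a square image with NumPy. It assumes that the class label does not depend on image orientation; note that rotating an image also rotates scan-line artifacts, so this may not suit every dataset. The function name is hypothetical.

```python
import numpy as np

def augment_height_map(height_map):
    """Yield the 8 flip/rotation variants of a 2D height map."""
    for quarter_turns in range(4):
        rotated = np.rot90(height_map, quarter_turns)
        yield rotated
        yield np.fliplr(rotated)

# Stand-in for a real AFM height map.
rng = np.random.default_rng(0)
image = rng.normal(size=(32, 32))

variants = list(augment_height_map(image))
print(len(variants), variants[0].shape)
```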

FAQ 3: How can I assess the fairness and reliability of my biofilm classification model?

Responsible AI testing is crucial. You should perform fairness testing to ensure the model's outputs are consistent across different demographic groups if such metadata exists [32]. Techniques for bias detection and mitigation, such as reweighting or resampling data, can be applied. Furthermore, focus on model transparency using tools like SHAP or LIME to understand which features in an AFM image (e.g., specific topographic structures) are driving the classification decision [32].

FAQ 4: What should I do if my model's performance degrades after it has been deployed?

Performance degradation over time is often due to model drift, where the statistical properties of the incoming AFM image data change compared to the original training data [32]. Establishing a continuous monitoring system is essential to track performance metrics like accuracy and precision. If drift is detected, it will be necessary to retrain the model with new data that reflects the current conditions [32].
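A simple way to monitor for drift is to compare the distribution of a summary feature in incoming images against the training set. The sketch below computes the two-sample Kolmogorov-Smirnov statistic in plain Python. The choice of feature (per-image RMS roughness), the example values, and the alert threshold are all assumptions made for illustration, not recommendations from the cited sources.

```python
from bisect import bisect_right

def ks_statistic(reference, incoming):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between the empirical CDFs."""
    ref, new = sorted(reference), sorted(incoming)
    gap = 0.0
    for x in ref + new:
        cdf_ref = bisect_right(ref, x) / len(ref)
        cdf_new = bisect_right(new, x) / len(new)
        gap = max(gap, abs(cdf_ref - cdf_new))
    return gap

# Hypothetical per-image RMS roughness values (nm).
training_roughness = [10.2, 11.5, 9.8, 12.1, 10.9, 11.0, 10.4, 11.8]
incoming_roughness = [14.9, 15.3, 13.8, 16.0, 15.1, 14.2, 15.7, 14.5]

DRIFT_THRESHOLD = 0.5  # assumed value; tune on your own data
statistic = ks_statistic(training_roughness, incoming_roughness)
print(f"KS statistic = {statistic:.2f}, drift suspected: {statistic > DRIFT_THRESHOLD}")
```

A statistic near 0 means the two distributions overlap closely; a value near 1 means they barely overlap.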

Troubleshooting Guide

Common Problems and Solutions

| Problem | Possible Causes | Recommended Solutions |
|---|---|---|
| Low Accuracy on Test Set | Overfitting, Underfitting, Unbalanced Data, Incorrect Hyperparameters [32] [31] | 1. Use cross-validation for model selection [31]. 2. Balance the dataset via resampling or augmentation [31]. 3. Perform hyperparameter tuning (e.g., grid search) [31]. |
| Poor Generalization to New AFM Scans | Model Drift, Overfitting on Training Data, Inadequate Preprocessing [32] | 1. Implement ongoing performance monitoring and retrain the model periodically [32]. 2. Apply consistent image preprocessing (leveling, noise filtering) to all data [16]. 3. Use a hold-out test set from a different experimental batch for final validation. |
| Inconsistent Results Between Users | Observer Bias in Ground Truth, Lack of Standardized Protocols [3] [33] | 1. Establish a standardized, pre-labeled ground truth dataset for all users to benchmark against [3]. 2. Provide clear guidelines for AFM image acquisition to minimize technical variation [33]. |
| API/Deployment Errors | Incorrect Input Format, Payload Size Issues, Authentication Failures [32] | 1. Validate input data format and size before sending to the API [32]. 2. Test API endpoints for authentication, rate limiting, and error handling [32]. |

Performance Benchmarking

The following table summarizes key performance metrics from a published study on ML-based classification of staphylococcal biofilms, which can serve as a benchmark for your model's performance [3].

| Model / Evaluator | Mean Accuracy | Recall | Off-by-One Accuracy |
|---|---|---|---|
| Human Observers (Ground Truth) | 0.77 ± 0.18 | Not Specified | Not Specified |
| Machine Learning Algorithm | 0.66 ± 0.06 | Comparable to Human | 0.91 ± 0.05 |

Note: The "Off-by-One Accuracy" is a particularly useful metric for ordinal classification tasks like maturity staging, as it considers a prediction correct if it is within one class of the true label [3].
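To make the metric concrete, the sketch below computes exact and off-by-one accuracy for ordinal class labels. It assumes maturity classes are encoded as consecutive integers (0 to 5 for six classes); the labels shown are invented for illustration.

```python
def ordinal_accuracy(true_classes, predicted_classes, tolerance=0):
    """Fraction of predictions within `tolerance` classes of the true label."""
    if len(true_classes) != len(predicted_classes):
        raise ValueError("label lists must have the same length")
    hits = sum(
        abs(true - pred) <= tolerance
        for true, pred in zip(true_classes, predicted_classes)
    )
    return hits / len(true_classes)

# Hypothetical maturity stages (0 = earliest, 5 = most mature).
true_stage = [0, 1, 2, 3, 4, 5, 2, 3]
pred_stage = [0, 2, 2, 5, 4, 4, 1, 3]

exact = ordinal_accuracy(true_stage, pred_stage)
off_by_one = ordinal_accuracy(true_stage, pred_stage, tolerance=1)
print(f"exact accuracy = {exact:.3f}, off-by-one accuracy = {off_by_one:.3f}")
```

Here four of eight predictions are exactly right, while seven of eight fall within one class of the true stage.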

Experimental Protocols

Protocol 1: Standardized AFM Image Acquisition for ML

This protocol is adapted from methods used to generate consistent, high-quality training data [33] [1].

Sample Preparation:

- Grow Staphylococcus aureus biofilms in Brain-Heart Infusion (BHI) broth, which has been found more effective than Trypticase Soy Broth for biofilm formation [33].

- For enhanced biofilm yield, use a supplement mix consisting of 222.2 mM glucose, 116.9 mM sucrose, and 1000 mM NaCl [33].

- Incubate cultures statically at 37°C for varying durations to capture different maturity stages.

AFM Imaging:

- Gently rinse the biofilm sample to remove unattached planktonic cells and air-dry before imaging [1].

- Use an automated large-area AFM system if available to capture high-resolution images over millimeter-scale areas, which helps account for spatial heterogeneity [1].

- Acquire multiple images from different locations and biological replicates for each maturity stage.

Protocol 2: Data Preprocessing and ML Model Training

Image Preprocessing:

- Leveling/Flattening: Correct for unevenness caused by the AFM scanning process using plane fitting algorithms [16].

- Noise Filtering: Apply spatial filters (e.g., low-pass or median filters) to eliminate high-frequency noise unrelated to the sample's topography [16].

- Lateral Calibration: Use reference surfaces to correct for image distortions [16].
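The first two preprocessing steps can be sketched with NumPy alone. This is an illustrative implementation under simple assumptions (a first-order plane fit over the whole image and a 3x3 median window), not the procedure of any particular AFM software package; lateral calibration depends on reference samples and is not shown. Function names are hypothetical.

```python
import numpy as np

def level_plane(height_map):
    """Subtract the least-squares plane z = a*x + b*y + c from a height map."""
    rows, cols = height_map.shape
    y, x = np.mgrid[0:rows, 0:cols]
    design = np.column_stack([x.ravel(), y.ravel(), np.ones(x.size)])
    coeffs, *_ = np.linalg.lstsq(design, height_map.ravel(), rcond=None)
    return height_map - (design @ coeffs).reshape(rows, cols)

def median_filter_3x3(height_map):
    """Apply a 3x3 median filter, replicating edge pixels at the border."""
    padded = np.pad(height_map, 1, mode="edge")
    rows, cols = height_map.shape
    windows = [padded[i:i + rows, j:j + cols] for i in range(3) for j in range(3)]
    return np.median(np.stack(windows), axis=0)

# Synthetic example: a tilted, otherwise flat surface with one noise spike.
yy, xx = np.mgrid[0:32, 0:32]
raw = 0.5 * xx + 0.2 * yy + 3.0
raw[10, 10] += 100.0

cleaned = median_filter_3x3(level_plane(raw))
print(f"height range before: {np.ptp(raw):.1f}, after: {np.ptp(cleaned):.3f}")
```

A whole-image plane fit can be biased by tall features; masking cells out of the fit, or fitting line by line, are common refinements.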

Model Training and Evaluation:

- Feature Selection: Use algorithms like Principal Component Analysis (PCA) or Random Forest-based feature importance to reduce dimensionality and select the most informative features [31].

- Model Selection: Try different algorithms (e.g., convolutional neural networks for image data) and use a validation set for initial comparison [31].

- Cross-Validation: Implement k-fold cross-validation to obtain a robust estimate of model performance and to detect overfitting early [31].

- Hyperparameter Tuning: Optimize model-specific parameters (e.g., learning rate, number of layers) using techniques like grid search [31].
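The cross-validation step relies on splitting the data into folds that each contain a similar mix of classes. The sketch below shows one way to build such stratified folds with the standard library only. It is a simplified stand-in for library routines, and the function name and label counts are hypothetical.

```python
import random
from collections import defaultdict

def stratified_k_fold(labels, k=5, seed=0):
    """Yield (train_indices, validation_indices) with class proportions preserved."""
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    rng = random.Random(seed)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal each class's images round-robin across the folds.
        for position, index in enumerate(indices):
            folds[position % k].append(index)

    for i in range(k):
        validation = sorted(folds[i])
        training = sorted(idx for j, fold in enumerate(folds) if j != i for idx in fold)
        yield training, validation

# Hypothetical dataset: 20 images of class 0 and 10 images of class 1.
labels = [0] * 20 + [1] * 10
splits = list(stratified_k_fold(labels, k=5))
for fold_number, (training, validation) in enumerate(splits):
    print(fold_number, len(training), len(validation))
```

For a grid search, each candidate hyperparameter setting would be trained and scored on every fold, and the setting with the best mean validation score retained.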

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function in Experiment |
|---|---|
| Brain-Heart Infusion (BHI) Broth | A growth medium found to be more effective than Trypticase Soy Broth for maximizing in vitro biofilm formation by clinical isolates of S. aureus [33]. |
| Supplement Mix (Glucose, Sucrose, NaCl) | A solution of 222.2 mM glucose, 116.9 mM sucrose, and 1000 mM NaCl used to significantly increase biofilm biomass yield in TCP assays [33]. |
| PFOTS-treated Glass Coverslips | A surface treatment used to study the initial attachment and assembly dynamics of bacterial biofilms (e.g., for Pantoea sp.) under AFM [1]. |
| Crystal Violet Stain | A dye used in the Tissue Culture Plate (TCP) method to stain adhered biofilm biomass, which is then eluted and quantified spectrophotometrically [33]. |
| Open Access Desktop Classification Tool | A machine learning algorithm designed specifically to classify AFM images of staphylococcal biofilms into one of six maturity classes, available for researcher use [3]. |

Workflow and Troubleshooting Diagrams

[Diagram: Biofilm ML Classification Workflow]

[Diagram: ML Troubleshooting Logic]

Frequently Asked Questions

FAQ: My AFM images are not representative of the overall biofilm structure. How can I improve this?

Conventional AFM has a limited scan range (typically <100 µm), which can miss the spatial heterogeneity of millimeter-scale biofilms [1]. To address this, implement a large-area automated AFM approach. This method automates the collection of multiple high-resolution images across millimeter-scale areas and uses machine learning-based algorithms to stitch them into a seamless, comprehensive image [1].

FAQ: How can I efficiently analyze the large datasets generated by large-area AFM?

Manual analysis of large-area AFM data is impractical. Leverage machine learning-based image segmentation and analysis tools [1]. These can automate the extraction of quantitative parameters such as:

- Cell count and confluency

- Cellular morphology (e.g., length, diameter)

- Cellular orientation and spatial distribution [1]
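Once a segmentation step has produced a labelled mask, the parameters listed above follow from basic array operations. The sketch below is a simplified illustration that assumes a mask in which 0 is background and each cell carries its own integer label; the segmentation itself is not shown, and the function name and toy mask are hypothetical.

```python
import numpy as np

def cell_metrics(label_mask, pixel_size_um):
    """Summarise a labelled mask (0 = background, 1..N = individual cells)."""
    confluency = np.count_nonzero(label_mask) / label_mask.size
    cells = []
    for cell_id in np.unique(label_mask):
        if cell_id == 0:
            continue
        ys, xs = np.nonzero(label_mask == cell_id)
        if xs.size < 2:
            continue  # assumption: single-pixel objects are noise and are skipped
        coords = np.vstack([xs, ys]).astype(float)
        # Principal axes of the pixel cloud: eigenvectors of its covariance
        # (eigh returns eigenvalues in ascending order, so column 1 is the major axis).
        _, eigvecs = np.linalg.eigh(np.cov(coords))
        major, minor = eigvecs[:, 1], eigvecs[:, 0]
        along, across = major @ coords, minor @ coords
        # Extent along each axis; +1 so that a 10-pixel rod measures 10 pixels
        # (only approximate for cells lying diagonally on the grid).
        length_um = (along.max() - along.min() + 1) * pixel_size_um
        width_um = (across.max() - across.min() + 1) * pixel_size_um
        # Angle of the major axis in image coordinates (y increases downward).
        angle_deg = np.degrees(np.arctan2(major[1], major[0])) % 180.0
        cells.append({"id": int(cell_id), "length_um": float(length_um),
                      "width_um": float(width_um), "angle_deg": float(angle_deg)})
    return {"count": len(cells), "confluency": float(confluency), "cells": cells}

# Toy mask: one horizontal and one vertical rod, each 10 pixels long.
mask = np.zeros((20, 20), dtype=int)
mask[5, 2:12] = 1
mask[8:18, 15] = 2

result = cell_metrics(mask, pixel_size_um=0.1)
print(result["count"], result["confluency"])
for cell in result["cells"]:
    print(cell)
```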

FAQ: My machine learning model performs well on planktonic cell data but fails to predict antibiotic susceptibility in biofilms. Why?

Biofilms possess distinct tolerance mechanisms that are not present in planktonic cells [34]. Conventional Antibiotic Susceptibility Tests (ASTs) and models trained on their data often fail because they do not account for the biofilm phenotype [34]. Ensure your training data comes from biofilm-specific susceptibility tests, such as the Biofilm Prevention Concentration (BPC) assay, which determines the lowest antibiotic concentration that prevents at least 90% of biofilm growth [34].

FAQ: What analytical techniques are best for predicting biofilm susceptibility?

Several techniques can provide machine learning-ready data. The best choice depends on whether you are predicting the Minimal Inhibitory Concentration (MIC, planktonic susceptibility) or the BPC (biofilm susceptibility) [34].

- For MIC prediction: MALDI-TOF MS showed the highest accuracy (97.83% within ±1 dilution) [34].

- For BPC prediction: Multi-excitation Raman spectroscopy showed the best performance (80.43% within ±1 dilution) [34].

Whole-Genome Sequencing (WGS) and Isothermal Microcalorimetry (IMC) also show potential for predicting biofilm susceptibility, with BPC accuracies of 76.09% and 73.91%, respectively [34].

FAQ: How can I validate my simulated AFM images?

Use dedicated software such as BioAFMviewer to simulate AFM scanning of known protein structures from the Protein Data Bank (PDB) [35]. You can then compare the simulated images directly with your experimental high-speed AFM (HS-AFM) snapshots. This helps in interpreting resolution-limited images and in confirming the orientation and conformation of your sample [35].

Experimental Protocols

Protocol 1: Large-Area AFM for Early Biofilm Assembly Analysis [1]

This protocol details the use of automated large-area AFM to study the initial stages of biofilm formation with high resolution.

- Surface Preparation: Treat glass coverslips with PFOTS to create a hydrophobic surface.

- Biofilm Growth: Inoculate a petri dish containing the treated coverslips with a liquid culture of your bacterial strain (e.g., Pantoea sp. YR343).

- Sample Harvesting: At selected time points (e.g., 30 minutes for initial attachment), remove a coverslip and gently rinse it with deionized water to remove non-adherent cells.

- Drying: Air-dry the sample before AFM imaging.

- Automated AFM Imaging:

  - Mount the sample on the AFM stage.

  - Use automated software to define a large, millimeter-scale scanning area.

  - The system will automatically acquire multiple contiguous high-resolution images with minimal overlap.

- Image Processing:

  - Use machine learning-based stitching algorithms to create a seamless composite image.

  - Apply ML-based segmentation for automated cell detection, classification, and morphological analysis.
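To give a sense of scale for the automated imaging step, the sketch below plans the stage offsets for a square grid of overlapping tiles. It is a hypothetical planning helper, not the control software described in [1]; the tile size, overlap, and area are example values.

```python
import math

def plan_tile_grid(area_um, tile_um, overlap_um):
    """Return (x, y) stage offsets in um for square tiles covering a square area."""
    if not 0 <= overlap_um < tile_um:
        raise ValueError("overlap must be non-negative and smaller than the tile size")
    step = tile_um - overlap_um
    # Tiles per side so that the last tile reaches the far edge of the area.
    per_side = max(1, math.ceil((area_um - tile_um) / step) + 1)
    return [(col * step, row * step) for row in range(per_side) for col in range(per_side)]

# Example: a 1 mm x 1 mm area imaged with 100 um tiles overlapping by 10 um.
offsets = plan_tile_grid(area_um=1000, tile_um=100, overlap_um=10)
print(f"{len(offsets)} tiles, last tile starts at {offsets[-1]} um")
```

Covering a single square millimeter already requires more than a hundred scans in this example, which is why automated acquisition and stitching are needed.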

Protocol 2: Predicting Tobramycin Susceptibility in P. aeruginosa Biofilms using Machine Learning [34]

This protocol outlines a workflow for building a model to predict antibiotic susceptibility in biofilms.

- Strain Preparation: Use a collection of experimentally evolved and clinical P. aeruginosa isolates.

- Data Acquisition: For each strain, perform the following:

  - Whole-Genome Sequencing (WGS): Sequence genomes and map variants against a wild-type reference.

  - MALDI-TOF MS: Acquire proteomic fingerprints.

  - Isothermal Microcalorimetry (IMC): Measure metabolic heat flow in the presence of tobramycin.

  - Multi-Excitation Raman Spectroscopy (MX-Raman): Obtain biochemical fingerprints using multiple laser wavelengths.

- Susceptibility Testing:

  - Determine the Minimal Inhibitory Concentration (MIC) using standard broth microdilution for planktonic cells.

  - Determine the Biofilm Prevention Concentration (BPC) in a physiologically relevant medium (e.g., SCFM2). The BPC is the lowest antibiotic concentration that prevents ≥90% of biofilm growth.

- Machine Learning Model Training:

  - Train an ordinal regression model using the data from each technique (WGS, MALDI-TOF, IMC, Raman) to predict the MIC and BPC values.

  - Validate the model's performance on a held-out set of clinical isolates.
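The BPC definition in the susceptibility-testing step translates directly into a lookup over a dilution series. The sketch below is illustrative only: the concentrations, growth readouts, and the normalisation against an antibiotic-free control are assumptions, and the function name is hypothetical.

```python
def biofilm_prevention_concentration(concentrations, growth, control_growth, threshold=0.90):
    """Return the lowest concentration that reduces biofilm growth by >= threshold.

    Growth values are compared with an antibiotic-free control. Returns None
    if no tested concentration reaches the threshold.
    """
    for concentration, value in sorted(zip(concentrations, growth)):
        inhibition = 1.0 - value / control_growth
        if inhibition >= threshold:
            return concentration
    return None

# Hypothetical two-fold dilution series (ug/mL) and biofilm readouts (arbitrary units).
tested = [0.5, 1, 2, 4, 8, 16, 32]
readout = [0.95, 0.80, 0.55, 0.30, 0.08, 0.03, 0.02]

bpc = biofilm_prevention_concentration(tested, readout, control_growth=1.0)
print(f"BPC = {bpc} ug/mL")
```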

Data Presentation

Table 1: Performance of Machine Learning Models in Predicting Tobramycin Susceptibility [34]

| Analytical Technique | Data Type | MIC Prediction Accuracy (±1 dilution) | BPC Prediction Accuracy (±1 dilution) |
|---|---|---|---|
| MALDI-TOF MS | Proteomic Fingerprint | 97.83% | 73.91% |
| Multi-Excitation Raman | Biochemical Fingerprint | 89.13% | 80.43% |
| Whole-Genome Sequencing | Genomic Variants | 89.13% | 76.09% |
| Isothermal Microcalorimetry | Metabolic Activity | 89.13% | 73.91% |

Accuracy (±1 dilution) is the percentage of samples for which the predicted MIC or BPC was within one two-fold dilution step of the measured value.
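Because dilution series are two-fold, "within one dilution step" means the predicted and measured concentrations differ by at most a factor of two, which is easiest to check on a log2 scale. The sketch below illustrates the calculation with invented values; it is not taken from the cited study.

```python
import math

def accuracy_within_one_dilution(measured, predicted):
    """Fraction of predictions within one two-fold dilution step of the measured value."""
    if len(measured) != len(predicted):
        raise ValueError("value lists must have the same length")
    hits = 0
    for true_value, predicted_value in zip(measured, predicted):
        steps = abs(math.log2(predicted_value) - math.log2(true_value))
        hits += steps <= 1.0 + 1e-9  # small tolerance for floating-point rounding
    return hits / len(measured)

# Hypothetical measured and predicted concentrations (ug/mL).
measured_bpc = [1, 2, 4, 8, 16]
predicted_bpc = [2, 2, 16, 4, 16]

score = accuracy_within_one_dilution(measured_bpc, predicted_bpc)
print(f"accuracy within one dilution = {score:.2f}")
```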