Navigating the Low Biomass Challenge: A Researcher's Guide to Robust 16S rRNA Gene Sequencing

Abstract

Accurate 16S rRNA gene sequencing of low biomass samples is critical for exploring microbiomes in environments like the respiratory tract, tissues, and clinical specimens, but it is fraught with challenges including contamination and stochastic variation. This article provides a comprehensive framework for researchers and drug development professionals to overcome these hurdles. Drawing on the latest evidence, we cover foundational principles, optimized methodological protocols, advanced troubleshooting strategies, and rigorous validation techniques. The guide synthesizes key insights on biomass thresholds, contamination control, DNA extraction optimization, and bioinformatic denoising to ensure the generation of reliable, reproducible, and interpretable data from low biomass studies.

Understanding the Low Biomass Problem: Limits, Contamination, and Impacts on Data

FAQ: What Exactly is a "Low-Biomass" Environment?

In microbiome research, a low-biomass environment contains minimal amounts of microbial DNA, placing it near the limits of detection for standard DNA-based sequencing methods. In these environments, the target DNA signal can be easily overwhelmed by contaminant "noise" [1].

While some definitions classify low biomass quantitatively (e.g., below 10,000 microbial cells/mL), it is often more effective to consider biomass as a continuum. The technical challenges and risk of contamination become increasingly pronounced as the amount of native microbial DNA decreases [2]. The key characteristic is that even small amounts of contaminating DNA can disproportionately influence study results and their interpretation [1].

The table below summarizes key low-biomass environments frequently studied.

Table 1: Key Low-Biomass Environments in Microbiome Research

| Environment Category | Specific Examples | Key Characteristics & Challenges |
|---|---|---|
| Human Tissues & Fluids | Respiratory tract (e.g., nasopharynx), fetal tissues, blood, placenta, breastmilk, certain tumors [1] [3] [2] | Often dominated by host DNA; collection often invasive and requires stringent control for skin and reagent contaminants [2]. |
| Built Environments | Cleanrooms (e.g., spacecraft assembly facilities), hospital operating rooms, metal surfaces [1] [4] | Ultra-low biomass; requires specialized sampling and extensive process controls to distinguish environmental signal from "kitome" contamination [4]. |
| Natural Environments | Hyper-arid soils, deep subsurface, ice cores, treated drinking water, the atmosphere [1] | Native microbial communities are sparse and stressed; potential for contamination from drilling fluids, air, or sampling equipment is high [1]. |

FAQ: Why Do My Low-Biomass Samples Show High Levels of Unclassified Bacteria or Unusual Taxa?

This is a common problem often linked to contamination, low sequence quality, or suboptimal bioinformatics parameters.

- Dominance of Contaminants: In low-biomass samples, contaminating DNA from reagents, kits ("kitome"), or the laboratory environment can constitute a large portion of your sequencing data. These contaminants may include taxa not typically expected in your sample, such as Aquificae or Thermotogae, which are known kit contaminants [4] [5].

- Bioinformatics Pipeline Issues: Using inappropriate parameters during sequence analysis can reduce taxonomic resolution. For example, using an open-reference clustering method with a low percent-identity threshold (e.g., 85%) can drastically reduce your ability to classify sequences accurately. It is generally recommended to skip this clustering step and classify sequences directly using a classifier like `classify-sklearn` in QIIME 2 for better results [6].

- Low Library Complexity: Samples with very low microbial DNA input can generate sequencing libraries of low complexity, which hinders the classification process. There is no single standard method to automatically filter these, but analyzing high- and low-biomass samples separately can sometimes prevent the latter from skewing overall results [6].

FAQ: How Does Low Biomass Experimentation Differ from High Biomass?

The core difference lies in the proportional impact of contamination and technical variation. Practices suitable for high-biomass samples (like human stool) can produce misleading results when applied to low-biomass contexts [1].

Table 2: Key Differences Between High- and Low-Biomass Microbiome Studies

| Aspect | High-Biomass Samples (e.g., Stool, Soil) | Low-Biomass Samples (e.g., Nasopharynx, Tissue) |
|---|---|---|
| Contamination | Minor concern; target signal is much larger than contaminant noise [1]. | Primary concern; contaminant noise can rival or exceed the target signal, requiring rigorous controls [1] [2]. |
| Technical Variation | Lower impact on overall community profile [7]. | High impact; low biomass leads to greater variability and less reproducibility between technical replicates [8] [7]. |
| Experimental Focus | Discovering dominant community members and structure. | Distinguishing true signal from noise; validating the presence of rare taxa. |
| DNA Yield | High; relatively easy to detect. | Very low; approaches the detection limit of standard methods [1] [3]. |
| Bioinformatics | Standard pipelines are often sufficient. | Requires specialized decontamination steps and careful parameter tuning [8] [6]. |

Quantitative data shows that input biomass directly impacts data reliability. One study using a dilution series of a mock community found that estimates of relative abundance became highly unreliable below approximately 100 copies of the 16S rRNA gene per microliter [7]. Furthermore, the coefficient of variation (CV) for measuring bacterial genera increases dramatically as their relative abundance drops below 1%, a common scenario in low-biomass samples [7].
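To make the replicate-variability point concrete, the coefficient of variation can be computed directly from technical replicates. The sketch below is a minimal Python illustration; the genus labels and abundance values are invented for demonstration and are not data from [7].

```python
from statistics import mean, stdev


def coefficient_of_variation(values):
    """Return the CV (sample standard deviation / mean) of replicate values."""
    m = mean(values)
    if m == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return stdev(values) / m


# Hypothetical relative abundances (as fractions) of two genera measured
# in three technical replicates of the same low-biomass sample.
replicates = {
    "abundant_genus": [0.30, 0.32, 0.28],   # around 30% of reads
    "rare_genus": [0.004, 0.009, 0.002],    # below 1% of reads
}

for genus, abundances in replicates.items():
    cv = coefficient_of_variation(abundances)
    print(f"{genus}: mean={mean(abundances):.4f}, CV={cv:.2f}")
```

In this toy input the rare genus has a much larger CV than the abundant one, which is the pattern the dilution study describes for genera below 1% relative abundance.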

Experimental Protocol: A Rigorous Workflow for 16S rRNA Gene Sequencing of Low-Biomass Samples

The following protocol, synthesizing best practices from recent literature, is designed for processing respiratory (e.g., nasopharyngeal) or tissue biopsy samples [3] [8].

Step 1: Sample Collection & Nucleic Acid Extraction

Goal: Minimize contamination introduction during sample acquisition and DNA isolation.

- Collection: Use single-use, DNA-free collection vessels and swabs. Personnel should wear appropriate personal protective equipment (PPE) including gloves, masks, and clean lab coats to reduce contamination from skin and aerosols [1].

- Storage: Preserve samples in a suitable DNA-stabilizing buffer. PrimeStore Molecular Transport Medium has been shown to yield lower levels of background OTUs compared to other buffers like STGG [8].

- Extraction: Choose a kit validated for low-biomass samples. The NAxtra nucleic acid extraction protocol, which uses magnetic nanoparticles, has been piloted for respiratory samples and can be automated for high-throughput processing, completing extraction for 96 samples in approximately 14 minutes [3]. Alternatively, the DSP Virus/Pathogen Mini Kit (Kit-QS) has been shown to better represent hard-to-lyse bacteria compared to other kits like the ZymoBIOMICS DNA Miniprep Kit (Kit-ZB) [8].

- Elution: Elute DNA in a small volume (e.g., 50-80 µL) to increase DNA concentration for downstream steps [3].

Step 2: Library Preparation with Controls

Goal: Generate sequencing libraries while tracking and controlling for contaminants.

- PCR Amplification: Amplify the target 16S rRNA gene region (e.g., V1-V2 or V3-V4). For low-biomass samples, evidence suggests that conducting a single PCR reaction per sample (as opposed to pooling multiple PCR replicates) does not significantly impact outcomes like alpha and beta diversity, thereby saving time and resources [5].

- Mastermix: Using a premixed mastermix (vs. manually prepared) is acceptable and does not introduce significant bias, simplifying liquid handling [5].

- Inclusion of Controls: This is non-negotiable. Include the following in every run [1] [8] [2]:

- Negative Controls: No-template controls (NTCs, e.g., water), extraction blanks, and collection kit blanks.

- Positive Controls: A mock microbial community with a known composition (e.g., ZymoBIOMICS Microbial Community DNA Standard). This controls for amplification efficiency and bioinformatics accuracy.

- Technical Repeats: Process a subset of samples in duplicate or triplicate to assess reproducibility.

Step 3: Sequencing & Bioinformatics Analysis

Goal: Generate and analyze sequence data to distinguish biological signal from noise.

- Sequencing: Sequence on an Illumina MiSeq or similar platform. A sequencing depth of 50,000 reads per sample may be sufficient for low-biomass respiratory samples [3].

- Bioinformatics Processing: Use a standardized pipeline like QIIME 2. Key steps include:

- Denoising: Use DADA2 to infer amplicon sequence variants (ASVs) [3].

- Taxonomic Classification: Classify ASVs using a classifier (e.g., `classify-sklearn` in QIIME 2) against a reference database (e.g., SILVA) [6]. Avoid open-reference clustering with low identity thresholds, as this reduces taxonomic resolution [6].

- Decontamination: Use statistical packages like `decontam` (R) to identify and remove contaminants based on their prevalence in negative controls or their inverse correlation with DNA concentration [8]. Simply subtracting taxa found in NTCs is not recommended, as it can remove true biological sequences that have spilled over into controls via well-to-well contamination [8].
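The prevalence idea behind the decontamination step can be sketched in a few lines of Python. This is not the `decontam` implementation. It is a simplified stand-in that assumes one presence/absence call per library and uses a one-sided Fisher's exact test to ask whether a taxon is detected in a larger share of negative controls than of true samples. The function names, example taxa, and the 0.05 cutoff are illustrative assumptions.

```python
from math import comb


def fisher_exact_greater(a, b, c, d):
    """One-sided Fisher's exact p-value for the 2x2 table [[a, b], [c, d]].

    Row 1 = negative controls (present, absent); row 2 = true samples.
    Small p-values mean the taxon is over-represented in the controls.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    total = comb(row1 + row2, col1)
    p = 0.0
    for k in range(a, min(row1, col1) + 1):
        p += comb(row1, k) * comb(row2, col1 - k) / total
    return p


def flag_contaminants(presence, is_control, alpha=0.05):
    """Return taxa detected significantly more often in controls than in samples."""
    flagged = []
    for taxon, calls in presence.items():
        pairs = list(zip(calls, is_control))
        a = sum(1 for hit, ctrl in pairs if ctrl and hit)
        b = sum(1 for hit, ctrl in pairs if ctrl and not hit)
        c = sum(1 for hit, ctrl in pairs if not ctrl and hit)
        d = sum(1 for hit, ctrl in pairs if not ctrl and not hit)
        if fisher_exact_greater(a, b, c, d) < alpha:
            flagged.append(taxon)
    return flagged


# Hypothetical run: 4 negative controls followed by 8 true samples.
is_control = [True] * 4 + [False] * 8
presence = {
    "Ralstonia": [True] * 4 + [True] + [False] * 7,   # in all controls, 1 sample
    "Streptococcus": [False] * 4 + [True] * 8,        # in no controls, all samples
}

print(flag_contaminants(presence, is_control))
```

Unlike simple subtraction, a taxon that shows up in one control through well-to-well spillover but is present in most true samples would not be flagged by this kind of test.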

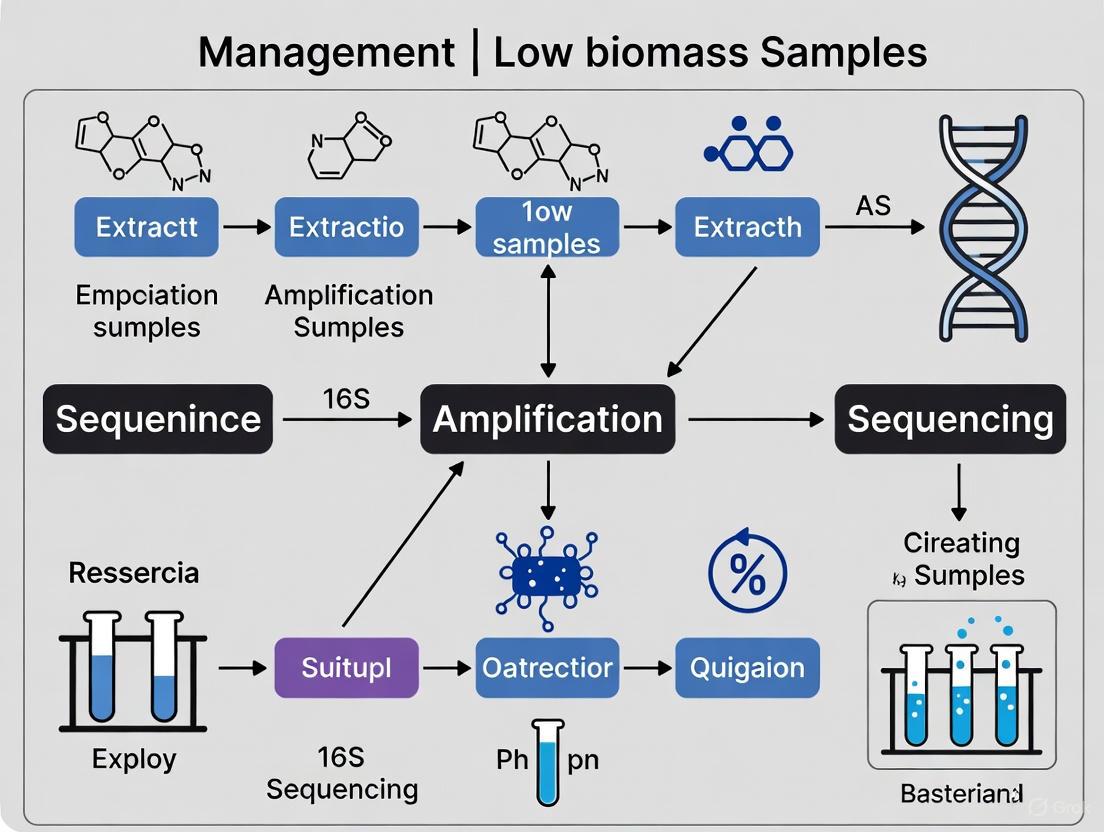

The following diagram visualizes the core workflow and the critical control points integrated at each stage.

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Key Research Reagent Solutions for Low-Biomass Studies

| Item | Function & Importance | Examples & Notes |
|---|---|---|
| DNA Decontamination Reagents | To remove contaminating DNA from surfaces and reusable equipment prior to sampling. Critical for reducing background noise [1]. | Sodium hypochlorite (bleach), UV-C light, hydrogen peroxide, DNA removal solutions. Note: Autoclaving and ethanol kill cells but may not remove persistent DNA [1]. |
| DNA-Free Collection Consumables | To collect samples without adding contaminating DNA. | Single-use, pre-sterilized swabs, collection tubes, and suction devices (e.g., SALSA sampler for surfaces) [1] [4]. |
| Nucleic Acid Extraction Kits | To isolate maximal microbial DNA from a minimal starting biomass. | Kits optimized for low biomass: NAxtra kit (magnetic nanoparticles), DSP Virus/Pathogen Mini Kit (Kit-QS) [3] [8]. |
| Mock Microbial Community | A positive control containing known microbes. Validates the entire workflow from extraction to sequencing [8] [5]. | ZymoBIOMICS Microbial Community DNA Standard; helps identify kit-specific contaminants ("kitome") and PCR biases [3] [5]. |
| Premixed PCR Mastermix | A consistent, ready-to-use reagent for amplification. Reduces liquid handling errors and contamination risk [5]. | Q5 Hot Start High-Fidelity 2× Mastermix; shown to perform equivalently to manually prepared mastermix for 16S rRNA gene sequencing [5]. |

FAQ: How Can I Visually Assess and Troubleshoot Contamination in My Data?

Effective troubleshooting involves both visual data exploration and statistical tests.

- Examine Control Samples in Ordination Plots: Plot your beta diversity (e.g., PCoA based on Bray-Curtis dissimilarity). If your negative controls (NTCs, blanks) cluster closely with or within your low-biomass experimental samples, it indicates that contamination is heavily influencing your experimental samples [8] [7].

- Check the Abundance of Known Contaminants: Review the taxonomic composition of your controls. Common reagent contaminants include Cutibacterium acnes, Pseudomonas, Acinetobacter, and Ralstonia [4] [5]. If these taxa are abundant in your controls and also appear in your experimental samples, they are likely contaminants.

- Use Prevalence-Based Decontamination: Employ the `decontam` package in R with the "prevalence" method. This method identifies taxa that are significantly more prevalent in negative controls than in true samples, providing a statistically robust way to flag contaminants for removal [8].

- Assess Biomass Correlation: For samples with quantified 16S copy numbers (e.g., via qPCR), the `decontam` "frequency" method can identify contaminants based on their inverse correlation with total biomass [8].
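The biomass-correlation logic can be illustrated with a small Python sketch. It is a simplified analogue, not the `decontam` algorithm: it assumes a contaminant's relative abundance falls in inverse proportion to total 16S concentration (slope of -1 on a log-log scale), while a genuine taxon's relative abundance is roughly independent of concentration (slope of 0). The scoring function, its name, and the example values are assumptions made for illustration.

```python
from math import log
from statistics import mean


def contaminant_fit_score(frequencies, concentrations):
    """Compare two fixed-slope models in log-log space.

    Returns a value in [0, 1]. Values near 0 mean the inverse
    (contaminant-like) model fits better; values near 1 mean the
    flat (sample-like) model fits better. Frequencies must be > 0.
    """
    log_f = [log(f) for f in frequencies]
    log_c = [log(c) for c in concentrations]

    # Flat model: log(frequency) = constant.
    flat_mean = mean(log_f)
    ss_flat = sum((y - flat_mean) ** 2 for y in log_f)

    # Inverse model: log(frequency) = constant - log(concentration).
    shifted = [y + x for y, x in zip(log_f, log_c)]
    inverse_mean = mean(shifted)
    ss_inverse = sum((s - inverse_mean) ** 2 for s in shifted)

    if ss_flat + ss_inverse == 0:
        return 0.5  # both models fit perfectly; no evidence either way
    return ss_inverse / (ss_flat + ss_inverse)


# Hypothetical qPCR-derived 16S concentrations (copies/µL) for four libraries.
concentrations = [1.0, 10.0, 100.0, 1000.0]
contaminant_like = [0.5, 0.05, 0.005, 0.0005]   # drops as biomass rises
sample_like = [0.20, 0.22, 0.19, 0.21]          # stable across biomass

print(contaminant_fit_score(contaminant_like, concentrations))
print(contaminant_fit_score(sample_like, concentrations))
```

A real analysis would need many more libraries than four and should rely on the published package; the sketch only shows why quantified 16S copy numbers are a prerequisite for this method.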

This technical support center provides guidance for researchers working with low microbial biomass samples, where the total bacterial cell count is near or below the detection limits of standard protocols. A primary challenge in this field is establishing a critical biomass threshold—the minimum number of bacterial cells required to generate robust, reproducible, and accurate 16S rRNA gene sequencing data that reflects the true biological signal and is not overwhelmed by technical noise and contamination [9].

What is the Critical Biomass Threshold?

Experimental evidence indicates that this threshold is approximately 10^6 bacterial cells [9]. Samples with biomass below this level consistently lose compositional accuracy and show significantly reduced reproducibility in duplicate or triplicate processing [10] [9].

Why is this Threshold Critical?

In low biomass conditions, the absolute amount of target microbial DNA is vanishingly small. Consequently, even trace amounts of contaminating DNA from reagents, kits, or the laboratory environment can constitute a large proportion of the total sequenced DNA, leading to spurious results [1] [10]. Adhering to this validated threshold is therefore essential for producing credible data.
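The proportional argument is simple arithmetic: if a fixed amount of contaminant DNA enters every reaction, its share of the total grows as the sample input shrinks. The Python sketch below works through that calculation. The background of 1,000 contaminant 16S copies is a made-up figure chosen only to show the trend; it is not a measured value from the cited studies.

```python
def contaminant_fraction(sample_copies, contaminant_copies):
    """Fraction of total 16S copies in a reaction that come from contaminants."""
    total = sample_copies + contaminant_copies
    if total <= 0:
        raise ValueError("total copies must be positive")
    return contaminant_copies / total


# Hypothetical, constant contaminant background per reaction (assumption).
BACKGROUND_COPIES = 1_000

for sample_copies in (10**8, 10**6, 10**4, 10**2):
    share = contaminant_fraction(sample_copies, BACKGROUND_COPIES)
    print(f"sample input {sample_copies:>11,} copies -> contaminant share {share:.2%}")
```

Under this assumed background, the contaminant share is negligible at high input and becomes the majority of the library at the lowest inputs, which is the qualitative behavior the threshold is meant to guard against.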

Frequently Asked Questions (FAQs)

FAQ 1: What is the definitive evidence for a 10^6 bacterial cell minimum?

The most direct evidence comes from a systematic dilution study using stool samples from healthy donors and a mock microbial community [9]. Researchers created samples with precisely defined microbial loads, from 10^4 to 10^8 cells, and processed them using multiple DNA extraction and PCR protocols. The key finding was that samples containing 10^6 or fewer microbes lost their sample identity in cluster analysis, meaning their microbial composition profiles no longer reliably grouped with higher biomass replicates of the same origin. This effect was observed across different protocols, establishing 10^6 as a robust lower limit for reliable analysis [9].

FAQ 2: My samples are biopsies/swabs and likely have low biomass. What are my biggest risks?

Working with low biomass specimens like biopsies and swabs introduces several critical risks that can compromise your data [1] [10]:

- Contaminant Dominance: Contaminating DNA from reagents, kits, and the lab environment will make up a larger proportion of your final sequencing library, potentially obscuring or mimicking a true biological signal [10].

- Cross-Contamination: DNA can "spill over" between samples during plate-based setup, especially from high-biomass samples to adjacent low-biomass wells. This is also known as well-to-well contamination [1] [10].

- Reduced Reproducibility: Technical replicates (the same sample processed in duplicate or triplicate) of low biomass specimens show much lower concordance than high biomass replicates, indicating that the results are less reliable [10].

- PCR Amplification Bias: At low template concentrations, stochastic effects and primer bias during PCR amplification are exaggerated, distorting the true relative abundances of taxa [9].

FAQ 3: How can I estimate the biomass in my sample before sequencing?

While exact cell counts may require culture, you can use quantitative PCR (qPCR) to estimate the number of 16S rRNA gene copies in your extracted DNA, which serves as a proxy for bacterial load [11] [10]. One study defined low biomass technical repeats specifically as those represented by less than 500 16S rRNA gene copies per microlitre of sample [10]. Quantifying your DNA extract this way provides a crucial pre-sequencing check to gauge potential data quality issues.
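As a rough illustration of this pre-sequencing check, the Python sketch below converts qPCR Ct values into 16S copies per microlitre using a linear standard curve (Ct = slope × log10(copies) + intercept) and flags extracts under the 500 copies/µL figure cited from [10]. The slope, intercept, sample names, and Ct values are hypothetical; in practice they come from your own dilution series of a quantified standard, expressed in the same units as your samples.

```python
LOW_BIOMASS_CUTOFF = 500.0  # 16S copies/µL, the definition used in [10]


def copies_from_ct(ct, slope, intercept):
    """Invert the standard curve Ct = slope * log10(copies) + intercept."""
    return 10 ** ((ct - intercept) / slope)


def amplification_efficiency(slope):
    """PCR efficiency implied by the standard-curve slope (1.0 = 100%)."""
    return 10 ** (-1.0 / slope) - 1.0


# Hypothetical standard curve fitted from a dilution series (assumption).
SLOPE = -3.32
INTERCEPT = 38.0

# Hypothetical Ct values for three DNA extracts.
ct_values = {"swab_01": 24.5, "swab_02": 30.0, "extraction_blank": 35.2}

print(f"efficiency: {amplification_efficiency(SLOPE):.1%}")
for name, ct in ct_values.items():
    copies = copies_from_ct(ct, SLOPE, INTERCEPT)
    label = "LOW BIOMASS" if copies < LOW_BIOMASS_CUTOFF else "ok"
    print(f"{name}: Ct={ct} -> ~{copies:,.0f} copies/µL ({label})")
```

The same per-sample copy estimates can later be reused for frequency-based or qPCR-informed decontamination.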

FAQ 4: Are some DNA extraction kits better for low biomass work?

Yes, the choice of DNA extraction method significantly impacts results. Studies comparing kits have found that protocols based on silica membrane columns (e.g., ZymoBIOMICS DNA Miniprep Kit) generally perform better for low biomass samples compared to bead absorption or chemical precipitation methods, both in terms of DNA yield and more accurate representation of the microbial composition [9]. Furthermore, increasing the mechanical lysing time during extraction can improve the lysis of hard-to-lyse bacteria (e.g., Gram-positives), leading to a more representative profile [9].

Troubleshooting Guides

Issue: High Background Noise in Sequencing Data

Problem: Sequencing data from low biomass samples shows a high abundance of taxa typically associated with contaminants (e.g., Pseudomonas, Acinetobacter, Ralstonia), and the profile looks similar to your negative controls.
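One way to put a number on "looks similar to your negative controls" is the Bray-Curtis dissimilarity between each sample profile and a control profile. The following Python sketch is a minimal illustration with invented relative-abundance profiles; the taxa and values are assumptions, and a full analysis would normally use an ordination across all libraries.

```python
def bray_curtis(profile_a, profile_b):
    """Bray-Curtis dissimilarity between two abundance profiles (dicts).

    0.0 means identical composition; 1.0 means no shared taxa.
    """
    taxa = set(profile_a) | set(profile_b)
    numerator = sum(abs(profile_a.get(t, 0.0) - profile_b.get(t, 0.0)) for t in taxa)
    denominator = sum(profile_a.get(t, 0.0) + profile_b.get(t, 0.0) for t in taxa)
    if denominator == 0:
        raise ValueError("both profiles are empty")
    return numerator / denominator


# Hypothetical relative-abundance profiles (assumptions for illustration).
negative_control = {"Ralstonia": 0.6, "Pseudomonas": 0.4}
sample_a = {"Ralstonia": 0.5, "Pseudomonas": 0.4, "Streptococcus": 0.1}
sample_b = {"Streptococcus": 0.7, "Haemophilus": 0.25, "Ralstonia": 0.05}

for name, profile in (("sample_a", sample_a), ("sample_b", sample_b)):
    print(name, round(bray_curtis(profile, negative_control), 3))
```

A sample whose dissimilarity to the controls is close to zero, like `sample_a` here, is a candidate for the contamination-dominated scenario described in this issue.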

Solutions:

- Intensify Pre-sequencing Decontamination: [1]

- Decontaminate all surfaces and equipment with 80% ethanol (to kill cells) followed by a nucleic acid degrading solution (e.g., dilute bleach or commercial DNA removal solutions) to destroy residual DNA.

- Use UV-irradiated, pre-cleaned plasticware and DNA-free reagents.

- Wear appropriate personal protective equipment (PPE) including gloves, mask, and clean suit to minimize human-derived contamination.

- Incorporate Comprehensive Controls: [1] [10]

- Negative Controls: Include multiple negative controls that mimic your entire experimental process. These should include "blank" extraction kits (adding only elution buffer) and "no-template" PCR controls.

- Positive Controls: Use a mock microbial community standard with a known composition and cell count at a level similar to your expected samples. This controls for both extraction efficiency and PCR bias.

- Apply In Silico Decontamination: Post-sequencing, use statistical tools like the `decontam` package in R to identify and remove sequences that are prevalent in your negative controls from your experimental samples [10]. This is more nuanced than simply subtracting a control profile.

Issue: Poor Reproducibility Between Technical Replicates

Problem: When the same low biomass sample is processed in duplicate or triplicate, the resulting microbial community profiles are highly inconsistent.

Solutions:

- Verify Biomass Sufficiency: First, use qPCR to confirm your sample is at or above the 10^6 cell threshold. If it is consistently below this level, the fundamental issue may be insufficient starting material, and the experimental design may need revision [9].

- Optimize PCR Protocol: Switch from a standard PCR protocol to a semi-nested PCR approach. This has been shown to improve the sensitivity and reproducibility of 16S rRNA gene analysis for samples with low microbial biomass, allowing for more reliable profiling down to the 10^6 cell level [9].

- Minimize Cross-Contamination: Ensure physical separation during plate setup. Use sealed plates and be cautious of aerosol generation when handling samples post-amplification. Including a unique DNA spike-in control in each sample can also help monitor for well-to-well contamination [1] [11].

Experimental Protocols & Data

Validated Protocol for Low Biomass 16S rRNA Gene Analysis

The following workflow summarizes an optimized protocol, refined for low biomass samples, based on experimental evidence [9].

The table below consolidates key experimental findings that support the establishment of a 10^6 bacterial cell minimum.

Table 1: Experimental Evidence for the 10^6 Bacterial Cell Threshold

| Sample Type | Key Experimental Finding | Impact Below 10^6 Cells | Source |
|---|---|---|---|
| Healthy Donor Stool (Dilution Series) | Loss of sample identity in cluster analysis; profiles no longer group with higher biomass replicates. | Major: Inability to distinguish true biological differences from technical noise. | [9] |
| Bacterial Mock Community (Dilution Series) | Low biomass samples cluster midway between undiluted mock community and negative controls. | Major: True signal is lost and replaced by a hybrid of biology and contamination. | [10] |
| Nasopharyngeal & Induced Sputum | Technical replicates with low biomass (<500 16S copies/μL) showed higher alpha diversity and reduced reproducibility. | Major: Data becomes unreliable and non-reproducible. | [10] |
| Various (Theoretical Framework) | Maintenance metabolism converges on total metabolism for the smallest cells, highlighting extreme energy limitation. | Context: Explains the physiological challenge of being small and energy-limited. | [12] |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for Low Biomass Research

| Item | Function & Importance | Specific Examples / Notes |
|---|---|---|
| Mock Microbial Community | A defined mix of bacterial cells or DNA used as a positive control to assess extraction efficiency, PCR bias, and sequencing accuracy. | ZymoBIOMICS Microbial Community Standard (D6300/D6305) [11] [13] [9]. |
| DNA Spike-in Control | A known quantity of foreign DNA (not found in your samples) added pre-extraction or pre-PCR to enable absolute quantification and monitor cross-contamination. | ZymoBIOMICS Spike-in Control I [11]. |
| Silica-Column DNA Extraction Kit | Provides high DNA yield and purity from low biomass samples; superior to bead absorption or chemical precipitation for this application. | ZymoBIOMICS DNA Miniprep Kit [9]; QIAamp PowerFecal Pro DNA Kit [11]. |
| DNA-Free Storage Buffer | Preserves sample integrity at collection while minimizing introduction of contaminating DNA. | PrimeStore Molecular Transport Medium [10]. |
| High-Fidelity Taq Polymerase | Reduces PCR amplification errors and bias, which is critical when amplifying tiny amounts of template DNA. | LongAmp Hot Start Taq DNA Polymerase [13]. |
| In Silico Decontamination Tool | A statistical software package to identify and remove contaminant sequences post-sequencing based on control samples. | Decontam (R package) [10]. |

FAQ: Where does contamination in 16S sequencing experiments primarily come from?

Contamination in 16S rRNA gene sequencing, especially for low-biomass samples, originates from several key sources. Reagents and laboratory environments introduce exogenous DNA that can be amplified and sequenced, obscuring the true biological signal.

- Reagents and Kits: DNA extraction kits, PCR master mixes, and molecular-grade water are well-documented sources of contaminating bacterial DNA. These often introduce a consistent set of bacterial taxa, sometimes called the "kitome" [14] [15].

- Laboratory Environment: Contaminants can be introduced from the air, lab surfaces, and equipment during sample processing [1] [14].

- Human Operators: Investigators can inadvertently introduce contamination from their skin, hair, or respiratory tract [1] [16].

- Cross-Contamination: This involves the transfer of DNA between samples during processing, a phenomenon known as well-to-well leakage [1] [17].

Table 1: Common Contaminant Genera and Their Sources

| Contaminant Genera | Typical Source |
|---|---|
| Pseudomonas, Ralstonia, Sphingomonas | Reagents (kits, water) [16] [15] |
| Acinetobacter, Herbaspirillum | Reagents (kits, water) [15] |
| Bacillus, Bradyrhizobium | Reagents (kits, water) [15] |
| Cutibacterium (formerly Propionibacterium) | Human skin, reagents [18] [16] |
| Stenotrophomonas | Reagents, and can also be a genuine pathogen [16] |

FAQ: How can I prevent contamination during sample collection and handling?

Preventing contamination begins at the sampling stage with strict sterile techniques and appropriate protective equipment.

- Use Single-Use, DNA-Free Consumables: Whenever possible, use pre-sterilized, disposable collection vessels and tools [1].

- Thorough Decontamination: Decontaminate reusable equipment and surfaces with 80% ethanol to kill microorganisms, followed by a DNA-degrading solution (e.g., sodium hypochlorite/bleach) to remove residual DNA [1].

- Wear Appropriate PPE: Wear gloves, lab coats, masks, and hair covers to minimize the introduction of human-associated contaminants. For extremely sensitive low-biomass work, more extensive cleanroom-style PPE may be necessary [1].

- Include Sampling Controls: Collect control swabs of the air in the sampling environment, PPE, or empty collection vessels. These controls help identify contaminants introduced during the collection process itself [1].

FAQ: What is the best experimental design to identify contamination in the lab?

A robust experimental design includes multiple types of controls processed alongside your biological samples through every step, from DNA extraction to sequencing.

- Negative Extraction Controls (NECs): These are "blank" samples where you substitute water or buffer for the biological sample during DNA extraction. They are essential for identifying contaminants from extraction kits and reagents [18] [16] [10].

- No-Template Controls (NTCs): Also known as library controls, these use water instead of DNA template during the PCR amplification step. They help identify contaminants present in PCR reagents, such as polymerases and buffers [19] [16].

- Mock Communities: These are commercially available or internally generated mixtures of known bacteria at defined ratios. They serve as positive controls to assess the accuracy and reproducibility of your entire workflow, from DNA extraction to sequencing [19] [10].

The following workflow outlines the key experimental and computational steps for managing contamination:

FAQ: How can I computationally remove contamination from my sequencing data?

After sequencing, bioinformatic tools can help identify and remove contaminant sequences. These methods typically use the control data you generated to distinguish contaminants from true biological signals.

- Frequency- and Prevalence-Based Methods: Tools like the `decontam` package in R use the prevalence or relative abundance of Amplicon Sequence Variants (ASVs) in negative controls compared to true samples to classify contaminants [10] [17].

- Sample-Specific Thresholds: One approach suggests using the abundance of the most dominant contaminant species in your controls to set a sample-specific cutoff. Identifications below this threshold (e.g., 20% of the top contaminant's reads) are treated as potential contamination [18].

- Advanced Algorithms: Newer methods and packages are continuously being developed. For example, `micRoclean` offers pipelines for different research goals, and `CleanSeqU` is a recently developed algorithm that uses multiple rules, including Euclidean distance similarity and ecological plausibility, to decontaminate low-biomass urine data [17] [15].

- qPCR-Informed Decontamination: A powerful method combines sequencing data with quantitative PCR (qPCR) data measuring total bacterial load. It calculates the ratio of an OTU's "absolute" abundance (relative abundance × 16S gene copy number) in negative controls versus samples, effectively removing OTUs that are disproportionately abundant in controls [16].
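The qPCR-informed calculation can be sketched as follows. This Python example only illustrates the arithmetic described above (relative abundance × 16S copy number, compared between controls and samples); it is not the published pipeline from [16]. The function names, the example values, and the `ratio_cutoff` of 1.0 are assumptions.

```python
from statistics import mean


def absolute_abundance(relative_abundance, total_16s_copies):
    """Approximate 'absolute' abundance of an OTU in one library."""
    return relative_abundance * total_16s_copies


def control_to_sample_ratio(rel_controls, copies_controls, rel_samples, copies_samples):
    """Mean absolute abundance in negative controls divided by that in samples."""
    ctrl = mean([absolute_abundance(r, c) for r, c in zip(rel_controls, copies_controls)])
    samp = mean([absolute_abundance(r, c) for r, c in zip(rel_samples, copies_samples)])
    return float("inf") if samp == 0 else ctrl / samp


# Hypothetical qPCR totals (16S copies) for two controls and two samples.
copies_controls = [200, 300]
copies_samples = [5000, 8000]

# Hypothetical relative abundances per OTU: (in controls, in samples).
otus = {
    "Ralstonia": ([0.6, 0.5], [0.02, 0.01]),
    "Streptococcus": ([0.01, 0.0], [0.5, 0.6]),
}

ratio_cutoff = 1.0  # illustrative cutoff, not a value from the source
for otu, (rel_ctrl, rel_samp) in otus.items():
    ratio = control_to_sample_ratio(rel_ctrl, copies_controls, rel_samp, copies_samples)
    verdict = "likely contaminant" if ratio >= ratio_cutoff else "retain"
    print(f"{otu}: ratio={ratio:.4f} -> {verdict}")
```

Weighting by total copy number matters here: an OTU can be a small fraction of a high-biomass sample and still outnumber, in absolute terms, the same OTU dominating a nearly empty control.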

Table 2: In Silico Decontamination Tools and Methods

| Tool / Method | Underlying Principle | Key Application / Note |
|---|---|---|
| decontam (R package) | Identifies contaminants based on higher prevalence or frequency in negative controls than in true samples [17]. | Widely used; combines control- and sample-based methods. |
| qPCR-Informed Pipeline | Uses bacterial load from qPCR to calculate "absolute" abundance ratio of OTUs in controls vs. samples [16]. | Removes OTUs disproportionately abundant in controls. |
| micRoclean (R package) | Houses two pipelines: "Original Composition" (estimates pre-contamination state) and "Biomarker" (strict removal) [17]. | Provides a filtering loss statistic to help avoid over-filtering. |
| CleanSeqU Algorithm | Classifies samples by contamination level and applies rules (Euclidean distance, Z-score, blacklist) [15]. | Specifically designed and validated for low-biomass urine samples. |
| Sample-Specific Cutoff | Uses the abundance of the top contaminant in a control to define a threshold for filtering in each clinical sample [18]. | A simple, transparent method not requiring specialized software. |

The Scientist's Toolkit: Key Reagent Solutions

Table 3: Essential Materials for Contamination-Aware 16S Sequencing

| Item | Function & Importance | Example / Note |
|---|---|---|
| DNA Extraction Kit | Extracts microbial DNA; a major source of the "kitome." Different kits have different contaminant profiles and lysis efficiencies [14] [10]. | DNeasy Kit (Qiagen), DSP Virus/Pathogen Mini Kit, ZymoBIOMICS DNA Miniprep Kit [14] [10]. |
| Sample Storage Buffer | Preserves sample integrity at the collection point. The choice of buffer can influence background contamination levels [10]. | PrimeStore Molecular Transport Medium, Skim-milk Tryptone Glucose Glycerol (STGG) [10]. |
| PCR Master Mix | Enzymes and buffers for amplification; a known source of contaminating DNA [14] [15]. | Use high-quality mixes and include NTCs. LongAmp Hot Start Taq Master Mix is used in the Nanopore 16S protocol [20]. |
| 16S Barcoding Primers | Allow multiplexing of samples; unique barcodes per sample are essential to track samples and identify cross-contamination [20] [19]. | e.g., the 24 unique barcodes in the Oxford Nanopore 16S Barcoding Kit [20]. |
| Nucleic Acid Cleanup Beads | Purify DNA and perform size selection to remove unwanted products like primer dimers. Incorrect ratios can cause sample loss or failure to remove small fragments [21] [20]. | AMPure XP Beads are commonly used [20]. |
| Mock Community | A defined mix of bacterial strains used as a positive control to validate the entire workflow's accuracy and reproducibility [19] [10]. | ZymoBIOMICS Microbial Community Standard, BEI Mock Bacterial Community [10]. |

How Low Biomass Amplifies Contaminant Signals and Skews Community Profiles

Troubleshooting Guides

Guide 1: Diagnosing and Correcting Contamination in Low-Biomass 16S rRNA Sequencing

Problem: Your 16S rRNA sequencing results from low-biomass samples (e.g., tissue, blood, urine) show unexpected microbial communities, high alpha diversity, or known common contaminants.

Explanation: In low-biomass environments, the small amount of target microbial DNA is easily overwhelmed by contaminant DNA from reagents, kits, and the laboratory environment [22] [23]. These contaminants constitute a larger proportion of the total DNA in your sample, distorting the true community profile and leading to inflated diversity metrics [22] [24]. Failure to account for this can lead to incorrect biological conclusions [1].

Solution: A multi-pronged approach combining rigorous lab practices and computational decontamination is required.

Step 1: Implement Robust Experimental Controls. Include the following controls in your sequencing run to identify contaminant signals [1] [2]:

- Negative Controls: DNA extraction blanks (e.g., an empty tube or tube with sterile water processed through extraction) and no-template PCR controls [22] [23].

- Positive Controls: A dilution series of a mock microbial community with a known composition [22] [5]. This helps evaluate the performance of your wet-lab and computational methods as microbial biomass decreases.

- Process-Specific Controls: Collect and sequence samples from potential contamination sources, such as swabs of sampling equipment, gloves, or aliquots of preservation solutions [1].

Step 2: Apply Computational Decontamination. Use your negative controls to filter out contaminants bioinformatically. The table below compares common methods:

| Method | Principle | Best Use Case | Key Limitation |
|---|---|---|---|
| Decontam (Frequency) | Identifies sequences with an inverse correlation to sample DNA concentration [22]. | General use; does not require prior knowledge of the environment [22]. | Requires DNA concentration data for all samples [22]. |
| Decontam (Prevalence) | Identifies sequences that are more prevalent in negative controls than in true samples [22]. | When you have multiple negative controls [22]. | May misclassify rare but true taxa if they appear in controls [22]. |
| SourceTracker | Uses a Bayesian approach to predict the proportion of a sample arising from defined contaminant sources [22]. | When the experimental environment is well-defined and source environments are known [22]. | Performs poorly when the experimental environment is unknown [22]. |
| Simple Subtraction | Removes all sequences found in negative controls from all samples [22]. | Quick, simple filtering. | Overly strict; can erroneously remove >20% of expected sequences present in controls due to index-hopping or other artifacts [22]. |

Step 3: Set Sample-Specific Abundance Thresholds. For clinical diagnostics, one effective strategy is to use the most abundant contaminant species in your controls to set a filter. Reliable identifications in a sample should be above the read abundance of the most dominant contaminant found in that sample's associated controls [18].
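A minimal sketch of the thresholding rule in Step 3, assuming read counts are available as plain dictionaries keyed by taxon. The function name, data layout, and example taxa are hypothetical; this illustrates the idea and is not the cited method's implementation.

```python
def filter_by_control_threshold(sample_counts, control_counts_list):
    """Keep taxa whose read count exceeds the most abundant contaminant
    observed in the controls associated with this sample.

    sample_counts: dict mapping taxon -> reads in the sample
    control_counts_list: list of dicts, one per associated negative control
    Returns (kept_taxa, threshold).
    """
    threshold = max(
        (reads for control in control_counts_list for reads in control.values()),
        default=0,  # no control reads at all: nothing is filtered
    )
    kept = {taxon: reads for taxon, reads in sample_counts.items() if reads > threshold}
    return kept, threshold


if __name__ == "__main__":
    # Hypothetical counts for one sample and its two extraction blanks.
    sample = {"Staphylococcus": 5000, "Ralstonia": 300, "Cutibacterium": 120}
    controls = [{"Ralstonia": 450, "Cutibacterium": 80}, {"Ralstonia": 200}]
    print(filter_by_control_threshold(sample, controls))
```

Because the threshold is derived per sample from its own batch controls, a noisy extraction batch raises the bar only for the samples processed in that batch.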

Guide 2: Addressing Poor Library Yield and Quality from Low-Biomass Inputs

Problem: Your NGS library preparation from low-biomass samples results in low yield, high adapter-dimer formation, or poor library complexity.

Explanation: Low-input DNA increases the impact of common library prep issues. Suboptimal DNA quality, contaminants inhibiting enzymes, and over-amplification during PCR become major problems [21].

Solution: Systematically optimize each step of your library preparation protocol.

Step 1: Verify Input DNA Quality and Purity.

- Mechanism: Residual salts, phenol, or guanidine from extraction can inhibit enzymes used in fragmentation, ligation, and PCR [21].

- Action: Use fluorometric quantification (e.g., Qubit) rather than UV absorbance (e.g., NanoDrop) to measure the concentration of usable DNA, and use absorbance only to check the 260/230 and 260/280 ratios for purity [21].

Step 2: Optimize Amplification to Reduce Bias.

- Mechanism: Too many PCR cycles can lead to over-amplification artifacts, chimeras, and a high duplicate rate, skewing representation [21] [5].

- Action: Use the minimum number of PCR cycles necessary. Evaluate whether pooling multiple PCR replicates per sample is necessary for your specific sample type, as it may not always be required and increases handling [5].

Step 3: Fine-Tune Purification and Size Selection.

- Mechanism: Aggressive clean-up can lead to loss of already scarce DNA fragments. An incorrect bead-to-sample ratio can fail to remove adapter dimers or exclude desired fragments [21].

- Action: Precisely follow bead-based clean-up protocols regarding ratios and incubation times. Avoid over-drying beads, which leads to inefficient resuspension and DNA loss [21].

Frequently Asked Questions (FAQs)

What defines a "low-biomass" sample, and why is it so problematic?

A low-biomass sample contains a very low concentration of microbial cells or DNA, placing it near the limits of detection for standard sequencing methods [1]. While sometimes defined quantitatively (e.g., <10,000 microbial cells/mL), it's best considered a continuum [2]. Examples include human tissues (blood, lung, placenta), certain environmental samples (drinking water, deep subsurface), and clinical specimens from normally sterile sites [24] [1]. The problem is proportional: the contaminant DNA "noise" can be as loud as, or louder than, the biological "signal," leading to distorted community profiles and inflated diversity estimates [22] [23].

Contamination can be introduced at virtually every stage of a study:

- Reagents and Kits: DNA extraction kits, PCR master mixes, and water are well-documented sources of bacterial DNA [5] [23].

- Laboratory Environment and Personnel: Human skin and hair, aerosols from talking, and laboratory surfaces are significant sources [1] [23].

- Sampling Equipment: Collection tubes, swabs, and solutions can introduce contaminants during sample acquisition [1].

- Cross-Contamination (Well-to-Well Leakage): DNA can transfer between samples on a 96-well plate during processing, a phenomenon known as the "splashome" [2].

How many negative controls should I include, and what kind?

There is no universal consensus on the number, but two controls are always better than one, and in some cases, more are helpful [2]. You should collect process controls that represent different contamination sources [2]. We recommend:

- Full-process controls that pass through the entire experiment (e.g., an extraction blank) to capture the aggregate contamination [2].

- Process-specific controls to identify specific sources (e.g., swab the collection kit, sample the preservation solution, swab the gloves of the operator) [1] [2]. This allows for more precise identification of contaminants.

Can't I just remove sequences that show up in my negative controls?

Simple subtraction is a common but flawed approach. While it seems straightforward, it can be too strict. It may erroneously remove over 20% of expected, true sequences that are also present in the negative control due to index-hopping or other low-level artifacts [22]. More sophisticated statistical methods like Decontam or SourceTracker are generally recommended as they can more accurately distinguish between contaminants and true signals [22].

Contamination has fueled several major controversies in microbiome research. For example, early claims of a distinct placental microbiome were later shown to likely be the result of contamination from reagents and laboratory processing, as the signal was indistinguishable from negative controls [1] [23]. Similarly, studies of blood and tumors have been debated due to the challenges of distinguishing ultra-low biomass signals from contamination [1] [2]. If contamination is confounded with a study group (e.g., all cases processed in one batch and all controls in another), it can create artifactual "associations" between contaminants and the disease state [2].

Experimental Protocols

Sample Collection & DNA Extraction for Low-Biomass Upper Respiratory Tract (URT) Samples

This protocol is adapted from best practices for microbial profiling of low-biomass upper respiratory tract samples [25].

Key Reagent Solutions:

- DNA-Free Swabs & Collection Tubes: Pre-sterilized, single-use to avoid introducing contaminants.

- Lysis Buffer (with mechanical lysis): A chemical lysis buffer, used in conjunction with a mechanical lysis step (e.g., bead beating with Lysing Matrix E) to ensure efficient cell wall disruption of hardy bacteria [5].

- DNA Extraction Kit: Use a kit designed for low-biomass samples. Include an extraction blank control (lysis buffer only) for every batch.

- Nuclease-Free Water: Certified DNA-free for all PCR and dilution steps.

- Mock Community DNA: A standardized community of known composition (e.g., ZymoBIOMICS Microbial Community Standard) to be used as a positive control [5].

Procedure:

- Sample Collection: Using sterile gloves and aseptic technique, collect the URT sample (e.g., nasal swab). Place the swab immediately into a DNA-free storage tube.

- Storage: Store samples at -70°C or in a preservation solution that stabilizes DNA until extraction.

- Nucleic Acid Extraction:

- Add samples to a tube containing a mechanical lysis matrix (e.g., Lysing Matrix E).

- Add lysis buffer and process on a homogenizer instrument (e.g., MPure-12 instrument) for mechanical disruption.

- Complete the DNA extraction following the manufacturer's protocol for the chosen kit.

- CRITICAL: Process your negative control (extraction blank) and positive control (mock community) alongside the experimental samples in the same batch.

- DNA Quantification: Quantify the extracted DNA using a fluorometric method (e.g., Qubit) for accuracy.

Evaluating Decontamination Methods Using a Mock Community Dilution Series

This methodology allows for the empirical testing of computational decontamination tools [22].

Key Reagent Solutions:

- Mock Microbial Community: A defined mix of known bacterial strains (e.g., from ZymoBIOMICS or ATCC).

- Nuclease-Free Water: For creating serial dilutions.

- Standard 16S rRNA Gene Primers & Master Mix: For library preparation.

Procedure:

- Create a Dilution Series: Perform a serial dilution (e.g., 3-fold or 10-fold dilutions) of the mock community DNA to simulate a range of high-to-low microbial biomass [22].

- Sequence the Series: Subject the entire dilution series, alongside your negative controls (water blanks), to standard 16S rRNA gene sequencing.

- Bioinformatic Analysis:

- Process the raw sequencing data to generate amplicon sequence variants (ASVs).

- Apply different computational decontamination methods (e.g., Decontam prevalence and frequency methods, SourceTracker, simple subtraction) to the dataset.

- Evaluation:

- Compare the output of each method against the known composition of the mock community.

- Calculate the percentage of contaminant sequences correctly removed and, crucially, the percentage of expected sequences erroneously removed by each method [22]. This will allow you to select the optimal method and parameters for your specific study.
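The two evaluation metrics above can be computed as in the following sketch. It assumes that every ASV not belonging to the mock community is a contaminant and that read counts before and after decontamination are held in dictionaries; the function name and example data are hypothetical.

```python
def evaluate_decontamination(before, after, expected_asvs):
    """Return (% contaminant reads removed, % expected reads erroneously removed).

    before / after: dict mapping ASV -> read count, pre- and post-decontamination
    expected_asvs: set of ASVs that belong to the mock community
    """
    def split(table):
        expected = sum(n for asv, n in table.items() if asv in expected_asvs)
        contaminant = sum(n for asv, n in table.items() if asv not in expected_asvs)
        return expected, contaminant

    expected_before, contam_before = split(before)
    expected_after, contam_after = split(after)

    pct_contam_removed = (
        100 * (contam_before - contam_after) / contam_before if contam_before else 0.0
    )
    pct_expected_removed = (
        100 * (expected_before - expected_after) / expected_before if expected_before else 0.0
    )
    return pct_contam_removed, pct_expected_removed


if __name__ == "__main__":
    # Hypothetical low-input dilution: one contaminant and one true ASV removed.
    before = {"mock_A": 600, "mock_B": 400, "contam_X": 200, "contam_Y": 50}
    after = {"mock_A": 600, "contam_Y": 50}
    print(evaluate_decontamination(before, after, {"mock_A", "mock_B"}))
```

Running this per dilution step and per method gives a table from which the trade-off between contaminant removal and loss of true signal can be read directly.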

Visualizations

Diagram 1: Impact of Decreasing Biomass on Contaminant Proportion

Frequently Asked Questions

1. What are the primary sources of false positives in 16S sequencing of low-biomass samples? False positives primarily arise from two key technical issues:

- Index Hopping (Sample Index Misassignment): This occurs during sequencing when DNA fragments from one sample are misassigned to another sample in the same sequencing run. It is a significant problem on certain platforms, with reported rates as high as 5.68% on the Illumina NovaSeq 6000 compared to 0.08% on the DNBSEQ-G400 platform [26]. These are high-quality biological reads, so they cannot be filtered out by standard quality control or denoising algorithms [26].

- Laboratory Contamination: Contaminating DNA can be introduced from reagents (the "kitome"), the laboratory environment, or sampling equipment. In low-biomass samples, this contaminant DNA can constitute a large proportion of the final sequencing library, leading to the detection of microbes that were never part of the original sample [14] [10] [1].

2. How does the loss of sample identity impact my research conclusions? Loss of sample identity, through sample mix-ups or cross-contamination, compromises the integrity of your entire dataset. This can lead to:

- Incorrect correlations between microbial profiles and sample metadata (e.g., linking contaminant microbes to a specific disease state) [26].

- Spurious ecological patterns, such as inflated alpha diversity in simple communities or underestimated diversity in complex ones [26].

- Biased inference of community assembly mechanisms and the identification of spurious keystone species in network analyses [26].

3. What is the best way to identify contaminating sequences in my data?

The most robust method involves the use of negative controls (e.g., blank extraction kits, sterile swabs, molecular grade water) processed alongside your biological samples. The sequences found in these controls represent the "contaminant profile" of your lab and reagents. These profiles can then be identified and removed from your biological samples using statistical tools like the decontam package in R, which compares the frequency or prevalence of sequences in samples versus controls [14] [10].

4. My samples are very precious and have low DNA yield. Is there a sequencing method better suited for this? Yes, for low-biomass, degraded, or host-DNA-dominated samples, alternative methods like 2bRAD-M sequencing are highly effective. This method uses type IIB restriction enzymes to produce small, uniform fragments, reducing amplification bias and allowing for species-level profiling from as little as 1 pg of total DNA or samples with 99% host DNA contamination [27].

Comparison of Sequencing Platforms and Bioinformatics Tools

Table 1: Quantitative Comparison of Sequencing Platform Index Misassignment Rates [26]

| Sequencing Platform | Technology | Reported Index Misassignment Rate | Impact on Rare Taxa Detection |

|---|---|---|---|

| Illumina NovaSeq 6000 | Sequencing-by-Synthesis | 5.68% | High level of false positive rare taxa |

| DNBSEQ-G400 | Combinatorial Probe-Anchor Synthesis & DNA Nanoballs | 0.08% | Rare taxa more likely to be biologically relevant |
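To put the rates in Table 1 into perspective, the sketch below converts a misassignment rate into an expected number of misassigned reads. It assumes the reported rate can be read as the fraction of all reads in a run that receive the wrong sample index; the run size of 10 million reads is a hypothetical example.

```python
def expected_misassigned_reads(total_reads: int, rate_percent: float) -> float:
    """Expected number of reads carrying the wrong sample index,
    assuming the rate applies uniformly across the run."""
    if total_reads < 0 or not 0 <= rate_percent <= 100:
        raise ValueError("invalid read count or rate")
    return total_reads * rate_percent / 100


if __name__ == "__main__":
    run_size = 10_000_000  # hypothetical run size
    for platform, rate in (("Illumina NovaSeq 6000", 5.68), ("DNBSEQ-G400", 0.08)):
        reads = expected_misassigned_reads(run_size, rate)
        print(f"{platform}: about {reads:,.0f} misassigned reads")
```

For a low-biomass library that contributes only a few thousand genuine reads, even a small share of these misassigned reads can rival the true signal.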

Table 2: Characteristics of Common OTU and ASV Algorithms [28]

| Algorithm | Type | Key Strength | Key Weakness |

|---|---|---|---|

| DADA2 | Denoising (ASV) | Consistent output, high resemblance to expected community | Tends to over-split biological sequences |

| UPARSE | Clustering (OTU) | Low error rates, high resemblance to expected community | Tends to over-merge distinct sequences |

| Deblur | Denoising (ASV) | Consistent output | Tends to over-split biological sequences |

| OptiClust | Clustering (OTU) | Iterative cluster quality evaluation | Tends to over-merge distinct sequences |

Experimental Protocols

Protocol 1: Implementing Synthetic Spike-In Controls for Sample Tracking [29]

Purpose: To unambiguously track sample identity and detect cross-contamination throughout the 16S rRNA gene amplicon sequencing workflow.

Materials:

- Synthetic 16S rRNA gene spike-in controls (e.g., Sample Tracking Mixes - STMs)

- Environmental or host DNA samples

Methodology:

- Design: Create a unique combinatorial Sample Tracking Mix (STM) for each sample. Each STM is an equimolar mixture of 3 synthetic 16S rRNA gene sequences that are not found in nature.

- Spike-In: Add a low abundance of the unique STM (e.g., ~5,000 copies per nanogram of sample DNA) to each sample at the very beginning of DNA extraction.

- Processing: Carry the spiked-in samples through the entire workflow: DNA extraction, PCR amplification, library preparation, and sequencing.

- Bioinformatic Analysis: After sequencing, map all reads to a database containing the synthetic spike-in sequences.

- Verification: The STM detected in each sequenced library must match the one added to the original sample. A mismatch indicates a sample swap; the presence of multiple STMs indicates cross-contamination.
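The verification step can be sketched as a set comparison between the spike-ins expected for a sample and those detected in its library. The minimum read threshold, spike-in identifiers, and status labels below are assumptions for illustration; they are not part of the cited protocol.

```python
def check_sample_identity(expected_stm, spike_in_reads, min_reads=10):
    """Classify a library by comparing detected spike-ins with the expected STM.

    expected_stm: iterable of spike-in IDs added to the original sample
    spike_in_reads: dict mapping spike-in ID -> reads mapped in this library
    min_reads: hypothetical detection threshold to ignore stray reads
    """
    expected = set(expected_stm)
    detected = {sid for sid, reads in spike_in_reads.items() if reads >= min_reads}

    if not detected:
        return "no spike-in detected"
    if detected == expected:
        return "ok"
    if expected < detected:
        # All expected spike-ins are present, plus extras from another sample.
        return "cross-contamination"
    if detected < expected:
        return "incomplete spike-in recovery"
    return "possible sample swap"


if __name__ == "__main__":
    stm = {"syn01", "syn02", "syn03"}
    print(check_sample_identity(stm, {"syn01": 500, "syn02": 480, "syn03": 510}))
    print(check_sample_identity(stm, {"syn01": 500, "syn02": 480, "syn03": 510, "syn07": 40}))
    print(check_sample_identity(stm, {"syn04": 300, "syn05": 310, "syn06": 290}))
```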

Protocol 2: Using Negative Controls and the Decontam Package [14] [10]

Purpose: To identify and remove contaminating sequences from 16S rRNA gene sequencing data.

Materials:

- DNA extraction kits

- Sterile water or buffer for negative controls

- R statistical software with the decontam package installed

Methodology:

- Experimental Setup: Include multiple negative controls (NTCs) in your sequencing batch. These should be tubes containing only the reagents used for DNA extraction and library preparation, with no sample added.

- DNA Extraction and Sequencing: Process the negative controls alongside your biological samples through every step, from DNA extraction to sequencing.

- Generate OTU/ASV Table: Process your raw sequencing data to generate a feature table (OTU or ASV counts per sample).

- Run Decontam: In R, use the isContaminant function from the decontam package. You can use either the "prevalence" method (which flags sequences that are significantly more prevalent in negative controls than in true samples) or the "frequency" method (which flags sequences whose relative abundance is inversely correlated with sample DNA concentration, and therefore requires DNA quantification data).

- Filter Table: Remove the sequences identified as contaminants from your feature table before proceeding with downstream ecological analysis.
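To make the logic of the prevalence approach concrete, here is a simplified Python sketch that flags ASVs detected more often in negative controls than in true samples using a one-sided Fisher's exact test. It is an illustration of the idea only, not the decontam package's implementation; the function names, the 0.05 threshold, and the example data are assumptions.

```python
from math import comb


def fisher_greater(a: int, b: int, c: int, d: int) -> float:
    """One-sided Fisher's exact p-value that row 1 has a higher presence rate.

    2x2 table:            present  absent
        controls (row 1)     a        b
        samples  (row 2)     c        d
    """
    row1, row2, col1 = a + b, c + d, a + c
    denominator = comb(row1 + row2, col1)
    upper = min(row1, col1)
    tail = sum(comb(row1, k) * comb(row2, col1 - k) for k in range(a, upper + 1))
    return tail / denominator


def flag_prevalence_contaminants(presence, is_control, alpha=0.05):
    """presence: dict ASV -> list of booleans (detected per library).
    is_control: list of booleans marking which libraries are negative controls."""
    flagged = []
    for asv, detected in presence.items():
        a = sum(1 for hit, ctrl in zip(detected, is_control) if ctrl and hit)
        b = sum(1 for hit, ctrl in zip(detected, is_control) if ctrl and not hit)
        c = sum(1 for hit, ctrl in zip(detected, is_control) if not ctrl and hit)
        d = sum(1 for hit, ctrl in zip(detected, is_control) if not ctrl and not hit)
        if fisher_greater(a, b, c, d) < alpha:
            flagged.append(asv)
    return sorted(flagged)


if __name__ == "__main__":
    is_control = [True] * 4 + [False] * 8  # 4 blanks, 8 true samples (hypothetical)
    presence = {
        "ASV_reagent": [True] * 4 + [True] + [False] * 7,
        "ASV_genuine": [False] * 4 + [True] * 8,
    }
    print(flag_prevalence_contaminants(presence, is_control))
```

The sketch also shows why multiple negative controls matter: with only one or two blanks, no presence/absence pattern can reach significance.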

Research Reagent Solutions

Table 3: Essential Materials for Managing Low-Biomass Sequencing Studies

| Item | Function | Example Use Case |

|---|---|---|

| Commercial Mock Communities | DNA from known mixtures of microbial strains; used as a positive control to assess accuracy, reproducibility, and bias in the entire workflow [26] [10]. | Verifying that your wet-lab and bioinformatic pipeline correctly identifies expected taxa without introducing false positives. |

| Synthetic Spike-In Controls | Artificially designed DNA sequences not found in nature; used to track sample identity and quantify cross-contamination [29]. | Adding a unique DNA barcode to each sample to detect tube mislabeling or well-to-well contamination during PCR. |

| DNA-Free Nucleic Acid Removal Solutions | Reagents (e.g., bleach, specialized commercial solutions) to decontaminate surfaces and equipment of trace DNA [1]. | Wiping down workbenches, centrifuges, and other equipment before working with low-biomass samples to reduce environmental contamination. |

| Specialized DNA Extraction Kits for Low Biomass | Kits optimized for efficient lysis of hard-to-break cells and maximal recovery of minimal DNA. | Extracting DNA from samples with very few cells, such as skin swabs, filtered air, or clinical tissue biopsies. |

Troubleshooting Workflow Diagram

[Diagram not reproduced: a logical workflow for diagnosing and addressing false positives and sample identity issues.]

Building a Robust Low Biomass Protocol from Sample to Sequence

Frequently Asked Questions (FAQs)

Q1: What are the most effective chemical agents for decontaminating work surfaces and equipment against DNA contamination?

The most effective decontamination strategies, as determined by controlled studies, are those that degrade DNA rather than just disinfect. For cell-free DNA, sodium hypochlorite (bleach) solutions and Trigene were highly effective, leaving a maximum of only 0.3% recoverable DNA on plastic, metal, and wood surfaces. For cell-contained DNA in substances like blood, 1% Virkon was most effective, with a maximum of 0.8% of DNA recovered post-decontamination [30]. It is critical to note that sterility is not the same as being DNA-free; ethanol and autoclaving kill viable cells but may leave cell-free DNA intact. For critical decontamination, a two-step process is recommended: 80% ethanol (to kill organisms) followed by a nucleic acid degrading solution like sodium hypochlorite to remove DNA traces [1].

Q2: How should we handle sampling equipment and consumables to minimize contamination?

A contamination-informed sampling design is essential. The following steps should be taken:

- Use DNA-Free Consumables: Ideally, use single-use, DNA-free collection vessels and tools [1].

- Decontaminate Reusable Equipment: If reusable equipment is necessary, decontaminate thoroughly with 80% ethanol followed by a DNA-degrading solution like sodium hypochlorite [1]. Commercial DNA removal solutions are also an option [30].

- Proper Handling of Plasticware: Plasticware or glassware for sample collection or storage should be pre-treated by autoclaving or UV-C light sterilization and remain sealed until the moment of use [1].

- Pre-Treat Gloves: Gloves should be similarly decontaminated and should not touch anything before sample collection [1].

Q3: What types of controls are non-negotiable in a low-biomass 16S rRNA sequencing study?

Including the correct controls is paramount for interpreting data from low-biomass studies and for using computational decontamination tools effectively. The necessary controls are [1] [22] [31]:

- Negative Extraction Controls (Blanks): These contain no sample and are processed alongside your experimental samples through DNA extraction and library preparation. They identify contaminating DNA from reagents and kits.

- Sampling Controls: These can include swabs of the air in the sampling environment, an empty collection vessel, or aliquots of the preservation solution. They help identify contaminants introduced during the collection process itself.

- Positive Controls (Mock Microbial Communities): These are mixtures of known bacteria. A dilution series of a mock community is particularly valuable as it helps evaluate the success of contaminant identification methods and shows how decreasing biomass increases the relative impact of contamination [22].

Q4: My negative controls show bacterial sequences. How do I determine if these are also present in my true samples?

This is a central challenge in low-biomass research. Simply removing all sequences found in negative controls from your dataset can be too harsh, as it may erroneously remove genuine, low-abundance taxa [22]. The recommended approach is to use bioinformatic tools that can distinguish contaminants based on their patterns of abundance. The R package Decontam, for instance, can identify contaminant sequences based on their inverse correlation with DNA concentration (the "frequency" method) or their higher prevalence in negative controls than in true samples (the "prevalence" method) [22]. Other tools like SourceTracker and the recently developed CleanSeqU algorithm also use control data to statistically identify and remove contaminant sequences while preserving true biological signals [15] [22].

Q5: Beyond chemicals, what PPE and physical barriers are necessary during sampling?

Personal protective equipment (PPE) acts as a critical physical barrier to prevent contamination from the investigator. The appropriate level of PPE depends on the biomass of the sample, but core principles include [1] [32]:

- Full Body Coverage: Wear coveralls or cleansuits, gloves, goggles, shoe covers, and face masks.

- Protection from Aerosols: PPE protects samples from human aerosol droplets generated by breathing or talking, as well as cells shed from skin, hair, and clothing [1].

- Cleanroom Standards: For extremely sensitive work (e.g., ancient DNA or cleanroom sampling), extensive PPE including face masks, full-body suits, visors, and multiple glove layers is standard to eliminate skin exposure [1].

Troubleshooting Guides

Problem: Inconsistent Microbiome Profiles Across Replicates of Low-Biomass Samples

Potential Cause: Cross-contamination between samples during processing or variable contamination from reagents.

Solutions:

- Review Laboratory Workflow: Implement a unidirectional workflow, using separate rooms or dead-air cabinets for pre- and post-PCR work [30]. Use aerosol-resistant pipette tips.

- Include More Controls: Increase the number of negative extraction controls to better account for the "rule of small numbers," where a limited number of controls may not capture all contaminants [15].

- Apply Computational Decontamination: Use a bioinformatic pipeline like Decontam or CleanSeqU with your negative control data to identify and remove contaminant sequences from your dataset [15] [22].

- Quantify Bacterial Load: Use qPCR to determine the 16S rRNA gene copy number in each sample. This quantitative data can be integrated with sequencing data to better identify contaminants, as their relative abundance is often inversely correlated with total bacterial load [16].

Problem: Known Reagent Contaminants Appear as Abundant Taxa in Samples

Potential Cause: The high sensitivity of 16S rRNA PCR can amplify trace DNA from reagents, which becomes dominant when the true biological signal is very low.

Solutions:

- Procure DNA-Free Reagents: Source molecular biology grade water and extraction kits that are certified DNA-free.

- Treat Reagents: If possible, pre-treat reagents with methods to degrade DNA, such as UV irradiation or DNase, though effectiveness can vary [22].

- Leverage Positive Controls: Use a dilution series of a mock community. This allows you to observe how contaminants increase as biomass decreases and to fine-tune your decontamination algorithm's parameters [22].

- Use Advanced Bioinformatics: Apply algorithms capable of identifying abundant contaminants. For example, CleanSeqU uses Euclidean distance similarity to compare the compositional pattern of dominant taxa in samples and blank controls, effectively removing taxa that show a similar proportional pattern to the blank [15].

Experimental Protocols & Data

Detailed Protocol: qPCR-Assisted Decontamination of 16S rRNA Gene Amplicon Datasets

This protocol outlines a method for in silico decontamination that combines sequencing data with quantitative PCR to better distinguish contaminants from true signals [16].

Methodology:

- Sample Processing and qPCR: Extract DNA from your samples and negative controls. Perform qPCR targeting a segment of the 16S rRNA gene (e.g., the V3 region) using universal bacterial primers. Use a standard curve (e.g., from E. coli or S. aureus DNA) to estimate the 16S rRNA gene copy number in each sample and negative control.

- 16S rRNA Gene Amplicon Sequencing: Generate V3–V4 16S rRNA gene amplicons from the same DNA extracts and sequence them on an Illumina platform. Process the sequences through a standard pipeline (e.g., QIIME2 with DADA2) to generate an Amplicon Sequence Variant (ASV) table.

- In Silico Decontamination Calculation:

- For each ASV in each sample, calculate its "absolute" abundance in arbitrary units by multiplying its relative abundance (from the ASV table) by the 16S rRNA gene copy number for that sample (from qPCR).

- Calculate the ratio (R-OTU) for each ASV: (mean "absolute" abundance in negative controls) / (mean "absolute" abundance in true samples).

- Set a cutoff value for R-OTU (e.g., 0.01). Remove any ASV whose R-OTU ratio exceeds this cutoff: its "absolute" abundance in negative controls is too high relative to true samples for it to be treated as genuine signal, so it is likely a contaminant.
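The calculation in the steps above can be sketched as follows. The data layout (per-library lists of relative abundances and qPCR copy numbers), the function names, and the example values are hypothetical; the sketch assumes at least one negative control and one true sample.

```python
def r_otu_ratios(rel_abundance, copies_16s, is_control):
    """Compute R-OTU = mean 'absolute' abundance in controls / mean in samples.

    rel_abundance: dict ASV -> list of relative abundances, one per library
    copies_16s: list of qPCR-estimated 16S rRNA gene copies, one per library
    is_control: list of booleans marking negative-control libraries
    """
    ratios = {}
    for asv, fractions in rel_abundance.items():
        # 'Absolute' abundance in arbitrary units: relative abundance x copy number.
        absolute = [f * c for f, c in zip(fractions, copies_16s)]
        ctrl = [a for a, flag in zip(absolute, is_control) if flag]
        samp = [a for a, flag in zip(absolute, is_control) if not flag]
        mean_ctrl = sum(ctrl) / len(ctrl)
        mean_samp = sum(samp) / len(samp)
        if mean_samp == 0:
            # Absent from all samples: treat as contaminant only if seen in controls.
            ratios[asv] = float("inf") if mean_ctrl > 0 else 0.0
        else:
            ratios[asv] = mean_ctrl / mean_samp
    return ratios


def contaminant_asvs(ratios, cutoff=0.01):
    """Return ASVs whose R-OTU ratio exceeds the cutoff."""
    return sorted(asv for asv, ratio in ratios.items() if ratio > cutoff)


if __name__ == "__main__":
    copies = [1e5, 2e5, 1e2, 2e2]           # two samples, then two blanks
    is_control = [False, False, True, True]
    table = {
        "ASV_A": [0.9, 0.8, 0.1, 0.0],      # dominant in samples
        "ASV_B": [0.001, 0.0005, 0.9, 1.0], # dominant in blanks
    }
    ratios = r_otu_ratios(table, copies, is_control)
    print(ratios, contaminant_asvs(ratios))
```

Weighting by copy number is what separates this from a purely relative comparison: an ASV that dominates a blank still contributes few "absolute" units if the blank contains very little 16S template.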


Quantitative Data on Decontamination Efficiency

The table below summarizes how efficiently various cleaning strategies remove cell-free DNA from different surfaces, measured as the mtDNA recovered from contaminated surfaces after cleaning [30].

Table 1: Efficiency of Cleaning Strategies for DNA Removal from Different Surfaces

| Cleaning Agent | Surface | Mean mtDNA Copies Recovered (Cell-Free DNA) | Percent Yield vs. Control (Cell-Free DNA) |
|---|---|---|---|
| No-treatment control | Plastic | 9,396,667 | 100.0% |
| No-treatment control | Metal | 5,701,333 | 100.0% |
| No-treatment control | Wood | 4,792,667 | 100.0% |
| 70% Ethanol | Plastic | 1,066,667 | 11.4% |
| 70% Ethanol | Metal | 1,680,000 | 29.5% |
| 70% Ethanol | Wood | 1,436,000 | 30.0% |
| UV Radiation | Plastic | 1,733,333 | 18.4% |
| UV Radiation | Metal | 1,205,333 | 21.1% |
| UV Radiation | Wood | 1,140,000 | 23.8% |
| 0.5% Sodium Hypochlorite (Fresh) | Plastic | 11,467 | 0.1% |
| 0.5% Sodium Hypochlorite (Fresh) | Metal | 17,200 | 0.3% |
| 0.5% Sodium Hypochlorite (Fresh) | Wood | 3,333 | 0.1% |
| 1% Virkon | Plastic | 29,867 | 0.3% |
| 1% Virkon | Metal | 13,067 | 0.2% |
| 1% Virkon | Wood | 10,800 | 0.2% |
| 10% Trigene | Plastic | 12,533 | 0.1% |
| 10% Trigene | Metal | 17,467 | 0.3% |
| 10% Trigene | Wood | 5,467 | 0.1% |
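The percent-yield column follows directly from the copy numbers: recovered copies after cleaning divided by the no-treatment control for the same surface. A minimal sketch of that arithmetic, using values from the table (the function name is hypothetical):

```python
def percent_yield(recovered_copies: float, control_copies: float) -> float:
    """Recovered DNA as a percentage of the no-treatment control, to one decimal."""
    if control_copies <= 0:
        raise ValueError("control copies must be positive")
    return round(100 * recovered_copies / control_copies, 1)


if __name__ == "__main__":
    controls = {"Plastic": 9_396_667, "Metal": 5_701_333, "Wood": 4_792_667}
    ethanol = {"Plastic": 1_066_667, "Metal": 1_680_000, "Wood": 1_436_000}
    for surface, recovered in ethanol.items():
        print(f"70% ethanol, {surface}: {percent_yield(recovered, controls[surface])}%")
```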

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents and Materials for Low-Biomass Sampling and Decontamination

| Item | Function / Explanation | Key Considerations |

|---|---|---|

| Sodium Hypochlorite (Bleach) | A highly effective DNA-degrading agent for surface decontamination [30]. | Prepare fresh dilutions for maximum efficacy; concentration of available chlorine decreases over time [30]. |

| DNA-Free Water | Used as a solvent for molecular biology reactions and for moistening swabs. | A common source of contaminating DNA; ensure it is certified DNA-free [15]. |

| Forensic-Grade Swabs | For sample collection from surfaces. | Use single-use, DNA-free swabs to avoid introducing contaminants [32]. |

| Personal Protective Equipment (PPE) | A physical barrier to prevent contamination from the investigator [1] [32]. | Should include gloves, mask, cleansuit, and hair cover. Change gloves frequently. |

| Negative Extraction Control | Contains no sample and is processed identically to true samples to identify reagent-derived contaminants [22] [16]. | Essential for all computational decontamination methods. |

| Mock Microbial Community | A defined mixture of known microorganisms used as a positive control [22] [31]. | A dilution series can be used to validate decontamination protocols and benchmark bioinformatic tools [22]. |

| qPCR Reagents | For quantifying total bacterial load via 16S rRNA gene copy number [16]. | This quantitative data can be combined with sequencing data to improve contaminant identification [16]. |

In 16S rRNA gene sequencing, particularly for low biomass samples, the DNA extraction method is not merely a preliminary step but a major determinant of experimental success. Low biomass samples (such as tissue swabs, biopsies, and human milk) contain few microbial cells, making complete and unbiased lysis of those cells paramount. The method you choose directly affects DNA yield, purity, and, most critically, how faithfully the microbial community is represented. Incomplete lysis can skew results, under-representing tough-to-lyse Gram-positive bacteria and fundamentally altering the perceived microbial diversity [33] [9] [34]. This guide provides a technical deep dive into the performance of three core DNA extraction technologies (silica columns, bead-based methods, and chemical precipitation) to help you select and troubleshoot the optimal protocol for your low biomass research.

Methodology: How We Compare the DNA Extraction Methods

To ensure a fair and quantitative comparison, the performance of DNA extraction methods is typically evaluated using a combination of standardized samples and a set of wet- and dry-lab criteria.

Standardized Samples for Evaluation:

- Mock Communities: Defined mixtures of bacterial species with known abundances, including both Gram-positive and Gram-negative bacteria. These are the gold standard for assessing extraction accuracy and bias, as they allow for a direct comparison between observed sequencing results and the theoretical composition [33] [35] [34].

- Low Biomass Clinical Samples: Real-world samples like human milk, gill swabs, or stool dilutions are used to test protocol performance under realistic conditions where inhibitor content and host DNA contamination are challenges [9] [34] [36].

Performance Evaluation Criteria:

- Wet-Lab Metrics: DNA yield (concentration), DNA purity (A260/280 ratio), and DNA fragment size.

- Dry-Lab (Sequencing) Metrics: Alpha-diversity (a measure of within-sample diversity, correlated with effective lysis of Gram-positives), and accuracy in recovering the expected composition of mock communities [33] [9].

Table: Key Performance Metrics for DNA Extraction Method Evaluation

| Metric | Description | Why It Matters for Low Biomass |

|---|---|---|

| DNA Yield | Total quantity of DNA recovered | Critical for downstream library prep; low yield may fail to sequence. |

| DNA Purity (A260/280) | Ratio indicating protein or RNA contamination | Contaminants can inhibit enzymatic reactions in PCR and sequencing. |

| Fragment Size | Average length of extracted DNA fragments | Shorter fragments may indicate excessive shearing, affecting library quality. |

| Alpha-Diversity | Richness and evenness of species in a sample (e.g., Chao1, Shannon) | Under-lysed samples show artificially low diversity. |

| Taxonomic Accuracy | Fidelity in recovering expected mock community composition | Reveals bias against hard-to-lyse (e.g., Gram-positive) bacteria. |
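For readers unfamiliar with the alpha-diversity metrics named in the table, the sketch below computes the Shannon index (natural log) and the bias-corrected form of the Chao1 richness estimator from a vector of per-taxon read counts. The example counts are hypothetical, and real analyses would normally rely on an established package rather than hand-rolled functions.

```python
from math import log


def shannon_index(counts):
    """Shannon diversity H = -sum(p_i * ln p_i) over taxa with non-zero counts."""
    total = sum(counts)
    if total <= 0:
        raise ValueError("counts must contain at least one read")
    return -sum((n / total) * log(n / total) for n in counts if n > 0)


def chao1(counts):
    """Bias-corrected Chao1: S_obs + F1 * (F1 - 1) / (2 * (F2 + 1)),
    where F1 and F2 are the numbers of singleton and doubleton taxa."""
    observed = sum(1 for n in counts if n > 0)
    singletons = sum(1 for n in counts if n == 1)
    doubletons = sum(1 for n in counts if n == 2)
    return observed + singletons * (singletons - 1) / (2 * (doubletons + 1))


if __name__ == "__main__":
    even_community = [10, 10, 10, 10]
    skewed_community = [5, 3, 1, 1, 2, 0]
    print(shannon_index(even_community), chao1(even_community))
    print(shannon_index(skewed_community), chao1(skewed_community))
```

Because Chao1 depends on singleton and doubleton counts, it is sensitive to the low-abundance artifacts discussed throughout this guide, so it is best interpreted after decontamination.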

Performance Showdown: A Quantitative Comparison

Independent studies have systematically compared these methods to uncover their strengths and weaknesses. The following table summarizes the typical performance characteristics of each method in the context of low biomass and complex samples.

Table: Direct Comparison of DNA Extraction Methods for 16S Sequencing

| Method | Mechanism | Best For | Pros | Cons |

|---|---|---|---|---|

| Silica Columns (e.g., QIAamp Stool Mini, DNeasy PowerSoil Pro) | DNA binds to silica membrane under high-salt conditions; washed and eluted. | Standardized processing; high purity needs [34]. | High purity; easy to automate; cost-effective for high-throughput [37]. | Can be biased if lysis is incomplete; may not recover all Gram-positives without bead-beating [33]. |

| Bead-Based / Bead-Beating (e.g., DNeasy PowerLyzer PowerSoil, ZymoBIOMICS) | Mechanical disruption via vigorous shaking with small beads. | Low biomass samples; tough-to-lyse Gram-positive bacteria [33] [9]. | Excellent for robust lysis of diverse cells; high yield and diversity [33]. | Can shear DNA if overdone; potential for inter-protocol variability [35]. |

| Chemical Precipitation (e.g., Phenol-Chloroform, Alkaline Lysis) | Organic extraction or alkaline denaturation to separate DNA. | Budget-conscious labs; specific Gram-positive targets (alkaline method) [35]. | No specialized equipment needed; effective on some tough cells [35]. | Toxic reagents (phenol); complex, manual steps; lower purity [37]. |

Key Research Findings:

- Bead-Beating Enhances Diversity: A 2023 study comparing four DNA extraction methods for gut microbiome analysis found that protocols incorporating bead-beating (e.g., DNeasy PowerLyzer PowerSoil) consistently yielded higher observed microbial alpha-diversity, which is strongly correlated with the effective lysis of Gram-positive bacteria [33].

- The Low Biomass Limit: Research from 2021 demonstrated that sample biomass itself is a primary limiting factor. For 16S rRNA gene analysis to be robust and reproducible, a minimum of 10^6 bacterial cells per sample is required. Below this threshold, sample identity is lost, regardless of the extraction method used [9].

- The "Rapid" Alkaline Method: A novel, non-bead-beating method using potassium hydroxide (KOH), heat, and detergent showed promise in 2024. This "Rapid" protocol provided consistent representation of both easily lysed and difficult-to-lyse bacteria, recovering higher levels of Firmicutes (Gram-positives) in mock communities and human fecal samples compared to some standard protocols [35].

- Kit Performance in Human Milk: A 2023 study on human milk microbiome identified the DNeasy PowerSoil Pro (bead-based) and MagMAX Total Nucleic Acid Isolation (magnetic bead-based) kits as providing the most consistent 16S rRNA gene sequencing results with the lowest levels of contamination, a critical factor for low biomass samples [34].
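The biomass threshold reported in [9] can be applied as a simple screening step before interpreting results. The sketch below assumes that a per-sample estimate of bacterial cell number is already available (for example from a quantitative assay); the sample identifiers, the estimates, and the function name are hypothetical.

```python
# Threshold quoted in the text from [9]: 10^6 bacterial cells per sample.
MIN_CELLS_PER_SAMPLE = 1e6


def flag_low_biomass(estimated_cells, threshold=MIN_CELLS_PER_SAMPLE):
    """Return {sample_id: True} for samples whose estimate falls below the threshold."""
    return {sample_id: cells < threshold for sample_id, cells in estimated_cells.items()}


# Hypothetical estimates of bacterial cells per sample.
estimates = {"swab_01": 5e4, "swab_02": 3e6, "stool_01": 1e9}

for sample_id, is_low in flag_low_biomass(estimates).items():
    status = "below threshold, interpret with caution" if is_low else "above threshold"
    print(sample_id, status)
```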

Troubleshooting Guide & FAQs

Frequently Asked Questions

Q1: My DNA yield from a low biomass swab sample is too low for library prep. What can I do?

- A: First, confirm the problem with a fluorescence-based quantification assay, which is more accurate than absorbance readings at low DNA concentrations. Consider switching to a bead-beating protocol, as it generally provides higher yields and better lysis efficiency. For very precious samples, you can use a whole genome amplification kit, but be aware that this may introduce bias [9]. Optimizing sample collection to maximize bacterial recovery and minimize host DNA (for example, using surfactant-based washes instead of whole tissue) can dramatically improve 16S rRNA gene recovery [36].

Q2: My DNA purity (A260/A280) is low. What does this indicate and how can I fix it?

- A: A low A260/A280 ratio (<1.8) typically indicates protein contamination. Ensure you are using the correct lysis conditions and that all washing steps are thoroughly performed. A high ratio (>2.0) may suggest RNA contamination, which can be mitigated by adding an RNase A digestion step during extraction [33] [38].
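The interpretation rule in the answer above can be written as a small helper. This is a sketch that encodes only the two cut-offs given in the text (1.8 and 2.0); the function name and the wording of the returned messages are assumptions for illustration.

```python
def interpret_a260_a280(ratio):
    """Classify an A260/A280 reading using the cut-offs quoted in the text."""
    if ratio < 1.8:
        return "possible protein contamination"
    if ratio > 2.0:
        return "possible RNA contamination"
    return "within the typical range for DNA"


for reading in (1.5, 1.9, 2.2):
    print(reading, interpret_a260_a280(reading))
```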

Q3: Why is my microbial diversity lower than expected, and how is it related to DNA extraction?

- A: Low diversity often stems from incomplete cell lysis. Gram-positive bacteria with thick peptidoglycan layers are frequently under-represented in gentle lysis protocols. The solution is to incorporate a mechanical lysis step, such as bead-beating. Studies show that increasing the duration and number of mechanical lysis cycles improves how accurately the bacterial composition is represented [33] [9].

Q4: I'm seeing a lot of contamination in my negative controls. What is the source?

- A: Contamination in low biomass studies is a major challenge. Sources can include reagents, kits, and the laboratory environment. Always include negative control samples (e.g., blank extractions with water) to identify contaminating sequences. Using UV-irradiated benches, dedicated equipment, and commercially verified low-biomass kits can help mitigate this issue [34] [39].
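One simple way to use negative controls is to compare each taxon's mean relative abundance in the blanks with its mean relative abundance in the real samples. The sketch below is a deliberately simplified heuristic under that assumption, not a substitute for dedicated statistical decontamination tools; the function names, the decision rule, and the example taxa and counts are all illustrative.

```python
def relative_abundance(counts):
    """Convert a {taxon: read_count} table to {taxon: fraction of reads}."""
    total = sum(counts.values())
    return {taxon: c / total for taxon, c in counts.items()} if total else {}


def mean_relative_abundance(tables, taxon):
    """Mean relative abundance of one taxon across a list of count tables."""
    if not tables:
        return 0.0
    return sum(relative_abundance(t).get(taxon, 0.0) for t in tables) / len(tables)


def flag_contaminants(samples, blanks):
    """Flag taxa that are at least as abundant in blanks as in real samples."""
    taxa = set().union(*[t.keys() for t in samples + blanks])
    flagged = []
    for taxon in taxa:
        in_blanks = mean_relative_abundance(blanks, taxon)
        in_samples = mean_relative_abundance(samples, taxon)
        if in_blanks > 0 and in_blanks >= in_samples:
            flagged.append(taxon)
    return sorted(flagged)


# Hypothetical read counts; taxon names are placeholders for illustration.
samples = [{"Lactobacillus": 900, "Ralstonia": 100},
           {"Lactobacillus": 950, "Ralstonia": 50}]
blanks = [{"Ralstonia": 80, "Lactobacillus": 20}]

print(flag_contaminants(samples, blanks))
```

Taxa flagged this way are candidates for closer inspection rather than automatic removal, since a genuine community member can also appear in blanks through cross-contamination.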

Essential Protocols for Your Research

Detailed Protocol: Bead-Beating Extraction for Low Biomass Samples (based on DNeasy PowerSoil Pro Kit)

This protocol is recommended for its robust lysis and reproducibility with low biomass samples [33] [34].

- Sample Preparation: Centrifuge your sample (e.g., 3.5 mL of human milk) at 13,000-20,000 × g for 15 minutes at 4°C. Carefully remove the fat layer and supernatant.

- Initial Lysis: Resuspend the pellet in the provided Solution CD1 and transfer it to a PowerBead Pro tube.

- Bead-Beating: Lyse cells using a homogenizer (e.g., Precellys Evolution) at 5000 rpm for 45-60 seconds. Keep samples cool at 4°C during beating to prevent DNA degradation.

- Centrifugation: Centrifuge the tubes at 15,000 × g for 1 minute to pellet debris.

- DNA Binding and Washing: Transfer the supernatant to a new tube and complete the DNA purification according to the manufacturer's instructions, using a silica-based membrane column. This includes binding, washing with ethanol-based buffers, and a final dry spin.

- Elution: Elute the pure genomic DNA in a low-EDTA TE buffer or nuclease-free water. Store at -20°C or -80°C.

Detailed Protocol: Rapid Alkaline Lysis for Milligram Quantities

This is a simplified, non-mechanical protocol suitable for milligram-scale samples when bead-beaters are unavailable [35].

- Sample Input: Transfer ≤10 mg of sample (e.g., fecal material) to a 1.5 mL tube.

- Chemical Lysis: Add a pre-prepared lysis buffer containing potassium hydroxide (KOH) and a detergent. Vortex thoroughly to homogenize.

- Incubation: Incubate the sample at 95°C for 10 minutes to facilitate complete cell wall disruption and DNA release.

- Neutralization and Dilution: Dilute the lysate with a neutralization buffer to reduce the pH and ionic strength, making it compatible with downstream PCR.

- Direct Use: The diluted lysate can be used directly as a template in 16S rRNA gene PCR amplification without further DNA purification.

The Scientist's Toolkit: Key Reagents & Solutions

Table: Essential Research Reagents for DNA Extraction from Low Biomass Samples

| Reagent / Kit | Function | Application Note |

|---|---|---|

| DNeasy PowerSoil Pro Kit (QIAGEN) | Bead-beating and silica column purification. | Recommended for low biomass human milk and environmental samples; effective inhibitor removal [34]. |

| ZymoBIOMICS DNA Miniprep Kit (Zymo Research) | Bead-beating and silica column purification. | Effective for a wide range of biomasses down to 10^4 microbes; includes DNA cleanup [9]. |

| Mock Microbial Community (ZymoBIOMICS) | Defined standard for validating extraction bias and sequencing accuracy. | Contains both Gram-positive and Gram-negative bacteria to test lysis efficiency [35] [34]. |

| Proteinase K | Enzyme that digests proteins and degrades nucleases. | Critical for lysis of animal tissues and inactivation of DNases; add before lysis buffer [38] [37]. |

| Lysis Buffer (with KOH) | Alkaline solution that denatures membranes and proteins. | Core of the "Rapid" protocol; effective on tough Gram-positive cell walls [35]. |

| Silica Magnetic Beads | Solid-phase for DNA binding and purification in solution. | Enables automation on liquid handling robots; no centrifugation required [37] [40]. |

Workflow Visualization: Choosing Your Extraction Method

The following diagram illustrates the decision-making process for selecting the most appropriate DNA extraction method based on your sample type and research goals.
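As a rough text-only approximation of such a decision process, the sketch below encodes the "Best For" guidance from the comparison table and the protocols above. It is an assumption-laden simplification: the function name, the three yes/no inputs, and the order of the checks are illustrative and are not taken from any cited study.

```python
def suggest_extraction_method(low_biomass, hard_to_lyse_taxa, bead_beater_available):
    """Suggest an extraction approach from three yes/no questions.

    Simplified from the comparison table: bead-beating for low biomass or
    tough-to-lyse cells, the rapid alkaline protocol when no bead-beater is
    available, and silica columns for standardized processing otherwise.
    """
    if low_biomass or hard_to_lyse_taxa:
        if bead_beater_available:
            return "bead-beating kit"
        return "rapid alkaline lysis"
    return "silica column kit"


print(suggest_extraction_method(low_biomass=True, hard_to_lyse_taxa=False,
                                bead_beater_available=True))
print(suggest_extraction_method(low_biomass=False, hard_to_lyse_taxa=True,
                                bead_beater_available=False))
```

Real method selection also depends on factors this sketch ignores, such as contamination levels of specific kits, throughput, and downstream sequencing platform.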

Frequently Asked Questions (FAQs)

FAQ 1: Why is mechanical lysis, specifically bead beating, considered critical for 16S rRNA gene sequencing from low-biomass samples?

Mechanical lysis is widely regarded as the gold standard for microbiome DNA extraction because it physically disrupts a wide range of bacterial cell types with comparatively little taxon-specific bias. Complex microbial communities inevitably contain tough-to-lyse species, such as Gram-positive bacteria with thick peptidoglycan cell walls, spores, and yeast [41]. If not lysed efficiently, these organisms will be underrepresented in the final sequencing data, leading to a skewed community profile. Methods that rely solely on chemical or thermal lysis often cause overrepresentation of easy-to-lyse organisms (e.g., Gram-negative bacteria) and poor liberation of DNA from tough-to-lyse organisms [41]. Bead beating's physical disruption helps ensure that DNA is released from both easy-to-lyse and recalcitrant microbes, which is paramount for an accurate representation of the true microbial community, especially in low-biomass samples where every cell counts [42] [33] [41].

FAQ 2: How do the duration and repetition of bead beating impact DNA yield, quality, and community representation?

The intensity and duration of mechanical lysis create a trade-off between DNA yield and DNA fragment length. Higher intensity (speed and time) generally increases DNA yield by lysing more cells but also shears DNA into shorter fragments, which can be detrimental for long-read sequencing technologies [43]. Conversely, lower energy input preserves longer DNA fragments but may reduce total yield [43].

Critically, the community representation can be significantly affected. One study on rumen samples found that including a bead-beating step increased total DNA yield but decreased the observed richness of protozoal amplicons [42]. However, another study on vaginal microbiota found that while different lysis methods (including bead beating) resulted in statistically significant differences in beta diversity, these differences were small compared to the biological variation between samples [44]. The optimal setting must therefore balance these factors for your specific sample type and downstream application.

Table 1: Impact of Bead Beating Intensity on DNA Yield and Fragment Length in Soil Samples [43]

| Homogenisation Parameters | Distance Travelled (m) | DNA Yield (Total µg) | Mean DNA Fragment Length (bp) |

|---|---|---|---|

| 4 m s⁻¹ for 5 s | 20 | ~2.5 | 9,324 |

| 4 m s⁻¹ for 10 s | 40 | Sufficient for sequencing | 7,487 |

| 6 m s⁻¹ for 30 s | 180 | ~4.0 | 4,406 |

| Higher Intensity Settings | 360 - 960 | Plateaued | 3,418 - 4,156 |
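The "Distance Travelled" column in Table 1 is the product of homogenisation speed and duration, which gives a single number for comparing settings. A minimal sketch of that arithmetic, using the three fully specified rows of the table (the function name is an assumption):

```python
def distance_travelled(speed_m_per_s, duration_s):
    """Total distance in metres: speed (m/s) multiplied by duration (s)."""
    return speed_m_per_s * duration_s


# (speed in m/s, duration in s) for the three explicit rows of Table 1.
settings = [(4, 5), (4, 10), (6, 30)]

for speed, duration in settings:
    print(f"{speed} m/s for {duration} s -> {distance_travelled(speed, duration)} m")
```

This reproduces the 20 m, 40 m, and 180 m values listed in the table.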

FAQ 3: Are there any validated protocols for bead beating that I can follow?

Yes, several studies and manufacturers have provided validated bead-beating protocols. Zymo Research, using their ZymoBIOMICS Microbial Community Standard, has extensively tested and published parameters for various homogenizers to ensure unbiased nucleic acid extraction with their ZymoBIOMICS DNA Miniprep Kit [41]. Furthermore, a 2023 study optimizing DNA extraction for the human gut microbiome found that a protocol combining a stool preprocessing device with the DNeasy PowerLyzer PowerSoil kit (which includes a bead-beating step) showed the best overall performance [33].

Table 2: Examples of Validated Bead Beating Protocols [41]

| Homogenizer | Recommended Protocol |

|---|---|

| MP FastPrep-24 | 1 minute at max speed, 5 minutes rest. Repeat cycle 5 times (total of 5 minutes bead beating). |

| Biospec Mini-BeadBeater-96 (with 2 ml tubes) | 5 minutes at Max RPM, 5 minutes rest. Repeat cycle 4 times (total of 20 minutes bead beating). |

| Bertin Precellys Evolution | 1 minute at 9,000 RPM, 2 minutes rest. Repeat cycle 4 times (total of 4 minutes bead beating). |

| Vortex Genie (with adapter) | 40 minutes of continuous bead beating (max 18 tubes). |
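The cycle-based protocols in Table 2 can be represented as small records so that total bead-beating time and total bench time are easy to check. This is a sketch only: the class and method names are hypothetical, and because the table does not state whether a rest period follows the final cycle, that choice is exposed as a parameter.

```python
from dataclasses import dataclass


@dataclass
class BeadBeatingSchedule:
    homogenizer: str
    beat_min: float   # minutes of beating per cycle
    rest_min: float   # minutes of rest per cycle
    cycles: int

    def total_beating_min(self):
        """Total minutes of active bead beating."""
        return self.beat_min * self.cycles

    def total_run_min(self, rest_after_last=True):
        """Beating plus rest time; rest after the last cycle is an assumption."""
        rests = self.cycles if rest_after_last else self.cycles - 1
        return self.total_beating_min() + self.rest_min * rests


# Cycle parameters as listed in Table 2.
schedules = [
    BeadBeatingSchedule("MP FastPrep-24", 1, 5, 5),
    BeadBeatingSchedule("Biospec Mini-BeadBeater-96", 5, 5, 4),
    BeadBeatingSchedule("Bertin Precellys Evolution", 1, 2, 4),
]

for s in schedules:
    print(s.homogenizer, s.total_beating_min(), s.total_run_min(rest_after_last=False))
```

The computed beating totals (5, 20, and 4 minutes) match the totals stated in the table.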

FAQ 4: What common issues should I troubleshoot if my 16S sequencing results show low diversity or underrepresentation of Gram-positive bacteria?

If your sequencing data shows low diversity or an unexpected lack of Gram-positive bacteria, the issue most likely lies with inefficient mechanical lysis.

- Problem: Underrepresentation of Gram-positive taxa.

- Potential Cause: The bead-beating step was not intense or long enough to break open the tough peptidoglycan layers of Gram-positive bacterial cell walls.

- Solution: Increase the intensity of mechanical lysis. This can be done by:

  - Increasing the duration of the bead-beating cycles.

  - Incorporating repeated cycles of beating with rest periods in between to prevent excessive heat buildup, as seen in the validated protocols above [41].

  - Ensuring you are using the appropriate size and material of beads (e.g., a mix of zirconia/silica beads) to enhance lysis efficiency [33].

- Verification: Always include a mock community of known composition (e.g., ZymoBIOMICS Microbial Community Standard) in your extraction batches. If your results from the mock community do not match the expected composition, particularly for the tough-to-lyse species, it confirms a bias in your DNA extraction protocol that needs to be addressed [41] [10].
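The mock-community check described above can be sketched as a comparison of observed and expected relative abundances per taxon. Everything specific here is an assumption for illustration: the function names, the two placeholder taxa, the expected fractions, and the 0.5 recovery cut-off are not taken from any standard's documentation.

```python
def fold_recovery(observed_counts, expected_fractions):
    """Observed relative abundance divided by expected fraction, per taxon.

    expected_fractions must contain only taxa with a non-zero expected fraction.
    """
    total = sum(observed_counts.values())
    ratios = {}
    for taxon, expected in expected_fractions.items():
        observed = observed_counts.get(taxon, 0) / total if total else 0.0
        ratios[taxon] = observed / expected
    return ratios


def under_recovered(observed_counts, expected_fractions, min_ratio=0.5):
    """Taxa recovered at less than min_ratio of their expected abundance."""
    ratios = fold_recovery(observed_counts, expected_fractions)
    return sorted(t for t, r in ratios.items() if r < min_ratio)


# Hypothetical two-member mock community with equal expected abundance.
expected = {"Gram-negative A": 0.5, "Gram-positive B": 0.5}
observed = {"Gram-negative A": 900, "Gram-positive B": 100}

print(fold_recovery(observed, expected))
print(under_recovered(observed, expected))
```

If the under-recovered taxa are mainly the tough-to-lyse members of the standard, that points to the lysis step rather than to PCR or sequencing as the source of bias.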

Experimental Protocols for Key Studies