Navigating the Low Biomass Challenge: Strategies for Accurate Microbial Sequencing in Clinical and Research Settings

Abstract

Accurate microbial profiling in low-biomass samples is critical for clinical diagnostics and research but presents significant technical challenges, including contamination risks and host DNA interference. This article synthesizes current methodologies and best practices for reliable sequencing under these conditions. It explores the foundational impact of low microbial load on data integrity, evaluates advanced wet-lab and computational optimization techniques, and provides a comparative analysis of sequencing platforms and validation frameworks. Aimed at researchers and drug development professionals, this resource offers a comprehensive guide for obtaining robust, interpretable data from challenging sample types like urine, blood, and sterile body sites to advance precision medicine and therapeutic discovery.

The Low Biomass Problem: Why Microbial Load Matters for Sequencing Fidelity

Low-biomass environments are characterized by microbial levels that approach the limits of detection of standard DNA-based sequencing approaches [1]. Unlike high-biomass environments like human stool or surface soil, where the target DNA "signal" far exceeds contaminant "noise," low-biomass samples can be disproportionately impacted by even minute amounts of external DNA [1]. This technical challenge underpins a broader thesis: that the intrinsic properties of low microbial load fundamentally alter the reliability and interpretation of sequencing results, potentially leading to spurious biological conclusions if not properly managed.

The definition of low biomass exists on a continuum, but it consistently describes environments where contaminating DNA can constitute a significant, or even majority, fraction of the final sequencing data [2]. These environments range from internal human tissues and blood to ultra-clean industrial manufacturing spaces, presenting a common set of methodological hurdles that must be overcome for accurate characterization [1] [3].

Defining Low-Biomass Environments and Their Challenges

Categories of Low-Biomass Environments

Low-biomass environments span clinical, environmental, and industrial settings. Table 1 summarizes the primary types of low-biomass environments and their specific research challenges.

Table 1: Categories and Characteristics of Low-Biomass Environments

| Category | Example Environments | Key Research Challenges |
|---|---|---|
| Human Tissues | Placenta, fetal tissues, blood, brain, lower respiratory tract, breastmilk, tumors [1] [2] | High host DNA concentration; stringent ethical requirements; difficult sample acquisition [2]. |
| Natural Environments | Atmosphere, hyper-arid soils, deep subsurface, ice cores, treated drinking water [1] | Extreme physical conditions; remote sampling; low and slow-growing microbial populations [1]. |
| Built Environments | Cleanrooms (e.g., spacecraft assembly), hospital operating rooms [3] | Requirement for ultra-sensitive pathogen detection; rigorous sterility standards; reagent contamination dominates signal [3]. |
| Specialized Clinical Samples | Biopsies, cerebrospinal fluid (CSF), synovial fluid [4] [5] | Minimal sample volume; low absolute microbial abundance despite potential clinical significance [4]. |

Core Analytical Challenges in Low-Biomass Research

The accurate characterization of low-biomass microbiomes is hampered by several interconnected technical challenges that can compromise biological conclusions and have fueled scientific controversies [2].

- External Contamination: Microbial DNA introduced from reagents (the "kitome"), sampling equipment, laboratory environments, and personnel can constitute a majority of the sequenced DNA in ultra-low biomass samples [3] [2]. Its impact scales inversely with biomass: the lower the native biomass, the greater the proportional impact of contaminants on the final dataset [1].

- Host DNA Misclassification: In host-associated samples (e.g., tumors), over 99.99% of sequenced reads can be host-derived [2]. If bioinformatic tools misclassify even a tiny fraction of this host DNA as microbial, it can generate significant false-positive signals and obscure true microbial signals [2].

- Cross-Contamination (Well-to-Well Leakage): DNA can transfer between samples processed concurrently, for instance, in adjacent wells of a 96-well plate [1] [2]. This "splashome" effect can violate the core assumptions of computational decontamination tools that rely on negative controls [2].

- Batch Effects and Processing Bias: Technical variability between different laboratory personnel, reagent lots, or DNA extraction batches can introduce significant non-biological variation [2]. In low-biomass studies, these batch effects are magnified and, if confounded with the experimental groups, can create artifactual signals [2].
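
The inverse relationship between native biomass and contaminant impact noted under External Contamination can be made concrete with a few lines of arithmetic. The sketch below is illustrative only: the function name and the fixed background of 100 contaminant copies per reaction are assumptions chosen for the example, not values from the cited studies.

```python
def contaminant_fraction(sample_copies: float, contaminant_copies: float) -> float:
    """Fraction of sequenced template expected to come from contaminants."""
    total = sample_copies + contaminant_copies
    if total <= 0:
        raise ValueError("total template copies must be positive")
    return contaminant_copies / total


# Hypothetical, constant reagent background (assumed value for illustration)
BACKGROUND_COPIES = 100.0

for native in (1_000_000, 10_000, 1_000, 100, 10):
    frac = contaminant_fraction(native, BACKGROUND_COPIES)
    print(f"{native:>9} native copies -> {frac:.2%} of template is contaminant")
```

With the background held constant, the contaminant share is negligible at stool-like loads and becomes the majority of the template once the native signal drops below the background.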

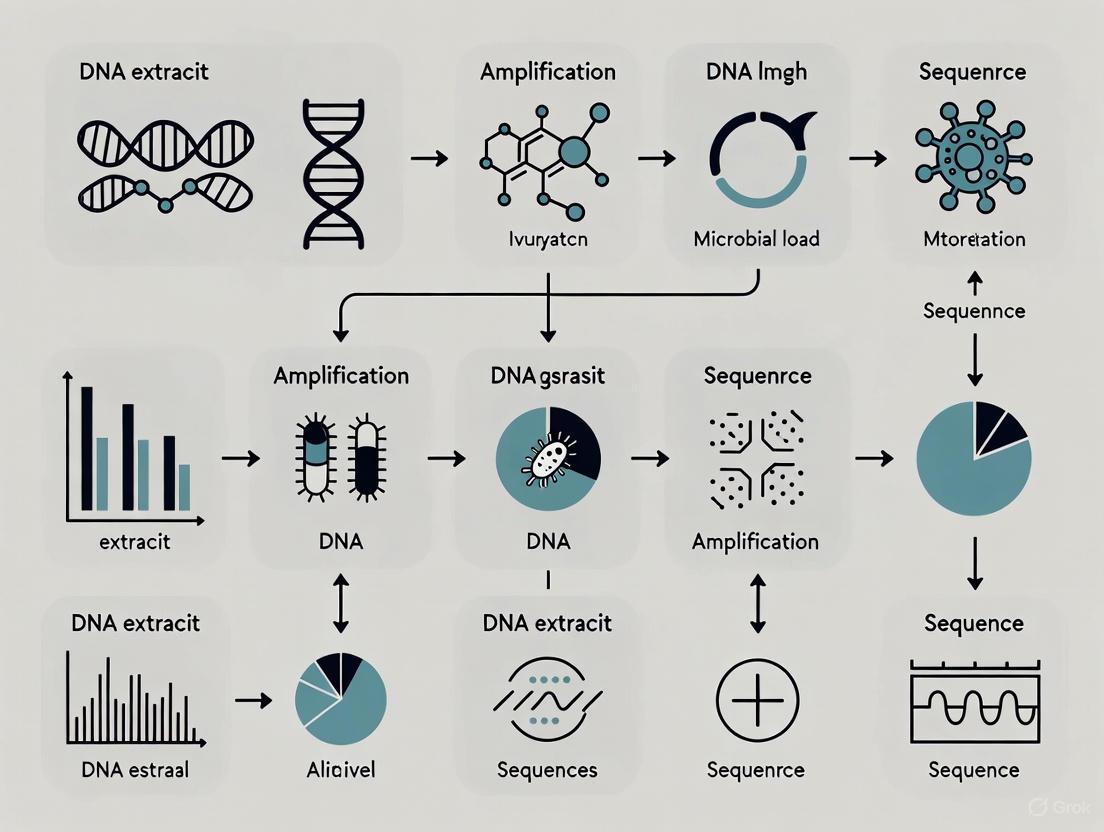

Figure 1: Key analytical challenges in low-biomass microbiome research that can compromise sequencing results and lead to incorrect conclusions.

Methodological Frameworks for Reliable Low-Biomass Analysis

Foundational Principles for Experimental Design

Robust low-biomass research requires strategic planning to mitigate risks at every stage, from sample collection to data analysis [1].

- Avoid Batch Confounding: Experimental groups (e.g., case vs. control) must be distributed evenly across all processing batches (e.g., DNA extraction plates, sequencing runs) [2]. Active balancing is preferred over simple randomization to ensure that technical variation does not create false associations with the phenotype of interest [2].

- Implement Rigorous Decontamination Protocols: All sampling equipment, tools, vessels, and gloves should be decontaminated. A two-step process using 80% ethanol (to kill organisms) followed by a nucleic acid-degrading solution like sodium hypochlorite (bleach) or UV-C irradiation is recommended to remove both viable cells and persistent cell-free DNA [1].

- Use Personal Protective Equipment (PPE) as a Barrier: Personnel should wear extensive PPE—including gloves, masks, cleansuits, and shoe covers—to limit the introduction of human-associated contaminants from skin, hair, and aerosolized droplets [1]. Protocols from ancient DNA labs and cleanrooms, which require full-body coverage, provide a leading standard [1].
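
One way to implement the active balancing described under Avoid Batch Confounding is to deal samples from each experimental group across batches in round-robin order. The following is a minimal sketch under that assumption; `balanced_batches` and the sample labels are hypothetical, and a real design would usually also randomize well positions within each plate.

```python
import random
from collections import defaultdict


def balanced_batches(samples, n_batches, seed=0):
    """Assign (sample_id, group) pairs to batches so each group is spread evenly.

    Samples are shuffled within each group, then dealt round-robin across
    batches, so per-batch counts of any group differ by at most one.
    """
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample_id, group in samples:
        by_group[group].append(sample_id)

    batches = [[] for _ in range(n_batches)]
    offset = 0
    for group in sorted(by_group):
        ids = by_group[group]
        rng.shuffle(ids)
        for i, sample_id in enumerate(ids):
            batches[(i + offset) % n_batches].append((sample_id, group))
        offset += len(ids)  # continue dealing where the previous group stopped
    return batches


# Hypothetical study: 12 cases and 12 controls over 3 extraction plates
samples = [(f"case{i}", "case") for i in range(12)]
samples += [(f"ctrl{i}", "control") for i in range(12)]
for plate_no, plate in enumerate(balanced_batches(samples, 3), start=1):
    n_case = sum(1 for _, g in plate if g == "case")
    print(f"plate {plate_no}: {n_case} cases, {len(plate) - n_case} controls")
```

Unlike simple randomization, this guarantees that no plate ends up dominated by one group by chance.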

The Critical Role of Experimental and Process Controls

The use of comprehensive controls is non-negotiable in low-biomass research. These controls are essential for identifying contamination sources and informing computational decontamination [2].

- Sampling Controls: These account for contaminants introduced during collection and include empty collection vessels, swabs exposed to the air, swabs of PPE, or aliquots of preservation solution [1].

- Process Controls ("Blanks"): These are included at various stages (e.g., DNA extraction, PCR amplification) to profile the contaminating DNA introduced by reagents and laboratory processes, collectively known as the "kitome" [3] [2].

- Positive Controls: Using a synthetic microbial community (e.g., ZymoBIOMICS Standard) in the same dilution solvent as the samples helps benchmark protocol performance and accuracy [5].

Figure 2: Essential process controls must be integrated at each stage of the low-biomass workflow to identify contamination sources.

Advanced Protocols for Enhanced Sensitivity and Specificity

Cutting-edge methodological adaptations are pushing the boundaries of detection in low-biomass environments.

- Micelle PCR (micPCR) for Absolute Quantification: This emulsion-based PCR technique compartmentalizes single template DNA molecules for clonal amplification, preventing chimera formation and PCR competition biases [4]. By incorporating a single internal calibrator (IC), it enables absolute quantification of 16S rRNA gene copies, allowing for the subtraction of contaminating DNA molecules identified in negative controls [4].

- Full-Length 16S rRNA Gene Sequencing with Nanopore: Targeting the full-length 16S rRNA gene with long-read nanopore sequencing, rather than short hypervariable regions (e.g., V4), dramatically improves species-level resolution [4]. This approach has been successfully applied to clinical samples, reducing the time-to-result to just 24 hours [4].

- Efficient Sample Collection and Concentration: For surface sampling, novel devices like the SALSA (Squeegee-Aspirator for Large Sampling Area) can achieve >60% recovery efficiency, far surpassing the ~10% efficiency of traditional swabs [3]. Subsequent concentration of samples using hollow fiber concentration pipette tips is crucial for achieving DNA yields compatible with sequencing [3].
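
The calibrator-based quantification described for micPCR can be sketched as a simple proportion: reads per taxon are scaled by the known number of calibrator copies, and copies measured in a negative control are then subtracted. This is an illustrative reading of that idea, not the published pipeline; the function names, taxon names, and numbers below are assumptions made for the example.

```python
def copies_from_calibrator(read_counts, calibrator, calibrator_copies):
    """Convert per-taxon read counts to estimated 16S copies via the calibrator."""
    ic_reads = read_counts.get(calibrator, 0)
    if ic_reads == 0:
        raise ValueError("calibrator not detected; cannot quantify")
    scale = calibrator_copies / ic_reads  # copies represented by one read
    return {t: n * scale for t, n in read_counts.items() if t != calibrator}


def subtract_background(sample_copies, control_copies):
    """Subtract negative-control copies per taxon, flooring at zero."""
    return {
        taxon: max(copies - control_copies.get(taxon, 0.0), 0.0)
        for taxon, copies in sample_copies.items()
    }


# Hypothetical read counts; 1000 calibrator copies added to every reaction
IC_COPIES = 1000
sample_reads = {"IC": 500, "Staphylococcus": 5000, "Ralstonia": 100}
control_reads = {"IC": 1000, "Ralstonia": 150}

sample = copies_from_calibrator(sample_reads, "IC", IC_COPIES)
control = copies_from_calibrator(control_reads, "IC", IC_COPIES)
print(subtract_background(sample, control))
```

Because both the sample and the control are expressed in copies rather than reads, the subtraction is meaningful even when their sequencing depths differ.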

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Key Research Reagent Solutions for Low-Biomass Studies

| Item | Function | Application Notes |
|---|---|---|
| DNA Decontamination Solutions | Degrades contaminating environmental DNA on surfaces and equipment. | Sodium hypochlorite (bleach) or commercial DNA removal solutions are used after ethanol decontamination [1]. |
| SALSA Sampling Device | High-efficiency surface sampling via squeegee and aspiration. | Bypasses elution inefficiencies of swabs; achieves >60% recovery; uses disposable components to prevent cross-contamination [3]. |
| Hollow Fiber Concentrator (e.g., InnovaPrep CP) | Concentrates microbial cells and DNA from large volume liquid samples. | Critical for achieving detectable DNA concentrations from dilute samples; uses a 0.2 µm polysulfone hollow fiber [3]. |
| Internal Calibrator (IC) for micPCR | Enables absolute quantification of 16S rRNA gene copies in a sample. | A known quantity of added DNA (e.g., Synechococcus) corrects for amplification biases and allows subtraction of background contamination [4]. |
| Synthetic Microbial Community (e.g., ZymoBIOMICS) | Serves as a positive control to assess protocol accuracy and bias. | Should be diluted in the same solvent as the samples (e.g., elution buffer) to avoid skewed community profiles [5]. |
| Nanopore Rapid PCR Barcoding Kit | Prepares low-input DNA libraries for long-read sequencing. | Allows for sequencing of full-length 16S rRNA genes; requires modification for ultra-low input (<10 pg) [3] [4]. |
| AMPure XP Beads | Purifies and size-selects amplicons post-PCR. | Double-sided clean-up (two consecutive purifications) is recommended for removing primer dimers and improving sequencing quality [5]. |

Defining low-biomass samples extends beyond a simple quantitative threshold of microbial cells; it encapsulates a state where the authentic biological signal is vulnerable to being overwhelmed by technical noise at every stage of the research workflow. The core thesis that low microbial load profoundly impacts sequencing results is well-supported by the persistent controversies and methodological refinements in this field. Success hinges on a holistic strategy that integrates meticulous experimental design, comprehensive controls, and specialized protocols. By adopting these rigorous frameworks, researchers can reliably discern true microbial signals from artifactual noise, enabling accurate exploration of the microbiomes inhabiting the most challenging and extreme environments.

In microbial genomics, samples with low microbial load present a formidable challenge, amplifying the effects of key technical hurdles including host DNA contamination, reagent-derived contaminants, and stochastic effects. The disparity in genome size between host cells and microbes is staggering: a single human cell carries a genome of approximately 3 Gb, while a viral particle may carry only 30 kb, a difference of about five orders of magnitude [6]. In samples with high host content, such as tissues and body fluids, more than 99% of sequences in metagenomic data can originate from the host, effectively obscuring signals from pathogenic microorganisms and consuming over 90% of sequencing resources [6]. Simultaneously, the stochastic appearance of contaminating viral sequences in laboratory reagents introduces significant noise, which is particularly problematic in low-biomass samples where true signals are faint [7] [8]. Together, these factors compromise sensitivity, quantification accuracy, and reproducibility in microbiome research and clinical diagnostics. This review examines these interconnected challenges and synthesizes current methodological solutions to advance the reliability of sequencing-based microbial detection.

The High Host DNA Problem: Strategies and Methodologies

Host DNA Removal Techniques

High host DNA content drastically reduces sequencing sensitivity for detecting microbial pathogens. Effective host DNA removal is therefore a critical prerequisite for metagenomic studies, particularly in clinical samples like tissues and body fluids [6]. The following table summarizes the primary methods available:

Table 1: Methods for Host DNA Removal in Metagenomic Sequencing

| Method | Principle | Advantages | Limitations | Applicable Scenarios |
|---|---|---|---|---|
| Physical Separation (Centrifugation, Filtration) | Exploits density/size differences between host cells and microbes [6] | Low cost, rapid operation [6] | Cannot remove intracellular or free host DNA from lysed cells [6] | Virus enrichment, body fluid samples [6] |
| Targeted Amplification (PCR, MDA) | Selective enrichment of microbial genomes using specific or random primers [6] | High specificity, strong sensitivity for low biomass [6] | Primer bias affects species abundance quantification [6] | Low biomass, known pathogen screening [6] |
| Host Genome Digestion | Enzymatic (DNase) or chemical cleavage of host DNA while microbes are protected [6] | Efficient removal of free host DNA [6] | May damage microbial cell integrity if protocol is not optimized [6] | Tissue samples, samples with high host content [6] |
| Bioinformatics Filtering | Computational removal of reads aligning to host reference genome post-sequencing [6] | No experimental manipulation, highly compatible [6] | Dependent on a complete reference genome; cannot remove host-homologous sequences [6] | Routine samples, final data cleaning step [6] |

Impact and Evidence for Host DNA Removal

The effectiveness of host DNA purification is demonstrated by significant improvements in data quality. In studies of human and mouse colon biopsies, host DNA removal increased the number of bacterial reads and the number of bacterial species detected per sample [6]. Furthermore, the rate of bacterial gene detection increased by 33.89% in human and 95.75% in mouse colon tissues after host DNA removal, dramatically improving functional profiling [6]. Critically, these methods can enhance sensitivity without disrupting the native microbial community structure, as no significant differences in the dominance of major bacterial phyla were observed between experimental and control groups [6].

Diagram 1: A framework of host DNA removal strategies and their outcomes. "Pre-seq" methods are applied during sample preparation, while "Post-seq" filtering is a computational process.

Contamination and Background Noise

Contamination in viral metagenomics can be categorized as either external or internal, each with distinct origins and characteristics [8]. External contamination originates from outside the sample during collection and processing, including from patient skin, laboratory equipment, collection tubes, contaminated surfaces or air, and most notably, molecular biology reagents and kits [8]. These reagent-derived contaminants, often called the "kitome," form a unique profile specific to particular reagents and batches, making them largely indistinguishable from true microbiome signals [8]. Internal contamination typically arises from cross-contamination between samples during processing in the laboratory, which can be especially problematic in high-throughput amplicon sequencing [9].

Extraction kits represent a major source of nucleic acid background noise. One study identified 88 bacterial genera in commonly used DNA extraction kits, and it is estimated that 10–50% of the bacterial profiles in lower-airway human samples are contaminants derived from these kits [8]. RNA sequencing is particularly vulnerable due to the additional reverse transcription step; commercially available reverse transcriptase enzymes have been found to contain viral contaminants such as equine infectious anemia virus or murine leukemia virus [8].

Solutions for Contamination Detection and Control

Addressing contamination requires a multi-faceted approach combining experimental and computational strategies:

- Batch Control: Process all samples in a project using the same batches/lots of reagents to minimize variable background noise [8].

- Negative Controls: Include multiple negative controls (non-template controls) throughout the experimental process to identify contaminating sequences linked to reagents and protocols [7].

- Spike-In Controls: Use synthetic DNA spike-ins (SDSIs) or External RNA Control Consortium (ERCC) RNA standards added during sample processing to qualitatively track inter-sample contamination [9].

- Computational Tools: Employ specialized bioinformatics tools like Polyphonia, which detects inter-sample contamination directly from deep sequencing data using intrahost variant frequencies without requiring additional controls [9].

Table 2: Quantitative Performance of Contamination Detection Methods in a SARS-CoV-2 Study

| Method Category | Specific Method | Detection Principle | Contamination Events Detected | Key Limitation |
|---|---|---|---|---|
| Experimental Spike-Ins | SDSIs (DNA), ERCC (RNA) [9] | Qualitative tracking via added controls [9] | 6 events in 1102 samples [9] | Cannot detect contamination prior to spike-in addition [9] |
| Computational Tool | Polyphonia [9] | Analysis of minor alleles matching consensus of putative contaminant [9] | 2 events in 1102 samples (1 overlap with spike-ins) [9] | Requires sufficient read depth (≥100) and genomic coverage [9] |

Stochastic Effects and Quantification in Low Biomass Samples

The Challenge of Stochastic Effects and Absolute Quantification

In low microbial load samples, stochastic effects significantly impact detection reliability and quantification accuracy. These effects manifest as the random and inconsistent appearance of contaminating sequences, which may only appear in a subset of samples treated with the same laboratory component [7]. This unpredictability complicates distinguishing true signals from background noise, particularly for low-abundance taxa.

Traditional sequencing data is compositional, meaning it provides relative abundances rather than absolute quantities. This poses a critical limitation in clinical diagnostics, where knowing the absolute microbial load is essential for determining infection thresholds and guiding treatment decisions [10]. For example, large differences in magnitude between similar organisms in different environments may not be reflected in their relative proportions, leading to distorted conclusions [10].

Solutions for Robust Quantification

The implementation of internal spike-in controls provides a powerful solution for moving from relative to absolute quantification. In one study, researchers optimized full-length 16S rRNA gene sequencing using nanopore technology on mock community standards by varying DNA input, PCR cycles, and spike-in proportions [10]. The use of a defined spike-in control (ZymoBIOMICS Spike-in Control I) comprising Allobacillus halotolerans and Imtechella halotolerans at a fixed 16S copy number ratio of 7:3 provided robust quantification across varying DNA inputs and sample origins [10]. This method was validated using human samples from stool, saliva, nose, and skin, demonstrating high concordance between sequencing estimates and traditional culture methods [10].

For detecting low-abundance taxa, the choice of bioinformatics tools is crucial. The study found that the Emu algorithm performed well at providing genus and species-level resolution from full-length 16S rRNA sequencing data [10]. However, challenges remained in detecting low-abundance taxa and differentiating closely related species, indicating areas for further methodological refinement [10].

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Overcoming Technical Hurdles

| Reagent/Material | Primary Function | Technical Hurdle Addressed | Example/Specification |
|---|---|---|---|
| Spike-In Controls | Internal standard for absolute quantification and contamination tracking [10] [9] | Stochastic effects, Quantification [10] | ZymoBIOMICS Spike-in Control I (Fixed 16S copy number ratio) [10] |
| DNA Extraction Kits (HMW) | Obtain pure, high-molecular-weight DNA with minimal contamination [11] [12] | Host DNA, Contamination [6] | Nanobind kits (PacBio), DNeasy Blood and Tissue Kit (Qiagen) [11] [12] |
| Enzymes for Host DNA Depletion | Selective degradation of host DNA while preserving microbial DNA [6] | High Host DNA [6] | DNase I, Lysozyme, Proteinase K [6] [13] [12] |
| Methylation-Sensitive Enzymes | Selective cleavage of methylated host DNA (e.g., CpG islands) [6] | High Host DNA [6] | Methylation-sensitive restriction enzymes [6] |
| High-Fidelity Polymerase | Accurate amplification with minimal contaminant introduction [12] [8] | Contamination [8] | Recombinant Taq polymerase (low microbial DNA) [8] |
| Size Selection Beads | Removal of low-molecular-weight DNA fragments (e.g., host DNA fragments) [11] | High Host DNA [6] | Short Read Eliminator (SRE) kit, AMPure XP beads [11] [12] |
| Bioinformatics Tools | In silico removal of host reads and contamination detection [9] [6] | Host DNA, Contamination [9] [6] | Polyphonia, KneadData, BMTagger, Bowtie2 [9] [6] |

Integrated Experimental Protocols

Protocol 1: Full-Length 16S rRNA Sequencing with Spike-In for Quantification

This protocol, adapted from nanopore sequencing studies, enables absolute quantification of bacterial communities [10]:

- DNA Extraction: Use QIAamp PowerFecal Pro DNA Kit for human samples (stool, saliva, skin, nose). Measure concentration with Qubit dsDNA BR Assay Kit [10].

- Spike-In Addition: Incorporate spike-in control comprising Allobacillus halotolerans and Imtechella halotolerans at a fixed proportion. Testing indicates 10% of total DNA is effective [10].

- 16S Amplification: Perform full-length 16S rRNA gene amplification for 25 cycles using an adapted Oxford Nanopore Technologies protocol (SQK-LSK109). For low-biomass samples, consider increasing to 35 cycles [10].

- Library Preparation & Sequencing: Barcode, pool, and purify amplified DNA. Perform end repair and dA-tailing. Purify with SPRIselect magnetic beads. Sequence on MinION Mk1C device with R9.4 flow cell [10].

- Bioinformatic Analysis: Basecall with Guppy (high accuracy). Filter sequences (q-score ≥9, length 1,000-1,800 bp). Analyze with Emu for taxonomic classification [10].
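
The read-filtering thresholds in the final step of Protocol 1 (q-score ≥9, length 1,000-1,800 bp) can be sketched in plain Python. This is a minimal illustration, not the tooling used in the cited study: it assumes well-formed four-line FASTQ records with Phred+33 qualities, and it assumes the mean q-score is computed by averaging per-base error probabilities. The function names are hypothetical.

```python
import math


def mean_qscore(quality: str, offset: int = 33) -> float:
    """Mean read quality, computed by averaging per-base error probabilities."""
    probs = [10 ** (-(ord(ch) - offset) / 10) for ch in quality]
    return -10 * math.log10(sum(probs) / len(probs))


def filter_fastq(lines, min_q=9.0, min_len=1000, max_len=1800):
    """Yield (header, sequence, quality) for reads passing both thresholds.

    Assumes four-line FASTQ records (header, sequence, '+', quality).
    """
    it = iter(lines)
    for header in it:
        if not header.strip():
            continue  # tolerate blank lines between records
        seq = next(it).strip()
        next(it)  # '+' separator line
        qual = next(it).strip()
        # Length is checked first so empty reads never reach mean_qscore
        if min_len <= len(seq) <= max_len and mean_qscore(qual) >= min_q:
            yield header.strip(), seq, qual


# Made-up reads: one passing, one too short, one with low quality
records = [
    "@pass", "A" * 1500, "+", "I" * 1500,
    "@short", "A" * 500, "+", "I" * 500,
    "@lowq", "A" * 1500, "+", "#" * 1500,
]
print([header for header, _, _ in filter_fastq(records)])
```

In practice the same generator can be fed an open file handle, since it only iterates over lines.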

Protocol 2: Host DNA-Removed Metagenomic Sequencing for Low Biomass Samples

This protocol, optimized for clinical swab specimens, maximizes pathogen detection sensitivity [14]:

- Sample Collection: Collect swab specimens and place in appropriate transport medium [14].

- Host Nucleic Acid Removal: Extract total nucleic acid, then treat with DNase and its buffer to digest DNA and enrich RNA [14].

- cDNA Synthesis: Perform reverse transcription and cDNA synthesis from enriched RNA [14].

- Library Preparation: Use commercial library preparation kit (e.g., PMseq RNA infectious pathogens kit). Qualify cDNA libraries with Qubit 4.0 [14].

- Sequencing: Prepare DNA nanoballs (DNBs) and load them onto the sequencing chip. Sequence on an appropriate platform (e.g., MGISEQ-2000) with single-end 50 bp reads [14].

- Bioinformatic Analysis: Remove adaptors and low-quality reads. Separate host and microbial analysis workflows. For microbial analysis, remove sequences aligning to human reference genome before determining microbial species [14].

Diagram 2: An integrated end-to-end workflow for managing host DNA, contamination, and stochastic effects in microbial sequencing. The process combines wet-lab and computational steps.

The technical hurdles of contamination, high host DNA, and stochastic effects present significant but surmountable challenges in microbial sequencing, particularly for low-biomass samples. Success requires an integrated approach combining experimental and computational strategies. Key solutions include the implementation of spike-in controls for absolute quantification, targeted host DNA removal methods tailored to sample type, rigorous contamination monitoring using both controls and computational tools like Polyphonia, and full-length 16S rRNA sequencing with advanced algorithms like Emu for improved taxonomic resolution. As these methodologies continue to mature and standardize, they promise to enhance the reliability of microbial detection in research and clinical diagnostics, ultimately improving patient care and public health responses.

In microbial genomics research, the "signal" constitutes genuine biological information, such as the true presence and abundance of microbial taxa, while "noise" includes technical artifacts, contaminants, and stochastic sequencing errors. Low microbial load—samples containing a small total number of microbial cells—presents a fundamental challenge for next-generation sequencing (NGS) by critically compressing the signal-to-noise ratio. In these samples, minute contaminant DNA introduced during sample processing or reagent impurities can constitute a substantial proportion of the sequenced material, thereby obscuring the true biological signal [15]. This distortion is particularly problematic in clinical diagnostics where accurate bacterial load estimation determines infection thresholds and guides treatment decisions [15]. The compositional nature of standard sequencing data, which reveals only relative abundances, further complicates interpretation because a perceived increase in one taxon's abundance may merely reflect a decrease in another's rather than true biological variation [16]. Understanding and mitigating these effects is therefore essential for valid data interpretation across research, pharmaceutical development, and clinical applications.

Technical Foundations: How Microbial Load Affects Data Quality

The Sampling Fraction Problem

The core issue in low microbial load samples stems from the sampling fraction—the ratio between observed sequencing counts and the true, unobservable absolute abundance of microbes in the original ecosystem [16]. This fraction varies substantially between samples and is inversely related to microbial load; samples with lower total biomass have a greater proportion of their sequencing data consumed by contaminating DNA and technical noise.

Compositional Data Constraints: Microbiome sequencing data are inherently compositional, meaning they sum to a constant total (e.g., 100% relative abundance) [16]. This property creates interpretive pitfalls: in a low-load sample, a minor contaminant may appear as a dominant taxon in the relative abundance profile, while genuine but rare taxa might be indistinguishable from background noise. The following table summarizes key terminology essential for understanding these distortions:

Table 1: Key Terminology in Microbial Abundance Measurement

| Term | Definition | Interpretation Challenge |
|---|---|---|
| Absolute Abundance | The true, unobservable number of a microbial taxon in a unit volume of an ecosystem [16]. | The fundamental parameter of interest that sequencing cannot directly measure. |
| Relative Abundance | The proportion of a taxon's sequencing counts relative to the total counts in a sample [16]. | Can create misleading impressions when total microbial load differs between samples. |
| Sampling Fraction | The sample-specific factor linking expected observed abundance to true absolute abundance [16]. | Varies with microbial load and DNA extraction efficiency, confounding comparisons. |
| Library Size | The total number of sequencing reads obtained for a sample. | Often correlates with microbial load but is an imperfect proxy. |
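
The gap between absolute and relative abundance is easy to demonstrate with toy numbers. In the sketch below, the taxon names and counts are invented for illustration: taxon A has the same absolute abundance in both samples, yet its relative abundance changes fivefold because taxon B declines.

```python
def relative_abundance(absolute_counts):
    """Convert absolute counts per taxon into proportions that sum to 1."""
    total = sum(absolute_counts.values())
    return {taxon: n / total for taxon, n in absolute_counts.items()}


# Hypothetical absolute abundances (cells per unit volume)
sample_1 = {"A": 1000, "B": 9000}
sample_2 = {"A": 1000, "B": 1000}  # A unchanged, B reduced

for name, sample in (("sample_1", sample_1), ("sample_2", sample_2)):
    rel = relative_abundance(sample)
    print(f"{name}: A absolute = {sample['A']}, A relative = {rel['A']:.0%}")
```

A differential abundance test run on the proportions alone would report an increase in A, which is exactly the kind of artifact the sampling fraction concept is meant to expose.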

Specific Artifacts in Low-Load Samples

Low microbial load environments exacerbate several technical artifacts that can be misinterpreted as biological signals:

Enhanced Contamination Sensitivity: Reagent-borne microbial DNA, which is negligible in high-biomass samples like stool, becomes a significant contaminant in low-biomass samples (e.g., skin swabs, nasal cavity, sterile tissue) [15]. Without proper controls, these contaminants can be misidentified as novel pathogens or biomarkers.

Stochastic Amplification Effects: During PCR amplification, stochastic fluctuations are magnified when template DNA copies are scarce. This can cause inconsistent detection of low-abundance taxa across technical replicates, creating false "differentially abundant" signals between sample groups [15].

Index Hopping and Cross-Talk: In multiplexed sequencing runs, index misassignment can cause reads from high-biomass samples to appear in low-biomass sample data, creating artificial signals that are particularly damaging when studying environments expected to have minimal native biomass [16].
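
The inconsistent detection described under Stochastic Amplification Effects follows from simple sampling statistics. As a rough sketch, assume the number of template copies entering each reaction is Poisson distributed around a mean; this is a modeling assumption for illustration, not a result from the cited work, and the function names are hypothetical.

```python
import math


def detection_probability(mean_copies: float) -> float:
    """P(at least one template copy in the reaction) under Poisson sampling."""
    return 1.0 - math.exp(-mean_copies)


def all_replicates_agree(mean_copies: float, replicates: int) -> float:
    """Probability that every replicate gives the same call (all detect or all miss)."""
    p = detection_probability(mean_copies)
    return p ** replicates + (1.0 - p) ** replicates


for mean_copies in (0.1, 0.5, 1.0, 3.0, 10.0):
    p = detection_probability(mean_copies)
    agree = all_replicates_agree(mean_copies, replicates=3)
    print(f"mean {mean_copies:>4} copies: detect {p:.1%}, triplicates agree {agree:.1%}")
```

Around one template copy per reaction, technical replicates frequently disagree, so presence in a single replicate is weak evidence on its own.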

Methodological Solutions: Experimental Design and Normalization

Incorporating Internal Controls and Spike-Ins

A powerful strategy to distinguish signal from noise involves adding known quantities of synthetic or foreign microbial cells as internal controls prior to DNA extraction.

Spike-In Protocol Optimization: As demonstrated in a 2025 validation study, researchers used ZymoBIOMICS Spike-in Control I (containing Allobacillus halotolerans and Imtechella halotolerans) added at a fixed proportion (e.g., 10%) of total DNA input [15]. This approach enables precise quantification by:

- Accounting for Technical Variation: The ratio between observed spike-in reads and expected abundance corrects for sample-to-sample differences in DNA extraction efficiency, PCR amplification bias, and sequencing depth [15].

- Enabling Absolute Quantification: The known number of spike-in cells allows conversion of relative sequencing proportions back to estimated absolute cell counts, overcoming the compositionality problem [15] [16].

Table 2: Normalization Methods for Managing Low-Load Artifacts

| Method | Principle | Advantages | Limitations |
|---|---|---|---|
| Spike-In Controls [15] | Adds known quantities of non-native microbes to samples before DNA extraction. | Enables absolute quantification; corrects for technical variation in entire workflow. | Requires careful optimization of spike-in ratio; may consume sequencing depth. |
| Rarefying [16] | Randomly subsamples sequences to equal library sizes across all samples. | Simple to implement; reduces library size heterogeneity. | Discards valid data; introduces artificial uncertainty; does not address compositionality. |
| Chemical DNA Spikes | Adds known quantities of synthetic DNA fragments. | Can be customized to avoid biological overlap; precise quantification. | Does not control for DNA extraction efficiency variation. |
| Microbial Load Prediction [17] | Machine learning model predicts absolute abundance from relative data. | No extra lab work needed; applicable to existing datasets. | Model performance depends on training data quality and representativeness. |
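
Of the methods in the table, rarefying is the simplest to show in code. The sketch below subsamples one sample's taxon counts to a fixed depth without replacement; the function name and example counts are hypothetical, and expanding every read into a list is only practical for small illustrative libraries.

```python
import random


def rarefy(counts, depth, seed=0):
    """Subsample a taxon -> count table to exactly `depth` reads without replacement."""
    total = sum(counts.values())
    if depth > total:
        raise ValueError(f"depth {depth} exceeds library size {total}")
    rng = random.Random(seed)
    # Expand to one label per read, then draw without replacement
    pool = [taxon for taxon, n in counts.items() for _ in range(n)]
    rarefied = {taxon: 0 for taxon in counts}
    for taxon in rng.sample(pool, depth):
        rarefied[taxon] += 1
    return rarefied


# Made-up library of 10,000 reads rarefied to 1,000
library = {"Staphylococcus": 7000, "Cutibacterium": 2900, "Ralstonia": 100}
print(rarefy(library, depth=1000))
```

The example also makes the table's stated limitation visible: nine tenths of the reads are discarded, and a rare taxon can drop out of the rarefied table purely by chance.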

Optimized Wet-Lab Protocols for Low-Biomass Samples

Modified laboratory protocols are crucial for maximizing signal recovery from low microbial load samples:

- Increased DNA Input: For full-length 16S rRNA gene sequencing using nanopore technology, increasing template DNA input to 1.0-5.0 ng (as opposed to 0.1 ng) significantly improves taxonomic detection while maintaining quantitative accuracy [15].

- Limited PCR Cycles: Optimizing PCR cycle number (e.g., 25 cycles versus 35) reduces stochastic amplification artifacts and chimera formation while preserving representation of rare taxa [15].

- Extraction Blank Controls: Including extraction blanks (reagents without sample) throughout the workflow is essential to identify and quantify contaminant DNA backgrounds, allowing for computational subtraction of these noise signals [15].

Bioinformatics and Statistical Normalization

Computational methods help mitigate the impact of variable microbial loads:

- Identifying and Filtering Contaminants: Tools like Decontam flag likely contaminants with either a prevalence-based test (taxa detected more often in negative controls than in true samples) or a frequency-based test (taxa whose relative abundance increases as total sample DNA concentration decreases), allowing for their removal from the dataset [16].

- Compositionally Aware Algorithms: Methods such as ANCOM-II account for the compositional nature of sequencing data when testing for differential abundance, thereby reducing false positives caused by load variation rather than genuine biological change [16].

- Machine Learning for Load Prediction: Recent advances enable prediction of microbial loads directly from relative abundance data using machine learning models trained on reference datasets with known absolute abundances, providing a cost-effective adjustment for large cohort studies [17].
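
The idea behind prevalence-based contaminant screening can be illustrated with a one-sided Fisher's exact test on presence/absence counts. This is a simplified sketch written for this article, not Decontam's implementation (Decontam is an R package with its own scoring); the function name and the example counts are hypothetical.

```python
from math import comb


def prevalence_p_value(ctrl_present, ctrl_total, samp_present, samp_total):
    """One-sided Fisher's exact test: is the taxon over-represented in controls?"""
    n_total = ctrl_total + samp_total
    k_present = ctrl_present + samp_present
    # Hypergeometric tail: probability of seeing the taxon in at least
    # `ctrl_present` controls if presence were unrelated to sample type.
    upper = min(ctrl_total, k_present)
    tail = sum(
        comb(k_present, x) * comb(n_total - k_present, ctrl_total - x)
        for x in range(ctrl_present, upper + 1)
    )
    return tail / comb(n_total, ctrl_total)


# Hypothetical taxon seen in 5 of 5 extraction blanks but 0 of 5 true samples.
print(prevalence_p_value(5, 5, 0, 5))
```

A small p-value suggests the taxon is associated with the controls and is therefore a candidate contaminant. With few blanks the test has little power, which is one reason to include several negative controls per batch.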

Experimental Workflow: From Sample to Analysis

The following workflow diagram integrates the key methodological solutions for managing low microbial load challenges across the experimental pipeline:

The Scientist's Toolkit: Essential Research Reagents

Successful navigation of low microbial load challenges requires specific laboratory reagents and computational tools. The following table catalogues essential solutions referenced in the cited literature:

Table 3: Research Reagent Solutions for Low Microbial Load Studies

| Reagent / Material | Function | Example Use Case |

|---|---|---|

| Mock Community Standards (e.g., ZymoBIOMICS D6300/D6305/D6331) [15] | Validates entire sequencing workflow accuracy with known composition and abundance. | Assessing quantitative accuracy and detecting biases in low-load conditions. |

| Spike-In Controls (e.g., ZymoBIOMICS D6320) [15] | Provides internal reference for absolute quantification and technical variation. | Added to low-biomass samples (skin, nasal) to normalize for extraction and sequencing efficiency. |

| Full-Length 16S rRNA Primers (e.g., ONT PCR barcoding kit) [15] | Enables high-resolution taxonomic classification to species level. | Improving species-level discrimination in complex low-abundance communities. |

| High-Sensitivity DNA Extraction Kits (e.g., QIAamp PowerFecal Pro DNA Kit) [15] | Maximizes DNA yield from limited microbial biomass. | Processing low-load samples (skin swabs, water filters) to recover sufficient material. |

| Bioinformatic Tools (e.g., Emu [15], ANCOM-II [16]) | Performs compositionally aware statistical analysis and taxonomic classification. | Identifying genuine differentially abundant taxa while controlling for load variation. |

Distinguishing biological signal from technical noise in low microbial load sequencing requires an integrated approach spanning experimental design, wet-lab protocols, and computational analysis. The strategies outlined—thoughtful application of internal controls, optimized laboratory techniques, and compositionally aware bioinformatics—collectively provide a robust framework for generating reliable, interpretable data from challenging low-biomass samples. As microbial research increasingly focuses on environments with naturally sparse biomass, such as certain body sites in human health studies or oligotrophic environmental niches, mastering these approaches becomes fundamental to advancing our understanding of microbial communities and their functional impacts on human health and disease.

The study of the human microbiome has transformed our understanding of health and disease, yet significant challenges remain in accurately characterizing microbial communities from low-biomass environments. Samples from sites such as blood, urine, and the upper respiratory tract often contain minimal microbial DNA, creating substantial technical hurdles for sequencing-based analyses. The low microbial load in these samples means that signals can be easily obscured by contamination, host DNA background, or sequencing artifacts, potentially leading to ecological misinterpretations that could be likened to "blue whales in the Himalayas or African elephants in Antarctica" [18]. This technical guide examines key case studies from these challenging environments, highlighting both the pitfalls and advanced methodologies essential for generating reliable data in low-biomass microbiome research.

Case Study 1: Upper Respiratory Tract Microbiome in Pneumonia

A study investigating the upper respiratory tract microbiome in hospitalized patients with Community-Acquired Pneumonia (CAP) provides a compelling case study for low-biomass analysis. Researchers characterized the nasopharyngeal and oropharyngeal microbiomes of patients with CAP of unknown etiology through metagenomic analysis [19]. In a random sample of 10 patients drawn from a larger trial, only one patient exhibited a distinct nasopharyngeal microbiome dominated by Haemophilus influenzae, while Streptococcus pneumoniae was detected in the upper respiratory tract of the other nine, suggesting it as a probable etiology despite negative results from conventional microbiological workups [19].

Methodological Framework

The experimental protocol incorporated several rigorous approaches to address low-biomass challenges:

- Sample Collection: Nasopharyngeal and oropharyngeal swabs were collected using Copan Flocked Swabs on hospital admission, placed in universal transport media, vortexed, aliquoted, and stored at -80°C [19].

- DNA Isolation: Total genomic DNA was isolated using the Maxwell 16 Blood DNA Purification Kit on an automated system [19].

- 16S rRNA Amplification: Amplification employed 16S rRNA-specific primers (27f and 534r) with the FastStart High Fidelity PCR System under the following cycling conditions: 95°C for 5 minutes, followed by 30 cycles of 94°C for 30 seconds, 56°C for 30 seconds, and 72°C for 1 minute 30 seconds, with a final extension of 8 minutes at 72°C [19].

- Sequencing and Analysis: PCR amplicons were sequenced using 454 GS FLX technology, and data were processed with bioinformatic pipelines for microbiome characterization [19].

Key Quantitative Findings

Table 1: Upper Respiratory Tract Microbiome Study Results

| Parameter | Finding | Significance |

|---|---|---|

| Patients with distinct H. influenzae microbiome | 1/10 (10%) | Suggested as probable CAP etiology |

| Patients with S. pneumoniae detected via PCR | 9/10 (90%) | Indicated as likely pathogen despite negative conventional tests |

| Sample type comparison | Substantial differences between nasopharyngeal and oropharyngeal microbiomes | Highlighted site-specific microbial communities |

Technical Considerations for Low-Biomass Samples

This study exemplifies the importance of molecular methods in detecting pathogens that conventional culture-based methods might miss in low-biomass environments. The use of targeted 16S rRNA amplification and sequencing provided insights into potential pathogens that would have remained undetected, demonstrating the value of these approaches for samples with limited microbial material [19].

Case Study 2: Blood Microbiome Investigation

Large-Scale Assessment of Microbial Presence

A rigorous large-scale study challenged prevailing assumptions about the blood microbiome by examining samples from 9,770 healthy individuals [18]. This investigation implemented extensive controls for procedural contamination and sequencing artifacts, setting a benchmark for low-biomass research methodology.

Key Findings and Implications

The research identified only 117 microbial species, each with low signal and together detected in fewer than 18% of samples; these species are typically associated with other body sites rather than representing a resident blood microbiome [18]. Computational analysis revealed no identifiable patterns of microbial interaction with typical blood markers, effectively challenging the concept of a common blood microbiome in healthy individuals [18].

Methodological Rigor in Low-Biomass Setting

This study underscores several critical considerations for blood microbiome research:

- Comprehensive Controls: Implementation of extensive controls for procedural contamination across the entire experimental workflow.

- Large Sample Size: Analysis of nearly 10,000 individuals provides substantial statistical power.

- Computational Validation: Application of multiple analytical approaches to validate findings.

- Ecological Plausibility Assessment: Interpretation of results within established microbiological principles.

Cross-Cutting Methodological Advances

2bRAD-M: Innovative Approach for Challenging Samples

The 2bRAD-M method represents a significant advancement for low-biomass microbiome studies [20]. This approach utilizes Type IIB restriction enzymes to produce uniform, short DNA fragments (32 bp for BcgI enzyme) for sequencing, requiring as little as 1 pg of total DNA and tolerating up to 99% host DNA contamination [20].

Table 2: Performance Comparison of Microbiome Profiling Methods

| Method | Minimum DNA | Host DNA Tolerance | Species-Level Resolution | Cost Efficiency |

|---|---|---|---|---|

| 16S rRNA Sequencing | ~1 ng | Moderate | Limited (genus level) | High |

| Whole Metagenome Shotgun (WMS) | ≥20 ng (50 ng preferred) | Low | Yes | Low |

| 2bRAD-M | 1 pg | High (up to 99%) | Yes | Medium |
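
The way a Type IIB enzyme yields fixed-length tags can be illustrated with an in-silico digest. The sketch below is not the 2bRAD-M pipeline from [20]. It assumes the BcgI recognition site is CGA-N6-TGC and that 10 bp are retained on each side, which gives the 32 bp tag length cited above; the site definition, function name, and toy sequence should all be treated as assumptions to verify against the enzyme documentation.

```python
import re

# Assumed BcgI site: CGA-N6-TGC plus 10 bp on each side (10 + 12 + 10 = 32 bp).
# Lookaheads allow overlapping sites to be reported.
FORWARD = re.compile(r"(?=([ACGT]{10}CGA[ACGT]{6}TGC[ACGT]{10}))")
# The same site read from the opposite strand appears as GCA-N6-TCG.
REVERSE = re.compile(r"(?=([ACGT]{10}GCA[ACGT]{6}TCG[ACGT]{10}))")


def bcgi_tags(sequence):
    """Return all candidate 32 bp tags found in either site orientation."""
    seq = sequence.upper()
    tags = [m.group(1) for m in FORWARD.finditer(seq)]
    tags += [m.group(1) for m in REVERSE.finditer(seq)]
    return tags


# Toy sequence containing a single site in the forward orientation.
toy = "A" * 10 + "CGA" + "T" * 6 + "TGC" + "G" * 10
print(bcgi_tags(toy))
```

Because every tag has the same length, fragmented or degraded DNA can still contribute usable tags as long as a 32 bp window around a site survives intact.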

Experimental Workflow for Low-Biomass Samples

Statistical Considerations for Data Analysis

Microbiome data from low-biomass samples present unique statistical challenges including zero inflation, overdispersion, high dimensionality, and compositionality [21]. Appropriate normalization methods and statistical approaches are essential for robust analysis:

- Normalization Methods: Cumulative Sum Scaling (CSS), Relative Log Expression (RLE), and Trimmed Mean of M-values (TMM) help address library size differences [21].

- Differential Abundance Tools: Methods like metagenomeSeq, DESeq2, and ANCOM account for data characteristics specific to microbiome datasets [21].

- Batch Effect Correction: Linear-model approaches such as limma, together with ComBat and Remove Unwanted Variation (RUV) methods, address technical variability [21].
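
As an illustration of the normalization methods listed above, the sketch below computes Relative Log Expression (RLE) size factors using the median-of-ratios idea. It is a simplified, pure-Python version rather than the implementation in any cited package; the function name and the example counts are hypothetical. Only taxa with non-zero counts in every sample are used, so heavily zero-inflated low-biomass tables may leave very few usable taxa.

```python
from math import exp, log
from statistics import median


def rle_size_factors(counts):
    """counts: {sample: [count per taxon]}, taxa in the same order for all samples."""
    samples = list(counts)
    n_taxa = len(counts[samples[0]])
    # Keep taxa observed in every sample so the geometric mean is defined.
    usable = [i for i in range(n_taxa) if all(counts[s][i] > 0 for s in samples)]
    if not usable:
        raise ValueError("no taxon is non-zero in all samples")
    log_geo_mean = {
        i: sum(log(counts[s][i]) for s in samples) / len(samples) for i in usable
    }
    # Size factor = median ratio of a sample's counts to the per-taxon geometric mean.
    return {
        s: exp(median(log(counts[s][i]) - log_geo_mean[i] for i in usable))
        for s in samples
    }


# Hypothetical table: sample B was sequenced about twice as deeply as sample A.
table = {"A": [10, 20, 0, 40], "B": [20, 40, 5, 80]}
factors = rle_size_factors(table)
print(factors)
```

Dividing each sample's counts by its size factor puts the samples on a comparable scale without discarding reads.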

Research Reagent Solutions

Table 3: Essential Research Reagents for Low-Biomass Microbiome Studies

| Reagent/Kit | Application | Key Features | Considerations for Low-Biomass |

|---|---|---|---|

| Maxwell 16 Blood DNA Purification Kit | DNA isolation | Automated, reduces cross-contamination | Consistent yield from minimal input [19] |

| HOT FIREPOL BLEND Master Mix | 16S rRNA PCR | High fidelity, includes MgCl₂ | Optimized for amplification from low DNA [13] |

| QIAamp Viral RNA Mini Kit | Nucleic acid extraction | Designed for low-concentration samples | Includes carrier RNA to improve recovery [22] |

| MGIEasy rRNA removal kit | Host and ribosomal RNA depletion | Probe hybridization and RNase H digestion | Critical for host-dominated samples [22] |

| NucleoSpin Blood Kit | DNA extraction from blood | Silica membrane-based purification | Includes lysozyme incubation for Gram-positive bacteria [13] |

Integrated Analysis of Methodological Principles

Contamination Control Framework

Effective low-biomass research requires systematic contamination control throughout the experimental workflow:

- Pre-analytical Phase: Standardized sample collection protocols, appropriate swab types, immediate stabilization.

- Analytical Phase: Negative extraction controls, reagent blanks, positive controls, technical replicates.

- Post-analytical Phase: Bioinformatics filters for contaminants, statistical identification of batch effects.

Validation and Interpretation Guidelines

Robust interpretation of low-biomass microbiome data requires:

- Ecological Plausibility: Findings should align with established microbiological principles [18].

- Independent Validation: Confirmation through multiple methodological approaches.

- Technical Correlation: Association between microbial load and technical positive controls.

- Biological Replication: Consistency across sample replicates and related sample types.

The investigation of the microbiome in low-biomass environments such as urine, blood, and the upper respiratory tract demands specialized methodological approaches and rigorous interpretive frameworks. Case studies across these sample types demonstrate that three elements are essential for generating reliable data: careful experimental design with appropriate controls, advanced molecular techniques such as 2bRAD-M and targeted 16S sequencing, and stringent statistical analysis. As research in this challenging area continues to evolve, maintaining scientific rigor while exploring the potential roles of microbial communities in these environments will be crucial for advancing our understanding of human microbiology and its implications for health and disease.

Advanced Profiling Techniques for Low-Abundance Microbiomes

The identification and classification of bacterial pathogens are fundamental to advancing our understanding of human health, disease progression, and therapeutic development. For decades, the gold standard for bacterial identification in diagnostic microbiology laboratories has involved culture and biochemical testing (CBtest). This method, while widely available, faces significant limitations: not all bacterial species can be successfully cultured, particularly strict anaerobes, fastidious pathogens requiring enriched media, or viable-but-non-culturable (VBNC) organisms [23]. Furthermore, CBtest is time-consuming for slow-growing pathogens, potentially leading to patient morbidity, prolonged broad-spectrum antibiotic usage, and delayed pathogen-specific interventions [23].

The advent of next-generation sequencing (NGS) introduced 16S rRNA gene sequencing as a culture-independent alternative. This gene contains nine hypervariable regions (V1-V9) flanked by conserved segments, serving as a phylogenetic marker for bacterial taxonomy [24]. However, the most prevalent NGS technologies, such as Illumina, are restricted to reading short fragments (e.g., the V3-V4 regions, ~400-500 bp) due to their read-length limitations [25] [26]. This short-read approach often limits taxonomic resolution to the genus level, obscuring critical species-level and strain-level diversity that is essential for precise biomarker discovery, understanding pathogenesis, and tracking bacterial transmission [24] [26].

The emergence of third-generation, long-read sequencing technologies, such as those developed by Oxford Nanopore Technologies (ONT) and Pacific Biosciences, has overcome these read-length barriers. These platforms now enable routine sequencing of the full-length 16S rRNA gene (~1,500 bp, spanning V1-V9), promising enhanced taxonomic resolution down to the species level [25] [26]. This technical guide explores how full-length 16S rRNA sequencing with long-read technologies is revolutionizing microbial taxonomy, with a specific focus on the critical challenge of low microbial load samples, which are common in clinical contexts such as sterile body fluids, tissue biopsies, and human milk.

Comparative Analysis: Full-Length vs. Partial 16S Sequencing

The fundamental advantage of full-length 16S sequencing lies in the substantial increase in informative sites available for taxonomic classification. While short-read approaches rely on one or two hypervariable regions, full-length sequencing integrates information across all nine variable regions, providing a much more robust phylogenetic signal.

Empirical Evidence of Enhanced Resolution

Multiple studies have directly compared the taxonomic outcomes of full-length and partial 16S rRNA sequencing. A landmark comparative study analyzed 24 human gut microbiota samples using both V3-V4 short-read (Illumina) and full-length synthetic long-read (sFL16S) approaches. The results were striking: the sFL16S method classified 1,041 amplicon sequence variants (ASVs) compared to only 623 ASVs with the V3-V4 method [24]. This demonstrates a significant increase in the ability to resolve distinct bacterial taxa when the entire gene is sequenced.

Furthermore, alpha-diversity metrics, which quantify within-sample richness and evenness, were significantly higher across all indices (Observed_OTUs, Chao1, Shannon, Simpson) for the full-length method [24]. This indicates that relying on partial gene segments underestimates true microbial diversity, a critical consideration for ecological studies and investigations into dysbiosis.
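
For readers unfamiliar with these indices, the sketch below computes them from a single sample's count vector. It is a minimal illustration, not the pipeline used in [24]; the function name and example counts are hypothetical, Chao1 is given in its bias-corrected form, Shannon uses the natural logarithm, and Simpson is reported as 1 minus the sum of squared proportions.

```python
from math import log


def alpha_diversity(counts):
    """counts: per-taxon read counts for one sample."""
    observed = [c for c in counts if c > 0]
    total = sum(observed)
    if total == 0:
        raise ValueError("sample contains no reads")
    props = [c / total for c in observed]
    singletons = sum(1 for c in observed if c == 1)
    doubletons = sum(1 for c in observed if c == 2)
    return {
        "observed": len(observed),
        # Bias-corrected Chao1: adds an estimate of unseen taxa from rare counts.
        "chao1": len(observed) + singletons * (singletons - 1) / (2 * (doubletons + 1)),
        "shannon": -sum(p * log(p) for p in props),
        "simpson": 1 - sum(p * p for p in props),
    }


# Hypothetical sample with four equally abundant taxa and one absent taxon.
print(alpha_diversity([10, 10, 10, 10, 0]))
```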

Table 1: Comparative Performance of Full-Length vs. Partial 16S Sequencing

| Metric | V3-V4 Short-Read (e.g., Illumina) | V1-V9 Full-Length (e.g., ONT) | Significance |

|---|---|---|---|

| Read Length | ~400-500 bp [26] | ~1,500 bp [25] | Captures all variable regions |

| Typical Taxonomic Resolution | Genus level [26] | Species level [15] [26] | Enables precise biomarker discovery |

| Diversity (Alpha) Metrics | Lower [24] | Significantly Higher [24] | Avoids underestimation of richness |

| Species Detected by Only One Method | 54 unique species [24] | 430 unique species [24] | Greatly improved strain tracking |

| Quantitative Accuracy (with spike-in) | N/A | High correlation with culture (qPCR) [15] | Reliable absolute abundance estimation |

The impact on species-level identification is particularly profound. In a study of 123 subjects for colorectal cancer (CRC) biomarker discovery, Nanopore full-length 16S sequencing identified specific pathogenic species such as Parvimonas micra, Fusobacterium nucleatum, and Peptostreptococcus anaerobius with high confidence [26]. These species-level biomarkers enabled the development of a predictive model for CRC with an AUC (Area Under the Curve) of 0.87, showcasing the direct clinical and research utility of high-resolution data [26]. In another analysis, full-length sequencing identified 430 unique bacterial species that were not detected by the V3-V4 method, which in turn found only 54 unique species [24]. This roughly eightfold improvement is critical for studies of bacterial transmission, as it provides the necessary resolution to confidently track strains across different body sites or individuals [27].

Technical Foundations and Workflow

Implementing a robust full-length 16S sequencing protocol requires careful attention to each step, from sample preparation to bioinformatic analysis, especially when working with challenging samples.

Wet-Lab Experimental Protocol

The following methodology is adapted from optimized protocols used in recent studies [15] [26].

1. Sample Collection and DNA Extraction:

- Sample Collection: Collect samples (e.g., stool, saliva, tissue, low-biomass fluids) using appropriate sterile kits. For low-biomass samples, immediate freezing at -20°C or -80°C is recommended.

- DNA Extraction: Use kits designed for difficult samples and which minimize contamination. The DNeasy PowerSoil Pro (Qiagen) and MagMAX Total Nucleic Acid Isolation Kit have been validated for low-biomass human milk samples and provide consistent results with low contamination [27]. The QIAamp PowerFecal Pro DNA Kit is also widely used for stool and gut microbiome samples [15].

- DNA Quantification: Measure DNA concentration using a fluorometer (e.g., Qubit with dsDNA BR Assay Kit) due to its superior accuracy for low-concentration samples over spectrophotometry [15].

2. 16S rRNA Gene Amplification and Library Preparation:

- PCR Amplification: Amplify the full-length 16S rRNA gene using primers targeting the conserved regions. A commonly used set is 27F (5'-AGRGTTYGATYMTGGCTCAG-3') and 1492R (5'-RGYTACCTTGTTACGACTT-3') [25] [15]. The degeneracy of these primers is critical for unbiased amplification across diverse bacterial taxa [25].

- Reaction Mix: 50 ng genomic DNA, primers, and a high-fidelity PCR master mix (e.g., LongAmp Taq 2X Master Mix).

- Cycling Conditions: Initial denaturation at 95°C for 1 min; 25-35 cycles of 95°C for 20 s, 51-54°C for 30 s, 65°C for 2 min; final elongation at 65°C for 5 min [25] [15]. The number of cycles should be minimized for high-biomass samples to reduce PCR bias, but may be increased for low-biomass samples.

- Internal Controls for Quantification: For absolute quantification, include a spike-in control (e.g., ZymoBIOMICS Spike-in Control I) at a fixed proportion (e.g., 10%) of the total DNA input. This allows for the estimation of absolute microbial loads from sequencing data, moving beyond relative abundances [15].

- Library Preparation: Following amplification, barcode the amplicons for multiplexing. Then, proceed with end-repair, dA-tailing, and adapter ligation using manufacturer-specific kits (e.g., ONT's Ligation Sequencing Kit). Purify the final library using magnetic beads [15].

3. Sequencing:

- Load the library onto a long-read sequencer (e.g., Oxford Nanopore MinION Mk1C or GridION) using a compatible flow cell (e.g., R9.4.1 or newer R10.4.1). The R10.4.1 flow cell, with its dual-reader head, provides higher accuracy, especially in homopolymeric regions [26].

- Initiate a standard sequencing run, typically for 24-72 hours, with real-time basecalling enabled.

Figure 1: Core workflow for full-length 16S rRNA gene sequencing, highlighting the optional use of spike-in controls for absolute quantification.
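
The degeneracy of the primers listed in step 2 can be made explicit by expanding each IUPAC ambiguity code into the concrete oligonucleotides it represents. The sketch below is illustrative only; the function name is hypothetical, and the primer sequences are copied from the protocol above.

```python
from itertools import product

# Standard IUPAC nucleotide ambiguity codes.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "M": "AC", "K": "GT", "S": "CG", "W": "AT",
    "H": "ACT", "B": "CGT", "V": "ACG", "D": "AGT", "N": "ACGT",
}


def expand_primer(primer):
    """List every non-degenerate sequence encoded by a degenerate primer."""
    return ["".join(bases) for bases in product(*(IUPAC[b] for b in primer.upper()))]


forward_variants = expand_primer("AGRGTTYGATYMTGGCTCAG")  # 27F
reverse_variants = expand_primer("RGYTACCTTGTTACGACTT")   # 1492R
print(len(forward_variants), len(reverse_variants))  # 16 4
```

The 27F primer contains four two-fold degenerate positions (R, Y, Y, M) and therefore represents 16 distinct oligonucleotides, while 1492R contains two (R, Y) and represents 4.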

Bioinformatic Analysis and Tool Selection

The analysis of long-read 16S data requires specialized tools that account for its higher error rate compared to Illumina data.

- Basecalling and Quality Control: Basecall raw signals to FASTQ format using the sequencer's software (e.g., Oxford Nanopore's Guppy or Dorado). Filter reads by quality (q-score ≥ 9 is common) and length (retain reads between 1,000 bp and 1,800 bp for 16S) [15]. Dorado's super-accurate (sup) model is recommended for highest quality, though high-accuracy (hac) provides a good balance of speed and quality [26].

- Taxonomic Classification: Use tools specifically designed for long-read amplicon data. Emu is a reference-based method that has demonstrated excellent performance for assigning taxonomy to full-length 16S sequences, achieving species-level resolution [15] [26]. Other options include NanoCLUST and the SmartGene IDNS software with its 16S Centroid database [28].

- Database Choice: The reference database is critical. While universal databases like SILVA are commonly used, specialized databases (e.g., Emu's Default database) can sometimes provide improved classification, though they may vary in comprehensiveness [26]. The selection should be guided by the specific microbial communities under investigation.
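
The quality and length filter described in the first step above can be sketched in a few lines. This is a minimal illustration, not a replacement for dedicated read-filtering tools: the function names are hypothetical, the input is assumed to be four-line FASTQ records with Phred+33 qualities, and the thresholds are those quoted from [15]. The mean quality is computed by averaging error probabilities rather than Phred values, so that a few very poor bases are not masked by many good ones.

```python
from math import log10


def mean_qscore(quality_string, offset=33):
    """Average the per-base error probabilities, then convert back to a Q-score."""
    probs = [10 ** (-(ord(ch) - offset) / 10) for ch in quality_string]
    return -10 * log10(sum(probs) / len(probs))


def filter_reads(fastq_lines, min_q=9.0, min_len=1000, max_len=1800):
    """Yield (header, sequence, quality) for reads passing both thresholds."""
    records = [line.rstrip("\n") for line in fastq_lines]
    for i in range(0, len(records) - 3, 4):
        header, seq, _, qual = records[i:i + 4]
        if min_len <= len(seq) <= max_len and qual and mean_qscore(qual) >= min_q:
            yield header, seq, qual


# Hypothetical reads: one good, one too short, one with low quality.
example = [
    "@good", "A" * 1500, "+", "5" * 1500,   # Q20, 1,500 bp
    "@short", "A" * 500, "+", "5" * 500,    # Q20, 500 bp
    "@lowq", "A" * 1500, "+", "#" * 1500,   # Q2, 1,500 bp
]
kept = [header for header, _, _ in filter_reads(example)]
print(kept)
```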

The Critical Hurdle: Low Microbial Load and Potential Solutions

A primary focus of modern microbial research involves samples where bacterial biomass is low relative to host DNA, such as clinical specimens from sterile sites (blood, CSF), formalin-fixed paraffin-embedded (FFPE) tissues, and human milk. These samples present unique challenges that are acutely relevant to the thesis on the impact of low microbial load.

Challenges in Low-Biomass Contexts

- High Host DNA Contamination: In human milk, over 90% of isolated DNA can be of human origin, drastically reducing the sequencing depth available for microbial profiling [27].

- Low Absolute Abundance of Bacteria: This increases the risk of false negatives and makes accurate quantification difficult. Standard whole-metagenome shotgun (WMS) sequencing typically requires at least 20 ng of DNA (50 ng preferred) and is inefficient under these conditions [20].

- DNA Degradation: Samples like FFPE tissues contain highly fragmented DNA, which is problematic for amplifying the full-length 16S gene [20].

Emerging Methodological Solutions

1. Optimized DNA Extraction and Enrichment: Comparative studies of DNA isolation kits for human milk (a classic low-biomass sample) found that the DNeasy PowerSoil Pro (PS) and MagMAX Total Nucleic Acid Isolation (MX) kits provided the most consistent 16S rRNA gene sequencing results with the lowest levels of contamination [27]. While bacterial enrichment methods (e.g., differential centrifugation) were tested, they did not substantially decrease host read-depth in subsequent metagenomic sequencing, suggesting that optimized direct extraction is currently more reliable [27].

2. Alternative Sequencing Strategies: For the most challenging samples (e.g., <1 pg microbial DNA, >99% host contamination, or severely fragmented DNA), novel methods like 2bRAD-M offer a powerful alternative. This technique uses type IIB restriction enzymes to produce uniform, short fragments (32 bp) that are highly specific to microbial species. Because it sequences only ~1% of the metagenome, it is cost-effective and exceptionally robust for low-biomass, high-host, or degraded samples where 16S PCR amplification fails [20].

3. Internal Controls and Absolute Quantification: Incorporating a synthetic spike-in control (e.g., ZymoBIOMICS Spike-in Control) of known concentration into the sample prior to DNA extraction allows for the calibration of sequencing reads. This enables the estimation of absolute bacterial loads from relative sequencing data, a crucial advancement for low-biomass studies where relative abundances can be misleading [15].

Table 2: Essential Research Reagent Solutions for Low-Biomass Studies

| Reagent / Kit | Function | Application Note |

|---|---|---|

| DNeasy PowerSoil Pro Kit (Qiagen) | DNA Isolation | Validated for low-biomass samples; effective inhibitor removal [27]. |

| MagMAX Total Nucleic Acid Kit (Thermo Fisher) | DNA Isolation | Provides consistent results with low contamination, suitable for automation [27]. |

| ZymoBIOMICS Spike-in Control I | Internal Control | Enables absolute quantification of microbial load; added pre-extraction [15]. |

| ONT 16S Barcoding Kit (SQK-RAB204) | Library Preparation | Streamlined workflow for full-length 16S amplification and barcoding [25]. |

| BcgI Restriction Enzyme | 2bRAD-M Library Prep | Key enzyme for 2bRAD-M, generates species-specific iso-length tags [20]. |

| LongAmp Taq Master Mix (NEB) | PCR Amplification | High-fidelity polymerase for robust amplification of full-length 16S gene [25]. |

Full-length 16S rRNA sequencing leveraging long-read technologies represents a paradigm shift in microbial taxonomy. It moves beyond the genus-level classifications of short-read approaches to deliver species-level and sometimes strain-level resolution. This is critically important for applications such as discovering disease-specific biomarkers, tracking pathogen transmission in hospital settings, and elucidating the fine-scale dynamics of microbial communities.

The integration of optimized wet-lab protocols—including careful DNA extraction, the use of degenerate primers, and the incorporation of spike-in controls—with specialized bioinformatic tools like Emu creates a powerful framework for reliable microbial analysis. This is particularly true for the daunting challenge of low-biomass samples, where methods like 2bRAD-M and absolute quantification are pushing the boundaries of what is detectable.

As long-read technologies continue to evolve, with ongoing improvements in accuracy (e.g., Q20+ chemistry), throughput, and cost-effectiveness, full-length 16S sequencing is poised to become the new gold standard for amplicon-based microbiome studies. It will undoubtedly play a central role in deepening our understanding of the microbial world and its profound impact on human health and disease, firmly establishing the value of taxonomic resolution in the face of low microbial abundance.

Figure 2: Logical framework outlining the major challenges of low microbial load samples and the corresponding methodological solutions that enable robust, species-level profiling.

Shotgun Metagenomics and Genome-Resolved Analysis for Functional Insights

Shotgun metagenomics, powered by advances in high-throughput sequencing, has revolutionized our ability to study uncultivated microbial communities directly from their environments. This approach provides unparalleled access to both the taxonomic composition and functional potential of microbiomes. For biomedical and clinical research, particularly in the context of low microbial load environments, translating complex metagenomic data into meaningful biological insights requires sophisticated genome-resolved analyses. This technical guide details the core principles, methodologies, and analytical frameworks of shotgun metagenomics and genome-resolved metagenomics, with a specific focus on challenges and solutions for profiling low-abundance taxa. We provide actionable protocols, resource tables, and visual workflows to equip researchers with the tools needed to advance microbiome medicine.

Shotgun metagenomics is the comprehensive sequencing of all DNA extracted from an environmental sample, such as human gut contents, soil, or water [29] [30]. Unlike targeted amplicon sequencing (e.g., 16S rRNA gene sequencing), which is limited to taxonomic profiling, shotgun sequencing randomly shears all microbial DNA into fragments that are sequenced, providing fragments from across the entirety of all microbial genomes present [29]. This allows researchers to address two fundamental questions simultaneously: "Who is there?" (taxonomic composition) and "What are they capable of doing?" (functional potential) [29].

The transition from 16S sequencing to whole-metagenome sequencing (WMS) represents a paradigm shift in microbiome science. While 16S sequencing has been instrumental in revealing microbial diversity, it has inherent limitations: it often fails to resolve taxa at the species level, cannot directly assess biological function, is prone to PCR amplification biases, and is unsuitable for detecting non-bacterial community members like viruses and fungi [31] [32]. Shotgun metagenomics circumvents these limitations by providing access to the entire genetic complement of a community [31].

However, shotgun metagenomics presents its own set of challenges. The data is immensely complex and computationally intensive to analyze [29]. A primary difficulty is that most communities are so diverse that individual genomes are rarely covered completely by sequencing reads, making it hard to determine the genome of origin for any given read [29]. Furthermore, samples from host-associated environments (e.g., human tissue) can be overwhelmed by host DNA, which can complicate the detection of microbial signals, especially when microbial load is low [29]. Despite these challenges, ongoing advancements in sequencing technology and bioinformatics have made shotgun metagenomics an increasingly accessible and powerful tool for clinical and environmental microbiology [30].

Genome-Resolved Metagenomics: A Game Changer

Genome-resolved metagenomics is an advanced analytical approach within shotgun metagenomics that aims to reconstruct individual microbial genomes directly from the mixed sequence data [31]. This process involves piecing together short sequencing reads into longer sequences (contigs) and then grouping these contigs into putative genomes, known as Metagenome-Assembled Genomes (MAGs) [31] [33].

The construction of MAGs is a transformative process that enables a versatile and in-depth study of the microbiome. Its capabilities include:

- Expanding the Microbiological Census: Uncovering novel microbial species that constitute the "microbial dark matter," previously inaccessible through cultivation or amplicon sequencing [31].

- Enabling Pangenome Studies: Allowing for the investigation of genetic variation within a microbial species, which is crucial for understanding strain-level dynamics and their association with host phenotypes [31].

- Identifying Novel Genes and Proteins: Facilitating the discovery of new protein families and functional elements encoded within microbial communities [31].

- Tracking Microbial Transmission: Enabling researchers to track the spread of specific commensal or pathogenic bacterial strains within and between hosts [31].

- Linking Genetics to Phenotype: Revealing statistical associations between microbial Single Nucleotide Variants (SNVs) or Structural Variants (SVs) and host health and disease states [31].

- Metabolic Modeling: Allowing for genome-scale metabolic modeling of uncultured bacterial species, which helps predict community interactions and functional outputs [31].

The process of generating MAGs involves two key computational steps (see Figure 1 for a visual workflow):

- Assembly: Short sequencing reads are pieced together into longer contigs based on overlapping sequences. This is typically done using assemblers that employ De Bruijn graph models, such as metaSPAdes or MEGAHIT, which are efficient for handling the complexity of metagenomic data [31] [33].

- Binning: Contigs are grouped into bins that ideally represent individual genomes. Binning algorithms leverage sequence composition (e.g., k-mer frequency) and coverage information across multiple samples to cluster contigs that likely originate from the same genome [31] [33].
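The two signals named in the binning step, sequence composition and per-sample coverage, can be illustrated with a minimal sketch. It assumes a contig is available as a plain string and that its mean coverage in each sample has already been computed elsewhere; the function names `kmer_frequencies` and `feature_vector` are hypothetical and do not correspond to the internals of any particular binner. Real tools typically also merge reverse-complement k-mers, which is omitted here for brevity.

```python
from collections import Counter
from itertools import product


def kmer_frequencies(sequence: str, k: int = 4) -> dict[str, float]:
    """Return normalized k-mer frequencies for one contig (forward strand only)."""
    sequence = sequence.upper()
    all_kmers = ["".join(p) for p in product("ACGT", repeat=k)]
    counts = Counter(sequence[i:i + k] for i in range(len(sequence) - k + 1))
    # Only k-mers made of A/C/G/T are counted; windows containing N are ignored.
    total = sum(counts[kmer] for kmer in all_kmers)
    if total == 0:
        return {kmer: 0.0 for kmer in all_kmers}
    return {kmer: counts[kmer] / total for kmer in all_kmers}


def feature_vector(sequence: str, coverages: list[float], k: int = 4) -> list[float]:
    """Concatenate composition (k-mer frequencies) with per-sample coverage values."""
    freqs = kmer_frequencies(sequence, k)
    return [freqs[kmer] for kmer in sorted(freqs)] + list(coverages)


# Hypothetical contig with mean coverage measured in two samples.
vector = feature_vector("ACGTACGTTTGACCA", coverages=[12.5, 0.8], k=2)
```

Contigs whose feature vectors lie close together (similar composition and similar coverage across samples) are candidates for the same bin; the clustering step itself is left to the binning tool.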

The quality of a MAG is assessed by its completeness (the percentage of expected universal single-copy genes that are present, indicating how much of the genome was recovered) and its contamination (the percentage of single-copy genes found in more than one copy, indicating that DNA from other genomes has been incorrectly binned) [33]. Tools like CheckM are used for this quality assessment [33].
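As a rough illustration of how these two metrics follow from single-copy marker gene counts, consider the simplified sketch below. It is not CheckM's algorithm: CheckM uses lineage-specific marker sets and groups collocated markers, whereas this sketch treats every marker independently. The function name and the marker identifiers are hypothetical.

```python
def estimate_mag_quality(marker_counts: dict[str, int]) -> tuple[float, float]:
    """Estimate (completeness %, contamination %) from single-copy marker counts.

    marker_counts maps each expected single-copy marker gene to the number of
    copies detected in the bin (0 if absent).
    """
    n_markers = len(marker_counts)
    if n_markers == 0:
        raise ValueError("at least one marker gene is required")
    present = sum(1 for copies in marker_counts.values() if copies >= 1)
    extra_copies = sum(copies - 1 for copies in marker_counts.values() if copies > 1)
    completeness = 100.0 * present / n_markers
    contamination = 100.0 * extra_copies / n_markers
    return completeness, contamination


# Hypothetical bin: three of four markers found, one of them in duplicate.
completeness, contamination = estimate_mag_quality(
    {"marker_1": 1, "marker_2": 1, "marker_3": 2, "marker_4": 0}
)
```

In this toy bin, the missing marker lowers completeness and the duplicated marker raises contamination, which mirrors how the two scores are interpreted in practice.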

Table 1: Common Bioinformatics Tools for Genome-Resolved Metagenomics

| Tool | Primary Function | Key Feature |
|---|---|---|
| metaSPAdes [31] | De Novo Assembly | Uses De Bruijn graphs for assembling complex metagenomic data. |
| MEGAHIT [31] | De Novo Assembly | A computationally efficient assembler for large datasets. |
| CONCOCT, MaxBin2, metaBAT [33] | Binning | Individual binning tools that use composition and coverage. |
| metaWRAP [33] | Bin Refinement & Pipeline | Hybrid algorithm that consolidates bins from multiple methods to produce superior-quality MAGs. |
| CheckM [33] | Bin Quality Assessment | Estimates completeness and contamination of MAGs using lineage-specific marker genes. |

Impact of Low Microbial Load on Sequencing and Analysis

The accurate profiling of microbial communities is critically dependent on the abundance of microbial DNA in a sample. Low microbial load presents a significant challenge for shotgun metagenomics, impacting everything from DNA extraction to downstream biological interpretation. This is a common scenario in clinical samples like tissue biopsies, cerebrospinal fluid (CSF), and blood, where host DNA can vastly outnumber microbial DNA [29] [34].

The primary challenges associated with low microbial load include:

- Host DNA Contamination: Host DNA can constitute the overwhelming majority of sequenced reads, drastically reducing the sequencing depth available for microbial genomes and increasing the cost of obtaining sufficient microbial data [29].

- Reduced Detection Sensitivity: Low-abundance microbial taxa, including pathogens of clinical interest, may fall below the detection limit of standard analytical pipelines. Their reads may be too sparse for reliable assembly into contigs or for accurate abundance estimation [34].

- Assembly Difficulties: De novo assembly of novel genomes from low-abundance taxa is particularly challenging, as the sparse read coverage is unlikely to generate contigs of sufficient length and quality [34].

- Inaccurate Abundance Quantification: Standard relative abundance profiling can be misleading in low-biomass scenarios, as small changes in one population can artificially inflate or deflate the perceived abundance of others due to the compositional nature of the data [10].
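The compositional effect described in the last point can be made concrete with a small worked example. The counts below are invented for illustration only: taxon_A keeps the same absolute abundance in both conditions, yet its relative abundance drops sharply because taxon_B expands.

```python
def relative_abundance(absolute_counts: dict[str, float]) -> dict[str, float]:
    """Convert absolute counts (e.g., cells per sample) into proportions."""
    total = sum(absolute_counts.values())
    return {taxon: count / total for taxon, count in absolute_counts.items()}


# Hypothetical absolute loads: only taxon_B changes between conditions.
before = {"taxon_A": 1000, "taxon_B": 1000}
after = {"taxon_A": 1000, "taxon_B": 9000}

rel_before = relative_abundance(before)  # taxon_A is half of the community
rel_after = relative_abundance(after)    # taxon_A is now one tenth of it
```

A relative-abundance profile alone would suggest that taxon_A declined fivefold, even though its absolute load is unchanged. This is the distortion that absolute quantification is meant to remove.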

To overcome these challenges, specialized wet-lab and computational strategies are required:

- Molecular Enrichment: Techniques that selectively deplete host DNA (e.g., selective lysis of host cells followed by propidium monoazide treatment to inactivate the released DNA) or enrich for microbial DNA prior to sequencing can improve the ratio of microbial to host reads [29].

- Ultra-Deep Sequencing: Generating a very high volume of sequencing data can help capture sufficient reads from low-abundance members, though this approach is costly [30].

- Spike-In Controls: Adding a known quantity of exogenous cells or DNA (spike-ins) to each sample, ideally before DNA extraction, allows absolute microbial load to be estimated from relative sequencing data, converting compositional data into absolute abundances [10].

- Advanced Computational Profiling: Using sensitive, alignment-based tools that do not rely on assembly can help detect and quantify low-abundance taxa. Tools like ChronoStrain have been developed specifically to address these limitations.
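To see why host depletion and ultra-deep sequencing are traded off against each other, a back-of-the-envelope depth estimate is useful. The sketch below applies the standard coverage relation (coverage = reads × read length / genome size) and assumes that read fractions are proportional to DNA fractions; the function name and all example values are hypothetical.

```python
import math


def reads_required(
    target_coverage: float,
    genome_size_bp: int,
    read_length_bp: int,
    microbial_fraction: float,
    taxon_fraction: float,
) -> int:
    """Total reads needed so that one taxon reaches the target mean coverage.

    microbial_fraction: share of all reads that are microbial (the rest is host).
    taxon_fraction: share of microbial reads that come from the taxon of interest.
    """
    if not (0 < microbial_fraction <= 1 and 0 < taxon_fraction <= 1):
        raise ValueError("fractions must be in the interval (0, 1]")
    reads_from_taxon = target_coverage * genome_size_bp / read_length_bp
    return math.ceil(reads_from_taxon / (microbial_fraction * taxon_fraction))


# Hypothetical scenario: 10x coverage of a 5 Mb genome with 150 bp reads,
# for a taxon that makes up 5% of the microbial reads.
without_depletion = reads_required(10, 5_000_000, 150, 0.01, 0.05)  # 1% microbial
with_depletion = reads_required(10, 5_000_000, 150, 0.10, 0.05)     # 10% microbial
```

Under these assumptions, raising the microbial fraction from 1% to 10% cuts the required sequencing effort roughly tenfold, which is why enrichment is usually attempted before resorting to brute-force depth.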

ChronoStrain is a state-of-the-art Bayesian model designed for profiling strains, particularly those at low abundance, in longitudinal metagenomic data [34]. It explicitly models the presence or absence of each strain and produces a probability distribution over abundance trajectories, leveraging both the temporal information from multiple timepoints and the per-base uncertainty in sequencing quality scores to improve accuracy and lower the limit of detection [34]. Benchmarking on synthetic and real data has demonstrated that ChronoStrain outperforms other methods like StrainGST and mGEMS in accurately quantifying low-abundance strains [34].

Experimental Protocols and Methodologies

A Standard Shotgun Metagenomics Workflow

A typical shotgun metagenomics project involves a series of standardized steps from sample collection to data analysis. The following protocol outlines the critical stages.

Sample Collection & DNA Extraction

- Sample Collection: Collect samples (e.g., stool, saliva, tissue) using standardized, sterile protocols. For low-biomass samples, use ultraclean reagents and include negative ("blank") controls that are carried through extraction, library preparation, and sequencing to monitor contamination [30]. Immediate freezing at -80°C is recommended to preserve nucleic acid integrity [32].

- DNA Extraction: Use a commercial DNA extraction kit suitable for the sample type (e.g., QIAamp PowerFecal Pro DNA Kit for stool). The goal is to maximize DNA yield while minimizing bias. For complex communities, a rigorous lysis step involving enzymes like lysozyme, lysostaphin, and mutanolysin may be necessary to break down diverse cell walls [32].
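The blank controls collected at this stage only pay off if they are compared against the real samples during analysis. The following is a deliberately simple heuristic sketch, not a published decontamination method: it flags taxa whose mean relative abundance in the blanks is at least as high as in the samples. The function name, the threshold parameter, and the example profiles are all hypothetical.

```python
def flag_likely_contaminants(
    sample_profiles: list[dict[str, float]],
    blank_profiles: list[dict[str, float]],
    ratio_threshold: float = 1.0,
) -> set[str]:
    """Flag taxa that are as abundant (or more) in blanks as in true samples."""
    if not sample_profiles or not blank_profiles:
        raise ValueError("need at least one sample and one blank profile")

    def mean_abundance(profiles: list[dict[str, float]], taxon: str) -> float:
        return sum(p.get(taxon, 0.0) for p in profiles) / len(profiles)

    taxa = set().union(*sample_profiles, *blank_profiles)
    flagged = set()
    for taxon in taxa:
        in_blanks = mean_abundance(blank_profiles, taxon)
        in_samples = mean_abundance(sample_profiles, taxon)
        # A taxon never seen in blanks is not flagged by this heuristic.
        if in_blanks > 0 and in_blanks >= ratio_threshold * in_samples:
            flagged.add(taxon)
    return flagged


# Hypothetical relative-abundance profiles.
samples = [{"taxon_A": 0.9, "taxon_B": 0.1}]
blanks = [{"taxon_B": 0.8, "taxon_C": 0.2}]
suspects = flag_likely_contaminants(samples, blanks)
```

Flagged taxa are candidates for closer inspection rather than automatic removal, since genuine low-abundance community members can also appear in blanks through cross-contamination.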

Library Preparation & Sequencing

- Library Preparation: Fragment the purified DNA mechanically or enzymatically. Ligate platform-specific adapter sequences to the fragments to create a sequencing library. The choice of insert size and PCR amplification cycles should be optimized; excessive PCR can introduce bias [32].

- Sequencing Platform Selection: The Illumina platform is dominant due to its high output and accuracy [30]. For better resolution in complex regions or to obtain longer reads that aid assembly, platforms like PacBio (long-read) can be considered [30]. The choice involves a trade-off between read length, accuracy, cost, and throughput.

Computational Analysis

- Quality Control & Host Filtering: Process raw sequencing reads with tools like FastQC and Trimmomatic to remove low-quality bases and adapter sequences. Subsequently, align reads to the host genome (e.g., human GRCh38) using tools like BWA and remove matching reads to isolate microbial reads [33].

- Taxonomic & Functional Profiling (Assembly-free): For a direct assessment of community composition and function, reads can be classified directly against reference databases of microbial genes and genomes using tools like Kraken2 (k-mer-based taxonomic classification) and HUMAnN (metabolic pathways) [29] [30].

- Genome-Resolved Analysis (Assembly-based): As detailed in the genome-resolved metagenomics section above, this involves assembly (with metaSPAdes or MEGAHIT), binning (with metaWRAP, which consolidates results from CONCOCT, MaxBin2, and metaBAT), and quality assessment (with CheckM) to generate high-quality MAGs [33].
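The host-filtering step in the list above reduces to a simple operation once an aligner has identified which reads match the host genome: keep every read whose identifier is not in the host set. The sketch below assumes the host-aligned read IDs have already been extracted from the aligner's output by some other means; the helper names and the in-memory demo records are hypothetical, and paired-end handling (dropping both mates) is omitted.

```python
import io
from typing import Iterable, Iterator, TextIO

FastqRecord = tuple[str, str, str]  # (read_id, sequence, quality)


def read_fastq(handle: TextIO) -> Iterator[FastqRecord]:
    """Yield records from a four-line-per-record FASTQ stream."""
    while True:
        header = handle.readline().rstrip("\n")
        if not header:
            return
        sequence = handle.readline().rstrip("\n")
        handle.readline()  # '+' separator line
        quality = handle.readline().rstrip("\n")
        read_id = header[1:].split()[0]  # drop '@' and any description text
        yield read_id, sequence, quality


def remove_host_reads(
    records: Iterable[FastqRecord], host_read_ids: set[str]
) -> Iterator[FastqRecord]:
    """Keep only reads that were not identified as host-derived."""
    for read_id, sequence, quality in records:
        if read_id not in host_read_ids:
            yield read_id, sequence, quality


# Demo with two in-memory reads; read1 is assumed to have aligned to the host.
demo_fastq = io.StringIO(
    "@read1 lane1\nACGT\n+\nIIII\n"
    "@read2 lane1\nTTGA\n+\nIIII\n"
)
kept_ids = [rid for rid, _, _ in remove_host_reads(read_fastq(demo_fastq), {"read1"})]
```

Tracking the number of reads removed at this step also gives a per-sample estimate of the host fraction, which is worth reporting for low-biomass samples.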

Specialized Protocol: Quantitative Profiling with Spike-Ins

For absolute quantification in low-biomass contexts, a spike-in controlled protocol is essential [10].

- Obtain Spike-In Control: Use a commercially available spike-in control comprising known, exogenous bacterial species at a fixed ratio (e.g., ZymoBIOMICS Spike-in Control I) [10].

- Add Spike-In to Sample: Prior to DNA extraction, add a defined amount of the spike-in control to each sample (e.g., an amount expected to contribute roughly 10% of the total DNA input mass). Adding the same known quantity to every sample before extraction lets the spike-in control for variation in extraction efficiency and PCR amplification [10].

- Proceed with Standard Workflow: Continue with DNA extraction, library preparation, and sequencing as described in the standard shotgun metagenomics workflow above.

- Bioinformatic Quantification: After sequencing, perform taxonomic profiling. The known absolute abundance of the spike-in organisms in the initial mixture allows for the calculation of a scaling factor, which can be used to convert the relative abundances of all other taxa in the sample into absolute abundances [10].
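The scaling-factor calculation in the final step can be sketched as follows. This is a minimal illustration that assumes read counts are proportional to cell numbers, ignoring differences in genome size, lysis efficiency, and GC bias that a production workflow would need to correct for. The function name, taxon labels, and quantities are hypothetical and are not taken from any kit documentation.

```python
def absolute_abundances(
    read_counts: dict[str, int],
    spike_in_taxa: set[str],
    spike_in_cells_added: float,
) -> dict[str, float]:
    """Convert read counts to estimated cell counts using a spike-in scaling factor."""
    spike_reads = sum(read_counts.get(taxon, 0) for taxon in spike_in_taxa)
    if spike_reads == 0:
        raise ValueError("no spike-in reads detected; cannot compute a scaling factor")
    cells_per_read = spike_in_cells_added / spike_reads  # the scaling factor
    return {
        taxon: count * cells_per_read
        for taxon, count in read_counts.items()
        if taxon not in spike_in_taxa
    }


# Hypothetical sample: 1e6 spike-in cells were added before extraction.
counts = {"spike_1": 500, "spike_2": 500, "taxon_A": 2000, "taxon_B": 100}
estimated_cells = absolute_abundances(counts, {"spike_1", "spike_2"}, 1_000_000)
```

Because the same number of spike-in cells is added to every sample, a sample with fewer native microbes yields proportionally more spike-in reads, and the scaling factor shrinks accordingly.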

Figure 1: Shotgun Metagenomics with Genome-Resolved Analysis Workflow. The diagram outlines the core pathway from sample to biological insights, including a specialized path (dashed lines) for absolute quantification using spike-in controls, which is critical for low microbial load studies.

Table 2: Research Reagent Solutions for Shotgun Metagenomics

| Item | Function/Application | Example(s) |
|---|---|---|
| Mock Community Standards | Benchmarking and validating the entire wet-lab and computational workflow. Contains a defined mix of microbial genomes at known abundances. | ZymoBIOMICS Microbial Community Standard (D6300); ZymoBIOMICS Gut Microbiome Standard (D6331) [10]. |
| Spike-In Controls | Added to samples prior to DNA extraction to enable absolute quantification of microbial load and abundances. | ZymoBIOMICS Spike-in Control I (D6320) [10]. |
| DNA Extraction Kits | Isolation of high-molecular-weight DNA from complex biological samples. Critical for minimizing bias. | QIAamp PowerFecal Pro DNA Kit [10]. |
| Library Prep Kits | Preparation of sequencing libraries compatible with high-throughput platforms. | Illumina DNA Prep; KAPA HyperPrep Kit [30]. |
| Reference Databases (Taxonomic) | Used for classifying sequencing reads or contigs into taxonomic groups. | SILVA, GreenGenes, RDP [30] [32]. |
| Reference Databases (Functional) | Used for annotating the functional potential of genes and metabolic pathways. | KEGG, UniProt, eggNOG, COG, CARD (for antibiotic resistance genes) [30]. |

Shotgun metagenomics and genome-resolved analysis have fundamentally transformed microbial ecology and microbiome medicine. By moving beyond the limitations of amplicon sequencing, these approaches provide a comprehensive view of the taxonomic and functional landscape of microbial communities. The ability to reconstruct metagenome-assembled genomes (MAGs) from complex sequence data is particularly powerful, enabling strain-level tracking, the discovery of novel species and genes, and the development of hypotheses about microbe-host interactions.

As the field progresses, the challenges posed by low microbial load environments, such as many clinical samples, are being met with innovative wet-lab and computational solutions. The integration of spike-in controls for absolute quantification and the development of sensitive algorithms like ChronoStrain are pushing the boundaries of detection and quantification. The continued growth of public genomic databases, coupled with more powerful and user-friendly bioinformatic pipelines like metaWRAP, is making genome-resolved metagenomics more accessible. Just as decoding the human genome ushered in the era of genomic medicine, the systematic decoding of commensal microbial genomes through genome-resolved metagenomics is accelerating our journey into the era of microbiome-based diagnostics and therapeutics.

In microbiome research, particularly studies involving low microbial load environments, standard sequencing outputs provide only relative abundance data, which can be profoundly misleading when total microbial biomass varies between samples. The use of internal controls, specifically spike-in standards, transforms relative microbiome data into absolute quantitative measurements. This technical guide explores the critical importance of spike-in controls for accurate microbial quantification, detailing experimental protocols and analytical frameworks that enable researchers to overcome the significant limitations of relative abundance data in low biomass studies. By providing a pathway to absolute quantification, these methods reveal true biological changes that would otherwise be obscured by the compositional nature of sequencing data, thereby addressing a fundamental challenge in microbial ecology and clinical diagnostics.