Navigating the Pitfalls: A Comprehensive Guide to Understanding and Mitigating False Positives in Low-Biomass Microbiome Research



Abstract

This article provides a systematic framework for researchers, scientists, and drug development professionals grappling with the challenge of false positives in low-biomass microbiome studies. It explores the fundamental sources and impacts of false signals—from contamination and host DNA misclassification to computational artifacts—across critical environments like tumors, blood, and pharmaceuticals. The content details robust methodological approaches, from experimental design to advanced bioinformatic pipelines like MAP2B and Kraken2 with SSR confirmation, which significantly enhance specificity. A strong emphasis is placed on troubleshooting, optimization through rigorous controls, and validation strategies for benchmarking tool performance. By synthesizing foundational knowledge with practical, actionable solutions, this guide aims to empower the generation of reliable, reproducible data to advance biomedical discovery and clinical applications.

The Critical Challenge: Unmasking the Sources and Impact of False Positives in Low-Biomass Data

Low-biomass environments harbor minimal levels of microorganisms, often approaching the detection limits of standard DNA-based sequencing methods [1]. In these ecosystems, the microbial signal is faint, making them exceptionally vulnerable to contamination from external DNA sources, which can disproportionately influence results and lead to spurious biological conclusions [1] [2]. While sometimes quantitatively defined as containing fewer than 10,000 microbial cells per milliliter, it is more accurate to consider microbial biomass as a continuum, with analytical challenges intensifying as biomass decreases [2].

These environments are found across diverse fields, from human health to pharmaceutical manufacturing. The core challenge they present is the proportional nature of sequence-based data; when the target DNA signal is extremely low, even minute amounts of contaminating DNA can constitute most of the sequenced material, creating false positives and distorting ecological patterns or evolutionary signatures [1] [3].
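
The proportional effect described above can be made concrete with a little arithmetic. The sketch below is illustrative only: it assumes reads are sampled in proportion to DNA mass, and the function name and the picogram values are hypothetical, not taken from the cited studies.

```python
def contaminant_fraction(sample_dna_pg: float, contaminant_dna_pg: float) -> float:
    """Expected share of reads that come from contaminants.

    Assumes (simplification) that reads are drawn in proportion to DNA mass.
    """
    total = sample_dna_pg + contaminant_dna_pg
    if total <= 0:
        raise ValueError("total DNA mass must be positive")
    return contaminant_dna_pg / total


# Hypothetical scenario: a fixed 1 pg of reagent-derived DNA added to
# samples of decreasing microbial biomass.
for sample_pg in (10_000.0, 100.0, 1.0, 0.1):
    frac = contaminant_fraction(sample_pg, contaminant_dna_pg=1.0)
    print(f"{sample_pg:>8} pg sample DNA -> {frac:.2%} contaminant reads")
```

The same absolute amount of contaminant DNA is negligible in a high-biomass sample but makes up most of the data once the sample DNA falls to a comparable mass.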

Table 1: Examples of Low-Biomass Environments and Their Significance

| Category | Specific Examples | Research/Industrial Significance |
|---|---|---|
| Human Tissues | Fetal tissues, placenta, blood, lower respiratory tract, breast milk, some cancerous tumors [1] [2] [4] | Understanding disease etiology, infant development, and host-microbe interactions in sterile sites [2] [4]. |
| Natural & Built Environments | Atmosphere, hyper-arid soils, deep subsurface, treated drinking water, ice cores, cleanrooms [1] [5] | Planetary protection, astrobiology, assessing environmental contamination, and manufacturing sterility [5]. |
| Pharmaceutical Context | Metal surfaces, processing equipment, sterile drug products, and medical devices [1] [5] | Ensuring product safety, preventing microbial contamination, and complying with Good Manufacturing Practices (GMP). |

The accurate characterization of low-biomass environments is fraught with methodological pitfalls. Acknowledging and controlling for these sources of error is paramount, as they have fueled several scientific controversies, such as debates surrounding the existence of a placental microbiome [1] [2].

- External Contamination: DNA can be introduced at any stage, from sample collection to sequencing. Primary sources include human operators, sampling equipment, laboratory reagents, and kits [1] [2]. The microbial DNA inherent to molecular biology reagents is often called the "kitome" and is a critical confounding factor [5].

- Host DNA Misclassification: In host-associated samples like tumors or blood, over 99.99% of sequenced DNA can be host-derived [2]. During bioinformatic analysis, this host DNA can be misclassified as microbial, generating significant noise and potential false positives, especially if host DNA levels are confounded with an experimental phenotype [2].

- Cross-Contamination (Well-to-Well Leakage): Also known as the "splashome," this refers to the transfer of DNA between samples processed concurrently, such as in adjacent wells of a 96-well plate [1] [2]. This can compromise the integrity of all samples and violates the core assumptions of many computational decontamination tools [2].

- Batch Effects and Processing Bias: Technical variability introduced by different reagent batches, personnel, or laboratory protocols can create strong batch effects [2]. Furthermore, standard laboratory procedures can have variable efficiency for different microbial taxa, a phenomenon known as processing bias, which distorts the true biological signal [2].

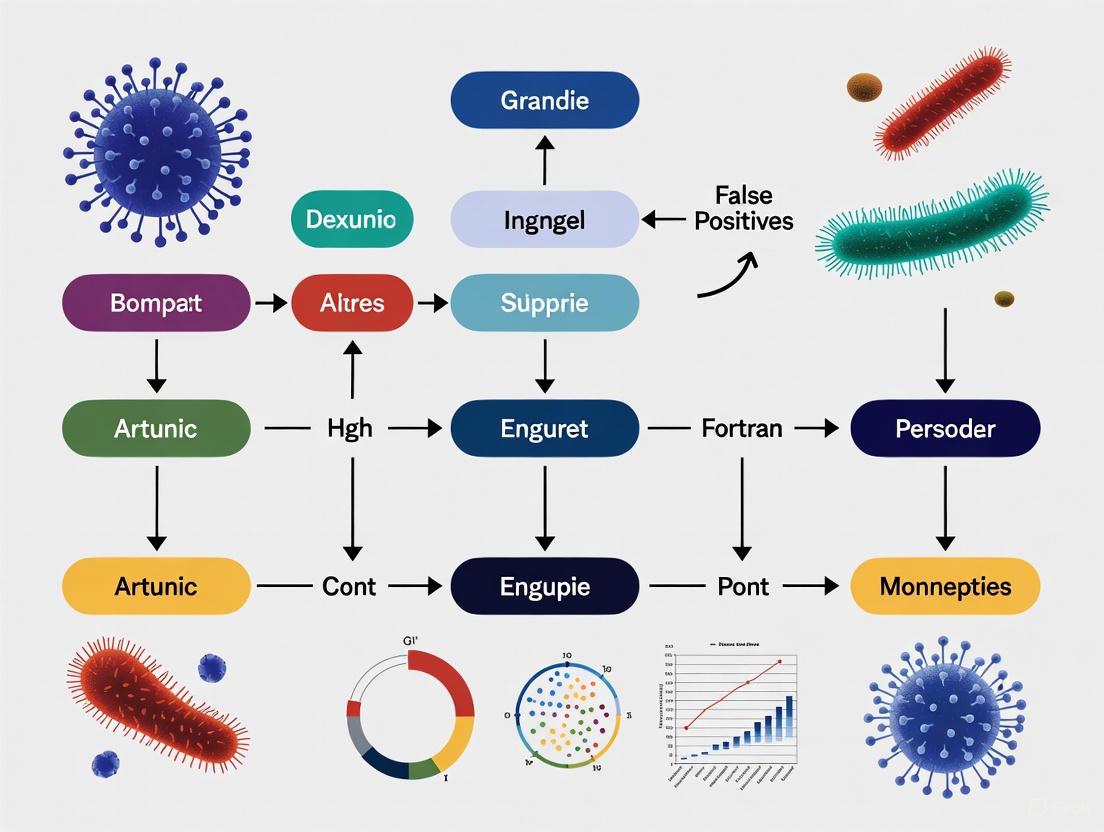

The following diagram illustrates how these challenges can introduce false positives throughout the research workflow, from sample collection to data analysis.

Impact of Confounding

A critical concept in low-biomass research is confounding. When batch processing is perfectly confounded with a phenotype of interest—for example, if all case samples are processed in one batch and all controls in another—the technical artifacts (contamination, bias) can create entirely artifactual signals that are misinterpreted as biological [2]. In an unconfounded design, where cases and controls are randomly distributed across processing batches, these artifacts are more likely to manifest as increased background noise rather than false discoveries [2].

Best Practices and Experimental Methodologies

Robust study design is the most effective defense against false positives. This involves a two-pronged approach: meticulous experimental planning to minimize contamination and the strategic use of controls to identify any residual contamination.

Foundational Experimental Design Principles

- Avoid Batch Confounding: The experimental design must ensure that phenotypes or covariates of interest are not confounded with processing batches. Active balancing tools are preferable to simple randomization [2].

- Implement Rigorous Decontamination: All sampling equipment, tools, and surfaces should be decontaminated. Effective protocols often involve treatment with 80% ethanol to kill cells, followed by a DNA-degrading solution like sodium hypochlorite (bleach) or UV-C irradiation to remove residual DNA [1].

- Use Personal Protective Equipment (PPE): Operators should wear gloves, masks, and clean suits to limit the introduction of human-associated contaminants from skin, hair, or aerosols [1].
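
As an illustration of the first principle, the sketch below deals samples into batches so that each batch receives a similar ratio of cases and controls. It is a minimal, hypothetical helper (the name `assign_balanced_batches` and the group labels are assumptions), not one of the active balancing tools referred to in [2].

```python
import random
from collections import defaultdict


def assign_balanced_batches(samples, n_batches, seed=0):
    """Assign (sample_id, group) tuples to batches with balanced group ratios.

    Samples are shuffled within each group and then dealt round-robin, so the
    count of each group differs by at most one between any two batches.
    """
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample_id, group in samples:
        by_group[group].append(sample_id)

    batches = {b: [] for b in range(n_batches)}
    offset = 0  # continue dealing where the previous group stopped
    for group in sorted(by_group):
        ids = by_group[group]
        rng.shuffle(ids)
        for i, sample_id in enumerate(ids):
            batches[(offset + i) % n_batches].append((sample_id, group))
        offset += len(ids)
    return batches


# Hypothetical study: 12 cases and 12 controls over 4 extraction batches.
study = [(f"case{i}", "case") for i in range(12)]
study += [(f"ctrl{i}", "control") for i in range(12)]
for batch, members in assign_balanced_batches(study, n_batches=4).items():
    groups = [g for _, g in members]
    print(batch, groups.count("case"), "cases,", groups.count("control"), "controls")
```

Real studies usually need to balance several covariates at once (for example collection site or storage time), which this single-factor sketch does not attempt.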

Essential Research Reagent Solutions and Controls

The following table details key reagents and controls that are non-negotiable for rigorous low-biomass research.

Table 2: Key Research Reagent Solutions and Controls for Low-Biomass Studies

| Item | Function & Purpose | Specific Examples & Protocols |
|---|---|---|
| DNA Decontamination Reagents | To remove microbial cells and degrade environmental DNA on surfaces and equipment. | Sodium hypochlorite (bleach), hydrogen peroxide, UV-C light, commercially available DNA removal solutions [1]. |
| DNA-Free Consumables | To provide sterile, DNA-free collection vessels and tools for sample integrity. | Pre-treated (autoclaved/UV-irradiated) plasticware, single-use DNA-free swabs [1]. |
| Process Controls (Multiple Types) | To determine the identity, source, and extent of contamination introduced at various stages. | Blank Extraction Controls: tubes with only lysis buffer processed through DNA extraction. No-Template Controls (NTC): water used as a sample in PCR/library prep. Sampling Controls: swabs of air, sampling equipment, or PPE [1] [2] [5]. |
| Mock Communities | To assess accuracy, precision, and bias of the entire workflow, from DNA isolation to bioinformatic classification. | ZymoBIOMICS Microbial Community Standards (D6300/D6305) [4]. |
| Specialized DNA Isolation Kits | To efficiently lyse microbial cells and isolate high-quality DNA while removing inhibitors that co-purify from certain matrices (e.g., milk). | DNeasy PowerSoil Pro Kit (Qiagen) and MagMAX Total Nucleic Acid Isolation Kit (Thermo Fisher) have shown consistent performance with low contamination in milk studies [4]. |

Detailed Methodological Workflow: Surface Sampling for Pharmaceutical Controls

The following protocol, adapted from a study on ultra-low biomass cleanrooms, exemplifies a rigorous approach suitable for pharmaceutical manufacturing environments [5].

Protocol Steps:

- Surface Pre-wetting: Spray the target surface area (e.g., ~1 m²) with sterile, DNA-free PCR-grade water using a UV-treated spray bottle [5].

- Sample Collection: Use a specialized sampling device like the Squeegee-Aspirator for Large Sampling Area (SALSA) or a standardized swab. The SALSA device uses a vacuum and squeegee action to transfer the sampling liquid directly into a collection tube, bypassing the low recovery efficiency associated with elution from swabs [5].

- Sample Concentration: Concentrate the collected liquid using a device like the InnovaPrep CP-150, which uses hollow fiber filtration to concentrate microorganisms and DNA into a small volume (e.g., 150 µL) suitable for downstream analysis [5].

- DNA Extraction and Library Preparation: Extract DNA using a dedicated kit (e.g., Maxwell RSC). For sequencing, protocols may require modification, such as additional PCR cycles or the use of carrier DNA, to achieve sufficient library concentration from ultra-low inputs [5].

- Parallel Control Processing: Crucially, process control samples (e.g., sprayer water, sterile water) alongside the actual samples through every stage—collection, concentration, DNA extraction, and sequencing [5].

Computational and Bioinformatic Strategies

Even with optimal wet-lab practices, sophisticated computational tools are essential to distinguish true signal from noise. A significant challenge is that false positives are not necessarily low-abundance taxa, making simple abundance filtering ineffective [3].

Decontamination and Profiling Tools

- Control-Based Decontamination: Tools like decontam use the presence and abundance of sequence features in negative control samples to statistically identify and remove contaminants from experimental samples [2].

- Advanced Profiling with MAP2B: A key innovation is the MAP2B profiler, which moves beyond traditional markers or whole genomes. It uses species-specific Type IIB restriction endonuclease digestion sites as references. This approach leverages multiple features to distinguish true positives, including genome coverage (the uniformity of read distribution across the genome), sequence count, and taxonomic count, achieving superior precision in species identification [3].
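
The control-based idea can be sketched as a presence/absence comparison between negative controls and true samples. The code below is a simplified illustration using a one-sided Fisher's exact test written with the standard library. It is not the decontam package's own code or scoring, and the function name and counts are hypothetical.

```python
from math import comb


def fisher_one_sided_p(ctrl_present, ctrl_total, samp_present, samp_total):
    """P-value that a feature is over-represented in negative controls.

    Under the null hypothesis, presence is independent of control/sample
    status, so the number of controls containing the feature follows a
    hypergeometric distribution. A small p-value flags a likely contaminant.
    """
    total = ctrl_total + samp_total
    present = ctrl_present + samp_present
    denom = comb(total, ctrl_total)
    p = 0.0
    for k in range(ctrl_present, min(ctrl_total, present) + 1):
        p += comb(present, k) * comb(total - present, ctrl_total - k) / denom
    return p


# Hypothetical feature seen in 5 of 5 blanks but only 2 of 20 samples.
print(fisher_one_sided_p(5, 5, 2, 20))
# Hypothetical feature seen in 0 of 5 blanks and 15 of 20 samples.
print(fisher_one_sided_p(0, 5, 15, 20))
```

A test of this kind assumes that anything found in blanks is an external contaminant, so it is not suited to detecting well-to-well leakage, where the DNA in a blank comes from neighboring samples.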

A Framework for Validating Findings

The following workflow integrates experimental and computational best practices to minimize false positives.

Validation Steps:

- A. Rigorous Wet-Lab Design: The foundation is a properly designed experiment with unconfounded batches and comprehensive controls [2].

- B. Control-Based Decontamination: Use sequencing data from negative controls to computationally subtract contaminants from the dataset [2].

- C. Advanced Profiling: Apply a high-precision profiler like MAP2B, which uses a multi-feature model (genome coverage, taxonomic count) to further eliminate false positives that remain after control-based decontamination [3].

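- D. Abundance & Prevalence Thresholds: Apply conservative thresholds, retaining only taxa that are consistently detected above a predefined abundance across multiple replicates. Because false positives are not necessarily low-abundance taxa [3], treat this step as a complement to steps B and C rather than a substitute.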

- E. Biological Plausibility Assessment: Finally, interpret the remaining microbial signals in the context of existing literature and biological plausibility for the given environment [1] [2].
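
Step D can be sketched as a simple filter over replicate measurements. The function name, the data layout, and the default cutoffs below are hypothetical choices for illustration; suitable thresholds depend on the study and are not specified in the sources cited here.

```python
def filter_taxa(abundance, min_rel_abundance=0.001, min_replicate_fraction=0.5):
    """Keep taxa that pass an abundance cutoff in enough replicates.

    abundance: dict mapping taxon name -> list of relative abundances,
    one value per replicate of the same sample.
    """
    kept = {}
    for taxon, values in abundance.items():
        if not values:
            continue  # no replicate data, nothing to evaluate
        hits = sum(1 for v in values if v >= min_rel_abundance)
        if hits / len(values) >= min_replicate_fraction:
            kept[taxon] = values
    return kept


# Hypothetical relative abundances across three replicates.
profile = {
    "Taxon A": [0.020, 0.015, 0.030],   # consistently detected
    "Taxon B": [0.050, 0.000, 0.000],   # abundant once, absent otherwise
    "Taxon C": [0.0002, 0.0001, 0.0],   # always below the cutoff
}
print(sorted(filter_taxa(profile, min_replicate_fraction=2 / 3)))
```

Note that "Taxon B" is removed because it is inconsistent across replicates, not because it is rare, which is the case that plain abundance filtering would miss.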

Low-biomass microbiome research, which explores environments with minimal microbial presence such as human tissues, treated drinking water, and the deep subsurface, faces unique challenges that can compromise data integrity [1]. When studying these environments where microbial signals approach the limits of detection, the risk of false positives increases substantially through three primary mechanisms: external contamination, host DNA misclassification, and well-to-well leakage [2]. These pitfalls have led to controversies in the field, including debates about the existence of microbiomes in human placenta, blood, and tumors, where initial findings were later attributed to methodological artifacts rather than true biological signals [1] [2]. This technical guide examines these critical challenges and provides evidence-based strategies for accurate data generation and interpretation within the broader context of understanding false positives in low-biomass microbiome research.

External Contamination

External contamination refers to the introduction of microbial DNA from sources other than the sample of interest, occurring throughout experimental workflows from sample collection to sequencing [1] [2]. In low-biomass environments, where target DNA is minimal, contaminants can constitute a substantial proportion of the final sequencing data, potentially leading to erroneous biological conclusions [1]. Contamination sources are diverse and include sampling equipment, laboratory reagents, kits, personnel, and the laboratory environment itself [1]. The proportional nature of sequence-based datasets means that even minute amounts of contaminant DNA can drastically influence results and their interpretation when the authentic microbial signal is faint [1].

The impact of external contamination is particularly pronounced in clinical and environmental studies where findings inform significant health or ecological conclusions. For instance, contamination has distorted ecological patterns and evolutionary signatures, caused false attribution of pathogen exposure pathways, and led to inaccurate claims of microbes in various environments [1]. The controversy surrounding the 'placental microbiome' exemplifies how contamination issues can shape scientific debate: initial reports of a resident placental microbiome were later challenged by studies showing that the signal could be explained by contaminants detected in negative controls [1] [2].

Prevention and Control Strategies

Preventing external contamination requires meticulous planning and execution at every experimental stage. The table below summarizes key contamination sources and corresponding mitigation strategies:

Table 1: Strategies to Mitigate External Contamination

| Contamination Source | Prevention Strategies | Control Recommendations |
|---|---|---|
| Sampling Equipment & Personnel | Decontaminate with 80% ethanol followed by DNA-degrading solutions (e.g., bleach, UV-C light); use personal protective equipment (PPE) including gloves, coveralls, and masks [1]. | Include swabs of PPE, air exposure controls, and surface swabs as sampling controls [1]. |
| Reagents & Kits | Use DNA-free reagents; pre-treat plasticware/glassware with autoclaving or UV-C sterilization; select kits with minimal microbial DNA [1]. | Include extraction blanks (reagents without sample) and library preparation controls [2]. |
| Laboratory Environment | Implement physical separation of pre- and post-PCR areas; use dedicated equipment for low-biomass work; maintain clean workspaces [1]. | Process controls alongside samples through all experimental steps to account for environmental contaminants [1]. |

Effective contamination control relies on comprehensive experimental designs that include multiple types of control samples. These controls should represent all potential contamination sources throughout the study [2]. Different control types serve distinct purposes: empty collection kits reveal contaminants from sampling materials; extraction blanks identify kit-borne contaminants; and no-template controls detect contamination during amplification [2]. Researchers should include multiple controls of each type, as contamination can be stochastic, and a single control may not capture all contaminants [2].

Host DNA Misclassification

Mechanisms and Consequences

Host DNA misclassification occurs when host-derived sequences are incorrectly identified as microbial in origin, particularly in metagenomic analyses of host-associated samples [2]. This phenomenon is especially problematic in low-biomass samples where host DNA can constitute the vast majority of sequenced material—for example, in tumor microbiome studies, only approximately 0.01% of sequenced reads may be truly microbial [2]. While sometimes termed "host contamination," this characterization is somewhat inaccurate since host DNA genuinely originates from the sample itself rather than external sources [2].

In 16S amplicon sequencing, a primary mechanism behind host DNA misclassification is PCR mis-priming, in which "universal" bacterial primers anneal to human DNA sequences under suboptimal conditions [6]. This issue is particularly prevalent in 16S amplicon sequencing of human intestinal biopsy samples using the commonly employed V3-V4 primers [6]. Research has identified human sequences on chromosomes 5, 11, and 17 as the source of most off-target sequences, which typically share a 5' motif and are approximately 300 bp in length [6]. When these off-target amplicons are produced, they can be misclassified as bacterial sequences, creating false positives and obscuring true biological signals.

The consequences of host DNA misclassification extend beyond simple noise generation. Left unaddressed, host off-target sequences can lead to false bacterial identifications and obscure significant differences in microbiota composition [6]. In severe cases, this has led to retractions of high-profile studies and questioning of entire research fields, such as when host off-targets misclassified as bacteria led to false positive bacterial detection in brain tissues, calling into question discoveries regarding the brain microbiome [6].

Experimental and Computational Solutions

Multiple strategies exist to address host DNA misclassification, ranging from wet-lab procedures to bioinformatic corrections:

Table 2: Approaches to Mitigate Host DNA Misclassification

| Approach | Methodology | Considerations |
|---|---|---|
| Wet-Lab Methods | | |
| Primer Selection | Use primers targeting V1-V2 regions instead of V3-V4 [6]. | May underrepresent archaea and certain taxa like Prevotella, Streptococcus, and Fusobacterium [6]. |
| C3 Spacer Modification | Incorporate C3 spacer-modified nucleotides targeting off-target sequences to block mis-priming [6]. | Prevents off-target formation upstream without altering core protocol; retains use of standard V3-V4 primers [6]. |
| Host DNA Depletion | Implement procedures to reduce host DNA proportion before sequencing. | Potential risk of simultaneously depleting microbial DNA; requires optimization [2]. |
| Bioinformatic Methods | | |
| Reference-Based Filtering | Align reads to host reference genome (e.g., GRCh38) using tools like Bowtie2 or BWA; remove aligned reads [7] [6]. | Standard approach but wastes sequencing depth; reduces estimated alpha diversity [6]. |
| Double Human Read Removal | Apply multiple alignment tools sequentially for more comprehensive host read removal [7]. | Increases computational time but may improve host DNA detection in spatial microbiome studies [7]. |

The following diagram illustrates a recommended bioinformatic workflow for comprehensive host DNA removal in spatial host-microbiome studies:

Figure 1: Bioinformatic workflow for host DNA removal and microbiome decontamination, adapted from spatial host-microbiome profiling research [7].
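
The reference-based filtering step can be sketched as a pass over alignment records. The example below assumes reads were already aligned to a host reference and written in SAM format. It relies only on the standard SAM FLAG bits (0x1 paired, 0x4 read unmapped, 0x8 mate unmapped) and does not invoke Bowtie2 or BWA. The function name and the toy records are hypothetical.

```python
READ_PAIRED = 0x1    # template has multiple segments
READ_UNMAPPED = 0x4  # this read did not align to the host reference
MATE_UNMAPPED = 0x8  # the mate did not align to the host reference


def non_host_read_names(sam_lines):
    """Return names of reads with no alignment to the host reference.

    Conservative rule: a pair is kept only if both mates are unmapped, and a
    read name is discarded if any of its records indicates a host alignment.
    """
    candidates, host = set(), set()
    for line in sam_lines:
        if not line.strip() or line.startswith("@"):
            continue  # skip blank lines and SAM header lines
        fields = line.rstrip("\n").split("\t")
        name, flag = fields[0], int(fields[1])
        unmapped = bool(flag & READ_UNMAPPED)
        mate_ok = not (flag & READ_PAIRED) or bool(flag & MATE_UNMAPPED)
        if unmapped and mate_ok:
            candidates.add(name)
        else:
            host.add(name)
    return candidates - host


# Toy SAM records (hypothetical): one unaligned pair, one host-aligned pair.
records = [
    "@HD\tVN:1.6",
    "readA\t77\t*\t0\t0\t*\t*\t0\t0\tACGT\tFFFF",
    "readA\t141\t*\t0\t0\t*\t*\t0\t0\tACGT\tFFFF",
    "readB\t99\tchr5\t100\t42\t4M\t=\t200\t104\tACGT\tFFFF",
    "readB\t147\tchr5\t200\t42\t4M\t=\t100\t-104\tACGT\tFFFF",
]
print(non_host_read_names(records))
```

For the "double human read removal" approach, the surviving reads would be realigned with a second aligner and filtered again with the same rule.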

Well-to-Well Leakage

Characteristics and Detection

Well-to-well leakage (also termed cross-contamination or "splashome") is a previously underappreciated form of contamination in which DNA transfers between samples processed concurrently in multi-well plates [2] [8]. It occurs primarily during DNA extraction rather than PCR amplification and is more frequent with plate-based methods than with single-tube extraction [8]. Empirical studies demonstrate that well-to-well leakage follows a distance-decay relationship, with the highest contamination rates occurring in immediately adjacent wells and rare events detected up to 10 wells apart [8].

The detection of well-to-well contamination requires specialized experimental designs and analytical approaches. Minich et al. (2019) developed a method using unique bacterial "source" isolates placed in specific wells across plates containing alternating low-biomass "sink" bacteria and no-template blanks [8]. This design enabled precise tracking of sequence transfer between wells. Subsequent research has employed strain-resolved analyses to identify well-to-well contamination in large-scale clinical metagenomic datasets by mapping strain sharing patterns to DNA extraction plate layouts [9]. These approaches reveal that nearby unrelated sample pairs are significantly more likely to share strains than those farther apart when well-to-well contamination has occurred [9].

The impact of well-to-well leakage extends to fundamental microbiome metrics, negatively affecting both alpha and beta diversity measurements [8]. This effect is most pronounced in lower biomass samples, where contaminating DNA constitutes a larger proportion of the total signal [8]. Importantly, well-to-well leakage violates the core assumption of most computational decontamination methods that microbes found in blanks represent external contaminants [2] [8]. Since the contaminating DNA in this case originates from other samples within the study, standard decontamination approaches that remove taxa appearing in negative controls will be ineffective and may inadvertently remove legitimate biological signal [8].

Methodological Recommendations

Based on empirical studies, the following strategies help minimize and account for well-to-well leakage:

Table 3: Strategies to Address Well-to-Well Leakage

| Strategy | Implementation | Rationale |
|---|---|---|
| Sample Randomization | Randomize samples across plates rather than grouping by experimental condition [8]. | Prevents systematic bias where contamination correlates with study groups. |
| Biomass Matching | Process samples with similar biomasses together when possible [8]. | Reduces directional contamination from high to low biomass samples. |
| Extraction Method Selection | Use manual single-tube extractions or hybrid plate-based cleanups for most critical low-biomass samples [8]. | Plate methods have more well-to-well contamination; single-tube methods have higher background contaminants [8]. |
| Comprehensive Controls | Include multiple negative controls distributed across plates, not just one per plate [2]. | Enables detection of spatial contamination patterns; single controls may miss contamination sources. |

Evidence from strain-resolved analyses demonstrates that well-to-well contamination exhibits clear spatial patterns on extraction plates. In one case study, a negative control located in column L primarily shared strains with samples from columns K and L, indicating adjacent samples as contamination sources [9]. This spatial dependency provides a signature for identifying well-to-well leakage during data analysis. Researchers can overlay strain-sharing patterns on the extraction plate layout to detect suspicious sharing between physically adjacent wells [9].
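
The spatial signature can be checked with a short script that compares strain sharing between nearby and distant wells. This is a minimal sketch, not the method used in [8] or [9]: it assumes the common 96-well naming scheme (rows A-H, columns 1-12), so the parser must be adapted if a plate map uses other labels, and the helper names and example data are hypothetical.

```python
from itertools import combinations


def well_position(well):
    """Convert a well label such as 'B7' to zero-based (row, column)."""
    return ord(well[0].upper()) - ord("A"), int(well[1:]) - 1


def well_distance(a, b):
    """Chebyshev distance: horizontally, vertically, or diagonally adjacent = 1."""
    (r1, c1), (r2, c2) = well_position(a), well_position(b)
    return max(abs(r1 - r2), abs(c1 - c2))


def sharing_rate_by_proximity(shared_pairs, all_wells, near=1):
    """Return (near_rate, far_rate): fraction of well pairs sharing a strain."""
    shared = {frozenset(pair) for pair in shared_pairs}
    counts = {"near": [0, 0], "far": [0, 0]}  # [pairs sharing, pairs total]
    for a, b in combinations(sorted(all_wells), 2):
        key = "near" if well_distance(a, b) <= near else "far"
        counts[key][1] += 1
        if frozenset((a, b)) in shared:
            counts[key][0] += 1

    def rate(hits_total):
        hits, total = hits_total
        return hits / total if total else float("nan")

    return rate(counts["near"]), rate(counts["far"])


# Hypothetical plate subset: unrelated samples, one adjacent pair shares a strain.
wells = ["A1", "A2", "B1", "H12"]
near_rate, far_rate = sharing_rate_by_proximity([("A1", "A2")], wells)
print(f"near: {near_rate:.2f}, far: {far_rate:.2f}")
```

A markedly higher sharing rate among nearby unrelated samples than among distant ones is the pattern to investigate. A formal analysis would add a significance test and exclude sample pairs expected to share strains for biological reasons.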

Integrated Experimental Design

The Scientist's Toolkit

Implementing robust low-biomass microbiome research requires specific reagents and materials designed to minimize and detect false positives. The following table details essential components of a contamination-aware toolkit:

Table 4: Research Reagent Solutions for Low-Biomass Microbiome Studies

| Reagent/Material | Function | Considerations |
|---|---|---|
| DNA-Free Collection Supplies | Single-use swabs, collection vessels; pre-treated by autoclaving or UV-C light sterilization [1]. | Maintain sterility until use; note that sterile does not mean DNA-free, so additional DNA removal treatments may be required [1]. |
| DNA Degradation Solutions | Sodium hypochlorite (bleach), hydrogen peroxide, or commercial DNA removal solutions for equipment decontamination [1]. | Effectively removes contaminating DNA that may persist after standard sterilization [1]. |
| Personal Protective Equipment (PPE) | Gloves, goggles, coveralls/cleansuits, shoe covers, face masks [1]. | Reduces contamination from human operators; extent should match sample sensitivity [1]. |
| Negative Control Materials | Empty collection vessels, sample preservation solutions, extraction blanks, no-template controls [1] [2]. | Should represent all contamination sources; include multiple controls of each type [2]. |
| Positive Control Materials | ZymoBIOMICS Microbial Community Standard or similar defined communities [9]. | Validates extraction and sequencing efficiency; helps identify well-to-well leakage [9]. |

Strategic Workflow Integration

Successful low-biomass microbiome research requires integrating contamination control throughout the entire experimental workflow. The following diagram outlines key considerations at each stage:

Figure 2: Integrated workflow for contamination control in low-biomass microbiome studies.

Critical to this integrated approach is avoiding batch confounding, where experimental groups are processed in separate batches [2]. When batches are confounded with phenotypes, contaminants and processing biases can create artifactual signals [2]. Instead, researchers should actively design unconfounded batches with similar ratios of cases and controls processed together [2]. If complete deconfounding is impossible, the generalizability of results should be assessed explicitly across batches rather than analyzing all data together [2].

The study of low-biomass microbiomes presents extraordinary challenges that demand rigorous methodological approaches. External contamination, host DNA misclassification, and well-to-well leakage represent interconnected pitfalls that can generate false positives and undermine biological conclusions. Addressing these challenges requires comprehensive strategies spanning experimental design, laboratory procedures, and bioinformatic analysis. By implementing the contamination control measures outlined in this guide—including appropriate controls, careful sample handling, strain-resolved analyses, and integrated workflows—researchers can significantly improve the reliability of low-biomass microbiome data. As the field continues to evolve, further development of standardized practices and validation methods will be essential for advancing our understanding of microbial communities in these challenging environments.

Few problems in biological research are as pervasive and consequential as false positive results. These erroneous signals, in which a test incorrectly indicates the presence of a target organism, pathogen, or biological phenomenon, threaten research integrity across microbiology, clinical diagnostics, and forensic science. The stakes are particularly high in low-biomass environments, where the target microbial signal approaches the limits of detection and can be easily overwhelmed by contaminating noise [1]. This technical guide examines how false positives compromise biological conclusions and fuel scientific controversies, and it provides researchers with structured frameworks for mitigation.

The implications extend beyond academic discourse into tangible real-world consequences. In clinical diagnostics, false positives can lead to unnecessary treatments and psychological distress [10]. In food safety and forensic science, they can trigger costly recalls or contribute to wrongful convictions [11] [12]. A systematic analysis of wrongful convictions found that in 732 cases involving forensic evidence, 891 of 1,391 forensic examinations contained errors, with certain disciplines like seized drug analysis and bitemark comparison exhibiting error rates exceeding 70% [11]. Understanding and addressing false positives is therefore both a scientific imperative and an ethical obligation.

Quantitative Landscape: Assessing False Positive Rates Across Domains

The prevalence and impact of false positives vary considerably across biological disciplines and methodological approaches. The following table synthesizes key quantitative findings across multiple domains:

Table 1: False Positive Rates Across Biological Research and Diagnostic Domains

| Domain | False Positive Rate/Impact | Key Factors | Citation |
|---|---|---|---|
| COVID-19 Testing (Asymptomatic, low prevalence) | Positive Predictive Value (PPV) of 38-52% (only about 2 in 5 to 1 in 2 positive results are true positives; the remainder are false positives) | Low prevalence (0.5%), testing approach | [10] |
| Metagenomic Profiling | Average precision range of 0.11 to 0.60 across major tools | Analytical approach, database selection | [3] |
| Immunoassay-Based Testing | Analytical error rate of 0.4-4% | Endogenous antibody interference, cross-reactivity | [13] |
| Pediatric Urine Drug Screening | 5% of samples with targeted substances missed by standard immunoassay | Low drug concentrations, cutoff thresholds | [14] |
| Wrongful Convictions (Forensic Evidence) | 59% of hair comparison examinations contained errors; 77% of bitemark examinations contained errors | Invalid techniques, testimony errors, fraud | [11] |

These quantitative findings demonstrate that false positives represent a substantial challenge across multiple fields. The rates vary significantly based on pre-test probability, methodological approach, and analytical rigor. Particularly alarming are the findings in forensic science, where disciplines like bitemark analysis and seized drug testing have demonstrated exceptionally high error rates that have contributed to miscarriages of justice [11].

Bayesian Principles in Test Interpretation

The interpretation of biological tests must account for Bayesian principles, where the positive predictive value of a test is profoundly influenced by the pre-test probability of the condition being assessed [13]. Even tests with excellent accuracy characteristics can yield predominantly false positive results when applied to low-prevalence populations:

Table 2: Impact of Disease Prevalence on Test Interpretation (Using Immunoassay with 99.6% Accuracy as Example)

| Population | Prevalence | True Positives (per 1000) | False Positives (per 1000) | Positive Predictive Value |
|---|---|---|---|---|
| Young Adults (Subclinical Hypothyroidism) | 1% | 10 | 4 | ~71% |
| Older Women (Subclinical Hypothyroidism) | 17% | 170 | 4 | ~98% |

This mathematical relationship underscores why contextual interpretation of biological tests is essential. A test result should never be interpreted in isolation from the clinical or environmental context in which it was generated [13].
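
The relationship in Table 2 follows from Bayes' theorem and can be reproduced in a few lines. The sketch below assumes 100% sensitivity and reads "99.6% accuracy" as a 0.4% false positive rate (99.6% specificity). Both are simplifying assumptions made for illustration, and the function name is hypothetical.

```python
def positive_predictive_value(prevalence, sensitivity, specificity):
    """PPV = true positives / (true positives + false positives)."""
    true_pos = prevalence * sensitivity
    false_pos = (1.0 - prevalence) * (1.0 - specificity)
    if true_pos + false_pos == 0:
        raise ValueError("no positive results are possible with these inputs")
    return true_pos / (true_pos + false_pos)


# Prevalence values from Table 2; sensitivity and specificity are assumptions.
for label, prevalence in (("young adults", 0.01), ("older women", 0.17)):
    ppv = positive_predictive_value(prevalence, sensitivity=1.0, specificity=0.996)
    print(f"{label}: prevalence {prevalence:.0%} -> PPV {ppv:.1%}")
```

The results land within about a percentage point of the table's values. The small difference arises because the table applies a rounded 4 false positives per 1000 to both populations, whereas the formula scales false positives by the unaffected fraction of each population.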

Ground Zero: False Positives in Low-Biomass Microbiome Research

Low-biomass microbiome research presents perhaps the most challenging environment for accurate biological inference. When studying environments with minimal microbial biomass—such as certain human tissues, atmospheric samples, or cleaned surfaces—the inevitable introduction of external contamination can completely obscure the true biological signal [1].

Contamination in low-biomass studies can originate from multiple sources and be introduced at virtually every stage of the research workflow:

Diagram 1: Contamination Pathways in Low-Biomass Studies

The proportional nature of sequence-based datasets means that even minute amounts of contaminating DNA can dramatically influence results when the authentic biological signal is minimal. This has fueled ongoing scientific debates about the existence of microbiomes in environments such as the human placenta, fetal tissues, and blood [1].
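
A rough way to see this proportional effect is to hold the contaminant input fixed and shrink the sample input. The sketch below assumes reads are drawn in proportion to input DNA mass, which is a simplification, and all quantities are hypothetical.

```python
def contaminant_read_fraction(sample_dna_pg: float, contaminant_dna_pg: float) -> float:
    """Expected fraction of reads from contaminants.

    Assumes reads are sampled in proportion to input DNA mass (a simplification).
    """
    total = sample_dna_pg + contaminant_dna_pg
    if total <= 0:
        raise ValueError("total DNA input must be positive")
    return contaminant_dna_pg / total


if __name__ == "__main__":
    contaminant_pg = 1.0  # hypothetical fixed reagent background
    for sample_pg in (10_000.0, 100.0, 1.0, 0.1):
        fraction = contaminant_read_fraction(sample_pg, contaminant_pg)
        print(f"sample {sample_pg:>8} pg -> {fraction:.2%} contaminant reads")
```

The contaminant load never changes in this example; only the amount of authentic DNA does, yet the contaminant share of the library moves from negligible to dominant.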

Case Study: The Placental Microbiome Controversy

The question of whether a resident microbiome exists in the human placenta illustrates how false positives can fuel sustained scientific controversies. Early studies suggesting the presence of a placental microbiome were subsequently challenged when careful contamination controls revealed that the microbial signals detected were indistinguishable from those present in negative controls [1]. A fetal meconium study that implemented rigorous controls—including swabbing maternal skin and exposing swabs to operating theatre air—concluded that any microbial signals detected were more likely attributable to contamination than to an authentic fetal microbiome [1]. This controversy highlights the critical importance of appropriate controls and meticulous technique when working with low-biomass samples.

Methodological Solutions: Reducing False Positives in Practice

Laboratory and Sampling Controls

Implementing comprehensive contamination controls throughout the experimental workflow is essential for reliable low-biomass research. Key recommendations include [1]:

- Sample Collection: Use single-use DNA-free collection materials; decontaminate equipment with ethanol followed by DNA-degrading solutions; implement appropriate personal protective equipment (PPE) to minimize human-derived contamination.

- Laboratory Processing: Use UV-irradiated laminar flow hoods; dedicate separate rooms for pre- and post-PCR work; use DNA-free reagents.

- Control Samples: Include extraction blanks (reagents without sample), sampling controls (swabs exposed to sampling environment), and positive controls with known low-biomass communities.

Bioinformatics Approaches

Computational methods play a crucial role in identifying and removing false positives from biological datasets:

Table 3: Bioinformatics Strategies for False Positive Mitigation in Metagenomics

| Strategy | Mechanism | Implementation Example or Caveat |

|---|---|---|

| Threshold-Based Filtering | Setting minimum abundance thresholds for species calls | Often ineffective as false positives are not necessarily low-abundance [3] |

| Database Optimization | Using carefully curated reference databases to improve specificity | Kr2bac database showed near-perfect precision at confidence 0.25 vs. default databases [12] |

| Confirmation with Specific Markers | Verifying putative hits against unique genomic regions | Species-specific regions (SSRs) from Salmonella pan-genome eliminated false positives at confidence ≥0.25 [12] |

| Coverage-Based Filtering | Requiring uniform genomic coverage rather than fragmented hits | MAP2B uses even distribution of Type IIB restriction sites as indicator of true presence [3] |

The MAP2B (MetAgenomic Profiler based on type IIB restriction sites) approach represents a particularly innovative solution that leverages the even distribution of Type IIB restriction endonuclease digestion sites across microbial genomes as a reference instead of universal markers or whole genomes [3]. This method addresses a fundamental limitation of traditional profilers, which suffer from challenges like missing markers or multi-alignment of short reads.

The Researcher's Toolkit: Essential Reagents and Controls

Table 4: Essential Research Reagents and Controls for False Positive Mitigation

| Reagent/Control | Function | Application Notes |

|---|---|---|

| DNA Decontamination Solutions | Remove contaminating DNA from surfaces and equipment | Sodium hypochlorite (bleach), UV-C exposure, or commercial DNA removal solutions [1] |

| DNA-Free Reagents and Kits | Prevent introduction of contaminating DNA during extraction and amplification | Verify through 16S rRNA gene amplification and sequencing of extraction blanks [1] |

| Negative Control Swabs | Identify contamination introduced during sampling process | Expose to sampling environment without collecting actual sample [1] |

| Mock Communities | Assess accuracy and sensitivity of entire workflow | ATCC MSA-1002 or similar with known composition [3] |

| Species-Specific Markers | Confirm putative taxonomic assignments | Salmonella pan-genome SSRs of 1000 bp length [12] |

Experimental Protocols for False Positive Mitigation

Protocol: MAP2B Metagenomic Profiling for Enhanced Specificity

The MAP2B pipeline addresses false positive identification in whole metagenome sequencing data through the following methodology [3]:

Database Preparation:

- Perform in silico restriction digestion of microbial genomes from GTDB and Ensembl Fungi using CjePI (a Type IIB enzyme) as the representative enzyme.

- Identify species-specific 2b tags (iso-length DNA fragments produced by digestion) that are single-copy within a species' genome and unique to that species.

- For each species, establish a set of approximately 8,607 species-specific 2b tags as reference markers.

Sequence Processing:

- Process WMS reads through the MAP2B pipeline, which maps reads to the database of species-specific 2b tags.

- Calculate genome coverage \(C_i\) for species \(i\) as \(C_i = U_i / E_i\), where \(U_i\) is the number of observed distinct species-specific 2b tags and \(E_i\) is the total number of species-specific 2b tags in the database.

False Positive Recognition:

- Utilize a machine learning model trained on CAMI2 simulated datasets with known composition.

- Employ a feature set including genome coverage, sequence count, taxonomic count, and G-score to distinguish true positives from false positives.

- Classify species present based on comprehensive feature analysis rather than relative abundance alone.
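
The genome coverage feature from the sequence-processing step can be sketched as follows. This is not the MAP2B implementation; the function name and tag identifiers are hypothetical, and the sketch only illustrates the ratio of distinct observed tags to reference tags.

```python
from __future__ import annotations


def genome_coverage(observed_tags: set[str], reference_tags: set[str]) -> float:
    """Compute coverage for one species as U / E.

    U: distinct species-specific tags seen in the sample.
    E: all species-specific tags for that species in the database.
    """
    if not reference_tags:
        raise ValueError("reference tag set must not be empty")
    distinct_observed = observed_tags & reference_tags  # ignore tags of other species
    return len(distinct_observed) / len(reference_tags)


if __name__ == "__main__":
    # Hypothetical reference with 1,000 species-specific tags.
    reference = {f"tag{i}" for i in range(1000)}

    # Hits spread over many distinct tags: the pattern expected for a true positive.
    spread_hits = {f"tag{i}" for i in range(0, 1000, 2)}
    # Hits piled onto a few tags: more typical of a spurious call.
    piled_hits = {"tag1", "tag2", "tag3"}

    print(f"spread: coverage = {genome_coverage(spread_hits, reference):.3f}")
    print(f"piled:  coverage = {genome_coverage(piled_hits, reference):.3f}")
```

Because only distinct tags count, a species supported by many reads on a handful of tags still receives a low coverage value, which is why coverage carries information that read counts alone do not.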

Protocol: Confirmatory Analysis for Pathogen Detection

For targeted pathogen detection in metagenomic datasets, such as identifying Salmonella in food safety applications, the following confirmatory workflow significantly reduces false positives [12]:

Initial Classification:

- Perform taxonomic classification with Kraken2 using appropriate confidence thresholds (≥0.25 rather than default 0).

- Select database carefully (kr2bac database outperforms others in specificity).

SSR Confirmation:

- Extract all reads classified as belonging to the target genus (Salmonella).

- Compare these reads against a database of species-specific regions (SSRs)—403 genus-specific regions of 1000 bp length from the Salmonella pan-genome.

- Retain only those reads that match SSRs with high specificity.

Validation:

- Apply to samples with known composition (mock communities) to validate sensitivity and specificity.

- Test against closely related non-target organisms to confirm specificity.

This approach reduced false positives from 16,904 reads to zero when applied to unpublished genomes of Salmonella-related organisms [12].

The problem of false positives in biological research represents a multifaceted challenge that demands both technical solutions and cultural shifts within the scientific community. As research continues to push detection limits—whether in searching for rare microbes, detecting minute quantities of pathogens, or exploring novel biological environments—the critical importance of rigorous false positive mitigation only grows stronger.

Promising future directions include the development of machine learning approaches that integrate multiple features beyond simple abundance thresholds [3], the creation of curated reference databases that better represent microbial diversity [12], and the adoption of comprehensive quality control frameworks that extend from sample collection through computational analysis [1]. Additionally, the forensic science community's development of an error typology to categorize and address sources of inaccurate evidence provides a model that could be adapted to other biological domains [11].

Ultimately, addressing the challenge of false positives requires acknowledging that every methodological approach carries inherent limitations and that scientific rigor is not achieved through technical sophistication alone, but through the relentless pursuit of biological truth via appropriate controls, transparent reporting, and epistemological humility.

The Sensitivity-Specificity Trade-off in Pathogen Detection and Taxonomic Classification

In the field of microbial metagenomics, particularly for pathogen detection in low-biomass environments, researchers face a fundamental computational challenge: the tension between sensitivity (correctly identifying true positives) and specificity (correctly identifying true negatives). This trade-off has particularly acute consequences in diagnostic and food safety contexts, where false positives can trigger unnecessary product recalls and costly production shutdowns, while false negatives may allow contaminated products to reach consumers and cause preventable illness [15]. The inherent difficulties of analyzing complex shotgun sequencing datasets are compounded when targeting low-abundance pathogens within samples containing overwhelming quantities of host, food matrix, and non-target microbial DNA [15]. These challenges are especially pronounced in low-biomass microbiome research, where the target DNA signal may be minimal compared to contaminant noise, potentially leading to spurious results if not properly controlled [1].

The core of this challenge lies in the analytical process itself. Metagenomic read classification algorithms primarily identify species by comparing sequencing data to existing databases, but this approach struggles with genetically similar organisms and species with limited representation in public repositories [15]. The conserved genetic sequences shared between related species create a perfect environment for misclassification, where non-pathogenic organisms may be incorrectly flagged as pathogens of concern. Understanding and managing this sensitivity-specificity trade-off is therefore not merely an academic exercise but a practical necessity for generating reliable, actionable results in pathogen detection and taxonomic classification.

Core Concepts: Defining Performance Metrics

To quantitatively assess classification performance, researchers employ specific metrics derived from confusion matrices, which compare tool predictions against known truths [16].

- Sensitivity (Recall): Measures the proportion of true positives correctly identified, \( \frac{TP}{TP + FN} \). In diagnostic contexts, this represents the ability to detect a pathogen when it is truly present [16].

- Specificity: Measures the proportion of true negatives correctly identified, \( \frac{TN}{TN + FP} \). This reflects the tool's ability to avoid false alarms [16].

- Precision: Measures the reliability of positive predictions, \( \frac{TP}{TP + FP} \), indicating what percentage of taxa called "present" are truly present [16].

The inverse relationship between these metrics creates the central trade-off. Increasing confidence thresholds to reduce false positives typically decreases sensitivity, while lowering thresholds to catch more true positives typically increases false positives [15] [16]. The choice of emphasis depends on the application: disease screening may prioritize sensitivity to avoid missing infections, while confirmatory diagnostics may prioritize specificity to prevent false alarms [16].

In microbiome studies with inherent class imbalances (where true positives are rare relative to negatives), precision and recall often provide a more meaningful performance assessment than the sensitivity-specificity pair: specificity is dominated by the large number of true negatives, whereas precision and recall focus on the positive calls that are of primary interest [16].
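
The three metrics, and the effect of class imbalance on them, can be illustrated with a short sketch. The counts below are invented for illustration and do not come from any cited benchmark.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConfusionCounts:
    tp: int  # taxa called present that are truly present
    fp: int  # taxa called present that are absent
    tn: int  # taxa called absent that are absent
    fn: int  # taxa called absent that are truly present


def sensitivity(c: ConfusionCounts) -> float:
    return c.tp / (c.tp + c.fn)


def specificity(c: ConfusionCounts) -> float:
    return c.tn / (c.tn + c.fp)


def precision(c: ConfusionCounts) -> float:
    return c.tp / (c.tp + c.fp)


if __name__ == "__main__":
    # Hypothetical profile: 10 true taxa among 10,000 candidate taxa.
    counts = ConfusionCounts(tp=9, fp=20, tn=9970, fn=1)
    print(f"sensitivity = {sensitivity(counts):.3f}")
    print(f"specificity = {specificity(counts):.3f}")
    print(f"precision   = {precision(counts):.3f}")
```

In this made-up case specificity stays close to 1 even though most positive calls are wrong, which is the imbalance effect described above. The functions do not guard against empty denominators; a fuller version would.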

Quantitative Landscape of Method Performance

Performance Variations in Taxonomic Classification

Table 1: Comparative Performance of Taxonomic Classification Tools

| Tool | Methodology | Strengths | Weaknesses | Reported Precision Range |

|---|---|---|---|---|

| Kraken2 [15] | k-mer based classification | High sensitivity, fast processing | Prone to false positives at default settings | Varies significantly with parameters (0 to 0.9+) |

| MetaPhlAn4 [15] | Marker-gene based (clade-specific) | High specificity, reduced false positives | Unable to detect low-abundance pathogens | Higher specificity but lower sensitivity |

| MAP2B [3] | Type IIB restriction sites | Superior precision, eliminates false positives | Novel approach, less established | Near-perfect precision in benchmark tests |

| Bracken [3] | Bayesian re-estimation | Improved abundance estimation | Dependent on Kraken2 output | 0.11 to 0.60 (CAMI2 benchmark) |

| mOTUs2 [3] | Phylogenetic marker genes | Profiling of unknown species | Limited taxonomic resolution | 0.11 to 0.60 (CAMI2 benchmark) |

Performance Variations in Differential Abundance Analysis

Table 2: Differential Abundance Method Performance Across 38 Datasets

| Method Category | Representative Tools | Typical False Positive Rate | Key Characteristics | Consistency Across Studies |

|---|---|---|---|---|

| Distribution-Based | DESeq2, edgeR, metagenomeSeq | Variable (edgeR: high FDR) | Model counts with statistical distributions | Variable performance |

| Compositional (CoDa) | ALDEx2, ANCOM-II | Lower FDR | Address compositional nature of data | Most consistent results |

| Non-parametric | Wilcoxon (on CLR) | High false positives | No distributional assumptions | Identifies largest number of ASVs |

| Hybrid Approaches | LEfSe, limma voom | Moderate to high | Combines statistical tests with LDA | Highly variable between datasets |

The quantitative evidence reveals substantial variability in tool performance. In taxonomic classification, Kraken2 with default parameters demonstrates high sensitivity but concerning false positive rates, while MetaPhlAn4 offers higher specificity but fails to detect Salmonella at low abundance levels [15]. The recently developed MAP2B profiler demonstrates particularly strong performance in false positive elimination, leveraging species-specific Type IIB restriction endonuclease digestion sites that are evenly distributed across microbial genomes [3].

In differential abundance testing, a comprehensive evaluation across 38 datasets revealed that different methods identify drastically different numbers and sets of significant features [17]. The percentage of significant amplicon sequence variants (ASVs) identified varied widely between tools, with means ranging from 0.8% to 40.5% across methods [17]. This variability underscores that biological interpretations can change substantially depending on the analytical method selected.

Experimental Approaches for False Positive Mitigation

Confidence Threshold Optimization in Kraken2

Kraken2 Confidence Threshold Optimization

Experimental evidence demonstrates that carefully adjusting Kraken2's confidence parameter significantly impacts the sensitivity-specificity balance. At the default setting of 0, the classifier exhibits maximum sensitivity but generates excessive false positives, with many Salmonella-derived reads misclassified as closely related genera like Escherichia, Shigella, and Citrobacter [15]. Systematically increasing the confidence threshold to 0.25 or higher dramatically reduces false positives while maintaining sufficient sensitivity for detection [15]. The optimal threshold depends on the specific reference database used, with some databases achieving near-perfect precision and high recall at confidence 0.25 [15].

Protocol: Confidence Parameter Optimization

- Input Preparation: Generate or obtain sequencing data with known composition (ground truth)

- Database Selection: Choose appropriate reference databases (e.g., Standard, MiniKraken, kr2bac)

- Parameter Sweep: Run Kraken2 with confidence values from 0 to 1 in increments of 0.1-0.25

- Performance Assessment: Calculate precision, recall, and F1-score at each threshold

- Threshold Selection: Identify confidence value that achieves acceptable balance for specific application
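
The sweep and selection steps above could be scripted roughly as follows. The sketch only builds Kraken2 command lines (using its `--db`, `--confidence`, `--output`, and `--report` options) and does not run them; the file names, helper functions, and the precision and recall values are hypothetical placeholders rather than reported results.

```python
from typing import Dict, List, Sequence, Tuple


def build_kraken2_commands(db: str, reads: str,
                           thresholds: Sequence[float]) -> List[List[str]]:
    """Build one Kraken2 command per confidence threshold (not executed here)."""
    commands = []
    for threshold in thresholds:
        tag = f"conf{threshold:.2f}"
        commands.append([
            "kraken2", "--db", db,
            "--confidence", f"{threshold:.2f}",
            "--output", f"{tag}.kraken",
            "--report", f"{tag}.report",
            reads,
        ])
    return commands


def f1_score(precision: float, recall: float) -> float:
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def select_threshold(results: Dict[float, Tuple[float, float]],
                     min_precision: float = 0.0) -> float:
    """Pick the threshold with the best F1 among those meeting min_precision."""
    eligible = {t: pr for t, pr in results.items() if pr[0] >= min_precision}
    if not eligible:
        raise ValueError("no threshold meets the precision requirement")
    return max(eligible, key=lambda t: f1_score(*eligible[t]))


if __name__ == "__main__":
    for cmd in build_kraken2_commands("my_db", "reads.fastq", [0.0, 0.25, 0.5]):
        print(" ".join(cmd))
    # Hypothetical (precision, recall) pairs measured against ground truth.
    measured = {0.0: (0.40, 1.00), 0.25: (0.99, 0.95), 0.5: (1.00, 0.80)}
    print("selected threshold:", select_threshold(measured))
```

Using F1 as the selection criterion is one possible choice; an application that cannot tolerate false positives might instead set `min_precision` high and accept the resulting loss of recall.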

Species-Specific Region (SSR) Confirmation

SSR Confirmation Workflow

Research demonstrates that adding a confirmation step using species-specific regions (SSRs) effectively eliminates false positives while retaining true positives. This approach involves extracting reads tentatively classified as Salmonella by Kraken2 and realigning them against a curated database of 403 genus-specific regions from the Salmonella pan-genome [15]. These SSRs are 1000 bp regions shared by Salmonella genomes but absent from other organisms [15]. This confirmation step substantially reduced false positives across all database types tested, with complete elimination of false positives at confidence thresholds ≥0.25 [15]. The method successfully filtered out reads from novel, unpublished organisms related to Salmonella that would otherwise trigger false positive calls [15].

Protocol: SSR-Based Confirmation

- SSR Database Curation: Identify 1000 bp genomic regions shared by target pathogen genomes but absent from non-target organisms

- Initial Classification: Process reads through standard Kraken2 workflow

- Read Extraction: Extract all reads classified as belonging to target genus/species

- SSR Alignment: Align putative pathogen reads against SSR database

- Confirmation: Retain only reads that successfully align to SSRs

- Validation: Verify pipeline performance with simulated datasets of known composition
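
The read extraction and confirmation steps can be sketched as set operations on read identifiers. The example assumes Kraken2's default tab-separated per-read output (classification flag, read ID, taxonomy ID, length, k-mer mapping) and represents the SSR alignment result as a plain set of read IDs produced by an aligner of the reader's choice; the function names and read IDs are hypothetical.

```python
from typing import Iterable, Set


def candidate_reads(kraken_lines: Iterable[str], target_taxids: Set[str]) -> Set[str]:
    """Collect IDs of classified reads assigned to any of the target taxids."""
    selected = set()
    for line in kraken_lines:
        fields = line.rstrip("\n").split("\t")
        if len(fields) < 3 or fields[0] != "C":
            continue  # skip malformed lines and unclassified reads
        if fields[2] in target_taxids:
            selected.add(fields[1])
    return selected


def confirm_reads(candidates: Set[str], ssr_hit_ids: Set[str]) -> Set[str]:
    """Keep only candidate reads that also aligned to an SSR."""
    return candidates & ssr_hit_ids


if __name__ == "__main__":
    kraken_output = [
        "C\tread1\t590\t150\t590:116",
        "U\tread2\t0\t150\t0:116",
        "C\tread3\t562\t150\t562:116",
        "C\tread4\t28901\t150\t28901:116",
    ]
    # Taxids for the target genus and its descendants must be supplied by the user.
    targets = {"590", "28901"}
    candidates = candidate_reads(kraken_output, targets)
    ssr_hits = {"read1"}  # hypothetical IDs reported by the SSR alignment step
    print(sorted(confirm_reads(candidates, ssr_hits)))
```

Keeping the two steps separate makes it easy to report how many reads the confirmation step removed, which is a useful diagnostic when validating on datasets of known composition.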

Type IIB Restriction Site Profiling (MAP2B)

The MAP2B approach represents an innovative methodology that leverages species-specific Type IIB restriction endonuclease digestion sites as taxonomic markers instead of universal single-copy genes or whole microbial genomes [3]. This method identifies approximately 8,607 species-specific "2b tags" for each species—iso-length DNA fragments produced by Type IIB enzyme digestion—which are abundantly and randomly distributed across microbial genomes [3]. By using genome coverage uniformity as a key feature for distinguishing true positives, MAP2B achieves superior precision compared to traditional profilers, as true positives should demonstrate relatively uniform distribution across their genomes rather than concentration in limited genomic regions [3].

Protocol: MAP2B Implementation

- In Silico Digestion: Perform computational restriction digestion of all microbial genomes in reference database using Type IIB enzyme (e.g., CjePI)

- Tag Identification: Extract species-specific 2b tags that are single-copy within each genome and unique to that species

- Database Construction: Compile catalog of species-specific tags from integrated genome databases (GTDB and Ensembl Fungi)

- Sample Processing: Digest sequencing reads in silico and map to tag database

- Coverage Calculation: Compute genome coverage as the ratio of observed distinct species-specific tags to total available tags for each species

- False Positive Recognition: Apply machine learning model trained on features including genome coverage, sequence count, taxonomic count, and G-score

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Materials for False Positive Control

| Category | Item | Specification/Function | Application Context |

|---|---|---|---|

| Computational Tools | Kraken2 [15] | k-mer based taxonomic classification | Initial pathogen detection |

| Computational Tools | MetaPhlAn4 [15] | Marker-gene based profiling | High-specificity detection |

| Computational Tools | MAP2B [3] | Type IIB restriction site profiling | False-positive elimination |

| Computational Tools | specificity R package [18] | Analysis of feature specificity | Environmental variable association |

| Reference Databases | Species-Specific Regions (SSRs) [15] | Pan-genome derived unique sequences | False positive confirmation |

| Reference Databases | Type IIB Restriction Sites [3] | Species-specific restriction fragments | MAP2B profiling |

| Reference Databases | Genome Taxonomy Database [3] | Standardized microbial taxonomy | Taxonomic classification |

| Laboratory Controls | Negative Controls [1] | Sterile water processed alongside samples | Contamination identification |

| Laboratory Controls | DNA Decontamination Solutions [1] | Sodium hypochlorite, UV-C light sterilization | Equipment and surface treatment |

| Laboratory Controls | Personal Protective Equipment [1] | Cleanroom suits, gloves, masks | Contamination prevention during sampling |

| Analytical Metrics | Precision-Recall Curves [16] | Visualization of classification performance | Tool optimization and selection |

| Analytical Metrics | Rao's Quadratic Entropy [18] | Quantification of feature specificity | Environmental specificity analysis |

Effectively managing the sensitivity-specificity trade-off in pathogen detection requires a multifaceted approach that spans experimental design, computational analysis, and interpretation. Based on current evidence, the following best practices emerge:

- Implement Multi-Layered Contamination Control: From sample collection through DNA sequencing, employ rigorous contamination control measures including appropriate personal protective equipment, reagent decontamination, and comprehensive negative controls [1]. In low-biomass studies, these controls are particularly critical as contaminants can constitute a substantial proportion of observed sequences.

- Adopt Computational Confirmation Steps: Relying on a single classification tool with default parameters frequently produces misleading results. Implement orthogonal confirmation methods such as SSR verification or utilize tools like MAP2B that incorporate multiple features to distinguish true positives from false signals [15] [3].

- Systematically Optimize Parameters: Default software settings are rarely optimal for specific applications. Conduct parameter sweeps using datasets of known composition to establish ideal confidence thresholds and filtering criteria for each research context [15].

- Utilize Consensus Approaches: Given the substantial variability between differential abundance methods, employ multiple analytical approaches and focus on the intersection of their results rather than relying on a single method [17]. Tools such as ALDEx2 and ANCOM-II have demonstrated more consistent performance across studies [17].

- Validate with Ground Truth Data: Before applying analytical pipelines to unknown samples, verify their performance using simulated datasets or mock communities where the true composition is known [15]. This validation provides crucial information about expected false positive and false negative rates.

- Prioritize Based on Application Context: The optimal sensitivity-specificity balance depends on the research or diagnostic context. Food safety screening might emphasize specificity to avoid unnecessary product recalls, while clinical diagnostics might prioritize sensitivity to avoid missing infections [15] [16].
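
The consensus practice listed above (focusing on features that several differential abundance methods agree on) can be sketched with simple set counting. The method names are used only as dictionary keys, and the feature sets are invented; the sketch does not run any of those tools.

```python
from collections import Counter
from typing import Dict, Optional, Set


def consensus_features(results: Dict[str, Set[str]],
                       min_methods: Optional[int] = None) -> Set[str]:
    """Return features called significant by at least min_methods methods.

    With min_methods=None, a feature must be reported by every method,
    which corresponds to the strict intersection.
    """
    if not results:
        return set()
    required = len(results) if min_methods is None else min_methods
    votes = Counter(feature for called in results.values() for feature in called)
    return {feature for feature, n in votes.items() if n >= required}


if __name__ == "__main__":
    # Hypothetical significant-feature sets from three methods.
    calls = {
        "ALDEx2": {"ASV_1", "ASV_2"},
        "ANCOM-II": {"ASV_2", "ASV_3"},
        "DESeq2": {"ASV_1", "ASV_2", "ASV_3", "ASV_4"},
    }
    print("all methods agree:", sorted(consensus_features(calls)))
    print("at least two agree:", sorted(consensus_features(calls, min_methods=2)))
```

The strict intersection is conservative; relaxing `min_methods` trades some of that conservatism for sensitivity, and the appropriate setting depends on the application.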

The rapid evolution of sequencing technologies and analytical methods continues to provide new approaches for addressing the fundamental challenge of accurate pathogen detection. By understanding the sources of error, implementing robust controls, and applying computational methods with appropriate validation, researchers can effectively navigate the sensitivity-specificity trade-off to generate reliable, actionable results in microbiome research and pathogen detection.

Building a Robust Defense: Methodological Strategies for Accurate Low-Biomass Analysis

In low-biomass microbiome research, where the microbial signal approaches the limits of detection, the proportional impact of technical noise is greatly magnified. Batch effects (systematic technical variations introduced during sample processing) are a major source of false positives and spurious findings that can completely obscure true biological signals [19] [1]. These effects arise from differential processing of specimens across times, locations, sequencing runs, or personnel, creating structured noise that can be mistakenly attributed to biological phenomena [19]. In low-biomass environments such as certain human tissues, the atmosphere, or hyper-arid soils, the contaminant "noise" can readily overwhelm the true microbial "signal," leading to inaccurate claims about microbial presence and function [1]. Prominent debates over the 'placental microbiome' and other low-biomass environments, in which contamination concerns have challenged initial findings, highlight the critical need for rigorous experimental design to prevent batch confounding [1].

The Nature and Impact of Batch Effects

Batch effects constitute a pervasive challenge in high-throughput microbiomics, affecting both marker-gene and metagenomic sequencing approaches. These technical artifacts manifest as systematic differences in microbial read counts, community composition estimates, and diversity metrics that are entirely unrelated to the biological questions under investigation [19] [20]. In case-control studies particularly, when batch effects become confounded with the primary variable of interest—for instance, if all cases are processed in one batch and all controls in another—the risk of false positive associations increases dramatically [21].
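
A quick check for this kind of confounding is to cross-tabulate batch against study group before any sequencing is done. The sketch below uses hypothetical helper names and flags batches that lack at least one group; it is a screening aid, not a formal test.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Sequence


def batch_group_table(batches: Sequence[str], groups: Sequence[str]) -> Dict[str, Counter]:
    """Count samples per group within each batch."""
    if len(batches) != len(groups):
        raise ValueError("batches and groups must have the same length")
    table = defaultdict(Counter)
    for batch, group in zip(batches, groups):
        table[batch][group] += 1
    return dict(table)


def confounded_batches(batches: Sequence[str], groups: Sequence[str]) -> List[str]:
    """Return batches that are missing at least one study group."""
    all_groups = set(groups)
    table = batch_group_table(batches, groups)
    return sorted(b for b, counts in table.items() if set(counts) != all_groups)


if __name__ == "__main__":
    batches = ["run1", "run1", "run2", "run2"]
    fully_confounded = ["case", "case", "control", "control"]
    balanced = ["case", "control", "case", "control"]
    print("confounded design:", confounded_batches(batches, fully_confounded))
    print("balanced design:  ", confounded_batches(batches, balanced))
```

When every batch is flagged, as in the first design, batch and biology cannot be separated by any downstream correction, so the problem has to be fixed at the design stage.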

The unique characteristics of microbiome data exacerbate these challenges. Microbial read counts typically exhibit zero-inflation, over-dispersion, and complex distributions that violate the assumptions of traditional batch-correction methods developed for other genomic data types [19]. Furthermore, the compositional nature of microbiome sequencing data (where measurements represent proportions rather than absolute abundances) means that batch effects can distort the entire ecological picture [20].

Contamination in low-biomass microbiome studies can originate from multiple sources throughout the experimental workflow, with each introduction point potentially contributing to batch effects and false discoveries [1].

Table: Major Contamination Sources in Low-Biomass Microbiome Studies

| Contamination Source | Examples | Impact on Data |

|---|---|---|

| Human Operators | Skin cells, hair, aerosols from breathing/talking | Introduction of human-associated microbes (e.g., Staphylococcus, Corynebacterium) |

| Sampling Equipment | Non-sterile swabs, collection vessels, filters | Transfer of environmental contaminants or cross-sample contamination |

| Laboratory Reagents | DNA extraction kits, PCR reagents, water | Kitome contaminants (e.g., Pseudomonas, Burkholderia) that appear across samples |

| Laboratory Environment | Bench surfaces, airflow, equipment | Consistent background community across samples processed in same location/time |

| Cross-Contamination | Well-to-well leakage during PCR or library preparation | Spreading high-abundance samples to adjacent low-biomass samples |

The impact of these contamination sources is particularly severe in low-biomass studies because the introduced contaminant DNA may constitute a substantial proportion—or even the majority—of the final sequencing library [1]. This problem is compounded by the fact that sterility does not guarantee the absence of DNA, as cell-free DNA can persist on surfaces even after autoclaving or ethanol treatment [1].

Experimental Design Strategies to Prevent Batch Confounding

Randomization and Blocking Designs

Proper experimental design represents the first and most crucial line of defense against batch confounding. Strategic randomization of samples across processing batches ensures that technical variability does not become systematically correlated with biological conditions of interest.

- Complete Randomization: When batch capacity permits, randomly assign samples from all experimental groups to each processing batch, ensuring that biological conditions are equally represented across technical batches.

- Balanced Block Designs: When complete randomization is impractical, implement balanced blocking where each batch contains proportional representation of all experimental conditions, including case-control status, treatment groups, and time points.

- Reference Samples: Include identical reference samples or microbial community standards in each batch to facilitate technical variability assessment and downstream batch-effect correction [20].
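
One way to implement a balanced block design is to shuffle samples within each experimental group and deal them out to batches in turn. The following is a minimal sketch under that assumption; the function name, sample IDs, and seed are hypothetical, and reference samples would be added to every batch separately.

```python
import random
from collections import Counter, defaultdict
from typing import Dict


def assign_balanced_batches(sample_groups: Dict[str, str],
                            n_batches: int, seed: int = 0) -> Dict[str, int]:
    """Randomly assign samples to batches, spreading each group evenly."""
    if n_batches < 1:
        raise ValueError("n_batches must be at least 1")
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample, group in sorted(sample_groups.items()):
        by_group[group].append(sample)

    assignment = {}
    for group in sorted(by_group):
        members = by_group[group]
        rng.shuffle(members)               # random order within the group
        offset = rng.randrange(n_batches)  # random starting batch for this group
        for i, sample in enumerate(members):
            assignment[sample] = (i + offset) % n_batches
    return assignment


if __name__ == "__main__":
    samples = {f"case{i}": "case" for i in range(12)}
    samples.update({f"ctrl{i}": "control" for i in range(12)})
    plan = assign_balanced_batches(samples, n_batches=4, seed=42)
    layout = Counter((plan[s], g) for s, g in samples.items())
    for (batch, group), n in sorted(layout.items()):
        print(f"batch {batch}: {n} {group}")
```

Fixing the seed makes the allocation reproducible, so the plan can be recorded alongside the other sample metadata.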

Process Controls and Negative Controls

The implementation of comprehensive process controls enables explicit detection and quantification of contamination introduced throughout the experimental workflow.

- Extraction Blanks: Include samples containing only the DNA extraction reagents processed alongside experimental samples to identify contaminants derived from extraction kits and reagents [1].

- PCR/Library Preparation Blanks: Incorporate water blanks in amplification and library preparation steps to detect contamination introduced during these stages.

- Sample-Tracking Controls: Use synthetic DNA spikes or unique molecular identifiers to track cross-contamination between samples and validate sample identity throughout processing.

- Positive Controls: Employ known microbial communities (mock communities) with defined composition and abundance to assess technical variability in quantification and detection limits [20].

Table: Essential Process Controls for Low-Biomass Microbiome Studies

| Control Type | Composition | Purpose | Interpretation |

|---|---|---|---|

| Extraction Blank | DNA-free water or buffer processed through extraction | Identify contaminants from DNA extraction kits | Any sequences detected represent kit-derived contaminants |

| Library Preparation Blank | DNA-free water during library preparation | Detect contamination from amplification reagents | Sequences indicate amplification-stage contaminants |

| Mock Community | Defined mix of microbial strains at known abundances | Quantify technical bias in DNA extraction and sequencing | Discrepancies from expected composition reveal technical biases |

| Field Blank | Sterile collection device exposed to sampling environment | Identify environmental contamination during sampling | Sequences represent field-introduced contaminants |
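
Once such controls have been sequenced, their contents can be compared with the real samples. The sketch below is a deliberately simple prevalence heuristic, not a published decontamination algorithm: it flags taxa that appear in at least a chosen share of blanks and are at least as prevalent in blanks as in samples. The threshold, function names, and counts are assumptions for illustration.

```python
from typing import Dict, List


def prevalence(taxon: str, tables: List[Dict[str, int]]) -> float:
    """Fraction of count tables in which the taxon has a non-zero count."""
    if not tables:
        return 0.0
    return sum(1 for t in tables if t.get(taxon, 0) > 0) / len(tables)


def flag_contaminants(samples: List[Dict[str, int]],
                      blanks: List[Dict[str, int]],
                      min_blank_prevalence: float = 0.5) -> List[str]:
    """Flag taxa that are common in blanks and no more prevalent in samples."""
    taxa = set().union(*samples, *blanks)
    flagged = []
    for taxon in taxa:
        in_blanks = prevalence(taxon, blanks)
        in_samples = prevalence(taxon, samples)
        if in_blanks >= min_blank_prevalence and in_blanks >= in_samples:
            flagged.append(taxon)
    return sorted(flagged)


if __name__ == "__main__":
    # Hypothetical read counts per taxon.
    samples = [
        {"Lactobacillus": 50, "Pseudomonas": 5},
        {"Lactobacillus": 40},
        {"Lactobacillus": 30, "Pseudomonas": 3},
    ]
    blanks = [{"Pseudomonas": 8}, {"Pseudomonas": 6, "Burkholderia": 2}]
    print(flag_contaminants(samples, blanks))
```

A heuristic like this is only as good as the number of blanks behind it; with one or two controls per batch, flagged taxa are better treated as candidates for closer inspection than as confirmed contaminants.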

Computational Approaches for Batch Effect Correction

Batch Effect Detection and Diagnostics

Before applying any batch correction method, researchers must first diagnose the presence and magnitude of batch effects using appropriate statistical and visualization approaches.

- Principal Coordinate Analysis (PCoA): Visualize sample separation in ordination space colored by batch membership versus experimental groups. Clear batch clustering indicates strong batch effects.

- Permutational Multivariate Analysis of Variance (PERMANOVA): Statistically test the proportion of variance explained by batch versus biological factors using distance matrices [21].

- Differential Abundance Analysis: Screen for taxa with statistically significant abundance differences between batches that might represent technical artifacts rather than biological signals.
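
For readers who want to see what the PERMANOVA diagnostic computes, the following is a small pure-Python sketch of a one-factor pseudo-F statistic with a label-permutation p-value. It is meant for intuition on toy data; the distance matrix and labels are invented, and real analyses would normally rely on an established statistics package.

```python
import random
from typing import List, Sequence, Tuple


def pseudo_f(dist: Sequence[Sequence[float]], labels: Sequence[str]) -> float:
    """One-factor PERMANOVA pseudo-F from a square distance matrix."""
    n = len(labels)
    groups = sorted(set(labels))
    a = len(groups)
    if a < 2 or n <= a:
        raise ValueError("need at least two groups and more samples than groups")
    ss_total = sum(dist[i][j] ** 2 for i in range(n) for j in range(i + 1, n)) / n
    ss_within = 0.0
    for g in groups:
        idx = [i for i, lab in enumerate(labels) if lab == g]
        ss_within += sum(dist[i][j] ** 2
                         for k, i in enumerate(idx) for j in idx[k + 1:]) / len(idx)
    ss_between = ss_total - ss_within
    return (ss_between / (a - 1)) / (ss_within / (n - a))


def permanova(dist: Sequence[Sequence[float]], labels: Sequence[str],
              n_perm: int = 999, seed: int = 0) -> Tuple[float, float]:
    """Return (pseudo-F, permutation p-value) for the given grouping."""
    rng = random.Random(seed)
    observed = pseudo_f(dist, labels)
    shuffled: List[str] = list(labels)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        if pseudo_f(dist, shuffled) >= observed:
            hits += 1
    return observed, (hits + 1) / (n_perm + 1)


if __name__ == "__main__":
    # Toy one-dimensional "community" values for two processing batches.
    values = [0.0, 0.1, 0.2, 0.15, 0.05, 0.12, 1.0, 1.1, 0.9, 1.05, 0.95, 1.2]
    batch = ["A"] * 6 + ["B"] * 6
    distances = [[abs(x - y) for y in values] for x in values]
    f_stat, p_value = permanova(distances, batch, n_perm=199)
    print(f"pseudo-F = {f_stat:.1f}, p = {p_value:.3f}")
```

Running the same test with the biological grouping in place of batch labels gives a rough sense of how the two sources of variation compare.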

Advanced Batch Correction Methods

Once detected, batch effects can be addressed using specialized computational methods designed for microbiome data's unique characteristics.

Conditional Quantile Regression (ConQuR) is a comprehensive batch effect removal method specifically designed for zero-inflated, over-dispersed microbiome count data [19]. Unlike methods that assume normal distributions, ConQuR uses a two-part quantile regression model that separately handles microbial presence-absence through logistic regression and abundance distribution through quantile regression, providing robust correction of mean, variance, and higher-order batch effects [19].

Percentile Normalization offers a model-free approach particularly suited for case-control studies [21]. This method converts case abundance distributions to percentiles of equivalent control distributions within each study or batch, effectively using the control samples as a stable reference frame that inherently accounts for batch-specific technical variability.
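
The core idea of percentile normalization can be sketched for a single taxon as follows. This simplified version omits practical details that a full implementation would need, such as the handling of zeros, and the abundance values are hypothetical.

```python
from typing import List, Sequence


def percentile_of_score(reference: Sequence[float], value: float) -> float:
    """Percentile rank (0-100) of value within reference.

    Averages the strict (<) and weak (<=) definitions so ties are split evenly.
    """
    if not reference:
        raise ValueError("reference distribution must not be empty")
    below = sum(1 for r in reference if r < value)
    at_or_below = sum(1 for r in reference if r <= value)
    return 100.0 * (below + at_or_below) / (2 * len(reference))


def percentile_normalize(case_values: Sequence[float],
                         control_values: Sequence[float]) -> List[float]:
    """Express each case value as a percentile of the same batch's controls."""
    return [percentile_of_score(control_values, v) for v in case_values]


if __name__ == "__main__":
    # Same biology measured in two batches; batch 2 has a 10x technical scale shift.
    batch1 = percentile_normalize([3.5, 5.0], [1.0, 2.0, 3.0, 4.0])
    batch2 = percentile_normalize([35.0, 50.0], [10.0, 20.0, 30.0, 40.0])
    print("batch 1:", batch1)
    print("batch 2:", batch2)
```

Because each batch is referenced to its own controls, a uniform technical shift affects cases and controls alike and cancels out in the percentile scale.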

Empirical Bayes batch correction (ComBat) and related linear methods can be applied, with caution, to appropriately transformed microbiome data, though their parametric assumptions may not hold for zero-inflated microbial abundance distributions [21].

Implementation Protocols for Core Methodologies

Comprehensive Sample Collection and Handling Protocol

Proper sample collection and handling procedures are fundamental to minimizing batch effects and contamination from the earliest experimental stages.

Materials and Reagents:

- DNA-free collection swabs and containers

- Personal protective equipment (PPE): gloves, face masks, clean suits

- Nucleic acid degrading solution (e.g., bleach, DNA removal solutions)

- Sample preservation solution (pre-tested for DNA contamination)

- Environmental control swabs for sampling area monitoring

Procedure:

- Pre-sampling Decontamination: Treat all sampling equipment with 80% ethanol followed by nucleic acid degrading solution to remove viable cells and residual DNA [1].

- PPE Utilization: Wear appropriate PPE including gloves, goggles, coveralls, and shoe covers to minimize human-derived contamination [1].

- Control Collection: Collect multiple negative controls, including:

  - Empty collection vessels

  - Swabs exposed to sampling environment air

  - Aliquots of preservation solution

  - Swabs of PPE and sampling surfaces

- Sample Preservation: Immediately preserve samples using DNA-stabilizing reagents and store them at appropriate temperatures to prevent microbial growth and shifts in community composition.

- Documentation: Meticulously record sampling time, conditions, personnel, and any deviations from protocol.

Laboratory Processing and DNA Extraction Protocol

Standardized laboratory procedures minimize technical variability during sample processing.

Materials and Reagents:

- DNA extraction kits (same lot number for entire study)

- Molecular biology grade water (DNA-free)

- Mock community standards

- Extraction blanks

- Unique molecular identifiers for cross-contamination tracking

Procedure:

- Batch Design: Process samples in randomized or balanced block designs across extraction batches.

- Control Inclusion: Include extraction blanks and mock community standards in each processing batch.

- Technical Replicates: Process a subset of samples in duplicate across different batches to assess technical variability.

- Cross-Contamination Prevention: Include blank wells between samples in plate-based protocols, use filter tips, and maintain separate pre- and post-amplification areas.

- Documentation: Record batch identifiers, reagent lot numbers, personnel, and processing dates for all samples.
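The batch design and control inclusion steps above can be sketched as a small stratified randomization. This is a minimal illustration under stated assumptions: the function name `assign_batches`, the sample identifiers, the group labels, and the control names are all hypothetical, and a real study may need to balance additional covariates such as collection site or date.

```python
import random
from collections import Counter, defaultdict

def assign_batches(samples, n_batches, seed=42):
    """Shuffle samples within each group, then deal them round-robin so every
    extraction batch receives a near-equal share of each group.
    samples: list of (sample_id, group) tuples."""
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample_id, group in samples:
        by_group[group].append(sample_id)
    assignment = {}
    offset = 0  # carry the position over so overall batch sizes stay balanced
    for group in sorted(by_group):
        ids = by_group[group]
        rng.shuffle(ids)
        for i, sample_id in enumerate(ids):
            assignment[sample_id] = (i + offset) % n_batches
        offset += len(ids)
    return assignment

# Hypothetical study: 12 cases and 12 controls across 3 extraction batches.
samples = [(f"S{i:02d}", "case" if i < 12 else "control") for i in range(24)]
assignment = assign_batches(samples, n_batches=3)

# Add the per-batch process controls named in the procedure.
layout = defaultdict(list)
for sample_id, batch in assignment.items():
    layout[batch].append(sample_id)
for batch in layout:
    layout[batch] += [f"extraction_blank_b{batch}", f"mock_community_b{batch}"]

composition = Counter((assignment[sid], group) for sid, group in samples)
for batch in sorted(layout):
    print(batch, composition[(batch, "case")], composition[(batch, "control")])
```

Fixing the random seed and storing the resulting layout alongside the batch records makes the randomization itself reproducible and auditable.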

Data Generation and Sequencing Protocol

Standardized sequencing procedures ensure consistent data quality across batches.

Materials and Reagents:

- Library preparation kits (same lot number)

- Sequencing standards and controls

- Balanced barcoding strategies

- Positive control libraries

Procedure:

- Library Preparation: Use balanced barcoding strategies to distribute experimental conditions across sequencing lanes and positions.

- Control Libraries: Include control libraries in each sequencing run to monitor run-to-run variability.

- Sequencing Depth: Maintain consistent sequencing depth across samples by quantifying libraries and normalizing their concentrations before pooling.

- Batch Recording: Document sequencing batch, lane, flow cell, and instrument information for all samples.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table: Essential Research Reagents and Materials for Contamination Control

| Item | Function | Application Notes |
|---|---|---|
| DNA Degrading Solution (e.g., bleach, commercial DNA removal solutions) | Eliminates contaminating DNA from surfaces and equipment | Critical for decontaminating sampling equipment and work surfaces; more effective than autoclaving alone for DNA removal [1] |
| DNA-Free Collection Swabs | Sample collection without introducing contaminating DNA | Essential for low-biomass sampling; must be certified DNA-free by manufacturer |
| Personal Protective Equipment (PPE) | Minimizes human-derived contamination | Includes gloves, face masks, clean suits; should be donned immediately before sampling [1] |
| DNA Extraction Kit Lot | Consistent reagent composition across batches | Using the same lot number throughout study minimizes reagent-derived batch effects |
| Mock Microbial Communities | Quantifying technical variability and detection limits | Defined compositions of known microbial strains at predetermined ratios; processed alongside experimental samples [20] |
| Molecular Biology Grade Water | DNA-free water for blank controls and reagent preparation | Certified nuclease-free and DNA-free; used for extraction and PCR blanks |
| Unique Molecular Identifiers (UMIs) | Tracking cross-contamination between samples | DNA barcodes that uniquely label individual molecules from each sample |
| DNA Stabilization Reagents | Preserving sample integrity during storage and transport | Prevents microbial community changes between collection and processing |

Validation and Quality Assessment Framework

Quality Metrics and Acceptance Criteria

Rigorous quality assessment ensures that experimental processes meet required standards before proceeding to data analysis.

- Negative Control Thresholds: Establish maximum allowable contamination levels in negative controls (e.g., maximum read counts per control, exclusion of specific contaminant taxa).

- Mock Community Recovery: Define acceptable ranges for recovery of expected composition in mock community standards.

- Technical Replicate Correlation: Set minimum correlation thresholds for technical replicates processed across different batches.

- Batch Effect Diagnostics: Implement pre-defined criteria for batch effect magnitude using PERMANOVA or other statistical measures.
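One way to make such acceptance criteria explicit is to encode them as a pre-registered check that runs before downstream analysis. The sketch below is illustrative only: the function name `qc_report`, the three threshold values, and the example numbers are assumptions chosen for demonstration, and appropriate cut-offs have to be defined per study.

```python
import numpy as np

def qc_report(blank_reads, sample_reads, mock_observed, mock_expected,
              rep_a, rep_b, max_blank_fraction=0.1,
              max_mock_bc=0.2, min_replicate_r=0.9):
    """Return {metric: (value, passed)} for three pre-defined criteria."""
    # Negative controls: largest blank relative to the median sample depth.
    blank_fraction = max(blank_reads) / np.median(sample_reads)
    # Mock community: Bray-Curtis distance between observed and expected profiles.
    obs = np.asarray(mock_observed, dtype=float)
    obs = obs / obs.sum()
    exp = np.asarray(mock_expected, dtype=float)
    exp = exp / exp.sum()
    mock_bc = np.abs(obs - exp).sum() / (obs + exp).sum()
    # Technical replicates: Pearson correlation of log-transformed counts.
    r = np.corrcoef(np.log1p(rep_a), np.log1p(rep_b))[0, 1]
    return {
        "blank_fraction": (blank_fraction, blank_fraction <= max_blank_fraction),
        "mock_bray_curtis": (mock_bc, mock_bc <= max_mock_bc),
        "replicate_r": (r, r >= min_replicate_r),
    }

# Hypothetical run-level inputs.
report = qc_report(
    blank_reads=[120, 80],
    sample_reads=[5000, 7000, 6500, 4000],
    mock_observed=[260, 240, 230, 270],
    mock_expected=[0.25, 0.25, 0.25, 0.25],
    rep_a=[100, 50, 0, 10, 500],
    rep_b=[110, 40, 1, 12, 480],
)
for metric, (value, passed) in report.items():
    print(f"{metric}: {value:.3f} {'PASS' if passed else 'FAIL'}")
```

Fixing the thresholds before data are generated, and reporting both the values and the pass/fail outcome, prevents the criteria from being tuned after the fact.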

Reporting Standards and Documentation

Comprehensive documentation enables proper interpretation of results and facilitates meta-analyses.

Minimum Reporting Standards:

- Detailed description of randomization and blocking procedures

- Complete documentation of all process controls included

- Reagent lot numbers and equipment identifiers

- Personnel involved in each processing step

- All quality control metrics and any deviations from protocols

- Computational methods and parameters for batch effect correction

The investigation of low-biomass microbial environments, such as human tissues, forensic samples, ancient specimens, and sterile production facilities, operates near the detection limits of modern DNA-based methods. In these contexts, the inevitable introduction of exogenous DNA during research workflows presents a profound risk: contaminant "noise" can readily eclipse the true biological "signal" [1]. This contamination problem directly fuels the challenge of false positives in microbiome data, potentially leading to spurious biological conclusions, distorted ecological patterns, and inaccurate claims about the presence of microbes in specific environments [1] [22]. The debate over whether microbiomes exist in sites long considered sterile, such as the human placenta, underscores the gravity of this issue [1]. Consequently, a rigorous, multi-stage decontamination strategy is not merely a best practice but a fundamental requirement for generating reliable and interpretable data in low-biomass microbiome research. This guide outlines evidence-based decontamination protocols from sample collection through DNA extraction, providing a framework to safeguard data integrity.

Contamination can infiltrate an experiment at virtually every stage, from the initial collection of a sample to the final computational analysis of its sequence data. Understanding these sources is the first step toward mitigating their impact.

- Sample Collection: Contamination can originate from human operators (skin, hair, breath), sampling equipment (non-sterile swabs, containers), and the immediate environment (air, surfaces) [1] [22].

- Reagents and Kits: Laboratory reagents, DNA extraction kits, and purification kits are well-documented sources of microbial DNA, each with its own distinct "background microbiota" profile that can vary significantly between manufacturers and even between production lots from the same brand [23].

- Laboratory Environment and Cross-Contamination: Laboratory surfaces, airflow, and equipment can harbor contaminating DNA. A significant, though often overlooked, problem is cross-contamination between samples during processing, such as well-to-well leakage in plate-based DNA extraction [1].

- Bioinformatic Analysis: Computational methods for analyzing metagenomic data can themselves generate false positives. Profilers that rely on universal markers or whole genomes can struggle to distinguish between true signals and contaminants, especially when dealing with short DNA sequences [24].

The diagram below illustrates the potential contamination sources and key control points throughout a typical research workflow.

(Diagram: Common sources of contamination (red) and key control points to mitigate them (green) throughout a typical low-biomass microbiome study workflow.)

Best Practices from Sample Collection to DNA Extraction

Sample Collection and Handling

The foundation of a contamination-aware study is laid during sampling. The practices at this stage are critical for preserving sample integrity.

- Decontaminate All Equipment: Sampling tools, collection vessels, and gloves should be decontaminated. While single-use, DNA-free items are ideal, reusable equipment requires thorough decontamination. A recommended protocol involves treatment with 80% ethanol to kill microorganisms, followed by a nucleic acid-degrading solution (e.g., fresh sodium hypochlorite/bleach) to remove residual DNA [1]. Autoclaving or UV-C sterilization alone may be insufficient, because sterile equipment can still carry persistent extracellular DNA.

- Use Personal Protective Equipment (PPE): Researchers should use appropriate PPE—including gloves, masks, clean suits, and shoe covers—to act as a barrier between the sample and contamination from skin, hair, and clothing [1]. For ultra-sensitive applications, ancient DNA laboratories often employ extensive PPE, including full suits, visors, and multiple glove layers [1].

- Implement Robust Sampling Controls: The inclusion of various negative controls during sampling is non-negotiable. These are essential for identifying the profile and sources of contaminants introduced during collection. Recommended controls include empty collection vessels, swabs of the air in the sampling environment, swabs of PPE, and aliquots of any preservation solutions used [1].
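To illustrate how the sampling controls described above feed into later analysis, the sketch below flags taxa that are at least as prevalent in negative controls as in true samples. This is a deliberately crude heuristic under stated assumptions: the function name `flag_contaminants`, the taxon labels, and the counts are hypothetical, and dedicated contaminant-identification tools apply formal statistical tests rather than a simple prevalence comparison.

```python
import numpy as np

def flag_contaminants(counts, is_negative, taxa, min_reads=1):
    """Flag taxa detected in negative controls at a prevalence greater than
    or equal to their prevalence in true samples (counts: samples x taxa)."""
    counts = np.asarray(counts)
    is_negative = np.asarray(is_negative, dtype=bool)
    present = counts >= min_reads
    prev_neg = present[is_negative].mean(axis=0)    # prevalence in controls
    prev_samp = present[~is_negative].mean(axis=0)  # prevalence in samples
    return [t for t, pn, ps in zip(taxa, prev_neg, prev_samp)
            if pn > 0 and pn >= ps]

# Hypothetical table: 6 samples followed by 3 negative controls.
taxa = ["Taxon_A", "Taxon_B", "Taxon_C"]
counts = np.array([
    [50, 0, 5],
    [40, 3, 6],
    [60, 0, 4],
    [30, 0, 7],
    [45, 2, 5],
    [55, 0, 6],
    [0, 10, 5],
    [0, 8, 4],
    [0, 12, 6],
])
is_negative = np.array([False] * 6 + [True] * 3)

flagged = flag_contaminants(counts, is_negative, taxa)
print(flagged)
```

Flagged taxa are candidates for closer inspection rather than automatic removal, since a genuine low-abundance community member can also appear in controls through cross-contamination from samples.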

Laboratory Cleaning and Decontamination

Maintaining a DNA-clean laboratory environment is essential to prevent the introduction and spread of contaminants during downstream processing.

A forensic genetics study systematically compared common cleaning reagents and found significant differences in their efficacy [25]. The results, summarized in the table below, provide a quantitative basis for selecting decontamination agents.

Table 1: Efficacy of Common Laboratory Cleaning Reagents for DNA Decontamination

| Cleaning Reagent | Active Ingredient | DNA Recovered Post-Cleaning (%) | Efficacy |
|---|---|---|---|
| 1-3% Household Bleach | Hypochlorite (NaClO) | 0 | Complete DNA removal |
| 1% Virkon | Peroxymonosulfate (KHSO₅) | 0 | Complete DNA removal |
| DNA AWAY | Sodium Hydroxide (NaOH) | 0.03 | Near-complete removal |
| 0.1-0.3% Household Bleach | Hypochlorite (NaClO) | 0.66-1.36 | Partial DNA removal |
| 70% Ethanol | Ethanol | 4.29 | Inadequate alone |
| Liquid Isopropanol | Isopropanol | 87.99 | Inadequate alone |

Source: Adapted from [25].

Key Recommendations:

- For critical surfaces: Use freshly prepared household bleach (≥1% concentration) or 1% Virkon to effectively remove all amplifiable DNA [25]. Note that bleach can be corrosive and should be used with caution on metal surfaces; a subsequent wipe with 70% ethanol or water may be recommended [25].

- Physical Separation: Maintain strict physical separation of pre-PCR and post-PCR areas, with unidirectional workflow to prevent amplicon contamination [25].

- Ultraviolet Radiation: Use UV-C irradiation in cabinets and workstations to cross-link and degrade DNA on surfaces and plasticware when chemical agents are not suitable [1].

DNA Extraction and Library Preparation

The choice of wet-lab protocols at the DNA extraction and library preparation stages can significantly influence the observed microbial community, especially in low-biomass and ancient samples [26] [27].

DNA Extraction Protocol Selection: Different DNA extraction methods have varying efficiencies in recovering DNA from different sample types and preservation states. A study on archaeological dental calculus found that the choice between the QG (Rohland and Hofreiter 2007) and PB (Dabney et al. 2013) extraction methods impacted metrics like endogenous DNA content and clonality, with no single method consistently outperforming the other across all samples [26]. Similarly, a study on bird feces demonstrated that the commercial DNA extraction kit used dramatically influenced the measured diversity and composition of the gut microbiota, with only some kits successfully recovering DNA from more challenging samples [27]. This highlights that DNA extraction protocols must be optimized for the specific sample type.