Optimizing Enrichment Strategies for Low Microbial Biomass: A Comprehensive Guide for Robust Microbiome Research and Diagnostic Development

Abstract

This article provides a comprehensive framework for overcoming the significant challenges of low microbial biomass research, a critical frontier in microbiology and clinical diagnostics. It explores the foundational principles defining low-biomass environments and their unique pitfalls, such as contamination and host DNA interference. It then details current methodological solutions, including specialized microbial enrichment protocols, host DNA depletion techniques, and optimized sequencing strategies. A strong emphasis is placed on rigorous troubleshooting, experimental controls, and validation methods to ensure data integrity. Together, these elements equip researchers and drug development professionals with the knowledge to generate reliable, reproducible, and clinically actionable insights from low-biomass samples, accelerating discovery and translation.

Navigating the Low-Biomass Landscape: Defining Challenges and Critical Pitfalls in Microbial Detection

What Constitutes a Low-Biomass Sample? Key Definitions and Examples

FAQ: What is a low-biomass sample?

A low-biomass sample is one that contains very low levels of microbial life, approaching the limits of detection for standard DNA-based sequencing methods [1]. The key challenge is that the target microbial DNA "signal" from the sample can be easily overwhelmed by the contaminant "noise" introduced during collection or laboratory processing [1] [2] [3]. While sometimes defined quantitatively (e.g., below 10,000 microbial cells per mL), it is often more useful to think of microbial biomass as a continuum, where the same contamination issues have a disproportionately larger impact the fewer native microbes are present [2].

FAQ: What are examples of low-biomass environments?

Low-biomass environments are diverse and can be found in human, built, and natural settings. The table below categorizes and lists key examples.

Examples of Low-Biomass Environments and Samples [1] [2] [3]

| Category | Specific Examples |

|---|---|

| Human Tissues & Fluids | Respiratory tract [1] [4], blood [1], fetal tissues [1], placenta [2], urine [3], brain [1], breastmilk [1], cancerous tumours [2]. |

| Built Environments | Cleanrooms (e.g., for spacecraft assembly) [5], hospital operating rooms [5], treated drinking water [1], metal surfaces [1]. |

| Natural Environments | The atmosphere [1], hyper-arid soils [1], deep subsurface [1], ice cores [1], glaciers [2], snow [1], hypersaline brines [1]. |

| Other | Plant seeds [1], ancient/poorly preserved samples [1]. |

FAQ: What are the major technical challenges in low-biomass research?

Working with low-biomass samples presents unique hurdles that can compromise data integrity and lead to false conclusions.

- External Contamination: Microbial DNA from reagents, kits ("kitome"), sampling equipment, and laboratory personnel can be introduced during sample collection or processing. In low-biomass samples, this contaminating DNA can make up most or all of the detected signal [1] [5] [2].

- Cross-Contamination (Well-to-Well Leakage): DNA can transfer between samples processed concurrently, for example, in adjacent wells on a 96-well plate. This "splashome" can mislead analyses by mixing microbial signals between samples [1] [2].

- Host DNA Misclassification: In host-associated samples (e.g., human tissue), the vast majority of sequenced DNA is often from the host. If not properly accounted for, this host DNA can be misidentified as microbial during bioinformatic analysis, creating false signals [2].

- Batch Effects and Processing Bias: Differences in reagents, personnel, or protocols between processing batches can create technical variations that are confounded with the biological groups of interest, leading to artifactual findings [2].

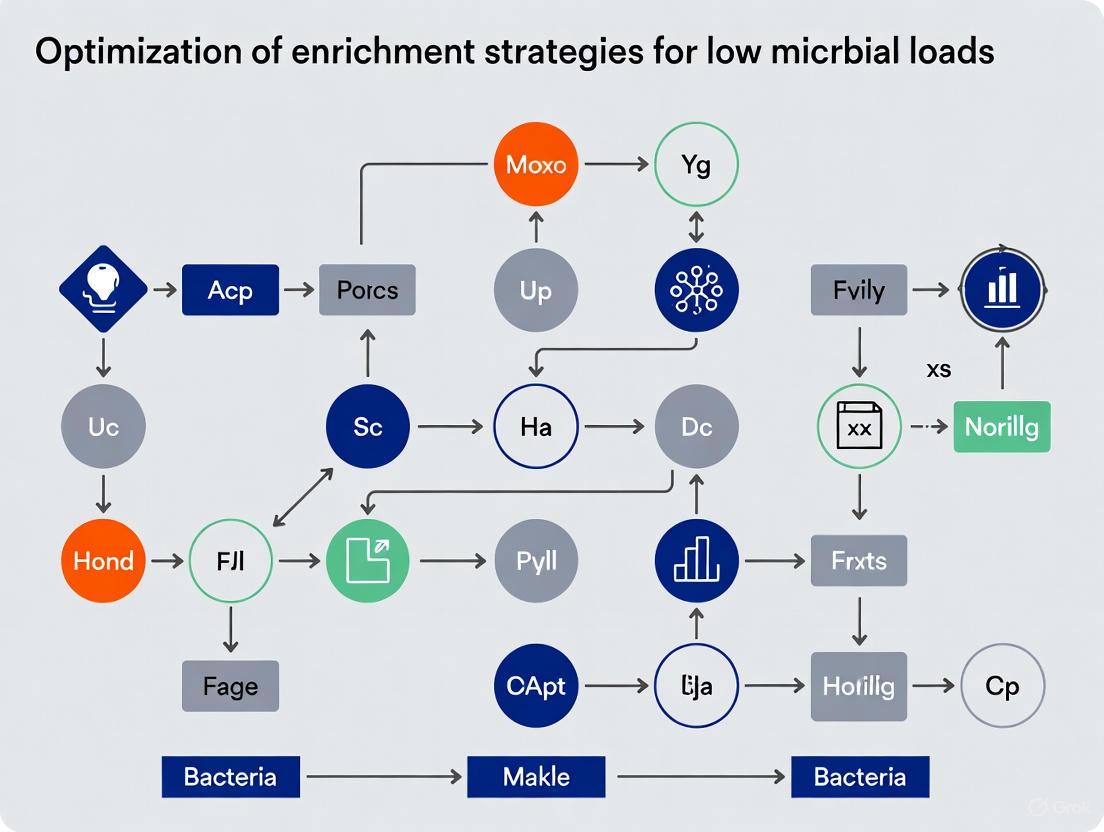

The diagram below illustrates a generalized experimental workflow for low-biomass microbiome research and the primary sources of contamination and bias at each stage.

Figure 1: Key contamination sources and technical biases in the low-biomass analysis workflow.

The Scientist's Toolkit: Essential Reagents & Materials

Success in low-biomass research depends on using the right tools to minimize and monitor contamination. The following table details key research reagent solutions.

Essential Research Reagents and Materials for Low-Biomass Studies [1] [5]

| Item | Function & Importance |

|---|---|

| DNA Decontamination Solutions | Sodium hypochlorite (bleach), hydrogen peroxide, or commercial DNA removal solutions are used to treat work surfaces and some equipment. This is critical to degrade contaminating DNA, as ethanol and autoclaving alone may not remove persistent DNA [1]. |

| Personal Protective Equipment (PPE) | Gloves, masks, cleanroom suits, and shoe covers act as a barrier to prevent contamination from human operators, including skin cells, hair, and aerosol droplets [1]. |

| DNA-Free Reagents & Kits | Using certified DNA-free water, buffers, and extraction kits is vital. Standard reagents contain their own microbiome ("kitome") which will be detected and can dominate the results of an ultra-low biomass sample [5]. |

| Surface Samplers | Devices like swabs, wipes, or specialized equipment (e.g., the SALSA squeegee-aspirator) are used to collect microbes from surfaces. High collection efficiency is key, as recovery from swabs can be as low as 10% [5]. |

| Sample Concentration Tools | Hollow fiber concentrators (e.g., InnovaPrep CP) or SpeedVac systems are used to concentrate diluted samples, boosting the target DNA signal for downstream molecular applications [5]. |

| Process Controls | These are blank samples (e.g., empty collection tubes, aliquots of sterile water, swabs of sterile surfaces) that are processed alongside real samples. They are essential for identifying the contaminant profile introduced by your specific reagents and workflow [1] [2]. |

Troubleshooting Guide: Mitigating Key Issues

Issue: High background contamination in negative controls and samples.

- Solution: Implement rigorous decontamination and the use of multiple process controls [1] [2].

- Decontaminate equipment and surfaces with 80% ethanol followed by a DNA-degrading solution like sodium hypochlorite [1].

- Use single-use, DNA-free consumables (tubes, tips, swabs) whenever possible [1].

- Include a variety of process controls to profile contamination from different sources, such as empty collection tubes, aliquots of sterile water, and swabs of sterile surfaces [1] [2].

- Analyze controls alongside samples and use bioinformatic decontamination tools (e.g., the decontam R package) to identify and remove contaminant sequences found in the controls from your experimental samples [2].
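The control-based filtering idea can be illustrated with a simplified prevalence comparison. This is a minimal sketch in Python and not the decontam package itself (an R package with its own statistical tests). It assumes a hypothetical presence/absence table and flags a taxon when it is detected in a larger share of negative controls than of real samples. The function name, the `min_ratio` threshold, and the toy taxa are all assumptions made for illustration.

```python
def flag_contaminants(presence, is_control, min_ratio=1.0):
    """Flag taxa that are more prevalent in negative controls than in samples.

    presence:   dict taxon -> list of 0/1 detections, one entry per library
    is_control: list of bools, True where the library is a negative control
    min_ratio:  flag when control prevalence > min_ratio * sample prevalence
    """
    n_ctrl = sum(is_control)
    n_samp = len(is_control) - n_ctrl
    if n_ctrl == 0 or n_samp == 0:
        raise ValueError("need at least one control and one sample")
    flagged = []
    for taxon, calls in presence.items():
        ctrl_prev = sum(c for c, k in zip(calls, is_control) if k) / n_ctrl
        samp_prev = sum(c for c, k in zip(calls, is_control) if not k) / n_samp
        if ctrl_prev > 0 and ctrl_prev > min_ratio * samp_prev:
            flagged.append(taxon)
    return sorted(flagged)


# Hypothetical toy data: four samples followed by two negative controls.
is_control = [False, False, False, False, True, True]
presence = {
    "taxon_A": [1, 0, 0, 1, 1, 1],  # in every blank, half of the samples
    "taxon_B": [1, 1, 1, 0, 0, 0],  # detected in samples only
    "taxon_C": [0, 0, 1, 0, 1, 0],  # half of the blanks, a quarter of the samples
}
print(flag_contaminants(presence, is_control))
```

A real analysis would use a proper statistical test and enough controls per batch to estimate prevalence reliably; with only a handful of blanks, a ratio like this is very noisy.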

Issue: Inconsistent results between sample processing batches.

- Solution: Design your experiment to avoid batch confounding and minimize technical variation [2].

- Do not process all samples from one experimental group in a single batch. Instead, randomize or strategically distribute samples from all groups across each processing batch (e.g., DNA extraction plates, sequencing runs) [2].

- Use balanced batch designs with tools like BalanceIT to ensure that technical batches are not confounded with the biological conditions you are comparing [2].

- Use the same reagent lots for an entire study, as different lots can have distinct contaminant profiles [2].
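The idea of distributing every experimental group across every batch can be sketched as a stratified round-robin assignment. This is an illustrative Python sketch only; it does not reproduce BalanceIT or any other tool, and the function name, sample IDs, and group labels are hypothetical.

```python
import random
from collections import Counter, defaultdict


def assign_batches(sample_groups, n_batches, seed=0):
    """Spread each experimental group as evenly as possible across batches.

    sample_groups: dict sample_id -> group label
    Returns a dict sample_id -> batch index (0-based).
    """
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample_id, group in sample_groups.items():
        by_group[group].append(sample_id)

    assignment = {}
    offset = 0
    for group in sorted(by_group):
        members = sorted(by_group[group])
        rng.shuffle(members)  # random order within the group
        for i, sample_id in enumerate(members):
            assignment[sample_id] = (i + offset) % n_batches
        offset += len(members)  # continue the round-robin where this group stopped
    return assignment


# Hypothetical study: 6 cases and 6 controls over 3 extraction batches.
samples = {f"case_{i}": "case" for i in range(6)}
samples.update({f"ctrl_{i}": "control" for i in range(6)})
batches = assign_batches(samples, n_batches=3)
per_batch = Counter((batches[s], g) for s, g in samples.items())
print(sorted(per_batch.items()))
```

Fixing the seed makes the layout reproducible, so the assignment can be recorded with the rest of the batch metadata.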

Issue: Suspected cross-contamination between samples.

- Solution: Implement physical and procedural safeguards during liquid handling [1] [2].

- Use physical plate seals during shaking or centrifugation steps to prevent well-to-well leakage [1].

- Include negative control samples interspersed randomly on sample plates to detect splash events [2].

- Leave empty wells between samples of different types or high-concentration samples whenever possible to create a buffer zone [1].
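One way to intersperse negative controls randomly on a plate is to shuffle blanks in with the samples before mapping them to wells. The sketch below assumes a standard 8 x 12 plate filled row by row; the function name, the `BLANK_n` labels, and the sample IDs are hypothetical, and it does not attempt to leave buffer wells between sample types.

```python
import random
import string


def plate_layout(sample_ids, n_blanks, seed=0, rows=8, cols=12):
    """Randomly intersperse blank controls among samples on a plate.

    Returns a dict well -> library name, filling wells row by row (A1, A2, ...).
    """
    items = list(sample_ids) + [f"BLANK_{i + 1}" for i in range(n_blanks)]
    if len(items) > rows * cols:
        raise ValueError("too many libraries for one plate")
    random.Random(seed).shuffle(items)
    wells = [
        f"{string.ascii_uppercase[r]}{c + 1}"
        for r in range(rows)
        for c in range(cols)
    ]
    return dict(zip(wells, items))


layout = plate_layout([f"S{i}" for i in range(20)], n_blanks=4)
blank_wells = [well for well, name in layout.items() if name.startswith("BLANK")]
print(blank_wells)
```

If a blank that sits next to a high-biomass well picks up that sample's taxa while distant blanks do not, that pattern points to well-to-well leakage rather than reagent contamination.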

Issue: Low microbial DNA yield, making sequencing difficult.

- Solution: Optimize collection and incorporate concentration steps, but be aware of the increased contamination risk [5].

- Use high-efficiency collection methods like the SALSA device, which has a reported recovery efficiency of 60% or higher for surfaces, compared to ~10% for some swabs [5].

- Concentrate samples post-collection using methods like hollow fiber concentration (e.g., InnovaPrep CP) to reduce elution volume and increase DNA concentration [5].

- Note: Increased sample manipulation raises the risk of secondary contamination. Always process negative controls through the exact same concentration steps [5].

Troubleshooting Guide: FAQs on Contamination and Host DNA

Q1: Why is host DNA removal critical for studying low-biomass plant microbiomes?

In plant microbiome studies, host-derived DNA acts as a significant contaminant that can obscure microbial signals. A plant's genome is substantially larger than a microbial genome; for instance, the rapeseed genome is about 1.1 Gb, while an average bacterial genome is only about 3.6 Mb [6]. Even a tiny amount of plant material can overwhelm the microbial DNA in a sample, leading to severely insufficient sequencing coverage of the microbial genomes [6]. This results in wasted sequencing resources, reduced detection sensitivity, and biased reconstruction of the microbial community [6]. Effective host DNA removal is therefore a prerequisite for achieving high-resolution metagenomic analysis in low-biomass niches like the plant endosphere and phyllosphere [6].
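The genome-size argument can be made concrete with a short calculation. The sketch below uses the two genome sizes quoted above and assumes, for simplicity, one genome copy per cell while ignoring ploidy and organelle DNA; the cell ratios in the loop are hypothetical.

```python
HOST_GENOME_BP = 1.1e9     # rapeseed genome size quoted in the text
MICROBE_GENOME_BP = 3.6e6  # average bacterial genome size quoted in the text


def microbial_dna_fraction(microbes_per_host_cell):
    """Fraction of total DNA that is microbial for a given cell ratio.

    Simplifying assumptions: one genome copy per cell, no organelle DNA,
    and equal extraction efficiency for host and microbial cells.
    """
    microbial_bp = microbes_per_host_cell * MICROBE_GENOME_BP
    return microbial_bp / (microbial_bp + HOST_GENOME_BP)


print(f"host/microbe genome size ratio: {HOST_GENOME_BP / MICROBE_GENOME_BP:.0f}x")
for ratio in (0.1, 1, 10, 100):
    share = microbial_dna_fraction(ratio)
    print(f"{ratio:>5} microbes per host cell -> {share:.2%} microbial DNA")
```

Under these assumptions a single host cell contributes roughly as much DNA as 300 bacterial cells, which is why even small amounts of plant tissue dominate the sequencing output.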

Q2: What are the primary methods for host DNA removal, and how do I choose?

The choice of method depends on your sample type, the specific microbial niche, and your experimental goals. The table below summarizes the core techniques [6].

Table: Comparison of Host DNA Removal and Microbial Enrichment Strategies

| Method Category | Specific Technique | Underlying Principle | Key Advantage | Reported Efficiency/Performance | Primary Limitation |

|---|---|---|---|---|---|

| Physical Separation | Density Gradient Centrifugation | Separates cells based on size and density differences. | Effectively enriches microbial cells. | ~24.6% non-host DNA content achieved in sugar beet endophytes [6]. | Can lower total microbial yield and introduce bias for certain microbial groups [6]. |

| Enzymatic & Mechanical Lysis | Enzymatic Digestion (e.g., Cellulase) | Uses enzymes to degrade the rigid plant cell wall while leaving microbial cells intact. | Highly specific to plant cell structures. | Requires custom optimization for different plant species and tissues [6]. | Not a universal solution; requires optimization [6]. |

| Enzymatic & Mechanical Lysis | Bead Beating + DNase | Uses large grinding beads to selectively disrupt larger host cells, followed by DNase degradation of released DNA. | Effective for tough plant tissues. | Can reduce host DNA contamination by over 1000-fold, enabling high-quality MAG assembly [6]. | Requires careful optimization of bead size and shaking intensity to preserve microbial cells [6]. |

| Chemical & Biochemical | Selective Lysis (e.g., Saponin) | Exploits differential vulnerability of host and microbial cells to mild detergents. | Works well for mammalian cells; potential for plant protoplasts. | Saponin shows promise in selectively lysing mammalian host cells [6]. | Less effective on plant cells with rigid walls without prior treatment [6]. |

| Chemical & Biochemical | DNA Methylation Difference (e.g., NEBNext Kit) | Utilizes differences in CpG methylation patterns between host and microbial DNA. | Sequence-agnostic; leverages an inherent biochemical difference. | Commercially available, standardized kit. | Cell organelle DNA (e.g., chloroplasts) can complicate the process due to bacterial-like sequences [6]. |

| Emerging Technologies | CRISPR-Cas9 | Guide RNA directs Cas9 to cut specific host DNA sequences (e.g., repetitive regions). | High specificity for targeted host genome reduction. | Successfully used to reduce host 16S rRNA gene contamination in rice amplicon sequencing [6]. | Requires prior knowledge of host genome sequence for gRNA design. |

| Emerging Technologies | Nanopore Selective Sequencing (ReadUntil) | Real-time basecalling allows for ejection of unwanted host DNA molecules from the nanopore. | Real-time, sequence-based selection; can be applied post-library prep. | Allows for enrichment during the sequencing run itself. | Requires specialized equipment and real-time computing infrastructure. |

Q3: My microbial community profile looks skewed after host DNA removal. What could be the cause?

Many host removal techniques can introduce bias by preferentially enriching for or excluding certain microbial taxa, thereby distorting the observed community structure [6]. For example, density gradient centrifugation may co-enrich or lose microbial cells based on their physical properties. To diagnose this, it is crucial to:

- Use Internal Controls: Spike a known amount of synthetic DNA or a microbial standard into your sample before processing. This allows you to track losses and quantify bias [6].

- Quantify Microbial Load: Use quantitative PCR (qPCR) or digital PCR (dPCR) targeting universal microbial genes (e.g., 16S rRNA) to absolutely quantify microbial taxa before and after treatment [6].

- Compare Methods: Where possible, compare community profiles generated using different host DNA removal methods on the same sample to identify consistent, method-independent signals.
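The spike-in and quantification checks above reduce to a few ratios. The following sketch assumes reads scale linearly with template copies for both the spike-in and the taxa of interest, which is an idealization; all function names and numbers are hypothetical.

```python
def spike_in_recovery(copies_added, copies_recovered):
    """Fraction of the spike-in that survived the host-depletion workflow."""
    return copies_recovered / copies_added


def absolute_abundance(taxon_reads, spike_reads, spike_copies_added):
    """Estimate template copies of a taxon from its reads relative to the spike-in.

    Assumes read counts are proportional to template copies for both.
    """
    if spike_reads == 0:
        raise ValueError("spike-in not detected; cannot normalize")
    return taxon_reads / spike_reads * spike_copies_added


def enrichment_bias(before, after):
    """Per-taxon fold change in relative abundance (after / before treatment).

    Values far from 1 suggest the treatment favours or depletes that taxon.
    """
    return {t: after[t] / before[t] for t in before if before[t] > 0 and t in after}


# Hypothetical numbers for illustration only.
print(spike_in_recovery(copies_added=10_000, copies_recovered=2_500))
print(absolute_abundance(taxon_reads=300, spike_reads=100, spike_copies_added=10_000))
print(enrichment_bias({"taxon_A": 0.5, "taxon_B": 0.5}, {"taxon_A": 0.8, "taxon_B": 0.2}))
```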

Troubleshooting Guide: FAQs on Batch Effects

Q4: What are batch effects in microbiome studies, and how can AI help?

Batch effects are technical variations introduced during different stages of experimentation (e.g., DNA extraction kits, sequencing runs, reagent lots) that are not related to the true biological signals of interest. In cross-habitat microbiome studies, AI faces the challenge of distinguishing genuine environmental constraints from these technical artifacts [7]. AI models require large, high-quality datasets with complete and standardized environmental metadata (e.g., temperature, pH, nutrients) to learn true biological patterns and avoid being confounded by batch effects [7].

Q5: What are the best practices for mitigating batch effects?

The most effective strategy is a combination of experimental design and computational correction.

- Standardization: Use the same protocols, reagents, and equipment for all samples within a study.

- Randomization: Process samples from different experimental groups randomly across sequencing batches.

- Batch Tracking: Meticulously record all technical variables, including DNA extraction kit lot numbers, sequencing run dates, and technician IDs.

- Experimental Replication: Include technical replicates across different batches to assess the magnitude of batch effects.

- Computational Correction: After sequencing, use bioinformatic tools (e.g., ComBat, RUV, or other normalization methods integrated into AI pipelines) to statistically adjust for batch effects. However, the gold standard is to minimize them at the source through careful experimental design.
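Before any computational correction, the recorded batch metadata can be checked for confounding. The sketch below flags batches that contain samples from only one experimental group, since such batches make batch and biology inseparable. The record layout, function name, and labels are hypothetical.

```python
from collections import defaultdict


def confounded_batches(records):
    """Return batches whose samples all belong to a single experimental group.

    records: iterable of (sample_id, batch, group) tuples
    """
    groups_in_batch = defaultdict(set)
    for _sample_id, batch, group in records:
        groups_in_batch[batch].add(group)
    return sorted(b for b, groups in groups_in_batch.items() if len(groups) == 1)


# Hypothetical metadata: plate2 holds only cases, so it is confounded with group.
records = [
    ("s1", "plate1", "case"),
    ("s2", "plate1", "control"),
    ("s3", "plate2", "case"),
    ("s4", "plate2", "case"),
]
print(confounded_batches(records))
```

The same check can be repeated for other recorded variables, such as kit lot or technician ID, by substituting them for the batch column.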

Experimental Protocols for Key Challenges

Protocol 1: Bead-Based Selective Host Cell Lysis for Tough Plant Tissues

This protocol is adapted from methods described for effectively reducing host DNA contamination by over 1000-fold [6].

Principle: Larger plant host cells are more susceptible to mechanical disruption by larger grinding beads, while smaller microbial cells remain intact. The released host DNA is then degraded enzymatically.

Steps:

- Homogenization: Begin with a freshly homogenized plant sample in an appropriate buffer.

- Bead Beating: Add large-diameter grinding beads (e.g., 1.4 mm) to the sample. Use a bead beater with optimized settings (e.g., shaking speed and duration) that are sufficient to lyse plant cells but minimize damage to microbial cells.

- Separation: Centrifuge the sample at a low speed to pellet the intact microbial cells, leaving the lysed host cell debris in the supernatant.

- DNase Treatment: Carefully remove the supernatant and treat it with DNase to digest the released host DNA. This step prevents subsequent co-precipitation with microbial DNA.

- Wash and Extract: Wash the microbial cell pellet to remove any residual DNase and contaminants. Proceed with standard microbial DNA extraction protocols.

Protocol 2: A Multi-Modal AI Workflow for Batch Effect Correction and Pattern Discovery

This protocol outlines a strategy for using AI to overcome batch effects and uncover true biological signals in large-scale microbiome datasets, as discussed in the context of cross-habitat studies [7].

Principle: Integrate multiple data types and leverage AI models to separate technical noise from biological signal, enabling the discovery of robust microbial traits and environmental relationships.

Steps:

- Data Curation: Assemble a large dataset of metagenomic sequences from your target habitats. Crucially, compile a standardized set of environmental parameters (e.g., temperature, pressure, pH, salinity) for each sample [7].

- Preprocessing & Batch Identification: Process sequences through a standardized bioinformatic pipeline. Use exploratory data analysis (e.g., PCA) to visualize and identify clusters driven by batch effects versus biological conditions.

- AI Model Training: Train AI models, such as sequence-based large language models or geometric deep learning architectures, on the integrated genomic and environmental data. The goal is for the model to learn microbial traits and functions directly from genetic data and correlate them with environmental drivers [7].

- Theoretical Validation: Validate the AI model's predictions against established ecological theories. For instance, check if the model recapitulates the principle of "everything is everywhere, but the environment selects" or identifies known functional redundancies that maintain ecosystem stability. This step is critical to avoid AI "hallucinations" [7].

- Pattern Discovery and Interpretation: Use the validated model to generate new hypotheses about microbial adaptation mechanisms. The output shifts from simple taxonomic lists to a trait-based understanding of how "environment constrains life" and how "life records environment" [7].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Reagents for Host DNA Removal and Microbial Enrichment

| Reagent / Kit | Function / Purpose | Specific Example / Note |

|---|---|---|

| Cellulase, Hemicellulase, Pectinase | Enzyme mixture for hydrolyzing plant cell walls to release microbial cells without lysing them. | Effectiveness varies by plant species and tissue type; requires optimization of enzyme concentration and incubation conditions [6]. |

| NEBNext Microbiome DNA Enrichment Kit | Biochemically enriches microbial DNA by exploiting differences in CpG methylation density between host (highly methylated) and microbial (low methylation) DNA. | A commercial solution for human-associated samples; performance on plant samples (with organelle DNA) may vary [6]. |

| Saponin / Triton X-100 | Mild detergents for selective lysis of mammalian host cells (which lack a cell wall). | Less effective on intact plant cells but can be useful for protoplast-based studies [6]. |

| Large Grinding Beads (1.4 mm) | For mechanical disruption of large host cells (e.g., plant cells) while preserving smaller microbial cells. | The size and material of the beads are critical parameters that need optimization for different sample matrices [6]. |

| DNase I | Enzyme used to degrade free DNA in samples after selective host cell lysis, preventing its carryover. | Used after bead beating to destroy released host DNA in the supernatant before microbial pellet collection [6]. |

| CRISPR-Cas9 with gRNAs | Targeted depletion of host DNA sequences (e.g., repetitive elements, chloroplast 16S gene) from sequencing libraries. | Requires design of specific guide RNAs (gRNAs) targeting the host genome of interest [6]. |

| Synthetic Spike-in DNA / Microbial Standards | Internal controls added to the sample at the start of processing to monitor efficiency, bias, and for absolute quantification. | Essential for quality control and validating the performance of any host DNA removal protocol [6]. |

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: My low-biomass microbiome study did not include negative controls. Can I still determine if my signals are contamination?

Unfortunately, without negative controls, it is exceptionally difficult to rule out contamination. Negative controls (e.g., blank extraction kits, sterile water processed alongside your samples) are essential for identifying background DNA from reagents and the laboratory environment. In their absence, you cannot distinguish true low-biomass signals from contamination, and your results should be interpreted with extreme caution [8] [9].

Q2: I have detected bacterial DNA in my placental samples. Does this confirm the existence of a placental microbiome?

Not necessarily. The detection of bacterial DNA alone is insufficient to confirm a resident microbiome. You must rigorously rule out contamination from reagents, delivery-associated exposure (e.g., vaginal bacteria during birth), and laboratory handling. Consistent findings across studies are lacking, and the most rigorous analyses suggest that these signals often originate from contaminants or rare, transient microbial intrusion rather than a consistent, living microbial community [10] [11] [9].

Q3: In my blood microbiome analysis, I found microbial DNA in only a small fraction of healthy individuals. Is my analysis faulty?

Not necessarily. Large-scale studies have shown that microbial DNA is not universally present in healthy individuals. One study of 9,770 healthy people found no microbial species in 84% of participants, and those with a signal typically had only one species. This pattern supports a model of sporadic translocation of commensals from other body sites (like the gut or mouth) into the bloodstream, rather than a stable core blood microbiome [12] [13].

Q4: My differential abundance analysis of microbiome data is plagued by group-wise structured zeros (all zeros in one group). How should I handle this?

Group-wise structured zeros present a significant challenge for many statistical models. A recommended strategy is to use a combined approach:

- First, use a method like DESeq2-ZINBWaVE to handle general zero-inflation across your dataset.

- Subsequently, apply DESeq2 with its built-in penalized likelihood estimation to properly test the significance of taxa that are entirely absent in one group but present in the other. This approach helps manage the infinite parameter estimates that standard models produce with such data [14].
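Splitting taxa between the two steps first requires identifying which taxa show group-wise structured zeros. The sketch below covers only that detection step in Python; the DESeq2 and DESeq2-ZINBWaVE analyses themselves are run in R and are not reproduced here. The function name, the count-table layout, and the toy data are assumptions.

```python
def groupwise_structured_zeros(counts, groups):
    """Find taxa that are absent from every sample of at least one group.

    counts: dict taxon -> list of read counts, one per sample
    groups: list of group labels, same order as the counts
    Returns dict taxon -> list of groups in which the taxon is entirely absent.
    Taxa absent from all groups are skipped because they carry no signal.
    """
    labels = sorted(set(groups))
    result = {}
    for taxon, values in counts.items():
        totals = {g: 0 for g in labels}
        for value, g in zip(values, groups):
            totals[g] += value
        absent = [g for g in labels if totals[g] == 0]
        if absent and len(absent) < len(labels):
            result[taxon] = absent
    return result


# Hypothetical count table: three cases followed by three controls.
groups = ["case"] * 3 + ["control"] * 3
counts = {
    "taxon_A": [5, 0, 12, 0, 0, 0],  # present in cases, absent in all controls
    "taxon_B": [0, 3, 0, 7, 0, 1],   # ordinary sparse taxon
    "taxon_C": [0, 0, 0, 0, 0, 0],   # never observed
}
print(groupwise_structured_zeros(counts, groups))
```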

Troubleshooting Guides

Issue: Inconsistent Findings in Low-Biomass Microbiome Studies

- Problem: Your results are inconsistent with other published studies, or you cannot replicate a reported low-biomass microbiome.

- Solution:

- Implement Rigorous Controls: For every batch of samples, process multiple negative controls (e.g., blank extractions) and positive controls (e.g., mock microbial communities) [8].

- Profile Your "Kitome": Sequence your negative controls to create a profile of contaminating taxa specific to your reagents and lab environment.

- Use In-Silico Decontamination: Apply bioinformatic tools like decontam (which uses prevalence or frequency methods) to identify and remove taxa in your samples that are also found in your negative controls [11] [13].

- Standardize Reporting: Adhere to reporting guidelines like the STORMS checklist to ensure all methodological details, including control data, are transparently documented [15].

Issue: Poor Signal-to-Noise Ratio in Blood Microbiome Metagenomics

- Problem: The high level of host DNA and low microbial biomass in blood samples makes detecting genuine microbial signals difficult.

- Solution:

- Increase Sequencing Depth: Sequence more deeply to increase the probability of capturing rare microbial reads.

- Apply Stringent Bioinformatic Filtering:

- Remove low-complexity sequences and host reads.

- Discard samples with an extremely low number of microbial reads (e.g., <100 read pairs).

- Apply abundance cut-offs and validate findings by aligning reads to reference genomes to check for sufficient coverage breadth [13].

- Leverage Batch Information: Use batch-specific information (kit types, lot numbers) to identify and filter out batch-specific contaminants, as true biological signals should be distributed across batches [13].
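The filtering steps above can be sketched as three small helpers: a minimum read-pair filter, a check for taxa seen in only one batch, and a coverage-breadth calculation. This is an illustrative sketch under stated assumptions; the 100 read-pair threshold is the example value from the text, while the function names, data layouts, and toy values are hypothetical.

```python
from collections import defaultdict

MIN_MICROBIAL_READ_PAIRS = 100  # example threshold given in the text


def filter_samples(microbial_read_pairs, min_pairs=MIN_MICROBIAL_READ_PAIRS):
    """Keep samples with at least `min_pairs` microbial read pairs."""
    return {s: n for s, n in microbial_read_pairs.items() if n >= min_pairs}


def batch_specific_taxa(detections):
    """Return taxa detected in exactly one batch (candidate batch contaminants).

    detections: iterable of (taxon, batch) pairs
    """
    batches = defaultdict(set)
    for taxon, batch in detections:
        batches[taxon].add(batch)
    return sorted(t for t, b in batches.items() if len(b) == 1)


def coverage_breadth(intervals, genome_length):
    """Fraction of a reference covered by at least one read.

    intervals: half-open (start, end) alignment coordinates on the reference
    """
    covered = 0
    cur_start = cur_end = None
    for start, end in sorted(intervals):
        if cur_end is None or start > cur_end:
            if cur_end is not None:
                covered += cur_end - cur_start
            cur_start, cur_end = start, end
        else:
            cur_end = max(cur_end, end)  # overlapping reads extend the block
    if cur_end is not None:
        covered += cur_end - cur_start
    return covered / genome_length


print(filter_samples({"s1": 40, "s2": 2500}))
print(batch_specific_taxa([("taxon_A", "lot1"), ("taxon_A", "lot2"), ("taxon_B", "lot2")]))
print(coverage_breadth([(0, 100), (50, 150), (300, 400)], genome_length=1000))
```

Reads that pile up on a small part of a genome give low breadth despite a high read count, which is a common signature of misassigned or contaminant reads.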

Table 1: Prevalence of Microbial DNA in Healthy Human Blood (Cohort: n=9,770)

| Metric | Value | Interpretation |

|---|---|---|

| Individuals with no detected microbes | 84% | Majority of healthy individuals show no microbial DNA in blood. |

| Individuals with at least one microbe | 16% | A minority harbors transient microbial DNA. |

| Median species per positive individual | 1 | Very low microbial load when present. |

| Most prevalent species | Cutibacterium acnes (4.7%) | No species was common across the population. |

Source: Adapted from Tan et al. (2023), Nature Microbiology [13].

Table 2: Key Controversies in Placental and Blood Microbiome Research

| Body Site | Supportive Evidence & Potential Pitfalls | Contrary Evidence & Methodological Critiques |

|---|---|---|

| Placenta | Early DNA sequencing studies reported bacterial communities [9]; potential for transient microbial exposure [10]. | Re-analysis of 15 studies found signals attributable to contamination and mode of delivery [11]; existence of germ-free mammal lines argues against a propagated placental microbiota [10]; bacterial DNA signals are inconsistent and do not represent a true, replicating community [10] [9]. |

| Blood | Some studies report bacterial DNA and even cultured bacteria in healthy blood [12] [16]; dysbiosis of blood microbial profiles implicated in diseases [12]. | Largest population study found no core microbiome and detects sporadic translocation of commensals [13]; signals are highly susceptible to contamination from skin puncture and laboratory reagents [13] [8]. |

Experimental Protocols

Protocol: Conducting a Controlled Low-Biomass Microbiome Study from Sample Collection to Analysis

1. Sample Collection and DNA Extraction

- Materials:

- Sample collection kits (e.g., sterile swabs, blood collection tubes)

- DNA extraction kit

- Mock microbial community (Positive Control)

- Molecular grade water (Negative Control)

- Steps:

- Collect clinical samples using aseptic technique to minimize exogenous contamination.

- For every batch of extractions, include at least two types of controls:

- Negative Controls: Process tubes containing only sterile water through the entire DNA extraction and library preparation process.

- Positive Controls: Process a defined mock microbial community with known composition and abundance [8].

- Extract DNA from all samples and controls using your chosen kit, noting the batch and lot number of the kit.

2. Library Preparation and Sequencing

- Steps:

- Prepare sequencing libraries for all samples and controls.

- If using 16S rRNA amplicon sequencing, note that amplification biases can skew results; the positive control helps monitor this [8].

- Pool libraries and sequence on an appropriate platform with sufficient depth to detect low-abundance taxa.

3. Bioinformatic and Statistical Analysis

- Steps:

- Process Raw Data: Use pipelines like DADA2 to infer amplicon sequence variants (ASVs) for higher resolution than OTU clustering [11].

- Identify Contaminants: Use the negative control samples to create a list of contaminating taxa. Employ tools like decontam (in R) to remove these from your experimental samples [11] [13].

- Validate with Positive Controls: Ensure your positive control data accurately reflects the known composition of the mock community. This validates your entire wet-lab and bioinformatic workflow.

- Differential Abundance Testing: For datasets with many zeros, employ a strategy that combines tools like DESeq2-ZINBWaVE for zero-inflation and DESeq2 for handling group-wise structured zeros [14].
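The positive-control check in the steps above can be quantified by comparing the observed mock-community profile with its expected composition. The sketch below uses Bray-Curtis dissimilarity as one reasonable choice of metric (the source does not prescribe one) and lists taxa that should not be present. The function names and profiles are hypothetical.

```python
def bray_curtis(expected, observed):
    """Bray-Curtis dissimilarity between two abundance profiles (dicts).

    0 means identical profiles; 1 means no shared abundance.
    """
    taxa = set(expected) | set(observed)
    diff = sum(abs(expected.get(t, 0.0) - observed.get(t, 0.0)) for t in taxa)
    total = sum(expected.get(t, 0.0) + observed.get(t, 0.0) for t in taxa)
    return diff / total


def unexpected_taxa(expected, observed):
    """Taxa observed in the mock community that it should not contain."""
    return sorted(set(observed) - set(expected))


# Hypothetical two-member mock community and its sequenced profile.
expected = {"mock_sp1": 0.5, "mock_sp2": 0.5}
observed = {"mock_sp1": 0.4, "mock_sp2": 0.5, "taxon_X": 0.1}
print(round(bray_curtis(expected, observed), 3))
print(unexpected_taxa(expected, observed))
```

Unexpected taxa in the mock community are useful in their own right, because they are likely reagent contaminants or cross-contamination from neighbouring wells.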

Workflow and Pathway Diagrams

Diagram 1: Controlled Low-Biomass Microbiome Workflow. This diagram outlines the critical steps for a robust low-biomass microbiome study, highlighting the non-negotiable inclusion of controls and in-silico decontamination.

Diagram 2: Sources of Signals in Low-Biomass Studies. A key challenge is distinguishing true biological signals from various technical artifacts and contaminants.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Solutions for Low-Biomass Microbiome Research

| Item | Function in Research | Key Consideration |

|---|---|---|

| Mock Microbial Community | Serves as a positive control to validate DNA extraction efficiency, library prep, sequencing, and bioinformatic analysis [8]. | Choose a community relevant to your study (e.g., containing Gram-positive/negative bacteria). Results only confirm performance for that specific community. |

| DNA Extraction Kits | To isolate total DNA from samples. Different kits have different "kitomes" [8] [9]. | The kit itself is a major source of contaminating DNA. Always use the same kit lot for a study and record the lot number. |

| Molecular Grade Water | Serves as a negative control during DNA extraction and library preparation to identify contaminating DNA from reagents and the laboratory environment [8]. | Must be processed in parallel with every batch of samples. Its sequencing profile is essential for decontamination. |

| Decontamination Software (e.g., decontam) | A bioinformatic tool used to identify and remove contaminating taxa from experimental samples based on their presence in negative controls [11] [13]. | Requires sequencing of negative controls. Can use prevalence-based or frequency-based methods to identify contaminants. |

| Standardized Reporting Checklist (STORMS) | A checklist to ensure complete and transparent reporting of microbiome studies, from epidemiology and lab methods to bioinformatics and statistics [15]. | Improves reproducibility and allows for critical assessment of study quality, especially important in controversial areas. |

The Critical Impact of Bacterial Load on Sequencing Data Fidelity

FAQs on Bacterial Load and Sequencing

Why is bacterial load a critical factor in sequencing data fidelity?

In specimens with low bacterial load, the small amount of microbial DNA must compete for sequencing resources with an overwhelming background of host and contaminating DNA. This can cause the sequence data to be dominated by background noise rather than the true biological signal.

- Inverse Power Relationship: When sequencing a low number of bacterial genomes (e.g., ≤ 10³ genome equivalents), over 90% of the resulting sequences can be erroneous or originate from background contamination, rather than the target sample [17].

- Background Contamination: Sterile laboratory reagents and DNA extraction kits contain trace amounts of bacterial 16S rDNA. While this background is negligible when sequencing high-bacterial-load specimens like stool, it becomes the dominant signal in low-bacterial-load samples, severely distorting the true microbiota profile [17].
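The inverse relationship between bacterial load and contaminant share follows from a simple mixing model in which a fixed reagent background is added to every sample. The sketch below assumes such a constant background; the value of 10^4 genome equivalents is a hypothetical figure chosen only for illustration and is not taken from the cited study.

```python
BACKGROUND_GENOMES = 1e4  # hypothetical constant reagent background (assumption)


def contaminant_fraction(sample_genomes, background_genomes=BACKGROUND_GENOMES):
    """Expected share of sequences from the background under simple mixing.

    Assumes sample and background DNA are amplified and sequenced equally well.
    """
    return background_genomes / (sample_genomes + background_genomes)


for load in (1e2, 1e3, 1e4, 1e6, 1e8):
    print(f"{load:>12,.0f} input genomes -> {contaminant_fraction(load):.1%} background")
```

With any fixed background, each tenfold drop in input load raises the contaminant share sharply once the input approaches the background level, while high-load samples such as stool are barely affected.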

Contamination can be introduced at multiple stages, from sample collection through computational analysis.

- Wet-Lab Sources: Common contaminants include bacterial genera such as Mycoplasma, Bradyrhizobium, Pseudomonas, and Staphylococcus, which can originate from laboratory reagents, kits, or the experimenter [18].

- Computational Artifacts: A significant finding is that fragments of the human Y-chromosome, which are missing from the standard human reference genome (GRCh38), can be incorrectly mapped to bacterial reference genomes. This creates a false association between certain bacteria and the male sex, which is actually a computational error [18].

- Sample Type and Batch Effects: The source of the biological sample (e.g., whole blood vs. lymphoblastoid cell lines) and the sequencing plate itself have been shown to strongly influence the profile of contaminating microbes, highlighting the importance of tracking batch variables [18].

What methods can enrich for microbial DNA in low-bacterial-load samples?

Host DNA depletion is a key strategy to increase microbial sequencing yield. Methods can be categorized as pre-extraction (physical removal of host cells) and post-extraction (chemical/enzymatic removal of host DNA).

The table below summarizes the performance of several host depletion methods tested on bronchoalveolar lavage fluid (BALF), a typically low-biomass sample [19].

| Method | Key Principle | Performance in BALF (Microbial Read Increase vs. Raw Sample) |

|---|---|---|

| K_zym (HostZERO Kit) | Pre-extraction; commercial kit | 100.3-fold |

| S_ase | Pre-extraction; saponin lysis + nuclease digestion | 55.8-fold |

| F_ase | Pre-extraction; 10 μm filtering + nuclease digestion | 65.6-fold |

| K_qia (QIAamp DNA Microbiome Kit) | Pre-extraction; commercial kit | 55.3-fold |

| R_ase | Pre-extraction; nuclease digestion | 16.2-fold |

| O_pma | Pre-extraction; osmotic lysis + PMA degradation | 2.5-fold |

Another novel technology, a Zwitterionic Interface Ultra-Self-assemble Coating (ZISC)-based filtration device, demonstrated >99% removal of white blood cells from blood samples, leading to a tenfold enrichment of microbial reads in metagenomic NGS (mNGS) for sepsis diagnosis [20].

Troubleshooting Guides

Guide 1: Diagnosing and Correcting Low Library Yield from Low-Biomass Samples

Low library yield is a common symptom when working with samples containing insufficient bacterial material.

Symptoms:

- Final library concentration is well below expectations.

- Bioanalyzer electropherogram may show a dominant adapter-dimer peak (~70-90 bp) and a faint or missing library peak.

Root Causes and Corrective Actions [21]:

| Root Cause | Mechanism of Yield Loss | Corrective Action |

|---|---|---|

| Poor Input Quality / Contaminants | Enzyme inhibition by residual salts, phenol, or EDTA. | Re-purify input sample; use fluorometric quantification (Qubit) instead of UV absorbance for higher accuracy. |

| Inaccurate Quantification / Pipetting Error | Suboptimal enzyme stoichiometry due to over/under-estimated input. | Use master mixes to reduce pipetting error; calibrate pipettes; run technical replicates. |

| Inefficient Adapter Ligation | Poor ligase performance or incorrect adapter-to-insert molar ratio. | Titrate adapter:insert ratio; ensure fresh ligase and buffer; optimize incubation time and temperature. |

| Overly Aggressive Purification | Desired DNA fragments are accidentally removed during clean-up steps. | Optimize bead-to-sample ratios; avoid over-drying magnetic beads. |

Guide 2: Implementing a Rigorous Contamination Control Plan

A proactive plan is essential to distinguish true signal from noise.

Step 1: Incorporate Comprehensive Controls

- Negative Controls: Process sterile water or saline alongside clinical specimens through every stage, including DNA extraction and sequencing. These controls will capture the "background contaminome" [17] [18].

- Sample Collection Controls: For BALF studies, collect saline wash from the bronchoscope prior to insertion to control for reagent and procedural contamination [17].

Step 2: Quantify Bacterial Load

- Use quantitative PCR (qPCR) to measure the 16S rRNA gene copy number in all specimens and negative controls. This provides an objective measure of bacterial abundance [17].

- Interpretation: If the bacterial load of a clinical specimen is similar to or only marginally higher than that of the negative controls, its microbiota profile is likely unreliable and should be interpreted with extreme caution or excluded [17].
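The interpretation rule in Step 2 can be sketched as a simple filter. The function name `flag_low_load`, the example copy numbers, and the 10-fold margin (`min_fold`) are all assumptions made for illustration; the source does not specify a numeric cutoff, so any threshold should be set from your own control data.

```python
from statistics import median


def flag_low_load(sample_copies: dict[str, float],
                  negative_copies: list[float],
                  min_fold: float = 10.0) -> dict[str, bool]:
    """Flag samples whose 16S copies fall below min_fold x the median negative control."""
    if not negative_copies:
        raise ValueError("at least one negative control is required")
    threshold = min_fold * median(negative_copies)
    return {name: copies < threshold for name, copies in sample_copies.items()}


# Hypothetical qPCR results (16S rRNA gene copies per mL).
samples = {"BALF_01": 2.0e5, "BALF_02": 1.5e3}
negatives = [4.0e2, 6.0e2, 5.0e2]

flags = flag_low_load(samples, negatives)
for name, is_low in flags.items():
    print(name, "interpret with caution" if is_low else "above background")
```

Flagged specimens are candidates for exclusion or cautious interpretation, not automatic removal.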

Step 3: Apply Computational Decontamination

- Use bioinformatic tools (e.g., Kraken2, Bracken) to identify the taxonomic composition of your samples and controls [22].

- Subtract taxa found in negative controls from the clinical samples, or use statistical packages designed to identify and remove contaminant sequences.
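The subtraction option in Step 3 can be sketched as follows. This is the naive approach only, shown under the assumption that taxon-level read counts are already available as dictionaries; the function name and example counts are hypothetical. Plain subtraction also removes genuine taxa that happen to appear in controls (for example through well-to-well cross-talk), which is one reason the statistical packages mentioned above are often preferred.

```python
def subtract_control_taxa(sample_counts: dict[str, int],
                          control_counts: dict[str, int],
                          min_control_reads: int = 1):
    """Split sample taxa into (kept, removed) based on presence in negative controls."""
    contaminants = {t for t, n in control_counts.items() if n >= min_control_reads}
    kept = {t: n for t, n in sample_counts.items() if t not in contaminants}
    removed = {t: n for t, n in sample_counts.items() if t in contaminants}
    return kept, removed


# Hypothetical read counts per genus.
sample = {"Streptococcus": 820, "Bradyrhizobium": 140, "Prevotella": 300}
control = {"Bradyrhizobium": 95, "Pseudomonas": 12}

kept, removed = subtract_control_taxa(sample, control)
print("kept:", kept)
print("removed:", removed)
```

Raising `min_control_reads` makes the filter less aggressive when controls contain only a handful of stray reads.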

Experimental Protocols

Protocol: Host Depletion Using Filtration and Nuclease Digestion (F_ase Method)

This pre-extraction method effectively removes host cells while preserving microbial integrity [19].

1. Sample Preparation

- Obtain respiratory sample (e.g., BALF) in a suspension volume of 1-2 mL.

- Add glycerol to a final concentration of 25% to cryopreserve microbial cells during processing.

2. Host Cell Depletion

- Pass the sample through a 10 μm sterile filter. Host cells (e.g., leukocytes, which are typically >10 μm) are retained, while most bacterial and viral particles pass through.

- Collect the filtrate.

3. DNase Digestion of Free-floating Host DNA

- To the filtrate, add a commercial DNase I enzyme and its corresponding buffer.

- Incubate at the recommended temperature (e.g., 37°C) for 30 minutes to degrade any host DNA released from lysed cells.

- Inactivate the DNase (often by adding STOP solution and heating).

4. Microbial DNA Extraction

- Centrifuge the DNase-treated filtrate at high speed (e.g., 16,000 × g) to pellet the microbial cells.

- Proceed with standard DNA extraction from the pellet using a commercial kit (e.g., Maxwell RSC PureFood Pathogen kit).

Host Depletion Workflow

Protocol: Quality and Contamination Control for Bacterial Isolate Sequencing

This bioinformatic protocol checks for contamination in sequencing data from bacterial isolates [22].

1. Assess Raw Read Quality

- Use Falco or FastQC to generate a quality control report on the raw FASTQ files. Check per-base sequence quality, adapter content, and overrepresented sequences.

2. Trim and Filter Reads

- Use fastp to perform adapter trimming and quality filtering. Apply parameters such as a minimum read length and a minimum quality threshold.

3. Identify Contaminating Species

- Run Kraken2 with a standard database (e.g., RefSeq) on the filtered reads to classify them taxonomically.

- Use Bracken to estimate the abundance of species present.

4. Visualize and Interpret Results

- Use Recentrifuge to generate an interactive report that visualizes the taxonomic composition and highlights potential contaminants based on their prevalence in controls.
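Steps 1 to 3 of this protocol can be sketched as a small command builder. This is an illustrative sketch, not the pipeline from [22]: the file names, output directory, database path, and threshold values are hypothetical, and the code only assembles command lines without running them. It assumes paired-end reads, an existing output directory, and a Kraken2 database that has also been prepared for Bracken. The Recentrifuge step is omitted because its invocation depends on the local taxonomy setup.

```python
import shlex
from pathlib import Path


def build_qc_commands(r1: str, r2: str, kraken_db: str, outdir: str = "qc",
                      min_len: int = 50, min_qual: int = 20,
                      threads: int = 4) -> list[list[str]]:
    """Assemble FastQC, fastp, Kraken2 and Bracken command lines (not executed)."""
    out = Path(outdir)
    t1 = str(out / "trimmed_R1.fastq.gz")
    t2 = str(out / "trimmed_R2.fastq.gz")
    report = str(out / "kraken2.report")
    return [
        # 1. Raw read quality report
        ["fastqc", r1, r2, "-o", str(out)],
        # 2. Adapter trimming plus length and quality filtering
        ["fastp", "-i", r1, "-I", r2, "-o", t1, "-O", t2,
         "--length_required", str(min_len),
         "--qualified_quality_phred", str(min_qual),
         "--json", str(out / "fastp.json"), "--html", str(out / "fastp.html")],
        # 3a. Taxonomic classification of the filtered reads
        ["kraken2", "--db", kraken_db, "--threads", str(threads), "--paired",
         "--report", report, "--output", str(out / "kraken2.out"), t1, t2],
        # 3b. Species-level abundance re-estimation from the Kraken2 report
        ["bracken", "-d", kraken_db, "-i", report,
         "-o", str(out / "bracken.species.tsv"), "-l", "S"],
    ]


cmds = build_qc_commands("isolate_R1.fastq.gz", "isolate_R2.fastq.gz", "/path/to/kraken2_db")
for cmd in cmds:
    print(shlex.join(cmd))
```

Each list can be passed to `subprocess.run(cmd, check=True)` once the paths have been adapted; check the flags against the installed tool versions before use.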

Bioinformatic Contamination Control

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function | Example Use Case |

|---|---|---|

| ZISC-based Filtration Device | Pre-extraction host depletion; selectively binds and retains host leukocytes from whole blood with >99% efficiency [20]. | Enriching microbial cells from blood for sepsis mNGS diagnostics. |

| QIAamp DNA Microbiome Kit | Pre-extraction host depletion; uses differential lysis to selectively remove host cells [19]. | Processing respiratory samples (BALF) to increase microbial read count. |

| NEBNext Microbiome DNA Enrichment Kit | Post-extraction host depletion; removes CpG-methylated host DNA, leaving behind non-methylated microbial DNA [20]. | Enriching microbial DNA after total DNA extraction; less effective for respiratory samples [19]. |

| Magnetic Beads (AMPure XP) | Purification and size-selection; binds DNA for washing and elution in a concentration-dependent manner. | Cleaning up adapter-dimer artifacts and selecting the correct library insert size post-amplification [21]. |

| Rapid Barcoding Kit (SQK-RBK114.24/.96) | Library preparation; enables quick tagmentation and barcoding of DNA for multiplexed sequencing on Nanopore platforms [23]. | Preparing 4-24 microbial isolate genomes for sequencing on a MinION flow cell. |

| Fluorometric Quantification Kit (Qubit) | Accurate nucleic acid quantification; uses fluorescent dyes that bind specifically to DNA, unlike UV absorbance. | Measuring the precise concentration of low-abundance microbial DNA in the presence of contaminants [21] [17]. |

Advanced Enrichment and Host-Depletion Techniques: From Laboratory to Clinical Application

Microbial Enrichment Methodology (MEM) is an advanced host-depletion technique designed to enable high-throughput metagenomic characterization from host-rich samples. In microbiome studies, samples like intestinal biopsies, saliva, and other tissues present a significant challenge: they contain a high ratio of host to microbial DNA, sometimes exceeding 99.99% host DNA. This overwhelming presence of host genetic material makes it difficult and cost-prohibitive to obtain sufficient microbial sequences for meaningful analysis using shotgun metagenomics. MEM effectively addresses this problem by selectively removing host DNA while preserving the native microbial community composition, allowing researchers to construct metagenome-assembled genomes (MAGs) directly from tissue samples and gain deeper insights into host-microbe interactions [24] [25].

Core Principles and Mechanism

MEM operates on the principle of selective physical lysis based on cellular size differences between host and microbial cells. The methodology leverages the substantial disparity in cell size—host cells are significantly larger than bacterial cells—to create differential mechanical stress during processing [24].

The fundamental steps in MEM's approach include:

- Bead-beating with large beads: Unlike conventional microbial lysis that uses 0.1-0.5 mm beads, MEM employs larger 1.4 mm beads to create high mechanical shear stress. This preferentially lyses the larger, more fragile host cells while leaving the smaller, structurally robust bacterial cells intact [24].

- Enzymatic treatment: After mechanical lysis, MEM incorporates Benzonase to degrade accessible extracellular nucleic acids released from the lysed host cells. Proteinase K is then added to further disrupt any remaining host cells and degrade histones to release DNA [24].

- Minimal processing time: The entire MEM protocol is optimized to be completed within 20 minutes, using gentle processing conditions to prevent accidental lysis of microbial cells and maintain community integrity [24].

This strategic approach achieves more than 1,000-fold reduction in host DNA while maintaining microbial community composition, with approximately 90% of taxa showing no significant differences between MEM-treated and untreated control samples [24] [25].
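To see what a fold reduction in host DNA means for the sequencing library, the arithmetic can be sketched as below. This is a back-of-the-envelope model under stated assumptions: DNA fractions translate directly into read fractions, host DNA is reduced uniformly, and `microbial_recovery` is the fraction of microbial DNA that survives processing. The function name and parameters are illustrative and do not come from [24].

```python
def microbial_fraction_after(host_fraction: float,
                             host_fold_reduction: float,
                             microbial_recovery: float = 1.0) -> float:
    """Expected microbial share of total DNA after host depletion."""
    if not 0.0 <= host_fraction < 1.0:
        raise ValueError("host_fraction must be in [0, 1)")
    if host_fold_reduction <= 0 or not 0.0 < microbial_recovery <= 1.0:
        raise ValueError("fold reduction must be > 0 and recovery in (0, 1]")
    host = host_fraction / host_fold_reduction
    microbe = (1.0 - host_fraction) * microbial_recovery
    return microbe / (microbe + host)


# A sample with 99.99% host DNA, 1,000-fold host depletion, and 69% microbial recovery.
after = microbial_fraction_after(0.9999, 1000.0, microbial_recovery=0.69)
print(f"microbial fraction: 0.01% before, {after:.1%} after")
```

Under these assumptions the microbial share rises from 0.01% to roughly 6%, which is why a large fold reduction is needed before shotgun sequencing of tissue becomes practical.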

Detailed Experimental Protocol

MEM Workflow Specification

The MEM protocol follows a sequential process to achieve optimal host depletion:

Sample Preparation

- Begin with fresh or frozen tissue samples (biopsies, scrapings) or body fluids (saliva)

- For mucosal samples, preliminary scraping may be necessary to isolate the epithelial layer with mucosa-associated bacteria

- For high-mucin samples like saliva, consider DTT pre-treatment to improve efficiency

Selective Lysis

- Add samples to tubes containing 1.4 mm ceramic beads

- Process using a bead beater with optimized settings to create mechanical shear stress

- Duration: Approximately 5-10 minutes

Enzymatic Treatment

- Add Benzonase to degrade extracellular nucleic acids

- Incubate for 5 minutes at room temperature

- Add Proteinase K to degrade host proteins and release DNA

- Incubate for additional 5-10 minutes

Microbial DNA Extraction

- Proceed with standard microbial DNA extraction kits

- Validate extraction efficiency and host depletion [24]

Critical Optimization Parameters

Several factors require careful optimization for different sample types:

- Bead size: Strictly maintain 1.4 mm beads—smaller beads may lyse microbial cells

- Processing time: Over-processing can damage microbial cells; under-processing reduces host depletion

- Sample type adjustments: Mucosal samples may require different parameters than liquid samples

- Temperature control: Maintain consistent temperature throughout processing to prevent microbial stress [24]

Performance Data and Comparative Analysis

Host Depletion Efficiency Across Sample Types

The following table summarizes MEM performance compared to alternative methods:

Table 1: Host Depletion Efficiency Across Methods and Sample Types

| Method | Sample Type | Host Depletion | Microbial Recovery | Key Limitations |

|---|---|---|---|---|

| MEM | Intestinal biopsies | >1,000-fold | ~69% (31% loss) | Requires optimization for different tissues |

| MEM | Saliva | ~40-fold | Maintained composition | Improved with DTT pre-treatment |

| MEM | Intestinal scrapings | ~1,600-fold | High retention | Minimal community perturbation |

| MolYsis | Various | Variable | Inconsistent across taxa | Taxa drop-out issues |

| QIAamp | Various | High | Significant bacterial losses | Community composition altered |

| lyPMA | Liquid samples | Effective | Highly variable | Incompatible with opaque tissues |

| NEBNext Microbiome Enrichment | Saliva | Substantial | Maintains diversity | CpG methylation-based approach [26] |

| Nanopore Adaptive Sequencing | Vaginal samples | Moderate (read-level) | No wet-lab alteration | Requires specialized equipment [27] |

Microbial Community Integrity Preservation

Table 2: Impact on Microbial Community Composition

| Method | Taxa with Significant Abundance Changes | Taxa Drop-out | Community Representation |

|---|---|---|---|

| MEM | ~10% | None detected | >90% taxa show no significant difference |

| MolYsis | Variable | Some taxa affected | Inconsistent preservation |

| QIAamp | Significant | Multiple taxa | Altered community structure |

| lyPMA | Highly variable | Dependent on host DNA levels | Unpredictable microbial losses |

MEM demonstrates superior preservation of microbial community integrity, with more than 90% of genera showing no significant difference in relative abundance between MEM-treated and control samples. All taxa consistently detected in control samples remain detectable after MEM processing [24].
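A drop-out check of the kind summarized above can be sketched in a few lines. This is a simplified illustration, not the statistical comparison used in [24]: it only tests presence or absence, the function name is hypothetical, and the relative abundances are invented example data.

```python
def taxa_dropout(control: dict[str, float],
                 treated: dict[str, float],
                 min_abundance: float = 0.0) -> set[str]:
    """Taxa detected in the control (above min_abundance) but absent after treatment."""
    present = {t for t, a in control.items() if a > min_abundance}
    detected = {t for t, a in treated.items() if a > 0}
    return present - detected


# Hypothetical relative abundances for one paired sample.
control_profile = {"Bacteroides": 0.42, "Faecalibacterium": 0.31, "Akkermansia": 0.02}
treated_profile = {"Bacteroides": 0.40, "Faecalibacterium": 0.35}

lost = taxa_dropout(control_profile, treated_profile)
print("dropped taxa:", sorted(lost))
```

Setting `min_abundance` to a small positive value restricts the check to taxa that were consistently detectable in the control, which avoids counting stochastic loss of rare taxa as method-induced drop-out.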

Troubleshooting Guide

Common Experimental Challenges and Solutions

Problem: Inadequate host DNA depletion

- Potential Cause: Insufficient bead-beating time or incorrect bead size

- Solution: Verify bead size is precisely 1.4 mm; optimize bead-beating duration

- Prevention: Perform pilot tests with different processing times

Problem: Excessive microbial DNA loss

- Potential Cause: Over-processing or too vigorous mechanical treatment

- Solution: Reduce bead-beating intensity; shorten processing time

- Prevention: Include control samples to quantify microbial recovery

Problem: Inconsistent results between sample types

- Potential Cause: Failure to optimize protocol for specific sample matrices

- Solution: Adjust enzymatic treatment duration for different sample types

- Prevention: Establish sample-type specific protocols

Problem: Low overall DNA yield

- Potential Cause: Inefficient DNA extraction following host depletion

- Solution: Ensure compatibility between MEM processing and subsequent extraction kits

- Prevention: Validate entire workflow with mock communities [24]

Frequently Asked Questions (FAQs)

Q: How does MEM compare to methylation-based enrichment methods? A: MEM uses physical separation based on cell size differences, while methods like the NEBNext Microbiome DNA Enrichment Kit exploit differential CpG methylation patterns between host and microbial DNA. MEM doesn't rely on epigenetic markers and may be more suitable for samples where methylation patterns are unknown or variable [26].

Q: Can MEM be combined with other enrichment techniques? A: Yes, MEM can potentially be combined with other methods. For example, Nanopore's adaptive sequencing performs host depletion computationally during sequencing and could complement wet-lab methods like MEM [27].

Q: What sample types is MEM most suitable for? A: MEM has been validated across diverse sample types including intestinal biopsies, intestinal scrapings, saliva, and stool. It performs particularly well with tissue samples that have extremely high host DNA content [24].

Q: How does MEM affect the ability to construct metagenome-assembled genomes (MAGs)? A: MEM enables MAG construction from previously challenging samples. Researchers have successfully reconstructed MAGs for bacteria and archaea at relative abundances as low as 1% directly from human intestinal biopsies after MEM treatment [24] [25].

Q: What are the advantages of MEM over chemical lysis methods? A: MEM's mechanical approach based on size differences introduces lower bias compared to chemical lysis alternatives where lysis efficiency may vary based on bacterial cell wall structures. This results in more uniform preservation of microbial community composition [24].

Research Reagent Solutions

Table 3: Essential Reagents for MEM Implementation

| Reagent/Equipment | Specification | Function in Protocol |

|---|---|---|

| Ceramic beads | 1.4 mm diameter | Creates mechanical shear for selective host lysis |

| Benzonase | Molecular biology grade | Degrades extracellular nucleic acids |

| Proteinase K | PCR-grade | Digests host proteins and histones |

| Bead beater | Adjustable speed | Provides consistent mechanical processing |

| DNA extraction kits | Microbial-focused | Isolates microbial DNA after host depletion |

MEM Workflow Visualization

MEM Workflow Diagram: This visualization outlines the key steps in the Microbial Enrichment Methodology, from sample processing through downstream analysis.

MEM represents a significant advancement in host-depletion techniques, particularly valuable for tissue-associated microbiome studies. Its ability to remove host DNA by more than 1,000-fold while preserving microbial community integrity enables previously challenging applications like metagenome-assembled genome construction from host-rich biopsy samples. As microbiome research continues to focus on tissue-specific interactions rather than just fecal communities, methodologies like MEM will play a crucial role in uncovering mechanistic insights into host-microbe relationships in health and disease [24] [25].

Comparative Analysis of Host-DNA Depletion Methods (MolYsis, QIAamp, lyPMA)

In the field of microbial genomics research, samples with high host DNA content and low microbial biomass present a significant analytical challenge. Effective host DNA depletion is crucial for obtaining sufficient microbial sequencing reads to characterize microbiomes accurately. This technical resource center provides a comprehensive comparison of three host-DNA depletion methods—MolYsis, QIAamp, and lyPMA—evaluating their performance across different sample types to guide researchers in selecting and troubleshooting appropriate protocols for their specific applications.

The table below summarizes the core characteristics and performance metrics of the three host-DNA depletion methods based on recent comparative studies:

| Method | Mechanism of Action | Optimal Sample Types | Host Depletion Efficiency | Key Advantages | Key Limitations |

|---|---|---|---|---|---|

| MolYsis | Differential lysis of host cells, centrifugal enrichment of microbes, DNase degradation of host DNA [28] | Sputum, nasopharyngeal aspirates [29] [30] | High (69.6% reduction in sputum; 17.7% reduction in BAL) [29] | Effective with frozen samples without cryoprotectants [29] [28] | Introduces taxonomic bias; reduces Gram-negative representation [29] [28] |

| QIAamp | Differential lysis, centrifugation, degradation of accessible nucleic acids [28] | Nasal swabs, oropharyngeal samples [29] [19] | High (75.4% reduction in nasal samples) [29] | Minimal impact on Gram-negative viability in frozen isolates [29] | Multiple wash steps risk biomass loss [31] |

| lyPMA | Osmotic lysis of host cells, PMA cross-linking and fragmentation of exposed DNA [29] [31] | Saliva, frozen respiratory samples [29] [31] | High (8.53% host reads in saliva vs. 89.29% in untreated) [31] | Low taxonomic bias; cost-effective; <5 min hands-on time [29] [31] | Reduced efficacy in BAL samples (no significant read increase) [29] |

Experimental Performance Data

The following table quantifies the impact of each method on sequencing outcomes across different respiratory sample types:

| Sample Type | Method | Host DNA Pre-Treatment | Microbial Reads Post-Treatment | Species Richness Change |

|---|---|---|---|---|

| Sputum | MolYsis | 99.2% [29] | 100-fold increase [29] | Not specified |

| Nasal Swab | QIAamp | 94.1% [29] | 13-fold increase [29] | +8 species [29] |

| BAL | MolYsis | 99.7% [29] | 10-fold increase [29] | +19 species [29] |

| Saliva | lyPMA | 89.29% [31] | 13.4-fold increase in bacterial DNA proportion [31] | Lowest taxonomic bias [31] |

Figure 1: Experimental workflows for three host-DNA depletion methods. Each method employs distinct mechanisms to selectively remove host genetic material while preserving microbial DNA for downstream analysis.

Troubleshooting Guide

Common Experimental Issues and Solutions

Problem: Low final DNA yield after host depletion

- Cause: Excessive biomass loss during multiple wash steps, particularly in low microbial biomass samples [31].

- Solutions:

- Process larger initial sample volumes to compensate for anticipated losses

- Pre-concentrate samples via centrifugation before applying depletion protocols

- For MolYsis: Ensure proper mixing during wash steps to prevent disproportionate microbial loss

- For QIAamp: Avoid overloading columns with excessive host cellular material

Problem: Incomplete host DNA depletion

- Cause: High levels of extracellular host DNA not effectively targeted by the method [31].

- Solutions:

- For lyPMA: Optimize PMA concentration (10 μM recommended) and ensure adequate light exposure for cross-linking [31]

- For MolYsis: Extend DNase incubation time or increase enzyme concentration for samples with high extracellular DNA

- Incorporate combination approaches for challenging samples (e.g., pre-filtration to remove extracellular DNA)

Problem: Taxonomic bias in resulting microbial profiles

- Cause: Differential susceptibility of microbial taxa to lysis or degradation steps [29] [28].

- Solutions:

- For Gram-negative bacteria: QIAamp shows minimal impact on viability in frozen isolates [29]

- If studying mixed communities: lyPMA demonstrates lowest taxonomic bias [31]

- Use mock communities specific to your sample type to validate protocol performance

- Consider chromatin immunoprecipitation (ChIP)-based methods for minimal bias, though with lower enrichment [28]

Problem: Reduced viability of specific pathogens after processing

- Cause: Method-specific impacts on microbial viability, particularly after freezing [29].

- Solutions:

Frequently Asked Questions (FAQs)

Which host depletion method performs best with frozen respiratory samples? MolYsis and QIAamp demonstrate better performance with frozen respiratory samples, even without cryoprotectants [29]. MolYsis showed 69.6% host reduction in sputum and 17.7% in BAL samples, while QIAamp achieved 75.4% host reduction in nasal swabs [29]. lyPMA performance varies significantly by sample type, showing excellent results in saliva but limited efficacy in BAL samples [29] [31].

How do these methods impact the detection of specific bacterial groups? All host depletion methods can introduce taxonomic biases. MolYsis has been shown to decrease the proportion of Gram-negative bacteria in sputum samples from people with cystic fibrosis [29]. QIAamp exhibits minimal impact on Gram-negative viability, even in non-cryoprotected frozen isolates [29]. lyPMA demonstrates the lowest overall taxonomic bias compared to untreated samples [31].

What is the optimal sequencing depth after host depletion? For most respiratory samples, species richness saturation occurs at approximately 0.5-2 million microbial reads [29]. This represents a substantial saving compared to non-depleted samples, where achieving this microbial read depth would require sequencing hundreds of millions of reads due to high host DNA content.
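The depth argument above is simple arithmetic, sketched here under the assumption that the microbial read fraction equals one minus the host DNA percentage. The function name is illustrative; the 99.2% host figure is the untreated sputum value from the performance table earlier in this section.

```python
import math


def total_reads_needed(target_microbial_reads: int, microbial_fraction: float) -> int:
    """Total reads required so that the expected microbial reads reach the target."""
    if not 0.0 < microbial_fraction <= 1.0:
        raise ValueError("microbial_fraction must be in (0, 1]")
    return math.ceil(target_microbial_reads / microbial_fraction)


# Untreated sputum at 99.2% host DNA leaves a microbial fraction of about 0.008.
untreated = total_reads_needed(1_000_000, 0.008)
print(f"total reads for 1M microbial reads without depletion: {untreated:,}")
```

For one million microbial reads this comes to roughly 125 million total reads without depletion, in line with the "hundreds of millions" scale for samples with even higher host content.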

Can these methods be used with low microbial biomass samples? Yes, but with important considerations. Low biomass samples are particularly vulnerable to biomass loss during processing and contamination. MolYsis has been successfully applied to nasopharyngeal aspirates from premature infants, which represent challenging low-biomass samples [30]. Including appropriate negative controls is essential to identify potential contamination in low biomass applications [30].

Research Reagent Solutions

| Reagent/Kit | Manufacturer | Primary Function | Application Notes |

|---|---|---|---|

| MolYsis Basic | Molzym | Selective host cell lysis and DNase degradation | Effective for frozen samples; introduces taxonomic bias [29] [28] |

| QIAamp DNA Microbiome Kit | Qiagen | Differential lysis and nucleic acid degradation | Minimal impact on Gram-negative bacteria; effective for nasal swabs [29] [19] |

| Propidium Monoazide (PMA) | Multiple suppliers | Cross-links exposed DNA after photoactivation | Core component of lyPMA; 10 μM concentration optimal [29] [31] |

| HostZERO Microbial DNA Kit | Zymo Research | Commercial host depletion alternative | Compared alongside primary methods; high efficiency but variable by sample type [29] [19] |

| MasterPure Complete DNA & RNA Purification Kit | Lucigen | DNA extraction after host depletion | Compatible with MolYsis; improves Gram-positive recovery [30] |

The optimal host-DNA depletion method depends on specific research requirements, sample types, and target microorganisms. MolYsis offers high depletion efficiency for various respiratory samples, particularly sputum, though with some taxonomic bias. QIAamp provides excellent performance with nasal swabs and minimal impact on Gram-negative bacteria. lyPMA delivers the lowest taxonomic bias with simple implementation, making it ideal for saliva and similar matrices. Researchers should validate their chosen method using mock communities and sample-specific controls to ensure experimental objectives are met while recognizing the inherent limitations and biases of each approach.

Optimizing DNA Extraction and Library Preparation for Low-Input Samples

Frequently Asked Questions (FAQs)

FAQ 1: What are the most critical factors for successful DNA extraction from low-input samples?

The success of DNA extraction from low-input samples hinges on several key factors:

- Sample Preservation: DNA integrity begins with proper sample handling. Fresh or flash-frozen samples are ideal, while archived materials like Formalin-Fixed Paraffin-Embedded (FFPE) blocks often yield fragmented DNA due to chemical cross-linking [32].

- Lysis Method: A gentle, enzymatic digestion (e.g., using Proteinase K) is preferred to maximize DNA release while preserving fragment integrity. Harsh mechanical disruption can lead to shearing and sample loss [32].

- Purification Technology: Magnetic bead-based purification, often enhanced with carrier RNA, offers high recovery rates for trace amounts of DNA. Traditional spin columns can be less efficient for sub-nanogram inputs due to adsorption losses [32].

- Elution Volume: To avoid excessive dilution, elute the purified DNA in a small volume (e.g., ≤20 µL) to ensure a measurable concentration for downstream applications [32].

FAQ 2: How can I accurately quantify and assess the quality of my low-yield DNA?

Accurate quantification and quality control (QC) are crucial. The table below compares common methods:

Table 1: Quality Control Methods for Low-Input DNA

| QC Method | Primary Purpose | Key Advantage for Low-Input | Consideration |

|---|---|---|---|

| Qubit Fluorometry | Concentration | High sensitivity; detects as low as 0.01 ng/µL; specific for dsDNA [32]. | Does not provide information on fragment size. |

| TapeStation/Fragment Analyzer | Integrity & Size | Provides a DNA Integrity Number (DIN) and fragment size profile using minimal sample [32]. | More expensive than spectrophotometry. |

| NanoDrop UV Spectrophotometry | Purity | Quick check for contaminants (e.g., via 260/280 ratio) [32]. | Overestimates concentration at low levels; not recommended for precise quantification [32]. |

Recommended Workflow: Use Qubit for accurate concentration measurement, followed by capillary electrophoresis (e.g., TapeStation) to assess DNA integrity. A DIN ≥7 is a common threshold for proceeding to Next-Generation Sequencing (NGS) [32].
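The recommended workflow can be expressed as a simple gate. This is a sketch only: the function name is hypothetical, the default concentration floor reuses the Qubit sensitivity quoted in Table 1, and the DIN cutoff reuses the ≥7 threshold above. Both defaults should be adjusted to the requirements of the specific library kit.

```python
def qc_gate(qubit_ng_per_ul: float, din: float,
            min_conc: float = 0.01, min_din: float = 7.0) -> tuple[bool, list[str]]:
    """Return (passes, reasons) for a low-input DNA sample before library prep."""
    reasons = []
    if qubit_ng_per_ul < min_conc:
        reasons.append(f"concentration {qubit_ng_per_ul} ng/uL is below {min_conc} ng/uL")
    if din < min_din:
        reasons.append(f"DIN {din} is below {min_din}")
    return (not reasons, reasons)


# Hypothetical measurements for two extracts.
ok, why_ok = qc_gate(0.25, 8.1)
bad, why_bad = qc_gate(0.005, 5.5)
print(ok, why_ok)
print(bad, why_bad)
```

A failed gate does not necessarily mean discarding the sample; degraded material may still be usable after a DNA repair step or with a kit designed for fragmented input.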

FAQ 3: My library preparation resulted in a high rate of adapter dimers. How can I prevent this?

Adapter dimer formation is a common challenge in low-input workflows where the adapter-to-insert ratio is inherently high.

- Optimize Adapter Concentration: Perform an adapter titration experiment to determine the optimal dilution for your specific sample input, quality, and type [33].

- Modify Ligation Setup: To minimize adapter self-ligation, do not pre-mix the adapter with the ligation master mix. Instead, add the adapter to the sample first, mix, and then add the ligase master mix [33].

- Technical Adjustments: For some kits, diluting the provided adapters with nuclease-free water (e.g., 1/4 dilution) can reduce dimer formation [34].

- Post-Ligation Cleanup: If dimers form, they can often be removed by performing a bead-based cleanup using a 0.9x bead ratio, which selectively retains longer library fragments [33].
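The quantities behind an adapter titration and a bead cleanup can be worked out as follows. This sketch uses the standard approximation of about 660 g/mol per base pair of double-stranded DNA; the function names, the 10:1 adapter-to-insert ratio, and the 1.5 µM adapter stock are hypothetical values chosen for illustration, not kit recommendations.

```python
def dsdna_pmol(mass_ng: float, length_bp: float) -> float:
    """Picomoles of dsDNA, assuming ~660 g/mol per base pair."""
    return mass_ng * 1000.0 / (length_bp * 660.0)


def adapter_volume_ul(insert_ng: float, insert_bp: float,
                      molar_ratio: float, adapter_stock_um: float) -> float:
    """Microlitres of adapter stock (1 uM = 1 pmol/uL) for the desired adapter:insert ratio."""
    return dsdna_pmol(insert_ng, insert_bp) * molar_ratio / adapter_stock_um


def bead_volume_ul(sample_ul: float, ratio: float = 0.9) -> float:
    """Bead volume for a cleanup at the given bead-to-sample ratio."""
    return sample_ul * ratio


# Hypothetical low-input case: 1 ng of 350 bp inserts, 10:1 ratio, 1.5 uM adapter stock.
vol = adapter_volume_ul(1.0, 350.0, molar_ratio=10.0, adapter_stock_um=1.5)
print(f"adapter stock needed: {vol:.3f} uL")
print(f"beads for a 50 uL reaction at 0.9x: {bead_volume_ul(50.0):.1f} uL")
```

In this example the required adapter volume is far too small to pipette accurately, which illustrates why adapters are diluted for low-input libraries rather than added at stock concentration.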

FAQ 4: My microbial samples have high host DNA contamination. What depletion strategies can I use?

For samples like milk or respiratory secretions, host DNA can overwhelm microbial signals. Pre-extraction methods that lyse mammalian cells and digest free DNA are effective.

Table 2: Overview of Host DNA Depletion Methods for Respiratory Samples [19]

| Method (Example) | Principle | Reported Performance (Microbial Read Increase vs. Raw) |

|---|---|---|

| Saponin Lysis + Nuclease (S_ase) | Lyses human cells with saponin, digests DNA. | 55.8-fold increase |

| Filtering + Nuclease (F_ase) | Filters host cells, digests DNA. | 65.6-fold increase |

| Commercial Kit (K_zym) | Combined lysis and digestion. | 100.3-fold increase |

| Nuclease Only (R_ase) | Digests free DNA only. | 16.2-fold increase |

These methods can significantly increase microbial read counts but may also introduce taxonomic biases and reduce total bacterial DNA biomass, so selection requires balancing efficiency and fidelity [19].

Troubleshooting Guides

Problem: Low Library Yield After Preparation

Potential Causes and Solutions:

- Cause: Input DNA is damaged or fragmented.

- Solution: For sheared DNA, use a Covaris instrument for controlled fragmentation. For FFPE-derived or other damaged DNA, use a DNA repair mix prior to library prep [33].

- Cause: Inefficient bead-based cleanup.

- Solution: Ensure SPRI beads are fully resuspended and do not dry out before elution. After the final ethanol wash, perform a quick spin and carefully remove all residual ethanol with a fine pipette tip to prevent inhibition [33].

- Cause: Adapters are denatured.

- Solution: When diluting adapters, always use 10 mM Tris-HCl (pH 7.5-8.0) with 10 mM NaCl and keep them on ice during use [33].

- Cause: Insufficient mixing during enzymatic steps.

- Solution: Mix samples thoroughly by pipetting up and down 10 times, ensuring the tip remains in the liquid to avoid bubble formation [33].

Problem: Over-amplification and PCR Bias in the Final Library

Potential Causes and Solutions:

- Cause: Too many PCR cycles.

- Solution: Reduce the number of PCR cycles. Start with the kit's recommendation and titrate downwards. Once PCR primers are depleted, libraries become over-amplified, leading to single-stranded fragments, heteroduplexes, and compromised data quality [33].

- Cause: Too much input DNA into the PCR.

- Solution: If you cannot further reduce PCR cycles, use only a fraction of the ligated library as PCR input or introduce a size selection step to narrow the input size range [33].

- General Consideration: Over-amplification enriches short fragments, which leads to an inaccurate representation of the sample and potential clustering biases on the sequencer [33].
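When titrating cycles downwards, a back-of-the-envelope estimate can give a starting point. The sketch below assumes idealized exponential amplification with a fixed per-cycle efficiency; the function name, the default efficiency of 0.9, and the example masses are illustrative assumptions, not values from [33]. Always defer to the kit's recommendation and empirical titration.

```python
import math


def estimate_pcr_cycles(input_ng: float, target_ng: float,
                        efficiency: float = 0.9) -> int:
    """Smallest cycle count n such that input * (1 + efficiency)**n >= target.

    Assumes every cycle amplifies with the same efficiency, which real
    reactions only approximate before primers and dNTPs become limiting.
    """
    if input_ng <= 0 or target_ng <= 0:
        raise ValueError("input_ng and target_ng must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    if target_ng <= input_ng:
        return 0  # no amplification needed
    return math.ceil(math.log(target_ng / input_ng) / math.log(1 + efficiency))


# Hypothetical example: 1 ng of amplifiable ligated library, 1000 ng target yield.
print(estimate_pcr_cycles(1.0, 1000.0))
```

Because only adaptor-ligated molecules amplify, the amplifiable input is usually lower than the measured DNA mass, so the estimate is a floor to titrate around rather than a prescription.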

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Kits for Low-Input Workflows

| Item | Function | Example Use Case |
|---|---|---|
| Magnetic Beads (e.g., SPRI beads) | Size-selective purification and cleanup of nucleic acids. | Post-ligation cleanup; PCR product purification. A 0.9x ratio selects against adaptor dimers [33]. |
| DNA Repair Mix | Enzymatically reverses damage in DNA (e.g., nicks, deaminated bases). | Repair of DNA from FFPE or ancient samples prior to library construction [33]. |
| Carrier RNA | Enhances precipitation and recovery of trace nucleic acids during purification. | Added to magnetic bead solutions to improve yield from sub-nanogram DNA inputs [32]. |
| Ribonuclease (RNase) A | Degrades RNA to prevent it from co-purifying with DNA and interfering with quantification. | Standard step in DNA extraction protocols to ensure pure DNA samples. |
| Proteinase K | A broad-spectrum serine protease that digests proteins and inactivates nucleases. | Enzymatic lysis of tissues and cells during DNA extraction, especially useful for gentle lysis of low-input samples [32]. |
| Host Depletion Kits (e.g., QIAamp DNA Microbiome Kit) | Selectively lyse mammalian cells and digest host DNA, enriching for intact microbial cells. | Processing bronchoalveolar lavage fluid (BALF) or milk samples to increase the proportion of microbial sequencing reads [19] [35]. |
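The bead ratio mentioned in the table is simply the bead volume divided by the sample volume. A minimal sketch of that arithmetic follows; the function name and the 50 µL example volume are hypothetical.

```python
def spri_bead_volume_ul(sample_volume_ul: float, ratio: float = 0.9) -> float:
    """Bead suspension volume (µL) needed for a given bead:sample ratio."""
    if sample_volume_ul <= 0 or ratio <= 0:
        raise ValueError("sample volume and ratio must be positive")
    return sample_volume_ul * ratio


# Hypothetical 50 µL ligation reaction cleaned up at a 0.9x ratio.
print(spri_bead_volume_ul(50.0, 0.9))
```

Measure the actual sample volume before adding beads, since evaporation or carry-over changes the effective ratio and therefore the size cutoff.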

Experimental Workflow: From Sample to Sequence

The following diagram illustrates the optimized end-to-end workflow for handling low-input and challenging samples, incorporating key troubleshooting and optimization points from the FAQs.

Diagram Title: Low-Input Sample Processing Workflow

Frequently Asked Questions (FAQs)

FAQ 1: For a study focused on low microbial load samples (like urine or BALF), which method is more suitable, full-length 16S rRNA sequencing or shotgun metagenomics, and what specific precautions are necessary?

Low microbial load samples are particularly challenging because host DNA dominates the extract and reagent-derived contamination carries proportionally more weight. Both methods require host depletion and careful experimental design.

- Shotgun Metagenomics is highly susceptible to being overwhelmed by host DNA. Implementing a robust host depletion method (e.g., saponin lysis with nuclease digestion) is critical to increase microbial read yield. For urine samples, a volume of ≥3.0 mL is recommended for consistent profiling [36].

- Full-Length 16S rRNA Sequencing can be a more cost-effective initial approach, especially when combined with spike-in internal controls to enable absolute quantification of bacterial load, which is crucial for clinical diagnostics [37].

Regardless of the method, the inclusion of negative controls is non-negotiable to identify kit and laboratory-derived contaminants [36].

FAQ 2: We are getting a high percentage of host reads in our shotgun metagenomic data from respiratory samples. What can we do?

This is a common issue. Several pre-extraction host depletion methods can significantly improve microbial read yield:

- Saponin lysis followed by nuclease digestion (S_ase) and the HostZERO Microbial DNA Kit (K_zym) have shown the highest efficiency in removing host DNA from bronchoalveolar lavage fluid (BALF), reducing host DNA by up to four orders of magnitude [19].

- A newer method, filtration through a 10 μm filter followed by nuclease digestion (F_ase), also shows balanced performance, effectively increasing microbial reads while maintaining good bacterial DNA retention [19].

All host depletion methods can introduce some taxonomic bias, so the choice of method should be validated for your specific sample type and research question.

FAQ 3: Can full-length 16S rRNA sequencing with Nanopore provide species-level resolution for gut microbiome studies?

Yes, a key advantage of full-length 16S sequencing is its improved taxonomic resolution. Studies evaluating the Emu classification tool on Nanopore data have shown that it performs well at providing genus- and species-level resolution [37]. Furthermore, comparative analyses indicate that Oxford Nanopore-based 16S sequencing can capture a broader range of taxa than Illumina-based partial 16S sequencing [38]. This makes it a powerful tool for detailed compositional profiling.

FAQ 4: How does primer choice impact 16S rRNA sequencing results, and can it affect the detection of significant differences between experimental groups?

Primer selection has a critical influence on the taxa detected. Different primer combinations can preferentially amplify specific bacterial groups, meaning some taxa might be detected by one primer set and missed by another [38]. Despite these variations in the taxa detected, the key microbial shifts induced by experimental conditions remained detectable in the comparison reported in [38]: significant differences between control and treatment groups were found regardless of primer choice, which supports the robustness of the method for differential analysis.

FAQ 5: When is it justified to use the more expensive shotgun metagenomics approach over 16S rRNA sequencing?

Shotgun metagenomics is justified when your research objectives extend beyond taxonomic profiling to include:

- Functional Potential: Identifying genes involved in metabolic pathways, antibiotic resistance, or virulence [39] [40].

- Strain-Level Analysis: Tracking specific strains within a community, which is crucial for understanding transmission or functional differences [40] [41].

- Discovery of Less Abundant Taxa: When a sufficient sequencing depth is achieved (>500,000 reads), shotgun sequencing has superior power to identify and quantify low-abundance genera that 16S sequencing may miss. These less abundant taxa can be biologically meaningful and able to discriminate between experimental conditions [39].

Comparison of Sequencing Methods

The table below summarizes the core characteristics of each method to guide your selection.

| Feature | Full-Length 16S rRNA Sequencing | Shotgun Metagenomics |
|---|---|---|
| Core Principle | Targeted amplification and sequencing of the entire 16S rRNA gene [37]. | Random sequencing of all DNA fragments in a sample [39]. |
| Taxonomic Resolution | High (species-level), especially with full-length gene [37] [38]. | Very High (species to strain-level) [38] [40]. |
| Functional Insights | Limited to inference from taxonomy. | Directly profiles functional genes, pathways, and ARGs [39] [40]. |
| Best for Low Biomass | More cost-effective for initial surveys; requires spike-in controls for quantification [37]. | Possible with intensive host depletion; high sequencing depth needed [19] [36]. |
| Relative Cost | Lower [39] | Higher |
| Key Limitations | Primer bias affects taxa detection [38]; limited functional data. | High host DNA can overwhelm signal [19]; higher cost and computational load. |
| Ideal Use Case | Cost-effective taxonomic profiling; projects requiring high sample throughput; absolute quantification with spike-ins [37]. | Studies requiring functional gene content; strain-level tracking; discovering low-abundance or non-bacterial members [39] [40]. |

Troubleshooting Common Experimental Issues

Issue 1: Low Detection of Microbial Reads in Shotgun Metagenomics

Problem: Your sequencing output is dominated by host reads, making microbial community analysis difficult.

Solution: Implement an effective host DNA depletion protocol. The following workflow outlines an optimized method for respiratory samples, which can be adapted for other high-host-content samples [19].

Diagram Title: Host Depletion Workflow for Shotgun Sequencing

Additional Tips:

- For urine samples, using the QIAamp DNA Microbiome Kit has been shown to effectively deplete host DNA while maximizing microbial diversity and MAG recovery [36].

- Always quantify host and bacterial DNA loads before and after depletion using qPCR to assess method efficiency [19].
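The before-and-after qPCR comparison in the last tip can be summarized with a few ratios. The sketch below is one possible way to do that; the function name, the choice of metrics, and the example quantities are assumptions for illustration and are not the calculations used in [19].

```python
import math


def depletion_metrics(host_before: float, host_after: float,
                      bacterial_before: float, bacterial_after: float) -> dict:
    """Summarize host depletion efficiency from qPCR quantities.

    All four inputs must share one unit (e.g., gene copies or ng per sample).
    """
    if host_before <= 0 or bacterial_before <= 0:
        raise ValueError("pre-depletion quantities must be positive")
    if host_after < 0 or bacterial_after < 0:
        raise ValueError("post-depletion quantities cannot be negative")
    log10_reduction = (math.inf if host_after == 0
                       else math.log10(host_before / host_after))
    return {
        "host_removed_pct": 100.0 * (1.0 - host_after / host_before),
        "host_log10_reduction": log10_reduction,
        "bacterial_retention_pct": 100.0 * bacterial_after / bacterial_before,
    }


# Hypothetical qPCR results (copies per sample).
metrics = depletion_metrics(host_before=1e6, host_after=1e2,
                            bacterial_before=1e4, bacterial_after=4e3)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Reporting bacterial retention alongside host removal makes the trade-off between efficiency and fidelity explicit for each method.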

Issue 2: Inconsistent Profiling in Low Microbial Biomass Samples

Problem: Microbial community profiles are unstable or dominated by contaminants.

Solution: Standardize sample volume and implement stringent contamination controls.

- Standardize Input: For urine microbiome studies, using a volume of ≥3.0 mL leads to the most consistent community profiles [36].

- Use Controls: Include negative controls (no-sample blanks) throughout your workflow (extraction to sequencing). Use these with bioinformatic tools like decontam (prevalence-based method) to identify and remove contaminant sequences from your data [36].
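To show the idea behind prevalence-based contaminant screening, the sketch below flags taxa that are detected more often in negative controls than in true samples. It is a deliberately simplified heuristic, not the statistical test implemented in the decontam R package, and the function name, taxa, and presence calls are hypothetical.

```python
def flag_contaminants(presence: dict[str, list[bool]],
                      is_control: list[bool]) -> list[str]:
    """Return taxa whose prevalence in negative controls exceeds that in samples.

    presence maps each taxon to per-library detection calls, in the same
    order as is_control (True marks a negative-control library).
    """
    n_controls = sum(is_control)
    n_samples = len(is_control) - n_controls
    if n_controls == 0 or n_samples == 0:
        raise ValueError("need at least one control and one true sample")

    flagged = []
    for taxon, calls in presence.items():
        if len(calls) != len(is_control):
            raise ValueError(f"length mismatch for taxon {taxon!r}")
        control_prev = sum(c for c, ctrl in zip(calls, is_control) if ctrl) / n_controls
        sample_prev = sum(c for c, ctrl in zip(calls, is_control) if not ctrl) / n_samples
        if control_prev > sample_prev:
            flagged.append(taxon)
    return flagged


# Hypothetical data: four true samples followed by two extraction blanks.
is_control = [False, False, False, False, True, True]
presence = {
    "Ralstonia": [True, False, False, False, True, True],
    "Prevotella": [True, True, True, False, False, False],
}
print(flag_contaminants(presence, is_control))  # ['Ralstonia']
```

With only a handful of blanks, a simple comparison like this is noisy; a proper statistical test and more negative controls give more defensible calls.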

Issue 3: Choosing Primers and Managing Bias in 16S rRNA Sequencing

Problem: Uncertainty about which 16S primers to use and concern about bias.

Solution:

- Primer Selection: Acknowledge that all primer sets introduce some bias. If using short-read platforms, research primer sets (e.g., V3-V4) that best cover your taxa of interest [38].

- Move to Full-Length: Whenever possible, opt for full-length 16S rRNA gene sequencing (e.g., using Oxford Nanopore). This avoids the bias associated with amplifying only specific variable regions and provides superior taxonomic resolution [37] [41].

- Spike-in Controls: To move from relative to absolute abundance, incorporate a known quantity of synthetic or foreign microbial cells (spike-in control) during DNA extraction. This allows for the estimation of absolute microbial load in the original sample, which is particularly valuable for clinical diagnostics [37].
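The spike-in calculation described in the last point can be sketched as follows. The example assumes that read counts scale linearly with cell numbers and ignores differences in 16S copy number and lysis efficiency; the function name, taxon labels, and counts are hypothetical.

```python
def absolute_abundance(read_counts: dict[str, int], spike_taxon: str,
                       spike_cells_added: float) -> dict[str, float]:
    """Convert read counts to estimated cell counts using a spike-in.

    Each read is assumed to represent spike_cells_added / spike_reads cells.
    """
    spike_reads = read_counts.get(spike_taxon, 0)
    if spike_reads <= 0:
        raise ValueError("no spike-in reads recovered; cannot scale counts")
    cells_per_read = spike_cells_added / spike_reads
    return {taxon: reads * cells_per_read
            for taxon, reads in read_counts.items()
            if taxon != spike_taxon}


# Hypothetical library: 10,000 spike-in cells added before extraction.
counts = {"spike_in": 1_000, "Taxon_A": 5_000, "Taxon_B": 500}
estimates = absolute_abundance(counts, "spike_in", 10_000)
print(estimates)                # {'Taxon_A': 50000.0, 'Taxon_B': 5000.0}
print(sum(estimates.values()))  # estimated total load: 55000.0
```

Dividing the estimates by the processed sample volume gives a load per mL that can be compared across samples.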

Research Reagent Solutions for Method Optimization

The table below lists key reagents and kits mentioned in recent literature for optimizing microbiome studies, particularly in challenging sample types.

| Reagent/Kit | Function | Application Context |
|---|---|---|
| ZymoBIOMICS Spike-in Control I | Internal control for absolute quantification [37]. | Added to samples before DNA extraction to estimate absolute bacterial load in full-length 16S sequencing [37]. |
| HostZERO Microbial DNA Kit (K_zym) | Pre-extraction host DNA depletion [19] [36]. | Effective for high-host-content samples like BALF and urine [19] [36]. |
| QIAamp DNA Microbiome Kit (K_qia) | Pre-extraction host DNA depletion [19] [36]. | Effective for BALF and urine; showed high bacterial retention in oropharyngeal (OP) samples [19] [36]. |
| Saponin + Nuclease (S_ase) | Host cell lysis and DNA degradation [19]. | A highly effective, non-kit method for host depletion in respiratory samples [19]. |
| Mock Community Standards (e.g., ZymoBIOMICS) | Defined microbial mixtures for protocol validation [37]. | Used to optimize PCR conditions, DNA input, and benchmark bioinformatic pipelines for accuracy [37]. |
| Propidium Monoazide (PMA) | Photoreactive dye that covalently modifies free DNA and DNA in membrane-compromised cells, blocking its amplification [36]. | Can be used in host depletion protocols (O_pma) to reduce background noise [19] [36]. |

Incorporating Internal Controls and Spike-Ins for Absolute Quantification

Frequently Asked Questions

1. What is the fundamental difference between using spike-in controls and traditional normalization methods like RPM?

Traditional methods like Reads Per Million (RPM) assume the total population of small RNAs remains constant between samples. However, in many biologically relevant scenarios, such as cancer patient plasma or during developmental transitions, this global amount can shift dramatically. Normalizing by total reads in these cases can obscure genuine biological changes. Spike-in controls, being synthetic oligonucleotides added at a known concentration before library preparation, provide an external, invariant baseline. This allows for the correction of technical variation and enables absolute quantification of molecules, moving beyond relative comparisons [42].

2. My microbial samples have extremely high host DNA background. Can spike-in or control strategies help with this?

Yes. For metagenomic sequencing (mNGS) of samples with high host background, such as blood or bronchoalveolar lavage fluid (BALF), host depletion methods are a critical form of control. These are pre-processing steps designed to remove host DNA, thereby enriching the microbial signal. A recent study showed that methods like saponin lysis with nuclease digestion (S_ase) or commercial kits like the HostZERO Microbial DNA Kit (K_zym) can reduce host DNA by over 99.9%, leading to a more than 50-fold increase in microbial reads for BALF samples. This significantly improves the sensitivity and diagnostic yield for pathogen detection [20] [19].