Optimizing Library Preparation for Metagenomic Sequencing: A Comprehensive Guide for Robust Microbial Profiling

Abstract

Metagenomic next-generation sequencing (mNGS) is revolutionizing microbial community analysis and infectious disease diagnostics by enabling unbiased detection of pathogens. However, the accuracy and reliability of results are profoundly influenced by the library preparation workflow. This article provides a comprehensive guide for researchers and drug development professionals, covering foundational principles, methodological choices, and advanced optimization strategies. We synthesize current evidence to address key challenges, including host DNA depletion, input material selection, and kit bias, while offering practical troubleshooting and comparative performance data from recent clinical and environmental studies. The goal is to empower scientists with the knowledge to design robust, reproducible metagenomic studies that yield high-quality, clinically actionable data.

Core Principles and Impact of Library Preparation on Metagenomic Data Quality

In metagenomic sequencing research, library preparation constitutes the critical suite of molecular biology techniques that transform raw, extracted nucleic acids from complex samples into sequencing-ready formats. This process encodes the sample's genetic material with all necessary platform-specific motifs, enabling the subsequent detection of nucleotide sequences. The fidelity, efficiency, and quantitative accuracy of this preparatory bridge profoundly influence all downstream data, from taxonomic classification to functional characterisation of microbial communities [1]. The choice of methodology is particularly consequential in metagenomics, where the goal is to comprehensively capture the genomic diversity of a sample without introducing technical artefacts that could bias biological interpretation. As such, defining and optimising library preparation is a cornerstone of robust metagenomic research.

A Comparative Analysis of Library Preparation Methods

The selection of a library preparation method involves trade-offs between input requirements, bias, yield, and time efficiency. Systematic comparisons using defined samples provide critical guidance for selecting the most appropriate protocol.

Comparison of RNA Library Preparation Kits for Metatranscriptomics

A simplified benchmark using total RNA from four microbial species (Escherichia coli, Acinetobacter baylyi, Lactococcus lactis, and Bacillus subtilis) evaluated four cDNA synthesis and Illumina library preparation protocols: TruSeq Stranded Total RNA (TS), SMARTer Stranded RNA-Seq (SMART), Ovation RNA-Seq V2 (OV), and Encore Complete Prokaryotic RNA-Seq (ENC). Significant variations in organism representation and gene expression patterns were observed [1].

Table 1: Performance Comparison of RNA-Seq Library Preparation Methods [1]

| Method | Minimum Input Requirement | rRNA Depletion Required? | Key Synthesis Principle | Stranded? | Performance Summary |
|---|---|---|---|---|---|
| TruSeq Stranded (TS) | 100 ng depleted RNA | Yes | Random priming after RNA fragmentation | Yes | Generally best performance; limited by high input requirement. |
| SMARTer Stranded (SMART) | 1 ng depleted RNA | Yes | Random priming after RNA fragmentation | Yes | Best compromise for low input RNA; reliable quantitative results. |
| Ovation RNA-Seq V2 (OV) | 0.5 ng depleted RNA | Yes | Random and oligo(dT) priming with linear amplification | No | Only option for very low input; observed biases limit quantitative use. |
| Encore Complete (ENC) | 100 ng total RNA | No | Selective priming with decreased rRNA affinity | Yes | No prior depletion needed; uses bespoke adaptor ligation. |

The study concluded that the TruSeq method generally performed best but required hundreds of nanograms of total RNA. The SMARTer method was the best solution for lower amounts of input RNA, while the Ovation system, despite its utility for ultra-low inputs, introduced significant biases that limited its utility for quantitative analyses [1].

Comparison of DNA Library Preparation Kits for Illumina Sequencing

A separate systematic study compared nine commercial DNA library preparation kits using the same DNA sample (barcoded amplicons from phiX174) and a droplet digital PCR (ddPCR) assay to quantify efficiency at each protocol step [2]. The kits compared were NEBNext and NEBNext Ultra (New England Biolabs), SureSelectXT (Agilent), TruSeq Nano and TruSeq DNA PCR-free (Illumina), Accel-NGS 1S and Accel-NGS 2S (Swift Biosciences), and KAPA Hyper and KAPA HyperPlus (KAPA Biosystems).

The study revealed important variations in overall library preparation efficiencies, with kits that combined several steps into a single one exhibiting final yields 4 to 7 times higher than others. The most critical step, adaptor ligation, showed yield variations of more than a factor of 10 between kits. Some ligation efficiencies were so low they could impair the original library complexity. The anticorrelation observed between ligation and PCR yields means that a low ligation efficiency can be masked by a high-yield PCR amplification step, which itself can introduce bias and reduce complexity [2].
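The masking effect described above can be illustrated with simple arithmetic. The sketch below is a simplification, not the study's ddPCR analysis: it assumes PCR doubles the library perfectly at every cycle, and the input molecule count, target yield, and function names are hypothetical. Only the two ligation efficiencies (~100% and ~3.5%) come from the reported data.

```python
import math


def unique_molecules_after_ligation(input_molecules: int, ligation_efficiency: float) -> int:
    """Distinct input molecules that carry adapters after the ligation step."""
    return round(input_molecules * ligation_efficiency)


def pcr_cycles_to_reach(target_molecules: float, ligated_molecules: int) -> int:
    """PCR cycles needed to reach a target yield, assuming perfect doubling per cycle."""
    if ligated_molecules <= 0:
        raise ValueError("no ligated molecules to amplify")
    if ligated_molecules >= target_molecules:
        return 0
    return math.ceil(math.log2(target_molecules / ligated_molecules))


INPUT_MOLECULES = 1_000_000  # hypothetical number of fragments entering ligation
TARGET_YIELD = 1e9           # hypothetical number of molecules wanted after PCR

for label, efficiency in [("~100% ligation", 1.0), ("~3.5% ligation", 0.035)]:
    unique = unique_molecules_after_ligation(INPUT_MOLECULES, efficiency)
    cycles = pcr_cycles_to_reach(TARGET_YIELD, unique)
    print(f"{label}: {unique} unique molecules, {cycles} PCR cycles to reach target")
```

Both libraries reach the same final yield, but the low-efficiency one does so from far fewer unique molecules and with more cycles, which is why final yield alone is a poor proxy for library complexity.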

Table 2: Selected DNA Library Kit Preparation Efficiencies [2]

| Kit Name | Ligation Efficiency | Notable Protocol Features | Impact on Library |
|---|---|---|---|
| KAPA HyperPlus | ~100% | Combined steps; fragmentase treatment. | Preserves sample heterogeneity. |
| NEBNext Ultra | ~3.5% | Combined end-repair and A-tailing. | Very low ligation yield. |
| Illumina TruSeq Nano | 15-40% | Classical multi-step protocol. | Moderate efficiency. |
| TruSeq DNA PCR-free | N/A (Adaptors contain P5/P7) | No PCR step; stringent clean-ups. | Requires high input (1 μg). |

Automation in Library Preparation

Automation using liquid handling robotics presents a solution for enhancing throughput, reproducibility, and accuracy. A 2025 study compared manual and automated library preparation for Oxford Nanopore Technologies (ONT) long-read sequencing of environmental soil samples [3]. The findings demonstrated that automated preparation, while leading to a minor reduction in read and contig lengths, resulted in a slightly higher taxonomic classification rate and alpha diversity, including the detection of more rare taxa. Crucially, no significant difference in microbial community structure was identified between manual and automated libraries, validating automation for high-throughput applications where reproducibility and efficiency are paramount [3].

Detailed Experimental Protocols

This section outlines specific wet-lab methodologies as described in the comparative studies.

Application: Metatranscriptomic library preparation from microbial total RNA.

Key Materials:

- Total RNA (from pure cultures or mixed community).

- Ribosomal RNA depletion kit (e.g., Ribo-Zero).

- Selected library prep kit (see Table 1).

- Magnetic beads for clean-up (e.g., SPRI).

- PCR cycler.

- Bioanalyzer or TapeStation for quality control.

Methodology:

- RNA Depletion: Perform ribosomal RNA depletion on total RNA according to the depletion kit's instructions. This step is crucial for all methods except the Encore Complete system.

- cDNA Synthesis & Library Build: Follow the specific protocol for the chosen kit, noting fundamental differences:

  - TruSeq & SMARTer: Fragment depleted RNA using divalent cations + heat or heat alone, respectively. Synthesise cDNA using random primers.

  - Ovation RNA-Seq V2: Synthesise cDNA from depleted RNA using a mix of random and oligo(dT) primers, followed by a linear amplification step.

  - Encore Complete: Proceed directly from total RNA using selective primers designed to have decreased affinity for rRNA sequences.

- Adapter Ligation & Indexing: Ligate platform-specific adapters. For most kits, this includes index sequences for sample multiplexing.

- Library Amplification & Clean-up: Amplify the adapter-ligated DNA via PCR for a kit-dependent number of cycles. Perform final clean-up using magnetic beads to purify the sequencing-ready library.

- Quality Control: Quantify the final library using a fluorometric method (e.g., Qubit) and assess size distribution using a Bioanalyzer.

Application: High-throughput preparation of ONT sequencing libraries from environmental DNA.

Key Materials:

- Genomic DNA (1 μg input recommended).

- ONT Ligation Sequencing Kit (e.g., SQK-LSK114).

- ONT PCR Barcoding Expansion 96 (EXP-PBC096).

- Bravo Automated Liquid Handling Platform (Agilent) or equivalent.

- PCR cycler.

Methodology:

- DNA Normalisation: Normalise all DNA samples to a uniform concentration (e.g., 1 μg in a standard volume) using ultra-pure water.

- Automated Setup: Transfer normalised DNA samples to a 96-well plate compatible with the liquid handling robot.

- Automated Library Construction: Execute the ONT Ligation Sequencing Kit protocol on the Bravo platform. The process typically includes:

  - DNA Repair and A-tailing.

  - Adapter Ligation: Ligation of barcoded adapters from the PCR Barcoding Expansion kit.

  - Purification Steps: Bead-based clean-ups between major steps. (Note: A potential limitation is the lack of simultaneous temperature control and shaking during bead elution, which may reduce long fragment recovery).

- Pooling: Following automated preparation, pool barcoded libraries in equimolar ratios based on quantification.

- Sequencing: Load the pooled library onto a primed ONT flow cell (e.g., R10.4.1 PromethION) for sequencing.
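The equimolar pooling step can be sketched as a short calculation. This is an illustration under stated assumptions, not part of the published protocol: it uses the common approximation of about 660 g/mol per base pair of double-stranded DNA, and the barcode names, concentrations, fragment lengths, and per-library amount are hypothetical.

```python
def library_molarity_nM(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Convert a dsDNA concentration (ng/uL) to nM, assuming ~660 g/mol per base pair."""
    return conc_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)


def equimolar_pool_volumes(libraries: dict, fmol_per_library: float) -> dict:
    """Volume (uL) of each library that contributes the same number of femtomoles.

    `libraries` maps a library name to (concentration in ng/uL, mean fragment length in bp).
    """
    volumes = {}
    for name, (conc, length_bp) in libraries.items():
        molarity = library_molarity_nM(conc, length_bp)  # 1 nM equals 1 fmol/uL
        volumes[name] = fmol_per_library / molarity
    return volumes


# Hypothetical quantification results for two barcoded libraries.
libraries = {
    "barcode01": (6.6, 10_000),
    "barcode02": (13.2, 10_000),
}
for name, volume in equimolar_pool_volumes(libraries, fmol_per_library=10.0).items():
    print(f"{name}: pool {volume:.2f} uL")
```

Because molarity depends on fragment length as well as mass concentration, libraries with very different size distributions need a length estimate from the QC step before pooling.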

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Library Preparation Workflows

| Item | Function | Example Kits & Reagents |
|---|---|---|
| Magnetic Beads | Purification and size selection of nucleic acids after various enzymatic reactions. | SPRIselect beads, SparQ beads, MagBio HighPrep beads. |
| rRNA Depletion Kits | Reduces the abundant ribosomal RNA fraction in total RNA samples to enrich for mRNA. | Illumina Ribo-Zero Plus, QIAseq FastSelect, NEBNext rRNA Depletion. |
| Ultra II DNA Library Prep Kit | A widely used kit for Illumina sequencing based on the classical end-repair, A-tailing, and ligation workflow. | NEBNext Ultra II DNA Library Prep Kit (New England Biolabs) [4]. |
| Ligation Sequencing Kit | The standard kit for preparing genomic DNA or metagenomic samples for sequencing on Oxford Nanopore platforms. | ONT Ligation Sequencing Kit (e.g., SQK-LSK114) [3]. |
| PCR Barcoding Kit | Provides barcoded adapters for multiplexing samples in a single sequencing run, essential for high-throughput studies. | ONT PCR Barcoding Expansion 96 (EXP-PBC096) [3]. |
| DIY Library Prep Reagents | Low-cost, non-proprietary reagents for constructing sequencing libraries, ideal for scaling and cost-sensitive projects. | Santa Cruz Reaction (SCR) reagents [4]. |

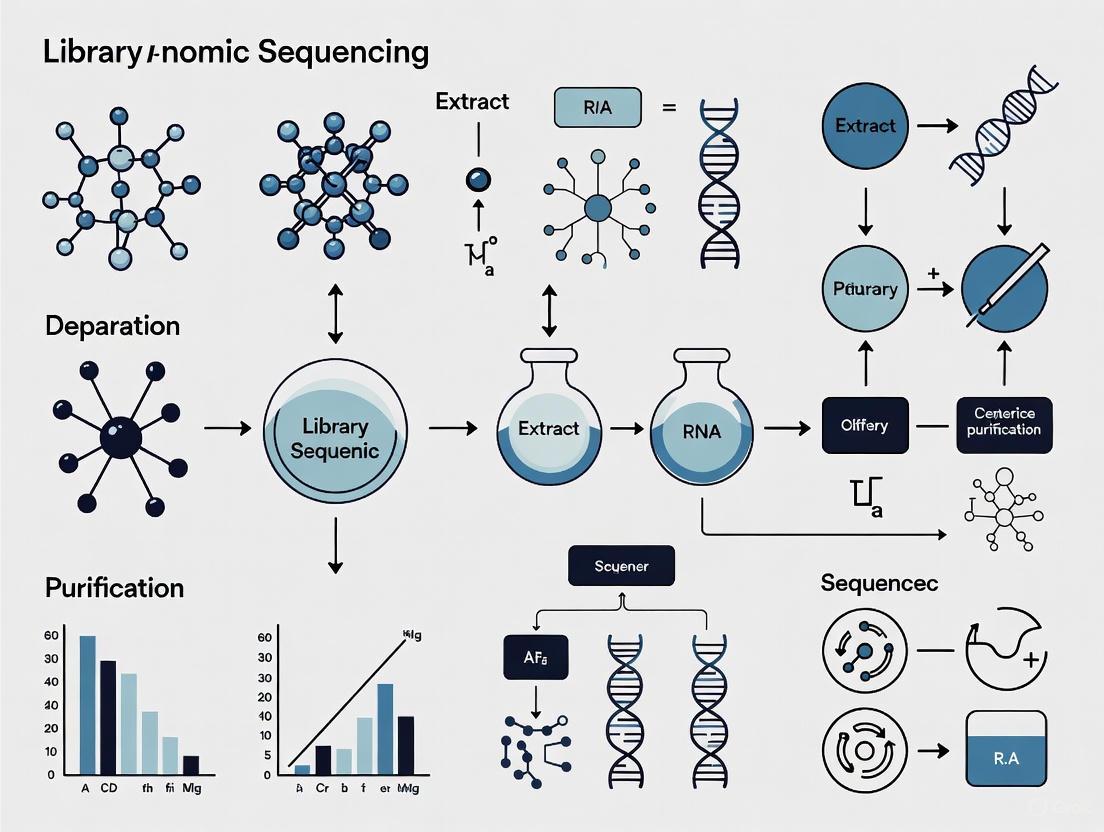

Workflow Visualization of Key Library Preparation Paths

The following diagram synthesises the core pathways for preparing metagenomic and metatranscriptomic libraries, highlighting critical decision points related to sample type, input, and methodology.

Diagram 1: Library Preparation Decision Workflow. This chart outlines the primary pathways for constructing sequencing libraries from DNA and RNA, with key decision points based on sample input, throughput needs, and the requirement for long-read or PCR-free data.

Within metagenomic sequencing research, the journey from a raw biological sample to a sequenced library is a critical determinant of data quality and reliability. This process, encompassing nucleic acid extraction through adapter ligation, constitutes the foundational wet-lab phase of any metagenomic study. The specific choices made during this preparatory stage can profoundly influence downstream analyses, including the detection of low-abundance taxa, the accuracy of taxonomic profiling, and the identification of functional potential within a microbial community [5]. In the context of ancient oral microbiome research, for instance, the selection of DNA extraction and library construction methods has been shown to significantly impact the recovery of endogenous DNA, microbial community composition, and the assessment of DNA damage patterns [5]. This application note details the core protocols and strategic considerations for these key workflow components, providing a structured guide for researchers aiming to optimize their metagenomic sequencing projects.

Nucleic Acid Extraction

The initial step in any metagenomic workflow is the liberation and purification of nucleic acids from the complex matrix of the sample, which can range from soil and water to human-associated biofilms like dental calculus.

Core Principles and Sample Considerations

The primary goal of extraction is to obtain pure, high-quality DNA or RNA that is representative of the entire microbial community present, while simultaneously removing substances that can inhibit downstream enzymatic reactions (e.g., humic acids, pigments, or calcium phosphates) [6] [5]. The quality of the extracted nucleic acids is intrinsically linked to the quality and preservation of the starting material. Fresh or appropriately frozen samples are always recommended, though this is not always feasible with archaeological or clinical samples [6].

The physical and chemical nature of the sample dictates the stringency of the lysis conditions required. Dense, mineralized matrices like dental calculus necessitate rigorous lysis buffers containing ethylenediaminetetraacetic acid (EDTA) to chelate calcium and destabilize the structure, alongside prolonged digestion with proteinase K to effectively release encapsulated DNA [5].

Method Comparison and Selection

Two silica-based extraction methods, optimized for recovering short, degraded DNA fragments, are commonly used in challenging metagenomic contexts such as ancient DNA research [5].

Table 1: Comparison of DNA Extraction Methods for Challenging Samples

| Feature | QG Method [5] | PB Method [5] |
|---|---|---|
| Core Principle | Silica-based purification with a binding buffer containing guanidinium thiocyanate. | Silica-based purification with a binding buffer of sodium acetate, isopropanol, and guanidinium hydrochloride. |
| Key Advantage | Effective DNA release and minimization of PCR inhibitors. | Enhanced recovery of ultra-short DNA fragments (<50 bp). |
| Typical Input | Standard to low input samples. | Ideal for highly degraded or low-biomass samples. |
| Considerations | May under-recover the shortest DNA fragments. | Particularly suited for ancient metagenomic or forensic applications. |

No single extraction method consistently outperforms another across all sample types and preservation states. The effectiveness of a protocol often depends on the specific sample context, and researchers must weigh factors such as expected DNA fragment length, sample age, and the presence of co-extracted inhibitors when selecting a method [5].

Library Construction: Fragmentation to Adapter Ligation

Following extraction, purified DNA must be converted into a sequencing-compatible format, known as a library. This process involves several standardized steps to prepare the DNA for the sequencing platform.

DNA Fragmentation

Short-read sequencing technologies perform best with DNA fragments that fall within a narrow, defined size range (e.g., 200-600 bp) [7] [8]. The choice of fragmentation method can influence coverage uniformity and sequence bias.

Table 2: Comparison of DNA Fragmentation Methods

| Method | Mechanism | Advantages | Disadvantages |
|---|---|---|---|
| Physical Shearing (e.g., Acoustic) [7] [8] | Uses physical force (e.g., acoustics) to break DNA. | Minimal sequence bias; reproducible and uniform size distributions. | Requires specialized equipment (e.g., Covaris); potential for sample loss during handling. |
| Enzymatic Fragmentation [7] [8] | Uses enzymes (e.g., nucleases) to digest DNA. | Quick, cost-effective, and easily automated; suitable for low-input samples. | Potential for sequence-specific bias (e.g., GC bias); sensitive to reaction conditions. |
| Tagmentation [8] | Uses a transposase enzyme to simultaneously fragment DNA and attach adapter sequences. | Rapid and efficient; combines two steps into one, reducing hands-on time and sample loss. | Introduces sequence bias; optimization of enzyme-to-DNA ratio is critical. |

End Repair and A-Tailing

Fragmentation produces ends that are often incompatible with adapter ligation. The end-repair and A-tailing steps convert these heterogeneous ends into a uniform, ligation-ready format [8].

- End Repair: This process uses a combination of enzymes, typically T4 DNA polymerase and T4 polynucleotide kinase (PNK), to "blunt" the ends of the DNA fragments. The polymerase fills in 5' overhangs and chews back 3' overhangs, while the kinase phosphorylates the 5' ends, which is essential for subsequent ligation [8].

- A-Tailing: Following blunting, a single adenine (A) base is added to the 3' end of each fragment using an enzyme like Taq DNA polymerase. This creates a complementary overhang for ligation with thymine (T)-overhanging adapters, a strategy that minimizes fragment-to-fragment self-ligation and promotes correct adapter binding [7] [8].

Adapter Ligation

Adapter ligation is the final critical step, where short, double-stranded oligonucleotides are covalently attached to the prepared DNA fragments [6] [7]. These adapters are multifunctional, containing:

- Flow Cell Binding Sequences: Essential for attaching the library fragments to the sequencing surface (e.g., Illumina flow cells).

- Barcodes/Indexes: Short, unique DNA sequences that allow multiple libraries to be pooled and sequenced simultaneously (multiplexing) and later bioinformatically separated [6] [7].

- Unique Molecular Identifiers (UMIs): Random sequences used to tag individual molecules prior to amplification, enabling the bioinformatic correction of PCR duplicates and improving variant calling accuracy [7].

The ligation reaction is typically catalyzed by a DNA ligase enzyme, such as T4 DNA Ligase, which forms a phosphodiester bond between the fragment and the adapter [7] [8]. After ligation, a cleanup step is essential to remove excess adapters, adapter dimers, and enzyme buffers, which can interfere with sequencing efficiency [8].
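How UMIs support duplicate removal can be shown with a minimal sketch. It assumes reads have already been aligned and that each read record carries its contig, start position, and UMI; the field names and example reads are hypothetical. It uses exact UMI matching only, whereas dedicated tools also tolerate sequencing errors within the UMI.

```python
from collections import defaultdict


def deduplicate_by_umi(reads):
    """Collapse reads that share contig, start position, and UMI into one representative.

    Reads with the same position but different UMIs are kept, because they
    derive from distinct original molecules rather than from PCR duplication.
    """
    groups = defaultdict(list)
    for read in reads:
        key = (read["contig"], read["start"], read["umi"])
        groups[key].append(read)
    # Keep the first read seen in each group as its representative.
    return [members[0] for members in groups.values()]


# Hypothetical aligned reads: r1 and r2 are PCR duplicates of one molecule.
reads = [
    {"id": "r1", "contig": "contig_7", "start": 120, "umi": "ACGTAC"},
    {"id": "r2", "contig": "contig_7", "start": 120, "umi": "ACGTAC"},
    {"id": "r3", "contig": "contig_7", "start": 120, "umi": "TTGACA"},
    {"id": "r4", "contig": "contig_9", "start": 55, "umi": "ACGTAC"},
]
unique_reads = deduplicate_by_umi(reads)
print([read["id"] for read in unique_reads])
```

Without the UMI, r1, r2, and r3 would all look like duplicates of one another, and a genuine second molecule (r3) would be discarded.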

Diagram 1: NGS Library Prep Workflow. This diagram outlines the key steps in preparing a next-generation sequencing library, from DNA fragmentation to the final adapter-ligated product.

The Scientist's Toolkit: Essential Reagents and Materials

Successful library preparation relies on a suite of specialized reagents and kits. The following table details key solutions used in the featured workflows.

Table 3: Key Research Reagent Solutions for NGS Library Preparation

| Item | Function | Application Notes |
|---|---|---|
| Proteinase K [5] | Digests proteins and degrades nucleases, facilitating DNA release from complex samples. | Critical for tough matrices like dental calculus; used with EDTA in lysis buffer. |
| Silica-based Binding Buffers (QG, PB) [5] | Enable purification and concentration of nucleic acids by binding them to a silica membrane/matrix in the presence of chaotropic salts. | Different formulations (e.g., QG vs. PB) optimize recovery of DNA across a range of fragment sizes. |
| T4 DNA Polymerase & T4 PNK [8] | Work in concert during end-repair to create blunt, phosphorylated ends on DNA fragments. | Essential for generating ends compatible with adapter ligation. |
| Taq DNA Polymerase [8] | Adds a single 'A' nucleotide to the 3' end of blunted DNA fragments (A-tailing). | Creates a complementary overhang for T-overhang adapters, guiding correct ligation. |
| T4 DNA Ligase [7] [8] | Catalyzes the formation of a phosphodiester bond between the DNA fragment and the adapter. | High-efficiency ligation is crucial for maximizing library yield and complexity. |
| Specialized Library Prep Kits (e.g., xGen kits) [7] | Provide optimized, pre-tested reagent mixes for a specific library prep method (e.g., ligation-based). | Streamline workflow, improve reproducibility, and reduce hands-on time. |

Metagenomic sequencing has revolutionized our ability to study complex microbial communities without the need for cultivation. However, the accuracy of these analyses is critically dependent on the quality of the library preparation process. Three major technical biases—host DNA contamination, GC content bias, and external DNA contamination—can severely skew results, leading to inaccurate biological interpretations. These challenges are particularly pronounced in low-biomass samples and clinical specimens, where microbial signals may be overwhelmed by non-target DNA. This application note examines the sources and impacts of these biases within the context of metagenomic library preparation and provides detailed protocols for their mitigation, enabling more reliable and reproducible research outcomes.

Host DNA Contamination: Impact and Depletion Strategies

The Challenge of Host DNA in Metagenomic Sequencing

Host DNA constitutes a major impediment to effective metagenomic sequencing, particularly in samples derived from host-associated environments. In respiratory samples like bronchoalveolar lavage (BAL) fluid, host DNA content can exceed 99.7%, while even nasal swabs average 94.1% host DNA [9]. This overwhelming presence of host genetic material drastically reduces the effective sequencing depth for microbial communities, limiting sensitivity for detecting low-abundance species and increasing sequencing costs substantially.

The impact of host DNA on taxonomic profiling is quantifiable and severe. Studies have demonstrated that increasing proportions of host DNA lead to decreased sensitivity in detecting both very low and low-abundant bacterial species [10]. When host DNA reaches 90% of a sample, even substantial sequencing efforts may fail to detect a significant number of microbial species present in the community. This effect is particularly problematic for clinical diagnostics where missing low-abundance pathogens could have significant implications for patient care.
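The effect of host DNA on effective sequencing depth follows directly from the host fractions quoted above. The sketch below is a back-of-the-envelope illustration: the run size of 20 million reads and the target of 1 million microbial reads are hypothetical, and it assumes reads are drawn in proportion to DNA content.

```python
def microbial_reads(total_reads: float, host_fraction: float) -> float:
    """Reads expected to derive from microbes for a given host DNA fraction."""
    return total_reads * (1.0 - host_fraction)


def total_reads_needed(target_microbial_reads: float, host_fraction: float) -> float:
    """Total reads required to obtain a target number of microbial reads."""
    if not 0.0 <= host_fraction < 1.0:
        raise ValueError("host_fraction must be in [0, 1)")
    return target_microbial_reads / (1.0 - host_fraction)


TOTAL_READS = 20_000_000  # hypothetical run size per sample
for sample, host_fraction in [("BAL fluid", 0.997), ("nasal swab", 0.941), ("90% host", 0.90)]:
    usable = microbial_reads(TOTAL_READS, host_fraction)
    needed = total_reads_needed(1_000_000, host_fraction)
    print(f"{sample}: ~{usable:,.0f} microbial reads; "
          f"~{needed:,.0f} total reads for 1M microbial reads")
```

At 99.7% host DNA, only about 3 reads in every 1,000 are microbial, so even a modest reduction in host fraction changes the usable depth substantially.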

Host DNA Depletion Methods: A Comparative Analysis

Multiple host depletion strategies have been developed, falling into two primary categories: pre-extraction methods that selectively lyse host cells before DNA isolation, and post-extraction methods that enrich for microbial DNA based on sequence characteristics. The performance of these methods varies significantly across sample types.

Table 1: Comparison of Host DNA Depletion Methods for Respiratory Samples

| Method | Mechanism | BAL Fluid (% Host DNA Reduction) | Nasal Swabs (% Host DNA Reduction) | Sputum (% Host DNA Reduction) | Bacterial DNA Retention |
|---|---|---|---|---|---|
| HostZERO | Pre-extraction: Selective lysis | 18.3% | 73.6% | 45.5% | Moderate |
| MolYsis | Pre-extraction: Selective lysis | 17.7% | 57.1% | 69.6% | Moderate |
| QIAamp Microbiome | Pre-extraction: Selective lysis | 13.5% | 75.4% | 22.5% | High |
| Benzonase | Pre-extraction: Enzyme-based | 10.8% | Not significant | 19.8% | Variable |
| lyPMA | Pre-extraction: Osmotic lysis + PMA | 5.7% | 41.1% | 18.3% | Low |
| S_ase | Pre-extraction: Saponin lysis + nuclease | ~99.99%* | - | - | Moderate |
| Microbiome Enrichment Kit | Post-extraction: Methylation-based | Poor performance for respiratory samples [11] | - | - | - |

*Data derived from different studies; direct comparisons should be made with caution [11] [9].

The efficacy of host depletion methods varies considerably across sample types. For BAL fluid with extremely high host DNA content (>99%), even the most effective methods typically reduce host DNA by less than 20% [9]. In contrast, for nasal swabs with lower initial host DNA levels (~94%), methods like QIAamp and HostZERO can reduce host DNA by roughly 74-75% [9]. This highlights the importance of matching depletion strategies to specific sample characteristics.

Detailed Protocol: Host Depletion Using Pre-extraction Methods

Principle: Selective lysis of mammalian cells followed by degradation of released DNA, while intact microbial cells remain protected by their cell walls.

Reagents Required:

- Saponin solution (0.025-0.5%)

- DNase I or Benzonase endonuclease

- DNase digestion buffer

- EDTA (for enzyme inactivation)

- Phosphate-buffered saline (PBS)

- Microbial DNA-free water

Procedure:

- Sample Preparation: Centrifuge 500 μL to 1 mL of sample at low speed (500-1000 × g) to pellet host cells while leaving microbial cells in suspension.

- Host Cell Lysis: Resuspend pellet in 200 μL of saponin solution (0.025% for respiratory samples). Vortex thoroughly and incubate at room temperature for 15 minutes.

- DNase Treatment: Add 5 μL of Benzonase endonuclease or DNase I to the lysate. Include Mg²⁺ in the reaction buffer for enzyme activity.

- Digestion: Incubate at 37°C for 30 minutes with occasional mixing to digest released host DNA.

- Enzyme Inactivation: Add EDTA to a final concentration of 5 mM and incubate at 65°C for 10 minutes.

- Microbial Cell Collection: Centrifuge at high speed (10,000 × g) for 10 minutes to pellet microbial cells.

- DNA Extraction: Proceed with standard microbial DNA extraction protocols on the pellet.

Validation: Quantify host DNA depletion using qPCR targeting single-copy host genes (e.g., β-actin) and compare to microbial gene targets (e.g., 16S rRNA genes) [11].
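The qPCR validation can be turned into numbers with the standard relationship between cycle threshold (Ct) shifts and template abundance. The sketch below assumes perfect doubling per cycle (amplification factor 2.0) and identical input volumes before and after depletion; the Ct values and function names are hypothetical.

```python
def fold_reduction(ct_untreated: float, ct_depleted: float, amplification: float = 2.0) -> float:
    """Fold decrease in template implied by a Ct shift (later Ct means less template)."""
    return amplification ** (ct_depleted - ct_untreated)


def percent_removed(ct_untreated: float, ct_depleted: float, amplification: float = 2.0) -> float:
    """Percentage of the target removed by the treatment."""
    return 100.0 * (1.0 - 1.0 / fold_reduction(ct_untreated, ct_depleted, amplification))


def percent_retained(ct_untreated: float, ct_depleted: float, amplification: float = 2.0) -> float:
    """Percentage of the target remaining after the treatment."""
    return 100.0 / fold_reduction(ct_untreated, ct_depleted, amplification)


# Hypothetical Ct values: host gene shifts by 10 cycles, 16S rRNA gene by 1 cycle.
host_removed = percent_removed(ct_untreated=20.0, ct_depleted=30.0)
bacteria_kept = percent_retained(ct_untreated=25.0, ct_depleted=26.0)
print(f"Host DNA removed: {host_removed:.2f}%")
print(f"Bacterial DNA retained: {bacteria_kept:.1f}%")
```

Reporting both values together matters, because a method that removes most host DNA but also loses most bacterial DNA gives little net benefit.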

GC Content Bias: Mechanisms and Correction Methods

Understanding GC Bias in Sequencing Data

GC content bias refers to the dependence between fragment count (read coverage) and GC content observed in Illumina sequencing data [12]. This bias presents as a unimodal relationship, where both GC-rich and AT-rich fragments are underrepresented in sequencing results, with optimal representation typically occurring at moderate GC content levels. This pattern can dominate the biological signal in analyses that focus on measuring fragment abundance within a genome, such as copy number estimation or comparative metagenomics.

The bias manifests differently across samples and is not consistent between experiments, making it challenging to develop universal correction methods. Research has demonstrated that it is the GC content of the full DNA fragment, not just the sequenced portion, that primarily influences fragment count [12]. This finding has important implications for library preparation and data analysis approaches.

Impact of GC Bias on Metagenomic Analyses

GC bias can substantially distort microbial community representations in metagenomic studies. Species with GC contents at the extremes of the distribution may be systematically underdetected, leading to:

- Inaccurate estimation of microbial relative abundances

- False negatives for potentially important community members

- Distorted functional predictions based on skewed taxonomic assignments

- Reduced comparability between studies using different sequencing protocols

The effect is particularly problematic when comparing communities across different samples or treatments, where technical bias may be confounded with biological signals of interest.

Detailed Protocol: Computational Correction of GC Bias

Principle: Model the relationship between observed read coverage and GC content, then normalize coverage based on this relationship to remove technical bias.

Software Requirements:

- R programming environment

- BEADS algorithm or similar GC correction tool

- BAM files from aligned sequencing data

- Reference genome or metagenome assembly

Procedure:

- GC Content Calculation: Compute GC content for sliding windows across the reference or for each contig in a metagenome assembly. Window size should approximate average fragment length.

- Read Coverage Calculation: Calculate read depth for each genomic window using tools like bedtools or custom scripts.

- GC-Coverage Relationship Modeling: Fit a unimodal curve to describe the relationship between GC content and read coverage. The BEADS algorithm uses the following approach:

  - Model expected coverage as a function of GC content using local regression

  - Account for fragment length effects if paired-end data available

  - Generate normalization factors for each GC value

- Coverage Normalization: Adjust raw coverage values by applying GC-specific normalization factors.

- Validation: Assess correction efficacy by examining the relationship between normalized coverage and GC content, which should appear flat after successful correction.

Considerations: GC correction methods work best for high-coverage datasets and may be challenging to apply directly to complex metagenomic samples with heterogeneous GC contents across numerous microbial genomes [12].
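The normalization idea in the procedure above can be sketched in a few lines. This is not the BEADS algorithm: for brevity it replaces local regression with a per-bin median, ignores fragment length, and works on precomputed (GC fraction, coverage) pairs. The function names, bin width, and example windows are hypothetical.

```python
from collections import defaultdict
from statistics import median


def gc_fraction(sequence: str) -> float:
    """Fraction of G and C among unambiguous bases (A, C, G, T) in a window."""
    sequence = sequence.upper()
    called = sum(sequence.count(base) for base in "ACGT")
    if called == 0:
        return 0.0
    return (sequence.count("G") + sequence.count("C")) / called


def gc_normalize(windows, bin_width: float = 0.05):
    """Rescale coverage so every GC bin has the same median as the whole dataset.

    `windows` is a list of (gc_fraction, coverage) pairs, one per window or contig.
    """
    overall = median(cov for _, cov in windows)
    bins = defaultdict(list)
    for gc, cov in windows:
        bins[int(gc / bin_width)].append(cov)
    corrected = []
    for gc, cov in windows:
        expected = median(bins[int(gc / bin_width)])
        # Normalization factor for this GC bin; leave zero-coverage bins untouched.
        factor = overall / expected if expected > 0 else 1.0
        corrected.append(cov * factor)
    return corrected


# Hypothetical windows showing the unimodal pattern: extremes are under-covered.
windows = [(0.32, 10), (0.33, 10), (0.51, 20), (0.52, 20), (0.71, 5), (0.72, 5)]
print(gc_normalize(windows))
```

After correction, coverage plotted against GC content should be approximately flat, which is the validation criterion given in the procedure. In a real metagenome, true abundance differences between genomes are confounded with GC content, so a per-bin rescaling like this one can remove biological signal as well as bias.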

Diagram 1: GC Bias Correction Workflow - This workflow outlines the computational process for identifying and correcting GC content bias in sequencing data.

Environmental and Reagent Contamination: Identification and Elimination

Contamination in metagenomic studies originates from multiple sources, including laboratory reagents, sampling equipment, personnel, and the laboratory environment itself. The impact of contamination is inversely proportional to sample microbial biomass—low-biomass samples such as fetal tissues, blood, and certain environmental samples are particularly vulnerable [13]. In these samples, contaminating DNA can comprise the majority of sequences obtained, potentially leading to spurious conclusions about community composition.

The controversial debate surrounding the existence of a placental microbiome exemplifies the critical importance of proper contamination control [13] [14]. Early reports of placental bacteria were later challenged by studies demonstrating that signal intensities in placental samples were indistinguishable from negative controls, highlighting how contamination can misdirect entire research fields.

Strategies for Contamination Prevention and Identification

Effective contamination management requires a multi-faceted approach addressing all stages from sample collection to data analysis:

Prevention During Sample Collection:

- Use single-use, DNA-free collection equipment

- Decontaminate reusable equipment with ethanol followed by a DNA-degrading treatment (e.g., bleach or UV irradiation)

- Implement appropriate personal protective equipment (PPE) to minimize operator-derived contamination

- Include field blanks and sampling controls to identify environmental contaminants [13]

Laboratory Processing Controls:

- Process negative controls (reagent-only blanks) alongside experimental samples

- Use clean room facilities for low-biomass samples when possible

- Employ unique dual indices and unique molecular identifiers (UMIs) to identify cross-contamination [15]

Bioinformatic Identification:

- Utilize tools like decontam that implement statistical classification based on contaminant patterns [14]

- Apply frequency-based methods that exploit the inverse correlation between contaminant frequency and sample DNA concentration

- Use prevalence-based methods that identify sequences more common in negative controls than true samples

Detailed Protocol: Statistical Contaminant Identification with Decontam

Principle: Leverage the statistical properties of contaminants—specifically, their higher prevalence in low-DNA samples and negative controls—to distinguish them from true sample-derived sequences.

Software and Data Requirements:

- R programming environment with decontam package installed

- Feature table (ASV, OTU, or species table)

- Sample metadata including DNA concentrations

- Negative control sequencing data (optional but recommended)

Frequency-Based Method (Requires DNA Concentration Data):

- Data Preparation: Import feature table and sample metadata containing quantitation data.

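- Contaminant Identification: Run decontam's isContaminant function with method = "frequency", supplying the per-sample DNA concentrations from the metadata.

- Result Interpretation: decontam assigns each feature a score between 0 and 1; features scoring below the chosen threshold (0.1 by default) are classified as contaminants.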

- Table Filtering: Remove contaminant features from downstream analyses.
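
The reasoning behind the frequency-based method can be sketched in a few lines of Python. This is not decontam's implementation (decontam is an R package); it only compares how well two simple models fit one feature's relative abundances on a log scale: a contaminant model, in which frequency falls in inverse proportion to DNA concentration, and a non-contaminant model, in which frequency is independent of concentration. The function names, the example values, and the bare comparison of residuals are illustrative assumptions.

```python
import math
from statistics import mean


def frequency_model_residuals(frequencies, concentrations):
    """Return (ss_contaminant, ss_non_contaminant) for one feature.

    frequencies:    relative abundance of the feature in each sample
    concentrations: total DNA concentration of each sample (same order)
    Samples in which the feature is absent (frequency 0) are skipped.
    """
    log_f, log_c = [], []
    for f, c in zip(frequencies, concentrations):
        if f > 0 and c > 0:
            log_f.append(math.log(f))
            log_c.append(math.log(c))
    if len(log_f) < 2:
        raise ValueError("need at least two samples containing the feature")

    # Non-contaminant model: log(f) = a, i.e. slope 0 against log(conc).
    a = mean(log_f)
    ss_non = sum((lf - a) ** 2 for lf in log_f)

    # Contaminant model: log(f) = b - log(conc), i.e. slope -1.
    b = mean([lf + lc for lf, lc in zip(log_f, log_c)])
    ss_con = sum((lf + lc - b) ** 2 for lf, lc in zip(log_f, log_c))
    return ss_con, ss_non


def looks_like_contaminant(frequencies, concentrations):
    """True when the inverse-proportional model fits better than the flat one."""
    ss_con, ss_non = frequency_model_residuals(frequencies, concentrations)
    return ss_con < ss_non


# Hypothetical data: DNA concentrations (ng/uL) for five samples.
conc = [0.5, 1.0, 2.0, 5.0, 10.0]
reagent_taxon = [0.02 / c for c in conc]        # dilutes out as DNA increases
genuine_taxon = [0.10, 0.12, 0.09, 0.11, 0.10]  # roughly constant

print(looks_like_contaminant(reagent_taxon, conc))
print(looks_like_contaminant(genuine_taxon, conc))
```

A real analysis should rely on decontam's own statistical score rather than this raw comparison, which ignores sample size and noise.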

Prevalence-Based Method (Uses Negative Controls):

- Data Preparation: Include negative control samples in the feature table.

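- Contaminant Identification: Run isContaminant with method = "prevalence", indicating which samples in the table are negative controls.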

- Validation: Compare contaminant classifications with known contaminant taxa databases.
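
As a rough illustration of the prevalence idea, the sketch below asks how surprising it would be to detect a feature in so many negative controls if its presence were unrelated to sample type, using a one-sided Fisher's exact test written with the standard library. It is a simplified stand-in rather than decontam's scoring procedure, and the function name and counts are hypothetical.

```python
from math import comb


def prevalence_p_value(present_in_controls, n_controls,
                       present_in_samples, n_samples):
    """One-sided Fisher's exact test on a presence/absence table.

    Returns the probability of observing at least `present_in_controls`
    detections among the negative controls if detections were spread
    at random across controls and true samples.
    """
    total = n_controls + n_samples
    total_present = present_in_controls + present_in_samples
    denominator = comb(total, total_present)
    upper = min(n_controls, total_present)
    p = 0.0
    for k in range(present_in_controls, upper + 1):
        # k detections fall in controls, the remainder in true samples.
        p += comb(n_controls, k) * comb(n_samples, total_present - k) / denominator
    return p


# Hypothetical counts: 5 negative controls and 20 true samples.
likely_contaminant = prevalence_p_value(5, 5, 1, 20)   # in every control
likely_genuine = prevalence_p_value(0, 5, 18, 20)      # never in controls
print(likely_contaminant, likely_genuine)
```

A small value indicates a feature that is over-represented in negative controls and therefore a candidate contaminant.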

Combined Approach: For maximum sensitivity, apply both methods independently and treat features identified by either method as contaminants [14].

Table 2: Common Contaminant Genera in Metagenomic Studies and Their Sources

| Contaminant Genus | Frequency of Detection | Primary Source | Recommended Handling |

|---|---|---|---|

| Cutibacterium acnes | Detected in 100% of plasma and urine samples [15] | Human skin, laboratory reagents | Remove with decontam or SIFT-seq |

| Pseudomonas | Common in multiple studies [14] | Water systems, laboratory surfaces | Include in negative controls |

| Bradyrhizobium | Common in soil studies [14] | Laboratory reagents | Statistical identification and removal |

| Methylobacterium | Frequent in low-biomass studies [14] | Laboratory water, plastics | Monitor via negative controls |

| Staphylococcus | Variable across studies | Human skin, cross-contamination | Careful interpretation in host-associated studies |

Integrated Workflow for Comprehensive Bias Control

A Unified Approach to Bias Mitigation

Effective management of the three major biases in metagenomic sequencing requires an integrated approach spanning experimental design, laboratory processing, and bioinformatic analysis. The following workflow provides a comprehensive strategy for minimizing these technical artifacts:

Experimental Design Phase:

- Determine sample biomass levels to assess contamination risk

- Select appropriate host depletion methods based on sample type

- Plan for sufficient sequencing depth to account for host DNA dilution

- Include appropriate controls (negative, positive, and sampling controls)

Laboratory Processing Phase:

- Implement selected host depletion protocol before DNA extraction

- Use contamination-aware techniques (sterile equipment, PPE, reagent screening)

- Employ unique dual indices to track cross-contamination

- Quantitate DNA to support frequency-based contaminant identification

Bioinformatic Analysis Phase:

- Apply GC bias correction to read coverage data

- Implement statistical contaminant identification (decontam)

- Remove identified contaminants from feature tables

- Validate results through comparison with negative controls
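
To make the GC bias correction step more concrete, here is a minimal sketch that rescales coverage so that every GC bin has the same mean coverage. It is a plain bin-normalisation illustration, not the BEADS algorithm or any published tool; the window representation, the number of bins, and the function names are assumptions made for the example.

```python
from collections import defaultdict


def gc_bin(gc_fraction, n_bins):
    """Map a GC fraction in [0, 1] to a bin index in [0, n_bins - 1]."""
    return min(int(gc_fraction * n_bins), n_bins - 1)


def correct_gc_bias(window_gc, window_coverage, n_bins=10):
    """Rescale per-window coverage by overall mean / mean of its GC bin."""
    if len(window_gc) != len(window_coverage) or not window_gc:
        raise ValueError("inputs must be non-empty and of equal length")

    overall_mean = sum(window_coverage) / len(window_coverage)
    totals = defaultdict(float)
    counts = defaultdict(int)
    for gc, cov in zip(window_gc, window_coverage):
        b = gc_bin(gc, n_bins)
        totals[b] += cov
        counts[b] += 1

    corrected = []
    for gc, cov in zip(window_gc, window_coverage):
        b = gc_bin(gc, n_bins)
        bin_mean = totals[b] / counts[b]
        # Leave windows untouched if their bin has no coverage at all.
        factor = overall_mean / bin_mean if bin_mean > 0 else 1.0
        corrected.append(cov * factor)
    return corrected


# Hypothetical windows: GC-rich windows are over-covered two-fold.
gc_values = [0.25, 0.25, 0.65, 0.65]
coverage = [10.0, 10.0, 20.0, 20.0]
print(correct_gc_bias(gc_values, coverage))
```

In a metagenome, the correction would normally be fitted per genome or per contig set, because true abundance differences between taxa must not be mistaken for GC bias.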

Advanced Method: SIFT-Seq for Contamination-Resistant Sequencing

Sample-Intrinsic microbial DNA Found by Tagging and sequencing (SIFT-seq) represents a novel approach that proactively labels sample-intrinsic DNA before library preparation, allowing bioinformatic identification and removal of contaminating DNA introduced during processing [15].

Principle: Chemical tagging of DNA in the original sample before DNA isolation, enabling distinction between true sample DNA and contaminants based on the presence of the tag.

Protocol Overview:

- DNA Tagging: Treat raw sample with bisulfite to convert unmethylated cytosines to uracils directly in the sample matrix.

- Library Preparation: Proceed with standard metagenomic library preparation.

- Bioinformatic Filtering: Identify and retain only sequences showing the bisulfite conversion pattern, indicating they were present in the original sample.
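
The filtering idea can be sketched as a per-read check for cytosine depletion. After bisulfite treatment, reads derived from tagged DNA should contain almost no C (or almost no G when the read represents the complementary strand), whereas contaminant DNA introduced later keeps its normal base composition. The threshold and function names below are hypothetical, and the published SIFT-seq pipeline may apply different criteria.

```python
def depleted_base_fraction(read):
    """Return the smaller of the C and G fractions among called bases."""
    bases = [b for b in read.upper() if b in "ACGT"]
    if not bases:
        return None
    c_fraction = bases.count("C") / len(bases)
    g_fraction = bases.count("G") / len(bases)
    return min(c_fraction, g_fraction)


def is_sample_intrinsic(read, max_fraction=0.02):
    """Treat a read as tagged if C (or G) is nearly absent.

    max_fraction is an illustrative cut-off, not a validated value.
    """
    fraction = depleted_base_fraction(read)
    return fraction is not None and fraction <= max_fraction


converted_read = "ATTGTTAGGTTTAGATTGTA"    # no cytosines remain
unconverted_read = "ACGTCCGATCGGCTAACGTC"  # normal base composition

print(is_sample_intrinsic(converted_read))
print(is_sample_intrinsic(unconverted_read))
```

Methylated cytosines resist conversion, so a strict zero-cytosine rule would discard some genuine reads; this is one reason a tolerance is used here.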

Performance: SIFT-seq reduces contaminant reads by up to three orders of magnitude and, for specific contaminants such as Cutibacterium acnes, removes them completely from 62 of 196 clinical samples tested [15].

Diagram 2: Integrated Bias Mitigation Workflow - A comprehensive approach addressing multiple biases throughout the metagenomic sequencing pipeline.

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Addressing Metagenomic Biases

| Reagent/Kit | Primary Function | Application Context | Performance Considerations |

|---|---|---|---|

| HostZERO Microbial DNA Kit | Host DNA depletion | Respiratory samples, tissues | High host depletion for nasal swabs (73.6% reduction) |

| QIAamp DNA Microbiome Kit | Host DNA depletion | Various sample types | High bacterial retention (21% in OP samples) |

| Nextera XT DNA Library Prep Kit | Library preparation | Low-input metagenomic samples | Integrated tagmentation, low input requirements (1ng) |

| NEBNext Microbiome DNA Enrichment Kit | Methylation-based enrichment | Samples with differential methylation | Poor performance for respiratory samples |

| Benzonase Nuclease | Host DNA degradation | Pre-extraction protocols | Requires optimization for different sample types |

| Saponin | Selective host cell lysis | Pre-extraction protocols | Effective at low concentrations (0.025%) |

| Unique Dual Indices | Cross-contamination tracking | All metagenomic studies | Essential for identifying index hopping |

| Decontam R Package | Statistical contaminant identification | All metagenomic studies | Frequency and prevalence-based methods |

| BEADS Algorithm | GC bias correction | DNA-seq, metagenomics | Models unimodal GC-coverage relationship |

Host DNA contamination, GC content bias, and environmental contamination represent three major technical challenges that can severely compromise metagenomic sequencing results. Through implementation of appropriate host depletion strategies, computational correction methods, and rigorous contamination control protocols, researchers can substantially improve the accuracy and reliability of their microbial community analyses. The protocols and comparative data presented here provide a practical framework for addressing these biases across diverse sample types and research applications. As metagenomic sequencing continues to expand into increasingly challenging sample matrices, particularly in clinical diagnostics where low-biomass samples are common, robust bias mitigation strategies will become ever more critical for generating biologically meaningful results.

Within metagenomic next-generation sequencing (mNGS), the choice of nucleic acid source is a pivotal first step that fundamentally influences the profiling of a microbial community. The two principal pathways are whole-cell DNA (wcDNA), which extracts genomic material from intact microorganisms, and cell-free DNA (cfDNA), which targets short, extracellular DNA fragments freely circulating in body fluids or sample supernatants [16] [17]. This decision carries significant weight for researchers and drug development professionals, as it directly impacts the sensitivity, specificity, and representativeness of the results in the context of library preparation. The optimal choice is highly dependent on the sample type, the target pathogens, and the specific clinical or research question. This application note provides a structured comparison of these two pathways, supported by recent quantitative data, detailed experimental protocols, and visualization to guide this critical methodological choice.

Comparative Performance Analysis

Recent clinical studies have directly compared the effectiveness of wcDNA and cfDNA mNGS across various sample types, revealing distinct performance profiles. The table below summarizes key quantitative findings from comparative studies on body fluid and bronchoalveolar lavage fluid (BALF) samples.

Table 1: Comparative Performance of wcDNA mNGS and cfDNA mNGS in Clinical Studies

| Metric | Sample Type | wcDNA mNGS Performance | cfDNA mNGS Performance | Reference & Context |

|---|---|---|---|---|

| Host DNA Proportion | Clinical Body Fluids | Mean: 84% [16] | Mean: 95% (p < 0.05) [16] | PMC11934473 |

| Concordance with Culture | Clinical Body Fluids | 63.33% (19/30 samples) [16] | 46.67% (14/30 samples) [16] | PMC11934473 |

| Sensitivity (vs. Culture) | Body Fluid Samples | 74.07% [16] | Not Reported | PMC11934473 |

| Specificity (vs. Culture) | Body Fluid Samples | 56.34% [16] | Not Reported | PMC11934473 |

| Diagnostic Performance | BALF (Pulmonary Aspergillosis) | Outperformed conventional tests; inferior to cfDNA in RPM for Aspergillus [17] | Superior reads per million (RPM) for Aspergillus; AUC of 0.779 for predicting infection [17] | Frontiers in Cellular and Infection Microbiology, 2024 |

| Consistency with 16S NGS | Clinical Body Fluids | 70.7% (29/41 samples) [16] | Not Reported | PMC11934473 |
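
The percentages in the table follow directly from the reported sample counts. The short sketch below reproduces the concordance and consistency figures and shows how sensitivity and specificity are defined; the true/false positive and negative counts in the last two calls are made-up round numbers for illustration only, since the underlying counts are not given here.

```python
def percent(numerator, denominator):
    """Return numerator / denominator as a percentage rounded to 2 decimals."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return round(100.0 * numerator / denominator, 2)


def sensitivity(true_positives, false_negatives):
    """Share of culture-positive cases that the test also detects."""
    return percent(true_positives, true_positives + false_negatives)


def specificity(true_negatives, false_positives):
    """Share of culture-negative cases that the test also calls negative."""
    return percent(true_negatives, true_negatives + false_positives)


print(percent(19, 30))  # wcDNA concordance with culture
print(percent(14, 30))  # cfDNA concordance with culture
print(percent(29, 41))  # wcDNA consistency with 16S NGS

# Hypothetical counts, for illustration only.
print(sensitivity(true_positives=8, false_negatives=2))
print(specificity(true_negatives=6, false_positives=4))
```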

Experimental Protocols

Protocol A: Dual-Pathway DNA Extraction from Body Fluids

This protocol is adapted from a comparative study on clinical body fluid samples [16].

I. Sample Pre-Processing

- Centrifuge the collected body fluid sample (e.g., pleural, ascites, or drainage fluid) at 20,000 × g for 15 minutes at room temperature.

- Carefully transfer the supernatant to a new tube for cfDNA extraction. The resulting pellet will be used for wcDNA extraction.

II. Cell-Free DNA (cfDNA) Extraction from Supernatant

- Use the VAHTS Free-Circulating DNA Maxi Kit (Vazyme Biotech) or the QIAamp DNA Micro Kit (QIAGEN).

- Add 25 μl of Proteinase K and 800 μl of Buffer L/B to 400 μl of supernatant.

- Add 15 μl of magnetic beads, mix briefly, and incubate at room temperature for 5 minutes.

- Place the tube on a magnetic rack until the solution clears. Carefully remove and discard the supernatant.

- Wash the beads as per the manufacturer's instructions. Elute the extracted cfDNA in 50 μl of elution buffer.

III. Whole-Cell DNA (wcDNA) Extraction from Pellet

- Use a kit designed for microbial DNA, such as the Qiagen DNA Mini Kit or the Mag-Bind Universal Metagenomics Kit (Omega Biotek).

- Add two 3-mm nickel beads to the pellet and shake at 3,000 rpm for 5 minutes to mechanically lyse cells.

- Proceed with the DNA extraction according to the manufacturer's protocol.

- Elute the final wcDNA in 50-100 μl of elution buffer.

IV. Quality Control and Quantification

- Quantify DNA concentration using a fluorometric method (e.g., Qubit Fluorometric Quantitation).

- Assess DNA quality and fragment size via 0.8% Agarose Gel Electrophoresis (AGE).

Protocol B: Library Preparation and Sequencing

I. Library Construction

- Use the VAHTS Universal Pro DNA Library Prep Kit for Illumina (Vazyme) or the KAPA Hyper Prep Kit (KAPA Biosystems), which has been shown to detect a higher number of genes compared to transposase-based methods [18].

- For each sample, use 50–250 ng of input DNA for library preparation. Note that inputs within this range have shown no significant difference in gene detection for shotgun metagenomics [18].

- Follow the manufacturer's protocol for end-repair, adapter ligation, and library amplification.

II. Sequencing

- Sequence the pooled libraries on an Illumina NovaSeq platform using a 2 × 150 bp paired-end configuration.

- Aim for approximately 8 Gb of sequence data (~26 million read pairs at 2 × 150 bp) per sample for comprehensive analysis [16].
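
The relationship between data volume and read count is simple arithmetic. The sketch below assumes that the 8 GB target refers to roughly 8 gigabases of sequence and that each fragment yields two 150 bp reads; under those assumptions the figure of about 26 million corresponds to read pairs. The function name is illustrative.

```python
def read_pairs_for_yield(target_bases, read_length=150, paired=True):
    """Number of fragments (read pairs if paired) needed for a base yield."""
    bases_per_fragment = read_length * (2 if paired else 1)
    return target_bases / bases_per_fragment


pairs = read_pairs_for_yield(8e9)  # 8 gigabases at 2 x 150 bp
print(f"{pairs / 1e6:.1f} million read pairs")
```

The same function can be used to budget depth when a large host DNA fraction is expected: if only a small share of reads is microbial, the total yield must be scaled up accordingly.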

Workflow Visualization

The following diagram illustrates the critical decision points and parallel pathways for wcDNA and cfDNA analysis in mNGS.

Decision Pathway for wcDNA vs. cfDNA mNGS

The Scientist's Toolkit: Essential Research Reagents

The table below lists key reagents and kits critical for implementing the wcDNA and cfDNA pathways.

Table 2: Essential Reagents for wcDNA and cfDNA mNGS Workflows

| Reagent/Kit | Function | Specific Application Note |

|---|---|---|

| VAHTS Free-Circulating DNA Maxi Kit (Vazyme) | Extraction of cell-free DNA from sample supernatants. | Optimized for short-fragment cfDNA; includes magnetic bead-based purification [16]. |

| QIAamp DNA Micro Kit (QIAGEN) | Extraction of DNA from small volumes, suitable for both cfDNA and wcDNA. | Used for extracting cfDNA from BALF supernatant and wcDNA from pellets [17]. |

| Mag-Bind Universal Metagenomics Kit (Omega Biotek) | Extraction of microbial DNA from complex samples. | Demonstrated higher DNA yield and more detected genes compared to other soil-based kits [18] [19]. |

| Qiagen DNeasy PowerSoil Kit | DNA extraction from environmental and challenging clinical samples. | Effective for lysis of difficult-to-break microbial cell walls; includes inhibitor removal [18]. |

| KAPA Hyper Prep Kit (KAPA Biosystems) | DNA library construction for NGS. | Outperformed transposase-based kits in detected gene number and Shannon diversity index [18]. |

| VAHTS Universal Pro DNA Library Prep Kit (Vazyme) | Library preparation for Illumina sequencing. | Used in conjunction with mNGS for pathogen detection in body fluids [16]. |

Concluding Recommendations

The choice between wcDNA and cfDNA is context-dependent. wcDNA mNGS is generally recommended for maximum sensitivity in detecting a broad range of intracellular pathogens, particularly in samples from abdominal and other sterile site infections, despite its compromised specificity which requires careful clinical interpretation [16]. Conversely, cfDNA mNGS is superior for detecting pathogens that release DNA into the surrounding environment, as demonstrated in pulmonary aspergillosis, and is less affected by host DNA interference in certain fluid samples [17]. For the most comprehensive diagnostic picture, especially in critically ill patients, a dual-pathway approach utilizing both wcDNA and cfDNA from a single sample can provide complementary insights that enhance diagnostic precision beyond conventional microbiological tests alone.

In metagenomic sequencing, the quality and interpretability of data are profoundly shaped by the initial library preparation. Three technical metrics are paramount for evaluating library quality and informing downstream analysis: insert size, library complexity, and PCR duplication rates. Insert size refers to the length of the sample DNA fragment that is sequenced, which is a critical parameter influencing assembly and coverage [20]. Library complexity measures the diversity of unique DNA molecules in the library, indicating how well the original microbial community's diversity is represented [21] [22]. PCR duplication rate quantifies the fraction of sequencing reads that are artificial copies from a single original molecule, which can skew abundance estimates [23] [24]. Understanding and controlling these interrelated metrics is essential for generating robust, representative metagenomic data, particularly when dealing with diverse microbial communities of varying biomass.

Foundational Concepts and Their Experimental Measurement

Defining Insert Size and Fragment Size

In paired-end sequencing, the insert is the sample DNA fragment of interest that is sequenced from both ends. The insert size is the length of this fragment in base pairs. The fragment size, a related but distinct term, includes the insert plus the attached adapter sequences on both ends [20]. The selection of an appropriate insert size is a critical experimental design choice. If the insert size is shorter than the combined length of the two sequencing reads, the reads will overlap in the middle, facilitating more accurate sequence assembly. Conversely, if the insert size is longer, an unsequenced inner distance remains [20]. The distribution of insert sizes is not uniform; fragmentation methods produce a range of sizes, and the median of this distribution is typically reported [20].
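
The geometry described above can be expressed as a small calculation. The sketch below takes an insert size and a read length and reports the inner distance, the overlap between the two reads, and how far each read runs past the insert into adapter sequence. The function name and dictionary keys are illustrative choices.

```python
def pair_geometry(insert_size, read_length):
    """Describe how two paired-end reads cover an insert.

    inner_distance > 0 : unsequenced bases between the reads
    inner_distance < 0 : the reads overlap
    adapter_read_through > 0 : each read extends past the insert
    """
    inner = insert_size - 2 * read_length
    # The overlap cannot exceed the insert itself.
    overlap = max(0, min(insert_size, -inner))
    read_through = max(0, read_length - insert_size)
    return {
        "inner_distance": inner,
        "overlap": overlap,
        "adapter_read_through": read_through,
    }


for insert in (110, 240, 366):
    print(insert, pair_geometry(insert, read_length=150))
```

With 2 × 150 bp reads, a 110 bp insert is read in full by both reads and each read continues 40 bp into the adapter, a 240 bp insert gives a 60 bp overlap, and a 366 bp insert leaves 66 bp unsequenced.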

Quantifying Library Complexity

Library complexity describes the number of unique DNA molecules in a sequencing library. A library with high complexity contains a vast diversity of unique fragments, which is vital for achieving uniform coverage across the genome or metagenome and for detecting rare variants. Low-complexity libraries, often resulting from insufficient input material or over-amplification, are dominated by a smaller set of sequences and yield uneven, biased data [22]. In metagenomics, the "complexity" of the biological sample itself (e.g., low-complexity coral microbiome vs. high-complexity soil microbiome) also interacts with library preparation, influencing achievable sequencing depth and duplication rates [21]. Complexity can be estimated bioinformatically using measures of sequence uniqueness and entropy, or by tracking unique molecular identifiers (UMIs) [22].

Understanding and Identifying PCR Duplicates

PCR duplicates are multiple sequencing reads that originate from an identical template DNA molecule due to amplification during the library preparation process [23] [24]. These duplicates do not represent independent biological observations and can lead to false positives in variant calling or inaccurate estimates of microbial abundance if misinterpreted as unique sequences. The frequency of PCR duplicates depends strongly on the amount of starting material and the sequencing depth: lower inputs and higher depths both raise the duplicate rate [24]. Standard bioinformatic tools such as Picard MarkDuplicates or SAMtools rmdup identify duplicates by finding read pairs that align to the exact same genomic start and end positions [23].
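
The dependence of duplication on input and depth can be illustrated with a simple sampling model. The sketch below assumes that a library contains a fixed number of unique molecules and that every read is drawn from them with equal probability; real libraries amplify unevenly, so the numbers are indicative only. The function name and example values are hypothetical.

```python
import math


def expected_duplicate_fraction(unique_molecules, reads_sequenced):
    """Expected fraction of reads that are duplicates under uniform sampling.

    Expected distinct molecules observed = N * (1 - exp(-reads / N)).
    """
    if unique_molecules <= 0 or reads_sequenced <= 0:
        raise ValueError("both arguments must be positive")
    expected_unique = unique_molecules * -math.expm1(-reads_sequenced / unique_molecules)
    return 1.0 - expected_unique / reads_sequenced


# Hypothetical libraries sequenced to 10 million reads.
high_complexity = expected_duplicate_fraction(1e8, 1e7)
low_complexity = expected_duplicate_fraction(1e6, 1e7)
deeper_run = expected_duplicate_fraction(1e8, 2e7)

print(f"{high_complexity:.3f} {low_complexity:.3f} {deeper_run:.3f}")
```

Under this model a library with fewer unique molecules, or the same library sequenced more deeply, always shows a higher duplicate fraction.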

Quantitative Data on Influencing Factors

Impact of Input DNA and Community Type on Key Metrics

Experimental data demonstrate that input DNA quantity and microbial community type significantly influence key library metrics. One systematic assessment of five library preparation methods found that these factors significantly affected median fragment size, library concentration, read GC content, and duplication rate [21]. The duplication rate was especially sensitive to community type, with low-diversity communities (e.g., coral, mock) exhibiting significantly elevated duplication rates compared to more complex communities [21]. Another study on a mock microbial community found that the percentage of reads lost during quality control increased with decreasing input DNA, particularly for the Nextera XT protocol [25].

Table 1: Impact of Input DNA and Community Type on Library Metrics [21] [25]

| Factor | Impact on Library Metrics |

|---|---|

| Input DNA Quantity | Lower inputs can shift GC content towards more GC-rich sequences [25], increase the number of low-quality/unmapped reads [25], and increase the fraction of reads removed during QC for some protocols (e.g., Nextera XT) [25]. |

| Community Complexity | Low-complexity communities (e.g., coral, mock) have significantly elevated sequence duplication rates compared to high-complexity communities (e.g., soil) [21]. |

Comparing Library Preparation Methods

The choice of library preparation method introduces specific biases and performance characteristics. A comparative study of methods including Illumina Nextera DNA Flex, Qiagen QIASeq FX DNA, PerkinElmer NextFlex Rapid DNA-Seq, and seqWell plexWell96 showed that the procedure, community type, and input DNA concentration all interact to influence final library characteristics [21]. Furthermore, the fragmentation method (e.g., mechanical shearing vs. enzymatic tagmentation) significantly impacts the distribution of insert sizes. Nextera XT libraries, which use tagmentation, had a significantly smaller mean insert size (110 bp) compared to methods using mechanical shearing like Mondrian (200 bp) and MALBAC (208 bp) [25].

Table 2: Characteristics and Performance of Different Library Prep Methods [21] [25] [8]

| Method / Characteristic | Fragmentation Approach | Typical Insert Size Bias/Note | Key Finding |

|---|---|---|---|

| Nextera XT / DNA Flex | Enzymatic (Tagmentation) | Smaller mean insert size (e.g., 110 bp) [25]; sensitive to DNA concentration [26]. | Cost-effective; performance comparable to gold-standard for high-complexity communities [21]. |

| Mechanical Shearing (e.g., Covaris) | Physical (Acoustic) | Larger, more tunable insert sizes; more random fragmentation [25] [8]. | Minimal sequence bias; considered robust and reproducible [8]. |

| Other Enzymatic Kits | Enzymatic (Non-Tagmentation) | Varies by kit; modern kits have reduced motif/GC bias [8]. | Automation-friendly and lower equipment cost [8]. |

Experimental Protocols for Measurement and Control

Protocol 1: Measuring Insert Size Without a Reference Genome

Accurately determining insert size is crucial for quality control, especially when a reference genome is unavailable or incomplete, as is common in metagenomics. This protocol uses the tool FLASH to measure insert sizes directly from FASTQ files.

- Principle: For read pairs where the insert size is less than the combined read length, the 3' ends of the reads will overlap. FLASH can merge these overlaps, and the length of the resulting contig equals the insert size [26].

- Procedure:

- Software Installation: Install FLASH (Fast Length Adjustment of SHort reads) from its official repository.

- Command Execution: Run FLASH on your paired-end FASTQ files. A basic command is:

flash read1.fastq read2.fastq -m 10 -M 100 -o output_prefix

- -m sets the minimum overlap length (e.g., 10 bp).

- -M sets the maximum overlap length (e.g., 100 bp).

- Data Extraction: After execution, FLASH will generate a histogram file (output_prefix.hist) containing the distribution of assembled insert sizes.

- Interpretation: The peak of this histogram represents the most common insert size. A broad or multi-peaked distribution may indicate issues with the fragmentation or size selection steps during library prep [26].

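- Considerations: This method reliably identifies fragments with small inserts that are likely to contain adapter sequence, a common issue in Nextera XT libraries [26]. Because it relies on read overlap, it cannot measure inserts longer than the combined read length; such pairs are simply left unmerged.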
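
Once FLASH has produced its histogram, the distribution can be summarised with a short script. The sketch below assumes the .hist file holds two whitespace-separated columns per line (merged length, then count); this layout should be checked against the output of the FLASH version in use. The function names and example values are illustrative.

```python
def parse_length_histogram(text):
    """Parse 'length count' lines into a dict, skipping malformed lines."""
    histogram = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) != 2:
            continue
        try:
            length, count = int(parts[0]), int(parts[1])
        except ValueError:
            continue
        histogram[length] = histogram.get(length, 0) + count
    return histogram


def summarize_insert_sizes(histogram):
    """Return the number of merged pairs plus the modal and median length."""
    total = sum(histogram.values())
    if total == 0:
        raise ValueError("histogram is empty")
    mode = max(histogram, key=histogram.get)
    running = 0
    median = None
    for length in sorted(histogram):
        running += histogram[length]
        if running * 2 >= total:
            median = length
            break
    return {"merged_pairs": total, "mode": mode, "median": median}


# Hypothetical histogram content; in practice read output_prefix.hist.
example = "100\t5\n110\t20\n120\t10\n"
print(summarize_insert_sizes(parse_length_histogram(example)))
```

The summary covers merged pairs only, so it describes the short-insert portion of the library rather than the full insert size distribution.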

Protocol 2: Using UMIs to Accurately Remove PCR Duplicates

Standard duplicate removal based on mapping coordinates can be overly aggressive and biased. This protocol incorporates Unique Molecular Identifiers (UMIs) to distinguish technical duplicates from biologically identical reads.

- Principle: Before amplification, a random nucleotide UMI is ligated to each original cDNA/DNA molecule. Reads that share both the same UMI and the same mapping coordinates are identified as PCR duplicates derived from a single molecule, whereas reads with identical coordinates but different UMIs are kept as independent molecules [24].

- Procedure:

- Adapter Design: Modify standard sequencing adapters to include a random nucleotide UMI (e.g., 5-10 random bases) and a short, fixed "locator" sequence to anchor the UMI during sequencing [24].

- Library Preparation: Proceed with the standard library prep protocol using the UMI-containing adapters.

- Bioinformatic Processing: Use a UMI-aware bioinformatics pipeline (e.g., built with tools like umis or fgbio) to:

- Extract UMIs from read headers.

- Group reads by their UMI and genomic mapping coordinates.

- For each group of reads sharing the same UMI and coordinates, retain a single consensus read to correct for sequencing errors and discard the rest as duplicates [24].

- Considerations: UMI length is critical. For RNA-seq or small RNA-seq of highly abundant molecules, a 10-nt UMI (providing ~1 million unique combinations) may be necessary to ensure every unique molecule gets a unique tag [24].
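
The grouping logic of the protocol can be sketched as follows. The example groups aligned reads by mapping coordinates, optionally adds the UMI to the grouping key, and keeps one representative per group. For brevity it keeps the highest-quality read instead of building a consensus, and the read dictionaries are a hypothetical in-memory representation rather than the data model of umis or fgbio.

```python
from collections import defaultdict


def umi_space(length):
    """Number of distinct UMIs available for a given UMI length."""
    return 4 ** length


def deduplicate(reads, use_umi=True):
    """Keep one read per (chrom, pos, strand[, umi]) group.

    reads: iterable of dicts with keys chrom, pos, strand, umi, qual.
    """
    groups = defaultdict(list)
    for read in reads:
        key = (read["chrom"], read["pos"], read["strand"])
        if use_umi:
            key += (read["umi"],)
        groups[key].append(read)
    # Simplification: retain the highest-quality read of each group.
    return [max(group, key=lambda r: r["qual"]) for group in groups.values()]


# Hypothetical reads: the first two are PCR copies of one molecule,
# the third is a different molecule that maps to the same position.
reads = [
    {"chrom": "contig1", "pos": 100, "strand": "+", "umi": "ACGTA", "qual": 35},
    {"chrom": "contig1", "pos": 100, "strand": "+", "umi": "ACGTA", "qual": 30},
    {"chrom": "contig1", "pos": 100, "strand": "+", "umi": "TTGCA", "qual": 33},
    {"chrom": "contig1", "pos": 250, "strand": "-", "umi": "ACGTA", "qual": 31},
]

print(len(deduplicate(reads, use_umi=True)))   # UMI-aware
print(len(deduplicate(reads, use_umi=False)))  # coordinates only
print(umi_space(10))
```

Coordinate-only deduplication collapses the two distinct molecules at position 100 into one, which is the over-aggressive behaviour that UMIs are meant to avoid.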

Protocol 3: Standard Bioinformatic PCR Duplicate Removal

For libraries prepared without UMIs, this protocol uses Picard MarkDuplicates, a standard tool for identifying duplicates based on mapping coordinates.

- Principle: Picard identifies read pairs with the same outer alignment start and end positions and orientation. It marks all but the highest-quality read pair as duplicates, which are then ignored by downstream variant callers [23].

- Procedure:

- Input Data: You will need a coordinate-sorted BAM file containing your aligned sequencing reads.

- Software: Ensure Picard Tools (or a compatible replacement like GATK) is installed.

- Command Execution: Run the MarkDuplicates command. An example is:

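java -jar picard.jar MarkDuplicates I=input.bam O=marked_duplicates.bam M=marked_dup_metrics.txt

- I: Input sorted BAM file.

- O: Output BAM file with duplicate flags set.

- M: File to write duplicate metrics.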

- Output Interpretation: The metrics file reports the number and percentage of duplicated reads. A high percentage (>20-50%) may indicate issues with low input DNA or over-amplification [23].

- Considerations: This method can incorrectly mark biologically identical reads from repetitive regions or highly expressed genes as duplicates, potentially introducing bias [24].
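
The duplication rate can be pulled out of the metrics file with a few lines of Python. The sketch assumes the usual layout of a Picard metrics file: comment lines starting with "#", then a tab-separated header row followed by one row of values per library, with PERCENT_DUPLICATION reported as a fraction between 0 and 1. The example content is abbreviated and its values are hypothetical.

```python
def parse_duplication_metrics(text):
    """Return the first metrics row as a dict of column name -> string value."""
    lines = [ln for ln in text.splitlines()
             if ln.strip() and not ln.startswith("#")]
    for i, line in enumerate(lines[:-1]):
        columns = line.split("\t")
        if "PERCENT_DUPLICATION" in columns:
            values = lines[i + 1].split("\t")
            return dict(zip(columns, values))
    raise ValueError("no duplication metrics table found")


# Abbreviated, hypothetical metrics file content.
EXAMPLE_METRICS = (
    "## METRICS CLASS\tpicard.sam.DuplicationMetrics\n"
    "LIBRARY\tREAD_PAIRS_EXAMINED\tREAD_PAIR_DUPLICATES\tPERCENT_DUPLICATION\n"
    "lib1\t1000000\t180000\t0.18\n"
    "\n"
    "## HISTOGRAM\tjava.lang.Double\n"
)

row = parse_duplication_metrics(EXAMPLE_METRICS)
duplication_percent = float(row["PERCENT_DUPLICATION"]) * 100
print(f"{row['LIBRARY']}: {duplication_percent:.1f}% duplicates")
```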

The Scientist's Toolkit: Research Reagent Solutions

Selecting the right reagents and kits is fundamental to successful library preparation. The following table details essential materials and their functions.

Table 3: Essential Research Reagents for Metagenomic Library Preparation

| Reagent / Kit | Primary Function | Key Considerations |

|---|---|---|

| Nextera DNA Flex / XT Kit | Transposase-based fragmentation and adapter tagging ("tagmentation") in a single step [21] [25]. | Sensitive to input DNA quantity; can produce a broad insert size distribution [26] [25]. Cost-effective for high-complexity communities [21]. |

| UMI Adapters (Custom) | Ligation of unique molecular identifiers to original molecules pre-amplification [24]. | UMI length must provide sufficient diversity for the experiment (e.g., 10-nt for small RNA-seq). A fixed "locator" sequence aids in accurate UMI identification [24]. |

| Covaris AFA System | Mechanical DNA shearing via focused acoustic energy for random fragmentation [25] [8]. | Produces a tight, tunable insert size distribution with minimal sequence bias. Requires specialized equipment [8]. |

| AMPure XP Beads | Solid-phase reversible immobilization (SPRI) for post-ligation and post-amplification cleanup and size selection [8]. | Critical for removing adapter dimers, unligated adapters, and short fragments. Bead-to-sample ratio controls size selection cutoff. |

| High-Fidelity PCR Mix | Amplification of adapter-ligated fragments, especially for low-input samples [8]. | Minimizes introduction of errors during amplification. The number of PCR cycles should be minimized to preserve library complexity and reduce duplicates [8]. |

| Host Depletion Kit (e.g., HostZERO) | Selective reduction of host (e.g., human) DNA in host-associated microbiome samples [27]. | Dramatically increases the fraction of microbial reads in shotgun metagenomic data, improving sequencing efficiency for the target community [27]. |

Selecting and Implementing the Right Protocol for Your Sample and Study

Within metagenomic sequencing research, the initial conversion of extracted DNA into a sequence-ready library is a critical step that profoundly influences the quality, reliability, and interpretability of the generated data. The choice of library preparation method can introduce biases in genome coverage, affect the detection of single nucleotide variants (SNVs) and indels, and ultimately determine the success of a study aimed at characterizing complex microbial communities [28]. This application note provides a structured comparison of predominant library preparation kits—including those from Illumina, KAPA HyperPlus/HyperPrep, and Nextera XT/Nextera DNA Flex—framed within the context of metagenomic sequencing. We summarize key performance data from controlled studies, detail standardized protocols for reproducibility, and visualize workflows to guide researchers and drug development professionals in selecting and implementing the optimal library preparation strategy for their specific research needs.

Kit Comparison and Performance Data

Comparative Specifications of Commercial Kits

The selection of a library preparation kit requires careful consideration of input DNA, workflow time, and application suitability. The table below compares key specifications for a range of commercially available kits.

Table 1: Specifications of Selected DNA Library Preparation Kits for Short-Read Sequencing [29] [30] [28]

| Supplier | Kit Name | System Compatibility | Assay Time | Input Quantity | PCR Required? | Key Applications |

|---|---|---|---|---|---|---|

| Illumina | Illumina DNA PCR-Free Prep | Illumina platforms | ~1.5 hours | 25 ng – 300 ng | No | De novo assembly, WGS |

| Illumina | Illumina DNA Prep | Illumina platforms | 3-4 hours | 1-500 ng (varies by genome size) | Yes | Amplicon sequencing, WGS |

| Illumina | Nextera XT | iSeq 100, MiSeq, NextSeq series | ~5.5 hours | 1 ng | Yes | 16S rRNA sequencing, amplicon sequencing, WGS |

| Roche | KAPA HyperPlus/HyperPrep | Illumina platforms | 2-3 hours | 1 ng – 1 μg | Optional (kit dependent) | WGS, WES, metagenomic sequencing |

| Integrated DNA Technologies (IDT) | xGen DNA EZ Library Prep Kit | Illumina platforms | <2 hours | 100 pg – 1 μg | Yes | Genotyping, WES, WGS |

| Arbor Biosciences | Library Prep Kit for myBaits | User-supplied adapters for Illumina | Protocol-dependent | 1 – 500 ng | Yes (post-capture) | Targeted sequencing (e.g., whole exome, phylogenetics) |

Performance Metrics in Whole Genome Sequencing

An independent study compared several enzymatic fragmentation-based kits and the tagmentation-based Illumina Nextera DNA Flex kit using human genomic DNA (cell line NA12878) with 10 ng and 100 ng input amounts [28]. The following table summarizes the key outcomes, which are highly relevant for metagenomic sequencing where input DNA can be limited and representative coverage is paramount.

Table 2: Performance Metrics of Library Prep Kits in a Whole Genome Sequencing Study [28]

| Kit | Fragmentation Method | Input DNA (PCR cycles) | Mean Insert Size from Sequencing (bp) | Key Performance Findings |

|---|---|---|---|---|

| Nextera DNA Flex (Illumina) | Tagmentation | 10 ng (8 cycles) | 326 (±2) | Reproducible performance. Coverage gaps can occur in specific genomic regions with tagmentation-based methods [31]. |

| Nextera DNA Flex (Illumina) | Tagmentation | 100 ng (5 cycles) | 366 (±2) | |

| KAPA HyperPlus (Roche) | Enzymatic | 10 ng (9 cycles) | 240 (±9) | Robust performance. Produced consistent, high coverage; better coverage of low-coverage regions compared to Nextera XT [31]. |

| KAPA HyperPlus (Roche) | Enzymatic | 100 ng (0 cycles, PCR-free) | 227 (±3) | |

| NEBNext Ultra II FS (NEB) | Enzymatic | 10 ng (7 cycles) | 206 (±7) | Good performance. Libraries with insert sizes longer than the cumulative read length showed improved coverage and variant detection. |

| NEBNext Ultra II FS (NEB) | Enzymatic | 100 ng (3 cycles) | 188 (±6) | |

| SparQ (Quantabio) | Enzymatic | 10 ng (9 cycles) | 185 (±3) | Good performance. Shorter insert sizes observed, but performance improved with longer inserts. |

| SparQ (Quantabio) | Enzymatic | 100 ng (0 cycles, PCR-free) | 244 (±10) | |

| Swift 2S Turbo (Swift) | Enzymatic | 10 ng (6 cycles) | 330 (±12) | Good performance. Achieved one of the longest insert sizes among enzymatic methods in this study. |

| Swift 2S Turbo (Swift) | Enzymatic | 100 ng (0 cycles, PCR-free) | 226 (±7) | |

The study concluded that all tested kits produced high-quality data, but library insert size was a critical factor. Libraries with DNA insert fragments longer than the cumulative sum of both paired-end reads (e.g., >300 bp for 2x150 bp sequencing) avoid read overlap, leading to more unique sequence information, improved genome coverage, and increased sensitivity for SNV and indel detection [28]. Furthermore, libraries prepared with minimal or no PCR demonstrated the best performance for indel detection, highlighting the value of PCR-free workflows where input DNA allows [28].

Detailed Experimental Protocols

Protocol for KAPA HyperPlus Library Preparation (96 rxn)

The KAPA HyperPlus kit offers a streamlined, single-tube protocol that combines several enzymatic steps and reduces bead cleanups, making it suitable for a wide range of input amounts and sample types, including FFPE and cell-free DNA [30].

Reagents and Materials:

- KAPA HyperPlus Kit (Roche Cat. No. 07962347001 for 24 rxn or 07962363001 for 96 rxn) containing:

- KAPA End Repair & A-Tailing Buffer

- KAPA End Repair & A-Tailing Enzyme

- KAPA Ligation Buffer

- KAPA DNA Ligase

- KAPA HiFi HotStart ReadyMix (2X)

- KAPA Library Amplification Primer Mix (10X) [30]

- KAPA HyperPure Beads (Roche Cat. No. 08963843001) [32]

- KAPA Adapters (Single- or Dual-Indexed, purchased separately)

- Nuclease-free water

- Ethanol (80%)

- Thermal cycler

- Magnetic separation rack

- Agilent Tapestation or Bioanalyzer for quality control

Procedure:

- Fragmentation and End-Repair/A-Tailing: In a single tube, fragment 1-1000 ng of genomic DNA in a 50 µL reaction using the kit's enzymatic fragmentation reagents at 37 °C, adjusting the incubation time to the target insert size. To the same tube, add 7 µL of KAPA End Repair & A-Tailing Buffer and 3 µL of KAPA End Repair & A-Tailing Enzyme, mix thoroughly, and incubate in a thermal cycler at 65 °C for 30 minutes for end-repair and A-tailing, then hold at 4 °C [30] [28].

- Adapter Ligation: To the same tube, add 30 µL of KAPA Ligation Buffer, 10 µL of KAPA DNA Ligase, and 10 µL of diluted KAPA Adapters (final concentration ~0.5 µM). Mix well and incubate at 20 °C for 60 minutes [30].

- Post-Ligation Cleanup: Add 88 µL of KAPA HyperPure Beads to the 110 µL ligation reaction (0.8x ratio) to purify the adapter-ligated library. Follow the standard bead-based purification protocol: bind, wash twice with 80% ethanol, and elute in a low volume of buffer (e.g., 22 µL) [30].

- Library Amplification (Optional): For PCR-amplified libraries, combine 20 µL of the purified ligation product with 25 µL of KAPA HiFi HotStart ReadyMix (2X) and 5 µL of KAPA Library Amplification Primer Mix (10X). Amplify using the following cycling conditions: 98 °C for 45 seconds; 6-10 cycles of 98 °C for 15 seconds, 60 °C for 30 seconds, 72 °C for 30 seconds; 72 °C for 1 minute; hold at 4 °C [30]. The number of cycles should be optimized based on input DNA.

- Post-Amplification Cleanup and Size Selection: Perform a double-sided SPRI (bead-based) size selection. First, add beads at a 0.6x ratio so that large fragments bind and can be discarded with the beads. Transfer the supernatant to a new tube and add a further 0.15x volume of beads (0.75x cumulative) to bind the desired library fragments (typically ~300-600 bp), leaving shorter fragments in solution. Wash the beads and elute the final library in 20-30 µL of buffer [30].

- Quality Control and Quantification: Assess the library's size distribution and integrity using an Agilent Tapestation D1000 or Bioanalyzer. Quantify the library using a fluorescence-based method (e.g., Qubit) and qPCR for accurate molar concentration prior to pooling and sequencing [28].
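Bead volumes for the cleanup and size-selection steps follow directly from the chosen ratio and the reaction volume. The sketch below is illustrative only: the function names are hypothetical, and it assumes that the second ratio in a double-sided selection is an additional bead volume expressed relative to the original sample volume, so that 0.6x followed by 0.15x gives 0.75x cumulative.

```python
def spri_volume_ul(sample_volume_ul: float, ratio: float) -> float:
    """Bead volume (µL) for a single-sided cleanup at the given bead:sample ratio."""
    if sample_volume_ul <= 0 or ratio <= 0:
        raise ValueError("sample volume and ratio must be positive")
    return round(sample_volume_ul * ratio, 1)


def double_sided_spri_ul(sample_volume_ul: float,
                         first_ratio: float,
                         additional_ratio: float) -> dict:
    """Bead volumes for a double-sided selection.

    Assumption of this sketch: both ratios refer to the ORIGINAL sample volume,
    and the second addition goes into the supernatant from the first step.
    """
    first = spri_volume_ul(sample_volume_ul, first_ratio)
    second = spri_volume_ul(sample_volume_ul, additional_ratio)
    return {
        "first_addition_ul": first,    # binds fragments that are too long
        "second_addition_ul": second,  # binds the target size range
        "cumulative_ratio": round(first_ratio + additional_ratio, 2),
    }


if __name__ == "__main__":
    # 50 µL amplification reaction (20 µL library + 25 µL ReadyMix + 5 µL primers).
    print(double_sided_spri_ul(50.0, 0.6, 0.15))
    # Single-sided cleanup of a 50 µL reaction at 0.9x.
    print(spri_volume_ul(50.0, 0.9))
```

Computing the volumes from the measured reaction volume, rather than a nominal one, helps keep the size cutoffs reproducible when evaporation or pipetting losses change the starting volume.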

Protocol for Nextera XT DNA Library Preparation (96 rxn)

The Nextera XT kit uses a tagmentation reaction that simultaneously fragments DNA and adds adapter sequences, enabling a very rapid workflow suitable for high-throughput processing of amplicons. Because the enzyme-to-DNA ratio determines fragment size, it requires precisely quantified input DNA [31].

Reagents and Materials:

- Nextera XT DNA Library Prep Kit (Illumina, 96 samples) containing:

- Nextera XT Amplicon Tagment Buffer (ATB)

- Nextera XT Tagment DNA Enzyme (TDK)

- Nextera PCR Master Mix (NPM)

- Resuspension Buffer (RSB)

- Nextera XT Index Kit 1, 2 (Illumina)

- Nuclease-free water

- Magnetic beads (e.g., AMPure XP)

- Ethanol (80%)

- Thermal cycler

- Magnetic separation rack

- Agilent Tapestation or Bioanalyzer

Procedure:

- Tagmentation: Dilute genomic DNA or amplicons to 1 ng/µL in RSB. Combine 5 µL (5 ng) of diluted DNA with 10 µL of ATB and 5 µL of TDK. Mix thoroughly and incubate in a thermal cycler at 55 °C for 5-15 minutes, then hold at 10 °C [31]. The fragmentation time can be adjusted to modify the insert size distribution.

- Neutralize Tagmentation: Add 5 µL of Neutralize Tagment Buffer (NTB) to the tagmentation reaction. Mix by pipetting and incubate at room temperature for 5 minutes.

- PCR Amplification and Indexing: Add 5 µL of each Nextera XT Index Primer (i5 and i7) and 15 µL of NPM to the neutralized tagmentation reaction. Amplify using the following cycling conditions: 72 °C for 3 minutes; 95 °C for 30 seconds; 12 cycles of 95 °C for 10 seconds, 55 °C for 30 seconds, 72 °C for 30 seconds; 72 °C for 5 minutes; hold at 4 °C [31].

- Library Cleanup: Add 45 µL of magnetic beads (0.9x ratio) to the 50 µL PCR reaction. Purify the library by binding, washing twice with 80% ethanol, and eluting in 22.5 µL of RSB.

- Quality Control and Quantification: As with the KAPA protocol, assess the library's size distribution and concentration using instrumentation like the Tapestation and qPCR. Normalize libraries to 4 nM prior to pooling for sequencing [31].
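Normalizing to 4 nM requires converting a mass concentration into a molar one. A minimal sketch of that conversion is shown below; it assumes the widely used approximation of 660 g/mol per base pair of double-stranded DNA and takes the mean library fragment length (adapters included) from the Tapestation or Bioanalyzer trace. The function names and the 20 µL final volume are illustrative choices, not values from the kit documentation.

```python
AVG_BP_MASS_G_PER_MOL = 660.0  # approximate mass of one dsDNA base pair


def library_molarity_nm(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Convert a dsDNA concentration (ng/µL) into nanomolar."""
    if conc_ng_per_ul <= 0 or mean_fragment_bp <= 0:
        raise ValueError("concentration and fragment length must be positive")
    return conc_ng_per_ul * 1e6 / (AVG_BP_MASS_G_PER_MOL * mean_fragment_bp)


def dilution_to_target(stock_nm: float,
                       target_nm: float = 4.0,
                       final_volume_ul: float = 20.0) -> tuple:
    """Return (library µL, diluent µL) using C1 * V1 = C2 * V2."""
    if stock_nm < target_nm:
        raise ValueError("library is already below the target concentration")
    library_ul = target_nm * final_volume_ul / stock_nm
    return library_ul, final_volume_ul - library_ul


if __name__ == "__main__":
    # Hypothetical library: 10 ng/µL by Qubit, 400 bp mean fragment length.
    stock = library_molarity_nm(10.0, 400)
    lib_ul, diluent_ul = dilution_to_target(stock)
    print(f"{stock:.1f} nM stock: mix {lib_ul:.2f} µL library "
          f"with {diluent_ul:.2f} µL diluent")
```

Where qPCR quantification is available, its molar value can be passed to `dilution_to_target` directly, since qPCR measures only adapter-ligated, amplifiable fragments.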

Workflow Visualization and Technical Diagrams

Library Preparation Workflow Comparison

The following diagram illustrates the core procedural steps and key decision points for the two primary library preparation methods discussed: enzymatic fragmentation/ligation (e.g., KAPA HyperPlus) and tagmentation (e.g., Nextera XT).

Diagram 1: Comparison of enzymatic fragmentation/ligation and tagmentation library prep workflows. Key differences include the initial fragmentation/adapter addition step and the optionality of PCR in some enzymatic protocols [30] [31].

The Scientist's Toolkit: Essential Reagent Solutions

Successful library preparation relies on a suite of specialized reagents beyond the core kit components. The following table details key reagent solutions and their critical functions in the workflow.

Table 3: Essential Research Reagent Solutions for NGS Library Preparation

| Reagent/Material | Function/Description | Example Product(s) |

|---|---|---|

| Magnetic SPRI Beads | Size-selective purification of nucleic acids; used for cleanups and size selection between reaction steps. | KAPA HyperPure Beads [30], AMPure XP Beads |

| Universal Stubby Adapters | Short, double-stranded adapters with T-overhangs for ligation to A-tailed DNA fragments; require indexing via PCR. | xGen Stubby Adapters (IDT) [33] |

| Dual Indexed Adapters | Full-length or stubby adapters containing unique combinatorial barcodes (i5 and i7) for sample multiplexing; reduce index hopping. | KAPA Dual-Indexed Adapter Kits [30], xGen UDI-UMI Adapters (IDT) [33] |

| High-Fidelity DNA Polymerase | PCR enzyme with high accuracy and processivity; used for library amplification with minimal bias and high yield. | KAPA HiFi HotStart ReadyMix [30] |

| Library Quantification Kits | qPCR-based assays for accurate determination of the molar concentration of adapter-ligated fragments; essential for pooling libraries. | KAPA Library Quantification Kit [30] |

| Enzymatic Fragmentation Mix | Controlled digestion of DNA by a proprietary mix of enzymes to a desired fragment length; alternative to mechanical shearing. | Component of KAPA HyperPlus, NEBNext Ultra II FS [28] |

The landscape of NGS library preparation kits offers multiple robust paths for creating metagenomic sequencing libraries. Enzymatic fragmentation-based kits, such as KAPA HyperPlus/HyperPrep, provide flexibility in input DNA, reduced hands-on time, and performance comparable to established tagmentation-based methods like Illumina's Nextera DNA Flex and Nextera XT [28]. The critical technical considerations for kit selection include DNA input amount, the desire for a PCR-free workflow to minimize bias, and the paramount importance of achieving an optimal library insert size longer than the sequenced read length to maximize unique coverage and variant detection sensitivity [28]. By leveraging the comparative data, detailed protocols, and visual workflows provided in this application note, researchers can make informed decisions that enhance the quality and efficiency of their metagenomic sequencing projects, thereby accelerating discovery in microbial ecology and drug development.

Within metagenomic sequencing research, the accuracy and completeness of genomic data are fundamentally dependent on the initial steps of sample handling and nucleic acid extraction. The complexity and diversity of microbial communities, coupled with the unique biochemical challenges posed by different sample matrices, necessitate a tailored approach for each specimen type. This Application Note provides a structured guide to selecting and optimizing sample preparation protocols for three critical sample categories in microbiome research: soil, gut, and clinical specimens. Proper matching of extraction kits and methods to specific sample types ensures higher DNA yield, improved quality, and ultimately, more reliable sequencing data, forming the cornerstone of robust metagenomic library preparation.

Sample Type-Specific Challenges and Strategic Approaches

The table below summarizes the primary challenges and corresponding strategic solutions for different sample types.

Table 1: Key Challenges and Strategic Approaches for Different Sample Types

| Sample Type | Primary Challenges | Strategic Approach | Key Considerations |

|---|---|---|---|

| Soil | High inhibitor content (humic acids), immense microbial diversity, particle heterogeneity [34] [35]. | Physical separation of cells from soil matrix; inhibitor removal washes; size-selection for long-read sequencing [35]. | Avoid atypical areas during sampling; use stainless steel tools to prevent chemical contamination [34]. |

| Gut (Feces) | High host DNA content, variable biomass, sensitivity to confounders (diet, antibiotics) [36] [37]. | Standardized collection in stabilizers; host DNA depletion; careful confounder documentation [36] [38]. | Consistency in collection and storage is critical; document diet, medication, and host age [37]. |

| Clinical Body Fluids | Low microbial biomass (high contamination risk), high host DNA background, need for rapid diagnostics [39] [40]. | Centrifugation-based enrichment; cell-free vs. whole-cell DNA extraction; integration with culture [39] [38]. | Strict negative controls are mandatory to identify reagent or cross-sample contamination [39] [37]. |

Detailed Experimental Protocols

Soil Sample Protocol for Long-Read Metagenomics

The following protocol is optimized for obtaining high-molecular-weight (HMW) DNA from soil for advanced long-read sequencing, enabling the recovery of complete microbial genomes [35].

Materials & Reagents:

- Nycodenz gradient solution

- Skim-milk wash buffer

- Monarch HMW DNA Extraction Kit

- Oxford Nanopore Small Fragment Eliminator Kit (or equivalent)

- Stainless steel sampling tools and sterile spatula

Procedure:

- Sampling: Collect soil using a sterile stainless steel corer or spatula. Avoid areas with roots, debris, or atypical conditions. For a representative profile, collect multiple subsamples from the area of interest [34].

- Cell Separation (from [35]):

- Suspend 5-10 g of soil in a buffered solution (e.g., PBS) and homogenize gently.

- Layer the suspension over a Nycodenz gradient and centrifuge.

- Carefully extract the bacterial cell band from the gradient interface.

- Inhibitor Removal: Wash the harvested cell suspension with a skim-milk-based buffer to adsorb and remove PCR inhibitors like humic acids [35].

- HMW DNA Extraction: Extract DNA from the cleaned cell pellet using the Monarch HMW DNA Extraction Kit, following the manufacturer's instructions. This kit is designed to preserve long DNA fragments.

- DNA Size Selection: Purify and size-select the eluted DNA using the Small Fragment Eliminator Kit to enrich for fragments >10 kbp, which is crucial for long-read sequencing [35].

- Quality Control: Assess DNA quantity using a Qubit fluorometer and quality/fragment size using pulsed-field gel electrophoresis or a Fragment Analyzer.
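Once fragment or read lengths are available (for example, from a short pilot run), the effect of size selection can be summarized numerically. The sketch below is one possible way to do this, using hypothetical lengths; the function names and example values are illustrative and are not part of the cited protocol.

```python
def n50(lengths_bp: list) -> int:
    """Length L such that fragments of length >= L hold at least half of all bases."""
    if not lengths_bp:
        raise ValueError("no fragment lengths supplied")
    ordered = sorted(lengths_bp, reverse=True)
    half_total = sum(ordered) / 2
    running = 0
    for length in ordered:
        running += length
        if running >= half_total:
            return length
    raise RuntimeError("unreachable for non-empty positive input")


def base_fraction_above(lengths_bp: list, threshold_bp: int = 10_000) -> float:
    """Fraction of all bases contained in fragments longer than the threshold."""
    total = sum(lengths_bp)
    if total <= 0:
        raise ValueError("fragment lengths must sum to a positive value")
    return sum(l for l in lengths_bp if l > threshold_bp) / total


if __name__ == "__main__":
    # Hypothetical fragment lengths (bp) after small-fragment elimination.
    lengths = [2_000, 5_000, 12_000, 20_000, 30_000, 45_000]
    print("N50:", n50(lengths))
    print("Bases in fragments >10 kbp:", round(base_fraction_above(lengths), 3))
```

Tracking both values before and after size selection gives a simple check that the >10 kbp enrichment worked as intended.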

Gut Microbiome Protocol for Shotgun Metagenomics

This protocol focuses on obtaining unbiased microbial DNA from fecal samples for shotgun metagenomic sequencing, which is critical for functional profiling [36] [41].

Materials & Reagents:

- OMNIgene Gut kit or 95% Ethanol (for field stabilization)

- QIAamp PowerFecal Pro DNA Kit (or equivalent with bead-beating)

- Benzonase (for host DNA depletion, optional)

Procedure:

- Sample Collection & Stabilization: Collect the fecal sample directly into the OMNIgene Gut kit tube or a container with 95% ethanol. Immediate freezing at -80 °C is an alternative if logistics allow [37]. Maintain consistent storage conditions for all samples in a study.

- Homogenization & Lysis:

- Weigh 180-220 mg of feces into a tube containing garnet beads and lysis buffer.

- Vortex thoroughly to homogenize. Bead-beating is essential for lysing tough Gram-positive bacterial cells.

- DNA Extraction: Proceed with the DNA extraction protocol of the QIAamp PowerFecal Pro DNA Kit, which includes steps to remove inhibitors common in feces.

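- Host DNA Depletion (Optional): For samples with high host DNA content, deplete host DNA before microbial lysis rather than after extraction. Benzonase is a nonspecific nuclease that degrades all accessible DNA, linear or circular, so it would also destroy purified microbial DNA. Selectively lyse host cells, digest the released host DNA with Benzonase, and wash the still-intact microbial cells before proceeding to bead-beating and extraction [38].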

- Quality Control: Quantify DNA and confirm the absence of degradation via agarose gel electrophoresis.

Clinical Body Fluid Protocol for Pathogen Detection

This protocol compares two main approaches for clinical body fluids: whole-cell DNA (wcDNA) and microbial cell-free DNA (cfDNA) extraction, with wcDNA showing higher sensitivity for pathogen identification [39].

Materials & Reagents:

- Sterile containers for body fluid collection (e.g., BALF, CSF)

- Qiagen DNA Mini Kit

- VAHTS Free-Circulating DNA Maxi Kit