Sanger Sequencing for NGS Validation: A 2025 Guide for Researchers and Clinicians

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical role of Sanger sequencing in validating Next-Generation Sequencing (NGS) findings. Covering foundational principles, established methodologies, and advanced troubleshooting, it details why Sanger remains the gold standard for confirmatory testing despite the rise of high-throughput technologies. Drawing on the most recent 2025 studies, we explore practical workflows for orthogonal verification, address common challenges in variant confirmation, and present comparative data on accuracy and sensitivity. The guide also synthesizes current best practices for optimizing validation pipelines to enhance reliability in clinical diagnostics and biomedical research, ensuring the highest confidence in reported genetic variants.

Why Sanger Sequencing Remains the Gold Standard for NGS Verification

In the era of next-generation sequencing (NGS), Sanger sequencing maintains a critical role in genomic verification and validation. Its reputation rests on a well-established benchmark: 99.99% single-base accuracy. This gold-standard status is not merely historical but is actively maintained in contemporary research and clinical pipelines, particularly for confirming critical genetic findings. This guide explores the experimental and technical foundations of this accuracy benchmark, objectively compares it with NGS performance, and details its indispensable application in verifying NGS-derived results within modern research and drug development.

The Technical Foundation of Sanger Accuracy

The exceptional accuracy of Sanger sequencing is a direct result of its refined methodology and unique approach to base calling.

Principle of Operation: Sanger sequencing, or chain-termination sequencing, operates by incorporating fluorescently labeled dideoxynucleotides (ddNTPs) during DNA synthesis. Each incorporated ddNTP halts elongation of the DNA strand at that nucleotide, producing a population of DNA fragments of varying lengths. These fragments are then separated via capillary electrophoresis, a high-resolution process that determines the sequence by reading the fluorescently tagged terminal bases in order of fragment size [1] [2] [3]. This direct physical separation contributes significantly to its precision.

The Role of Phred Quality Scoring: The accuracy of each base call is quantitatively assessed using Phred quality scores (Q-score), a critical metric for evaluating sequencing data. A Phred score of 30, which is standard for high-quality Sanger data, indicates a 1 in 1,000 probability of an error, translating to a base-call accuracy of 99.9%. Notably, Sanger sequencing often achieves stretches of data with Q-scores of 40 or higher, equating to a phenomenal 99.99% accuracy (1 error in 10,000 bases) [4] [1]. This consistent, high-quality output across long reads (typically 500-1000 bp) is a key differentiator.
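The Phred scale is logarithmic: Q = -10 · log10(P), where P is the probability that a base call is wrong. The short Python sketch below shows the conversion in both directions; the function names are illustrative and do not come from any base-calling software.

```python
import math

def phred_to_error_prob(q: float) -> float:
    """Error probability implied by a Phred quality score: P = 10^(-Q/10)."""
    return 10 ** (-q / 10)

def error_prob_to_phred(p: float) -> float:
    """Phred score implied by an error probability: Q = -10 * log10(P)."""
    return -10 * math.log10(p)

# Print the error probability and accuracy for a few common scores.
for q in (20, 30, 40):
    p = phred_to_error_prob(q)
    print(f"Q{q}: error probability {p:.4%}, accuracy {1 - p:.2%}")
```

Each 10-point increase in Q corresponds to a tenfold drop in the expected error rate, which is why Q30 and Q40 map to 99.9% and 99.99% accuracy respectively.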

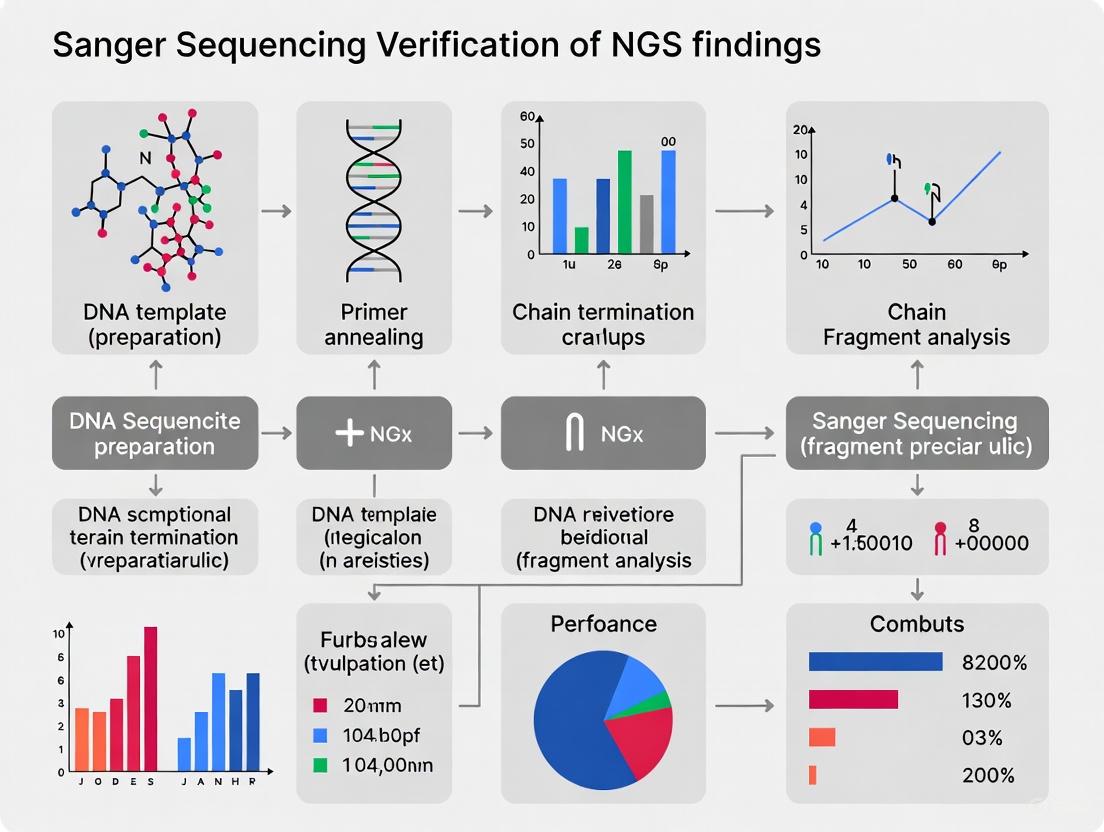

The following diagram illustrates the core workflow that enables this high accuracy:

Sanger Sequencing Workflow

Quantitative Comparison: Sanger Sequencing vs. NGS

While NGS offers unparalleled throughput, Sanger sequencing remains superior for targeted applications requiring maximum accuracy. The table below summarizes the core performance differences.

Table 1: Performance Comparison Between Sanger and Next-Generation Sequencing

| Aspect | Sanger Sequencing | Next-Generation Sequencing (NGS) |
|---|---|---|
| Single-Base Accuracy | 99.99% (QV ≥40) [4] | ~99.9% (Often requires Sanger validation) [4] |
| Read Length | 500 - 1,000 bp (high-quality region) [4] [2] | 150 - 300 bp (for Illumina) [2] [3] |
| Detection Limit for Variants | ~15-20% of the molecules in a mixed sample (e.g., a viral quasispecies) [5] [3] | As low as 1% [6] [3] |
| Ideal Use Cases | Mutation verification, plasmid validation, single-gene studies [4] [6] | Whole-genome sequencing, variant discovery, transcriptomics [6] [3] |
| Best for Number of Targets | Cost-effective for 1-20 targets [6] [2] | Cost-effective for >20 targets [6] [2] |

This comparison highlights a key distinction: Sanger sequencing provides superior accuracy for a single DNA fragment, whereas NGS provides greater sensitivity for detecting rare, low-frequency variants in a mixed sample due to its deep sequencing capability [6] [3].
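The link between sequencing depth and sensitivity can be made concrete with a simple binomial model. The sketch below is an illustration under stated assumptions, not a method from the cited studies: it ignores sequencing error and mapping bias, and the `min_alt_reads` calling threshold is a hypothetical parameter chosen for the example.

```python
from math import comb

def prob_detect(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(at least min_alt_reads variant-supporting reads) under Binomial(depth, vaf)."""
    # Sum the probabilities of seeing fewer than min_alt_reads variant reads.
    p_below = sum(
        comb(depth, k) * vaf**k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1 - p_below

# Chance of sampling at least 5 variant reads for a 1% variant at several depths.
for depth in (30, 100, 1000):
    print(depth, round(prob_detect(depth, vaf=0.01, min_alt_reads=5), 4))
```

Under this model, a variant present in 1% of molecules is very unlikely to collect enough supporting reads at low depth but becomes readily observable at high depth. A Sanger trace, by contrast, is a single averaged signal, so a minor allele below roughly 15-20% is hard to distinguish from background.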

Sanger Sequencing for NGS Verification: Experimental Evidence

The practice of using Sanger sequencing to validate NGS findings is a cornerstone of rigorous genomic research. The evidence for its utility comes from large-scale, systematic studies.

Key Experimental Protocol: Large-Scale Validation

A seminal study from the ClinSeq project systematically evaluated the need for Sanger validation of NGS variants [7].

- Methodology: Researchers compared NGS variants in five genes across 684 participants against data generated from high-throughput Sanger sequencing of the same samples. Over 5,800 NGS-derived variants were checked against Sanger data.

- Findings: Of the 5,800+ variants, only 19 were not initially validated by the first-pass Sanger data. Upon re-sequencing with newly designed primers, 17 of these 19 NGS variants were confirmed, revealing that the Sanger method was more likely to incorrectly refute a true positive than to correctly identify a false positive.

- Conclusion: The study calculated an NGS validation rate of 99.965% using Sanger sequencing and questioned the routine necessity of this orthogonal validation, given that NGS data are at least as accurate as a single round of Sanger sequencing [7].

Application in Infectious Disease Research

In clinical settings, the choice between technologies depends on the required sensitivity.

- HIV Drug Resistance Study: A 2022 study on HIV-1 drug resistance in children and adolescents compared Sanger sequencing with NGS at multiple sensitivity thresholds (1% to 20%) [5].

- Findings: The agreement between the two technologies was high (up to 88%) for detecting any drug resistance mutation when a high NGS threshold (15-20%) was used. However, NGS detected substantially more mutations at lower thresholds (1-5%). This demonstrates that while Sanger is highly reliable for detecting dominant variants, NGS offers superior sensitivity for identifying minor populations [5].

The logical relationship in the verification workflow is outlined below:

NGS Verification Workflow

The Scientist's Toolkit: Essential Reagents for Sanger Sequencing

Successful Sanger sequencing relies on a set of core reagents and materials, each with a specific function.

Table 2: Key Research Reagent Solutions for Sanger Sequencing

| Reagent/Material | Function | Key Considerations |
|---|---|---|
| Template DNA | The target DNA to be sequenced (e.g., plasmid, PCR product). | Requires high purity and specific concentration (e.g., plasmids ≥100 ng/μL; PCR products ≥20 ng/μL) [4]. |
| Sequencing Primer | A short, single-stranded DNA fragment that anneals to a specific region to initiate sequencing. | Can be client-supplied or selected from universal primers (M13, T7, SP6). Specificity is critical [4] [8]. |
| Fluorescent ddNTPs | Dideoxynucleotides labeled with fluorescent dyes; each base (A, T, C, G) has a unique dye. | Incorporated by DNA polymerase to terminate chain elongation, generating labeled fragments [2]. |
| DNA Polymerase | Enzyme that catalyzes the template-dependent addition of nucleotides. | High-fidelity polymerase is essential for low error rates and robust performance through difficult templates [9]. |
| Capillary Electrophoresis System | Instrumentation that separates DNA fragments by size. | Systems like the Applied Biosystems 3730xl provide the high resolution needed for long, accurate reads [4]. |

Sanger sequencing's 99.99% accuracy benchmark is not a historical artifact but a living standard underpinned by a robust and reliable biochemical process. Its strength lies in delivering long, high-quality reads of a single targeted amplicon with exceptional precision, making it the gold standard for targeted validation work. In a world increasingly dominated by NGS, its role has evolved rather than diminished. For confirming critical mutations, verifying gene edits, validating NGS-derived variants, and ensuring the integrity of plasmid constructs, Sanger sequencing provides a final, authoritative layer of confidence for specific, high-stakes applications in research and drug development.

Orthogonal validation, the practice of confirming next-generation sequencing (NGS) findings with an independent method, traditionally Sanger sequencing, has been a cornerstone of clinical genetic testing to ensure maximal accuracy and reliability. As NGS technologies have matured, the necessity of validating every variant has been questioned, prompting a shift towards risk-based approaches. This guide examines the current regulatory and best practice landscape, defining the requirements for orthogonal confirmation and providing a comparative analysis of validation strategies. The core challenge lies in balancing the impeccable accuracy demanded of clinical diagnostics with the practical realities of throughput, cost, and turnaround time in the era of large-scale genomic testing.

Regulatory Frameworks and Professional Guidelines

Regulatory requirements for NGS validation are evolving, with professional societies leading the development of standards in the absence of universally mandated regulations.

- Professional Society Leadership: The Association for Molecular Pathology (AMP) and the National Society of Genetic Counselors have convened working groups to address the variability in laboratory validation practices, establishing a common framework for clinical laboratories to develop individualized policies [10].

- The CLEP Standard: The New York State Department of Health's Clinical Laboratory Evaluation Program (CLEP) requirements for analytical validation are widely recognized as a national standard. CLEP approval mandates detailed documentation, quality control metrics, validation studies (accuracy, precision, reproducibility), and orthogonal validation of variants [11].

- Shifting Perspectives: Recent guidelines reflect a nuanced approach. The European Society of Human Genetics (ESHG) acknowledges the challenges of NGS but also the imperative for standardized validation, while other studies conclude that routine orthogonal Sanger validation of NGS variants has "limited utility" once appropriate quality thresholds are established [7] [12].

Orthogonal Validation in Practice: A Data-Driven Comparison

The decision to implement universal or selective orthogonal validation hinges on quantitative performance data. The table below summarizes key metrics from recent large-scale studies evaluating NGS accuracy against Sanger sequencing.

Table 1: Comparative Performance of NGS versus Sanger Sequencing in Validation Studies

| Study and Technology | Cohort and Variant Numbers | Concordance Rate with Sanger | Key Factors Influencing Concordance |
|---|---|---|---|
| WGS Variant Analysis [13] | 1,756 variants from 1,150 WGS samples | 99.72% (5 discrepancies) | Quality (QUAL) score, read depth (DP), allele frequency (AF) |
| Large-Scale Exome Study [7] | ~5,800 NGS-derived variants from 684 exomes | 99.965% (initially 19 failures, 17 confirmed with new primers) | Variant quality score; primer design in Sanger |
| Target-Capture Gene Panels [14] | 1,080 SNVs and 124 Indels across 117 genes | 100% for SNVs (919 comparisons) | Sufficient depth of coverage (>100x); variant type (SNV vs. Indel) |
| Targeted Gene Panels [12] | 945 rare variants from 218 patients | >99.6% (3 discrepancies resolved in favor of NGS) | Allele dropout (ADO) in Sanger PCR; primer-binding variants |

Key Experimental Protocols from Cited Studies

The data in Table 1 are derived from rigorously validated clinical workflows:

- WGS Variant Confirmation Protocol [13]: DNA was sequenced using a PCR-free WGS protocol on a BGI platform with a mean coverage of 34.1x. Variants were called using GATK HaplotypeCaller. All 1,756 selected variants underwent Sanger sequencing, and the quality metrics (DP, AF, QUAL) of the 5 discordant variants were analyzed to establish robust filtering thresholds.

- High-Throughput Sanger Comparison Protocol [7]: This study leveraged the unique ClinSeq cohort, where exome sequencing (via Illumina platforms) was performed on samples with existing high-throughput Sanger data. Variants were called using the Most Probable Genotype (MPG) caller. Discrepant variants were investigated with re-designed Sanger primers, which often resolved the issue.

- Targeted Panel Validation Protocol [12]: Targeted NGS was performed on Illumina MiSeq using Agilent SureSelect or Haloplex for enrichment. Variants were called with GATK HaplotypeCaller and filtered for Phred quality ≥30 and coverage depth ≥30x. Sanger validation included careful primer design and checking for SNPs in primer-binding sites to prevent allelic dropout.

Evolving Best Practices: From Universal to Selective Validation

Best practices are moving away from universal Sanger validation towards a more strategic, data-driven approach. The diagram below illustrates the logical decision-making workflow for modern orthogonal validation.

Defining "High-Quality" Variants for Validation Bypass

The decision to bypass validation is guided by specific, measurable quality metrics established through large-scale Sanger confirmation studies.

- Caller-Agnostic vs. Caller-Specific Metrics: Quality parameters can be divided into two groups. Caller-agnostic metrics like read depth (DP ≥ 15) and allele frequency (AF ≥ 0.25) are universally applicable across technologies. In contrast, caller-specific metrics like the quality score (QUAL ≥ 100 for GATK HaplotypeCaller) depend on the variant-calling algorithm and require laboratory-specific validation [13].

- The Critical Role of Genomic Context: Even high-quality metrics can be misleading in complex genomic regions. Segmental duplications, GC-rich areas, and repetitive sequences are notorious for causing false positives. Tools like ClinRay are being developed to predict probe reproducibility in these difficult regions, helping to identify variants that still require validation despite good nominal quality scores [15].

- Variant-Type Specific Considerations: The validation policy must be stratified by variant type. Multiple studies have demonstrated that single nucleotide variants (SNVs) passing quality thresholds have concordance rates approaching 100%, making their validation redundant. In contrast, insertion-deletion (indel) variants more frequently require Sanger confirmation due to complexities in alignment and calling, even with sufficient depth of coverage [14].
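The three considerations above can be combined into a simple triage rule. The following Python sketch is only an illustration: the thresholds are the ones quoted from the WGS study [13], while the `Variant` fields, the `difficult_region` flag, and the policy of always confirming indels are assumptions made for this example. A laboratory would substitute its own validated thresholds and rules.

```python
from dataclasses import dataclass

# Thresholds quoted in the text for one WGS study [13]. The QUAL cutoff is
# specific to GATK HaplotypeCaller and is not transferable between pipelines.
MIN_DP = 15
MIN_AF = 0.25
MIN_QUAL = 100

@dataclass
class Variant:
    dp: int                         # read depth at the site
    af: float                       # fraction of reads supporting the variant
    qual: float                     # caller-reported QUAL score
    is_indel: bool                  # True for insertions/deletions
    difficult_region: bool = False  # hypothetical flag: repeat, GC-rich, duplicated

def needs_sanger(v: Variant) -> bool:
    """Return True if the variant should be sent for orthogonal confirmation."""
    # Any failed quality metric sends the variant to confirmation.
    if v.dp < MIN_DP or v.af < MIN_AF or v.qual < MIN_QUAL:
        return True
    # Assumed policy: indels and difficult regions are confirmed regardless.
    return v.is_indel or v.difficult_region

print(needs_sanger(Variant(dp=40, af=0.48, qual=500, is_indel=False)))
print(needs_sanger(Variant(dp=10, af=0.50, qual=500, is_indel=False)))
```

Keeping the thresholds as named constants makes it explicit which values must be re-validated when the sequencing platform or variant caller changes.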

Emerging Strategies and Advanced Solutions

The field is advancing beyond simple quality thresholds towards more sophisticated, automated methods for ensuring variant accuracy.

Machine Learning and Bioinformatics Innovation

Supervised machine learning models are now being trained to differentiate high-confidence from low-confidence variants with high precision, reducing the need for wet-lab confirmation.

- Model Training and Performance: Studies have used variant calls from Genome in a Bottle (GIAB) reference samples to train models like Logistic Regression, Random Forest, and Gradient Boosting. These models use features such as allele frequency, read depth, mapping quality, and homopolymer context. One such model achieved 99.9% precision and 98% specificity in identifying true positive heterozygous SNVs [16].

- The "Digital Twin" Approach: Methods like ClinRay create synthetic data enhancements to predict the reproducibility of NGS probes in difficult-to-sequence regions. This bioinformatic approach, which achieved an AUC of 0.85-0.89, can flag variants with likely poor reproducibility for orthogonal validation without the need for costly wet-lab replicates [15].
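To make the reported metrics concrete, the sketch below shows how precision and specificity are computed when a model labels variants as high-confidence (treated here as the positive class). The counts are invented purely so that the arithmetic reproduces figures of the same size as those quoted above; they are not data from the cited study.

```python
def precision(tp: int, fp: int) -> float:
    """Of the variants the model calls high-confidence, the fraction that are true."""
    return tp / (tp + fp)

def specificity(tn: int, fp: int) -> float:
    """Of the false variants, the fraction the model correctly flags for review."""
    return tn / (tn + fp)

# Hypothetical counts, for illustration only.
tp, fp, tn = 9990, 10, 490

print(f"precision   = {precision(tp, fp):.3f}")
print(f"specificity = {specificity(tn, fp):.3f}")
```

For validation bypass, precision is the critical number: every false positive among the "high-confidence" calls is a variant that would be reported without confirmation.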

The Scientist's Toolkit: Essential Reagents and Metrics

Table 2: Key Research Reagent Solutions and Quality Metrics for Orthogonal Validation

| Tool/Reagent | Primary Function in Validation | Application Notes |
|---|---|---|
| GIAB Reference Materials | Benchmark "truth set" for validating NGS pipelines and training ML models. | Essential for establishing lab-specific quality thresholds and for bioinformatics pipeline validation [16] [17]. |
| PCR-Free WGS Libraries | Reduces library preparation artifacts that can mimic true variants. | Critical for minimizing false positives in whole-genome studies; explained absence of certain artifacts in one study [13]. |
| Multiple Bioinformatics Callers | Provides computational orthogonal confirmation (e.g., DeepVariant). | Can be used for low-quality variants, though performance varies (F1-score 0.76 in one assessment) [13]. |
| Quality Metric: Depth (DP) | Measures number of reads covering a variant; indicates confidence. | Caller-agnostic; DP ≥ 15 suggested for WGS [13]. |
| Quality Metric: Allele Frequency (AF) | Proportion of reads supporting the variant; should be ~0.5 for germline heterozygotes. | Caller-agnostic; AF ≥ 0.25 suggested as threshold [13]. |
| Quality Metric: QUAL Score | Phred-scaled confidence that a variant exists at a given site. | Caller-specific (e.g., QUAL ≥ 100 for GATK); highly effective but not transferable between pipelines [13]. |

The paradigm for orthogonal validation is shifting decisively from a universal requirement to a targeted, evidence-based strategy. The consensus emerging from recent research and guideline development is that clinical laboratories should establish and validate internal quality thresholds based on their specific NGS and bioinformatics pipelines. For variants exceeding these thresholds—particularly SNVs in uniquely mappable regions—orthogonal Sanger validation is increasingly seen as redundant. Future efforts will focus on standardizing these quality metrics across platforms, refining machine learning models for variant triage, and integrating long-read sequencing technologies as a more comprehensive orthogonal method. The ultimate goal is a streamlined, cost-effective validation process that maintains the highest standards of clinical accuracy while fully leveraging the power of modern high-throughput genomics.

Sanger sequencing has long been regarded as the "gold standard" for confirming DNA sequence variants. However, with the maturation of Next-Generation Sequencing (NGS) technologies, the practice of universally validating NGS findings with Sanger sequencing is being re-evaluated. This guide objectively compares the performance of these technologies and outlines the specific scenarios where orthogonal Sanger verification remains indispensable in clinical diagnostics and research publications, supported by experimental data.

Next-Generation Sequencing has revolutionized genetics, enabling the simultaneous analysis of millions of DNA fragments. Despite its high throughput, the initial standards, including those from the American College of Medical Genetics (ACMG), often mandated that variants identified by NGS be confirmed by the orthogonal method of Sanger sequencing before reporting [13]. This practice was rooted in concerns over NGS errors related to sequencing artifacts, bioinformatics pipeline inaccuracies, and challenges in regions with complex architecture (e.g., high GC content) [18].

Recent large-scale studies have demonstrated that NGS data, when subjected to appropriate quality filters, can achieve exceptionally high accuracy, calling into question the utility of routine Sanger validation. One systematic evaluation of over 5,800 NGS-derived variants found a validation rate of 99.965% using Sanger sequencing, concluding that a single round of Sanger sequencing is more likely to incorrectly refute a true positive NGS variant than to correctly identify a false positive [7]. This guide synthesizes current evidence to define the key scenarios where Sanger verification is still required, providing a data-driven framework for researchers and clinicians.

Performance Comparison: NGS vs. Sanger Sequencing

The decision to use Sanger verification hinges on a clear understanding of the performance characteristics of both technologies. The following table summarizes key comparative metrics based on recent literature.

Table 1: Performance Comparison of NGS and Sanger Sequencing for Variant Detection

| Metric | Next-Generation Sequencing (NGS) | Sanger Sequencing |
|---|---|---|
| Throughput | High (Millions of parallel sequences) | Low (Single amplicons per reaction) |
| Cost per Base | Very Low | High |
| Single-Base Accuracy | High (Exceeding 99.9% for high-quality calls) [13] | Very High (Error rate ~0.01% or lower) [9] |
| Ideal Application | Interrogating multiple genes or the entire genome/exome | Targeted confirmation of specific variants |
| Key Strengths | Discovery of novel variants, detection of mosaicism (at sufficient depth), comprehensive profiling | Long read lengths, high consensus accuracy, direct readout of a single targeted amplicon |
| Key Limitations | False positives/negatives can occur in low-complexity or low-coverage regions [18] | Low throughput, inefficient for screening, prone to allelic dropout (ADO) from primer-binding SNPs [18] |

Key Scenarios Mandating Sanger Verification

Scenario 1: Clinical Diagnostics and Reporting of Pathogenic Variants

In clinical diagnostics, where a result directly impacts patient management, the highest level of certainty is required. While the trend is to move away from universal validation, Sanger confirmation remains critical in specific diagnostic contexts.

- Evidence and Data: A 2025 study on Whole Genome Sequencing (WGS) data found that while overall concordance with Sanger was 99.72%, certain variant quality parameters could identify false positives. The study demonstrated that applying caller-agnostic filters (Depth of coverage (DP) ≥ 15 and Allele Frequency (AF) ≥ 0.25) successfully isolated all unconfirmed variants into a "low-quality" bin, suggesting that variants failing these thresholds require Sanger verification before clinical reporting [13].

- Experimental Protocol: The standard protocol involves:

  - Variant Identification: NGS is performed on a patient sample using a targeted panel, exome, or genome sequencing.

  - Variant Filtering: Variants are filtered using established bioinformatic pipelines. Variants with a depth of coverage (DP) below 15-20x or an allele frequency (AF) below 20-25% are flagged for validation [13] [18].

  - PCR Amplification: Specific primer pairs are designed to flank the variant of interest. These primers must be checked for common SNPs at their binding sites to prevent allelic dropout [18].

  - Sanger Sequencing: PCR amplicons are purified and sequenced using fluorescent dye-terminator chemistry on a capillary electrophoresis instrument [19].

  - Analysis: The resulting chromatograms are manually inspected for the presence of the variant, confirming both the zygosity and the base change.
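The primer check in the PCR amplification step lends itself to automation. The sketch below flags primers whose binding sites overlap known SNP positions; the `Primer` type, the coordinates, and the SNP set are all hypothetical, and in practice the positions would come from a population variant database chosen by the laboratory.

```python
from typing import NamedTuple

class Primer(NamedTuple):
    name: str
    chrom: str
    start: int  # 1-based, inclusive
    end: int    # 1-based, inclusive

def snps_in_primer(primer: Primer, known_snps: dict[str, set[int]]) -> list[int]:
    """Return sorted positions of known SNPs inside the primer-binding site."""
    positions = known_snps.get(primer.chrom, set())
    return sorted(p for p in positions if primer.start <= p <= primer.end)

# Hypothetical coordinates and SNP positions, for illustration only.
known_snps = {"chr1": {1005, 1500}}
fwd = Primer("fwd", "chr1", 1000, 1020)
rev = Primer("rev", "chr1", 1580, 1600)

for primer in (fwd, rev):
    hits = snps_in_primer(primer, known_snps)
    status = f"redesign (SNPs at {hits})" if hits else "ok"
    print(primer.name, status)
```

A SNP under a primer can prevent one allele from amplifying, so a heterozygous variant may appear absent or homozygous in the Sanger trace. This is the allelic dropout mechanism described above.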

Scenario 2: Validation of Critical Research Findings for Publication

In research publications, particularly those reporting novel, high-impact genetic findings, Sanger validation is often a requirement from journal reviewers to ensure the robustness of the data.

- Evidence and Data: The credibility of a research claim is paramount. Sanger sequencing provides an orthogonal method to confirm that a variant is not an NGS-specific artifact. This is especially true for novel mutations that constitute the central evidence of a study. Research has shown that discrepancies between NGS and Sanger can sometimes originate from Sanger-specific issues like allelic dropout (ADO); therefore, careful experimental design is essential [18].

- Experimental Protocol: The methodology is similar to the clinical diagnostic protocol but is often applied to a wider range of variant types, including those in complex genomic regions. Key steps include:

  - Primer Design: Using tools like Primer3 to design primers that amplify a 500-800 bp region encompassing the variant [7] [18].

  - Template Preparation: Using high-quality, purified DNA (e.g., from plasmid minipreps or gel-extracted PCR products) as a template for the sequencing reaction [19].

  - Bidirectional Sequencing: Sequencing the same amplicon from both forward and reverse primers to achieve comprehensive coverage and confirm the variant call [7].
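Bidirectional confirmation amounts to checking that the forward read and the reverse-complemented reverse read agree at the variant position. The sketch below works on base-called strings and assumes, for simplicity, that both reads have already been trimmed to the same amplicon interval. Real chromatograms with heterozygous (mixed) peaks need trace-level inspection, which this example does not attempt.

```python
_COMPLEMENT = str.maketrans("ACGTacgt", "TGCAtgca")

def reverse_complement(seq: str) -> str:
    """Return the reverse complement of a DNA sequence."""
    return seq.translate(_COMPLEMENT)[::-1]

def confirmed_on_both_strands(fwd_read: str, rev_read: str,
                              index: int, alt_base: str) -> bool:
    """Check for alt_base at index (0-based, forward orientation) in both reads.

    Assumes both reads were trimmed to span the same amplicon interval.
    """
    rev_as_fwd = reverse_complement(rev_read)
    return fwd_read[index] == alt_base and rev_as_fwd[index] == alt_base

# Toy reads: the forward read carries a T at index 4, and the reverse read
# is the reverse complement of the same variant-bearing sequence.
fwd_read = "ACGTTAGC"
rev_read = "GCTAACGT"
print(confirmed_on_both_strands(fwd_read, rev_read, index=4, alt_base="T"))
```

A variant seen in only one direction is a warning sign for a dye blob, a primer artifact, or poor trace quality near the read ends.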

Scenario 3: Verification of Genome Editing Outcomes

The field of genome editing (e.g., with CRISPR-Cas9) relies heavily on Sanger sequencing to confirm the precise nature of induced mutations, such as insertions or deletions (indels).

- Evidence and Data: Specialized computational tools (TIDE, ICE, DECODR) have been developed to deconvolute complex Sanger sequencing chromatograms from edited heterogeneous cell populations into quantitative indel frequencies [20]. A 2024 study systematically compared these tools and found that while they perform well with simple indels, their estimates can vary with more complex edits, underscoring the need for careful tool selection and interpretation, with Sanger as the foundational data source [20].

- Experimental Protocol:

  - PCR Amplification: The genomic target site surrounding the CRISPR-Cas cut site is amplified from a pool of edited cells.

  - Sanger Sequencing: The PCR products are directly subjected to Sanger sequencing, which produces mixed-base chromatograms at the site of editing due to heterogeneity.

  - Computational Analysis: The sequencing trace data (.ab1 files) from the edited pool and a wild-type control are uploaded to a web tool (e.g., ICE or DECODR). The algorithm decomposes the complex trace to estimate the percentage of indels and their size distribution [20].
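Once a decomposition tool has estimated the share of each indel size, the headline numbers follow from simple aggregation. The sketch below assumes a hypothetical input format, a mapping from indel size in base pairs (0 meaning unedited) to its estimated share. It does not reproduce the actual output schema of TIDE, ICE, or DECODR.

```python
def summarize_indels(shares: dict[int, float]) -> dict[str, float]:
    """Summarize an indel size distribution.

    shares maps indel size in bp (negative = deletion, 0 = unedited)
    to its estimated share of the sequenced pool, in any consistent unit.
    """
    total = sum(shares.values())
    edited = sum(v for size, v in shares.items() if size != 0)
    # Sizes that are not multiples of 3 shift the reading frame.
    frameshift = sum(v for size, v in shares.items() if size % 3 != 0)
    return {
        "indel_pct": 100 * edited / total,
        "frameshift_pct": 100 * frameshift / total,
    }

# Hypothetical decomposition result, for illustration only.
example = {0: 40, -1: 30, 1: 20, -3: 10}
print(summarize_indels(example))
```

Separating total editing from frameshift-causing editing matters for knockout experiments, where in-frame indels may leave protein function partly intact.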

Scenario 4: Resolving Discrepancies or Ambiguous NGS Data

When NGS data is ambiguous, has low quality scores, or conflicts with phenotypic or other molecular data, Sanger sequencing is the definitive method for resolution.

- Evidence and Data: A 2020 study reported three cases of discrepancy between high-quality NGS calls and the initial Sanger sequencing result. Upon reinvestigation, all three NGS variants were confirmed to be correct, with the Sanger errors attributed to allelic dropout (ADO) caused by unknown SNPs in the primer-binding region. This highlights that in cases of discrepancy, the NGS result should not be automatically assumed false, and re-designing Sanger primers is a critical step [18].

- Experimental Protocol: The process involves:

  - Re-examination of NGS Data: Inspecting the BAM file for alignment issues, strand bias, and low mapping quality at the variant site.

  - Re-design of Sanger Primers: Designing new primers that bind to a different, unique genomic region to avoid suspected SNPs that cause ADO [18].

  - Re-sequencing: Performing Sanger sequencing with the new primers to obtain a clear, unambiguous result.

Decision Workflows and Visualization

The following diagram illustrates the decision-making process for determining when Sanger verification is necessary, integrating the scenarios and quality thresholds discussed.

Decision Workflow for Sanger Verification

The Scientist's Toolkit: Essential Reagents and Materials

Successful Sanger verification relies on high-quality reagents and careful experimental preparation. The following table details key solutions and their functions.

Table 2: Essential Research Reagent Solutions for Sanger Verification

| Item | Function | Key Considerations |
|---|---|---|
| High-Purity Template DNA | Serves as the substrate for the sequencing reaction. | Plasmid, PCR product, or genomic DNA with OD260/OD280 ratio of ~1.8-2.0 [19]. Concentration: 10-100 ng/μL depending on type [19]. |
| Optimized Sequencing Primers | Provides the starting point for DNA polymerase. | 18-25 bases in length; designed to avoid secondary structures and SNPs in binding sites [18] [19]. |
| BigDye Terminator Kit | Fluorescently labeled dideoxynucleotides (ddNTPs) for chain termination. | The core chemistry for cycle sequencing. Requires optimization of dilution and usage to reduce costs [18]. |
| DNA Polymerase | Enzyme that catalyzes the template-dependent DNA synthesis. | High-fidelity enzymes are preferred. Typical use: 0.5-1.0 U per 10 μL reaction [19]. |
| Capillary Electrophoresis Instrument | Separates DNA fragments by size and detects fluorescent labels. | Instruments like ABI 3500 Series perform automated electrophoresis, data collection, and base calling [21]. |

The paradigm for Sanger verification of NGS findings is shifting from a routine, blanket practice to a targeted, evidence-based one. The data show that NGS is highly accurate and that its false positives can be effectively predicted using quality metrics like depth of coverage and allele frequency. Sanger sequencing remains an indispensable tool in the molecular biologist's arsenal, but its application is now focused on key scenarios: clinical reporting of low-quality variants, cornerstone research findings, genome editing verification, and resolution of technical discrepancies. By adopting this refined approach, researchers and diagnosticians can optimize resources while maintaining the highest standards of data integrity.

The Complementary Roles of NGS and Sanger in Modern Genomics Workflows

Next-generation sequencing (NGS) and Sanger sequencing represent complementary technological pillars in modern genomic analysis. While NGS provides unprecedented throughput for discovering genetic variants across entire genomes or targeted gene panels, Sanger sequencing remains the gold standard for confirming these findings with exceptional accuracy [22] [23]. This complementary relationship is particularly crucial in clinical diagnostics and drug development, where verifiable accuracy is paramount for patient care and regulatory approval. The integration of both technologies creates a powerful workflow that leverages the discovery power of NGS with the verification reliability of Sanger sequencing, establishing a robust framework for genomic analysis across research and clinical applications.

Technical Comparison: NGS vs. Sanger Sequencing

The fundamental differences between NGS and Sanger sequencing technologies dictate their respective roles in genomic workflows. Understanding their technical specifications, advantages, and limitations enables researchers to deploy each method strategically.

Table 1: Technical Specifications and Performance Comparison

| Parameter | Sanger Sequencing | Next-Generation Sequencing (NGS) |
|---|---|---|
| Sequencing Principle | Chain termination with dideoxynucleotides (ddNTPs) [22] | Massively parallel sequencing [23] |
| Throughput | Low (single fragment per reaction) [23] | High (millions of fragments simultaneously) [23] [24] |
| Read Length | 500-1000 base pairs [25] [9] | 50-400 base pairs (short-read platforms) [26] |
| Accuracy | >99.9% (gold standard) [22] [23] | >99% (with sufficient coverage) [23] |
| Variant Detection Sensitivity | 15-20% [26] | <1% [26] |
| Cost-Effectiveness | Ideal for small projects, individual genes [23] [24] | Cost-effective for large projects, entire genomes [23] [24] |
| Time per Run | 20 minutes - 3 hours [26] | 48 hours (for standard NGS workflows) [26] |
| Key Applications | Variant confirmation, single gene testing, plasmid verification [22] [27] | Whole genome/exome sequencing, gene panel testing, transcriptomics [23] |
| Data Analysis Complexity | Minimal bioinformatics required [23] | Complex, requires specialized bioinformatics [23] |

Sanger sequencing operates on the principle of chain termination using fluorescently labeled dideoxynucleotides (ddNTPs) that lack the 3'-hydroxyl group necessary for DNA strand elongation [22]. When incorporated during DNA synthesis, these ddNTPs randomly terminate growing DNA strands, producing fragments of varying lengths that are separated by capillary electrophoresis to determine the nucleotide sequence [22] [24].
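The chain-termination principle can be illustrated with a toy model. The sketch below is not a simulation of real chemistry: it assumes an idealised reaction in which termination happens exactly once at every position, ignores strand orientation, and uses a hypothetical function name (`sanger_read`). It only shows how ordering fragments by length and reading each terminal base recovers the sequence.

```python
def sanger_read(template: str) -> str:
    """Toy model: recover a sequence from chain-terminated fragments."""
    complement = {"A": "T", "C": "G", "G": "C", "T": "A"}

    # The polymerase synthesises the complement of the template strand
    # (5'/3' orientation is ignored here for simplicity).
    synthesized = "".join(complement[base] for base in template.upper())

    # Idealised assumption: a ddNTP terminates synthesis once at every
    # position, giving one fragment of each possible length.
    fragments = [synthesized[:i] for i in range(1, len(synthesized) + 1)]

    # Capillary electrophoresis separates fragments by size, shortest first.
    fragments.sort(key=len)

    # The fluorescent label on each fragment's terminal ddNTP gives one base call.
    return "".join(fragment[-1] for fragment in fragments)


read = sanger_read("ATGC")
```

In a real reaction, termination is random and each fragment length is represented by many molecules, which is why peak height and baseline noise in the chromatogram matter for base calling.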

In contrast, NGS technologies employ massively parallel sequencing, simultaneously determining the sequence of millions to billions of DNA fragments [23] [24]. This high-throughput approach enables comprehensive genomic analysis but generates shorter reads than Sanger sequencing. The most commonly used NGS technique, sequencing by synthesis (SBS), involves amplifying and sequencing DNA fragments on a solid surface or in emulsion droplets, with nucleotide incorporation detected through various signal detection methods [24].

The exceptional accuracy of Sanger sequencing (>99.9%) establishes it as the reference standard for validating genetic variants, particularly for clinical applications [22] [23]. Meanwhile, NGS provides superior sensitivity for detecting low-frequency variants present in minor cell populations, with variant detection thresholds below 1% compared to Sanger's 15-20% sensitivity limit [26]. This makes NGS particularly valuable for oncology applications where detecting somatic mutations in heterogeneous tumor samples is critical.

Experimental Evidence: Validation Studies and Performance Metrics

Robust experimental studies have quantified the concordance between NGS and Sanger sequencing, providing empirical evidence to guide their complementary implementation in genomic workflows.

Table 2: Experimental Validation Studies of NGS-Sanger Concordance

| Study Focus | Sample Size | Key Findings | Concordance Rate |
|---|---|---|---|
| Targeted NGS Panel Validation [14] | 77 patient samples, 1080 SNVs, 124 indels | 100% concordance for recurrent variants in unrelated samples | 100% for SNVs |
| Large-Scale Exome Sequencing Validation [7] | 684 exomes, over 5,800 NGS-derived variants | Only 19 NGS variants not initially validated by Sanger; 17 confirmed with redesigned primers | 99.965% overall |
| Nanopore vs Sanger in Oncohematology [26] | 164 samples, 174 analyzed regions across 15 genes | Supported implementation of MinION technology for routine variant detection | 99.43% |
| NGS-Sanger Comparison in Clinical Context [14] | 7 samples from the 1000 Genomes Project, 762 unique variants | High concordance with 1000 Genomes phase 1 data; all discrepancies resolved with additional data | 97.1% |

The remarkably high concordance rates demonstrated in these studies, particularly for single nucleotide variants (SNVs), call into question the utility of routine orthogonal Sanger validation for all NGS findings. The large-scale evaluation by the ClinSeq project, which analyzed over 5,800 NGS-derived variants, revealed an exceptional validation rate of 99.965% [7]. The authors concluded that "a single round of Sanger sequencing is more likely to incorrectly refute a true positive variant from NGS than to correctly identify a false positive variant from NGS," suggesting that routine Sanger validation of NGS variants has limited utility [7].

However, this does not eliminate the need for Sanger confirmation in specific scenarios. The same study found that when NGS variants failed Sanger validation, the issues were typically resolved by redesigning sequencing primers, indicating that primer design and genomic context can impact verification success [7]. Insertion-deletion variants (indels) may also require Sanger sequencing for precise characterization of their genomic location, even when initially detected by NGS [14].

Methodological Guide: Experimental Workflows and Protocols

Implementing an effective NGS-Sanger validation workflow requires careful experimental design and execution. The following section outlines standard protocols for orthogonal verification of NGS findings.

NGS Variant Detection Workflow

Diagram 1: NGS Variant Detection Workflow

The NGS variant detection process begins with sample preparation and DNA extraction using standardized methods such as salting-out protocols or column-based kits [7]. For targeted sequencing approaches, solution-hybridization capture systems (e.g., SureSelect, TruSeq) enrich specific genomic regions of interest [7]. Following library preparation, massively parallel sequencing occurs on platforms such as Illumina GAIIx or HiSeq series, generating millions to billions of short reads [7]. Image analysis and base calling transform raw signals into sequence data, which is then aligned to reference genomes (e.g., hg19) using tools like NovoAlign [7]. Variant calling identifies potential mutations, with quality thresholds such as minimum depth of coverage (>100x) and quality scores (e.g., MPG score ≥10) ensuring reliable variant detection [7].

Sanger Sequencing Validation Protocol

Diagram 2: Sanger Validation of NGS Variants

The Sanger validation workflow initiates with selecting NGS-derived variants requiring confirmation, prioritizing clinically significant mutations or those with borderline quality metrics [28]. PCR and sequencing primers are designed using specialized tools (e.g., PrimerTile, Primer3) that avoid known polymorphisms and optimize annealing conditions [7]. For the sequencing reaction, template DNA is amplified with fluorescently labeled ddNTPs using DNA polymerase, generating chain-terminated fragments [22]. Post-amplification cleanup removes excess primers and unincorporated nucleotides before capillary electrophoresis separates fragments by size [22] [24]. Fluorescence detection identifies the terminal ddNTP at each position, generating chromatograms for sequence determination [22]. Finally, sequence alignment tools compare results with reference sequences and the original NGS data to confirm variants [28].

Table 3: Essential Research Reagent Solutions for NGS-Sanger Workflows

| Reagent/Kit | Application | Key Features | Representative Examples |
|---|---|---|---|
| DNA Extraction Kits | Nucleic acid purification from various sample types | High purity, yield, and integrity | Salting-out method (Qiagen) [7] |
| Target Enrichment Systems | Selective capture of genomic regions for NGS | Comprehensive coverage, uniformity | SureSelect (Agilent), TruSeq (Illumina) [7] |
| NGS Library Prep Kits | Preparation of sequencing libraries | Efficiency, minimal bias | Illumina library prep kits [7] |
| DNA Polymerase | PCR amplification for Sanger sequencing | High fidelity, processivity | Optimized enzymes with proofreading activity [9] |
| Cycle Sequencing Kits | Sanger sequencing reactions | Fluorescent ddNTP incorporation | BigDye Terminator kits [7] |
| Capillary Electrophoresis Kits | Fragment separation for Sanger sequencing | High resolution, sensitivity | Applied Biosystems kits [28] |

Quality Control and Troubleshooting

Successful implementation of NGS-Sanger workflows requires rigorous quality control measures. For NGS data, ensure sufficient depth of coverage (>100x for heterozygous variants) and high quality scores at variant positions [7] [14]. For Sanger validation, examine chromatograms for clean baseline separation between peaks and strong signal intensity throughout the sequence [22]. When Sanger fails to confirm an NGS variant, consider redesigning sequencing primers to avoid problematic genomic regions, increasing template DNA concentration, or re-checking NGS read alignment and variant calling parameters [7]. For indels, careful inspection of both forward and reverse Sanger sequences is essential to determine exact breakpoints [14].

Application Scenarios and Decision Framework

The complementary use of NGS and Sanger sequencing varies across research and clinical contexts, with specific applications benefiting from their integrated implementation.

Clinical Diagnostics and Genetic Testing

In clinical settings, NGS enables comprehensive testing for heterogeneous conditions through multi-gene panels, whole exome sequencing, or whole genome sequencing [26] [23]. For definitive diagnosis of monogenic disorders or confirmation of pathogenic variants, Sanger sequencing provides the requisite verification [22] [27]. This is particularly important for heritable conditions like BRCA-related cancers or cystic fibrosis, where diagnostic accuracy directly impacts patient management [22] [23]. In oncology, NGS identifies low-frequency somatic mutations in tumor samples, while Sanger confirms key therapeutic markers [26] [27].

Research Applications

In basic research, NGS facilitates discovery-based studies including novel variant identification, transcriptomic profiling, and epigenomic characterization [23]. Sanger sequencing then verifies key findings through orthogonal validation [27] [28]. Additional research applications include confirming genome editing outcomes (e.g., CRISPR-Cas9 modifications), validating plasmid constructs, and verifying synthetic biology constructs [27] [9]. The high accuracy of Sanger sequencing makes it indispensable for these applications where sequence precision is critical.

Decision Framework for Method Selection

Selecting the appropriate sequencing method or combination depends on multiple factors:

- Project Scale: For sequencing individual genes or limited targets (<20), Sanger sequencing is typically more practical and cost-effective. For analyzing hundreds to thousands of genes or entire genomes, NGS is preferable [22] [23].

- Variant Frequency: For detecting low-frequency variants (<15-20%) in heterogeneous samples, NGS provides superior sensitivity [26].

- Accuracy Requirements: For clinical applications requiring the highest possible accuracy for specific variants, Sanger confirmation remains essential [22] [28].

- Turnaround Time: For urgent clinical decisions (e.g., NPM1 mutational status in AML), targeted approaches like Sanger or third-generation sequencing (e.g., Nanopore) may provide faster results than standard NGS workflows [26].

- Resource Availability: Sanger requires minimal bioinformatics infrastructure, while NGS demands significant computational resources and expertise [23].
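The factors above can be condensed into a small rule-of-thumb function. This is a minimal sketch, not a validated decision tool: the `Project` fields and the function name are hypothetical, the 20-target cut-off comes from the list above, and using 0.20 as the allele-fraction boundary is an assumption taken from the upper end of the quoted 15-20% range.

```python
from dataclasses import dataclass


@dataclass
class Project:
    n_targets: int              # number of genes or amplicons to sequence
    min_allele_fraction: float  # lowest variant allele fraction that must be detected
    has_bioinformatics: bool    # whether NGS analysis infrastructure is available


def suggest_method(project: Project) -> str:
    """Return a first-pass suggestion based on the decision factors."""
    # Low-frequency variants fall below Sanger's detection limit
    # (assumed here as 0.20, the upper end of the 15-20% range).
    if project.min_allele_fraction < 0.20:
        return "NGS"
    # Small projects (<20 targets) are typically cheaper and simpler with Sanger.
    if project.n_targets < 20:
        return "Sanger"
    # Large projects favour NGS, but only if the data can be analysed.
    if not project.has_bioinformatics:
        return "NGS (requires external bioinformatics support)"
    return "NGS"


suggestion = suggest_method(Project(n_targets=3, min_allele_fraction=0.5, has_bioinformatics=False))
```

Turnaround time and accuracy requirements are deliberately left out of the sketch because they depend on local instrument availability and reporting policy.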

Emerging Trends and Future Directions

Sequencing technologies continue to evolve, with new developments enhancing their complementary roles. Third-generation sequencing technologies, such as Oxford Nanopore MinION, offer potential alternatives with real-time sequencing, long reads, and rapid turnaround times [26]. Recent studies demonstrate 99.43% concordance between MinION and Sanger sequencing in oncohematological diagnostics, suggesting potential for replacing Sanger in some verification scenarios [26].

Technical improvements in Sanger sequencing continue to optimize its performance, with developments in capillary array design, fluorescent detection systems, and DNA polymerase engineering enhancing throughput, accuracy, and read length [9]. Microfluidic chip technologies enable miniaturization and automation of Sanger sequencing, potentially increasing its efficiency for validation workflows [9].

Bioinformatics advances are streamlining the validation process through automated primer design, integrated data analysis platforms, and visualization tools that compare NGS and Sanger results [28]. Software solutions like Minor Variant Finder enhance Sanger's sensitivity for detecting low-frequency mutations, bridging one of the key sensitivity gaps between Sanger and NGS [28].

NGS and Sanger sequencing maintain complementary rather than competitive roles in modern genomics. NGS provides unparalleled discovery power for comprehensive genomic analysis, while Sanger delivers verifiable accuracy for confirmatory testing. This synergistic relationship creates a robust framework for genomic analysis across basic research, clinical diagnostics, and therapeutic development. As sequencing technologies evolve, their core strengths will likely maintain this complementary dynamic, with verification standards adapting to technological improvements rather than abandoning the fundamental principle of orthogonal validation that ensures reliability in genomic medicine.

The field of genomic sequencing has undergone a remarkable transformation over the past two decades, driven primarily by the advent of next-generation sequencing (NGS) technologies. As these high-throughput methods entered clinical and research laboratories, a critical question emerged: how should variants detected by NGS be validated to ensure accuracy? For years, Sanger sequencing served as the undisputed "gold standard" for orthogonal confirmation of NGS findings. However, as NGS technologies have matured, with demonstrated error rates below 0.1% under optimal conditions, the practice of reflexive Sanger validation has faced increasing scrutiny [7] [12]. This guide examines the evolving standards for validation practices, presenting comparative experimental data that inform current professional guidelines and laboratory practices.

The Era of Mandatory Sanger Validation

When NGS first transitioned from research to clinical applications, regulatory uncertainty and a natural caution regarding new technologies made Sanger confirmation a standard practice. This approach was rooted in Sanger's long-established reputation for accuracy, with a documented single-base sequencing error rate below 0.001% [26].

The fundamental rationale for this practice included:

- Error Prevention: Bioinformatic pipelines for NGS were initially novel and required verification

- Regulatory Compliance: Laboratories sought to minimize risk in clinical reporting

- Technical Limitations: Early NGS platforms had higher error rates in challenging genomic regions

During this period, Sanger validation was considered an essential quality control measure, particularly for variants with potential clinical significance [12].

Paradigm Shift: Evidence Challenging Routine Sanger Validation

Landmark Validation Studies

Comprehensive studies began questioning the utility of reflexive Sanger validation as NGS technology matured. A systematic evaluation published in Clinical Chemistry examined this practice using data from the ClinSeq project, which provided a unique opportunity to compare NGS variants with high-throughput Sanger sequencing on the same samples [7].

Table 1: Large-Scale Comparison of NGS vs. Sanger Sequencing

| Study Parameter | 19-Gene Analysis | 5-Gene Analysis (684 participants) |
|---|---|---|
| Total NGS Variants | 234 variants | >5,800 variants |
| Discrepant Variants | 0 | 19 initially |
| Resolution | N/A | 17 confirmed by redesigned Sanger primers, 2 had low NGS quality scores |
| Final Validation Rate | 100% | 99.965% |

This study demonstrated that a single round of Sanger sequencing was statistically more likely to incorrectly refute a true positive NGS variant than to correctly identify a false positive [7]. The authors concluded that routine orthogonal Sanger validation has limited utility and should not be considered a best practice standard.
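The arithmetic behind the reported validation rate can be reproduced approximately. The sketch below assumes exactly 5,800 variants; the source only states "over 5,800", so the denominator is an assumption and the result will differ slightly from the published 99.965%.

```python
def validation_rate(total_variants: int, unconfirmed: int) -> float:
    """Percentage of NGS variants confirmed by Sanger sequencing."""
    return 100.0 * (total_variants - unconfirmed) / total_variants


TOTAL = 5800  # assumed; the study reports "over 5,800" variants

# After the first Sanger round, 19 variants were not confirmed.
initial_rate = validation_rate(TOTAL, 19)

# After primer redesign, 17 of the 19 were confirmed, leaving 2 unconfirmed.
final_rate = validation_rate(TOTAL, 2)

print(f"initial: {initial_rate:.3f}%  final: {final_rate:.3f}%")
```

The gap between the two figures is the point of the study: most of the apparent discrepancies disappeared once the Sanger assay itself was repeated with new primers.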

Case Studies Highlighting Sanger Limitations

Further evidence emerged from smaller, focused studies. An analysis of 945 NGS variants from 218 patients revealed only three discrepancies with Sanger sequencing [12]. Upon deeper investigation, all three NGS calls were validated, with the discrepancies attributed to allelic dropout (ADO) during polymerase chain reaction or Sanger sequencing reactions. This phenomenon, often related to unpredictable private variants on primer-binding regions, highlighted that Sanger sequencing itself is not error-free [12].

Current Guidelines and Evolving Standards

Professional organizations have responded to this accumulating evidence by refining their recommendations regarding validation practices.

Key Guideline Updates

The Association for Molecular Pathology (AMP) and the College of American Pathologists have developed frameworks that emphasize an error-based approach to validation rather than reflexive Sanger confirmation [29]. These guidelines encourage laboratories to:

- Identify potential sources of errors throughout the analytical process

- Address these potential errors through test design, method validation, or quality controls

- Focus validation efforts on specific variant types or genomic regions where NGS performance is known to be challenging

The AMP and National Society of Genetic Counselors have further addressed this issue through a joint working group, establishing recommendations for standardizing orthogonal confirmation practices [10]. While specific guidelines vary between organizations, the overall trend is toward limiting Sanger validation to specific circumstances rather than applying it universally.

Emerging Technologies and Validation Paradigms

The Rise of Third-Generation Sequencing

New sequencing technologies continue to reshape the validation landscape. Oxford Nanopore Technologies (ONT) sequencing represents a promising approach that combines long-read capabilities with decreasing turnaround times [26].

Table 2: Performance Comparison of Sequencing Technologies

| Parameter | Sanger Sequencing | NGS (Illumina) | Nanopore (MinION) |
|---|---|---|---|
| Single-Read Accuracy | >99% [26] | >99% [26] | >99% [26] |
| Read Length | 400-900 bp [26] | 50-500 bp [26] | Up to megabase scales [26] |
| Sensitivity | 15-20% [26] | 1% [26] | <1% [26] |
| Error Rate | 0.001% [26] | 0.1-1% [26] | ~5% (platform-dependent) [26] |
| Main Applications | SNVs, INDELs [26] | SNVs, INDELs [26] | SNVs, INDELs, complex structural variants [26] |

A 2025 study comparing Sanger sequencing with MinION technology for oncohematological diagnostics demonstrated 99.43% concordance, supporting the implementation of this technology as a viable alternative to Sanger for validation purposes [26].

Methodological Advancements Reducing Validation Needs

Technical improvements across the NGS workflow have substantially enhanced reliability:

- Hybrid capture-based enrichment methods using longer probes that tolerate mismatches better, reducing allelic dropout [29]

- Enhanced bioinformatics pipelines with improved alignment algorithms and variant calling

- Quality metrics such as Phred scores (>Q30), depth of coverage (>30x), and allele balance thresholds (>0.2) that reliably predict validation success [12]
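To make the quality metrics in the last item concrete: a Phred score Q corresponds to an error probability of 10^(-Q/10), so Q30 means roughly one miscalled base in 1,000. The sketch below converts scores and applies the three thresholds quoted above; the function names are illustrative, and combining the thresholds with a strict logical AND is an assumption rather than something the source specifies.

```python
def phred_to_error_prob(q: float) -> float:
    """Convert a Phred quality score to the probability of a wrong base call."""
    return 10 ** (-q / 10)


def passes_quality(phred: float, depth: int, allele_balance: float) -> bool:
    """Apply the thresholds quoted in the text: >Q30, >30x depth, allele balance >0.2."""
    return phred > 30 and depth > 30 and allele_balance > 0.2


q30_error = phred_to_error_prob(30)   # 0.001, i.e. 1 error in 1,000 bases
good_call = passes_quality(phred=35, depth=50, allele_balance=0.45)
shallow_call = passes_quality(phred=35, depth=20, allele_balance=0.45)
```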

Modern Validation Framework: A Risk-Based Approach

Contemporary best practices employ a nuanced, context-dependent strategy for variant validation:

Scenarios Warranting Orthogonal Confirmation

- Borderline quality metrics: Variants with coverage depth, quality scores, or allele frequencies near established thresholds

- Clinically critical findings: Variants with significant therapeutic implications

- Technically challenging regions: GC-rich areas, homopolymer stretches, or regions with pseudogenes

- Novel variant types: When laboratories first implement detection of complex variants

Circumstances Where Sanger Validation Adds Limited Value

- High-quality NGS variants: Those with strong quality metrics in well-validated assays

- Established variant types: In genes and variant classes with previously demonstrated high concordance

- High-throughput research settings: Where the 99.9%+ validation rate makes reflexive Sanger confirmation impractical

Evolution of Validation Standards: This diagram illustrates the transition from reflexive Sanger confirmation to a modern, risk-based approach that uses quality metrics to determine when orthogonal validation is necessary.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Sequencing Validation Studies

| Reagent/Material | Function | Application Notes |
|---|---|---|
| SureSelect Target Enrichment | Hybrid capture-based library preparation | Used in large-scale validation studies; tolerates mismatches better than amplification-based methods [7] |
| TruSeq / SureSelect Exome Capture | Solution-hybridization exome capture | Provides comprehensive coverage of coding regions for variant discovery [7] |
| BigDye Terminator v3.1 | Sanger sequencing chemistry | Standard for cycle sequencing reactions; used in discrepant resolution [7] |
| Primer3 Algorithm | Primer design for Sanger validation | Critical for avoiding variants in primer-binding sites that cause allelic dropout [12] |
| ForenSeq mtDNA Kits | Targeted NGS for mitochondrial DNA | Enables comparison of NGS vs. Sanger for forensic applications [30] |
| MinION Flow Cells | Nanopore-based sequencing | Allows third-generation sequencing validation with long-read capabilities [26] |

The evolution of validation standards from reflexive Sanger confirmation to a nuanced, evidence-based framework reflects the maturation of NGS technologies. Current practices emphasize that validation strategies should be driven by performance data rather than tradition. As one comprehensive study concluded, "Validation of NGS-derived variants using Sanger sequencing has limited utility, and best practice standards should not include routine orthogonal Sanger validation of NGS variants" [7]. This paradigm shift enables more efficient resource allocation in both clinical and research settings while maintaining the rigorous standards necessary for accurate genomic interpretation.

Implementing a Robust Sanger Verification Workflow for NGS Findings

Next-generation sequencing (NGS) has revolutionized genomic research and clinical diagnostics, enabling the simultaneous analysis of millions of DNA fragments. However, the transition from massively parallel sequencing to clinically actionable results requires a robust validation framework to ensure accuracy and reliability. Sanger sequencing has long served as the gold standard for orthogonal confirmation of NGS-derived variants, but blanket validation of all variants is inefficient and costly. This guide provides a comprehensive, evidence-based framework for designing an effective validation pipeline that strategically employs Sanger confirmation where most needed, comparing this approach with emerging alternatives to optimize resource allocation while maintaining stringent quality standards in genomic research and drug development.

NGS and Sanger Sequencing: A Comparative Technological Foundation

Understanding the fundamental differences between NGS and Sanger sequencing technologies is crucial for designing an effective validation pipeline. Each method has distinct strengths and limitations that inform their complementary roles in variant verification.

Table 1: Fundamental Comparison of NGS and Sanger Sequencing Technologies

| Feature | Next-Generation Sequencing (NGS) | Sanger Sequencing |
|---|---|---|
| Throughput | Millions to billions of fragments simultaneously [31] | One DNA fragment at a time [31] |
| Speed | Entire human genome in hours [31] | Slow, suitable for single genes [31] |
| Cost | Under $1,000 per whole human genome [31] | High for large-scale applications [31] |
| Read Length | Short (50-600 base pairs, typically) [31] | Long (500-1000 base pairs) [31] |
| Best Applications | Whole genomes, exomes, large panels, novel discovery [31] [32] | Targeted validation, confirmation of specific variants [31] |
| Accuracy | Excellent for high-quality variants (≥99.72% concordance with Sanger) [13] | Considered gold standard [13] [18] |

The massively parallel approach of NGS creates unprecedented throughput but introduces specific error profiles that necessitate validation, particularly for clinical applications. Sanger sequencing provides precise, long-read accuracy but lacks the scalability for comprehensive genomic analysis [31]. Effective pipeline design leverages the strengths of both technologies while minimizing their respective limitations.

Establishing Quality Thresholds for Strategic Sanger Validation

Recent evidence demonstrates that establishing quality thresholds for NGS variants can dramatically reduce the need for Sanger confirmation while maintaining exceptional accuracy. Key quality metrics have emerged as reliable predictors of variant authenticity.

Table 2: Evidence-Based Quality Thresholds for Reducing Sanger Validation

| Quality Parameter | Proposed Threshold | Concordance with Sanger | Application Considerations |
|---|---|---|---|
| Coverage Depth (DP) | ≥15-20x [13] | 100% [13] | PCR-free protocols reduce bias; lower may be sufficient with high AF [13] |
| Allele Frequency (AF) | ≥0.25-0.30 [13] [18] | 100% [13] | Higher thresholds reduce false positives; consider tumor purity in oncology [13] |
| Variant Quality (QUAL) | ≥100 [13] | 100% [13] | Caller-specific (GATK HaplotypeCaller); not directly transferable between pipelines [13] |
| Filter Status | PASS [13] | High (99.72% overall) [13] | Variants failing FILTER should always be validated [13] |

Research on 1756 WGS variants demonstrated that implementing caller-agnostic thresholds (DP ≥15, AF ≥0.25) reduced the validation burden to just 4.8% of variants while still flagging every false positive for confirmation (100% sensitivity for false positives). Using caller-specific QUAL scores (≥100) further reduced necessary validation to only 1.2% of variants [13]. These thresholds provide a robust framework for prioritizing Sanger confirmation, although laboratory-specific validation may be necessary to account for pipeline-specific characteristics.
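A threshold-based triage step of this kind might look like the following sketch. The `Variant` class, field names, and `strategy` parameter are hypothetical; treating the caller-agnostic (DP/AF) and caller-specific (QUAL) thresholds as two alternative strategies is an interpretation of the text, and any laboratory would need to validate its own cut-offs.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    dp: int             # depth of coverage at the variant position
    af: float           # variant allele frequency (0-1)
    qual: float         # caller-specific QUAL score (e.g. from GATK HaplotypeCaller)
    filter_status: str  # value of the VCF FILTER column


def needs_sanger(variant: Variant, strategy: str = "agnostic") -> bool:
    """Return True if the variant should be sent for Sanger confirmation."""
    # Variants that fail the caller's own filters are always confirmed.
    if variant.filter_status != "PASS":
        return True
    if strategy == "agnostic":
        # Caller-agnostic thresholds quoted in the text: DP >= 15 and AF >= 0.25.
        return variant.dp < 15 or variant.af < 0.25
    if strategy == "qual":
        # Caller-specific threshold quoted in the text: QUAL >= 100.
        return variant.qual < 100
    raise ValueError(f"unknown strategy: {strategy}")


high_quality = Variant(dp=40, af=0.5, qual=500.0, filter_status="PASS")
shallow = Variant(dp=10, af=0.5, qual=150.0, filter_status="PASS")
flags = [needs_sanger(high_quality), needs_sanger(shallow), needs_sanger(shallow, "qual")]
```

The `shallow` example shows why the two strategies can disagree: the same variant is flagged under the depth rule but accepted under the QUAL rule.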

Diagram 1: Variant validation decision workflow showing how quality thresholds dramatically reduce Sanger confirmation burden.

Comparative Analysis of Validation Approaches

Traditional vs. Threshold-Based Sanger Validation

The evolution from blanket Sanger confirmation to strategic, quality-based validation represents a significant advancement in NGS pipeline efficiency.

Table 3: Traditional vs. Modern Validation Approaches

| Validation Approach | Sanger Utilization | Advantages | Limitations |
|---|---|---|---|
| Traditional Blanket Validation | 100% of NGS variants | Maximum accuracy, compliance with early ACMG guidelines [18] | Resource-intensive, time-consuming, cost-ineffective [13] [18] |
| Quality-Threshold Approach | 1.2-4.8% of NGS variants [13] | Efficient resource allocation, faster turnaround, maintained accuracy [13] | Requires initial validation to establish lab-specific thresholds [13] |
| Variant-Type Specific Approach | All indels + low-quality SNVs | Balances comprehensive indel validation with SNV efficiency [14] | Still requires significant Sanger resources for indel-rich regions [14] |

Multiple studies have demonstrated that quality-focused approaches maintain exceptional accuracy while dramatically improving efficiency. One analysis of 919 comparisons between NGS and Sanger showed 100% concordance for high-quality variants [14], while another study of 1756 WGS variants demonstrated 99.72% overall concordance [13].

Alternative Validation Methods

While Sanger sequencing remains the established validation standard, several alternative approaches are emerging:

- Orthogonal NGS Methods: Using a different NGS platform or bioinformatics pipeline for confirmation provides scalability but may perpetuate systematic errors [13].

- Consensus Calling: Employing multiple variant callers (GATK, SAMtools, DeepVariant) increases specificity but requires sophisticated bioinformatics infrastructure [33].

- Array-Based Validation: Microarray genotyping offers high-throughput confirmation but is limited to known variants and cannot detect novel findings [33].

Research indicates that while these alternatives show promise, they have limitations. One evaluation found that using DeepVariant to validate low-quality variants (QUAL <100) achieved only an F1-score of 0.76, indicating significant limitations compared to Sanger [13].
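Of the alternatives listed above, consensus calling is the simplest to sketch in code. The example below assumes each caller's output has already been reduced to a set of `(chrom, pos, ref, alt)` tuples; parsing VCF files and normalising indel representations, which matter in practice, are out of scope, and the function name is illustrative.

```python
from collections import Counter


def consensus_calls(callsets: dict, min_callers: int = 2) -> set:
    """Keep variants reported by at least `min_callers` of the variant callers.

    `callsets` maps a caller name to a set of (chrom, pos, ref, alt) tuples.
    """
    # Count how many callers reported each variant.
    support = Counter(variant for calls in callsets.values() for variant in calls)
    return {variant for variant, n in support.items() if n >= min_callers}


a = ("chr1", 12345, "A", "G")
b = ("chr2", 67890, "C", "T")
c = ("chr3", 13579, "G", "GA")

callsets = {
    "gatk": {a, b},
    "samtools": {a, c},
    "deepvariant": {a, b},
}

majority = consensus_calls(callsets, min_callers=2)   # a and b
unanimous = consensus_calls(callsets, min_callers=3)  # only a
```

Raising `min_callers` trades sensitivity for specificity, and variants supported by a single caller are natural candidates for Sanger confirmation.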

Implementing the Validation Pipeline: Protocols and Methodologies

NGS Variant Calling with GATK Best Practices

The foundation of an effective validation pipeline begins with optimized NGS data processing:

- Read Mapping and Alignment: Map raw sequencing reads (FASTQ) to the reference genome (e.g., GRCh37/hg19, GRCh38/hg38) using BWA-MEM [18].

- Duplicate Marking: Identify and mark PCR duplicates using tools like Picard to prevent artificial inflation of variant support [33].

- Local Realignment and Base Quality Recalibration: Perform local realignment around indels and base quality score recalibration (BQSR) using GATK. This critical step significantly improves variant calling accuracy, with one study showing it improved positive predictive value from 35.25% to 88.69% for certain variant types [33].

- Variant Calling: Call variants using GATK HaplotypeCaller, which outperforms UnifiedGenotyper and SAMtools mpileup, demonstrating positive predictive values of 95.37% versus 69.89% for tool-specific variants [33].

- Variant Quality Score Recalibration (VQSR): Apply VQSR to filter variants based on a Gaussian mixture model of annotation values, which provides slightly better specificity (99.79% vs. 99.56%) compared to hard filtering [33].

Sanger Sequencing Validation Protocol

For variants requiring orthogonal confirmation, implement a rigorous Sanger sequencing protocol:

- Primer Design: Design primers flanking the target variant using Primer3, with optimal length of 18-25 bases, annealing temperature of 50-65°C, and GC content of 40-60%. Verify specificity with BLAST and check for polymorphisms in primer-binding sites [18] [19].

- PCR Amplification: Perform PCR in 25 μL reactions containing 50 ng genomic DNA, 10 pmol of each primer, 2.5 mM dNTPs, and FastStart Taq DNA Polymerase. Use touchdown PCR or optimized annealing temperatures for challenging regions [18] [19].

- PCR Product Purification: Treat amplicons with Exonuclease I and FastAP Thermosensitive Alkaline Phosphatase to remove excess primers and nucleotides [18].

- Sequencing Reaction and Analysis: Perform sequencing using BigDye Terminator chemistry on an ABI 3500xL Genetic Analyzer or similar platform. Analyze chromatograms using specialized software (e.g., Variant Reporter, Sequencher) with particular attention to indel regions where strand slippage can cause artifacts [18].
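The length and GC-content criteria from the primer design step are easy to check programmatically before ordering oligos. The sketch below covers only those two criteria; it does not estimate annealing temperature, run a specificity search, or check for polymorphisms in the binding site, and the function names are illustrative rather than part of Primer3.

```python
def gc_content(seq: str) -> float:
    """Percentage of G and C bases in a primer sequence."""
    seq = seq.upper()
    return 100.0 * sum(base in "GC" for base in seq) / len(seq)


def check_primer(seq: str) -> list:
    """Return a list of problems; an empty list means both criteria are met."""
    problems = []
    # Length criterion from the protocol: 18-25 bases.
    if not 18 <= len(seq) <= 25:
        problems.append(f"length {len(seq)} nt is outside 18-25 nt")
    # GC-content criterion from the protocol: 40-60%.
    gc = gc_content(seq)
    if not 40.0 <= gc <= 60.0:
        problems.append(f"GC content {gc:.1f}% is outside 40-60%")
    return problems


ok_primer = check_primer("ATGCATGCATGCATGCATGC")  # 20 nt, 50% GC
at_rich = check_primer("ATATATATATATATATAT")      # 18 nt, 0% GC
```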

Resolution of Discrepant Results

Despite rigorous quality control, occasional discrepancies between NGS and Sanger results occur. A 2020 study analyzing 945 validated variants identified three discrepancies, all attributable to Sanger limitations rather than NGS errors [18]. Systematic troubleshooting should include:

- Allelic Dropout (ADO): Caused by polymorphisms in primer-binding sites preventing amplification of one allele. Resolution: Redesign primers or use different amplification conditions [18].

- Strand-Bias Artifacts: Uneven representation of forward and reverse strands in NGS data. Resolution: Examine alignment metrics and consider positional effects [33].

- Mapping Errors: Misalignment of reads in complex genomic regions. Resolution: Visualize reads in IGV and consider local assembly approaches [33].
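For the allelic dropout case, a useful first check is whether any known variant in the sample falls inside a primer-binding site. The sketch below assumes 1-based inclusive coordinates and represents variants as simple `(chrom, pos)` pairs, for example taken from the sample's own NGS calls; the `Interval` class and function name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interval:
    chrom: str
    start: int  # 1-based, inclusive
    end: int    # 1-based, inclusive


def variants_in_primer_sites(primers: list, variants: list) -> list:
    """Return (primer, position) pairs where a variant lies inside a primer site."""
    hits = []
    for chrom, pos in variants:
        for primer in primers:
            if primer.chrom == chrom and primer.start <= pos <= primer.end:
                hits.append((primer, pos))
    return hits


primers = [Interval("chr1", 100, 120), Interval("chr1", 400, 420)]
sample_variants = [("chr1", 110), ("chr1", 250), ("chr2", 410)]

hits = variants_in_primer_sites(primers, sample_variants)
```

Any hit suggests the primer pair should be redesigned before concluding that the NGS call was a false positive.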

Essential Research Reagent Solutions

Table 4: Key Reagents for NGS and Sanger Validation Workflows

| Reagent/Category | Specific Examples | Function | Considerations |
|---|---|---|---|
| NGS Library Prep | Agilent SureSelect, HaloPlex, Magnis | Target enrichment, library construction | SureSelect for exome, HaloPlex for panels; PCR-free preferred [13] [18] |
| NGS Sequencing | Illumina MiSeq, NextSeq; MGI DNBSEQ | Sequencing platform | MiSeq for panels, NextSeq for exomes; DNBSEQ as alternative [18] [34] |
| Variant Callers | GATK HaplotypeCaller, DeepVariant | Identify variants from aligned reads | GATK gold standard; DeepVariant for challenging variants [13] [33] |
| Sanger Sequencing | BigDye Terminator, FastStart Taq | Dideoxy sequencing reaction | BigDye standard for capillary electrophoresis [18] [19] |
| Nucleic Acid Purification | Tecan Freedom EVO, phenol-chloroform, column kits | DNA extraction and purification | Automated platforms increase throughput; manual methods for challenging samples [18] [19] |

Diagram 2: Systematic troubleshooting protocol for resolving discrepancies between NGS and Sanger sequencing results.

The evolution of NGS validation strategies from comprehensive Sanger confirmation to targeted, quality-driven approaches represents a maturation of genomic technologies. Evidence consistently demonstrates that implementing evidence-based thresholds for depth of coverage (≥15x), allele frequency (≥0.25), and variant quality (QUAL ≥100) can reduce Sanger validation to just 1.2-4.8% of variants while maintaining exceptional accuracy (99.72-100% concordance) [13].

Future developments will likely continue to reduce reliance on orthogonal validation through improved sequencing chemistries, enhanced bioinformatics algorithms, and standardized quality metrics. Emerging technologies like single-molecule sequencing and advanced computational methods may eventually obviate the need for Sanger confirmation entirely, but currently, a strategic, evidence-based validation pipeline remains essential for clinical-grade genomic analysis.

For research and drug development applications, implementing the tiered validation framework outlined in this guide provides an optimal balance of accuracy and efficiency, ensuring reliable variant confirmation while maximizing resource utilization in precision medicine initiatives.

Primer Design Best Practices for Sanger Verification of Variants

Next-Generation Sequencing (NGS) has revolutionized genomics by enabling the simultaneous analysis of millions of DNA fragments, providing unprecedented scale for variant discovery across entire genomes, exomes, and targeted panels [35] [23]. Despite its transformative impact, the American College of Medical Genetics and Genomics (ACMG) has historically recommended orthogonal validation of NGS-identified variants before reporting, a role predominantly filled by Sanger sequencing [13]. This verification step is crucial in clinical diagnostics and drug development, where reporting accuracy directly affects patient care and research conclusions.

Sanger sequencing remains the gold standard for confirmatory testing due to its exceptional per-base accuracy, which exceeds 99.99% for short reads [35] [23]. This verification process typically targets specific variants initially identified through NGS screening, leveraging Sanger's reliability for definitive confirmation of single-nucleotide variants (SNVs) and small insertions/deletions (indels) [35] [36]. The foundation of successful Sanger verification lies in optimal primer design, which ensures specific amplification and accurate sequencing of the target region containing the putative variant.

Comparative Analysis: NGS and Sanger Sequencing Performance

Technical and Performance Characteristics

The decision to use Sanger sequencing for NGS validation stems from their complementary strengths. While NGS offers superior throughput and sensitivity for variant discovery, Sanger provides unparalleled accuracy for confirming individual variants [23]. This synergy creates a powerful workflow: NGS enables broad discovery across thousands of targets, while Sanger delivers definitive verification of critical findings.

Table 1: Performance Comparison Between NGS and Sanger Sequencing

| Feature | Next-Generation Sequencing (NGS) | Sanger Sequencing |
|---|---|---|
| Fundamental Method | Massively parallel sequencing [35] | Chain termination with ddNTPs [35] |
| Single-Read Accuracy | >99% [26] | >99.99% (Gold Standard) [35] [23] |
| Typical Read Length | 50-500 base pairs [26] | 500-1000 base pairs [35] [36] |
| Variant Detection Sensitivity (lowest detectable allele frequency) | 1-5% (can be <1% with deep sequencing) [26] [23] | 15-20% [26] |
| Primary Role in Verification | Variant discovery [23] | Orthogonal confirmation [13] |
| Best Applications | Whole genomes, exomes, transcriptomes, targeted panels [35] [23] | Single-gene targets, validation of NGS findings, plasmid sequencing [35] [23] |

Establishing Quality Thresholds for Sanger Validation

Recent studies indicate that not all NGS-identified variants require Sanger confirmation. Establishing quality thresholds allows laboratories to define "high-quality" variants that can be reported without orthogonal validation, significantly reducing time and cost [13]. Research on Whole Genome Sequencing (WGS) data demonstrates that applying specific quality filters can drastically reduce the validation burden while maintaining accuracy.

Table 2: Quality Thresholds for Filtering NGS Variants for Sanger Validation

| Parameter Type | Quality Threshold | Effect on Variant Set | Concordance with Sanger |
|---|---|---|---|
| Caller-Agnostic (DP & AF) | Depth of Coverage (DP) ≥ 15; Allele Frequency (AF) ≥ 0.25 [13] | Reduces variants requiring validation to ~4.8% of initial set [13] | 100% [13] |
| Caller-Specific (QUAL) | QUAL ≥ 100 (for HaplotypeCaller) [13] | Reduces variants requiring validation to ~1.2% of initial set [13] | 100% [13] |
| Combined Threshold | FILTER = PASS, QUAL ≥ 100, DP ≥ 20, AF ≥ 0.2 [13] | Filters out 210 "low-quality" variants from a set of 1756 [13] | 100% for high-quality bin [13] |
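The combined threshold in Table 2 can be expressed as a simple triage rule. The sketch below is illustrative only: the `Variant` record and its field names are assumptions made for this example (they are not a VCF parser or the cited study's code), and the default cut-offs are the combined values from the table. Laboratories would need to establish their own thresholds for their caller and platform.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    """Hypothetical, already-parsed variant record (not a VCF parser)."""
    variant_id: str
    filter_status: str      # VCF FILTER column, e.g. "PASS"
    qual: float             # VCF QUAL value
    depth: int              # depth of coverage (DP)
    allele_fraction: float  # allele frequency (AF) of the variant allele


def needs_sanger(v, min_qual=100.0, min_dp=20, min_af=0.2):
    """Return True when a variant misses any high-quality criterion."""
    high_quality = (
        v.filter_status == "PASS"
        and v.qual >= min_qual
        and v.depth >= min_dp
        and v.allele_fraction >= min_af
    )
    return not high_quality


# Invented example records.
variants = [
    Variant("var1", "PASS", 850.0, 42, 0.48),
    Variant("var2", "PASS", 64.0, 35, 0.31),   # low QUAL
    Variant("var3", "PASS", 310.0, 12, 0.50),  # low depth
    Variant("var4", "LowQual", 400.0, 60, 0.45),
]

to_confirm = [v.variant_id for v in variants if needs_sanger(v)]
print(to_confirm)
```

Only variants that fail at least one criterion are routed to Sanger confirmation; the rest fall into the "high-quality" bin described above.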

Primer Design Fundamentals for Sanger Verification

Core Parameters for Optimal Primer Design

Proper primer design is arguably the most critical factor in successful Sanger sequencing, as even advanced sequencers cannot compensate for poorly designed primers [37]. The following parameters represent the consensus best practices from major sequencing centers and peer-reviewed literature [37] [38].

Table 3: Critical Parameters for Sanger Sequencing Primer Design

| Parameter | Optimal Range | Rationale & Practical Considerations |
|---|---|---|
| Primer Length | 18-24 nucleotides [37] [38] | Balances specificity with binding efficiency [37]. |
| GC Content | 40-60% [37] | Ideal for stable hybridization; extremes risk instability [37]. |
| GC Clamp | 1-2 G/C bases at the 3' end [38] | Promotes stable binding; avoid >3 G/C in final five bases [37]. |
| Melting Temperature (Tₘ) | 50-65°C (sweet spot: 60-64°C) [37] | Critical for binding specificity; primer pairs should have Tₘ within 2°C [37]. |
| Amplicon Size | 200-500 bp [37] | Optimal for Sanger sequencing; can extend to ~1000 bp [23]. |
| 3' End Placement | ≥50-60 bp upstream of variant [38] | Ensures the variant falls within high-quality sequencing read. |
| Avoid | Homopolymeric runs, repetitive elements, SNPs in primer site [37] | Prevents mispriming and amplification failures [37]. |
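Several of the parameters in Table 3 can be checked with a few lines of code. The sketch below is a rough pre-screen, not a substitute for a design tool: the function names are invented for this example, and the Tₘ estimate uses the basic GC-content formula Tₘ = 64.9 + 41 × (G + C − 16.4) / N, which is an assumption made here for simplicity. Dedicated tools rely on nearest-neighbor thermodynamics and salt corrections and will generally report different values.

```python
def evaluate_primer(seq):
    """Check one primer against the length, GC, Tm, and GC-clamp ranges in Table 3."""
    seq = seq.upper()
    n = len(seq)
    gc = seq.count("G") + seq.count("C")
    gc_pct = 100.0 * gc / n
    # Basic GC-content Tm approximation (assumption: no salt or nearest-neighbor terms).
    tm = 64.9 + 41.0 * (gc - 16.4) / n
    tail_gc = sum(1 for base in seq[-5:] if base in "GC")
    return {
        "length_ok": 18 <= n <= 24,
        "gc_ok": 40.0 <= gc_pct <= 60.0,
        "tm_ok": 50.0 <= tm <= 65.0,
        # G/C at the 3' terminus, but no more than 3 G/C in the final five bases.
        "clamp_ok": seq[-1] in "GC" and tail_gc <= 3,
        "gc_pct": gc_pct,
        "tm_estimate": round(tm, 1),
    }


def tm_matched(fwd, rev, max_diff=2.0):
    """True when the two primers' estimated Tm values differ by at most max_diff degrees."""
    diff = evaluate_primer(fwd)["tm_estimate"] - evaluate_primer(rev)["tm_estimate"]
    return abs(diff) <= max_diff


# Invented example sequence, used only to exercise the checks.
report = evaluate_primer("AGCTGACCTGAAGGCTCTGC")
print(report)
```

Because the Tₘ formula is approximate, a primer that narrowly fails `tm_ok` here may still be acceptable once evaluated with a thermodynamic model, and vice versa.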

Avoiding Common Structural Pitfalls

Primer sequences must be screened for structural problems that compromise sequencing results:

- Secondary Structures: Hairpins and internal folding prevent primer binding to the target DNA. Avoid primers with strong intramolecular folding, particularly when the folding ΔG is negative enough to compete with primer-template annealing [37].

- Self-Dimers and Cross-Dimers: Self-dimers occur when two copies of the same primer anneal, while cross-dimers form between forward and reverse primers. Both reduce primer availability and may generate non-specific products [37]. Use thermodynamic tools to screen designs, preferring ΔG values less negative than -9 kcal/mol for potential dimers [37].

- Runs and Repeats: Avoid long runs of the same nucleotide (e.g., "AAAA") and long di-nucleotide repeats (e.g., "ATATAT"), which can cause mispriming or polymerase slippage [37].
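The sequence-level pitfalls above lend themselves to a quick automated pre-screen. The following sketch flags homopolymer runs, dinucleotide repeats, and 3' self-complementarity. It is a crude heuristic under stated assumptions: the function name and default cut-offs (runs of 4, three dinucleotide repeats, a 5-base 3' window) are choices made for this example, and the self-complementarity test is a string match rather than the ΔG calculation that thermodynamic tools perform.

```python
import re

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Reverse complement of an uppercase A/C/G/T sequence."""
    return seq.translate(COMPLEMENT)[::-1]


def structural_flags(primer, run_len=4, dinuc_repeats=3, three_prime_k=5):
    """Return a list of heuristic warnings for one primer sequence."""
    primer = primer.upper()
    flags = []
    # Same base repeated run_len or more times, e.g. "AAAA".
    if re.search(r"([ACGT])\1{%d,}" % (run_len - 1), primer):
        flags.append("homopolymer_run")
    # Two different bases repeated as a unit, e.g. "ATATAT".
    if re.search(r"([ACGT])(?!\1)([ACGT])(?:\1\2){%d,}" % (dinuc_repeats - 1), primer):
        flags.append("dinucleotide_repeat")
    # If the reverse complement of the 3' tail occurs in the primer, a second
    # copy of the primer could anneal there by its 3' end (self-dimer risk).
    tail = primer[-three_prime_k:]
    if reverse_complement(tail) in primer:
        flags.append("3prime_self_complementarity")
    return flags


# Invented example sequences.
for candidate in (
    "AGCTGACCTGAAGGCTCTGC",
    "GGAAAATTCCGGAAGGTCAC",
    "CATATATGCCGTAGCTAGGC",
    "AGCTGACCTGAAGGCTGAATTC",
):
    print(candidate, structural_flags(candidate))
```

Primers that pass this screen should still be evaluated with a thermodynamic tool, since hairpins and cross-dimers between forward and reverse primers are not assessed here.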

Experimental Protocol: A Workflow for Primer Design and Validation

Step-by-Step Primer Design Methodology

The following workflow provides a robust, reproducible protocol for designing primers for Sanger verification of NGS variants, grounded in best practices from NCBI Primer-BLAST, Primer3, and published guidelines [37].

Diagram: Sanger verification primer design protocol.

Step 1: Define Target Region

- Select a target region of 100-200 base pairs flanking the NGS-identified variant [37]. Obtain the reference sequence from a curated database like NCBI or Ensembl using FASTA format or accession numbers [37].

Step 2: Utilize Primer Design Tools

- Use NCBI Primer-BLAST, which integrates Primer3's design engine with BLAST-based specificity checking [37] [39]. Input your target sequence or accession number and set constraints including product size (200-500 bp), Tₘ limits (58-62°C), and organism specificity [37].

Step 3: Evaluate Candidate Primers

- Screen candidate primers for GC content (40-60%), Tₘ values (within 2°C for paired primers), and specificity using Primer-BLAST reports [37]. Eliminate primers with potential secondary structures, self-dimers, or cross-dimers using tools like OligoAnalyzer [37].

Step 4: In Silico Validation

- Confirm primer specificity using Primer-BLAST against the appropriate genome background [37] [40]. Simulate amplicons via in silico PCR tools (e.g., UCSC in silico PCR) to verify expected product size and absence of spurious products [37] [39].

Step 5: Wet-Lab Testing and Optimization

- Order small-scale test primers and optimize PCR conditions using touchdown PCR if necessary [37]. Validate both primers individually before combined use, and sequence the resulting amplicon to confirm specific amplification of the target region containing the variant [37].
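The in silico checks in Steps 2 and 4 can be illustrated with a toy exact-match simulation. This is a minimal sketch under strong assumptions: it searches a single template string for perfect, unique primer matches, whereas Primer-BLAST and in silico PCR tools search a whole genome and tolerate mismatches. The function names, the synthetic template, and the 0-based coordinates are all invented for the example; the size and distance limits follow the ranges given in this guide (200-500 bp amplicon, variant at least 50 bp from each primer's 3' end).

```python
import random

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Reverse complement of an uppercase A/C/G/T sequence."""
    return seq.translate(COMPLEMENT)[::-1]


def simulate_amplicon(template, fwd, rev, variant_index):
    """Exact-match in silico PCR on one template (0-based coordinates).

    Returns None unless each primer has exactly one perfect binding site
    and the sites are in the expected orientation.
    """
    template, fwd, rev = template.upper(), fwd.upper(), rev.upper()
    rev_site = reverse_complement(rev)  # reverse primer site on the top strand
    if template.count(fwd) != 1 or template.count(rev_site) != 1:
        return None
    start = template.find(fwd)
    rev_start = template.find(rev_site)
    if rev_start < start + len(fwd):
        return None
    return {
        "amplicon_size": rev_start + len(rev_site) - start,
        # Distance from each primer's 3' terminal base to the variant.
        "fwd_distance": variant_index - (start + len(fwd) - 1),
        "rev_distance": rev_start - variant_index,
    }


def meets_design_rules(result, min_size=200, max_size=500, min_distance=50):
    """Apply the amplicon size and primer-to-variant distance limits."""
    return (
        result is not None
        and min_size <= result["amplicon_size"] <= max_size
        and result["fwd_distance"] >= min_distance
        and result["rev_distance"] >= min_distance
    )


# Synthetic 400 bp template with a hypothetical variant at index 200.
rng = random.Random(0)
template = "".join(rng.choice("ACGT") for _ in range(400))
fwd_primer = template[50:70]
rev_primer = reverse_complement(template[330:350])

result = simulate_amplicon(template, fwd_primer, rev_primer, variant_index=200)
print(result, meets_design_rules(result))
```

A `None` result signals missing or ambiguous binding on the supplied template, which in practice would prompt a genome-wide specificity check before ordering primers.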

Computational Tools for Large-Scale Primer Design

For laboratories validating numerous NGS variants, automated primer design tools provide significant advantages. Tools like CREPE (CREate Primers and Evaluate) leverage Primer3 for design and In-Silico PCR (ISPCR) for specificity analysis, enabling parallelized primer design with integrated off-target assessment [39]. These pipelines are particularly valuable for clinical laboratories developing standardized protocols for verifying NGS findings across multiple disease genes.

Essential Reagents and Research Solutions

Successful Sanger verification requires high-quality reagents and materials throughout the workflow. The following table details key research solutions essential for robust primer design and validation.

Table 4: Essential Research Reagent Solutions for Sanger Verification

| Reagent/Material | Function in Workflow | Application Notes |
|---|---|---|
| High-Fidelity DNA Polymerase | PCR amplification of target region from genomic DNA | Reduces amplification errors; essential for accurate variant verification [37]. |
| Primer Design Software (Primer-BLAST, Primer3) | In silico primer design and parameter optimization | Automates design process; ensures adherence to thermodynamic parameters [37] [39]. |
| Specificity Checking Tools (OligoAnalyzer, BLAST) | Screening for secondary structures and off-target binding | Identifies potential dimers and hairpins; confirms target specificity [37]. |
| Capillary Electrophoresis System | Separation and detection of Sanger sequencing fragments | Industry standard for sequencing; provides high-quality trace data [35] [23]. |
| Template DNA Purification Kits | Isolation of high-quality genomic DNA | Pure template essential for efficient amplification and sequencing [23]. |
| Sequence Analysis Software | Analysis of chromatograms and variant calling | Enables base calling and visualization of sequence traces [41]. |

Sanger sequencing remains an indispensable tool for verifying NGS-derived variants, particularly in clinical diagnostics and precision medicine applications. The reliability of this verification process hinges on meticulous primer design that adheres to established thermodynamic parameters and incorporates comprehensive specificity checking. By implementing the quality thresholds and design principles outlined in this guide, researchers can establish robust, efficient workflows for validating NGS findings. The continued importance of Sanger verification in the NGS era underscores the enduring value of this foundational technology in ensuring the accuracy and reproducibility of genomic data.

Sample Preparation and PCR Amplification for Reliable Results

In genomic research and clinical diagnostics, the reliability of next-generation sequencing (NGS) findings hinges upon the initial steps of sample preparation and PCR amplification. When those findings are to be verified by Sanger sequencing, this foundation becomes paramount. The accuracy and reproducibility of sequencing data are directly influenced by the quality of the starting material, the methods used for nucleic acid extraction, and the subsequent library construction [42]. Proper sample preparation minimizes artifacts and biases that could otherwise lead to false positives or negatives, and so helps determine whether Sanger confirmation remains a necessary safeguard or becomes redundant [7] [12].

The central role of sample preparation has been demonstrated through large-scale studies comparing NGS results with traditional Sanger sequencing. When NGS variants meet specific quality thresholds—including sufficient coverage depth and allele frequency—their validation rate by Sanger sequencing can reach 99.965%, challenging the routine necessity of orthogonal confirmation for high-quality data [7] [13]. This guide systematically compares sample preparation methods and their performance impacts, providing researchers with evidence-based protocols to maximize data reliability from the outset.

Sample Preparation Methods: A Comparative Analysis

The journey toward reliable sequencing results begins with the extraction of high-quality nucleic acids from biological samples. Significant methodological variations exist at this critical first step, each with distinct implications for downstream applications and result verification.

DNA Extraction and Sample Type Considerations