Shallow Shotgun Sequencing: A Cost-Effective Path to Species-Level Microbiome Insights for Biomedical Research

Abstract

This article explores shallow shotgun metagenomic sequencing (SSMS) as a powerful, cost-effective methodology bridging the gap between 16S rRNA gene sequencing and deep shotgun metagenomics. Tailored for researchers and drug development professionals, we detail how SSMS provides species-level taxonomic resolution and functional profiling at a cost comparable to 16S sequencing. The content covers foundational principles, practical methodological applications, troubleshooting for complex samples, and rigorous validation against other techniques. Evidence demonstrates SSMS's lower technical variation, superior reproducibility, and growing utility in clinical and large-cohort studies, positioning it as an optimal tool for advancing microbiome research in biomedical science.

Beyond 16S: How Shallow Shotgun Sequencing Unlocks Deeper Microbiome Insights

Core Principles and Definition

What is Shallow Shotgun Sequencing?

Shallow shotgun metagenomic sequencing is an untargeted approach to microbiome analysis that sequences the entire genomic DNA content of a sample at a lower depth (typically 0.5 to 5 million reads) than deep shotgun sequencing. Unlike 16S rRNA amplicon sequencing, which targets only specific hypervariable regions, shallow shotgun sequencing randomly fragments and sequences all DNA, enabling taxonomic profiling of bacteria, archaea, fungi, and viruses without the bias introduced by target-specific PCR amplification [1] [2].

This method fills the critical gap between 16S sequencing and deep shotgun metagenomics, providing species-level taxonomic resolution at a cost comparable to 16S methodologies (approximately $80 per sample) while avoiding the primer biases and limited taxonomic coverage of amplicon-based approaches [2]. The core principle involves fragmenting all DNA in a sample into small pieces, sequencing these fragments, and then computationally reconstructing microbial community composition by aligning sequences to reference databases [1].

How does shallow shotgun sequencing differ from deep shotgun and 16S sequencing?

Table: Comparison of Metagenomic Sequencing Approaches

| Parameter | 16S rRNA Sequencing | Shallow Shotgun Sequencing | Deep Shotgun Sequencing |
|---|---|---|---|
| Sequencing Depth | ~30,000 reads [2] | ~100,000 to 5 million reads [2] | >1 million reads [2] |
| Taxonomic Resolution | Genus level (rarely species) [2] | Species level [3] [2] | Species to strain level [2] |
| Taxonomic Coverage | Bacteria and archaea only [2] | Bacteria, archaea, fungi, viruses [1] [2] | All domains including eukaryotes [1] |
| Functional Profiling | Not available [2] | Limited but possible [2] | Comprehensive [1] |
| PCR Amplification | Required (introduces bias) [2] | Not required [2] | Not required [1] |
| Host DNA Contamination | Not an issue (targeted) [2] | Yes, requires management [1] [2] | Significant issue [1] |
| Cost per Sample | ~$50 [2] | ~$80 [2] | >$150 [2] |
| Computational Requirements | Low [2] | Medium to High [2] | Very High [2] |

Technical Specifications and Sequencing Depth

What is the optimal sequencing depth for shallow shotgun sequencing?

The optimal sequencing depth for shallow shotgun sequencing depends on the specific research goals and sample complexity. For most applications targeting species-level taxonomic profiling, 100,000 to 5 million reads per sample provides sufficient coverage [2]. Studies have demonstrated that sequencing as few as 100,000 reads enables reliable species-level classification with solid statistical significance for many microbial communities [2].

For human microbiome applications, including vaginal, gut, and respiratory samples, depths between 0.5 and 5 million reads have proven effective for accurate community state type determination and pathogen detection [3] [4]. Depths at the low end (100,000 to 1 million reads) often suffice for basic taxonomic profiling, while the upper range (1 to 5 million reads) improves detection sensitivity for low-abundance species and enables limited functional insights [5].

Table: Recommended Sequencing Depth by Application

| Research Application | Recommended Depth | Key Considerations |
|---|---|---|
| Basic Taxonomic Profiling (species level) | 100,000 - 1 million reads [2] | Suitable for most community structure analyses |
| Low-Abundance Species Detection | 1 - 5 million reads [5] | Enhanced sensitivity for rare taxa |
| Clinical Pathogen Detection | 0.5 - 2 million reads [3] | Balance of cost and sensitivity for diagnostics |
| Vaginal CST Classification | 100,000 - 1 million reads [4] | Reliable for community state type determination |
| Limited Functional Insights | 2 - 5 million reads [2] | Basic functional annotation possible |

Experimental Protocol and Workflow

What is the complete workflow for shallow shotgun metagenomic sequencing?

Shallow Shotgun Sequencing Workflow

Sample Collection and Preservation

Proper sample collection is critical for reliable metagenomic results. Use sterile containers to prevent contamination and freeze samples immediately at -20°C or -80°C after collection. For temporary storage, maintain samples at 4°C or use preservation buffers. Avoid freeze-thaw cycles by aliquoting samples before freezing [1].

DNA Extraction Protocol

- Lysis: Break open cells using chemical (enzymes) and mechanical (bead beating) methods to release DNA [1]

- Precipitation: Separate DNA from cellular debris using salt solutions and alcohol [1]

- Purification: Wash precipitated DNA to remove impurities and resuspend in water or buffer [1]

For challenging samples (e.g., spores, soil with humic acids), additional enzymatic treatments or specialized purification may be necessary [1].

Library Preparation for Shallow Shotgun Sequencing

- DNA Fragmentation: Use mechanical or enzymatic methods to fragment DNA into optimal sizes for sequencing (typically 200-500bp) [1]

- Adapter Ligation: Ligate molecular barcodes (index adapters) to identify individual samples after multiplexed sequencing [1]

- Library Cleanup: Purify and size-select DNA fragments to ensure proper insert size and remove adapter dimers [1]

Sequencing Platform Selection

Both Illumina and Oxford Nanopore platforms support shallow shotgun sequencing. Nanopore technology offers advantages for shallow sequencing due to flexible flow cells and multiplexing options, including Flongle flow cells for individual samples or standard flow cells with up to 96-plex capability [4].

Troubleshooting Common Experimental Issues

How can I resolve low library yield in shallow shotgun preparations?

Low library yield is a common challenge that can undermine sequencing success. The table below outlines primary causes and corrective actions:

Table: Troubleshooting Low Library Yield

| Root Cause | Mechanism of Yield Loss | Corrective Action |
|---|---|---|
| Poor Input Quality/Degraded DNA | Enzyme inhibition or fragmentation failure | Re-purify input sample; ensure 260/230 >1.8, 260/280 ~1.8; use fresh wash buffers [6] |
| Sample Contaminants | Residual phenol, EDTA, salts inhibit enzymes | Use clean columns or beads for purification; dilute residual inhibitors if necessary [6] |
| Inaccurate Quantification | UV absorbance overestimates usable material | Use fluorometric methods (Qubit, PicoGreen) instead of NanoDrop [6] |
| Fragmentation Issues | Over- or under-shearing reduces ligation efficiency | Optimize fragmentation parameters; verify size distribution before proceeding [6] |
| Adapter Ligation Efficiency | Poor ligase performance or wrong molar ratios | Titrate adapter:insert ratios; ensure fresh ligase and optimal temperature [6] |
| Overly Aggressive Cleanup | Desired fragment loss during size selection | Optimize bead:sample ratios; avoid bead over-drying [6] |

How can I minimize contamination and host DNA interference?

Host DNA contamination presents a significant challenge in host-associated microbiome studies. These strategies can improve microbial detection:

- Sample Selection: Collect consistent sample types representative of your population [1]

- Collection Protocols: Use sterile techniques and minimize environmental exposure [1]

- DNA Extraction Optimization: Include steps to selectively enrich microbial DNA or deplete host DNA [2]

- Negative Controls: Include extraction and sequencing controls to identify contamination sources [1]

- Bioinformatic Filtering: Remove host reads computationally during analysis [1]

For samples with high host DNA content (e.g., vaginal swabs, tissue biopsies), consider implementing targeted enrichment approaches or increasing sequencing depth to compensate for non-microbial reads [4] [2].
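
The depth compensation described above comes down to simple arithmetic. The sketch below assumes the host read fraction is already known (for example from a pilot run); the function name and example numbers are illustrative and not taken from the cited studies.

```python
import math


def total_reads_needed(target_microbial_reads: int, host_fraction: float) -> int:
    """Total reads to sequence so that the expected number of non-host reads
    reaches the target, given the fraction of reads that map to the host."""
    if not 0.0 <= host_fraction < 1.0:
        raise ValueError("host_fraction must be in [0, 1)")
    return math.ceil(target_microbial_reads / (1.0 - host_fraction))


# Hypothetical example: 500,000 microbial reads wanted, 75% of reads are host.
print(total_reads_needed(500_000, 0.75))
```

Because the required depth grows as 1 / (1 - host fraction), samples dominated by host DNA quickly leave the shallow range, which is why host depletion is usually considered first.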

Data Analysis and Bioinformatics

What are the best practices for analyzing shallow shotgun data?

Taxonomic Profiling

For taxonomic analysis from shallow shotgun data, specialized tools like Meteor2 provide optimized performance for lower-depth datasets. Meteor2 uses environment-specific microbial gene catalogs and has demonstrated 45% improved species detection sensitivity in shallow-sequenced human gut microbiota compared to alternatives like MetaPhlAn4 [5].

The tool supports 10 different ecosystems with 63,494,365 microbial genes clustered into 11,653 metagenomic species pangenomes, enabling comprehensive taxonomic, functional, and strain-level profiling even with limited sequencing depth [5].

Functional Profiling

While deep shotgun sequencing provides more comprehensive functional analysis, shallow sequencing can still yield valuable functional insights. Meteor2 improves functional abundance estimation accuracy by 35% compared to HUMAnN3 based on Bray-Curtis dissimilarity, making it suitable for limited functional annotation from shallow datasets [5].
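
For readers unfamiliar with the metric, the following is a minimal sketch of the standard Bray-Curtis dissimilarity between two abundance profiles. It illustrates the definition only; it is not a description of how the cited benchmark was computed, and the feature names are made up.

```python
def bray_curtis(a: dict[str, float], b: dict[str, float]) -> float:
    """Bray-Curtis dissimilarity: sum|a_i - b_i| / sum(a_i + b_i).
    0.0 means identical profiles, 1.0 means no shared features."""
    features = set(a) | set(b)
    numerator = sum(abs(a.get(f, 0.0) - b.get(f, 0.0)) for f in features)
    denominator = sum(a.get(f, 0.0) + b.get(f, 0.0) for f in features)
    if denominator == 0:
        raise ValueError("both profiles are empty")
    return numerator / denominator


# Hypothetical relative abundances of two functional modules.
estimated = {"module_A": 0.5, "module_B": 0.5}
reference = {"module_A": 0.25, "module_B": 0.75}
print(bray_curtis(estimated, reference))
```

A lower value between an estimated profile and a reference profile indicates a more accurate abundance estimate.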

Analysis Workflow Integration

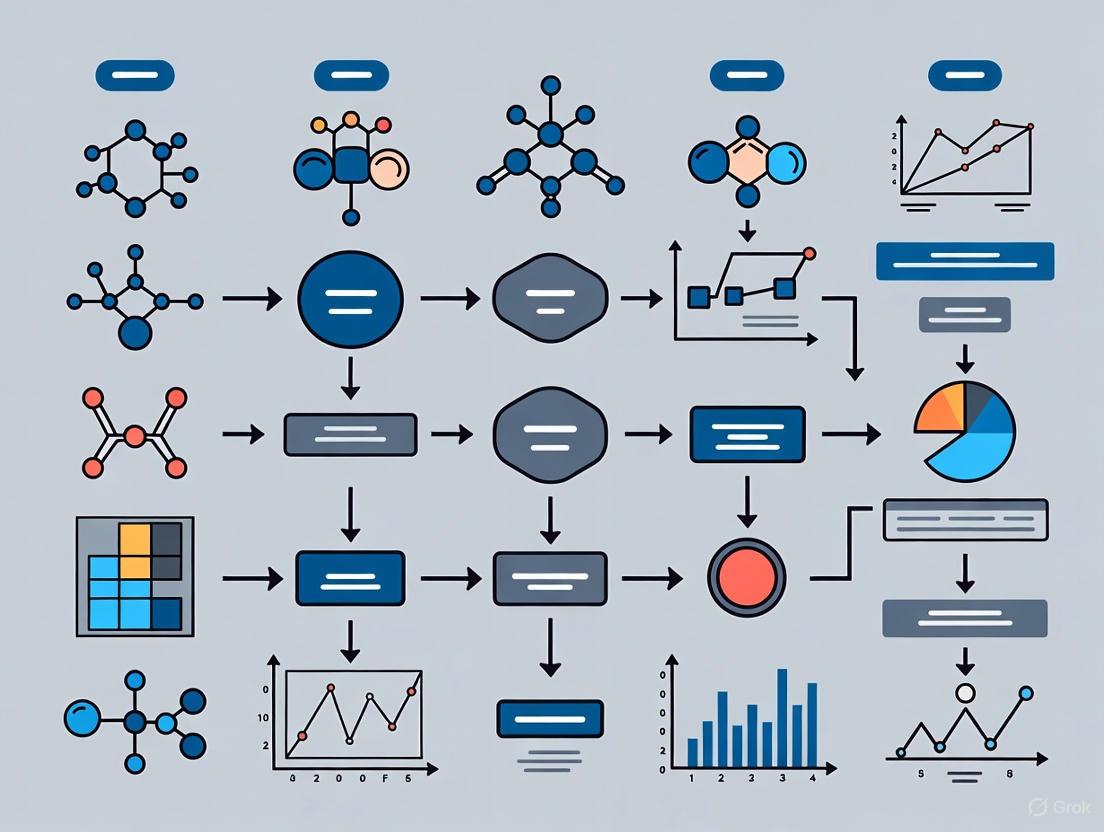

Shallow Shotgun Data Analysis Pipeline

Essential Research Reagents and Materials

What are the key reagents required for successful shallow shotgun sequencing?

Table: Essential Research Reagents for Shallow Shotgun Sequencing

| Reagent/Material | Function | Application Notes |
|---|---|---|
| DNA Extraction Kit | Extracts microbial DNA from samples | Choose kit appropriate for sample type (fecal, soil, tissue) [1] |
| DNA Quantification Reagents | Measures DNA concentration and quality | Fluorometric methods (Qubit) preferred over UV spectrophotometry [6] |
| Library Preparation Kit | Prepares DNA fragments for sequencing | Select kits with efficient low-input performance [1] |
| Size Selection Beads | Purifies DNA fragments by size | Magnetic beads with optimized sample:bead ratios [6] |
| Index Adapters | Multiplexes samples for sequencing | Unique dual indexing recommended to reduce index hopping [1] |
| Quality Control Reagents | Assesses library quality pre-sequencing | BioAnalyzer/TapeStation reagents for fragment analysis [6] |
| Negative Control Reagents | Detects contamination | DNA-free water and extraction controls [1] |

Frequently Asked Questions

Can shallow shotgun sequencing replace 16S rRNA sequencing for routine microbiome studies?

Yes, for many applications, shallow shotgun sequencing provides a superior alternative to 16S sequencing. It offers species-level resolution, detects bacteria, archaea, fungi, and viruses in a single assay, avoids the biases of target-specific PCR amplification, and generates data that can be directly compared across studies [2]. At approximately $80 per sample, it is cost-competitive with 16S sequencing while providing substantially more comprehensive data [2].

How does sequencing depth affect species detection sensitivity in shallow shotgun sequencing?

Sequencing depth directly affects detection sensitivity for low-abundance species. Studies demonstrate that 100,000 reads provide reliable species-level classification for dominant community members, while 1-5 million reads significantly enhance detection of rare taxa [5] [2]. The choice of profiler also matters at shallow depth: in shallow-sequenced human gut microbiota, Meteor2 improved species detection sensitivity by at least 45% compared with MetaPhlAn4 [5].
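
The link between depth and rare-taxon detection can be illustrated with a simple sampling model. The sketch below assumes reads are drawn independently, that a species is "detected" once it collects a minimum number of reads, and that the read count is approximately Poisson distributed; the threshold of 10 reads is a hypothetical choice, and real profilers apply additional criteria.

```python
import math


def detection_probability(depth: int, rel_abundance: float, min_reads: int = 10) -> float:
    """P(at least min_reads reads from a species) under a Poisson model
    with mean depth * rel_abundance (fraction of reads from that species)."""
    lam = depth * rel_abundance
    term = math.exp(-lam)  # Poisson P(X = 0)
    cdf = 0.0
    for i in range(min_reads):
        cdf += term              # accumulate P(X = i)
        term *= lam / (i + 1)    # advance to P(X = i + 1)
    return max(0.0, 1.0 - cdf)


# A species contributing 0.001% of reads, at two depths from the text.
for depth in (100_000, 5_000_000):
    print(depth, round(detection_probability(depth, 1e-5), 4))
```

Under these assumptions a taxon expected to yield about one read at 100,000 reads is almost never called, while the same taxon at 5 million reads is expected to yield about 50 reads and is detected almost always.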

What are the limitations of shallow shotgun sequencing compared to deep sequencing?

The primary limitation is reduced capability for comprehensive functional profiling and genome assembly. While shallow sequencing excels at taxonomic classification, deep sequencing (>1 million reads) is required for detailed functional analysis, pathway reconstruction, and metagenome-assembled genomes [2]. Additionally, shallow sequencing may miss very low-abundance species in complex communities and provides limited strain-level resolution compared to deep sequencing approaches [5] [2].

Can I use shallow shotgun sequencing for clinical diagnostics?

Yes, shallow shotgun sequencing shows significant promise for clinical applications. Studies have demonstrated its effectiveness in detecting pathogens in cystic fibrosis patients, identifying vaginal community state types associated with health outcomes, and profiling microbiomes for diagnostic purposes [3] [4] [7]. The method particularly excels at detecting fastidious or unculturable pathogens that may be missed by traditional culture methods [3].

Technical Support Center

Troubleshooting Guides and FAQs

This section addresses common challenges researchers face when implementing cost-effective shallow shotgun sequencing protocols.

FAQ 1: My sequencing library yield is unexpectedly low. What are the primary causes and solutions?

Low library yield is a common issue that can often be traced to problems early in the preparation workflow [6].

- Potential Cause: Poor quality or contaminated nucleic acid input. Inhibitors like residual salts, phenol, or EDTA can reduce enzyme efficiency in downstream steps [6].

- Solution: Re-purify the input sample using clean columns or beads. Verify sample purity using spectrophotometric ratios (e.g., 260/280 ~1.8, 260/230 > 1.8) and use fluorometric quantification (e.g., Qubit) for greater accuracy than UV absorbance alone [6].

- Potential Cause: Inefficient adapter ligation during library preparation.

- Solution: Titrate the adapter-to-insert molar ratio to find the optimal conditions. Ensure ligase and buffer are fresh and have not expired [6].
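
The purity thresholds quoted above can be turned into a quick pre-library check. This is a minimal sketch: the 260/280 target of about 1.8 and the 260/230 minimum of 1.8 come from the text, while the tolerance of 0.2 around the 260/280 target is an assumption chosen for illustration.

```python
def purity_issues(a260_280: float, a260_230: float,
                  target_280: float = 1.8, tol_280: float = 0.2,
                  min_230: float = 1.8) -> list[str]:
    """Return a list of purity warnings; an empty list means the sample passes."""
    issues = []
    if abs(a260_280 - target_280) > tol_280:  # tolerance is an assumed value
        issues.append(f"260/280 = {a260_280:.2f} (expected ~{target_280})")
    if a260_230 <= min_230:
        issues.append(f"260/230 = {a260_230:.2f} (expected > {min_230})")
    return issues


print(purity_issues(1.82, 2.05))  # clean sample
print(purity_issues(1.50, 1.20))  # likely contaminated; re-purify before library prep
```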

FAQ 2: My sequencing data shows a high rate of adapter dimers. How can I prevent this?

A sharp peak around 70-90 bp in an electropherogram is a clear indicator of adapter-dimer contamination [6].

- Potential Cause: An excessive amount of adapters relative to the target insert DNA.

- Solution: Precisely calculate and optimize the adapter concentration. Increase the rigor of post-ligation cleanup using methods like solid-phase reversible immobilization (SPRI) beads with adjusted bead-to-sample ratios to exclude small fragments [6].

- Potential Cause: Incomplete purification after the ligation step, allowing unligated adapters to carry over.

- Solution: Ensure purification protocols are followed meticulously, including sufficient washing steps and avoidance of bead over-drying, which leads to inefficient elution [6].
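
Optimizing adapter concentration requires converting DNA mass to moles. The sketch below uses the common approximation of about 660 g/mol per base pair of double-stranded DNA; the 10:1 adapter-to-insert ratio in the example is a hypothetical starting point for titration, not a value from the cited source.

```python
AVG_BP_MASS = 660.0  # approximate g/mol per base pair of dsDNA


def dsdna_pmol(mass_ng: float, length_bp: float) -> float:
    """Convert a mass of dsDNA fragments of a given mean length to picomoles."""
    return mass_ng * 1000.0 / (length_bp * AVG_BP_MASS)


def adapter_pmol_for_ratio(insert_ng: float, insert_bp: float, ratio: float) -> float:
    """Picomoles of adapter needed for a given adapter:insert molar ratio."""
    return ratio * dsdna_pmol(insert_ng, insert_bp)


# Hypothetical example: 100 ng of ~350 bp fragments at a 10:1 ratio.
insert = dsdna_pmol(100, 350)
print(round(insert, 3), "pmol insert")
print(round(adapter_pmol_for_ratio(100, 350, 10), 2), "pmol adapter")
```

Working in moles rather than mass makes titrations comparable across libraries with different fragment sizes.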

FAQ 3: The data after a homopolymer repeat (e.g., a run of "AAAAA") becomes noisy and unreadable. What is happening?

This is a classic issue in primer-based (Sanger-type) sequencing reads and is usually caused by polymerase slippage [8] [9].

- Potential Cause: The sequencing polymerase can stutter or disassociate on a stretch of mononucleotides, generating fragments of varying lengths that create a mixed signal [9].

- Solution: There is no reliable way to sequence directly through such regions with standard protocols. The most effective strategy is to design a new sequencing primer that binds just after the homopolymer region or to sequence toward the region from the reverse direction [9].

FAQ 4: My sequence data starts with high quality but then terminates abruptly. Why?

Sudden termination of good-quality sequence is frequently a sign of secondary structures in the DNA template [9].

- Potential Cause: Regions with high GC content or self-complementary sequences can form hairpin loops that the sequencing polymerase cannot pass through [9].

- Solution: Some core facilities offer alternate sequencing chemistries (e.g., "difficult template" protocols) designed to help polymerases resolve these structures. A more reliable solution is to design a new primer that sits directly on or avoids the problematic region altogether [9].

Quantitative Data for Cost-Effective Sequencing

The tables below summarize cost data and specifications relevant for planning shallow shotgun and other metagenomic sequencing projects. All prices are in Canadian Dollars (CAD) unless otherwise noted and are based on academic/government rates [10].

Table 1: Metagenome Sequencing Service Costs (Per Sample)

| Sequencing Platform | Depth (PE Reads) | Data Output (Gb) | Library Prep + Sequencing Cost (CAD) | DNA Extraction Cost (CAD) |
|---|---|---|---|---|
| NextSeq2000 (P3 cell) | 1X (~6 M reads) | 1.8 Gb | $35 | |
| NextSeq2000 (P3 cell) | 2X (~12 M reads) | 3.6 Gb | $35 | |
| NextSeq2000 (P3 cell) | 4X (~24 M reads) | 7.2 Gb | $35 | |
| PacBio Vega (HiFi) | Shallow (~500 Mb HiFi) | ~0.5 Gb | $35 | |
| PacBio Vega (HiFi) | MAG Assembly (~10 Gb HiFi) | ~10 Gb | $3000 | $35 |

Table 2: Client-Prepared Pool Sequencing Run Costs

| Sequencing Platform | Run Type / Output | Typical Sample Capacity (1X) | Academic Cost per Run (CAD) |
|---|---|---|---|
| NextSeq2000 | P1 (~100 M PE reads, 30 Gb) | ~16 samples | $4,000 |
| NextSeq2000 | P3 (~1.2 B PE reads, 360 Gb) | ~192 samples | $11,000 |
| MiSeq i100 | 25M 2x150 bp (~25 M PE reads, 7 Gb) | ~380 samples | $2,800 |
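
When planning a client-prepared pool, run capacity and per-sample sequencing cost follow from the run output and the target depth. The sketch below is a rough planning aid under stated assumptions: it takes 1X to mean about 6 M paired-end reads per sample (as in Table 1), assumes perfectly even pooling, and ignores library preparation, extraction, and QC losses.

```python
def samples_per_run(run_reads: int, reads_per_sample: int) -> int:
    """Maximum number of samples that fit in a run at the target depth."""
    return run_reads // reads_per_sample


def sequencing_cost_per_sample(run_cost: float, n_samples: int) -> float:
    """Run cost divided evenly across pooled samples (sequencing only)."""
    return run_cost / n_samples


# NextSeq2000 P1 run from Table 2 at 1X depth (~6 M PE reads per sample).
n = samples_per_run(100_000_000, 6_000_000)
print(n, "samples,", round(sequencing_cost_per_sample(4000, n), 2), "CAD each")

# NextSeq2000 P3 run at the listed capacity of ~192 samples.
print(round(sequencing_cost_per_sample(11_000, 192), 2), "CAD each")
```

Listed capacities can differ from this simple division because facilities typically round to plate formats and leave headroom for uneven pooling.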

Experimental Protocols for Key Applications

Protocol: Cost-Effectiveness Analysis of a Diagnostic Sequencing Tool

This methodology is adapted from a prospective pilot study comparing metagenomic next-generation sequencing (mNGS) to traditional bacterial cultures for diagnosing central nervous system infections [11].

- Study Design and Randomization: Conduct a single-center randomized controlled trial. Patients are randomly assigned 1:1 to either the experimental group (diagnosed with the new sequencing tool, e.g., mNGS) or the control group (diagnosed with the standard method, e.g., pathogen culture) [11].

- Data Collection: Collect primary data on key outcome measures for both groups. These typically include:

  - Diagnostic turnaround time (in days).

  - Direct detection costs.

  - Broader healthcare costs (e.g., anti-infective medication costs, total hospitalization costs).

  - Clinical outcome scores or treatment response scores at discharge [11].

- Decision-Tree Modeling: Construct a decision-tree model (using software like TreeAge Pro) to compare the two strategies. Input the collected cost and outcome data into the model [11].

- Cost-Effectiveness Calculation: Calculate the primary economic metric, the Incremental Cost-Effectiveness Ratio (ICER). The formula is:

  - $\text{ICER} = \dfrac{\text{Cost}_{\text{mNGS}} - \text{Cost}_{\text{Control}}}{\text{Effectiveness}_{\text{mNGS}} - \text{Effectiveness}_{\text{Control}}}$ [11]

- Interpretation: Contextualize the ICER value against a recognized Willingness-To-Pay (WTP) threshold. For example, using a GDP-based threshold, an ICER less than or equal to the per capita GDP is considered cost-effective [11].
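
The ICER calculation and threshold comparison above can be sketched in a few lines. The function names and all numbers are hypothetical; the simple threshold rule shown is meaningful when the new strategy is both more costly and more effective, and other cases (for example a negative ICER) need separate interpretation.

```python
def icer(cost_new: float, cost_control: float,
         effect_new: float, effect_control: float) -> float:
    """Incremental cost per additional unit of effectiveness."""
    delta_effect = effect_new - effect_control
    if delta_effect == 0:
        raise ValueError("strategies are equally effective; ICER is undefined")
    return (cost_new - cost_control) / delta_effect


def is_cost_effective(icer_value: float, wtp_threshold: float) -> bool:
    """Compare the ICER with a willingness-to-pay threshold."""
    return icer_value <= wtp_threshold


# Hypothetical inputs: mean cost per patient and proportion of patients responding.
value = icer(cost_new=1500, cost_control=1000, effect_new=0.75, effect_control=0.5)
print(value, is_cost_effective(value, wtp_threshold=10_000))
```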

Workflow and Relationship Visualizations

The following diagrams illustrate the core experimental and decision-making workflows for implementing cost-effective sequencing.

Cost-Effective Sequencing Workflow

Cost Effectiveness Analysis Steps

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Sequencing Library Preparation

| Item | Function | Key Considerations |
|---|---|---|
| SPRI Beads | Purification and size selection of nucleic acids by binding to magnetic beads in a polyethylene glycol (PEG) solution. | The bead-to-sample ratio is critical. An incorrect ratio can lead to loss of desired fragments or failure to remove adapter dimers [6]. |
| Fluorometric Assay Kits (e.g., Qubit) | Accurate quantification of double-stranded DNA or RNA by binding to specific fluorescent dyes. | More accurate for sequencing than UV spectrophotometry, as it is less affected by contaminants like salts or free nucleotides [6]. |
| High-Fidelity DNA Polymerase | Amplification of the adapter-ligated library prior to sequencing. | Reduces PCR-induced errors and bias. Overcycling should be avoided to prevent duplicates and artifacts [6]. |
| Next-Generation Sequencing Adapters | Short, double-stranded oligonucleotides that allow the library fragments to bind to the sequencing flow cell. | The adapter-to-insert molar ratio must be optimized to maximize ligation efficiency and minimize adapter-dimer formation [6]. |
| Nucleic Acid Extraction Kits | Isolation of high-quality DNA or RNA from complex biological samples (e.g., tissue, blood, microbes). | Specialized kits may be required for difficult sample types (e.g., FFPE tissue, low-biomass microbiomes), which can incur extra costs [10]. |

For researchers in drug development and microbiology, the ability to profile four major microbial groups (bacteria, archaea, fungi, and viruses) from a single sample is a powerful advancement. Shallow shotgun metagenomic sequencing (shallow SMS) makes this multi-kingdom analysis a cost-effective reality. This approach sequences all genetic material in a sample at a lower depth than deep shotgun sequencing, providing species-level taxonomic resolution and functional insights at a cost comparable to 16S rRNA sequencing [12]. This technical support center is designed to help you navigate the experimental process and troubleshoot common challenges.

Troubleshooting Guides

Common Experimental Issues and Solutions

| Observation | Possible Cause | Solution |
|---|---|---|
| Low or uneven sequencing coverage | Insufficient library input during multiplexed capture [13] | Use 500 ng of each barcoded library during multiplexed hybridization capture to minimize duplicates and ensure uniform coverage [13]. |
| High PCR duplication rate | PCR amplification artifacts; suboptimal input DNA in multiplexed pools [13] | Use a hot-start polymerase. For multiplexed captures, ensure 500 ng of each library is pooled, not 500 ng total [13]. |
| High levels of host (e.g., human) DNA | Sample type (e.g., blood, biopsy) has high non-microbial DNA [12] | Use laboratory protocols to deplete host cells or DNA prior to extraction. For skin or blood samples, 16S/ITS sequencing may be more suitable [12]. |
| Low taxonomic resolution for rare taxa | Reference databases lack genomes for understudied microbes [12] | For well-characterized environments (e.g., human gut), shallow SMS is excellent. For novel environments (e.g., soil), 16S may currently identify more rare taxa [12]. |
| Inconsistent sequencing yield (Nanopore) | Known potential limitation of the platform [7] | Closely monitor sequencing run performance and be prepared to repeat if yield is insufficient for analysis [7]. |

Sample Quality and Preparation FAQs

Q: My sample types (e.g., skin swabs) are known to have high host DNA content. Is shallow shotgun sequencing still the best choice?

A: For samples with high host DNA content, such as skin, blood, or biopsies, shallow SMS may not be optimal. A large proportion of your sequences will be "wasted" on host DNA, leaving very few for microbial profiling. In such cases, targeted approaches like 16S (for bacteria) or ITS (for fungi) sequencing are often more cost-effective and efficient [12].

Q: What are the critical steps to avoid contamination during sample prep?

A: Contamination is a major concern for sensitive metagenomic assays.

- Use filter tips and single-use pipettes to prevent aerosol contamination.

- Work in a clean, dedicated area away from concentrated sources of DNA like PCR amplicons or other samples.

- Use HPLC-grade water and avoid autoclaving plastics and solutions, as this can introduce contaminants [14].

Q: How should I store my extracted DNA to ensure stability?

A: Keep DNA samples cold (4°C) while working with them and store them frozen at -20°C to -80°C to prevent degradation [14].

Experimental Protocols for Shallow Shotgun Sequencing

Workflow for Multi-Kingdom Microbiome Profiling

The following protocol, adapted from a schizophrenia microbiome study, details the steps for comprehensive multi-kingdom analysis [15].

Detailed Protocol Steps

Sample Collection and DNA Extraction

- Collect fecal samples and immediately store them at -80°C [15].

- Extract total genomic DNA using a combination of mechanical disruption and chemical lysis to ensure efficient breakage of cells from all microbial kingdoms [15].

- Purify DNA, removing proteins and RNA, and assess its quantity and quality [15].

Library Preparation and Multiplexing

- Fragment the purified DNA to ~300-400 bp pieces [15].

- Prepare sequencing libraries by performing end repair, A-tailing, and ligating adapters containing unique sample indexes (barcodes) [15] [13].

- Quantify the libraries and pool (multiplex) them together for a single sequencing run. For optimal results during target capture, use 500 ng of each barcoded library in the pool [13].
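
Pooling 500 ng of each library means every sample contributes a different volume, depending on its measured concentration. A minimal sketch, assuming concentrations come from a fluorometric assay in ng/µL; the sample names and values are hypothetical.

```python
def pooling_volumes(conc_ng_per_ul: dict[str, float],
                    mass_ng: float = 500.0) -> dict[str, float]:
    """Volume (µL) of each library needed to contribute mass_ng to the pool."""
    volumes = {}
    for sample, conc in conc_ng_per_ul.items():
        if conc <= 0:
            raise ValueError(f"{sample}: concentration must be positive")
        volumes[sample] = mass_ng / conc
    return volumes


# Hypothetical library concentrations in ng/µL.
libraries = {"S1": 50.0, "S2": 25.0, "S3": 40.0}
for sample, vol in pooling_volumes(libraries).items():
    print(f"{sample}: {vol:.1f} µL")
```

Note that the pool's total mass is 500 ng multiplied by the number of libraries, consistent with the guidance to use 500 ng of each library rather than 500 ng in total.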

Shallow Shotgun Sequencing

Bioinformatics Analysis Protocol

Pre-processing and Quality Control

- Use tools like KneadData to remove low-quality reads and trim adapters.

- Align reads to a host genome (e.g., human) using Bowtie2 and remove them to eliminate host contamination [15].
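
The host-removal step can be scripted by building a Bowtie2 command and keeping only read pairs that do not align to the host. The sketch below only assembles and prints the command; the index prefix and file names are hypothetical, and keeping pairs that fail to align concordantly (`--un-conc-gz`) is one possible filtering rule, not necessarily the one used in the cited study.

```python
import shlex


def bowtie2_host_filter_cmd(index_prefix: str, r1: str, r2: str,
                            out_pattern: str, threads: int = 4) -> list[str]:
    """Build a Bowtie2 command that writes non-host read pairs to out_pattern.
    Bowtie2 replaces '%' in out_pattern with 1 or 2 for the two mates."""
    return [
        "bowtie2",
        "-x", index_prefix,           # prebuilt host genome index
        "-1", r1, "-2", r2,           # paired-end input files
        "--un-conc-gz", out_pattern,  # pairs that did not align concordantly
        "-p", str(threads),
        "-S", "/dev/null",            # discard the host alignments themselves
    ]


cmd = bowtie2_host_filter_cmd("host_index/GRCh38", "s1_R1.fastq.gz",
                              "s1_R2.fastq.gz", "s1_nonhost_R%.fastq.gz", threads=8)
print(shlex.join(cmd))
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` once Bowtie2 and a host index are available.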

Taxonomic Profiling

Functional Profiling

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function | Example/Note |
|---|---|---|
| Mechanical Lysis Beads | Ensures efficient breakage of tough microbial cell walls (e.g., fungal, Gram-positive bacteria) for complete DNA representation. | A key step in the DNA extraction protocol [15]. |
| Dual-Indexed Adapters | Allows for multiplexing of numerous samples in a single sequencing run, significantly reducing cost per sample. | 8 nt indexes are commonly used [13]. |
| Hybridization Capture Panel | For targeted enrichment of microbial genomes of interest before sequencing. | Requires 500 ng of each barcoded library per pool for best results [13]. |
| Kraken2/Bracken Database | Custom database for taxonomic classification of bacteria, archaea, fungi, and viruses. | Should incorporate NCBI RefSeq, FungiDB, and Ensembl genomes [15]. |
| EggNOG Database | For functional annotation of predicted genes, providing insights into metabolic pathways. | Used to identify pathways like tryptophan metabolism or biosynthesis of amino acids [15]. |
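
Once reads are classified with Kraken2, species-level counts can be pulled from its report for downstream analysis. The sketch below assumes the standard six-column, tab-separated Kraken2 report (percentage, clade reads, direct reads, rank code, taxonomy ID, name); reports generated with extra options carry more columns and are skipped here. The example report and its counts are made up for illustration.

```python
def species_relative_abundance(report_text: str) -> dict[str, float]:
    """Relative abundance of each species-rank entry in a Kraken2-style report."""
    counts: dict[str, int] = {}
    for line in report_text.splitlines():
        fields = line.split("\t")
        if len(fields) != 6:
            continue  # not a standard report line
        _pct, clade_reads, _direct, rank, _taxid, name = fields
        if rank == "S":  # species rank only
            counts[name.strip()] = int(clade_reads)
    total = sum(counts.values())
    return {name: n / total for name, n in counts.items()} if total else {}


# Illustrative report fragment (counts are invented).
example = (
    " 60.00\t600\t0\tG\t1578\t    Lactobacillus\n"
    " 50.00\t500\t500\tS\t47770\t      Lactobacillus crispatus\n"
    " 10.00\t100\t100\tS\t147802\t      Lactobacillus iners\n"
)
print(species_relative_abundance(example))
```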

For researchers designing cost-effective microbiome studies, choosing the right sequencing method is paramount. While 16S rRNA gene sequencing has long been the workhorse for microbial community analysis, shallow shotgun sequencing emerges as a powerful alternative that overcomes critical limitations in taxonomic resolution. This technical guide explores the key advantages of shallow shotgun sequencing, providing troubleshooting guidance and experimental protocols to help researchers transition from genus-level identification to species and strain-level analysis while maintaining cost-efficiency for large cohort studies.

FAQ: 16S rRNA Sequencing vs. Shallow Shotgun Metagenomics

What is the fundamental difference between these methods?

16S rRNA Sequencing: An amplicon-based approach that targets and amplifies only the 16S rRNA gene, a genetic marker found in all bacteria and archaea. It analyzes one or several variable regions (V1-V9) of this approximately 1500 bp gene for phylogenetic classification [16] [17].

Shallow Shotgun Metagenomics: A whole-genome approach that sequences all genomic material in a sample at a lower depth (typically 2-5 million reads). Instead of targeting a single gene, it fragments and sequences all DNA, enabling detection of all microbial kingdoms and functional genes in a single workflow [18] [19].

Why can 16S sequencing struggle with species-level identification?

The 16S rRNA gene contains both highly conserved and variable regions. While this structure provides phylogenetic information, several factors limit its resolution:

- Variable Region Selection: Different variable regions have varying discriminatory power across bacterial taxa. No single region provides optimal resolution for all genera [20] [21].

- Short Read Limitations: Sequencing platforms that cannot capture the full-length gene (~1500 bp) must target sub-regions, which contain insufficient information for species discrimination [22].

- Genetic Similarity: Closely related bacterial species may have nearly identical 16S sequences, making them indistinguishable [22].

- Database Dependency: Taxonomic assignment relies on reference databases, which may have incomplete species-level representation or inconsistent nomenclature [21].

Quantitative Comparison: Resolution Capabilities

Table 1: Method Comparison for Taxonomic Resolution

| Feature | 16S rRNA Sequencing | Shallow Shotgun Sequencing |
|---|---|---|
| Taxonomic Resolution | Genus-level (some species) [17] | Species to strain-level (bacteria) [18] [19] |
| Kingdom Coverage | Primarily bacteria & archaea [17] | Multi-kingdom (bacteria, archaea, fungi, viruses) [18] |
| Functional Profiling | Predictive only (indirect) | Direct detection of functional pathways & AMR genes [18] |
| Primer Bias | Present - unequal amplification [21] | Absent - no target amplification [18] |
| Cost per Sample | Lower | Moderate (higher than 16S, lower than deep shotgun) [19] |
| Ideal Sample Type | Various environments | High microbial biomass (e.g., gut) [18] [19] |

Table 2: Species-Level Identification Rates by 16S Region [22]

| 16S Region | Species-Level Identification Rate | Notable Taxonomic Biases |
|---|---|---|
| V4 | ~44% | Poor for Proteobacteria |
| V1-V2 | ~65% | Poor for Actinobacteria |
| V3-V5 | ~68% | Variable across phyla |
| V6-V9 | ~72% | Best for Clostridium, Staphylococcus |
| Full-length (V1-V9) | >95% | Minimal bias across taxa |

Experimental Protocol: Shallow Shotgun Sequencing Workflow

Sample Preparation and DNA Extraction

- Sample Collection: Use appropriate stabilization buffers for your sample type (e.g., gut, skin, environmental)

- DNA Extraction: Employ bead-beating mechanical lysis with magnetic bead-based purification (e.g., Qiagen MagAttract PowerSoil DNA KF Kit) [19]

- Quality Control: Verify DNA quantity (≥2 ng/μL) and quality (260/280 ratio ~1.8) using fluorometric methods [19]

Library Preparation and Sequencing

- Library Prep: Use Illumina Nextera Flex DNA library prep kit with dual indexing [19]

- Sequencing Parameters: Sequence on Illumina platforms (NextSeq) for 2×150 bp paired-end reads [19]

- Sequencing Depth: Target 2-5 million reads per sample depending on sample type and complexity [18] [19]

Troubleshooting Guide

Common Challenges and Solutions

Table 3: Troubleshooting Sequencing Preparation

| Problem | Possible Causes | Solutions |
|---|---|---|
| Low library yield | Poor input DNA quality, contaminants, inaccurate quantification | Re-purify DNA, use fluorometric quantification, verify 260/230 ratios >1.8 [6] |
| Adapter dimer contamination | Suboptimal adapter ligation, inefficient purification | Titrate adapter:insert ratio, optimize bead cleanup parameters [6] |
| Host DNA contamination | High host:microbe ratio in sample type | Use differential lysis, probe-based host depletion, increase sequencing depth [19] |
| Inconsistent results between replicates | Human error in manual prep, reagent degradation | Implement automation, use master mixes, maintain reagent quality control [6] |

Optimization Recommendations

- For low-biomass samples: Consider increasing input material or using whole-genome amplification

- For host-associated samples with high human DNA: Implement host DNA depletion protocols [19]

- For functional analysis: Ensure sufficient sequencing depth for gene coverage (>3M reads for core functions) [18]

The Researcher's Toolkit: Essential Materials

Table 4: Key Research Reagent Solutions

| Reagent/Kit | Function | Application Notes |

|---|---|---|

| Qiagen MagAttract PowerSoil DNA KF Kit | DNA extraction from complex samples | Optimized for KingFisher robot; good yield/quality balance [19] |

| Illumina Nextera Flex DNA Library Prep Kit | Library preparation for shotgun sequencing | Includes tagmentation and amplification; compatible with low input [19] |

| SPRIselect Beads | Library clean-up and size selection | Remove adapter dimers; select optimal fragment sizes [6] |

| Illumina NextSeq Consumables | Sequencing reagents | High-output kits suitable for multiplexed shallow sequencing [19] |

Shallow shotgun sequencing represents a significant advancement over 16S rRNA sequencing for researchers requiring species-level taxonomic resolution while maintaining cost-effectiveness for large cohort studies. By providing multi-kingdom coverage, direct functional profiling, and reduced amplification bias, this method enables more comprehensive microbiome analysis. The protocols and troubleshooting guides presented here facilitate implementation of this powerful approach, particularly for gut microbiome research and other high-microbial-biomass applications where statistical significance across large sample sizes is paramount.

Frequently Asked Questions

What are the main advantages of shallow shotgun sequencing over 16S rRNA sequencing? Shallow shotgun metagenomic sequencing (SSMS) provides lower technical variation and higher taxonomic resolution than 16S sequencing, enabling species-level and sometimes strain-level identification where 16S is often limited to the genus level [23]. It also allows direct functional profiling of microbial communities, revealing the genes present and their potential metabolic capabilities, which 16S sequencing can only predict indirectly [24].

My samples have low microbial biomass (e.g., skin, blood). How can I minimize contamination? Low-biomass samples are highly susceptible to contamination, which can distort your results. Key steps include:

- Using PPE: Wear gloves, masks, and clean suits to limit contamination from the researcher [25].

- Decontaminating equipment: Treat tools and work surfaces with ethanol followed by a DNA-degrading treatment (e.g., bleach, UV-C light) [25].

- Maintaining kit consistency: Use the same batch of DNA extraction kits throughout a project to avoid batch-specific contaminant variation [26].

- Including controls: Process blank extraction controls (e.g., empty collection vessels, sample preservation solution) alongside your samples to identify contaminating sequences [25].

Why is my shallow shotgun data unable to classify a significant portion of reads? This is a common consequence of relying on reference databases. Public sequence databases, while extensive, are incomplete and contain errors [27]. If your sample contains novel species or strains not yet represented in the reference databases, reads from those organisms cannot be classified. Furthermore, databases can contain mislabeled sequences or contaminants that lead to false classifications [27].

How does host DNA contamination impact my shallow shotgun results? Host DNA (e.g., human DNA in a gut microbiome sample) does not contain the microbial information you are targeting. When present in high amounts, it consumes sequencing depth, reducing the number of reads available for analyzing the microbiome and lowering the sensitivity for detecting low-abundance microbes [24]. This is particularly critical in shallow sequencing, where the total number of reads is limited.

What is a cost-effective strategy for a large cohort study? Shallow shotgun sequencing is an excellent cost-effective strategy for large studies, especially when focusing on high-microbial-biomass samples like stool. It provides superior data quality compared to 16S sequencing at a cost that is becoming increasingly competitive, offering a strong balance between statistical power, taxonomic resolution, and functional insights [18] [24].

Troubleshooting Guides

Problem: High Levels of Host DNA Contamination

Potential Causes:

- Sample type inherently has high host-to-microbial DNA ratio (e.g., skin swabs, tissue biopsies) [24].

- DNA extraction protocol is not optimized to enrich for microbial cells or remove host DNA.

Solutions & Methodologies:

- Choose the Right Method: For sample types known to have high host DNA, 16S rRNA sequencing may be more suitable because it uses PCR to specifically amplify a microbial gene, making it less sensitive to host DNA contamination [24].

- Optimize Sequencing Depth: For shotgun sequencing, you can mitigate the issue by increasing the sequencing depth, although this increases cost. The key is to calibrate the depth based on the expected level of host contamination [24].

- Experimental Protocol for Host DNA Depletion: Consider using commercial host depletion kits. These kits typically use enzymatic treatments or probe-based capture to selectively degrade or remove host DNA (e.g., human DNA) from the sample extract before library preparation. Always include a non-depleted control to assess the impact of the depletion on the microbial community structure.
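
The depth calibration described above can be sketched as a simple calculation. This is a minimal illustration, assuming that host reads are removed after sequencing and that the host fraction has already been estimated (for example from a pilot run); the function name and the example host fractions are hypothetical and not taken from the cited studies.

```python
import math


def total_reads_needed(target_microbial_reads: int, host_fraction: float) -> int:
    """Return the total reads to sequence so that the expected number of
    non-host reads reaches target_microbial_reads."""
    if not 0.0 <= host_fraction < 1.0:
        raise ValueError("host_fraction must be in [0, 1)")
    # round() guards against floating-point noise before taking the ceiling
    return math.ceil(round(target_microbial_reads / (1.0 - host_fraction), 6))


if __name__ == "__main__":
    # Hypothetical host fractions, for illustration only
    for label, host_fraction in [("low host", 0.05), ("moderate host", 0.50), ("high host", 0.90)]:
        print(label, total_reads_needed(2_000_000, host_fraction))
```

Because the required depth grows as 1 / (1 - host fraction), samples dominated by host DNA quickly leave the shallow range, which is why depletion or an alternative method is worth considering before simply sequencing deeper.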

Problem: Poor Taxonomic Resolution or Unclassified Reads

Potential Causes:

- Database limitations: The microbial species in your sample are not in the reference database [27].

- Database errors: Sequences in the database are misannotated, contaminated, or taxonomically misclassified, leading to incorrect assignments [27].

- Insufficient sequencing depth: The "shallow" depth may be too low to provide enough genomic information for confident species-level assignment for rare taxa.

Solutions & Methodologies:

- Use Multiple Databases: Employ several curated taxonomic classification databases (e.g., RefSeq, GTDB) and compare the results. This can help identify consistent, reliable classifications and flag discrepancies.

- Perform Database Quality Control: Be aware that errors can propagate through databases. A network analysis perspective can help identify spurious entries. Tools that use this approach can flag records with low annotation confidence or unusual provenance for further inspection [27].

- Functional Profiling: If taxonomic classification fails, shift your focus to functional analysis. Classify reads against gene families (e.g., KEGG Orthologs) or pathway databases. The functional profile can still provide valuable biological insights even without perfect taxonomy [23].
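
As a rough illustration of the multi-database comparison suggested above, the sketch below sorts reads into concordant, discordant, and single-database calls. It assumes each classifier run has already been parsed into a mapping of read ID to species name and that names have been harmonized between taxonomies (GTDB and NCBI naming differ, so this step matters in practice); the function and variable names are hypothetical.

```python
def compare_assignments(db_a: dict[str, str], db_b: dict[str, str]) -> dict[str, list[str]]:
    """Split read IDs into concordant, discordant, and single-database calls."""
    result: dict[str, list[str]] = {"concordant": [], "discordant": [], "only_one": []}
    for read_id in sorted(set(db_a) | set(db_b)):
        call_a, call_b = db_a.get(read_id), db_b.get(read_id)
        if call_a is None or call_b is None:
            # Classified against only one of the two databases
            result["only_one"].append(read_id)
        elif call_a == call_b:
            result["concordant"].append(read_id)
        else:
            result["discordant"].append(read_id)
    return result


if __name__ == "__main__":
    # Toy inputs, for illustration only
    first = {"read1": "Bacteroides fragilis", "read2": "Escherichia coli", "read3": "Prevotella copri"}
    second = {"read1": "Bacteroides fragilis", "read2": "Shigella flexneri"}
    print(compare_assignments(first, second))
```

Discordant and single-database reads are the ones worth inspecting manually or treating with lower confidence in downstream analysis.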

Problem: Inconsistent Results Between Sample Batches

Potential Causes:

- Reagent contamination: Different batches of DNA extraction kits or reagents can have varying contaminant backgrounds [26].

- Cross-contamination: DNA carryover between samples during processing [25].

Solutions & Methodologies:

- Control for Kit Contamination:

  - Standardization: Use the same batch of DNA extraction kits for an entire project [26].

  - Blank Controls: With each batch of extractions, process multiple negative controls (e.g., molecular grade water) through the entire workflow from extraction to sequencing. The taxonomic profile of these blanks defines your "contaminant background" [25].

  - Bioinformatic Subtraction: Use the data from your blank controls in post-processing tools (e.g., the decontam or microDecon R packages) to statistically identify contaminant sequences and remove them from the final dataset [25].

- Prevent Cross-Contamination:

  - Lab Practices: Use clean gloves and decontaminate work surfaces with 10% bleach or a DNA-removal solution such as DNA AWAY between samples.

  - Physical Barriers: Use DNA-free filter tips and dedicated lab coats. Consider performing pre-PCR steps in a UV hood [25].
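
To make the idea of a blank-defined contaminant background concrete, here is a deliberately simplified prevalence rule. It is not the statistical model used by dedicated decontamination packages; it only flags taxa that appear in at least a chosen share of blanks and are at least as prevalent in blanks as in true samples. The function name, the threshold, and the toy profiles are assumptions made for illustration.

```python
def flag_contaminants(
    samples: list[dict[str, int]],
    blanks: list[dict[str, int]],
    min_blank_prevalence: float = 0.5,
) -> set[str]:
    """Flag taxa that are common in blanks and no more prevalent in true samples.

    Each profile maps taxon name -> read count.
    """
    if not samples or not blanks:
        raise ValueError("need at least one sample and one blank")

    def prevalence(profiles: list[dict[str, int]], taxon: str) -> float:
        # Fraction of profiles in which the taxon has at least one read
        return sum(1 for p in profiles if p.get(taxon, 0) > 0) / len(profiles)

    taxa = set().union(*samples, *blanks)
    flagged = set()
    for taxon in taxa:
        in_blanks = prevalence(blanks, taxon)
        in_samples = prevalence(samples, taxon)
        if in_blanks >= min_blank_prevalence and in_blanks >= in_samples:
            flagged.add(taxon)
    return flagged


if __name__ == "__main__":
    # Toy profiles, for illustration only
    true_samples = [{"Bacteroides": 100, "Ralstonia": 5}, {"Bacteroides": 80}]
    blank_controls = [{"Ralstonia": 20}, {"Ralstonia": 15, "Bacteroides": 1}]
    print(flag_contaminants(true_samples, blank_controls))
```

A rule this crude will misjudge taxa that cross-contaminate blanks from high-biomass samples, so for real studies a purpose-built tool and several blanks per batch remain the safer choice.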

Data Presentation

Table 1: Comparison of Microbiome Sequencing Methods

| Factor | 16S rRNA Sequencing | Shallow Shotgun Sequencing | Deep Shotgun Sequencing |

|---|---|---|---|

| Approximate Cost per Sample | ~$50 USD [24] | ~$150 USD (similar to 16S for large studies) [24] | Significantly higher [24] |

| Taxonomic Resolution | Genus-level (sometimes species) [24] | Species-level (sometimes strain) [23] [18] | Species and strain-level [24] |

| Functional Profiling | Predicted only [24] | Directly measured [23] [24] | Directly measured [24] |

| Technical Variation | Higher [23] | Lower [23] | Low |

| Best for Large Cohorts | Good | Excellent (cost-effective with high resolution) [18] | Poor (due to cost) |

Table 2: Essential Research Reagent Solutions

| Item | Function | Consideration for Low-Biomass Studies |

|---|---|---|

| DNA Extraction Kits | Lyses cells and purifies genomic DNA. | Use the same batch throughout a project. Select kits with minimal bacterial DNA contamination [26]. |

| Personal Protective Equipment (PPE) | Gloves, masks, and clean lab coats. | Critical to prevent introduction of contaminating DNA from researchers [25]. |

| Nucleic Acid Degrading Solutions (e.g., bleach, UV-C) | Destroys trace DNA on surfaces and equipment. | Essential for decontaminating work spaces and non-disposable tools before sample processing [25]. |

| Negative Control Kits | Sterile water or buffer processed as a sample. | Identifies contaminating DNA from reagents and the laboratory environment; required for bioinformatic decontamination [25]. |

| Host DNA Depletion Kits | Selectively removes host nucleic acids. | Vital for sequencing samples with high host DNA (e.g., tissue, blood) to increase microbial sequencing depth [24]. |

Experimental Protocols & Workflows

Detailed Methodology: Contamination-Aware Sampling and DNA Extraction for Low-Biomass Samples

This protocol is adapted from consensus guidelines for low-biomass microbiome studies [25].

Pre-Sampling Preparation:

- Decontaminate: Wipe all surfaces, tools, and equipment with 80% ethanol, followed by a DNA-degrading solution (e.g., 1-5% fresh bleach solution). Rinse with DNA-free water if required.

- PPE: Wear a fresh lab coat, gloves, and a mask. Change gloves between handling different samples or reagents.

Sample Collection:

- Use single-use, DNA-free collection vessels (e.g., sterile swabs, tubes) wherever possible.

- If using non-disposable tools, decontaminate thoroughly between each sample.

- Collect field blanks and equipment blanks (e.g., open a sterile swab in the air, place it in a tube; run a swab over decontaminated equipment).

DNA Extraction:

- In a pre-decontaminated workspace, extract DNA from your samples.

- In parallel, process extraction blanks (using DNA-free water instead of sample) and your field blanks through the entire extraction protocol.

- Use a consistent, validated DNA extraction kit for the entire study [26].

Library Preparation and Sequencing:

- Proceed with your chosen shallow shotgun library prep protocol.

- Include your extracted blanks in the sequencing run to capture contaminants introduced during library prep.

The following workflow diagram summarizes the key steps for a contamination-aware study design:

Diagram 1: Contamination-aware workflow.

Understanding Database Dependency and Error Propagation

The quality of your taxonomic classification is directly tied to the quality of the reference databases. The following diagram illustrates how a single error can propagate through the sequence database network, affecting downstream analyses [27].

Diagram 2: Database error propagation network.

From Lab to Analysis: A Practical Guide to Implementing Shallow Shotgun Sequencing

Technical FAQs: Shallow Shotgun Metagenomic Sequencing

FAQ 1: What are the main advantages of shallow shotgun sequencing over 16S rRNA amplicon sequencing for large-scale studies?

Shallow shotgun metagenomic sequencing (SSMS) provides several key advantages that make it ideal for cost-effective, large-scale microbiome studies [4] [23] [3]:

Higher Taxonomic Resolution: SSMS can resolve taxa to the species and even strain levels, while 16S sequencing typically cannot classify beyond genus level [23] [3]. One study showed SSMS successfully classified 14/20 of the most abundant taxonomic groups to species level, representing 44.7% mean relative abundance across samples [23].

Lower Technical Variation: SSMS demonstrates significantly lower technical variation compared to 16S sequencing for both library preparation and DNA extraction replicates [23].

Broader Functional Insights: SSMS enables direct characterization of functional gene content and microbial pathways, not just taxonomic classification [23].

Detection of Non-Bacterial Species: Unlike 16S sequencing, SSMS can detect viruses, fungi, and other non-prokaryotic species [4] [3].

Elimination of PCR Amplification Bias: SSMS does not require PCR amplification of specific gene regions, providing more accurate biological abundance measurements [4].

FAQ 2: What sequencing depth is considered "shallow" for cost-effective microbiome studies?

For shallow shotgun metagenomic sequencing, optimal depths range between 2-5 million reads per sample to balance cost and data quality [23]. This depth provides sufficient coverage for robust taxonomic and functional characterization while remaining cost-effective for large-scale studies [23].

FAQ 3: How does technical variation compare between shallow shotgun and 16S sequencing methods?

SSMS demonstrates significantly lower technical variation compared to 16S sequencing [23]:

Table: Technical Variation Comparison Between Sequencing Methods

| Variation Source | 16S Sequencing | Shallow Shotgun Sequencing | Statistical Significance |

|---|---|---|---|

| Library Prep Replicates | Higher variation | Lower variation | p = 0.0003 |

| DNA Extraction Replicates | Higher variation | Lower variation | p = 0.0351 |

| Between-Subject Biological Variation | Lower resolution | Higher resolution | PERMANOVA: R = 0.9202, p = 0.001 |

FAQ 4: What are the key considerations for DNA extraction in shallow shotgun sequencing workflows?

Proper DNA extraction is critical for successful SSMS [4]:

Input Requirements: Most protocols require a minimum of 1 ng/μL DNA concentration, with some samples needing multiple extraction attempts to achieve sufficient yield [4].

Extraction Methodology: Bead beating for 40 minutes at maximal speed has been successfully used in vaginal microbiome studies [4].

Quality Control: Use fluorometric quantification methods (e.g., Qubit with dsDNA HS Assay Kit) rather than spectrophotometry for accurate DNA quantification [4].

Sample Preservation: Collection tubes with DNA/RNA Shield solution help preserve sample integrity during storage and transport [4].

Troubleshooting Guides

Issue 1: Low DNA Yield from Sample Extractions

Table: Troubleshooting Low DNA Yield

| Problem | Potential Causes | Solutions |

|---|---|---|

| Insufficient starting material | Low microbial biomass samples | Concentrate sample; use larger input volume; pool multiple extractions |

| Inefficient cell lysis | Incomplete bead beating; tough cell walls | Increase bead beating duration to 40 min; optimize bead size mixture |

| DNA degradation | Improper sample storage; nucleases | Use DNA/RNA Shield collection tubes; store at -80°C immediately |

| Inhibition from sample matrix | PCR inhibitors present | Add additional purification steps; use inhibitor removal kits |

Issue 2: Variable Sequencing Yields in Nanopore-Based Shallow Shotgun Sequencing

Nanopore sequencing may exhibit marked variation in sequencing yields, which can impact data consistency [4]:

Preventive Measures:

Quality Control Checkpoints:

Issue 3: Poor Taxonomic Resolution in Data Analysis

Bioinformatic Solutions:

Experimental Enhancements:

Experimental Protocols

Protocol 1: DNA Extraction for Shallow Shotgun Sequencing

Based on ZymoBIOMICS DNA/RNA Miniprep Kit with Modifications [4]:

- Sample Preparation: Vortex sample collection tube and transfer 200 μL of suspension to bead beating tube [4]

- Buffer Addition: Add 350 μL of DNA/RNA Shield buffer to enable harvesting of 200 μL of bead-free liquid [4]

- Cell Lysis: Perform bead beating using Vortex Genie with 24 multi-tube attachment on maximal speed for 40 minutes [4]

- DNA Purification: Follow manufacturer's protocol with elution in 100 μL of nuclease-free water [4]

- Quality Control: Quantify using Qubit with 1× dsDNA HS Assay Kit; minimum acceptable concentration: 1 ng/μL [4]

Protocol 2: Nanopore Library Preparation for Shallow Shotgun Sequencing

Based on Ligation Sequencing Kit SQK-LSK109 with Barcoding [4]:

- DNA Input: Use remaining DNA after setting aside 10 ng for quality control [4]

- Library Preparation: Follow manufacturer's protocol for ligation sequencing kit [4]

- Barcoding: Apply barcoding using EXP-NBD196 expansion kit (12-16 samples per flow cell) [4]

- Adapter Ligation: Include short fragment buffer (SFB) to ensure equal purification of short and long fragments [4]

- Sequencing: Load library onto Nanopore GridION with R9.4.1 flow cells [4]

- Basecalling: Perform real-time basecalling and demultiplexing using MinKNOW with Guppy [4]

Workflow Visualization

Research Reagent Solutions

Table: Essential Materials for Shallow Shotgun Sequencing Workflows

| Reagent/Kit | Function | Application Notes |

|---|---|---|

| ZymoBIOMICS DNA/RNA Shield Collection Tubes | Sample preservation and stabilization | Maintains sample integrity during storage and transport; enables room-temperature storage [4] |

| ZymoBIOMICS DNA/RNA Miniprep Kit | Nucleic acid extraction | Modified with extended bead beating (40 min) for optimal lysis of diverse microorganisms [4] |

| Oxford Nanopore Ligation Sequencing Kit (SQK-LSK109) | Library preparation for nanopore sequencing | Enables long-read metagenomic sequencing; flexible multiplexing options [4] |

| Oxford Nanopore Barcoding Expansion Kit (EXP-NBD196) | Sample multiplexing | Allows 12-16 samples per flow cell; cost-effective for medium-throughput studies [4] |

| Qubit dsDNA HS Assay Kit | DNA quantification | Fluorometric measurement essential for accurate DNA concentration assessment [4] |

| Short Fragment Buffer (SFB) | Adapter ligation optimization | Ensures equal purification of short and long DNA fragments during library prep [4] |

Advanced Applications

Clinical Detection Enhancement [3]:

Shallow shotgun sequencing significantly improves detection of clinically relevant pathogens compared to culture methods and 16S sequencing. Key advancements include:

Species-Level Discrimination: SSMS can distinguish between closely related species such as Staphylococcus aureus vs. S. epidermidis and Haemophilus influenzae vs. H. parainfluenzae, which is not possible with 16S amplicon sequencing [3]

Detection of Fastidious Pathogens: SSMS reliably detects Mycobacterium spp. and other difficult-to-culture pathogens that are frequently missed by both culture methods and 16S sequencing [3]

Comprehensive Pathogen Profiling: SSMS identifies full pathogen communities in complex samples, providing more complete clinical pictures than targeted methods [3]

Cost-Benefit Analysis:

While per-sample sequencing costs are higher for SSMS than for 16S sequencing, the significantly improved resolution and reduced technical variation make it more cost-effective for studies where species-level discrimination or functional profiling is essential [23] [3]. The ability to distinguish clinically significant species is particularly valuable in diagnostic applications [3].

Frequently Asked Questions (FAQs)

FAQ 1: What is the core difference between microbiota and microbiome? The terms are often used interchangeably, but technically, microbiota refers to the microorganisms themselves (bacteria, archaea, viruses, fungi, and protozoans) inhabiting a specific site. In contrast, the microbiome encompasses the entire habitat, including the microorganisms, their genomes, and the surrounding environmental conditions [29].

FAQ 2: For a large-scale study using shallow shotgun sequencing, is it better to use fecal samples or mucosal biopsies? For large-scale studies, fecal samples are generally the more practical and suitable choice. While mucosal biopsies provide a direct snapshot of the mucosa-associated microbiota, they are invasive, not suitable for healthy controls, expensive, and yield insufficient biomass for some analyses [30]. Shallow shotgun sequencing of stool samples provides a cost-effective, non-invasive, and repeatable method for large-scale biomarker discovery, offering species-level taxonomic resolution [23].

FAQ 3: How does shallow shotgun sequencing compare to 16S sequencing for taxonomic profiling? Shallow shotgun sequencing provides superior taxonomic resolution and lower technical variation compared to 16S amplicon sequencing. While 16S sequencing is cost-effective, it often cannot resolve taxonomy beyond the genus level. Shallow shotgun sequencing can classify a majority of reads to the species level and demonstrates less technical variation from DNA extraction and library preparation steps [23].

FAQ 4: My samples cannot be frozen immediately at -80°C. What is the best alternative storage method? If immediate freezing at -80°C is not possible, the following alternatives are effective:

- Refrigeration at 4°C for a short period has been shown to effectively maintain microbial diversity [31].

- Preservative Buffers, such as OMNIgene·GUT or AssayAssure, can maintain microbial composition at room temperature for several days, which is ideal for shipping [30] [31].

Troubleshooting Guides

Issue 1: Low DNA Yield from Stool Samples

Problem: Insufficient DNA is extracted from stool samples, particularly for low-biomass samples or when using swabs.

Solution:

- Ensure Adequate Sample Volume: For stool, homogenizing the entire sample before taking an aliquot ensures a uniform and representative microbial analysis [31].

- Optimize Extraction Protocol: The choice of DNA extraction kit significantly impacts yield. Use kits benchmarked and validated for microbiome studies [31].

- Sample Collection Method: For shallow shotgun sequencing, which benefits from higher-quality DNA, dry swabs or fecal occult blood test cards may yield less DNA than a homogenized whole stool sample [32].

Issue 2: High Technical Variation in Sequencing Results

Problem: Replicates of the same sample show high variability in taxonomic abundance, making biological interpretation difficult.

Solution:

- Switch to Shallow Shotgun Sequencing: Studies have shown that shallow shotgun sequencing produces significantly lower technical variation from both DNA extraction and library preparation steps compared to 16S amplicon sequencing [23].

- Standardize Homogenization: For stool samples, incomplete homogenization can lead to subsampling bias due to the uneven distribution of bacteria within feces. Ensure a thorough homogenization protocol [30].

- Use Technical Replicates: Include replication at the DNA extraction and library preparation stages in your experimental design to quantify and account for technical noise [23].
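
One way to quantify technical noise from replicates is the mean pairwise dissimilarity between replicate profiles of the same sample. The sketch below uses Bray-Curtis dissimilarity as an assumed metric; the text above does not state which metric the cited comparison used, and the function names and toy profiles are hypothetical.

```python
from itertools import combinations


def bray_curtis(a: dict[str, float], b: dict[str, float]) -> float:
    """Bray-Curtis dissimilarity between two abundance profiles (taxon -> abundance)."""
    taxa = set(a) | set(b)
    numerator = sum(abs(a.get(t, 0.0) - b.get(t, 0.0)) for t in taxa)
    denominator = sum(a.get(t, 0.0) + b.get(t, 0.0) for t in taxa)
    if denominator == 0:
        raise ValueError("both profiles are empty")
    return numerator / denominator


def mean_pairwise_dissimilarity(replicates: list[dict[str, float]]) -> float:
    """Average Bray-Curtis dissimilarity over all pairs of technical replicates."""
    pairs = list(combinations(replicates, 2))
    if not pairs:
        raise ValueError("need at least two replicates")
    return sum(bray_curtis(x, y) for x, y in pairs) / len(pairs)


if __name__ == "__main__":
    # Toy relative abundances for three replicates of one sample
    reps = [
        {"Bacteroides": 0.50, "Faecalibacterium": 0.50},
        {"Bacteroides": 0.50, "Faecalibacterium": 0.50},
        {"Bacteroides": 0.25, "Faecalibacterium": 0.75},
    ]
    print(mean_pairwise_dissimilarity(reps))
```

Comparing this within-sample value to the dissimilarity between different subjects gives a quick sense of whether technical noise is small relative to the biological signal.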

Issue 3: Inconsistent or Unexpected Microbiome Profiles

Problem: Results do not align with expectations or published literature.

Solution:

- Verify Sample Collection Metadata: Numerous confounding factors, including diet, medication, age, and BMI, can drastically alter the microbiome [29]. Ensure detailed metadata collection.

- Check for Contamination: This is critical for low-biomass samples. Implement stringent contamination prevention protocols, including the use of personal protective equipment, sterile collection materials, and decontaminated environments [31].

- Review Primers (for 16S sequencing): If using 16S sequencing, the choice of primer set (e.g., V1-V2, V4) can influence results, as some primers may underestimate species richness [31].

Comparative Data Tables

Table 1: Comparison of Common Gut Microbiome Sample Types

| Sample Type | Advantages | Disadvantages | Best for Shallow Shotgun? |

|---|---|---|---|

| Feces | Non-invasive; repeatable sampling; sufficient biomass; inexpensive [30] | A proxy for luminal content only; does not reflect mucosa-associated microbiota; uneven bacterial distribution [30] [32] | Yes, ideal for large-scale studies due to cost and practicality [23] |

| Mucosal Biopsy | Direct sampling of mucosa-associated microbiota; controllable sampling site [30] | Invasive; not suitable for healthy controls; bowel preparation alters microbiota; expensive [30] | Less ideal, limited by invasiveness and cost for large cohorts |

| Intestinal Aspirate | Direct sampling of luminal fluid; controllable sampling site [30] | Invasive; requires bowel preparation; patient discomfort; risk of contamination [30] | Less ideal due to invasiveness and procedure complexity |

Table 2: Sample Storage Methods for Microbiome Research

| Storage Method | Practicality | Impact on Microbiome | Best Use Case |

|---|---|---|---|

| Immediate freezing at -80°C | Low (requires constant freezing) | Considered the gold standard; minimal changes [30] [31] | All studies, when logistics allow |

| Refrigeration at 4°C | High | Minimal significant difference from -80°C for short-term storage [31] | Short-term storage/transport when freezing is unavailable |

| Preservative Buffers (e.g., OMNIgene·GUT) | High (room temp stable) | Maintains stability for days; may induce small systematic shifts [30] [31] | Large-scale or remote collection studies with mail-in samples |

| Room Temperature (no additive) | High | Significant changes in microbial composition after 24 hours [30] | Not recommended for critical long-term storage |

Experimental Protocols

Protocol 1: Standardized Fecal Sample Collection for Shallow Shotgun Sequencing

Objective: To collect, preserve, and store fecal samples in a manner that minimizes technical variation and is optimal for shallow shotgun metagenomic sequencing.

Materials:

- Sterile collection container (without preservatives for homogenization)

- -80°C Freezer OR appropriate preservative buffer (e.g., OMNIgene·GUT)

- Sample homogenizer (e.g., blender)

- Gloves and personal protective equipment

Procedure:

- Collect: Collect the whole stool sample in a sterile container.

- Homogenize: Thoroughly homogenize the entire sample immediately after collection. This is critical to mitigate bias from the uneven distribution of bacteria within feces [30].

- Aliquot: Transfer multiple small aliquots of the homogenate into cryotubes to avoid repeated freeze-thaw cycles.

- Preserve:

  - Gold Standard: Flash-freeze aliquots in liquid nitrogen or on dry ice and transfer to a -80°C freezer for long-term storage [32].

  - Practical Alternative: If freezing is not immediately possible, add the aliquot to a preservative buffer designed for room-temperature storage, following the manufacturer's instructions [31].

- Document: Record all relevant metadata, including time of collection, storage method, and time to preservation.

Protocol 2: DNA Extraction and Library Preparation for Shallow Shotgun Sequencing

Objective: To extract high-quality DNA and prepare libraries for shallow shotgun sequencing, minimizing technical variation.

Materials:

- DNA extraction kit validated for microbiome studies

- Library preparation kit compatible with your sequencing platform

- Equipment for quality control (e.g., Qubit, Bioanalyzer)

Procedure:

- DNA Extraction: Extract DNA from all samples using the same validated kit and protocol to reduce batch effects. Although different kits can produce comparable sequencing depths, they can vary in total DNA concentration [31].

- Quality Control: Quantify DNA concentration and assess quality/fragment size.

- Library Preparation: Prepare sequencing libraries using a robust protocol. Studies show that technical variation from library preparation is generally low, especially when using shallow shotgun sequencing [23].

- Pool and Sequence: Pool libraries in equimolar ratios and sequence to a target depth of 2-5 million reads per sample for shallow shotgun sequencing [23].
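
Equimolar pooling can be worked out from each library's concentration and mean fragment length. The sketch below uses the common approximation of 660 g/mol per base pair of double-stranded DNA; the library names, concentrations, fragment sizes, and the amount pooled per library are hypothetical values chosen for illustration.

```python
def library_molarity_nM(conc_ng_per_ul: float, mean_fragment_bp: float) -> float:
    """Convert a dsDNA library concentration (ng/uL) to molarity (nM),
    assuming an average mass of 660 g/mol per base pair."""
    if conc_ng_per_ul <= 0 or mean_fragment_bp <= 0:
        raise ValueError("concentration and fragment length must be positive")
    return conc_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)


def pooling_volumes_ul(
    libraries: dict[str, tuple[float, float]], fmol_per_library: float
) -> dict[str, float]:
    """Volume of each library (uL) that contributes fmol_per_library to the pool.

    libraries maps name -> (concentration in ng/uL, mean fragment length in bp).
    Since 1 nM equals 1 fmol/uL, volume = fmol / molarity.
    """
    return {
        name: fmol_per_library / library_molarity_nM(conc, bp)
        for name, (conc, bp) in libraries.items()
    }


if __name__ == "__main__":
    # Hypothetical libraries, for illustration only
    libs = {"sample_A": (6.6, 500.0), "sample_B": (3.3, 500.0), "sample_C": (6.6, 250.0)}
    for name, volume in pooling_volumes_ul(libs, fmol_per_library=20.0).items():
        print(f"{name}: {volume:.2f} uL")
```

Libraries with lower molarity need proportionally more volume, so very dilute libraries may have to be concentrated or re-prepared rather than pooled as they are.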

Workflow and Pathway Diagrams

Sample Collection Workflow

Sequencing Method Selection

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function | Application Note |

|---|---|---|

| OMNIgene·GUT Kit | A preservative buffer that stabilizes microbial DNA at room temperature for several days [30] [31]. | Essential for large-scale, multi-center studies where immediate freezing is logistically challenging. |

| RNAlater | A preservative that stabilizes and protects nucleic acids (both RNA and DNA). | Renders samples unsuitable for metabolomics; use on a separate aliquot if metabolomic analysis is planned [32]. |

| FTA Cards / Fecal Occult Blood Test Cards | Cards containing chemicals that lyse cells and stabilize DNA for transport at room temperature [30] [32]. | A practical and inexpensive method, though may induce small systematic shifts in taxon profiles compared to freezing. |

| Validated DNA Extraction Kit | Kits specifically benchmarked for microbiome studies to efficiently lyse a wide range of bacterial cell walls. | Critical for reproducibility. The choice of kit can impact DNA yield and influence the observed microbial community [31]. |

| Shallow Shotgun Library Prep Kit | Kits tailored for preparing metagenomic sequencing libraries for low-to-moderate sequencing depth. | Optimized protocols can help achieve the low technical variation demonstrated in comparative studies [23]. |

In the context of shallow shotgun sequencing research, achieving cost-efficiency without compromising data quality is paramount. Multiplexing, the process of pooling multiple uniquely tagged samples for a single sequencing run, is a foundational strategy for achieving this goal [33]. It allows the high data output of modern sequencers to be divided across many samples, drastically reducing the per-sample cost [33]. This technical resource addresses common challenges and provides detailed protocols for implementing robust, cost-effective multiplexing in your shallow shotgun sequencing workflows.

Workflow for Multiplexed Shallow Shotgun Sequencing

The following diagram illustrates the key stages in a typical multiplexed shallow shotgun sequencing experiment, from sample preparation to data analysis.

Essential Research Reagent Solutions

The following reagents and kits are critical for executing a successful multiplexed shallow shotgun sequencing experiment.

Table 1: Key Reagents for Multiplexed Library Preparation

| Reagent/Kit | Primary Function | Key Considerations for Cost-Effectiveness |

|---|---|---|

| Unique Dual Index (UDI) Adapters [33] | Provides a unique barcode sequence for each sample, enabling post-sequencing sample identification and multiplexing. | Allows index-hopped reads to be identified and discarded, reducing sample misassignment. Using a validated set of 384+ indexes allows for high-plex pooling [33]. |

| Library Preparation Kits [34] | Converts fragmented DNA into sequencing-ready libraries through steps like end-repair, A-tailing, and adapter ligation. | Select kits with streamlined protocols to reduce hands-on time and reagent use. Automation-compatible kits are preferable for high-throughput [35]. |

| Magnetic Beads [35] | Used for clean-up and size selection of libraries after various preparation steps, removing enzymes, salts, and short fragments. | Enables efficient miniaturization of reaction volumes, preserving precious samples and reducing reagent consumption [35]. |

| Pooling Quantification Kits | Accurately measures the concentration of each final barcoded library to ensure equal representation in the pool. | Critical for high pooling uniformity (low CV). Poor quantification leads to wasted sequencing capacity on over-represented samples [33]. |

| Automated Liquid Handling Systems [35] | Robots that automate pipetting steps in library prep, such as the I.DOT Liquid Handler or G.STATION NGS Workstation. | Reduces human error, increases reproducibility and throughput, and enables miniaturization of reaction volumes, leading to significant long-term savings [35]. |

Quantitative Data for Experimental Planning

Understanding the cost and performance metrics is crucial for planning a cost-effective study.

Table 2: Cost and Performance Metrics of Sequencing Approaches

| Parameter | 16S rRNA Amplicon Sequencing | Shallow Shotgun Metagenomics | Deep Shotgun Metagenomics |
|---|---|---|---|
| Approximate Cost per Sample (USD) [24] | ~$50 | ~$150 (similar to 16S with modified protocols) [24] | Significantly higher than $150 |
| Taxonomic Resolution [24] [36] | Genus-level (sometimes species) | Species-level, can sometimes distinguish strains [36] | Species-level and strain-level |
| Functional Profiling | Predicted (e.g., with PICRUSt) | Yes (functional potential) [24] | Yes (functional potential) |
| Multiplexing Potential | High (standard practice) | Very High (key for cost-reduction) [4] | Lower (due to required depth per sample) |

Table 3: Impact of Multiplexing on Sequencing Costs

| Number of Samples Multiplexed per Run | Estimated Cost per Sample (Relative) | Key Factor for Success |
|---|---|---|
| 12-plex | Moderate | Basic barcode design and pooling. |
| 96-plex | Low | Robust barcode set with high uniformity in pooling. |
| 384-plex | Very Low | High pooling uniformity and a large number of validated, unique barcodes [33]. |
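The trend in Table 3 follows from dividing a fixed run output and run cost across more samples. The sketch below illustrates that arithmetic. The run output (400 million reads) and run cost (2,000 USD) are hypothetical placeholders chosen for illustration, not figures from the cited sources, and the function name is likewise an assumption.

```python
def per_sample_budget(run_reads, run_cost_usd, plex):
    """Split a run's read output and cost evenly across `plex` samples.

    Assumes perfectly uniform pooling; real pools deviate from this.
    """
    if plex <= 0:
        raise ValueError("plex must be a positive integer")
    return run_reads / plex, run_cost_usd / plex


# Hypothetical run (illustrative numbers only, not from the cited sources).
RUN_READS = 400_000_000
RUN_COST_USD = 2_000.0

for plex in (12, 96, 384):
    reads, cost = per_sample_budget(RUN_READS, RUN_COST_USD, plex)
    print(f"{plex:>3}-plex: {reads / 1e6:7.2f} M reads/sample, "
          f"{cost:7.2f} USD/sample")
```

This covers sequencing cost only. Library preparation and quantification are per-sample costs that do not shrink with plex level, and the even split holds only when pooling uniformity is high.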

Frequently Asked Questions and Troubleshooting

1. We observe a high coefficient of variation (CV) in read counts across our multiplexed samples. What are the primary causes and solutions?

- Problem: High CV indicates poor pooling uniformity, where some samples are over-represented and others under-represented in the sequencing data [33].

- Solutions:

- Accurate Library Quantification: Use fluorometric methods (e.g., Qubit) and qPCR-based assays designed for sequencing libraries rather than relying on spectrophotometry (e.g., NanoDrop) alone. qPCR quantifies only fragments that are competent for sequencing [33].

- Normalize by Concentration: Precisely normalize all libraries to the same molarity before pooling. Using an automated liquid handler can drastically improve the accuracy and reproducibility of this step [35].

- Check Fragment Size Distribution: Ensure all libraries have a similar average fragment size, as significant variations can affect quantification accuracy and pooling balance.
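The normalization and uniformity checks above can be sketched in a few lines. This is a minimal illustration, assuming double-stranded DNA libraries and the common approximation of 660 g/mol per base pair; the function names and input layout are hypothetical, not part of any cited kit or protocol.

```python
import statistics


def library_molarity_nm(conc_ng_per_ul, mean_fragment_bp):
    """Convert a dsDNA mass concentration to molarity in nM.

    Uses the approximation of ~660 g/mol per base pair.
    """
    return conc_ng_per_ul * 1e6 / (660.0 * mean_fragment_bp)


def equimolar_volumes(libraries, fmol_per_library):
    """Volume (in µL) of each library that contributes `fmol_per_library`.

    `libraries` maps a sample name to (concentration in ng/µL, mean size in bp).
    """
    volumes = {}
    for name, (conc, size_bp) in libraries.items():
        nm = library_molarity_nm(conc, size_bp)  # 1 nM equals 1 fmol/µL
        volumes[name] = fmol_per_library / nm
    return volumes


def read_count_cv(read_counts):
    """Coefficient of variation (%) of per-sample read counts after a run."""
    return 100.0 * statistics.stdev(read_counts) / statistics.mean(read_counts)
```

Because molarity depends on fragment length as well as mass, two libraries with the same ng/µL reading but different average sizes need different pooling volumes, which is why the fragment size check matters.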

2. How can we prevent misassignment of reads to the wrong sample (barcode hopping) during demultiplexing?

- Problem: Barcode hopping, or index swapping, can lead to cross-contamination between samples, compromising data integrity.

- Solutions:

- Use Unique Dual Indexes (UDIs): Employ adapter sets in which each i5 index and each i7 index is assigned to only one sample in the pool. This virtually eliminates the risk of misassignment because a single swap event produces an index pair that matches no sample, so the read is discarded rather than misassigned [33].

- Design Orthogonal Barcodes: Select a barcode set where each index is maximally different from all others in sequence. This ensures that even with a sequencing error in the barcode region, the read can be accurately assigned to the correct sample [33].

- Follow Platform-Specific Guidelines: Adhere to the recommended workflows and chemistries from your sequencing platform provider (e.g., PacBio, Illumina, Oxford Nanopore) that are validated for robust multiplexing [33].
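Two of the checks above lend themselves to a short sketch: measuring how different the barcodes in a set are, and rejecting index pairs that do not belong to any sample. The example uses Hamming distance as the difference measure, which is one common choice and an assumption here, since the source does not name a metric. All function names and the sample-sheet layout are hypothetical.

```python
from itertools import combinations


def hamming(a, b):
    """Number of mismatching positions between two equal-length barcodes."""
    if len(a) != len(b):
        raise ValueError("barcodes must have equal length")
    return sum(x != y for x, y in zip(a, b))


def min_pairwise_distance(barcodes):
    """Smallest Hamming distance between any two barcodes in the set."""
    return min(hamming(a, b) for a, b in combinations(barcodes, 2))


def assign_sample(i7, i5, sample_sheet):
    """Return the sample for an exact (i7, i5) pair, or None.

    With unique dual indexes, a pair mixing indexes from two samples
    matches no entry and is rejected.
    """
    return sample_sheet.get((i7, i5))
```

A set whose minimum pairwise distance is d can tolerate up to (d - 1) // 2 substitution errors in the index read before two barcodes become ambiguous, so a larger minimum distance gives more room for error-tolerant demultiplexing.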

3. Our shallow shotgun sequencing of host-derived samples (e.g., swabs) yields a high percentage of host DNA. How can we improve microbial data yield cost-effectively?

- Problem: A high proportion of host DNA consumes sequencing depth, reducing the effective microbial coverage and increasing costs.

- Solutions:

- Host DNA Depletion: Use commercial kits designed to selectively remove host (e.g., human) DNA prior to library preparation. For example, the HostZERO Microbial DNA Kit has been successfully used in metagenomic studies of respiratory samples [36].

- Adjust Pooling Strategy: If host depletion is not fully effective, add a proportionally larger amount of the libraries expected to have high host DNA content to the pool. This raises their total read count so that enough microbial reads remain after host reads are discarded, although their microbial read count may still be lower than that of low-host samples.
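The effect of host DNA on required depth is simple arithmetic, sketched below. The host fractions would in practice come from a pilot run or prior data; the function names and the example values in the comments are illustrative assumptions.

```python
def total_reads_needed(target_microbial_reads, host_fraction):
    """Total reads required so that the non-host share reaches the target.

    host_fraction is the expected proportion of host reads (0 <= f < 1).
    """
    if not 0.0 <= host_fraction < 1.0:
        raise ValueError("host_fraction must be in [0, 1)")
    return target_microbial_reads / (1.0 - host_fraction)


def pooling_weights(host_fractions):
    """Relative share of the pool per sample so that every sample is
    expected to yield the same number of microbial reads.

    `host_fractions` maps a sample name to its expected host fraction.
    """
    raw = {s: 1.0 / (1.0 - f) for s, f in host_fractions.items()}
    total = sum(raw.values())
    return {s: w / total for s, w in raw.items()}


# Example: a sample with 90% host reads needs about ten times the total
# depth of a host-free sample to reach the same microbial read count.
```

Full compensation quickly becomes expensive at very high host fractions, which is why depletion before library preparation is usually the first measure and pooling adjustment the second.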

4. What are the key advantages of automating the library preparation process for high-plex multiplexing?

- Problem: Manual library prep for dozens to hundreds of samples is time-consuming, prone to error, and lacks reproducibility.

- Solutions:

- Improved Reproducibility and Traceability: Automated systems like the G.STATION NGS Workstation perform liquid handling with high precision, minimizing well-to-well and run-to-run variability and providing a traceable record [35].

- Dramatically Reduced Hands-on Time: Automation can reduce hands-on time from hours to minutes, freeing up skilled personnel for data analysis [35].

- Reagent and Sample Savings: Non-contact dispensers can work in the nanoliter range, enabling assay miniaturization and significant savings on precious reagents and samples [35].

Advanced Strategy: Integrating Multiplexing into a Shallow Shotgun Workflow

For a research project focusing on the vaginal microbiome using shallow shotgun sequencing, the following protocol was implemented, demonstrating the practical application of these strategies [4].

Detailed Methodology:

- Sample Lysis and DNA Extraction: ZymoBIOMICS DNA/RNA Miniprep Kit was used according to the manufacturer's instructions, with a modified, extended bead-beating step (40 minutes) to ensure robust lysis of a wide range of microbial cells [4].

- Library Preparation and Barcoding: For Oxford Nanopore sequencing, the ligation sequencing kit (SQK-LSK109) was used. Barcoding was performed using the EXP-NBD196 expansion kit, pooling 12-16 samples per flow cell. The Short Fragment Buffer (SFB) was used in the clean-up following adapter ligation so that short and long DNA fragments were retained equally [4].

- Library Pooling and Quantification: After barcoding, libraries were quantified and pooled in equimolar amounts based on accurate fluorometric quantification to ensure even representation.

- Sequencing and Demultiplexing: The pooled library was sequenced on a Nanopore GridION with R9.4.1 flow cells. Basecalling and demultiplexing (the separation of pooled reads back into individual sample files based on their barcodes) were performed in real time using the MinKNOW software [4].

Outcome: This multiplexed shallow shotgun approach (≤ 1M reads per sample) provided species-level resolution of the vaginal microbiome, allowing for precise classification into Community State Types (CSTs) and detection of key pathogens like Gardnerella vaginalis with high sensitivity, all while maintaining cost-effectiveness suitable for larger-scale studies [4].

This technical support center provides troubleshooting guides and frequently asked questions for researchers constructing bioinformatic pipelines, with a special focus on protocols for cost-effective shallow shotgun sequencing.

Frequently Asked Questions (FAQs)

Data Generation & Experimental Design

Q1: What are the key practical differences between 16S rRNA and shotgun metagenomic sequencing for a cost-effective study?

The choice between these methods depends on your research goals, budget, and bioinformatics capabilities. The table below summarizes the critical differences.

Table: Comparison of 16S rRNA and Shotgun Metagenomic Sequencing

| Factor | 16S rRNA Sequencing | Shotgun Metagenomic Sequencing |
|---|---|---|
| Cost per Sample | ~$50 USD [24] | Starting at ~$150 USD; shallow shotgun can approach 16S cost [24] |
| Taxonomic Resolution | Bacterial genus (sometimes species) [24] | Bacterial species and sometimes strains [24] |
| Taxonomic Coverage | Bacteria and Archaea only [24] | All taxa, including bacteria, fungi, viruses, and archaea [24] |
| Functional Profiling | No direct profiling (only prediction) [24] | Yes, direct profiling of microbial genes and metabolic pathways [24] |
| Bioinformatics Complexity | Beginner to Intermediate [24] | Intermediate to Advanced [24] |
| Sensitivity to Host DNA | Low [24] | High; requires mitigation through sequencing depth or protocols [24] |

For cost-effective studies aiming for taxonomic and functional profiles, shallow shotgun sequencing has emerged as a powerful compromise, providing over 97% of the compositional and functional data of deep sequencing at a cost similar to 16S rRNA sequencing [24].

Q2: How can I optimize an enrichment protocol for low-quality, low-endogenous DNA samples, such as in paleogenomics?

Research on ancient DNA (aDNA) provides key insights for handling challenging samples. For libraries with very low endogenous DNA content (e.g., <27%), pooling up to four libraries and performing two rounds of in-solution hybridization enrichment has been shown to be both reliable and cost-effective [37]. Conversely, for libraries with higher endogenous content (>38%), a single round of enrichment is recommended to preserve library complexity and cost-efficiency, as a second round can lead to preferential re-capture of already-amplified molecules [37]. Furthermore, the commercial "Twist Ancient DNA" reagent has been benchmarked and shows robust enrichment of approximately 1.2 million target SNPs without introducing significant allelic bias, which is critical for downstream population genetics analyses [37].

Data Preprocessing & Quality Control

Q3: What are the essential quality control (QC) steps for raw sequencing data, and what tools can I use?

Quality control is a non-negotiable first step and should be performed at multiple stages of the pipeline. A three-stage QC strategy (at the raw data, alignment, and variant calling stages) is considered best practice [38]. For raw FASTQ data, the following metrics are crucial [38]:

- Base Quality Scores: The median base quality score (Phred score) should typically be >30 across reads. A sudden drop in quality can indicate adapter contamination or fluidics problems during the sequencing run [38].

- Nucleotide Distribution: The proportion of A, T, C, and G should be relatively stable across sequencing cycles. Major fluctuations often indicate issues [38].

- GC Content: The GC percentage should match the expected value for your sample type (e.g., ~49-51% for human exome regions). Abnormal GC content can indicate contamination [38].

- Duplication Rate: A high rate of PCR duplicates suggests low library complexity or over-amplification [38].

Tools like FastQC are standard for generating these metrics [38]. For automated filtering, trimming, and error correction, AfterQC offers advanced functions such as detection of bubble artifacts (which are common on Illumina NextSeq sequencers) and error correction based on overlapping regions in paired-end reads [39].
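To make the raw-data metrics concrete, the sketch below computes three of them from reads already loaded into memory. It is a simplified illustration, not a substitute for FastQC or AfterQC: it assumes Phred+33 quality encoding, counts only exact-sequence duplicates, and uses hypothetical function names.

```python
import statistics
from collections import Counter


def phred_scores(quality_string, offset=33):
    """Decode a FASTQ quality string into Phred scores (Phred+33 assumed)."""
    return [ord(c) - offset for c in quality_string]


def fastq_qc(records):
    """Summarize (sequence, quality_string) pairs.

    Returns the median base quality, GC percentage, and the percentage of
    reads that are exact duplicates of an earlier read.
    """
    all_quals = []
    gc_bases = total_bases = 0
    seq_counts = Counter()
    for seq, qual in records:
        all_quals.extend(phred_scores(qual))
        gc_bases += sum(base in "GC" for base in seq.upper())
        total_bases += len(seq)
        seq_counts[seq] += 1
    n_reads = sum(seq_counts.values())
    duplicates = n_reads - len(seq_counts)
    return {
        "median_quality": statistics.median(all_quals),
        "gc_percent": 100.0 * gc_bases / total_bases,
        "duplication_percent": 100.0 * duplicates / n_reads,
    }
```

A Phred score Q corresponds to an error probability of 10^(-Q/10), so the >30 guideline above means fewer than one expected error per 1,000 bases.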

Q4: A large proportion of my reads are being filtered out. What could be the cause?

A high loss of reads during preprocessing can stem from several issues. Consult the troubleshooting guide below for common causes and solutions.

Table: Troubleshooting Guide for High Read Loss

| Symptom | Potential Causes | Solutions and Checks |
|---|---|---|
| Sudden drop in base quality at read ends [38]. | Signal degradation in later sequencing cycles. | Implement read trimming using tools like Trimmomatic or AfterQC [38] [39]. |
| Abnormal nucleotide distribution or GC content [38]. | Adapter contamination, library preparation bias, or sample cross-contamination. | Use tools like Cutadapt or AfterQC to detect and remove adapters. Verify sample integrity and library prep protocol [39]. |
| High levels of PCR duplicates. | Over-amplification during library prep or insufficient starting material. | Check library complexity metrics. Consider reducing PCR cycles or using duplicate-marking tools such as Picard MarkDuplicates [40]. |
| Low alignment rates. | Sample contamination, poor sequencing quality, or use of an inappropriate reference genome [40]. | Re-check raw data QC. Ensure the correct reference genome and alignment parameters are used. |
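The first row of the table recommends trimming low-quality read ends. The sketch below shows the general idea behind sliding-window quality trimming in simplified form. It is not the implementation used by Trimmomatic or AfterQC, and the window size, quality threshold, and function name are illustrative assumptions.

```python
def sliding_window_trim(seq, qual, window=4, min_mean_q=20, offset=33):
    """Cut a read at the first window whose mean quality is below threshold.

    Scans from the 5' end; everything from the start of the first failing
    window onward is removed. Reads shorter than `window` are returned
    unchanged. Phred+33 encoding is assumed.
    """
    scores = [ord(c) - offset for c in qual]
    for start in range(max(len(scores) - window + 1, 0)):
        window_scores = scores[start:start + window]
        if sum(window_scores) / window < min_mean_q:
            return seq[:start], qual[:start]
    return seq, qual
```

Comparing read lengths before and after a step like this shows how much of the read loss is due to quality decay alone, which helps separate it from the adapter or contamination causes listed in the other rows.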

Analysis & Profiling

Q5: What is a recommended tool for comprehensive profiling from shallow shotgun metagenomic data?