Unveiling the Hidden Microbiome: Advanced Strategies for Robust Detection of Low-Abundance Taxa

Abstract

The accurate detection and quantification of low-abundance microorganisms are critical for a comprehensive understanding of the microbiome's role in human health and disease. This article provides a systematic guide for researchers and drug development professionals, exploring the foundational challenges posed by these taxa, evaluating current methodological solutions from bioinformatics to sequencing technologies, and offering practical troubleshooting and optimization strategies. It further establishes a rigorous framework for the validation and benchmarking of analytical approaches, synthesizing key insights to enhance the reproducibility and biological relevance of microbiome studies, with significant implications for biomarker discovery and therapeutic development.

The Critical Challenge: Why Low-Abundance Taxa Are Pivotal in Microbiome Research

Low-abundance taxa represent the microbial "dark matter" of any microbiome. While often overlooked, these rare species are a reservoir of genetic and functional diversity, capable of dramatically influencing community stability and host health. Their detection and accurate characterization, however, present significant technical challenges. This technical support center is designed to provide researchers and drug development professionals with targeted troubleshooting guides and FAQs to overcome these hurdles, thereby advancing research into this critical component of the holobiont.

Technical Support & Troubleshooting FAQs

Sample Collection & Preparation

Q: How should I collect and store samples to best preserve the DNA of low-abundance taxa?

The integrity of your results is determined at the very first step: sample collection. For most sample types, including soil, feces, and tissue, immediate freezing at -80°C after collection is critical [1]. Samples should subsequently be shipped on dry ice to preserve nucleic acids. The only exception to this rule is when using a manufactured collection device containing a DNA-stabilizing buffer, which allows for short-term room-temperature storage and transport [1]. It is highly recommended that samples stored in home freezers be transferred to a stable -80°C environment as soon as possible, as the freeze-thaw cycles of typical household appliances can degrade the microbiome [1].

Q: How much sample is needed for reliable detection of rare species?

Sufficient sample mass is crucial for detecting low-abundance members of the community. The recommended minimum quantities are [1]:

- Fecal swabs: Ensure the swab is visibly discolored.

- Skin/Oral swabs: Rub swab back and forth vigorously for 30 seconds to 3 minutes, depending on the site.

- Rodent fecal samples: 2-3 frozen pellets.

- Soil/Tissue sample: 1.00 g or approximately 0.4 mL of tissue.

For low-biomass samples, it is advisable to submit a larger sample mass to account for potential troubleshooting steps during DNA extraction and library preparation [1].

DNA Extraction & Library Preparation

Q: What extraction method is best for maximizing the recovery of diverse, including low-abundance, microbes?

A robust bead-beating protocol is essential. The MO BIO PowerSoil DNA extraction kit, optimized for both manual and automated extractions on platforms like the Thermo Fisher KingFisher robot, is widely recommended [1]. The bead-beating step lyses particularly robust microbial cell walls (e.g., Gram-positive bacteria), so that the DNA extract represents the entire community rather than being biased toward easily lysed taxa [1].

Q: My final library yield is low. What are the most common causes and solutions?

Low library yield is a frequent bottleneck. The table below summarizes the primary causes and their corrective actions [2].

| Cause | Mechanism of Yield Loss | Corrective Action |
|---|---|---|
| Poor Input Quality | Enzyme inhibition from contaminants (salts, phenol). | Re-purify input; ensure high purity (260/230 > 1.8); use fresh wash buffers. |
| Inaccurate Quantification | Pipetting errors or overestimation of usable material. | Use fluorometric methods (Qubit) over UV (NanoDrop); calibrate pipettes. |
| Inefficient Adapter Ligation | Poor ligase performance or incorrect adapter-to-insert ratio. | Titrate adapter:insert ratios; ensure fresh ligase and buffer; optimize incubation. |
| Overly Aggressive Cleanup | Desired fragments are excluded during size selection. | Optimize bead-to-sample ratios; avoid over-drying beads. |

Sequencing & Data Analysis

Q: Which sequencing region and technology should I use for the most accurate profile?

While the 16S V4 region is a common choice because its length suits short-read Illumina sequencing (e.g., MiSeq), other regions may be more suitable for specific habitats. For instance, the V1-V3 region may provide better taxonomic classification for skin microbiota [1]. For the highest taxonomic resolution (species level) and to investigate functional potential, shotgun metagenomic sequencing is the gold standard, as it sequences all DNA in a sample without primer bias [1]. Emerging long-read technologies, like Oxford Nanopore's R10.4.1 flow cells, can also generate full-length 16S reads with a reported raw read accuracy above 99.5%, potentially improving classification [3].

Q: How many sequencing reads are sufficient to detect low-abundance taxa?

There is no universal number, as it depends on the complexity of your microbial community and the desired statistical power. However, general guidelines exist. A standard service might collect up to 5,000 raw reads, but for differential abundance analysis or complex communities, a "Huge" service targeting 20,000 reads or a "Bronto" service targeting 500,000 reads may be necessary to capture the rare biosphere [3]. It is important to note that over-sequencing can inflate the number of spurious OTUs, and samples with low reads should not be automatically discarded, as this may reflect a true biological state [1].
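
To make the depth question concrete, the sketch below estimates the chance of seeing a rare taxon at the read depths mentioned above. It assumes every read is an independent draw from the community (simple binomial sampling), which ignores extraction, PCR, and primer bias; the function name and the 0.01% example abundance are illustrative choices, not values from the cited sources.

```python
import math

def detection_probability(rel_abundance: float, reads: int, min_reads: int = 1) -> float:
    """Probability of observing at least `min_reads` reads from a taxon.

    Assumes each read is an independent draw with success probability
    `rel_abundance` (binomial sampling). This is a simplification.
    """
    # Probability of seeing fewer than `min_reads` reads from the taxon.
    p_fewer = sum(
        math.comb(reads, k) * rel_abundance**k * (1 - rel_abundance) ** (reads - k)
        for k in range(min_reads)
    )
    return 1.0 - p_fewer

# Hypothetical taxon at 0.01% relative abundance, at the three depths above.
for depth in (5_000, 20_000, 500_000):
    print(depth, round(detection_probability(1e-4, depth), 3))
```

Under these assumptions, a taxon at 0.01% relative abundance is seen at least once in roughly 39% of samples at 5,000 reads and roughly 86% at 20,000 reads, and is almost certain to appear at 500,000 reads. Requiring more than one read (for example `min_reads=10`, to survive a count filter) pushes the required depth considerably higher.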

Q: What are the best bioinformatic practices for analyzing low-abundance taxa?

The QIIME 2 platform is a powerful and widely-used tool for amplicon data analysis. Key steps for rare taxa include [4]:

- Using DADA2 to generate ASVs: This method provides single-nucleotide resolution, which is more accurate than traditional OTU clustering for distinguishing closely related, rare species.

- Avoiding excessive rarefaction: This can artificially remove rare sequences.

- Careful interpretation: Tools like ANCOM or LEfSe can identify differentially abundant features, but their results with very low-abundance taxa should be interpreted with caution and validated.

Essential Research Reagent Solutions

The following table details key reagents and kits critical for successful research into low-abundance taxa.

| Item | Function & Rationale |
|---|---|
| MO BIO PowerSoil DNA Kit | DNA extraction; includes bead-beating step for robust lysis of diverse cell walls, critical for an unbiased community profile [1]. |
| Zymo DNA/RNA Shield | Sample preservation; stabilizes nucleic acids in samples immediately upon collection, preventing degradation and shifts in community structure. |
| Duolink PLA Probemaker Kit | Protein-protein interaction detection; allows for custom conjugation of PLA oligonucleotides to antibodies for detecting interactions involving rare taxa or their products [5]. |
| SequalPrep 96-well Plate Kit | PCR clean-up and normalization; enables high-throughput normalization of samples before pooling, ensuring even sequencing coverage [1]. |
| Zymo OneStep PCR Inhibitor Removal Kit | DNA purification; specifically designed to remove common contaminants from complex samples like soil and feces that can inhibit downstream enzymes [3]. |

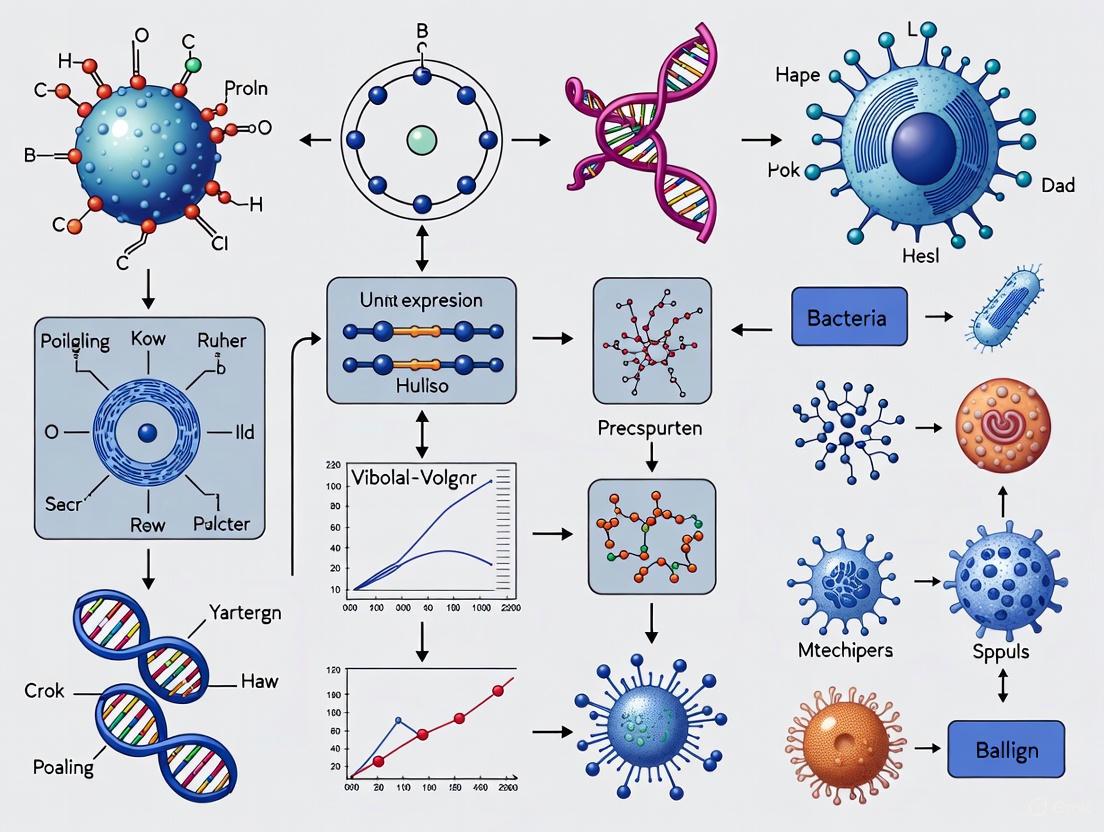

Experimental Workflow for Low-Abundance Taxa Research

The following diagram illustrates the integrated experimental and computational workflow designed to maximize the detection and accurate characterization of low-abundance microbial taxa.

Advanced Detection & Validation Methodologies

Statistical Estimation of Total Diversity

When your sequencing depth is insufficient to capture the full extent of diversity, statistical estimators can infer the number of unseen species. The ARC (Accumulation Rate Curve) estimator is a recently developed tool that models the rate of species accumulation to estimate total species richness. It is particularly effective in sparse data scenarios with a high proportion of unobserved species, though its performance can decrease if the underlying data distribution differs significantly from a log-normal model [6].
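
The ARC estimator itself is not reproduced here. As a stand-in that illustrates the general idea of inferring unseen richness from the rarest observed features, the sketch below computes the classic bias-corrected Chao1 estimator, a different and much older method that gives a lower bound on total richness from singleton and doubleton counts. The function name and example counts are illustrative.

```python
def chao1(counts):
    """Bias-corrected Chao1 richness estimate for one sample.

    counts: iterable of per-taxon read counts (zeros are ignored).
    Uses S_obs + F1 * (F1 - 1) / (2 * (F2 + 1)), where F1 and F2 are the
    numbers of taxa observed exactly once and exactly twice.
    """
    observed = [c for c in counts if c > 0]
    s_obs = len(observed)
    f1 = sum(1 for c in observed if c == 1)  # singletons
    f2 = sum(1 for c in observed if c == 2)  # doubletons
    return s_obs + f1 * (f1 - 1) / (2 * (f2 + 1))

# Seven observed taxa, three singletons, two doubletons.
print(chao1([10, 5, 1, 1, 1, 2, 2]))  # 8.0
```

Because singleton counts are strongly affected by sequencing error and by denoising choices, any estimate of this kind should be computed on data whose singletons are believed to be real.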

Targeted Validation with qPCR and Microfluidics

Quantitative PCR (qPCR) is an essential complement to sequencing. It provides absolute abundance of specific microbial populations, allowing you to confirm whether a taxon that appears "low abundance" in relative terms is genuinely rare or is being dwarfed by a bloom of other species [1].

For functional validation, microfluidic soil chip systems offer a groundbreaking approach. These chips simulate soil pore spaces and allow for the direct observation and manipulation of microbial interactions. A pioneering study used UV-induced phototoxicity to selectively suppress a low-abundance keystone protist (Hypotrichia), directly demonstrating its disproportionate role in preventing "mesopredator release" and maintaining fungal diversity [7]. This technology provides a platform to move from correlation to causation in low-abundance taxon research.

Functional Inference from Taxonomic Data

While shotgun metagenomics directly assays gene content, functional potential can be predicted from 16S rRNA data using tools like PICRUSt (Phylogenetic Investigation of Communities by Reconstruction of Unobserved States) [8]. For example, this method revealed an increased abundance of antibiotic resistance-related genes in the grapevine leaf microbiome when challenged by a fungal pathogen, highlighting a functional shift that could be linked to low-abundance taxa [8].

Core Concepts: Keystone Pathogen Hypothesis FAQ

What is the Keystone Pathogen Hypothesis? The keystone pathogen hypothesis proposes that certain low-abundance microbial pathogens can orchestrate inflammatory disease by remodelling a normally benign microbiota into a dysbiotic, or imbalanced, state. Their impact on the community is disproportionately large relative to their abundance [9] [10].

How does a keystone pathogen differ from a dominant pathogen? Unlike dominant pathogens that cause disease by becoming the numerically predominant member of the microbiota, a keystone pathogen can instigate inflammation and dysbiosis even when present as a quantitatively minor component [9]. Its influence is defined by its function and interaction with the host, not its biomass.

What is a real-world example of a keystone pathogen? Porphyromonas gingivalis in periodontitis is a canonical example. In mouse models, this bacterium, at very low colonization levels (<0.01% of the total bacterial count), subverts the host immune system, allowing for uncontrolled growth of the commensal microbiota. This leads to a dysbiotic community that drives destructive inflammation and bone loss, the hallmark of periodontitis [9] [11].

Why is detecting low-abundance taxa so challenging? Low-abundance taxa are difficult to detect and quantify for several reasons, as outlined in the table below.

Table 1: Key Challenges in Low-Abundance Taxa Research

| Challenge | Description |
|---|---|
| Technical Noise | PCR and sequencing errors can create spurious operational taxonomic units (OTUs), disproportionately inflating the perceived diversity of rare species [12]. |
| Low Reliability | Low-abundance OTUs are often inconsistently detected in technical replicates of the same sample, reducing the reliability of datasets [12]. |
| Computational Limits | Naive assembly of deep metagenomic datasets to find rare species requires immense computational resources (hundreds of GB to TB of RAM) [13]. |
| Compositional Effects | Microbiome data is compositional (relative), meaning an increase in one taxon appears as a decrease in all others, making it hard to identify true "driver" taxa [14]. |
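
One common way to work with compositional data is the centered log-ratio (CLR) transform, which expresses each taxon relative to the geometric mean of the sample. The sketch below is a minimal illustration, not the method of any tool cited here; the pseudocount of 0.5 is an assumed convention for handling zeros, and other choices are possible.

```python
import math

def clr(counts, pseudocount=0.5):
    """Centered log-ratio transform of one sample's counts.

    A pseudocount is added so that zero counts can be log-transformed;
    the value 0.5 is an assumption, not a recommendation.
    """
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)  # log of the geometric mean
    return [v - mean_log for v in logs]

# With no pseudocount, the result does not depend on sequencing depth:
shallow = clr([10, 20, 70], pseudocount=0)
deep = clr([100, 200, 700], pseudocount=0)
print(shallow)
print(deep)
```

The two printed vectors agree up to floating-point error, which is the property that makes log-ratios attractive for relative data. With a nonzero pseudocount, very low counts are shifted noticeably, so results for rare taxa remain sensitive to that choice.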

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Resources for Keystone Pathogen Research

| Reagent / Resource | Function in Research |
|---|---|
| C5a Receptor Antagonist | A research tool used to inhibit the complement C5a receptor (C5aR). It can reverse P. gingivalis-induced dysbiosis in mouse models, validating the host immune pathway as a therapeutic target [9]. |
| Gingipain-based Vaccine | An experimental vaccine targeting P. gingivalis gingipain enzymes. In non-human primates, it reduced bone loss and total bacterial load, demonstrating the keystone pathogen's role in stabilizing the dysbiotic community [9]. |
| ChronoStrain Database | A custom database of marker sequence "seeds" (e.g., virulence factors, core genes) used by the ChronoStrain algorithm to profile strain-level abundances in longitudinal metagenomic studies [15]. |
| Latent Strain Analysis (LSA) | A computational de novo pre-assembly method that partitions sequencing reads from different genomes in fixed memory, enabling the detection of bacterial strains present at relative abundances as low as 0.00001% [13]. |
| ZicoSeq | An optimized differential abundance analysis (DAA) method designed to control for false positives across diverse settings while maintaining high statistical power, addressing the challenges of compositional data and zero inflation [14]. |

Troubleshooting Guides & Experimental Protocols

FAQ: How can I improve the reliability of my low-abundance OTU data in 16S amplicon studies?

Problem: Low-abundance OTUs show poor detection agreement between technical replicates, leading to unreliable data.

Solution: Implement a data filtering strategy to remove likely spurious OTUs.

- Recommended Protocol: Based on a systematic evaluation of reliability and variability in 16S rRNA amplicon sequencing [12]:

- Sequence your samples in multiple replicates where feasible.

- Filter your OTU table by removing any OTU with a read count below 10 in an individual sample. This simple threshold significantly improves reliability.

- Expected Outcome: This method increased OTU detection reliability from 44.1% to 73.1%, while removing only 1.12% of total reads, preserving most of your sequencing data [12].

FAQ: What is the best method for strain-level tracking of a low-abundance pathogen over time?

Problem: Standard metagenomic profiling tools lack the sensitivity and temporal modeling to accurately track low-abundance strains in longitudinal studies.

Solution: Use a Bayesian method that incorporates temporal information and base-call quality scores.

- Recommended Protocol: The ChronoStrain pipeline for longitudinal strain profiling [15]:

- Inputs: Provide raw FASTQ files with quality scores, sample metadata with collection timepoints, and a database of genome assemblies or marker seeds for your target strains.

- Bioinformatic Processing: ChronoStrain constructs a custom marker database and filters reads against it.

- Bayesian Modeling: The model uses a stochastic process to estimate a probability distribution over abundance trajectories for each strain, explicitly modeling its presence or absence.

- Output: The algorithm outputs a presence/absence probability and a probabilistic abundance timeseries for each strain, significantly improving the detection of low-abundance taxa compared to state-of-the-art methods [15].

The following workflow diagram illustrates the ChronoStrain pipeline.

FAQ: How does a keystone pathogen like P. gingivalis actually cause dysbiosis?

Problem: The molecular mechanism by which a low-abundance pathogen triggers community-wide dysbiosis is unclear.

Solution: The mechanism involves sophisticated subversion of the host immune system.

- Experimental Evidence: The established mechanism for P. gingivalis in mouse periodontitis involves a targeted disruption of the complement-Toll-like receptor (TLR) signaling crosstalk [9] [11]:

- Complement Subversion: P. gingivalis secretes gingipain proteases that act as a C5 convertase, generating high local levels of the anaphylatoxin C5a.

- Receptor Crosstalk: C5a engages the C5a receptor (C5aR) on neutrophils. This signaling crosstalks with TLR2, which is simultaneously activated by P. gingivalis surface ligands.

- Immune Suppression: This crosstalk blocks the intracellular killing capacity of neutrophils, impairing their ability to clear not only P. gingivalis but also the rest of the commensal community.

- Dysbiotic Expansion: The unchecked growth of the microbiota leads to inflammation and tissue destruction. The resulting breakdown products (e.g., degraded proteins and heme) fuel the growth of proteolytic and asaccharolytic bacteria, stabilizing the dysbiotic state [9].

The diagram below summarizes this host-subversion mechanism.

Advanced Methodologies: Detecting the Needle in the Haystack

For projects requiring de novo discovery of very low-abundance strains without a reference genome, methods like Latent Strain Analysis (LSA) are critical.

- LSA Experimental Workflow [13]:

- Pool Samples: Combine metagenomic data from multiple samples to increase the chance of detecting rare organisms.

- k-mer Analysis: Break all sequencing reads down into short k-mers (subsequences of length k).

- Streaming Singular Value Decomposition (SVD): Perform a fixed-memory SVD on the k-mer abundance matrix across samples to identify latent variables called "eigengenomes," which represent covarying groups of k-mers from the same underlying genome.

- Read Partitioning: Use the eigengenomes to partition all sequencing reads into biologically informed clusters.

- Assembly: Assemble each read partition individually, making the assembly of genomes from taxa at abundances as low as 0.00001% computationally feasible [13].
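
The k-mer step of this workflow can be illustrated with a toy example. The sketch below only builds the k-mer by sample abundance matrix that a decomposition would operate on; it does not implement LSA's hashing, streaming SVD, or read partitioning, and the function names and tiny reads are hypothetical.

```python
from collections import Counter

def kmer_counts(reads, k):
    """Count every length-k substring across a list of reads."""
    counts = Counter()
    for read in reads:
        for i in range(len(read) - k + 1):
            counts[read[i:i + k]] += 1
    return counts

def kmer_abundance_matrix(samples, k):
    """Build a matrix with one row per k-mer and one column per sample.

    samples: {sample_name: [read, ...]}
    Returns (kmers, sample_names, matrix).
    """
    per_sample = {name: kmer_counts(reads, k) for name, reads in samples.items()}
    kmers = sorted(set().union(*per_sample.values()))
    names = list(samples)
    matrix = [[per_sample[name][kmer] for name in names] for kmer in kmers]
    return kmers, names, matrix

kmers, names, matrix = kmer_abundance_matrix(
    {"s1": ["ACGT"], "s2": ["CGTA", "ACGT"]}, k=3
)
for kmer, row in zip(kmers, matrix):
    print(kmer, row)
```

Rows whose counts rise and fall together across many samples are the covarying k-mer groups that the decomposition step is designed to find. Real datasets contain billions of k-mers, which is why the published method works in fixed memory rather than materializing a matrix like this one.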

Table 3: Comparison of Strain-Level Profiling Methods

| Method | Key Approach | Best Use Case | Considerations |
|---|---|---|---|
| ChronoStrain [15] | Bayesian, time-aware modeling of quality-score filtered reads. | Longitudinal studies requiring high sensitivity for low-abundance strain tracking. | Requires sample timepoint metadata; improved interpretability for temporal blooms. |
| Latent Strain Analysis (LSA) [13] | Deconvolution of k-mer covariance (eigengenomes) for read partitioning. | Discovery-focused studies aiming to reconstruct very low-abundance genomes (relative abundances as low as 0.00001%) from large datasets. | Scalable to terabyte-sized datasets with fixed memory; can separate closely related strains. |
| StrainGST [15] | Mapping reads to a reference genome database and using unique SNPs. | Single-sample profiling when a high-quality reference database for target strains is available. | Performance can degrade for low-abundance strains or when references are incomplete. |

Troubleshooting Guide: Identifying and Managing Spurious OTUs

FAQ 1: What are spurious OTUs, and why are they a problem?

Spurious Operational Taxonomic Units (OTUs) are artificially generated sequences mistakenly identified as unique microbial taxa. They are a significant problem because they can drastically inflate estimates of microbial diversity. One study found that OTU clustering combined with singleton removal still resulted in approximately 50% (in mock communities) to 80% (in gnotobiotic mice) of taxa being spurious [16]. These artifacts can lead to incorrect biological interpretations, obscure true ecological patterns, and reduce the reproducibility of microbiome studies.

FAQ 2: What are the primary causes of noisy sequences and spurious OTUs?

The causes can be broken down into experimental and bioinformatic sources:

- Experimental and Sequencing Errors: These include PCR errors (such as point mutations and chimeras), sequencing platform errors, low DNA concentration leading to amplified background noise, and the presence of free environmental DNA in samples [16] [17].

- Bioinformatic Processing: The choice of analysis algorithm (OTU-clustering vs. Amplicon Sequence Variant (ASV) denoising) and its parameters significantly influences the number of spurious sequences generated [16] [18].

FAQ 3: How can I improve the reliability of OTU detection in my data?

The reliability of OTU detection, measured as the agreement in detecting an OTU across sample replicates, can be significantly improved by applying abundance-based filtering. One study showed that without any filtering, reliability was only 44.1%. Filtering OTUs with fewer than 10 reads in individual samples increased reliability to 73.1% while removing only 1.12% of total reads [19]. A dataset-wide relative abundance cutoff of 0.1% raised reliability further, to 87.7%, but removed 6.97% of total reads, so the per-sample count filter retains far more data for the reliability it gains [19].

FAQ 4: What is the difference between OTU-clustering and ASV-based methods?

The table below summarizes the key differences and performance metrics based on benchmarking studies:

| Feature | OTU-Clustering Methods (e.g., UPARSE) | ASV-Denoising Methods (e.g., DADA2, Deblur) |
|---|---|---|
| Core Principle | Clusters sequences based on a similarity threshold (e.g., 97%) [18]. | Uses statistical models to distinguish true biological sequences from errors, providing single-nucleotide resolution [18]. |
| Typical Output | OTUs (Operational Taxonomic Units). | ASVs (Amplicon Sequence Variants) or zOTUs (zero-radius OTUs). |
| Error Rate | Tends to achieve clusters with lower error rates but suffers from over-merging of distinct taxa [18]. | Has a consistent output but can over-split non-identical 16S rRNA gene copies from the same strain [18]. |
| Spurious Taxa | Generally higher fraction of spurious taxa compared to ASV methods [16]. | Generally lower fraction of spurious taxa, though this depends on the targeted gene region and barcoding system [16]. |
| Resemblance to Expected Community | High (led by UPARSE in benchmarking) [18]. | High (led by DADA2 in benchmarking) [18]. |

FAQ 5: Is there a recommended abundance threshold for filtering spurious sequences?

Yes, research on mock communities suggests that applying a relative abundance threshold of 0.25% is effective for preventing the analysis of most spurious taxa in both OTU- and ASV-based approaches. Using this cutoff has been shown to improve reproducibility and reduce variation in richness estimates by 38% compared to only removing singletons [16]. For an absolute count threshold, filtering OTUs with <10 reads in a sample is a practical and reliable option [19] [20].
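
A relative abundance cutoff of this kind can be sketched in a few lines. The example assumes the 0.25% threshold is applied within each sample, which is an interpretation rather than something stated above; the function name and counts are illustrative.

```python
def filter_relative_abundance(counts, min_fraction=0.0025):
    """Keep features whose share of the sample's reads is at least
    `min_fraction` (0.0025 corresponds to 0.25%).

    counts: {feature_id: read_count} for a single sample.
    """
    total = sum(counts.values())
    if total == 0:
        return {}
    return {f: n for f, n in counts.items() if n / total >= min_fraction}

# Hypothetical sample with 10,000 reads: C sits at 0.20%, D at 0.30%.
sample = {"A": 8950, "B": 1000, "C": 20, "D": 30}
print(filter_relative_abundance(sample))
```

Here feature C is removed and feature D is retained. Note that at 10,000 reads the 0.25% cutoff corresponds to 25 reads, so it is stricter than the 10-read rule at that depth and looser at depths below 4,000 reads.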

Quantitative Data on Spurious OTUs and Filtering Efficacy

The following tables summarize key quantitative findings from recent research to guide your experimental design and data analysis.

Table 1: Prevalence of Spurious Taxa in Different Community Types [16]

| Community Type | Analysis Method | Approximate Spurious Taxa | Recommended Filter |
|---|---|---|---|
| Mock Communities (in vitro) | OTU clustering with singleton removal only | ~50% | Remove taxa below 0.25% relative abundance |
| Gnotobiotic Mice (in vivo) | OTU clustering with singleton removal only | ~80% | Remove taxa below 0.25% relative abundance |
| Various Mocks | ASV analysis | Lower than OTUs, but variable | Remove taxa below 0.25% relative abundance |

Table 2: Impact of Low-Abundance OTU Filtering on Detection Reliability [19]

| Filtering Method | Reliability of Detection (% Agreement in Triplicates) | Percentage of Total Reads Removed |
|---|---|---|
| No filtering | 44.1% (SE=0.9) | 0% |
| Filter OTUs with <10 reads in a sample | 73.1% | 1.12% |
| Filter OTUs with <0.1% abundance in dataset | 87.7% (SE=0.6) | 6.97% |

Detailed Experimental Protocols

Protocol 1: A Standard Workflow for 16S rRNA Data Processing to Minimize Spurious OTUs

This protocol synthesizes steps from multiple methodological studies [16] [18] [19].

- Sequence Quality Control & Merging: Check sequence quality with FastQC. Merge paired-end reads using tools like USEARCH's `fastq_mergepairs` or VSEARCH.

- Primer & Length Trimming: Strip primer sequences using tools like `cutPrimers`. Perform length trimming to remove atypically long or short reads.

- Quality Filtering: Filter reads based on expected errors (e.g., `fastq_maxee_rate = 0.01` in USEARCH) and remove reads with ambiguous bases.

- Chimera Removal: Identify and remove chimeric sequences using tools like UCHIME or VSEARCH.

- Clustering/Denoising:

- OTU Approach: Cluster sequences into OTUs at 97% similarity using a robust algorithm like UPARSE or Average Neighborhood.

- ASV Approach: Denoise sequences using tools like DADA2 or Deblur to infer exact sequence variants.

- Abundance Filtering: Apply a low-abundance filter. It is recommended to remove features with fewer than 10 reads in a sample [19] [20] or with a relative abundance below 0.25% [16].

- Taxonomic Classification: Assign taxonomy to the filtered OTUs/ASVs using a reference database (e.g., SILVA, Greengenes).

- Diversity Analysis: Proceed with alpha- and beta-diversity analyses on the filtered abundance table.
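
The expected-error criterion in the quality filtering step can be illustrated directly from Phred scores. The sketch below sums per-base error probabilities, 10^(-Q/10), and compares the per-base rate with a threshold of 0.01; it mirrors the idea behind the filter rather than reproducing any tool's implementation, and it assumes Phred+33 encoded quality strings. The function names are hypothetical.

```python
def expected_errors(quality_string, phred_offset=33):
    """Sum of per-base error probabilities implied by Phred scores.

    Each character encodes Q = ord(char) - phred_offset, and the
    probability that the base call is wrong is 10 ** (-Q / 10).
    """
    return sum(10 ** (-(ord(ch) - phred_offset) / 10) for ch in quality_string)

def passes_maxee_rate(quality_string, max_rate=0.01, phred_offset=33):
    """True when expected errors per base do not exceed `max_rate`."""
    if not quality_string:
        return False
    rate = expected_errors(quality_string, phred_offset) / len(quality_string)
    return rate <= max_rate

print(passes_maxee_rate("IIII"))  # all Q40 bases
print(passes_maxee_rate("++++"))  # all Q10 bases
```

A read of uniform Q40 bases has an expected error rate of 0.0001 per base and passes, while a read of uniform Q10 bases has a rate of 0.1 per base and is discarded.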

Protocol 2: Benchmarked Comparison of Clustering and Denoising Algorithms

This protocol is based on a comprehensive benchmarking analysis [18].

- Data Selection: Use a complex, well-defined mock community (e.g., the HC227 community with 227 bacterial strains) as a ground truth for evaluation.

- Unified Preprocessing: Process all datasets through the same rigorous quality control, merging, and filtering steps to ensure a fair comparison.

- Algorithm Application: Analyze the preprocessed data using a panel of standard algorithms. For OTUs: include UPARSE, DGC (Distance-based Greedy Clustering), and Average Neighborhood. For ASVs: include DADA2, Deblur, and UNOISE3.

- Performance Metrics Evaluation: Compare the outputs of each algorithm based on:

- Error Rate: The number of erroneous sequences output.

- Over-splitting/Over-merging: The tendency to split one true biological sequence into multiple OTUs/ASVs or to merge distinct sequences into one.

- Resemblance to Expected Community: How closely the resulting microbial composition matches the known composition of the mock community using alpha and beta diversity measures.

Workflow and Decision Diagrams

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Research Reagents and Materials for Low-Biomass Microbiome Research

| Reagent / Material | Function / Application | Example Use-Case |
|---|---|---|
| Defined Microbial Mock Communities (e.g., ZymoBIOMICS) | Serves as a ground-truth control to validate sequencing and bioinformatic workflows, allowing for quantification of spurious OTUs and error rates [16] [18]. | Added to experimental samples as a positive control to benchmark laboratory and computational performance. |
| Free DNA Removal Solution (e.g., iQ-Check, Bio-Rad) | Enzymatically degrades free extracellular DNA present in a sample, reducing a potential source of contaminating sequences and spurious OTUs [16]. | Treatment of samples prior to DNA extraction, particularly crucial for low-biomass environments. |
| High-Fidelity DNA Polymerase | Reduces PCR errors introduced during amplification, thereby minimizing one source of sequence noise that can lead to spurious OTUs [17]. | Used during the PCR amplification step of library preparation to ensure high-fidelity copying of 16S rRNA genes. |
| Phylogenetic Tree (e.g., built with FastTree2) | Provides evolutionary relationships between sequences, which can be leveraged in bioinformatic tools to improve the power of association tests by borrowing information from related taxa [21]. | Used in advanced association tests like POST to guide the analysis and enhance the detection of outcome-associated OTUs. |

Frequently Asked Questions

What is the core trade-off in replicate analyses for low-biomass studies? The core trade-off is between retaining sufficient data for robust biological interpretation and applying stringent filters to reduce technical noise and contamination. Overly aggressive filtering can discard authentic low-abundance taxa, while insufficient filtering allows contaminants to create false positives and reduce agreement between replicates [22] [23].

Why is replicate analysis particularly crucial for low-abundance taxa research? In low-biomass samples, the signal from true microbial DNA can be near the limit of detection. Contaminating DNA from reagents, kits, or the laboratory environment can therefore constitute a large proportion of the sequenced data, making replicate analysis essential to distinguish a consistent, authentic signal from stochastic noise [23].

Which differential abundance methods are most consistent for replicate analyses? A large-scale comparison of 14 differential abundance tools found that ALDEx2 and ANCOM-II produce the most consistent results across datasets and agree best with a consensus of different methods [22]. Using a consensus approach based on multiple methods is recommended for robust results [22].

What are the key negative controls to include in my experimental design? You should incorporate several types of controls [24] [23]:

- Kit/Reagent Controls: An aliquot of sterile water or buffer processed through the entire DNA extraction and library preparation pipeline.

- Sampling Controls: "Empty" collection vessels, swabs exposed to the air in the sampling environment, or swabs of personal protective equipment (PPE).

- Processing Controls: Samples of any preservation solutions or sampling fluids used.

How can I assess the trade-off in my own data? You can use a PERMANOVA test on beta-diversity distances to quantify how much of the variance in your data is explained by your sample groups versus your batch/replicate groups. A stronger sample group effect and a weaker batch effect indicate higher data quality and reliability [24].
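
For readers who want to see what PERMANOVA computes, the sketch below is a minimal one-factor implementation on a precomputed distance matrix. It returns the pseudo-F statistic, the share of variation explained by the grouping (R²), and a permutation p-value. It is written for illustration under simple assumptions (one grouping factor, no covariates or strata); established packages should be preferred for real analyses, and the function names and toy data are hypothetical.

```python
import random

def _ss_within(dist, labels):
    """Within-group sum of squares from pairwise distances."""
    groups = {}
    for idx, lab in enumerate(labels):
        groups.setdefault(lab, []).append(idx)
    ss = 0.0
    for members in groups.values():
        pair_sum = sum(
            dist[i][j] ** 2 for a, i in enumerate(members) for j in members[a + 1:]
        )
        ss += pair_sum / len(members)
    return ss, len(groups)

def permanova(dist, labels, permutations=999, seed=0):
    """One-factor PERMANOVA: returns (pseudo_F, R2, p_value)."""
    n = len(labels)
    ss_total = sum(dist[i][j] ** 2 for i in range(n) for j in range(i + 1, n)) / n

    def stats(labs):
        ss_w, a = _ss_within(dist, labs)
        ss_a = ss_total - ss_w  # among-group sum of squares
        f = float("inf") if ss_w == 0 else (ss_a / (a - 1)) / (ss_w / (n - a))
        return f, ss_a / ss_total

    f_obs, r2 = stats(labels)
    rng = random.Random(seed)
    shuffled = list(labels)
    hits = 0
    for _ in range(permutations):
        rng.shuffle(shuffled)  # break the link between samples and groups
        if stats(shuffled)[0] >= f_obs:
            hits += 1
    return f_obs, r2, (hits + 1) / (permutations + 1)

# Toy data: six samples on a line, two well-separated groups of three.
points = [0, 1, 2, 10, 11, 12]
dist = [[abs(p - q) for q in points] for p in points]
print(permanova(dist, ["g1", "g1", "g1", "g2", "g2", "g2"]))
```

To apply the idea in the answer above, run the test once with sample-group labels and once with batch or replicate labels on the same distance matrix, then compare the two R² values. With only six samples the smallest achievable p-value is limited by the number of distinct label arrangements, so small designs cannot yield very small p-values regardless of effect size.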

Troubleshooting Guides

Problem: Low Agreement Between Technical Replicates

Potential Causes and Solutions:

Cause: Contamination or Cross-Contamination

- Solution: Implement rigorous decontamination protocols. Use single-use, DNA-free consumables where possible. For re-usable equipment, decontaminate with 80% ethanol followed by a nucleic acid degrading treatment (e.g., bleach or UV-C light) [23]. Include and sequence negative controls to identify contaminant sequences.

- Solution: Use personal protective equipment (PPE) like gloves, masks, and clean suits to minimize contamination from the researcher [23].

Cause: Insufficient Sequencing Depth

- Solution: Check library sizes for all samples. If many samples have low total counts, consider filtering them out or using statistical methods that account for varying sequencing depth. Rarefaction or data transformations can help control for uneven sampling depth [25].

Cause: Inconsistent DNA Extraction

- Solution: To minimize variation, use the same batch of DNA extraction kits for all samples in a study. If this is not possible, store samples and extract all DNA at the same time [24].

Problem: Excessive Data Loss After Quality Filtering

Potential Causes and Solutions:

Cause: Overly Stringent Filtering Thresholds

- Solution: Rather than applying a single hard cutoff, use "independent filtering," where filtering is based on overall abundance and prevalence across all samples, independent of the test statistic. Adjust prevalence and abundance thresholds iteratively while monitoring the stability of core results [22] [25].

Cause: High Proportion of Rare Taxa

- Solution: Agglomerate data at a higher taxonomic rank (e.g., Genus or Family level) for specific analyses. This reduces the feature space and the burden of multiple-hypothesis testing while preserving broader biological signals [25].

Cause: Contamination Inflating Feature Counts

- Solution: Use prevalence-based or frequency-based decontamination tools (like the decontam R package) to identify and remove putative contaminants using your negative control samples, rather than blanket prevalence filters [25].

Methodologies and Data

Experimental Protocol: A Rigorous Workflow for Low-Biomass Replicate Analysis

This protocol is designed to maximize reliability from sample collection to data analysis [24] [23] [25].

Sample Collection:

- Decontaminate: Treat all sampling equipment with ethanol and a DNA-degrading solution.

- Use PPE: Wear gloves, mask, and a clean lab coat.

- Collect Controls: Immediately at the sampling site, collect negative controls (e.g., empty collection tube, air swab).

Sample Storage and DNA Extraction:

- Store samples consistently (e.g., all at -80°C) and use the same preservation method.

- Extract DNA from all samples and controls in a randomized order within a short timeframe using the same kit lot.

Sequencing and Bioinformatic Processing:

- Sequence samples and controls together on the same sequencing run.

- Process raw sequences through a standard pipeline (DADA2, QIIME2, etc.) to generate an Amplicon Sequence Variant (ASV) table.

Quality Control and Contamination Removal:

- Calculate library sizes and plot distributions to identify outliers.

- Apply a contamination removal tool (e.g., decontam) using the negative controls to identify and remove contaminant ASVs.

- Apply mild prevalence and abundance filtering (e.g., features must be present in at least 1-2 samples with a count of 2-3).
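The idea behind prevalence-based contaminant detection can be illustrated with a one-sided Fisher exact test that asks whether a feature is present more often in negative controls than in true samples. This sketch is not the decontam implementation; the function name, the presence counts, and the 0.05 cutoff are assumptions made for illustration.

```python
from math import comb

def fisher_greater(ctrl_present, ctrl_absent, samp_present, samp_absent):
    """One-sided Fisher exact p-value that presence is enriched in controls."""
    n_ctrl = ctrl_present + ctrl_absent
    n_present = ctrl_present + samp_present
    n_total = n_ctrl + samp_present + samp_absent
    upper = min(n_ctrl, n_present)
    # Hypergeometric tail: probability of seeing at least ctrl_present controls
    # among the n_present positive libraries if presence were unrelated to type.
    tail = sum(
        comb(n_ctrl, x) * comb(n_total - n_ctrl, n_present - x)
        for x in range(ctrl_present, upper + 1)
    )
    return tail / comb(n_total, n_present)

# Hypothetical presence counts with 4 negative controls and 8 true samples.
presence = {
    "ASV_reagent": (4, 0, 1, 7),  # in all controls, in one sample
    "ASV_genuine": (0, 4, 8, 0),  # in no controls, in every sample
}
p_values = {name: fisher_greater(*cells) for name, cells in presence.items()}
flagged = [name for name, p in p_values.items() if p < 0.05]
print(p_values, flagged)
```

With only a handful of negative controls the test has little power, which is one reason to collect several controls per batch.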

Analysis of Replicates:

- Calculate alpha and beta diversity metrics.

- Use PERMANOVA to test if replicate samples cluster more closely together than non-replicate samples.

- Perform differential abundance testing using a consensus of ALDEx2 and ANCOM-II [22].

Quantitative Data on Method Performance

Table 1: Comparison of Differential Abundance Tool Performance on 38 Microbiome Datasets [22]

| Tool | Input Data | Key Characteristic | Reported Consistency |

|---|---|---|---|

| ALDEx2 | Counts | Compositional (CLR transformation); Uses Wilcoxon test | High |

| ANCOM-II | Counts | Compositional (ALR transformation); Handles random effects | High |

| DESeq2 | Counts | Negative binomial model; RNA-seq adapted | Variable |

| edgeR | Counts | Negative binomial model; RNA-seq adapted | High FDR noted |

| LEfSe | Rarefied Counts | Non-parametric; LDA score; Often requires rarefaction | Variable |

Table 2: Essential Research Reagent Solutions for Low-Biomass Studies [24] [23]

| Reagent / Material | Function | Key Consideration |

|---|---|---|

| DNA-free Swabs & Tubes | Sample collection and storage. | Pre-treated (e.g., autoclaved, UV-irradiated) to minimize contaminant DNA. |

| Nucleic Acid Degrading Solution | Decontamination of surfaces and equipment. | Sodium hypochlorite (bleach) or commercial DNA removal solutions. |

| Sample Preservation Buffer | Stabilizes microbial DNA between collection and processing. | 95% ethanol, OMNIgene Gut kit, or other commercial buffers suitable for field storage [24]. |

| DNA Extraction Kit | Purification of microbial DNA from samples. | Use a single kit lot for entire study; kit itself is a major contamination source [24]. |

| Negative Control Reagents | Sterile water or buffer processed alongside samples. | Identifies contaminating DNA introduced from kits and laboratory reagents [23]. |

Workflow Visualization

The following diagram illustrates the logical workflow and trade-offs involved in a robust replicate analysis pipeline.

The study of microbiomes has predominantly focused on bacterial communities, often overlooking the critical roles played by archaea and fungi. These low-abundance taxa, however, are now recognized as significant contributors to ecosystem functioning and host health. Research into these organisms is complicated by their low biomass, which makes them highly susceptible to being masked by contamination and methodological artifacts. This technical support center provides targeted guidance to help researchers overcome the unique challenges associated with detecting and analyzing low-abundance archaea and fungi, thereby improving the reliability and reproducibility of your findings.

FAQs and Troubleshooting Guides

What are the most critical steps to prevent contamination in low-biomass microbiome studies?

Contamination control is paramount when working with low-biomass samples like those expected for archaea and fungi. Key steps must be taken during sample collection and DNA extraction [23].

FAQ: At which stages is my experiment most vulnerable to contamination? Contamination can be introduced at every stage, from sample collection to sequencing. Major sources include human operators, sampling equipment, reagents, kits, and the laboratory environment itself. Cross-contamination between samples during processing is also a significant risk [23].

Troubleshooting Guide: I am getting high levels of human DNA in my samples. How can I reduce this? Problem: High levels of host or human DNA in samples, which can overwhelm the signal from low-abundance microbial taxa. Solution:

- Use PPE: Researchers should cover exposed body parts with personal protective equipment (PPE) including gloves, cleansuits, and masks to limit contact and aerosol droplets [23].

- Decontaminate Equipment: Thoroughly decontaminate all tools and surfaces. A recommended protocol is decontamination with 80% ethanol to kill contaminating organisms, followed by a nucleic acid degrading solution (e.g., sodium hypochlorite/bleach) to remove trace DNA. Use single-use, DNA-free collection vessels where possible [23].

- Include Controls: Always include negative controls (e.g., an empty collection vessel, a swab of the air, or an aliquot of preservation solution) that are processed alongside your samples. These are essential for identifying the source and extent of contamination [23] [26].

How can I optimize my wet-lab protocol specifically for low-abundance archaea and fungi?

Standard protocols for high-biomass samples are often unsuitable. Optimization is required for sample collection, DNA extraction, and library preparation.

FAQ: Why can't I use my standard fecal DNA extraction kit for respiratory or tissue samples? High-biomass protocols often involve robotic automation which can lead to significant material loss in low-biomass samples. Low-biomass protocols require manual processing to maximize recovery and are optimized for different lysis conditions to break tough fungal cell walls [26].

Troubleshooting Guide: My DNA yields from fungal spores are consistently low. What can I improve? Problem: Low DNA yield from tough-to-lyse fungal or archaeal cells. Solution:

- Enhanced Lysis: Use a combination of mechanical and chemical lysis. For fungi, this typically involves bead-beating with zirconium beads (e.g., 0.1 mm) in a bead-beater, combined with chemical lysis buffers. This dual approach helps break down robust cell walls [26].

- Inhibit Degradation: Ensure samples are immediately frozen after collection (e.g., on dry ice or at -80°C) and aliquoted to avoid repeated freeze-thaw cycles, which degrade DNA [26].

- Use Positive Controls: Include a whole-cell positive control (e.g., ZymoBIOMICS Microbial Community Standard) to monitor the efficiency of your entire DNA extraction and sequencing workflow [26].

Which bioinformatic tools should I use for differential abundance analysis, and why do different tools give different results?

Choosing the right differential abundance (DA) tool is critical, as different methods can produce vastly different results. The choice depends on how the tool handles the core challenges of microbiome data.

FAQ: Why do I get different lists of significant taxa when I use different DA methods on the same dataset? Microbiome data is compositional, sparse (zero-inflated), and highly variable. DA methods use different statistical models and approaches to handle these properties. Some methods test for changes in "true absolute abundance," while others test for changes in "true relative abundance," leading to different interpretations and results [27] [28] [14].

Troubleshooting Guide: I am unsure which differential abundance method to trust for my analysis of fungal communities. Problem: Lack of consensus and consistency in DA tool results. Solution:

- Understand Method Types: Select methods that explicitly address compositional data. Tools like ALDEx2 and ANCOM-BC use compositional data analysis (CoDa) principles, such as log-ratio transformations, and generally show better false-positive control [28] [14].

- Use a Consensus Approach: Given that no single method is optimal for all scenarios, a robust strategy is to apply multiple DA methods (e.g., ALDEx2, ANCOM-BC, and a count-based model like corncob) and focus on the taxa that are consistently identified as significant across several of them [28].

- Filter Rare Taxa Judiciously: Apply prevalence filtering (e.g., retaining features present in at least 10% of samples) independently of your test statistic to reduce sparsity and improve power, but be aware that this can also influence results [28].
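The consensus approach reduces to counting how many methods flag each taxon. A minimal sketch, assuming each tool has already been run and its significant taxa exported as a list; the taxon names and the two-method threshold are hypothetical.

```python
from collections import Counter

def consensus_taxa(results, min_methods=2):
    """Return taxa reported as significant by at least `min_methods` methods."""
    votes = Counter(taxon for taxa in results.values() for taxon in set(taxa))
    return sorted(taxon for taxon, n in votes.items() if n >= min_methods)

# Hypothetical significant-taxa lists exported from three DA tools.
results = {
    "ALDEx2": ["GenusA", "GenusC"],
    "ANCOM-BC": ["GenusA", "GenusB", "GenusC"],
    "corncob": ["GenusA", "GenusB", "GenusD"],
}
print(consensus_taxa(results, min_methods=2))
print(consensus_taxa(results, min_methods=3))
```

Raising `min_methods` trades sensitivity for confidence, so it is worth reporting the threshold alongside the results.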

Table 1: Comparison of Common Differential Abundance Methods

| Method | Underlying Approach | Handling of Zeros | Addresses Compositionality? | Reported Performance |

|---|---|---|---|---|

| ALDEx2 | Bayesian, CLR transformation | Imputed with a prior | Yes (CLR) | Consistent results, good FDR control, lower power [28] [14] |

| ANCOM-BC | Linear model, log-ratio | Pseudo-count | Yes (Additive log-ratio) | Consistent results, good FDR control [28] [14] |

| DESeq2 / edgeR | Negative binomial model | Untreated (modeled as count) | Via robust normalization (e.g., RLE, TMM) | Can have high FDR; power depends on setting [28] [14] |

| MaAsLin2 | Generalized linear model | Pseudo-count | Via normalization | Variable performance across datasets [28] |

| corncob | Beta-binomial model | Modeled as count | Via normalization | Flexible for modeling variability [14] |
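The centered log-ratio (CLR) transformation listed in the table can be sketched in a few lines. This simplified version uses a fixed pseudocount to handle zeros, which is an assumption for illustration; ALDEx2 itself handles zeros differently, as the table notes.

```python
import math

def clr(counts, pseudocount=0.5):
    """Centered log-ratio: log of each value minus the mean log across the sample."""
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)
    return [value - mean_log for value in logs]

# Hypothetical sample sequenced at two depths with the same underlying proportions.
shallow = [10, 20, 70]
deep = [100, 200, 700]
print(clr(shallow, pseudocount=0))
print(clr(deep, pseudocount=0))
```

Both calls give the same values because CLR depends only on ratios between taxa, not on total read count. That property is why log-ratio methods are described as addressing compositionality.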

Experimental Protocols for Key Experiments

Protocol 1: Reliable Microbial Profiling of Low-Biomass Samples

This protocol is adapted from established methods for upper respiratory tract samples and is applicable to other low-biomass settings, including the study of archaea and fungi in various environments [26].

1. Sample Collection and Storage:

- Collection: Use sterile, single-use swabs (e.g., COPAN eSwabs). For surface or tissue sampling, swab the area thoroughly. Submerge the swab in a suitable liquid transport medium (e.g., liquid Amies).

- Storage: Immediately place samples on dry ice and transfer to a -80°C freezer for long-term storage. Aliquot samples during the first thaw to avoid freeze-thaw cycles [26].

2. DNA Extraction:

- Lysis: Use a combination of mechanical and chemical lysis. Add samples to tubes containing zirconium beads (0.1 mm) and a lysis buffer. Process in a bead-beater (e.g., Mini-Beadbeater-24) for a defined period to ensure complete cell disruption.

- Purification: Purify DNA using a magnetic bead-based cleanup system (e.g., Binding buffer and Magnetic beads solution). Wash with appropriate buffers and elute in a low-volume elution buffer (e.g., from QIAGEN) to maximize DNA concentration [26].

3. 16S/ITS rRNA Gene Amplicon Sequencing:

- Amplification: Amplify the target gene (e.g., 16S V4 region for archaea/bacteria, ITS1/2 for fungi) using a high-fidelity DNA polymerase (e.g., Phusion Hot Start II).

- Library Preparation and Sequencing: Construct sequencing libraries following standard Illumina protocols. Use a MiSeq reagent kit v.3 (2x300 bp) for paired-end sequencing on an Illumina MiSeq platform [26].

Protocol 2: Metabolomic Profiling of Fungal Cultures

Metabolomics can reveal functional insights from fungi that are missed by DNA-based methods [29].

1. Sample Preparation:

- Rapid Sampling and Quenching: Rapidly sample from a bioreactor or culture. Quench metabolism immediately to stabilize metabolite levels. The cold methanol quenching method (60% v/v methanol at -40°C) is common, but be aware of potential metabolite leakage. Rapid filtration into liquid nitrogen is an alternative.

- Metabolite Extraction: Extract metabolites using a solvent system with high efficiency, such as a methanol/water (1:1) mixture. Lyophilize (freeze-dry) the sample before extraction for better reproducibility [29].

2. Instrumental Analysis:

- LC-MS Analysis: Use Liquid Chromatography-Mass Spectrometry (LC-MS) for broad coverage. A C18 column is standard for reverse-phase separation. Perform both full-scan MS1 (for metabolite fingerprinting) and data-dependent MS2 (for identification).

- Data Processing: Process raw data using software for peak picking, alignment, and annotation. Compare MS1 data against in-silico libraries of fungal metabolite masses for identification [29].

Workflow and Pathway Diagrams

Diagram 1: Low-Biomass Research Workflow


Diagram 2: Contamination Control Strategy


The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Kits for Low-Biomass Microbial Research

| Item | Function / Purpose | Example Product / Specification |

|---|---|---|

| Sterile Sampling Swabs | Collect samples without introducing contaminants. | COPAN eSwabs (480CE, 482CE, 484CE) with liquid Amies medium [26]. |

| Zirconium Beads | Mechanical cell disruption for efficient lysis of tough fungal and archaeal cell walls during DNA extraction. | 0.1 mm beads for use in a bead-beater [26]. |

| Magnetic Bead DNA Cleanup Kit | Purify and concentrate low-yield DNA after extraction; more efficient for low volumes than column-based kits. | Kits with binding, wash, and elution buffers (e.g., from LGC Biosearch Technologies) [26]. |

| DNA Elution Buffer | Resuspend purified DNA in a stable, low-salt buffer compatible with downstream applications. | Low-EDTA TE buffer or commercial elution buffer (e.g., from QIAGEN) [26]. |

| Whole Cell & DNA Positive Controls | Monitor extraction efficiency and detect batch effects. A known community standard is essential. | ZymoBIOMICS Microbial Community Standard (D6300) and DNA Standard (D6306) [26]. |

| High-Fidelity DNA Polymerase | Accurate amplification of the target 16S/ITS region for sequencing with low error rates. | Phusion Hot Start II DNA Polymerase [26]. |

| Cold Methanol (-40°C) | Quench metabolic activity in fungal cultures for metabolomic studies to stabilize metabolite levels. | HPLC grade methanol for quenching [29]. |

| Methanol/Water (1:1) Solvent | Efficient extraction of a wide range of intracellular metabolites from fungal mycelia or spores. | Mixed solvent for metabolomic extraction [29]. |

From Theory to Practice: Cutting-Edge Wet-Lab and Computational Methods for Enhanced Detection

Technology Comparison at a Glance

The following table summarizes the core characteristics of the three major sequencing platforms, highlighting their key differences for research applications, particularly in detecting low-abundance taxa.

Table 1: Core Sequencing Technology Specifications

| Feature | Short-Read (Illumina) | Long-Read (PacBio HiFi) | Long-Read (Oxford Nanopore) |

|---|---|---|---|

| Typical Read Length | 50-300 bases [30] | 15,000-20,000 bases [31] | 1,000 to >1,000,000 bases; ultra-long reads possible [32] [33] |

| Primary Technology | Sequencing by synthesis (reversible terminators) [30] | Single Molecule, Real-Time (SMRT) sequencing with Circular Consensus Sequencing (CCS) [31] | Nanopore sensing; measures changes in ionic current [32] [34] |

| Typical Accuracy | >99.9% [33] | >99.9% [31] | 87-98%; recent chemistries report >99% [35] [33] |

| Key Advantage for Low-Abundance Taxa | High accuracy and established pipelines for high-throughput amplicon sequencing. | High accuracy combined with long reads for precise species-level classification [35]. | Ultra-long reads span repetitive regions; real-time analysis allows for adaptive sampling [32]. |

| Key Limitation for Low-Abundance Taxa | Short reads may not resolve closely related species, leading to ambiguous taxonomic assignments [35] [36]. | Generally lower throughput than Illumina; requires more DNA input [33]. | Higher raw error rates can complicate identification of rare taxa without specialized analysis tools [35]. |
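To see why the accuracy figures in the table matter for rare taxa, it helps to convert them into Phred scores and expected errors per read. The sketch below assumes a uniform per-base error rate and a hypothetical 1,500-base read (roughly a full-length 16S amplicon); real error profiles are not uniform.

```python
import math

def accuracy_to_phred(accuracy):
    """Phred quality score corresponding to a per-base accuracy."""
    return -10 * math.log10(1 - accuracy)

def expected_errors_per_read(accuracy, read_length):
    """Expected number of erroneous bases in a read, assuming uniform error."""
    return (1 - accuracy) * read_length

read_length = 1500  # hypothetical full-length 16S amplicon
for accuracy in (0.90, 0.98, 0.999):
    q = accuracy_to_phred(accuracy)
    errors = expected_errors_per_read(accuracy, read_length)
    print(f"accuracy={accuracy}: about Q{q:.0f}, ~{errors:.1f} expected errors per read")
```

At lower accuracies each read carries many errors, which is why error-aware analysis is needed before a low-count sequence is interpreted as a genuine rare taxon.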

Table 2: Performance in Microbial Community Profiling (e.g., 16S rRNA Sequencing)

| Aspect | Short-Read (Illumina) | Long-Read (PacBio & Nanopore) |

|---|---|---|

| Target Region | Hypervariable regions (e.g., V4, V3-V4) [35] | Nearly full-length 16S rRNA gene [35] [36] |

| Taxonomic Resolution | Often limited to genus level due to short read length [35] | Finer resolution, enabling more confident species-level identification [35] [36] |

| Ability to Detect Novel Taxa | Limited by the shortness of the sequence fragment [36] | Improved, as full-length gene provides more phylogenetic information [36] |

| Representative Finding | In soil microbiome studies, the V4 region alone failed to cluster samples by soil type [35]. | Full-length 16S sequencing clearly differentiates microbial communities by environment (e.g., soil type, lake basin) [35] [36]. |

Workflow Diagrams

Core Sequencing Workflows

Figure 1: Core sequencing workflows for short-read and long-read technologies.

Decision Pathway for Low-Abundance Taxa Research

Figure 2: Decision pathway for selecting sequencing technology in low-abundance taxa research.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: My microbiome study failed to resolve species-level differences. Could the sequencing technology be the cause? Yes. Short-read sequencing of partial 16S rRNA gene regions (e.g., V4) often lacks the resolution to distinguish between closely related bacterial species [35]. Switching to a full-length 16S rRNA approach using long-read sequencing can provide the necessary resolution for species-level classification and improve detection of low-abundance taxa [36].

Q2: For a first-time user, which long-read technology is more accessible? Oxford Nanopore's MinION offers a lower barrier to entry due to its portability, lower initial instrument cost, and rapid library preparation (under 10 minutes for some kits) [32]. However, for applications demanding consistently high accuracy, such as characterizing rare variants, PacBio HiFi may be preferable [31] [35].

Q3: Can I detect base modifications like methylation with these technologies? Yes, and this is a key differentiator for long-read technologies. Both PacBio and Nanopore can detect epigenetic modifications like 5mC from native DNA without additional treatments like bisulfite conversion [31] [34]. PacBio detects methylation by measuring polymerase kinetics [31], while Nanopore detects it through changes in the current signal as the modified base passes through the pore [34].

Q4: I am getting a high number of adapter dimers in my NGS library. What is the cause and how can I fix it? A high peak at ~70-90 bp in your electropherogram indicates adapter dimers. This is typically caused by an incorrect adapter-to-insert molar ratio or inefficient purification after ligation [2]. To fix this, titrate your adapter concentration, ensure proper cleanup using bead-based size selection with the correct bead-to-sample ratio, and verify that your input DNA is not degraded and is accurately quantified using a fluorometric method [2].
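Titrating adapters starts with converting insert mass to moles. The sketch below uses the common approximation of 660 g/mol per base pair of double-stranded DNA; the 10:1 ratio, 15 µM stock, and input amounts are hypothetical values for illustration, not kit recommendations.

```python
AVG_BP_MASS = 660.0  # approximate g/mol per base pair of double-stranded DNA

def ds_dna_pmol(mass_ng, length_bp):
    """Picomoles of double-stranded DNA molecules for a given mass and mean length."""
    return mass_ng * 1000.0 / (length_bp * AVG_BP_MASS)

def adapter_volume_ul(insert_ng, insert_bp, ratio, adapter_stock_um):
    """Adapter volume (µL) needed for a target adapter:insert molar ratio."""
    needed_pmol = ds_dna_pmol(insert_ng, insert_bp) * ratio
    return needed_pmol / adapter_stock_um  # 1 µM equals 1 pmol per µL

# Hypothetical library: 100 ng of 400 bp inserts, 10:1 adapter:insert, 15 µM stock.
insert_pmol = ds_dna_pmol(100, 400)
volume = adapter_volume_ul(100, 400, ratio=10, adapter_stock_um=15)
print(f"insert: {insert_pmol:.3f} pmol, adapter volume: {volume:.3f} µL")
```

Because the calculation scales with input mass, an overestimated DNA quantity leads directly to excess adapter, which is why fluorometric quantification is recommended above.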

Troubleshooting Common Experimental Issues

Table 3: Troubleshooting Common Sequencing Preparation Errors

| Problem | Possible Cause | Solution |

|---|---|---|

| Low Library Yield | Degraded DNA/RNA, contaminants (salts, phenol), inaccurate quantification [2]. | Re-purify input sample; use fluorometric quantification (Qubit) instead of UV absorbance; check sample quality via electrophoresis. |

| High Duplicate Rate (NGS) | Over-amplification during PCR, insufficient starting material [2]. | Reduce the number of PCR cycles; increase input DNA if possible. |

| Poor Sequence Quality | Low signal intensity, poor polymerase activity, contaminated reagents [37]. | Check template concentration (100-200 ng/µL for Sanger); ensure high-quality, clean templates. |

| Inability to Phase Haplotypes (Short-Reads) | Short read length prevents linking distant variants [33]. | Switch to long-read sequencing, which can phase haplotypes over long distances without the need for complex statistical methods or trio-based phasing [31]. |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Reagent Solutions for Sequencing-Based Microbial Diversity Studies

| Reagent / Kit | Function | Consideration for Low-Abundance Taxa |

|---|---|---|

| DNA Extraction Kit (e.g., ZymoBIOMICS, Quick-DNA) | Isolates high-quality genomic DNA from complex samples (soil, water). | Use kits with inhibitors removal to ensure pure DNA from low-biomass samples, critical for efficient library prep [36]. |

| 16S rRNA PCR Primers | Amplifies the target gene for amplicon sequencing. | For long-read sequencing, use primers targeting the near-full-length 16S gene (e.g., 27F/1492R) for maximum taxonomic resolution [35] [36]. |

| SMRTbell Prep Kit (PacBio) | Prepares DNA libraries for PacBio sequencing by creating circular templates [31]. | Enables HiFi sequencing, which provides the high accuracy needed to confidently distinguish rare taxa [31] [35]. |

| Ligation Sequencing Kit (Nanopore) | Prepares DNA libraries for Nanopore sequencing by adding motor proteins and adapters [32]. | The ability to sequence ultra-long reads helps resolve repetitive regions and complex genomic structures that may harbor novel, low-abundance organisms [34]. |

| Magnetic Beads (SPRI) | Purifies and size-selects DNA fragments after enzymatic reactions. | Critical for removing adapter dimers and other contaminants that can consume sequencing reads and reduce coverage for your target amplicons [2]. |

Detailed Experimental Protocols

Protocol: Full-Length 16S rRNA Amplicon Sequencing for High-Resolution Microbiome Profiling

This protocol is adapted from recent soil and freshwater microbiome studies that successfully used long-read sequencing for high-resolution taxonomic profiling [35] [36].

1. DNA Extraction:

- Use a dedicated microbial DNA extraction kit (e.g., Quick-DNA Fecal/Soil Microbe Microprep Kit) following the manufacturer's protocol [35].

- Critical Step: Include a negative extraction control (e.g., molecular grade water) to monitor for contamination, which is crucial when targeting low-abundance taxa.

- Quantify DNA using a fluorometer (e.g., Qubit) and check quality via agarose gel electrophoresis or Fragment Analyzer [35].

2. PCR Amplification:

- Use primers targeting the near-full-length 16S rRNA gene, for example the 27F/1492R primer pair.

- Perform PCR in triplicate 25 µL reactions to reduce bias. A typical reaction mix includes:

- 5-50 ng genomic DNA

- 1X High-Fidelity PCR Master Mix

- 0.5 µM of each primer

- Cycling Conditions:

- Initial denaturation: 95°C for 3-5 min

- 25-30 cycles of: 95°C for 30 sec, 55°C for 30 sec, 72°C for 60-90 sec [35]

- Final extension: 72°C for 5 min

- Critical Step: Minimize PCR cycles to reduce chimera formation, which can create false "rare" taxa.

3. Library Preparation and Sequencing:

- For PacBio: Pool amplicons, then prepare a SMRTbell library using the SMRTbell Prep Kit. Sequence on a Sequel IIe system with a 10-hour movie time to generate HiFi reads [35].

- For Nanopore: Purify amplicons with magnetic beads. Prepare the library using the Ligation Sequencing Kit and Native Barcoding Kit for multiplexing. Load onto a MinION or PromethION flow cell [35] [36].

Protocol: Mitigating GC-Bias in Shotgun Metagenomic Sequencing

Short-read technologies often show coverage dips in high-GC regions, which can lead to the under-representation of certain taxa [33]. To mitigate this:

- Use PCR-Free Library Prep Kits: Whenever possible, select library preparation protocols that avoid PCR amplification, as PCR is a major source of GC bias [2].

- Verify with QC: After library preparation, check the fragment size distribution using a Fragment Analyzer or Bioanalyzer to ensure a normal distribution without a skew toward short fragments [2].

- Employ K-mer Based Analysis: During bioinformatic analysis, use k-mer-based abundance correction tools to adjust for remaining sequence-based biases.

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between OTUs and ASVs?

Operational Taxonomic Units (OTUs) are clusters of similar sequences, traditionally defined by a 97% similarity threshold to approximate species-level diversity. This method groups sequences together, blurring minor variations [38] [39]. In contrast, Amplicon Sequence Variants (ASVs) are exact, error-corrected biological sequences that provide single-nucleotide resolution without relying on arbitrary clustering thresholds. ASVs represent unique biological entities within a microbial community [38] [40].
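The difference can be illustrated with a toy comparison between greedy 97% clustering and exact-sequence counting. This is a deliberately simplified sketch: it assumes equal-length, pre-aligned sequences and a basic abundance-ordered greedy scheme, and it is not the algorithm of any particular OTU tool. The sequences and abundances are hypothetical.

```python
def identity(a, b):
    """Fraction of matching positions; assumes equal-length, pre-aligned sequences."""
    return sum(x == y for x, y in zip(a, b)) / len(a)

def greedy_otus(abundances, threshold=0.97):
    """Assign each sequence to the first centroid within `threshold`, most abundant first."""
    centroids = {}
    for seq, n in sorted(abundances.items(), key=lambda kv: -kv[1]):
        for centroid in centroids:
            if identity(seq, centroid) >= threshold:
                centroids[centroid] += n
                break
        else:
            centroids[seq] = n
    return centroids

# Hypothetical 100-base sequences: a dominant variant, a rare variant that
# differs from it at one position, and an unrelated sequence.
dominant = "ACGT" * 25
rare_variant = "T" + dominant[1:]
unrelated = "TGCA" * 25
abundances = {dominant: 1000, rare_variant: 12, unrelated: 300}

otus = greedy_otus(abundances)
print("exact variants:", len(abundances), "OTUs at 97%:", len(otus))
```

The rare variant is 99% identical to the dominant one, so clustering absorbs its 12 reads into the dominant OTU, while an exact-sequence approach keeps it as a separate unit.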

FAQ 2: Why are ASVs particularly better for detecting low-abundance taxa?

Traditional OTU clustering often integrates low-frequency sequences with more abundant ones, presuming that rare sequences are potential errors [40]. ASV methods, like DADA2, use a sophisticated error model to statistically distinguish true biological sequences from sequencing errors, even at low frequencies [41] [42]. This allows for the confident identification and retention of rare taxa in the analysis, which are often key determinants in microbial community structure and function [43].

FAQ 3: How does the choice between OTUs and ASVs impact my diversity estimates?

Studies demonstrate that OTU clustering consistently leads to an underestimation of alpha diversity (within-sample diversity) because it groups genetically diverse sequences into a single unit [42]. The table below summarizes the core performance differences relevant to detecting the full spectrum of microbial diversity, including rare species.

Table 1: Impact on Ecological Diversity Metrics: OTU vs. ASV Approaches

| Ecological Metric | OTU Clustering (97%) | ASV (DADA2) | Implication for Low-Abundance Taxa |

|---|---|---|---|

| Alpha Diversity | Underestimated [42] | Higher, more accurate resolution [42] | Rare species are clustered away, reducing apparent diversity. |

| Beta Diversity | Distorted patterns [42] | More accurate community differentiation [42] | Enables precise tracking of rare taxon distribution across samples. |

| Gamma Diversity | Marked underestimation [42] | Comprehensive picture of total diversity [42] | Captures the full extent of rare species in a population. |

| Spurious Taxa | Higher risk of false positives [38] | Effectively controlled via error modeling [38] [41] | Reduces noise, allowing for confident study of genuine rare sequences. |

FAQ 4: My computational resources are limited. Can I still use ASVs?

While ASV generation is computationally more intensive than reference-based OTU clustering, mature and optimized pipelines like DADA2 are available [38] [39]. For large-scale population studies with well-characterized sample types (e.g., human gut), reference-based OTUs may still be a valid, computationally efficient choice [38]. However, for novel environments or when studying rare biospheres, the advantages of ASVs often justify the computational investment. It is recommended to evaluate the trade-offs based on your specific research goals [40].

FAQ 5: Are ASV results reproducible across different studies?

Yes, one of the key advantages of ASVs is their reproducibility. Because an ASV is an exact sequence, it is a stable unit that can be directly compared and referenced across different studies and laboratories, facilitating meta-analyses [38] [39]. OTUs, especially those generated de novo, can vary depending on the specific dataset and parameters used, making cross-study comparisons less reliable [38].

Troubleshooting Guides

Issue 1: Inconsistent Diversity Estimates and Loss of Rare Taxa

Problem: The analysis fails to detect known low-abundance species, or diversity metrics seem inconsistent across batches.

Solution:

- Switch to an ASV-based pipeline: Replace OTU clustering with a denoising algorithm like DADA2 [41]. This directly addresses the core problem by applying a unified error model to distinguish true biological sequences from noise.

- Avoid closed-reference OTU clustering: This method will discard any sequence not in its reference database, which is a major cause of losing novel and rare taxa [38]. If OTUs must be used, an open-reference approach is a better option.

- Validate with a mock community: Use a standardized microbial community (e.g., ZymoBIOMICS Standard) to benchmark your pipeline's ability to accurately detect low-abundance members [38].

Issue 2: High Contamination Background or Chimera Rates

Problem: The final output contains a high number of spurious sequences or chimeras, which complicates the interpretation of results, especially for rare variants.

Solution:

- Utilize ASV-based chimera removal: ASV pipelines like DADA2 excel at chimera detection. Because ASVs are exact sequences, chimeras can be identified as exact combinations of more prevalent "parent" ASVs in the same sample [38] [41].

- Enable the "Remove chimeras" option: In workflows like the "Detect Amplicon Sequence Variants and Assign Taxonomies" in the CLC Microbial Genomics Module, ensure the chimera removal step is toggled on [44].

- Inspect the workflow reports: Always check the output reports for the number of chimeras removed to monitor the effectiveness of this step [44].

Issue 3: Handling Low-Biomass Samples and Sequencing Errors

Problem: In low-biomass samples, sequencing errors can be misinterpreted as genuine rare taxa, leading to false positives.

Solution:

- Rely on the integrated error model of ASV tools: DADA2 uses a parametric error model to learn the specific error rates of your sequencing run. This statistical foundation is key to accurately identifying true biological sequences versus errors, even in challenging samples [41].

- Do not relax quality filtering arbitrarily: Start from the quality filtering and trimming settings recommended in the DADA2 workflow, which are designed to remove low-quality reads that contribute to errors, and adjust them only after inspecting your data's quality profile [41].

- Follow an established protocol: Adhere to a documented workflow, such as the DADA2 tutorial in Galaxy, which provides step-by-step guidance on optimal parameter settings for error modeling and sequence variant inference [41].

Experimental Protocols

Detailed Protocol: ASV Generation with DADA2 for Maximizing Low-Abundance Taxa Detection

This protocol is adapted from the Galaxy/DADA2 tutorial and is designed for processing 16S rRNA amplicon data [41].

I. Sample Preparation and Sequencing

- Target Gene: Amplify a variable region of the 16S rRNA gene (e.g., V4).

- Sequencing Platform: Illumina MiSeq or HiSeq, producing paired-end reads (e.g., 2x250 bp).

II. Data Preprocessing (In DADA2)

- Filter and Trim: Quality filter and truncate raw FASTQ files based on per-base quality scores and expected errors.

- Typical parameters: truncLen=c(240,160) (forward, reverse), maxN=0, maxEE=c(2,2). These values should be inspected and adjusted based on your data's quality profile [41].

- Dereplication: Combine identical reads into a single unique sequence to reduce computational load.

- Learn Error Rates: DADA2 learns the specific error rates from your dataset, which is critical for the subsequent denoising step. This is a core step for accurate error correction.
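The maxEE criterion used in the filtering step is based on expected errors, the sum of per-base error probabilities implied by the Phred scores. The sketch below illustrates the truncation, maxN, and maxEE logic in plain Python; it is not DADA2 code, and the helper names and example reads are hypothetical.

```python
def expected_errors(quality_string, offset=33):
    """Sum of per-base error probabilities from a Phred+33 encoded quality string."""
    return sum(10 ** (-(ord(ch) - offset) / 10) for ch in quality_string)

def passes_filter(seq, qual, trunc_len, max_ee=2.0):
    """Truncate to trunc_len, then reject reads that are too short, contain N, or exceed max_ee."""
    if len(seq) < trunc_len:
        return False  # reads shorter than the truncation length are discarded
    seq, qual = seq[:trunc_len], qual[:trunc_len]
    if "N" in seq:
        return False  # corresponds to maxN=0
    return expected_errors(qual) <= max_ee

# Hypothetical reads: 'I' encodes Q40 and '#' encodes Q2 in Phred+33.
good = ("ACGTACGTACGT", "I" * 12)
noisy = ("ACGTACGTACGT", "#" * 12)
ambiguous = ("ACGNACGTACGT", "I" * 12)

for name, (seq, qual) in {"good": good, "noisy": noisy, "ambiguous": ambiguous}.items():
    print(name, passes_filter(seq, qual, trunc_len=10))
```

Truncating before computing expected errors matters, because the low-quality tail of a read would otherwise dominate the sum.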

III. Core ASV Inference and Chimera Removal

- Denoise Samples: The DADA2 algorithm uses the learned error rates to infer true biological sequences in each sample. This is the step that resolves exact sequence variants.

- Merge Paired-end Reads: Merge the denoised forward and reverse reads to create the full-length ASV sequences.

- Construct Sequence Table: Build a table tracking the frequency of each ASV in every sample.

- Remove Chimeras: Identify and remove chimeric sequences using the removeBimeraDenovo function, which detects chimeras by aligning ASVs to more abundant "parent" sequences.
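To make the idea of a two-parent chimera concrete, the following Python sketch flags a sequence as a bimera when it equals the left part of one more abundant sequence joined to the right part of another. It is a deliberately simplified illustration with hypothetical function names, not the removeBimeraDenovo algorithm, which works on alignments and includes safeguards that this exact-match version lacks.

```python
def is_exact_bimera(candidate, parents):
    """True if candidate is a prefix of one parent joined to a suffix of another."""
    for split in range(1, len(candidate)):
        left, right = candidate[:split], candidate[split:]
        left_parents = [p for p in parents if p.startswith(left)]
        right_parents = [p for p in parents if p.endswith(right)]
        # A chimera needs two *different* parents on either side of the split.
        if any(lp != rp for lp in left_parents for rp in right_parents):
            return True
    return False


def remove_bimeras(abundances):
    """abundances: dict of sequence -> read count. Returns the non-chimeric subset."""
    kept = {}
    for seq, count in abundances.items():
        # Only strictly more abundant sequences may act as parents.
        parents = [s for s, c in abundances.items() if c > count and s != seq]
        if not is_exact_bimera(seq, parents):
            kept[seq] = count
    return kept


# Toy sequences, invented for illustration.
parent_a = "AAAAAAAAAA"
parent_b = "CCCCCCCCCC"
chimera = "AAAAACCCCC"      # left half of parent_a + right half of parent_b
rare_variant = "AAAAGAAAAA"  # a genuine low-abundance variant

table = {parent_a: 1000, parent_b: 800, chimera: 12, rare_variant: 5}
print(sorted(remove_bimeras(table).values(), reverse=True))
```

Restricting parents to more abundant sequences matters for rare taxa: a genuine low-abundance variant is kept unless it can be fully explained by two more abundant sequences.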

IV. Downstream Analysis

- Assign Taxonomy: Classify ASVs against a reference database (e.g., SILVA, Greengenes) to obtain taxonomic identities.

- Build Abundance Table: Generate a final refined abundance table for ecological analysis.

The following diagram illustrates the core bioinformatic workflow for deriving ASVs, highlighting the key steps that enhance the detection of true low-abundance sequences.

Comparative Experimental Design: OTU vs. ASV

To test empirically whether ASVs outperform OTUs for your research on low-abundance taxa, the following parallel experimental design is recommended.

Table 2: Key Experimental Comparison: OTU Clustering vs. ASV Denoising

| Experimental Component | OTU Clustering Protocol | ASV Denoising Protocol |

|---|---|---|

| Bioinformatics Tool | UPARSE or VSEARCH for clustering. | DADA2 for denoising and error correction [41]. |

| Key Parameter | Cluster sequences at 97% identity. | Use default DADA2 parameters for error learning and inference. |

| Reference Database | For closed-reference: SILVA or Greengenes. | Same databases used for taxonomy assignment post-inference. |

| Mock Community | Essential for both protocols. Use a standardized community (e.g., ZymoBIOMICS) with known low-abundance members. | |

| Primary Metric for Success | Accuracy: Measure false positive (spurious OTUs/ASVs) and false negative (missed rare species) rates against the mock community truth [38]. | |

| Secondary Metric for Success | Diversity Estimates: Compare the number of unique units (OTUs vs. ASVs) and alpha diversity indices, expecting higher, more accurate values from ASVs [42]. | |
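The primary metric in Table 2 can be computed by comparing the taxa each pipeline reports against the known composition of the mock community. The Python sketch below is one way to do this; the function name and the placeholder taxon labels are hypothetical, and the real expected list must come from the documentation of the mock community you use.

```python
def score_against_mock(expected_taxa, detected_taxa):
    """Compare detected taxa with the known mock community composition."""
    expected, detected = set(expected_taxa), set(detected_taxa)
    true_pos = expected & detected
    false_pos = detected - expected   # spurious OTUs/ASVs
    false_neg = expected - detected   # missed (often rare) members
    precision = len(true_pos) / len(detected) if detected else 0.0
    recall = len(true_pos) / len(expected) if expected else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {
        "false_positives": sorted(false_pos),
        "false_negatives": sorted(false_neg),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Placeholder labels only; substitute the real mock community members.
mock_truth = ["taxon_A", "taxon_B", "taxon_C", "taxon_D"]
pipeline_output = ["taxon_A", "taxon_B", "taxon_C", "taxon_X"]

result = score_against_mock(mock_truth, pipeline_output)
print(result["false_positives"], result["false_negatives"])
```

Running the same function on the OTU and ASV outputs from identical input reads gives directly comparable false positive and false negative counts.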

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for ASV-Based Amplicon Studies

| Item Name | Function / Application | Relevance to Low-Abundance Taxa |

|---|---|---|

| ZymoBIOMICS Microbial Community Standard | A synthetic mock community of known composition and abundance. Serves as a critical positive control for benchmarking pipeline accuracy [38]. | Validates the ability of your ASV pipeline to correctly identify and quantify low-abundance species without generating spurious sequences. |

| DADA2 (Open-Source R Package) | The core bioinformatic tool for denoising amplicon data and inferring exact Amplicon Sequence Variants (ASVs) [41]. | Its statistical error model is specifically designed to distinguish true biological sequences (including rare ones) from sequencing errors. |

| SILVA or Greengenes Database | Curated databases of high-quality rRNA gene sequences. Used for taxonomic assignment of the final ASVs. | A comprehensive database is crucial for correctly identifying the taxonomic origin of both abundant and rare sequence variants. |

| Illumina MiSeq Reagent Kit v3 | Reagents for 2x300 paired-end sequencing on the Illumina platform. Commonly used for 16S amplicon studies. | Sufficient read length and quality are prerequisites for accurate merging and denoising, which directly impacts rare taxon detection. |

| QIIME 2 or phyloseq | Bioinformatic frameworks for downstream ecological analysis of the ASV table, including diversity calculations and visualization [41]. | Enables robust statistical analysis of the community data, including the role and dynamics of low-abundance taxa. |

What are Metagenome-Assembled Genomes (MAGs) and why are they important for detecting low-abundance taxa?

Metagenome-Assembled Genomes (MAGs) are draft genomes reconstructed from complex microbial communities through metagenomic sequencing and assembly, representing organisms that have not yet been isolated or cultured [45] [46]. They constitute a substantial portion of the "microbial dark matter" in environmental and host-associated microbiomes. For research on low-abundance taxa, MAGs are crucial because they provide genomic information for the vast number of microbial species that are absent from traditional reference databases built from isolate genomes [45] [47]. This enables detection and characterization of previously uncharacterized species that may be present at low abundance but still biologically significant.

How does MetaPhlAn 4 incorporate MAGs to improve taxonomic profiling?

MetaPhlAn 4 integrates MAGs with traditional isolate genomes using the Species-Level Genome Bin (SGB) system to create a dramatically expanded reference database [45] [48] [46]. The tool groups both reference genomes and MAGs into known SGBs (kSGBs, containing isolate genomes with taxonomic labels) and unknown SGBs (uSGBs, defined solely from MAGs without species-level taxonomic assignment) [45]. From this integrated genome collection, MetaPhlAn 4 identifies unique clade-specific marker genes, allowing it to profile both characterized and uncharacterized species in metagenomic samples with significantly improved sensitivity [45] [48].

Table: MetaPhlAn 4 Database Composition Integrating MAGs

| Component | Description | Scale in MetaPhlAn 4 |

|---|---|---|

| Total Microbial Genomes | Integrated reference genomes and MAGs | ~1.01 million genomes [45] [48] |

| Reference Genomes | Isolate genomes from NCBI | ~236,600 genomes [45] [48] |

| Metagenome-Assembled Genomes (MAGs) | Genomes reconstructed from metagenomes | ~771,500 MAGs [45] [48] |

| Species-Level Genome Bins (SGBs) | Clusters of genomes at ~5% genetic distance | 26,970 SGBs [45] [48] |

| Known SGBs (kSGBs) | SGBs with representative isolate genomes | 21,978 kSGBs [45] [48] |

| Unknown SGBs (uSGBs) | SGBs defined solely from MAGs | 4,992 uSGBs [45] [48] |

| Unique Marker Genes | Clade-specific genes for profiling | ~5.1 million genes [48] |

Technical FAQs on MAGs in MetaPhlAn 4

How does the SGB framework in MetaPhlAn 4 improve detection of low-abundance taxa?

The Species-Level Genome Bin (SGB) framework groups microbial genomes based on whole-genome genetic distances, clustering them at about 5% genetic distance (roughly 95% average nucleotide identity, ANI) into groups of approximately species-level diversity [45] [46]. This framework improves low-abundance taxon detection through several mechanisms:

Expanded Genomic Diversity: By incorporating over 771,500 MAGs, the SGB framework captures genomic diversity missing from isolate-only databases [45].

Taxonomic Resolution: Genetically distinct subclades within traditionally defined species are separated into multiple SGBs (e.g., Prevotella copri is represented by four distinct SGBs) [45], allowing finer resolution of low-abundance lineages.

Taxonomic Consolidation: Incorrectly separated species are merged into single SGBs (e.g., Lawsonibacter asaccharolyticus and Clostridium phoceensis merged into SGB15154) [45], reducing false positives and improving quantification accuracy.

Marker Gene Enrichment: The expanded genomic diversity enables identification of more specific marker genes; MetaPhlAn 4 contains ~5.1 million unique clade-specific marker genes, more than previous versions [48].

What performance improvements can be expected when using MetaPhlAn 4 with MAGs compared to previous versions?

Independent evaluations demonstrate that MetaPhlAn 4 provides substantial improvements in profiling comprehensiveness and accuracy:

Table: Performance Improvements with MetaPhlAn 4's MAG-Informed Database

| Performance Metric | Improvement with MetaPhlAn 4 | Context and Validation |

|---|---|---|

| Read Explanation | ~20% more reads in human gut microbiomes [45] | Better detection of previously uncharacterized taxa |

| Read Explanation | >40% more reads in rumen microbiome [45] | Particularly significant in less-characterized environments |

| Species Detection | 336 additional mouse-associated uSGBs detected [49] | In mouse studies, beyond what assembly could recover from the same samples |

| Mouse Microbiome Profiling | Increased from 197 to 740 detected SGBs [49] | MetaPhlAn 3 vs. MetaPhlAn 4 on same mouse samples |

| Unknown Species Abundance | uSGBs account for slightly more of the mouse gut profile than kSGBs (50.88% vs. 48.94%) [49] | Demonstrates importance of MAG-derived uSGBs |

| Environmental Sample Accuracy | Highest species-level F1 score (0.84) across environments [47] | Outperformed other methods on synthetic benchmarks |

What are the specific computational requirements for running MetaPhlAn 4 with the expanded MAG database?

MetaPhlAn 4 requires:

- Python: Version 3 or newer with numpy and Biopython libraries [48]

- Alignment Tool: Bowtie2 (version 2.3 or higher) must be present in the system path [48]

- Installation: Recommended through conda via the Bioconda channel (conda install -c bioconda metaphlan) [48]

- Database: The default database (mpa_vJun23_CHOCOPhlAnSGB_202403) includes both reference genomes and MAGs [50]
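Before a first run, it can help to confirm that the prerequisites listed above are visible from the environment you intend to use. The following Python sketch checks only for presence, not for minimum versions; the function name is hypothetical, and the executable names bowtie2 and metaphlan are assumed to be the ones installed by Bioconda.

```python
import importlib.util
import shutil
import sys


def check_metaphlan_prerequisites():
    """Report which prerequisites are visible from the current environment."""
    return {
        "python3": sys.version_info.major >= 3,
        "numpy": importlib.util.find_spec("numpy") is not None,
        # Biopython is imported under the package name "Bio".
        "biopython": importlib.util.find_spec("Bio") is not None,
        # Assumed executable names; adjust if your installation differs.
        "bowtie2_on_path": shutil.which("bowtie2") is not None,
        "metaphlan_on_path": shutil.which("metaphlan") is not None,
    }


for name, ok in check_metaphlan_prerequisites().items():
    print(f"{name}: {'found' if ok else 'MISSING'}")
```

Any item reported as missing usually means the conda environment is not activated or the installation did not complete.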

Troubleshooting Guides

Issue: Inconsistent taxonomic profiles between runs or compared to tutorials

Problem Description: Users report that MetaPhlAn 4 generates different taxonomic profiles when analyzing the same data compared to tutorial examples or between different runs [50].

Potential Causes and Solutions:

Database Version Mismatch:

- Cause: Using different database versions than those used in tutorials or previous analyses

- Solution: Explicitly specify the database with the --bowtie2db parameter (database folder) and the --index parameter (database version), and keep both consistent across comparisons

- Verification: Check that you're using the latest database (mpa_vJun23_CHOCOPhlAnSGB_202403) [50]

Parameter Inconsistencies:

- Cause: Different default parameters between MetaPhlAn versions

- Solution: For compatibility with MetaPhlAn 3 databases, use the --mpa3 parameter [48]

- Documentation: Always document the exact parameters and database versions used for reproducibility

Input File Issues:

- Cause: Improperly formatted input files or incorrect input type specification

- Solution: Validate input file format and explicitly specify --input_type (fasta or fastq) [50]
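One way to keep runs comparable is to build every MetaPhlAn command from a single function that pins the database and input type, then log the exact command line. The Python sketch below only constructs and prints the command; it does not run it. The function name and file paths are hypothetical, and the flag names reflect MetaPhlAn 4.0.x as far as we are aware, so confirm them against metaphlan --help for your installed version.

```python
import shlex


def build_metaphlan_command(reads, output, input_type, db_dir, index, nproc=4):
    """Return an explicit, reproducible MetaPhlAn command as a list of arguments."""
    # Only the two input types discussed in this guide are accepted here.
    if input_type not in {"fasta", "fastq"}:
        raise ValueError(f"unsupported input type: {input_type!r}")
    return [
        "metaphlan", reads,
        "--input_type", input_type,
        "--bowtie2db", db_dir,   # folder holding the database
        "--index", index,        # database version identifier
        "--nproc", str(nproc),
        "-o", output,
    ]


cmd = build_metaphlan_command(
    reads="sample1.fastq",                       # hypothetical path
    output="sample1_profile.txt",                # hypothetical path
    input_type="fastq",
    db_dir="/path/to/metaphlan_db",              # hypothetical path
    index="mpa_vJun23_CHOCOPhlAnSGB_202403",
)
# Record this line alongside the results for reproducibility.
print(shlex.join(cmd))
```

Storing the printed command with each profile makes later database or parameter mismatches easy to spot.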

Issue: Problems with merging MetaPhlAn tables from multiple samples

Problem Description: The merge_metaphlan_tables.py script fails with "UnboundLocalError: local variable 'names' referenced before assignment" when processing profiles with more than four header rows [51].

Root Cause: The script contains conditional code that only handles input files with 1 or 4 header rows, but some MetaPhlAn outputs contain 5 header rows [51].

Solutions:

- Temporary Fix: Modify line 31 of merge_metaphlan_tables.py to read "if len(headers) >= 4:" [51]

- Alternative Approach: Use the --nproc parameter for parallel processing of multiple samples during initial profiling rather than merging individual profiles

- Long-term Solution: Check for updated versions of MetaPhlAn that address this bug
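If patching the script is not an option, profiles can also be merged with a small parser that does not depend on the number of header rows. The Python sketch below skips every line beginning with "#" and assumes, as an unverified simplification, that the data columns are clade name, NCBI taxonomy ID, and relative abundance in that order. It is not the merge_metaphlan_tables.py implementation, and the profile contents are toy values.

```python
def parse_profile(text):
    """Map clade name -> relative abundance, skipping all '#' header lines."""
    abundances = {}
    for line in text.splitlines():
        if not line.strip() or line.startswith("#"):
            continue
        fields = line.split("\t")
        # Assumed layout: clade_name, NCBI_tax_id, relative_abundance, ...
        abundances[fields[0]] = float(fields[2])
    return abundances


def merge_profiles(profiles):
    """profiles: dict of sample name -> profile text. Returns (samples, table)."""
    parsed = {sample: parse_profile(text) for sample, text in profiles.items()}
    samples = list(parsed)
    clades = sorted({clade for p in parsed.values() for clade in p})
    # Clades absent from a sample are reported as 0.0.
    table = {c: [parsed[s].get(c, 0.0) for s in samples] for c in clades}
    return samples, table


# Toy profiles: one with five header rows, one with four.
profile_a = "\n".join([
    "#toy_database_id", "#toy command line", "#toy read count", "#SampleID\tsample_a",
    "#clade_name\tNCBI_tax_id\trelative_abundance\tadditional_species",
    "k__Bacteria\t2\t100.0\t",
    "k__Bacteria|p__Firmicutes\t2|1239\t60.0\t",
])
profile_b = "\n".join([
    "#toy_database_id", "#toy command line", "#SampleID\tsample_b",
    "#clade_name\tNCBI_tax_id\trelative_abundance\tadditional_species",
    "k__Bacteria\t2\t100.0\t",
])

samples, table = merge_profiles({"sample_a": profile_a, "sample_b": profile_b})
print(samples, table)
```

Because header lines are skipped by prefix rather than counted, the same code handles outputs with four, five, or any other number of header rows.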

Issue: Poor detection of microbial taxa in low-biomass samples

Problem Description: MetaPhlAn 4 shows reduced sensitivity in samples with high host content (e.g., tissue samples with >70% host cells) [52].

Context: In metatranscriptomic samples with high host content, marker-gene based methods like MetaPhlAn 4 show reduced recall compared to k-mer based approaches [52].

Recommended Solutions:

- Parameter Adjustment:

Alternative Workflow:

- For low-microbial-biomass samples with high host content, consider using Kraken 2/Bracken with optimized confidence thresholds (e.g., --confidence 0.05 or --confidence 0.1) [52]

- For metatranscriptomic samples with >70% host content, Kraken 2/Bracken demonstrated superior recall compared to MetaPhlAn 4 [52]

Hybrid Approach:

- Use MetaPhlAn 4 for well-characterized environments and high-quality samples

- Employ k-mer based methods for challenging low-biomass samples

- Validate findings with multiple approaches when working with critical samples
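The hybrid approach can be written down as a simple routing rule. The Python sketch below chooses a profiler from an estimated host fraction and builds a Kraken 2 command with an explicit confidence threshold. The function names, the 0.70 cut-off taken from the >70% figure above, and the file paths are illustrative assumptions; how the host fraction is estimated is left to your own pipeline, and the command is only constructed, not executed.

```python
def choose_profiler(host_fraction, high_host_threshold=0.70):
    """Pick a profiling strategy from the estimated fraction of host reads."""
    if not 0.0 <= host_fraction <= 1.0:
        raise ValueError("host_fraction must be between 0 and 1")
    if host_fraction > high_host_threshold:
        return "kraken2_bracken"   # k-mer based, for high-host samples
    return "metaphlan4"            # marker-gene based, for other samples


def build_kraken2_command(db, reads_1, reads_2, report, output, confidence=0.1):
    """Return a Kraken 2 command for paired-end reads as a list of arguments."""
    return [
        "kraken2",
        "--db", db,
        "--confidence", str(confidence),
        "--paired", reads_1, reads_2,
        "--report", report,
        "--output", output,
    ]


# Hypothetical sample with 85% host reads.
profiler = choose_profiler(0.85)
print(profiler)
if profiler == "kraken2_bracken":
    print(" ".join(build_kraken2_command(
        "/path/to/kraken2_db", "s1_R1.fastq", "s1_R2.fastq",
        "s1.kreport", "s1.kraken", confidence=0.05,
    )))
```

For critical samples, running both profilers and comparing the results remains the safer option, as recommended above.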

Experimental Protocols for Validating MAG-Informed Taxonomic Profiling

Protocol: Benchmarking MetaPhlAn 4 Performance in Your Specific Environment

Purpose: To validate the improvement offered by MetaPhlAn 4's MAG-informed database for your specific research context, particularly for detecting low-abundance taxa.

Materials and Reagents:

Table: Essential Research Reagents and Computational Tools

| Item | Function/Application | Specifications/Alternatives |

|---|---|---|

| MetaPhlAn 4 Software | Core taxonomic profiling tool | Version 4.0.6 or newer [48] |

| Bowtie2 | Read alignment against marker genes | Version 2.3 or higher [48] |

| CHOCOPhlAnSGB Database | Integrated genome and MAG database | mpa_vJun23_CHOCOPhlAnSGB_202403 [50] |

| Positive Control Datasets | Method validation | Publicly available datasets (e.g., SRS014476) [50] |