Validating Metagenomic Classifiers: A Comprehensive Guide for Biomedical Researchers

Abstract

This article provides a comprehensive framework for the validation of metagenomic classifiers, essential tools for unbiased pathogen detection and microbiome analysis in clinical and pharmaceutical research. It covers foundational principles, methodological approaches, troubleshooting strategies, and comparative benchmarking, addressing critical needs for accuracy, reliability, and clinical translation. Designed for researchers, scientists, and drug development professionals, this guide synthesizes current methodologies, performance metrics, and optimization techniques to ensure robust implementation of metagenomic classification in diagnostic development and therapeutic discovery.

The Fundamentals of Metagenomic Classification: Principles and Challenges

Metagenomic sequencing has revolutionized microbiology by enabling the direct, unbiased interrogation of complex microbial communities, moving beyond culture-dependent approaches to allow more rapid species detection and the discovery of novel microorganisms [1]. The computational challenge of identifying all species present in these samples has led to the development of numerous metagenomic classifiers—software tools designed to taxonomically classify sequencing data and estimate taxonomic abundance profiles [1]. Accurate taxonomic classification is fundamental to diverse applications, from clinical diagnostics and pathogen detection in food safety to environmental surveying of microbial ecosystems [1] [2] [3]. However, the rapid development of classification tools, combined with the complexity of metagenomic data and reference databases, makes comprehensive benchmarking essential for researchers to select appropriate methods for their specific needs [1] [4].

This guide provides an objective comparison of metagenomic classifier performance based on recent benchmarking studies, detailing experimental methodologies and presenting quantitative data to inform tool selection and validation. We examine the fundamental principles underlying different classification approaches, compare their performance across metrics and sample types, and offer recommendations for their application in research settings.

Fundamental Principles of Metagenomic Classification

Classification Approaches and Terminology

Metagenomic classifiers employ distinct strategies to assign taxonomic labels to sequencing data. Taxonomic binning approaches classify individual sequence reads to reference taxa, while taxonomic profiling methods report the relative abundances of taxa within a dataset without necessarily classifying every read [1]. In practice, these terms are often used interchangeably, as binning approaches can generate profiles by summing individual read classifications [1].
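As a minimal sketch of how a binning result can be collapsed into a profile, the snippet below sums per-read labels into relative abundances. The function name, the toy reads, and the decision to exclude unclassified reads from the denominator are assumptions made for illustration, not the behavior of any particular tool.

```python
from collections import Counter


def profile_from_read_assignments(assignments):
    """Turn per-read taxon labels (binning output) into relative abundances.

    `assignments` maps read ID -> taxon name, or None for unclassified reads.
    Unclassified reads are left out of the denominator; this is a choice made
    for the sketch, not a rule taken from the text.
    """
    counts = Counter(t for t in assignments.values() if t is not None)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {taxon: n / total for taxon, n in counts.items()}


# Hypothetical toy input: three classified reads and one unclassified read.
reads = {
    "r1": "Escherichia coli",
    "r2": "Escherichia coli",
    "r3": "Listeria monocytogenes",
    "r4": None,
}
profile = profile_from_read_assignments(reads)
print(profile)
```

Note that a profile built this way reports fractions of reads, which differ from fractions of cells whenever genome sizes differ between taxa.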

These tools can be broadly categorized into three computational approaches based on their reference databases and comparison methods:

DNA-to-DNA classification: Compares sequencing reads directly to genomic databases of DNA sequences using BLASTn-like algorithms [1]. These methods typically use k-mer based approaches (short nucleotide subsequences of length k, usually ~31 nucleotides) or FM-indexing to reduce computational requirements compared to traditional BLAST, which is considered sensitive but computationally intensive for large datasets [1] [5].

DNA-to-Protein classification: Translates DNA reads into all six potential reading frames and compares them to protein sequence databases using BLASTx-like algorithms [1] [6]. While more computationally intensive due to the translation step, these methods can be more sensitive for detecting novel and highly divergent sequences because amino acid sequences evolve more slowly than nucleotide sequences [1]. A limitation is that they primarily target coding regions and may miss non-coding sequences [1].

Marker-based classification: Utilizes a curated set of gene sequences with good discriminatory power between species, such as the 16S rRNA gene for bacteria [1] [3]. These methods are computationally efficient but introduce potential bias if marker genes are not evenly distributed among microbial groups of interest [1]. They may also miss species that lack the targeted marker genes [1].

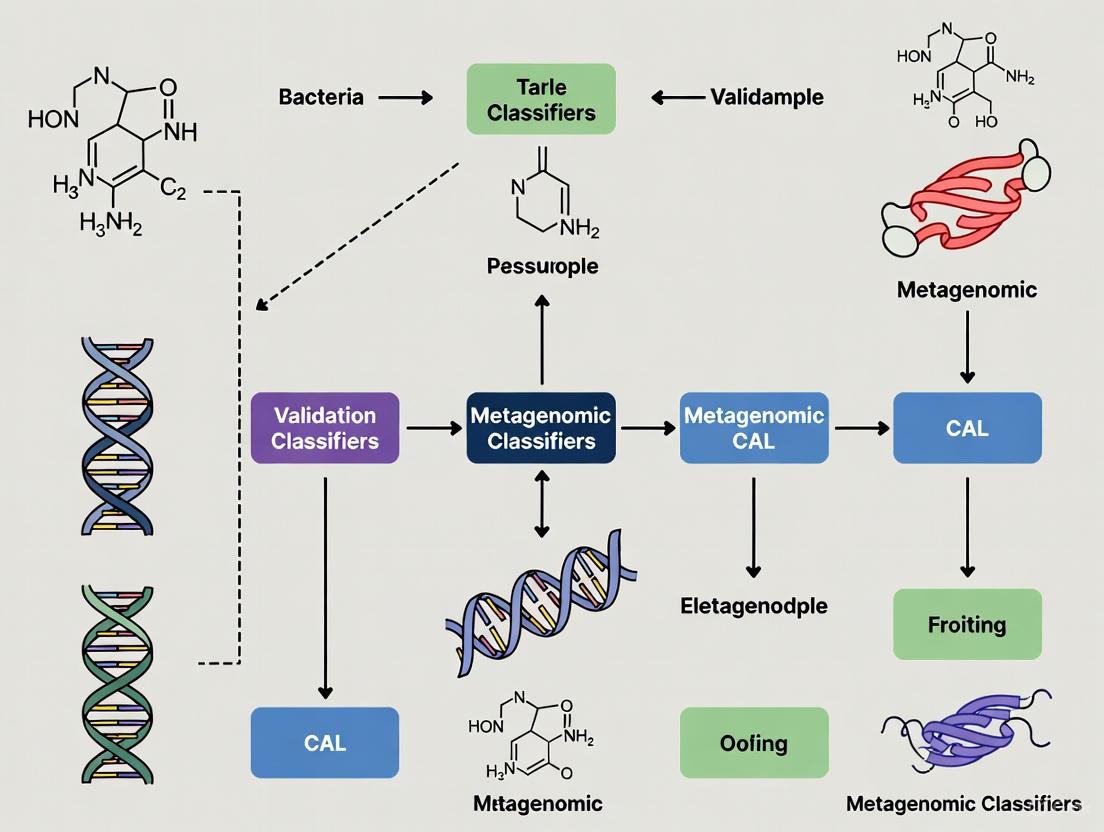

The following diagram illustrates the fundamental workflow and decision process for selecting a classification approach:

The Critical Role of Reference Databases

All metagenomic classifiers depend on pre-computed reference databases of previously sequenced microbial genetic sequences, whose size and quality present considerable computational challenges [1]. Popular databases include RefSeq (complete microbial genomes), BLAST nt and nr (nucleotide and protein sequences), SILVA (ribosomal RNA gene sequences), and GenBank [1]. The exponential growth of these databases, with BLAST nt containing over 10^12 nucleotides as of 2025, creates both opportunities and challenges [7]. While more comprehensive databases can improve classification by including more reference species, they also demand more computational resources, raise the potential for false positives, and require careful quality control to remove contaminated or mislabeled sequences [7].

Database composition acts as a significant confounder in classifier comparisons, as different tools are distributed with pre-compiled databases that may use entirely different sequence sources or versions [1] [3]. Benchmarking studies have demonstrated that database differences can substantially impact performance, emphasizing the need for comparisons using uniform databases where possible [1] [7].

Experimental Benchmarking Frameworks and Metrics

Standard Evaluation Metrics and Methodologies

Robust benchmarking of metagenomic classifiers requires standardized metrics and experimental designs. The most important performance metrics are precision (the proportion of species reported by the tool that are truly present in the sample) and recall (the proportion of species truly present in the sample that the tool reports) [1]. The F1 score (harmonic mean of precision and recall) provides a single metric balancing both concerns [4].
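The three metrics can be computed directly from the set of species a tool reports and the set known to be in the sample. The following is a small sketch at the presence/absence level; the function name and the placeholder species labels are illustrative assumptions.

```python
def precision_recall_f1(predicted, truth):
    """Species-level precision, recall and F1 from two sets of taxon names."""
    predicted, truth = set(predicted), set(truth)
    true_positives = len(predicted & truth)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical example: four species in the sample, five reported by the tool.
truth = {"A", "B", "C", "D"}
predicted = {"A", "B", "C", "X", "Y"}
precision, recall, f1 = precision_recall_f1(predicted, truth)
print(f"precision={precision:.2f} recall={recall:.2f} F1={f1:.2f}")
```

Here three of the five reported species are correct (precision 0.60) and three of the four true species are recovered (recall 0.75).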

Since researchers often filter out taxa below specific abundance thresholds, performance should be evaluated across all potential thresholds using precision-recall curves, where each point represents precision and recall scores at a specific abundance threshold [1]. The area under the precision-recall curve (AUPR) provides a comprehensive performance measure across all thresholds [4].
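A precision-recall curve over abundance thresholds can be sketched as follows. Each distinct reported abundance is used as a cutoff, and the area is accumulated step-wise (an average-precision style sum). That integration rule, the function names, and the toy profile are assumptions for illustration; published benchmarks may interpolate differently.

```python
def pr_curve(abundances, truth):
    """Return (recall, precision) points, one per abundance threshold.

    `abundances` maps taxon -> reported relative abundance.
    `truth` is the set of taxa actually present in the sample.
    """
    if not truth:
        raise ValueError("truth set must not be empty")
    points = []
    # Sweep thresholds from strict (few taxa kept) to permissive (all kept).
    for threshold in sorted(set(abundances.values()), reverse=True):
        called = {t for t, a in abundances.items() if a >= threshold}
        true_positives = len(called & truth)
        points.append((true_positives / len(truth), true_positives / len(called)))
    return points


def aupr(points):
    """Step-wise area under the curve: sum of precision * recall increment."""
    area, previous_recall = 0.0, 0.0
    for recall, precision in points:
        area += (recall - previous_recall) * precision
        previous_recall = recall
    return area


# Hypothetical profile: "X" is a false positive reported at 15% abundance.
reported = {"A": 0.50, "B": 0.30, "X": 0.15, "C": 0.05}
present = {"A", "B", "C"}
curve = pr_curve(reported, present)
print(curve)
print(round(aupr(curve), 4))
```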

Benchmarking typically employs two primary dataset types:

- Synthetic datasets: Created by in silico simulation of metagenomic reads from known genomes, providing exact ground truth but potentially missing characteristics of real sequencing data [4].

- Defined Mock Communities (DMCs): Well-defined mixtures of known organisms that are physically combined and sequenced, providing realistic data with known composition [3]. DMCs better capture the complexities of actual metagenomic experiments but may have less precise abundance control [3].

The following workflow outlines a standardized benchmarking approach for metagenomic classifiers:

Table 1: Key Research Reagents and Resources for Metagenomic Classification Benchmarking

| Resource Type | Specific Examples | Function and Application |
|---|---|---|
| Reference Databases | RefSeq, BLAST nt/nr, SILVA, GTDB | Provide reference sequences for taxonomic classification; completeness and quality significantly impact results [1] [7] |
| Mock Communities | ZymoBIOMICS Gut Microbiome Standard, ATCC Microbiome Standard | Defined mixtures of known microorganisms that provide ground truth for validation [3] [8] |
| Classification Tools | Kraken2, MetaPhlAn, Centrifuge, Kaiju, Minimap2 | Software implementations of different classification algorithms for performance comparison [9] [2] |
| Sequencing Technologies | Illumina (short-read), PacBio HiFi, Oxford Nanopore (long-read) | Platforms generating metagenomic data with different read lengths and error profiles [9] [3] |
| Evaluation Frameworks | CAMI (Critical Assessment of Metagenome Interpretation), Taxometer | Standardized approaches and tools for classifier assessment and improvement [4] [8] |

Comparative Performance Analysis of Metagenomic Classifiers

Performance Across Short-Read Sequencing Platforms

Multiple benchmarking studies have evaluated classifier performance on short-read sequencing data across various sample types. In pathogen detection scenarios using simulated food metagenomes, Kraken2/Bracken achieved the highest classification accuracy with consistently superior F1-scores across all tested food matrices, while Centrifuge exhibited the weakest performance [2]. MetaPhlAn4 also performed well, particularly for specific pathogens in certain food types, but demonstrated limitations in detecting pathogens at the lowest abundance level (0.01%) [2].

For environmental applications such as wastewater treatment microbial communities, a comparative study found Kaiju emerged as the most accurate classifier at both genus and species levels, followed by RiboFrame and kMetaShot [6]. The study highlighted substantial misclassification risks across all classifiers and databases, which could significantly hinder technological advancements by introducing errors for key microbial clades [6].

Table 2: Performance Comparison of Short-Read Metagenomic Classifiers

| Classifier | Classification Approach | Strengths | Limitations | Optimal Use Cases |
|---|---|---|---|---|
| Kraken2/Bracken | k-mer based (DNA-to-DNA) | High F1-scores in pathogen detection; broad detection range down to 0.01% abundance; fast classification [2] | Confidence threshold significantly impacts classification rates; higher false positives in complex samples [4] [6] | Clinical pathogen detection; general microbial profiling [2] |
| MetaPhlAn4 | Marker-based | High precision; computationally efficient; good for specific pathogens in certain matrices [2] | Limited detection sensitivity at the lowest tested abundance (0.01%); depends on marker gene representation [2] | Human microbiome studies; targeted taxonomic profiling [3] |
| Kaiju | DNA-to-Protein | High accuracy at genus and species levels; captures true abundance ratios well [6] | Computationally intensive; high memory requirements (~200GB RAM) [6] | Environmental samples; diverse microbial communities [6] |
| Centrifuge | FM-index based (DNA-to-DNA) | Comprehensive database coverage | Higher false positive rates; demonstrated weaker performance in multiple studies [2] [4] | Applications requiring broad taxonomic coverage |

Performance on Long-Read Sequencing Technologies

With the increasing popularity of long-read sequencing technologies (PacBio and Oxford Nanopore), comprehensive benchmarking has become essential. A 2024 study evaluating 13 classification pipelines on long-read data revealed that general-purpose mappers like Minimap2 and Ram achieved similar or better accuracy on most testing metrics compared to specialized classification tools, though they were up to ten times slower than the fastest k-mer-based tools [9].

The study categorized tools into four groups: k-mer-based (Kraken2, Bracken, Centrifuge, CLARK, CLARK-S), mapping-based tools tailored for long reads (MetaMaps, MEGAN-LR, deSAMBA), general-purpose long-read mappers (Minimap2, Ram), and protein database-based tools (Kaiju, MEGAN-LR with protein database) [9]. Notably, protein-based tools generally underperformed compared to nucleotide-based approaches on long-read data [9].

Table 3: Performance of Long-Read Metagenomic Classifiers Across Multiple Metrics

| Classifier | Classification Approach | Read-Level Accuracy | Abundance Estimation | Computational Speed | Memory Requirements |
|---|---|---|---|---|---|
| Minimap2 | General-purpose mapper | Highest accuracy on most datasets [9] | Accurate with alignment mode | Slow (up to 10x slower than k-mer-based) [9] | Moderate [9] |
| Kraken2 | k-mer based | High but lower than mappers [9] | Good with Bracken post-processing | Fast | High (~200GB RAM) [6] |
| MetaMaps | Mapping-based (long-read tailored) | High, similar to general mappers [9] | Accurate | Medium | Moderate [9] |
| CLARK-S | k-mer based | Lower than mappers but minimal false positives [9] | Good specificity | Fast | Moderate [9] |
| Kaiju | DNA-to-Protein | Significantly lower on long-read data [9] | Less accurate than nucleotide-based | Medium | High [6] |

Impact of Database Selection and Completeness

Database composition significantly influences classifier performance. A 2025 study addressing the dynamic nature of reference data highlighted how database quality control dramatically affects results [7]. For instance, using decontaminated databases reduced spurious Plasmodium classifications in published metagenomic data, demonstrating how database quality impacts research conclusions [7].

Temporal comparisons revealed inconsistencies in taxonomic assignments stemming from asynchronous updates between public sequence and taxonomy databases, particularly affecting taxa like Listeria monocytogenes and Naegleria fowleri [7]. This emphasizes the importance of treating reference databases as dynamic entities requiring ongoing quality control and validation [7].

Classifier performance also depends on database completeness relative to sample composition. Tools struggle when samples contain species not represented in databases. Some algorithms (like Minimap2 and MEGAN-N, that is, MEGAN-LR run with a nucleotide database) assign these reads to phylogenetically similar species present in the database, while others (like CLARK-S and Ram) tend to leave them unassigned [9].

Advanced Strategies for Enhanced Classification Accuracy

Ensemble Approaches and Filtering Strategies

Given that no single classifier excels across all scenarios, researchers have developed strategies to combine tools and improve overall accuracy. Strikingly, the number of species identified by different tools can differ by over three orders of magnitude on the same datasets [4]. Various strategies can ameliorate taxonomic misclassification, including abundance filtering, ensemble approaches, and tool intersection [4].

Pairing tools with different classification strategies (k-mer, alignment, marker) can combine their respective advantages [4]. For k-mer-based tools, applying abundance thresholds significantly increases precision and F1 scores, bringing them to a similar range as marker-based tools, which tend to be more precise initially [4].
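The two simplest strategies, abundance filtering and tool intersection, can be sketched as below. The function names, the 0.1% cutoff, and the two toy profiles are hypothetical; appropriate thresholds depend on the tool, database, and sample type.

```python
from collections import Counter


def filter_by_abundance(profile, min_abundance):
    """Drop taxa reported below a relative-abundance cutoff."""
    return {t: a for t, a in profile.items() if a >= min_abundance}


def consensus_taxa(profiles, min_tools):
    """Taxa reported by at least `min_tools` of the supplied profiles.

    min_tools == len(profiles) gives a strict intersection,
    min_tools == 1 gives the union.
    """
    votes = Counter(taxon for profile in profiles for taxon in profile)
    return {taxon for taxon, n in votes.items() if n >= min_tools}


# Hypothetical outputs of a k-mer-based tool and a marker-based tool.
kmer_profile = {"A": 0.6, "B": 0.3, "X": 0.0004, "Y": 0.0996}
marker_profile = {"A": 0.7, "B": 0.3}

filtered = filter_by_abundance(kmer_profile, min_abundance=0.001)
print(sorted(filtered))                                        # "X" is removed
print(sorted(consensus_taxa([filtered, marker_profile], 2)))   # intersection
print(sorted(consensus_taxa([filtered, marker_profile], 1)))   # union
```

Intersection trades recall for precision: a true taxon missed by either tool is lost, so the vote threshold is itself a parameter worth evaluating on mock communities.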

Innovative Methods Leveraging Multiple Data Features

Novel approaches that integrate multiple data features show promise for enhancing classification accuracy. Taxometer, a neural network-based method, improves taxonomic classifications of metagenomic contigs using both tetra-nucleotide frequencies (TNFs) and abundance profiles across samples [8]. When applied to MMseqs2 annotations, Taxometer increased the average share of correct species-level contig annotations from 66.6% to 86.2% on CAMI2 human microbiome datasets [8].

The integration of abundance information proved particularly valuable, with the combined model (TNFs + abundances) producing 18-35% more correct species labels than models using only TNFs or abundances separately [8]. This approach demonstrates the potential of leveraging multiple data features beyond sequence similarity alone.
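To make the two feature types concrete, the sketch below computes a 256-dimensional tetranucleotide frequency vector for a contig and concatenates it with per-sample abundances. This is not Taxometer's implementation; the function names and the plain concatenation are assumptions, and real tools often merge reverse-complement k-mers and normalize features differently.

```python
from itertools import product

# All 256 possible 4-mers in a fixed order, with a lookup from 4-mer to index.
TETRAMERS = ["".join(p) for p in product("ACGT", repeat=4)]
TETRAMER_INDEX = {kmer: i for i, kmer in enumerate(TETRAMERS)}


def tetranucleotide_frequencies(contig):
    """Relative frequency of each 4-mer; windows with ambiguous bases are skipped."""
    counts = [0] * len(TETRAMERS)
    sequence = contig.upper()
    for i in range(len(sequence) - 3):
        index = TETRAMER_INDEX.get(sequence[i:i + 4])
        if index is not None:
            counts[index] += 1
    total = sum(counts)
    return [c / total for c in counts] if total else [0.0] * len(TETRAMERS)


def contig_features(contig, abundances):
    """Concatenate TNFs with the contig's abundance in each sample."""
    return tetranucleotide_frequencies(contig) + list(abundances)


tnf = tetranucleotide_frequencies("ACGTACGT")
features = contig_features("ACGTACGT", [12.0, 0.5, 3.1])  # hypothetical depths
print(len(tnf), len(features))
```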

Alternative approaches include using data compressors as features for taxonomic classification, with one study achieving 95% accuracy by combining features from multiple compressors, though it found no significant correlation between compression performance and classification accuracy [10].

Recommendations and Future Directions

Evidence-Based Tool Selection Guidelines

Based on comprehensive benchmarking studies, tool selection should be guided by specific research requirements:

- For clinical pathogen detection: Kraken2/Bracken provides the broadest detection range, correctly identifying pathogen sequences down to 0.01% abundance [2].

- For long-read data analysis: k-mer-based tools like Kraken2 offer a good balance of speed and accuracy, while general-purpose mappers like Minimap2 provide the highest accuracy when computational resources permit [9].

- For environmental samples with unknown species: Tools that use database-independent features (like Taxometer) or approaches that handle novel taxa gracefully are preferable [8].

- When computational resources are limited: Marker-based methods like MetaPhlAn4 offer good precision with reduced computational requirements [2] [3].

Critical Research Gaps and Development Needs

Despite extensive benchmarking, important challenges remain. With the exception of CLARK-S, most tools are prone to reporting organisms that are not present in the dataset [9]. Performance degrades when samples contain high proportions of host genetic material or when database representation is incomplete [9]. Discrepancies among tools when applied to real datasets highlight the need for continuous improvement [9].

Future development should focus on:

- Improved handling of novel species not represented in reference databases

- Better integration of multiple data features (sequence similarity, abundance, TNFs)

- Enhanced database quality control and versioning practices

- Specialized algorithms for challenging scenarios like high host DNA contamination

Regular updates and careful curation of databases are as important as algorithmic improvements for ensuring classification effectiveness [9] [7].

As the field advances, the combination of diverse categories of tools and databases will likely be necessary to analyze complex samples, with ensemble approaches providing more robust taxonomic profiling across diverse research applications [4].

Metagenomic analysis has revolutionized microbial ecology by enabling the comprehensive study of microbial communities directly from environmental samples, without the need for cultivation. The field relies on three principal algorithmic approaches for taxonomic profiling: k-mer-based, alignment-based, and marker-gene methods. Each approach offers distinct trade-offs in computational efficiency, sensitivity, and resolution, making them suitable for different applications ranging from clinical diagnostics to ancient DNA studies. As advancements in sequencing technologies, particularly long-read platforms, generate increasingly complex datasets, the selection of an appropriate classification strategy becomes paramount for accurate biological interpretation. This guide provides a comparative analysis of these core methodologies, supported by recent benchmarking studies and experimental data, to inform researchers and drug development professionals in their selection of metagenomic classifiers.

Core Algorithmic Principles

k-mer-Based Methods

k-mer-based methods operate by breaking down sequencing reads and reference databases into short subsequences of length k (k-mers). Taxonomic assignment is achieved by comparing the k-mer content of query reads against a pre-computed k-mer database, often utilizing efficient data structures like hash tables for rapid exact matching.

- Mechanism: Tools like Kraken2 and its abundance estimation component Bracken map k-mers to the lowest common ancestor (LCA) of all genomes containing that k-mer. This strategy enables very fast classification against extensive reference databases [11] [12]. Recent developments, such as SKA (Split K-mer Analysis), optimize this further for tracking bacterial pathogen transmission by focusing on split k-mers, enhancing speed and specificity [11].

- Strengths: The primary advantage is computational speed and efficiency, as k-mer matching avoids the computational overhead of full-sequence alignment. This makes k-mer-based tools particularly suitable for analyzing large-scale metagenomic datasets [11] [2].

- Limitations: Accuracy can be affected by genomic repeats and conserved regions, where the same k-mer may appear in multiple taxa, potentially leading to ambiguous assignments. Database completeness is also crucial, as the absence of a genome can lead to false negatives [11].
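The k-mer-to-LCA idea described above can be illustrated with a toy index. Everything here is a simplification under stated assumptions: the four-node taxonomy, the short made-up genomes, k = 4, and the majority vote over k-mer hits are all hypothetical. Production tools use much longer k-mers, compact data structures, and more elaborate scoring over the taxonomy tree.

```python
from collections import Counter


def lowest_common_ancestor(a, b, parent):
    """LCA of two taxa, given a child -> parent map whose root maps to None."""
    ancestors = set()
    while a is not None:
        ancestors.add(a)
        a = parent.get(a)
    while b not in ancestors:
        b = parent[b]
    return b


def build_index(genomes, parent, k):
    """Map every k-mer to the LCA of all taxa whose genome contains it."""
    index = {}
    for taxon, sequence in genomes.items():
        for i in range(len(sequence) - k + 1):
            kmer = sequence[i:i + k]
            if kmer in index:
                index[kmer] = lowest_common_ancestor(index[kmer], taxon, parent)
            else:
                index[kmer] = taxon
    return index


def classify(read, index, k):
    """Assign the taxon hit by the most k-mers; None if no k-mer matches."""
    hits = Counter(
        index[read[i:i + k]]
        for i in range(len(read) - k + 1)
        if read[i:i + k] in index
    )
    return hits.most_common(1)[0][0] if hits else None


# Hypothetical taxonomy and reference genomes.
parent = {"root": None, "genus": "root", "sp1": "genus", "sp2": "genus"}
genomes = {"sp1": "AAAACCCCGGGG", "sp2": "AAAACTTTTGGA"}
index = build_index(genomes, parent, k=4)

print(classify("ACCCCGG", index, 4))  # k-mers unique to sp1
print(classify("AAAAC", index, 4))    # k-mers shared by both species
print(classify("GTGTGT", index, 4))   # no k-mer in the index
```

The second read shows the ambiguity noted under Limitations: k-mers shared between species can only be resolved to their common ancestor, so the read is assigned at genus level.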

Alignment-Based Methods

Alignment-based methods perform detailed, base-by-base comparisons between sequencing reads and reference sequences. This approach can leverage nucleotide-level alignment (DNA-to-DNA) or translated search (DNA-to-protein), where reads are translated in six frames before being aligned to a protein database.

- Mechanism: Traditional aligners like BWA (Burrows-Wheeler Aligner) are employed by tools such as NABAS+, which uses strict RefSeq curation to ensure one high-quality genome per species for precise identification [13]. For functional analysis, BLASTX serves as a sensitive but slow gold standard, while DIAMOND offers a faster alternative for translated searches [12].

- Strengths: Alignment-based methods generally provide high accuracy and sensitivity, especially for detecting divergent sequences or those with homology at the protein level. They are less prone to false positives caused by short, spurious matches, making them suitable for clinical applications where precision is critical [13].

- Limitations: The main drawback is high computational demand, requiring significant processing time and memory resources, which can be prohibitive for very large datasets [12] [13].
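The six-frame translation step that precedes a translated search can be sketched in a few lines. This version uses the standard genetic code, marks codons containing ambiguous bases as "X", and drops trailing partial codons. It only illustrates the translation itself, not the alignment performed by tools such as BLASTX or DIAMOND.

```python
# Standard genetic code, codons enumerated in TCAG order.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    a + b + c: AMINO_ACIDS[16 * i + 4 * j + k]
    for i, a in enumerate(BASES)
    for j, b in enumerate(BASES)
    for k, c in enumerate(BASES)
}
COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(sequence):
    return sequence.translate(COMPLEMENT)[::-1]


def translate(sequence):
    """Translate complete codons; codons with ambiguous bases become 'X'."""
    return "".join(
        CODON_TABLE.get(sequence[i:i + 3], "X")
        for i in range(0, len(sequence) - 2, 3)
    )


def six_frame_translations(read):
    """Three forward frames followed by three reverse-complement frames."""
    read = read.upper()
    strands = (read, reverse_complement(read))
    return [translate(strand[offset:]) for strand in strands for offset in range(3)]


frames = six_frame_translations("ATGGCCTAA")
print(frames)
```

Each read therefore produces six protein queries, which is one reason translated search costs more than nucleotide search.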

Marker-Gene Methods

Marker-gene methods identify and quantify taxa based on the presence of marker genes. Depending on the tool, these are either genes unique to a particular clade or universal, single-copy housekeeping genes that are phylogenetically informative.

- Mechanism: Tools like MetaPhlAn4 use a predefined set of marker genes unique to specific taxonomic clades. By detecting these markers in metagenomic samples, the tool can infer taxonomic composition and relative abundances without the need for a full-genome database [2] [14].

- Strengths: This approach offers high taxonomic specificity and is computationally efficient due to the reduced search space. It is highly robust against the presence of closely related species and horizontal gene transfer events, as it relies on conserved, lineage-defining genes [14].

- Limitations: The reliance on marker genes limits its resolution for organisms lacking established markers or for detecting strains with atypical genomes. Its performance is also constrained by the depth of marker gene databases and may miss taxa not represented therein [2].

The following diagram illustrates the foundational workflows of these three core algorithmic approaches.

Figure 1: Workflow comparison of the three core algorithmic approaches for metagenomic classification.

Performance Benchmarking and Experimental Data

Performance in Foodborne Pathogen Detection

A comprehensive benchmarking study evaluated four metagenomic classifiers for detecting foodborne pathogens in simulated food metagenomes. The tools were tested against defined relative abundance levels (0%, 0.01%, 0.1%, 1%, and 30%) of Campylobacter jejuni, Cronobacter sakazakii, and Listeria monocytogenes within complex food matrices.

Table 1: Performance of Metagenomic Classifiers in Pathogen Detection

| Tool | Algorithm Type | Highest F1-Score | Limit of Detection | Key Strength |
|---|---|---|---|---|
| Kraken2/Bracken | k-mer-based | Consistently Highest | 0.01% | Broadest detection range across all food matrices |
| Kraken2 | k-mer-based | High | 0.01% | Excellent sensitivity for low-abundance pathogens |
| MetaPhlAn4 | Marker-gene | Moderate | 0.1% | Superior for C. sakazakii in dried food |
| Centrifuge | FM-index-based | Weakest | >0.01% | Lower overall accuracy in this application |

The study concluded that Kraken2/Bracken was the most effective tool for pathogen detection in food safety applications, achieving the highest F1-scores across all tested food metagenomes and correctly identifying pathogens down to the 0.01% abundance level. MetaPhlAn4 served as a valuable alternative for certain pathogen-matrix combinations but was limited in detecting the lowest abundance level (0.01%) [2].

Performance on Ancient vs. Modern Metagenomic Data

The performance of metagenomic classifiers varies significantly between modern and ancient DNA (aDNA) samples due to characteristic aDNA damage patterns, including deamination (C→T/G→A misincorporations), fragmentation, and contamination with modern DNA. A benchmarking study on simulated ancient dental calculus metagenomes assessed classifiers across a spectrum of DNA degradation.

Table 2: Classifier Performance on Ancient vs. Modern Metagenomes

| Tool | Algorithm Type | Performance on Modern DNA | Performance on Ancient DNA | Key Finding |
|---|---|---|---|---|
| Kraken2/Bracken | k-mer-based | Excellent | Good but affected by damage | Complementary strengths with marker methods |
| MetaPhlAn4 | Marker-gene | Excellent | More robust to fragmentation | Maintains better precision with ancient DNA |
| MALT/HOPS | Alignment-based | Good | Specialized for aDNA damage | High memory requirements (>1 TB RAM) |
| NABAS+ | Alignment-based | High accuracy | Not specifically tested | Superior false positive reduction in deep-sequenced samples |

The study revealed that contamination with modern DNA has the most pronounced negative effect on classifier performance, more significant than deamination or fragmentation. It also found that k-mer-based (e.g., Kraken2/Bracken) and marker-gene (e.g., MetaPhlAn4) methods exhibit complementary strengths for ancient metagenome profiling. While k-mer-based methods showed high sensitivity, marker-gene methods demonstrated greater robustness to damage-induced errors, suggesting that a combined approach may yield optimal results [14].

Functional Profiling and Protein Mapping

Functional analysis of metagenomes involves characterizing the protein-coding potential and metabolic pathways within a microbial community. Traditional tools like BLASTX and DIAMOND perform translated searches but struggle with "multi-mapping," where a single read aligns to multiple homologous proteins from different taxa, complicating downstream quantification [12].

The novel tool kMermaid addresses this challenge by using a k-mer-based approach to map reads directly to taxa-agnostic clusters of homologous proteins. This method resolves ambiguity, as over 93% of reads can be uniquely mapped to a single protein cluster compared to only 7% when mapped to individual proteins using BLASTX or DIAMOND. kMermaid combines the sensitivity of alignment-based protein mapping with the computational efficiency of k-mer methods, enabling fast, unambiguous functional classification even on standard computers [12].

Experimental Protocols and Methodologies

Benchmarking Protocol for Pathogen Detection

The food safety benchmarking study [2] employed the following rigorous methodology:

- Sample Simulation: Metagenomes for three food products (chicken meat, dried food, and milk) were simulated, each spiked with specific pathogens (Campylobacter jejuni, Cronobacter sakazakii, and Listeria monocytogenes) at defined relative abundance levels (0%, 0.01%, 0.1%, 1%, and 30%).

- Tool Execution: Four tools—Kraken2, Kraken2/Bracken, MetaPhlAn4, and Centrifuge—were run on the simulated datasets using their standard parameters and recommended databases.

- Performance Metrics: The primary evaluation metric was the F1-score (the harmonic mean of precision and recall), providing a balanced measure of each tool's accuracy in predicting pathogen presence and abundance.
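For a sense of scale, the spike-in levels above can be converted into read counts. The sketch assumes a hypothetical sequencing depth of one million reads per simulated sample; the study's actual depth is not stated here, and the function name is illustrative.

```python
def spike_in_read_counts(total_reads, pathogen_fraction):
    """Split a read budget into (pathogen reads, background reads)."""
    pathogen_reads = round(total_reads * pathogen_fraction)
    return pathogen_reads, total_reads - pathogen_reads


# Relative abundance levels from the protocol: 0%, 0.01%, 0.1%, 1% and 30%.
LEVELS = [0.0, 0.0001, 0.001, 0.01, 0.30]
TOTAL_READS = 1_000_000  # hypothetical depth, chosen only for illustration

for level in LEVELS:
    pathogen, background = spike_in_read_counts(TOTAL_READS, level)
    print(f"{level:.2%}: {pathogen} pathogen reads, {background} background reads")
```

At this assumed depth the 0.01% level corresponds to only 100 pathogen reads, which helps explain why it is the level that separates the tools. The 0% level acts as a negative control: any pathogen call there is a false positive.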

Protocol for Assessing Ancient DNA Performance

The benchmarking of ancient metagenomic classifiers [14] involved:

- Data Simulation: Using Gargammel, the researchers generated simulated human dental calculus metagenomes with progressively increasing levels of DNA damage to create a spectrum from modern (no damage) to ancient (high damage) profiles. Damage models included:

  - Deamination: Introduction of C→T and G→A misincorporation patterns, particularly at fragment ends.

  - Fragmentation: Generation of shorter read lengths to mimic post-mortem degradation.

  - Contamination: Introduction of modern human and environmental microbial DNA sequences.

- Classifier Evaluation: A range of DNA-to-DNA (e.g., Kraken2), DNA-to-protein, and DNA-to-marker (e.g., MetaPhlAn4) classifiers were executed on the damaged datasets.

- Holistic Assessment: Performance was measured using F1-scores, which account for both misclassifications and unclassifiable reads (false negatives), providing a comprehensive view of each tool's efficacy on degraded material.
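The deamination pattern described in the protocol can be mimicked with a toy model in which C→T substitutions are most likely at the 5' end and G→A substitutions at the 3' end, with probability decaying geometrically towards the fragment interior. This is not Gargammel's damage model; the function name, the geometric decay, and the parameter values are assumptions for illustration.

```python
import random


def simulate_deamination(fragment, end_rate, decay, rng):
    """Apply position-dependent C->T (5' end) and G->A (3' end) substitutions.

    The substitution probability is end_rate * decay ** distance, where
    distance is counted from the 5' end for C and from the 3' end for G.
    """
    length = len(fragment)
    damaged = []
    for position, base in enumerate(fragment):
        if base == "C" and rng.random() < end_rate * decay ** position:
            damaged.append("T")
        elif base == "G" and rng.random() < end_rate * decay ** (length - 1 - position):
            damaged.append("A")
        else:
            damaged.append(base)
    return "".join(damaged)


rng = random.Random(42)  # fixed seed so the sketch is reproducible
fragment = "CCGATTACAGGCTTACCGG"
print(fragment)
print(simulate_deamination(fragment, end_rate=0.3, decay=0.6, rng=rng))
```

Because the substitutions concentrate at fragment ends, exact-match k-mers overlapping those ends are the ones most likely to be lost on damaged reads.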

Table 3: Key Computational Tools and Databases for Metagenomic Analysis

| Resource Name | Type | Primary Function | Application Context |
|---|---|---|---|
| Kraken2/Bracken | Software | k-mer-based taxonomic profiling & abundance estimation | Broad pathogen detection; general community profiling [2] |
| MetaPhlAn4 | Software | Marker-gene-based taxonomic profiling | Efficient and specific profiling; ancient DNA studies [2] [14] |
| kMermaid | Software | k-mer-based functional read assignment to protein clusters | Resolving multi-mapping in functional analysis [12] |
| NABAS+ | Software | Alignment-based taxonomic profiling (uses BWA) | Clinical diagnosis requiring high precision [13] |
| Gargammel | Software | Simulation of ancient metagenomes with damage patterns | Benchmarking classifier performance on aDNA [14] |
| RefSeq | Database | Curated collection of reference genomes & proteins | Reference database for alignment and k-mer-based tools [13] |
| Custom Protein Cluster Database | Database | kMermaid's model of homologous protein groups | Enables unique functional read assignment [12] |

The comparative analysis of k-mer-based, alignment-based, and marker-gene methods reveals a landscape where no single algorithmic approach universally outperforms the others. k-mer-based methods like Kraken2/Bracken offer an optimal balance of speed and sensitivity, making them ideal for large-scale screening and detecting low-abundance pathogens. Alignment-based methods like NABAS+ provide superior accuracy and reduced false positives, which is critical for clinical diagnostics. Marker-gene methods like MetaPhlAn4 deliver high taxonomic specificity and robustness in challenging contexts like ancient DNA analysis. The emerging trend involves leveraging the complementary strengths of these approaches, such as using k-mer-based tools for initial screening followed by alignment-based validation for critical findings, or employing hybrid strategies to overcome the limitations of individual methods. Furthermore, the development of specialized tools like kMermaid for functional profiling indicates a maturation of the field, addressing more nuanced analytical challenges beyond taxonomic assignment. The choice of a metagenomic classifier must therefore be guided by the specific research question, the nature of the sample, and the available computational resources.

Metagenomic classification has become a cornerstone of modern microbiome research, enabling scientists to decipher the complex composition of microbial communities from diverse environments, including the human body, wastewater treatment systems, and agricultural ecosystems. The accuracy of this process is fundamentally dependent on the reference databases used to assign taxonomic labels to sequence data. Despite the critical importance of these databases, their composition, inherent biases, and limitations significantly impact classification outcomes and can potentially lead to erroneous biological conclusions. This guide provides an objective comparison of how database choice affects the performance of popular metagenomic classification tools, presenting supporting experimental data from recent benchmarking studies. Understanding these factors is essential for researchers, scientists, and drug development professionals who rely on metagenomic analysis for biomarker discovery, pathogen detection, and therapeutic development.

Database Composition and Classification Performance

The comprehensiveness and specificity of reference databases directly influence classification accuracy. Studies consistently demonstrate that databases tailored to specific environments dramatically improve classification rates and accuracy compared to general-purpose databases.

Impact of Database Choice on Classification Metrics

Table 1: Classification Performance Across Different Reference Databases

| Database | Composition | Classification Rate | Accuracy | Key Limitations |
|---|---|---|---|---|
| RefSeq | General-purpose, public database | 50.28% | Variable; lower for novel microbes | Biased toward well-studied species; poor for understudied environments [15] |
| Hungate | Rumen-specific cultured genomes | 99.95% | High for known rumen microbes | Limited to cultured organisms; misses uncultured diversity [15] |
| RUG (Rumen Uncultured Genomes) | Metagenome-assembled genomes from rumen | 45.66% | High when MAGs have accurate taxonomic labels | Dependent on quality of MAG taxonomic assignment [15] |
| RefHun | RefSeq + Hungate genomes | ~100% | Improved over RefSeq alone | Still contains RefSeq biases for non-rumen taxa [15] |
| RefRUG | RefSeq + RUG MAGs | 70.09% | Substantially improved for novel microbes | Dependent on MAG quality and taxonomic labeling [15] |
| SILVA | Ribosomal RNA gene database | <2% (with Kraken2) | Variable | Limited to ribosomal genes; reduced classification rate [6] |

Experimental Evidence of Database Limitations

Research on the rumen microbiome, an understudied environment with many novel microbes, clearly demonstrates how database choice affects classification. When a simulated metagenomic dataset derived from cultured rumen microbial genomes (Hungate collection) was classified using Kraken2 with different databases, RefSeq alone classified only 50.28% of reads, despite 119 of the 460 Hungate genomes being present in RefSeq at the time of analysis [15]. This indicates significant gaps in even comprehensive general databases for specialized environments.

The addition of relevant genomes to reference databases substantially improves classification. Adding rumen uncultured genomes (MAGs) to RefSeq increased the classification rate to 70.09%, approximately 1.4 times the rate achieved with RefSeq alone [15]. This highlights how environment-specific genomic resources can mitigate database limitations.
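The roughly 1.4-fold figure quoted above follows directly from the two classification rates reported in [15]. A quick check, using only those two numbers, also shows the absolute gain in percentage points, which is often the more intuitive quantity:

```python
# Classification rates (% of reads classified), as reported above from [15].
refseq_rate = 50.28   # RefSeq alone
refrug_rate = 70.09   # RefSeq + RUG MAGs

fold_change = refrug_rate / refseq_rate   # ratio of the two rates
point_gain = refrug_rate - refseq_rate    # gain in percentage points

print(f"fold change: {fold_change:.2f}x")
print(f"gain: {point_gain:.2f} percentage points")
```

Reporting both values avoids ambiguity, since a fold change and a percentage-point gain can give quite different impressions of the same improvement.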

Benchmarking Metagenomic Classifiers

Multiple studies have evaluated the performance of metagenomic classification tools using different databases and approaches. The optimal classifier often depends on the specific application, required taxonomic resolution, and computational resources.

Performance Comparison of Classification Tools

Table 2: Classifier Performance Across Experimental Contexts

| Classifier | Classification Approach | Recommended Context | Strengths | Limitations |
|---|---|---|---|---|
| Kaiju | Amino acid alignment (six-frame translation) | General metagenomics; accurate species-level classification [6] | Highest accuracy at genus and species levels; captures abundance ratios well [6] | High RAM requirements (>200 GB) [6] |
| Kraken2/Bracken | k-mer matching | Broad pathogen detection; low-abundance taxa [2] | Detects pathogens down to 0.01% abundance; high F1-scores [2] | Strong dependency on confidence thresholds [6] |
| RiboFrame | 16S rRNA extraction + k-mer classification | Targeted ribosomal analysis | Low misclassification rates; minimal RAM (20 GB) [6] | Limited to ribosomal genes; underestimates complexity [6] |
| kMetaShot | k-mer-based MAG classification | Metagenome-assembled genome analysis | No erroneous genus-level classifications on MAGs [6] | High computational demand (24 GB per thread) [6] |
| MetaPhlAn4 | Marker-based profiling | Well-characterized microbiomes | Species-level resolution for known organisms [2] | Limited detection at 0.01% abundance [2] |
| Centrifuge | Alignment-based classification | General metagenomics | Efficient memory use [2] | Weakest performance in pathogen detection benchmarks [2] |

Experimental Protocols for Benchmarking

To evaluate classifiers for wastewater treatment microbial communities, researchers created an in silico mock community representing key taxa in activated sludge and aerobic granular sludge systems [6]. This controlled approach enabled precise performance assessment:

Mock Community Design: The mock community included simplified yet representative microbial populations from wastewater treatment systems, including Candidatus Accumulibacter, Candidatus Competibacter, Tetrasphaera, Zoogloea, Pseudomonas, Thauera, and Flavobacterium [6].

Sequencing Simulation: Generated 50 million paired-end reads (150 bp) simulating Illumina short-read sequencing [6].

Quality Control: Processed reads with BBDuk, retaining 92.6% (46,315,875 reads) for analysis [6].

Classification Parameters: Tested each classifier with multiple settings and databases. For example, Kaiju was evaluated with E-values from 0.0001 to 0.01 and minimum alignment lengths from 11 to 42 amino acids [6].

Performance Metrics: Assessed genus and species-level classification accuracy, misclassification rates, false negatives, and computational requirements [6].

In food safety applications, researchers simulated metagenomes representing three food products (chicken meat, dried food, and milk) with pathogens (Campylobacter jejuni, Cronobacter sakazakii, and Listeria monocytogenes) spiked at defined relative abundances (0%, 0.01%, 0.1%, 1%, and 30%) [2]. This design enabled evaluation of detection limits and quantitative accuracy across abundance levels.
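To get a feel for what these spike-in levels mean in practice, the sketch below converts each relative abundance into an expected number of pathogen reads. The sequencing depth is a hypothetical value chosen for illustration (the depth used in [2] is not given here), and the calculation assumes that relative abundance is defined as a fraction of reads.

```python
# Relative abundances (%) from the spike-in design described above [2].
spike_levels_pct = [0, 0.01, 0.1, 1, 30]

# Hypothetical sequencing depth, for illustration only.
total_reads = 10_000_000


def expected_pathogen_reads(total, abundance_pct):
    """Expected number of pathogen reads at a given relative abundance (%)."""
    return round(total * abundance_pct / 100)


for pct in spike_levels_pct:
    reads = expected_pathogen_reads(total_reads, pct)
    print(f"{pct:>5}% -> {reads:,} reads")
```

Under these assumptions, the 0.01% level corresponds to only about one read in ten thousand, which is why detection limits are so sensitive to both sequencing depth and false-positive rate.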

Database-Driven Biases and Error Profiles

Different classification approaches and databases introduce specific biases that researchers must consider when interpreting results.

Taxonomic Misclassification Patterns

In wastewater treatment microbial communities, Kaiju and Kraken2 (using nt_core database) exhibited approximately 25% erroneous classifications at the genus level [6]. Kraken2 showed particularly strong dependence on confidence thresholds, with misclassification rates increasing at a confidence level of 0.99, where false negatives became more frequent than correct classifications [6].

Eukaryote-prokaryote misclassification represents another significant challenge. Analysis of wastewater communities revealed substantial risk of misclassifying eukaryotes as bacteria and vice versa across all classifiers and databases [6]. This has particular implications for studying complex environments where eukaryotic microbes like fungi, protozoa, and lower metazoans play crucial ecological roles.

Impact on Abundance Estimation

For abundance estimation, Kaiju most closely mirrored actual mock community proportions when using appropriate databases (nreuk and nreuk+), successfully capturing the ratio between the four most abundant genera [6]. In contrast, Kraken2 completely missed true genus abundances when using the SILVA database, while RiboFrame overestimated the abundance of Flavobacterium despite using the same database [6]. This demonstrates that both the classifier algorithm and database choice impact quantitative accuracy.

Emerging Approaches and Solutions

Reference-Guided Assembly

Reference-guided assembly approaches like MetaCompass address database limitations by using available genomic sequences to improve metagenomic assembly [16]. This method:

- Identifies reference genomes relevant to the sample through marker gene alignment

- Clusters references to reduce redundancy

- Aligns reads to clustered references

- Generates contigs guided by reference genomes while allowing for sequence variation [16]

In human microbiome samples, MetaCompass assemblies represented 31-90% of the total de novo assembly size across different body sites, achieving up to 97% for some posterior fornix samples [16]. This demonstrates that reference-guided approaches can effectively cover substantial portions of microbial communities when appropriate references exist.

Metagenome-Assembled Genomes (MAGs)

MAGs dramatically improve classification for understudied environments by representing uncultivated microbes. Classification accuracy improved substantially when MAGs were added to reference databases, particularly when MAGs were assembled from the same environment as the classification data and had formal taxonomic lineages assigned [15].

Database Customization Strategies

Custom database construction tailored to specific research questions significantly enhances classification. Successful approaches include:

- Environment-Specific Genomes: Adding cultured isolates from the target environment (e.g., Hungate collection for rumen) [15]

- MAG Integration: Incorporating high-quality MAGs from similar environments [15]

- Taxonomic Balancing: Ensuring representation across taxonomic groups to minimize false positives [15]

- Strain-Level Resolution: Including multiple strain references where necessary for discrimination

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Metagenomic Classification

| Tool/Resource | Function | Application Context |
|---|---|---|
| Kaiju | Amino acid-based taxonomic classification | Accurate species-level classification; functional potential assessment [6] |
| Kraken2/Bracken | k-mer-based classification and abundance estimation | Sensitive pathogen detection; low-abundance taxon identification [2] |
| MetaCompass | Reference-guided metagenomic assembly | Improving contiguity and completeness of metagenomic assemblies [16] |
| Hungate Collection | Cultured rumen microbial genomes | Rumen microbiome studies; agricultural research [15] |
| RUG Database | Rumen Uncultured Genomes (MAGs) | Classification of novel rumen microbes [15] |
| BBDuk | Quality control and adapter removal | Preprocessing of raw sequencing reads [6] |
| MetaBAT2 | Metagenome binning | MAG generation from assembled contigs [6] |
| SILVA Database | Curated ribosomal RNA gene database | 16S rRNA-based taxonomic profiling [6] |

Workflow Diagram for Database Selection

The following diagram illustrates a systematic approach for selecting appropriate reference databases and classification tools based on research objectives:

Reference database composition fundamentally limits the accuracy of metagenomic classification. General databases like RefSeq show significant biases toward well-studied species and perform poorly for understudied environments. The integration of environment-specific genomic resources, including cultured isolates and metagenome-assembled genomes, dramatically improves classification rates and accuracy. Classifier performance varies substantially across tools, with Kaiju demonstrating highest accuracy for species-level classification, while Kraken2/Bracken provides superior sensitivity for low-abundance pathogen detection. Researchers must carefully select databases and classifiers aligned with their specific research questions and validate results using appropriate mock communities and statistical controls. As the field advances, continued development of comprehensive, balanced reference databases and transparent benchmarking standards will be essential for advancing metagenomic research and its applications in human health, environmental science, and drug development.

Metagenomic sequencing has revolutionized microbiology, enabling the diagnosis of disease, the identification of pandemic agents, and a deeper understanding of the role of microbes in our microbiome and environment [17]. However, the accuracy of metagenomic analysis depends fundamentally on the reference sequence databases used for taxonomic classification [17] [18]. Issues with reference sequence databases are pervasive and can significantly impact research outcomes and conclusions [17] [15]. Database incompleteness and sequence divergence represent two fundamental challenges that affect the sensitivity, precision, and overall validity of metagenomic classifier results [19] [15]. This guide objectively compares classifier performance against these challenges, providing experimental data and methodologies essential for researchers validating metagenomic classifiers in pharmaceutical and biomedical contexts.

The selection of appropriate reference databases is not merely a technical step but a fundamental methodological consideration that can determine the success or failure of metagenomic studies [18] [15]. As genomic repositories grow at an unprecedented pace, the ability of classification tools to leverage comprehensive, well-curated references becomes increasingly critical for accurate taxonomic profiling in drug development and clinical diagnostics [20].

Understanding the Core Challenges

Database incompleteness occurs when reference databases lack representation of specific taxa present in samples, leading to false negatives and inaccurate abundance estimates [15]. This problem is particularly acute for understudied environments like the rumen microbiome, where many microbes remain uncultured and absent from public references [15]. One study found that using the standard NCBI RefSeq database alone resulted in approximately 50% of reads from rumen microbial genomes being unclassified, simply because the reference database lacked appropriate representations [15].

The growth of public genomic repositories is dramatically outpacing computational resources, creating challenges for maintaining comprehensive reference sets [20]. Furthermore, database representation is highly uneven, with substantial biases toward well-studied organisms. For instance, in NCBI RefSeq, the 187 most represented species have as many base pairs as the remaining 27,662 species combined [20]. This imbalance means that unless classifiers can efficiently handle massive, comprehensive databases, many novel or less-studied organisms will be missed in analyses.

Sequence divergence encompasses both genetic variation between reference sequences and the organisms actually present in samples and errors within the reference databases themselves [17]. Taxonomic misannotation affects approximately 3.6% of prokaryotic genomes in GenBank and 1% in its curated subset RefSeq [17]. Additionally, database contamination is widespread, with systematic evaluations identifying 2,161,746 contaminated sequences in NCBI GenBank and 114,035 in RefSeq [17].

Sequence divergence challenges are compounded by technical issues like chimeric sequences, poor-quality references, and inappropriate inclusion of host or vector sequences [17]. These problems lead to false positive classifications, where organisms are reported that are not actually present in samples. In a striking example, one analysis detected turtles, bullfrogs, and snakes in human gut samples simply by changing the reference database [17].

Comparative Performance of Classification Tools

Performance Against Database Incompleteness

Classifier performance varies significantly when dealing with incomplete databases. Experimental data demonstrates that strategies to enhance database comprehensiveness directly impact classification accuracy.

Table 1: Classification Rates Across Different Database Configurations [15]

| Database Composition | Classification Rate | Notes |
|---|---|---|
| Hungate (rumen-specific) | 99.95% | Nearly complete classification of rumen-derived reads |
| RefSeq (standard) | 50.28% | Limited representation of specialized communities |
| Mini Kraken2 | 39.85% | Reduced database size impacts sensitivity |
| RUG (MAGs from rumen) | 45.66% | MAGs improve representation of uncultivated microbes |
| RefSeq + RUG | 70.09% | 1.4x improvement over RefSeq alone |
| RefSeq + Hungate | ~100% | Near-complete classification with specialized references |

The addition of Metagenome-Assembled Genomes (MAGs) to reference databases substantially improves classification accuracy for underrepresented taxa [15]. One study demonstrated that MAGs improved metagenomic read classification rates by 50-70%, whereas adding cultured isolate genomes from the Hungate collection showed only approximately 10% improvement [15]. This highlights the particular value of MAGs for representing uncultivated microbes in environments where many taxa remain uncharacterized.

Performance Against Sequence Divergence

Tools vary in their resilience to sequence divergence and database errors, with important implications for false positive rates and abundance estimation accuracy.

Table 2: Tool Performance Metrics with Long-Read Sequencing Data [9] [19]

| Tool Category | Precision | Recall | False Positive Rate | Abundance Accuracy |
|---|---|---|---|---|
| General-purpose mappers (Minimap2, Ram) | High | High | Low | High |
| Mapping-based tools (MetaMaps, deSAMBA) | High | Moderate-High | Low | Moderate-High |
| k-mer-based (Kraken2, CLARK-S) | Moderate | Moderate-High | Variable | Moderate |
| Protein-based (Kaiju, MEGAN-P) | Moderate | Low-Moderate | High | Low-Moderate |

General-purpose mappers like Minimap2 achieve superior accuracy in read-level classification, outperforming specialized taxonomic classifiers in many scenarios [9]. However, this comes at a computational cost, with general-purpose mappers being up to ten times slower than the fastest k-mer-based tools [9].

In food safety applications, Kraken2/Bracken demonstrated the highest classification accuracy with consistently higher F1-scores across all tested food metagenomes, correctly identifying pathogen sequence reads down to the 0.01% abundance level [2]. MetaPhlAn4 also performed well but was limited in detecting pathogens at the lowest abundance levels (0.01%) [2].

Impact of Read Technology and Quality

Sequencing technology significantly influences classifier performance against these challenges. PacBio HiFi datasets generally yield better classification results than Oxford Nanopore Technologies (ONT) data, though both long-read technologies outperform short-read approaches for taxonomic classification [19]. One benchmarking study found that with PacBio HiFi data, top-performing methods detected all species down to the 0.1% abundance level with high precision [19].

Read length also affects performance: datasets containing a large proportion of shorter reads (< 2 kb) yield lower precision and worse abundance estimates than length-filtered datasets [19]. This has important implications for experimental design in pharmaceutical and clinical applications where detection sensitivity is critical.

Experimental Protocols for Benchmarking Classifier Performance

Standardized Mock Community Experiments

Well-defined mock communities with known compositions provide the gold standard for evaluating classifier performance against database challenges [19]. The experimental workflow involves:

Mock Community Selection: Standardized mock communities like ZymoBIOMICS Gut Microbiome Standard (17 species including bacteria, archaea, and yeasts in staggered abundances from 14% to 0.0001%) and ATCC MSA-1003 (20 bacterial species at various abundance levels) provide known composition ground truth [19]. These communities should represent the taxonomic diversity relevant to the research context.

Sequencing and Quality Control: Sequence mock communities using relevant technologies (PacBio HiFi, ONT, or Illumina). For PacBio HiFi, the Zymo community typically yields median read lengths of 8.1 kb [19]. Perform standard quality control including adapter removal, quality filtering, and length filtering.

Classification and Analysis: Process reads through multiple classifiers using different reference databases. Calculate precision, recall, F1-score, L1 distance (Manhattan distance), and abundance correlation compared to known composition [18] [19]. Specifically evaluate performance at low abundance levels (0.01% and below) where database incompleteness has the greatest impact.
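The metrics named above can be computed directly from a known mock-community profile and a classifier's reported profile. The following is a minimal sketch, not taken from any of the cited benchmarks: the function names and the toy abundances are hypothetical, and detection is treated as simple presence/absence at a single taxonomic rank.

```python
def detection_metrics(truth, predicted):
    """Taxon-level precision, recall and F1 based on presence/absence."""
    true_taxa = {t for t, a in truth.items() if a > 0}
    called_taxa = {t for t, a in predicted.items() if a > 0}
    tp = len(true_taxa & called_taxa)   # present and reported
    fp = len(called_taxa - true_taxa)   # reported but absent
    fn = len(true_taxa - called_taxa)   # present but missed
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def l1_distance(truth, predicted):
    """Sum of absolute abundance differences over the union of taxa."""
    taxa = set(truth) | set(predicted)
    return sum(abs(truth.get(t, 0.0) - predicted.get(t, 0.0)) for t in taxa)


# Hypothetical relative abundances (fractions), for illustration only.
truth = {"taxon_A": 0.5, "taxon_B": 0.3, "taxon_C": 0.2}
predicted = {"taxon_A": 0.6, "taxon_B": 0.3, "taxon_D": 0.1}

precision, recall, f1 = detection_metrics(truth, predicted)
distance = l1_distance(truth, predicted)
print(f"precision={precision:.3f} recall={recall:.3f} F1={f1:.3f} L1={distance:.3f}")
```

For two profiles that each sum to 1, the L1 distance ranges from 0 (identical) to 2 (no shared taxa), which makes it easy to compare across classifiers and databases.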

Simulated Metagenome Experiments

While mock communities provide biological reality, simulated datasets offer complete control over composition and the ability to test specific database gaps [21].

Community Design: Create in silico communities with user-defined abundance profiles that include taxa with varying representation in reference databases. Include related species to test specificity and divergent sequences to test robustness.

Read Simulation: Use platform-specific simulators like InSilicoSeq for Illumina and DeepSim for Nanopore to generate realistic reads [21]. Incorporate technology-specific error profiles and length distributions.

Database Manipulation: Systematically remove specific taxa from reference databases to simulate incompleteness, or introduce sequence variations to simulate divergence. This enables controlled evaluation of how these factors impact classification accuracy.
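The database-manipulation step can be prototyped on reference metadata before any index is rebuilt. The sketch below is one possible way to do this under stated assumptions: the record layout, accessions, and taxon names are placeholders, and a real workflow would apply the same filter to the genome files passed to the classifier's database builder.

```python
def hold_out(references, rank, names):
    """Split reference records into kept and held-out sets by taxon name."""
    held_out_names = set(names)
    kept = [rec for rec in references if rec[rank] not in held_out_names]
    removed = [rec for rec in references if rec[rank] in held_out_names]
    return kept, removed


# Hypothetical reference records; accessions and names are placeholders.
references = [
    {"accession": "REF_0001", "genus": "Genus_X", "species": "Genus_X alpha"},
    {"accession": "REF_0002", "genus": "Genus_X", "species": "Genus_X beta"},
    {"accession": "REF_0003", "genus": "Genus_Y", "species": "Genus_Y gamma"},
]

# Simulate a missing species (a close relative stays in the database) ...
kept_species, removed_species = hold_out(references, "species", ["Genus_X alpha"])
# ... or a missing genus (no close relative stays in the database).
kept_genus, removed_genus = hold_out(references, "genus", ["Genus_X"])

print(len(kept_species), len(kept_genus))
```

Holding out taxa at progressively higher ranks gives a graded test: reads from a held-out species can still be placed correctly at genus level, whereas reads from a held-out genus should ideally remain unclassified or be assigned only at family level or above.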

Computational Resource Assessment

Given the growing size of comprehensive reference databases, resource utilization is a practical consideration [20].

Table 3: Computational Resource Requirements [9] [21] [20]

| Tool | Memory Usage | Classification Speed | Database Size |
|---|---|---|---|
| Kraken2 | High (~200 GB) | Fast | Large |
| Kaiju | High (~200 GB) | Moderate | Large |
| Minimap2 | Moderate | Slow | Reference-dependent |
| CLARK-S | Moderate | Fast | Moderate |
| RiboFrame | Low (~20 GB) | Fast | Small |
| ganon2 | Low | Fast | Compact (50% smaller) |

Metrics should include peak memory usage, classification time, and disk space requirements for databases. ganon2 represents a recent advancement with indices approximately 50% smaller than state-of-the-art methods while maintaining competitive classification performance [20].

Best Practices for Mitigating Database Challenges

Database Selection and Curation

- Use Comprehensive, Updated References: Regularly update reference databases to include newly sequenced genomes. Studies show that a 2-year-old RefSeq release contains 34,208 fewer species than the current version [20].

- Supplement with Environment-Specific Genomes: Add MAGs and cultured isolates from relevant environments to standard databases. This improves classification rates by 50-70% for understudied environments [15].

- Implement Quality Filtering: Remove contaminated, low-quality, or taxonomically problematic sequences using tools like BUSCO, CheckM, GUNC, and CheckV [17].

Tool Selection and Parameter Optimization

- Match Tool to Application: For pathogen detection in complex matrices, Kraken2/Bracken provides the best sensitivity at low abundances [2]. For overall community profiling with long reads, general-purpose mappers like Minimap2 offer highest accuracy despite slower speed [9].

- Optimize Confidence Thresholds: Kraken2 performance is highly dependent on confidence thresholds, with values around 0.05-0.2 often providing better precision than the default of 0 [18].

- Combine Approaches: Use multiple classification strategies (k-mer-based, mapping-based, protein-based) for challenging samples to leverage complementary strengths [9].
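To illustrate why the confidence threshold mentioned above trades sensitivity for precision, the sketch below mimics the general idea of clade-based confidence scoring: a read keeps its label only if a sufficient fraction of its k-mers falls within the clade rooted at that label, and otherwise the label is moved up the taxonomy. This is a simplified illustration written for this guide, not Kraken2's own code; the taxonomy, the k-mer counts, and the function names are all hypothetical.

```python
def clade_kmers(taxon, kmer_hits, parent):
    """Count k-mers assigned to `taxon` or to any of its descendants."""
    total = 0
    for hit_taxon, count in kmer_hits.items():
        node = hit_taxon
        while node is not None:
            if node == taxon:
                total += count
                break
            node = parent.get(node)   # None once we step past the root
    return total


def apply_confidence(label, kmer_hits, parent, threshold):
    """Walk up from `label` until the clade fraction meets the threshold."""
    total_kmers = sum(kmer_hits.values())
    if total_kmers == 0:
        return None
    node = label
    while node is not None:
        if clade_kmers(node, kmer_hits, parent) / total_kmers >= threshold:
            return node
        node = parent.get(node)
    return None   # read is left unclassified


# Hypothetical toy taxonomy and per-read k-mer hits.
parent = {"species_S1": "genus_G", "species_S2": "genus_G", "genus_G": "root"}
kmer_hits = {"species_S1": 12, "species_S2": 3, "genus_G": 5, "unassigned": 10}

for threshold in (0.0, 0.5, 0.9):
    print(threshold, apply_confidence("species_S1", kmer_hits, parent, threshold))
```

In this toy example the read keeps its species label at a threshold of 0, is pushed up to genus level at 0.5, and becomes unclassified at 0.9, which mirrors the pattern of rising false negatives at very strict thresholds described earlier.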

Table 4: Key Research Reagents and Computational Resources

| Resource | Type | Function in Validation | Example Sources |
|---|---|---|---|
| ZymoBIOMICS Standards | Mock Community | Ground truth for performance benchmarking | Zymo Research |
| ATCC MSA-1003 | Mock Community | Known composition for sensitivity assessment | ATCC |
| NCBI RefSeq | Reference Database | Standardized references for classification | NCBI |
| GTDB | Reference Database | Alternative taxonomy for prokaryotes | GTDB Consortium |
| Hungate Collection | Specialized Database | Rumen-specific references | Public repositories |
| MEGAN-LR | Analysis Software | Taxonomic profiling of long reads | University of Tübingen |
| Kraken2/Bracken | Classification Pipeline | k-mer-based classification & abundance estimation | CCB, JHU |
| ganon2 | Classification Tool | Memory-efficient large-scale classification | Open source |

Database incompleteness and sequence divergence remain significant challenges for metagenomic classification, but systematic benchmarking and appropriate tool selection can substantially mitigate their impact. Experimental data demonstrates that combining comprehensive, well-curated databases with optimized classification algorithms enables accurate taxonomic profiling even for complex microbial communities. The continued development of efficient classification tools like ganon2 that can leverage ever-growing genomic repositories promises to further enhance our ability to overcome these fundamental challenges in metagenomic analysis.

For researchers validating metagenomic classifiers in pharmaceutical and clinical contexts, regular benchmarking using mock communities and simulated datasets provides essential validation of performance limits. This ensures that taxonomic classifications supporting drug development decisions and clinical diagnostics maintain the highest standards of accuracy and reliability.

Metagenomic sequencing has revolutionized microbial ecology and clinical diagnostics by enabling comprehensive profiling of microbial communities directly from environmental or host-associated samples. However, the analytical accuracy of these studies is fundamentally constrained by two inherent properties of the resulting data: high dimensionality and compositionality. High dimensionality occurs when the number of microbial features (taxa, genes) far exceeds the number of samples, complicating statistical analysis and increasing false discovery rates [22] [23]. Compositionality arises because metagenomic data represents relative abundances rather than absolute counts, where the increase of one taxon necessarily leads to the apparent decrease of others due to fixed sequencing depth [22] [23]. These characteristics, if unaddressed, can lead to spurious associations, reduced generalizability, and inaccurate taxonomic profiling.
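The compositional effect described above is easy to reproduce with a toy example. In the sketch below, which uses hypothetical counts, only one taxon changes in absolute terms, yet the relative abundances of the other two fall as well.

```python
def relative_abundance(counts):
    """Convert absolute counts to proportions that sum to 1."""
    total = sum(counts.values())
    return {taxon: n / total for taxon, n in counts.items()}


# Hypothetical absolute cell counts; only taxon_A actually changes.
before = {"taxon_A": 100, "taxon_B": 100, "taxon_C": 100}
after = {"taxon_A": 400, "taxon_B": 100, "taxon_C": 100}

rel_before = relative_abundance(before)
rel_after = relative_abundance(after)

for taxon in before:
    print(f"{taxon}: {rel_before[taxon]:.3f} -> {rel_after[taxon]:.3f}")
```

Here taxon_B and taxon_C drop from one third to one sixth of the community despite being unchanged in absolute terms, which is exactly the kind of apparent decrease that can produce spurious negative associations.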

The validation of metagenomic classifiers depends critically on recognizing and accounting for these data properties. This guide provides a systematic comparison of computational approaches and their performance in addressing these challenges, offering researchers evidence-based recommendations for selecting and validating taxonomic classification tools in various experimental contexts.

Performance Comparison of Metagenomic Classifiers

Benchmarking Results Across Multiple Studies

Table 1: Comparative Performance of Taxonomic Classification Tools

| Classifier | Sequencing Type | Precision | Recall | Key Strengths | Key Limitations | Recommended Applications |
|---|---|---|---|---|---|---|
| Kraken2/Bracken | Short-read | High [2] | High [2] | Detects pathogens down to 0.01% abundance; High F1-scores [2] | Performance depends heavily on reference database quality [24] | Food safety, pathogen surveillance, clinical diagnostics [2] |
| Kaiju | Short-read | High [25] | High [25] | Protein-level alignment reduces false positives; Accurate abundance estimates [25] | Computationally intensive for large datasets [25] | Environmental samples with novel taxa; Community profiling [25] |
| BugSeq | Long-read | High [19] | High [19] | High precision/recall without filtering; All species detection down to 0.1% abundance [19] | Optimized for PacBio HiFi data [19] | Long-read datasets; Low-biomass samples [19] |
| MEGAN-LR & DIAMOND | Long-read | High [19] | High [19] | High precision/recall without filtering; Good for complex communities [19] | Requires substantial computational resources [19] | Long-read datasets; Functional annotation [19] |
| MetaPhlAn4 | Short-read | Moderate [2] | Variable [2] | Low false positive rate; Reliable for abundant taxa [2] | Limited detection at 0.01% abundance [2] | Community profiling; Well-characterized microbiomes [2] |
| Centrifuge | Short-read | Lower [2] | Moderate [2] | Comprehensive nt database coverage [7] | Higher false positive rate; Weaker performance in benchmarks [2] | Applications requiring broad taxonomic coverage [7] |

Impact of Reference Databases on Classification Accuracy

The performance of metagenomic classifiers is substantially influenced by the choice and quality of reference databases. Studies demonstrate that database selection can dramatically impact both classification rate and accuracy.

Table 2: Reference Database Impact on Taxonomic Classification

| Database | Contents | Classification Rate | Accuracy | Best Suited For |
|---|---|---|---|---|
| NCBI RefSeq | Comprehensive bacterial, archaeal, viral genomes; human genome; vectors [24] | Low for understudied environments [24] | Poor for novel microbes [24] | Well-characterized human microbiomes [24] |
| Hungate (Rumen-specific) | 460 cultured rumen microbial genomes [24] | Improved with addition of relevant genomes [24] | High for target environment [24] | Specialized environments; Agricultural microbiomes [24] |
| RUG (Rumen Uncultured Genomes) | Metagenome-assembled genomes from rumen [24] | Greatly improved (50-70%) [24] | High when MAGs have accurate taxonomic labels [24] | Environments with many uncultured microbes [24] |
| Custom nt (Centrifuge) | Curated NCBI nt with quality control [7] | Moderate to high [7] | Improved by reducing spurious classifications [7] | Clinical metagenomics; Forensics; Environmental samples [7] |

Experimental evidence indicates that classification accuracy improves most significantly when using databases tailored to the specific environment being studied. For instance, adding cultured reference genomes from the rumen to standard databases improved classification accuracy for rumen samples, while metagenome-assembled genomes (MAGs) further enhanced accuracy by representing uncultivated microbes [24]. However, the accuracy gains from MAGs were strongly dependent on the quality of taxonomic labels assigned to these genomes [24].

Experimental Protocols for Benchmarking Studies

Methodology for Classifier Performance Evaluation

Benchmarking studies typically employ carefully designed experimental protocols to evaluate classifier performance under controlled conditions:

Mock Community Design: Researchers utilize synthetic microbial communities with known compositions to establish ground truth for evaluation. These mock communities contain defined species at staggered abundance levels (e.g., 0.01% to 30%) to assess detection limits and quantitative accuracy [2] [19]. Common mock communities include the ATCC MSA-1003 (20 bacterial species) and ZymoBIOMICS standards (varying complexity) [19].

Sequencing Data Generation: Both short-read (Illumina) and long-read (PacBio HiFi, Oxford Nanopore) technologies are employed to generate benchmarking datasets. For comprehensive evaluation, datasets may include:

- In silico simulated reads from known genomes [24]

- Empirical sequencing data from mock communities [19]

- Spiked-in pathogens in complex matrices [2]

Performance Metrics: Standardized metrics enable objective comparison across tools:

- Precision: Proportion of correct positive classifications among all positive classifications

- Recall: Proportion of actual positives correctly identified

- F1-score: Harmonic mean of precision and recall

- Classification rate: Percentage of input reads successfully classified

- Abundance estimation accuracy: Correlation between estimated and true relative abundances
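Stated formally, the metrics above take the following standard forms. The symbols are introduced here for illustration and are not drawn from the cited studies: $TP$, $FP$, and $FN$ denote true positives, false positives, and false negatives at the chosen taxonomic rank, and $N_{\text{classified}}$ and $N_{\text{total}}$ denote the number of classified and input reads.

```latex
\[
\text{Precision} = \frac{TP}{TP + FP}, \qquad
\text{Recall} = \frac{TP}{TP + FN}, \qquad
F_1 = \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}}
\]
\[
\text{Classification rate} = \frac{N_{\text{classified}}}{N_{\text{total}}} \times 100\%
\]
```

Note that classification rate says nothing about correctness: a classifier can assign every read and still score poorly on precision, which is why the two are reported separately.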

Parameter Optimization: Studies typically evaluate multiple parameter settings for each classifier, such as confidence thresholds, minimal alignment lengths, and database versions, to determine optimal configurations [19] [25].

Addressing Compositionality in Metagenomic Data Analysis

The compositional nature of metagenomic data requires specialized statistical approaches to avoid spurious correlations. The SelEnergyPerm method exemplifies a sophisticated approach to this challenge through its protocol:

Logratio Transformation: Data is transformed using pairwise logratios to move from constrained composition space to standard Euclidean space, ensuring sub-compositional coherence [23].

Feature Selection: The method employs parsimonious feature selection to identify minimal sets of taxonomic features that capture between-group associations while maintaining statistical power in high-dimensional settings [23].

Permutation Testing: Non-parametric significance testing using energy distance metrics validates associations against null distributions, controlling for false discoveries [23].

This approach directly addresses the simplex constraints of relative abundance data, where traditional Euclidean-based statistical methods have limited applicability and increased Type I error [23].
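The two statistical ingredients named above, pairwise logratios and a permutation test on an energy distance, can be illustrated in a few lines. This is a simplified sketch and not the SelEnergyPerm implementation: it omits the feature selection step, uses all pairwise logratios, and assumes strictly positive abundances (zeros would need a pseudocount). All function names and the toy compositions are hypothetical.

```python
import math
import random
from itertools import combinations


def pairwise_logratios(sample, pseudocount=0.0):
    """All log(x_i / x_j) for i < j; invariant to rescaling when pseudocount is 0."""
    logs = [math.log(v + pseudocount) for v in sample]
    return [logs[i] - logs[j] for i, j in combinations(range(len(logs)), 2)]


def mean_distance(group_u, group_v):
    """Mean Euclidean distance over all pairs (u, v)."""
    total = sum(math.dist(u, v) for u in group_u for v in group_v)
    return total / (len(group_u) * len(group_v))


def energy_statistic(a, b):
    """2*E|A-B| - E|A-A'| - E|B-B'| (V-statistic form, non-negative)."""
    return 2 * mean_distance(a, b) - mean_distance(a, a) - mean_distance(b, b)


def permutation_pvalue(a, b, n_perm=499, seed=0):
    """Shuffle group labels to build the null distribution of the statistic."""
    rng = random.Random(seed)
    observed = energy_statistic(a, b)
    pooled = list(a) + list(b)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)
        if energy_statistic(pooled[:len(a)], pooled[len(a):]) >= observed:
            hits += 1
    return observed, (hits + 1) / (n_perm + 1)


# Toy relative abundances for four taxa in two groups of six samples.
group_a = [[0.60, 0.25, 0.10, 0.05], [0.55, 0.30, 0.10, 0.05],
           [0.65, 0.20, 0.10, 0.05], [0.58, 0.27, 0.09, 0.06],
           [0.62, 0.22, 0.11, 0.05], [0.57, 0.26, 0.12, 0.05]]
group_b = [[0.10, 0.20, 0.60, 0.10], [0.12, 0.18, 0.58, 0.12],
           [0.08, 0.22, 0.62, 0.08], [0.11, 0.19, 0.57, 0.13],
           [0.09, 0.21, 0.63, 0.07], [0.10, 0.23, 0.59, 0.08]]

features_a = [pairwise_logratios(s) for s in group_a]
features_b = [pairwise_logratios(s) for s in group_b]
observed, p_value = permutation_pvalue(features_a, features_b)
print(f"energy statistic={observed:.3f}, permutation p={p_value:.3f}")
```

Because each logratio depends only on the ratio of two parts, multiplying a sample by any constant (for example, converting proportions to counts) leaves the features unchanged, which is the practical meaning of working outside the simplex constraint.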

Visualization of Metagenomic Analysis Workflows

Workflow for Benchmarking Metagenomic Classifiers

Figure: Benchmarking Metagenomic Classifiers Workflow

This workflow illustrates the standardized approach for evaluating metagenomic classifiers, beginning with controlled mock communities and proceeding through sequencing, analysis, and performance assessment stages.

Data Analysis Pipeline Addressing Compositionality

Figure: Compositional Data Analysis Pipeline

This diagram outlines the specialized processing pipeline required for analyzing compositional metagenomic data, highlighting critical steps that address high dimensionality and compositionality challenges.

Table 3: Key Research Reagent Solutions for Metagenomic Classifier Validation

| Resource Type | Specific Examples | Function in Validation | Considerations for Use |
|---|---|---|---|
| Reference Materials | ATCC MSA-1003, ZymoBIOMICS Standards [19] | Provide ground truth with known composition for accuracy assessment | Select communities relevant to your study ecosystem |
| Reference Databases | NCBI RefSeq, Hungate Collection, Custom nt [24] [7] | Enable taxonomic assignment through sequence comparison | Database choice significantly impacts results; prefer environment-specific databases [24] |
| Bioinformatics Tools | Kraken2, Kaiju, BugSeq, MEGAN-LR [2] [19] [25] | Perform taxonomic classification and profiling | Tool performance varies by data type (short vs. long reads) and application [19] |
| Statistical Methods | SelEnergyPerm, Logratio Analysis [23] | Address compositionality and high dimensionality in downstream analysis | Essential for avoiding spurious correlations in relative abundance data [23] |
| Benchmarking Frameworks | CAMI, CAMDA [22] | Provide standardized assessments and community challenges | Enable objective comparison across different tools and approaches [22] |

The validation of metagenomic classifiers requires careful consideration of data quality challenges, particularly high dimensionality and compositionality. Evidence from benchmarking studies indicates that optimal tool selection depends on the specific research context: Kraken2/Bracken excels in sensitive pathogen detection, Kaiju provides robust classification across diverse taxa, and long-read specialized tools like BugSeq offer high precision with third-generation sequencing data. Critically, reference database choice profoundly impacts accuracy, with environment-specific databases consistently outperforming generic alternatives. Researchers should prioritize approaches that explicitly address compositionality through appropriate statistical methods and validate classifiers using relevant mock communities that reflect their target ecosystems.

Methodological Approaches and Real-World Applications in Biomedical Research

Taxonomic Classifier Architectures: Kraken2, Kaiju, MetaPhlAn, and Centrifuge

Metagenomic taxonomic classifiers are essential tools for translating raw sequencing data into meaningful biological insights by identifying the microbial taxa present in a sample. The architectural choices underlying these tools—ranging from k-mer matching and protein alignment to marker-based strategies and compressed full-text indices—directly shape their performance characteristics, accuracy, and suitable application domains. This guide objectively compares the architectures and performance of four widely used classifiers—Kraken2, Kaiju, MetaPhlAn, and Centrifuge (and its successor Centrifuger)—framed within the context of validation research for metagenomic classifiers.

Core Architectural Principles and Classification Mechanisms

The fundamental algorithms and data structures employed by metagenomic classifiers determine their computational efficiency, sensitivity, and specificity. The core classification workflows of the four tools are summarized below.

- Kraken2 employs a k-mer-based exact matching approach. It examines k-mers (short subsequences of length k) within a query read and consults a reference database that maps each k-mer to the lowest common ancestor (LCA) of all genomes known to contain it [1] [26]. The taxonomic label for the read is determined by the LCA that collects the most k-mers above a user-defined confidence threshold [26].

- Kaiju operates via protein-level homology search. It performs a six-frame translation of nucleotide reads into amino acid sequences and aligns them to a database of microbial proteins using the Burrows-Wheeler Transform (BWT) and the FM-index [6]. This method leverages the higher conservation of amino acid sequences compared to nucleotides, potentially offering greater sensitivity for classifying reads from divergent or novel microorganisms [1] [6].

- MetaPhlAn uses a marker gene-based strategy. Instead of using entire genomes, it relies on a curated set of unique, clade-specific marker genes [27] [28]. Reads are aligned directly to this custom database, and the presence and abundance of taxa are inferred from the markers detected [27]. This approach provides high taxonomic specificity and direct relative abundance estimates but is inherently limited to the genomic diversity captured by its marker set [1].

- Centrifuge/Centrifuger utilizes a memory-efficient FM-index for classification. Centrifuge performs backward search on the Burrows-Wheeler Transform (BWT) of the reference genome database to find semi-maximal matches with no constrained length [29]. Its successor, Centrifuger, introduces a novel run-block compression scheme for the BWT, achieving sublinear space complexity and reducing memory usage by half compared to conventional FM-indexes, while maintaining lossless compression and supporting fast rank queries [29].
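To make the k-mer and LCA mechanism described for Kraken2 concrete, the sketch below classifies reads against a toy index. It is a deliberately simplified illustration and not Kraken2's implementation: it uses a plain dictionary instead of a minimizer-based compact hash table, a hypothetical three-level taxonomy, invented 12-base "genomes", and k = 4. The confidence rule (walk up the taxonomy until the fraction of the read's k-mers supporting the clade meets the threshold) is an approximation of the behavior described above.

```python
from collections import Counter

# Hypothetical toy taxonomy: child -> parent (the root has no parent).
PARENT = {"root": None, "Enterobacteriaceae": "root",
          "Escherichia": "Enterobacteriaceae", "Salmonella": "Enterobacteriaceae"}


def lineage(taxon):
    """Path from a taxon up to the root, inclusive."""
    path = []
    while taxon is not None:
        path.append(taxon)
        taxon = PARENT[taxon]
    return path


def lca(a, b):
    """Lowest common ancestor of two taxa."""
    ancestors = set(lineage(a))
    return next(node for node in lineage(b) if node in ancestors)


def kmers(seq, k):
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]


def build_index(genomes, k):
    """Map each k-mer to the LCA of every genome that contains it."""
    index = {}
    for taxon, seq in genomes.items():
        for kmer in set(kmers(seq, k)):
            index[kmer] = lca(index[kmer], taxon) if kmer in index else taxon
    return index


def classify(read, index, k, confidence=0.0):
    read_kmers = kmers(read, k)
    hits = Counter(index[km] for km in read_kmers if km in index)
    if not hits:
        return None  # unclassified
    # Pick the taxon whose root-to-taxon path collects the most k-mer hits.
    best = max(hits, key=lambda t: sum(hits[n] for n in lineage(t)))
    # Move up the taxonomy until clade support reaches the threshold.
    node = best
    while node is not None:
        clade_hits = sum(c for t, c in hits.items() if node in lineage(t))
        if clade_hits / len(read_kmers) >= confidence:
            return node
        node = PARENT[node]
    return None


# Invented sequences sharing the prefix "ACGTACG".
genomes = {"Escherichia": "ACGTACGGTTCA", "Salmonella": "ACGTACGCCATG"}
index = build_index(genomes, k=4)

print(classify("TACGGTTC", index, k=4))                  # genus-specific k-mers dominate
print(classify("TACGGTTC", index, k=4, confidence=0.9))  # stricter threshold, higher rank
print(classify("GTACG", index, k=4))                     # only shared k-mers
```

Raising the threshold pushes the first read from genus to family level, which mirrors the general trade-off between precision and taxonomic resolution that confidence settings control.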

Performance Comparison and Benchmark Data

Classifier performance varies significantly across metrics such as precision, recall, speed, and resource consumption, depending on the dataset and experimental conditions. The table below synthesizes key findings from multiple benchmarking studies.

| Classifier | Core Algorithm | Best-Performance Context | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Kraken2 [26] [6] | k-mer & LCA | Modern, undamaged metagenomes [30]; high speed with large databases [26] | Very fast classification [1]; scalable with database size [31] | Precision affected by database and confidence score [26]; lower accuracy on ancient DNA [30] |
| Kaiju [6] | Protein alignment (BWT/FM-index) | Complex environmental samples [6]; ancient/damaged DNA [30]; detecting divergent taxa | High accuracy (genus/species level) [6]; robust to sequencing errors and evolution | High RAM (~200 GB) [6]; slower than k-mer tools [1] |
| MetaPhlAn4 [27] [32] | Marker gene alignment | High-abundance community profiling [27]; integrating MAGs for unknown taxa [32] | High taxonomic specificity [27]; low computational requirements [28]; direct abundance profiling | Limited to marker genes [1]; lower sensitivity for low-abundance/novel taxa |
| Centrifuger [29] | Run-block compressed FM-index | Accurate classification at lower taxonomic levels [29]; microbial genomes with mild repetitiveness | Lossless compression, sublinear space [29]; high accuracy for microbial data [29] | Performance on highly repetitive sequences may be less optimal [29] |

Quantitative Performance Insights:

- Kraken2's Precision-Sensitivity Trade-off: A systematic evaluation of Kraken2 demonstrated that the choice of confidence score (CS) significantly impacts performance. With comprehensive databases (e.g., Standard, nt), increasing CS from 0 to 1.0 led to a significant increase in precision but a decrease in classification rate. For smaller databases (e.g., Minikraken), a CS above 0.4 resulted in no reads being classified [26]. This highlights the critical need to balance database size and stringency settings.

- Kaiju's Accuracy in Complex Mock Communities: In a benchmark of a wastewater treatment mock community, Kaiju emerged as the most accurate classifier at both genus and species levels, with its inferred genus abundances closely mirroring the actual mock proportions. However, approximately 25% of its classifications were erroneous, and it required over 200 GB of RAM [6].

- MetaPhlAn4's Comprehensive Profiling: By integrating over 1.01 million prokaryotic reference and metagenome-assembled genomes (MAGs), MetaPhlAn 4 defines unique marker genes for 26,970 species-level genome bins (SGBs), 4,992 of which are taxonomically unidentified. This allows it to explain ~20% more reads in human gut microbiomes and over 40% more in less-characterized environments compared to previous methods [27]. In mouse studies, it revealed that unknown species (uSGBs) often dominate the gut microbiome and can be the strongest biomarkers for dietary changes [32].

- Centrifuger's Efficiency and Accuracy: On simulated metagenomic data, Centrifuger demonstrated superior accuracy at lower taxonomic levels, attributed to its lossless compression and use of unconstrained match lengths. Its novel run-block compressed BWT (RBBWT) consumed up to 46.9% less space than a standard wavelet tree and 24.8% less than run-length compressed BWT (RLBWT) for genus-level Legionella genomes, while maintaining fast rank query speeds [29].

Experimental Protocols for Classifier Validation

Robust validation of metagenomic classifiers relies on standardized experiments using datasets with known composition. The core benchmarking workflow and its detailed methodologies are described below.

Benchmarking Using Simulated Metagenomes

Simulated datasets with known ground truth are the gold standard for calculating accuracy metrics.

- Mock Community Design: Benchmarks often use in silico generated mock communities designed to reflect the microbial complexity of the environment being studied (e.g., human gut, wastewater [6]). The composition, including the selection of species and their relative abundances, is predefined.

- Sequencing Simulation: Tools like Mason [29] or Gargammel [30] are used to simulate sequencing reads from the mock community. Parameters such as read length, sequencing error rate (e.g., 1% [29]), and insert size are controlled. For ancient DNA benchmarks, damage patterns like deamination (C→T and G→A misincorporations) and fragmentation are introduced [30].

- Contamination Introduction: To assess robustness, modern DNA contamination (both host and environmental) can be added at varying levels, as this is a major confounder in real ancient metagenomic studies [30].
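The ancient DNA damage step can be illustrated with a toy simulator. The sketch below is not Gargammel's damage model: it assumes a simple exponential decay of the substitution probability with distance from each fragment end, and the parameter names (`max_rate`, `decay`) and fragment length range are hypothetical choices made only for illustration.

```python
import random


def fragment(genome, rng, min_len=30, max_len=80):
    """Draw one random fragment; assumes len(genome) >= min_len."""
    length = rng.randint(min_len, min(max_len, len(genome)))
    start = rng.randint(0, len(genome) - length)
    return genome[start:start + length]


def simulate_damage(read, rng, max_rate=0.3, decay=0.5):
    """Apply C->T substitutions near the 5' end and G->A near the 3' end."""
    bases = list(read)
    n = len(bases)
    for i, base in enumerate(bases):
        p5 = max_rate * decay ** i            # decays with distance from 5' end
        p3 = max_rate * decay ** (n - 1 - i)  # decays with distance from 3' end
        if base == "C" and rng.random() < p5:
            bases[i] = "T"
        elif base == "G" and rng.random() < p3:
            bases[i] = "A"
    return "".join(bases)


rng = random.Random(42)
# Random stand-in for a reference genome (not a real sequence).
genome = "".join(rng.choice("ACGT") for _ in range(500))
originals = [fragment(genome, rng) for _ in range(5)]
damaged = [simulate_damage(read, rng) for read in originals]

for before, after in zip(originals, damaged):
    changes = [(i, b, a) for i, (b, a) in enumerate(zip(before, after)) if b != a]
    print(len(before), changes)
```

Keeping the undamaged fragment alongside the damaged read preserves the ground truth needed to score a classifier on the simulated data.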

Performance Metrics and Analysis

Evaluations must go beyond simple classification rates to provide a holistic view of performance.

- Precision, Recall, and F1 Score: These are fundamental metrics [1] [30]. Precision is the proportion of correctly identified species among all species reported by the tool. Recall is the proportion of species in the sample that were correctly identified. The F1 score is the harmonic mean of precision and recall [30].

- Precision-Recall (PR) Curves: Since users often filter out low-abundance taxa, plotting precision and recall across all possible abundance thresholds (a PR curve) provides a more realistic performance assessment than a single value [1]. The area under the PR curve is a valuable composite metric.

- Abundance Estimation Accuracy: The difference between the calculated relative abundance and the true relative abundance for each taxon is a critical measure of profiling fidelity [26].

- Computational Resource Usage: Memory (RAM) consumption and processing speed are practical constraints, especially for large datasets [29] [6].
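A precision-recall curve over abundance thresholds and a simple abundance error can be sketched as follows. The example assumes that each reported taxon defines one threshold (taxa are admitted in order of decreasing estimated abundance), uses a step-wise area in the style of average precision, and measures abundance error as an L1 distance; the taxon names and values are invented.

```python
def pr_curve(predicted_abundance, true_taxa):
    """(recall, precision) after admitting taxa from most to least abundant."""
    true_taxa = set(true_taxa)
    ranked = sorted(predicted_abundance.items(), key=lambda kv: kv[1], reverse=True)
    points, tp = [], 0
    for n_called, (taxon, _) in enumerate(ranked, start=1):
        if taxon in true_taxa:
            tp += 1
        points.append((tp / len(true_taxa), tp / n_called))
    return points


def area_under_pr(points):
    """Step-wise area: precision weighted by each gain in recall."""
    area, prev_recall = 0.0, 0.0
    for recall, precision in points:
        area += precision * (recall - prev_recall)
        prev_recall = recall
    return area


def l1_abundance_error(predicted, truth):
    """Sum of absolute differences over all taxa in either profile."""
    taxa = set(predicted) | set(truth)
    return sum(abs(predicted.get(t, 0.0) - truth.get(t, 0.0)) for t in taxa)


# Invented profiles: three true taxa, two false positives at low abundance.
truth = {"taxon_A": 0.50, "taxon_B": 0.30, "taxon_C": 0.20}
predicted = {"taxon_A": 0.48, "taxon_B": 0.30, "taxon_X": 0.12,
             "taxon_C": 0.08, "taxon_Y": 0.02}

points = pr_curve(predicted, truth)
aupr = area_under_pr(points)
l1 = l1_abundance_error(predicted, truth)
print(points)
print(f"AUPR={aupr:.4f}  L1 error={l1:.2f}")
```

In this toy case a false positive outranks a true taxon, so no single abundance cutoff achieves both perfect precision and perfect recall, which is exactly the situation a full curve exposes and a single metric hides.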

The following table details essential computational reagents and databases used in classifier development and validation experiments.

| Reagent / Resource | Function in Validation | Example in Use |
|---|---|---|
| Reference Databases | Provide known sequences for read comparison/classification; size/composition major performance factor [1]. | NCBI RefSeq, GTDB, SILVA, custom MetaPhlAn marker DB [27] [26] [28] |
| In Silico Mock Communities | Ground truth for accuracy metrics (precision, recall); enable controlled performance tests [6]. | Wastewater microbial community mock [6] |
| Read Simulators | Generate synthetic sequencing reads with controlled parameters (error, damage, abundance) [29] [30]. | Mason [29], Gargammel (aDNA damage) [30] |
| Metagenome-Assembled Genomes (MAGs) | Expand reference databases with uncultivated taxa; improve profiling of unknown species [27]. | 1.01M prokaryotic genomes/MAGs in MetaPhlAn4 [27] |
| Performance Metrics Software | Calculate standardized metrics for objective tool comparison [1] [30]. | Precision, Recall, F1 score, Abundance correlation |